
- Number of slides: 26

WP9 Report & Outlook. RWL Jones, RAL, 10 May 2009


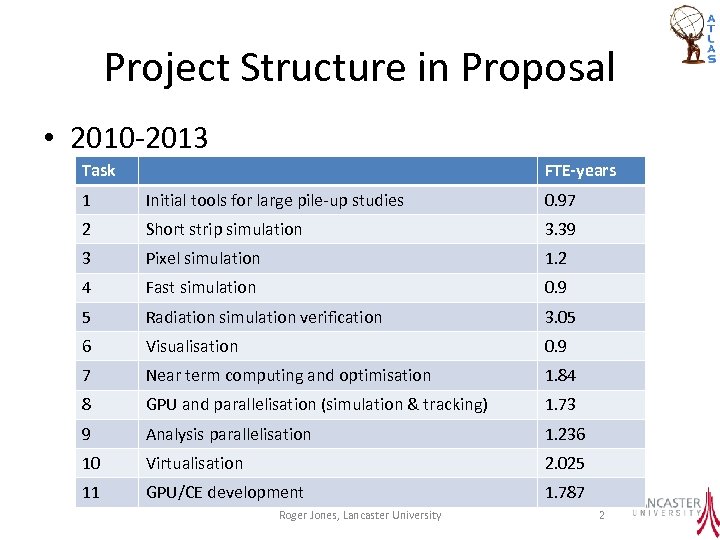

Project Structure in Proposal (2010-2013)

| # | Task | FTE-years |
|---|------|-----------|
| 1 | Initial tools for large pile-up studies | 0.97 |
| 2 | Short strip simulation | 3.39 |
| 3 | Pixel simulation | 1.2 |
| 4 | Fast simulation | 0.9 |
| 5 | Radiation simulation verification | 3.05 |
| 6 | Visualisation | 0.9 |
| 7 | Near term computing and optimisation | 1.84 |
| 8 | GPU and parallelisation (simulation & tracking) | 1.73 |
| 9 | Analysis parallelisation | 1.236 |
| 10 | Virtualisation | 2.025 |
| 11 | GPU/CE development | 1.787 |

Roger Jones, Lancaster University


Effect of Descope etc

- PPGP recommended delay in GPU etc
  - Work in this area descoped
  - GPU work for HLT, minimum level to allow work later
  - Tasks rationalized
- Some WP1 effort redeployed into simulation
- Pixel simulation priority increased after discussion
- RG 15% cut mainly affects academic time
  - Usually HEFCE funded, but increased risk
  - Deliverables do not change


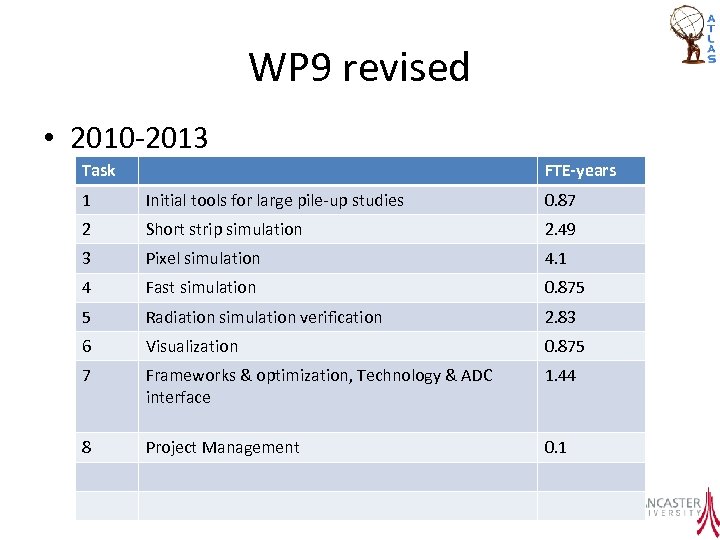

WP9 revised (2010-2013)

| # | Task | FTE-years |
|---|------|-----------|
| 1 | Initial tools for large pile-up studies | 0.87 |
| 2 | Short strip simulation | 2.49 |
| 3 | Pixel simulation | 4.1 |
| 4 | Fast simulation | 0.875 |
| 5 | Radiation simulation verification | 2.83 |
| 6 | Visualization | 0.875 |
| 7 | Frameworks & optimization, Technology & ADC interface | 1.44 |
| 8 | Project Management | 0.1 |

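
The effect of the descope can be checked by summing the two task lists above. The Python sketch below only adds up the FTE-year figures quoted in the slides; the variable names are illustrative, and the comparison assumes both lists describe the scope of the same work package.

```python
# FTE-years per task, copied from the proposal and revised task lists.
proposal = {
    "Initial tools for large pile-up studies": 0.97,
    "Short strip simulation": 3.39,
    "Pixel simulation": 1.2,
    "Fast simulation": 0.9,
    "Radiation simulation verification": 3.05,
    "Visualisation": 0.9,
    "Near term computing and optimisation": 1.84,
    "GPU and parallelisation (simulation & tracking)": 1.73,
    "Analysis parallelisation": 1.236,
    "Virtualisation": 2.025,
    "GPU/CE development": 1.787,
}
revised = {
    "Initial tools for large pile-up studies": 0.87,
    "Short strip simulation": 2.49,
    "Pixel simulation": 4.1,
    "Fast simulation": 0.875,
    "Radiation simulation verification": 2.83,
    "Visualization": 0.875,
    "Frameworks & optimization, Technology & ADC interface": 1.44,
    "Project Management": 0.1,
}


def total_fte(tasks):
    """Sum the FTE-years of all tasks, rounded to remove float noise."""
    return round(sum(tasks.values()), 3)


proposal_total = total_fte(proposal)
revised_total = total_fte(revised)
# Change in the pixel simulation allocation between the two lists.
pixel_change = round(revised["Pixel simulation"] - proposal["Pixel simulation"], 3)

print(f"proposal: {proposal_total} FTE-years, revised: {revised_total} FTE-years")
print(f"pixel simulation change: {pixel_change:+} FTE-years")
```

On these figures the total falls from 19.028 to 13.58 FTE-years, while pixel simulation is the one task whose allocation grows.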

Start-up Issues

- The delayed start to the funded project had large impacts
  - Strip simulation continued from the previous project, but retention of effort was compromised by the uncertainty
  - The same was true for the radiation environment work
  - Fast simulation and visualization began in October
  - New pixel simulation effort is only coming online now


Simulation Deliverables and Milestones

- Simulation tools for the LoI
  - Pileup tools (Sep 2011)
  - Strip and pixel simulation release for LoI (Dec 2011)
  - Initial Atlfast modifications and tune for large pileup and new detectors (Dec 2011)
- Simulation tools for the design proposals
  - Upgrade simulation as default with Atlfast tuned for design proposal studies (Mar 2013)
  - Design proposal pixel simulation (Jan 2013)
  - Automated tuning procedure for Atlfast (Sep 2012)


Simulation: framework activities

- Framework development
  - Made robust and being adapted to incorporate new geometries
  - Progress towards supporting new, radical geometries (an ongoing development activity)
  - Advances in efficient handling of pile-up
  - Reduced memory requirement for digitisation
- Note: having Phil Clark as Simulation Co-ordinator greatly helps our influence and the upgrade in general


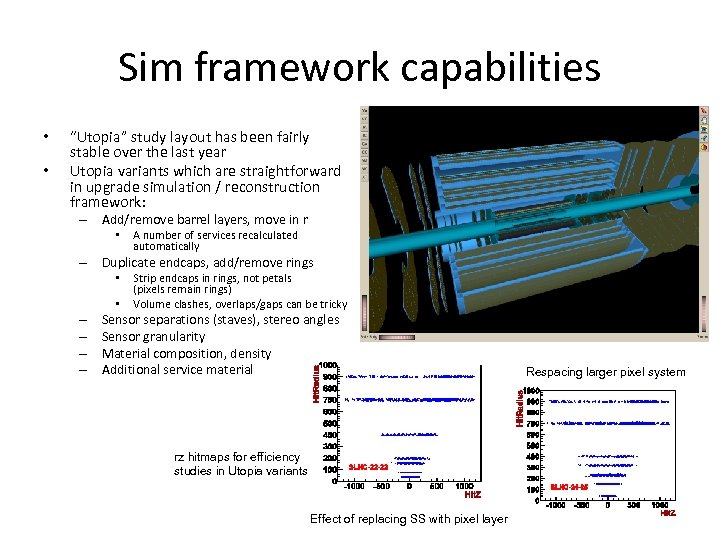

Sim framework capabilities

- “Utopia” study layout has been fairly stable over the last year
- Utopia variants which are straightforward in the upgrade simulation / reconstruction framework:
  - Add/remove barrel layers, move in r
    - A number of services recalculated automatically
  - Duplicate endcaps, add/remove rings
    - Strip endcaps in rings, not petals (pixels remain rings)
    - Volume clashes, overlaps/gaps can be tricky
  - Sensor separations (staves), stereo angles
  - Sensor granularity
  - Material composition, density
  - Additional service material

Figure labels: rz hitmaps for efficiency studies in Utopia variants; effect of replacing SS with pixel layer; respacing larger pixel system


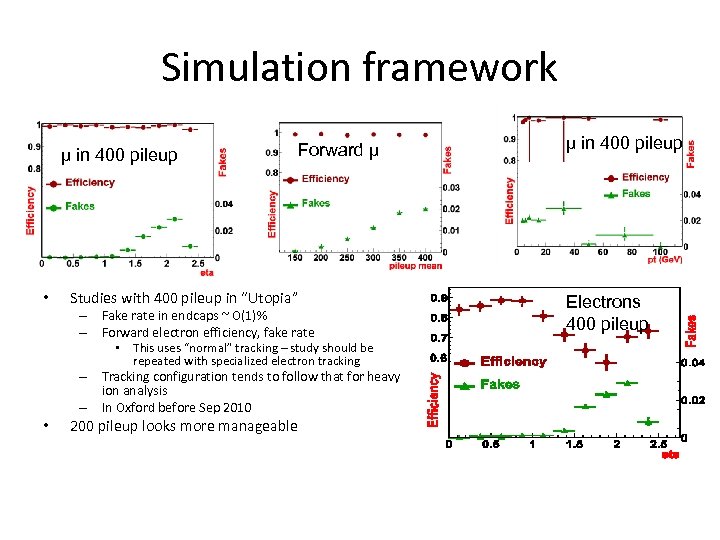

Simulation framework

- Forward µ studies with 400 pileup in “Utopia”
  - Fake rate in endcaps ~ O(1)%
  - Forward electron efficiency, fake rate
  - This uses “normal” tracking; study should be repeated with specialized electron tracking
  - Tracking configuration tends to follow that for heavy ion analysis
- In Oxford before Sep 2010
- 200 pileup looks more manageable

Figure panels: µ in 400 pileup; electrons, 400 pileup


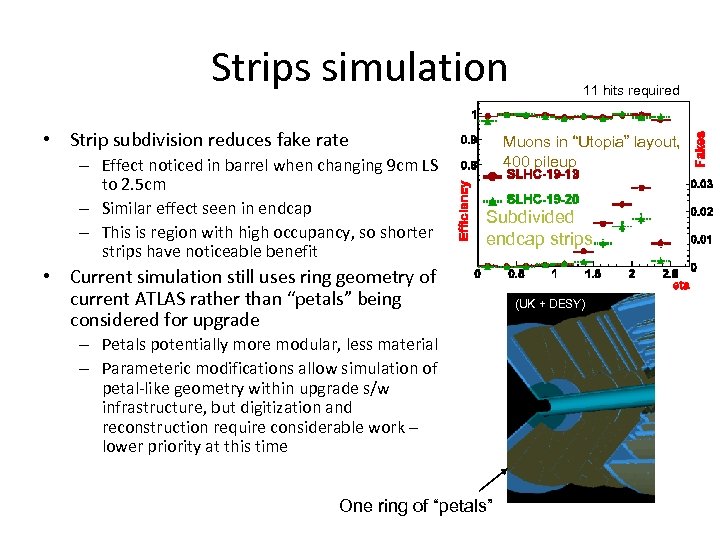

Strips simulation

- Strip subdivision reduces fake rate
  - Effect noticed in barrel when changing 9 cm LS to 2.5 cm
  - Similar effect seen in endcap
  - This is a region with high occupancy, so shorter strips have a noticeable benefit
- Current simulation still uses the ring geometry of current ATLAS rather than the “petals” being considered for upgrade
  - Petals potentially more modular, less material
  - Parametric modifications allow simulation of petal-like geometry within the upgrade s/w infrastructure, but digitization and reconstruction require considerable work; lower priority at this time

Figure labels: 11 hits required; muons in “Utopia” layout, 400 pileup; subdivided endcap strips; one ring of “petals” (UK + DESY)

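
One way to see why shorter strips help: to first order, the mean number of hits on a strip scales with its area, so at fixed pitch it scales with strip length. The sketch below is a rough illustration under that assumption. The hit density and pitch are hypothetical placeholder values, not numbers from the simulation; only the 9 cm and 2.5 cm lengths come from the slide.

```python
def strip_occupancy(hit_density_per_cm2, pitch_cm, length_cm):
    """Mean hits per strip per bunch crossing.

    Assumes a uniform hit density and one strip fired per hit,
    so occupancy is simply density times strip area.
    """
    return hit_density_per_cm2 * pitch_cm * length_cm


# Hypothetical inputs: 0.5 hits/cm^2 per crossing, 80 micron pitch.
HIT_DENSITY = 0.5
PITCH_CM = 0.008

long_strip = strip_occupancy(HIT_DENSITY, PITCH_CM, 9.0)   # 9 cm long strips
short_strip = strip_occupancy(HIT_DENSITY, PITCH_CM, 2.5)  # 2.5 cm short strips
reduction = long_strip / short_strip

print(f"occupancy ratio long/short: {reduction:.2f}")
```

Under this simple model the ratio depends only on the two lengths, not on the placeholder density or pitch. Lower occupancy leaves fewer random hit combinations for the pattern recognition to mistake for tracks.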

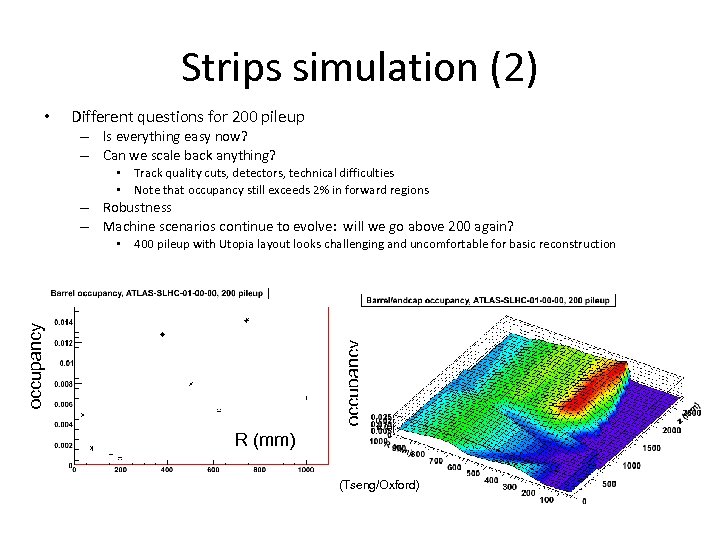

Strips simulation (2)

- Different questions for 200 pileup
  - Is everything easy now?
  - Can we scale back anything?
    - Track quality cuts, detectors, technical difficulties
    - Note that occupancy still exceeds 2% in forward regions
  - Robustness
  - Machine scenarios continue to evolve: will we go above 200 again?
- 400 pileup with Utopia layout looks challenging and uncomfortable for basic reconstruction

Figure: occupancy vs R (mm) (Tseng/Oxford)


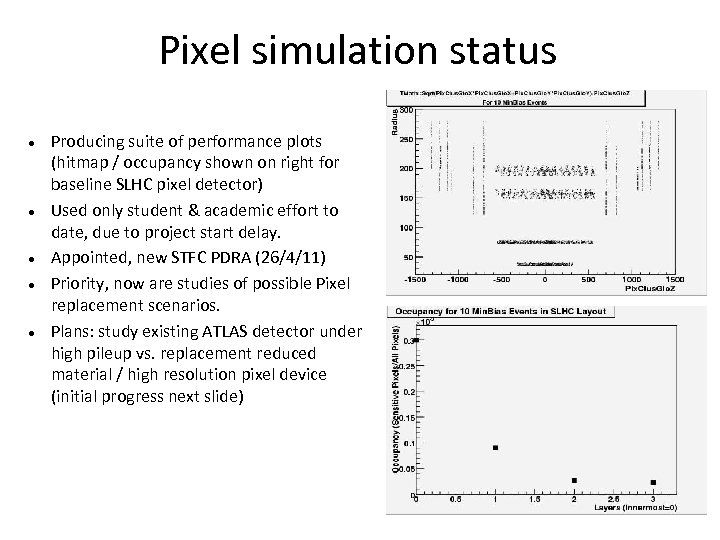

Pixel simulation status

- Producing a suite of performance plots (hitmap / occupancy shown on right for baseline SLHC pixel detector)
- Used only student & academic effort to date, due to project start delay
- Appointed new STFC PDRA (26/4/11)
- Priority now is studies of possible pixel replacement scenarios
- Plans: study existing ATLAS detector under high pileup vs. a replacement reduced material / high resolution pixel device (initial progress next slide)


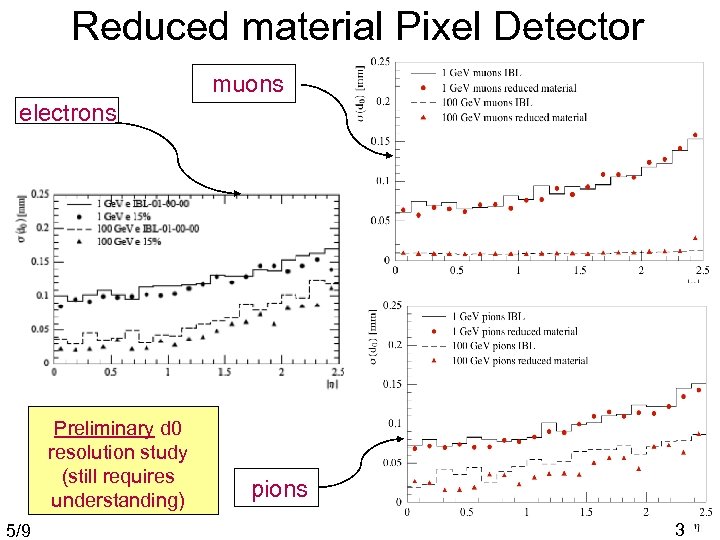

Reduced material Pixel Detector

Preliminary d0 resolution study (still requires understanding)

Figure panels: muons; electrons; pions


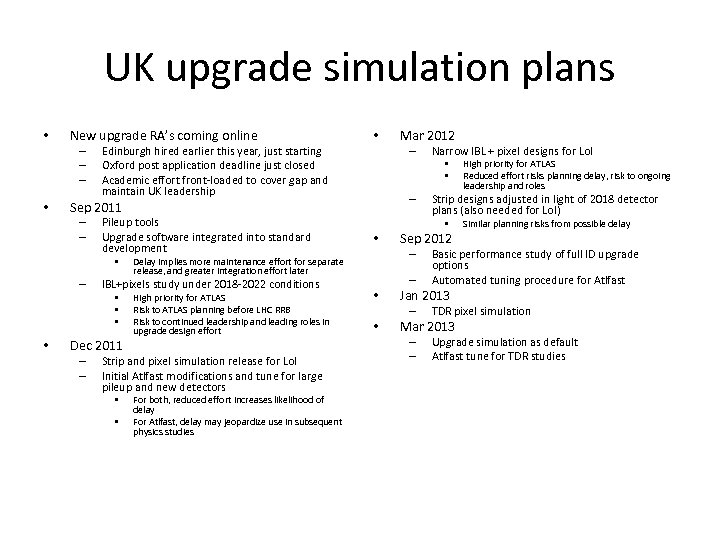

UK upgrade simulation plans

- New upgrade RAs coming online
  - Edinburgh hired earlier this year, just starting
  - Oxford post application deadline just closed
  - Academic effort front-loaded to cover gap and maintain UK leadership
- Sep 2011
  - Pileup tools
  - Upgrade software integrated into standard development
    - Delay implies more maintenance effort for separate release, and greater integration effort later
- Dec 2011
  - Strip and pixel simulation release for LoI
  - Initial Atlfast modifications and tune for large pileup and new detectors
    - For both, reduced effort increases likelihood of delay
    - For Atlfast, delay may jeopardize use in subsequent physics studies
  - Narrow IBL + pixel designs for LoI
    - High priority for ATLAS
    - Risk to ATLAS planning before LHC RRB
    - Risk to continued leadership and leading roles in upgrade design effort
- Mar 2012
  - IBL+pixels study under 2018-2022 conditions
    - High priority for ATLAS
    - Reduced effort risks planning delay, risk to ongoing leadership and roles
  - Strip designs adjusted in light of 2018 detector plans (also needed for LoI)
    - Similar planning risks from possible delay
- Sep 2012
  - Basic performance study of full ID upgrade options
  - Automated tuning procedure for Atlfast
- Jan 2013
  - TDR pixel simulation
- Mar 2013
  - Upgrade simulation as default
  - Atlfast tune for TDR studies


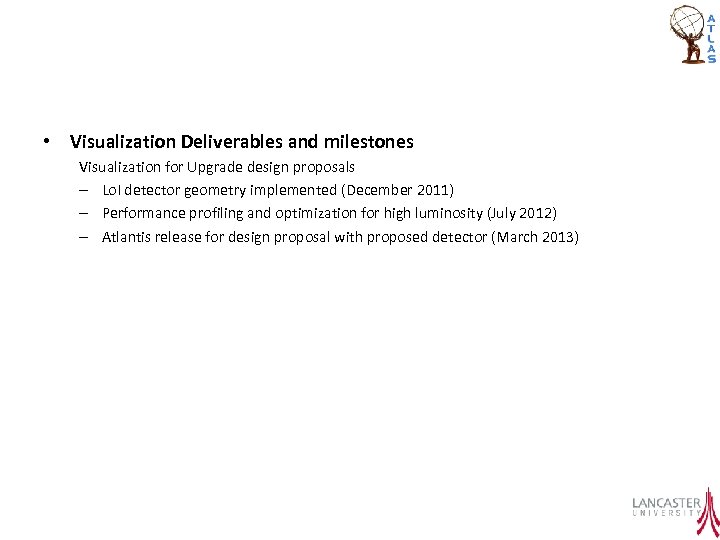

Visualization Deliverables and Milestones

- Visualization for Upgrade design proposals
  - LoI detector geometry implemented (December 2011)
  - Performance profiling and optimization for high luminosity (July 2012)
  - Atlantis release for design proposal with proposed detector (March 2013)


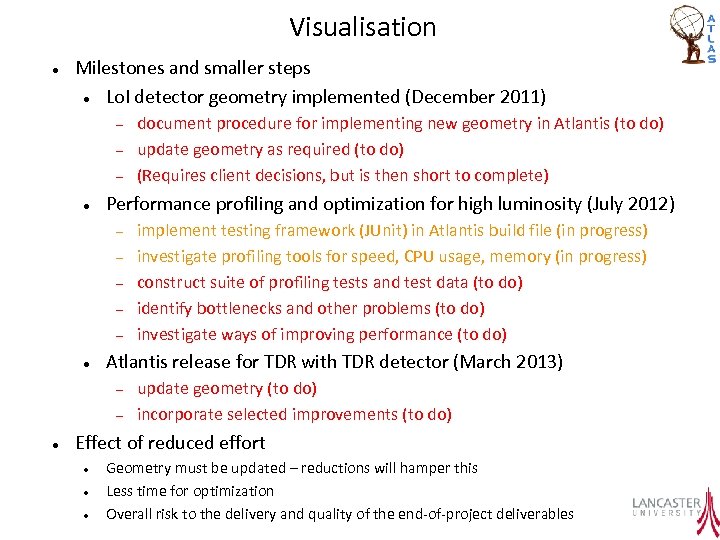

Visualisation: milestones and smaller steps

- LoI detector geometry implemented (December 2011)
  - document procedure for implementing new geometry in Atlantis (to do)
  - update geometry as required (to do) (requires client decisions, but is then short to complete)
- Performance profiling and optimization for high luminosity (July 2012)
  - implement testing framework (JUnit) in Atlantis build file (in progress)
  - investigate profiling tools for speed, CPU usage, memory (in progress)
  - construct suite of profiling tests and test data (to do)
  - identify bottlenecks and other problems (to do)
  - investigate ways of improving performance (to do)
- Atlantis release for TDR with TDR detector (March 2013)
  - update geometry (to do)
  - incorporate selected improvements (to do)
- Effect of reduced effort
  - Geometry must be updated; reductions will hamper this
  - Less time for optimization
  - Overall risk to the delivery and quality of the end-of-project deliverables


Radiation Deliverables and Milestones

- Validated radiation model for upgrade
  - Apr 2011: Validation of 7 TeV inner detector fluences and doses
  - March 2012: Validation of 7 TeV cavern fluences and impact for ATLAS Upgrade
  - March 2013: Radiological assessment and proposals for inner detector operations


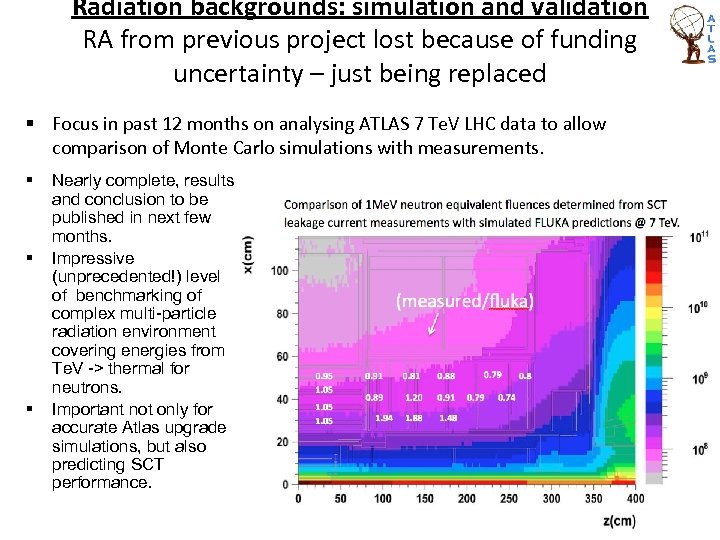

Radiation backgrounds: simulation and validation

- RA from previous project lost because of funding uncertainty; just being replaced
- Focus in past 12 months on analysing ATLAS 7 TeV LHC data to allow comparison of Monte Carlo simulations with measurements
  - Nearly complete; results and conclusions to be published in the next few months
  - Impressive (unprecedented!) level of benchmarking of a complex multi-particle radiation environment, covering energies from TeV down to thermal for neutrons
  - Important not only for accurate ATLAS upgrade simulations, but also for predicting SCT performance


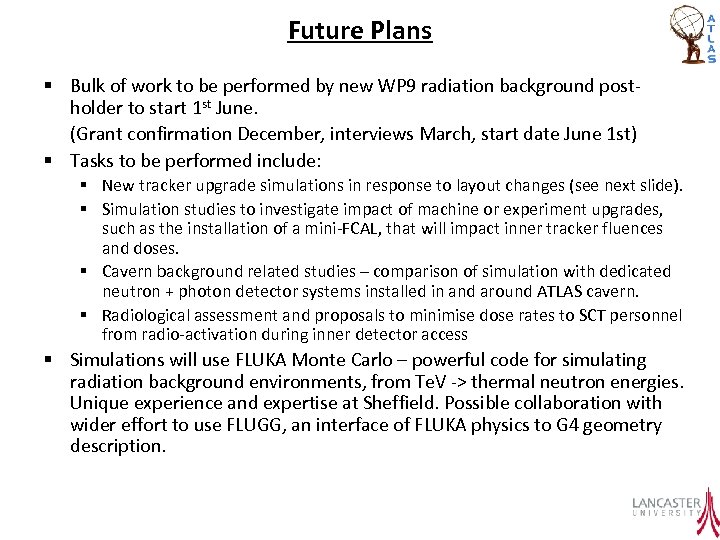

Future Plans

- Bulk of work to be performed by new WP9 radiation background postholder, to start 1st June (grant confirmation December, interviews March, start date 1st June)
- Tasks to be performed include:
  - New tracker upgrade simulations in response to layout changes (see next slide)
  - Simulation studies to investigate impact of machine or experiment upgrades, such as the installation of a mini-FCAL, that will impact inner tracker fluences and doses
  - Cavern background related studies: comparison of simulation with dedicated neutron + photon detector systems installed in and around the ATLAS cavern
  - Radiological assessment and proposals to minimise dose rates to SCT personnel from radio-activation during inner detector access
- Simulations will use FLUKA Monte Carlo, a powerful code for simulating radiation background environments, from TeV down to thermal neutron energies. Unique experience and expertise at Sheffield. Possible collaboration with wider effort to use FLUGG, an interface of FLUKA physics to the G4 geometry description.


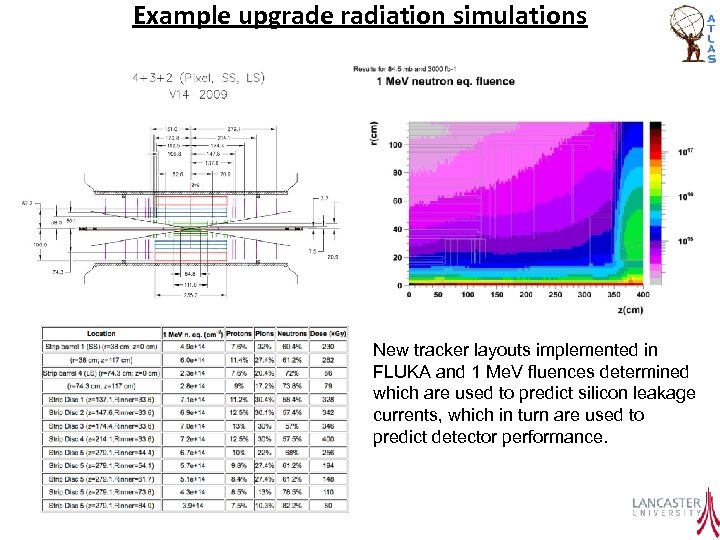

Example upgrade radiation simulations

New tracker layouts are implemented in FLUKA and the 1 MeV fluences determined. These are used to predict silicon leakage currents, which in turn are used to predict detector performance.

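
The step from fluence to leakage current is commonly done with the linear damage relation ΔI = α · Φ_eq · V, followed by a temperature scaling of the current. The slides do not say which parametrisation is used, so the sketch below is an assumption: it takes the widely quoted damage constant α ≈ 3.99e-17 A/cm (20 °C, after 80 min annealing at 60 °C) and an effective energy of 1.21 eV. The example fluence, sensor volume and operating temperature are hypothetical.

```python
import math

ALPHA_A_PER_CM = 3.99e-17      # assumed current-related damage constant at 20 C
E_EFF_EV = 1.21                # assumed effective energy for temperature scaling
K_B_EV_PER_K = 8.617333e-5     # Boltzmann constant in eV/K


def leakage_current_increase(fluence_neq_cm2, volume_cm3, alpha=ALPHA_A_PER_CM):
    """Radiation-induced leakage current (A) at the reference temperature."""
    return alpha * fluence_neq_cm2 * volume_cm3


def scale_to_temperature(current_ref, t_ref_k, t_k, e_eff=E_EFF_EV):
    """Scale a current from t_ref_k to t_k using I ~ T^2 exp(-E_eff / (2 k_B T))."""
    exponent = -e_eff / (2.0 * K_B_EV_PER_K) * (1.0 / t_k - 1.0 / t_ref_k)
    return current_ref * (t_k / t_ref_k) ** 2 * math.exp(exponent)


# Hypothetical example: 1e15 n_eq/cm^2 on a 10 cm x 10 cm x 300 micron sensor.
fluence = 1.0e15
volume = 10.0 * 10.0 * 0.03
current_20c = leakage_current_increase(fluence, volume)
current_cold = scale_to_temperature(current_20c, 293.15, 248.15)  # at -25 C

print(f"leakage at 20 C: {current_20c:.4f} A, at -25 C: {current_cold * 1e3:.2f} mA")
```

The strong temperature dependence is why the predicted currents feed directly into cooling and power requirements as well as detector performance.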

Optimization, parallelization, frameworks and interfaces

- Performant parallelized code for Grid usage
  - AthenaMP on the Grid (August 2011)
  - Parallelized analysis study (April 2012)
  - Optimized parallelized code release (March 2013)


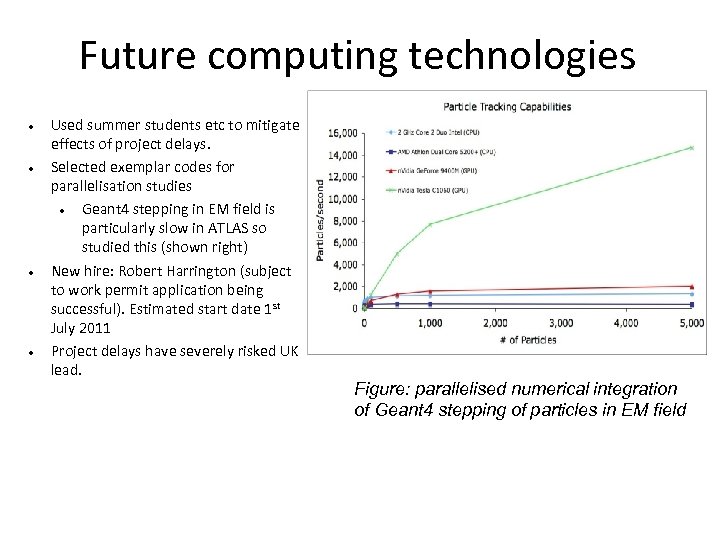

Future computing technologies

- Used summer students etc. to mitigate effects of project delays
- Selected exemplar codes for parallelisation studies
- Geant4 stepping in the EM field is particularly slow in ATLAS, so this was studied (shown right)
- New hire: Robert Harrington (subject to work permit application being successful). Estimated start date 1st July 2011
- Project delays have put the UK lead at severe risk

Figure: parallelised numerical integration of Geant4 stepping of particles in EM field

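
Field stepping parallelises well because each particle's trajectory is integrated independently of the others. The sketch below illustrates that idea only: it is not Geant4 code. It assumes a non-relativistic particle in a uniform magnetic field, arbitrary units, and a classical fourth-order Runge-Kutta step; all function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def deriv(state, qm, b_field):
    """d/dt of (x, y, z, vx, vy, vz) under the Lorentz force (q/m) v x B."""
    v = state[3:]
    a = cross(v, b_field)
    return (v[0], v[1], v[2], qm * a[0], qm * a[1], qm * a[2])


def rk4_step(state, h, qm, b_field):
    """One classical fourth-order Runge-Kutta step of size h."""
    def shifted(k, f):
        return tuple(s + f * ki for s, ki in zip(state, k))
    k1 = deriv(state, qm, b_field)
    k2 = deriv(shifted(k1, h / 2), qm, b_field)
    k3 = deriv(shifted(k2, h / 2), qm, b_field)
    k4 = deriv(shifted(k3, h), qm, b_field)
    return tuple(s + h / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def propagate(state, n_steps=1000, h=1e-3, qm=1.0, b_field=(0.0, 0.0, 1.0)):
    """Integrate one particle for n_steps steps."""
    for _ in range(n_steps):
        state = rk4_step(state, h, qm, b_field)
    return state


def propagate_all(states, workers=4):
    """Propagate independent particles concurrently.

    Threads only show the structure here: in CPython they give no real
    speed-up for this workload. On a GPU each particle would map to a thread.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(propagate, states))


particles = [(0.0, 0.0, 0.0, 1.0, 0.0, 0.5), (0.0, 0.0, 0.0, 0.0, 2.0, -1.0)]
final_states = propagate_all(particles)
```

Because no particle reads another particle's state, the same loop body can be handed to whatever parallel back end is available without changing the integrator.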

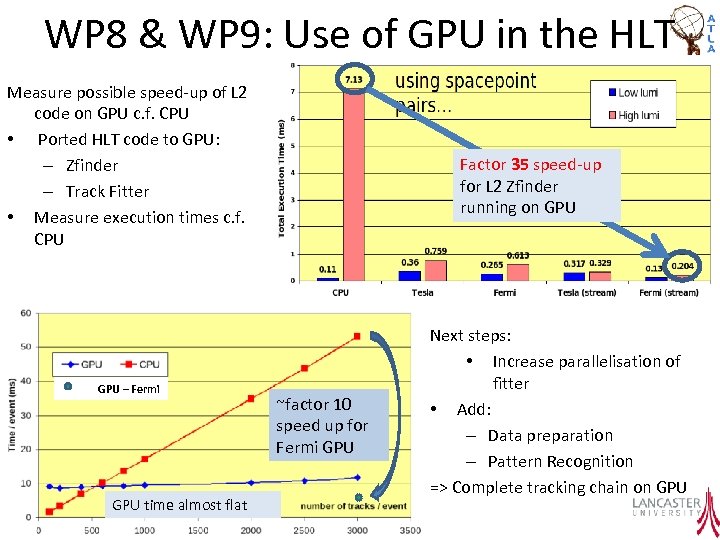

WP8 & WP9: Use of GPU in the HLT

Measure possible speed-up of L2 code on GPU cf. CPU

- Ported HLT code to GPU:
  - Zfinder
  - Track Fitter
- Measure execution times cf. CPU
  - Fermi GPU time almost flat
  - Factor 35 speed-up for L2 Zfinder running on GPU
  - ~Factor 10 speed-up for Fermi GPU

Next steps:

- Increase parallelisation of fitter
- Add:
  - Data preparation
  - Pattern recognition
- => Complete tracking chain on GPU

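
The slides do not describe the Zfinder algorithm itself. As a simplified illustration of why this kind of vertex finder suits a GPU, the sketch below assumes the vertex z is estimated by extrapolating pairs of space points to the beam line and taking the peak of a histogram. It is not the ATLAS L2 code, and the function name and binning are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def find_vertex_z(space_points, bin_width=1.0):
    """Estimate the vertex z (mm) from (r, z) space points.

    Every pair of points at different radii is extrapolated as a straight
    line to r = 0. Pairs from the same track agree on z, so they pile up in
    one histogram bin. Each pair is independent, which is what makes the
    loop easy to spread over many GPU threads.
    """
    histogram = Counter()
    for (r1, z1), (r2, z2) in combinations(space_points, 2):
        if r1 == r2:
            continue  # same layer: no lever arm for the extrapolation
        z0 = z1 - r1 * (z2 - z1) / (r2 - r1)
        histogram[math.floor(z0 / bin_width)] += 1
    if not histogram:
        return None
    peak_bin, _ = max(histogram.items(), key=lambda item: item[1])
    return (peak_bin + 0.5) * bin_width  # centre of the most populated bin


# Toy event: three straight tracks from z = 12.3 mm, hits on three layers.
layers = (50.0, 90.0, 120.0)
slopes = (0.5, -1.2, 2.0)
hits = [(r, 12.3 + r * s) for s in slopes for r in layers]
vertex_z = find_vertex_z(hits)
print(f"estimated vertex z: {vertex_z} mm")
```

In the toy event, pairs taken from different tracks extrapolate far from the true vertex and scatter across many bins, so the peak is formed by the same-track pairs.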

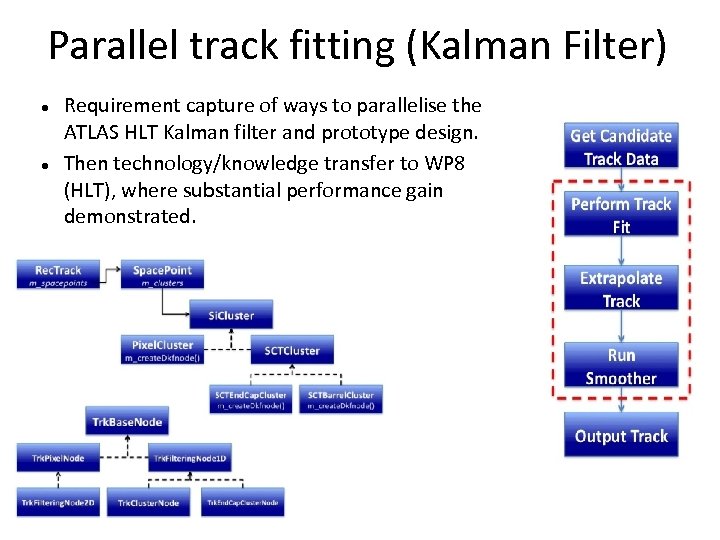

Parallel track fitting (Kalman Filter)

Requirements capture of ways to parallelise the ATLAS HLT Kalman filter, plus a prototype design. This was followed by technology/knowledge transfer to WP8 (HLT), where a substantial performance gain was demonstrated.

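
For readers unfamiliar with the technique, the sketch below shows a minimal Kalman filter for a straight-line track with state (position, slope). It is a toy model under stated assumptions, not the ATLAS HLT fitter: there is no magnetic field, material or process noise, and the function name and default resolution are hypothetical. The most direct form of parallelism is across tracks, since each fit depends only on its own measurements.

```python
def kalman_fit(measurements, sigma=0.1):
    """Fit a straight line y = y0 + slope * x with a Kalman filter.

    measurements: list of (x, y_measured) ordered in x.
    Returns the filtered (y, slope) at the last measurement.
    """
    x_prev, y = measurements[0]
    slope = 0.0
    # Covariance [[p00, p01], [p01, p11]]; slope is initially unconstrained.
    p00, p01, p11 = sigma ** 2, 0.0, 1.0e6
    r = sigma ** 2  # measurement variance

    for x, meas in measurements[1:]:
        d = x - x_prev
        # Prediction: transport state and covariance with F = [[1, d], [0, 1]].
        y = y + d * slope
        p00 = p00 + 2.0 * d * p01 + d * d * p11
        p01 = p01 + d * p11
        # Update with measurement model H = [1, 0].
        s = p00 + r
        k0, k1 = p00 / s, p01 / s
        residual = meas - y
        y += k0 * residual
        slope += k1 * residual
        p00, p01, p11 = (1.0 - k0) * p00, (1.0 - k0) * p01, p11 - k1 * p01
        x_prev = x
    return y, slope


# Each track is independent, so a list of tracks can be fitted in parallel.
tracks = [
    [(float(x), 2.0 + 0.5 * x) for x in range(5)],
    [(float(x), -1.0 - 0.25 * x) for x in range(5)],
]
fits = [kalman_fit(track) for track in tracks]
```

The list comprehension at the end is the part a parallel implementation would distribute, one track per thread or work item.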

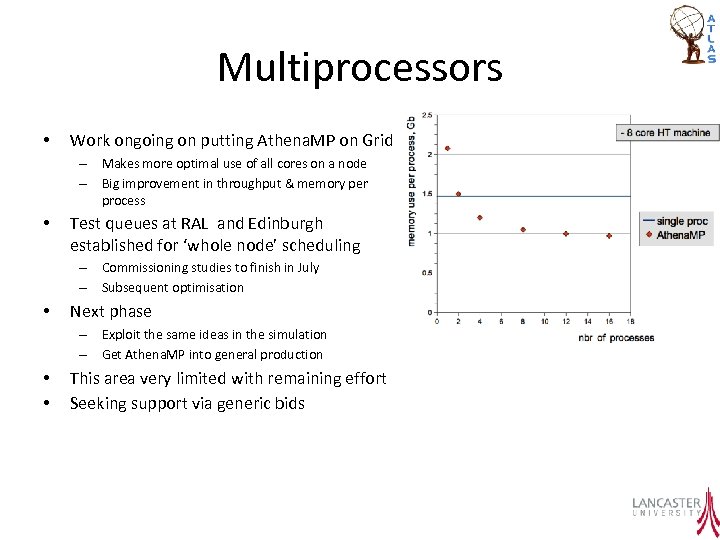

Multiprocessors

- Work ongoing on putting AthenaMP on the Grid
  - Makes more optimal use of all cores on a node
  - Big improvement in throughput & memory per process
- Test queues at RAL and Edinburgh established for ‘whole node’ scheduling
  - Commissioning studies to finish in July
  - Subsequent optimisation
- Next phase
  - Exploit the same ideas in the simulation
  - Get AthenaMP into general production
- This area very limited with remaining effort
- Seeking support via generic bids


Summary

- The main focus is on simulations of various sorts
  - This supports all other WPs
  - We need to be careful to distinguish simulation from performance studies using simulation!
- Good progress despite hiring/retention issues
- Future technologies etc severely reduced
  - Attempting to continue this work, partly by other means
