- Number of slides: 79

Words and Pictures Rahul Raguram

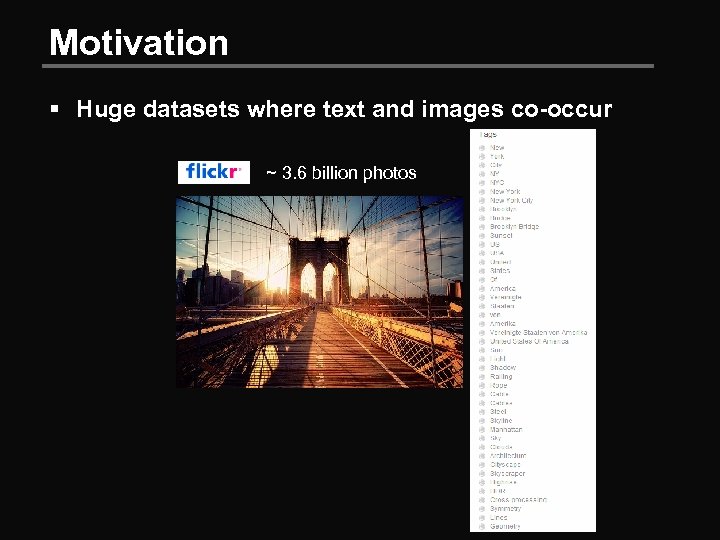

Motivation
- Huge datasets where text and images co-occur
  - ~3.6 billion photos

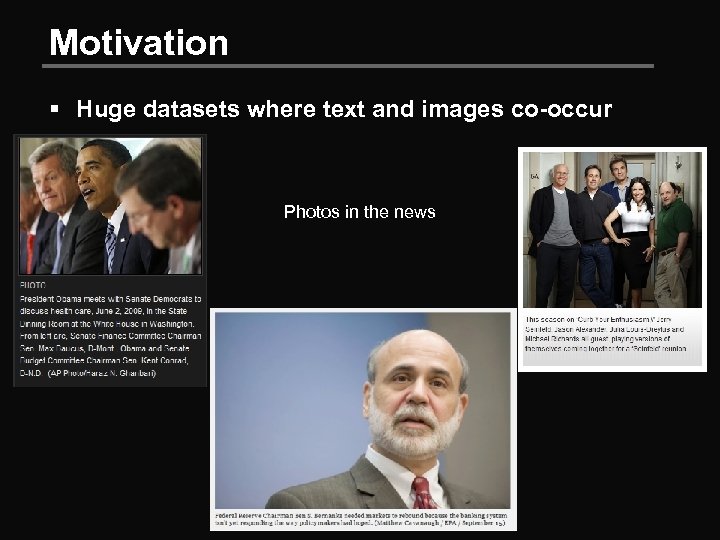

Motivation
- Huge datasets where text and images co-occur
  - Photos in the news

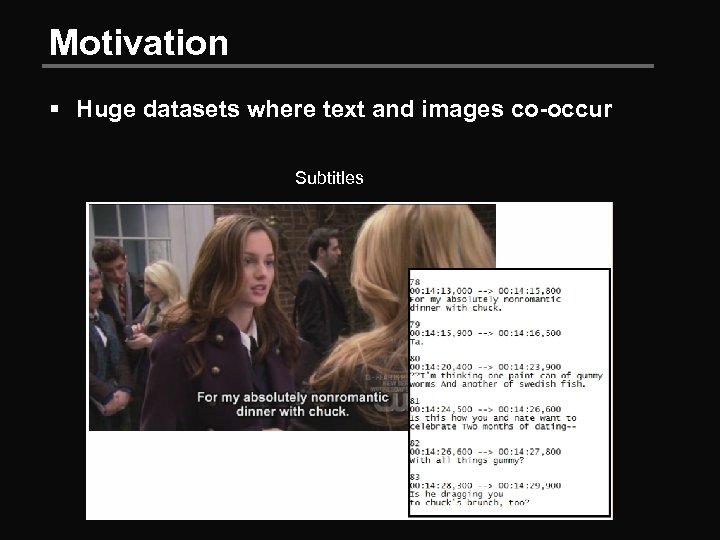

Motivation
- Huge datasets where text and images co-occur
  - Subtitles

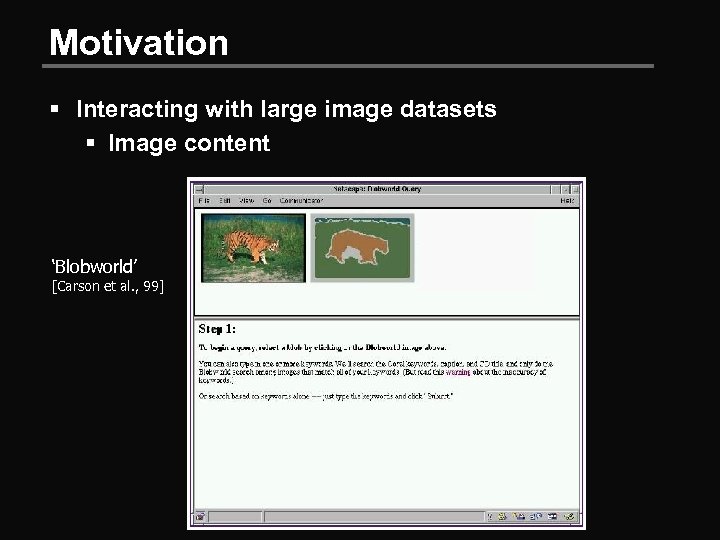

Motivation
- Interacting with large image datasets
  - Image content: ‘Blobworld’ [Carson et al., 99]

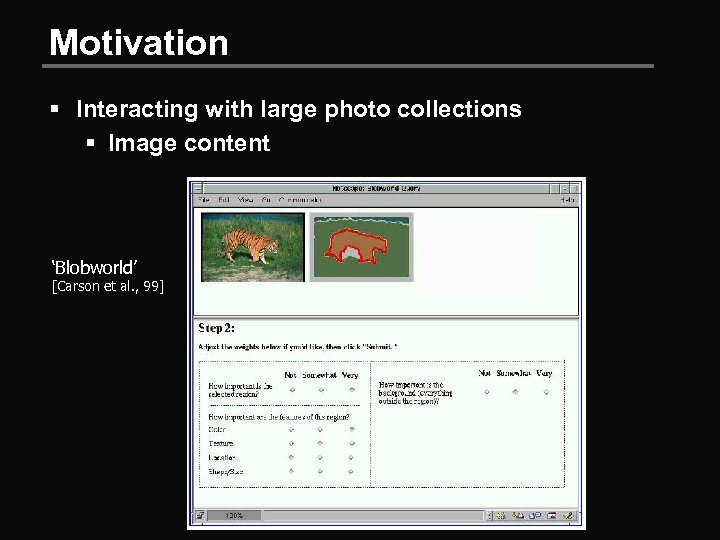

Motivation § Interacting with large photo collections § Image content ‘Blobworld’ [Carson et al. , 99]

Motivation § Interacting with large photo collections § Image content ‘Blobworld’ [Carson et al. , 99]

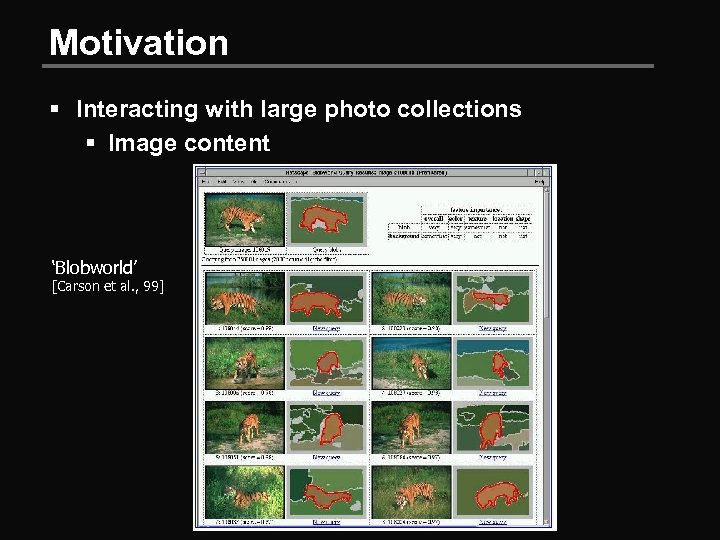

Motivation § Interacting with large photo collections § Image content ‘Blobworld’ [Carson et al. , 99]

Motivation § Interacting with large photo collections § Image content ‘Blobworld’ [Carson et al. , 99]

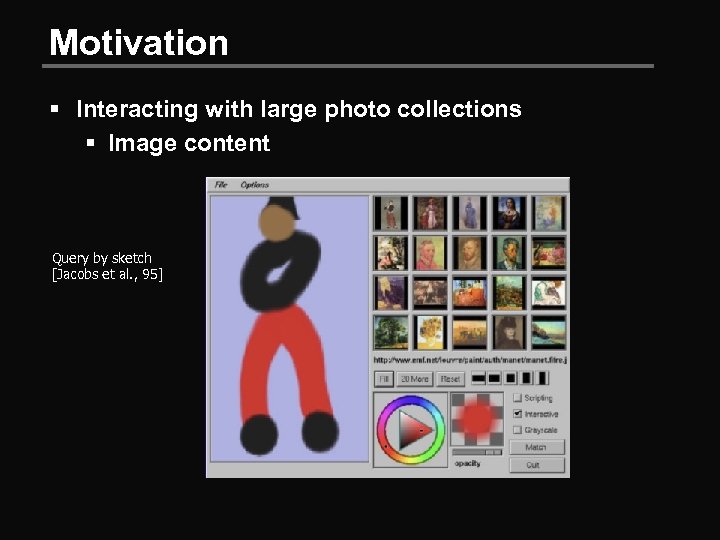

Motivation
- Interacting with large photo collections
  - Image content: query by sketch [Jacobs et al., 95]

Motivation
- Interacting with large photo collections
  - Large disparity between user needs and what technology provides (Armitage and Enser 1997, Enser 1993, Enser 1995, Markkula and Sormunen 2000)
  - Queries based on image histograms, texture, overall appearance, etc. make up a vanishingly small share of what users actually ask for

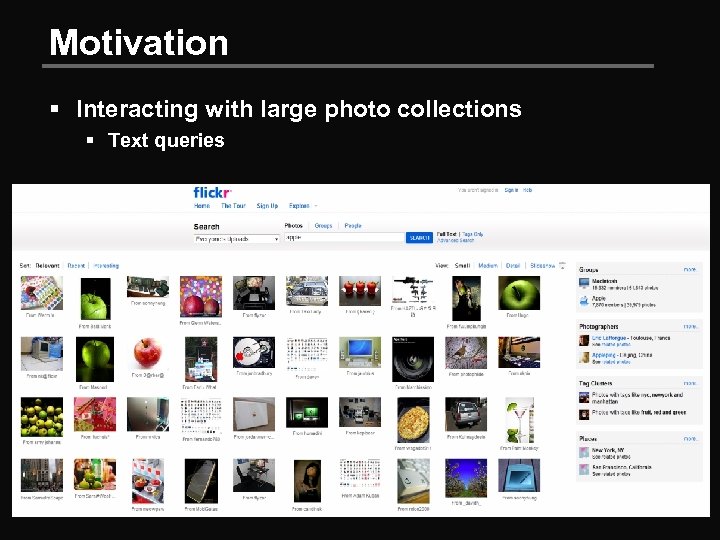

Motivation
- Interacting with large photo collections
  - Text queries

Motivation
- Text and images may be separately ambiguous; jointly they tend not to be
  - Image descriptions often leave out what is visually obvious (e.g. the colour of a flower)
  - ...but often include properties that are difficult to infer using vision (e.g. the species of the flower)

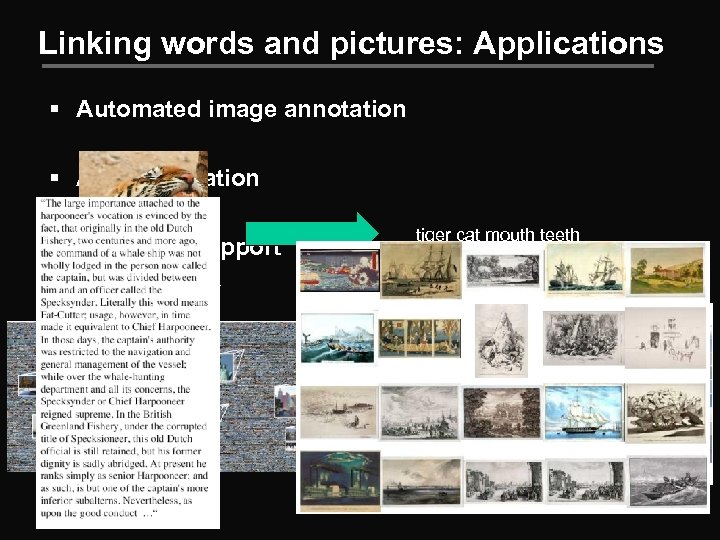

Linking words and pictures: Applications
- Automated image annotation
- Auto illustration
- Browsing support

Figure labels: “statue of liberty”; tiger, cat, mouth, teeth

Learning the Semantics of Words and Pictures
Barnard and Forsyth, ICCV 2001

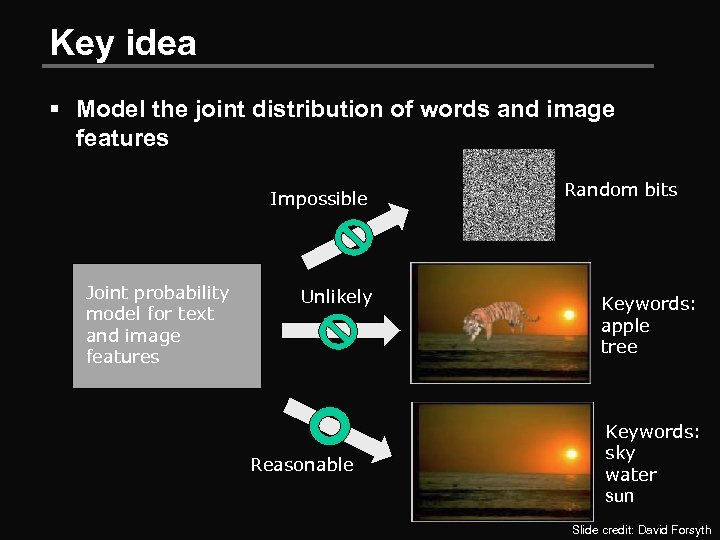

Key idea
- Model the joint distribution of words and image features
- Joint probability model for text and image features

Figure labels: Impossible, Unlikely, Reasonable. Example inputs: random bits; keywords "apple tree"; keywords "sky water sun".

Slide credit: David Forsyth

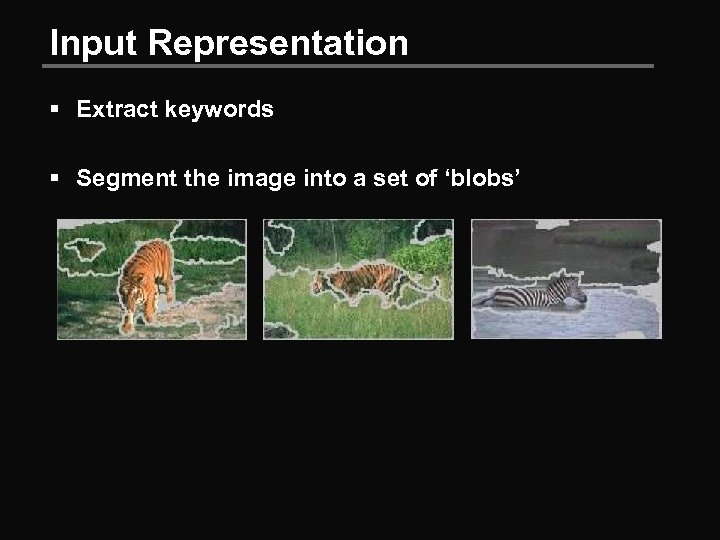

Input Representation
- Extract keywords
- Segment the image into a set of ‘blobs’

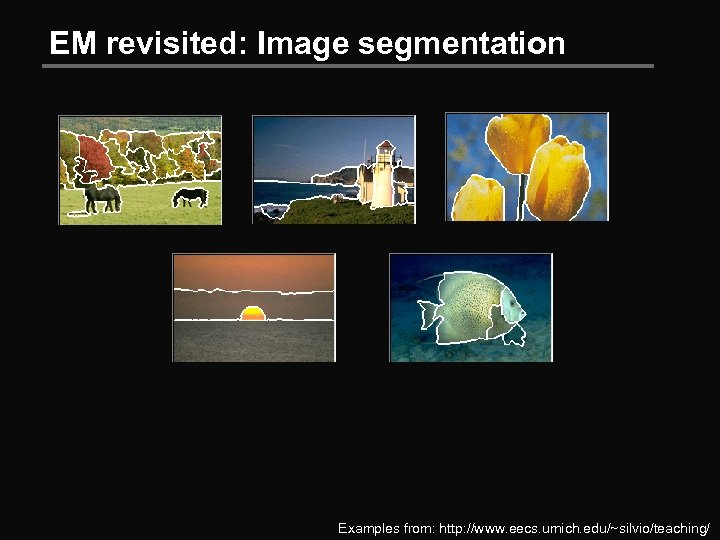

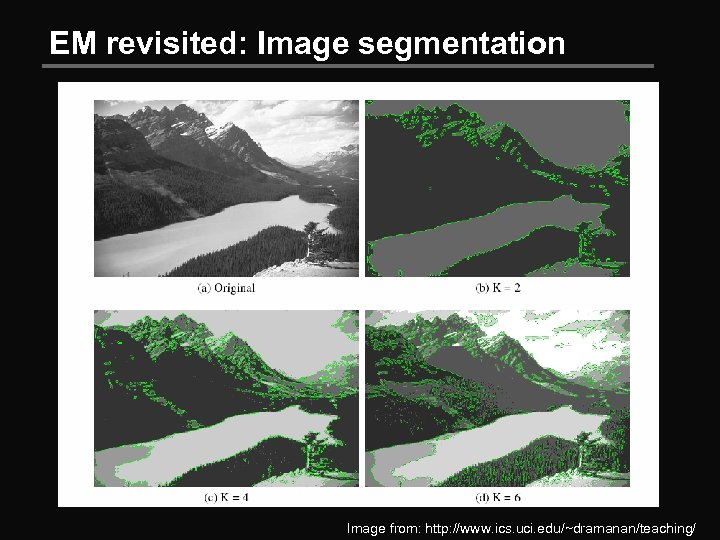

EM revisited: Image segmentation

Examples from: http://www.eecs.umich.edu/~silvio/teaching/

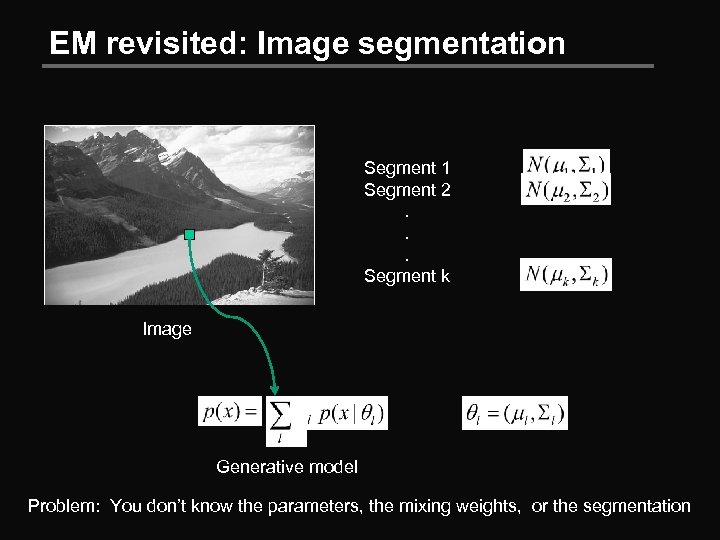

EM revisited: Image segmentation
- Generative model: the image is generated from Segment 1, Segment 2, ..., Segment k
- Problem: you don’t know the parameters, the mixing weights, or the segmentation

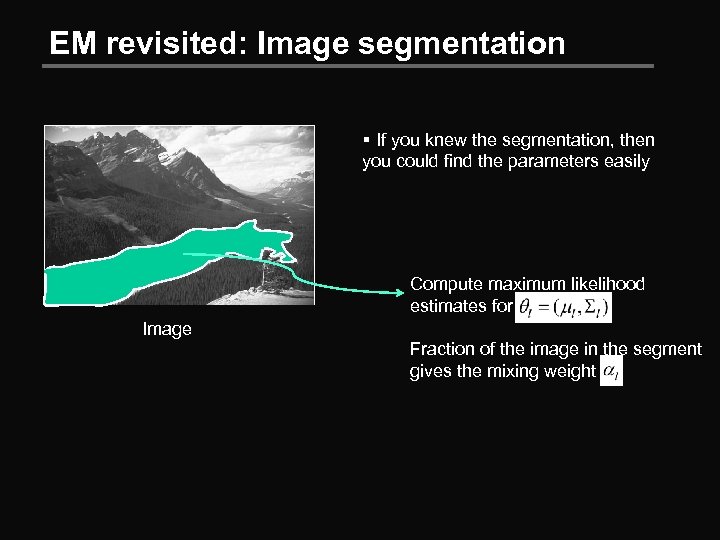

EM revisited: Image segmentation
- If you knew the segmentation, then you could find the parameters easily
  - Compute maximum likelihood estimates for the parameters of each segment
  - The fraction of the image in the segment gives the mixing weight

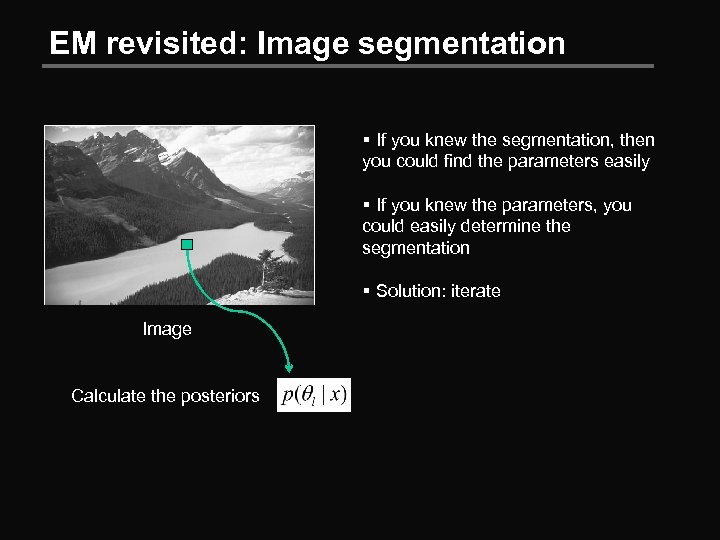

EM revisited: Image segmentation
- If you knew the segmentation, then you could find the parameters easily
- If you knew the parameters, you could easily determine the segmentation
  - Calculate the posteriors
- Solution: iterate
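The alternation between the two "easy" problems can be sketched on a toy case. The snippet below is a minimal illustration, assuming each pixel is described by a single intensity value and each segment by a 1-D Gaussian; the function name `em_segment`, the initial means, and the toy intensities are illustrative choices, not taken from the slides.

```python
import math

def gaussian(x, mean, var):
    """Density of a 1-D Gaussian."""
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def em_segment(pixels, means, n_iter=50):
    """Fit a k-segment 1-D Gaussian mixture to pixel intensities with EM."""
    k = len(means)
    means = list(means)
    variances = [1.0] * k          # assumption: unit initial variances
    weights = [1.0 / k] * k        # assumption: uniform initial mixing weights
    for _ in range(n_iter):
        # E-step: posterior probability of each segment for each pixel
        post = []
        for x in pixels:
            p = [weights[j] * gaussian(x, means[j], variances[j]) for j in range(k)]
            total = sum(p) or 1e-300
            post.append([pj / total for pj in p])
        # M-step: maximum likelihood estimates from the soft segmentation
        for j in range(k):
            nj = sum(r[j] for r in post) or 1e-300
            weights[j] = nj / len(pixels)  # fraction of the image in segment j
            means[j] = sum(r[j] * x for r, x in zip(post, pixels)) / nj
            var = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(post, pixels)) / nj
            variances[j] = max(var, 1e-6)  # floor to avoid a collapsed Gaussian
    # Hard segmentation: most probable segment for each pixel
    labels = [max(range(k), key=lambda j: r[j]) for r in post]
    return means, weights, labels

pixels = [0.9, 1.0, 1.1, 1.2, 4.8, 5.0, 5.1, 5.3]  # toy intensities
means, weights, labels = em_segment(pixels, means=[0.0, 6.0])
```

On real images the per-pixel feature would be a vector (colour, texture, position) rather than one number, and the result depends on the initialization.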

EM revisited: Image segmentation

Image from: http://www.ics.uci.edu/~dramanan/teaching/

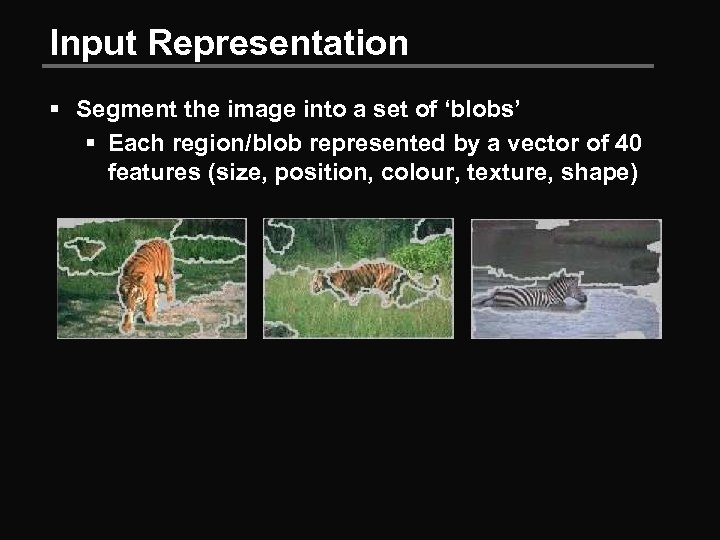

Input Representation
- Segment the image into a set of ‘blobs’
- Each region/blob is represented by a vector of 40 features (size, position, colour, texture, shape)

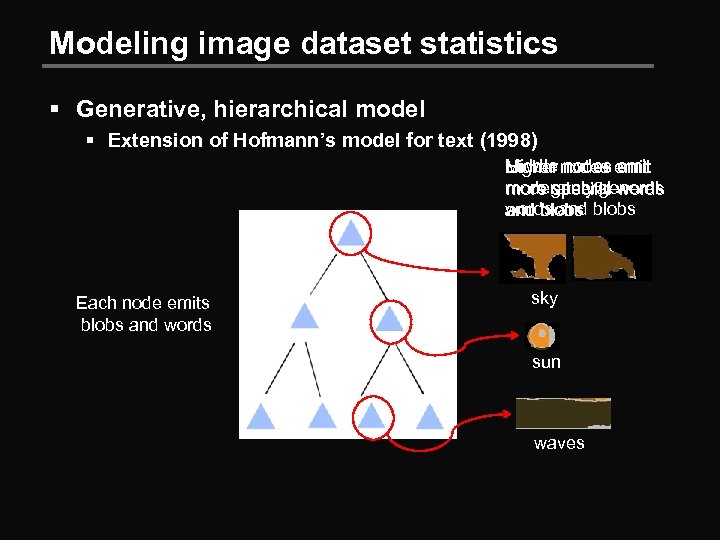

Modeling image dataset statistics
- Generative, hierarchical model
- Extension of Hofmann’s model for text (1998)
- Each node emits blobs and words
  - Higher nodes emit more general words and blobs
  - Middle nodes emit moderately general words and blobs
  - Lower nodes emit more specific words and blobs

Figure labels: sky, sun, waves

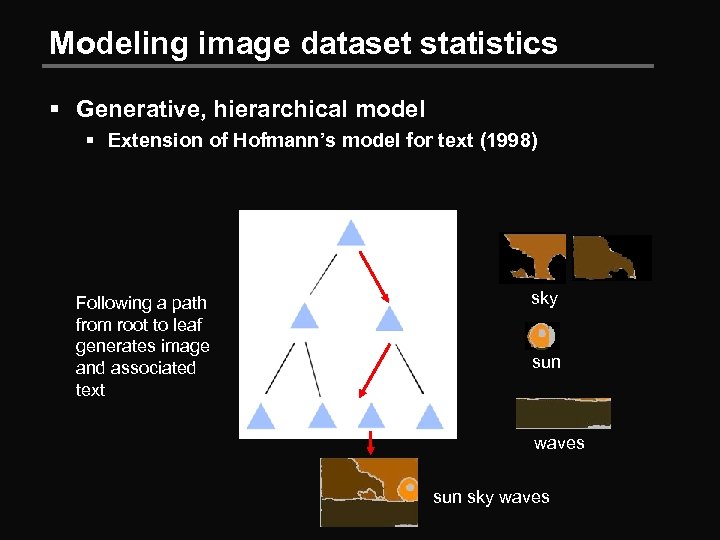

Modeling image dataset statistics
- Generative, hierarchical model
- Extension of Hofmann’s model for text (1998)
- Following a path from root to leaf generates an image and its associated text

Figure labels: sky, sun, waves

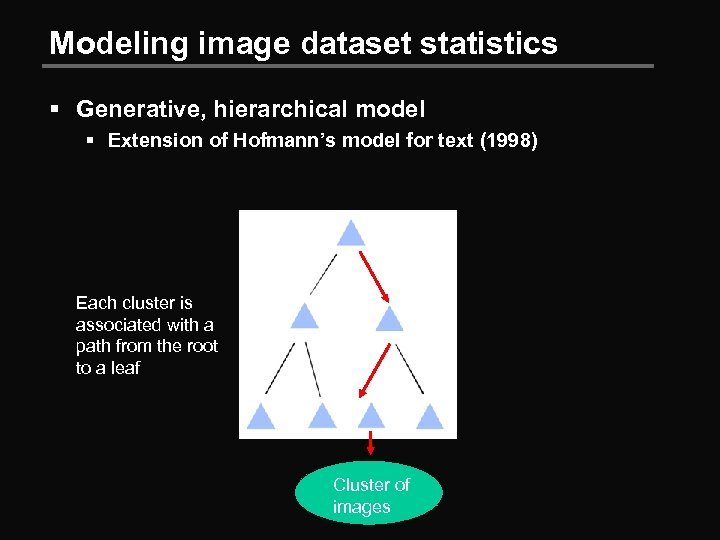

Modeling image dataset statistics
- Generative, hierarchical model
- Extension of Hofmann’s model for text (1998)
- Each cluster (of images) is associated with a path from the root to a leaf

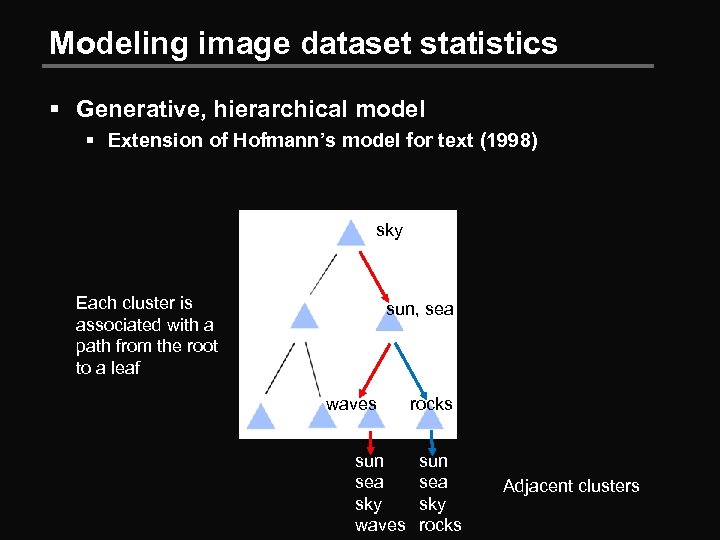

Modeling image dataset statistics
- Generative, hierarchical model
- Extension of Hofmann’s model for text (1998)
- Each cluster is associated with a path from the root to a leaf
- Adjacent clusters

Figure labels: sky; sun, sea; waves; rocks. Word sets shown for the two adjacent clusters: "sun sea sky waves" and "sun sea sky rocks".

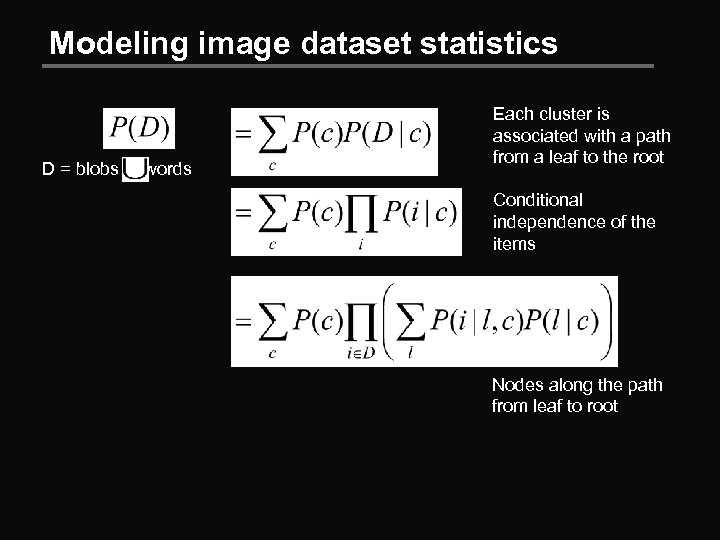

Modeling image dataset statistics
- D: the set of observed items (blobs and words)
- Each cluster is associated with a path from a leaf to the root
- Annotations on the likelihood formula (shown in the slide image): conditional independence of the items; nodes along the path from leaf to root
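The likelihood formula itself is not preserved in the slide text. The sketch below assumes it has the form P(D) = Σ_c P(c) Π_{i∈D} Σ_l P(i | l, c) P(l | c), which is one reading of the annotations above (items conditionally independent given the cluster, with a sum over the nodes on the cluster's path). All probability tables are made-up toy values, and blobs are treated as discrete tokens for simplicity, whereas the slides describe them as continuous feature vectors.

```python
def document_likelihood(items, cluster_prior, level_given_cluster, emit):
    """Assumed form: P(D) = sum_c P(c) * prod_{i in D} sum_l P(i | l, c) * P(l | c)."""
    total = 0.0
    for c, p_c in cluster_prior.items():
        prod = 1.0
        for item in items:  # conditional independence of the items given c
            prod *= sum(p_l * emit[(c, l)].get(item, 0.0)
                        for l, p_l in level_given_cluster[c].items())
        total += p_c * prod
    return total

# Toy two-level tree: a shared root node and one leaf per cluster (hypothetical values).
root = {"sky": 0.8, "waves": 0.1, "tree": 0.1}
emit = {
    ("beach", "root"): root, ("beach", "leaf"): {"waves": 0.9, "sky": 0.1},
    ("forest", "root"): root, ("forest", "leaf"): {"tree": 0.9, "sky": 0.1},
}
cluster_prior = {"beach": 0.5, "forest": 0.5}
level_given_cluster = {c: {"root": 0.5, "leaf": 0.5} for c in cluster_prior}

p = document_likelihood(["sky", "waves"], cluster_prior, level_given_cluster, emit)
```

The shared root is what lets general items such as "sky" be explained once for every cluster, while leaf nodes carry the cluster-specific items.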

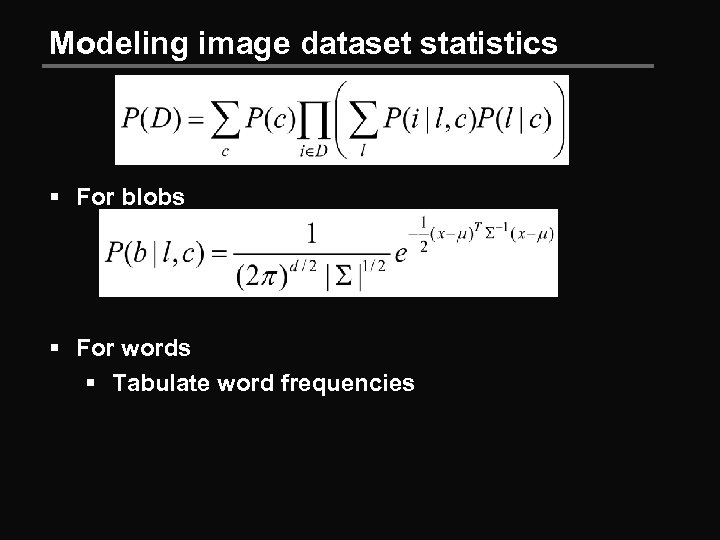

Modeling image dataset statistics
- For blobs
- For words
  - Tabulate word frequencies

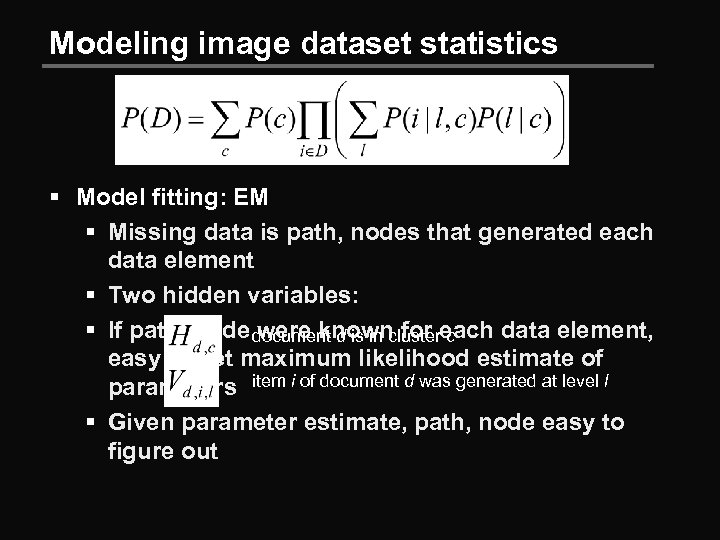

Modeling image dataset statistics
- Model fitting: EM
- Missing data is the path and the nodes that generated each data element
- Two hidden variables:
  - document d is in cluster c
  - item i of document d was generated at level l
- If the path and node were known for each data element, it would be easy to get a maximum likelihood estimate of the parameters
- Given a parameter estimate, the path and node are easy to figure out

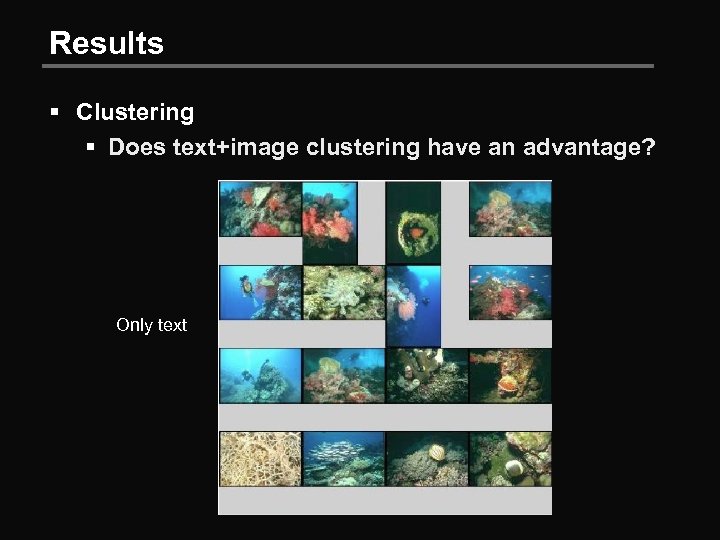

Results
- Clustering
  - Does text+image clustering have an advantage?
  - Only text

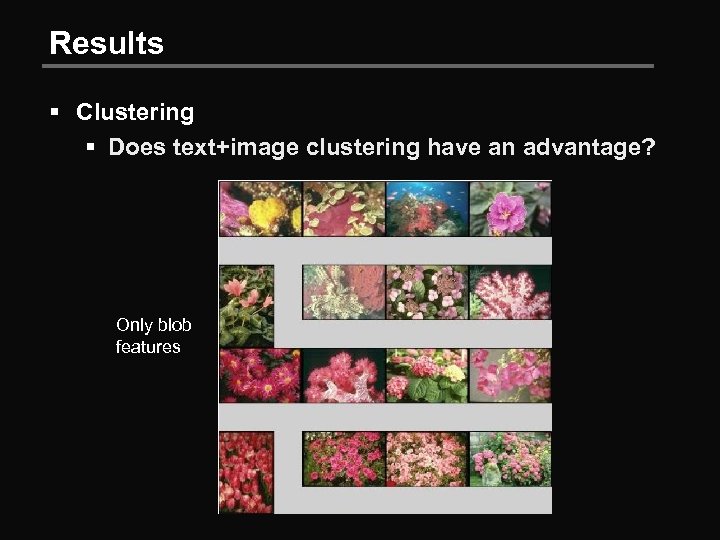

Results
- Clustering
  - Does text+image clustering have an advantage?
  - Only blob features

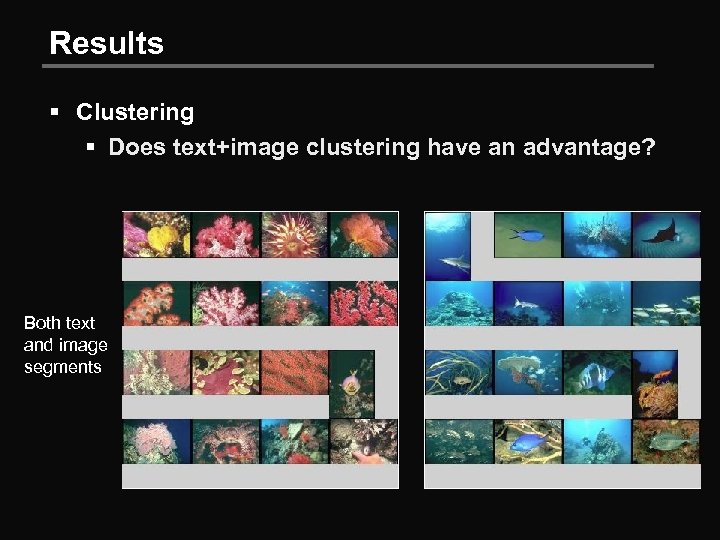

Results
- Clustering
  - Does text+image clustering have an advantage?
  - Both text and image segments

Results
- Clustering
  - Does text+image clustering have an advantage?
  - User study:
    - Generate 64 clusters for 3000 images
    - Generate 64 random clusters from the same images
    - Present random cluster to user, ask to rate coherence (yes/no)
    - 94% accuracy

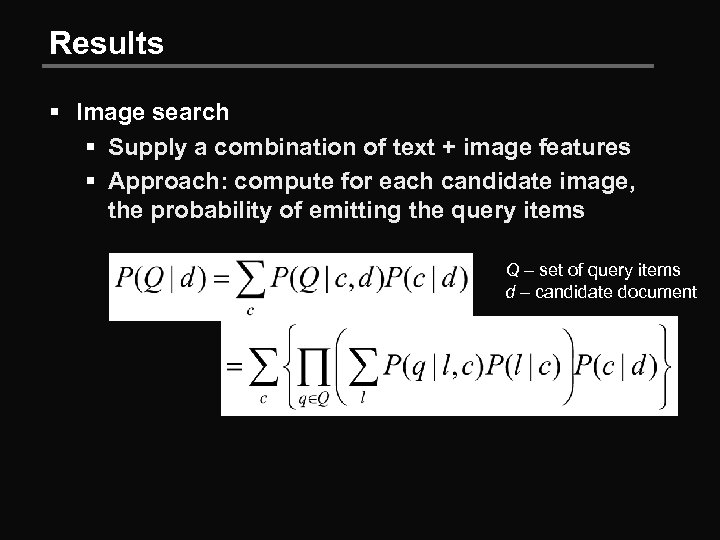

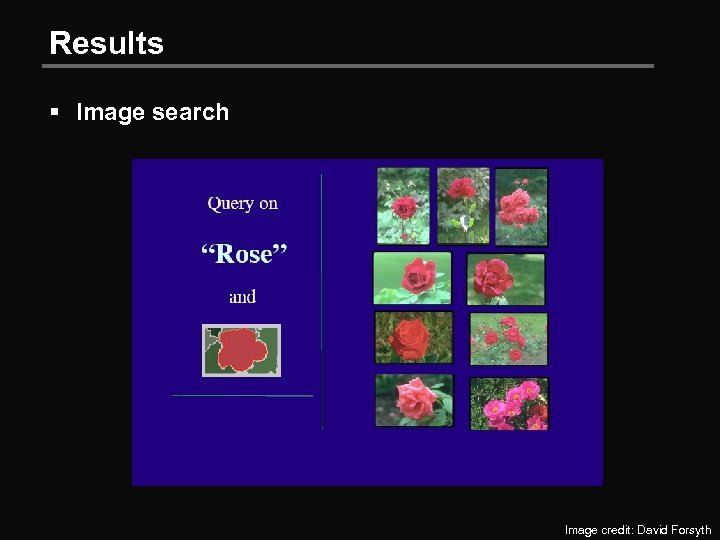

Results
- Image search
  - Supply a combination of text + image features
  - Approach: for each candidate image, compute the probability of emitting the query items
    - Q: set of query items
    - d: candidate document

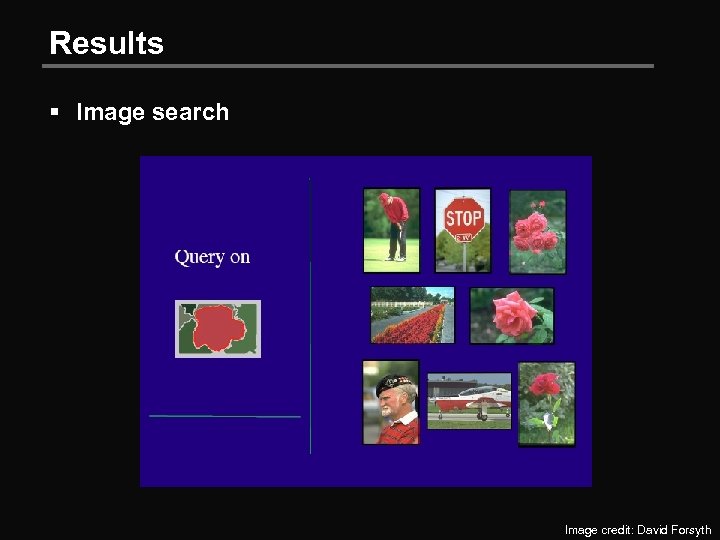

Results
- Image search

Image credit: David Forsyth

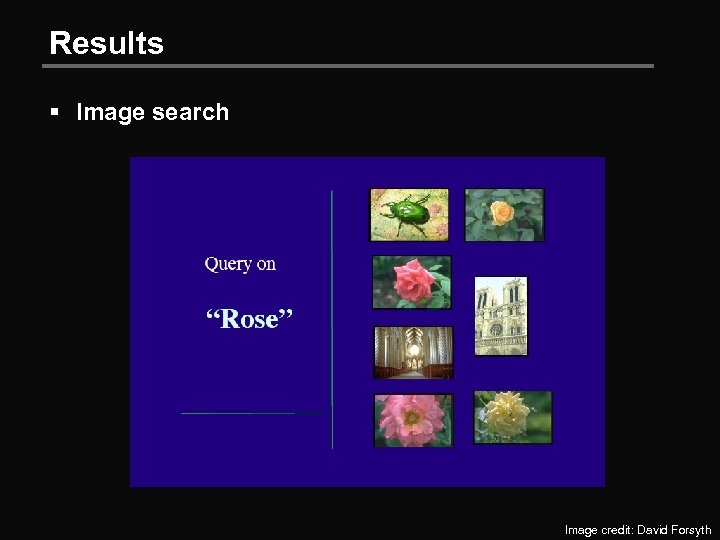

Results
- Image search

Image credit: David Forsyth

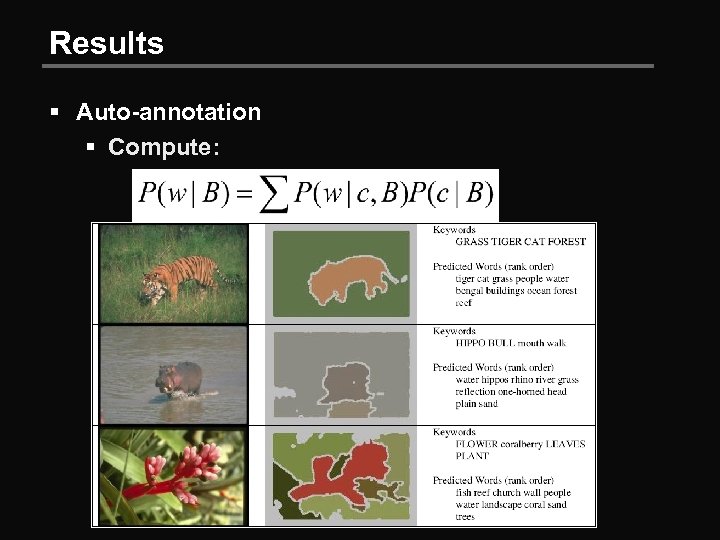

Results
- Auto-annotation
  - Compute:

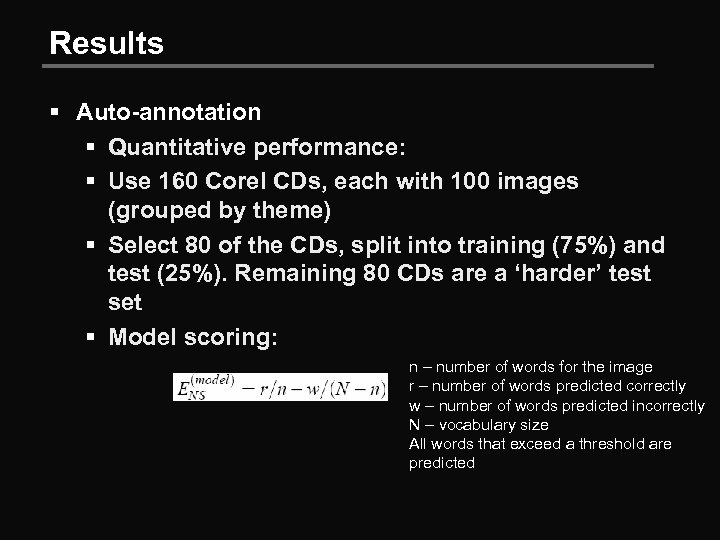

Results
- Auto-annotation
  - Quantitative performance:
    - Use 160 Corel CDs, each with 100 images (grouped by theme)
    - Select 80 of the CDs, split into training (75%) and test (25%). The remaining 80 CDs are a ‘harder’ test set
  - Model scoring:
    - n: number of words for the image
    - r: number of words predicted correctly
    - w: number of words predicted incorrectly
    - N: vocabulary size
    - All words that exceed a threshold are predicted
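The scoring formula is not preserved in the slide text. As a sketch, the snippet below assumes the score is r/n − w/(N − n), a form chosen because it uses exactly the four symbols defined above and equals 1 for a perfect prediction and 0 both for predicting nothing and for predicting the whole vocabulary. The function name `annotation_score` and the example words are illustrative.

```python
def annotation_score(true_words, predicted_words, vocab_size):
    """Assumed score: r/n - w/(N - n); not confirmed by the slide text."""
    true_words, predicted_words = set(true_words), set(predicted_words)
    n = len(true_words)                    # words attached to the image
    r = len(true_words & predicted_words)  # predicted correctly
    w = len(predicted_words - true_words)  # predicted incorrectly
    return r / n - w / (vocab_size - n)

score = annotation_score({"sky", "sun", "sea"}, {"sky", "sun", "tree"}, vocab_size=103)
```

Here two of three true words are recovered and one of the 100 remaining vocabulary words is wrongly predicted, so the score is 2/3 − 1/100.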

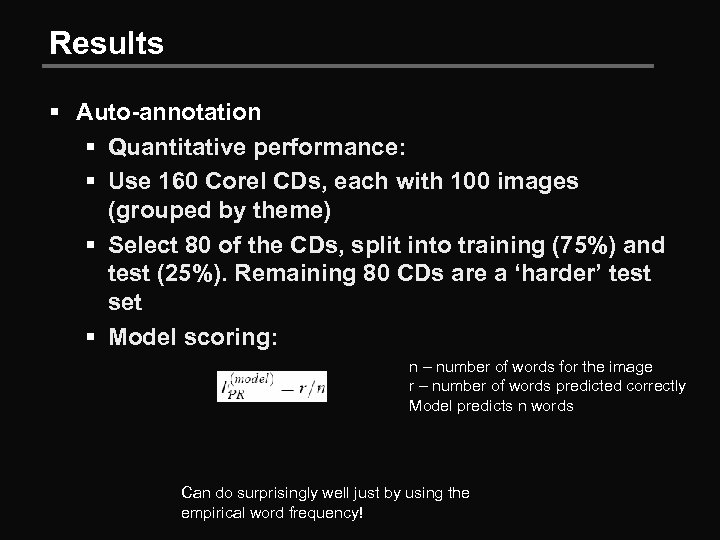

Results
- Auto-annotation
  - Quantitative performance:
    - Use 160 Corel CDs, each with 100 images (grouped by theme)
    - Select 80 of the CDs, split into training (75%) and test (25%). The remaining 80 CDs are a ‘harder’ test set
  - Model scoring:
    - n: number of words for the image
    - r: number of words predicted correctly
    - Model predicts n words
    - Can do surprisingly well just by using the empirical word frequency!

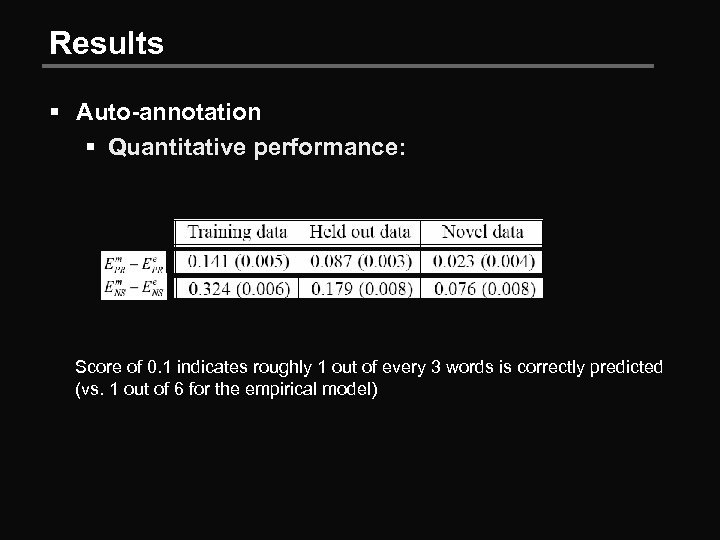

Results
- Auto-annotation
  - Quantitative performance: a score of 0.1 indicates roughly 1 out of every 3 words is correctly predicted (vs. 1 out of 6 for the empirical model)

Names and Faces in the News
Berg et al., CVPR 2004

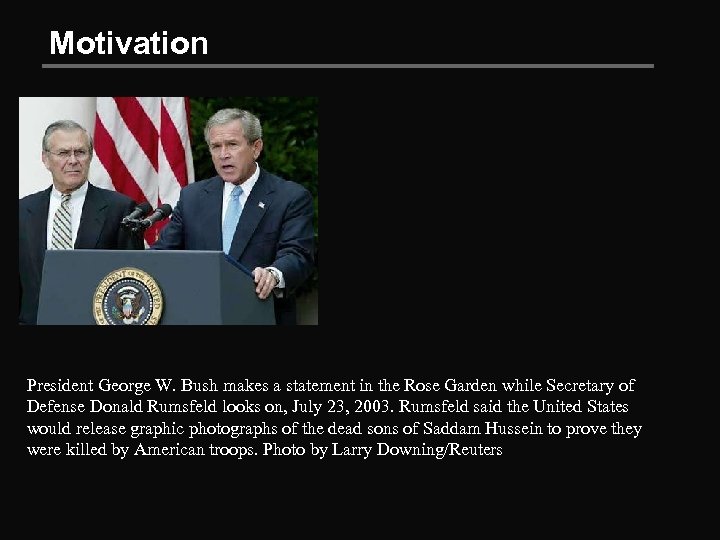

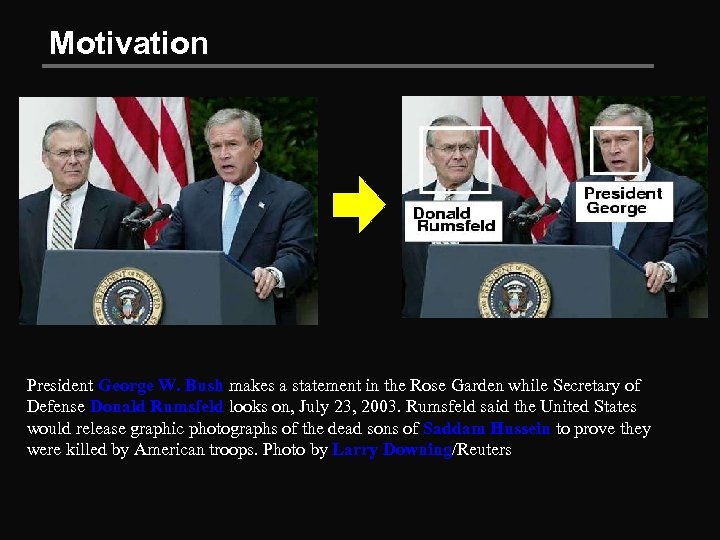

Motivation President George W. Bush makes a statement in the Rose Garden while Secretary of Defense Donald Rumsfeld looks on, July 23, 2003. Rumsfeld said the United States would release graphic photographs of the dead sons of Saddam Hussein to prove they were killed by American troops. Photo by Larry Downing/Reuters

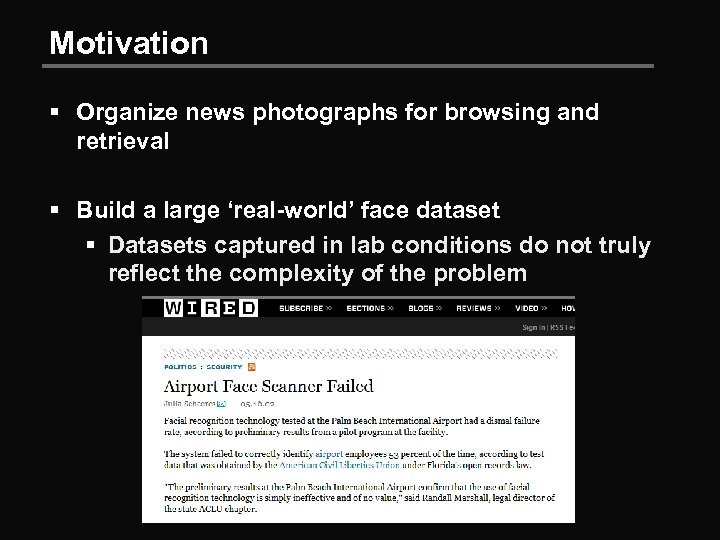

Motivation
- Organize news photographs for browsing and retrieval
- Build a large ‘real-world’ face dataset
  - Datasets captured in lab conditions do not truly reflect the complexity of the problem
  - In many traditional face datasets, it’s possible to get excellent performance by using no facial features at all (Shamir, 2008)

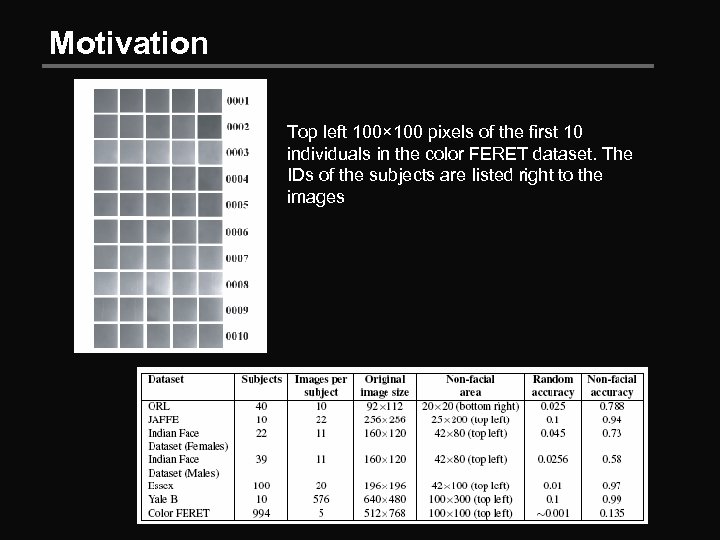

Motivation

Top-left 100×100 pixels of the first 10 individuals in the color FERET dataset. The IDs of the subjects are listed to the right of the images.

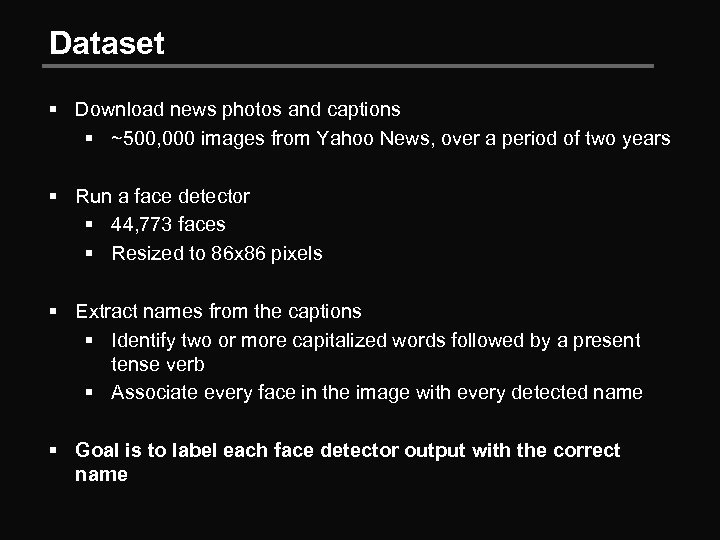

Dataset
- Download news photos and captions
  - ~500,000 images from Yahoo News, over a period of two years
- Run a face detector
  - 44,773 faces
  - Resized to 86 x 86 pixels
- Extract names from the captions
  - Identify two or more capitalized words followed by a present-tense verb
- Associate every face in the image with every detected name
- Goal is to label each face detector output with the correct name
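The name-extraction rule can be approximated with a regular expression. This is a rough sketch, not the authors' extractor: it assumes a "present-tense verb" can be approximated as a lowercase word ending in "s", and it allows initials such as "W." inside a name. The pattern name `NAME_BEFORE_VERB` and the function `extract_names` are hypothetical.

```python
import re

# Two or more capitalized words (initials like "W." allowed), followed by a
# lowercase word ending in "s" as a crude stand-in for a present-tense verb.
NAME_BEFORE_VERB = re.compile(
    r"\b((?:[A-Z][a-z]*\.?\s+)+[A-Z][a-z]*\.?)\s+([a-z]+s)\b"
)

def extract_names(caption):
    """Return the capitalized word runs that are followed by a verb-like word."""
    return [m.group(1) for m in NAME_BEFORE_VERB.finditer(caption)]

caption = ("President George W. Bush makes a statement in the Rose Garden "
           "while Secretary of Defense Donald Rumsfeld looks on, July 23, 2003.")
names = extract_names(caption)
```

On this caption the heuristic picks up titles along with names and truncates "Secretary of Defense" at the lowercase "of", which is the kind of noisy name string that the later cluster-merging step has to reconcile.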

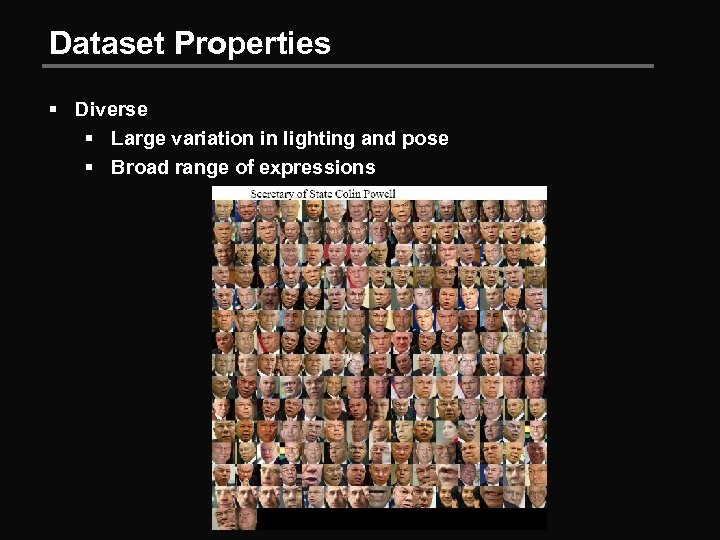

Dataset Properties
- Diverse
  - Large variation in lighting and pose
  - Broad range of expressions

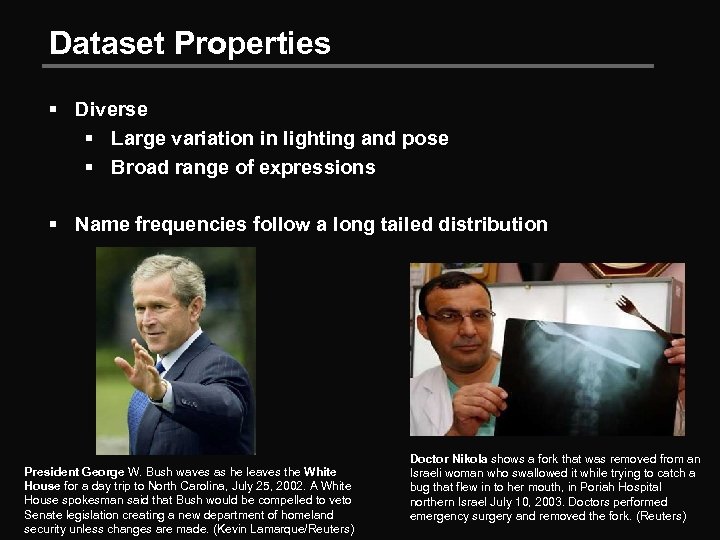

Dataset Properties
- Diverse
  - Large variation in lighting and pose
  - Broad range of expressions
- Name frequencies follow a long-tailed distribution

Example captions:

President George W. Bush waves as he leaves the White House for a day trip to North Carolina, July 25, 2002. A White House spokesman said that Bush would be compelled to veto Senate legislation creating a new department of homeland security unless changes are made. (Kevin Lamarque/Reuters)

Doctor Nikola shows a fork that was removed from an Israeli woman who swallowed it while trying to catch a bug that flew in to her mouth, in Poriah Hospital northern Israel July 10, 2003. Doctors performed emergency surgery and removed the fork. (Reuters)

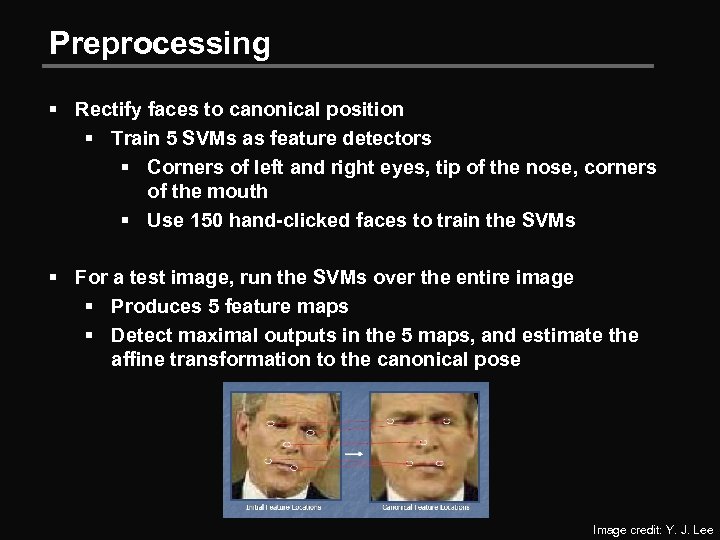

Preprocessing
- Rectify faces to canonical position
- Train 5 SVMs as feature detectors
  - Corners of left and right eyes, tip of the nose, corners of the mouth
  - Use 150 hand-clicked faces to train the SVMs
- For a test image, run the SVMs over the entire image
  - Produces 5 feature maps
  - Detect maximal outputs in the 5 maps, and estimate the affine transformation to the canonical pose

Image credit: Y. J. Lee

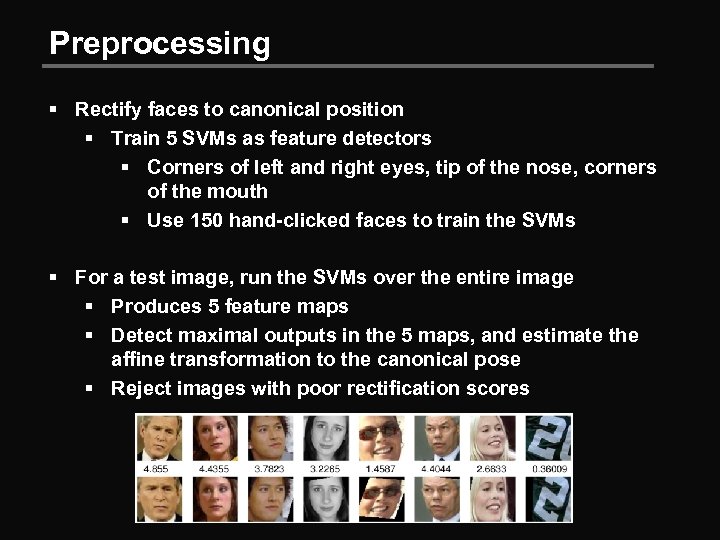

Preprocessing
- Reject images with poor rectification scores
  - This leaves 34,623 images
- Throw out images with more than 4 names
  - 27,742 faces

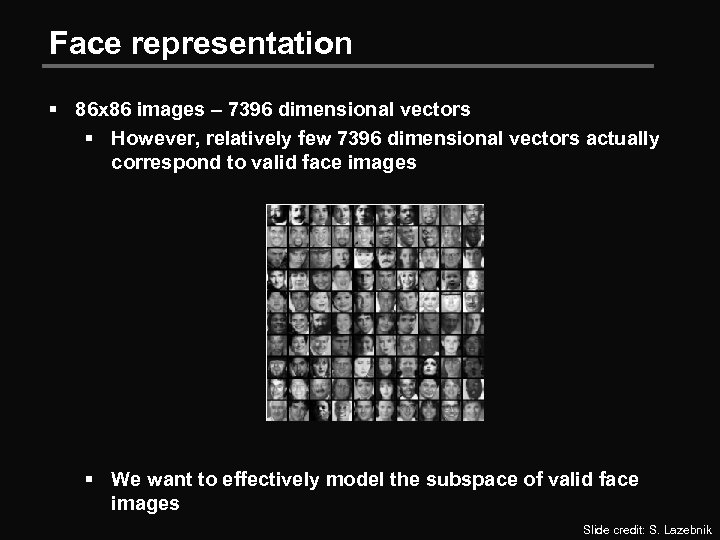

Face representation
- 86 x 86 images: 7396-dimensional vectors
- However, relatively few 7396-dimensional vectors actually correspond to valid face images
- We want to effectively model the subspace of valid face images

Slide credit: S. Lazebnik

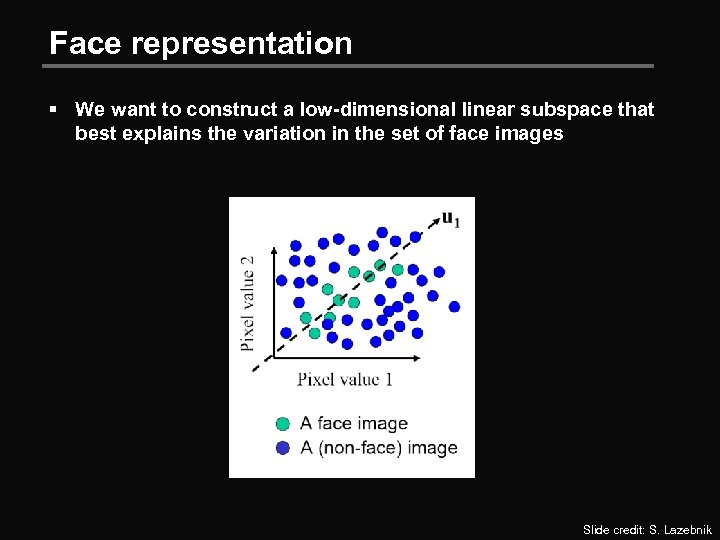

Face representation
- We want to construct a low-dimensional linear subspace that best explains the variation in the set of face images

Slide credit: S. Lazebnik

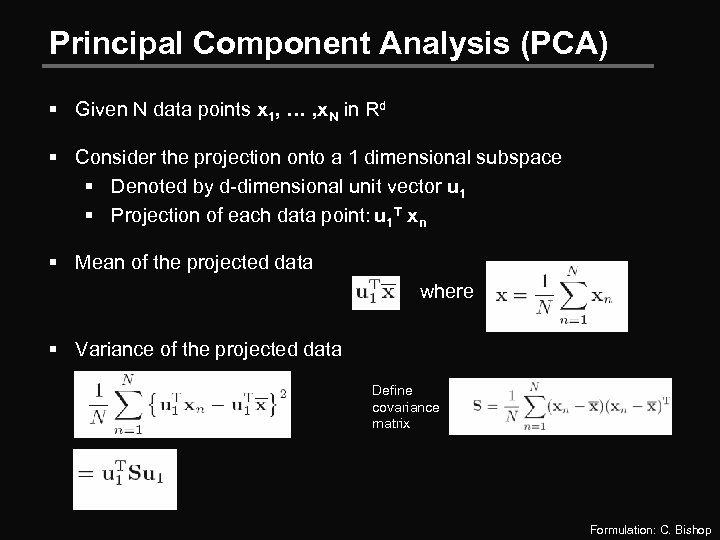

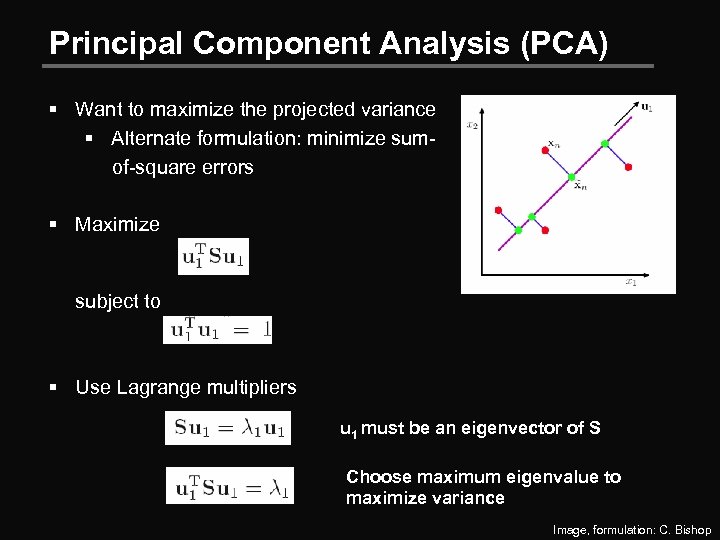

Principal Component Analysis (PCA)
- Given $N$ data points $x_1, \dots, x_N$ in $\mathbb{R}^d$
- Consider the projection onto a 1-dimensional subspace, denoted by a $d$-dimensional unit vector $u_1$
- Projection of each data point: $u_1^T x_n$
- Mean of the projected data: $u_1^T \bar{x}$, where $\bar{x} = \frac{1}{N} \sum_{n=1}^{N} x_n$
- Variance of the projected data: $\frac{1}{N} \sum_{n=1}^{N} \left(u_1^T x_n - u_1^T \bar{x}\right)^2 = u_1^T S u_1$
- Define the covariance matrix $S = \frac{1}{N} \sum_{n=1}^{N} (x_n - \bar{x})(x_n - \bar{x})^T$

Formulation: C. Bishop

Principal Component Analysis (PCA)
- Want to maximize the projected variance
  - Alternate formulation: minimize the sum-of-squares error
- Maximize $u_1^T S u_1$ subject to $u_1^T u_1 = 1$
- Use Lagrange multipliers: maximizing $u_1^T S u_1 + \lambda_1 (1 - u_1^T u_1)$ gives $S u_1 = \lambda_1 u_1$, so $u_1$ must be an eigenvector of $S$
- The projected variance is then $u_1^T S u_1 = \lambda_1$: choose the maximum eigenvalue to maximize variance

Image, formulation: C. Bishop

Principal Component Analysis (PCA)
- The direction that captures the maximum variance of the data is the eigenvector corresponding to the largest eigenvalue of the data covariance matrix
- Furthermore, the top k orthogonal directions that capture the most variance of the data are the k eigenvectors corresponding to the k largest eigenvalues

Slide credit: S. Lazebnik
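The eigenvector statement can be checked numerically on a toy dataset. The sketch below builds the covariance matrix and finds its leading eigenvector by power iteration; power iteration is used here only to keep the example free of dependencies, and the function name `top_principal_direction` and the toy points are illustrative.

```python
import math

def top_principal_direction(points, n_iter=200):
    """Return (u1, lambda1): leading eigenvector and eigenvalue of the covariance S."""
    n, d = len(points), len(points[0])
    mean = [sum(p[j] for p in points) / n for j in range(d)]
    centered = [[p[j] - mean[j] for j in range(d)] for p in points]
    # S = (1/N) * sum_n (x_n - mean)(x_n - mean)^T
    S = [[sum(c[a] * c[b] for c in centered) / n for b in range(d)] for a in range(d)]
    u = [1.0] * d  # assumption: start vector not orthogonal to the top eigenvector
    for _ in range(n_iter):
        v = [sum(S[a][b] * u[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in v))
        u = [x / norm for x in v]
    # Rayleigh quotient u^T S u equals the projected variance along u
    lam = sum(u[a] * sum(S[a][b] * u[b] for b in range(d)) for a in range(d))
    return u, lam

points = [(-2, -4), (-1, -2), (0, 0), (1, 2), (2, 4)]  # toy data on the line y = 2x
u1, lambda1 = top_principal_direction(points)
```

For 7396-dimensional face vectors one would use a library eigensolver or an SVD instead; the point here is only that the recovered direction lies along the line the data were drawn from and that its eigenvalue equals the variance of the projections.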

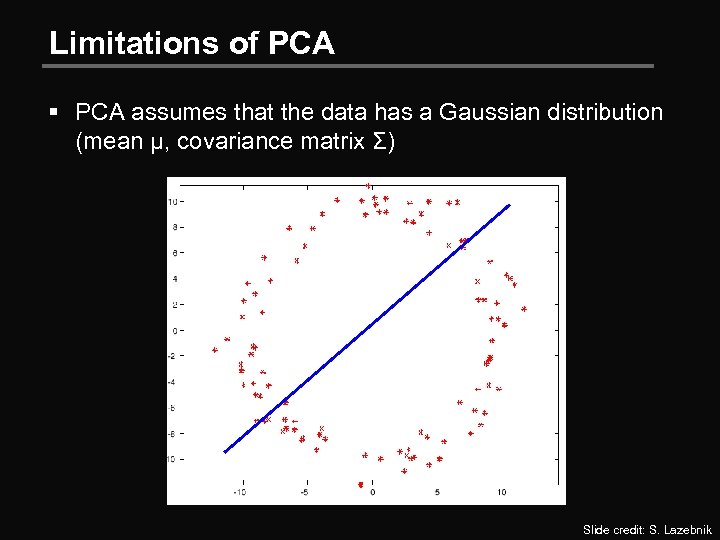

Limitations of PCA
- PCA assumes that the data has a Gaussian distribution (mean µ, covariance matrix Σ)

Slide credit: S. Lazebnik

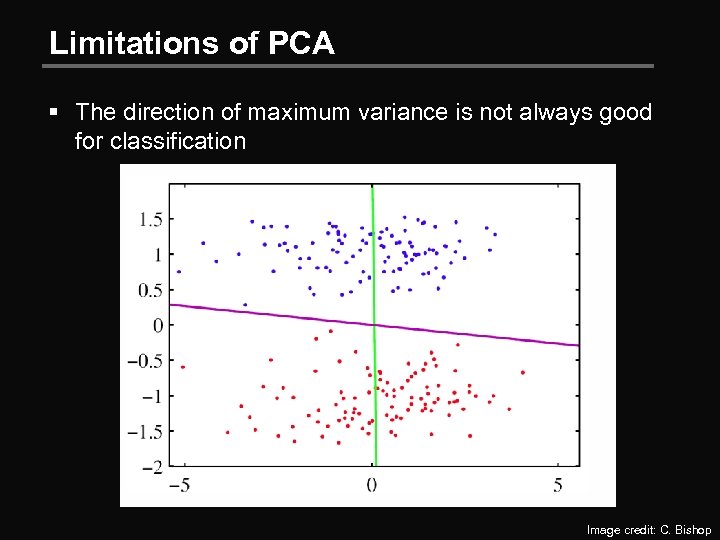

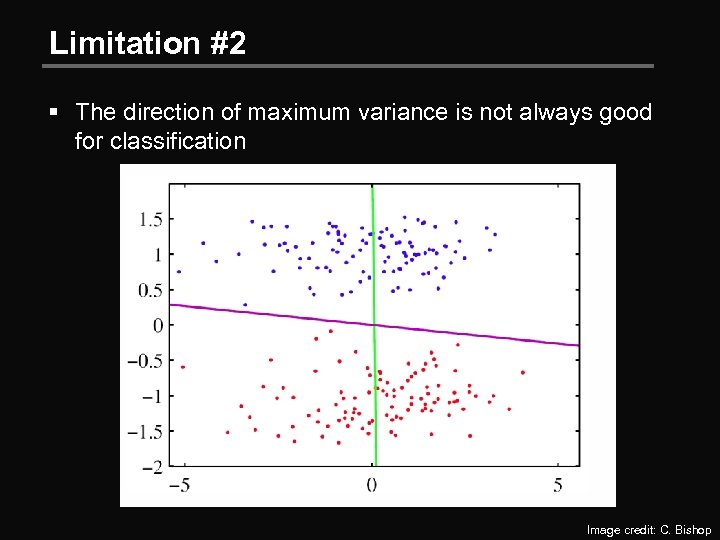

Limitations of PCA
- The direction of maximum variance is not always good for classification

Image credit: C. Bishop

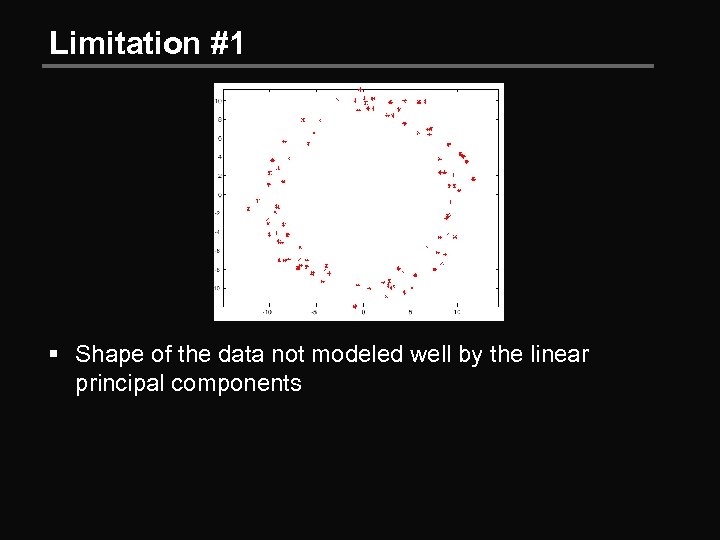

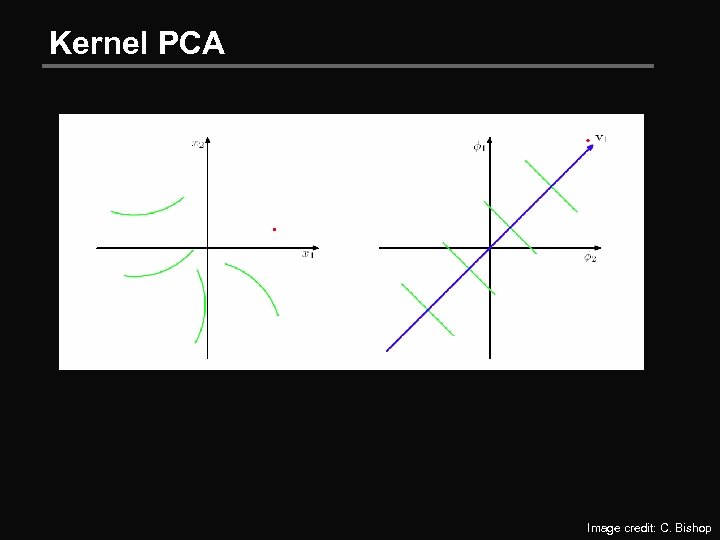

Limitation #1
- Shape of the data not modeled well by the linear principal components

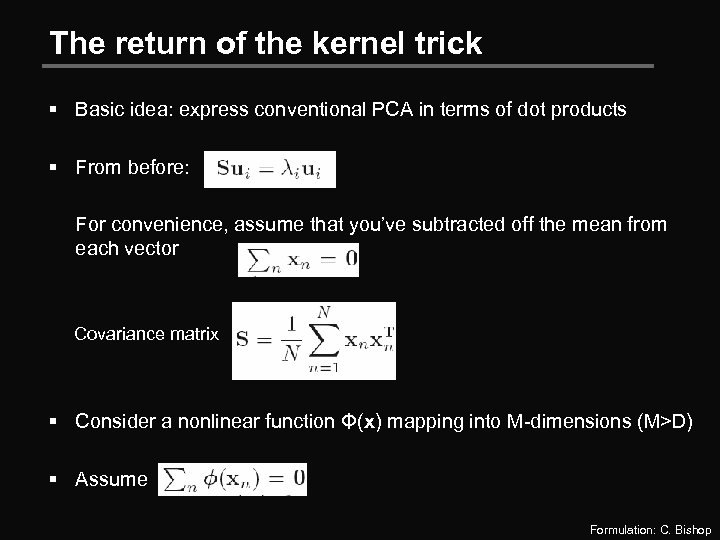

The return of the kernel trick
- Basic idea: express conventional PCA in terms of dot products
- From before (for convenience, assume that you’ve subtracted off the mean from each vector): covariance matrix $S = \frac{1}{N} \sum_{n=1}^{N} x_n x_n^T$, with $S u_i = \lambda_i u_i$
- Consider a nonlinear function $\Phi(x)$ mapping into $M$ dimensions ($M > D$, where $D$ is the input dimension)
- Assume $\sum_{n} \Phi(x_n) = 0$

Formulation: C. Bishop

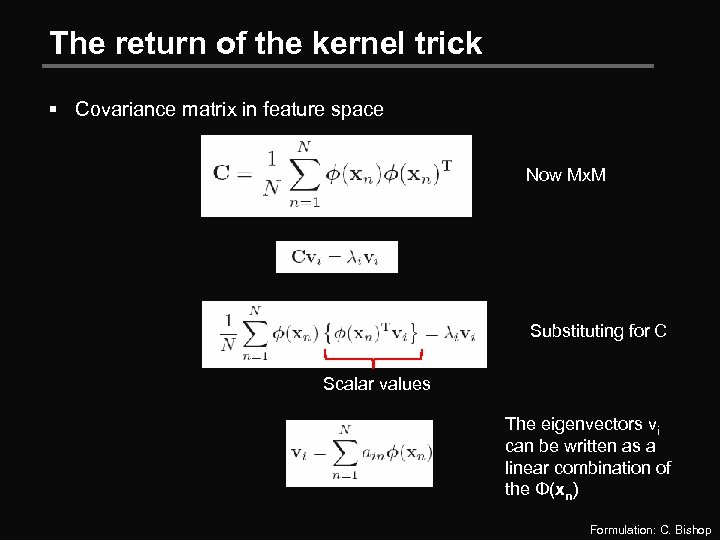

The return of the kernel trick
- Covariance matrix in feature space (now $M \times M$): $C = \frac{1}{N} \sum_{n=1}^{N} \Phi(x_n) \Phi(x_n)^T$, with $C v_i = \lambda_i v_i$
- Substituting for $C$: $\frac{1}{N} \sum_{n=1}^{N} \Phi(x_n) \{\Phi(x_n)^T v_i\} = \lambda_i v_i$, where each $\Phi(x_n)^T v_i$ is a scalar value
- The eigenvectors $v_i$ can therefore be written as a linear combination of the $\Phi(x_n)$: $v_i = \sum_{n=1}^{N} a_{in} \Phi(x_n)$

Formulation: C. Bishop

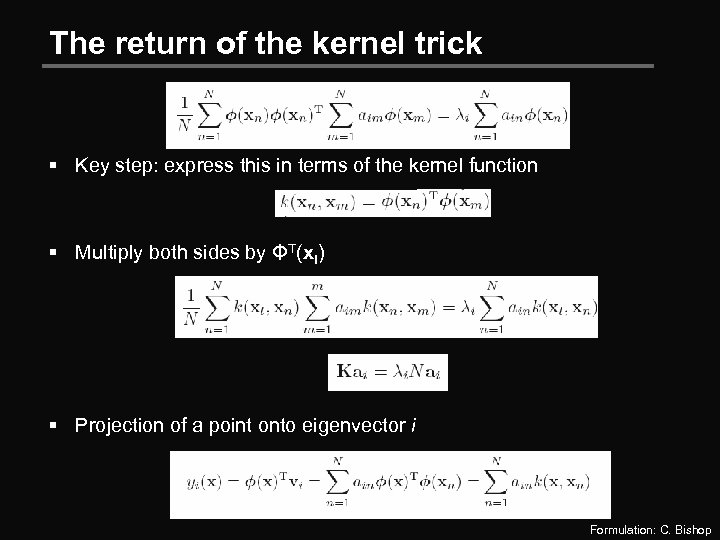

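The return of the kernel trick
- Key step: express this in terms of the kernel function $k(x_n, x_m) = \Phi(x_n)^T \Phi(x_m)$
- Substitute $v_i = \sum_n a_{in} \Phi(x_n)$ into $C v_i = \lambda_i v_i$ and multiply both sides by $\Phi^T(x_l)$: $\frac{1}{N} \sum_n k(x_l, x_n) \sum_m a_{im} k(x_n, x_m) = \lambda_i \sum_n a_{in} k(x_l, x_n)$
- In matrix form, $K^2 a_i = \lambda_i N K a_i$, which is satisfied by solutions of $K a_i = \lambda_i N a_i$
- Projection of a point onto eigenvector $i$: $y_i(x) = \Phi(x)^T v_i = \sum_n a_{in} k(x, x_n)$

Formulation: C. Bishop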
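The whole procedure only ever touches the kernel matrix, which the following sketch makes concrete. It assumes an RBF kernel (the slides do not say which kernel is used), centers the kernel matrix so that the zero-mean assumption holds in feature space, and uses power iteration to find the leading eigenvector; the names `rbf` and `kernel_pca_first_component` and the toy points are illustrative.

```python
import math

def rbf(x, y, gamma=1.0):
    """RBF kernel; the choice of kernel and gamma is an assumption."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def kernel_pca_first_component(points, kernel=rbf, n_iter=500):
    n = len(points)
    K = [[kernel(p, q) for q in points] for p in points]
    # Center in feature space so that sum_n Phi(x_n) = 0 holds implicitly
    row = [sum(r) / n for r in K]
    tot = sum(row) / n
    Kc = [[K[i][j] - row[i] - row[j] + tot for j in range(n)] for i in range(n)]
    # Power iteration for the leading eigenvector e of Kc (eigenvalue mu = lambda * N)
    e = [float(i + 1) for i in range(n)]
    for _ in range(n_iter):
        v = [sum(Kc[i][j] * e[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in v))
        e = [x / norm for x in v]
    mu = sum(e[i] * sum(Kc[i][j] * e[j] for j in range(n)) for i in range(n))
    # Scale the coefficients so the feature-space eigenvector has unit length
    a = [x / math.sqrt(mu) for x in e]
    # Projection of training point x_l: sum_n a_n * k(x_l, x_n)
    projections = [sum(Kc[l][j] * a[j] for j in range(n)) for l in range(n)]
    return a, mu, projections

points = [(0, 0), (0.1, 0), (0, 0.1), (3, 3), (3.1, 3), (3, 3.1)]  # two toy groups
a, mu, projections = kernel_pca_first_component(points)
```

On this toy data the first kernel principal component separates the two groups by sign, and the feature map Φ is never computed explicitly.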

Kernel PCA

Image credit: C. Bishop

Limitation #2
- The direction of maximum variance is not always good for classification

Image credit: C. Bishop

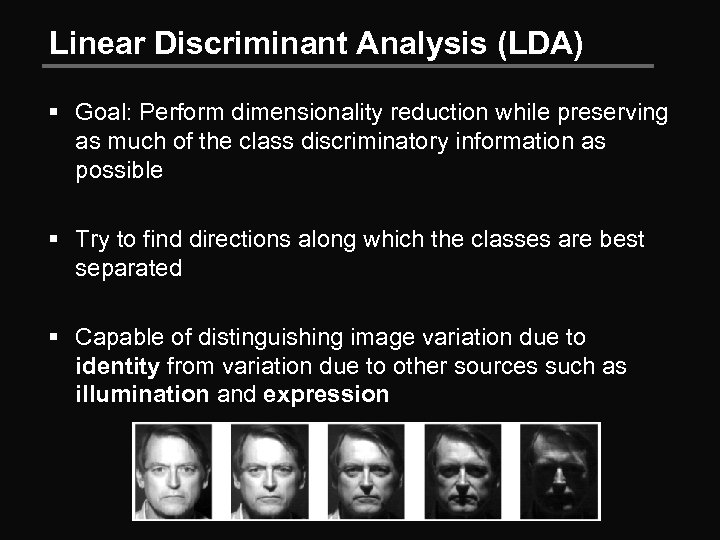

Linear Discriminant Analysis (LDA)
- Goal: perform dimensionality reduction while preserving as much of the class discriminatory information as possible
- Try to find directions along which the classes are best separated
- Capable of distinguishing image variation due to identity from variation due to other sources such as illumination and expression

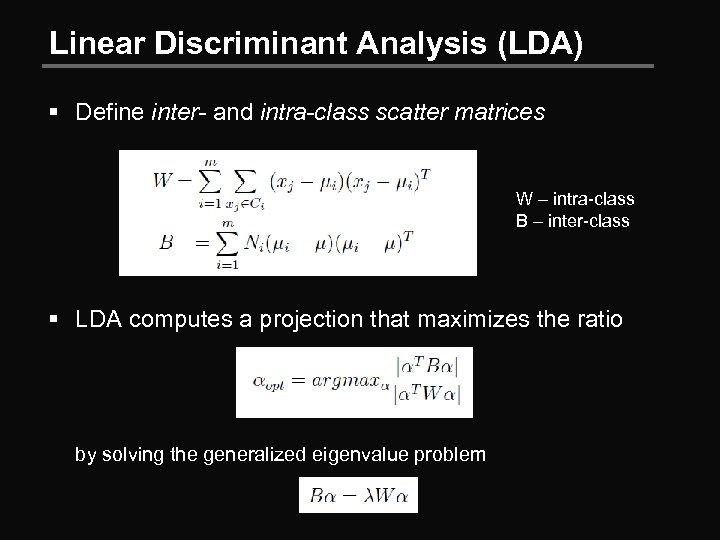

Linear Discriminant Analysis (LDA)
- Define inter- and intra-class scatter matrices
  - $W$: intra-class
  - $B$: inter-class
- LDA computes a projection $w$ that maximizes the ratio of inter-class to intra-class scatter, $\frac{|w^T B w|}{|w^T W w|}$, by solving the generalized eigenvalue problem $B w = \lambda W w$
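For two classes the generalized eigenvalue problem has a closed-form solution, w ∝ W⁻¹(m_a − m_b), which makes a compact illustration. The sketch below is restricted to two classes in 2-D so that W can be inverted by hand; the function name `fisher_direction` and the toy points are illustrative, and the multi-class, high-dimensional case in the slides would need a general eigensolver.

```python
def fisher_direction(class_a, class_b):
    """Two-class, 2-D LDA: w proportional to W^{-1} (m_a - m_b)."""
    def mean(pts):
        return [sum(p[j] for p in pts) / len(pts) for j in range(2)]
    m_a, m_b = mean(class_a), mean(class_b)
    # Intra-class scatter W = sum over classes of sum (x - m)(x - m)^T
    W = [[0.0, 0.0], [0.0, 0.0]]
    for pts, m in ((class_a, m_a), (class_b, m_b)):
        for p in pts:
            d = [p[0] - m[0], p[1] - m[1]]
            for r in range(2):
                for c in range(2):
                    W[r][c] += d[r] * d[c]
    det = W[0][0] * W[1][1] - W[0][1] * W[1][0]
    diff = [m_a[0] - m_b[0], m_a[1] - m_b[1]]
    # Apply the explicit 2x2 inverse of W to the difference of class means
    return [(W[1][1] * diff[0] - W[0][1] * diff[1]) / det,
            (-W[1][0] * diff[0] + W[0][0] * diff[1]) / det]

# Toy data: large variance along x (shared by both classes), classes differ only in y.
class_a = [(0, 0.0), (2, 0.2), (4, -0.2), (6, 0.0)]
class_b = [(0, 1.0), (2, 1.2), (4, 0.8), (6, 1.0)]
w = fisher_direction(class_a, class_b)
proj_a = [w[0] * x + w[1] * y for x, y in class_a]
proj_b = [w[0] * x + w[1] * y for x, y in class_b]
```

PCA on the same points would pick the x axis, where the classes overlap completely; the discriminant direction is dominated by y, along which the projected classes do not overlap.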

Class labels for LDA
- For the unsupervised names and faces dataset, you don’t have true labels
- Use a proxy for labeled training data
  - Images from the dataset with only one detected face and one detected name
- Observation: using LDA on top of the space found by kernel PCA improves performance significantly

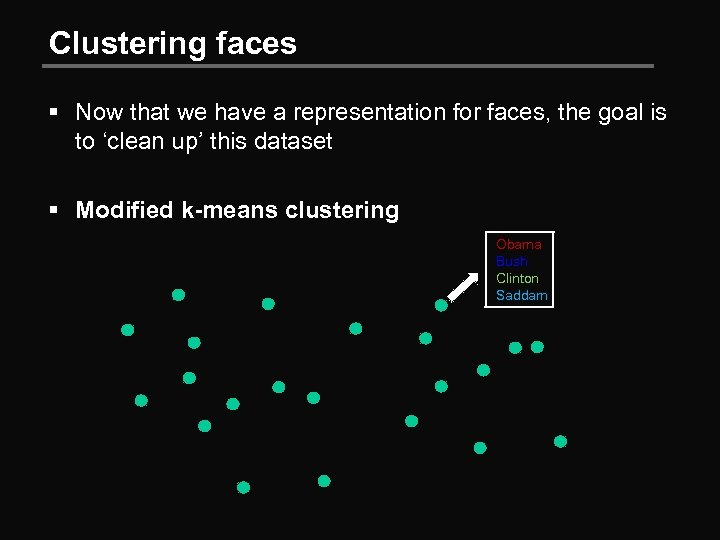

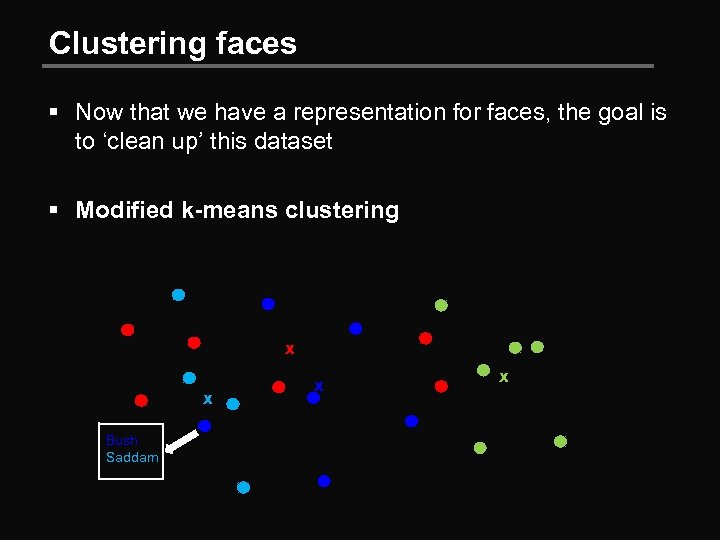

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering Obama Bush Clinton Saddam

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering Obama Bush Clinton Saddam

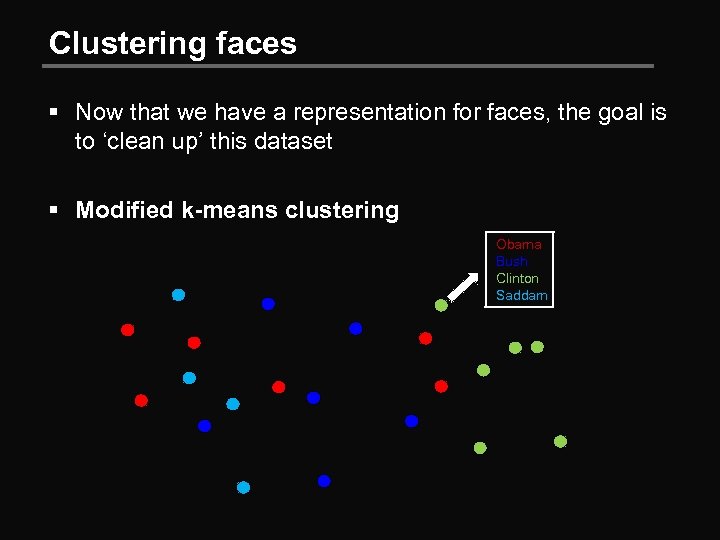

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering Obama Bush Clinton Saddam

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering Obama Bush Clinton Saddam

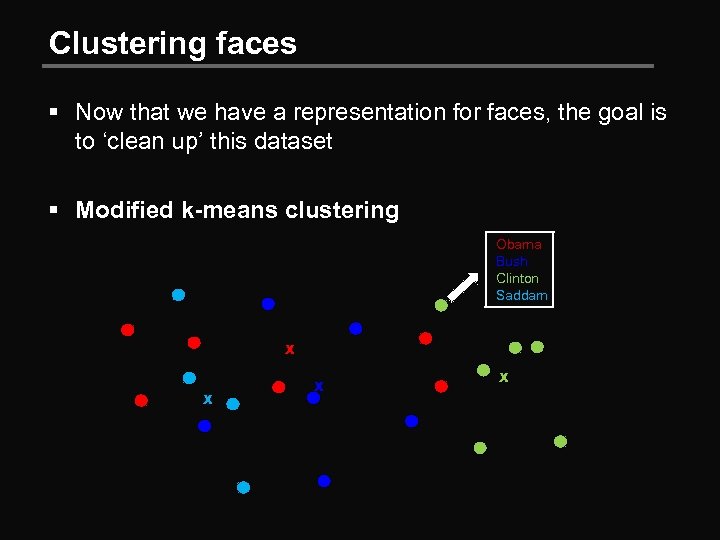

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering Obama Bush Clinton Saddam x x

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering Obama Bush Clinton Saddam x x

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering x x Bush Saddam x x

Clustering faces § Now that we have a representation for faces, the goal is to ‘clean up’ this dataset § Modified k-means clustering x x Bush Saddam x x
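The slides do not spell out how k-means is modified. One plausible reading, sketched below, is that each cluster corresponds to a name and each face may only be assigned to a name extracted from its own caption. That constraint, the deterministic initialization (first candidate name), and the function name `constrained_kmeans` are assumptions made for illustration.

```python
def constrained_kmeans(faces, candidates, n_iter=20):
    """faces: feature vectors; candidates: list of candidate names per face."""
    assign = [names[0] for names in candidates]  # assumption: start from first name
    for _ in range(n_iter):
        # Mean of the faces currently assigned to each name
        sums, counts = {}, {}
        for x, name in zip(faces, assign):
            s = sums.setdefault(name, [0.0] * len(x))
            for j, v in enumerate(x):
                s[j] += v
            counts[name] = counts.get(name, 0) + 1
        means = {name: [v / counts[name] for v in s] for name, s in sums.items()}
        # Reassign each face to the closest mean among its own candidate names
        new_assign = []
        for x, names in zip(faces, candidates):
            options = [nm for nm in names if nm in means]
            new_assign.append(min(
                options,
                key=lambda nm: sum((a - b) ** 2 for a, b in zip(x, means[nm]))))
        if new_assign == assign:
            break
        assign = new_assign
    return assign

# Toy 2-D "face" features: one group near (0, 0), another near (5, 5).
faces = [(0, 0), (0.2, 0.1), (5, 5), (5.1, 4.9), (0.1, 0.2), (4.9, 5.2)]
candidates = [["Bush"], ["Bush"], ["Saddam"], ["Saddam"],
              ["Saddam", "Bush"], ["Bush", "Saddam"]]
labels = constrained_kmeans(faces, candidates)
```

The last two faces start with the wrong name and are corrected after one reassignment, because the unambiguous single-name faces anchor each cluster mean.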

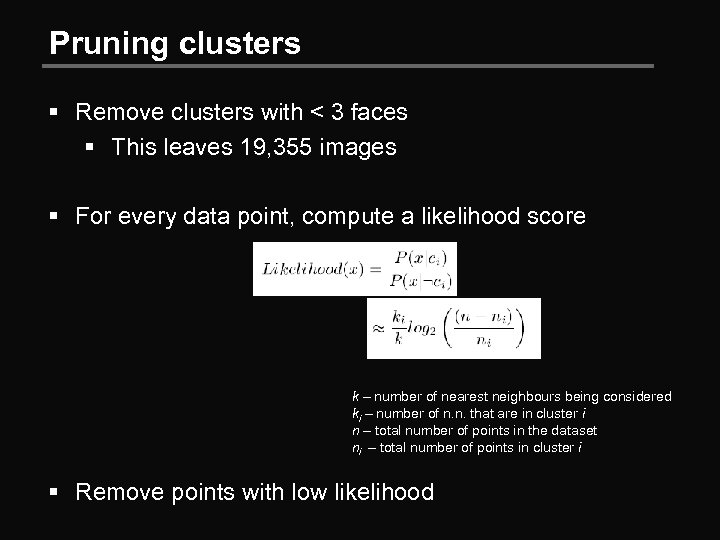

Pruning clusters
- Remove clusters with fewer than 3 faces
  - This leaves 19,355 images
- For every data point, compute a likelihood score, where
  - k: number of nearest neighbours being considered
  - k_i: number of nearest neighbours that are in cluster i
  - n: total number of points in the dataset
  - n_i: total number of points in cluster i
- Remove points with low likelihood
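The likelihood formula is not preserved in the slide text. The sketch below assumes the score is (k_i / k) / (n_i / n), that is, the fraction of a point's nearest neighbours that share its cluster, relative to that cluster's share of the whole dataset. This form is an assumption built from the four symbols defined above; the function name `neighbour_likelihood` and the toy data are illustrative.

```python
def neighbour_likelihood(points, labels, index, k):
    """Assumed score (k_i / k) / (n_i / n) for the point at `index`."""
    x, label = points[index], labels[index]
    others = [j for j in range(len(points)) if j != index]
    # Sort the other points by squared Euclidean distance to x
    others.sort(key=lambda j: sum((a - b) ** 2 for a, b in zip(x, points[j])))
    k_i = sum(1 for j in others[:k] if labels[j] == label)
    n, n_i = len(points), labels.count(label)
    return (k_i / k) / (n_i / n)

# Toy 1-D data: the last point carries label "b" but sits among the "a" points.
points = [(0.0,), (0.1,), (0.2,), (5.0,), (5.1,), (5.2,), (0.15,)]
labels = ["a", "a", "a", "b", "b", "b", "b"]
score_outlier = neighbour_likelihood(points, labels, index=6, k=3)
score_inlier = neighbour_likelihood(points, labels, index=0, k=3)
```

A point whose neighbours mostly belong to other clusters gets a score near zero and would be removed at any reasonable threshold.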

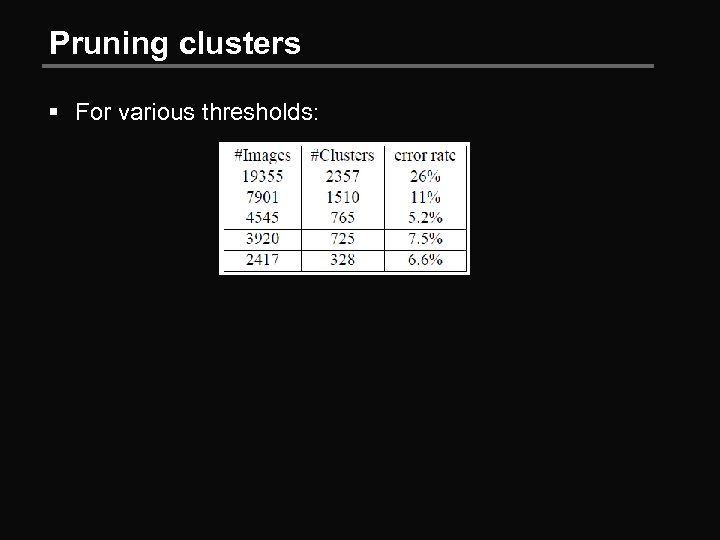

Pruning clusters
- For various thresholds:

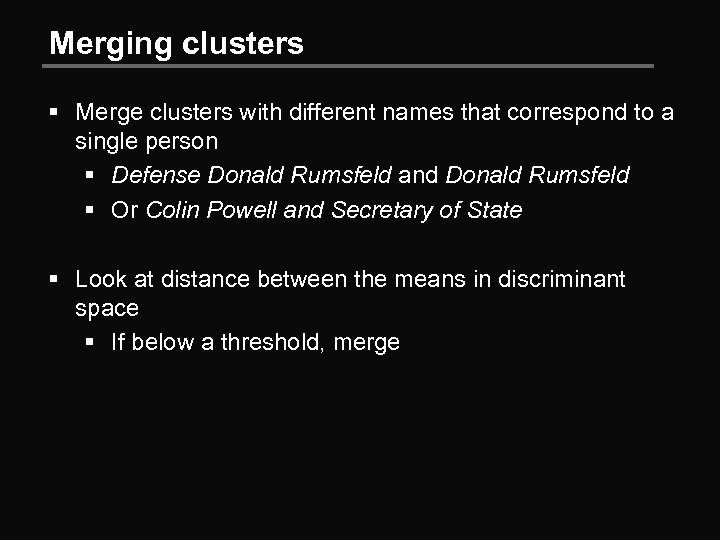

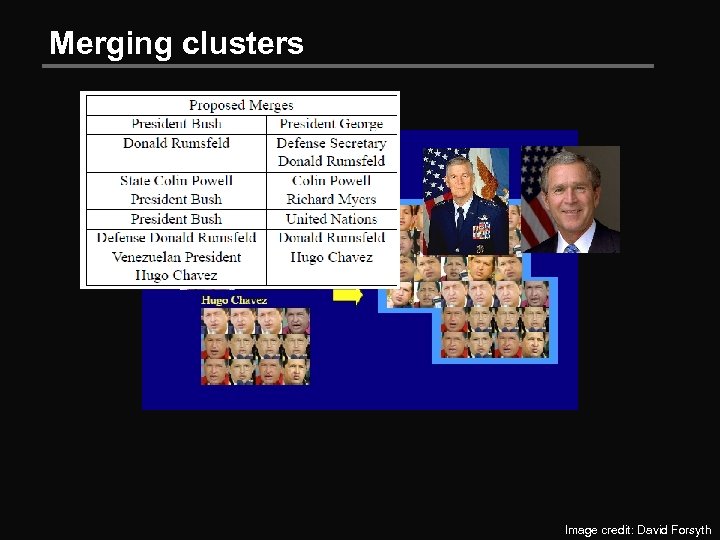

Merging clusters
- Merge clusters with different names that correspond to a single person
  - "Defense Donald Rumsfeld" and "Donald Rumsfeld"
  - Or "Colin Powell" and "Secretary of State"
- Look at the distance between the means in discriminant space
  - If below a threshold, merge
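The merging rule can be sketched as a pairwise distance check followed by grouping. The snippet assumes Euclidean distance between cluster means and a single global threshold; the function name `merge_close_clusters`, the threshold value, and the toy coordinates are illustrative.

```python
def merge_close_clusters(means, threshold):
    """means: name -> mean vector in discriminant space. Returns name -> merged group."""
    names = sorted(means)
    rep = {nm: nm for nm in names}  # each name starts as its own representative

    def find(nm):
        while rep[nm] != nm:
            nm = rep[nm]
        return nm

    for i, a in enumerate(names):
        for b in names[i + 1:]:
            dist = sum((u - v) ** 2 for u, v in zip(means[a], means[b])) ** 0.5
            if dist < threshold:
                rep[find(b)] = find(a)  # merge the two groups
    return {nm: find(nm) for nm in names}

means = {
    "Donald Rumsfeld": (1.0, 1.0),
    "Defense Donald Rumsfeld": (1.1, 0.9),
    "Colin Powell": (4.0, 4.0),
}
groups = merge_close_clusters(means, threshold=0.5)
```

Because merging is transitive here, a chain of nearby clusters collapses into one group; a stricter variant could require every pair in a group to fall below the threshold.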

Merging clusters Image credit: David Forsyth

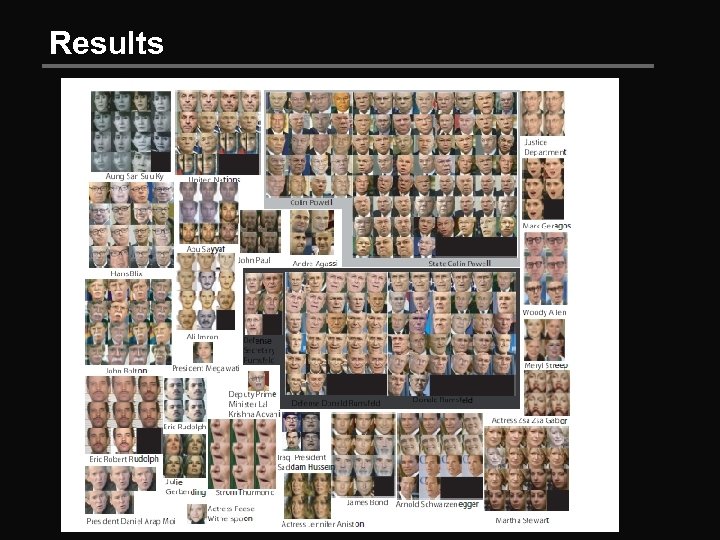

Results