fba7e9bb91f7ccfdeef1916c5b0697dc.ppt

- Number of slides: 37

Word Prediction in Hebrew: Preliminary and Surprising Results

Yael Netzer, Meni Adler, Michael Elhadad. Department of Computer Science, Ben Gurion University, Israel. ISAAC 2008, August 6th.

Outline
- Objectives and example
- Methods of word prediction
- Hebrew morphology
- Experiments and results
- Conclusions?

Word Prediction: Objectives
- Ease word entry in text-editing software:
  - by guessing the next word
  - by offering a list of possible options for the next word
  - by completing a word given a prefix
- General idea: guess the next word given the previous ones (input $w_1\,w_2$, guess $w_3$)
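The third objective, completing a word from a typed prefix and showing a short list of options, can be sketched in a few lines. This is a minimal illustration assuming a context-free unigram frequency list; the toy corpus and the names `build_unigram_counts` and `complete` are hypothetical and not part of the system described in this talk.

```python
from collections import Counter


def build_unigram_counts(corpus_tokens):
    """Count how often each word occurs in a (toy) training corpus."""
    return Counter(corpus_tokens)


def complete(prefix, counts, k=5):
    """Return up to k words starting with `prefix`, most frequent first.

    Ties are broken alphabetically so the menu order is deterministic.
    """
    candidates = [w for w in counts if w.startswith(prefix)]
    candidates.sort(key=lambda w: (-counts[w], w))
    return candidates[:k]


if __name__ == "__main__":
    corpus = "i saw a bird i saw a bear i saw a big brown bear".split()
    counts = build_unigram_counts(corpus)
    print(complete("b", counts, k=3))  # ['bear', 'big', 'bird']
```

Because the list ignores the preceding words, "I saw a b____" gets the same menu as any other "b" prefix; the context-aware models on the next slides address exactly that.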

Word Prediction Example
- "I s_____": a verb or an adverb? If a verb: sang? Maybe. Singularized? Hopefully.
- "I saw a _____": a noun or an adjective
- "I saw a b____": brown? big? bear? barometer?
- "I saw a bird in the _____": semantic knowledge would help here
- "I saw a bird in the z____": obvious (?)

Statistical Methods
- Statistical information:
  - Unigrams: probabilities of isolated words. Independent of context, they offer the most likely words as candidates.
  - More complex language models (Markov models): given $w_1, \ldots, w_n$, determine the most likely candidate for $w_{n+1}$.
- The most common method in applications is the unigram (see references in [Garay-Vitoria and Abascal, 2004]).
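To contrast the two statistical models, here is a minimal sketch of a bigram (first-order Markov) predictor: it ranks candidates by how often they followed the last typed word in training and falls back to context-free unigram frequencies when that word was never seen. The class name, the alphabetical tie-breaking and the toy corpus are assumptions made for illustration only.

```python
from collections import Counter, defaultdict


class BigramPredictor:
    """Toy first-order Markov (bigram) predictor with a unigram fallback."""

    def __init__(self, tokens):
        self.unigrams = Counter(tokens)
        self.bigrams = defaultdict(Counter)
        for prev, nxt in zip(tokens, tokens[1:]):
            self.bigrams[prev][nxt] += 1

    def predict(self, history, k=3):
        """Return up to k candidates for the word that follows `history`."""
        if history and history[-1] in self.bigrams:
            # Context available: use counts of words seen after the last word.
            dist = self.bigrams[history[-1]]
        else:
            # No usable context: context-independent unigram frequencies.
            dist = self.unigrams
        return sorted(dist, key=lambda w: (-dist[w], w))[:k]


if __name__ == "__main__":
    model = BigramPredictor("i saw a bird in the tree i saw a bear in the zoo".split())
    print(model.predict(["i", "saw", "a"]))  # ['bear', 'bird']
```

Longer contexts (trigrams and beyond) follow the same pattern with a longer key, at the cost of sparser counts.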


Syntactic Methods
- Syntactic knowledge:
  - Consider sequences of part-of-speech tags: [Article] [Noun] predicts [Verb]
  - Phrase structure: [Noun Phrase] predicts [Verb]
  - Syntactic knowledge can be statistical or based on hand-coded rules
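To make "[Article] [Noun] predicts [Verb]" concrete, the hedged sketch below learns which tag most often follows the last two tags in a tiny hand-tagged corpus, then moves word candidates compatible with that tag to the front of an existing ranked list. The tag names, the toy lexicon and the promote-rather-than-filter policy are assumptions; a statistical system would more likely combine tag and word probabilities than apply a hard reordering.

```python
from collections import Counter, defaultdict


def train_tag_trigrams(tag_sequences):
    """Count which POS tag follows each pair of tags in a (toy) tagged corpus."""
    table = defaultdict(Counter)
    for tags in tag_sequences:
        for t1, t2, t3 in zip(tags, tags[1:], tags[2:]):
            table[(t1, t2)][t3] += 1
    return table


def promote_by_tag(candidates, lexicon, table, prev_tags):
    """Move candidates that can take the most likely next tag to the front.

    `candidates` is an already ranked word list (e.g. from an n-gram model);
    `lexicon` maps each word to the set of tags it can take.
    """
    followers = table.get(tuple(prev_tags[-2:]))
    if not followers:
        return list(candidates)  # no syntactic evidence: keep the n-gram order
    next_tag = followers.most_common(1)[0][0]
    fits = [w for w in candidates if next_tag in lexicon.get(w, set())]
    rest = [w for w in candidates if next_tag not in lexicon.get(w, set())]
    return fits + rest


if __name__ == "__main__":
    table = train_tag_trigrams([
        ["ART", "NOUN", "VERB", "ART", "NOUN"],
        ["ART", "NOUN", "VERB", "ADV"],
    ])
    lexicon = {"song": {"NOUN"}, "soon": {"ADV"}, "sings": {"VERB"}, "sang": {"VERB"}}
    # "The bird s____": the last two tags are ART NOUN, so a VERB is expected.
    print(promote_by_tag(["song", "soon", "sings", "sang"], lexicon, table, ["ART", "NOUN"]))
```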

Semantic Methods
- Semantic knowledge:
  - Assign semantic categories to words
  - Find a set of rules that constrain the possible candidates for the next word, e.g. [eat: verb] predicts [word of category food]
  - Not widely used in word prediction, mostly because it requires complex hand coding and is too inefficient for real-time operation
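A rule such as [eat: verb] predicts [word of category food] can be sketched as a filter over the candidate list. Both tables below are hypothetical toy data, the three-word look-back window is an arbitrary assumption, and the function returns the unfiltered list when no candidate fits, so a missing rule never empties the menu.

```python
# Hypothetical toy tables; a real system would need a large hand-coded lexicon.
SEMANTIC_CATEGORY = {"apple": "food", "bread": "food", "bear": "animal", "book": "artifact"}
SELECTIONAL_RULES = {"eat": "food", "ate": "food", "read": "artifact"}


def apply_semantic_rules(history, candidates, window=3):
    """Keep only candidates of the category expected by a recent trigger word."""
    expected = None
    for word in reversed(history[-window:]):  # the nearest trigger word wins
        if word in SELECTIONAL_RULES:
            expected = SELECTIONAL_RULES[word]
            break
    if expected is None:
        return list(candidates)  # no rule applies: leave the list unchanged
    kept = [w for w in candidates if SEMANTIC_CATEGORY.get(w) == expected]
    return kept or list(candidates)  # never return an empty menu


if __name__ == "__main__":
    print(apply_semantic_rules(["i", "ate", "a"], ["bear", "book", "bread", "apple"]))
```

Even this toy version hints at the cost mentioned on the slide: every word and every trigger needs a hand-assigned category.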

Word Prediction Knowledge Sources
- Corpora: texts and frequencies
- Vocabularies (can be domain-specific)
- Lexicons with syntactic and/or semantic knowledge
- User's history
- Morphological analyzers
- Unknown-word models

Evaluation of Word Prediction
- Keystroke savings: $KS = 1 - \frac{\#\,\text{actual keystrokes}}{\#\,\text{expected keystrokes}}$, where the expected keystrokes are those needed without prediction
- Time savings
- Overall satisfaction
  - Cognitive overload (length of the choice list vs. accuracy)
- A predictor is considered adequate if its hit ratio stays high while the number of required selections decreases.
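The keystroke-savings ratio, 1 - (actual keystrokes / expected keystrokes), can be tied to a simple typing model. The sketch assumes a hypothetical cost model not taken from the slides: before each letter the user checks the menu and, if the intended word is among the k suggestions for the current prefix, selects it with one keystroke; spaces are not counted. `suggest` stands for any predictor mapping a prefix and a menu size to a ranked list.

```python
from typing import Callable, List

# Any predictor: (prefix, menu size) -> ranked list of suggestions.
Suggest = Callable[[str, int], List[str]]


def keystrokes_for_word(word: str, suggest: Suggest, k: int) -> int:
    """Keystrokes needed to enter `word` with a k-item prediction menu."""
    for typed in range(len(word)):
        if word in suggest(word[:typed], k):
            return typed + 1  # letters typed so far plus one selection keystroke
    return len(word)  # never offered: the word is typed in full


def keystroke_savings(actual: int, expected: int) -> float:
    """KS = 1 - (actual keystrokes / expected keystrokes without prediction)."""
    return 1 - actual / expected


if __name__ == "__main__":
    ranked = ["bear", "big", "bird", "brown"]  # toy vocabulary, most frequent first

    def suggest(prefix: str, k: int) -> List[str]:
        return [w for w in ranked if w.startswith(prefix)][:k]

    words = ["bird", "brown"]
    actual = sum(keystrokes_for_word(w, suggest, k=3) for w in words)
    expected = sum(len(w) for w in words)
    print(actual, expected, round(keystroke_savings(actual, expected), 3))  # 4 9 0.556
```

Shrinking the menu to k=1 raises the cost of "bird" from 1 to 4 keystrokes in this toy setting, which is the list-length vs. accuracy trade-off listed above.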

Work in Non-English Languages
- Languages with rich morphology:
  - n-gram-based methods offer quite reasonable prediction [Trost et al. 2005], but can be improved with more sophisticated syntactic/semantic tools
- Suggestions for inflected languages (e.g. Basque):
  - Use two lexicons: stems and suffixes
  - Add syntactic information to the dictionaries and grammatical rules to the system; offer stems and suffixes
  - Combine these two approaches: offer inflected nouns
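The two-lexicon idea can be sketched as follows: one lexicon maps stems to a category, another maps each category to its suffixes, and the predictor either offers bare stems for a prefix or expands them directly into inflected forms. The lexicon contents are toy data that reuse the transliterated "inhrnt" forms shown later in this talk; the category labels and function names are hypothetical.

```python
# Hypothetical toy lexicons: stem -> category, category -> suffixes.
STEM_LEXICON = {"inhrnt": "adj", "net": "noun", "note": "noun"}
SUFFIX_LEXICON = {"adj": ["", "i", "it", "iot"], "noun": [""]}  # noun suffixes omitted


def offer_stems(prefix):
    """First approach: propose stems; a suffix is chosen in a separate step."""
    return sorted(s for s in STEM_LEXICON if s.startswith(prefix))


def offer_inflections(stem):
    """Expand one stem into every form licensed by the suffix lexicon."""
    return [stem + suffix for suffix in SUFFIX_LEXICON[STEM_LEXICON[stem]]]


def offer_inflected(prefix):
    """Combined approach: propose fully inflected forms directly."""
    return [form for stem in offer_stems(prefix) for form in offer_inflections(stem)]


if __name__ == "__main__":
    print(offer_stems("n"))
    print(offer_inflected("in"))
```

Offering full forms saves the extra suffix selection but lengthens the menu, which is the same list-length trade-off that appears in the evaluation criteria.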

Motivation for Hebrew
- We need word prediction for Hebrew
  - No previously published research on Hebrew word prediction is known.
- We wanted to test our morphological analyzer in a useful application.

Initial Hypothesis

Word prediction in Hebrew will be complicated: morphological and syntactic knowledge will be needed.

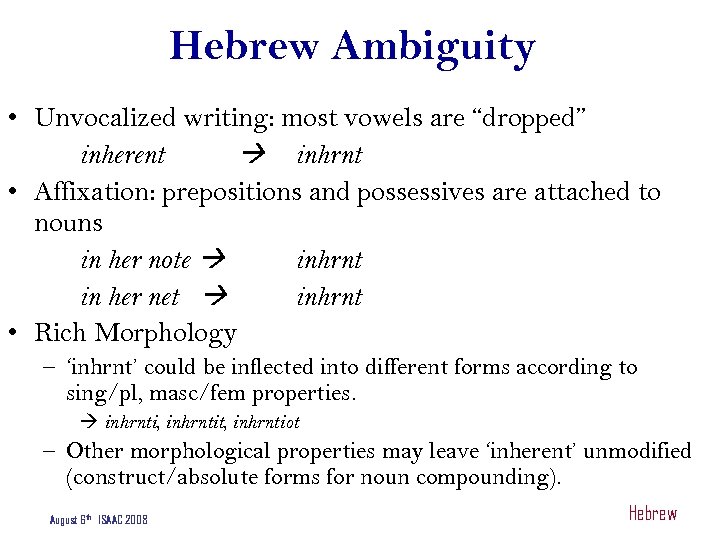

Hebrew Ambiguity
- Unvocalized writing: most vowels are "dropped": inherent → inhrnt
- Affixation: prepositions and possessives are attached to nouns: in her note → inhrnt; in her net → inhrnt
- Rich morphology:
  - 'inhrnt' could be inflected into different forms according to singular/plural and masculine/feminine properties: inhrnti, inhrntit, inhrntiot
  - Other morphological properties may leave 'inherent' unmodified (construct/absolute forms for noun compounding).

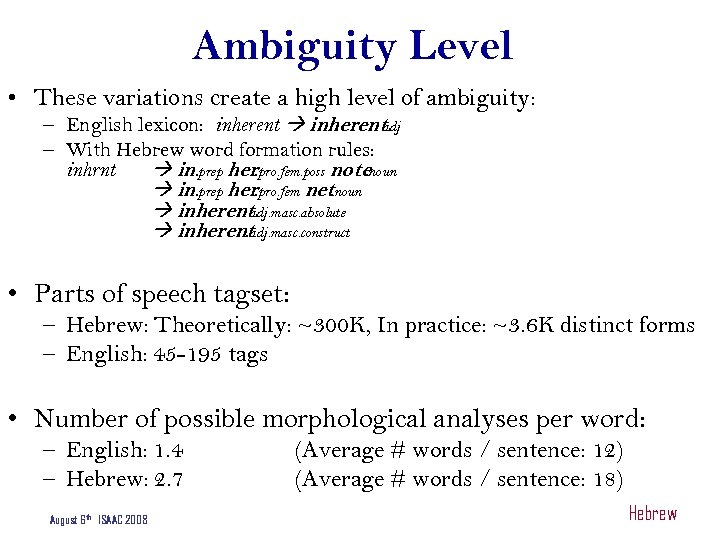

Ambiguity Level
- These variations create a high level of ambiguity:
  - English lexicon: inherent.adj
  - With Hebrew word-formation rules, inhrnt can be analyzed as:
    - in.prep her.pro.fem.poss note.noun
    - in.prep her.pro.fem.poss net.noun
    - inherent.adj.masc.absolute
    - inherent.adj.masc.construct
- Part-of-speech tagset:
  - Hebrew: theoretically ~300K; in practice ~3.6K distinct forms
  - English: 45-195 tags
- Number of possible morphological analyses per word:
  - English: 1.4
  - Hebrew: 2.7
- (Average # words per sentence: 12) (Average # words per sentence: 18)

(Real Hebrew) Morphological Ambiguity
- בצלם bzlm
  - בצלם bzelem (name of an association)
  - בצלם b-zalem (while taking a picture)
  - בצלם bzalam (their onion)
  - בצלם b-zila-m (under their shades)
  - בצלם b-zalam (in a photographer)
  - בצלם ba-zalam (in the photographer)
  - בצלם b-zelem (in an idol)
  - בצלם ba-zelem (in the idol)

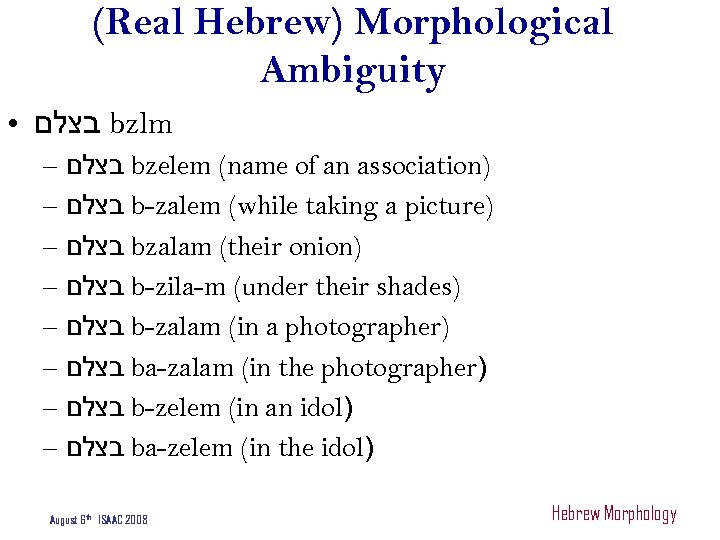

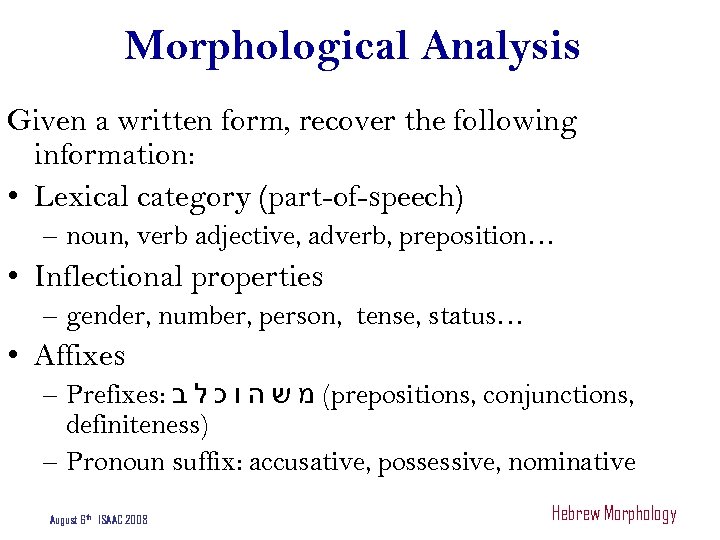

Morphological Analysis

Given a written form, recover the following information:
- Lexical category (part of speech): noun, verb, adjective, adverb, preposition…
- Inflectional properties: gender, number, person, tense, status…
- Affixes:
  - Prefixes: מ ש ה ו כ ל ב (prepositions, conjunctions, definiteness)
  - Pronoun suffix: accusative, possessive, nominative
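Recovering the prefixes starts with a purely orthographic step: every way of peeling letters from the prefix set above (מ ש ה ו כ ל ב) off the front of a written form. The sketch below assumes at most three stacked prefixes and a non-empty remainder; it deliberately does not check the remainder against a lexicon, so it shows how quickly candidates multiply (ובבית, "and in the house", already yields one spurious split) and why a real analyzer needs a lexicon and disambiguation.

```python
# Prefix letters listed on the slide: prepositions, conjunctions, definiteness.
PREFIX_LETTERS = set("משהוכלב")


def prefix_segmentations(form, max_prefixes=3):
    """All splits of `form` into a run of prefix letters plus a non-empty remainder.

    Purely orthographic: the remainder is NOT checked against a lexicon, so
    some splits are spurious and must be rejected by later analysis.
    """
    splits = [("", form)]  # reading with no prefix at all
    for i in range(1, min(max_prefixes, len(form) - 1) + 1):
        if form[i - 1] not in PREFIX_LETTERS:
            break  # prefixes must form a contiguous run at the start of the word
        splits.append((form[:i], form[i:]))
    return splits


if __name__ == "__main__":
    for prefix, rest in prefix_segmentations("ובבית"):
        print(prefix or "-", rest)
```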

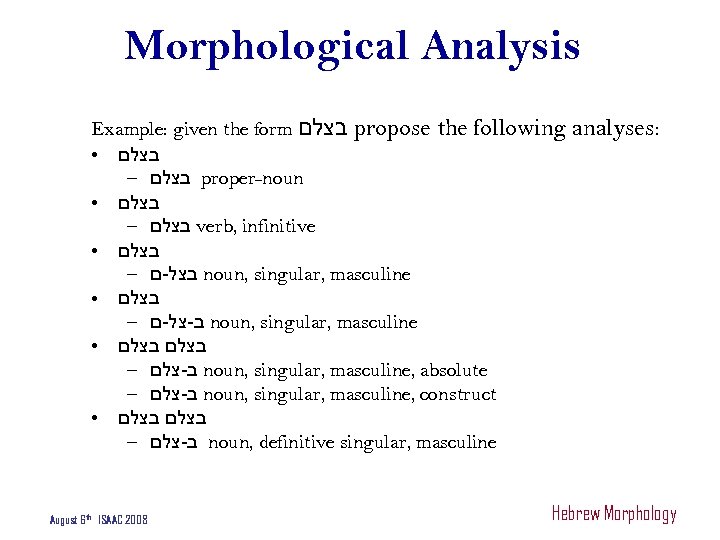

Morphological Analysis Example

Given the form בצלם, propose the following analyses:
- בצלם: proper noun
- בצלם: verb, infinitive
- בצל-ם: noun, singular, masculine
- ב-צל-ם: noun, singular, masculine
- ב-צלם: noun, singular, masculine, absolute
- ב-צלם: noun, singular, masculine, construct
- ב-צלם: noun, definite, singular, masculine

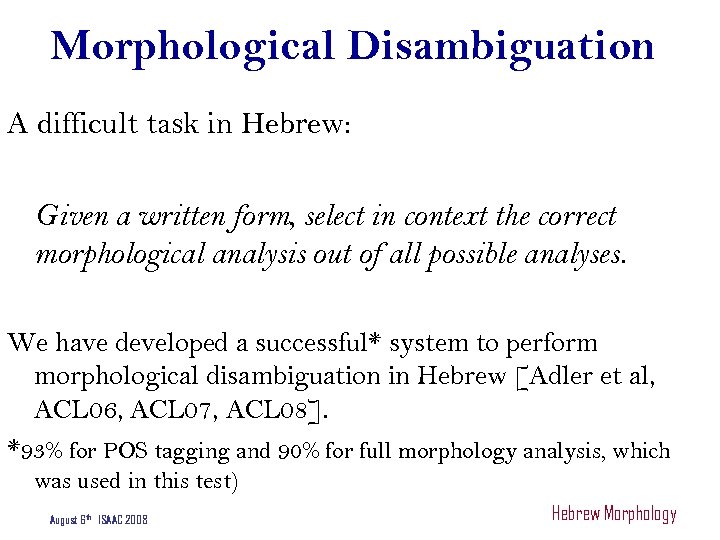

Morphological Disambiguation

A difficult task in Hebrew: given a written form, select in context the correct morphological analysis out of all possible analyses.

We have developed a successful* system to perform morphological disambiguation in Hebrew [Adler et al., ACL 06, ACL 07, ACL 08].

\*93% accuracy for POS tagging and 90% for full morphological analysis; this is the system used in this test.
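Selecting an analysis in context is a sequence-labeling problem. As a hedged illustration only (the actual disambiguator is described in the cited papers), here is a toy first-order HMM decoded with the Viterbi algorithm: each word contributes candidate analyses with emission probabilities, and tag-to-tag transition probabilities decide among them. The tag labels and every probability in the example are made up, and unseen transitions get an arbitrary floor of 1e-6.

```python
import math


def viterbi(analyses, transition, start="<s>", floor=1e-6):
    """Return the most probable sequence of analysis tags under a first-order HMM.

    analyses:   one dict per word, {tag: P(word | tag)}; all values must be > 0.
    transition: {(prev_tag, tag): P(tag | prev_tag)}; unseen pairs get `floor`.
    """
    # best[tag] = (log-probability of the best path ending in tag, that path)
    best = {start: (0.0, [])}
    for options in analyses:
        new_best = {}
        for tag, p_emit in options.items():
            score, path = max(
                ((s + math.log(transition.get((prev, tag), floor)), p)
                 for prev, (s, p) in best.items()),
                key=lambda c: c[0],
            )
            new_best[tag] = (score + math.log(p_emit), path + [tag])
        best = new_best
    return max(best.values(), key=lambda c: c[0])[1]


if __name__ == "__main__":
    # Toy example: an unambiguous verb ("I saw") followed by the ambiguous form בצלם.
    words = [
        {"VERB": 1.0},
        {"PREP+NOUN": 0.5, "NOUN+POSS": 0.3, "PROPN": 0.2},
    ]
    transition = {
        ("<s>", "VERB"): 0.5,
        ("VERB", "PREP+NOUN"): 0.2,
        ("VERB", "NOUN+POSS"): 0.5,
        ("VERB", "PROPN"): 0.3,
    }
    print(viterbi(words, transition))  # ['VERB', 'NOUN+POSS']
```

Working in log space avoids numerical underflow on long sentences, which matters once every word carries several analyses.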

Word Prediction in Hebrew
- We looked at word prediction as a sample task to show off the quality of our morphological disambiguator
- But first… we checked a simple baseline

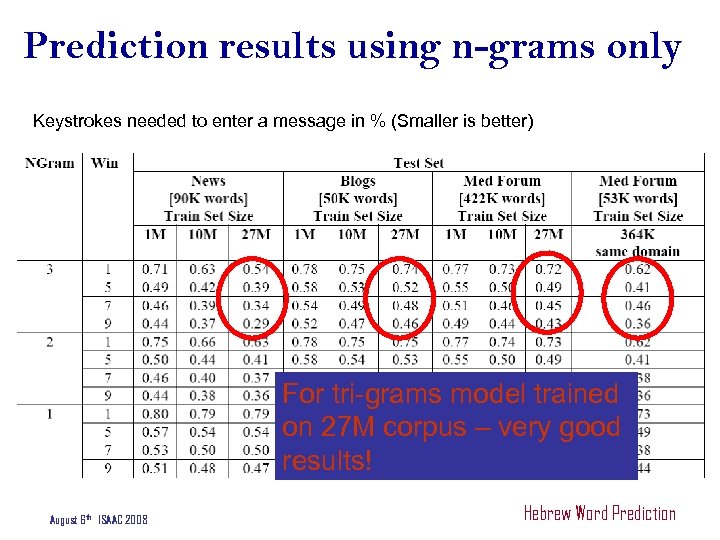

Baseline: n-gram Methods
- Check n-gram methods (unigram, bigram, trigram)
- Four sizes of selection menus: 1, 5, 7 and 9
- Various training sets of 1M, 10M and 27M words to learn the n-gram probabilities
- Various genres
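The baseline set-up (one model, several menu sizes) can be mimicked on a toy scale. This hedged sketch trains bigram counts with a unigram back-off, simulates typing a test text under a simple cost model (a word costs one selection keystroke as soon as it appears in the menu), and reports keystrokes needed as a percentage of the letters in the text, the "smaller is better" measure used in the results. The corpora are placeholders rather than the 1M/10M/27M-word training sets, and the back-off scheme is an assumption.

```python
from collections import Counter, defaultdict


def train(tokens):
    """Collect unigram and bigram counts from a list of training tokens."""
    unigrams = Counter(tokens)
    bigrams = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        bigrams[prev][nxt] += 1
    return unigrams, bigrams


def suggest(prev, prefix, k, unigrams, bigrams):
    """Top-k words starting with `prefix`: bigram candidates first, then unigram back-off."""
    menu = []
    for dist in (bigrams.get(prev, Counter()), unigrams):
        for word in sorted(dist, key=lambda w: (-dist[w], w)):
            if word.startswith(prefix) and word not in menu:
                menu.append(word)
                if len(menu) == k:
                    return menu
    return menu


def percent_keystrokes(test_tokens, k, unigrams, bigrams):
    """Keystrokes needed to type `test_tokens`, as a % of their letters."""
    needed = letters = 0
    prev = None
    for word in test_tokens:
        letters += len(word)
        cost = len(word)  # default: the word is typed in full
        for typed in range(len(word)):
            if word in suggest(prev, word[:typed], k, unigrams, bigrams):
                cost = typed + 1  # typed letters plus one selection keystroke
                break
        needed += cost
        prev = word
    return 100.0 * needed / letters


if __name__ == "__main__":
    unigrams, bigrams = train("i saw a bird in the tree and i saw a bear in the zoo".split())
    test_tokens = "i saw a bear in the tree".split()
    for k in (1, 5, 7, 9):  # the four menu sizes from the slide
        print(k, round(percent_keystrokes(test_tokens, k, unigrams, bigrams), 1))
```

Because each menu is a prefix of one fixed ranking, a larger menu can never need more keystrokes than a smaller one, matching the "larger window, better prediction" trend reported later.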

Prediction Results Using n-grams Only

Keystrokes needed to enter a message, in % (smaller is better). For the trigram model trained on the 27M-word corpus: very good results!

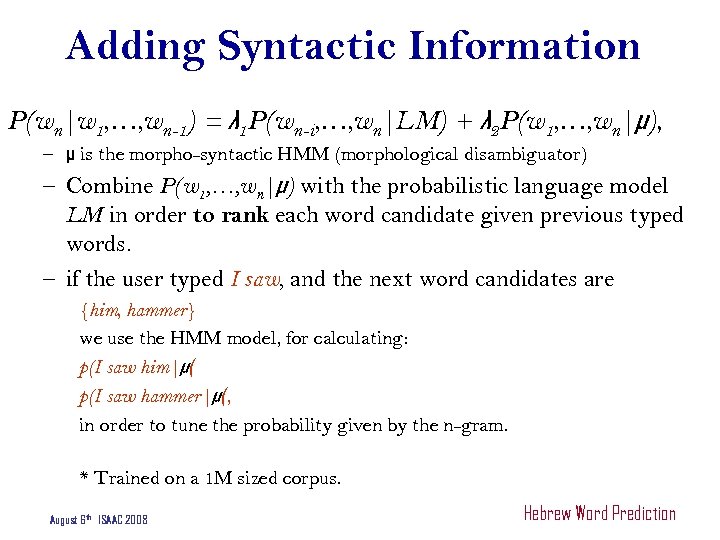

Adding Syntactic Information

$$P(w_n \mid w_1, \ldots, w_{n-1}) = \lambda_1 P(w_{n-i}, \ldots, w_n \mid LM) + \lambda_2 P(w_1, \ldots, w_n \mid \mu)$$

- $\mu$ is the morpho-syntactic HMM (the morphological disambiguator)
- Combine $P(w_1, \ldots, w_n \mid \mu)$ with the probabilistic language model $LM$ to rank each word candidate given the previously typed words.
- If the user typed *I saw* and the next-word candidates are {*him*, *hammer*}, we use the HMM to calculate $p(\text{I saw him} \mid \mu)$ and $p(\text{I saw hammer} \mid \mu)$ in order to tune the probability given by the n-gram model.

\* Trained on a 1M-word corpus.
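The interpolation can be sketched as a re-ranking step over the n-gram candidates. In this minimal sketch `lm_prob` and `hmm_prob` are hypothetical callables standing in for the n-gram model and the morpho-syntactic HMM μ, the scores in the example are made up, and the default weights λ1 = 0.7 and λ2 = 0.3 are arbitrary, since the slides do not state the values used.

```python
def rerank(candidates, history, lm_prob, hmm_prob, lambda1=0.7, lambda2=0.3):
    """Order candidates by lambda1 * P_LM(w | history) + lambda2 * P_HMM(history + [w]).

    lm_prob(history, w): n-gram probability of w after the typed history.
    hmm_prob(words):     probability of the whole word sequence under the HMM (mu).
    The score mirrors the slide's linear combination; it is not renormalized.
    """
    scored = [
        (lambda1 * lm_prob(history, w) + lambda2 * hmm_prob(history + [w]), w)
        for w in candidates
    ]
    scored.sort(key=lambda sw: (-sw[0], sw[1]))  # best score first, ties alphabetical
    return [w for _, w in scored]


if __name__ == "__main__":
    lm = {"him": 0.4, "hammer": 0.5}  # made-up n-gram scores
    hmm = {("i", "saw", "him"): 0.3, ("i", "saw", "hammer"): 0.01}  # made-up HMM scores
    print(rerank(["hammer", "him"], ["i", "saw"],
                 lambda h, w: lm[w], lambda ws: hmm[tuple(ws)]))  # ['him', 'hammer']
```

With λ2 = 0 the order falls back to the pure n-gram ranking, so the weights directly control how much the morpho-syntactic model can overturn it.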

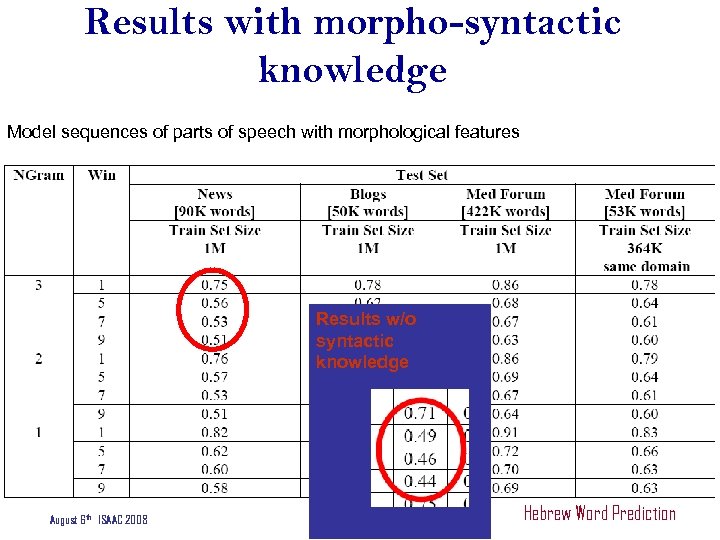

Results with Morpho-Syntactic Knowledge
- Model sequences of parts of speech with morphological features
- Results without syntactic knowledge

Some Notes on Results
- n-grams perform very well (high keystroke savings)
- High rate for all genres
- And, as expected:
  - Better prediction when trained on more data
  - Better prediction with trigrams
  - Better prediction with a larger window
- Morpho-syntactic information did not improve results (in fact, it hurt!)

Conclusion
- Statistical data on a language with rich morphology yields good results (keystrokes needed, in %):
  - down to 29% with nine word proposals
  - 34% with seven proposals
  - 54% with a single proposal
- Syntactic information did not improve the prediction.
- Explanation: morphology did not improve results because $p(w_1, \ldots, w_n \mid \mu)$ is computed on an unfinished sentence.

תודה (Thank you)

Technical Information
- CMU: n-grams
- Storage: Berkeley DB to store the knowledge for WP (mapping n-grams)
- More questions on the technology: meni.adler@gmail.com
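One way to lay out the n-gram mapping in a key-value store is to key each (n-1)-word context and store its ranked next-word list. The sketch uses Python's standard `dbm` module as a stand-in for Berkeley DB (the Berkeley DB bindings are not part of the Python standard library), and the encoding, a space-joined context mapped to a tab-separated word list, is a hypothetical schema rather than the one used in the system. `keep=9` is chosen only to match the largest menu size tested.

```python
import dbm
import os
import tempfile
from collections import Counter, defaultdict


def build_store(path, tokens, n=3, keep=9):
    """Store each (n-1)-word context with its `keep` most frequent next words."""
    followers = defaultdict(Counter)
    for i in range(len(tokens) - n + 1):
        context = " ".join(tokens[i:i + n - 1])
        followers[context][tokens[i + n - 1]] += 1
    with dbm.open(path, "c") as db:
        for context, counts in followers.items():
            ranked = sorted(counts, key=lambda w: (-counts[w], w))[:keep]
            db[context.encode("utf-8")] = "\t".join(ranked).encode("utf-8")


def lookup(path, context_words):
    """Return the stored next-word list for a context, or [] if it was never seen."""
    with dbm.open(path, "r") as db:
        value = db.get(" ".join(context_words).encode("utf-8"))
    return value.decode("utf-8").split("\t") if value is not None else []


if __name__ == "__main__":
    tokens = "i saw a bird in the tree and i saw a bear in the zoo".split()
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "trigrams")
        build_store(path, tokens)
        print(lookup(path, ["in", "the"]))  # ['tree', 'zoo']
```

Precomputing the ranked list per context keeps each prediction to a single key lookup, which suits interactive typing.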
