35c917e9b2297baf6a23785acc01112c.ppt

- Number of slides: 29

What Works? Evaluating the Impact of Active Labor Market Policies

May 2010, Budapest, Hungary

Joost de Laat (Ph.D.), Economist, Human Development

Outline
- Why Evidence-Based Decision Making?
- Active Labor Market Policies: Summary of Findings
- Where Is the Evidence? The Challenge of Evaluating Program Impact
- Ex Ante and Ex Post Evaluation

Why Evidence-Based Decision Making?
- Limited resources to address needs
- Multiple policy options to address needs
- Rigorous evidence is often lacking for prioritizing policy options and program elements

Active Labor Market Policies: Getting the Unemployed into Jobs
- Improve matching of workers and jobs
  - Assist in job search
- Improve quality of labor supply
  - Business training, vocational training
- Provide direct labor incentives
  - Job creation schemes such as public works

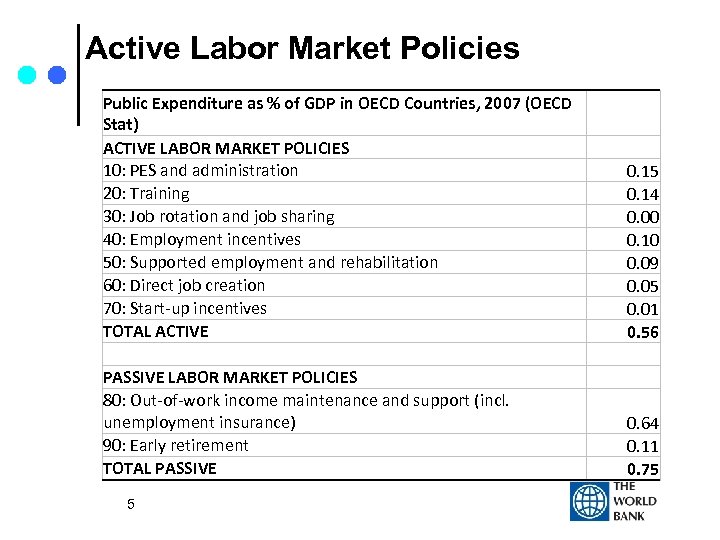

Active Labor Market Policies: Public Expenditure as % of GDP in OECD Countries, 2007 (OECD.Stat)

Active labor market policies:
- 10: PES (public employment services) and administration
- 20: Training
- 30: Job rotation and job sharing
- 40: Employment incentives
- 50: Supported employment and rehabilitation
- 60: Direct job creation
- 70: Start-up incentives
- TOTAL ACTIVE: 0.56

Values listed for categories 10–70: 0.14, 0.00, 0.10, 0.09, 0.05, 0.01.

Passive labor market policies:
- 80: Out-of-work income maintenance and support (incl. unemployment insurance): 0.64
- 90: Early retirement: 0.11
- TOTAL PASSIVE: 0.75

International Evidence on the Effectiveness of ALMPs
- "Active Labor Market Policy Evaluations: A Meta-Analysis," by David Card, Jochen Kluve, and Andrea Weber (2009)
  - Review of 97 studies between 1995 and 2007
- "The Effectiveness of European Active Labor Market Policy," by Jochen Kluve (2006)
  - Review of 73 studies between 2002 and 2005

Do ALMPs Help the Unemployed Find Work? (Card et al. 2009; Kluve 2006)
- Subsidized public sector employment
  - Relatively ineffective
- Job search assistance (often the least expensive)
  - Generally favorable, especially in the short run
  - Promising when combined with sanctions (e.g., UK “New Deal”)
- Classroom and on-the-job training
  - Not especially favorable in the short run
  - More positive impacts after 2 years

Do ALMPs Help the Unemployed Find Work? (Card et al. 2009; Kluve 2006)
- ALMPs targeted at youth
  - Findings are mixed

The Impact Evaluation Challenge
- Impact is the difference in outcomes with and without the program for those beneficiaries who participate in the program
- Problem: beneficiaries have only one existence; they either participate in the program or they do not
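The definition above can be written in the potential-outcomes notation that is standard in the evaluation literature. The symbols are introduced here and do not appear on the slides: $Y_i(1)$ is person $i$'s outcome with the program, $Y_i(0)$ the outcome without it, $D_i = 1$ if the person participates, and $Y_i$ is the outcome actually observed. The identity below shows why simply comparing participants with non-participants can mislead:

```latex
\underbrace{E[Y_i \mid D_i = 1] - E[Y_i \mid D_i = 0]}_{\text{naive comparison}}
  = \underbrace{E[Y_i(1) - Y_i(0) \mid D_i = 1]}_{\text{impact on participants}}
  + \underbrace{E[Y_i(0) \mid D_i = 1] - E[Y_i(0) \mid D_i = 0]}_{\text{selection bias}}
```

The term $E[Y_i(0) \mid D_i = 1]$ is the "other existence" that is never observed. The selection-bias term vanishes only when non-participants' outcomes match what participants would have experienced without the program.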

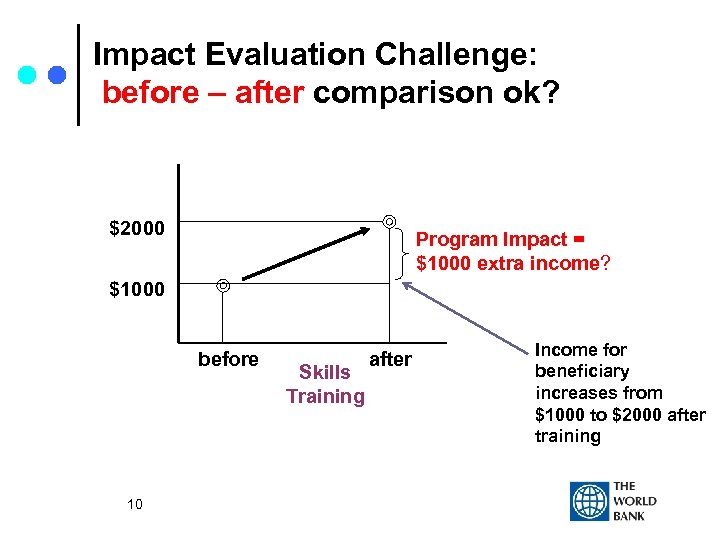

Impact Evaluation Challenge: Is a Before–After Comparison OK?
- Income for the beneficiary increases from $1,000 before skills training to $2,000 after training
- Program impact = $1,000 extra income?

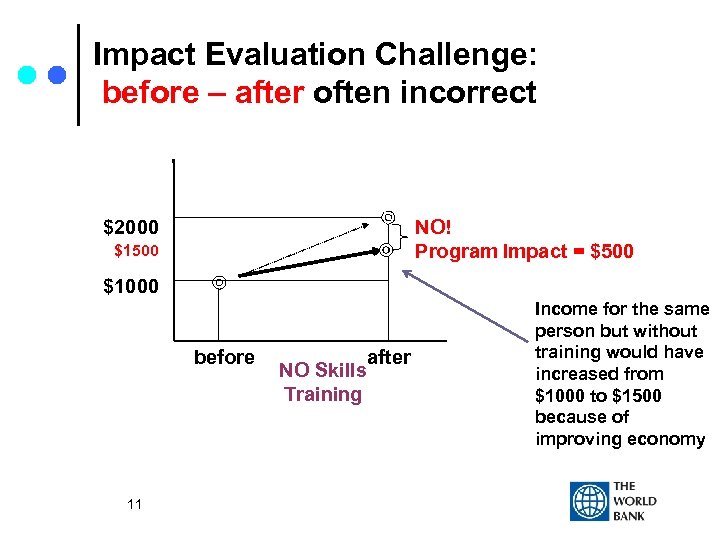

Impact Evaluation Challenge: Before–After Is Often Incorrect
- NO! Program impact = $500
- Without training, income for the same person would have increased from $1,000 to $1,500 because of the improving economy
- With training, income reaches $2,000, so only $2,000 − $1,500 = $500 is due to the program
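The arithmetic on the two before–after slides can be written as a short sketch. The function names are hypothetical, and the $1,500 income without training is supplied by assumption: in a real evaluation it is exactly the counterfactual that cannot be observed.

```python
def before_after_estimate(income_before: float, income_after: float) -> float:
    """Naive estimate: change in the participant's own income over time."""
    return income_after - income_before


def counterfactual_impact(income_with_program: float, income_without_program: float) -> float:
    """Impact: outcome with the program minus the outcome without it (the counterfactual)."""
    return income_with_program - income_without_program


# Figures from the slides; the $1,500 counterfactual is assumed, not observed.
naive = before_after_estimate(1000, 2000)   # 1000
impact = counterfactual_impact(2000, 1500)  # 500
bias = naive - impact                       # 500, due to the improving economy
print(f"before-after: ${naive}, impact: ${impact}, bias: ${bias}")
```

The before–after estimate attributes the whole $1,000 increase to training, including the $500 that the improving economy would have delivered anyway.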

Impact Evaluation Challenge
- Solution: a proper comparison group
- On average, the comparison group's outcomes must be identical to the outcomes the treatment group would have had if it had not participated in the program

Impact Evaluation Approaches

Ex ante:

1. Randomized evaluations
2. Double-difference (DD) methods

Ex post:

3. Propensity score matching (PSM)
4. Regression discontinuity (RD) design
5. Instrumental variable (IV) methods

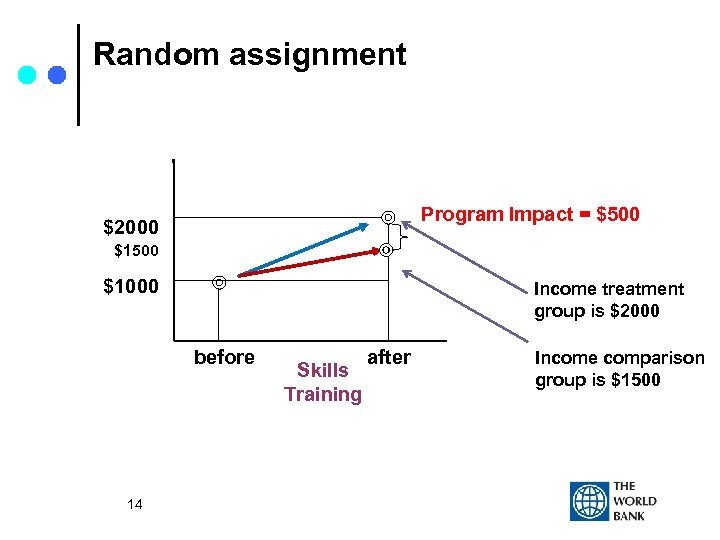

Random Assignment
- Both groups start at an income of $1,000 before skills training
- After training, income of the treatment group is $2,000
- Income of the comparison group is $1,500
- Program impact = $2,000 − $1,500 = $500

Randomized Assignment Ensures a Proper Comparison Group
- Ensures that the treatment and comparison groups are, on average, the same at the start of the program (in background and outcomes)
- Any systematic differences that arise after the program must therefore be due to the program and not to selection bias
- “Gold standard” for evaluations, but not always feasible
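A small simulation can illustrate why random assignment removes selection bias. It is a sketch under made-up assumptions, not data from any study cited here: "motivation" raises income even without training, the true training effect is fixed at $500, and people are either assigned by coin flip or self-select when highly motivated.

```python
import random
from statistics import mean

TRUE_IMPACT = 500.0  # hypothetical effect of skills training on income ($)


def income_without_training(motivation: float, rng: random.Random) -> float:
    """Hypothetical counterfactual income: more motivated people earn more anyway."""
    return 1500.0 + 1000.0 * motivation + rng.gauss(0, 100)


def estimate_impact(n: int, assignment: str, seed: int = 0) -> float:
    """Difference in mean income between participants and non-participants."""
    rng = random.Random(seed)
    treated, comparison = [], []
    for _ in range(n):
        motivation = rng.random()  # uniform on [0, 1)
        y0 = income_without_training(motivation, rng)
        if assignment == "random":
            participates = rng.random() < 0.5  # coin flip, independent of motivation
        elif assignment == "self-selection":
            participates = motivation > 0.5  # only the more motivated enroll
        else:
            raise ValueError(f"unknown assignment: {assignment}")
        if participates:
            treated.append(y0 + TRUE_IMPACT)
        else:
            comparison.append(y0)
    return mean(treated) - mean(comparison)


print(round(estimate_impact(20_000, "random")))          # close to 500
print(round(estimate_impact(20_000, "self-selection")))  # close to 1,000
```

With a coin-flip assignment the difference in means should land near the assumed $500. Under self-selection it should come out near $1,000, because in this setup the more motivated participants would have earned about $500 more even without training.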

Examples of Randomized ALMP Evaluations
- Improve matching of workers and jobs
  - Counseling the unemployed in France
- Improve quality of labor supply
  - Providing vocationally focused training for disadvantaged youth in the USA (Job Corps)
- Provide direct labor demand/supply incentives
  - Canadian Self-Sufficiency Project

Challenges to Randomized Designs
- Cost
- Ethical concerns: withholding a potentially beneficial program may be unethical
- Ethical concerns must be balanced against:
  - the fact that programs cannot reach all beneficiaries (and randomization may be the fairest way to allocate places)
  - the potentially large benefits to society of knowing the program's impact …

Societal Benefits
- Rigorous findings lead to scale-up:
  - Various US ALMP programs: funding by the US Congress contingent on positive impact evaluation (IE) findings
  - Oportunidades (PROGRESA), Mexico
  - Primary school deworming, Kenya
  - Balsakhi remedial education, India

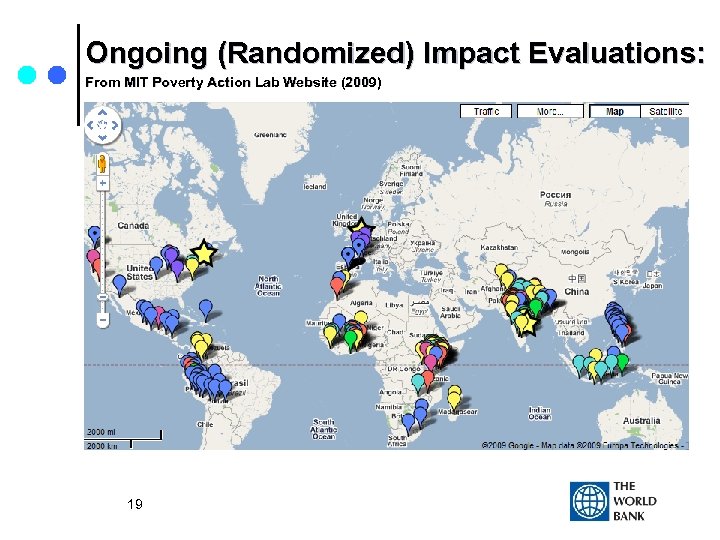

Ongoing (Randomized) Impact Evaluations: From MIT Poverty Action Lab Website (2009)

World Bank’s Development Impact Evaluation Initiative (DIME)
- 12 impact evaluation clusters:
  - Conditional Cash Transfers
  - Early Childhood Development
  - Education Service Delivery
  - HIV/AIDS Treatment and Prevention
  - Local Development
  - Malaria Control
  - Pay-for-Performance in Health
  - Rural Roads
  - Rural Electrification
  - Urban Upgrading
  - ALMP and Youth Employment

Other Evaluation Approaches

Ex ante:

1. Randomized evaluations
2. Double-difference (DD) methods

Ex post:

3. Propensity score matching (PSM)
4. Regression discontinuity (RD) design
5. Instrumental variable (IV) methods

Non-Randomized Impact Evaluations (“Quasi-Experimental Methods”)
- The comparison group is constructed by the evaluator
- Challenge: the evaluator can never be sure whether the comparison group behaves as the treatment group would have without the program (selection bias)

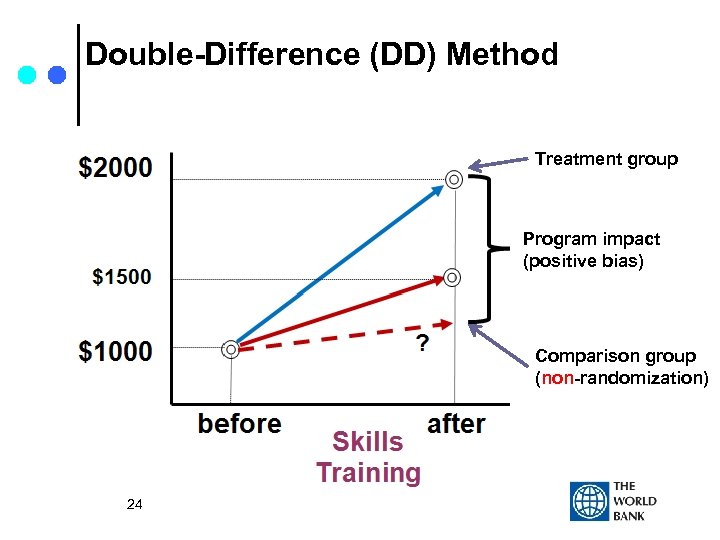

Example: Suppose Only Very Motivated Underemployed Workers Seek Extra Skills Training
- Data on (very motivated) underemployed individuals who participated in skills training
- Construct a comparison group from (less motivated) underemployed individuals who did not participate in skills training
- DD method: the evaluator compares the increase in average incomes between the two groups

Double-Difference (DD) Method

[Figure: treatment group vs. non-randomized comparison group; the estimated program impact includes a positive bias]
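The double-difference calculation, and the positive bias the figure points to, can be sketched as follows. All incomes are hypothetical. In particular, the assumption that the motivated group would have reached $1,700 without training is exactly the quantity an evaluator cannot observe.

```python
from statistics import mean


def double_difference(treat_before, treat_after, comp_before, comp_after) -> float:
    """DD estimate: change in the treatment group's mean income minus
    change in the comparison group's mean income."""
    treat_change = mean(treat_after) - mean(treat_before)
    comp_change = mean(comp_after) - mean(comp_before)
    return treat_change - comp_change


# Hypothetical incomes ($) for the same individuals before and after training.
treat_before = [900, 1000, 1100]  # very motivated trainees
treat_after = [1900, 2000, 2100]
comp_before = [900, 1000, 1100]   # less motivated non-trainees
comp_after = [1400, 1500, 1600]

dd = double_difference(treat_before, treat_after, comp_before, comp_after)  # 500

# Assumption: without training, the motivated group's mean income would have
# risen to $1,700 (faster than the comparison group's $1,500). This
# counterfactual is never observed in practice.
true_impact = mean(treat_after) - 1700  # 300
bias = dd - true_impact                 # 200: a positive bias
print(f"DD estimate: ${dd}, true impact: ${true_impact}, bias: ${bias}")
```

DD removes differences in income levels that exist before the program, but it still credits the program with any difference in income growth between the groups. Here the motivated group's faster growth shows up as a $200 positive bias.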

Non-Experimental Designs
- May provide an unbiased estimate of impact
- Rely on assumptions about the comparison group
- These assumptions are usually impossible to verify
- Bias is generally smaller when the evaluator has detailed background variables (covariates)
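The covariate point can be illustrated with a simulation that swaps in one simple technique, exact stratification on an observed covariate (a basic form of matching). Everything here is hypothetical: motivation is assumed to be recorded in the data, to raise income by $500, and to make participation four times as likely (80% vs. 20%); the true training effect is set to $500.

```python
import random
from statistics import mean

TRUE_IMPACT = 500.0  # hypothetical training effect ($)


def simulate(n: int, seed: int = 1):
    """Hypothetical data: motivation is observed, raises income, and drives participation."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        motivated = rng.random() < 0.5
        treated = rng.random() < (0.8 if motivated else 0.2)
        y0 = 1000.0 + (500.0 if motivated else 0.0) + rng.gauss(0, 100)
        rows.append((motivated, treated, y0 + (TRUE_IMPACT if treated else 0.0)))
    return rows


def naive_estimate(rows) -> float:
    """Difference in means, ignoring the covariate."""
    return (mean(y for _, t, y in rows if t)
            - mean(y for _, t, y in rows if not t))


def stratified_estimate(rows) -> float:
    """Compare participants and non-participants within each covariate value,
    then average the gaps weighted by the number of participants per stratum."""
    weighted_sum, n_treated = 0.0, 0
    for level in (False, True):
        treated_y = [y for m, t, y in rows if m == level and t]
        control_y = [y for m, t, y in rows if m == level and not t]
        weighted_sum += (mean(treated_y) - mean(control_y)) * len(treated_y)
        n_treated += len(treated_y)
    return weighted_sum / n_treated


rows = simulate(20_000)
print(round(naive_estimate(rows)))       # roughly 800: biased upward
print(round(stratified_estimate(rows)))  # close to 500
```

The adjustment helps only because motivation is observed. If it were unrecorded, comparing within strata would be impossible and the estimate would carry the same bias as the naive one, which is why the assumptions behind non-experimental designs are so hard to verify.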

Assessing Validity of Non-Randomized Impact Evaluations
- Verify that pre-program characteristics are the same in the treatment and comparison groups
- Test the “impact” of the program on an outcome variable that should not be affected by the program
- Note: apart from chance differences, both checks will hold in properly designed randomized evaluations
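The first check can be sketched as a standardized mean difference for each pre-program characteristic. The function and the example incomes are hypothetical, and the 0.1 threshold is a commonly used rule of thumb rather than a formal test.

```python
from statistics import mean, pvariance


def standardized_difference(treated, comparison) -> float:
    """Standardized mean difference of a pre-program characteristic:
    (mean_T - mean_C) / sqrt((var_T + var_C) / 2), using population variances."""
    pooled_sd = ((pvariance(treated) + pvariance(comparison)) / 2) ** 0.5
    if pooled_sd == 0:
        raise ValueError("both groups are constant; difference is undefined")
    return (mean(treated) - mean(comparison)) / pooled_sd


# Hypothetical pre-program incomes ($) for the two groups.
pre_income_treated = [1000, 1200, 1100, 1300]
pre_income_comparison = [900, 1000, 950, 1050]

d = standardized_difference(pre_income_treated, pre_income_comparison)
THRESHOLD = 0.1  # common rule of thumb, not a formal test
print(f"standardized difference = {d:.2f}; imbalanced: {abs(d) > THRESHOLD}")
```

The same function can be applied to the second check: computed on an outcome the program should not affect, a large standardized difference signals that the groups differed for reasons other than the program.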

Conclusion
- Everything else being equal, experimental designs are preferred, but assess case by case
- Most appropriate when:
  - The program is new and in a pilot phase
  - The program is not in a pilot phase but receives large amounts of resources and its impact is questioned
- Non-experimental evaluations are often cheaper, but interpreting their results requires more scrutiny

THANK YOU!

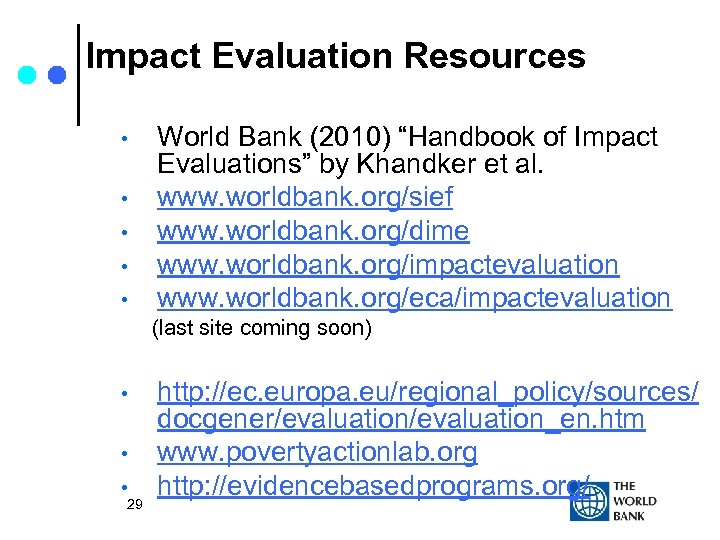

Impact Evaluation Resources
- World Bank (2010), “Handbook on Impact Evaluation” by Khandker et al.
- www.worldbank.org/sief
- www.worldbank.org/dime
- www.worldbank.org/impactevaluation
- www.worldbank.org/eca/impactevaluation (coming soon)
- http://ec.europa.eu/regional_policy/sources/docgener/evaluation_en.htm
- www.povertyactionlab.org
- http://evidencebasedprograms.org/
