
- Number of slides: 18

What is SRM?
Alex Sim
Scientific Data Management Research Group
Computational Research Division
Lawrence Berkeley National Laboratory
A. Sim, CRD, LBNL, OSG Site Administrators Meeting, Aug. 7, 2009

What is SRM?
• Storage Resource Managers (SRMs)
  • Middleware components in Grid
  • Provide file and space management on shared storage resources
  • Provide dynamic space allocation/reservation
  • Different implementations for underlying storage systems
  • Based on the SRM specification

What does that mean?
• SRMs in the data grid
  • Get/put files from/into storage spaces
  • Archived files on mass storage systems
  • Based on standard interface
  • File transfers from/to remote sites, file replication
  • Negotiate transfer protocols
  • Manages shared storage space allocation & reservation
    • Important for data intensive applications
  • File and space management with lifetime
  • Supports non-blocking (asynchronous) requests
  • Supports directory management
  • Interoperate with other SRMs
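
The "negotiate transfer protocols" item can be illustrated with a minimal sketch. It assumes the client sends an ordered list of preferred protocols and the server answers with the first one it also supports. The function name and the protocol lists are hypothetical and do not come from any SRM client library.

```python
from typing import Optional, Sequence


def negotiate_protocol(client_preferences: Sequence[str],
                       server_supported: Sequence[str]) -> Optional[str]:
    """Return the first client-preferred protocol the server also supports.

    Hypothetical helper for illustration only; a real SRM server applies
    its own selection policy.
    """
    supported = {p.lower() for p in server_supported}
    for protocol in client_preferences:
        if protocol.lower() in supported:
            return protocol
    return None  # no protocol in common


# The client prefers gsiftp, but this (made-up) server offers only file and https.
print(negotiate_protocol(["gsiftp", "https", "file"], ["file", "https"]))
```

Keeping the client's order decisive means that a site can add a protocol later without changing which one existing clients end up with.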

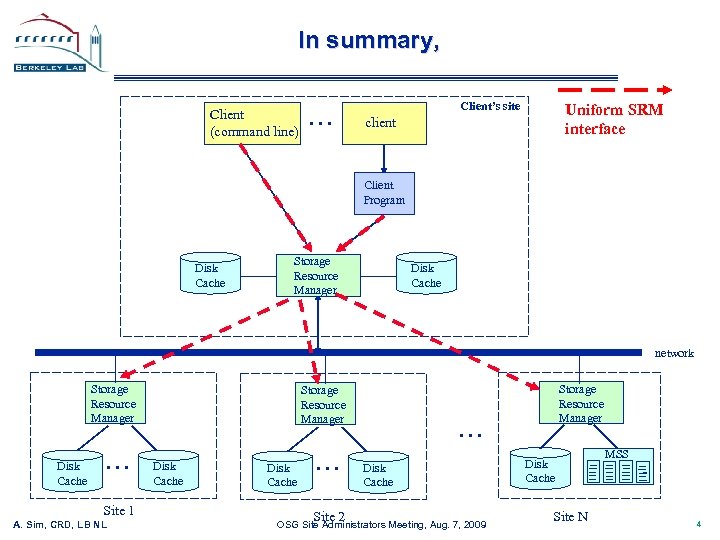

In summary,
[Figure: clients (command line or client program) at the client's site reach, through a uniform SRM interface and over the network, the Storage Resource Managers at Site 1, Site 2, ..., Site N. Diagram labels: Client, Client Program, Disk Cache, Storage Resource Manager, network, MSS.]

How many SRMs out there?
• Berkeley Storage Manager (BeStMan) – LBNL
• CASTOR – CERN, RAL
• dCache – FNAL, DESY, NDGF
• Disk Pool Manager (DPM) – CERN
• Storage Resource Manager (StoRM) – INFN/CNAF, ICTP/EGRID
• SRM on SRB – SINICA – TWGRID/EGEE
• OSG supports – BeStMan, dCache
• Clients
  • LBNL SRM clients, FNAL SRM clients, LCG-utils, FTS, PhEDEx, …
  • S2, SRM-Tester
• SRMs at Work
  • Europe/Asia/Canada/South America/Australia/Africa: LCG/EGEE
    • 250+ deployments, managing more than 10 PB (as of 11/11/2008)
  • US
    • Estimated at about 70 deployments (as of 5/30/2009)
    • OSG, ESG, …

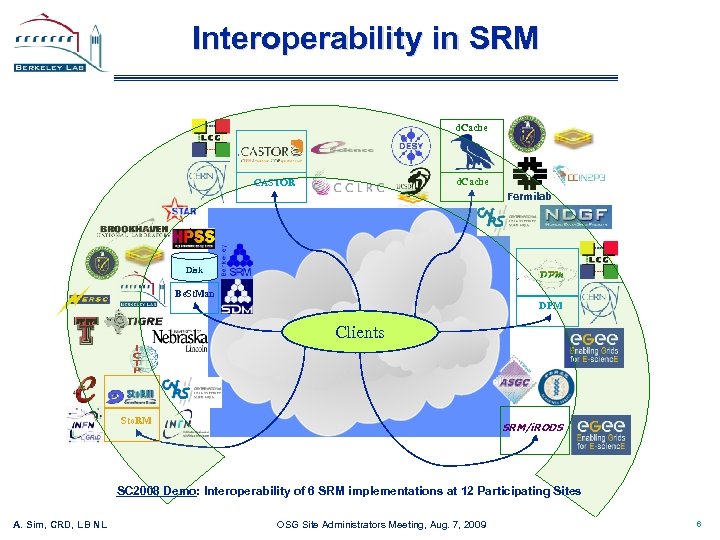

Interoperability in SRM
[Figure: interoperability diagram. Labels: dCache, CASTOR, Fermilab, Disk, BeStMan, DPM, Clients, StoRM, SRM/iRODS.]
SC 2008 Demo: Interoperability of 6 SRM implementations at 12 Participating Sites

Summary and Current Status
• Storage Resource Management – essential for Grid
• OGF Standard
• Multiple implementations interoperate
• Permits special purpose implementations for unique products
• Permits replacing one SRM product with another
• Multiple SRM implementations exist
• In production use

Documents and Support
• SRM Collaboration and SRM Specifications
  • http://sdm.lbl.gov/srm-wg
  • BeStMan (Berkeley Storage Manager): http://sdm.lbl.gov/bestman
  • CASTOR (CERN Advanced STORage manager): http://www.cern.ch/castor
  • dCache: http://www.dcache.org
  • DPM (Disk Pool Manager): https://twiki.cern.ch/twiki/bin/view/LCG/DpmInformation
  • StoRM (Storage Resource Manager): http://storm.forge.cnaf.infn.it
  • SRM-SRB: http://lists.grid.sinica.edu.tw/apwiki/SRM-SRB
• Other info
  • osg-storage@opensciencegrid.org
  • srm@lbl.gov

Extra

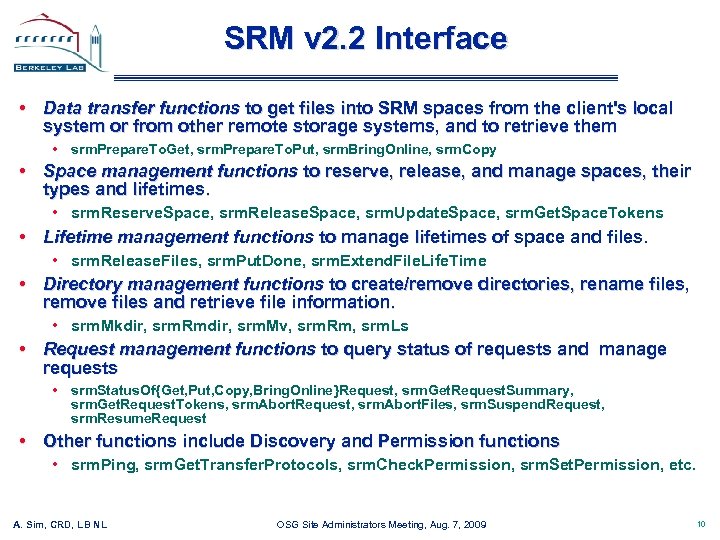

SRM v2.2 Interface
• Data transfer functions to get files into SRM spaces from the client's local system or from other remote storage systems, and to retrieve them
  • srmPrepareToGet, srmPrepareToPut, srmBringOnline, srmCopy
• Space management functions to reserve, release, and manage spaces, their types and lifetimes
  • srmReserveSpace, srmReleaseSpace, srmUpdateSpace, srmGetSpaceTokens
• Lifetime management functions to manage lifetimes of space and files
  • srmReleaseFiles, srmPutDone, srmExtendFileLifeTime
• Directory management functions to create/remove directories, rename files, remove files and retrieve file information
  • srmMkdir, srmRmdir, srmMv, srmRm, srmLs
• Request management functions to query status of requests and manage requests
  • srmStatusOf{Get,Put,Copy,BringOnline}Request, srmGetRequestSummary, srmGetRequestTokens, srmAbortRequest, srmAbortFiles, srmSuspendRequest, srmResumeRequest
• Other functions include Discovery and Permission functions
  • srmPing, srmGetTransferProtocols, srmCheckPermission, srmSetPermission, etc.
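
The non-blocking request pattern behind srmPrepareToGet, srmStatusOfGetRequest and srmReleaseFiles can be sketched as follows. This is a toy in-memory stand-in, not a real SRM client: the class `MockSRM`, its method names, the status strings `QUEUED`/`READY`/`RELEASED`, and the way the TURL is derived from the SURL are all assumptions made for illustration.

```python
class MockSRM:
    """In-memory stand-in for an SRM server (illustration only)."""

    def __init__(self):
        self._requests = {}
        self._next_id = 0

    def prepare_to_get(self, surl, staging_polls=2):
        """Queue a get request and return a request token immediately."""
        token = f"req-{self._next_id}"
        self._next_id += 1
        self._requests[token] = {
            "surl": surl,
            "remaining": staging_polls,  # simulated staging delay
            "status": "QUEUED",
            "turl": None,
        }
        return token

    def status_of_get_request(self, token):
        """Report progress; the file becomes READY after the simulated delay."""
        req = self._requests[token]
        if req["status"] == "QUEUED":
            if req["remaining"] > 0:
                req["remaining"] -= 1
            else:
                req["status"] = "READY"
                # Simplification: a real server picks the transfer URL itself.
                req["turl"] = req["surl"].replace("srm://", "gsiftp://", 1)
        return dict(req)

    def release_files(self, token):
        """Tell the server the client is done with the file."""
        self._requests[token]["status"] = "RELEASED"


def fetch(srm, surl, max_polls=10):
    """Submit a request, poll until a TURL is available, then release it."""
    token = srm.prepare_to_get(surl)
    for _ in range(max_polls):
        state = srm.status_of_get_request(token)
        if state["status"] == "READY":
            turl = state["turl"]
            # A real client would transfer the file from the TURL here.
            srm.release_files(token)
            return turl
        # A real client would sleep between polls instead of spinning.
    raise TimeoutError(f"request {token} not ready after {max_polls} polls")


print(fetch(MockSRM(), "srm://example.org/data/file1"))
```

The point of the token is that the client never holds a connection open while the server stages the file; it only comes back to ask.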

Berkeley Storage Manager (BeStMan)
LBNL
• Java implementation
• Designed to work with Unix-based disk systems
  • As well as MSS to stage/archive from/to its own disk (currently HPSS)
  • Adaptable to other file systems and storages (e.g. NCAR MSS, Hadoop, Lustre, Xrootd)
• Uses in-memory database (BerkeleyDB)
• Multiple transfer protocols
• Space reservation
• Directory management (no ACLs)
• Can copy files from/to remote SRMs
• Can copy entire directory robustly
• Local Policy
  • Fair request processing
  • File replacement in disk
  • Garbage collection
• Large scale data movement of thousands of files
• Recovers from transient failures (e.g. MSS maintenance, network down)

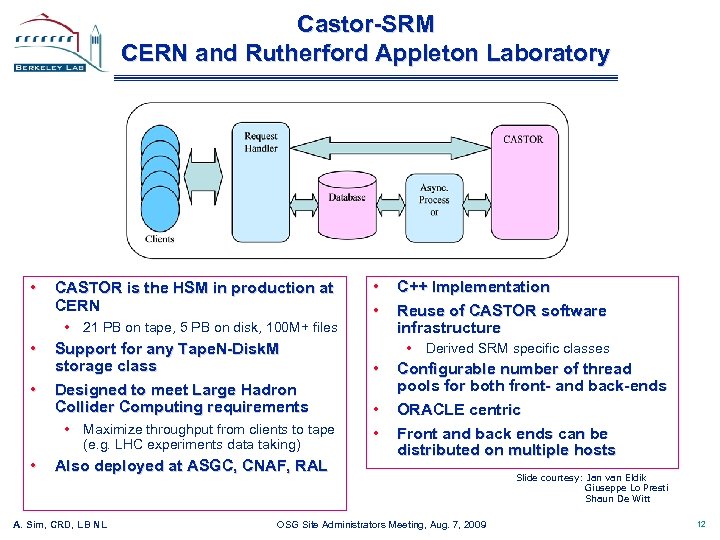

Castor-SRM
CERN and Rutherford Appleton Laboratory
• CASTOR is the HSM in production at CERN
  • 21 PB on tape, 5 PB on disk, 100M+ files
  • Support for any TapeN-DiskM storage class
• Designed to meet Large Hadron Collider Computing requirements
  • Maximize throughput from clients to tape (e.g. LHC experiments data taking)
• Also deployed at ASGC, CNAF, RAL
• C++ implementation
• Reuse of CASTOR software infrastructure
  • Derived SRM specific classes
• Configurable number of thread pools for both front- and back-ends
• ORACLE centric
• Front and back ends can be distributed on multiple hosts
Slide courtesy: Jan van Eldik, Giuseppe Lo Presti, Shaun De Witt
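
The "TapeN-DiskM" notation can be made concrete with a small sketch. It assumes that N and M are the numbers of copies kept on tape and on disk, so that a label such as Tape1Disk0 means one tape copy and no guaranteed disk copy. The `StorageClass` type and `parse_storage_class` function are hypothetical names, not part of CASTOR.

```python
import re
from typing import NamedTuple


class StorageClass(NamedTuple):
    tape_copies: int
    disk_copies: int


# Accepts "Tape1Disk0" as well as "Tape1-Disk0" (hyphen optional).
_PATTERN = re.compile(r"^Tape(\d+)-?Disk(\d+)$", re.IGNORECASE)


def parse_storage_class(label: str) -> StorageClass:
    """Split a TapeN-DiskM label into its two copy counts."""
    match = _PATTERN.match(label.strip())
    if match is None:
        raise ValueError(f"not a TapeN-DiskM label: {label!r}")
    return StorageClass(int(match.group(1)), int(match.group(2)))


for label in ("Tape1Disk0", "Tape1Disk1", "Tape0-Disk1"):
    print(label, parse_storage_class(label))
```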

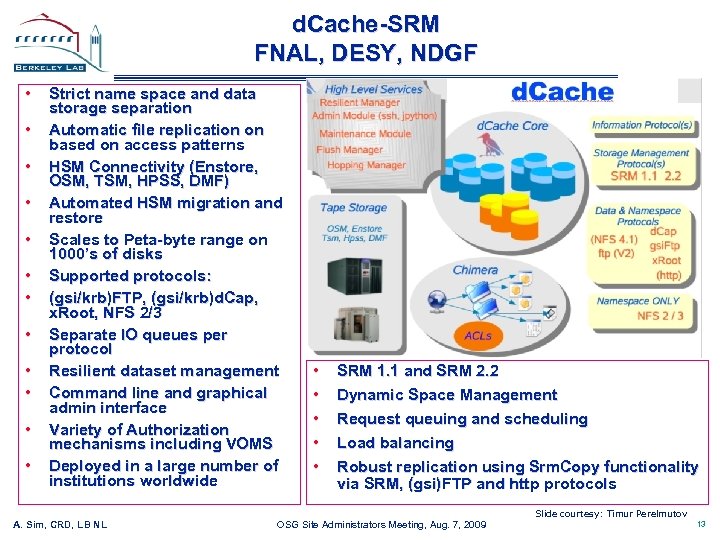

dCache-SRM
FNAL, DESY, NDGF
• Strict name space and data storage separation
• Automatic file replication based on access patterns
• HSM connectivity (Enstore, OSM, TSM, HPSS, DMF)
• Automated HSM migration and restore
• Scales to petabyte range on 1000s of disks
• Supported protocols: (gsi/krb)FTP, (gsi/krb)dCap, xRoot, NFS 2/3
• Separate IO queues per protocol
• Resilient dataset management
• Command line and graphical admin interface
• Variety of authorization mechanisms including VOMS
• Deployed in a large number of institutions worldwide
• SRM 1.1 and SRM 2.2
• Dynamic space management
• Request queuing and scheduling
• Load balancing
• Robust replication using SrmCopy functionality via SRM, (gsi)FTP and http protocols
Slide courtesy: Timur Perelmutov

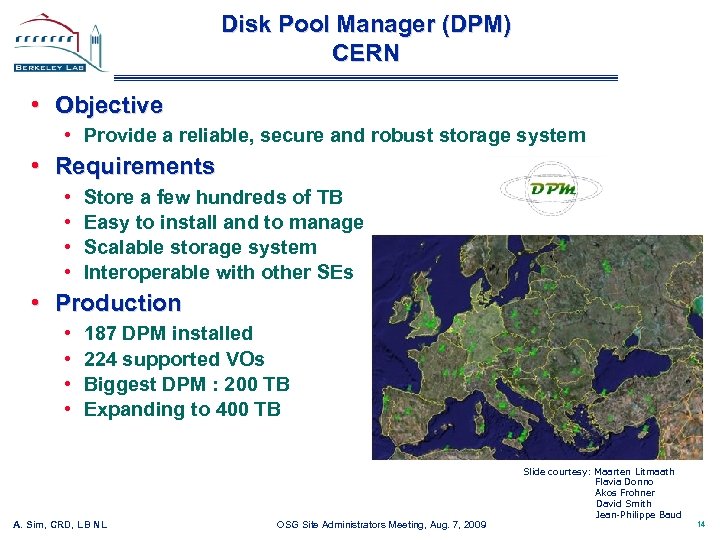

Disk Pool Manager (DPM)
CERN
• Objective
  • Provide a reliable, secure and robust storage system
• Requirements
  • Store a few hundred TB
  • Easy to install and to manage
  • Scalable storage system
  • Interoperable with other SEs
• Production
  • 187 DPM installed
  • 224 supported VOs
  • Biggest DPM: 200 TB
  • Expanding to 400 TB
Slide courtesy: Maarten Litmaath, Flavia Donno, Akos Frohner, David Smith, Jean-Philippe Baud

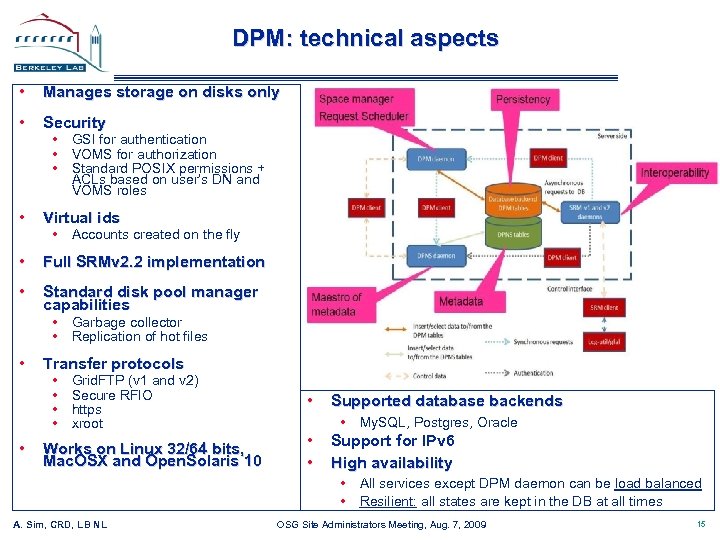

DPM: technical aspects
• Manages storage on disks only
• Full SRMv2.2 implementation
• Standard disk pool manager capabilities
  • Garbage collector
  • Replication of hot files
• Security
  • GSI for authentication
  • VOMS for authorization
  • Standard POSIX permissions + ACLs based on user's DN and VOMS roles
• Virtual ids
  • Accounts created on the fly
• Transfer protocols
  • GridFTP (v1 and v2)
  • Secure RFIO
  • https
  • xroot
• Works on Linux 32/64 bits, MacOSX and OpenSolaris 10
• Supported database backends
  • MySQL, Postgres, Oracle
• Support for IPv6
• High availability
  • All services except DPM daemon can be load balanced
  • Resilient: all states are kept in the DB at all times

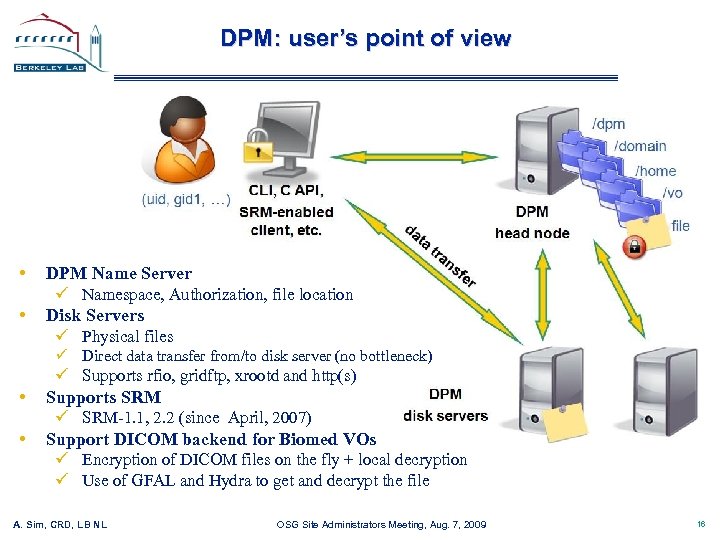

DPM: user's point of view
• DPM Name Server
  • Namespace, authorization, file location
• Disk Servers
  • Physical files
  • Direct data transfer from/to disk server (no bottleneck)
  • Supports rfio, gridftp, xrootd and http(s)
• Supports SRM
  • SRM-1.1, 2.2 (since April 2007)
• Supports DICOM backend for Biomed VOs
  • Encryption of DICOM files on the fly + local decryption
  • Use of GFAL and Hydra to get and decrypt the file

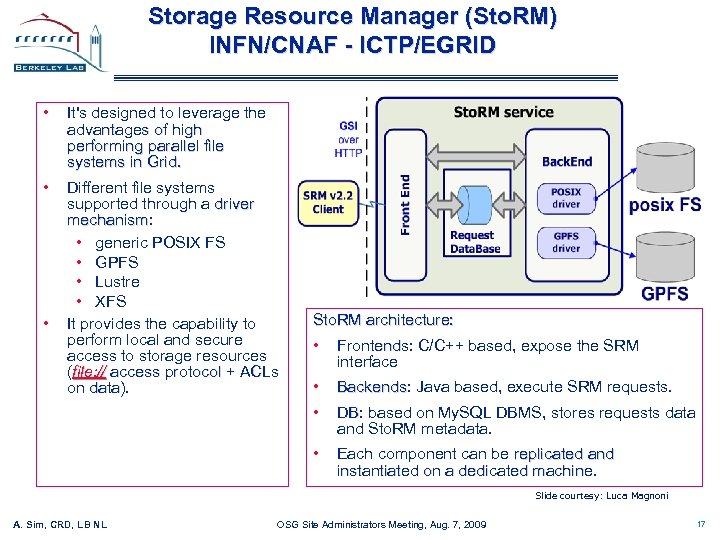

Storage Resource Manager (StoRM)
INFN/CNAF - ICTP/EGRID
• It's designed to leverage the advantages of high-performing parallel file systems in Grid.
• Different file systems supported through a driver mechanism:
  • generic POSIX FS
  • GPFS
  • Lustre
  • XFS
• It provides the capability to perform local and secure access to storage resources (file:// access protocol + ACLs on data).
• StoRM architecture:
  • Frontends: C/C++ based, expose the SRM interface
  • Backends: Java based, execute SRM requests
  • DB: based on MySQL DBMS, stores requests data and StoRM metadata
  • Each component can be replicated and instantiated on a dedicated machine.
Slide courtesy: Luca Magnoni

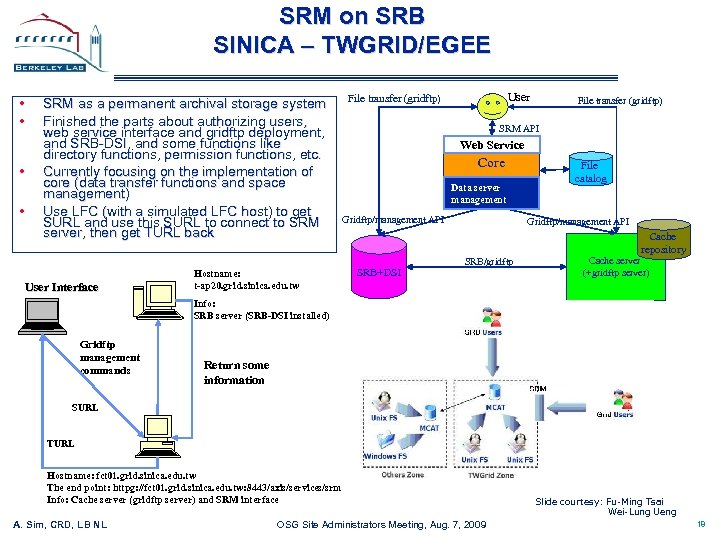

SRM on SRB
SINICA – TWGRID/EGEE
• SRM as a permanent archival storage system
• Finished: user authorization, web service interface, gridftp deployment, SRB-DSI, and some functions such as directory functions and permission functions
• Currently focusing on the implementation of the core (data transfer functions and space management)
• Use LFC (with a simulated LFC host) to get the SURL, use this SURL to connect to the SRM server, then get the TURL back
[Figure: architecture diagram. Labels: User, User Interface (hostname: t-ap20.grid.sinica.edu.tw), File transfer (gridftp), SRM API, Web Service, Core, Data server management, Gridftp/management API, File catalog, Cache repository, SRB+DSI, SRB/gridftp, Cache server (+gridftp server), SRB server (SRB-DSI installed), Gridftp management commands, Return some information, SURL, TURL. Cache server (gridftp server) and SRM interface hostname: fct01.grid.sinica.edu.tw.]
The end point: httpg://fct01.grid.sinica.edu.tw:8443/axis/services/srm
Slide courtesy: Fu-Ming Tsai, Wei-Lung Ueng
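
The SURL step above can be sketched with a small parser. It assumes the commonly seen SURL shape `srm://host:port/endpoint?SFN=/path`. The host and endpoint path are taken from the slide, but the file path and the `split_surl` helper are hypothetical, and the slide does not show what SURLs on this server actually look like.

```python
from urllib.parse import parse_qs, urlsplit


def split_surl(surl):
    """Break a SURL into host, port, web-service endpoint and file path.

    Illustration only: with an "?SFN=" query the URL path is treated as the
    service endpoint; without one the URL path is taken as the file path.
    """
    parts = urlsplit(surl)
    if parts.scheme != "srm":
        raise ValueError(f"not a SURL: {surl!r}")
    sfn = parse_qs(parts.query).get("SFN", [None])[0]
    return {
        "host": parts.hostname,
        "port": parts.port,
        "endpoint": parts.path if sfn else None,
        "path": sfn or parts.path,
    }


# Hypothetical file path on the server named in the slide.
example = "srm://fct01.grid.sinica.edu.tw:8443/axis/services/srm?SFN=/example/data/file1"
print(split_surl(example))
```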
