e2c63ef5a6e18ca6e31ccdea43deaab7.ppt

- Number of slides: 61

Web-Based Surveys: Questions, Answers, and Designs Kent L. Norman Department of Psychology Laboratory for Automation Psychology and Decision Processes http://lap.umd.edu Human/Computer Interaction Lab http://cs.umd.edu/hcil 2nd International Congress on Interface Design and HCI Rio de Janeiro, Brazil

Reasons for Web-based Surveys • Cost-efficiency • Dissemination-collection • Central control • Automation • Hypermedia

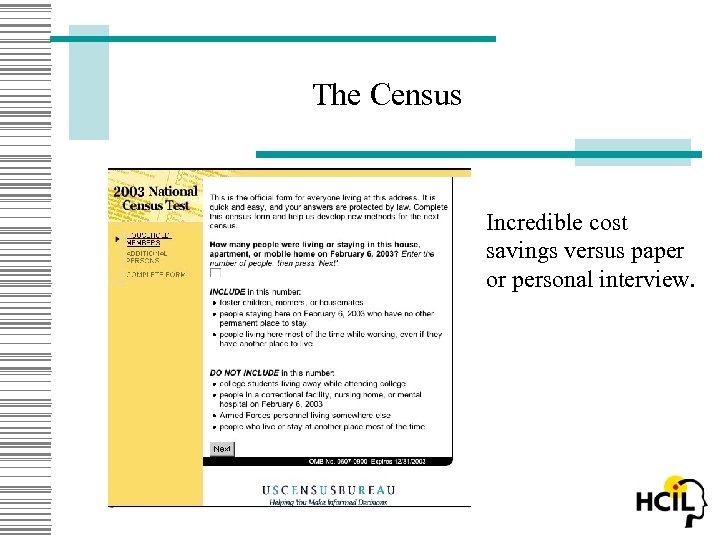

The Census Incredible cost savings versus paper or personal interview.

Warnings: Pitfalls of Bad Design • Good ideas don't always migrate directly to the Web. • Bad design is easier to generate than good design. • One bad design leads to another.

Interface Issues with Surveys • Formatting and Navigation • Organization of Items and Navigation • Automatic Customization • Conditional Jumps and Navigation • Edits and Corrections and Navigation

Advantages of Computerized Questionnaires • Computerized surveys are generated in a similar way to paper-and-pencil or personal interview surveys, but they are installed on a WWW server. • The server delivers the survey to any number of client browsers. • The respondent data is returned and stored on the server and then analyzed.

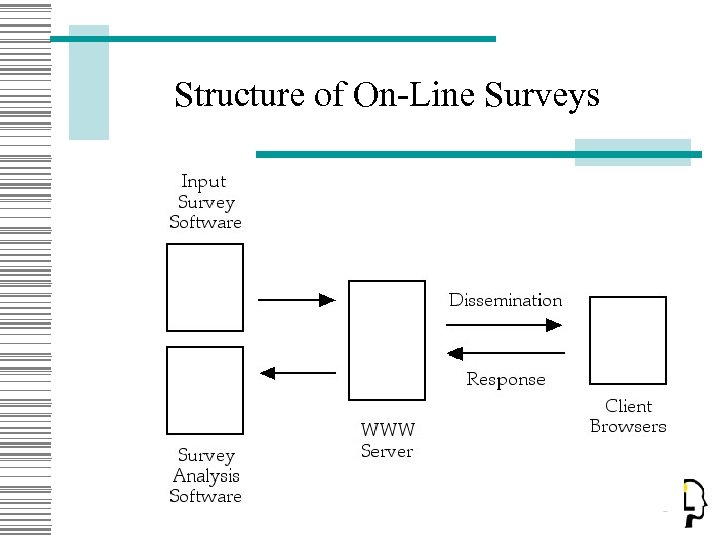

Structure of On-Line Surveys

Problems

Vendors An amazing number of companies offer software and services to generate surveys and questionnaires on-line with varying degrees of pricing and quality. • Dipolar • Formsite • Hosted Survey • insitefulsurveys.com • instantsurvey.com • Perseus Development Corporation • StatPac • SumQuest • Software Survey Chef • SurveyConnect Inc. • SurveyGold • SurveyPro • The Survey System by Creative Research Systems • surveytracker.com

HCI Problems While many vendors advertise the ease of use and functionality of the generation, dissemination, collection, and analysis of results, there is an amazing silence when it comes to a key issue: the design of the human/computer interface for the questionnaire.

Control Hosting the surveys on a WWW server allows for central control of dissemination of the survey and collection of the data. – Time of start up and shut down – Restricted access to the survey and authentication of the client – Limited control over the client's workstation and environmental variables (e.g., monitor size, open applications, bystanders)
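The first two control points (start-up and shut-down times, restricted access) can be sketched as a server-side check. This is a minimal illustration, not a description of any particular survey package: the dates, the token set, and the function name `may_access` are all hypothetical.

```python
from datetime import datetime
from typing import Tuple

# Hypothetical survey window and invited-respondent access codes (illustrative values).
SURVEY_OPENS = datetime(2003, 1, 6, 9, 0)
SURVEY_CLOSES = datetime(2003, 1, 20, 17, 0)
INVITED_TOKENS = {"a1b2c3", "d4e5f6"}


def may_access(token: str, now: datetime) -> Tuple[bool, str]:
    """Decide on the server whether a client may see the survey right now."""
    if now < SURVEY_OPENS:
        return False, "The survey has not opened yet."
    if now > SURVEY_CLOSES:
        return False, "The survey is closed."
    if token not in INVITED_TOKENS:
        return False, "This access code is not recognized."
    return True, "Welcome."
```

Because the check runs on the server, it applies to every client regardless of the respondent's browser or workstation, which the server cannot control.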

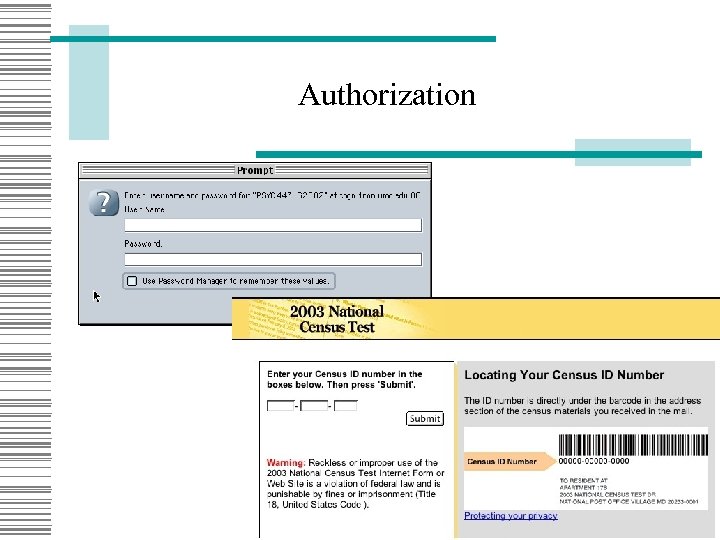

Authorization

Automation On-line surveys afford nearly endless options of automation from totally manual (paper-and-pencil like) surveys to interviews conducted by an intelligent agent.

Things that can be easily automated • Search for questions, topics, words • Sorting of questions by topic or type • Smart clustering of questions • Conditional branching • Smart navigation • Error checking and correction • Automatic form filling from database Unfortunately, few of the on-line software packages provide or even afford any automation on the client side.
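As a rough illustration of the first two items in the list (search and sorting), the sketch below searches a small set of questions by word and orders them by topic. The `Question` class, the sample items, and both function names are assumptions made for this example only.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Question:
    number: int
    topic: str
    text: str


# Hypothetical items, loosely modeled on a survey about a club's records.
QUESTIONS = [
    Question(1, "Budget", "What was the total income for the year?"),
    Question(2, "Officers", "Who is the current president?"),
    Question(3, "Budget", "How much was spent on events?"),
    Question(4, "Events", "How many events were held this year?"),
]


def search(questions: List[Question], word: str) -> List[Question]:
    """Return questions whose topic or text contains the word (case-insensitive)."""
    needle = word.lower()
    return [q for q in questions
            if needle in q.text.lower() or needle in q.topic.lower()]


def sort_by_topic(questions: List[Question]) -> List[Question]:
    """Order questions by topic, keeping item-number order within a topic."""
    return sorted(questions, key=lambda q: (q.topic, q.number))
```

For instance, `search(QUESTIONS, "events")` finds items 3 and 4, since the word appears in the text of one and the topic of the other.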

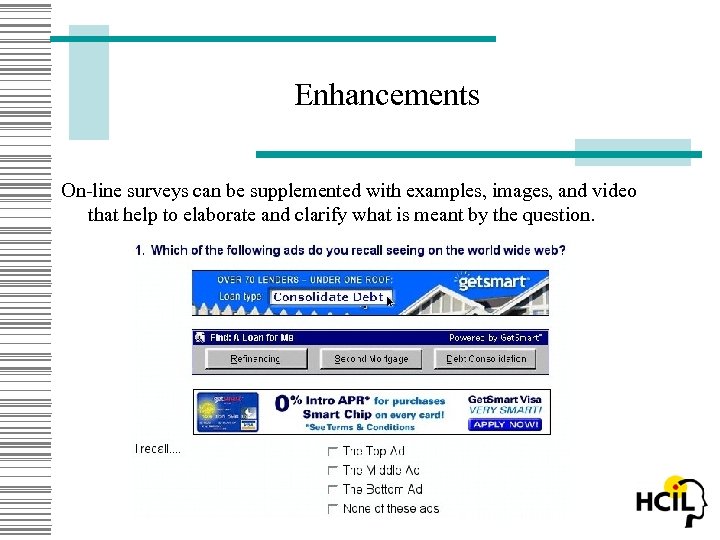

Enhancements On-line surveys can be supplemented with examples, images, and video that help to elaborate and clarify what is meant by the question.

Guidelines for Multimedia Guidelines: • Always ask whether the multimedia material is an enhancement that solves an information problem or whether it is a distraction ("eye candy"). • Does the illustration overspecify the question or the alternative when it should refer to a more general case?

Bad Design: Mindless Migration Most often we do things either the way we did them before or the way most easily afforded by the tool that we are using rather than the way that makes the most sense to the end user (survey respondent). • Reproduce paper surveys on-line as is: familiarity with layout transfers but other problems ensue (use of mouse and keyboard, scrolling, etc.). • Some things fit and others don't: layout is extremely important. • Remember navigation is a totally new thing on screen as opposed to paper.

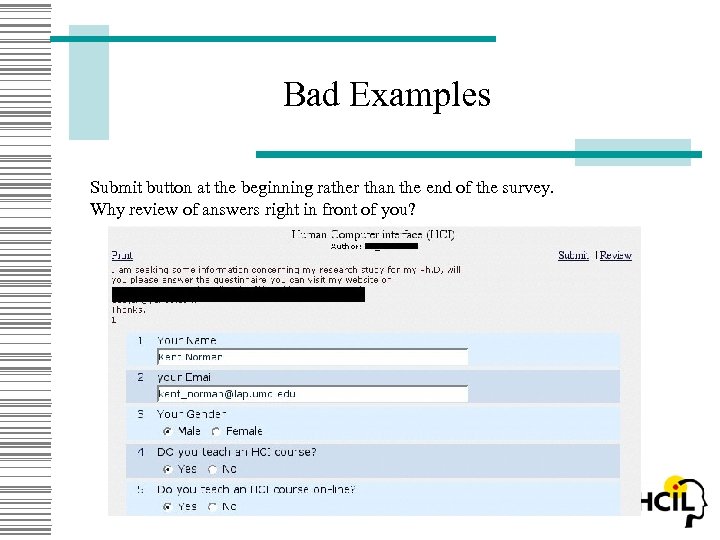

Bad Examples Submit button at the beginning rather than the end of the survey. Why offer a review of answers that are right in front of you?

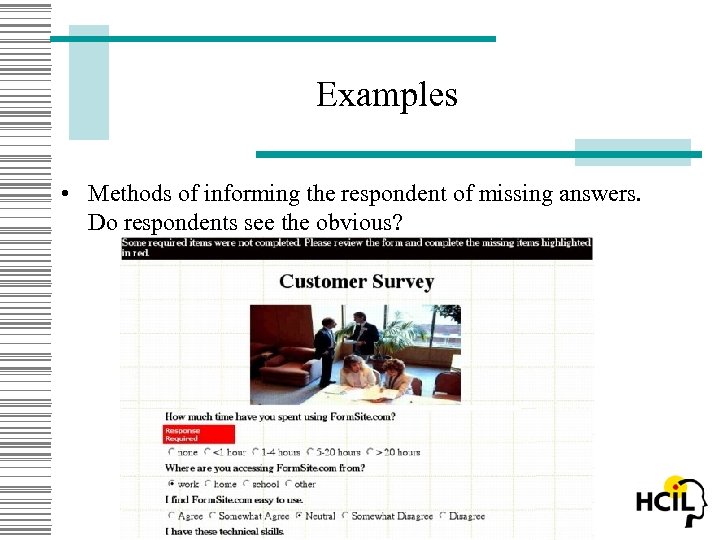

Examples • Methods of informing the respondent of missing answers. Do respondents see the obvious?

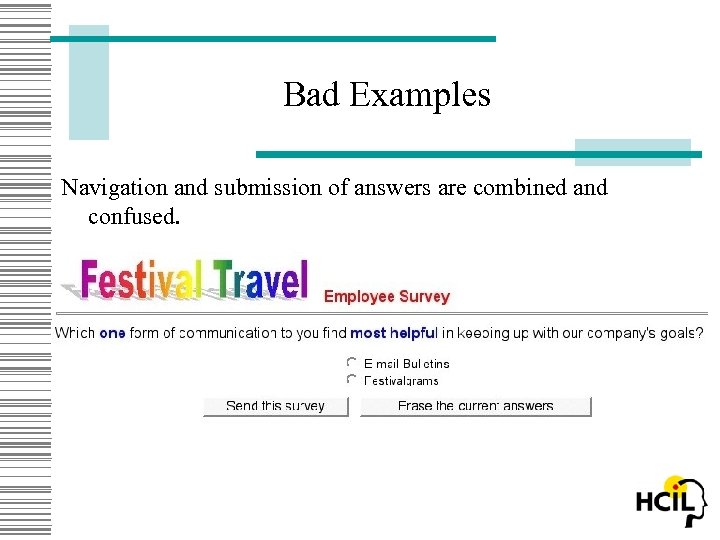

Bad Examples Navigation and submission of answers are combined and confused.

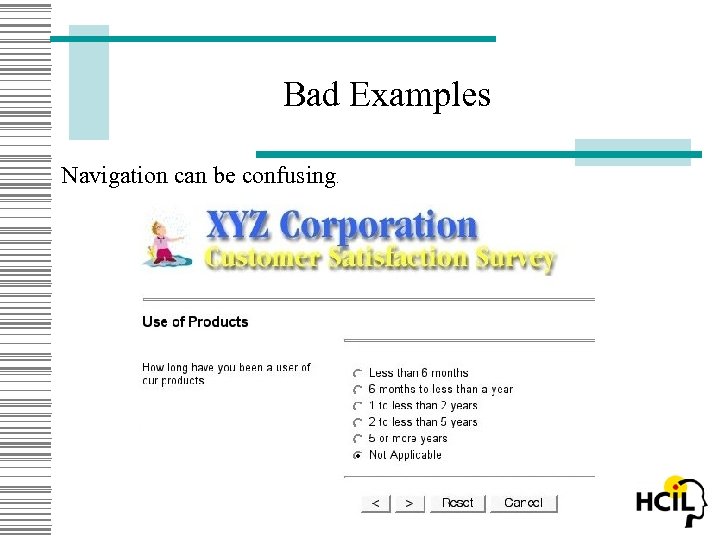

Bad Examples Navigation can be confusing.

Bad Examples Problems with paging AND scrolling.

Bad Templates With the rapid deployment of the surveys on the WWW, many people are turning to software that generates surveys on-the-fly. Unfortunately, many of these survey generation programs focus on ease of generation and analysis of results rather than on good survey design. Consequently, they tend to rapidly replicate bad design.

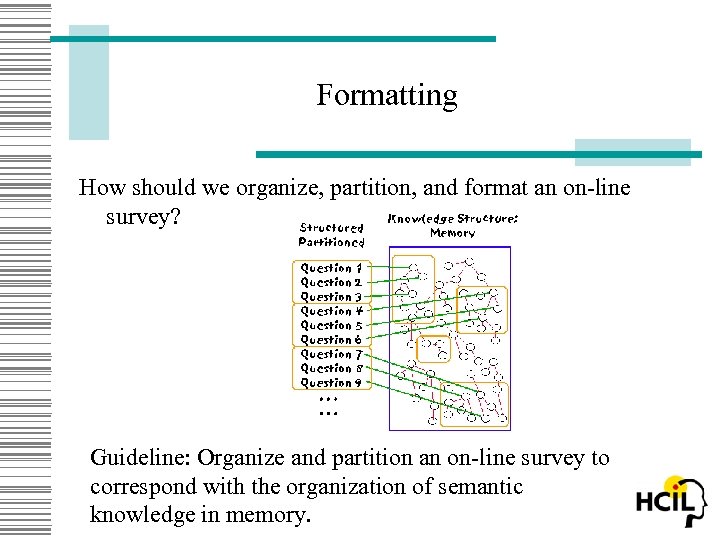

Formatting How should we organize, partition, and format an on-line survey? Guideline: Organize and partition an on-line survey to correspond with the organization of semantic knowledge in memory.

Access-Ability Then how should the survey be partitioned on-line into pages? Should it be Item-based, Form-based, or somewhere in between?

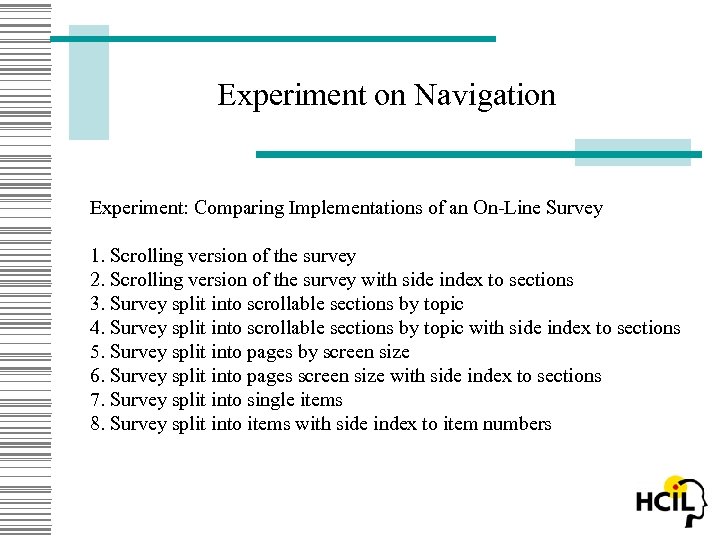

Experiment on Navigation Experiment: Comparing Implementations of an On-Line Survey 1. Scrolling version of the survey 2. Scrolling version of the survey with side index to sections 3. Survey split into scrollable sections by topic 4. Survey split into scrollable sections by topic with side index to sections 5. Survey split into pages by screen size 6. Survey split into pages by screen size with side index to sections 7. Survey split into single items 8. Survey split into items with side index to item numbers
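The four ways of partitioning the survey compared above (whole scrolling form, sections by topic, pages by screen size, single items) can be sketched as four functions over one list of items. This is only an illustration; the item data, the `per_page` parameter, and the function names are assumptions and do not come from the experiment's actual software.

```python
from itertools import groupby
from typing import List, Tuple

Item = Tuple[str, str]  # (topic, question label)

# Hypothetical items, already ordered so that each topic is contiguous.
ITEMS: List[Item] = [
    ("Officers", "Q1"), ("Officers", "Q2"), ("Officers", "Q3"),
    ("Events", "Q4"), ("Events", "Q5"),
    ("Budget", "Q6"),
]


def as_single_form(items: List[Item]) -> List[List[Item]]:
    """One scrolling page holding every item."""
    return [list(items)]


def by_topic(items: List[Item]) -> List[List[Item]]:
    """One scrollable section per topic (relies on topics being contiguous)."""
    return [list(group) for _, group in groupby(items, key=lambda item: item[0])]


def by_page_size(items: List[Item], per_page: int) -> List[List[Item]]:
    """Fixed-size pages, cut wherever the screen fills up."""
    return [list(items[i:i + per_page]) for i in range(0, len(items), per_page)]


def as_single_items(items: List[Item]) -> List[List[Item]]:
    """One item per screen."""
    return [[item] for item in items]
```

With `per_page=4`, the first page holds the three Officers items plus one Events item, so page boundaries cut across topic boundaries, whereas `by_topic` keeps each section whole.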

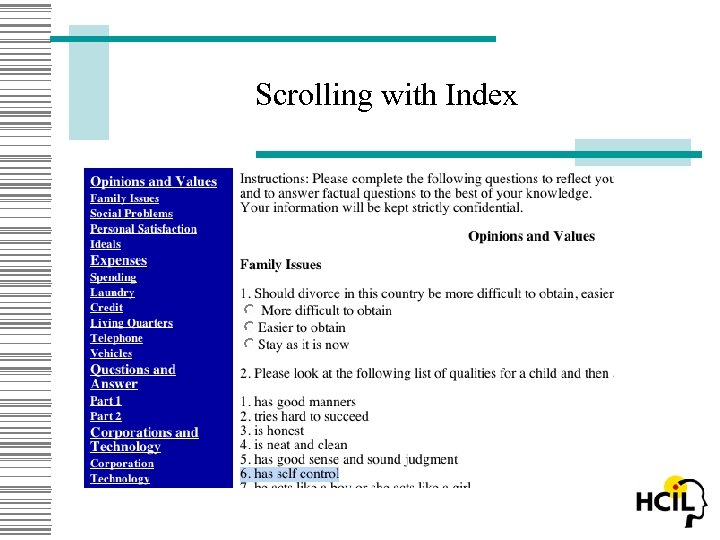

Scrolling with Index

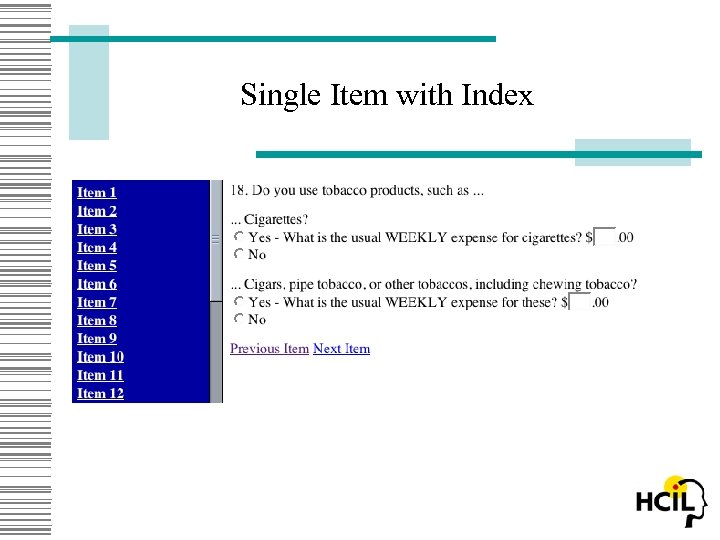

Single Item with Index

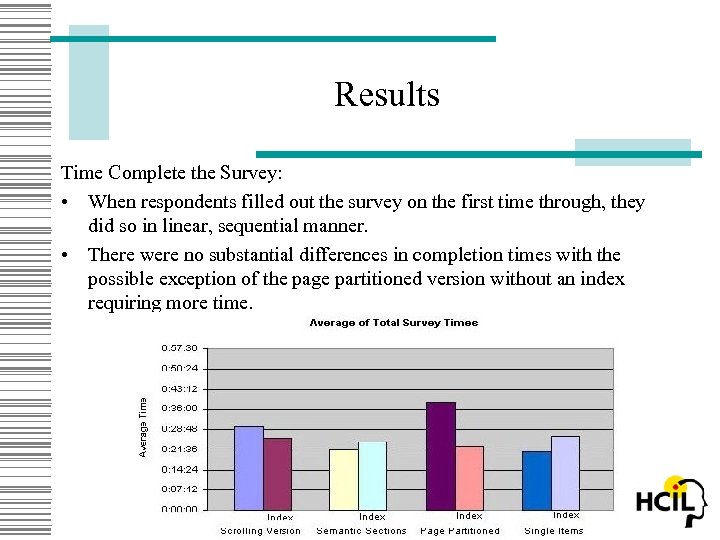

Results: Time to Complete the Survey • When respondents filled out the survey on the first time through, they did so in a linear, sequential manner. • There were no substantial differences in completion times with the possible exception of the page partitioned version without an index requiring more time.

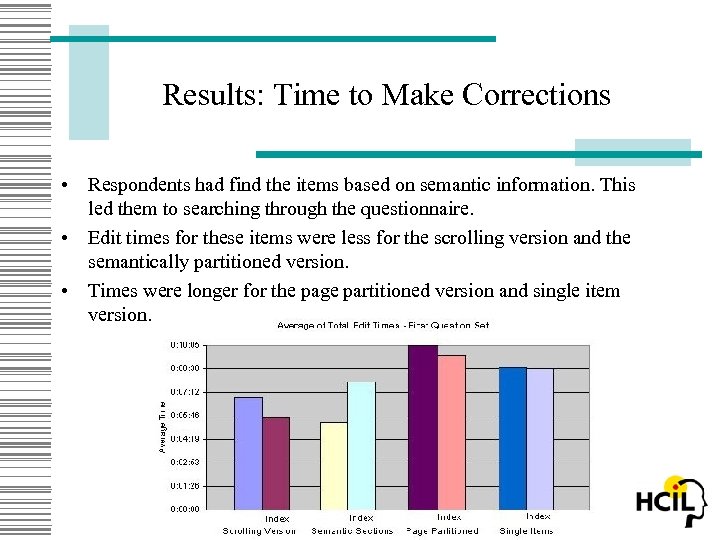

Results: Time to Make Corrections • Respondents had to find the items based on semantic information. This led them to search through the questionnaire. • Edit times for these items were shorter for the scrolling version and the semantically partitioned version. • Times were longer for the page partitioned version and single item version.

Guidelines • When respondents complete the survey in a forward, linear manner, either the whole form or the single item implementations can be used as long as navigational functions are clear and easy to use. • When correction and editing tasks require the respondent to find items, the whole form implementation can be used to aid in searching for items. • Paged surveys that are not congruent with sections are to be avoided. • Sectioned surveys that require scrolling should clearly indicate that additional items must be accessed by scrolling to them. • Indexes to sections and pages are of marginal benefit and may sometimes lead to confusion.

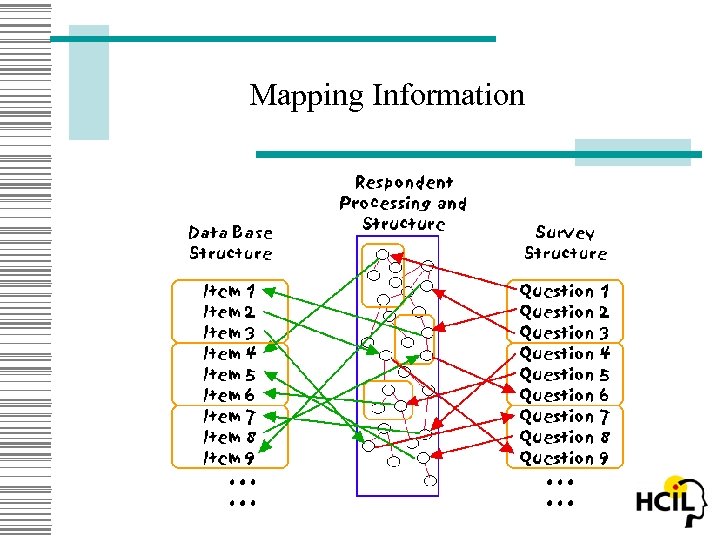

Organization Can we match the knowledge structures of the respondent and the databases to the organization of the survey? The problem is to map items between the external database and the structure of the survey. One may navigate either the external database, the items on the survey, or both.

Mapping Information

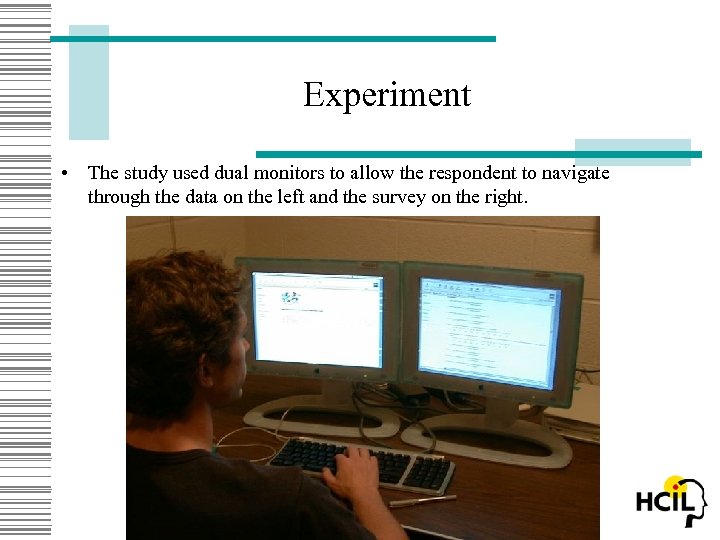

Experiment • The study used dual monitors to allow the respondent to navigate through the data on the left and the survey on the right.

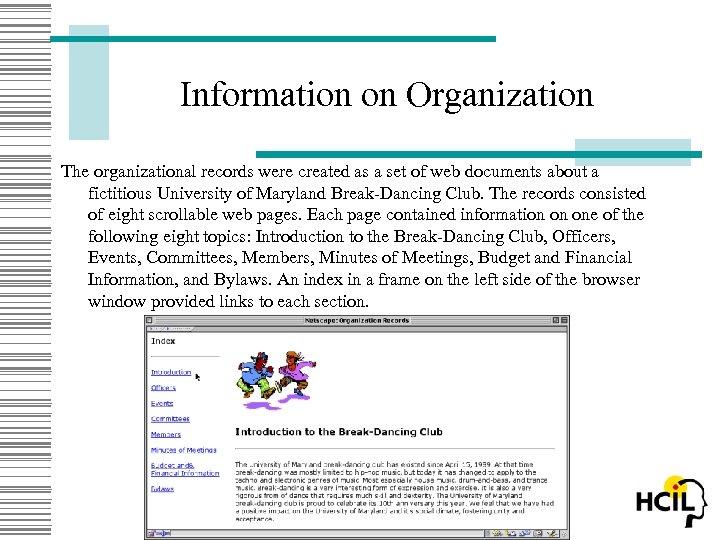

Information on Organization The organizational records were created as a set of web documents about a fictitious University of Maryland Break-Dancing Club. The records consisted of eight scrollable web pages. Each page contained information on one of the following eight topics: Introduction to the Break-Dancing Club, Officers, Events, Committees, Members, Minutes of Meetings, Budget and Financial Information, and Bylaws. An index in a frame on the left side of the browser window provided links to each section.

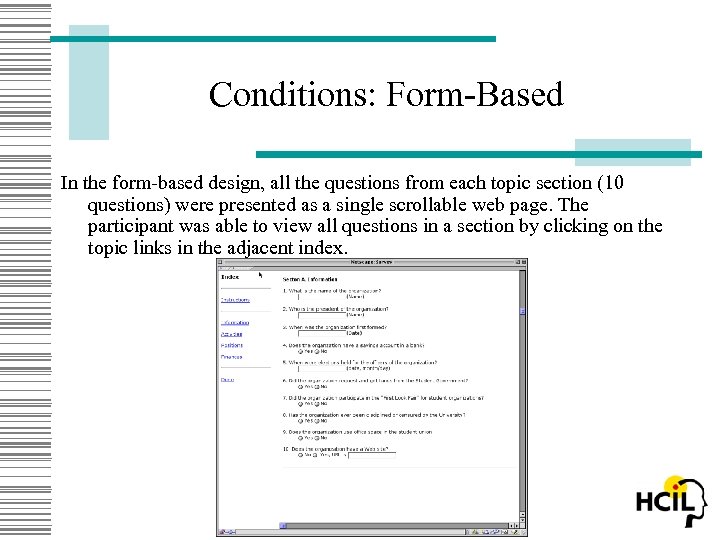

Conditions: Form-Based In the form-based design, all the questions from each topic section (10 questions) were presented as a single scrollable web page. The participant was able to view all questions in a section by clicking on the topic links in the adjacent index.

Conditions: Item-Based In the item-based design, the questions were listed one per screen with links provided at the bottom of each question item page labeled "next" and "previous" to allow forward and backward movement through each section of the questionnaire. When a participant clicked on a topic section link in the index, only the first question was displayed for that topic. The participant was required to click "next" to view the other 9 questions within that particular topic section.

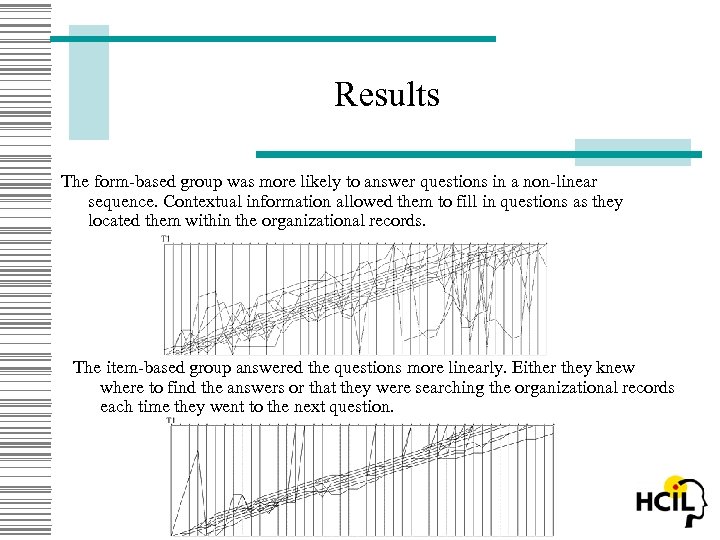

Results The form-based group was more likely to answer questions in a non-linear sequence. Contextual information allowed them to fill in questions as they located them within the organizational records. The item-based group answered the questions more linearly. Either they knew where to find the answers or they were searching the organizational records each time they went to the next question.

Guidelines • Organize the survey in a manner that matches the organization of the company's records. • Allow respondents to easily navigate the survey. • Explore methods of linking data fields in the survey to records in the database.

Learning Effects How does increased experience with the survey and with the database affect the respondent's time to complete the survey and the pattern of answering items? Experiment: Respondents completed the same survey for the 1999 and 2000 year records for the same organization (Break Dance Club 1999 then Break Dance Club 2000 or Knitting Club 1999 then Knitting Club 2000) or the same survey for two different clubs (Break Dance Club 1999 then Knitting Club 1999 or Break Dance Club 2000 then Knitting Club 2000).

Results • Survey completion times were markedly faster for the second administration across all conditions and somewhat faster when the survey was completed for the same organization. • The pattern of completing the survey and accessing the records indicated that on the second time through, respondents were more efficient in finding information and in accessing survey items in a nonlinear order.

Guidelines • When possible, use the same survey to allow the respondent to become more and more familiar with its organization. • Seek the same respondent to fill out the questionnaire on successive administrations. • Look for ways to facilitate familiarity and learning of the correspondence between the database and the survey.

Enhancements • Record links between the survey and database on each administration and make them available to the respondent in subsequent administrations. • Explore methods of auto-filling fields in the survey from the database. • Explore methods of automatically customizing the order of items to correspond to the database or pull information from the database to correspond to the survey.
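One way to picture the auto-fill idea is a mapping from survey fields to keys in the organizational records. The sketch below is an assumption-laden illustration: the field names, the record keys, and the function `auto_fill` are hypothetical, and the records are represented as a plain dictionary rather than a real database.

```python
from typing import Dict, Optional

# Hypothetical links between survey fields and keys in the organizational records.
FIELD_TO_RECORD_KEY = {
    "president_name": "officers.president",
    "member_count": "members.total",
    "annual_budget": "budget.total",
}


def auto_fill(record: Dict[str, str],
              answers: Dict[str, Optional[str]]) -> Dict[str, Optional[str]]:
    """Pre-fill empty survey fields from the record without overwriting answers."""
    filled = dict(answers)
    for field, key in FIELD_TO_RECORD_KEY.items():
        if not filled.get(field) and key in record:
            filled[field] = record[key]
    return filled
```

The function leaves any answer the respondent has already typed untouched, so the respondent stays in control of the final data.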

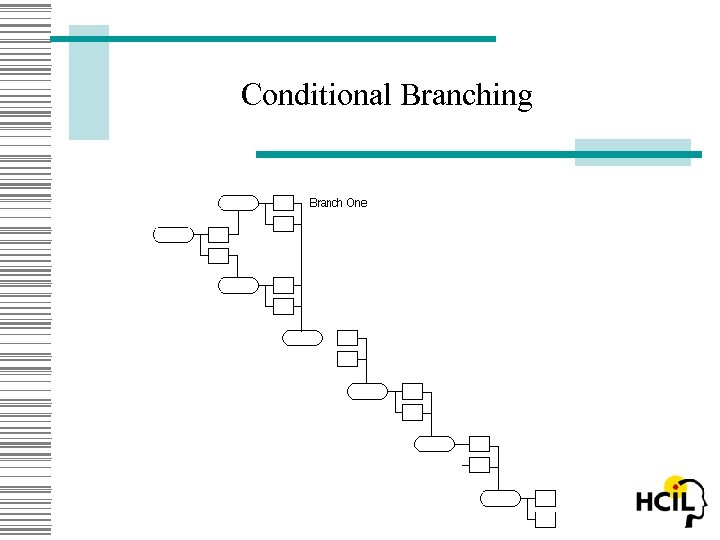

Conditional Branching • On-line surveys can automate conditional branching in self-administered questionnaires and make the task easier for the respondent. • Design factors need to take into consideration the level of automation pre-programmed into the survey versus the amount of control afforded to the respondent, the cognitive complexity of the interface versus ease of use, and the degree of context provided by showing surrounding items on the questionnaire versus a focus on single questions.

Conditional Branching

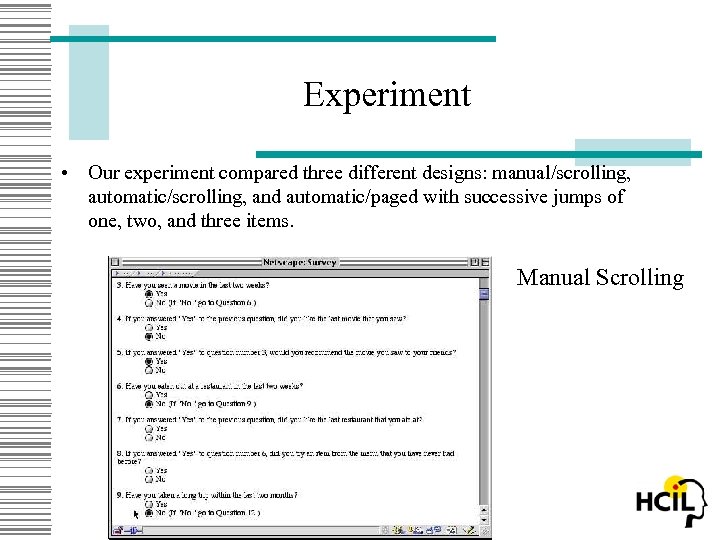

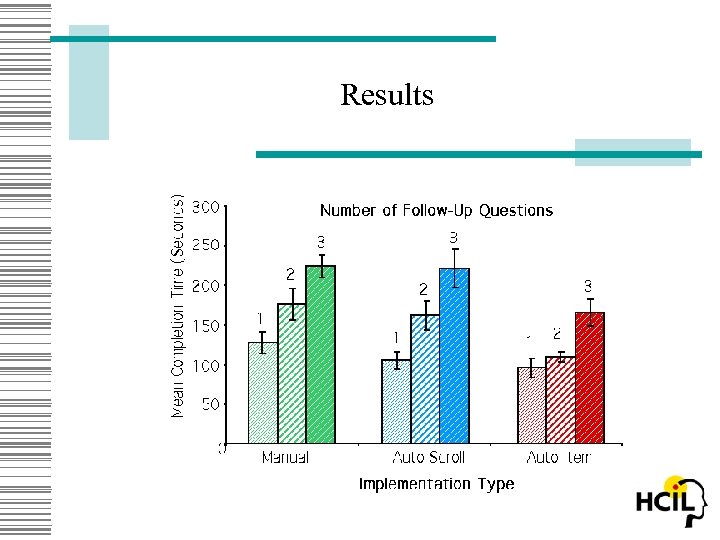

Experiment • Our experiment compared three different designs: manual/scrolling, automatic/scrolling, and automatic/paged with successive jumps of one, two, and three items. Manual Scrolling

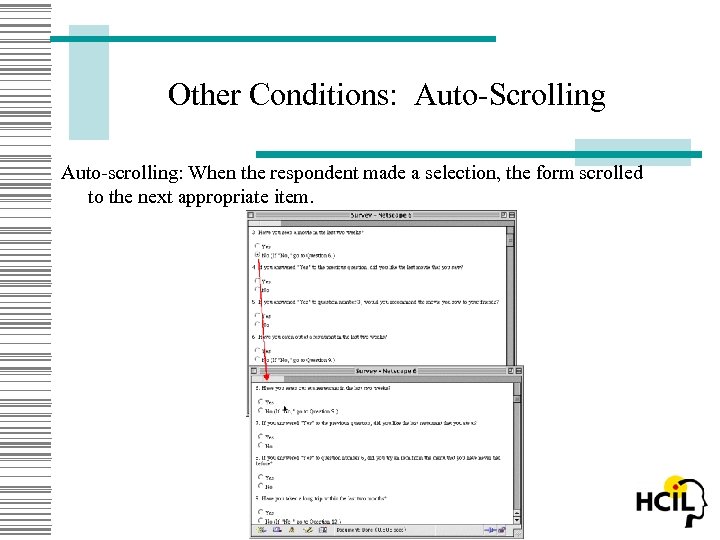

Other Conditions: Auto-Scrolling Auto-scrolling: When the respondent made a selection, the form scrolled to the next appropriate item.

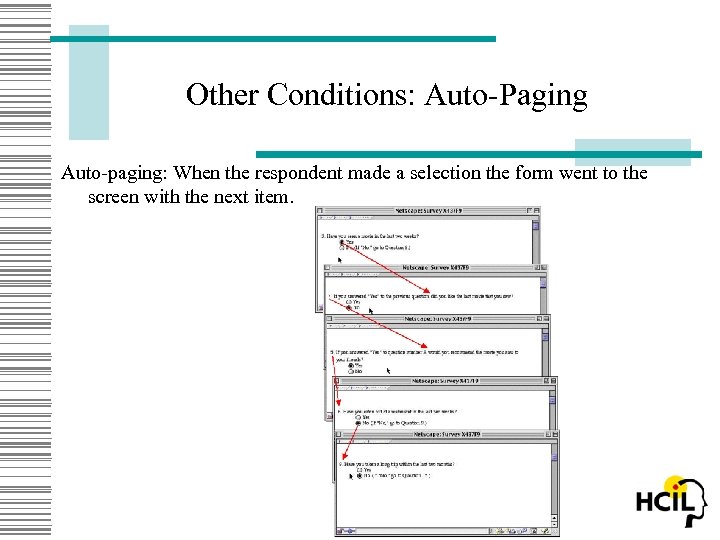

Other Conditions: Auto-Paging Auto-paging: When the respondent made a selection, the form went to the screen with the next item.

Results

Guidelines • Reduce the branching instructions to a minimum to reduce reading time, confusion, and perceived difficulty of the questionnaire. • Automate conditional branching when possible, but allow the respondent to override branching if there is a need or desire to do so on the part of the respondent. • Hide inappropriate and irrelevant questions to shorten the apparent length of the questionnaire and make such questions available only if the respondent specifically needs or wishes to view them.
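The guidelines above (automate branching, allow an override, hide irrelevant questions) can be sketched with a small rule table. This is a minimal illustration under stated assumptions: the questions, the `show_if` convention, and the function names are invented for the example and are not the design used in the experiment.

```python
from typing import Dict, List, Optional

# Hypothetical questionnaire: each item may name a (question id, answer) condition
# that must hold for the item to be relevant.
ITEMS = [
    {"id": "q1", "text": "Are you currently employed?", "show_if": None},
    {"id": "q2", "text": "How many hours do you work per week?", "show_if": ("q1", "yes")},
    {"id": "q3", "text": "Are you looking for work?", "show_if": ("q1", "no")},
    {"id": "q4", "text": "What is your age?", "show_if": None},
]


def is_relevant(item: dict, answers: Dict[str, str]) -> bool:
    """An item is relevant if it has no condition or its condition is met."""
    condition = item["show_if"]
    if condition is None:
        return True
    question_id, required = condition
    return answers.get(question_id) == required


def visible_items(answers: Dict[str, str], show_all: bool = False) -> List[str]:
    """IDs of items to display; show_all lets the respondent override the hiding."""
    return [item["id"] for item in ITEMS if show_all or is_relevant(item, answers)]


def next_item(current_id: str, answers: Dict[str, str]) -> Optional[str]:
    """ID of the next relevant item after current_id, or None at the end."""
    ids = [item["id"] for item in ITEMS]
    for item in ITEMS[ids.index(current_id) + 1:]:
        if is_relevant(item, answers):
            return item["id"]
    return None
```

After the respondent answers "no" to q1, `next_item("q1", {"q1": "no"})` jumps straight to q3, and no written "skip to" instruction is needed on the form.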

More Guidelines • When the respondent is allowed to answer all questions, implement logic and consistency checks on conditional branches. • Streamline forward movement through the questionnaire while allowing backtracking and changing of answers. • When context matters, provide form-based views of sections to help to clarify the meaning of items and the interrelationships among items. • Finally, it must be remembered that although good design seems intuitive, it requires empirical verification before final implementation.
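The first guideline, checking logic and consistency when respondents may answer every question, could look something like the following. The rule table, item names, and message wording are hypothetical and serve only to make the idea concrete.

```python
from typing import Dict, List, Tuple

# Hypothetical branch rules:
# item id -> (controlling question, answer that makes the item apply).
BRANCH_RULES: Dict[str, Tuple[str, str]] = {
    "q2_hours_worked": ("q1_employed", "yes"),
    "q3_seeking_work": ("q1_employed", "no"),
}


def branch_inconsistencies(answers: Dict[str, str]) -> List[str]:
    """List a message for each answered item whose branch condition does not hold."""
    problems = []
    for item_id, (control_id, required) in BRANCH_RULES.items():
        if item_id in answers and answers.get(control_id) != required:
            problems.append(
                f"You answered {item_id}, which applies only when {control_id} is "
                f"'{required}'. Please check both answers."
            )
    return problems
```

The check flags the conflict but does not decide which of the two answers is wrong; that choice is left to the respondent.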

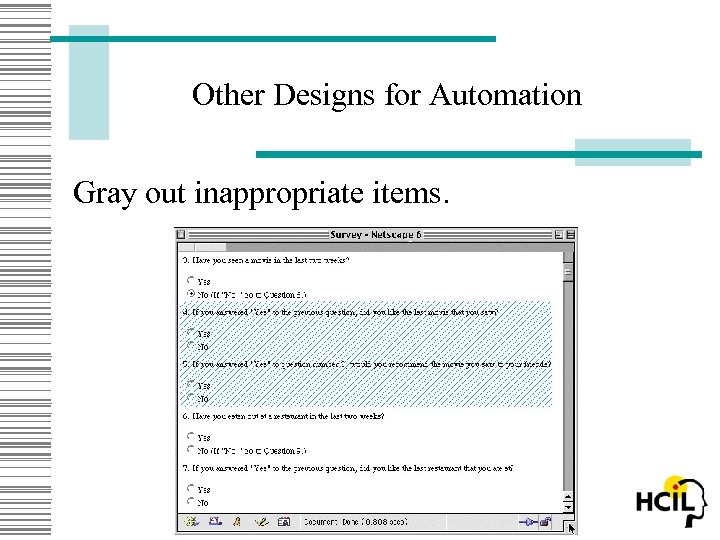

Other Designs for Automation Gray out inappropriate items.

Another Design Expanding-contracting sections of questions

Edits • On-line surveys and on-line data entry systems are often able to detect if the respondent has entered information incorrectly or inappropriately. Automatic checking can inform the respondent that he or she should re-enter the information and may even give helpful suggestions as to how to make the correction. • The system may either check the whole form at the point that the respondent submits it, or it may check each data entry and inform the respondent immediately. In general it is easier to program and to process error checking at the submit point. However, if the respondent is not notified of the errors until the end of the task, he or she has to navigate back to the point of the data entry error and in some cases troubleshoot to find which of several data points was in error.
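The two checking strategies described above, immediate per-entry checks and a whole-form check at submission, can share the same validators. The sketch below assumes two hypothetical fields (`age` and `zip`) and made-up validation rules; only the contrast between the two entry points is the point of the example.

```python
from typing import Callable, Dict, Optional


def check_age(value: str) -> Optional[str]:
    """Return a helpful message, or None if the value is acceptable."""
    if not value.isdecimal() or not 0 < int(value) < 120:
        return "Please enter your age as a whole number, for example 34."
    return None


def check_zip(value: str) -> Optional[str]:
    """Return a helpful message, or None if the value is acceptable."""
    if not (len(value) == 5 and value.isdecimal()):
        return "Please enter a five-digit ZIP code, for example 20742."
    return None


# Hypothetical fields and their validators.
VALIDATORS: Dict[str, Callable[[str], Optional[str]]] = {
    "age": check_age,
    "zip": check_zip,
}


def check_field(name: str, value: str) -> Optional[str]:
    """Immediate check: run as soon as the respondent leaves a field."""
    return VALIDATORS[name](value)


def check_form(answers: Dict[str, str]) -> Dict[str, str]:
    """Submit-time check: validate every field and report all errors together."""
    errors = {}
    for name in VALIDATORS:
        message = check_field(name, answers.get(name, ""))
        if message:
            errors[name] = message
    return errors
```

`check_form` returns the errors keyed by field name so that the interface can link each message back to the field it concerns, which reduces the navigation cost of late notification.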

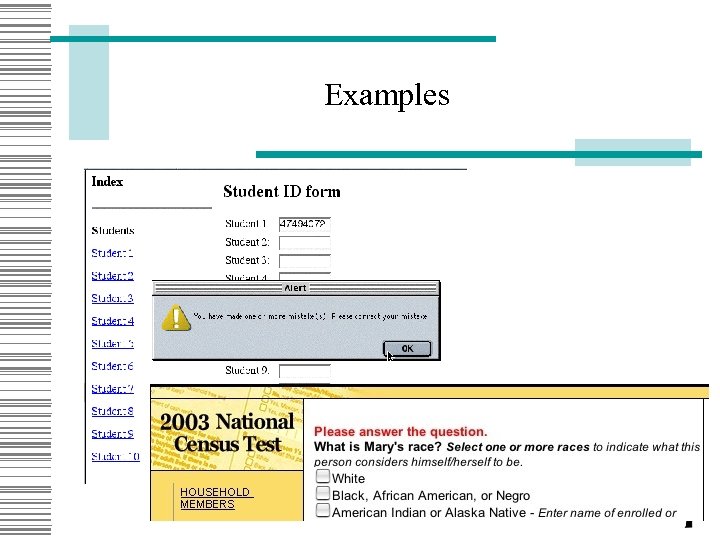

Examples

Guidelines for Edits • If possible, implement edits immediately after the entry of a field or set of fields to maintain the context of the item. • If there is a routine flow or burst of automatic entry of data, notify the user at the end of that flow of any errors. • If an error is detected on a later page, provide a quick path back to correct the error, or provide a pop-up field to re-enter the information.

More Guidelines for Edits • If an error is the combined result of several fields, guide the user successively to those fields or, if possible, provide a pop-up screen showing all of the relevant information and providing fields to correct the information. • Phrase "error" messages in a positive, helpful manner that suggests how to complete a question.
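An error that arises from several fields together can be reported with the list of fields involved and a message that suggests a way forward. The following sketch assumes three hypothetical numeric fields (`income`, `expenses`, `balance`) and an invented consistency rule; the `Edit` type and `check_budget` function are illustrative names.

```python
from typing import Dict, List, NamedTuple, Optional


class Edit(NamedTuple):
    fields: List[str]  # every field involved, so all can be shown together
    message: str       # phrased as a suggestion rather than a reprimand


def check_budget(answers: Dict[str, float]) -> Optional[Edit]:
    """Cross-field check: income minus expenses should equal the balance."""
    expected = answers["income"] - answers["expenses"]
    if abs(expected - answers["balance"]) > 0.005:
        return Edit(
            fields=["income", "expenses", "balance"],
            message=(
                f"Income minus expenses comes to {expected:.2f}, but the balance "
                f"entered is {answers['balance']:.2f}. "
                "Please review these three amounts."
            ),
        )
    return None
```

The `fields` list is what a pop-up screen would need in order to show all of the relevant entries at once instead of sending the respondent to hunt for them.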

Conclusion • There are many advantages to on-line surveys. • The design options for surveys are many. • Some design alternatives make a difference and others don’t. • The most important thing is to reduce frustration on the part of the respondent!

Questions?

Credits • Support from the U.S. Census • Thanks to students Zachary Friedman, Kirk Norman, Tim Pleskac, Walky Rivadeneira, Laura Slaughter, and Rod Stevenson http://lap.umd.edu kent_norman@lap.umd.edu

References • Kent Norman, Laura Slaughter, Zachary Friedman, Kirk Norman, Rod Stevenson (October 2000). Dual Navigation of Computerized Self-Administered Questionnaires and Organizational Records. CS-TR-4192, UMIACS-TR-2000-71. • Norman, K. L., Pleskac, T. J., & Norman, K. (May 2001). Navigational Issues in the Design of On-Line Self-Administered Questionnaires: The Effect of Training and Familiarity. LAP-2001-01, HCIL-2001-09, CS-TR-4255, UMIACS-TR-2001-38. • Norman, K. L., & Pleskac, T. J. (January 2002). Conditional Branching in Computerized Self-Administered Questionnaires: An Empirical Study. LAP-2002-01, HCIL-2002-02, CS-TR-4323, UMIACS-TR-2002-05. • Norman, K. L. (November 2001). Implementation of Conditional Branching in Computerized Self-Administered Questionnaires. LAP-2001-02, HCIL-2001-26, CS-TR-4319, UMIACS-TR-2002-1.
