052abfc4220259986fdb66a13d9502bb.ppt

- Number of slides: 96

USC CSE: University of Southern California, Center for Software Engineering

The COCOMO II Suite of Software Cost Estimation Models

Barry Boehm, USC. COCOMO/SCM 16 Tutorial, October 23, 2001. boehm@sunset.usc.edu, http://sunset.usc.edu/research/cocomosuite

10/23/01 ©USC-CSE

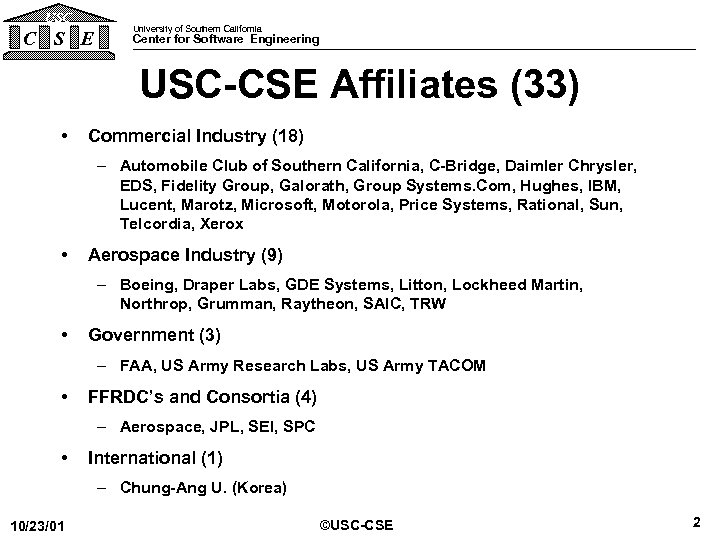

USC-CSE Affiliates (33)

- Commercial Industry (18): Automobile Club of Southern California, C-Bridge, DaimlerChrysler, EDS, Fidelity Group, Galorath, GroupSystems.com, Hughes, IBM, Lucent, Marotz, Microsoft, Motorola, Price Systems, Rational, Sun, Telcordia, Xerox
- Aerospace Industry (9): Boeing, Draper Labs, GDE Systems, Litton, Lockheed Martin, Northrop Grumman, Raytheon, SAIC, TRW
- Government (3): FAA, US Army Research Labs, US Army TACOM
- FFRDCs and Consortia (4): Aerospace, JPL, SEI, SPC
- International (1): Chung-Ang U. (Korea)

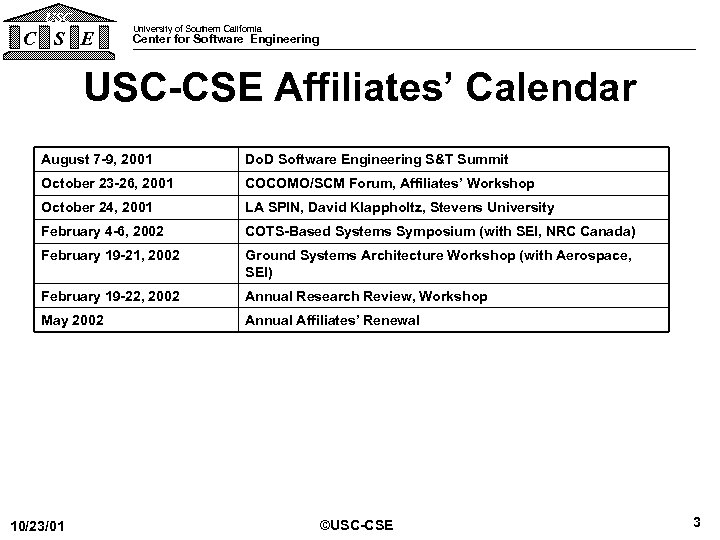

USC-CSE Affiliates' Calendar

- August 7-9, 2001: DoD Software Engineering S&T Summit
- October 23-26, 2001: COCOMO/SCM Forum, Affiliates' Workshop
- October 24, 2001: LA SPIN, David Klappholtz, Stevens University
- February 4-6, 2002: COTS-Based Systems Symposium (with SEI, NRC Canada)
- February 19-21, 2002: Ground Systems Architecture Workshop (with Aerospace, SEI)
- February 19-22, 2002: Annual Research Review, Workshop
- May 2002: Annual Affiliates' Renewal

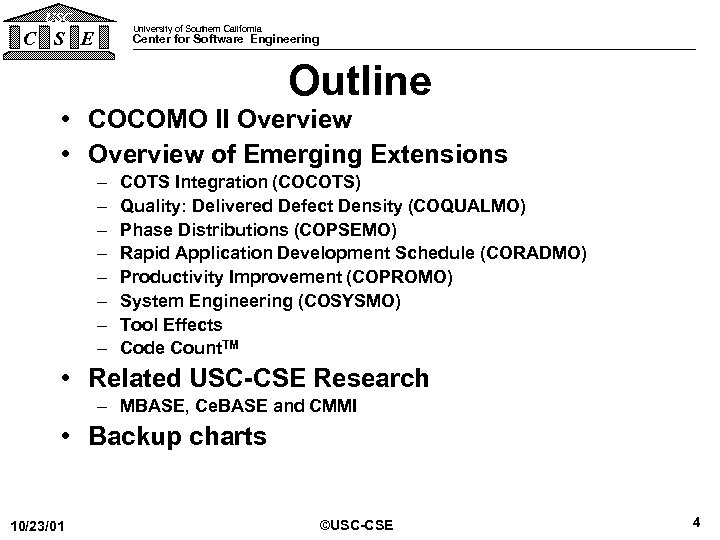

Outline

- COCOMO II Overview
- Overview of Emerging Extensions
  - COTS Integration (COCOTS)
  - Quality: Delivered Defect Density (COQUALMO)
  - Phase Distributions (COPSEMO)
  - Rapid Application Development Schedule (CORADMO)
  - Productivity Improvement (COPROMO)
  - System Engineering (COSYSMO)
  - Tool Effects
  - CodeCount™
- Related USC-CSE Research
  - MBASE, CeBASE and CMMI
- Backup charts

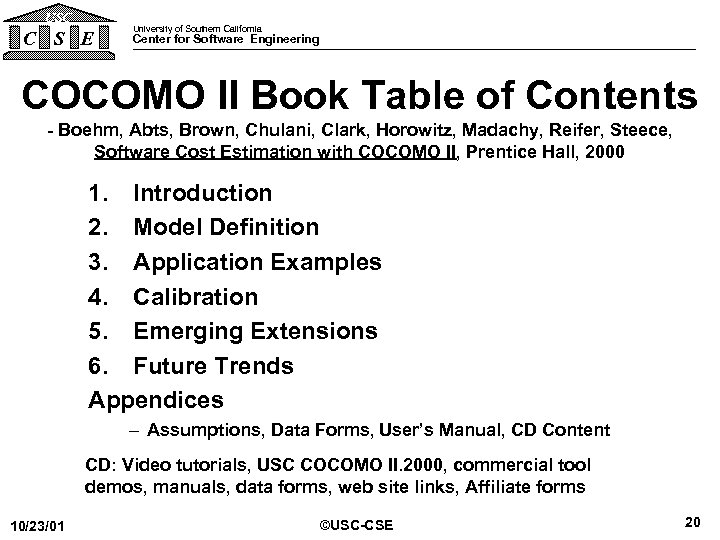

COCOMO II Book Table of Contents

Boehm, Abts, Brown, Chulani, Clark, Horowitz, Madachy, Reifer, Steece, Software Cost Estimation with COCOMO II, Prentice Hall, 2000

1. Introduction
2. Model Definition
3. Application Examples
4. Calibration
5. Emerging Extensions
6. Future Trends

Appendices: Assumptions, Data Forms, User's Manual, CD Content

CD: Video tutorials, USC COCOMO II.2000, commercial tool demos, manuals, data forms, web site links, Affiliate forms

Purpose of COCOMO II

To help people reason about the cost and schedule implications of their software decisions

Major Decision Situations Helped by COCOMO II

- Software investment decisions
  - When to develop, reuse, or purchase
  - What legacy software to modify or phase out
- Setting project budgets and schedules
- Negotiating cost/schedule/performance tradeoffs
- Making software risk management decisions
- Making software improvement decisions
  - Reuse, tools, process maturity, outsourcing

Need to ReEngineer COCOMO 81

- New software processes
- New sizing phenomena
- New reuse phenomena
- Need to make decisions based on incomplete information

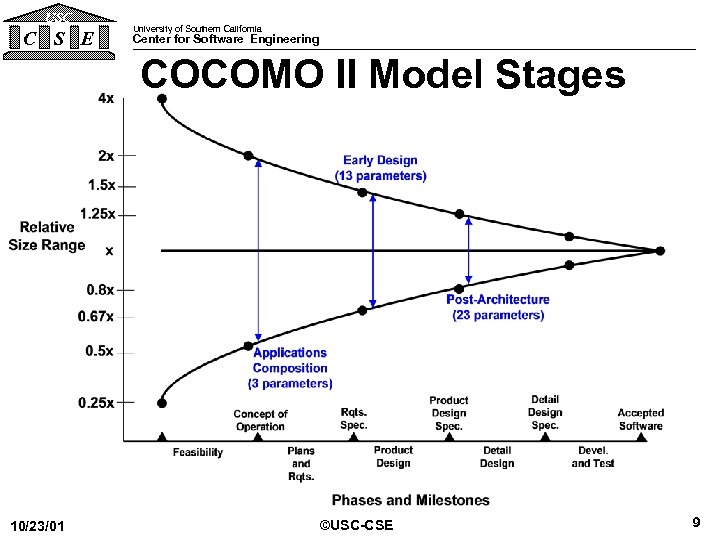

COCOMO II Model Stages

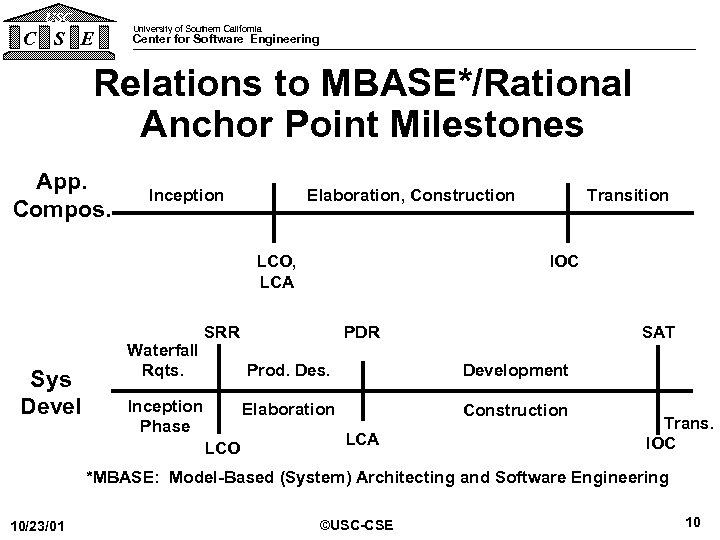

Relations to MBASE*/Rational Anchor Point Milestones

[Figure: timeline aligning Application Composition and system development with the MBASE/Rational phases (Inception, Elaboration, Construction, Transition), the anchor point milestones LCO, LCA and IOC, and the waterfall milestones SRR, PDR and SAT (requirements, product design, development).]

*MBASE: Model-Based (System) Architecting and Software Engineering

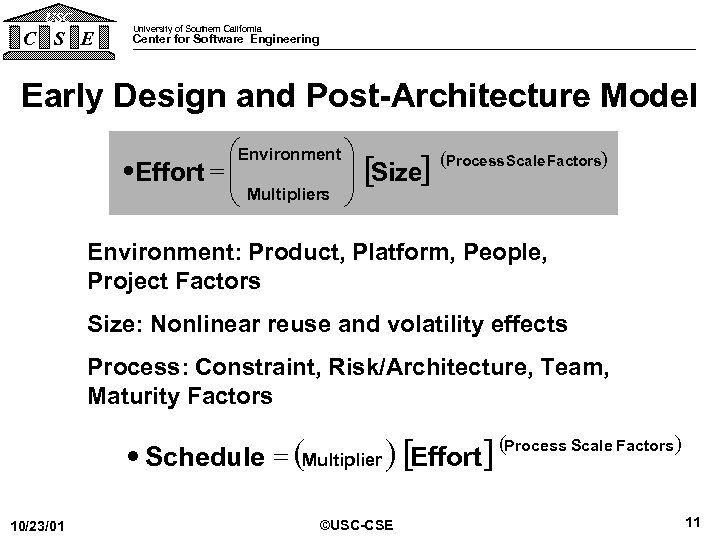

Early Design and Post-Architecture Model

$$\text{Effort} = (\text{Environment Multipliers}) \times [\text{Size}]^{(\text{Process Scale Factors})}$$

- Environment: Product, Platform, People, Project Factors
- Size: Nonlinear reuse and volatility effects
- Process: Constraint, Risk/Architecture, Team, Maturity Factors

$$\text{Schedule} = (\text{Multiplier}) \times [\text{Effort}]^{(\text{Process Scale Factors})}$$

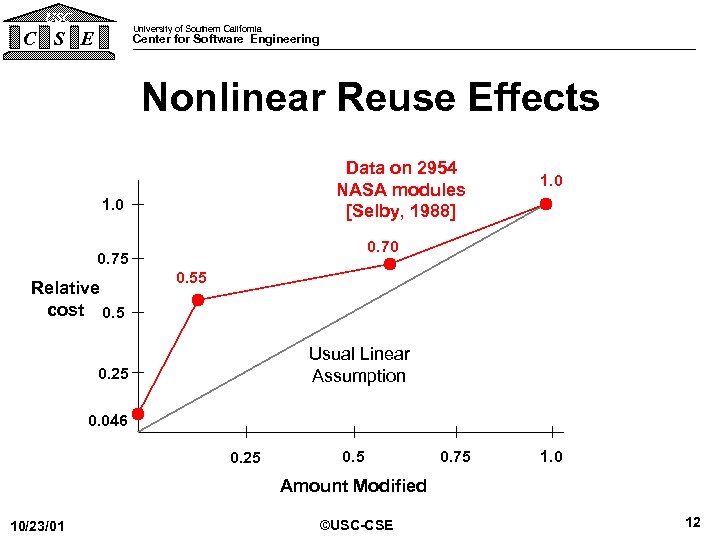
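The two equations on the Early Design and Post-Architecture slide can be sketched numerically. The constants below (A = 2.94, B = 0.91, C = 3.67, D = 0.28), the exponent formulas and the nominal scale factor ratings are assumptions taken from the published COCOMO II.2000 calibration; they do not appear on the slide. The function names are illustrative only.

```python
from math import prod

# Assumed COCOMO II.2000 calibration constants (not given on the slide).
A, B = 2.94, 0.91   # effort coefficient and base exponent
C, D = 3.67, 0.28   # schedule coefficient and base exponent

# Assumed nominal ratings for PREC, FLEX, RESL, TEAM, PMAT.
NOMINAL_SF = (3.72, 3.04, 4.24, 3.29, 4.68)


def scale_exponent(scale_factors):
    """E = B + 0.01 * (sum of the process scale factor ratings)."""
    return B + 0.01 * sum(scale_factors)


def effort_pm(ksloc, scale_factors, effort_multipliers=()):
    """Effort in person-months: A * Size^E * product of the multipliers."""
    return A * ksloc ** scale_exponent(scale_factors) * prod(effort_multipliers)


def schedule_months(pm, scale_factors):
    """Schedule in calendar months: C * Effort^(D + 0.2 * (E - B))."""
    return C * pm ** (D + 0.2 * (scale_exponent(scale_factors) - B))


if __name__ == "__main__":
    pm = effort_pm(100, NOMINAL_SF)  # 100 KSLOC, all multipliers nominal
    print(f"effort   = {pm:.0f} person-months")
    print(f"schedule = {schedule_months(pm, NOMINAL_SF):.1f} months")
```

With every scale factor rated at zero the exponent falls to 0.91, and with the assumed nominal ratings it is about 1.10, so effort grows faster than size.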

Nonlinear Reuse Effects

Data on 2954 NASA modules [Selby, 1988]

[Figure: relative cost (0 to 1.0) plotted against amount modified (0 to 1.0), compared with the usual linear assumption. Labeled values on the curve include 0.046, 0.55 and 1.0.]

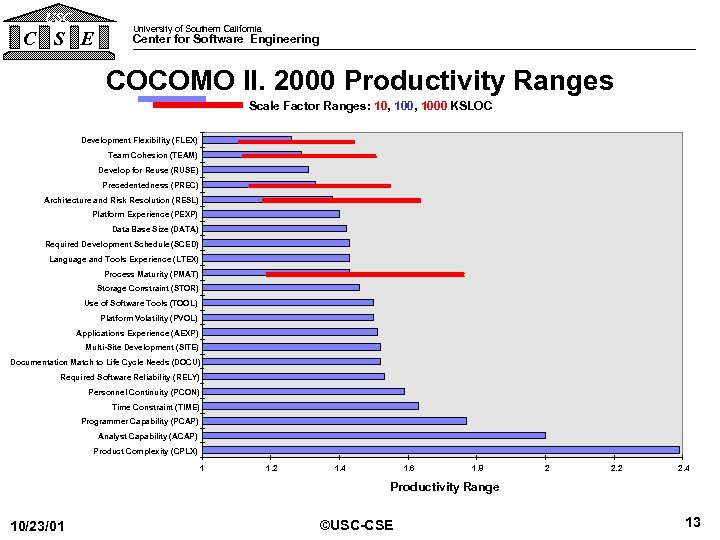
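A minimal sketch of how a nonlinear reuse adjustment can be computed, using the symbols AA, SU, UNFM, DM, CM and IM that the model comparison slide names. The formulas follow the published COCOMO II reuse model as an assumption; they are not stated in this deck, and the example ratings are hypothetical.

```python
def adaptation_adjustment_factor(dm, cm, im):
    """AAF = 0.4*DM + 0.3*CM + 0.3*IM; inputs are percentages (0-100)."""
    return 0.4 * dm + 0.3 * cm + 0.3 * im


def adaptation_adjustment_multiplier(aa, su, unfm, dm, cm, im):
    """Fraction of new-code cost charged for adapted code (assumed formula).

    aa   -- assessment and assimilation increment (percent)
    su   -- software understanding increment (percent)
    unfm -- programmer unfamiliarity, 0.0 to 1.0
    """
    aaf = adaptation_adjustment_factor(dm, cm, im)
    if aaf <= 50:
        return (aa + aaf * (1 + 0.02 * su * unfm)) / 100
    return (aa + aaf + su * unfm) / 100


def equivalent_ksloc(adapted_ksloc, aa, su, unfm, dm, cm, im):
    """Equivalent new KSLOC for a block of adapted code."""
    return adapted_ksloc * adaptation_adjustment_multiplier(aa, su, unfm, dm, cm, im)


if __name__ == "__main__":
    # Hypothetical ratings: light modification of poorly understood code.
    ratings = dict(aa=2, su=30, unfm=0.4, dm=10, cm=10, im=20)
    linear = adaptation_adjustment_factor(10, 10, 20) / 100
    print(f"linear assumption: {linear:.3f}")
    print(f"nonlinear model:   {adaptation_adjustment_multiplier(**ratings):.3f}")
```

In this hypothetical case the linear assumption charges 13% of new-code cost, while the nonlinear form charges about 18% because understanding and assessment costs are paid even for small changes.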

COCOMO II.2000 Productivity Ranges

[Figure: bar chart of productivity range per factor, horizontal axis from 1 to 2.4. Scale Factor Ranges: 10, 1000 KSLOC. Factors in the order shown:]

- Development Flexibility (FLEX)
- Team Cohesion (TEAM)
- Develop for Reuse (RUSE)
- Precedentedness (PREC)
- Architecture and Risk Resolution (RESL)
- Platform Experience (PEXP)
- Data Base Size (DATA)
- Required Development Schedule (SCED)
- Language and Tools Experience (LTEX)
- Process Maturity (PMAT)
- Storage Constraint (STOR)
- Use of Software Tools (TOOL)
- Platform Volatility (PVOL)
- Applications Experience (AEXP)
- Multi-Site Development (SITE)
- Documentation Match to Life Cycle Needs (DOCU)
- Required Software Reliability (RELY)
- Personnel Continuity (PCON)
- Time Constraint (TIME)
- Programmer Capability (PCAP)
- Analyst Capability (ACAP)
- Product Complexity (CPLX)

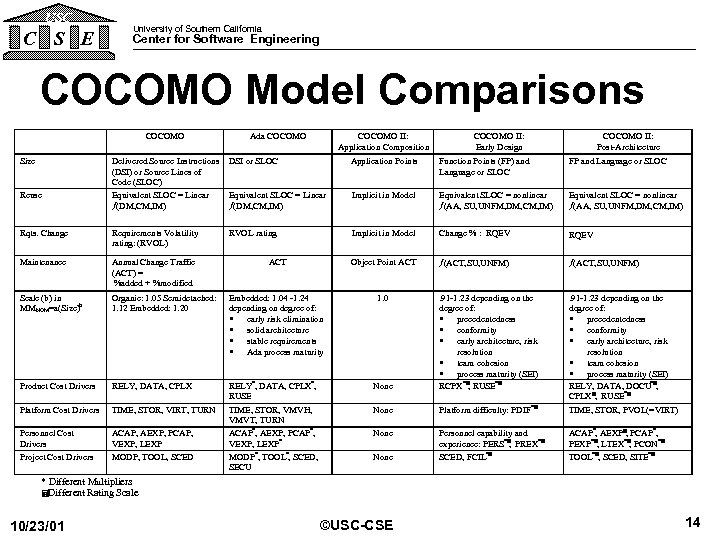
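A productivity range such as those charted on the slide can be read as the ratio between the largest and smallest effect a factor can have on effort. The sketch below illustrates that reading; the multiplier extremes for CPLX and ACAP and the maximum PMAT rating of 7.80 are assumed from the published COCOMO II.2000 tables, not from this deck, and the function names are illustrative.

```python
# Assumed COCOMO II.2000 effort-multiplier extremes: (smallest, largest).
MULTIPLIER_EXTREMES = {
    "CPLX": (0.73, 1.74),  # Product Complexity
    "ACAP": (0.71, 1.42),  # Analyst Capability
}


def productivity_range(smallest, largest):
    """Ratio of the largest to the smallest effort multiplier."""
    return largest / smallest


def scale_factor_productivity_range(max_rating, ksloc):
    """Effort ratio between the worst rating and a zero rating.

    A scale factor adds 0.01 * rating to the size exponent, so its
    effect depends on project size.
    """
    return ksloc ** (0.01 * max_rating)


if __name__ == "__main__":
    for name, (smallest, largest) in MULTIPLIER_EXTREMES.items():
        print(f"{name}: {productivity_range(smallest, largest):.2f}")
    for size in (10, 100, 1000):
        pr = scale_factor_productivity_range(7.80, size)  # assumed PMAT maximum
        print(f"PMAT at {size} KSLOC: {pr:.2f}")
```

Because scale factors act on the exponent, their range widens with project size, which is why the chart qualifies the scale factor bars by KSLOC.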

COCOMO Model Comparisons

| | COCOMO | Ada COCOMO | COCOMO II: Application Composition | COCOMO II: Early Design | COCOMO II: Post-Architecture |
|---|---|---|---|---|---|
| Size | Delivered Source Instructions (DSI) or Source Lines of Code (SLOC) | DSI or SLOC | Application Points | Function Points (FP) and Language or SLOC | FP and Language or SLOC |
| Reuse | Equivalent SLOC = Linear f(DM, CM, IM) | Equivalent SLOC = Linear f(DM, CM, IM) | Implicit in Model | Equivalent SLOC = nonlinear f(AA, SU, UNFM, DM, CM, IM) | Equivalent SLOC = nonlinear f(AA, SU, UNFM, DM, CM, IM) |
| Rqts. Change | Requirements Volatility rating (RVOL) | RVOL rating | Implicit in Model | Change %: RQEV | Change %: RQEV |
| Maintenance | Annual Change Traffic (ACT) = %added + %modified | ACT | Object Point ACT | f(ACT, SU, UNFM) | f(ACT, SU, UNFM) |
| Scale (b) in $MM_{NOM} = a(\text{Size})^b$ | Organic: 1.05; Semidetached: 1.12; Embedded: 1.20 | Embedded: 1.04-1.24 depending on degree of: early risk elimination, solid architecture, stable requirements, Ada process maturity | 1.0 | .91-1.23 depending on the degree of: precedentedness, conformity, early architecture and risk resolution, team cohesion, process maturity (SEI) | .91-1.23 depending on the degree of: precedentedness, conformity, early architecture and risk resolution, team cohesion, process maturity (SEI) |
| Product Cost Drivers | RELY, DATA, CPLX | RELY*, DATA, CPLX*, RUSE | None | RCPX*=, RUSE*= | RELY, DATA, DOCU*=, CPLX=, RUSE*= |
| Platform Cost Drivers | TIME, STOR, VIRT, TURN | TIME, STOR, VMVH, VMVT, TURN | None | Platform difficulty: PDIF*= | TIME, STOR, PVOL(=VIRT) |
| Personnel Cost Drivers | ACAP, AEXP, PCAP, VEXP, LEXP | ACAP*, AEXP, PCAP*, VEXP, LEXP* | None | Personnel capability and experience: PERS*=, PREX*= | ACAP*, AEXP=, PCAP*, PEXP*=, LTEX*=, PCON*= |
| Project Cost Drivers | MODP, TOOL, SCED | MODP*, TOOL*, SCED, SECU | None | SCED, FCIL*= | TOOL*=, SCED, SITE*= |

\* Different Multipliers; = Different Rating Scale

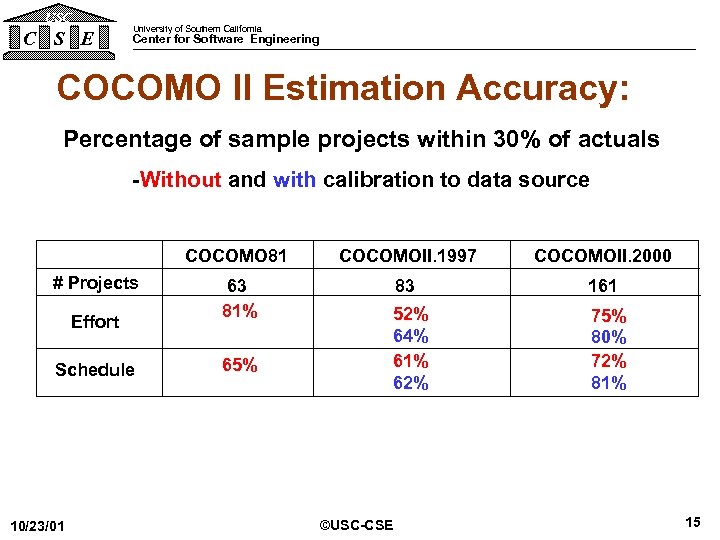

COCOMO II Estimation Accuracy

Percentage of sample projects within 30% of actuals, without / with calibration to data source

| | COCOMO 81 | COCOMO II.1997 | COCOMO II.2000 |
|---|---|---|---|
| # Projects | 63 | 83 | 161 |
| Effort | 81% | 52% / 64% | 75% / 80% |
| Schedule | 65% | 61% / 62% | 72% / 81% |

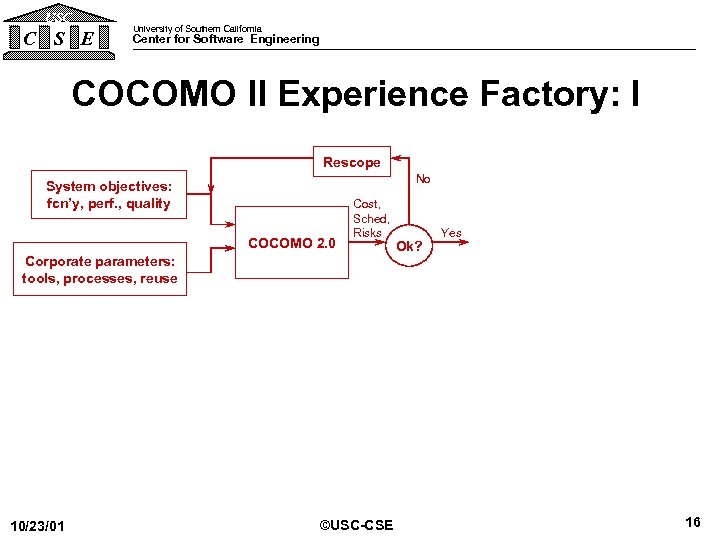
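The accuracy measure on the estimation accuracy slide, the percentage of projects whose estimate falls within 30% of the actual value, can be computed as in the following sketch. The function name `pred` and the sample figures are hypothetical and serve only to show the arithmetic.

```python
def pred(actuals, estimates, level=0.30):
    """Fraction of projects whose estimate is within `level` of the actual."""
    if len(actuals) != len(estimates) or not actuals:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(
        abs(estimate - actual) / actual <= level
        for actual, estimate in zip(actuals, estimates)
    )
    return hits / len(actuals)


if __name__ == "__main__":
    # Hypothetical person-month figures for four projects.
    actual_pm = [100, 200, 50, 400]
    estimated_pm = [120, 150, 80, 390]
    print(f"PRED(.30) = {pred(actual_pm, estimated_pm):.0%}")
```

Here three of the four hypothetical estimates fall within 30% of the actuals; the third misses by 60%.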

COCOMO II Experience Factory: I

[Flowchart: system objectives (functionality, performance, quality) and corporate parameters (tools, processes, reuse) feed COCOMO 2.0, which produces cost, schedule and risk estimates. Decision "Ok?": No leads to Rescope; Yes continues.]

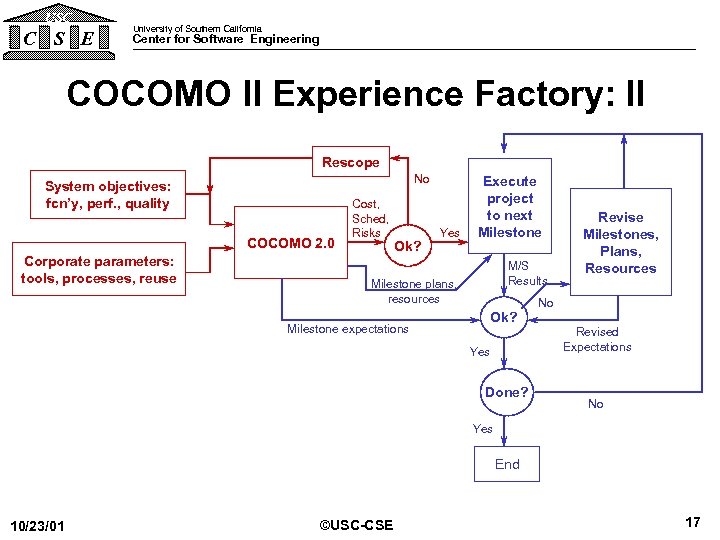

COCOMO II Experience Factory: II

[Flowchart, extending step I: after "Ok? Yes", execute the project to the next milestone using milestone plans and resources; milestone results are compared with milestone expectations. Decision "Ok?": No leads to revising milestones, plans and resources (revised expectations); Yes leads to "Done?", where No returns to project execution and Yes ends.]

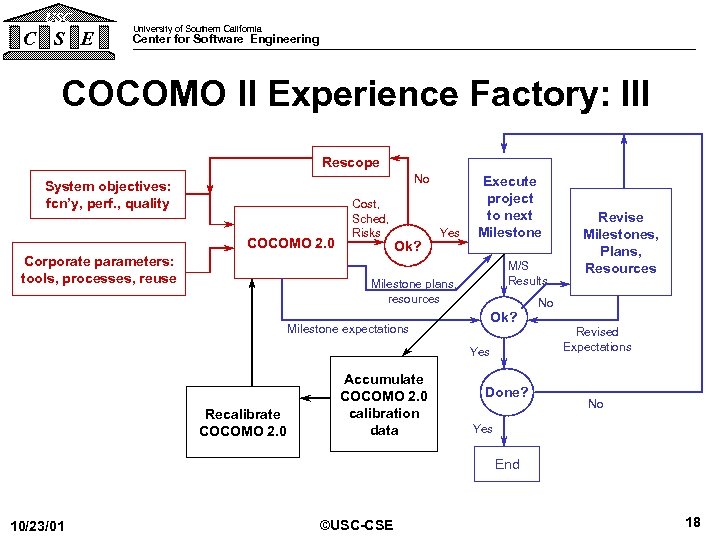

COCOMO II Experience Factory: III

[Flowchart, extending step II: milestone results are also used to accumulate COCOMO 2.0 calibration data, which is used to recalibrate COCOMO 2.0.]

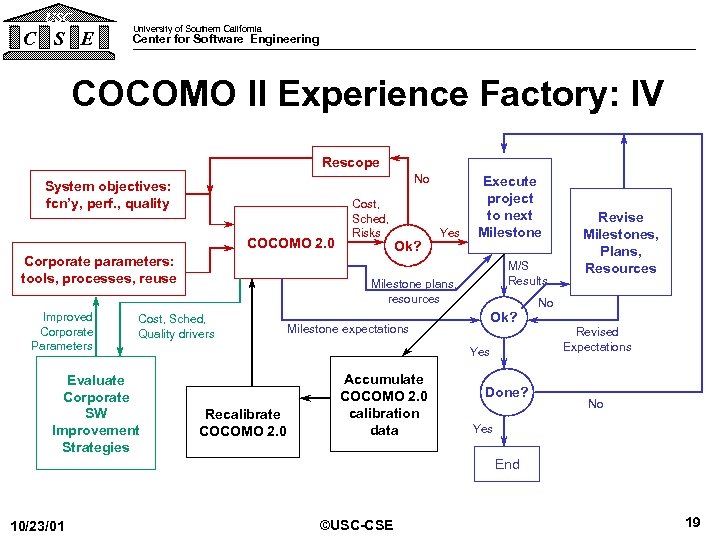

COCOMO II Experience Factory: IV

[Flowchart, extending step III: cost, schedule and quality drivers feed "Evaluate Corporate SW Improvement Strategies", which yields improved corporate parameters.]

COCOMO II Book Table of Contents

Boehm, Abts, Brown, Chulani, Clark, Horowitz, Madachy, Reifer, Steece, Software Cost Estimation with COCOMO II, Prentice Hall, 2000

1. Introduction
2. Model Definition
3. Application Examples
4. Calibration
5. Emerging Extensions
6. Future Trends

Appendices: Assumptions, Data Forms, User's Manual, CD Content

CD: Video tutorials, USC COCOMO II.2000, commercial tool demos, manuals, data forms, web site links, Affiliate forms

Outline

- COCOMO II Overview
- Overview of Emerging Extensions
  - COTS Integration (COCOTS)
  - Quality: Delivered Defect Density (COQUALMO)
  - Phase Distributions (COPSEMO)
  - Rapid Application Development Schedule (CORADMO)
  - Productivity Improvement (COPROMO)
  - System Engineering (COSYSMO)
  - Tool Effects
  - CodeCount™
- Related USC-CSE Research
  - MBASE, CeBASE and CMMI
- Backup charts

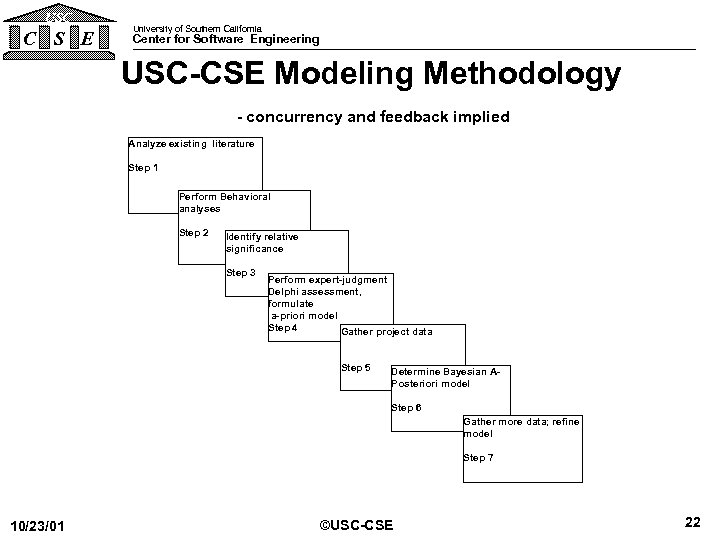

USC-CSE Modeling Methodology (concurrency and feedback implied)

1. Analyze existing literature
2. Perform behavioral analyses
3. Identify relative significance
4. Perform expert-judgment Delphi assessment, formulate a-priori model
5. Gather project data
6. Determine Bayesian a-posteriori model
7. Gather more data; refine model

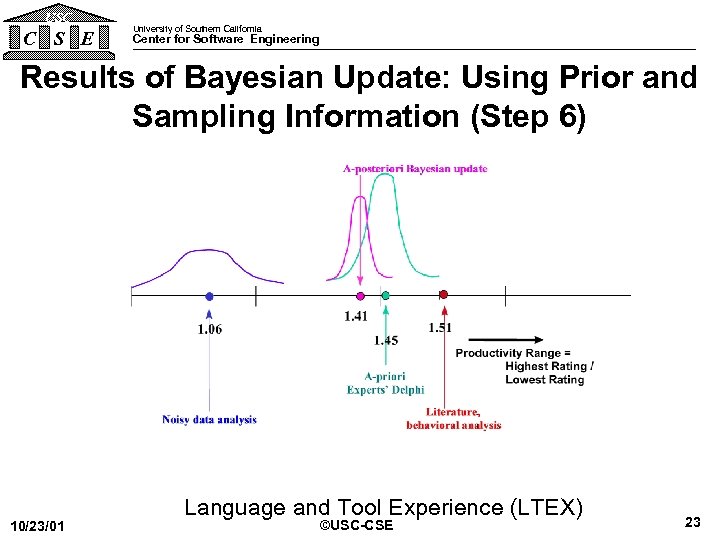

Results of Bayesian Update: Using Prior and Sampling Information (Step 6)

[Figure: Language and Tool Experience (LTEX)]

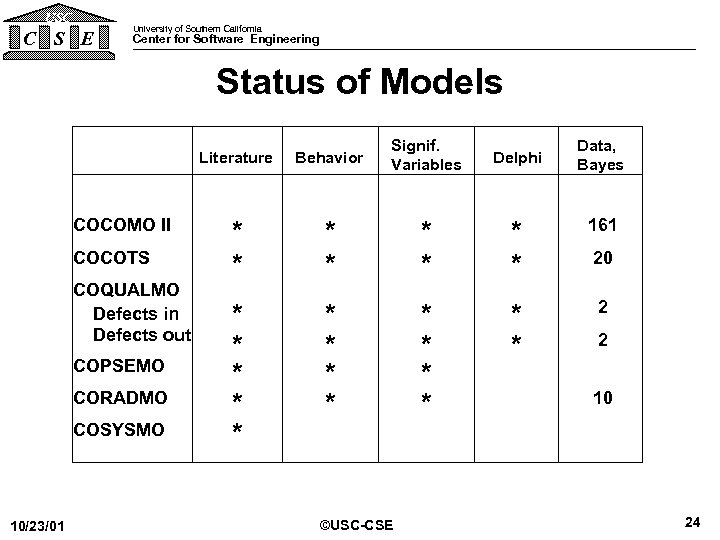
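Step 6 of the methodology combines prior (expert-judgment) information with sampling (project data) information. A minimal sketch of that idea for a single parameter, assuming normally distributed prior and sample estimates, weights each source by its precision (the inverse of its variance). This one-dimensional form is a simplification chosen for illustration, not the calibration procedure used for the models, and all numbers are hypothetical.

```python
def bayesian_update(prior_mean, prior_var, sample_mean, sample_var):
    """Precision-weighted combination of a prior and a sample estimate.

    Returns the posterior mean and variance for the normal-normal case.
    """
    prior_precision = 1.0 / prior_var
    sample_precision = 1.0 / sample_var
    posterior_var = 1.0 / (prior_precision + sample_precision)
    posterior_mean = posterior_var * (
        prior_precision * prior_mean + sample_precision * sample_mean
    )
    return posterior_mean, posterior_var


if __name__ == "__main__":
    # Hypothetical values: a Delphi estimate of 1.0 with variance 0.04,
    # and a data-driven estimate of 1.4 with variance 0.01.
    mean, var = bayesian_update(1.0, 0.04, 1.4, 0.01)
    print(f"posterior mean = {mean:.2f}, variance = {var:.3f}")
```

The posterior lands closer to whichever source has the smaller variance, so noisy project data moves the expert prior only slightly while precise data dominates it.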

Status of Models

[Table: rows COCOMO II, COCOTS, COQUALMO (defects in, defects out), COPSEMO, CORADMO, COSYSMO; columns Literature, Behavior, Signif. Variables, Delphi, and Data, Bayes. Cells contain * marks and the numeric entries 161, 2, 20, 2 and 10.]

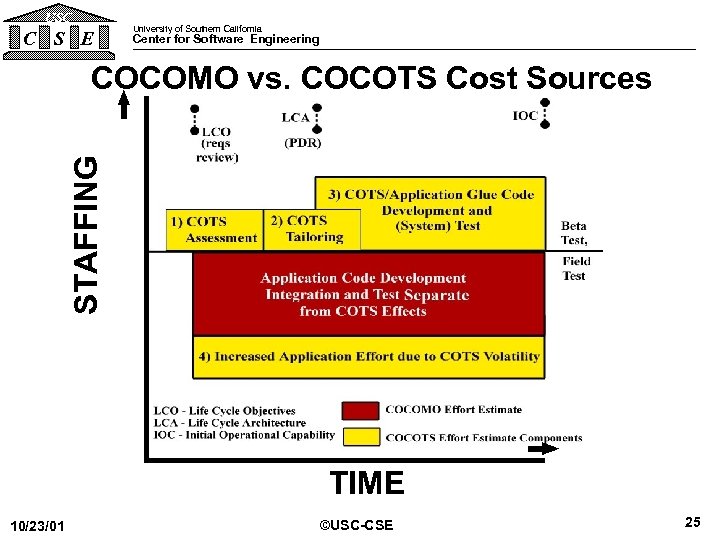

COCOMO vs. COCOTS Cost Sources

[Figure: staffing plotted against time]

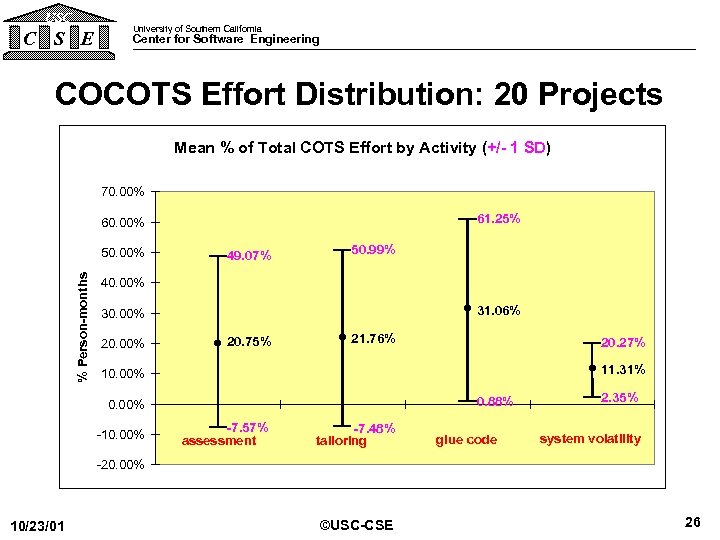

COCOTS Effort Distribution: 20 Projects

Mean % of Total COTS Effort by Activity (+/- 1 SD), in % person-months

| Activity | Mean - 1 SD | Mean | Mean + 1 SD |
|---|---|---|---|
| Assessment | -7.57% | 20.75% | 49.07% |
| Tailoring | -7.48% | 21.76% | 50.99% |
| Glue code | 0.88% | 31.06% | 61.25% |
| System volatility | 2.35% | 11.31% | 20.27% |

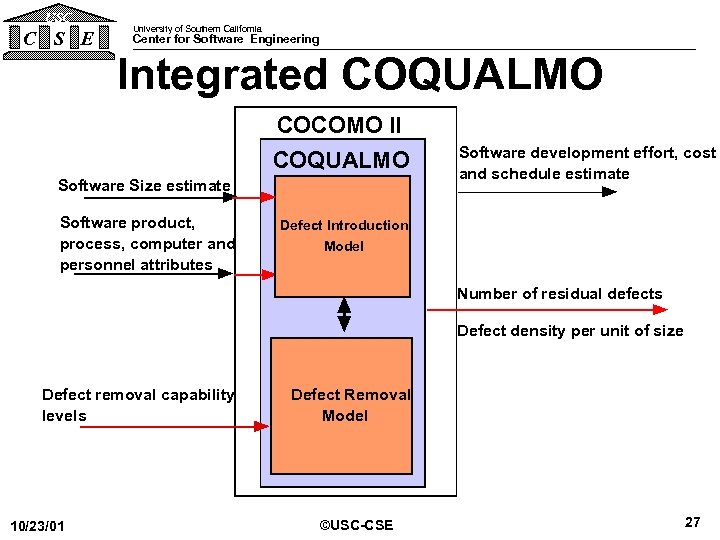

Integrated COQUALMO

[Diagram: COCOMO II and COQUALMO (Defect Introduction Model, Defect Removal Model).]

- Inputs: software size estimate; software product, process, computer and personnel attributes; defect removal capability levels
- Outputs: software development effort, cost and schedule estimate; number of residual defects; defect density per unit of size

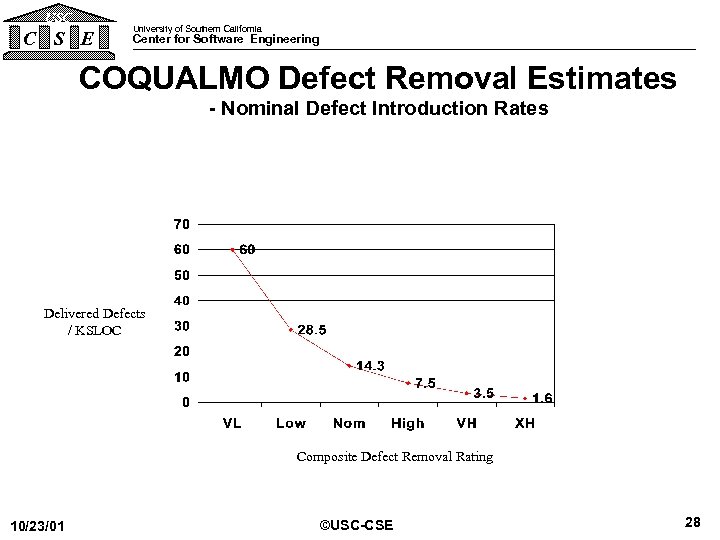

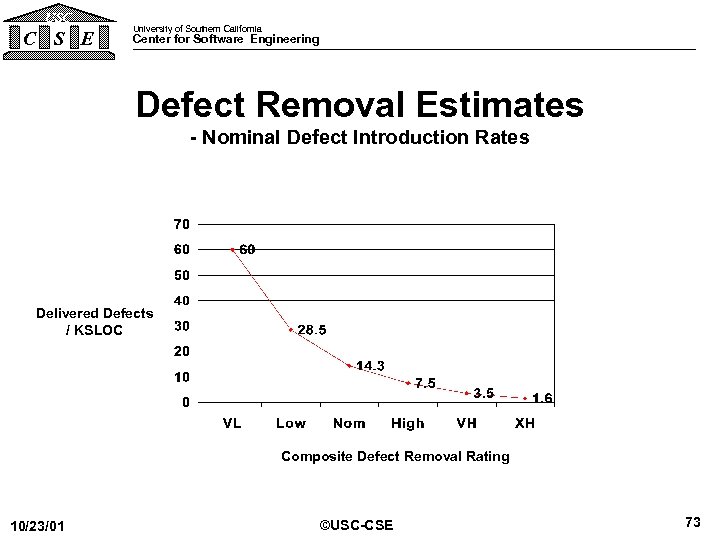

COQUALMO Defect Removal Estimates (Nominal Defect Introduction Rates)

[Figure: delivered defects / KSLOC plotted against composite defect removal rating]

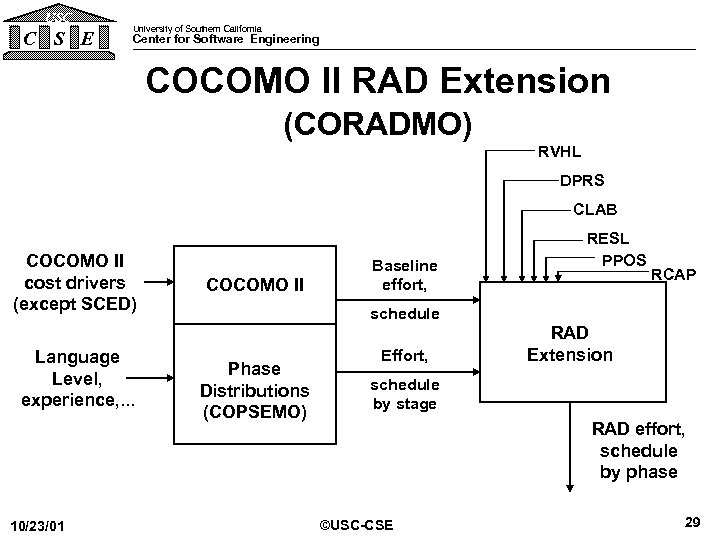
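The relationship between defect introduction and defect removal in COQUALMO can be illustrated with a short sketch: each removal activity is assumed to remove a fixed fraction of the defects that reach it, and the residual is what survives all of them. The multiplicative form, the three activity names and every number below are assumptions for illustration, not values from the slides.

```python
from math import prod


def residual_defects(introduced, removal_fractions):
    """Defects remaining after each removal activity takes out its fraction."""
    for fraction in removal_fractions:
        if not 0.0 <= fraction <= 1.0:
            raise ValueError("removal fractions must lie between 0 and 1")
    return introduced * prod(1.0 - fraction for fraction in removal_fractions)


if __name__ == "__main__":
    # Hypothetical inputs: 60 defects introduced per KSLOC, and removal
    # fractions for automated analysis, peer reviews, and execution testing.
    introduced_per_ksloc = 60
    removal = (0.30, 0.50, 0.60)
    delivered = residual_defects(introduced_per_ksloc, removal)
    print(f"delivered defects / KSLOC = {delivered:.1f}")
```

Raising any single removal fraction lowers the delivered density proportionally, which matches the downward trend the chart shows as the composite defect removal rating improves.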

COCOMO II RAD Extension (CORADMO)

[Diagram: COCOMO II cost drivers (except SCED) and language level, experience, ... feed COCOMO II, which produces baseline effort and schedule. Phase Distributions (COPSEMO) produces effort and schedule by stage. The RAD Extension, with drivers RVHL, DPRS, CLAB, RESL, PPOS and RCAP, produces RAD effort and schedule by phase.]

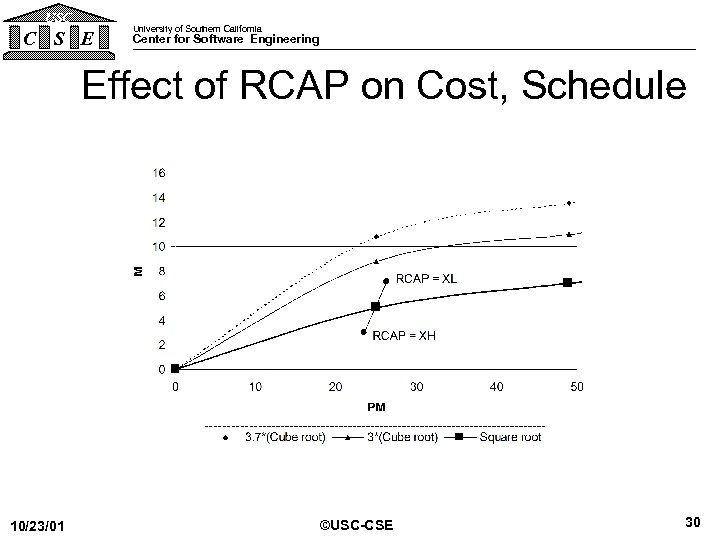

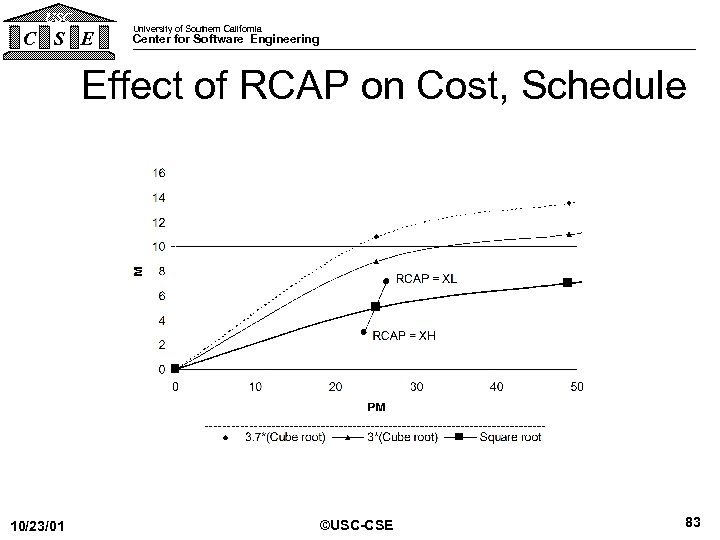

Effect of RCAP on Cost, Schedule

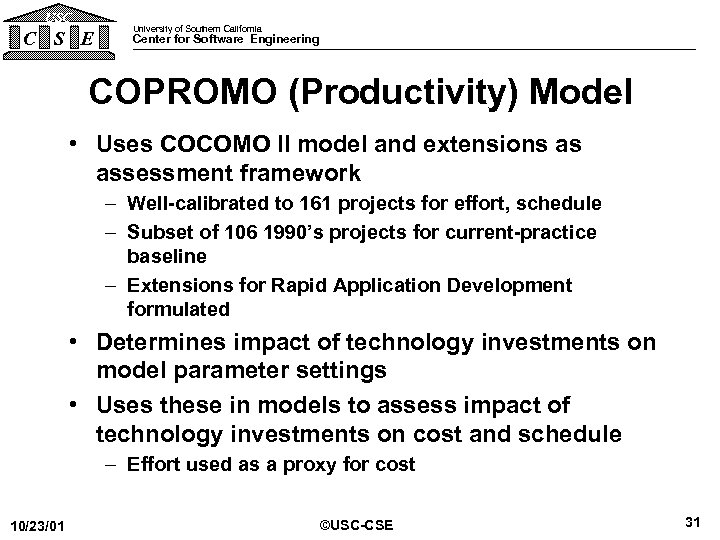

COPROMO (Productivity) Model

- Uses COCOMO II model and extensions as assessment framework
  - Well-calibrated to 161 projects for effort, schedule
  - Subset of 106 1990's projects for current-practice baseline
  - Extensions for Rapid Application Development formulated
- Determines impact of technology investments on model parameter settings
- Uses these in models to assess impact of technology investments on cost and schedule
  - Effort used as a proxy for cost

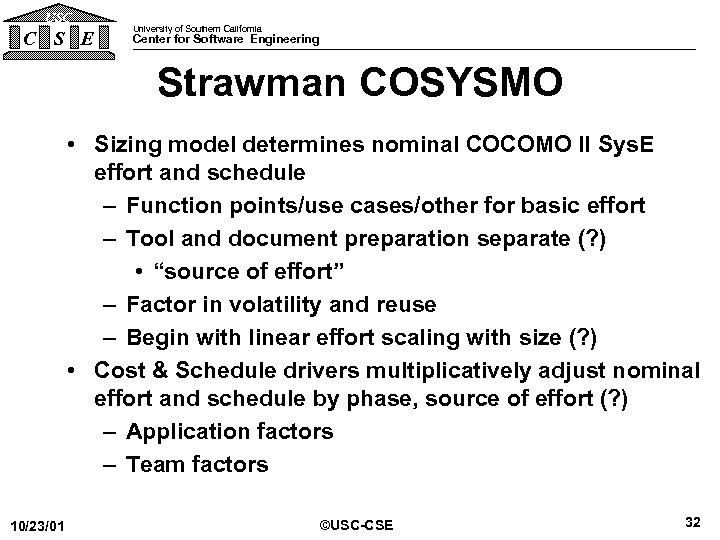

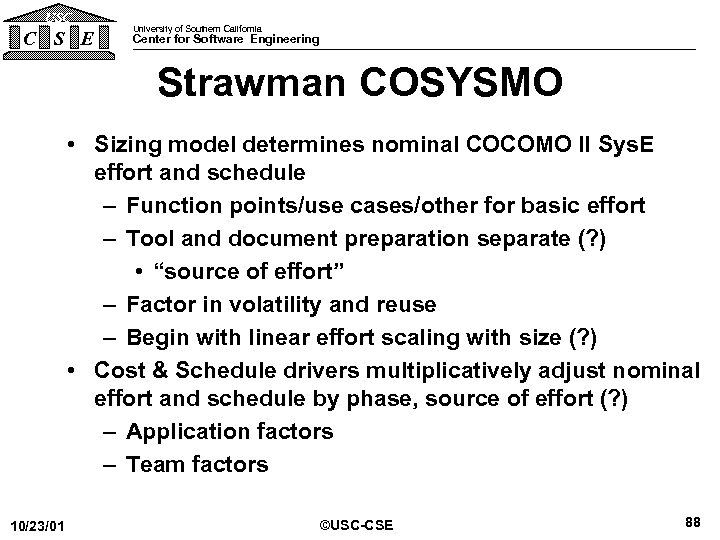

Strawman COSYSMO

- Sizing model determines nominal COCOMO II SysE effort and schedule
  - Function points/use cases/other for basic effort
  - Tool and document preparation separate (?)
    - "source of effort"
  - Factor in volatility and reuse
  - Begin with linear effort scaling with size (?)
- Cost & Schedule drivers multiplicatively adjust nominal effort and schedule by phase, source of effort (?)
  - Application factors
  - Team factors

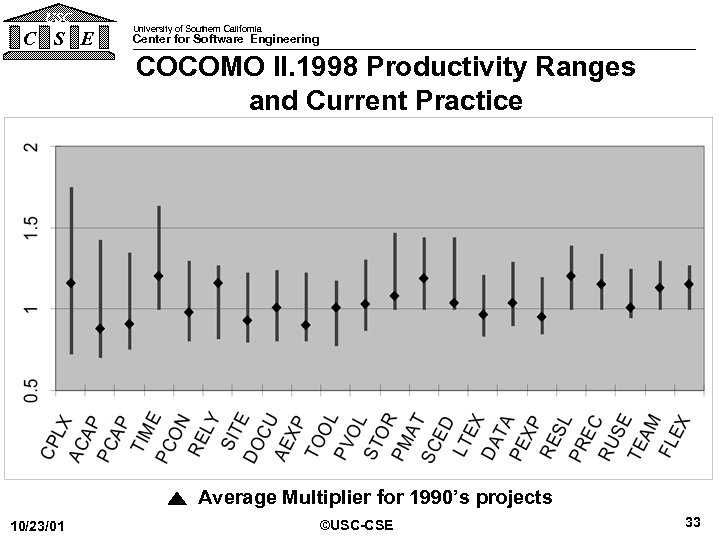

COCOMO II.1998 Productivity Ranges and Current Practice

[Figure: productivity ranges with the average multiplier for 1990's projects]

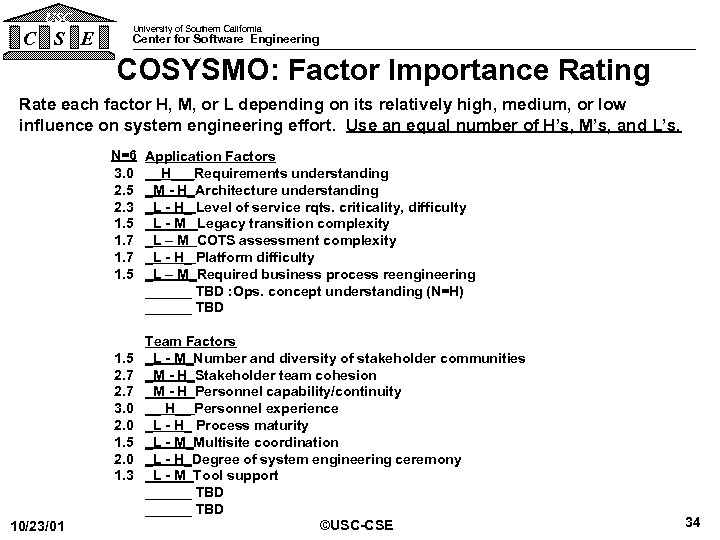

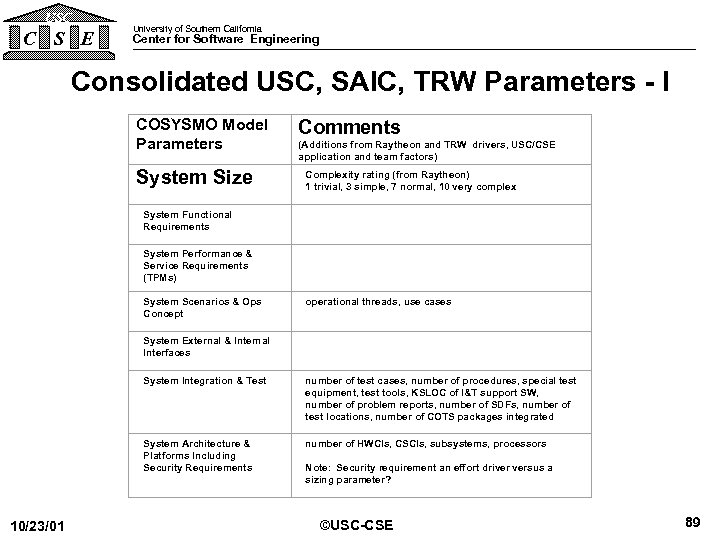

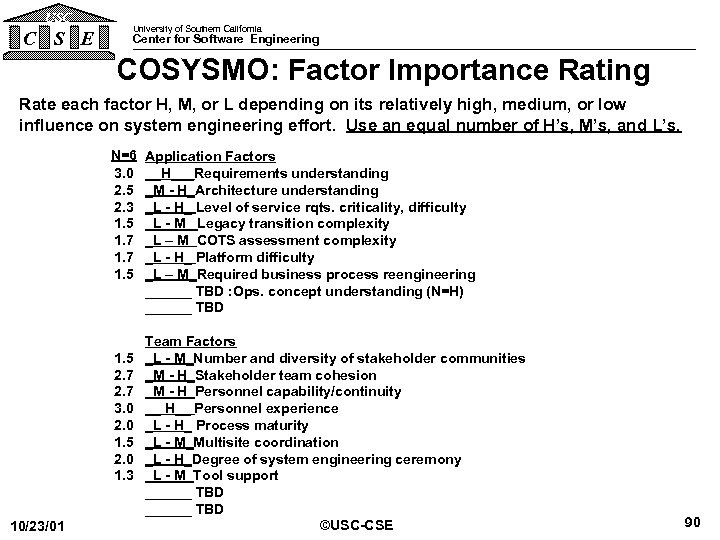

COSYSMO: Factor Importance Rating

Rate each factor H, M, or L depending on its relatively high, medium, or low influence on system engineering effort. Use an equal number of H's, M's, and L's.

Values listed alongside the factors (N=6): 3.0, 2.5, 2.3, 1.5, 1.7, 1.5, 2.7, 3.0, 2.0, 1.5, 2.0, 1.3

Application Factors

- H: Requirements understanding
- M - H: Architecture understanding
- L - H: Level of service rqts. criticality, difficulty
- L - M: Legacy transition complexity
- L - M: COTS assessment complexity
- L - H: Platform difficulty
- L - M: Required business process reengineering
- TBD: Ops. concept understanding (N=H)
- TBD

Team Factors

- L - M: Number and diversity of stakeholder communities
- M - H: Stakeholder team cohesion
- M - H: Personnel capability/continuity
- H: Personnel experience
- L - H: Process maturity
- L - M: Multisite coordination
- L - H: Degree of system engineering ceremony
- L - M: Tool support
- TBD

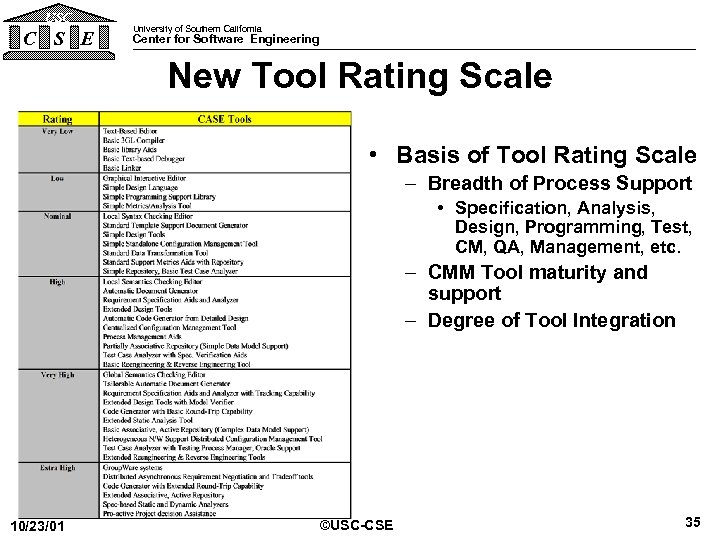

New Tool Rating Scale
• Basis of Tool Rating Scale
– Breadth of Process Support
  • Specification, Analysis, Design, Programming, Test, CM, QA, Management, etc.
– CMM Tool maturity and support
– Degree of Tool Integration

Code Count™
• Suite of 9 counting tools
+ Copylefted
+ Full source code
+ Languages: Ada, ASM 1750, C/C++, COBOL, FORTRAN, Java, JOVIAL, Pascal, PL/1
• Counts
* "QA" Data (Tallies)
+ SLOC
+ DSI
+ Statements By Type
+ Comment By Type

Outline
• COCOMO II Overview
• Overview of Emerging Extensions
– COTS Integration (COCOTS)
– Quality: Delivered Defect Density (COQUALMO)
– Phase Distributions (COPSEMO)
– Rapid Application Development Schedule (CORADMO)
– Productivity Improvement (COPROMO)
– System Engineering (COSYSMO)
– Tool Effects
– Code Count™
• Related USC-CSE Research
– MBASE, CeBASE and CMMI
• Backup charts

MBASE, CeBASE, and CMMI
• Model-Based (System) Architecting and Software Engineering (MBASE)
– Extension of WinWin Spiral Model
– Avoids process/product/property/success model clashes
– Provides project-oriented guidelines
• Center for Empirically-Based Software Engineering (CeBASE)
– Led by USC, UMaryland
– Sponsored by NSF, others
– Empirical software data collection and analysis
– Integrates MBASE, Experience Factory, GMQM into CeBASE Method
– Goal-Model-Question-Metric method
– Integrated organization/portfolio/project guidelines
• CeBASE Method implements Integrated Capability Maturity Model (CMMI) and more
– Parts of People CMM, but light on Acquisition CMM

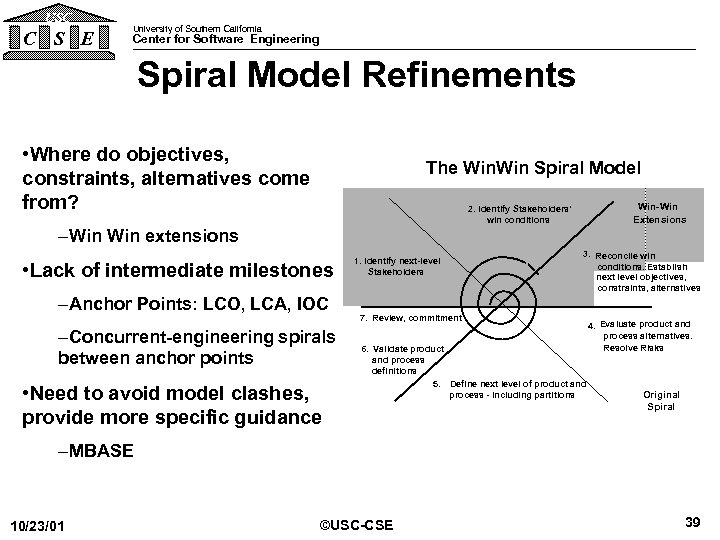

Spiral Model Refinements
• Where do objectives, constraints, alternatives come from?
– WinWin extensions
• Lack of intermediate milestones
– Anchor Points: LCO, LCA, IOC
– Concurrent-engineering spirals between anchor points
• Need to avoid model clashes, provide more specific guidance
– MBASE
Figure: The WinWin Spiral Model (diagram labels: WinWin Extensions, Original Spiral)
1. Identify next-level Stakeholders
2. Identify Stakeholders’ win conditions
3. Reconcile win conditions. Establish next level objectives, constraints, alternatives
4. Evaluate product and process alternatives. Resolve Risks
5. Define next level of product and process - including partitions
6. Validate product and process definitions
7. Review, commitment

Life Cycle Anchor Points
• Common System/Software stakeholder commitment points
– Defined in concert with Government, industry affiliates
– Coordinated with Rational’s Unified Software Development Process
• Life Cycle Objectives (LCO)
– Stakeholders’ commitment to support system architecting
– Like getting engaged
• Life Cycle Architecture (LCA)
– Stakeholders’ commitment to support full life cycle
– Like getting married
• Initial Operational Capability (IOC)
– Stakeholders’ commitment to support operations
– Like having your first child

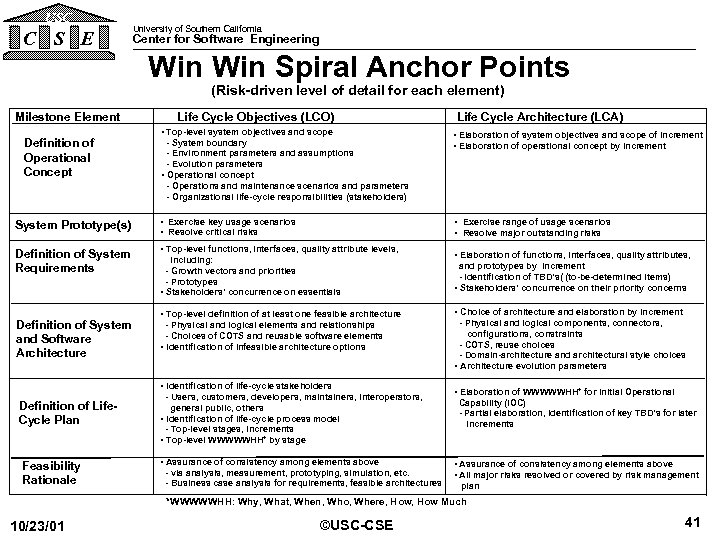

WinWin Spiral Anchor Points
(Risk-driven level of detail for each element)
Milestone Element: Definition of Operational Concept
Life Cycle Objectives (LCO)
• Top-level system objectives and scope
- System boundary
- Environment parameters and assumptions
- Evolution parameters
• Operational concept
- Operations and maintenance scenarios and parameters
- Organizational life-cycle responsibilities (stakeholders)
Life Cycle Architecture (LCA)
• Elaboration of system objectives and scope of increment
• Elaboration of operational concept by increment
Milestone Element: System Prototype(s)
Life Cycle Objectives (LCO)
• Exercise key usage scenarios
• Resolve critical risks
Life Cycle Architecture (LCA)
• Exercise range of usage scenarios
• Resolve major outstanding risks
Milestone Element: Definition of System Requirements
Life Cycle Objectives (LCO)
• Top-level functions, interfaces, quality attribute levels, including:
- Growth vectors and priorities
- Prototypes
• Stakeholders’ concurrence on essentials
Life Cycle Architecture (LCA)
• Elaboration of functions, interfaces, quality attributes, and prototypes by increment
- Identification of TBD’s (to-be-determined items)
• Stakeholders’ concurrence on their priority concerns
Milestone Element: Definition of System and Software Architecture
Life Cycle Objectives (LCO)
• Top-level definition of at least one feasible architecture
- Physical and logical elements and relationships
- Choices of COTS and reusable software elements
• Identification of infeasible architecture options
Life Cycle Architecture (LCA)
• Choice of architecture and elaboration by increment
- Physical and logical components, connectors, configurations, constraints
- COTS, reuse choices
- Domain-architecture and architectural style choices
• Architecture evolution parameters
Milestone Element: Definition of Life-Cycle Plan
Life Cycle Objectives (LCO)
• Identification of life-cycle stakeholders
- Users, customers, developers, maintainers, interoperators, general public, others
• Identification of life-cycle process model
- Top-level stages, increments
• Top-level WWWWWHH* by stage
Life Cycle Architecture (LCA)
• Elaboration of WWWWWHH* for Initial Operational Capability (IOC)
- Partial elaboration, identification of key TBD’s for later increments
Milestone Element: Feasibility Rationale
Life Cycle Objectives (LCO)
• Assurance of consistency among elements above
- via analysis, measurement, prototyping, simulation, etc.
- Business case analysis for requirements, feasible architectures
Life Cycle Architecture (LCA)
• Assurance of consistency among elements above
• All major risks resolved or covered by risk management plan
*WWWWWHH: Why, What, When, Who, Where, How, How Much

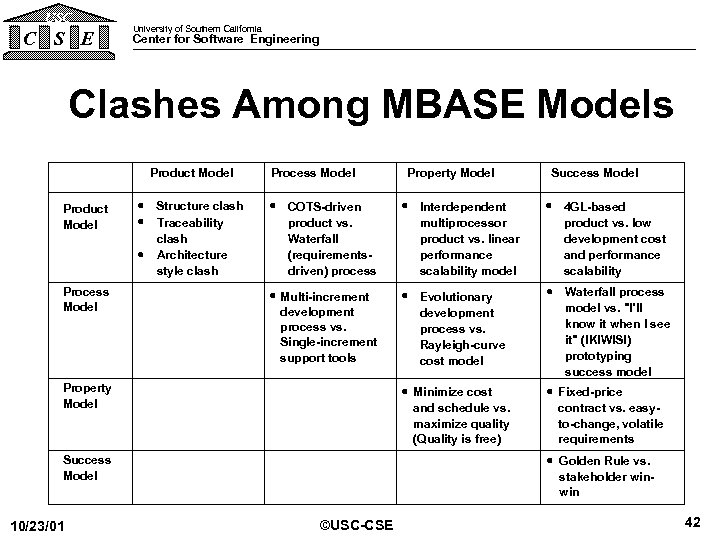

Clashes Among MBASE Models
(Matrix of Product, Process, Property, and Success Models; entries in row order)
• Product Model vs. Product Model
– Structure clash
– Traceability clash
– Architecture style clash
• Product Model vs. Process Model
– COTS-driven product vs. Waterfall (requirements-driven) process
• Product Model vs. Property Model
– Interdependent multiprocessor product vs. linear performance scalability model
• Product Model vs. Success Model
– 4GL-based product vs. low development cost and performance scalability
• Process Model vs. Process Model
– Multi-increment development process vs. single-increment support tools
• Process Model vs. Property Model
– Evolutionary development process vs. Rayleigh-curve cost model
• Process Model vs. Success Model
– Waterfall process model vs. "I'll know it when I see it" (IKIWISI) prototyping success model
• Property Model vs. Property Model
– Minimize cost and schedule vs. maximize quality ("Quality is free")
• Property Model vs. Success Model
– Fixed-price contract vs. easy-to-change, volatile requirements
• Success Model vs. Success Model
– Golden Rule vs. stakeholder win-win

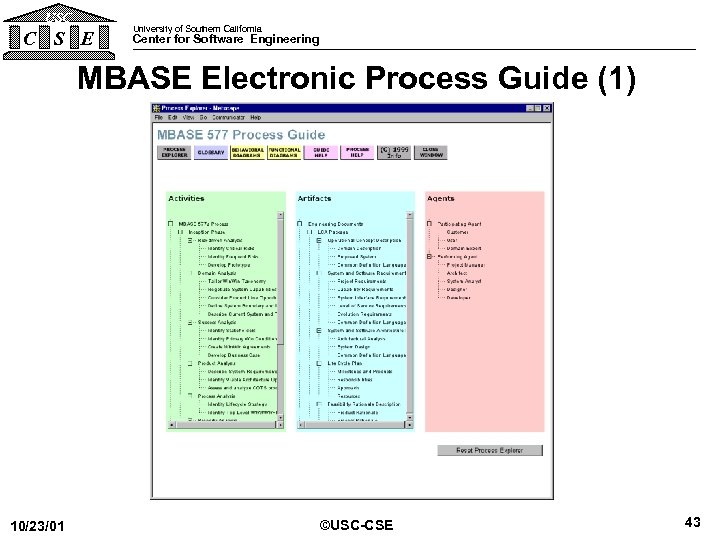

MBASE Electronic Process Guide (1)

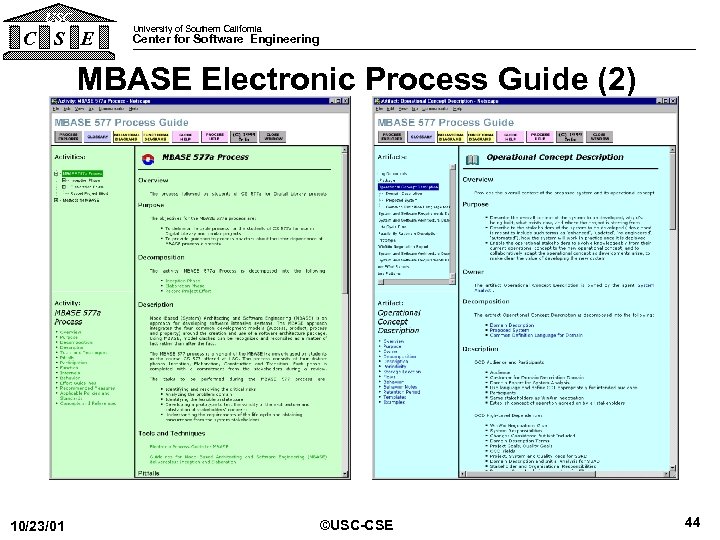

MBASE Electronic Process Guide (2)

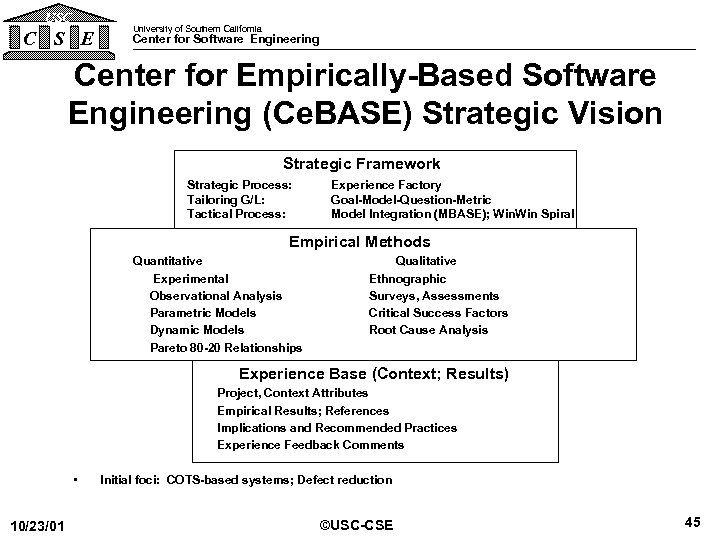

Center for Empirically-Based Software Engineering (CeBASE) Strategic Vision
Strategic Framework
– Strategic Process: Experience Factory
– Tailoring G/L: Goal-Model-Question-Metric
– Tactical Process: Model Integration (MBASE); WinWin Spiral
Empirical Methods
– Quantitative: Experimental; Observational Analysis; Parametric Models; Dynamic Models; Pareto 80-20 Relationships
– Qualitative: Ethnographic; Surveys, Assessments; Critical Success Factors; Root Cause Analysis
Experience Base (Context; Results)
– Project, Context Attributes
– Empirical Results; References
– Implications and Recommended Practices
– Experience Feedback Comments
• Initial foci: COTS-based systems; Defect reduction

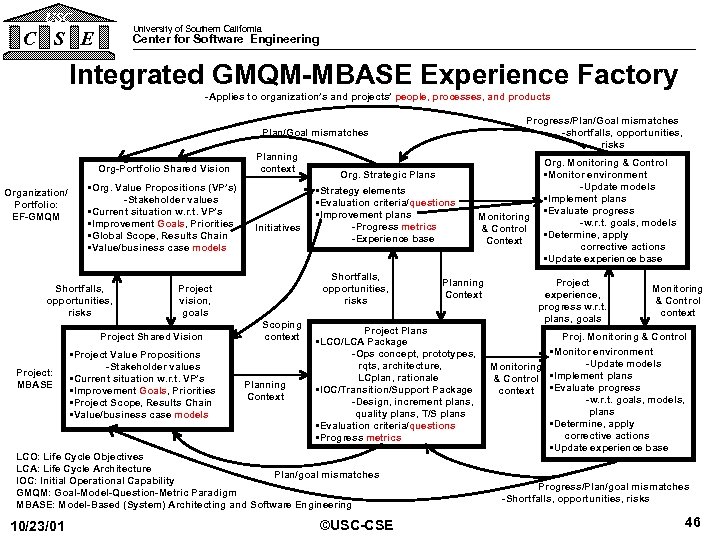

Integrated GMQM-MBASE Experience Factory
– Applies to organization’s and projects’ people, processes, and products
Organization/Portfolio: EF-GMQM
Org-Portfolio Shared Vision
• Org. Value Propositions (VP’s)
- Stakeholder values
• Current situation w.r.t. VP’s
• Improvement Goals, Priorities
• Global Scope, Results Chain
• Value/business case models
Org. Strategic Plans
• Strategy elements
• Evaluation criteria/questions
• Improvement plans
- Progress metrics
- Experience base
Org. Monitoring & Control
• Monitor environment
- Update models
• Implement plans
• Evaluate progress
- w.r.t. goals, models
• Determine, apply corrective actions
• Update experience base
Project: MBASE
Project Shared Vision
• Project Value Propositions
- Stakeholder values
• Current situation w.r.t. VP’s
• Improvement Goals, Priorities
• Project Scope, Results Chain
• Value/business case models
Project Plans
• LCO/LCA Package
- Ops concept, prototypes, rqts, architecture, LCplan, rationale
• IOC/Transition/Support Package
- Design, increment plans, quality plans, T/S plans
• Evaluation criteria/questions
• Progress metrics
Proj. Monitoring & Control
• Monitor environment
- Update models
• Implement plans
• Evaluate progress
- w.r.t. goals, models, plans
• Determine, apply corrective actions
• Update experience base
Flows between elements (diagram labels)
• Planning context; Scoping context; Monitoring & Control context
• Initiatives; Project vision, goals
• Shortfalls, opportunities, risks
• Plan/Goal mismatches
• Progress/Plan/Goal mismatches - shortfalls, opportunities, risks
• Project experience, progress w.r.t. plans, goals
Legend
LCO: Life Cycle Objectives
LCA: Life Cycle Architecture
IOC: Initial Operational Capability
GMQM: Goal-Model-Question-Metric Paradigm
MBASE: Model-Based (System) Architecting and Software Engineering

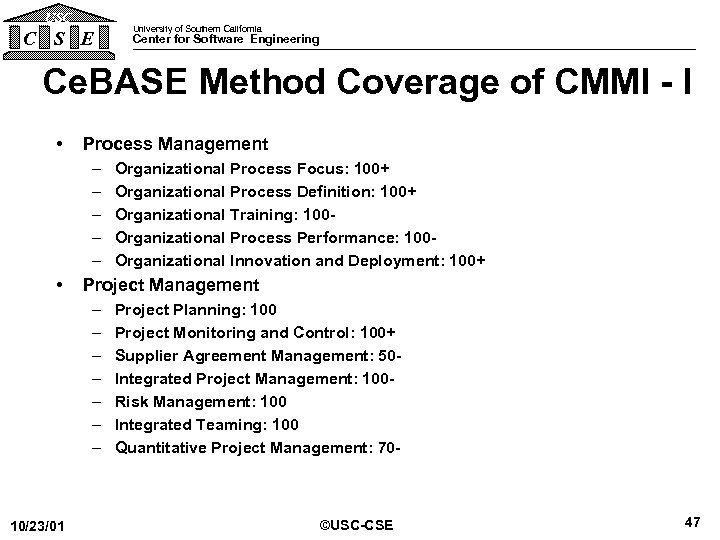

CeBASE Method Coverage of CMMI - I
• Process Management
– Organizational Process Focus: 100+
– Organizational Process Definition: 100+
– Organizational Training: 100
– Organizational Process Performance: 100
– Organizational Innovation and Deployment: 100+
• Project Management
– Project Planning: 100
– Project Monitoring and Control: 100+
– Supplier Agreement Management: 50
– Integrated Project Management: 100
– Risk Management: 100
– Integrated Teaming: 100
– Quantitative Project Management: 70-

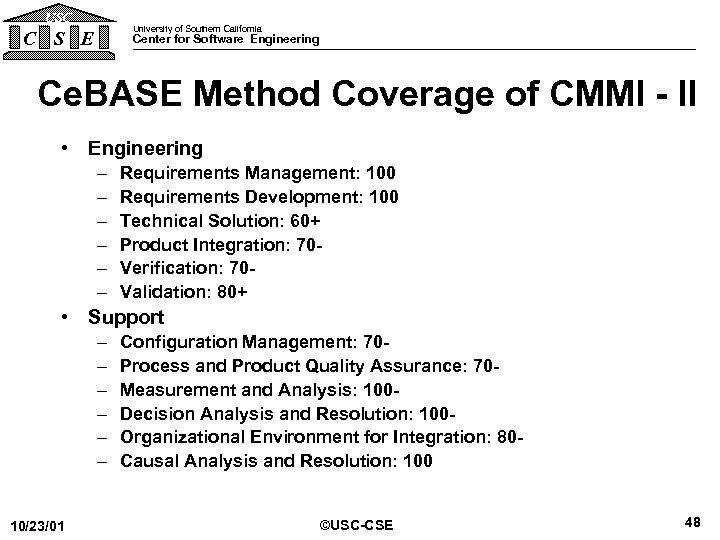

CeBASE Method Coverage of CMMI - II
• Engineering
– Requirements Management: 100
– Requirements Development: 100
– Technical Solution: 60+
– Product Integration: 70
– Verification: 70
– Validation: 80+
• Support
– Configuration Management: 70
– Process and Product Quality Assurance: 70
– Measurement and Analysis: 100
– Decision Analysis and Resolution: 100
– Organizational Environment for Integration: 80
– Causal Analysis and Resolution: 100

Outline
• COCOMO II Overview
• Overview of Emerging Extensions
– COTS Integration (COCOTS)
– Quality: Delivered Defect Density (COQUALMO)
– Phase Distributions (COPSEMO)
– Rapid Application Development Schedule (CORADMO)
– Productivity Improvement (COPROMO)
– System Engineering (COSYSMO)
– Tool Effects
– Code Count™
• Related USC-CSE Research
• Backup charts

Backup Charts
• COCOMO II
• COCOTS
• COQUALMO
• CORADMO
• COSYSMO

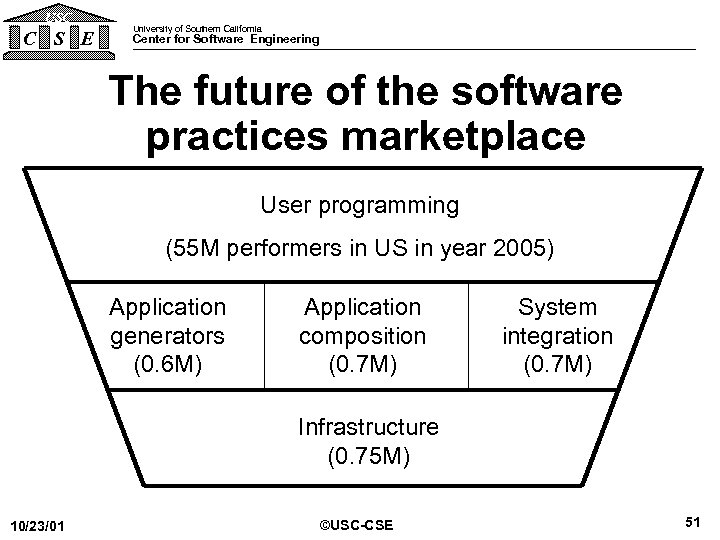

The future of the software practices marketplace
• User programming (55M performers in US in year 2005)
• Application generators (0.6M)
• Application composition (0.7M)
• System integration (0.7M)
• Infrastructure (0.75M)

COCOMO II Coverage of Future SW Practices Sectors
• User Programming: No need for cost model
• Applications Composition: Use application points
- Count (weight) screens, reports, 3GL routines
• System Integration; development of applications generators and infrastructure software
- Prototyping: Applications composition model
- Early design: Function Points and/or Source Statements and 7 cost drivers
- Post-architecture: Source Statements and/or Function Points and 17 cost drivers
- Stronger reuse/reengineering model

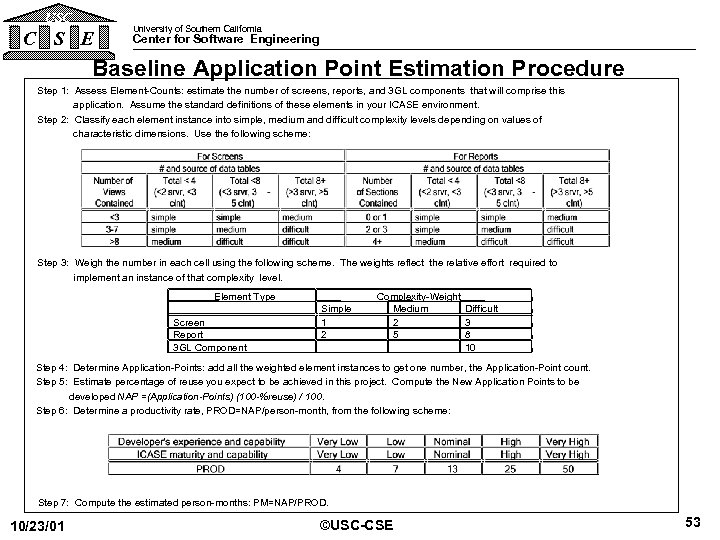

Baseline Application Point Estimation Procedure
Step 1: Assess Element-Counts: estimate the number of screens, reports, and 3GL components that will comprise this application. Assume the standard definitions of these elements in your ICASE environment.
Step 2: Classify each element instance into simple, medium and difficult complexity levels depending on values of characteristic dimensions. Use the following scheme:
Step 3: Weigh the number in each cell using the following scheme. The weights reflect the relative effort required to implement an instance of that complexity level.
| Element Type | Simple | Medium | Difficult |
| Screen | 1 | 2 | 3 |
| Report | 2 | 5 | 8 |
| 3GL Component | | | 10 |
Step 4: Determine Application-Points: add all the weighted element instances to get one number, the Application-Point count.
Step 5: Estimate percentage of reuse you expect to be achieved in this project. Compute the New Application Points to be developed: NAP = (Application-Points) × (100 − %reuse) / 100.
Step 6: Determine a productivity rate, PROD = NAP/person-month, from the following scheme:
Step 7: Compute the estimated person-months: PM = NAP/PROD.
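
Steps 3 through 7 of the procedure can be sketched in a few lines of Python. This is a minimal illustration, not the tool's implementation: the function names, the example counts, the 25% reuse figure, and the PROD value of 9 are all hypothetical inputs. The Step 2 and Step 6 schemes are not reproduced in the slide text, so the complexity classification and PROD are taken as given.

```python
# Complexity weights from the Step 3 table; the slide lists only a
# "difficult" weight for 3GL components.
WEIGHTS = {
    "screen": {"simple": 1, "medium": 2, "difficult": 3},
    "report": {"simple": 2, "medium": 5, "difficult": 8},
    "3gl": {"difficult": 10},
}


def application_points(counts):
    """Step 4: sum the weighted element counts.

    counts maps (element_type, complexity) -> number of instances.
    """
    return sum(WEIGHTS[kind][level] * n for (kind, level), n in counts.items())


def new_application_points(app_points, reuse_percent):
    """Step 5: NAP = Application-Points * (100 - %reuse) / 100."""
    return app_points * (100 - reuse_percent) / 100


def person_months(nap, prod):
    """Step 7: PM = NAP / PROD, with PROD supplied from the Step 6 scheme."""
    return nap / prod


# Hypothetical project: every number below is a made-up input.
counts = {
    ("screen", "simple"): 4,
    ("screen", "medium"): 2,
    ("report", "difficult"): 1,
    ("3gl", "difficult"): 2,
}
ap = application_points(counts)       # 4*1 + 2*2 + 1*8 + 2*10
nap = new_application_points(ap, 25)  # 25% expected reuse
pm = person_months(nap, 9)            # PROD = 9 NAP per person-month
print(ap, nap, pm)
```

Keeping the weights in a lookup table makes it easy to swap in a locally calibrated table without touching the arithmetic.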

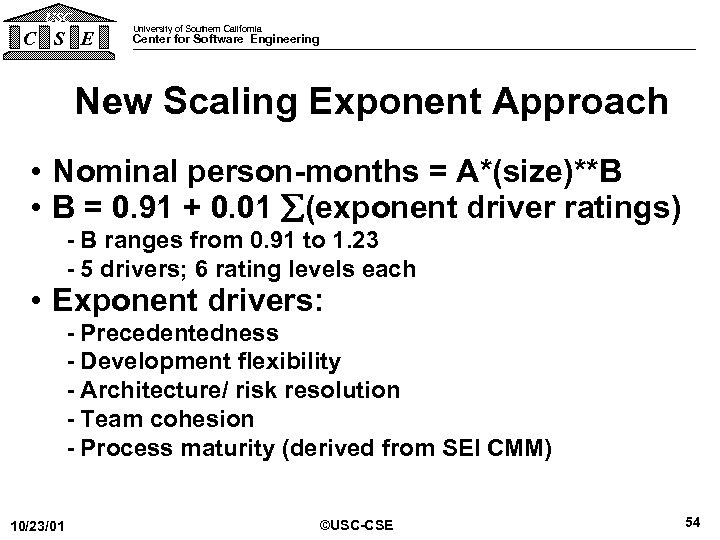

New Scaling Exponent Approach
• Nominal person-months = A*(size)**B
• B = 0.91 + 0.01 × Σ (exponent driver ratings)
- B ranges from 0.91 to 1.23
- 5 drivers; 6 rating levels each
• Exponent drivers:
- Precedentedness
- Development flexibility
- Architecture/risk resolution
- Team cohesion
- Process maturity (derived from SEI CMM)
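
To see what the exponent range means in practice, the sketch below computes B from a set of driver ratings and the factor by which nominal effort grows when size doubles (2**B, independent of A). The numeric ratings are hypothetical: the slide gives only the range of B, from which the ratings must sum to between 0 and 32.

```python
def scaling_exponent(ratings):
    """B = 0.91 + 0.01 * (sum of the five exponent driver ratings)."""
    return 0.91 + 0.01 * sum(ratings)


def nominal_person_months(size, ratings, a=1.0):
    """Nominal PM = A * size**B. A is a calibration constant; 1.0 is a placeholder."""
    return a * size ** scaling_exponent(ratings)


def doubling_factor(ratings):
    """Effort growth when size doubles: PM(2s) / PM(s) = 2**B."""
    return 2 ** scaling_exponent(ratings)


# Hypothetical rating sets chosen only to hit the two ends of the stated range.
best_case = [0, 0, 0, 0, 0]    # sum 0  -> B = 0.91
worst_case = [8, 6, 6, 6, 6]   # sum 32 -> B = 1.23

for label, ratings in (("best", best_case), ("worst", worst_case)):
    print(label, round(scaling_exponent(ratings), 2), round(doubling_factor(ratings), 3))
```

With B below 1, doubling the size less than doubles the effort (economy of scale); with B above 1, it more than doubles it.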

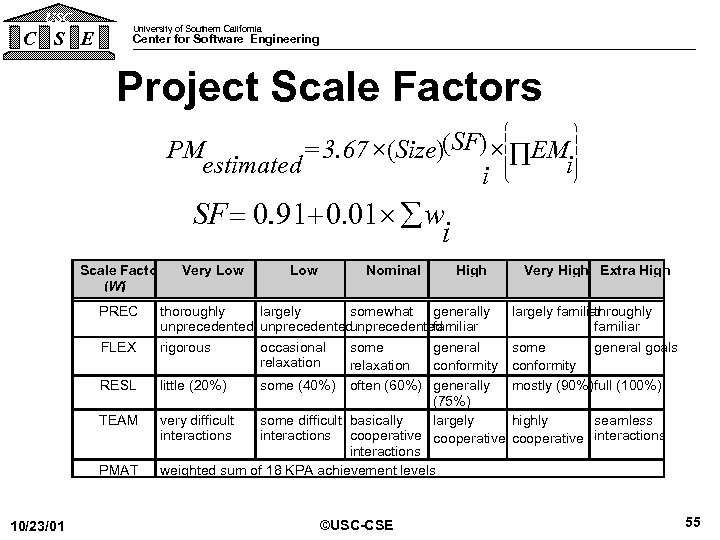

Project Scale Factors
$$PM_{estimated} = 3.67 \times (Size)^{SF} \times \left( \prod_i EM_i \right)$$
$$SF = 0.91 + 0.01 \times \sum_i w_i$$
| Scale Factors (Wi) | Very Low | Low | Nominal | High | Very High | Extra High |
| PREC | thoroughly unprecedented | largely unprecedented | somewhat unprecedented | generally familiar | largely familiar | thoroughly familiar |
| FLEX | rigorous | occasional relaxation | some relaxation | general conformity | some conformity | general goals |
| RESL | little (20%) | some (40%) | often (60%) | generally (75%) | mostly (90%) | full (100%) |
| TEAM | very difficult interactions | some difficult interactions | basically cooperative interactions | largely cooperative | highly cooperative | seamless interactions |
| PMAT | weighted sum of 18 KPA achievement levels |
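
The estimation equation on this slide can be evaluated directly. The sketch below is an illustration under stated assumptions: the function name is hypothetical, Size is assumed to be in thousands of source lines (the slide does not state the unit), and the scale-factor weights and effort multipliers in the example are made-up values, since the slide gives only qualitative rating descriptions.

```python
import math


def estimated_person_months(size, scale_weights, effort_multipliers, a=3.67):
    """PM = a * size**SF * product(EM_i), with SF = 0.91 + 0.01 * sum(w_i).

    a defaults to the 3.67 coefficient shown on the slide.
    """
    sf = 0.91 + 0.01 * sum(scale_weights)
    return a * size ** sf * math.prod(effort_multipliers)


# Hypothetical inputs: five scale-factor weights (PREC, FLEX, RESL, TEAM, PMAT)
# and three effort multipliers. None of these numbers come from the slide.
weights = [3.0, 2.0, 2.0, 1.0, 1.0]  # sum 9 -> SF = 1.00
multipliers = [1.10, 0.90, 1.00]

print(round(estimated_person_months(100, weights, multipliers), 1))
```

Because the scale factors sit in the exponent while the effort multipliers are a plain product, a one-point change in a weight matters more on large projects than on small ones.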

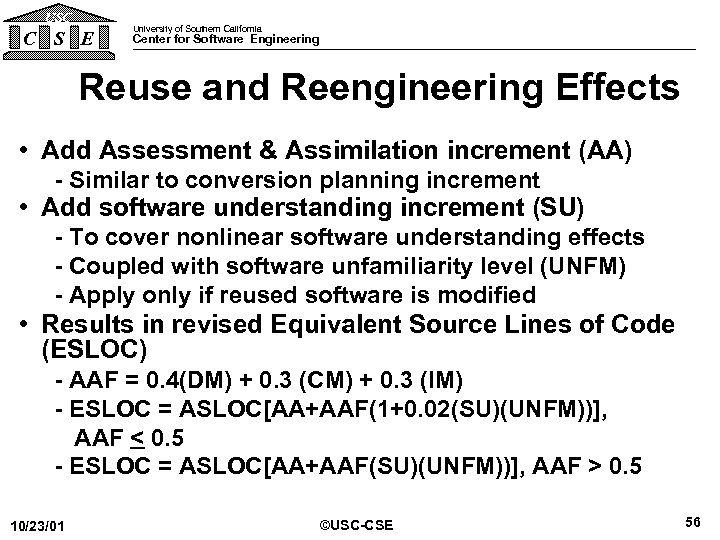

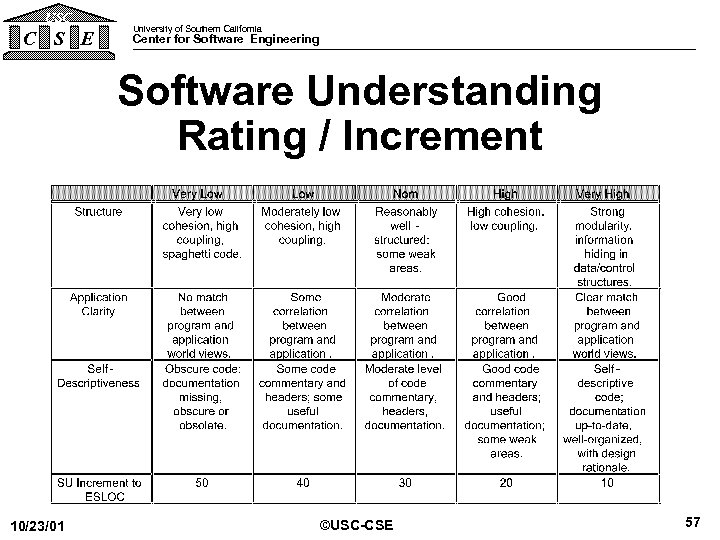

Reuse and Reengineering Effects
• Add Assessment & Assimilation increment (AA)
- Similar to conversion planning increment
• Add software understanding increment (SU)
- To cover nonlinear software understanding effects
- Coupled with software unfamiliarity level (UNFM)
- Apply only if reused software is modified
• Results in revised Equivalent Source Lines of Code (ESLOC)
- AAF = 0.4(DM) + 0.3(CM) + 0.3(IM)
- ESLOC = ASLOC[AA + AAF(1 + 0.02(SU)(UNFM))], AAF < 0.5
- ESLOC = ASLOC[AA + AAF + (SU)(UNFM)], AAF > 0.5
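
The reuse equations can be sketched as a small function. Note the assumptions: this version uses the percentage convention of the published COCOMO II reuse model, in which DM, CM, IM, AA, and SU are percentages, UNFM runs from 0 to 1, the AAF threshold is 50 rather than 0.5, the bracket is divided by 100, and the upper branch adds SU × UNFM to AAF. The function names and the example inputs are hypothetical.

```python
def adaptation_adjustment_factor(dm, cm, im):
    """AAF = 0.4*DM + 0.3*CM + 0.3*IM, all in percent."""
    return 0.4 * dm + 0.3 * cm + 0.3 * im


def equivalent_sloc(asloc, aa, dm, cm, im, su, unfm):
    """Equivalent SLOC for adapted code (percentage convention, see lead-in)."""
    if dm == 0 and cm == 0:
        su = 0  # SU applies only if the reused software is modified
    aaf = adaptation_adjustment_factor(dm, cm, im)
    if aaf <= 50:
        multiplier = (aa + aaf * (1 + 0.02 * su * unfm)) / 100
    else:
        multiplier = (aa + aaf + su * unfm) / 100
    return asloc * multiplier


# Hypothetical case: 10,000 adapted lines, light modification.
print(round(equivalent_sloc(10_000, aa=2, dm=10, cm=20, im=30, su=30, unfm=0.4)))
```

The two branches agree at AAF = 50, so the multiplier has no jump at the threshold.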

Software Understanding Rating / Increment

Other Major COCOMO II Changes
• Range versus point estimates
• Requirements Volatility (Evolution) included in Size
• Multiplicative cost driver changes
- Product CD’s
- Platform CD’s
- Personnel CD’s
- Project CD’s
• Maintenance model includes SU, UNFM factors from reuse model
– Applied to subset of legacy code undergoing change

Slide 59: Process Maturity (PMAT) Effects

- Effort reduction per maturity level, 100 KDSI project
  - Normalized for effects of other variables
- Clark Ph.D. dissertation (112 projects)
  - Research model: 12-23% per level
  - COCOMO II subsets: 9-29% per level
- COCOMO II.1999 (161 projects)
  - 4-11% per level
- PMAT positive contribution is statistically significant

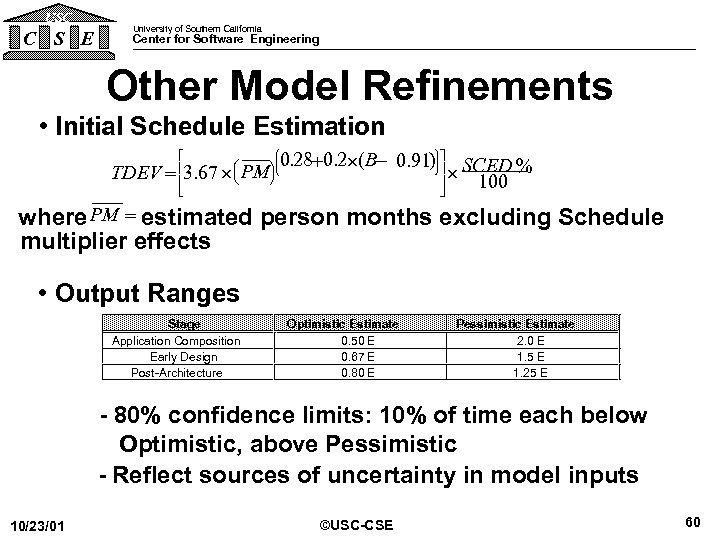

Slide 60: Other Model Refinements

- Initial Schedule Estimation

$$
TDEV = \left[\, 3.67 \times \left(\overline{PM}\right)^{\,0.28 + 0.2 \times (B - 0.91)} \right] \times \frac{SCED\%}{100}
$$

  where $\overline{PM}$ = estimated person months excluding Schedule multiplier effects

- Output Ranges

| Stage | Optimistic Estimate | Pessimistic Estimate |
|---|---|---|
| Application Composition | 0.50 E | 2.0 E |
| Early Design | 0.67 E | 1.5 E |
| Post-Architecture | 0.80 E | 1.25 E |

  - 80% confidence limits: 10% of time each below Optimistic, above Pessimistic
  - Reflect sources of uncertainty in model inputs
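The schedule equation and the output ranges on this slide can be sketched in Python as follows. This is an illustrative sketch under stated assumptions: the constants (3.67, 0.28, 0.2, 0.91) and the range factors are taken from the slide, while the function names, the dictionary name `RANGE_FACTORS`, and the sample project values are hypothetical.

```python
# Optimistic / pessimistic multipliers on the point estimate E, per stage.
RANGE_FACTORS = {
    "Application Composition": (0.50, 2.0),
    "Early Design": (0.67, 1.5),
    "Post-Architecture": (0.80, 1.25),
}


def schedule_months(pm_nominal, b, sced_percent=100.0):
    """TDEV in months from person-months that exclude the SCED multiplier."""
    exponent = 0.28 + 0.2 * (b - 0.91)
    return 3.67 * pm_nominal ** exponent * sced_percent / 100.0


def estimate_range(estimate, stage):
    """Return the (optimistic, pessimistic) bounds around a point estimate."""
    low, high = RANGE_FACTORS[stage]
    return estimate * low, estimate * high


# Hypothetical project: 100 person-months, scaling exponent B = 1.10.
tdev = schedule_months(100, b=1.10)
print(round(tdev, 1), estimate_range(tdev, "Early Design"))
```

The ranges narrow from a factor of 2 at Application Composition to a factor of 1.25 at Post-Architecture, which matches the slide's point that uncertainty in the model inputs shrinks as the project is better defined.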

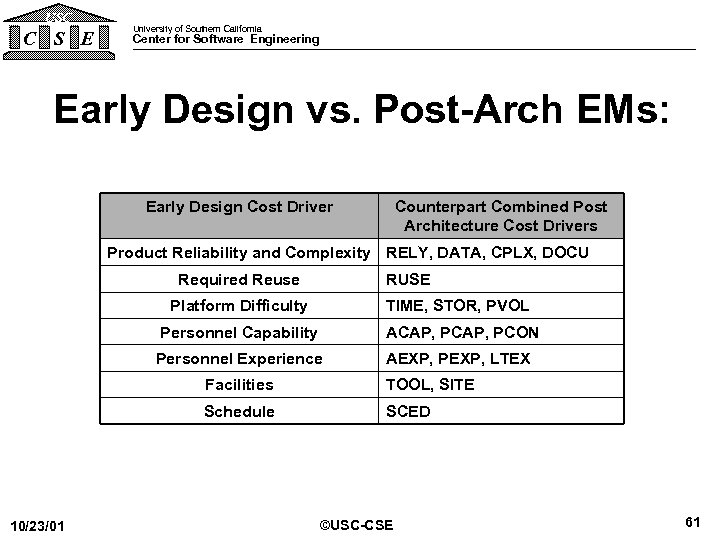

Slide 61: Early Design vs. Post-Arch EMs

| Early Design Cost Driver | Counterpart Combined Post-Architecture Cost Drivers |
|---|---|
| Product Reliability and Complexity | RELY, DATA, CPLX, DOCU |
| Required Reuse | RUSE |
| Platform Difficulty | TIME, STOR, PVOL |
| Personnel Capability | ACAP, PCON |
| Personnel Experience | AEXP, PEXP, LTEX |
| Facilities | TOOL, SITE |
| Schedule | SCED |

Slide 62: COCOTS Backup Charts

- Development and Life Cycle Models
- Research Highlights Since ARR 2000
- Data Highlights
- New Glue Code Submodel Results
- Next Steps
- Benefits

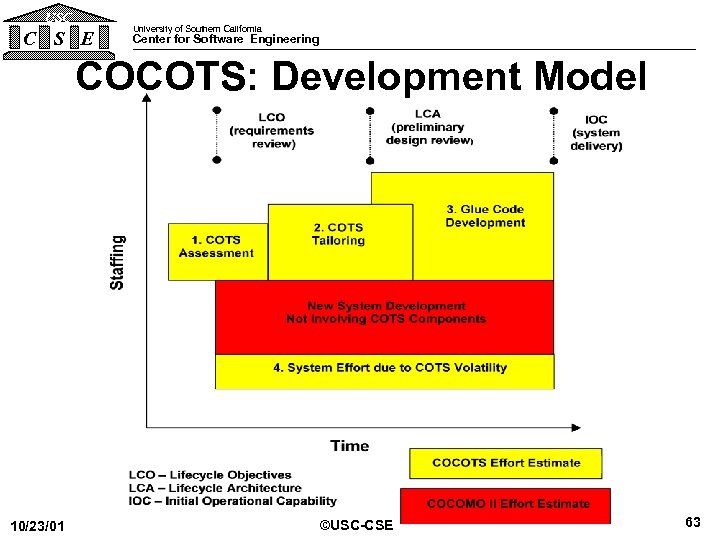

Slide 63: COCOTS: Development Model

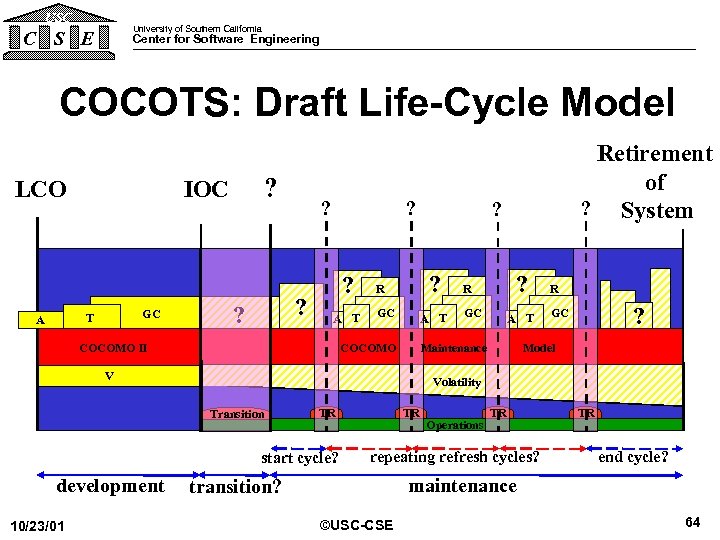

Slide 64: COCOTS: Draft Life-Cycle Model

[Figure: draft life-cycle diagram. Labels that survive extraction: LCO, IOC, GC, T, A, V, R, TR, COCOMO II, Model, Volatility, Transition, development, Maintenance, Operations, Retirement of System. Open questions marked on the diagram: "start cycle?", "repeating refresh cycles?", "end cycle?", "maintenance transition?"]

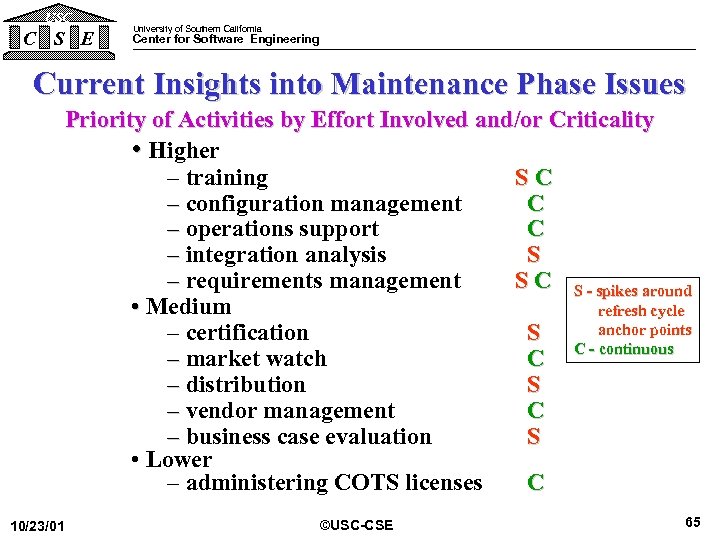

Slide 65: Current Insights into Maintenance Phase Issues

Priority of Activities by Effort Involved and/or Criticality

Legend: S = spikes around refresh cycle anchor points; C = continuous

- Higher
  - training (S, C)
  - configuration management (C)
  - operations support (C)
  - integration analysis (S)
  - requirements management (S, C)
- Medium
  - certification (S)
  - market watch (C)
  - distribution (S)
  - vendor management (C)
  - business case evaluation (S)
- Lower
  - administering COTS licenses (C)

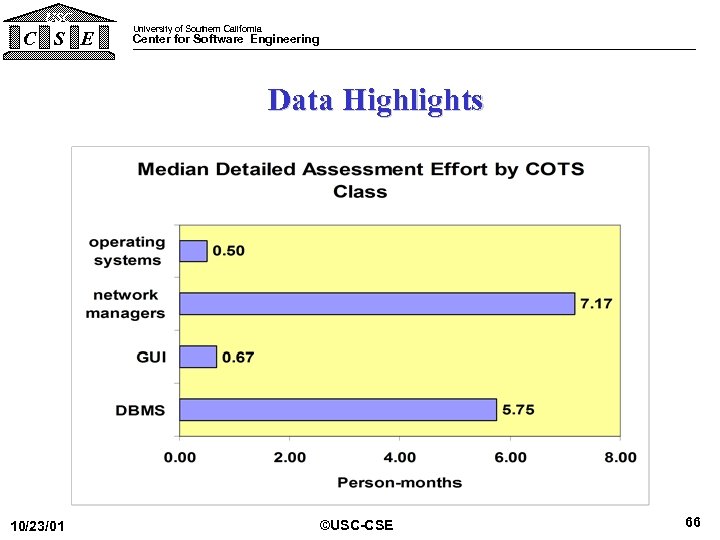

Slide 66: Data Highlights

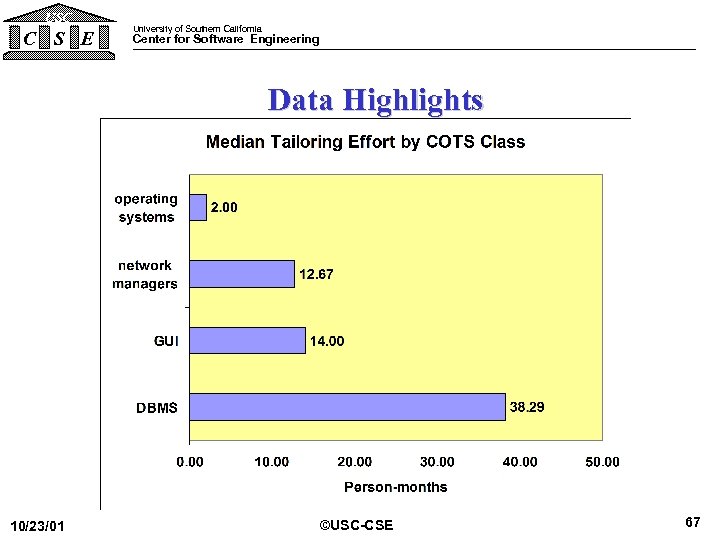

Slide 67: Data Highlights

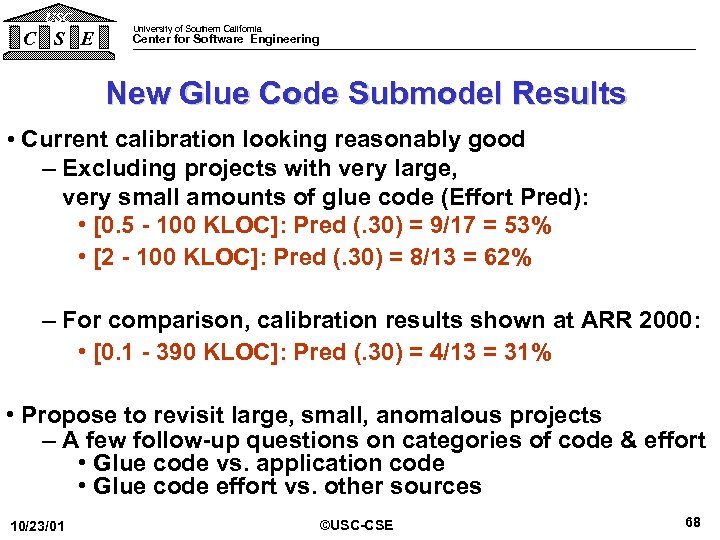

Slide 68: New Glue Code Submodel Results

- Current calibration looking reasonably good
  - Excluding projects with very large, very small amounts of glue code (Effort Pred):
    - [0.5 - 100 KLOC]: Pred(.30) = 9/17 = 53%
    - [2 - 100 KLOC]: Pred(.30) = 8/13 = 62%
  - For comparison, calibration results shown at ARR 2000:
    - [0.1 - 390 KLOC]: Pred(.30) = 4/13 = 31%
- Propose to revisit large, small, anomalous projects
  - A few follow-up questions on categories of code & effort
    - Glue code vs. application code
    - Glue code effort vs. other sources
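The Pred(.30) figures on this slide are hit rates: the share of projects whose estimated effort falls within 30% of the actual effort. A minimal sketch of that calculation follows, assuming the usual relative-error definition |estimate - actual| / actual. The function name `pred` and the four sample projects are hypothetical and are not the COCOTS calibration data.

```python
def pred(actuals, estimates, level=0.30):
    """Fraction of estimates whose relative error is within `level`."""
    if len(actuals) != len(estimates) or not actuals:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(
        1
        for actual, estimate in zip(actuals, estimates)
        if abs(estimate - actual) / actual <= level
    )
    return hits / len(actuals)


# Hypothetical effort data in person-months (not the slide's data set).
actual_effort = [100, 200, 50, 80]
estimated_effort = [120, 300, 45, 81]
print(pred(actual_effort, estimated_effort))  # 3 of 4 within 30%

# The slide's own ratios, expressed as rounded percentages.
print(round(9 / 17 * 100), round(8 / 13 * 100), round(4 / 13 * 100))
```

Because the denominators are small (13 and 17 projects), a single project moving in or out of the 30% band shifts the percentage by six to eight points, which is worth keeping in mind when comparing the calibrations.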

Slide 69: Benefits

- Existing
  - Independent source of estimates
  - Checklist for effort sources
  - (Fairly) easy-to-use development phase tool
- On the Horizon
  - Empirically supported, tightly calibrated, total lifecycle COTS estimation tool

Slide 70: COQUALMO Backup Charts

- Current COQUALMO system
- Defect removal rating scales
- Defect removal estimates
- Multiplicative defect removal model
- Orthogonal Defect Classification (ODC) extensions

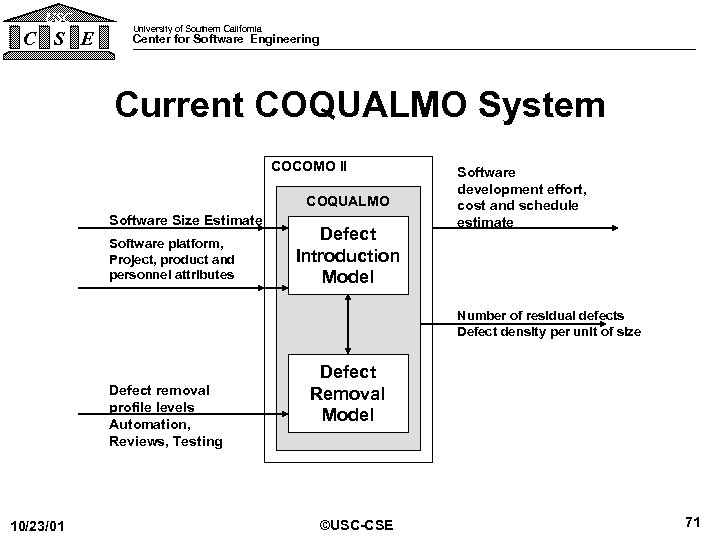

Slide 71: Current COQUALMO System

[Figure: block diagram of COCOMO II and COQUALMO]

- Inputs
  - Software Size Estimate
  - Software platform, project, product and personnel attributes
  - Defect removal profile levels: Automation, Reviews, Testing
- COCOMO II output
  - Software development effort, cost and schedule estimate
- COQUALMO (Defect Introduction Model, Defect Removal Model) outputs
  - Number of residual defects
  - Defect density per unit of size

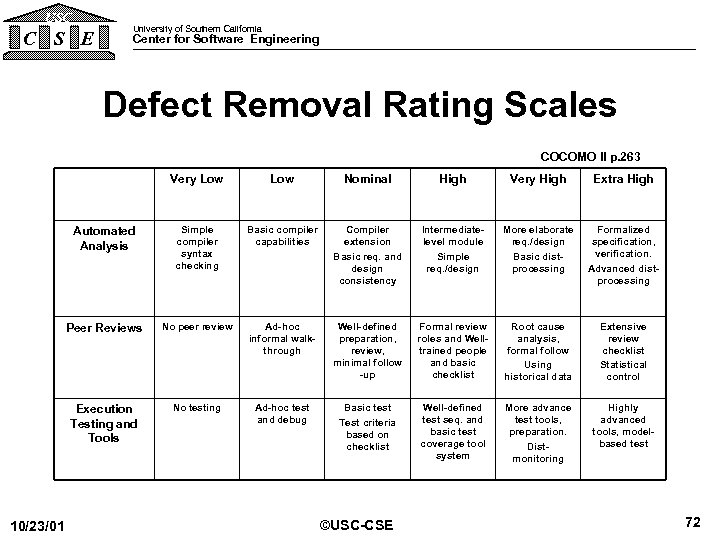

Slide 72: Defect Removal Rating Scales (COCOMO II p. 263)

| Rating | Automated Analysis | Peer Reviews | Execution Testing and Tools |
|---|---|---|---|
| Very Low | Simple compiler syntax checking | No peer review | No testing |
| Low | Basic compiler capabilities | Ad-hoc informal walkthrough | Ad-hoc test and debug |
| Nominal | Compiler extension; basic req. and design consistency | Well-defined preparation, review, minimal follow-up | Basic test; test criteria based on checklist |
| High | Intermediate-level module; simple req./design | Formal review roles and well-trained people and basic checklist | Well-defined test seq. and basic test coverage tool system |
| Very High | More elaborate req./design; basic dist-processing | Root cause analysis, formal follow-up; using historical data | More advanced test tools, preparation; dist-monitoring |
| Extra High | Formalized specification, verification; advanced dist-processing | Extensive review checklist; statistical control | Highly advanced tools, model-based test |

Slide 73: Defect Removal Estimates - Nominal Defect Introduction Rates

[Chart: Delivered Defects / KSLOC versus Composite Defect Removal Rating]

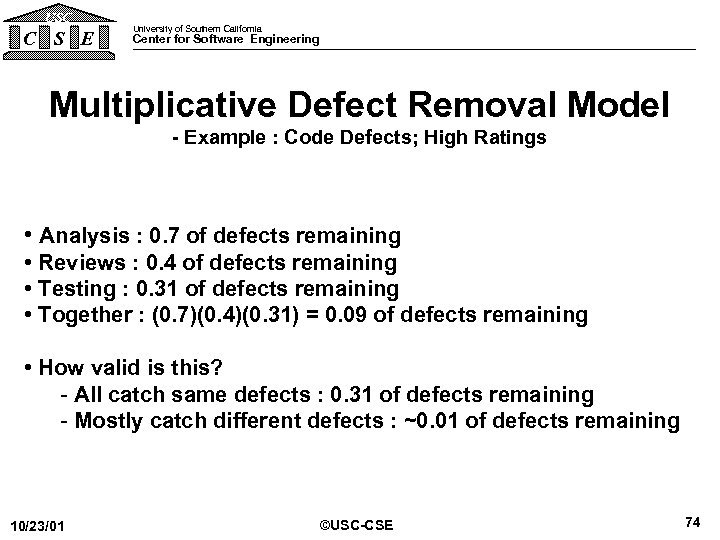

Slide 74: Multiplicative Defect Removal Model

- Example: Code Defects; High Ratings
  - Analysis: 0.7 of defects remaining
  - Reviews: 0.4 of defects remaining
  - Testing: 0.31 of defects remaining
  - Together: (0.7)(0.4)(0.31) = 0.09 of defects remaining
- How valid is this?
  - All catch same defects: 0.31 of defects remaining
  - Mostly catch different defects: ~0.01 of defects remaining
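The arithmetic behind this slide can be checked with a short Python sketch. The multiplicative model treats the three removal activities as independent, so the remaining fractions multiply. The two bounding cases are idealizations added here for illustration: complete overlap (every activity finds the same defects) and complete disjointness (no defect is found twice). The function names are hypothetical.

```python
from math import prod


def remaining_independent(fractions):
    """Multiplicative model: activities remove defects independently."""
    return prod(fractions)


def remaining_full_overlap(fractions):
    """All activities catch the same defects: only the best one counts."""
    return min(fractions)


def remaining_disjoint(fractions):
    """Idealized case: no defect is caught twice, so removals simply add."""
    removed = sum(1.0 - f for f in fractions)
    return max(0.0, 1.0 - removed)


# Fractions of code defects remaining at High ratings, from the slide.
high = [0.7, 0.4, 0.31]  # analysis, reviews, testing
print(round(remaining_independent(high), 2))  # multiplicative estimate
print(remaining_full_overlap(high))           # pessimistic bound
print(remaining_disjoint(high))               # optimistic bound
```

The multiplicative estimate of about 0.09 sits between the overlap bound of 0.31 and the disjoint bound of 0, which is consistent with the slide's figure of roughly 0.01 when the activities mostly, but not entirely, catch different defects.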

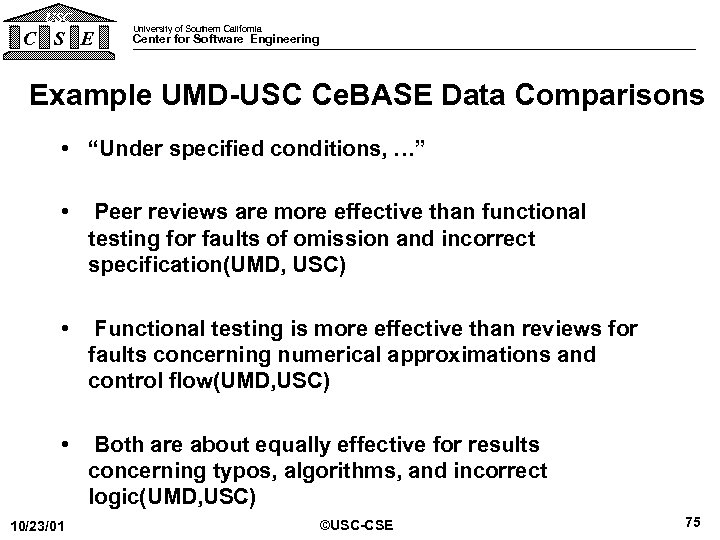

Slide 75: Example UMD-USC CeBASE Data Comparisons

- "Under specified conditions, …"
- Peer reviews are more effective than functional testing for faults of omission and incorrect specification (UMD, USC)
- Functional testing is more effective than reviews for faults concerning numerical approximations and control flow (UMD, USC)
- Both are about equally effective for results concerning typos, algorithms, and incorrect logic (UMD, USC)

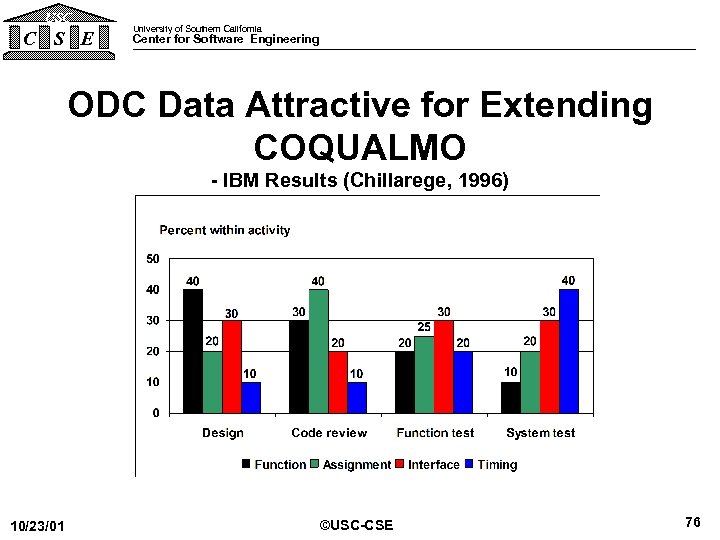

Slide 76: ODC Data Attractive for Extending COQUALMO - IBM Results (Chillarege, 1996)

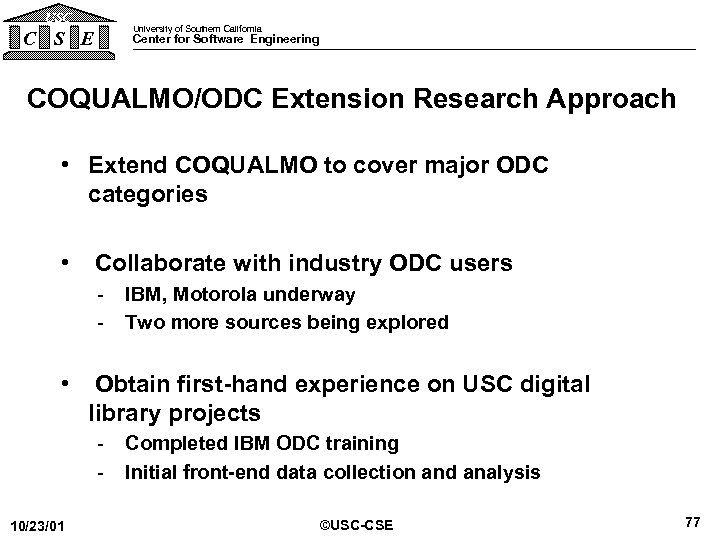

Slide 77: COQUALMO/ODC Extension Research Approach

- Extend COQUALMO to cover major ODC categories
- Collaborate with industry ODC users
  - IBM, Motorola underway
  - Two more sources being explored
- Obtain first-hand experience on USC digital library projects
  - Completed IBM ODC training
  - Initial front-end data collection and analysis

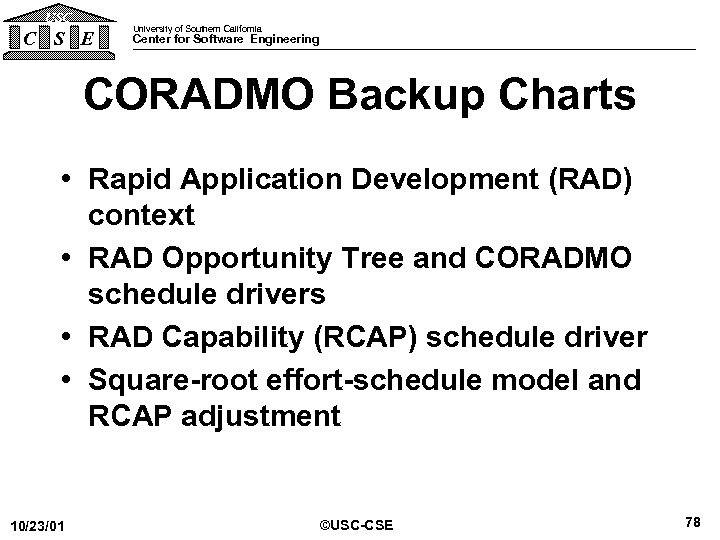

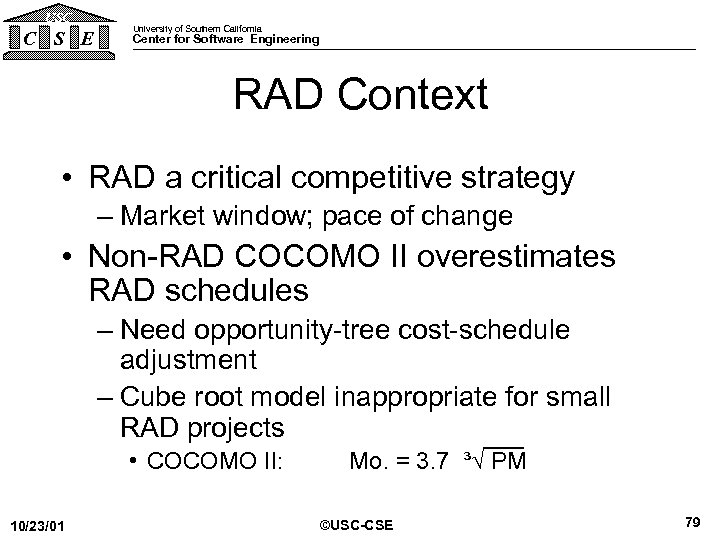

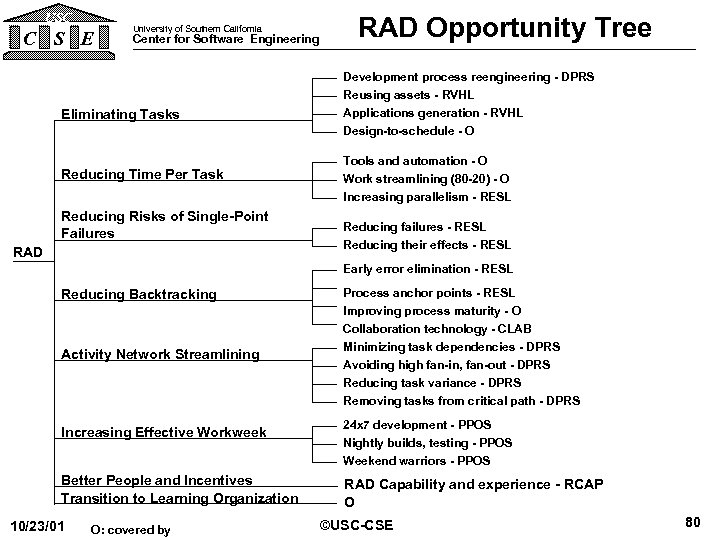

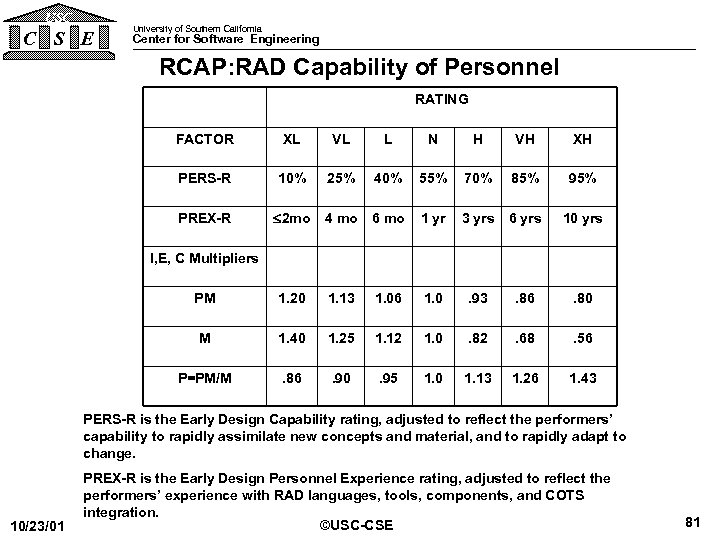

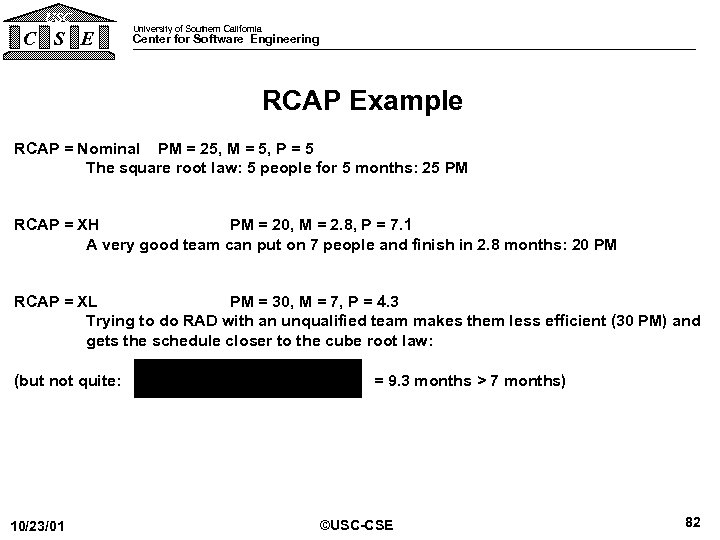

Slide 78: CORADMO Backup Charts

- Rapid Application Development (RAD) context
- RAD Opportunity Tree and CORADMO schedule drivers
- RAD Capability (RCAP) schedule driver
- Square-root effort-schedule model and RCAP adjustment
