6cb74fb40e5e1c9ed0e365c590f37367.ppt

- Количество слайдов: 81

UNIVERSITY OF VIRGINIA Massively Parallel Graphics Processors in a Multicore, Power-Limited Era Kevin Skadron University of Virginia Dept. of Computer Science LAVA Lab © Kevin Skadron, 2008 and NVIDIA Research

UNIVERSITY OF VIRGINIA Massively Parallel Graphics Processors in a Multicore, Power-Limited Era Kevin Skadron University of Virginia Dept. of Computer Science LAVA Lab © Kevin Skadron, 2008 and NVIDIA Research

UNIVERSITY OF VIRGINIA Outline of Overall Talk Why multicore? How did we get into this jam? What next? How do we get out of this jam? Are heterogeneous architectures the answer? © Kevin Skadron, 2008 What is the role of graphics processors (GPUs)? Role in system architecture Architecture and programming overview (2 nd half of talk) 2

UNIVERSITY OF VIRGINIA Outline of Overall Talk Why multicore? How did we get into this jam? What next? How do we get out of this jam? Are heterogeneous architectures the answer? © Kevin Skadron, 2008 What is the role of graphics processors (GPUs)? Role in system architecture Architecture and programming overview (2 nd half of talk) 2

UNIVERSITY OF VIRGINIA Disclaimer © Kevin Skadron, 2008 The opinions here are my own as a computer engineer. They represent my interpretation of technology trends and associated opportunities. They do not in any way represent positions or plans of University of Virginia or NVIDIA. 3

UNIVERSITY OF VIRGINIA Disclaimer © Kevin Skadron, 2008 The opinions here are my own as a computer engineer. They represent my interpretation of technology trends and associated opportunities. They do not in any way represent positions or plans of University of Virginia or NVIDIA. 3

UNIVERSITY OF VIRGINIA Why Multicore? How did we get here? Combination of both “ILP wall” and “power wall” ILP wall: wider superscalar & more aggressive OO execution diminishing returns Boost single-thread performance boost frequency Power wall: boosting frequency to keep up with Moore’s Law (2 X per generation) is expensive Natural frequency growth with technology scaling is only ~20 -30% per generation – Don’t need expensive microarchitectures just for this © Kevin Skadron, 2008 Faster frequency growth requires – Aggressive circuits (expensive) – Very deep pipeline – 30+ stages? (expensive) – Power-saving techniques weren’t able to compensate No longer worth the Si, cooling costs, or battery life 4

UNIVERSITY OF VIRGINIA Why Multicore? How did we get here? Combination of both “ILP wall” and “power wall” ILP wall: wider superscalar & more aggressive OO execution diminishing returns Boost single-thread performance boost frequency Power wall: boosting frequency to keep up with Moore’s Law (2 X per generation) is expensive Natural frequency growth with technology scaling is only ~20 -30% per generation – Don’t need expensive microarchitectures just for this © Kevin Skadron, 2008 Faster frequency growth requires – Aggressive circuits (expensive) – Very deep pipeline – 30+ stages? (expensive) – Power-saving techniques weren’t able to compensate No longer worth the Si, cooling costs, or battery life 4

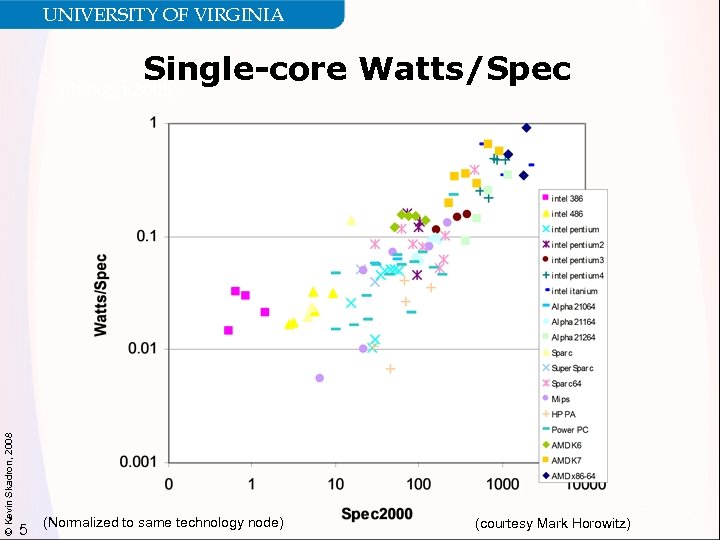

UNIVERSITY OF VIRGINIA Single-core Watts/Spec © Kevin Skadron, 2008 (through 2005) 5 (Normalized to same technology node) (courtesy Mark Horowitz)

UNIVERSITY OF VIRGINIA Single-core Watts/Spec © Kevin Skadron, 2008 (through 2005) 5 (Normalized to same technology node) (courtesy Mark Horowitz)

UNIVERSITY OF VIRGINIA The Multi-core Revolution Can’t make a single core much faster But need to maintain profit margins More and more cache diminishing returns “New” Moore’s Law Same core is 2 X smaller per generation, can double # cores Focus on throughput © Kevin Skadron, 2008 Can use smaller, lower-power cores (even inorder issue) Make cores multi-threaded Trade single-thread performance for Better throughput Lower power density 6 Maybe keep one aggressive core so we don’t make single-thread performance worse

UNIVERSITY OF VIRGINIA The Multi-core Revolution Can’t make a single core much faster But need to maintain profit margins More and more cache diminishing returns “New” Moore’s Law Same core is 2 X smaller per generation, can double # cores Focus on throughput © Kevin Skadron, 2008 Can use smaller, lower-power cores (even inorder issue) Make cores multi-threaded Trade single-thread performance for Better throughput Lower power density 6 Maybe keep one aggressive core so we don’t make single-thread performance worse

UNIVERSITY OF VIRGINIA Can Parallelism Succeed? Parallel computing never took off as a commodity Expensive and rare despite decades of investments What’s different this time? © Kevin Skadron, 2008 Need – power wall! Opportunity – commodity hardware is out there now in hundreds of millions of PCs and servers x 86 multicores – 6 -way multicore has been announced GPUs – 100+ multicore! This breaks the chicken-and-egg problem – Parallel language and software creators no longer need to wait for parallel hadware 7

UNIVERSITY OF VIRGINIA Can Parallelism Succeed? Parallel computing never took off as a commodity Expensive and rare despite decades of investments What’s different this time? © Kevin Skadron, 2008 Need – power wall! Opportunity – commodity hardware is out there now in hundreds of millions of PCs and servers x 86 multicores – 6 -way multicore has been announced GPUs – 100+ multicore! This breaks the chicken-and-egg problem – Parallel language and software creators no longer need to wait for parallel hadware 7

UNIVERSITY OF VIRGINIA What To Do With All Those Cores? PC workloads have limited number of independent tasks Parallel programming is hard, isn’t it? Well, at least we solved the power wall © Kevin Skadron, 2008 …or did we? 8

UNIVERSITY OF VIRGINIA What To Do With All Those Cores? PC workloads have limited number of independent tasks Parallel programming is hard, isn’t it? Well, at least we solved the power wall © Kevin Skadron, 2008 …or did we? 8

UNIVERSITY OF VIRGINIA Moore’s Law and Dennard Scaling Moore’s Law: transistor density doubles every N years (currently N ~ 2) Dennard Scaling (constant electric field) Shrink feature size by k (typ. 0. 7), hold electric field constant Area scales by k 2 (1/2) , C, V, delay reduce by k © Kevin Skadron, 2008 P CV 2 f P goes down by k 2 9

UNIVERSITY OF VIRGINIA Moore’s Law and Dennard Scaling Moore’s Law: transistor density doubles every N years (currently N ~ 2) Dennard Scaling (constant electric field) Shrink feature size by k (typ. 0. 7), hold electric field constant Area scales by k 2 (1/2) , C, V, delay reduce by k © Kevin Skadron, 2008 P CV 2 f P goes down by k 2 9

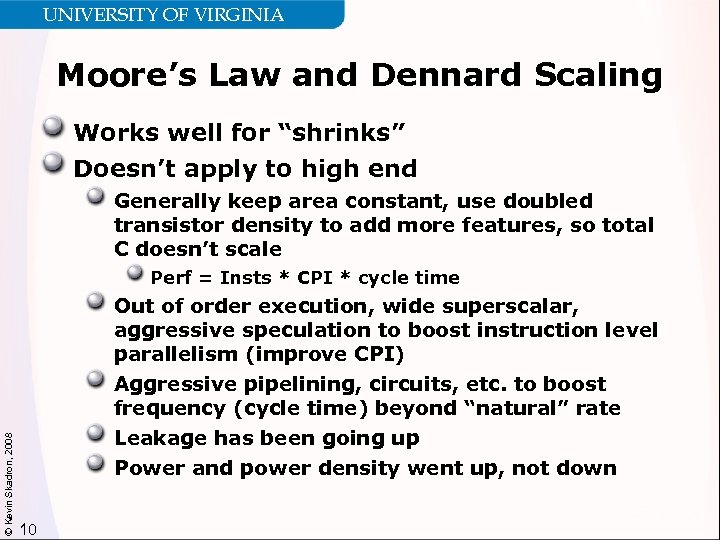

UNIVERSITY OF VIRGINIA Moore’s Law and Dennard Scaling Works well for “shrinks” Doesn’t apply to high end Generally keep area constant, use doubled transistor density to add more features, so total C doesn’t scale © Kevin Skadron, 2008 Perf = Insts * CPI * cycle time Out of order execution, wide superscalar, aggressive speculation to boost instruction level parallelism (improve CPI) Aggressive pipelining, circuits, etc. to boost frequency (cycle time) beyond “natural” rate Leakage has been going up Power and power density went up, not down 10

UNIVERSITY OF VIRGINIA Moore’s Law and Dennard Scaling Works well for “shrinks” Doesn’t apply to high end Generally keep area constant, use doubled transistor density to add more features, so total C doesn’t scale © Kevin Skadron, 2008 Perf = Insts * CPI * cycle time Out of order execution, wide superscalar, aggressive speculation to boost instruction level parallelism (improve CPI) Aggressive pipelining, circuits, etc. to boost frequency (cycle time) beyond “natural” rate Leakage has been going up Power and power density went up, not down 10

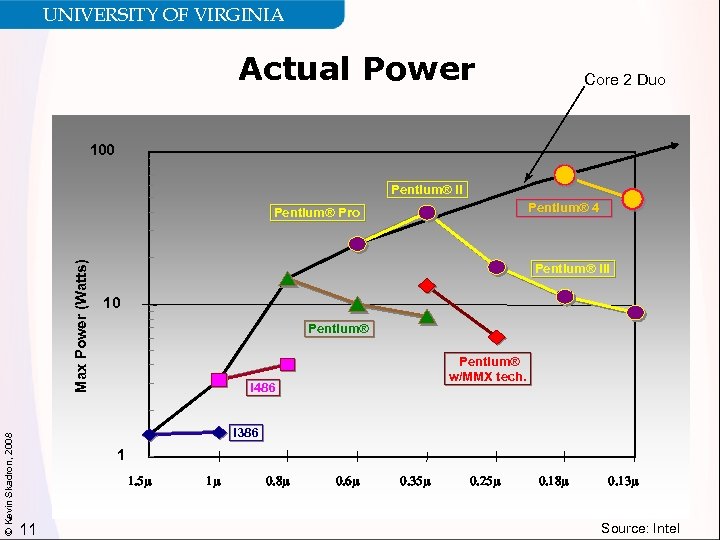

UNIVERSITY OF VIRGINIA Actual Power Core 2 Duo 100 Pentium® II Pentium® 4 © Kevin Skadron, 2008 Max Power (Watts) Pentium® Pro Pentium® III 10 Pentium® w/MMX tech. i 486 i 386 1 1. 5 m 11 1 m 0. 8 m 0. 6 m 0. 35 m 0. 25 m 0. 18 m 0. 13 m Source: Intel

UNIVERSITY OF VIRGINIA Actual Power Core 2 Duo 100 Pentium® II Pentium® 4 © Kevin Skadron, 2008 Max Power (Watts) Pentium® Pro Pentium® III 10 Pentium® w/MMX tech. i 486 i 386 1 1. 5 m 11 1 m 0. 8 m 0. 6 m 0. 35 m 0. 25 m 0. 18 m 0. 13 m Source: Intel

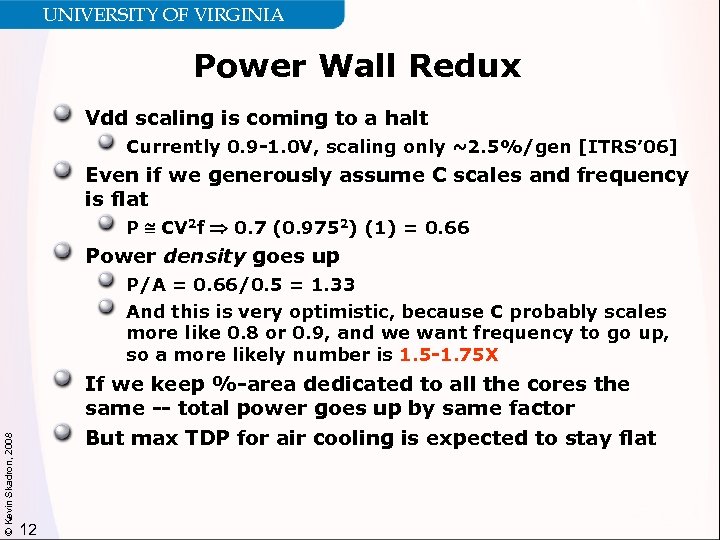

UNIVERSITY OF VIRGINIA Power Wall Redux Vdd scaling is coming to a halt Currently 0. 9 -1. 0 V, scaling only ~2. 5%/gen [ITRS’ 06] Even if we generously assume C scales and frequency is flat P CV 2 f 0. 7 (0. 9752) (1) = 0. 66 Power density goes up © Kevin Skadron, 2008 P/A = 0. 66/0. 5 = 1. 33 And this is very optimistic, because C probably scales more like 0. 8 or 0. 9, and we want frequency to go up, so a more likely number is 1. 5 -1. 75 X If we keep %-area dedicated to all the cores the same -- total power goes up by same factor But max TDP for air cooling is expected to stay flat 12

UNIVERSITY OF VIRGINIA Power Wall Redux Vdd scaling is coming to a halt Currently 0. 9 -1. 0 V, scaling only ~2. 5%/gen [ITRS’ 06] Even if we generously assume C scales and frequency is flat P CV 2 f 0. 7 (0. 9752) (1) = 0. 66 Power density goes up © Kevin Skadron, 2008 P/A = 0. 66/0. 5 = 1. 33 And this is very optimistic, because C probably scales more like 0. 8 or 0. 9, and we want frequency to go up, so a more likely number is 1. 5 -1. 75 X If we keep %-area dedicated to all the cores the same -- total power goes up by same factor But max TDP for air cooling is expected to stay flat 12

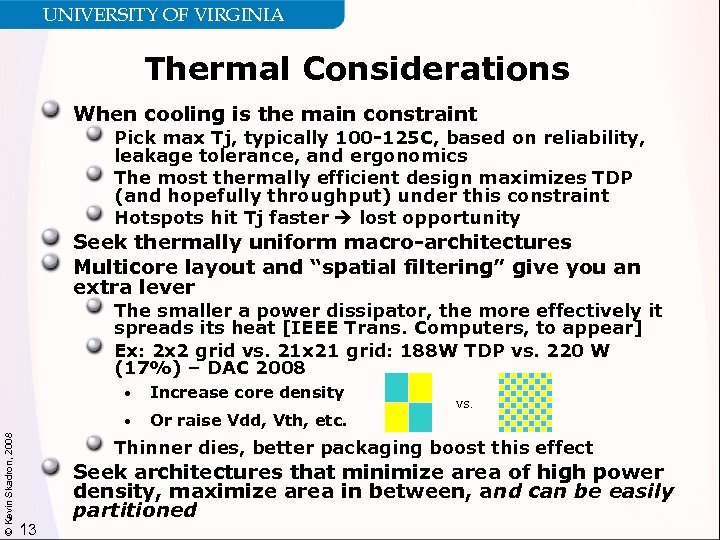

UNIVERSITY OF VIRGINIA Thermal Considerations When cooling is the main constraint Pick max Tj, typically 100 -125 C, based on reliability, leakage tolerance, and ergonomics The most thermally efficient design maximizes TDP (and hopefully throughput) under this constraint Hotspots hit Tj faster lost opportunity Seek thermally uniform macro-architectures Multicore layout and “spatial filtering” give you an extra lever The smaller a power dissipator, the more effectively it spreads its heat [IEEE Trans. Computers, to appear] Ex: 2 x 2 grid vs. 21 x 21 grid: 188 W TDP vs. 220 W (17%) – DAC 2008 Increase core density • © Kevin Skadron, 2008 • Or raise Vdd, Vth, etc. vs. Thinner dies, better packaging boost this effect 13 Seek architectures that minimize area of high power density, maximize area in between, and can be easily partitioned

UNIVERSITY OF VIRGINIA Thermal Considerations When cooling is the main constraint Pick max Tj, typically 100 -125 C, based on reliability, leakage tolerance, and ergonomics The most thermally efficient design maximizes TDP (and hopefully throughput) under this constraint Hotspots hit Tj faster lost opportunity Seek thermally uniform macro-architectures Multicore layout and “spatial filtering” give you an extra lever The smaller a power dissipator, the more effectively it spreads its heat [IEEE Trans. Computers, to appear] Ex: 2 x 2 grid vs. 21 x 21 grid: 188 W TDP vs. 220 W (17%) – DAC 2008 Increase core density • © Kevin Skadron, 2008 • Or raise Vdd, Vth, etc. vs. Thinner dies, better packaging boost this effect 13 Seek architectures that minimize area of high power density, maximize area in between, and can be easily partitioned

UNIVERSITY OF VIRGINIA Where We are Today - Multicore © Kevin Skadron, 2008 Programmability wall 14 Power wall Classic architectures http: //interactive. usc. edu/classes/ctin 542 -designprod/archives/r 2 d 2 -01. jpg

UNIVERSITY OF VIRGINIA Where We are Today - Multicore © Kevin Skadron, 2008 Programmability wall 14 Power wall Classic architectures http: //interactive. usc. edu/classes/ctin 542 -designprod/archives/r 2 d 2 -01. jpg

UNIVERSITY OF VIRGINIA Outline of Overall Talk Why multicore? How did we get into this jam? What next? How do we get out of this jam? Are heterogeneous architectures the answer? © Kevin Skadron, 2008 What is the role of graphics processors (GPUs)? Role in system architecture Architecture and programming overview 15

UNIVERSITY OF VIRGINIA Outline of Overall Talk Why multicore? How did we get into this jam? What next? How do we get out of this jam? Are heterogeneous architectures the answer? © Kevin Skadron, 2008 What is the role of graphics processors (GPUs)? Role in system architecture Architecture and programming overview 15

UNIVERSITY OF VIRGINIA © Kevin Skadron, 2008 Low-Fat Cores 16 PClaes Oldenburg, Apple Core – Autumn http: //www. greenwicharts. org/pastshows. asp

UNIVERSITY OF VIRGINIA © Kevin Skadron, 2008 Low-Fat Cores 16 PClaes Oldenburg, Apple Core – Autumn http: //www. greenwicharts. org/pastshows. asp

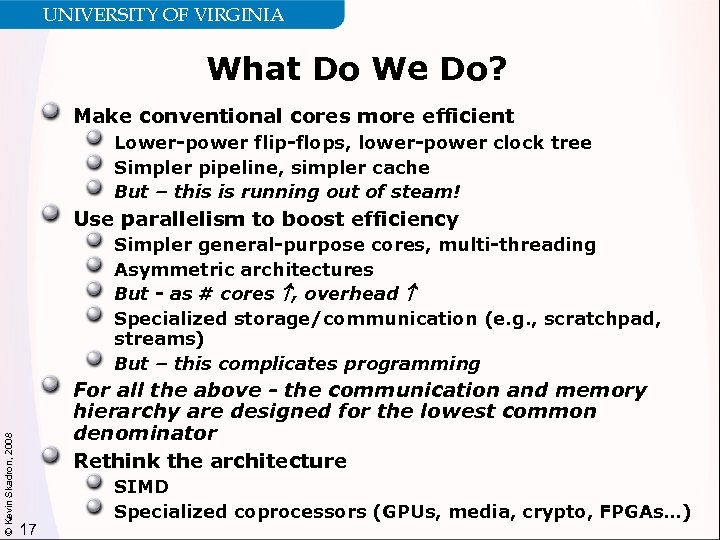

UNIVERSITY OF VIRGINIA What Do We Do? Make conventional cores more efficient Lower-power flip-flops, lower-power clock tree Simpler pipeline, simpler cache But – this is running out of steam! Use parallelism to boost efficiency © Kevin Skadron, 2008 Simpler general-purpose cores, multi-threading Asymmetric architectures But - as # cores , overhead Specialized storage/communication (e. g. , scratchpad, streams) But – this complicates programming For all the above - the communication and memory hierarchy are designed for the lowest common denominator Rethink the architecture 17 SIMD Specialized coprocessors (GPUs, media, crypto, FPGAs…)

UNIVERSITY OF VIRGINIA What Do We Do? Make conventional cores more efficient Lower-power flip-flops, lower-power clock tree Simpler pipeline, simpler cache But – this is running out of steam! Use parallelism to boost efficiency © Kevin Skadron, 2008 Simpler general-purpose cores, multi-threading Asymmetric architectures But - as # cores , overhead Specialized storage/communication (e. g. , scratchpad, streams) But – this complicates programming For all the above - the communication and memory hierarchy are designed for the lowest common denominator Rethink the architecture 17 SIMD Specialized coprocessors (GPUs, media, crypto, FPGAs…)

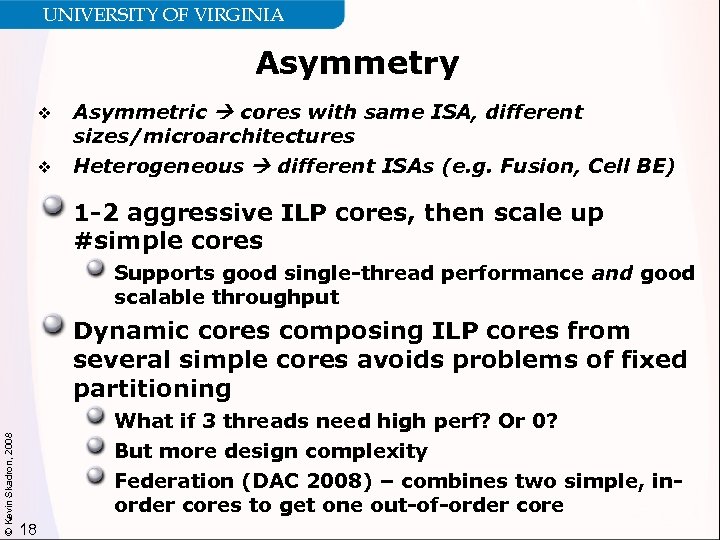

UNIVERSITY OF VIRGINIA Asymmetry v v Asymmetric cores with same ISA, different sizes/microarchitectures Heterogeneous different ISAs (e. g. Fusion, Cell BE) 1 -2 aggressive ILP cores, then scale up #simple cores Supports good single-thread performance and good scalable throughput © Kevin Skadron, 2008 Dynamic cores composing ILP cores from several simple cores avoids problems of fixed partitioning What if 3 threads need high perf? Or 0? But more design complexity Federation (DAC 2008) – combines two simple, inorder cores to get one out-of-order core 18

UNIVERSITY OF VIRGINIA Asymmetry v v Asymmetric cores with same ISA, different sizes/microarchitectures Heterogeneous different ISAs (e. g. Fusion, Cell BE) 1 -2 aggressive ILP cores, then scale up #simple cores Supports good single-thread performance and good scalable throughput © Kevin Skadron, 2008 Dynamic cores composing ILP cores from several simple cores avoids problems of fixed partitioning What if 3 threads need high perf? Or 0? But more design complexity Federation (DAC 2008) – combines two simple, inorder cores to get one out-of-order core 18

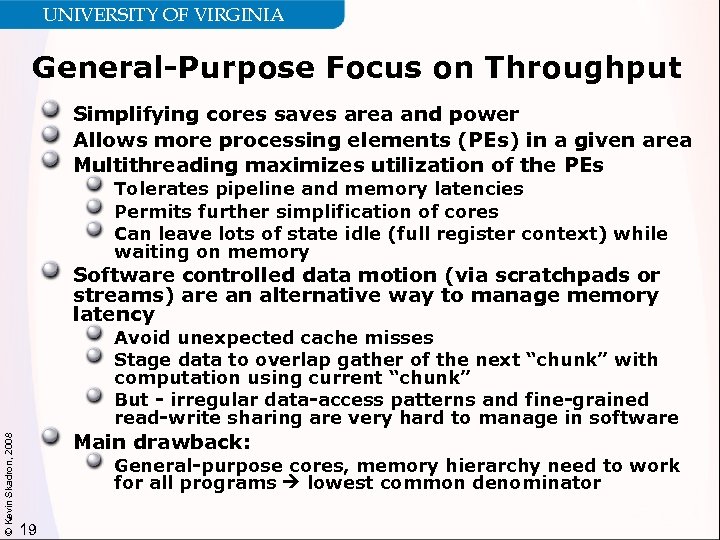

UNIVERSITY OF VIRGINIA General-Purpose Focus on Throughput Simplifying cores saves area and power Allows more processing elements (PEs) in a given area Multithreading maximizes utilization of the PEs Tolerates pipeline and memory latencies Permits further simplification of cores Can leave lots of state idle (full register context) while waiting on memory Software controlled data motion (via scratchpads or streams) are an alternative way to manage memory latency © Kevin Skadron, 2008 Avoid unexpected cache misses Stage data to overlap gather of the next “chunk” with computation using current “chunk” But - irregular data-access patterns and fine-grained read-write sharing are very hard to manage in software Main drawback: General-purpose cores, memory hierarchy need to work for all programs lowest common denominator 19

UNIVERSITY OF VIRGINIA General-Purpose Focus on Throughput Simplifying cores saves area and power Allows more processing elements (PEs) in a given area Multithreading maximizes utilization of the PEs Tolerates pipeline and memory latencies Permits further simplification of cores Can leave lots of state idle (full register context) while waiting on memory Software controlled data motion (via scratchpads or streams) are an alternative way to manage memory latency © Kevin Skadron, 2008 Avoid unexpected cache misses Stage data to overlap gather of the next “chunk” with computation using current “chunk” But - irregular data-access patterns and fine-grained read-write sharing are very hard to manage in software Main drawback: General-purpose cores, memory hierarchy need to work for all programs lowest common denominator 19

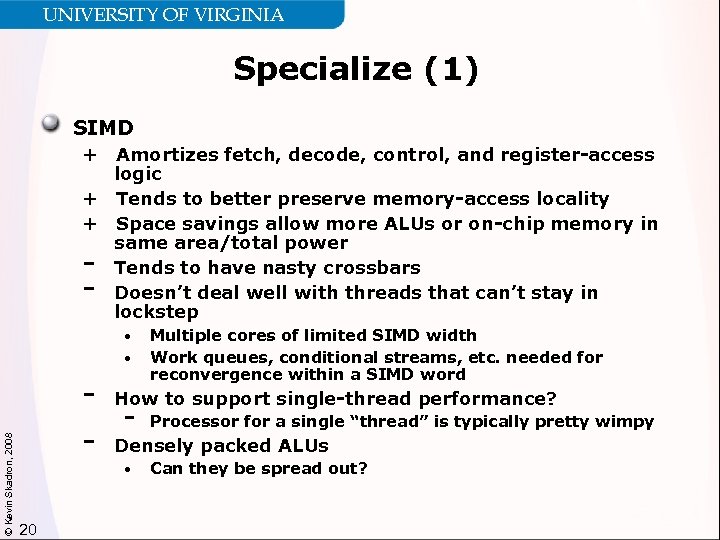

UNIVERSITY OF VIRGINIA Specialize (1) SIMD + Amortizes fetch, decode, control, and register-access logic + Tends to better preserve memory-access locality + Space savings allow more ALUs or on-chip memory in same area/total power Tends to have nasty crossbars Doesn’t deal well with threads that can’t stay in lockstep - © Kevin Skadron, 2008 • • Multiple cores of limited SIMD width Work queues, conditional streams, etc. needed for reconvergence within a SIMD word - How to support single-thread performance? - Processor for a single “thread” is typically pretty wimpy - Densely packed ALUs • 20 Can they be spread out?

UNIVERSITY OF VIRGINIA Specialize (1) SIMD + Amortizes fetch, decode, control, and register-access logic + Tends to better preserve memory-access locality + Space savings allow more ALUs or on-chip memory in same area/total power Tends to have nasty crossbars Doesn’t deal well with threads that can’t stay in lockstep - © Kevin Skadron, 2008 • • Multiple cores of limited SIMD width Work queues, conditional streams, etc. needed for reconvergence within a SIMD word - How to support single-thread performance? - Processor for a single “thread” is typically pretty wimpy - Densely packed ALUs • 20 Can they be spread out?

UNIVERSITY OF VIRGINIA Specialize (2) Is heterogeneity the answer? Specialized coprocessors trade generality for efficiency Datapaths, memory hierarchies tuned for certain types of code Graphics processors (GPUs) Network processors (NPUs) Media processors 10 -100 x speedups often possible May still be high-power cores But also high performance/watt © Kevin Skadron, 2008 Main drawbacks: 21 Cooling can still be a challenge Only suitable for certain types of algorithms How do you choose which coprocessors to include? Each has its own API—programming a collection of different coprocessors a potential nightmare

UNIVERSITY OF VIRGINIA Specialize (2) Is heterogeneity the answer? Specialized coprocessors trade generality for efficiency Datapaths, memory hierarchies tuned for certain types of code Graphics processors (GPUs) Network processors (NPUs) Media processors 10 -100 x speedups often possible May still be high-power cores But also high performance/watt © Kevin Skadron, 2008 Main drawbacks: 21 Cooling can still be a challenge Only suitable for certain types of algorithms How do you choose which coprocessors to include? Each has its own API—programming a collection of different coprocessors a potential nightmare

UNIVERSITY OF VIRGINIA Programming for Heterogeneity Need either Very wide applicability (GPUs, media processors) An architecture-specific API can still survive with enough market share Flexible programming model that applies to multiple types of coprocessors Flexible specification of parallelism Ability to use hardware-accelerated functions when available © Kevin Skadron, 2008 – Transcendentals, string matching, etc. We are going to need new, higher-level programming models anyway 22

UNIVERSITY OF VIRGINIA Programming for Heterogeneity Need either Very wide applicability (GPUs, media processors) An architecture-specific API can still survive with enough market share Flexible programming model that applies to multiple types of coprocessors Flexible specification of parallelism Ability to use hardware-accelerated functions when available © Kevin Skadron, 2008 – Transcendentals, string matching, etc. We are going to need new, higher-level programming models anyway 22

UNIVERSITY OF VIRGINIA High-Level Programming Models Claim: programmers who can do low-level parallel programming are an elite minority We will never train the “average programmer” to write highly parallel programs in C, Java, X 10, etc. Most people need to think about things in pieces And pieces need sequential semantics But it’s ok if the “pieces” are internally parallel Threaded programming models don’t easily support such decomposition © Kevin Skadron, 2008 Must develop APIs and libraries with higher-level abstractions Simplify average programmer’s task Allow advanced programmers to drill down We will need this regardless of what the underlying architecture is But it also buys us more flexibility in the architecture Hiding hardware details facilitates heterogeneity Best APIs may be domain-specific Direct. X, Open. GL are a good case study 23

UNIVERSITY OF VIRGINIA High-Level Programming Models Claim: programmers who can do low-level parallel programming are an elite minority We will never train the “average programmer” to write highly parallel programs in C, Java, X 10, etc. Most people need to think about things in pieces And pieces need sequential semantics But it’s ok if the “pieces” are internally parallel Threaded programming models don’t easily support such decomposition © Kevin Skadron, 2008 Must develop APIs and libraries with higher-level abstractions Simplify average programmer’s task Allow advanced programmers to drill down We will need this regardless of what the underlying architecture is But it also buys us more flexibility in the architecture Hiding hardware details facilitates heterogeneity Best APIs may be domain-specific Direct. X, Open. GL are a good case study 23

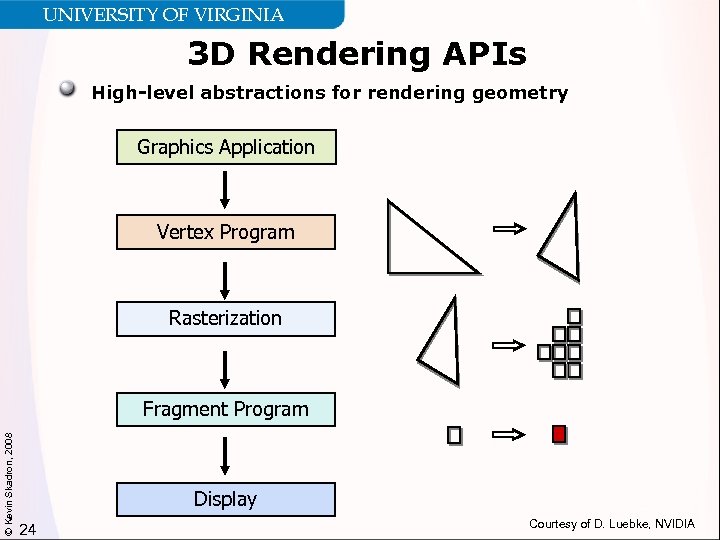

UNIVERSITY OF VIRGINIA 3 D Rendering APIs High-level abstractions for rendering geometry Graphics Application Vertex Program Rasterization © Kevin Skadron, 2008 Fragment Program Display 24 Courtesy of D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA 3 D Rendering APIs High-level abstractions for rendering geometry Graphics Application Vertex Program Rasterization © Kevin Skadron, 2008 Fragment Program Display 24 Courtesy of D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA 3 D Rendering APIs High-level abstractions for rendering geometry Serial ordering among primitives Implicit synchronization No guarantees about ordering within primitives © Kevin Skadron, 2008 Means no fine-grained synchronization Middleware translates to CPUs and various GPUs (NVIDIA, ATI, Intel) Domain-specific API is convenient for programmers and provides lots of semantic information to middleware: parallelism, load balancing, etc. Domain-specific API is convenient for hardware designers: same API supports radically different architectures across product generations and companies Similar arguments apply to Matlab, SQL, Map-Reduce, etc. These examples show abstractions might solve the programming challenges associated with both Highly parallel architectures Heterogeneous architectures 25

UNIVERSITY OF VIRGINIA 3 D Rendering APIs High-level abstractions for rendering geometry Serial ordering among primitives Implicit synchronization No guarantees about ordering within primitives © Kevin Skadron, 2008 Means no fine-grained synchronization Middleware translates to CPUs and various GPUs (NVIDIA, ATI, Intel) Domain-specific API is convenient for programmers and provides lots of semantic information to middleware: parallelism, load balancing, etc. Domain-specific API is convenient for hardware designers: same API supports radically different architectures across product generations and companies Similar arguments apply to Matlab, SQL, Map-Reduce, etc. These examples show abstractions might solve the programming challenges associated with both Highly parallel architectures Heterogeneous architectures 25

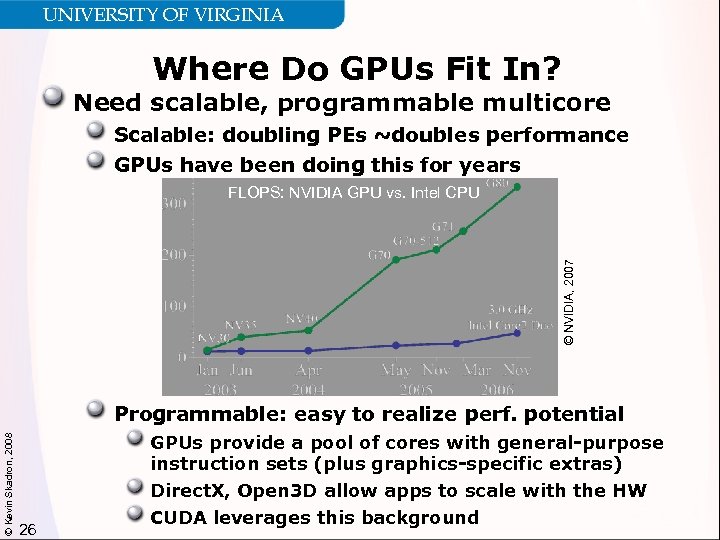

UNIVERSITY OF VIRGINIA Where Do GPUs Fit In? Need scalable, programmable multicore Scalable: doubling PEs ~doubles performance GPUs have been doing this for years © NVIDIA, 2007 FLOPS: NVIDIA GPU vs. Intel CPU © Kevin Skadron, 2008 Programmable: easy to realize perf. potential 26 GPUs provide a pool of cores with general-purpose instruction sets (plus graphics-specific extras) Direct. X, Open 3 D allow apps to scale with the HW CUDA leverages this background

UNIVERSITY OF VIRGINIA Where Do GPUs Fit In? Need scalable, programmable multicore Scalable: doubling PEs ~doubles performance GPUs have been doing this for years © NVIDIA, 2007 FLOPS: NVIDIA GPU vs. Intel CPU © Kevin Skadron, 2008 Programmable: easy to realize perf. potential 26 GPUs provide a pool of cores with general-purpose instruction sets (plus graphics-specific extras) Direct. X, Open 3 D allow apps to scale with the HW CUDA leverages this background

UNIVERSITY OF VIRGINIA Why is CUDA Important? (1) Mass market host platform Easy to buy and set up a system Provides a solution for manycore parallelism Not limited to small core counts Easy to learn abstractions for massive parallelism Abstractions not tied to a specific platform © Kevin Skadron, 2008 Doesn’t depend on graphics pipeline; can be implemented on other platforms Preliminary results suggest that CUDA programs run efficiently on multicore CPUs [Stratton’ 08] Supports a wide range of application characteristics More general than streaming Not limited to data parallelism 27

UNIVERSITY OF VIRGINIA Why is CUDA Important? (1) Mass market host platform Easy to buy and set up a system Provides a solution for manycore parallelism Not limited to small core counts Easy to learn abstractions for massive parallelism Abstractions not tied to a specific platform © Kevin Skadron, 2008 Doesn’t depend on graphics pipeline; can be implemented on other platforms Preliminary results suggest that CUDA programs run efficiently on multicore CPUs [Stratton’ 08] Supports a wide range of application characteristics More general than streaming Not limited to data parallelism 27

UNIVERSITY OF VIRGINIA Why is CUDA Important? (2) CUDA + GPUs facilitate multicore research at scale NVIDIA Tesla: 16, 8 -way SIMD cores = 128 PEs, 12, 288 thread contexts total Simple programming model allows exploration of new algorithms and hardware bottlenecks The whole community can learn from this © Kevin Skadron, 2008 CUDA + GPUs provide a real platform…today Results are not theoretical Increases interest from potential users, e. g. computational scientists Boosts opportunities for interdisciplinary collaboration CUDA is teachable Undergrads can start writing real programs within a couple of weeks 28

UNIVERSITY OF VIRGINIA Why is CUDA Important? (2) CUDA + GPUs facilitate multicore research at scale NVIDIA Tesla: 16, 8 -way SIMD cores = 128 PEs, 12, 288 thread contexts total Simple programming model allows exploration of new algorithms and hardware bottlenecks The whole community can learn from this © Kevin Skadron, 2008 CUDA + GPUs provide a real platform…today Results are not theoretical Increases interest from potential users, e. g. computational scientists Boosts opportunities for interdisciplinary collaboration CUDA is teachable Undergrads can start writing real programs within a couple of weeks 28

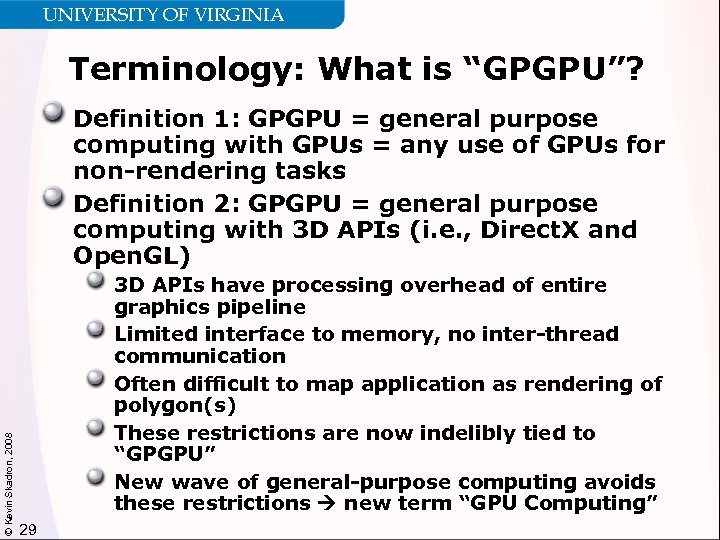

UNIVERSITY OF VIRGINIA Terminology: What is “GPGPU”? © Kevin Skadron, 2008 Definition 1: GPGPU = general purpose computing with GPUs = any use of GPUs for non-rendering tasks Definition 2: GPGPU = general purpose computing with 3 D APIs (i. e. , Direct. X and Open. GL) 3 D APIs have processing overhead of entire graphics pipeline Limited interface to memory, no inter-thread communication Often difficult to map application as rendering of polygon(s) These restrictions are now indelibly tied to “GPGPU” New wave of general-purpose computing avoids these restrictions new term “GPU Computing” 29

UNIVERSITY OF VIRGINIA Terminology: What is “GPGPU”? © Kevin Skadron, 2008 Definition 1: GPGPU = general purpose computing with GPUs = any use of GPUs for non-rendering tasks Definition 2: GPGPU = general purpose computing with 3 D APIs (i. e. , Direct. X and Open. GL) 3 D APIs have processing overhead of entire graphics pipeline Limited interface to memory, no inter-thread communication Often difficult to map application as rendering of polygon(s) These restrictions are now indelibly tied to “GPGPU” New wave of general-purpose computing avoids these restrictions new term “GPU Computing” 29

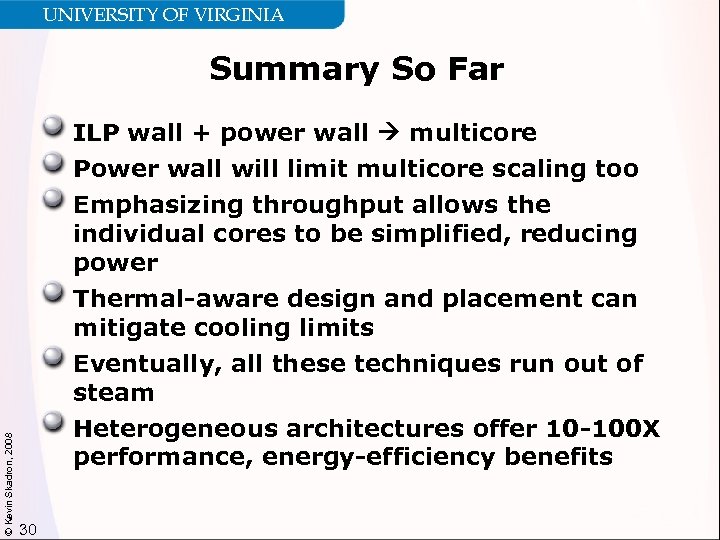

UNIVERSITY OF VIRGINIA Summary So Far © Kevin Skadron, 2008 ILP wall + power wall multicore Power wall will limit multicore scaling too Emphasizing throughput allows the individual cores to be simplified, reducing power Thermal-aware design and placement can mitigate cooling limits Eventually, all these techniques run out of steam Heterogeneous architectures offer 10 -100 X performance, energy-efficiency benefits 30

UNIVERSITY OF VIRGINIA Summary So Far © Kevin Skadron, 2008 ILP wall + power wall multicore Power wall will limit multicore scaling too Emphasizing throughput allows the individual cores to be simplified, reducing power Thermal-aware design and placement can mitigate cooling limits Eventually, all these techniques run out of steam Heterogeneous architectures offer 10 -100 X performance, energy-efficiency benefits 30

UNIVERSITY OF VIRGINIA Outline of Overall Talk Why multicore? How did we get into this jam? What next? How do we get out of this jam? Are heterogeneous architectures the answer? Not clear yet… © Kevin Skadron, 2008 What is the role of graphics processors (GPUs)? Role in system architecture Architecture and programming overview 31

UNIVERSITY OF VIRGINIA Outline of Overall Talk Why multicore? How did we get into this jam? What next? How do we get out of this jam? Are heterogeneous architectures the answer? Not clear yet… © Kevin Skadron, 2008 What is the role of graphics processors (GPUs)? Role in system architecture Architecture and programming overview 31

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 32

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 32

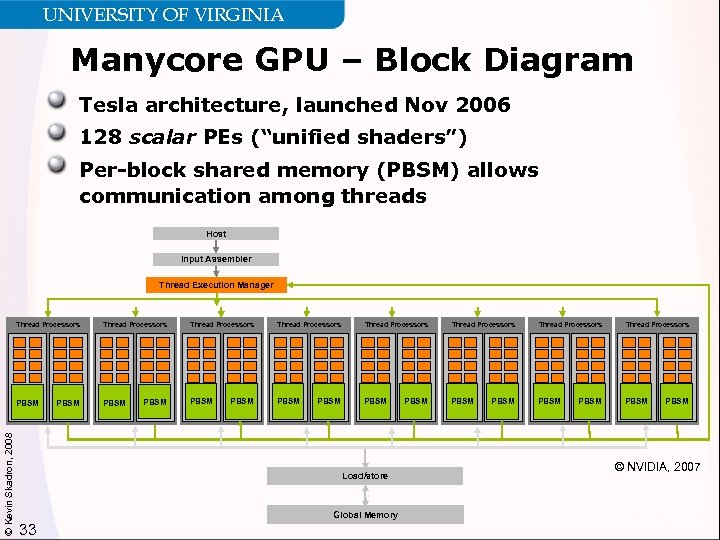

UNIVERSITY OF VIRGINIA Manycore GPU – Block Diagram Tesla architecture, launched Nov 2006 128 scalar PEs (“unified shaders”) Per-block shared memory (PBSM) allows communication among threads Host Input Assembler Thread Execution Manager Thread Processors PBSM © Kevin Skadron, 2008 Thread Processors PBSM Thread Processors Thread Processors PBSM PBSM Load/store Global Memory 33 PBSM © NVIDIA, 2007

UNIVERSITY OF VIRGINIA Manycore GPU – Block Diagram Tesla architecture, launched Nov 2006 128 scalar PEs (“unified shaders”) Per-block shared memory (PBSM) allows communication among threads Host Input Assembler Thread Execution Manager Thread Processors PBSM © Kevin Skadron, 2008 Thread Processors PBSM Thread Processors Thread Processors PBSM PBSM Load/store Global Memory 33 PBSM © NVIDIA, 2007

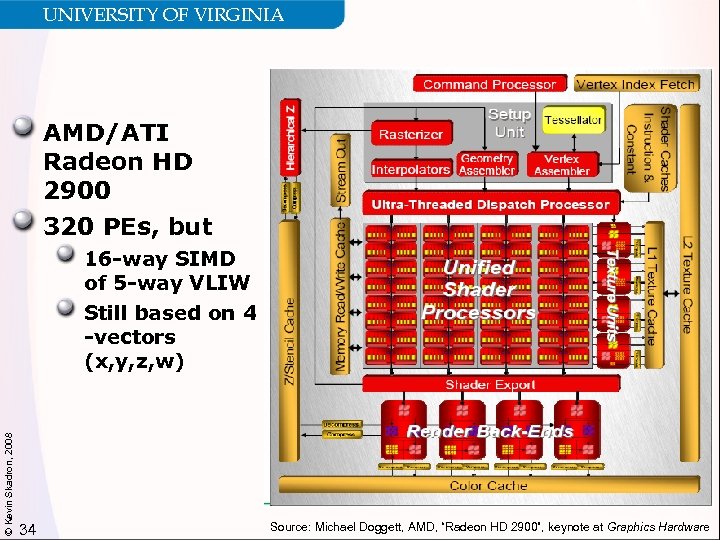

UNIVERSITY OF VIRGINIA AMD/ATI Radeon HD 2900 320 PEs, but © Kevin Skadron, 2008 16 -way SIMD of 5 -way VLIW Still based on 4 -vectors (x, y, z, w) 34 Source: Michael Doggett, AMD, “Radeon HD 2900”, keynote at Graphics Hardware

UNIVERSITY OF VIRGINIA AMD/ATI Radeon HD 2900 320 PEs, but © Kevin Skadron, 2008 16 -way SIMD of 5 -way VLIW Still based on 4 -vectors (x, y, z, w) 34 Source: Michael Doggett, AMD, “Radeon HD 2900”, keynote at Graphics Hardware

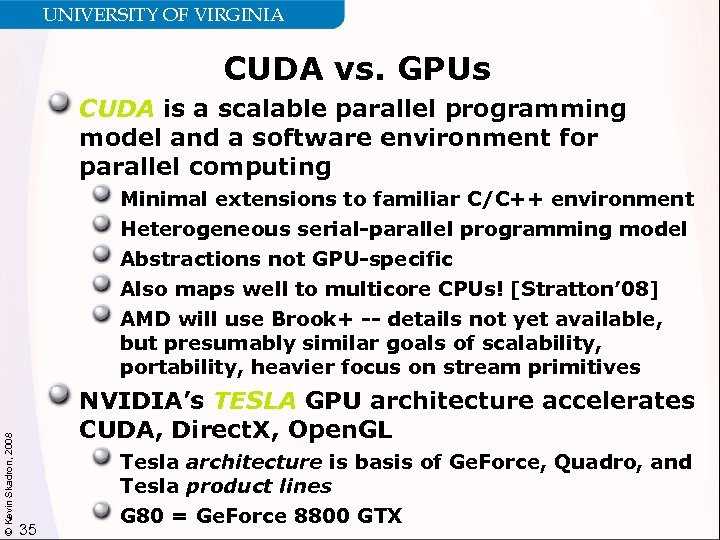

UNIVERSITY OF VIRGINIA CUDA vs. GPUs CUDA is a scalable parallel programming model and a software environment for parallel computing © Kevin Skadron, 2008 Minimal extensions to familiar C/C++ environment Heterogeneous serial-parallel programming model Abstractions not GPU-specific Also maps well to multicore CPUs! [Stratton’ 08] AMD will use Brook+ -- details not yet available, but presumably similar goals of scalability, portability, heavier focus on stream primitives NVIDIA’s TESLA GPU architecture accelerates CUDA, Direct. X, Open. GL 35 Tesla architecture is basis of Ge. Force, Quadro, and Tesla product lines G 80 = Ge. Force 8800 GTX

UNIVERSITY OF VIRGINIA CUDA vs. GPUs CUDA is a scalable parallel programming model and a software environment for parallel computing © Kevin Skadron, 2008 Minimal extensions to familiar C/C++ environment Heterogeneous serial-parallel programming model Abstractions not GPU-specific Also maps well to multicore CPUs! [Stratton’ 08] AMD will use Brook+ -- details not yet available, but presumably similar goals of scalability, portability, heavier focus on stream primitives NVIDIA’s TESLA GPU architecture accelerates CUDA, Direct. X, Open. GL 35 Tesla architecture is basis of Ge. Force, Quadro, and Tesla product lines G 80 = Ge. Force 8800 GTX

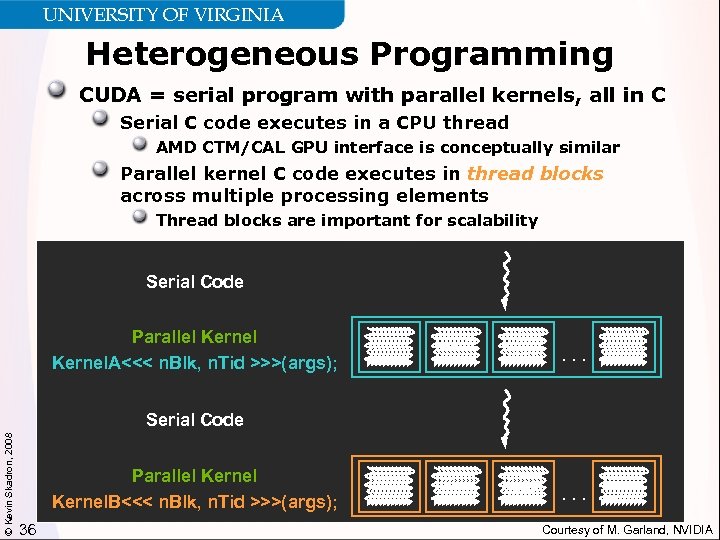

UNIVERSITY OF VIRGINIA Heterogeneous Programming CUDA = serial program with parallel kernels, all in C Serial C code executes in a CPU thread AMD CTM/CAL GPU interface is conceptually similar Parallel kernel C code executes in thread blocks across multiple processing elements Thread blocks are important for scalability Serial Code Parallel Kernel. A<<< n. Blk, n. Tid >>>(args); . . . © Kevin Skadron, 2008 Serial Code Parallel Kernel. B<<< n. Blk, n. Tid >>>(args); 36 . . . Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Heterogeneous Programming CUDA = serial program with parallel kernels, all in C Serial C code executes in a CPU thread AMD CTM/CAL GPU interface is conceptually similar Parallel kernel C code executes in thread blocks across multiple processing elements Thread blocks are important for scalability Serial Code Parallel Kernel. A<<< n. Blk, n. Tid >>>(args); . . . © Kevin Skadron, 2008 Serial Code Parallel Kernel. B<<< n. Blk, n. Tid >>>(args); 36 . . . Courtesy of M. Garland, NVIDIA

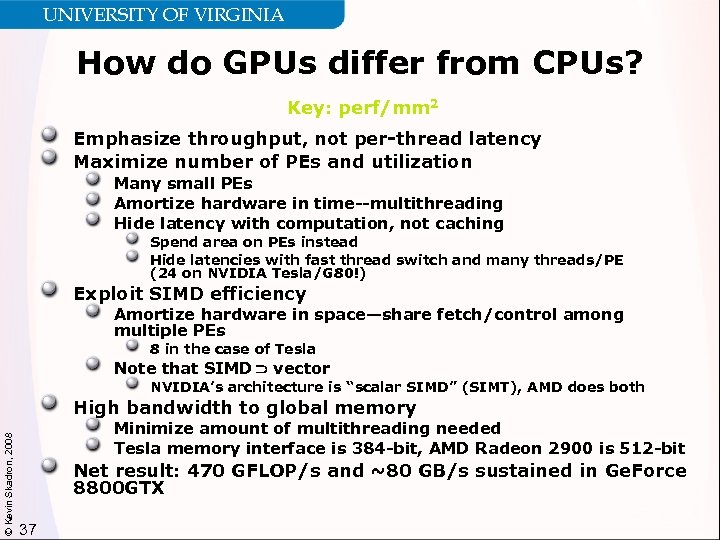

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? Key: perf/mm 2 Emphasize throughput, not per-thread latency Maximize number of PEs and utilization Many small PEs Amortize hardware in time--multithreading Hide latency with computation, not caching Spend area on PEs instead Hide latencies with fast thread switch and many threads/PE (24 on NVIDIA Tesla/G 80!) Exploit SIMD efficiency Amortize hardware in space—share fetch/control among multiple PEs 8 in the case of Tesla Note that SIMD vector NVIDIA’s architecture is “scalar SIMD” (SIMT), AMD does both © Kevin Skadron, 2008 High bandwidth to global memory Minimize amount of multithreading needed Tesla memory interface is 384 -bit, AMD Radeon 2900 is 512 -bit Net result: 470 GFLOP/s and ~80 GB/s sustained in Ge. Force 8800 GTX 37

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? Key: perf/mm 2 Emphasize throughput, not per-thread latency Maximize number of PEs and utilization Many small PEs Amortize hardware in time--multithreading Hide latency with computation, not caching Spend area on PEs instead Hide latencies with fast thread switch and many threads/PE (24 on NVIDIA Tesla/G 80!) Exploit SIMD efficiency Amortize hardware in space—share fetch/control among multiple PEs 8 in the case of Tesla Note that SIMD vector NVIDIA’s architecture is “scalar SIMD” (SIMT), AMD does both © Kevin Skadron, 2008 High bandwidth to global memory Minimize amount of multithreading needed Tesla memory interface is 384 -bit, AMD Radeon 2900 is 512 -bit Net result: 470 GFLOP/s and ~80 GB/s sustained in Ge. Force 8800 GTX 37

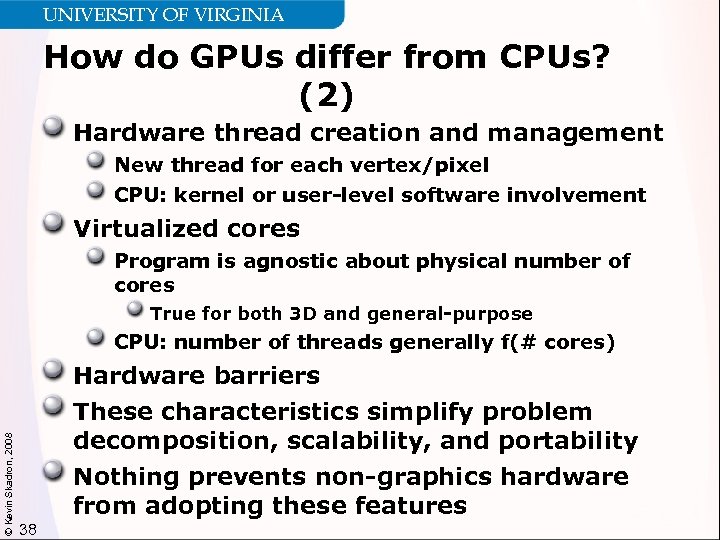

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? (2) Hardware thread creation and management New thread for each vertex/pixel CPU: kernel or user-level software involvement Virtualized cores Program is agnostic about physical number of cores True for both 3 D and general-purpose CPU: number of threads generally f(# cores) © Kevin Skadron, 2008 Hardware barriers These characteristics simplify problem decomposition, scalability, and portability Nothing prevents non-graphics hardware from adopting these features 38

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? (2) Hardware thread creation and management New thread for each vertex/pixel CPU: kernel or user-level software involvement Virtualized cores Program is agnostic about physical number of cores True for both 3 D and general-purpose CPU: number of threads generally f(# cores) © Kevin Skadron, 2008 Hardware barriers These characteristics simplify problem decomposition, scalability, and portability Nothing prevents non-graphics hardware from adopting these features 38

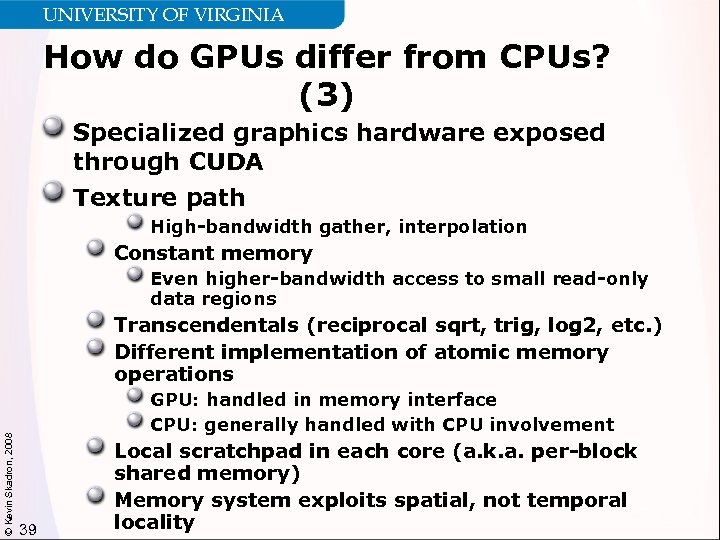

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? (3) Specialized graphics hardware exposed through CUDA Texture path High-bandwidth gather, interpolation Constant memory Even higher-bandwidth access to small read-only data regions © Kevin Skadron, 2008 Transcendentals (reciprocal sqrt, trig, log 2, etc. ) Different implementation of atomic memory operations GPU: handled in memory interface CPU: generally handled with CPU involvement 39 Local scratchpad in each core (a. k. a. per-block shared memory) Memory system exploits spatial, not temporal locality

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? (3) Specialized graphics hardware exposed through CUDA Texture path High-bandwidth gather, interpolation Constant memory Even higher-bandwidth access to small read-only data regions © Kevin Skadron, 2008 Transcendentals (reciprocal sqrt, trig, log 2, etc. ) Different implementation of atomic memory operations GPU: handled in memory interface CPU: generally handled with CPU involvement 39 Local scratchpad in each core (a. k. a. per-block shared memory) Memory system exploits spatial, not temporal locality

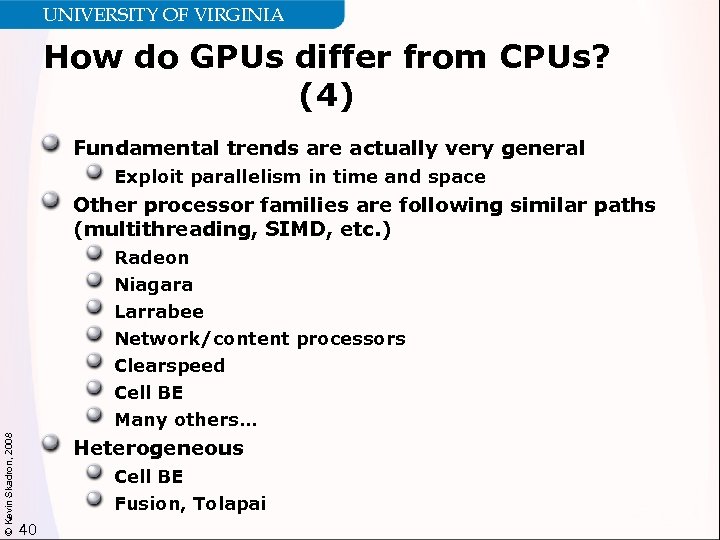

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? (4) Fundamental trends are actually very general Exploit parallelism in time and space Other processor families are following similar paths (multithreading, SIMD, etc. ) © Kevin Skadron, 2008 Radeon Niagara Larrabee Network/content processors Clearspeed Cell BE Many others… Heterogeneous Cell BE Fusion, Tolapai 40

UNIVERSITY OF VIRGINIA How do GPUs differ from CPUs? (4) Fundamental trends are actually very general Exploit parallelism in time and space Other processor families are following similar paths (multithreading, SIMD, etc. ) © Kevin Skadron, 2008 Radeon Niagara Larrabee Network/content processors Clearspeed Cell BE Many others… Heterogeneous Cell BE Fusion, Tolapai 40

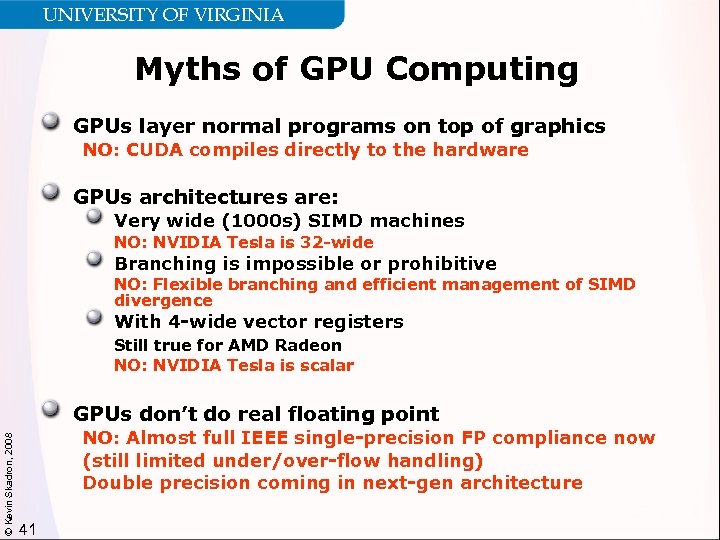

UNIVERSITY OF VIRGINIA Myths of GPU Computing GPUs layer normal programs on top of graphics NO: CUDA compiles directly to the hardware GPUs architectures are: Very wide (1000 s) SIMD machines NO: NVIDIA Tesla is 32 -wide Branching is impossible or prohibitive NO: Flexible branching and efficient management of SIMD divergence With 4 -wide vector registers Still true for AMD Radeon NO: NVIDIA Tesla is scalar © Kevin Skadron, 2008 GPUs don’t do real floating point NO: Almost full IEEE single-precision FP compliance now (still limited under/over-flow handling) Double precision coming in next-gen architecture 41

UNIVERSITY OF VIRGINIA Myths of GPU Computing GPUs layer normal programs on top of graphics NO: CUDA compiles directly to the hardware GPUs architectures are: Very wide (1000 s) SIMD machines NO: NVIDIA Tesla is 32 -wide Branching is impossible or prohibitive NO: Flexible branching and efficient management of SIMD divergence With 4 -wide vector registers Still true for AMD Radeon NO: NVIDIA Tesla is scalar © Kevin Skadron, 2008 GPUs don’t do real floating point NO: Almost full IEEE single-precision FP compliance now (still limited under/over-flow handling) Double precision coming in next-gen architecture 41

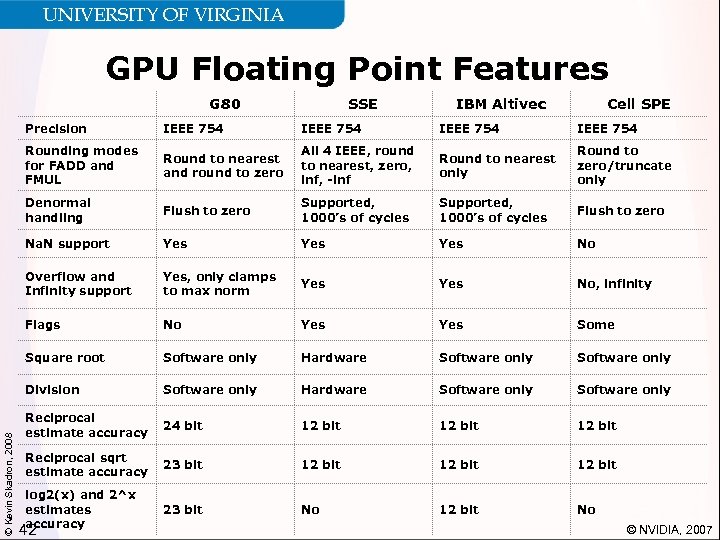

UNIVERSITY OF VIRGINIA GPU Floating Point Features G 80 SSE IBM Altivec Cell SPE IEEE 754 Rounding modes for FADD and FMUL Round to nearest and round to zero All 4 IEEE, round to nearest, zero, inf, -inf Round to nearest only Round to zero/truncate only Denormal handling Flush to zero Supported, 1000’s of cycles Flush to zero Na. N support Yes Yes No Overflow and Infinity support Yes, only clamps to max norm Yes No, infinity Flags No Yes Some Square root Software only Hardware Software only Division © Kevin Skadron, 2008 Precision Software only Hardware Software only Reciprocal estimate accuracy 24 bit 12 bit Reciprocal sqrt estimate accuracy 23 bit 12 bit log 2(x) and 2^x estimates accuracy 23 bit No 12 bit No 42 © NVIDIA, 2007

UNIVERSITY OF VIRGINIA GPU Floating Point Features G 80 SSE IBM Altivec Cell SPE IEEE 754 Rounding modes for FADD and FMUL Round to nearest and round to zero All 4 IEEE, round to nearest, zero, inf, -inf Round to nearest only Round to zero/truncate only Denormal handling Flush to zero Supported, 1000’s of cycles Flush to zero Na. N support Yes Yes No Overflow and Infinity support Yes, only clamps to max norm Yes No, infinity Flags No Yes Some Square root Software only Hardware Software only Division © Kevin Skadron, 2008 Precision Software only Hardware Software only Reciprocal estimate accuracy 24 bit 12 bit Reciprocal sqrt estimate accuracy 23 bit 12 bit log 2(x) and 2^x estimates accuracy 23 bit No 12 bit No 42 © NVIDIA, 2007

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 43

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 43

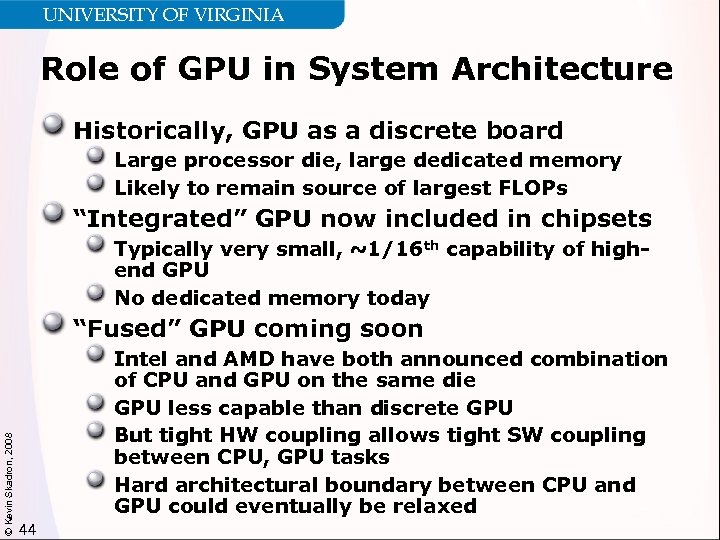

UNIVERSITY OF VIRGINIA Role of GPU in System Architecture Historically, GPU as a discrete board Large processor die, large dedicated memory Likely to remain source of largest FLOPs “Integrated” GPU now included in chipsets Typically very small, ~1/16 th capability of highend GPU No dedicated memory today © Kevin Skadron, 2008 “Fused” GPU coming soon Intel and AMD have both announced combination of CPU and GPU on the same die GPU less capable than discrete GPU But tight HW coupling allows tight SW coupling between CPU, GPU tasks Hard architectural boundary between CPU and GPU could eventually be relaxed 44

UNIVERSITY OF VIRGINIA Role of GPU in System Architecture Historically, GPU as a discrete board Large processor die, large dedicated memory Likely to remain source of largest FLOPs “Integrated” GPU now included in chipsets Typically very small, ~1/16 th capability of highend GPU No dedicated memory today © Kevin Skadron, 2008 “Fused” GPU coming soon Intel and AMD have both announced combination of CPU and GPU on the same die GPU less capable than discrete GPU But tight HW coupling allows tight SW coupling between CPU, GPU tasks Hard architectural boundary between CPU and GPU could eventually be relaxed 44

UNIVERSITY OF VIRGINIA Heterogeneous Architectures Same choices are/will be available for other coprocessors Media processors, network processors, FPGAs All available as discrete boards, some included in chipsets Growing support for “peer” support Coprocessor fits in CPU socket on an SMP motherboard FPGAs often discussed in this context © Kevin Skadron, 2008 Integration of other coprocessors with CPU cores on same die seems inevitable 45

UNIVERSITY OF VIRGINIA Heterogeneous Architectures Same choices are/will be available for other coprocessors Media processors, network processors, FPGAs All available as discrete boards, some included in chipsets Growing support for “peer” support Coprocessor fits in CPU socket on an SMP motherboard FPGAs often discussed in this context © Kevin Skadron, 2008 Integration of other coprocessors with CPU cores on same die seems inevitable 45

UNIVERSITY OF VIRGINIA Implications Discrete: high offload cost, offload “chunk” must be large enough to amortize this overhead Bringing coprocessor closer reduces time, power cost for offload Joining coprocessor and CPU on the same die could allow very tight coupling Coprocessor features exposed through ISA Shared memory, coherent caching More flexible coprocessor exception handling Drawbacks of integration: Integration limits size of coprocessor © Kevin Skadron, 2008 e. g. , can’t be competitive with highest-end GPU Risk that these will be low-end, low-margin parts Premium pricing will require major value from the tight coupling 46

UNIVERSITY OF VIRGINIA Implications Discrete: high offload cost, offload “chunk” must be large enough to amortize this overhead Bringing coprocessor closer reduces time, power cost for offload Joining coprocessor and CPU on the same die could allow very tight coupling Coprocessor features exposed through ISA Shared memory, coherent caching More flexible coprocessor exception handling Drawbacks of integration: Integration limits size of coprocessor © Kevin Skadron, 2008 e. g. , can’t be competitive with highest-end GPU Risk that these will be low-end, low-margin parts Premium pricing will require major value from the tight coupling 46

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 47

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 47

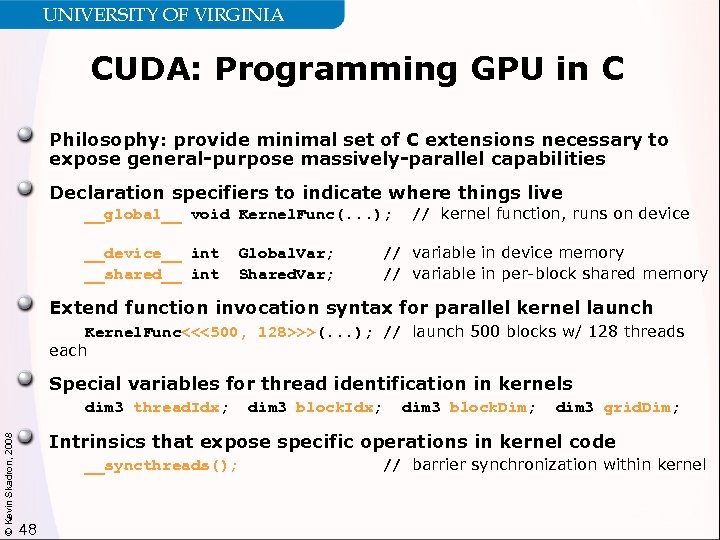

UNIVERSITY OF VIRGINIA CUDA: Programming GPU in C Philosophy: provide minimal set of C extensions necessary to expose general-purpose massively-parallel capabilities Declaration specifiers to indicate where things live __global__ void Kernel. Func(. . . ); __device__ int __shared__ int Global. Var; Shared. Var; // kernel function, runs on device // variable in device memory // variable in per-block shared memory Extend function invocation syntax for parallel kernel launch Kernel. Func<<<500, 128>>>(. . . ); // launch 500 blocks w/ 128 threads each Special variables for thread identification in kernels © Kevin Skadron, 2008 dim 3 thread. Idx; dim 3 block. Dim; dim 3 grid. Dim; Intrinsics that expose specific operations in kernel code __syncthreads(); 48 // barrier synchronization within kernel

UNIVERSITY OF VIRGINIA CUDA: Programming GPU in C Philosophy: provide minimal set of C extensions necessary to expose general-purpose massively-parallel capabilities Declaration specifiers to indicate where things live __global__ void Kernel. Func(. . . ); __device__ int __shared__ int Global. Var; Shared. Var; // kernel function, runs on device // variable in device memory // variable in per-block shared memory Extend function invocation syntax for parallel kernel launch Kernel. Func<<<500, 128>>>(. . . ); // launch 500 blocks w/ 128 threads each Special variables for thread identification in kernels © Kevin Skadron, 2008 dim 3 thread. Idx; dim 3 block. Dim; dim 3 grid. Dim; Intrinsics that expose specific operations in kernel code __syncthreads(); 48 // barrier synchronization within kernel

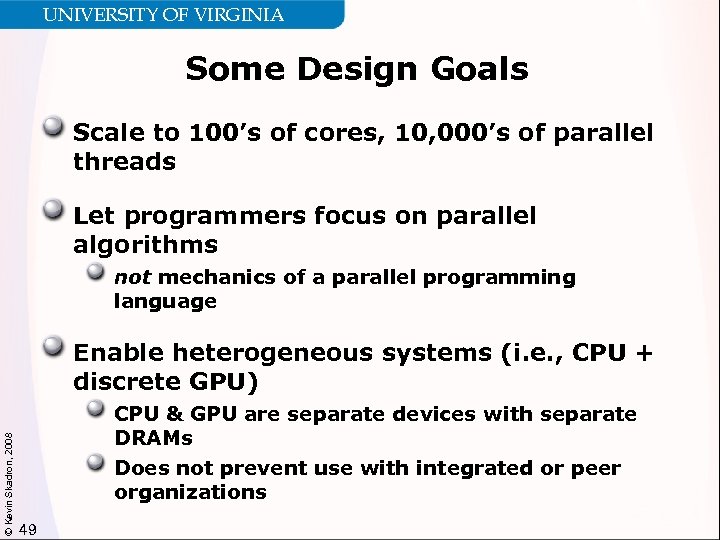

UNIVERSITY OF VIRGINIA Some Design Goals Scale to 100’s of cores, 10, 000’s of parallel threads Let programmers focus on parallel algorithms not mechanics of a parallel programming language © Kevin Skadron, 2008 Enable heterogeneous systems (i. e. , CPU + discrete GPU) CPU & GPU are separate devices with separate DRAMs Does not prevent use with integrated or peer organizations 49

UNIVERSITY OF VIRGINIA Some Design Goals Scale to 100’s of cores, 10, 000’s of parallel threads Let programmers focus on parallel algorithms not mechanics of a parallel programming language © Kevin Skadron, 2008 Enable heterogeneous systems (i. e. , CPU + discrete GPU) CPU & GPU are separate devices with separate DRAMs Does not prevent use with integrated or peer organizations 49

UNIVERSITY OF VIRGINIA Key Parallel Abstractions in CUDA Hierarchy of concurrent threads Lightweight synchronization primitives © Kevin Skadron, 2008 Shared memory model for cooperating threads 50

UNIVERSITY OF VIRGINIA Key Parallel Abstractions in CUDA Hierarchy of concurrent threads Lightweight synchronization primitives © Kevin Skadron, 2008 Shared memory model for cooperating threads 50

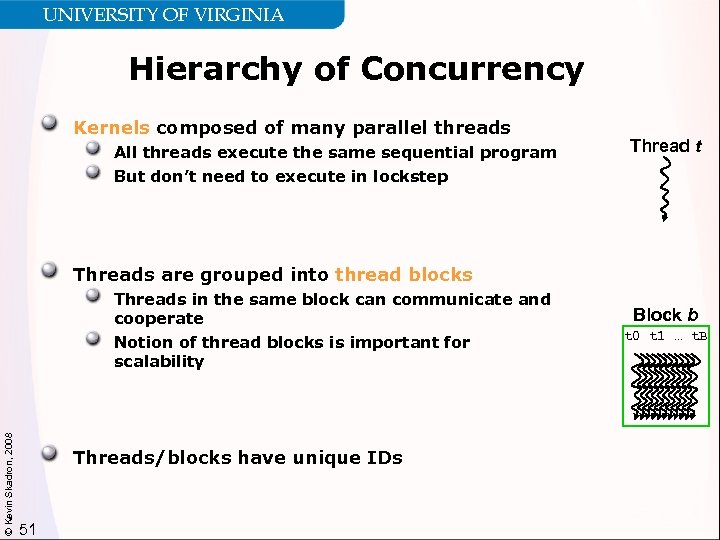

UNIVERSITY OF VIRGINIA Hierarchy of Concurrency Kernels composed of many parallel threads All threads execute the same sequential program Thread t But don’t need to execute in lockstep Threads are grouped into thread blocks Threads in the same block can communicate and cooperate © Kevin Skadron, 2008 Notion of thread blocks is important for scalability Threads/blocks have unique IDs 51 Block b t 0 t 1 … t. B

UNIVERSITY OF VIRGINIA Hierarchy of Concurrency Kernels composed of many parallel threads All threads execute the same sequential program Thread t But don’t need to execute in lockstep Threads are grouped into thread blocks Threads in the same block can communicate and cooperate © Kevin Skadron, 2008 Notion of thread blocks is important for scalability Threads/blocks have unique IDs 51 Block b t 0 t 1 … t. B

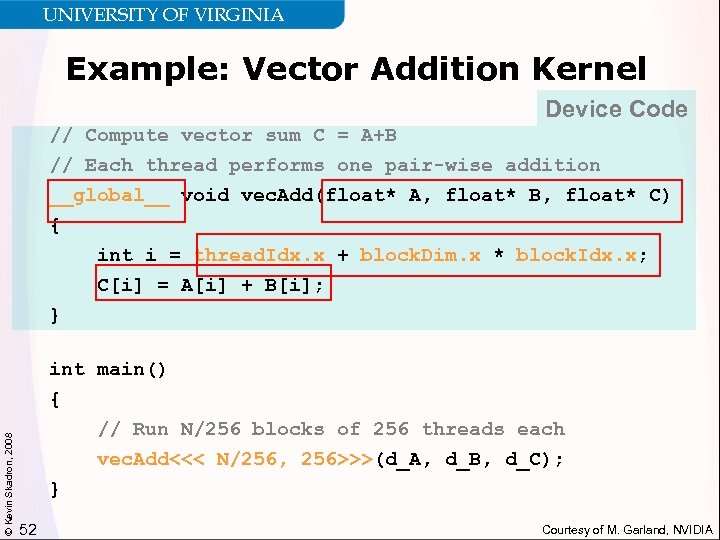

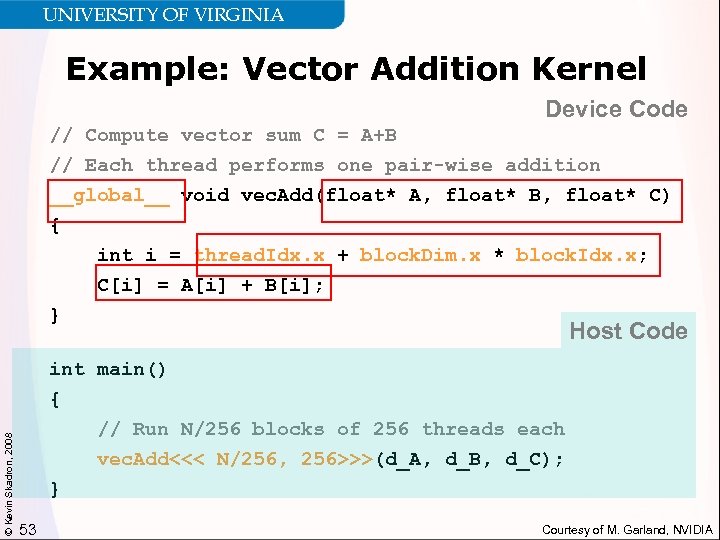

UNIVERSITY OF VIRGINIA Example: Vector Addition Kernel Device Code // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; © Kevin Skadron, 2008 } int main() { // Run N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } 52 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Example: Vector Addition Kernel Device Code // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; © Kevin Skadron, 2008 } int main() { // Run N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } 52 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Example: Vector Addition Kernel Device Code // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; © Kevin Skadron, 2008 } Host Code int main() { // Run N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } 53 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Example: Vector Addition Kernel Device Code // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; © Kevin Skadron, 2008 } Host Code int main() { // Run N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } 53 Courtesy of M. Garland, NVIDIA

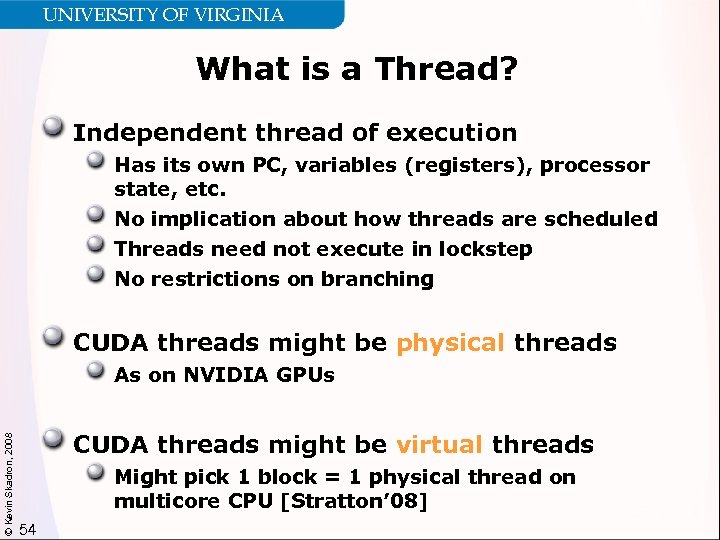

UNIVERSITY OF VIRGINIA What is a Thread? Independent thread of execution Has its own PC, variables (registers), processor state, etc. No implication about how threads are scheduled Threads need not execute in lockstep No restrictions on branching CUDA threads might be physical threads © Kevin Skadron, 2008 As on NVIDIA GPUs CUDA threads might be virtual threads Might pick 1 block = 1 physical thread on multicore CPU [Stratton’ 08] 54

UNIVERSITY OF VIRGINIA What is a Thread? Independent thread of execution Has its own PC, variables (registers), processor state, etc. No implication about how threads are scheduled Threads need not execute in lockstep No restrictions on branching CUDA threads might be physical threads © Kevin Skadron, 2008 As on NVIDIA GPUs CUDA threads might be virtual threads Might pick 1 block = 1 physical thread on multicore CPU [Stratton’ 08] 54

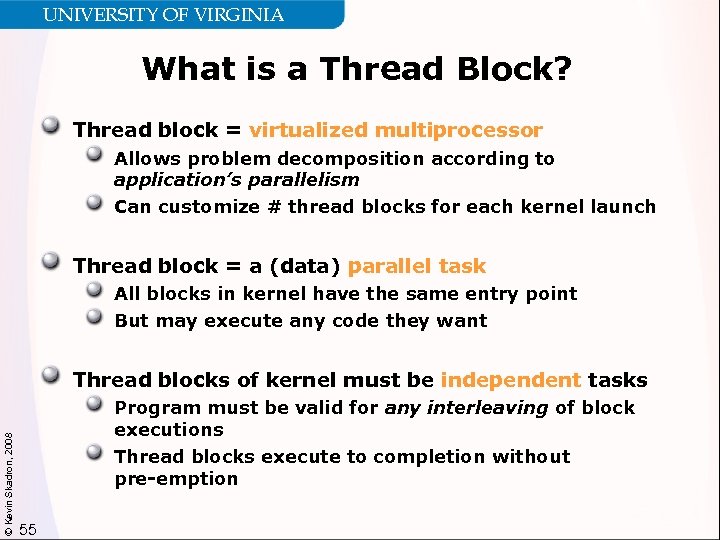

UNIVERSITY OF VIRGINIA What is a Thread Block? Thread block = virtualized multiprocessor Allows problem decomposition according to application’s parallelism Can customize # thread blocks for each kernel launch Thread block = a (data) parallel task All blocks in kernel have the same entry point But may execute any code they want © Kevin Skadron, 2008 Thread blocks of kernel must be independent tasks Program must be valid for any interleaving of block executions Thread blocks execute to completion without pre-emption 55

UNIVERSITY OF VIRGINIA What is a Thread Block? Thread block = virtualized multiprocessor Allows problem decomposition according to application’s parallelism Can customize # thread blocks for each kernel launch Thread block = a (data) parallel task All blocks in kernel have the same entry point But may execute any code they want © Kevin Skadron, 2008 Thread blocks of kernel must be independent tasks Program must be valid for any interleaving of block executions Thread blocks execute to completion without pre-emption 55

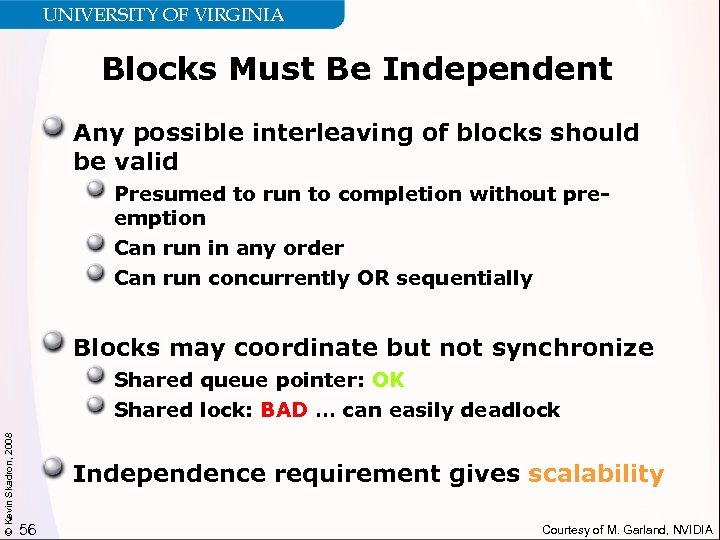

UNIVERSITY OF VIRGINIA Blocks Must Be Independent Any possible interleaving of blocks should be valid Presumed to run to completion without preemption Can run in any order Can run concurrently OR sequentially Blocks may coordinate but not synchronize © Kevin Skadron, 2008 Shared queue pointer: OK Shared lock: BAD … can easily deadlock Independence requirement gives scalability 56 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Blocks Must Be Independent Any possible interleaving of blocks should be valid Presumed to run to completion without preemption Can run in any order Can run concurrently OR sequentially Blocks may coordinate but not synchronize © Kevin Skadron, 2008 Shared queue pointer: OK Shared lock: BAD … can easily deadlock Independence requirement gives scalability 56 Courtesy of M. Garland, NVIDIA

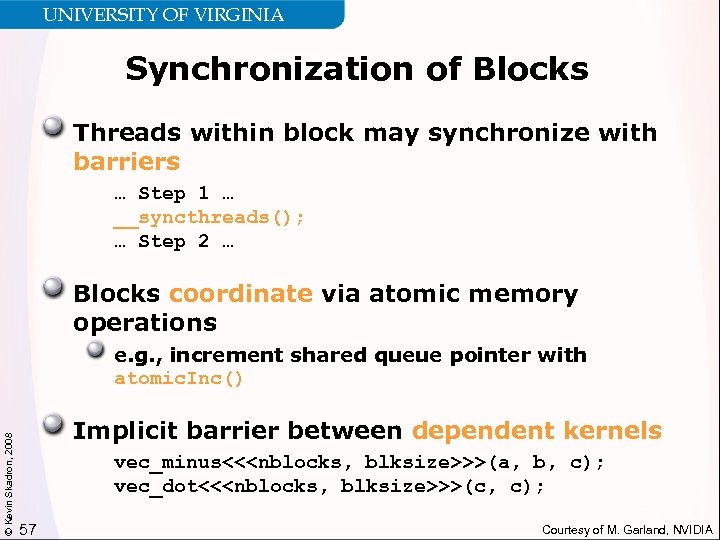

UNIVERSITY OF VIRGINIA Synchronization of Blocks Threads within block may synchronize with barriers … Step 1 … __syncthreads(); … Step 2 … Blocks coordinate via atomic memory operations © Kevin Skadron, 2008 e. g. , increment shared queue pointer with atomic. Inc() Implicit barrier between dependent kernels vec_minus<<

UNIVERSITY OF VIRGINIA Synchronization of Blocks Threads within block may synchronize with barriers … Step 1 … __syncthreads(); … Step 2 … Blocks coordinate via atomic memory operations © Kevin Skadron, 2008 e. g. , increment shared queue pointer with atomic. Inc() Implicit barrier between dependent kernels vec_minus<<

UNIVERSITY OF VIRGINIA Types of Parallelism Thread parallelism Each thread is an independent thread of execution Data parallelism Across threads in a block Across blocks in a kernel © Kevin Skadron, 2008 Task parallelism Different blocks are independent Independent kernels 58

UNIVERSITY OF VIRGINIA Types of Parallelism Thread parallelism Each thread is an independent thread of execution Data parallelism Across threads in a block Across blocks in a kernel © Kevin Skadron, 2008 Task parallelism Different blocks are independent Independent kernels 58

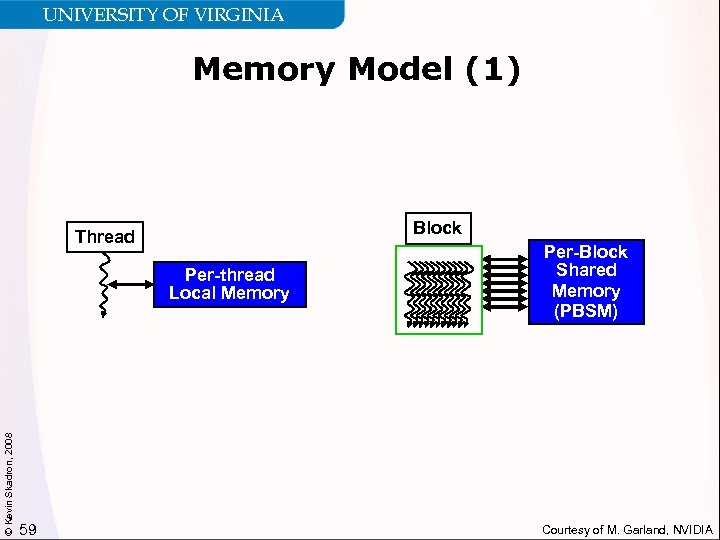

UNIVERSITY OF VIRGINIA Memory Model (1) Block Thread © Kevin Skadron, 2008 Per-thread Local Memory 59 Per-Block Shared Memory (PBSM) Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Memory Model (1) Block Thread © Kevin Skadron, 2008 Per-thread Local Memory 59 Per-Block Shared Memory (PBSM) Courtesy of M. Garland, NVIDIA

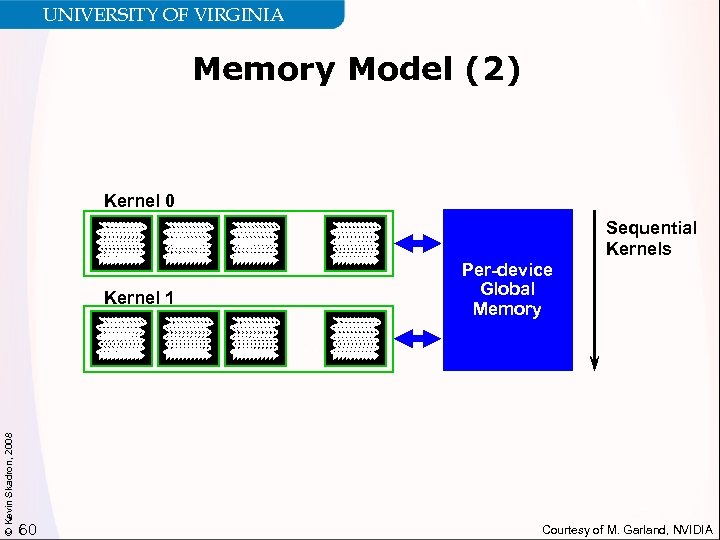

UNIVERSITY OF VIRGINIA Memory Model (2) Kernel 0. . . Kernel 1 Sequential Kernels Per-device Global Memory © Kevin Skadron, 2008 . . . 60 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Memory Model (2) Kernel 0. . . Kernel 1 Sequential Kernels Per-device Global Memory © Kevin Skadron, 2008 . . . 60 Courtesy of M. Garland, NVIDIA

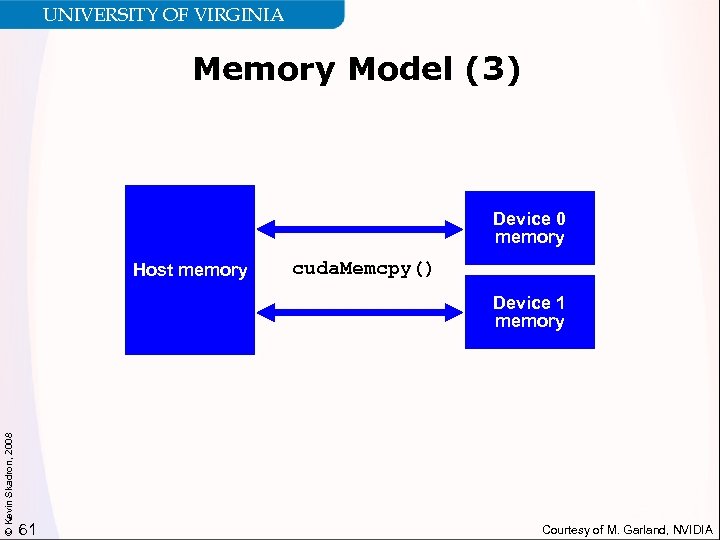

UNIVERSITY OF VIRGINIA Memory Model (3) Device 0 memory Host memory cuda. Memcpy() © Kevin Skadron, 2008 Device 1 memory 61 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Memory Model (3) Device 0 memory Host memory cuda. Memcpy() © Kevin Skadron, 2008 Device 1 memory 61 Courtesy of M. Garland, NVIDIA

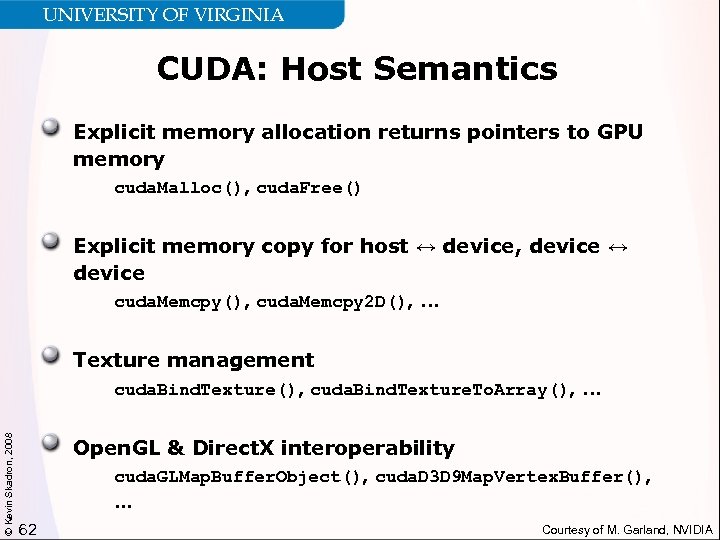

UNIVERSITY OF VIRGINIA CUDA: Host Semantics Explicit memory allocation returns pointers to GPU memory cuda. Malloc(), cuda. Free() Explicit memory copy for host ↔ device, device ↔ device cuda. Memcpy(), cuda. Memcpy 2 D(), . . . Texture management © Kevin Skadron, 2008 cuda. Bind. Texture(), cuda. Bind. Texture. To. Array(), . . . Open. GL & Direct. X interoperability cuda. GLMap. Buffer. Object(), cuda. D 3 D 9 Map. Vertex. Buffer(), … 62 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA CUDA: Host Semantics Explicit memory allocation returns pointers to GPU memory cuda. Malloc(), cuda. Free() Explicit memory copy for host ↔ device, device ↔ device cuda. Memcpy(), cuda. Memcpy 2 D(), . . . Texture management © Kevin Skadron, 2008 cuda. Bind. Texture(), cuda. Bind. Texture. To. Array(), . . . Open. GL & Direct. X interoperability cuda. GLMap. Buffer. Object(), cuda. D 3 D 9 Map. Vertex. Buffer(), … 62 Courtesy of M. Garland, NVIDIA

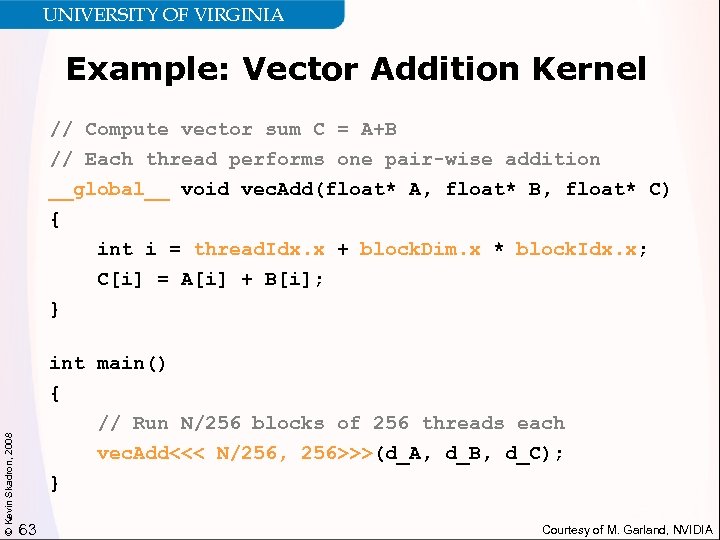

UNIVERSITY OF VIRGINIA Example: Vector Addition Kernel // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; © Kevin Skadron, 2008 } int main() { // Run N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } 63 Courtesy of M. Garland, NVIDIA

UNIVERSITY OF VIRGINIA Example: Vector Addition Kernel // Compute vector sum C = A+B // Each thread performs one pair-wise addition __global__ void vec. Add(float* A, float* B, float* C) { int i = thread. Idx. x + block. Dim. x * block. Idx. x; C[i] = A[i] + B[i]; © Kevin Skadron, 2008 } int main() { // Run N/256 blocks of 256 threads each vec. Add<<< N/256, 256>>>(d_A, d_B, d_C); } 63 Courtesy of M. Garland, NVIDIA

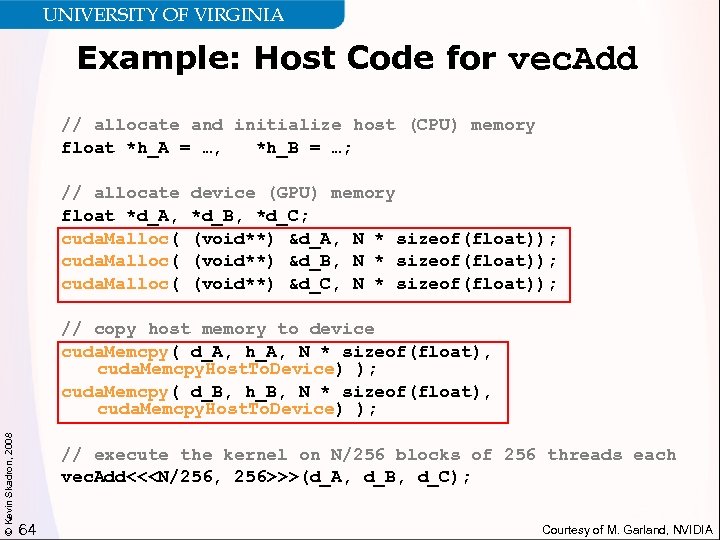

UNIVERSITY OF VIRGINIA Example: Host Code for vec. Add // allocate and initialize host (CPU) memory float *h_A = …, *h_B = …; // allocate float *d_A, cuda. Malloc( device (GPU) memory *d_B, *d_C; (void**) &d_A, N * sizeof(float)); (void**) &d_B, N * sizeof(float)); (void**) &d_C, N * sizeof(float)); © Kevin Skadron, 2008 // copy host memory to device cuda. Memcpy( d_A, h_A, N * sizeof(float), cuda. Memcpy. Host. To. Device) ); cuda. Memcpy( d_B, h_B, N * sizeof(float), cuda. Memcpy. Host. To. Device) ); // execute the kernel on N/256 blocks of 256 threads each vec. Add<<

UNIVERSITY OF VIRGINIA Example: Host Code for vec. Add // allocate and initialize host (CPU) memory float *h_A = …, *h_B = …; // allocate float *d_A, cuda. Malloc( device (GPU) memory *d_B, *d_C; (void**) &d_A, N * sizeof(float)); (void**) &d_B, N * sizeof(float)); (void**) &d_C, N * sizeof(float)); © Kevin Skadron, 2008 // copy host memory to device cuda. Memcpy( d_A, h_A, N * sizeof(float), cuda. Memcpy. Host. To. Device) ); cuda. Memcpy( d_B, h_B, N * sizeof(float), cuda. Memcpy. Host. To. Device) ); // execute the kernel on N/256 blocks of 256 threads each vec. Add<<

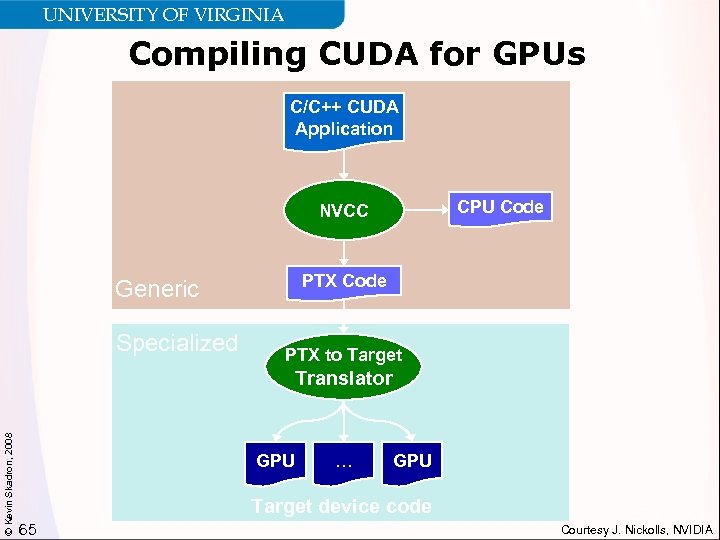

UNIVERSITY OF VIRGINIA Compiling CUDA for GPUs C/C++ CUDA Application CPU Code NVCC PTX Code Generic Specialized PTX to Target © Kevin Skadron, 2008 Translator GPU … GPU Target device code 65 Courtesy J. Nickolls, NVIDIA

UNIVERSITY OF VIRGINIA Compiling CUDA for GPUs C/C++ CUDA Application CPU Code NVCC PTX Code Generic Specialized PTX to Target © Kevin Skadron, 2008 Translator GPU … GPU Target device code 65 Courtesy J. Nickolls, NVIDIA

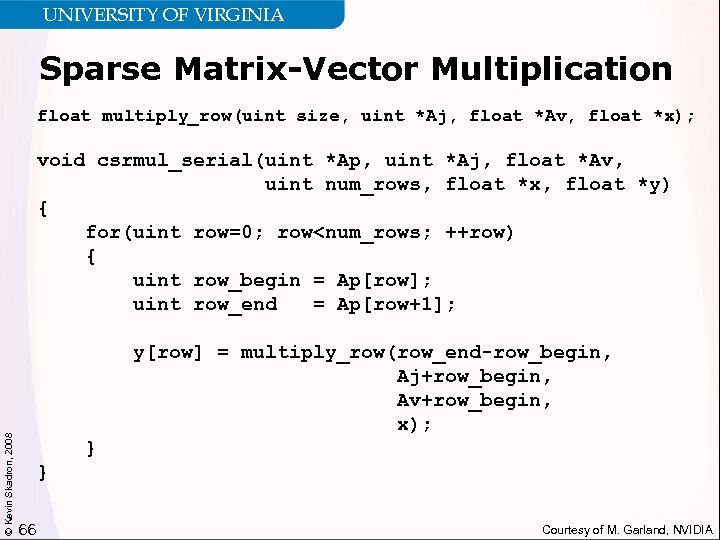

UNIVERSITY OF VIRGINIA Sparse Matrix-Vector Multiplication float multiply_row(uint size, uint *Aj, float *Av, float *x); © Kevin Skadron, 2008 void csrmul_serial(uint *Ap, uint *Aj, float *Av, uint num_rows, float *x, float *y) { for(uint row=0; row

UNIVERSITY OF VIRGINIA Sparse Matrix-Vector Multiplication float multiply_row(uint size, uint *Aj, float *Av, float *x); © Kevin Skadron, 2008 void csrmul_serial(uint *Ap, uint *Aj, float *Av, uint num_rows, float *x, float *y) { for(uint row=0; row

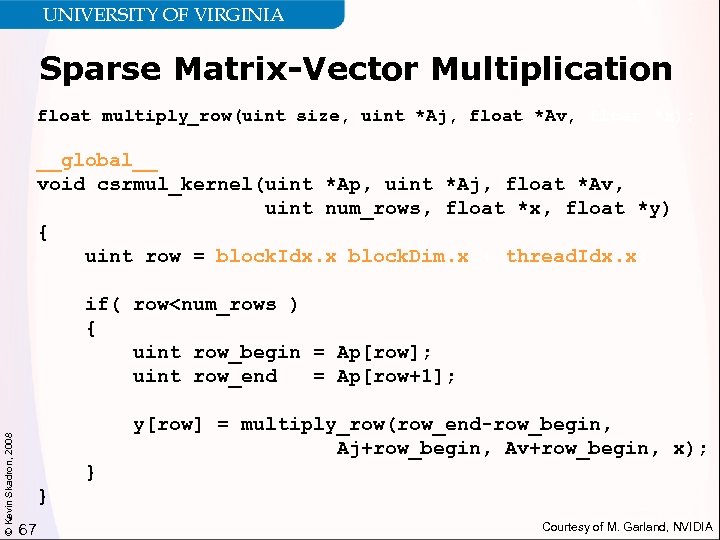

UNIVERSITY OF VIRGINIA Sparse Matrix-Vector Multiplication float multiply_row(uint size, uint *Aj, float *Av, float *x); __global__ void csrmul_kernel(uint *Ap, uint *Aj, float *Av, uint num_rows, float *x, float *y) { uint row = block. Idx. x*block. Dim. x + thread. Idx. x; © Kevin Skadron, 2008 if( row

UNIVERSITY OF VIRGINIA Sparse Matrix-Vector Multiplication float multiply_row(uint size, uint *Aj, float *Av, float *x); __global__ void csrmul_kernel(uint *Ap, uint *Aj, float *Av, uint num_rows, float *x, float *y) { uint row = block. Idx. x*block. Dim. x + thread. Idx. x; © Kevin Skadron, 2008 if( row

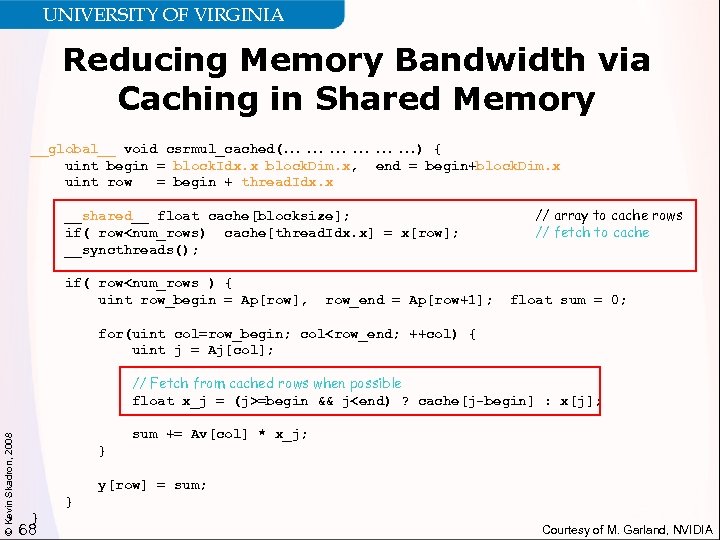

UNIVERSITY OF VIRGINIA Reducing Memory Bandwidth via Caching in Shared Memory __global__ void csrmul_cached(… … … …) { uint begin = block. Idx. x*block. Dim. x, end = begin+block. Dim. x; uint row = begin + thread. Idx. x; __shared__ float cache[blocksize]; if( row

UNIVERSITY OF VIRGINIA Reducing Memory Bandwidth via Caching in Shared Memory __global__ void csrmul_cached(… … … …) { uint begin = block. Idx. x*block. Dim. x, end = begin+block. Dim. x; uint row = begin + thread. Idx. x; __shared__ float cache[blocksize]; if( row

UNIVERSITY OF VIRGINIA Basic Efficiency Rules Develop algorithms with a data parallel mindset Simple example – parallel summation now requires a reduction Maximize locality of global memory accesses This will improve memory bandwidth utilization and, depending on platform, local caching © Kevin Skadron, 2008 Exploit per-block shared memory as scratchpad Even on CPUs, this will improve locality Similar to benefits of blocking Expose enough parallelism Need minimum of 1000 s of threads 69

UNIVERSITY OF VIRGINIA Basic Efficiency Rules Develop algorithms with a data parallel mindset Simple example – parallel summation now requires a reduction Maximize locality of global memory accesses This will improve memory bandwidth utilization and, depending on platform, local caching © Kevin Skadron, 2008 Exploit per-block shared memory as scratchpad Even on CPUs, this will improve locality Similar to benefits of blocking Expose enough parallelism Need minimum of 1000 s of threads 69

UNIVERSITY OF VIRGINIA Summary So Far Three key generic abstractions: 1. hierarchy of parallel threads 2. corresponding levels of synchronization 3. corresponding memory spaces Thread blocks promote scalable algorithms Focus on parallelism, correctness, and scalability first © Kevin Skadron, 2008 Then a few standard optimizations usually produce significant additional speedup CUDA illustrates promising directions to pursue for other coprocessors and heterogeneous systems in general 70

UNIVERSITY OF VIRGINIA Summary So Far Three key generic abstractions: 1. hierarchy of parallel threads 2. corresponding levels of synchronization 3. corresponding memory spaces Thread blocks promote scalable algorithms Focus on parallelism, correctness, and scalability first © Kevin Skadron, 2008 Then a few standard optimizations usually produce significant additional speedup CUDA illustrates promising directions to pursue for other coprocessors and heterogeneous systems in general 70

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 71

UNIVERSITY OF VIRGINIA Outline of GPU Portion of Talk Overview of interesting GPU features © Kevin Skadron, 2008 Role of GPU in system architecture More detail on CUDA More detail on NVIDIA Tesla architecture 71

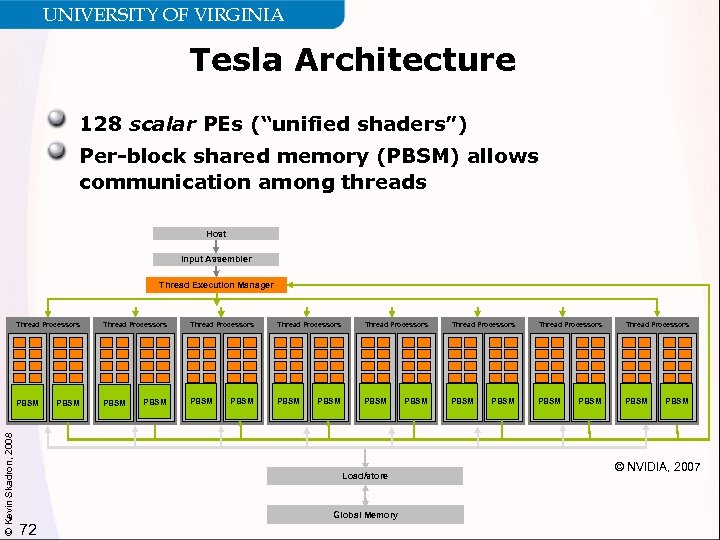

UNIVERSITY OF VIRGINIA Tesla Architecture 128 scalar PEs (“unified shaders”) Per-block shared memory (PBSM) allows communication among threads Host Input Assembler Thread Execution Manager Thread Processors PBSM © Kevin Skadron, 2008 Thread Processors PBSM Thread Processors Thread Processors PBSM PBSM Load/store Global Memory 72 PBSM © NVIDIA, 2007

UNIVERSITY OF VIRGINIA Tesla Architecture 128 scalar PEs (“unified shaders”) Per-block shared memory (PBSM) allows communication among threads Host Input Assembler Thread Execution Manager Thread Processors PBSM © Kevin Skadron, 2008 Thread Processors PBSM Thread Processors Thread Processors PBSM PBSM Load/store Global Memory 72 PBSM © NVIDIA, 2007

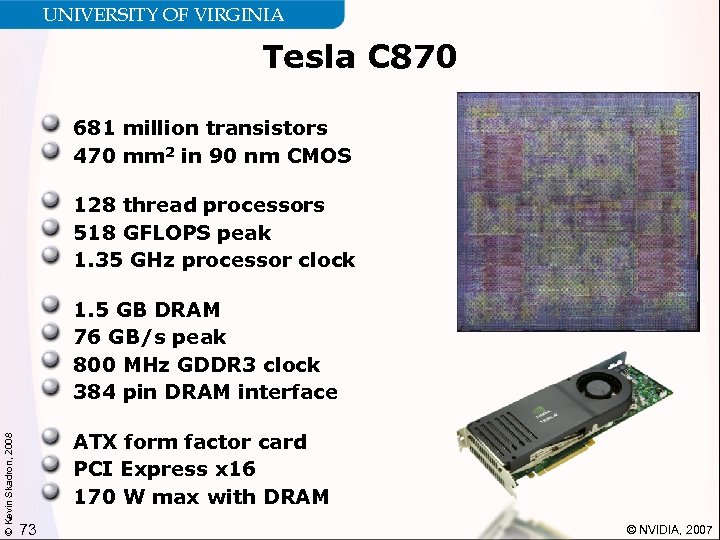

UNIVERSITY OF VIRGINIA Tesla C 870 681 million transistors 470 mm 2 in 90 nm CMOS 128 thread processors 518 GFLOPS peak 1. 35 GHz processor clock © Kevin Skadron, 2008 1. 5 GB DRAM 76 GB/s peak 800 MHz GDDR 3 clock 384 pin DRAM interface ATX form factor card PCI Express x 16 170 W max with DRAM 73 © NVIDIA, 2007

UNIVERSITY OF VIRGINIA Tesla C 870 681 million transistors 470 mm 2 in 90 nm CMOS 128 thread processors 518 GFLOPS peak 1. 35 GHz processor clock © Kevin Skadron, 2008 1. 5 GB DRAM 76 GB/s peak 800 MHz GDDR 3 clock 384 pin DRAM interface ATX form factor card PCI Express x 16 170 W max with DRAM 73 © NVIDIA, 2007

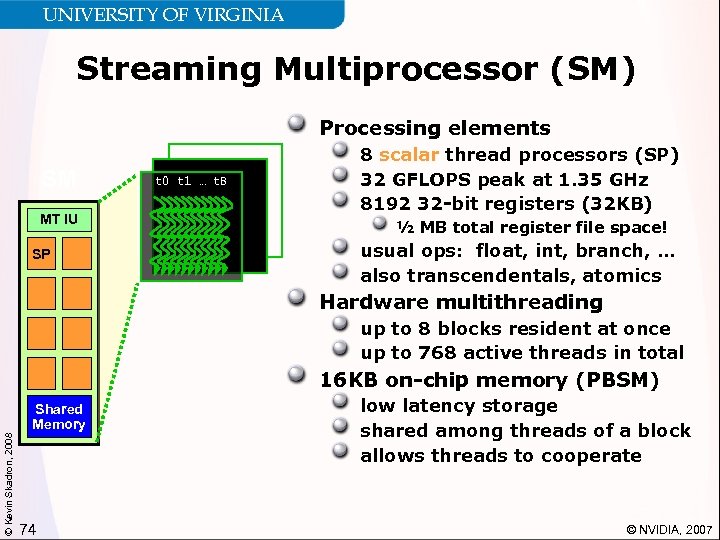

UNIVERSITY OF VIRGINIA Streaming Multiprocessor (SM) Processing elements SM MT IU SP t 0 t 1 … t. B 8 scalar thread processors (SP) 32 GFLOPS peak at 1. 35 GHz 8192 32 -bit registers (32 KB) ½ MB total register file space! usual ops: float, int, branch, … also transcendentals, atomics Hardware multithreading up to 8 blocks resident at once up to 768 active threads in total 16 KB on-chip memory (PBSM) © Kevin Skadron, 2008 Shared Memory 74 low latency storage shared among threads of a block allows threads to cooperate © NVIDIA, 2007

UNIVERSITY OF VIRGINIA Streaming Multiprocessor (SM) Processing elements SM MT IU SP t 0 t 1 … t. B 8 scalar thread processors (SP) 32 GFLOPS peak at 1. 35 GHz 8192 32 -bit registers (32 KB) ½ MB total register file space! usual ops: float, int, branch, … also transcendentals, atomics Hardware multithreading up to 8 blocks resident at once up to 768 active threads in total 16 KB on-chip memory (PBSM) © Kevin Skadron, 2008 Shared Memory 74 low latency storage shared among threads of a block allows threads to cooperate © NVIDIA, 2007

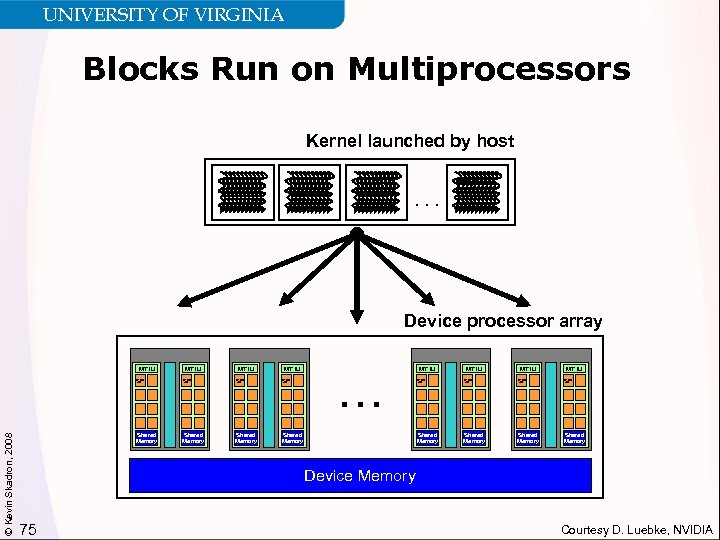

UNIVERSITY OF VIRGINIA Blocks Run on Multiprocessors Kernel launched by host. . . Device processor array MT IU © Kevin Skadron, 2008 SP SP Shared Memory MT IU SP SP SP Shared Memory . . . SP Shared Memory Device Memory 75 Courtesy D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA Blocks Run on Multiprocessors Kernel launched by host. . . Device processor array MT IU © Kevin Skadron, 2008 SP SP Shared Memory MT IU SP SP SP Shared Memory . . . SP Shared Memory Device Memory 75 Courtesy D. Luebke, NVIDIA

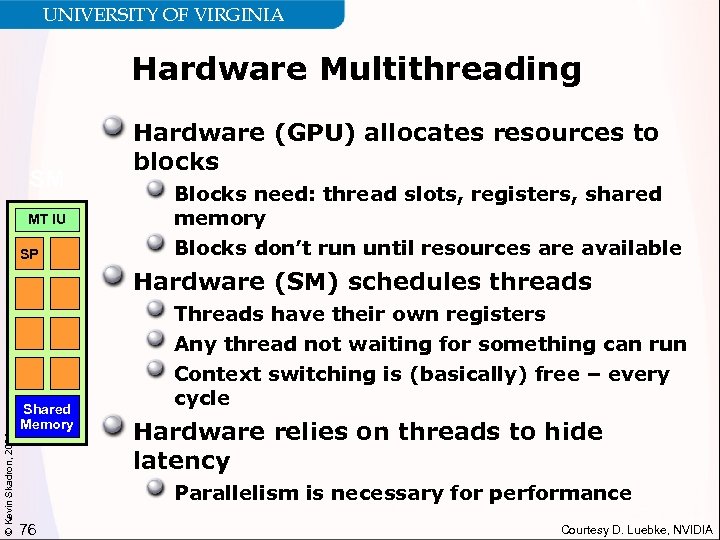

UNIVERSITY OF VIRGINIA Hardware Multithreading SM MT IU SP Hardware (GPU) allocates resources to blocks Blocks need: thread slots, registers, shared memory Blocks don’t run until resources are available Hardware (SM) schedules threads © Kevin Skadron, 2008 Shared Memory Threads have their own registers Any thread not waiting for something can run Context switching is (basically) free – every cycle Hardware relies on threads to hide latency Parallelism is necessary for performance 76 Courtesy D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA Hardware Multithreading SM MT IU SP Hardware (GPU) allocates resources to blocks Blocks need: thread slots, registers, shared memory Blocks don’t run until resources are available Hardware (SM) schedules threads © Kevin Skadron, 2008 Shared Memory Threads have their own registers Any thread not waiting for something can run Context switching is (basically) free – every cycle Hardware relies on threads to hide latency Parallelism is necessary for performance 76 Courtesy D. Luebke, NVIDIA

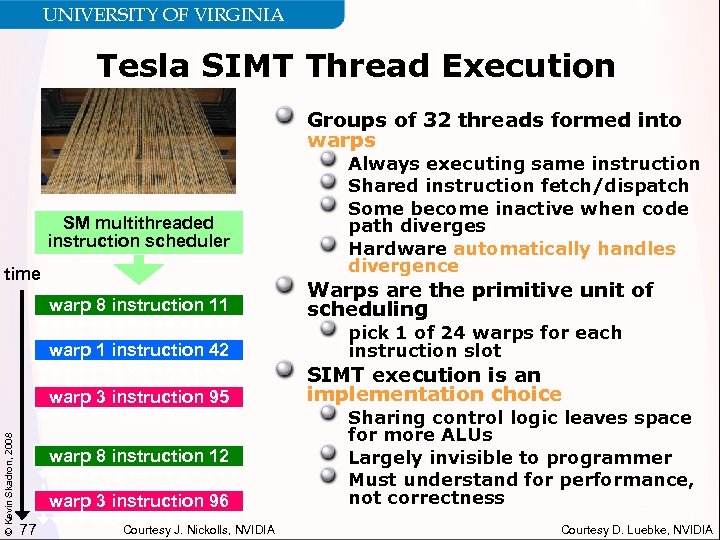

UNIVERSITY OF VIRGINIA Tesla SIMT Thread Execution Groups of 32 threads formed into warps SM multithreaded instruction scheduler time Always executing same instruction Shared instruction fetch/dispatch Some become inactive when code path diverges Hardware automatically handles divergence Warps are the primitive unit of scheduling warp 1 instruction 42 © Kevin Skadron, 2008 warp 8 instruction 11 pick 1 of 24 warps for each instruction slot warp 3 instruction 95. . . warp 8 instruction 12 warp 3 instruction 96 77 Courtesy J. Nickolls, NVIDIA SIMT execution is an implementation choice Sharing control logic leaves space for more ALUs Largely invisible to programmer Must understand for performance, not correctness Courtesy D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA Tesla SIMT Thread Execution Groups of 32 threads formed into warps SM multithreaded instruction scheduler time Always executing same instruction Shared instruction fetch/dispatch Some become inactive when code path diverges Hardware automatically handles divergence Warps are the primitive unit of scheduling warp 1 instruction 42 © Kevin Skadron, 2008 warp 8 instruction 11 pick 1 of 24 warps for each instruction slot warp 3 instruction 95. . . warp 8 instruction 12 warp 3 instruction 96 77 Courtesy J. Nickolls, NVIDIA SIMT execution is an implementation choice Sharing control logic leaves space for more ALUs Largely invisible to programmer Must understand for performance, not correctness Courtesy D. Luebke, NVIDIA

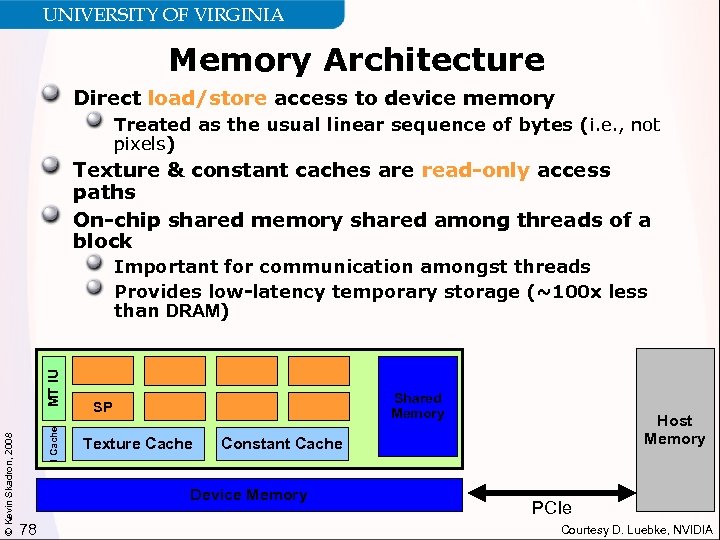

UNIVERSITY OF VIRGINIA Memory Architecture Direct load/store access to device memory Treated as the usual linear sequence of bytes (i. e. , not pixels) Texture & constant caches are read-only access paths On-chip shared memory shared among threads of a block I Cache © Kevin Skadron, 2008 MT IU Important for communication amongst threads Provides low-latency temporary storage (~100 x less than DRAM) Shared Memory SP Texture Cache Constant Cache Device Memory 78 Host Memory PCIe Courtesy D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA Memory Architecture Direct load/store access to device memory Treated as the usual linear sequence of bytes (i. e. , not pixels) Texture & constant caches are read-only access paths On-chip shared memory shared among threads of a block I Cache © Kevin Skadron, 2008 MT IU Important for communication amongst threads Provides low-latency temporary storage (~100 x less than DRAM) Shared Memory SP Texture Cache Constant Cache Device Memory 78 Host Memory PCIe Courtesy D. Luebke, NVIDIA

UNIVERSITY OF VIRGINIA Summary So Far Key Tesla Architecture Features © Kevin Skadron, 2008 Scalar ISA 32 -wide SIMT Deeply multithreaded Per-block shared memory Designed for scalability 79

UNIVERSITY OF VIRGINIA Summary So Far Key Tesla Architecture Features © Kevin Skadron, 2008 Scalar ISA 32 -wide SIMT Deeply multithreaded Per-block shared memory Designed for scalability 79

UNIVERSITY OF VIRGINIA Conclusions ILP wall + power wall multicore Power wall will limit multicore scaling too Coprocessors offer compelling performance and energy-efficiency benefits Architecture of a heterogeneous system—open question Programmability is the key challenge for heterogeneous architectures CUDA offers interesting lessons on generic abstractions, scalability © Kevin Skadron, 2008 GPUs are an interesting platform for research on parallelism, heterogeneity Manycore architecture Facilitate parallelism research at scale Can be placed at various positions in system architecture 80

UNIVERSITY OF VIRGINIA Conclusions ILP wall + power wall multicore Power wall will limit multicore scaling too Coprocessors offer compelling performance and energy-efficiency benefits Architecture of a heterogeneous system—open question Programmability is the key challenge for heterogeneous architectures CUDA offers interesting lessons on generic abstractions, scalability © Kevin Skadron, 2008 GPUs are an interesting platform for research on parallelism, heterogeneity Manycore architecture Facilitate parallelism research at scale Can be placed at various positions in system architecture 80

UNIVERSITY OF VIRGINIA Thank You Questions? Contact me: © Kevin Skadron, 2008 skadron@cs. virginia. edu http: //www. cs. virginia. edu/~skadron 81

UNIVERSITY OF VIRGINIA Thank You Questions? Contact me: © Kevin Skadron, 2008 skadron@cs. virginia. edu http: //www. cs. virginia. edu/~skadron 81