- Number of slides: 18

Towards Autonomic Hosting of Multi-tier Internet Services

Swaminathan Sivasubramanian, Guillaume Pierre and Maarten van Steen

Vrije Universiteit, Amsterdam, The Netherlands

Hosting Large-Scale Internet Services

- Large-scale e-commerce enterprises use complex software systems
  - Sites are built of numerous applications called services.
  - A request to amazon.com leads to requests to hundreds of services [Vogels, ACM Queue, 2006].
- Each site has an SLA (latency, availability targets)
  - Global optimization-based hosting is intractable
  - Convert the global SLA to per-service SLAs
  - Host each service scalably.
- Problem in focus: efficient hosting of an Internet service.

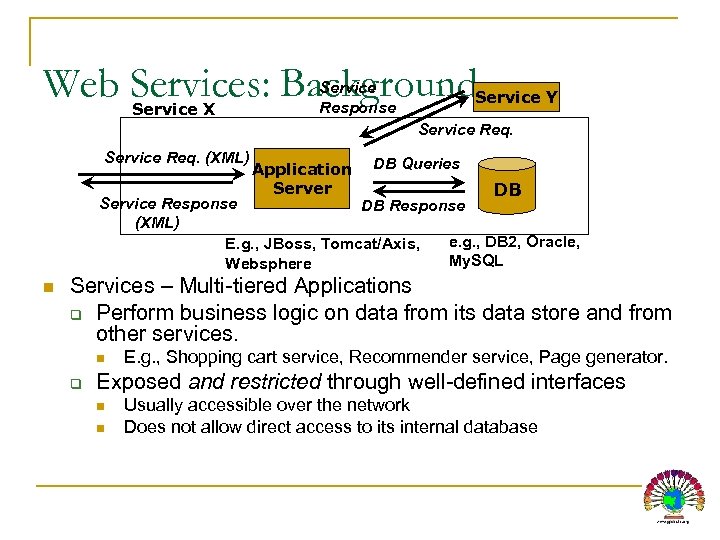

Web Services: Background

[Diagram: Service X sends a service request (XML) to the application server (e.g., JBoss, Tomcat/Axis, WebSphere) and receives a service response (XML). The application server sends DB queries to the database (e.g., DB2, Oracle, MySQL) and receives DB responses. Service Y returns service responses.]

- Services: multi-tiered applications
  - Perform business logic on data from its data store and from other services.
    - E.g., shopping cart service, recommender service, page generator.
  - Exposed and restricted through well-defined interfaces
    - Usually accessible over the network
    - Does not allow direct access to its internal database

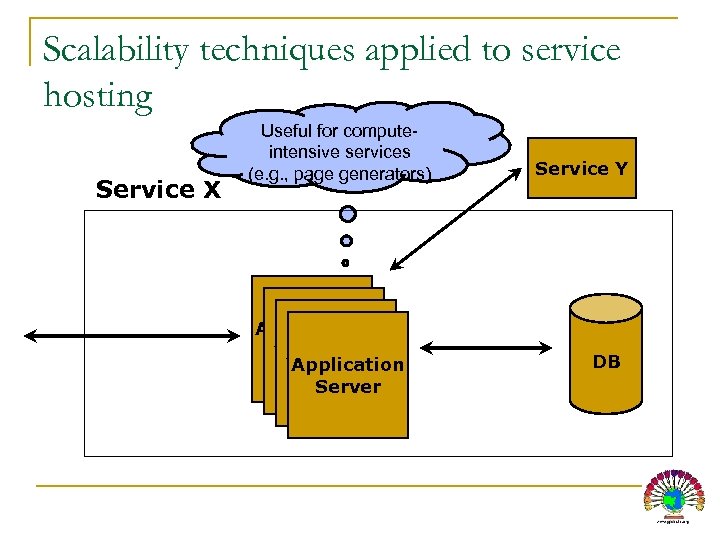

Scalability techniques applied to service hosting

- Useful for compute-intensive services (e.g., page generators)

[Diagram labels: Service X, Application Server, Service Y, DB]

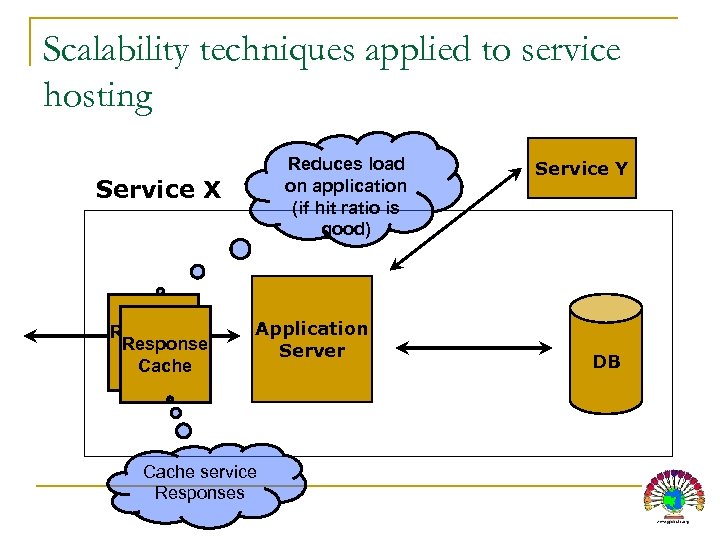

Scalability techniques applied to service hosting

- Response cache: cache service responses
- Reduces load on the application (if hit ratio is good)

[Diagram labels: Service X, Response Cache, Application Server, Service Y, DB]

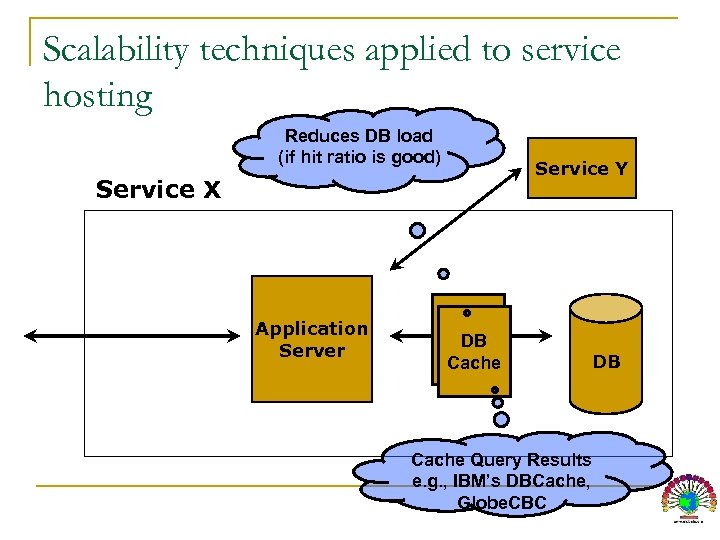

Scalability techniques applied to service hosting

- DB cache: cache query results (e.g., IBM's DBCache, GlobeCBC)
- Reduces DB load (if hit ratio is good)

[Diagram labels: Service X, Application Server, DB Cache, Service Y, DB]

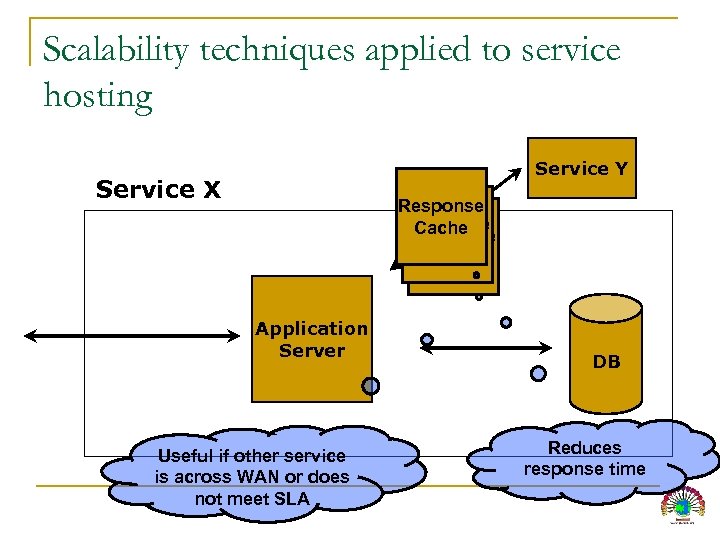

Scalability techniques applied to service hosting

- Useful if the other service is across a WAN or does not meet its SLA
- Reduces response time

[Diagram labels: Service Y, Service X, Response Cache, Application Server, DB]

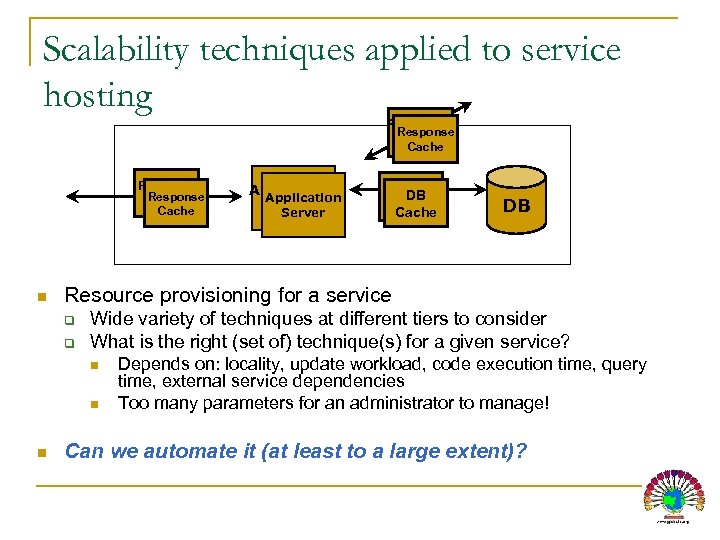

Scalability techniques applied to service hosting

[Diagram labels: Response Cache, Application Server, DB Cache, DB]

- Resource provisioning for a service
  - Wide variety of techniques at different tiers to consider
  - What is the right (set of) technique(s) for a given service?
    - Depends on: locality, update workload, code execution time, query time, external service dependencies
    - Too many parameters for an administrator to manage!
    - Can we automate it (at least to a large extent)?

Autonomic Hosting: Initial Objective

“To find the minimum set of resources to host a given service such that its end-to-end latency is maintained between $[\mathit{Lat}_{min}, \mathit{Lat}_{max}]$.”

We pose it as: “To find the minimum number of resources (servers) to provision in each tier for a service to meet its SLA.”

Proposed Approach

- Get a model of end-to-end latency
  - $\mathit{Lat} = f(hr_{server}, t_{App}, hr_{cli}, t_{db}, hr_{dbcache}, \mathit{ReqRate})$
  - hr = hit ratio, t = execution time
  - f: latency modeling function
    - Little's law based network of queues
    - MVA (mean value analysis) on network of queues
    - Or other models?

Proposed Approach (contd.)

- Fit a service to the model
  - Parameters such as execution time can be obtained
    - Log analysis, server instrumentation
  - Estimating hr at different tiers is harder
    - Request patterns and update patterns vary
    - Fluid-based cache models assume infinite cache memory
    - Need a technique that predicts hr for a given cache size

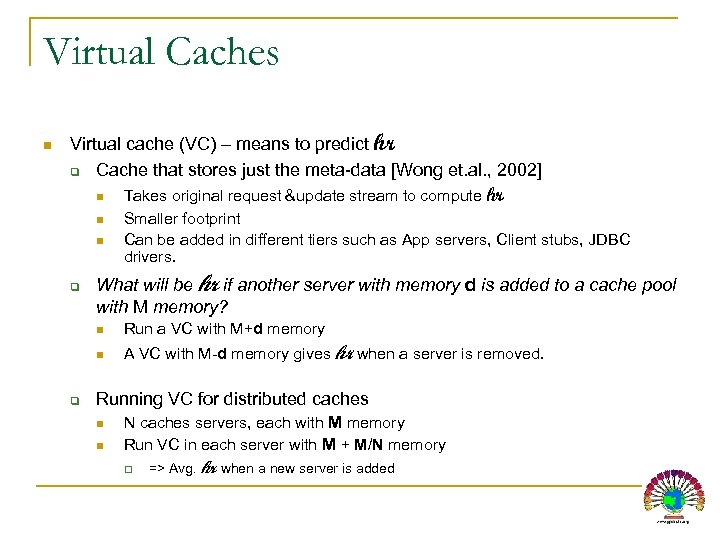

Virtual Caches

- Virtual cache (VC): means to predict hr
  - Cache that stores just the meta-data [Wong et al., 2002]
    - Takes the original request & update stream to compute hr
    - Smaller footprint
    - Can be added in different tiers such as app servers, client stubs, JDBC drivers.
  - What will hr be if another server with memory d is added to a cache pool with M memory?
    - Run a VC with M+d memory
    - A VC with M-d memory gives hr when a server is removed.
  - Running VC for distributed caches
    - N cache servers, each with M memory
    - Run a VC in each server with M + M/N memory
      - => Avg. hr when a new server is added
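The virtual cache idea can be illustrated with a short sketch. The slides do not specify a replacement policy or how memory is measured, so this example assumes LRU replacement, counts capacity in entries rather than bytes, and treats an update as an invalidation of the cached entry. The class and function names are hypothetical.

```python
from collections import OrderedDict


class VirtualCache:
    """Tracks only keys (meta-data), never the cached objects themselves."""

    def __init__(self, capacity):
        self.capacity = capacity      # assumed unit: number of entries
        self.keys = OrderedDict()     # insertion order doubles as LRU order
        self.hits = 0
        self.requests = 0

    def read(self, key):
        self.requests += 1
        if key in self.keys:
            self.hits += 1
            self.keys.move_to_end(key)          # mark as most recently used
        else:
            self.keys[key] = None
            if len(self.keys) > self.capacity:
                self.keys.popitem(last=False)   # evict least recently used

    def update(self, key):
        # Assumption: an update invalidates the cached copy.
        self.keys.pop(key, None)

    def hit_ratio(self):
        return self.hits / self.requests if self.requests else 0.0


def predict_hit_ratios(trace, capacities):
    """Replay one request/update stream through a VC per candidate capacity.

    trace      -- iterable of ("read" | "update", key) pairs
    capacities -- candidate cache sizes, e.g. M - d, M, M + d
    """
    caches = {c: VirtualCache(c) for c in capacities}
    for op, key in trace:
        for vc in caches.values():
            if op == "read":
                vc.read(key)
            else:
                vc.update(key)
    return {c: vc.hit_ratio() for c, vc in caches.items()}
```

Running `predict_hit_ratios(trace, [M - d, M, M + d])` on the live stream gives the hit ratio to expect after removing or adding a server, without storing any cached data.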

Resource Provisioning

- To provision a service
  - Obtain (hr & t) values from different tiers of the service
  - Estimate latency for different resource configurations
    - Find the best configuration that meets its latency SLA
  - For a running service
    - If the SLA is violated, find the best tier to add a server
    - Switching time?
      - Addition of servers takes time (e.g., cache warm-up, reconfiguration)
      - Right now, assumed negligible
      - Need to investigate prediction algorithms
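The "find the best tier to add a server" step can be sketched as a greedy loop. This is an illustration, not the authors' algorithm: it assumes a latency estimator is available as a function of the per-tier server counts, and it adds one server at a time to whichever tier lowers the estimate most. A greedy search is not guaranteed to find the true minimum for every latency model. `provision`, `toy_latency` and `max_servers` are hypothetical names, and the toy model's numbers are made up.

```python
def provision(estimate_latency, tiers, lat_max, max_servers=64):
    """Greedily add servers until the estimated latency meets the SLA bound.

    estimate_latency -- callable mapping {tier: server_count} to a latency
    tiers            -- list of tier names
    lat_max          -- latency bound from the SLA (same unit as the estimate)
    """
    config = {tier: 1 for tier in tiers}
    while estimate_latency(config) > lat_max:
        if sum(config.values()) >= max_servers:
            raise RuntimeError("SLA not reachable within the server budget")
        # Evaluate one extra server in each tier and keep the most helpful one.
        best_tier = min(
            tiers,
            key=lambda t: estimate_latency({**config, t: config[t] + 1}),
        )
        config[best_tier] += 1
    return config


def toy_latency(config):
    """Hypothetical model (milliseconds): tier latency shrinks with server count."""
    base_ms = {"app": 300.0, "db": 100.0}
    return sum(base_ms[tier] / count for tier, count in config.items())


if __name__ == "__main__":
    print(provision(toy_latency, ["app", "db"], lat_max=160.0))
```

In practice the estimator would be a queueing model fed with measured hit ratios and execution times. Switching time is ignored here, as on the slide.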

Current Status & Limitations

- Goal: to build an autonomic hosting platform for multi-tier Internet applications
  - Multi-queue model w/ online-cache simulations has been a good start
  - Prototyped with Apache, Tomcat/Axis, MySQL
    - Integrating with our CDN, Globule
    - Experiments with TPC-App -> encouraging
    - Experimented with other services
- Current work
  - Refining queueing models for accurate latency estimation
  - Investigating availability issues

Discussion Points

- Utilization-based SLAs
- Other prediction models
  - Does cache behavior vary with req. rate?
- Failures
  - How to provision for availability targets?
- Multiple service classes

Availability-aware provisioning

- To provision for a required uptime
  - Must consider MTTF and MTTR for servers in each tier
  - Caches have a different MTTR than app servers
- How to provision?
  - Strategy 1
    - Perform latency-based provisioning.
    - For each tier, add resources to reach the target uptime
  - Strategy 2
    - Formulate as a dual-constrained optimization problem.
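Strategy 1 can be sketched under some simplifying assumptions that are not stated on the slide: server failures within a tier are independent, a single server's steady-state availability is MTTF / (MTTF + MTTR), and a tier counts as "up" when at least the k servers required by latency-based provisioning are running. All function names are hypothetical.

```python
from math import comb


def server_availability(mttf, mttr):
    """Steady-state availability of one server (MTTF and MTTR in the same unit)."""
    return mttf / (mttf + mttr)


def tier_availability(n, k, a):
    """Probability that at least k of n independent servers are up (binomial tail)."""
    return sum(comb(n, i) * a**i * (1 - a) ** (n - i) for i in range(k, n + 1))


def servers_for_uptime(k, mttf, mttr, target, max_extra=100):
    """Smallest tier size n >= k whose availability reaches the target uptime.

    k      -- servers needed to meet the latency SLA (from latency-based provisioning)
    target -- required tier availability, e.g. 0.999
    """
    a = server_availability(mttf, mttr)
    for n in range(k, k + max_extra + 1):
        if tier_availability(n, k, a) >= target:
            return n
    raise ValueError("target uptime not reachable with max_extra spare servers")
```

Because caches and application servers have different MTTR values, the function would be called once per tier with that tier's own figures. For instance, `servers_for_uptime(2, mttf=99, mttr=1, target=0.999)` asks how many servers a tier needs when two must be up and each is available 99% of the time.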

Dynamic Provisioning

- For handling dynamic load changes
  - Need to predict workload changes
    - Allows us to be prepared earlier
      - Adding/reconfiguring servers takes time
      - Prediction window should be greater than server addition time
- Load prediction is relatively well understood
  - Prediction of temporal effects?
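The relationship between the prediction window and the server addition time can be illustrated with a deliberately simple predictor. The slides do not name a prediction method, so the least-squares linear trend below is only an assumed stand-in, and `predict_load`, `needs_scale_up`, `capacity` and `addition_time` are hypothetical names.

```python
def predict_load(samples, horizon):
    """Extrapolate the request rate `horizon` time units past the last sample.

    samples -- list of (time, request_rate) pairs from recent monitoring
    Uses an ordinary least-squares line fit (an assumed, simplistic predictor).
    """
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_r = sum(r for _, r in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return mean_r  # a single point in time: no trend can be estimated
    slope = sum((t - mean_t) * (r - mean_r) for t, r in samples) / var_t
    intercept = mean_r - slope * mean_t
    last_t = max(t for t, _ in samples)
    return intercept + slope * (last_t + horizon)


def needs_scale_up(samples, capacity, addition_time):
    """True if the load predicted for when a new server could be ready exceeds capacity.

    The prediction horizon is set to the server addition time, so the decision
    is made early enough for the new server to be usable when it is needed.
    """
    return predict_load(samples, addition_time) > capacity
```

With samples `[(0, 100), (10, 120), (20, 140)]` and an addition time of 30, the decision is based on the load expected at time 50 rather than the load observed at time 20.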

Thank You!

More info: http://www.globule.org

Questions?
