25792ae2e3a5a05094a0a41c5ee8280b.ppt

- Number of slides: 33

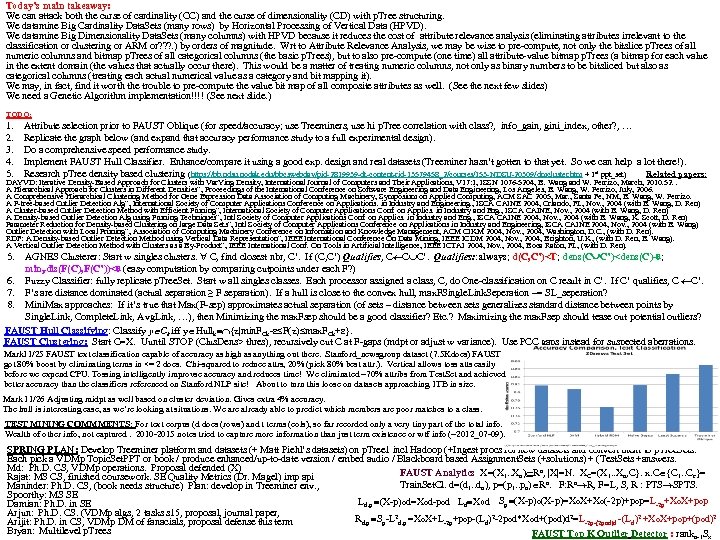

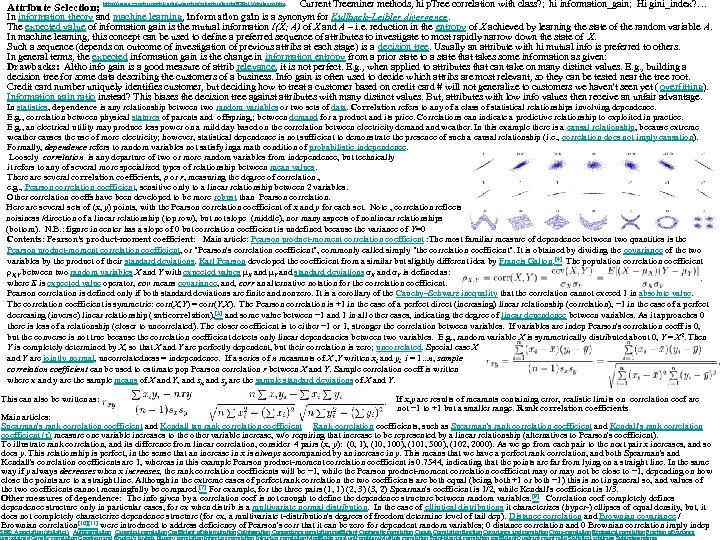

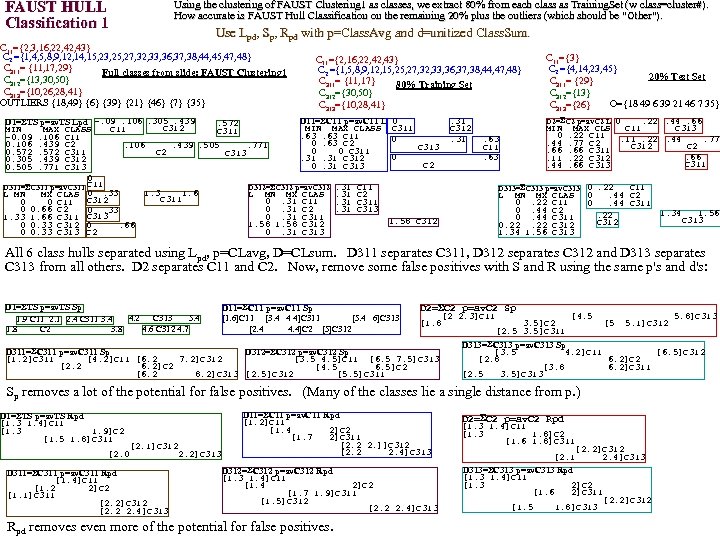

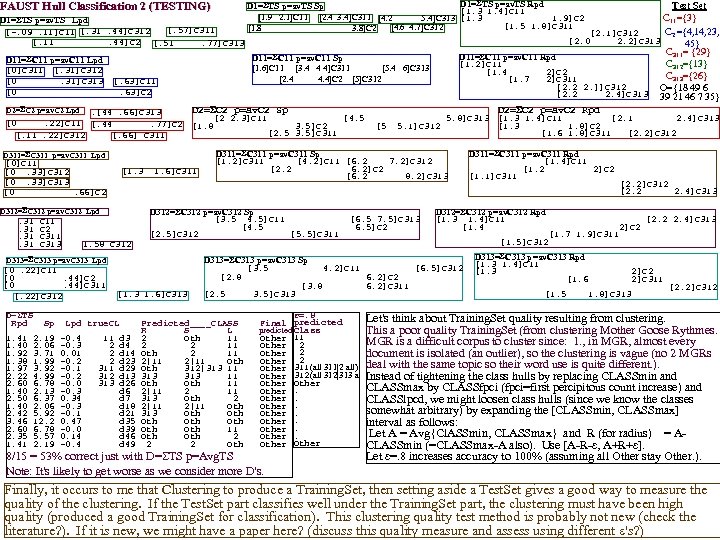

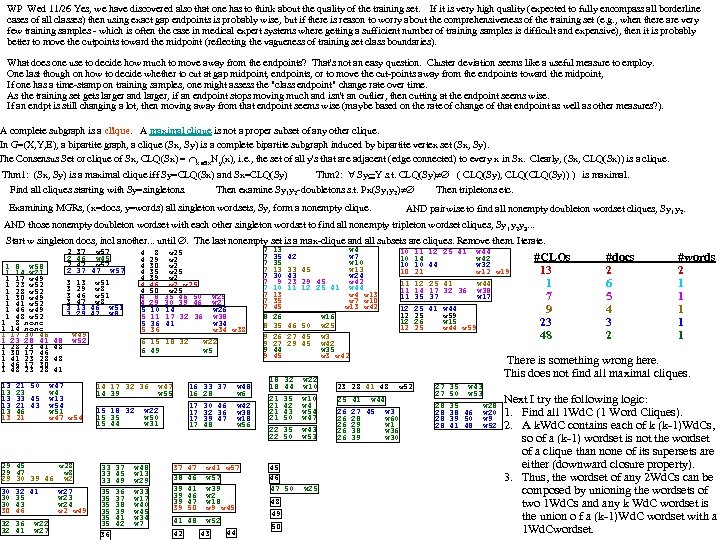

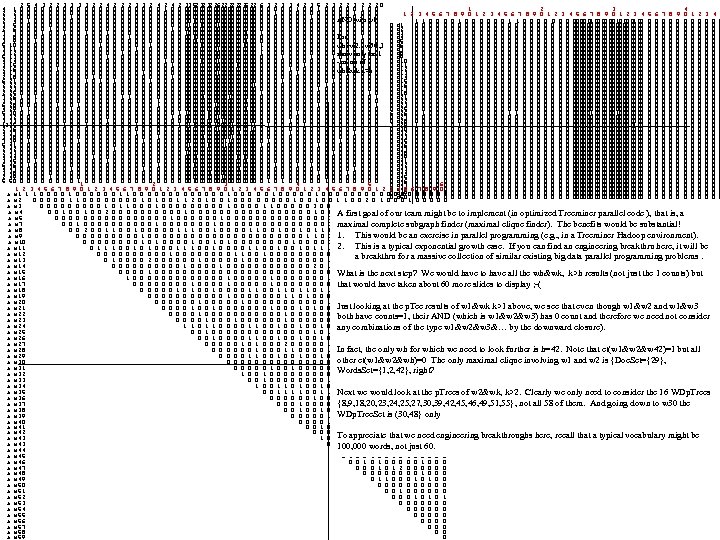

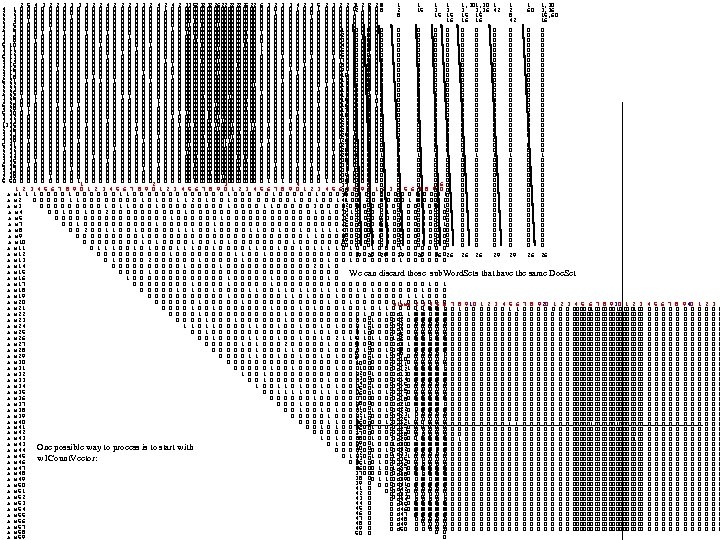

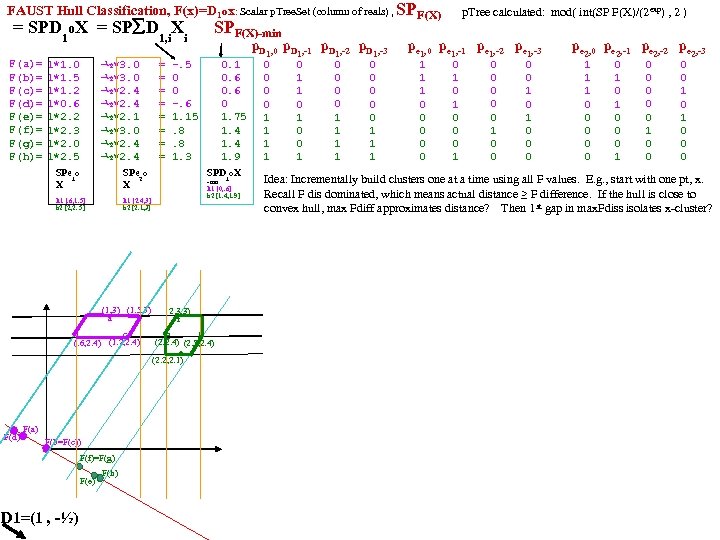

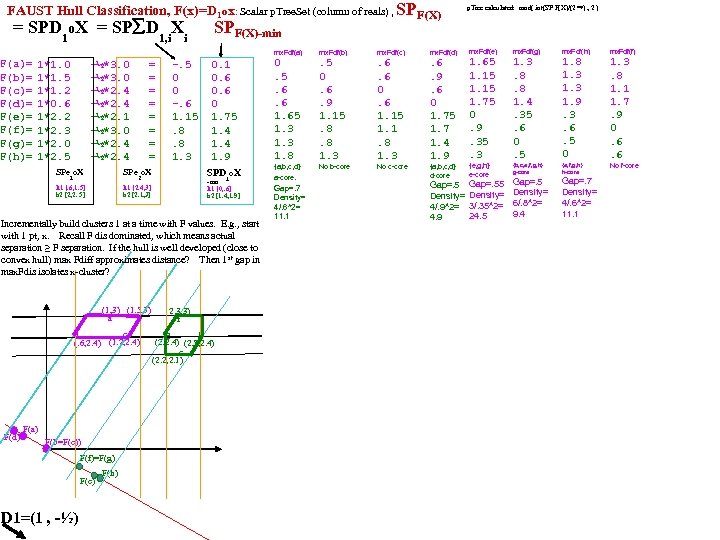

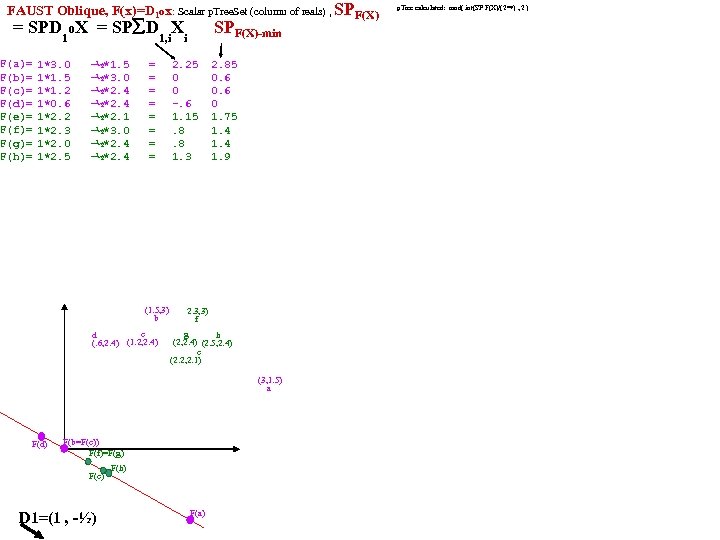

Today's main takeaway: we can attack both the curse of cardinality (CC) and the curse of dimensionality (CD) with pTree structuring. We datamine Big Cardinality Data Sets (many rows) by Horizontal Processing of Vertical Data (HPVD). We datamine Big Dimensionality Data Sets (many columns) with HPVD because it reduces the cost of attribute relevance analysis (eliminating attributes irrelevant to classification, clustering, ARM, etc.) by orders of magnitude. With respect to attribute relevance analysis, we may be wise to pre-compute not only the bitslice pTrees of all numeric columns and the bitmap pTrees of all categorical columns (the basic pTrees), but also, one time, all attribute-value bitmap pTrees: a bitmap for each value in the extent domain (the values that actually occur there). This would mean treating numeric columns not only as binary numbers to be bitsliced but also as categorical columns (treating each actual numerical value as a category and bitmapping it). We may, in fact, find it worth the trouble to pre-compute the value bitmaps of all composite attributes as well (see the next few slides). We need a Genetic Algorithm implementation!!!! (See next slide.)

TODO:
1. Attribute selection prior to FAUST Oblique (for speed/accuracy): use Treeminer's methods, high pTree correlation with the class?, info_gain, gini_index, other?, …
2. Replicate the graph below (and expand that accuracy performance study to a full experimental design).
3. Do a comprehensive speed performance study.
4. Implement the FAUST Hull Classifier. Enhance/compare it using a good experimental design and real datasets (Treeminer hasn't gotten to that yet, so we can help a lot there!).
5. Research pTree density-based clustering (https://bb.ndsu.nodak.edu/bbcswebdav/pid-2819939-dt-content-rid-13579458_2/courses/153-NDSU-20309/dmcluster.htm + 1st ppt_set).

Related papers:
- "DAYVD: Iterative Density-Based Approach for Clusters with Varying Density", International Journal of Computers and Their Applications, V17:1, ISSN 1076-5204, B. Wang and W. Perrizo, March 2010.
- "A Hierarchical Approach for Clusters in Different Densities", Proceedings of the International Conference on Software Engineering and Data Engineering, Los Angeles, B. Wang, W. Perrizo, July 2006.
- "A Comprehensive Hierarchical Clustering Method for Gene Expression Data", Association for Computing Machinery Symposium on Applied Computing, ACM SAC 2005, Santa Fe, NM, March 2005, B. Wang, W. Perrizo.
- "A P-tree-based Outlier Detection Algorithm", International Society of Computer Applications Conference on Applications in Industry and Engineering, ISCA CAINE 2004, Orlando, FL, Nov. 2004 (with B. Wang, D. Ren).
- "A Cluster-based Outlier Detection Method with Efficient Pruning", International Society of Computer Applications Conference on Applications in Industry and Engineering, ISCA CAINE 2004, Nov. 2004 (with B. Wang, D. Ren).
- "A Density-based Outlier Detection Algorithm using Pruning Techniques", International Society of Computer Applications Conference on Applications in Industry and Engineering, ISCA CAINE 2004, Nov. 2004 (with B. Wang, K. Scott, D. Ren).
- "Parameter Reduction for Density-based Clustering on Large Data Sets", International Society of Computer Applications Conference on Applications in Industry and Engineering, ISCA CAINE 2004, Nov. 2004 (with B. Wang).
- "Outlier Detection with Local Pruning", Association for Computing Machinery Conference on Information and Knowledge Management, ACM CIKM 2004, Nov. 2004, Washington, D.C. (with D. Ren).
- "RDF: A Density-based Outlier Detection Method using Vertical Data Representation", IEEE International Conference on Data Mining, IEEE ICDM 2004, Nov. 2004, Brighton, U.K. (with D. Ren, B. Wang).
- "A Vertical Outlier Detection Method with Clusters as a By-Product", IEEE International Conference on Tools with Artificial Intelligence, IEEE ICTAI 2004, Nov. 2004, Boca Raton, FL (with D. Ren).

5. AGNES Clusterer: Start with singleton clusters.
For each cluster C, find its closest neighbor C'. If (C, C') qualifies, C ← C ∪ C'. "Qualifies" can mean: always; d(C, C') < T; dens(C ∪ C') < dens(C) − ε; or minFdis(F(C), F(C')) < ε (an easy computation by comparing cutpoints under each F?).
6. Fuzzy Classifier: fully replicate the pTreeSet. Start with all singleton classes. Each processor is assigned a class C and does one-class classification on C, resulting in C'. If C' qualifies, C ← C'.
7. The F's are distance dominated (actual separation ≥ F separation). If a hull is close to the convex hull, is maxFSingleLinkSeparation ≈ SL_separation?
8. MiniMax approaches: if it's true that max(F-sep) approximates the actual separation of sets (distance between sets generalizes the standard distance between points by SingleLink, CompleteLink, AvgLink, …), then minimizing the max Fsep should give a good classifier? Etc.? Maximizing the max Fsep should tease out potential outliers?

FAUST Hull Classifying: classify y ∈ C_k iff y ∈ Hull_k ≡ {z | minF(C_k) − ε ≤ F(z) ≤ maxF(C_k) + ε}.

FAUST Clustering: start with C = X. Until STOP (ClusDens > thres), recursively cut C at F-gaps (at the midpoint, or adjusted with the variance). Use PCC gaps instead for suspected aberrations.

Mark 11/25: FAUST text classification is capable of accuracy as high as anything out there. Stanford_newsgroup dataset (7.5K docs): FAUST got an 80% boost by eliminating terms that occur in <= 2 docs. Chi-squared to reduce attributes, 20% (pick the 80% best attributes). Vertical data allows us to toss attributes easily before we expend CPU. Tossing intelligently improves accuracy and reduces time! We eliminated ~70% of the attributes from the TestSet and achieved better accuracy than the classifiers referenced on the Stanford NLP site! About to turn this loose on datasets approaching 1 TB in size.

Mark 11/26: Adjusting the midpoint as well, based on cluster deviation, gives an extra 4% accuracy. The hull is an interesting case, as we're looking at situations. We are already able to predict which members are poor matches to a class.
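The 11/25 note's point that vertical data lets us toss attributes before spending CPU can be sketched as follows. This is a minimal illustration, assuming each term column is a Python int bitmap standing in for a term pTree (bit i set when document i contains the term); the function names and the toy corpus are hypothetical, and this is not Treeminer's implementation. Pruning reads only each column's root count and never scans document rows.

```python
from typing import Dict, List


def build_term_bitmaps(docs: List[List[str]]) -> Dict[str, int]:
    """Build one bitmap per term: bit i is set when document i contains the term.

    A Python int stands in for a term pTree here (an assumption for illustration).
    """
    bitmaps: Dict[str, int] = {}
    for i, tokens in enumerate(docs):
        for term in set(tokens):
            bitmaps[term] = bitmaps.get(term, 0) | (1 << i)
    return bitmaps


def root_count(bitmap: int) -> int:
    """Root count of a bitmap: the number of documents containing the term."""
    return bin(bitmap).count("1")


def prune_rare_terms(bitmaps: Dict[str, int], min_docs: int = 3) -> Dict[str, int]:
    """Keep only term columns that occur in at least min_docs documents.

    min_docs=3 drops terms found in <= 2 docs. Only column root counts are read;
    no document row is scanned during pruning.
    """
    return {term: bm for term, bm in bitmaps.items() if root_count(bm) >= min_docs}


docs = [
    ["ptree", "vertical", "data"],
    ["ptree", "data"],
    ["ptree", "gap"],
    ["data", "rare"],
]
kept = prune_rare_terms(build_term_bitmaps(docs))
print(sorted(kept))  # ['data', 'ptree']
```

Because each surviving column is still a bitmap, a later classifier can work on `kept` directly without re-reading the corpus.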
TEXT MINING COMMENTS: For a text corpus (d docs (rows) and t terms (cols)), we have so far recorded only a very tiny part of the total info; a wealth of other info is not captured. The 2010-2015 notes tried to capture more information than just term existence or wtf info (~2012_07-09).

SPRING PLAN: Develop the Treeminer platform and datasets (+ Matt Piehl's datasets) on pTree1, incl. Hadoop (+ ingest procs for new datasets, converting them to pTreeSets). Each student picks a VDMp TopicSet PPT or book and produces an enhanced, up-to-date version / embeds audio / Blackboard-based AssignmentSets (+ solutions) + TestSets (+ answers).

- Md: PhD CS, VDMp operations. Proposal defended.
- Rajat: MS CS, finished coursework. SE Quality Metrics (Dr. Magel). imp api
- Maninder: PhD CS (book needs structure). Plan: develop in the Treeminer env.
- Spoorthy: MS SE.
- Damian: PhD in SE.
- Arjun: PhD CS (VDMp algs, 2 tasks s15, proposal, journal paper).
- Arijit: PhD in CS, VDMp DM of financials, proposal defense this term.
- Bryan: Multilevel pTrees.

FAUST Analytics: $X=(X_1,\dots,X_n)\subset\mathbb{R}^n$, $|X|=N$; the training set is $X_C=(X_1,\dots,X_n,C)$ with class labels $x.C\in\{C_1,\dots,C_c\}$. For $d=(d_1,\dots,d_n)$ and $p=(p_1,\dots,p_n)\in\mathbb{R}^n$, the functionals $F:\mathbb{R}^n\to\mathbb{R}$, $F = L, S, R$, map a PTreeSet to a ScalarPTreeSet (PTS → SPTS):

$$L_{d,p}\equiv(X-p)\circ d = X\circ d - p\circ d,\qquad L_d\equiv X\circ d$$

$$S_p\equiv(X-p)\circ(X-p) = X\circ X + X\circ(-2p) + p\circ p = L_{-2p} + X\circ X + p\circ p$$

$$R_{d,p}\equiv S_p - L_{d,p}^2 = X\circ X + L_{-2p} + p\circ p - (L_d)^2 + 2(p\circ d)(X\circ d) - (p\circ d)^2 = L_{-2p+(2p\circ d)d} - (L_d)^2 + X\circ X + p\circ p - (p\circ d)^2$$

FAUST Top-K Outlier Detector: $\mathrm{rank}_{n-1}\,S_x$.
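A small sketch of the L, S and R functionals above, together with the FAUST Hull test from the previous slide. For readability it evaluates one point at a time on plain Python tuples rather than on PTreeSets/SPTSs (an assumption; FAUST itself works column-wise). `R_expanded` checks the expanded form of $R_{d,p}$ term by term; the ε margin (`eps`) and the coordinate-projection functionals in the usage lines are hypothetical illustrations.

```python
from typing import Callable, Dict, List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    """Inner product a o b."""
    return sum(x * y for x, y in zip(a, b))


def L(x: Vector, d: Vector, p: Vector) -> float:
    """L_{d,p}(x) = (x - p) o d = x o d - p o d."""
    return dot(x, d) - dot(p, d)


def S(x: Vector, p: Vector) -> float:
    """S_p(x) = (x - p) o (x - p), the squared distance from x to p."""
    return sum((xi - pi) ** 2 for xi, pi in zip(x, p))


def R(x: Vector, d: Vector, p: Vector) -> float:
    """R_{d,p}(x) = S_p(x) - L_{d,p}(x)^2 (squared distance to the line p + t*d when |d| = 1)."""
    return S(x, p) - L(x, d, p) ** 2


def R_expanded(x: Vector, d: Vector, p: Vector) -> float:
    """Same value via L_{-2p+(2 p o d) d}(x) - (x o d)^2 + x o x + p o p - (p o d)^2."""
    pd = dot(p, d)
    q = [-2 * pi + 2 * pd * di for pi, di in zip(p, d)]
    return dot(x, q) - dot(x, d) ** 2 + dot(x, x) + dot(p, p) - pd ** 2


def hull_classify(y: Vector, training: Dict[str, List[Vector]],
                  functionals: List[Callable[[Vector], float]],
                  eps: float = 0.0) -> List[str]:
    """Labels k with min F(C_k) - eps <= F(y) <= max F(C_k) + eps for every F."""
    labels = []
    for label, members in training.items():
        inside = True
        for F in functionals:
            values = [F(z) for z in members]
            if not (min(values) - eps <= F(y) <= max(values) + eps):
                inside = False
                break
        if inside:
            labels.append(label)
    return labels


x, d, p = (3.0, 4.0), (1.0, 0.0), (1.0, 1.0)
print(R(x, d, p), R_expanded(x, d, p))  # 9.0 9.0

training = {"a": [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)],
            "b": [(5.0, 5.0), (6.0, 5.0), (5.0, 6.0)]}
origin = (0.0, 0.0)
functionals = [lambda z: L(z, (1.0, 0.0), origin), lambda z: L(z, (0.0, 1.0), origin)]
print(hull_classify((0.5, 0.5), training, functionals))  # ['a']
```

A point that falls inside no class's projected interval (for example (3.0, 3.0) here) gets an empty label list, which is one way the hull test can flag poor matches to every class.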

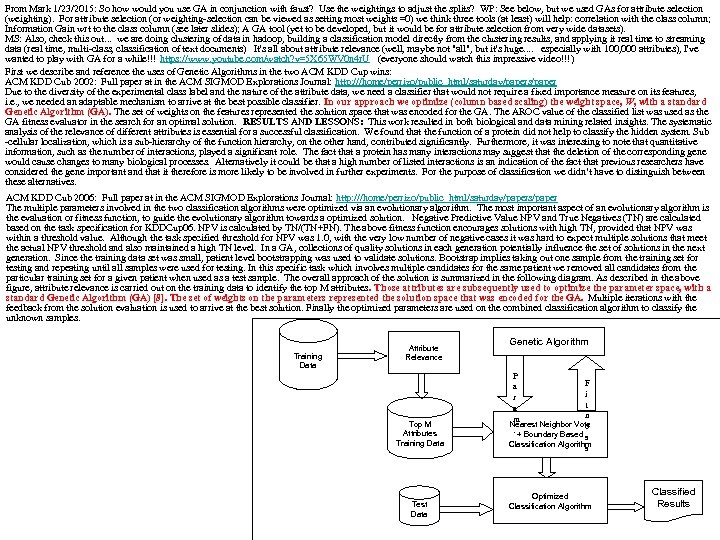

From Mark 1/23/2015: So how would you use GA in conjunction with FAUST? Use the weightings to adjust the splits?

WP: See below, but we used GAs for attribute selection (weighting). For attribute selection (or weighting; selection can be viewed as setting most weights = 0), we think at least three tools will help: correlation with the class column; information gain with respect to the class column (see later slides); and a GA tool (yet to be developed, but it would be for attribute selection from very wide datasets).

MS: Also, check this out... we are doing clustering of data in Hadoop, building a classification model directly from the clustering results, and applying it in real time to streaming data (real-time, multi-class classification of text documents). It's all about attribute relevance (well, maybe not "all", but it's huge, especially with 100,000 attributes). I've wanted to play with GA for a while!!! https://www.youtube.com/watch?v=5X65WV0n4rU (everyone should watch this impressive video!!!)

First we describe and reference the uses of Genetic Algorithms in the two ACM KDD Cup wins.

ACM KDD Cup 2002: Full paper in the ACM SIGKDD Explorations journal: http:///home/perrizo/public_html/saturday/papers/paper. Due to the diversity of the experimental class label and the nature of the attribute data, we needed a classifier that would not require a fixed importance measure on its features, i.e., we needed an adaptable mechanism to arrive at the best possible classifier. In our approach we optimize the weight space W (column-based scaling) with a standard Genetic Algorithm (GA). The set of weights on the features represented the solution space that was encoded for the GA. The AROC value of the classified list was used as the GA fitness evaluator in the search for an optimal solution. RESULTS AND LESSONS: This work resulted in both biological and data-mining-related insights.
The systematic analysis of the relevance of different attributes is essential for successful classification. We found that the function of a protein did not help to classify the hidden system. Subcellular localization, which is a sub-hierarchy of the function hierarchy, on the other hand, contributed significantly. Furthermore, it was interesting to note that quantitative information, such as the number of interactions, played a significant role. The fact that a protein has many interactions may suggest that deleting the corresponding gene would cause changes to many biological processes. Alternatively, a high number of listed interactions could indicate that previous researchers considered the gene important and that it is therefore more likely to be involved in further experiments. For the purpose of classification we didn't have to distinguish between these alternatives.

ACM KDD Cup 2006: Full paper in the ACM SIGKDD Explorations journal: http:///home/perrizo/public_html/saturday/papers/paper. The multiple parameters involved in the two classification algorithms were optimized via an evolutionary algorithm. The most important aspect of an evolutionary algorithm is the evaluation or fitness function, which guides the evolutionary algorithm towards an optimized solution. Negative Predictive Value (NPV) and True Negatives (TN) are calculated based on the task specification for KDD Cup 06. NPV is calculated as TN/(TN+FN). The fitness function encourages solutions with high TN, provided that NPV is within a threshold value. Although the task-specified threshold for NPV was 1.0, with the very low number of negative cases it was hard to expect multiple solutions that meet the actual NPV threshold and also maintain a high TN level. In a GA, collections of quality solutions in each generation potentially influence the set of solutions in the next generation.
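Since the exact KDD Cup 06 fitness formula is not reproduced here, the following is only a hypothetical sketch of an NPV-constrained fitness in the spirit described: it rewards true negatives, but scores a solution 0 when its NPV = TN/(TN+FN) falls below a threshold. The zero penalty, the default threshold of 1.0 (the task-specified value) and the function names are assumptions.

```python
from typing import Sequence, Tuple


def negatives(y_true: Sequence[int], y_pred: Sequence[int], negative: int = 0) -> Tuple[int, int]:
    """Count true negatives (TN) and false negatives (FN) among negative predictions."""
    tn = sum(1 for t, p in zip(y_true, y_pred) if p == negative and t == negative)
    fn = sum(1 for t, p in zip(y_true, y_pred) if p == negative and t != negative)
    return tn, fn


def npv(tn: int, fn: int) -> float:
    """Negative Predictive Value TN / (TN + FN); 0.0 when nothing is predicted negative."""
    return tn / (tn + fn) if tn + fn else 0.0


def npv_constrained_fitness(y_true: Sequence[int], y_pred: Sequence[int],
                            npv_threshold: float = 1.0, negative: int = 0) -> float:
    """Hypothetical GA fitness: TN counts only if the solution's NPV meets the threshold."""
    tn, fn = negatives(y_true, y_pred, negative)
    return float(tn) if npv(tn, fn) >= npv_threshold else 0.0


y_true = [0, 0, 0, 1, 1]
print(npv_constrained_fitness(y_true, [0, 0, 0, 1, 1]))  # 3.0 (NPV = 1.0)
print(npv_constrained_fitness(y_true, [0, 0, 1, 0, 1]))  # 0.0 (NPV = 2/3 < 1.0)
```

Lowering `npv_threshold` is one way to express the relaxation mentioned above when very few negative cases make an NPV of exactly 1.0 hard to reach.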
Since the training data set was small, patient-level bootstrapping was used to validate solutions. Bootstrapping here means taking one sample out of the training set for testing and repeating until all samples have been used for testing. In this specific task, which involves multiple candidates for the same patient, we removed all of a patient's candidates from the training set whenever that patient was used as a test sample. The overall approach of the solution is summarized in the following diagram. As shown in the figure, attribute relevance analysis is carried out on the training data to identify the top M attributes. Those attributes are subsequently used to optimize the parameter space with a standard Genetic Algorithm (GA) [8]. The set of weights on the parameters represented the solution space that was encoded for the GA. Multiple iterations with feedback from the solution evaluation are used to arrive at the best solution. Finally, the optimized parameters are used in the combined classification algorithm to classify the unknown samples.

[Figure: Training Data → Attribute Relevance → Top M Attributes; Training Data and Test Data feed the Nearest Neighbor Vote + Boundary Based Classification Algorithm, whose parameters the Genetic Algorithm tunes (Param/Fitness loop) → Optimized Classification Algorithm → Classified Results]
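To make the GA-over-weights idea concrete, here is a minimal real-coded GA sketch that searches attribute weights in [0, 1]. Assumptions: leave-one-out accuracy of a weighted 1-nearest-neighbour classifier stands in for the AROC or NPV/TN fitness used in the KDD Cup entries; the operators (binary tournament selection, uniform crossover, Gaussian mutation, elitism), the parameter values, the function names and the toy data are all illustrative choices, not those of the papers.

```python
import random
from typing import Callable, List, Sequence, Tuple

Weights = List[float]


def weighted_1nn_accuracy(weights: Sequence[float], X: Sequence[Sequence[float]],
                          y: Sequence[int]) -> float:
    """Leave-one-out accuracy of 1-nearest-neighbour under a weighted squared distance."""
    correct = 0
    for i, xi in enumerate(X):
        best_j, best_dist = -1, float("inf")
        for j, xj in enumerate(X):
            if j == i:
                continue
            dist = sum(w * (a - b) ** 2 for w, a, b in zip(weights, xi, xj))
            if dist < best_dist:
                best_j, best_dist = j, dist
        correct += int(y[best_j] == y[i])
    return correct / len(X)


def tournament(rng: random.Random, scored: List[Tuple[float, Weights]]) -> Weights:
    """Binary tournament: return the fitter of two randomly drawn individuals."""
    a, b = rng.sample(scored, 2)
    return a[1] if a[0] >= b[0] else b[1]


def evolve_weights(fitness: Callable[[Weights], float], n_genes: int, pop_size: int = 20,
                   generations: int = 30, mutation_sd: float = 0.1,
                   seed: int = 0) -> Tuple[Weights, List[float]]:
    """Real-coded GA with uniform crossover, Gaussian mutation clipped to [0, 1]
    and elitism. Returns the best weights and the best fitness of each generation."""
    rng = random.Random(seed)
    pop = [[rng.random() for _ in range(n_genes)] for _ in range(pop_size)]
    history: List[float] = []
    for _ in range(generations):
        scored = sorted(((fitness(w), w) for w in pop), key=lambda s: s[0], reverse=True)
        history.append(scored[0][0])
        children = [scored[0][1]]  # elitism: the current best survives unchanged
        while len(children) < pop_size:
            pa, pb = tournament(rng, scored), tournament(rng, scored)
            child = [ga if rng.random() < 0.5 else gb for ga, gb in zip(pa, pb)]
            child = [min(1.0, max(0.0, g + rng.gauss(0.0, mutation_sd))) for g in child]
            children.append(child)
        pop = children
    best = max(pop, key=fitness)
    return best, history


# Toy data (hypothetical): feature 0 separates the classes, feature 1 is noise.
X = [(0.0, 0.9), (0.1, 0.1), (0.2, 0.5), (1.0, 0.2), (1.1, 0.8), (0.9, 0.4)]
y = [0, 0, 0, 1, 1, 1]
best, history = evolve_weights(lambda w: weighted_1nn_accuracy(w, X, y), n_genes=2, generations=10)
print(best, history)
```

Because the best individual is copied unchanged into each new generation, the per-generation best fitness in `history` never decreases; a weight driven toward 0 plays the role of a deselected attribute.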

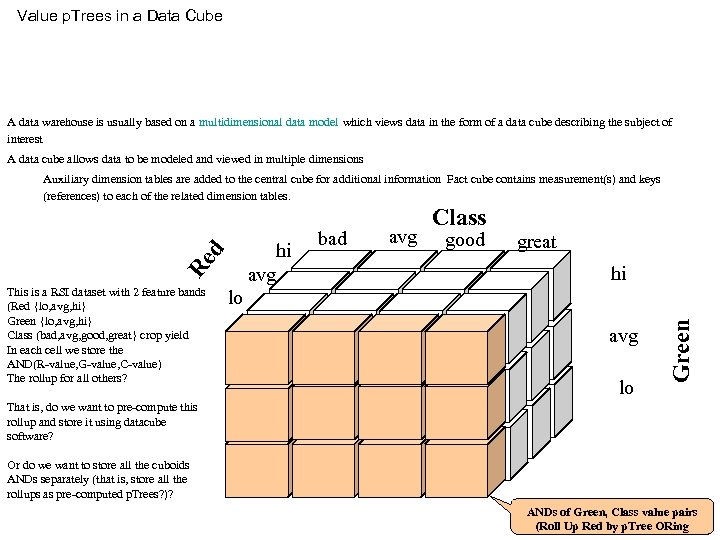

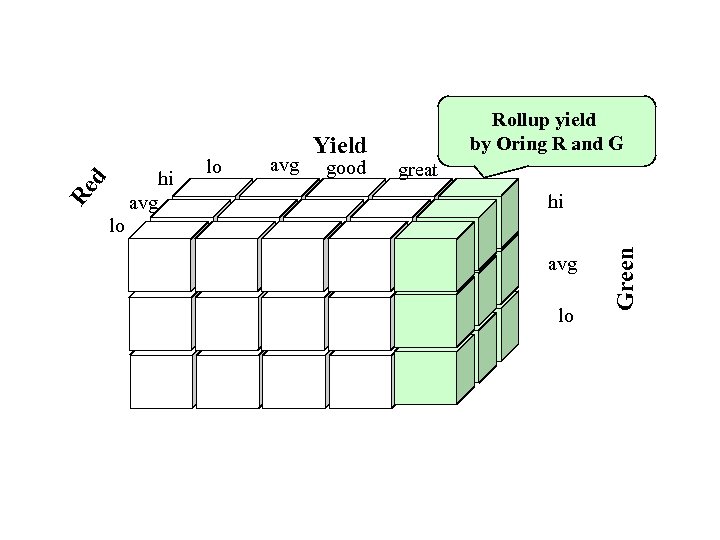

Value pTrees in a Data Cube. A data warehouse is usually based on a multidimensional data model, which views data in the form of a data cube describing the subject of interest. A data cube allows data to be modeled and viewed in multiple dimensions. This is an RSI dataset with 2 feature bands, Red {lo, avg, hi} and Green {lo, avg, hi}, and the class, crop yield {bad, avg, good, great}. In each cell we store AND(R-value, G-value, C-value). The rollup for all others?

[Figure: Red × Green × Class cube of value-pTree ANDs]

Auxiliary dimension tables are added to the central cube for additional information. The fact cube contains measurement(s) and keys (references) to each of the related dimension tables. That is, do we want to pre-compute this rollup and store it using datacube software? Or do we want to store all the cuboid ANDs separately (that is, store all the rollups as pre-computed pTrees)? ANDs of Green, Class value pairs (roll up Red by pTree ORing).

[Figure: Rollup Yield by ORing R and G]

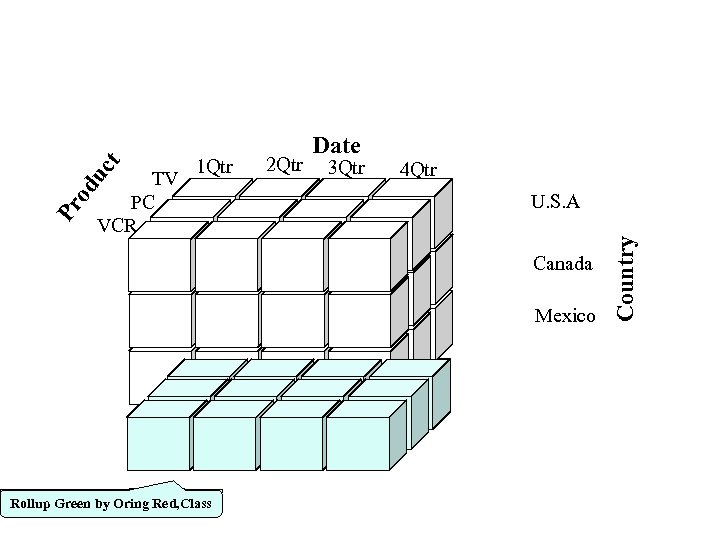

[Figure: Rollup Green by ORing (Red, Class face); classic sales data cube with dimensions Date (1 Qtr to 4 Qtr), Product (TV, PC, VCR) and Country (U.S.A, Canada, Mexico)]

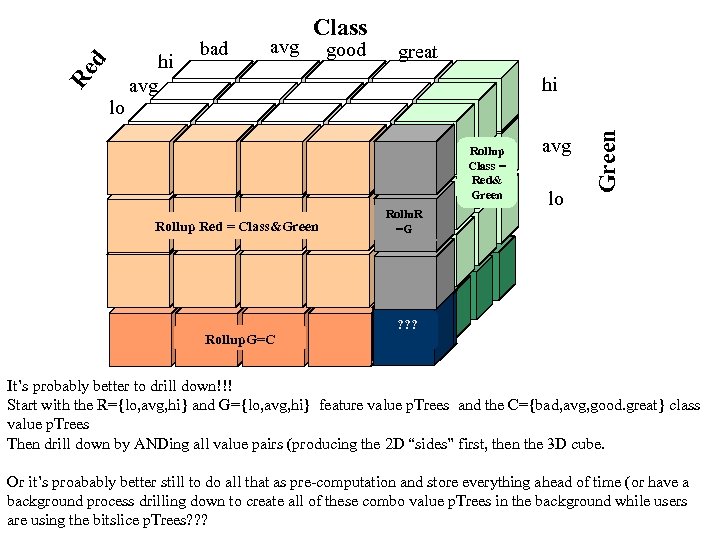

[Figure: Rollup Class = Red & Green; Rollup Red = Class & Green; Rollup Green = Class & Red; second-level rollups down to single dimensions ???]

It's probably better to drill down!!! Start with the R = {lo, avg, hi} and G = {lo, avg, hi} feature-value pTrees and the C = {bad, avg, good, great} class-value pTrees. Then drill down by ANDing all value pairs (producing the 2D "sides" first, then the 3D cube). Or it's probably better still to do all of that as pre-computation and store everything ahead of time (or have a background process drill down to create all of these combo value pTrees while users are using the bitslice pTrees???).
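The drill-down/rollup idea can be sketched with value bitmaps: each cube cell is AND(R-value, G-value, C-value), and rolling up Red is an OR over the cells that share a (Green, Class) pair. This is a minimal sketch, assuming Python int bitmaps in place of compressed pTrees and a hypothetical 4-row dataset; the function names are illustrative.

```python
from itertools import product
from typing import Dict, Sequence, Tuple


def value_bitmaps(column: Sequence[str]) -> Dict[str, int]:
    """One bitmap per distinct value: bit i is set when row i holds that value."""
    maps: Dict[str, int] = {}
    for i, v in enumerate(column):
        maps[v] = maps.get(v, 0) | (1 << i)
    return maps


def rc(bitmap: int) -> int:
    """Root count: number of 1-bits."""
    return bin(bitmap).count("1")


def build_cube(R: Dict[str, int], G: Dict[str, int],
               C: Dict[str, int]) -> Dict[Tuple[str, str, str], int]:
    """Drill down: each cube cell is AND(R-value, G-value, C-value)."""
    return {(r, g, c): R[r] & G[g] & C[c] for r, g, c in product(R, G, C)}


def rollup_red(cube: Dict[Tuple[str, str, str], int]) -> Dict[Tuple[str, str], int]:
    """Roll up Red by ORing the cells that share a (Green, Class) pair."""
    face: Dict[Tuple[str, str], int] = {}
    for (_, g, c), bm in cube.items():
        face[(g, c)] = face.get((g, c), 0) | bm
    return face


red = ["lo", "hi", "hi", "avg"]
green = ["lo", "lo", "hi", "avg"]
yield_class = ["bad", "good", "good", "avg"]
R, G, C = value_bitmaps(red), value_bitmaps(green), value_bitmaps(yield_class)
cube = build_cube(R, G, C)
face = rollup_red(cube)
print(rc(cube[("hi", "lo", "good")]), rc(face[("lo", "good")]))  # 1 1
```

Since every row has exactly one Red value, the rolled-up face equals the Green AND Class value pairs directly, which is why pre-computing the 2D sides first and the 3D cube second costs nothing extra at rollup time.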

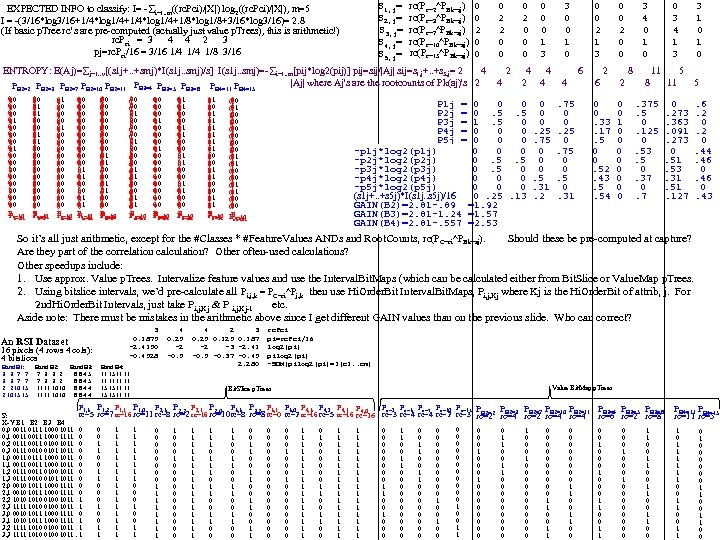

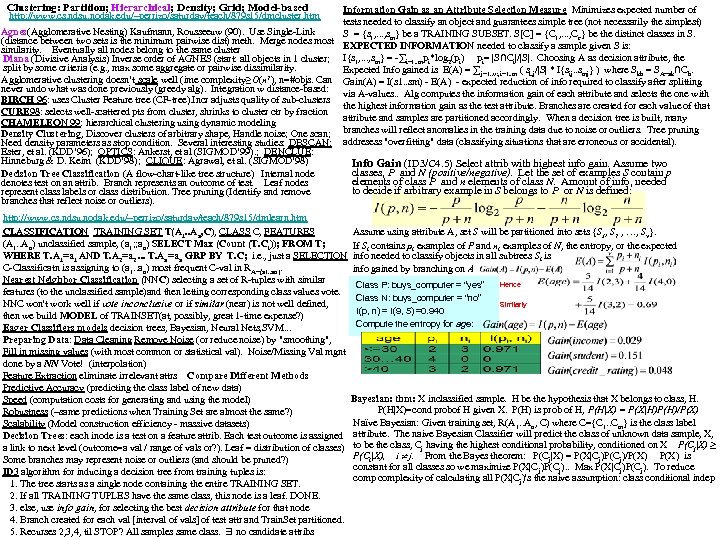

EXPECTED INFO to classify (m = 5 classes, |X| = 16):

$$I = -\sum_{i=1}^{m}\frac{rc(P_{C=c_i})}{|X|}\log_2\frac{rc(P_{C=c_i})}{|X|}$$

$$I = -\left(\tfrac{3}{16}\log_2\tfrac{3}{16} + \tfrac{1}{4}\log_2\tfrac{1}{4} + \tfrac{1}{4}\log_2\tfrac{1}{4} + \tfrac{1}{8}\log_2\tfrac{1}{8} + \tfrac{3}{16}\log_2\tfrac{3}{16}\right) \approx 2.28$$

(If the basic pTree root counts are pre-computed (actually just the value pTrees), this is arithmetic!)

| Class value c_i | rc(P_{C=c_i}) | p_i = rc/16 | log2(p_i) | p_i·log2(p_i) |
|---|---|---|---|---|
| 2 | 3 | 0.1875 | −2.415 | −0.4528 |
| 3 | 4 | 0.25 | −2 | −0.5 |
| 7 | 4 | 0.25 | −2 | −0.5 |
| 10 | 2 | 0.125 | −3 | −0.375 |
| 15 | 3 | 0.1875 | −2.415 | −0.4528 |

−Σ p_i·log2(p_i) = I(c_1..c_m) = 2.280

ENTROPY:

$$E(A) = \sum_{j=1}^{v}\frac{s_{1j}+\dots+s_{mj}}{s}\,I(s_{1j},\dots,s_{mj}),\qquad I(s_{1j},\dots,s_{mj}) = -\sum_{i=1}^{m}p_{ij}\log_2 p_{ij},\qquad p_{ij}=\frac{s_{ij}}{|A_j|}$$

where $s_{ij} = rc(P_{C=c_i}\wedge P_{B_k=a_j})$ and the $|A_j|$ are the root counts of the value pTrees $P_{B_k=a_j}$:

| Value pTree | P_{B2=2} | P_{B2=3} | P_{B2=7} | P_{B2=10} | P_{B2=11} | P_{B3=4} | P_{B3=5} | P_{B3=8} | P_{B4=11} | P_{B4=15} |
|---|---|---|---|---|---|---|---|---|---|---|
| \|A_j\| | 2 | 4 | 2 | 4 | 4 | 6 | 2 | 8 | 11 | 5 |

[Figure: value bitmap pTrees P_{B_k=a_j} ANDed with the class pTrees P_{C=c_i}, with the per-value p_ij and −p_ij·log2(p_ij) worksheets]

GAIN(B2) = 2.81 − 0.89 = 1.92; GAIN(B3) = 2.81 − 1.24 = 1.57; GAIN(B4) = 2.81 − 0.557 = 2.53.

So it's all just arithmetic, except for the #Classes × #FeatureValues ANDs and RootCounts, rc(P_{C=c_i} ∧ P_{B_k=a_j}). Should these be pre-computed at capture? Are they part of the correlation calculation? Other often-used calculations?

Other speedups include:
1. Use approximate value pTrees: intervalize the feature values and use the IntervalBitMaps (which can be calculated from either the BitSlice or the ValueMap pTrees).
2. Using bitslice intervals, we'd pre-calculate all, then use the HiOrderBit IntervalBitMaps P_{i,j,K_j}, where K_j is the HiOrderBit of attribute j. For P_{i,j,k} = P_{C=c_i} ∧ P_{j,k} with 2nd-HiOrderBit intervals, just take P_{i,j,K_j} & P_{i,j,K_j−1}, etc.

Aside note: There must be mistakes in the arithmetic above, since I get different GAIN values than on the previous slide. Who can correct?

An RSI dataset: 16 pixels (4 rows × 4 cols), 4 bands with 4 bitslices each; band B1 serves as the class.

| X,Y | B1 | B2 | B3 | B4 | P_{1,3} (rc=5) |
|---|---|---|---|---|---|
| 0,0 | 0011 | 0111 | 1000 | 1011 | 0 |
| 0,1 | 0011 | 0011 | 1000 | 1111 | 0 |
| 0,2 | 0111 | 0011 | 0100 | 1011 | 0 |
| 0,3 | 0111 | 0010 | 0101 | 1011 | 0 |
| 1,0 | 0011 | 0111 | 1000 | 1011 | 0 |
| 1,1 | 0011 | 0011 | 1000 | 1011 | 0 |
| 1,2 | 0111 | 0011 | 0100 | 1011 | 0 |
| 1,3 | 0111 | 0010 | 0101 | 1011 | 0 |
| 2,0 | 0010 | 1011 | 1000 | 1111 | 0 |
| 2,1 | 0010 | 1011 | 1000 | 1111 | 0 |
| 2,2 | 1010 | 1010 | 0100 | 1011 | 1 |
| 2,3 | 1111 | 1010 | 0100 | 1011 | 1 |
| 3,0 | 0010 | 1011 | 1000 | 1111 | 0 |
| 3,1 | 1010 | 1011 | 1000 | 1111 | 1 |
| 3,2 | 1111 | 1010 | 0100 | 1011 | 1 |
| 3,3 | 1111 | 1010 | 0100 | 1011 | 1 |

[Figure: the four 4×4 band grids, the value bitmap pTrees (P_{C=2} … P_{C=15}, P_{B2=2} … P_{B4=15}) and the bitslice pTrees P_{1,3} … P_{4,0}, each with its root count rc]
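The worked example can be recomputed mechanically from root counts alone. The sketch below assumes the 16-pixel table above is transcribed correctly and uses B1 as the class (its values 2, 3, 7, 10, 15 match the class pTrees P_{C=c_i}); Python int bitmaps stand in for value pTrees, so every quantity is an rc of a value pTree or of an AND of two. On that table it gives I ≈ 2.2806, GAIN(B2) = 1.625, GAIN(B3) ≈ 1.0306 and GAIN(B4) ≈ 0.5682, which may help with the aside note's question. Function names are illustrative.

```python
import math
from typing import Dict, List, Sequence

# The 16 pixels of the table in raster order (0,0), (0,1), ..., (3,3).
B1 = [3, 3, 7, 7, 3, 3, 7, 7, 2, 2, 10, 15, 2, 10, 15, 15]  # class band
B2 = [7, 3, 3, 2, 7, 3, 3, 2, 11, 11, 10, 10, 11, 11, 10, 10]
B3 = [8, 8, 4, 5, 8, 8, 4, 5, 8, 8, 4, 4, 8, 8, 4, 4]
B4 = [11, 15, 11, 11, 11, 11, 11, 11, 15, 15, 11, 11, 15, 15, 11, 11]


def value_bitmaps(column: Sequence[int]) -> Dict[int, int]:
    """Value pTree stand-ins: bit i is set when row i holds the value."""
    maps: Dict[int, int] = {}
    for i, v in enumerate(column):
        maps[v] = maps.get(v, 0) | (1 << i)
    return maps


def rc(bitmap: int) -> int:
    """Root count: number of 1-bits."""
    return bin(bitmap).count("1")


def info(counts: List[int]) -> float:
    """I(s_1..s_m) = -sum p_i log2 p_i over the nonzero counts."""
    total = sum(counts)
    return -sum((c / total) * math.log2(c / total) for c in counts if c)


def gain(feature: Sequence[int], cls: Sequence[int]) -> float:
    """GAIN(A) = I - E(A), computed only from root counts of value pTrees and their ANDs."""
    n = len(cls)
    class_maps = value_bitmaps(cls)
    expected_info = info([rc(b) for b in class_maps.values()])
    entropy = 0.0
    for fb in value_bitmaps(feature).values():
        s_j = [rc(fb & cb) for cb in class_maps.values()]  # s_1j .. s_mj
        entropy += rc(fb) / n * info(s_j)
    return expected_info - entropy


print(round(info([3, 4, 4, 2, 3]), 4))  # 2.2806
for name, band in (("B2", B2), ("B3", B3), ("B4", B4)):
    print(name, round(gain(band, B1), 4))  # B2 1.625, B3 1.0306, B4 0.5682
```

With these numbers B2 would be the most relevant attribute and B4 the least; the only non-arithmetic work is the #Classes × #FeatureValues ANDs inside `gain`.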

Access to Ptree 1 and Ptree 2

William Perrizo: To all my students (plus 3 former students who may be interested in an opportunity to use a pTree environment replete with massive, real-life big datasets, developed by Treeminer Inc., the company that licensed the pTree patents and is becoming a strong player in the vertical data mining area): the only requirement from this side is your willingness to share and your willingness to sign a Treeminer NDA. I am particularly interested in having a Genetic Algorithm tool available for everyone to use. As we all know, Dr. Amal Shehan Perera was the lead scientist implementing a GA tool in pTree data mining software, and it helped to win two ACM KDD Cups!!! Since that time my suggestion to everyone has been "When you're done getting all you can out of your algorithm, GA it!!!" Baoying (Elizabeth) Wang did some amazing work on pTree clustering (and classification and ARM), and Dr. Imad Rahal did amazing pTree work on classification (and ARM and clustering). That's why I invite Amal, Elizabeth and Imad to use our system once it is up and running. My hope is that they will be willing to let us use any tools they develop in that system ;-) (synergism). There may be other former DataSURG members who would be interested as well (please advise). Just to update Amal, Elizabeth and Imad: Treeminer is a startup that has implemented a pTree data mining system in Java (about 70,000 lines of code). It is wonderful and commercially successful. Treeminer has used several real-life BigData datasets (captured in pTrees). They have demonstrated not only orders-of-magnitude improvement in speed (the traditional focus of pTree technology: speedup through Horizontal Processing of Vertical Data, or HPVD) but also improvements in accuracy at the same time! This is unheard of and wonderful.
Maybe the main reason we are able to get accuracy improvements while getting great speedup is that vertical structuring facilitates fast and effective attribute selection (which might take forever with horizontal data and therefore is not attempted in the horizontal data world). E.g., when datamining a text corpus of 100,000 vocabulary words (the columns) and 100,000 documents (the rows), it is important to find the important words (attribute selection) before running an algorithm (solve the curse of dimensionality first). Amal, Elizabeth and Imad, if you would like to get involved, let us know. We still have Saturday meetings at 10 CST, and two people Skype in every week (the more the merrier!). Arjun Roy or Arijit Chatterjee can tell you how to Skype in. Nathan Olson is our department technician who has helped us a lot. At this point we are nearly done moving all of Treeminer's material to the two servers, pTree1 and pTree2, so that you can log in remotely to add anything to the Git repository there (and run and test it against a very impressive suite of datasets). Please note I have posted all recent Saturday notes and other good materials on my web site: http://www.cs.ndsu.nodak.edu/~perrizo. You can get these materials by clicking on the dot (period) to the right of the bullet "media coverage".

William Perrizo: I have put all the Saturday notes for the past few years and many other useful files (e.g., topic synopses) on my web site so you can all access anything you want. I'm not worried at all about security since, if someone outside our group were to think they had stolen something important from there, they would probably study it and become a pTree data mining expert. How could that be bad? You can get these materials by clicking on the dot (period) to the right of the bullet "media coverage". My homepage is: http://www.cs.ndsu.nodak.edu/~perrizo. There was a time in the past when I contemplated a "pTree Data Mining" monograph. I will put the very rough preliminary version of that book project on the website also. Anyone at all who ever wants to co-author such a thing with me is welcome to work on it using the material at the site. I just wanted to ask whether the 4 experienced students (Damian, Mohammad, Arjun and Arijit) would be willing to help the students who will/may join our group (Maninder, Rajat, Spoorthy) and who will then be tasked with coding, implementation and testing using the Treeminer software environment and the Treeminer big vertical datasets. That would be great and would also help each of you, I believe. I'm sure Mark and Puneet will help also, but they are, no doubt, buried under the weight of other needs right now.

From: Bryan Mesich bryan.mesich@ndsu.edu
Sent: Friday, January 23, 2015 12:41 PM
> I had the same question. We can make changes to code on ptree1/2 directly but it will be difficult via the VI editor. 2 relevant options:
> 1. Take a copy in your local system, make changes and move the java file to ptree1/2. Compile and run.
> 2. Take a copy in your local system, make changes and compile. Move the class file to ptree1/2 and run.

I do all of my development (Perl, C/C++, Assembly, Java, Bash) using VIM. This is my preference. I'm not advocating one IDE/editor over another, as it's a personal choice. The best way to accomplish this would be to use a version control system. That way you can do development on a remote machine and commit your changes. You could then log into ptree[12].ndsu, check out the latest version and run the code. Version control works well in collaborative efforts when merging code together. Also, I went ahead and installed both the Java 7 and Java 8 JDKs on both servers. They are located in /usr/java. By default, Java 8 will be used when running/compiling.
Bryan

So do we have any other alternatives? I would suggest running Git or SVN.
Rajat

> From: Arjun Roy
> My IP address is 192.168.0.4
> If for some reason I am not able to copy, could Damian give it a try on campus?
> I think the way we are going to proceed is that we will have Eclipse on our client machines (pointing to code on ptree1), but it's going to be compiled on ptree1.
> I don't know if we can directly make changes to code stored on ptree1 or if we would have to make changes locally and push them to ptree1.
> I don't have much knowledge of the Java environment/Eclipse, but does this sound logical (Rajat/Maninder)?
> Thanks, Arjun
>> From: Bryan Mesich <bryan.mesich@ndsu.edu>
>> Please give me remote access. Once I have access, I will put everything on Share.
>> I have used it with JDK 7 without any compiler complaints.
>> I need your IP address in order to make the exception in the firewall.
>> Also, did you use OpenJDK, or the official JDK from Oracle?
>> Subject: Re: Access to Ptree 1 and Ptree 2
>> Logins are ready for use. I will be installing a firewall shortly that will only allow on-campus access. If you need remote access, please let me know and I'll make an exception for you in the firewall. Otherwise I'll assume everyone has access unless contacted.
>>> Bryan, I have a few-months-old version of TreeMiner. It's about 2.5 GB. Is it OK if I put the entire thing in my space on ptree1 (might take a while to transfer)?
>> Let's have you put the TreeMiner code under the Shared directory (/stggroups/perrizo/Shared). That way everyone will have access to the code.
>>> For the latest version, we will have to contact Mark and go through some layers of security.
>> We'll want to investigate a way to pull the code in an automated fashion in the future. The current version(s) you have should work for now.
>> Does anyone know what JDK version TreeMiner is using?

Damian Lampl, Fri 1/23/2015 2:12 PM:
I'll need remote access, too. My IP is: 208.107.126.159. One reason we wanted to get things centralized on the pTree servers was so we wouldn't have to configure everyone's development environment separately (Java, Eclipse dependencies, etc.), since that's the primary hang-up in getting started with the Treeminer code. I was thinking we could just remote-desktop into the ptree servers using xrdp or something similar, if that works? I really don't know the best way of setting it up so we all have access to the same preconfigured Java/Eclipse environment (.NET and Visual Studio would be a different story: install Visual Studio, done). You mentioned Maven, Bryan, but I'm not familiar with how that works. Or is there a configuration file we can set up that makes sure our local Java, Eclipse, and any other dependencies are configured properly? I agree on a Git repository, so we'll have one main trunk and then separate branches for everyone, and when we need to merge with Mark's code, we can use the main trunk for that, since he's also using Git. Mark also has things working with Hadoop, and some of his datasets will be stored in it.

Bryan Mesich <bryan.mesich@ndsu.edu>, Fri 1/23/2015 12:41 PM:
Rajat Singh wrote:
> I had the same question. We can make changes to code on ptree1/2 directly but it will be difficult via the VI editor. So we have two relevant options:
> 1. Take a copy in your local system, make changes and move the java file to ptree1/2. Compile and run.
> 2. Take a copy in your local system, make changes and compile. Move the class file to ptree1/2 and run.

I do all of my development (Perl, C/C++, Assembly, Java, Bash) using VIM. This is my preference. I'm not advocating one IDE/editor over another, as it's a personal choice. The best way to accomplish this would be to use a version control system. That way you can do development on a remote machine and commit your changes. You could then log into ptree[12].ndsu, check out the latest version and run the code. Version control works well in collaborative efforts when merging code together. Also, I went ahead and installed both the Java 7 and Java 8 JDKs on both servers. They are located in /usr/java. By default, Java 8 will be used when running/compiling.
Bryan

Maninder Singh, Fri 1/23/2015 12:21 PM:
Just a thought: can we think of some way to write a batch file in the VI editor to do some of our work directly on ptree?

Rajat Singh, Fri 1/23/2015 12:15 PM:
I had the same question. We can make changes to code on ptree1/2 directly but it will be difficult via the VI editor. So we have two relevant options:
1. Take a copy in your local system, make changes and move the java file to ptree1/2. Compile and run.
2. Take

Arjun Roy, Fri 1/23/2015 12:08 PM:
My IP address is 192.168.0.4. If for some reason I am not able to copy, could Damian give it a try on campus? I think the way we are going to proceed is that we will have Eclipse on our client machines (pointing to code on ptree1), but it's going to be compiled on ptree1. I don't know if we can directly make changes to code stored on ptree1 or if we would have to make changes locally and push them to ptree1. I don't have much knowledge of the Java environment/Eclipse, but does this sound logical (Rajat/Maninder)?
Arjun
In my opinion, you can stay at home and relax this Saturday :) We students will collectively make it operational now that Bryan has created logins for us.

Logins are ready for use. I will be installing a firewall shortly that will only allow on-campus access. If you need remote access, please let me know and I'll make an exception for you in the firewall. Otherwise I'll assume everyone has access unless contacted.
> Bryan, I have a few-months-old version of TreeMiner. It's about 2.5 GB. Is it OK if I put the entire thing in my space on ptree1 (might take a while to transfer)?
Let's have you put the TreeMiner code under the Shared directory (/stggroups/perrizo/Shared). That way everyone will have access to the code.
> For the latest version, we will have to contact Mark and go through some layers of security.
We'll want to investigate a way to pull the code in an automated fashion in the future. The current version(s) you have should work for now. Does anyone know what JDK version TreeMiner is using?

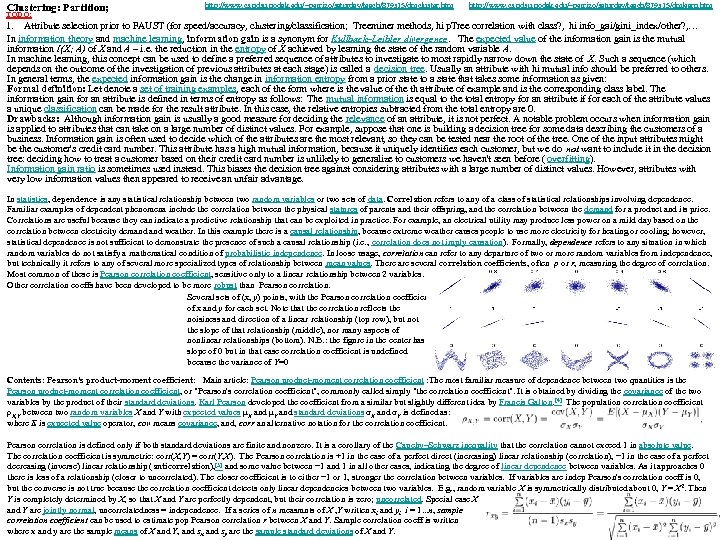

http://www.cs.ndsu.nodak.edu/~perrizo/saturday/teach/879s15/dmlearn.htm

Current Treeminer methods: high pTree correlation with the class?; high information_gain; high gini_index? …

In information theory and machine learning, information gain is a synonym for Kullback–Leibler divergence. The expected value of the information gain is the mutual information I(X; A) of X and A, i.e., the reduction in the entropy of X achieved by learning the state of the random variable A. In machine learning, this concept can be used to define a preferred sequence of attributes to investigate to most rapidly narrow down the state of X. Such a sequence (which depends on the outcome of the investigation of previous attributes at each stage) is a decision tree. Usually an attribute with high mutual information is preferred to others. In general terms, the expected information gain is the change in information entropy H from a prior state to a state that takes some information as given: $IG(X, a) = H(X) - H(X \mid a)$.

Drawbacks: Although information gain is a good measure of attribute relevance, it is not perfect. A notable problem occurs when it is applied to attributes that can take on many distinct values. E.g., suppose we build a decision tree for some data describing the customers of a business. Information gain is often used to decide which attributes are most relevant, so they can be tested near the root of the tree. A credit card number uniquely identifies each customer (so it has high information gain), but deciding how to treat a customer based on the credit card number will not generalize to customers we haven't seen yet (overfitting). Use the information gain ratio instead? This biases the decision tree against attributes with many distinct values. But attributes with very low information values then receive an unfair advantage.

Attribute Selection: In statistics, dependence is any relationship between two random variables or two sets of data. Correlation refers to any of a broad class of statistical relationships involving dependence. E.g., the correlation between the physical statures of parents and their offspring, or between the demand for a product and its price.
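To illustrate the credit-card-number drawback and the gain-ratio remedy described above, here is a small sketch on hypothetical data: a unique-ID attribute and a genuinely informative attribute both get the maximum information gain, but dividing by the split information (the entropy of the attribute's own values) halves the ID attribute's score. Function names and data are illustrative only.

```python
import math
from collections import Counter, defaultdict
from typing import Hashable, List, Sequence


def entropy(values: Sequence[Hashable]) -> float:
    """H = -sum p log2 p over the empirical distribution of the values."""
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in Counter(values).values())


def info_gain(attr: Sequence[Hashable], labels: Sequence[Hashable]) -> float:
    """IG = H(labels) - H(labels | attr)."""
    groups: defaultdict = defaultdict(list)
    for a, y in zip(attr, labels):
        groups[a].append(y)
    conditional = sum(len(g) / len(labels) * entropy(g) for g in groups.values())
    return entropy(labels) - conditional


def gain_ratio(attr: Sequence[Hashable], labels: Sequence[Hashable]) -> float:
    """Information gain divided by the split information H(attr)."""
    split_info = entropy(attr)
    return info_gain(attr, labels) / split_info if split_info > 0 else 0.0


labels: List[str] = ["buy", "buy", "skip", "skip"]
card_number = [4111, 4222, 4333, 4444]          # hypothetical unique IDs
region = ["north", "north", "south", "south"]    # hypothetical grouping attribute
print(info_gain(card_number, labels), info_gain(region, labels))    # 1.0 1.0
print(gain_ratio(card_number, labels), gain_ratio(region, labels))  # 0.5 1.0
```

An attribute with a single value has zero split information; the sketch returns 0.0 for it rather than dividing by zero.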
Correlations can indicate a predictive relationship that can be exploited in practice. For example, an electrical utility may produce less power on a mild day based on the correlation between electricity demand and weather. In this example there is a causal relationship, because extreme weather causes people to use more electricity; however, statistical dependence is not sufficient to demonstrate the presence of such a causal relationship (i.e., correlation does not imply causation). Formally, dependence refers to random variables not satisfying the mathematical condition of probabilistic independence. Loosely, correlation is any departure of two or more random variables from independence, but technically it refers to any of several more specialized types of relationship between mean values. There are several correlation coefficients, often denoted ρ or r, measuring the degree of correlation. The most common is the Pearson correlation coefficient, which is sensitive only to a linear relationship between two variables. Other correlation coefficients have been developed to be more robust than the Pearson correlation.

Here are several sets of (x, y) points, with the Pearson correlation coefficient of x and y for each set. Note that the correlation reflects the noisiness and direction of a linear relationship (top row), but not its slope (middle row), nor many aspects of nonlinear relationships (bottom row). N.B.: the figure in the center has a slope of 0, but in that case the correlation coefficient is undefined because the variance of Y is 0.

Pearson's product-moment coefficient: The most familiar measure of dependence between two quantities is the Pearson product-moment correlation coefficient, or "Pearson's correlation coefficient", commonly called simply "the correlation coefficient". It is obtained by dividing the covariance of the two variables by the product of their standard deviations. Karl Pearson developed the coefficient from a similar but slightly different idea by Francis Galton.[4]

The population correlation coefficient $\rho_{X,Y}$ between two random variables $X$ and $Y$ with expected values $\mu_X$ and $\mu_Y$ and standard deviations $\sigma_X$ and $\sigma_Y$ is defined as

$$\rho_{X,Y} = \operatorname{corr}(X, Y) = \frac{\operatorname{cov}(X, Y)}{\sigma_X \sigma_Y} = \frac{E[(X - \mu_X)(Y - \mu_Y)]}{\sigma_X \sigma_Y},$$

where $E$ is the expected value operator, cov means covariance, and corr is an alternative notation for the correlation coefficient. The Pearson correlation is defined only if both standard deviations are finite and nonzero. It is a corollary of the Cauchy–Schwarz inequality that the correlation cannot exceed 1 in absolute value. The correlation coefficient is symmetric: corr(X, Y) = corr(Y, X).

The Pearson correlation is +1 in the case of a perfect direct (increasing) linear relationship (correlation), −1 in the case of a perfect decreasing (inverse) linear relationship (anticorrelation),[5] and some value between −1 and 1 in all other cases, indicating the degree of linear dependence between the variables. As it approaches 0 there is less of a relationship (closer to uncorrelated). The closer the coefficient is to either −1 or 1, the stronger the correlation between the variables.

If the variables are independent, Pearson's correlation coefficient is 0, but the converse is not true, because the correlation coefficient detects only linear dependencies between two variables. For example, suppose the random variable $X$ is symmetrically distributed about 0, and $Y = X^2$. Then $Y$ is completely determined by $X$, so $X$ and $Y$ are perfectly dependent, but their correlation is zero; they are uncorrelated. In the special case when $X$ and $Y$ are jointly normal, uncorrelatedness is equivalent to independence.

If we have a series of $n$ measurements of $X$ and $Y$, written as $x_i$ and $y_i$ for $i = 1, \dots, n$, then the sample correlation coefficient can be used to estimate the population Pearson correlation between $X$ and $Y$. The sample correlation coefficient is written

$$r_{xy} = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{(n - 1)\, s_x s_y},$$

where $\bar{x}$ and $\bar{y}$ are the sample means of $X$ and $Y$, and $s_x$ and $s_y$ are the sample standard deviations of $X$ and $Y$. This can also be written as

$$r_{xy} = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2}\, \sqrt{\sum_{i=1}^{n} (y_i - \bar{y})^2}}.$$

If $x$ and $y$ are results of measurements that contain measurement error, the realistic limits on the correlation coefficient are not −1 to +1 but a smaller range.
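To make the sample formula concrete, here is a minimal Python sketch of $r_{xy}$ in its second (square-root) form. The function name `pearson` is ours, not a library API. The usage lines check the perfect increasing and decreasing cases and the $Y = X^2$ example: with $X$ symmetric about 0, $r$ comes out 0 even though $Y$ is a function of $X$.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Sample Pearson r_xy = sum((x-mx)(y-my)) / sqrt(sum((x-mx)^2) * sum((y-my)^2))."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mx = sum(xs) / len(xs)  # sample mean x-bar
    my = sum(ys) / len(ys)  # sample mean y-bar
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        # r is undefined when either sample has zero variance (e.g. a horizontal line).
        raise ValueError("correlation undefined: zero variance")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    xs = [-2, -1, 0, 1, 2]  # symmetric about 0
    print(pearson(xs, [2 * x + 1 for x in xs]))  # perfect increasing line
    print(pearson(xs, [-3 * x for x in xs]))     # perfect decreasing line
    print(pearson(xs, [x * x for x in xs]))      # Y = X^2: dependent, yet r = 0
```

Raising on zero variance mirrors the remark that the correlation is undefined when the variance of Y is 0.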
Rank correlation coefficients (Spearman's rank correlation coefficient and the Kendall tau rank correlation coefficient): Rank correlation coefficients, such as Spearman's rank correlation coefficient and Kendall's rank correlation coefficient (τ), measure the extent to which, as one variable increases, the other variable tends to increase, without requiring that increase to be represented by a linear relationship. They are alternatives to Pearson's coefficient. To illustrate rank correlation, and its difference from linear correlation, consider the four pairs (x, y): (0, 1), (10, 100), (101, 500), (102, 2000). As we go from each pair to the next pair, x increases, and so does y. This relationship is perfect, in the sense that an increase in x is always accompanied by an increase in y. This means that we have a perfect rank correlation, and both Spearman's and Kendall's correlation coefficients are 1, whereas in this example the Pearson product-moment correlation coefficient is 0.7544, indicating that the points are far from lying on a straight line. In the same way, if y always decreases when x increases, the rank correlation coefficients will be −1, while the Pearson product-moment correlation coefficient may or may not be close to −1, depending on how close the points are to a straight line. Although in the extreme cases of perfect rank correlation the two coefficients are equal (both +1 or both −1), this is not generally so, and the values of the two coefficients cannot meaningfully be compared.[7] For example, for the three pairs (1, 1), (2, 3), (3, 2), Spearman's coefficient is 1/2, while Kendall's coefficient is 1/3.

Other measures of dependence: The information given by a correlation coefficient is not enough to define the dependence structure between random variables.[9] The correlation coefficient completely defines the dependence structure only in particular cases, for example when the distribution is a multivariate normal distribution.
In the case of elliptical distributions it characterizes the (hyper-)ellipses of equal density; however, it does not completely characterize the dependence structure (for example, a multivariate t-distribution's degrees of freedom determine the level of tail dependence). Distance correlation and Brownian covariance / Brownian correlation[10][11] were introduced to address the deficiency of Pearson's correlation that it can be zero for dependent random variables; zero distance correlation and zero Brownian correlation imply independence.

See also: Association (statistics), Autocorrelation, Canonical correlation, Coefficient of determination, Cointegration, Concordance correlation coefficient, Cophenetic correlation, Copula, Correlation function, Covariance and correlation, Cross-correlation, Ecological correlation, Fraction of variance
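The rank-correlation examples and the distance-correlation remark on this slide can be checked numerically with the pure-Python sketch below. Assumptions: Spearman's ρ is computed as the Pearson correlation of average ranks, Kendall's τ is the simple tau-a (no tie correction), and distance correlation uses the sample (V-statistic) form built from double-centered |a − b| distance matrices. All function names are ours.

```python
import math
from itertools import combinations
from typing import List, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the average of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def spearman(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Spearman's rho = Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))


def kendall_tau_a(xs: Sequence[float], ys: Sequence[float]) -> float:
    """(concordant pairs - discordant pairs) / all pairs; no tie correction."""
    n = len(xs)
    score = 0
    for i, j in combinations(range(n), 2):
        s = (xs[i] - xs[j]) * (ys[i] - ys[j])
        score += (s > 0) - (s < 0)
    return score / (n * (n - 1) / 2)


def distance_correlation(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Sample distance correlation from double-centered |a - b| distance matrices."""
    n = len(xs)

    def centered(v):
        d = [[abs(a - b) for b in v] for a in v]
        m = [sum(row) / n for row in d]  # row means (= column means, d is symmetric)
        g = sum(m) / n                   # grand mean
        return [[d[i][j] - m[i] - m[j] + g for j in range(n)] for i in range(n)]

    a, b = centered(xs), centered(ys)

    def dcov2(p, q):
        return sum(p[i][j] * q[i][j] for i in range(n) for j in range(n)) / (n * n)

    vx, vy = dcov2(a, a), dcov2(b, b)
    if vx == 0 or vy == 0:
        return 0.0
    return math.sqrt(max(dcov2(a, b), 0.0) / math.sqrt(vx * vy))


if __name__ == "__main__":
    print(spearman([1, 2, 3], [1, 3, 2]), kendall_tau_a([1, 2, 3], [1, 3, 2]))
    x, y = [0, 10, 101, 102], [1, 100, 500, 2000]
    print(spearman(x, y), kendall_tau_a(x, y), pearson(x, y))
    u = [-2, -1, 0, 1, 2]
    v = [t * t for t in u]
    print(pearson(u, v), distance_correlation(u, v))  # Pearson 0, dCor > 0
```

The last line shows the point of the distance-correlation remark: for $Y = X^2$ the Pearson coefficient is 0 while the distance correlation is clearly positive.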

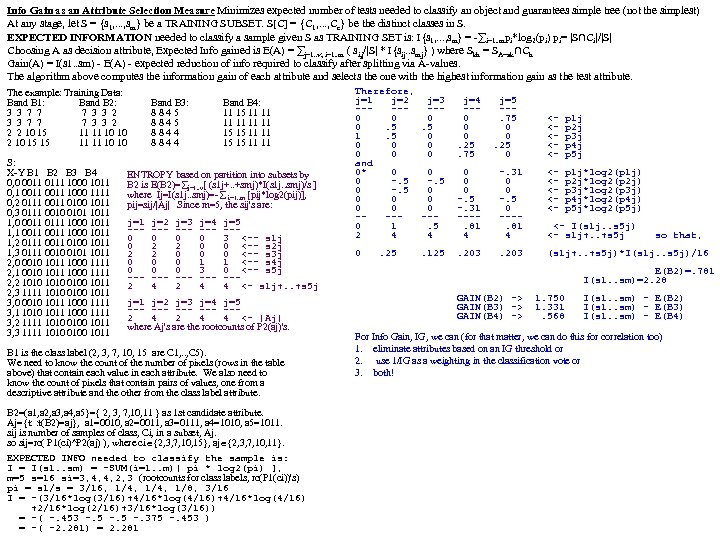

Clustering: Partition; Hierarchical; Density; Grid; Model-based (http://www.cs.ndsu.nodak.edu/~perrizo/saturday/teach/879s15/dmcluster.htm)

AGNES (Agglomerative Nesting), Kaufmann and Rousseeuw (1990): uses the single-link method (the distance between two sets is the minimum pairwise distance) and merges the nodes that have the most similarity. Eventually all nodes belong to the same cluster. DIANA (Divisive Analysis): the inverse order of AGNES (start with all objects in one cluster; split by some criterion, e.g., maximize some aggregate or pairwise dissimilarity).

Agglomerative clustering doesn't scale well (time complexity ≥ O(n²), where n = number of objects), and it can never undo what was done previously (it is a greedy algorithm). Integrating hierarchical with distance-based clustering:
- BIRCH (1996): uses a Cluster Feature tree (CF-tree) and incrementally adjusts the quality of sub-clusters.
- CURE (1998): selects well-scattered points from the cluster, then shrinks them toward the cluster center by a specified fraction.
- CHAMELEON (1999): hierarchical clustering using dynamic modeling.

Density clustering: discovers clusters of arbitrary shape, handles noise, needs one scan, and needs density parameters as a stopping condition. Several interesting studies: DBSCAN: Ester et al. (KDD'96); OPTICS: Ankerst et al. (SIGMOD'99); DENCLUE: Hinneburg & D. Keim (KDD'98); CLIQUE: Agrawal et al. (SIGMOD'98).

Decision tree classification (a flow-chart-like tree structure): each internal node denotes a test on an attribute, each branch represents an outcome of the test, and leaf nodes represent class labels or class distributions. Tree pruning identifies and removes branches that reflect noise or outliers.

Information gain as an attribute selection measure minimizes the expected number of tests needed to classify an object and guarantees a simple tree (not necessarily the simplest). Let S be a TRAINING SUBSET with |S| = s samples, let $C_1, \dots, C_m$ be the distinct classes in S, and let $s_i = |S \cap C_i|$. The EXPECTED INFORMATION needed to classify a sample given S is

$$I(s_1, \dots, s_m) = -\sum_{i=1}^{m} p_i \log_2 p_i, \qquad p_i = \frac{s_i}{s}.$$

If attribute A, with values $a_1, \dots, a_v$, is chosen as the decision attribute, then with $s_{ij} = |S_{A=a_j} \cap C_i|$ the expected information (entropy) after splitting on A is

$$E(A) = \sum_{j=1}^{v} \frac{s_{1j} + \dots + s_{mj}}{s}\, I(s_{1j}, \dots, s_{mj}),$$

and $\mathrm{Gain}(A) = I(s_1, \dots, s_m) - E(A)$ is the expected reduction in the information required to classify after splitting on the values of A. The algorithm computes the information gain of each attribute and selects the one with the highest information gain as the test attribute. Branches are created for each value of that attribute, and the samples are partitioned accordingly. When a decision tree is built, many branches will reflect anomalies in the training data due to noise or outliers. Tree pruning addresses this "overfitting" of the data (classifying situations that are erroneous or accidental).

Info gain (ID3/C4.5), http://www.cs.ndsu.nodak.edu/~perrizo/saturday/teach/879s15/dmlearn.htm: select the attribute with the highest information gain. Assume two classes, P and N (positive/negative). Let the set of examples S contain p elements of class P and n elements of class N. The amount of information needed to decide whether an arbitrary example in S belongs to P or N is defined as

$$I(p, n) = -\frac{p}{p+n}\log_2\frac{p}{p+n} - \frac{n}{p+n}\log_2\frac{n}{p+n}.$$

Assume that, using attribute A, set S will be partitioned into sets $\{S_1, S_2, \dots, S_v\}$. If $S_i$ contains $p_i$ examples of P and $n_i$ examples of N, the entropy, or the expected information needed to classify objects in all subtrees $S_i$, is

$$E(A) = \sum_{i=1}^{v} \frac{p_i + n_i}{p + n}\, I(p_i, n_i),$$

and the information gained by branching on A is $\mathrm{Gain}(A) = I(p, n) - E(A)$. Hence, with class P: buys_computer = "yes" and class N: buys_computer = "no", $I(p, n) = I(9, 5) = 0.940$. Then compute the entropy for age (and, similarly, for the other attributes).

Classification: given a CLASSIFICATION TRAINING SET $T(A_1, \dots, A_n, C)$ with CLASS C and FEATURES $A_1, \dots, A_n$, and an unclassified sample $(a_1, \dots, a_n)$:
`SELECT Max(Count(T.Ci)) FROM T WHERE T.A1=a1 AND T.A2=a2 ... AND T.An=an GROUP BY T.C;`
i.e., classification is just a SELECTION: C-classification assigns to $(a_1, \dots, a_n)$ the most frequent C-value in $R_{A=(a_1, \dots, a_n)}$.

Nearest Neighbor Classification (NNC): select a set of R-tuples with features similar to the unclassified sample, and then let the corresponding class values vote. NNC won't work well if the vote is inconclusive or if "similar" (near) is not well defined; then we build a MODEL of the TRAINSET (at, possibly, great one-time expense?). Eager classifiers build models: decision trees, Bayesian, neural nets, SVM, …

Preparing data:
- Data cleaning: remove (or reduce) noise by "smoothing"; fill in missing values (with the most common or a statistical value). Noise/missing-value management can be done by a nearest-neighbor vote (interpolation).
- Feature extraction: eliminate irrelevant attributes.

Comparing different methods:
- Predictive accuracy (predicting the class label of new data)
- Speed (computation costs for generating and using the model)
- Robustness (approximately the same predictions when training sets are almost the same?)
- Scalability (model construction efficiency on massive datasets)

Bayesian: Bayes' theorem: let X be an unclassified sample and H the hypothesis that X belongs to a specified class. P(H|X) is the conditional probability of H given X, P(H) is the probability of H, and $P(H \mid X) = P(X \mid H)\,P(H)/P(X)$.

Naïve Bayesian: given a training set $R(A_1, \dots, A_n, C)$, where $C = \{C_1, \dots, C_m\}$ is the class label, the naive Bayesian classifier predicts the class of an unknown data sample X to be the class $C_j$ having the highest conditional probability conditioned on X: $P(C_j \mid X) \ge P(C_i \mid X)$ for all $i \ne j$. From Bayes' theorem, $P(C_j \mid X) = P(X \mid C_j)P(C_j)/P(X)$; P(X) is constant for all classes, so we maximize $P(X \mid C_j)P(C_j)$. To reduce the complexity of calculating all the $P(X \mid C_j)$'s, we make the naive assumption of class-conditional independence.

Decision trees: each internal node is a test on a feature attribute. Each test outcome is assigned a link to the next level (outcome = a value, a range of values, or?). A leaf is a distribution of classes. Some branches may represent noise or outliers (and should be pruned?). The ID3 algorithm for inducing a decision tree from training tuples is:
1. The tree starts as a single node containing the entire TRAINING SET.
2. If all TRAINING TUPLES have the same class, this node is a leaf. DONE.
3. Else, use information gain to select the best decision attribute for that node.
4. A branch is created for each value [interval of values] of the test attribute, and the training set is partitioned.
5. Recurse on steps 2, 3, 4 until STOP: all samples are in the same class, or ∃ no candidate attributes.
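The formulas $I$, $E(A)$ and $\mathrm{Gain}(A)$ and the ID3 steps above fit in a few lines of Python. This is a minimal sketch over rows stored as dicts, not the pTree implementation. The majority-class leaf used when no candidate attributes remain, the `gain_ratio` helper (C4.5-style Gain/SplitInfo, added to illustrate the credit-card-number drawback discussed earlier), and the 4-customer table are all our assumptions.

```python
import math
from collections import Counter
from typing import Dict, Hashable, List, Sequence

Row = Dict[str, Hashable]


def info(counts: Sequence[int]) -> float:
    """I(s_1, ..., s_m) = -sum_i p_i log2 p_i with p_i = s_i / s; zero counts contribute 0."""
    s = sum(counts)
    return -sum(c / s * math.log2(c / s) for c in counts if c > 0)


def class_counts(rows: List[Row], target: str) -> List[int]:
    return list(Counter(r[target] for r in rows).values())


def expected_info(rows: List[Row], attr: str, target: str) -> float:
    """E(A) = sum_j (s_1j + ... + s_mj) / s * I(s_1j, ..., s_mj)."""
    total = 0.0
    for value in {r[attr] for r in rows}:
        subset = [r for r in rows if r[attr] == value]
        total += len(subset) / len(rows) * info(class_counts(subset, target))
    return total


def gain(rows: List[Row], attr: str, target: str) -> float:
    """Gain(A) = I(s_1, ..., s_m) - E(A)."""
    return info(class_counts(rows, target)) - expected_info(rows, attr, target)


def gain_ratio(rows: List[Row], attr: str, target: str) -> float:
    """C4.5-style Gain(A) / SplitInfo(A); SplitInfo is the entropy of A's own values."""
    split_info = info(list(Counter(r[attr] for r in rows).values()))
    return gain(rows, attr, target) / split_info if split_info > 0 else 0.0


def id3(rows: List[Row], attrs: List[str], target: str) -> object:
    """Return a class label (leaf) or a nested dict {attr: {value: subtree}}."""
    counts = Counter(r[target] for r in rows)
    if len(counts) == 1 or not attrs:  # step 2, or STOP: no candidate attributes left
        return counts.most_common(1)[0][0]  # leaf: majority class (our choice)
    best = max(attrs, key=lambda a: gain(rows, a, target))  # step 3
    rest = [a for a in attrs if a != best]
    return {best: {v: id3([r for r in rows if r[best] == v], rest, target)  # steps 4-5
                   for v in {r[best] for r in rows}}}


if __name__ == "__main__":
    print(round(info([9, 5]), 3))  # I(9, 5) for buys_computer yes/no
    # Hypothetical 4-customer table: a unique customer id vs. an ordinary attribute.
    rows = [
        {"id": 1, "region": "N", "buys": "yes"},
        {"id": 2, "region": "N", "buys": "yes"},
        {"id": 3, "region": "S", "buys": "no"},
        {"id": 4, "region": "S", "buys": "no"},
    ]
    for a in ("id", "region"):
        print(a, gain(rows, a, "buys"), gain_ratio(rows, a, "buys"))
    print(id3(rows, ["region"], "buys"))
```

In the toy table, both `id` and `region` reach the maximum gain of 1 bit. The gain ratio, however, halves the score of the unique identifier, which is the bias against many-valued attributes described earlier.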

EXPECTED INFO to classify: $I = -\sum_{i=1}^{m} \frac{rc(P_{C=c_i})}{|X|}\log_2\frac{rc(P_{C=c_i})}{|X|}$, with m = 5 and |X| = 16:

$I = -\left(\tfrac{3}{16}\log_2\tfrac{3}{16} + \tfrac{1}{4}\log_2\tfrac{1}{4} + \tfrac{1}{4}\log_2\tfrac{1}{4} + \tfrac{1}{8}\log_2\tfrac{1}{8} + \tfrac{3}{16}\log_2\tfrac{3}{16}\right) = 2.28$

(If the basic pTree root counts are pre-computed, actually just those of the value pTrees, this is arithmetic!)

| class $c_i$ | $rc(P_{C=c_i})$ | $p_i = rc/16$ | $\log_2 p_i$ | $p_i \log_2 p_i$ |
|---|---|---|---|---|
| 2 | 3 | 0.1875 | −2.415 | −0.4528 |
| 3 | 4 | 0.25 | −2 | −0.5 |
| 7 | 4 | 0.25 | −2 | −0.5 |
| 10 | 2 | 0.125 | −3 | −0.375 |
| 15 | 3 | 0.1875 | −2.415 | −0.4528 |

$-\sum p_i \log_2 p_i = I(c_1, \dots, c_m) = 2.280$

ENTROPY: $E(B_k) = \sum_{j=1}^{v} \frac{s_{1j} + \dots + s_{mj}}{s}\, I(s_{1j}, \dots, s_{mj})$, where $I(s_{1j}, \dots, s_{mj}) = -\sum_{i=1}^{m} p_{ij}\log_2 p_{ij}$, $p_{ij} = s_{ij}/|A_j|$, and $s_{ij} = rc(P_{C=c_i} \wedge P_{B_k=a_j})$. The $|A_j| = s_{1j} + \dots + s_{5j}$ are the root counts of the value pTrees $P_{B_k=a_j}$: 2, 4, 2, 4, 4 for B2 = 2, 3, 7, 10, 11; 6, 2, 8 for B3 = 4, 5, 8; and 11, 5 for B4 = 11, 15.

GAIN(B2) = 2.81 − 0.89 = 1.92; GAIN(B3) = 2.81 − 1.24 = 1.57; GAIN(B4) = 2.81 − 0.557 = 2.53.

So it's all just arithmetic, except for the #Classes × #FeatureValues ANDs and root counts $rc(P_{C=c_i} \wedge P_{B_k=a_j})$. Should these be pre-computed at capture? Are they part of the correlation calculation? Other often-used calculations?

Other speedups include:
1. Use approximate value pTrees: intervalize feature values and use the interval bitmaps (which can be calculated from either bitslice or value-map pTrees).
2. Using bitslice intervals, we'd pre-calculate all, then use the high-order-bit interval bitmaps $P_{i,j,K_j}$, where $K_j$ is the high-order bit of attribute j and $P_{i,j,k} = P_{C=c_i} \wedge P_{j,k}$. For 2nd-high-order-bit intervals, just take $P_{i,j,K_j} \wedge P_{i,j,K_j-1}$, etc.

Aside note: there must be mistakes in the arithmetic above, since I get different GAIN values than on the previous slide. Who can correct?

An RSI dataset S: 16 pixels (4 rows × 4 columns), 4 bands, each band stored as 4 bitslices. Band B1 serves as the class:

| X-Y | B1 | B2 | B3 | B4 | $P_{1,3}$ |
|---|---|---|---|---|---|
| 0,0 | 0011 | 0111 | 1000 | 1011 | 0 |
| 0,1 | 0011 | 0011 | 1000 | 1111 | 0 |
| 0,2 | 0111 | 0011 | 0100 | 1011 | 0 |
| 0,3 | 0111 | 0010 | 0101 | 1011 | 0 |
| 1,0 | 0011 | 0111 | 1000 | 1011 | 0 |
| 1,1 | 0011 | 0011 | 1000 | 1011 | 0 |
| 1,2 | 0111 | 0011 | 0100 | 1011 | 0 |
| 1,3 | 0111 | 0010 | 0101 | 1011 | 0 |
| 2,0 | 0010 | 1011 | 1000 | 1111 | 0 |
| 2,1 | 0010 | 1011 | 1000 | 1111 | 0 |
| 2,2 | 1010 | 1010 | 0100 | 1011 | 1 |
| 2,3 | 1111 | 1010 | 0100 | 1011 | 1 |
| 3,0 | 0010 | 1011 | 1000 | 1111 | 0 |
| 3,1 | 1010 | 1011 | 1000 | 1111 | 1 |
| 3,2 | 1111 | 1010 | 0100 | 1011 | 1 |
| 3,3 | 1111 | 1010 | 0100 | 1011 | 1 |

Value bitmap pTrees: $P_{C=2}$, $P_{C=3}$, $P_{C=7}$, $P_{C=10}$, $P_{C=15}$, $P_{B2=2}$, $P_{B2=3}$, $P_{B2=7}$, $P_{B2=10}$, $P_{B2=11}$, $P_{B3=4}$, $P_{B3=5}$, $P_{B3=8}$, $P_{B4=11}$, $P_{B4=15}$. Bitslice pTrees: $P_{1,3}, P_{1,2}, P_{1,1}, P_{1,0}, \dots, P_{4,3}, P_{4,2}, P_{4,1}, P_{4,0}$; e.g., $P_{1,3}$ (last column above) has rc = 5.
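Since the slide asks who can correct the GAIN arithmetic, here is a minimal recomputation sketch. It assumes B1 is the class label and that the 16 band values are those of the 4×4 RSI dataset on this slide. Value and bitslice pTrees are modeled as plain, uncompressed Python int bitmasks (bit p = pixel p in row-major X-Y order); this is not the pTree library. Each $s_{ij}$ is the root count of one AND $P_{C=c_i} \wedge P_{B_k=a_j}$, so apart from those ANDs everything is arithmetic, as the slide says. Under these assumptions the sketch gives I ≈ 2.28, GAIN(B2) = 1.625, GAIN(B3) ≈ 1.03 and GAIN(B4) ≈ 0.57, which would make B2 the best split.

```python
import math
from typing import Dict, Sequence

# Pixels in row-major X-Y order 0,0 ... 3,3, transcribed from the 16-pixel dataset (B1 = class).
B1 = [3, 3, 7, 7, 3, 3, 7, 7, 2, 2, 10, 15, 2, 10, 15, 15]
B2 = [7, 3, 3, 2, 7, 3, 3, 2, 11, 11, 10, 10, 11, 11, 10, 10]
B3 = [8, 8, 4, 5, 8, 8, 4, 5, 8, 8, 4, 4, 8, 8, 4, 4]
B4 = [11, 15, 11, 11, 11, 11, 11, 11, 15, 15, 11, 11, 15, 15, 11, 11]


def bitslice(column: Sequence[int], k: int) -> int:
    """Bitslice P_{band,k}: bit p is set when bit k of pixel p's value is 1."""
    return sum(1 << p for p, v in enumerate(column) if (v >> k) & 1)


def value_ptrees(column: Sequence[int]) -> Dict[int, int]:
    """Value bitmap P_{band=a} for each value a that actually occurs in the column."""
    maps: Dict[int, int] = {}
    for p, v in enumerate(column):
        maps[v] = maps.get(v, 0) | (1 << p)
    return maps


def rc(bitmap: int) -> int:
    """Root count: number of 1-bits."""
    return bin(bitmap).count("1")


def info(counts: Sequence[int]) -> float:
    s = sum(counts)
    return -sum(c / s * math.log2(c / s) for c in counts if c > 0)


def gain(class_col: Sequence[int], feature_col: Sequence[int]) -> float:
    pc = value_ptrees(class_col)    # P_{C=c_i}
    pf = value_ptrees(feature_col)  # P_{B_k=a_j}
    n = len(class_col)
    expected = 0.0
    for pa in pf.values():
        s_j = [rc(pci & pa) for pci in pc.values()]  # s_ij = rc(P_{C=c_i} AND P_{B_k=a_j})
        expected += rc(pa) / n * info(s_j)           # rc(pa) = |A_j|
    return info([rc(p) for p in pc.values()]) - expected


if __name__ == "__main__":
    print("rc(P1,3) =", rc(bitslice(B1, 3)))
    print("I =", info([rc(p) for p in value_ptrees(B1).values()]))
    for name, band in (("B2", B2), ("B3", B3), ("B4", B4)):
        print(f"GAIN({name}) =", gain(B1, band))
```

Only the #Classes × #FeatureValues ANDs and their root counts touch the bitmaps, so pre-computing those root counts at capture time would reduce the whole gain computation to the arithmetic in `info`.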

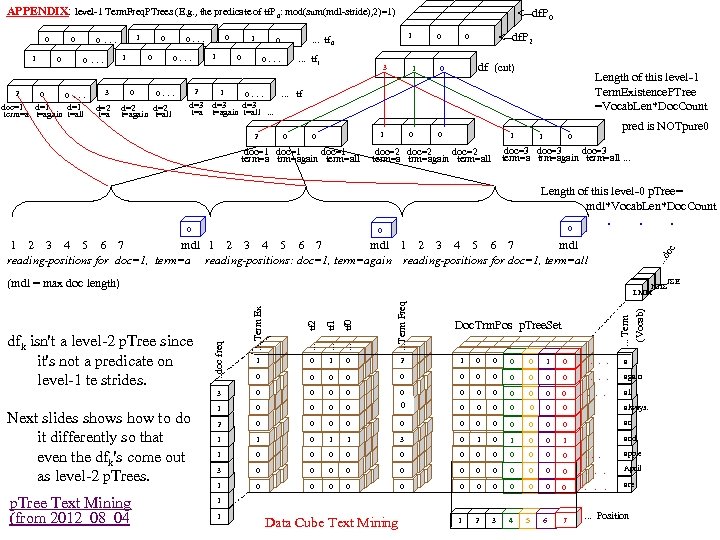

APPENDIX: level-1 TermFreq pTrees (e.g., the predicate of tfP0: mod(sum(mdl-stride), 2) = 1). JSE HHS LMM pTree Text Mining (from 2012_08_04 …).

[Figure: Data Cube Text Mining, the DocTrmPos pTreeSet: doc × term (vocabulary a, again, all, always, an, and, apple, April, are, …) × reading position (1, 2, 3, …), where mdl = max doc length.]

- Level-0 pTree: length = mdl × VocabLen × DocCount; each mdl-stride holds the reading positions of one term in one document (reading positions for doc = 1, term = a; doc = 1, term = again; doc = 1, term = all; …).
- Level-1 TermExistence pTree: the predicate NOTpure0 applied to each mdl-stride; length = VocabLen × DocCount.
- Level-1 TermFreq pTrees tfP0, tfP1, tfP2: the bitslices of the term frequency, laid out doc by doc (doc = 1: term = a, again, all, …; doc = 2: …; doc = 3: …).
- Document frequency df (count) and its bitslices dfP0, …, dfP3. dfk isn't a level-2 pTree, since it's not a predicate on level-1 te strides. The next slide shows how to do it differently, so that even the dfk's come out as level-2 pTrees.
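Here is a minimal sketch of how the level-1 pTrees on this slide follow from the level-0 position bits, using two rhymes from this deck (Little Miss Muffet, Humpty Dumpty) as the documents. Assumptions (ours, not the deck's code): pTrees are uncompressed Python bit lists, tokens are white-space-separated strings, mdl = 30 (the deck's UDL), the level-1 layout is doc-major as drawn here, and frequencies of 2^nbits or more would lose their high bits.

```python
from typing import Dict, List, Tuple

MDL = 30  # reading positions per stride; assumed equal to the deck's UDL of 30 words

MUFFET = ("Little Miss Muffet sat on a tuffet, eating of curds and whey. There came a big "
          "spider and sat down beside her and frightened Miss Muffet away.")
HUMPTY = ("Humpty Dumpty sat on a wall. Humpty Dumpty had a great fall. All the Kings horses, "
          "and all the Kings men cannot put Humpty Dumpty together again.")


def level0(docs: List[str], vocab: List[str], mdl: int = MDL) -> Dict[Tuple[int, str], List[int]]:
    """Level-0 strides: for each (doc, term), one bit per reading position 1..mdl."""
    strides = {}
    for d, text in enumerate(docs, start=1):
        words = text.split()[:mdl]  # white-space-separated strings, truncated to mdl
        for t in vocab:
            bits = [1 if w == t else 0 for w in words]
            strides[(d, t)] = bits + [0] * (mdl - len(bits))
    return strides


def level1(strides: Dict[Tuple[int, str], List[int]], doc_count: int,
           vocab: List[str], nbits: int = 3):
    """Doc-major level-1 pTrees (length DocCount * VocabLen): te and tfP0..tfP{nbits-1}."""
    te: List[int] = []
    tfp: List[List[int]] = [[] for _ in range(nbits)]
    for d in range(1, doc_count + 1):
        for t in vocab:
            s = sum(strides[(d, t)])         # tf = number of 1s in the mdl-stride
            te.append(1 if s > 0 else 0)     # predicate NOTpure0
            for k in range(nbits):
                tfp[k].append((s >> k) & 1)  # tfP0 predicate: mod(s, 2) = 1
    return te, tfp


if __name__ == "__main__":
    vocab = ["a", "again.", "and", "away.", "beside"]
    te, tfp = level1(level0([MUFFET, HUMPTY], vocab), 2, vocab)
    print("te  ", te)
    print("tfP1", tfp[1])
    print("tfP0", tfp[0])
```

For Little Miss Muffet this yields tf(a) = 2, tf(and) = 3, tf(away.) = tf(beside) = 1, consistent with the Mother Goose pBase table in the appendix.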

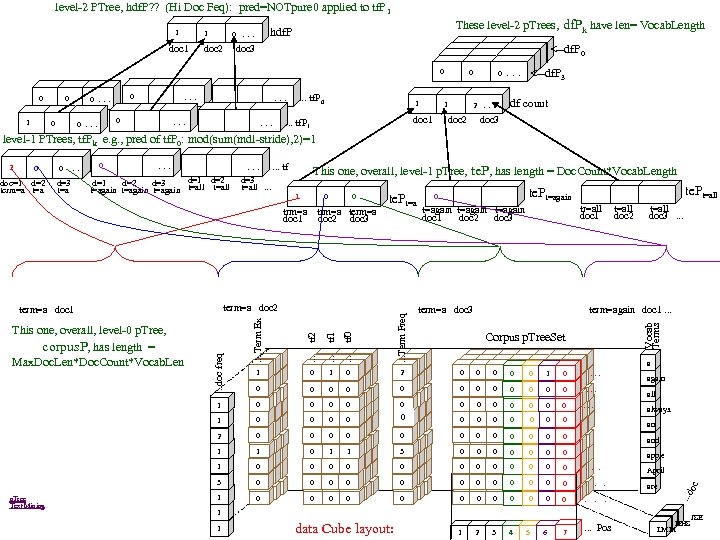

Level-2 pTree hdfP (Hi Doc Freq): predicate = NOTpure0 applied to tfP1. These level-2 pTrees, dfPk (dfP0, …, dfP3, the bitslices of the df count), have length = VocabLength.

- Level-1 pTrees tfPk, e.g., the predicate of tfP0: mod(sum(mdl-stride), 2) = 1; laid out term by term (term = a: doc 1, doc 2, doc 3, …; term = again: …; term = all: …).
- This overall level-1 pTree, teP, has length = DocCount × VocabLength; it is the concatenation of $teP_{t=a}$, $teP_{t=again}$, $teP_{t=all}$, …, each with one bit per document.
- This overall level-0 pTree, corpusP, has length = MaxDocLen × DocCount × VocabLen.

[Figure: Corpus pTreeSet, data cube layout (Vocab Terms a, again, all, always, an, and, apple, April, are, … × docs × Pos 1, 2, …, 7, …), with term freq, doc freq, and tf0, tf1, tf2. JSE HHS LMM pTree Text Mining.]
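With the term-major layout, each term's level-1 stride runs over the documents, so document frequency becomes a root count per stride and its bitslices dfPk have one bit per vocabulary term. A minimal sketch follows. Assumptions: the term frequencies below are hypothetical toy values, pTrees are plain bit lists, and hdfP is read literally as NOTpure0 applied to each term's tfP1 stride.

```python
from typing import Dict, List

# Hypothetical term frequencies tf[term][doc] for a 3-document corpus (illustration only).
TF: Dict[str, List[int]] = {
    "a": [2, 0, 1],
    "again": [0, 0, 1],
    "all": [0, 0, 0],
    "and": [3, 1, 2],
}


def level2(tf: Dict[str, List[int]]):
    """Term-major layout: each term's level-1 stride runs over the documents."""
    terms = list(tf)
    n_docs = len(next(iter(tf.values())))
    nbits = max(1, n_docs.bit_length())                        # enough bits for df <= n_docs
    te = {t: [1 if f > 0 else 0 for f in tf[t]] for t in terms}           # level-1 teP strides
    df = {t: sum(te[t]) for t in terms}                        # doc freq = root count of te stride
    dfp = [[(df[t] >> k) & 1 for t in terms] for k in range(nbits)]       # dfPk, len VocabLength
    tfp1 = {t: [(f >> 1) & 1 for f in tf[t]] for t in terms}              # level-1 tfP1 strides
    hdfp = [1 if any(tfp1[t]) else 0 for t in terms]           # NOTpure0 on each tfP1 stride
    return df, dfp, hdfp


if __name__ == "__main__":
    df, dfp, hdfp = level2(TF)
    print("df  ", df)
    for k, bits in enumerate(dfp):
        print(f"dfP{k}", bits)
    print("hdfP", hdfp)
```

Because every level-2 bit here is a predicate on one contiguous level-1 stride, the dfPk come out as level-2 pTrees, which is exactly what the doc-major layout of the previous slide could not provide.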

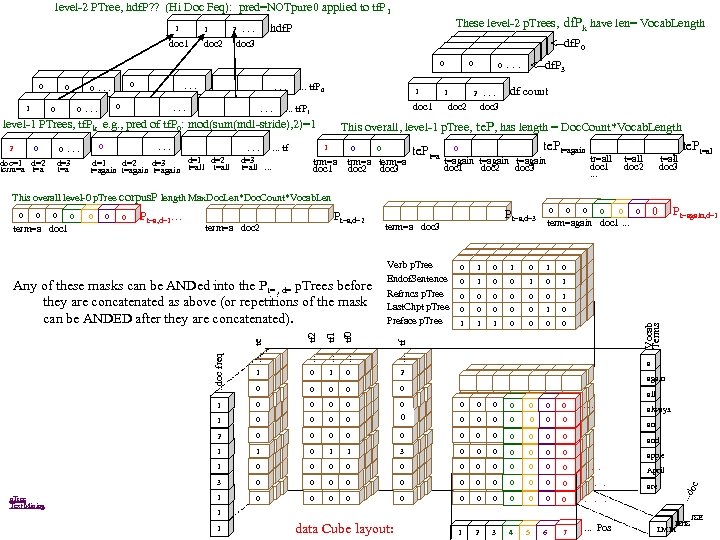

Level-2 pTree hdfP (Hi Doc Freq): predicate = NOTpure0 applied to tfP1. These level-2 pTrees, dfPk, have length = VocabLength.

- Level-1 pTrees tfPk, e.g., the predicate of tfP0: mod(sum(mdl-stride), 2) = 1.
- This overall level-1 pTree, teP, has length = DocCount × VocabLength ($teP_{t=a}$, $teP_{t=again}$, $teP_{t=all}$, …, each over doc 1, doc 2, doc 3).
- This overall level-0 pTree, corpusP, has length = MaxDocLen × DocCount × VocabLen; it is the concatenation of the position pTrees $P_{t=a,d=1}$, $P_{t=a,d=2}$, $P_{t=a,d=3}$, $P_{t=again,d=1}$, ….
- Verb pTree, EndOfSentence pTree, References pTree, LastChapter pTree, Preface pTree: any of these masks can be ANDed into the $P_{t=,d=}$ pTrees before they are concatenated as above (or repetitions of the mask can be ANDed after they are concatenated).

[Figure: pTree Text Mining, data cube layout (Vocab Terms a, again, all, always, an, and, apple, April, are, … × docs × Pos 1, 2, …, 7, …), with doc freq and tf0, tf1, tf2. JSE HHS LMM]
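The mask remark (AND a mask into each $P_{t,d}$ stride before concatenating, or AND repetitions of the mask after concatenating) can be checked with a tiny sketch. Assumptions: the mask has one bit per reading position (length mdl) and is the same for every stride, pTrees are plain bit lists, and the "Preface"-style mask and the strides are hypothetical.

```python
from typing import List

Bits = List[int]


def concat(strides: List[Bits]) -> Bits:
    """Concatenate P_{t,d} strides into one long pTree, as in the data-cube layout."""
    return [b for s in strides for b in s]


def and_bits(x: Bits, y: Bits) -> Bits:
    return [a & b for a, b in zip(x, y)]


def mask_before(strides: List[Bits], mask: Bits) -> Bits:
    """AND the mask into each P_{t,d} stride, then concatenate."""
    return concat([and_bits(s, mask) for s in strides])


def mask_after(strides: List[Bits], mask: Bits) -> Bits:
    """Concatenate first, then AND with the mask repeated once per stride."""
    return and_bits(concat(strides), mask * len(strides))


if __name__ == "__main__":
    # Hypothetical: mdl = 6 positions, three P_{t,d} strides, and a "Preface"-style mask
    # that keeps only reading positions 1-3.
    strides = [[1, 0, 0, 1, 0, 0], [0, 1, 1, 0, 0, 0], [0, 0, 0, 0, 1, 1]]
    preface = [1, 1, 1, 0, 0, 0]
    print(mask_before(strides, preface) == mask_after(strides, preface))
```

A per-document mask (e.g., an EndOfSentence pTree that differs by document) would need to be repeated per stride in the matching document order instead; the sketch does not cover that case.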

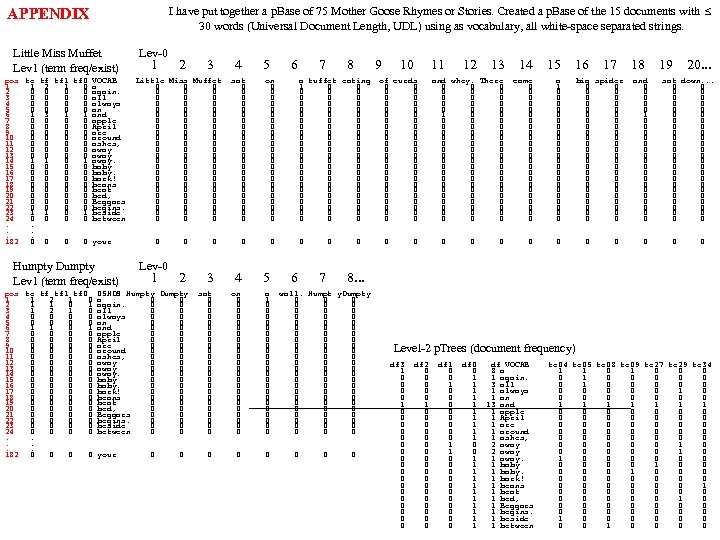

I have put together a pBase of 75 Mother Goose Rhymes or Stories. I created a pBase of the 15 documents with 30 words each (Universal Document Length, UDL), using all white-space-separated strings as the vocabulary.

APPENDIX: level-1 pTrees (term existence te, term frequency tf, and its bitslices tf1, tf0) over the vocabulary (positions 1..182: `a`, `again.`, `all`, `always`, `an`, `and`, `apple`, `April`, `are`, `around`, `ashes,`, `away`, …, `your`). Nonzero entries among the first 24 vocabulary positions:

| Document | pos | term | te | tf | tf1 | tf0 |
|---|---|---|---|---|---|---|
| 04 Little Miss Muffet | 1 | `a` | 1 | 2 | 1 | 0 |
| 04 Little Miss Muffet | 6 | `and` | 1 | 3 | 1 | 1 |
| 04 Little Miss Muffet | 14 | `away.` | 1 | 1 | 0 | 1 |
| 04 Little Miss Muffet | 23 | `beside` | 1 | 1 | 0 | 1 |
| 05 Humpty Dumpty | 1 | `a` | 1 | 2 | 1 | 0 |
| 05 Humpty Dumpty | 2 | `again.` | 1 | 1 | 0 | 1 |
| 05 Humpty Dumpty | 3 | `all` | 1 | 2 | 1 | 0 |
| 05 Humpty Dumpty | 6 | `and` | 1 | 1 | 0 | 1 |

Level-0 pTrees: for each document, one bit per (vocabulary term, reading position 1..30), shown for Little Miss Muffet and Humpty Dumpty.

Level-2 pTrees (document frequency): df and its bitslices df3, df2, df1, df0; e.g., df(`a`) = 8, df(`again.`) = 1, df(`all`) = 3, df(`always`) = 1, df(`an`) = 1, df(`and`) = 13 (term-existence columns shown for documents 04, 05, 08, 09, 27, 29, 34).

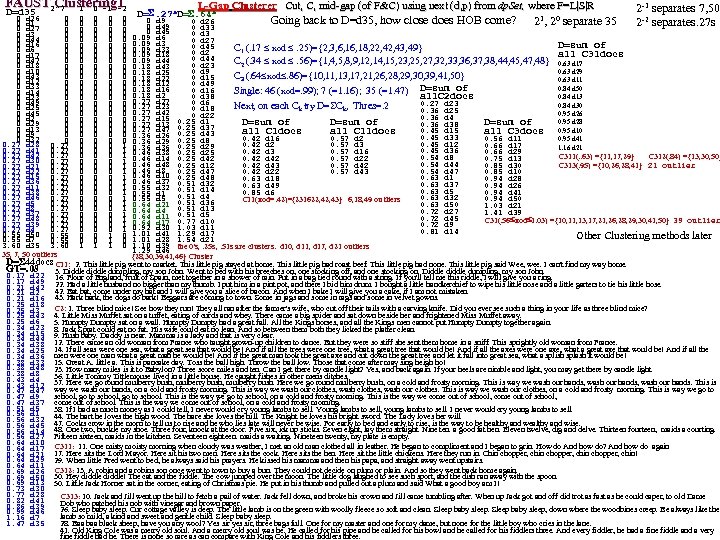

FAUST Clustering 1: L-Gap Clusterer. Cut, C, at mid-gap (of F&C) using the next (d, p) from dpSet, where F = L|S|R.

[Figure: sorted projection values xod of each document d on several directions D, with their high-order-bit (2^1, 2^0, 2^−1, 2^−2) breakdown.]

D = d35: 2^−1 separates 7, 50; 2^−2 separates the .27s. Going back to D = d35, how close does HOB come? 2^1, 2^0 separate 35.
- C1 (.17 ≤ xod ≤ .25) = {2, 3, 6, 18, 22, 43, 49}
- C2 (.34 ≤ xod ≤ .56) = {1, 4, 5, 8, 9, 12, 14, 15, 23, 25, 27, 32, 33, 36, 37, 38, 44, 45, 47, 48}
- C3 (.64 ≤ xod ≤ .86) = {10, 11, 13, 17, 21, 26, 28, 29, 30, 39, 41, 50}
- Singletons: 46 (xod = .99); 7 (xod = 1.16); 35 (xod = 1.47)

Next, on each Ck try D = sum of all Ck docs, Thres = .2:
- D = sum of all C1 docs: C11 (xod = .42); 6, 18, 49 are outliers.
- D = sum of all C3 docs: C31 (.56 ≤ xod ≤ 1.03) = {10, 11, 13, 17, 21, 26, 28, 29, 30, 41, 50}; 39 is an outlier.
- D = sum of all C31 docs: C311 (.63) = {11, 17, 29}; C312 (.84) = {13, 30, 50}; C313 (.95) = {10, 26, 28, 41}; 21 is an outlier.

Other notes from the projections: the 0s, .25s, .51s are clusters; d10, d11, d17, d21 are outliers; 35, 7, 50 are outliers; {28, 30, 39, 41, 46}; cluster D = 44 docs; GT = .08. Other clustering methods later.

C11: 2. This little pig went to market. This little pig stayed at home. This little pig had roast beef. This little pig had none. This little pig said Wee, wee. I can't find my way home. 3. Diddle dumpling, my son John. Went to bed with his breeches on, one stocking off, and one stocking on. Diddle dumpling, my son John. 16. Flour of England, fruit of Spain, met together in a shower of rain. Put in a bag tied round with a string. If you'll tell me this riddle, I will give you a ring. 22. Had a little husband no bigger than my thumb. I put him in a pint pot, and there I bid him drum. I bought a little handkerchief to wipe his little nose and a little garters to tie his little hose. 42. Bat bat, come under my hat and I will give you a slice of bacon. And when I bake I will give you a cake, if I am not mistaken. 43. Hark hark, the dogs do bark! Beggars are coming to town. Some in jags and some in rags and some in velvet gowns.

C2: 1. Three blind mice! See how they run! They all ran after the farmer's wife, who cut off their tails with a carving knife. Did you ever see such a thing in your life as three blind mice? 4. Little Miss Muffet sat on a tuffet, eating of curds and whey. There came a big spider and sat down beside her and frightened Miss Muffet away. 5. Humpty Dumpty sat on a wall. Humpty Dumpty had a great fall. All the Kings horses, and all the Kings men cannot put Humpty Dumpty together again. 8. Jack Sprat could eat no fat. His wife could eat no lean. And so between them both they licked the platter clean. 9. Hush baby. Daddy is near. Mamma is a lady and that is very clear. 12. There came an old woman from France who taught grown-up children to dance. But they were so stiff she sent them home in a sniff. This sprightly old woman from France. 14. If all seas were one sea, what a great sea that would be! And if all the trees were one tree, what a great tree that would be! And if all the axes were one axe, what a great axe that would be! And if all the men were one man what a great man he would be! And if the great man took the great axe and cut down the great tree and let it fall into great sea, what a splish splash it would be! 15. Great A. little a. This is pancake day. Toss the ball high. Throw the ball low. Those that come after may sing heigh ho! 23. How many miles is it to Babylon? Three score miles and ten. Can I get there by candle light? Yes, and back again. If your heels are nimble and light, you may get there by candle light. 36. Little Tommy Tittlemouse lived in a little house. He caught fishes in other mens ditches. 37. Here we go round mulberry bush, mulberry bush. Here we go round mulberry bush, on a cold and frosty morning. This is way we wash our hands, wash our hands. This is way we wash our hands, on a cold and frosty morning. This is way we wash our clothes, wash our clothes. This is way we wash our clothes, on a cold and frosty morning. This is way we go to school, go to school. This is the way we go to school, on a cold and frosty morning. This is the way we come out of school, come out of school. This is the way we come out of school, on a cold and frosty morning. 38. If I had as much money as I could tell, I never would cry young lambs to sell. Young lambs to sell, young lambs to sell. I never would cry young lambs to sell. 44. The hart he loves the high wood. The hare she loves the hill. The Knight he loves his bright sword. The Lady loves her will. 47. Cocks crow in the morn to tell us to rise and he who lies late will never be wise. For early to bed and early to rise, is the way to be healthy and wise. 48. One two, buckle my shoe. Three four, knock at the door. Five six, pick up sticks. Seven eight, lay them straight. Nine ten. a good fat hen. Eleven twelve, dig and delve. Thirteen fourteen, maids a courting. Fifteen sixteen, maids in the kitchen. Seventeen eighteen. maids a waiting. Nineteen twenty, my plate is empty.

C311: 11. One misty morning when cloudy was weather, I met an old man clothed all in leather. He began to compliment and I began to grin. How do And how do? And how do again 17. Here sits the Lord Mayor. Here sit his two men. Here sits the cock. Here sits the hen. Here sit the little chickens. Here they run in. Chin chopper, chin! 29. When little Fred went to bed, he always said his prayers. He kissed his mamma and then his papa, and straight away went upstairs.

C312: 13. A robin and a robins son once went to town to buy a bun. They could not decide on plum or plain. And so they went back home again. 30. Hey diddle! The cat and the fiddle. The cow jumped over the moon. The little dog laughed to see such sport, and the dish ran away with the spoon. 50. Little Jack Horner sat in the corner, eating of Christmas pie. He put in his thumb and pulled out a plum and said What a good boy am I!

C313: 10. Jack and Jill went up the hill to fetch a pail of water. Jack fell down, and broke his crown and Jill came tumbling after. When up Jack got and off did trot as fast as he could caper, to old Dame Dob who patched his nob with vinegar and brown paper. 26. Sleep baby sleep. Our cottage valley is deep. The little lamb is on the green with woolly fleece so soft and clean. Sleep baby sleep, down where the woodbines creep. Be always like the lamb so mild, a kind and sweet and gentle child. Sleep baby sleep. 28. Baa black sheep, have you any wool? Yes sir yes sir, three bags full. One for my master and one for my dame, but none for the little boy who cries in the lane. 41. Old King Cole was a merry old soul. And a merry old soul was he. He called for his pipe and he called for his bowl and he called for his fiddlers three. And every fiddler, he had a fine fiddle and a very fine fiddle had he. There is none so rare as can compare with King Cole and his fiddlers three.
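A minimal sketch of the one-dimensional gap-cut step used on this slide: project each document vector onto a direction D, sort the projections xod, and cut at the mid-gap wherever consecutive values differ by more than a gap threshold (the slide mentions GT = .08). Assumptions: xod is the dot product with the unit vector along D, the projection values in the usage example are hypothetical, and FAUST's dpSet iteration and pTree-based computation of the projections are not reproduced. Singleton clusters in the result play the role of the outliers above.

```python
import math
from typing import Dict, List, Sequence, Tuple


def project(vectors: Dict[int, Sequence[float]], direction: Sequence[float]) -> Dict[int, float]:
    """xod = x . D / |D| for each document vector x."""
    norm = math.sqrt(sum(c * c for c in direction))
    return {doc: sum(a * b for a, b in zip(v, direction)) / norm for doc, v in vectors.items()}


def gap_clusters(xod: Dict[int, float], gap_threshold: float) -> Tuple[List[List[int]], List[float]]:
    """Sort projections; cut at mid-gap wherever consecutive values differ by > gap_threshold."""
    if not xod:
        return [], []
    items = sorted(xod.items(), key=lambda kv: kv[1])
    clusters: List[List[int]] = [[items[0][0]]]
    cuts: List[float] = []
    for (_, prev), (doc, val) in zip(items, items[1:]):
        if val - prev > gap_threshold:
            cuts.append((prev + val) / 2)  # cut C at mid-gap
            clusters.append([])
        clusters[-1].append(doc)
    return clusters, cuts


if __name__ == "__main__":
    # Hypothetical projection values for six documents.
    xod = {1: 0.17, 2: 0.21, 3: 0.25, 4: 0.34, 5: 0.38, 6: 0.99}
    print(gap_clusters(xod, 0.08))
```

Re-running `gap_clusters` on `project(...)` with D set to the sum of a cluster's document vectors mirrors the "on each Ck try D = sum of all Ck docs" refinement step.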

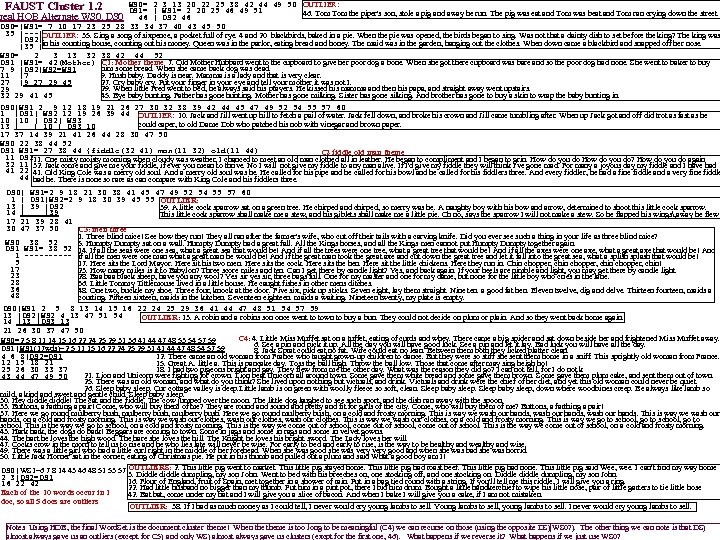

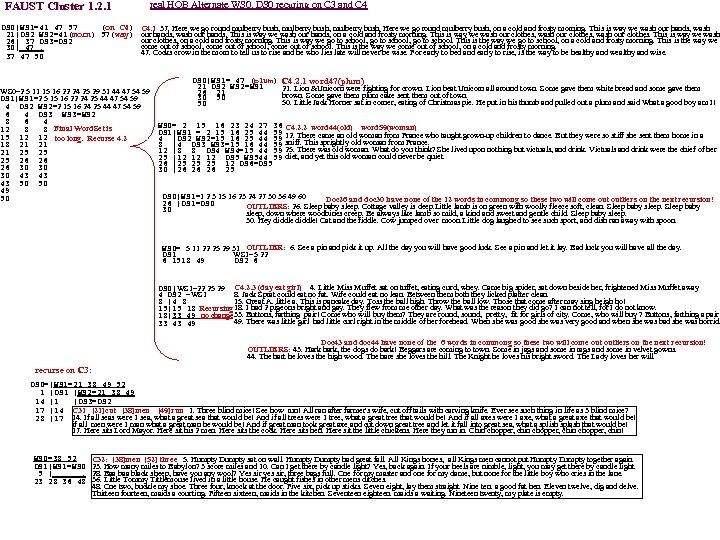

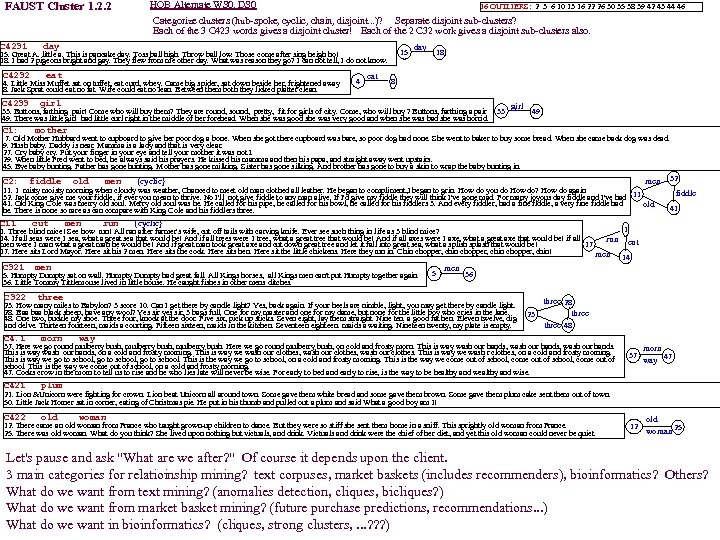

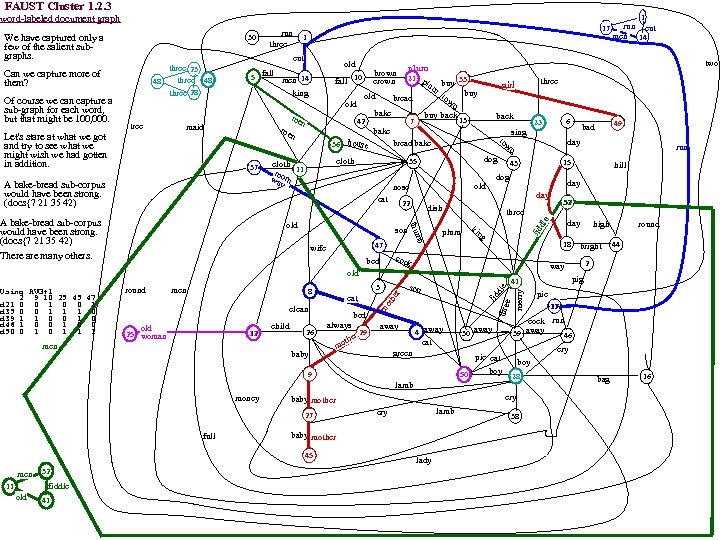

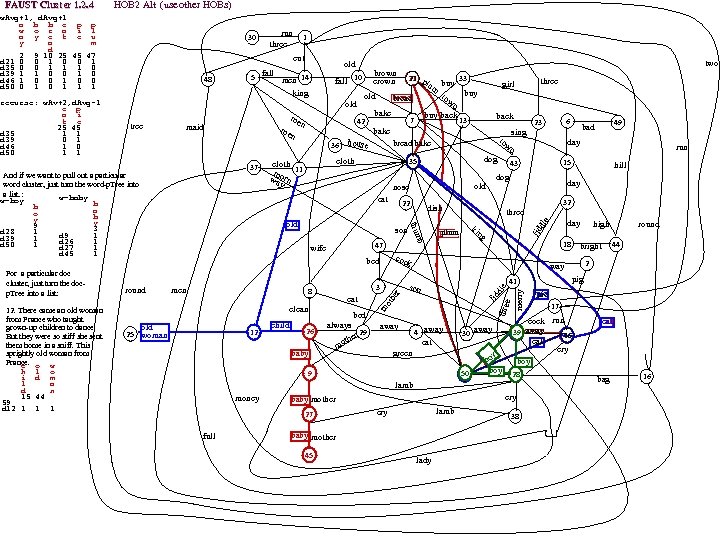

FAUST Cluster 1.2

WS0 = 2 3 13 20 22 25 38 42 44 49 50 | DS1 = 46 | WS1 = 2 20 25 46 49 51 | DS2 = 46. OUTLIER: 46. Tom the piper's son, stole a pig and away he run. The pig was eat and Tom was beat and Tom ran crying down the street.

DS0 | WS1 = 7 10 17 23 25 28 33 34 37 40 43 45 50 | DS1 = 35 | DS2 = 35. OUTLIER: 35. Sing a song of sixpence, a pocket full of rye. 4 and 20 blackbirds, baked in a pie. When the pie was opened, the birds began to sing. Was not that a dainty dish to set before the king? The king was in his counting house, counting out his money. Queen was in the parlor, eating bread and honey. The maid was in the garden, hanging out the clothes. When down came a blackbird and snapped off her nose.

C1: Mother theme. WS0 = 2 3 13 32 38 42 44 52 | DS1 = 7 9 11 27 29 32 41 45 | WS1 = 42 (Mother) | DS2 = 7 9 27 29 45 | WS2 = WS1. 7. Old Mother Hubbard went to the cupboard to give her poor dog a bone. When she got there cupboard was bare and so the poor dog had none. She went to baker to buy him some bread. When she came back dog was dead. 9. Hush baby. Daddy is near. Mamma is a lady and that is very clear. 27. Cry baby cry. Put your finger in your eye and tell your mother it was not I. 29. When little Fred went to bed, he always said his prayers. He kissed his mamma and then his papa, and straight away went upstairs. 45. Bye baby bunting. Father has gone hunting. Mother has gone milking. Sister has gone silking. And brother has gone to buy a skin to wrap the baby bunting in.

DS0 | WS1 = 2 9 12 18 19 21 26 27 30 32 38 39 42 44 45 47 49 52 54 55 57 60 | DS1 = 1 10 13 14 17 21 26 28 30 37 39 41 44 47 50 | WS2 = 12 19 26 39 44 | DS2 = 10 | WS3 | DS3 = 10. OUTLIER: 10. Jack and Jill went up hill to fetch a pail of water. Jack fell down, and broke his crown and Jill came tumbling after. When up Jack got and off did trot as fast as he could caper, to old Dame Dob who patched his nob with vinegar and brown paper.

C2: fiddle old man theme. WS0 = 22 38 44 52 | DS1 = 11 32 41 44 | WS1 = 27 38 44 {fiddle (32 41), man (11 32), old (11 44)} | DS2 = 11 32 41. 11. One misty morning when cloudy was weather, I chanced to meet an old man clothed all in leather. He began to compliment and I began to grin. How do you do? How do you do again 32. Jack come and give me your fiddle, if ever you mean to thrive. No I will not give my fiddle to any man alive. If I'd give my fiddle they will think I've gone mad. For many a joyous day my fiddle and I have had 41. Old King Cole was a merry old soul. And a merry old soul was he. He called for his pipe and he called for his bowl and he called for his fiddlers three. And every fiddler, he had a fine fiddle and a very fine fiddle had he. There is none so rare as can compare with King Cole and his fiddlers three.

Real HOB Alternate: WS0, DS0 | WS1 = 2 9 18 21 30 38 41 45 47 49 52 54 55 57 60 | DS1 = 1 13 14 17 21 28 30 37 39 41 47 50 | WS2 = 2 9 18 30 39 45 55 | DS2 = 39. OUTLIER: 39. A little cock sparrow sat on a green tree. He chirped and chirped, so merry was he. A naughty boy with his bow and arrow, determined to shoot this little cock sparrow. This little cock sparrow shall make me a stew, and his giblets shall make me a little pie. Oh no, says the sparrow I will not make a stew. So he flapped his wings, away he flew

C3: men three. WS0 = 38 52 | DS1 = 1 5 14 17 23 28 36 48 | WS1 = 38 52. 1. Three blind mice! See how they run! They all ran after the farmer's wife, who cut off their tails with a carving knife. Did you ever see such a thing in your life as three blind mice? 5. Humpty Dumpty sat on a wall. Humpty Dumpty had a great fall. All the Kings horses, and all the Kings men cannot put Humpty Dumpty together again. 14. If all the seas were one sea, what a great sea that would be! And if all the trees were one tree, what a great tree that would be! And if all the axes were one axe, what a great axe that would be! And if all the men were one man what a great man he would be! And if the great man took the great axe and cut down the great tree and let it fall into the great sea, what a splish splash that would be! 17. Here sits the Lord Mayor. Here sit his two men. Here sits the cock. Here sits the hen. Here sit the little chickens. Here they run in. Chin chopper, chin! 23. How many miles is it to Babylon? Three score miles and ten. Can I get there by candle light? Yes, and back again. If your heels are nimble and light, you may get there by candle light. 28. Baa black sheep, have you any wool? Yes sir yes sir, three bags full. One for my master and one for my dame, but none for the little boy who cries in the lane. 36. Little Tommy Tittlemouse lived in a little house. He caught fishes in other mens ditches. 48. One two, buckle my shoe. Three four, knock at the door. Five six, pick up sticks. Seven eight, lay them straight. Nine ten. a good fat hen. Eleven twelve, dig and delve. Thirteen fourteen, maids a courting. Fifteen sixteen, maids in the kitchen. Seventeen eighteen. maids a waiting. Nineteen twenty, my plate is empty.

DS0 | WS1 = 2 5 8 13 14 15 16 22 24 25 29 36 41 44 47 48 51 54 57 59 | DS1 = 13 14 21 26 30 37 47 50 | DS2 = 13 | WS2 = 4 13 47 51 54 | DS3 = 13. OUTLIER: 13. A robin and a robins son once went to town to buy a bun. They could not decide on plum or plain. And so they went back home again.

C4: WS0 = 2 5 8 11 14 15 16 22 24 25 29 31 36 41 44 47 48 53 54 57 59 | DS1 = 4 6 8 12 15 18 21 25 26 30 33 37 43 44 47 49 50 | WS1 (17 words) = 2 5 11 15 16 22 24 25 29 31 41 44 47 48 54 57 59 | DS2 = DS1. 4. Little Miss Muffet sat on a tuffet, eating of curds and whey. There came a big spider and sat down beside her and frightened Miss Muffet away. 6. See a pin and pick it up. All the day you will have good luck. See a pin and let it lay. Bad luck you will have all the day. 8. Jack Sprat could eat no fat. Wife could eat no lean. Between them both they licked platter clean. 12. There came an old woman from France who taught grown-up children to dance. But they were so stiff she sent them home in a sniff. This sprightly old woman from France. 15. Great A. little a. This is pancake day. Toss the ball high. Throw the ball low. Those that come after may sing heigh ho! 18. I had two pigeons bright and gay. They flew from me the other day. What was the reason they did go? I can not tell, for I do not know. 21. Lion and Unicorn were fighting for crown. Lion beat Unicorn all around town. Some gave them white bread and some gave them brown. Some gave them plum cake, and sent them out of town. 25. There was an old woman, and what do you think? She lived upon nothing but victuals, and drink. Victuals and drink were the chief of her diet, and yet this old woman could never be quiet. 26. Sleep baby sleep. Our cottage valley is deep. Little lamb is on green with woolly fleece so soft, clean. Sleep baby sleep, down where woodbines creep. Be always like lamb so mild, a kind and sweet and gentle child. Sleep baby sleep. 30. Hey diddle! The cat and the fiddle. The cow jumped over the moon. The little dog laughed to see such sport, and the dish ran away with the spoon. 33. Buttons, a farthing a pair! Come, who will buy them of me? They are round and sound and pretty and fit for girls of the city. Come, who will buy them of me? Buttons, a farthing a pair! 37. Here we go round mulberry bush, mulberry bush. Here we go round mulberry bush, on a cold and frosty morning. This is way we wash our hands, wash our hands. This is way we wash our hands, on a cold and frosty morning. This is way we wash our clothes, wash our clothes. This is way we wash our clothes, on a cold and frosty morning. This is way we go to school, go to school. This is the way we go to school, on a cold and frosty morning. This is the way we come out of school, come out of school. This is the way we come out of school, on a cold and frosty morning. 43. Hark hark, the dogs do bark! Beggars are coming to town. Some in jags and some in rags and some in velvet gowns. 44. The hart he loves the high wood. The hare she loves the hill. The Knight he loves his bright sword. The Lady loves her will. 47. Cocks crow in the morn to tell us to rise and he who lies late will never be wise. For early to bed and early to rise, is the way to be healthy and wise. 49. There was a little girl who had a little curl right in the middle of her forehead. When she was good she was very good and when she was bad she was horrid. 50. Little Jack Horner sat in the corner, eating of Christmas pie. He put in his thumb and pulled out a plum and said What a good boy am I!

DS0 | WS1 = 6 7 8 14 43 46 48 51 53 57 | DS1 = 2 3 16 22 42 | DS2 = DS1. OUTLIERS: each of the 10 words occurs in only 1 doc, so all 5 docs are outliers. 2. This little pig went to market. This little pig stayed home. This little pig had roast beef. This little pig had none. This little pig said Wee, wee. I can't find my way home 3. Diddle dumpling, my son John. Went to bed with his breeches on, one stocking off, and one stocking on. Diddle dumpling, my son John. 16. Flour of England, fruit of Spain, met together in a shower of rain. Put in a bag tied round with a string. If you'll tell me this riddle, I will give you a ring. 22. Had little husband no bigger than my thumb. Put him in a pint pot, there I bid him drum. Bought a little handkerchief to wipe his little nose, pair of little garters to tie little hose 42. Bat bat, come under my hat and I will give you a slice of bacon. And when I bake I will give you a cake, if I am not mistaken.

OUTLIER: 38. If I had as much money as I could tell, I never would cry young lambs to sell. Young lambs to sell, young lambs to sell. I never would cry young lambs to sell.

Notes: Using HOB, the final WordSet is the document cluster theme! When the theme is too long to be meaningful (C4), we can recurse on those (using the opposite, DS0|WS0?).
The other thing we can note is that DS0 almost always gave us outliers (except for C5), whereas WS0 almost always gave us clusters (except for the first one, 46). What happens if we reverse it? What happens if we just use WS0?
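The FAUST Cluster 1.2 slide alternates between a WordSet and a DocSet (WS0, DS1, WS1, DS2, ...) and stops when a set repeats, as in "WS2 = WS1" or "DS2 = DS1". A minimal sketch of that loop on a binary document-word matrix follows; it only covers runs that start from WS0. The selection rule is an assumption: the source only says "HOB", which is read here, hypothetically, as "keep every item whose count has the same high-order bit as the largest count". The names `hob_threshold`, `alternate` and `all_outliers`, and the toy corpus, are illustrative only.

```python
def hob_threshold(counts):
    """ASSUMPTION: 'HOB' read as high-order bit. Keep every key whose count
    is at least the largest power of two not exceeding the maximum count."""
    top = max(counts.values(), default=0)
    if top == 0:
        return set()
    cut = 1 << (top.bit_length() - 1)  # value of the maximum's high-order bit
    return {k for k, c in counts.items() if c >= cut}


def alternate(doc_words, ws0, max_iter=20):
    """Alternate WordSet -> DocSet -> WordSet until the WordSet repeats.

    doc_words: dict mapping a document id to its set of words (a binary
    document-word matrix); ws0: the starting WordSet (WS0).
    """
    ws, ds = set(ws0), set()
    for _ in range(max_iter):
        # DS(k+1): documents whose overlap with WS(k) passes the HOB cut
        ds = hob_threshold({d: len(w & ws) for d, w in doc_words.items()})
        # WS(k+1): words whose document count within DS(k+1) passes the HOB cut
        word_counts = {}
        for d in ds:
            for w in doc_words[d]:
                word_counts[w] = word_counts.get(w, 0) + 1
        new_ws = hob_threshold(word_counts)
        if new_ws == ws:  # fixed point, like "WS2 = WS1" on the slide
            break
        ws = new_ws
    return ds, ws


def all_outliers(doc_words, ds, ws):
    """True when every word of ws occurs in exactly one document of ds,
    the situation the slide flags as 'all docs are outliers'."""
    return all(sum(w in doc_words[d] for d in ds) == 1 for w in ws)


# Invented toy corpus, for illustration only.
docs = {
    1: {"mother", "dog", "bone"},
    2: {"mother", "baby"},
    3: {"mother", "baby", "cry"},
    4: {"fiddle", "king"},
    5: {"mother", "hunting"},
}
print(alternate(docs, {"mother", "baby", "fiddle"}))
```

On this toy corpus the loop settles on DocSet {2, 3} with WordSet {mother, baby}, which plays the role of the cluster theme. `all_outliers` encodes the slide's remark that when each word occurs in only one document, every document in the set is an outlier.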