5166f6da8eb1401882b40e4258f87016.ppt

- Number of slides: 47

Three Talks
- Scalability Terminology: Gray (with help from Devlin, Laing, Spix)
- What Windows is doing re this: Laing
- The M$ PetaByte (as time allows): Gray

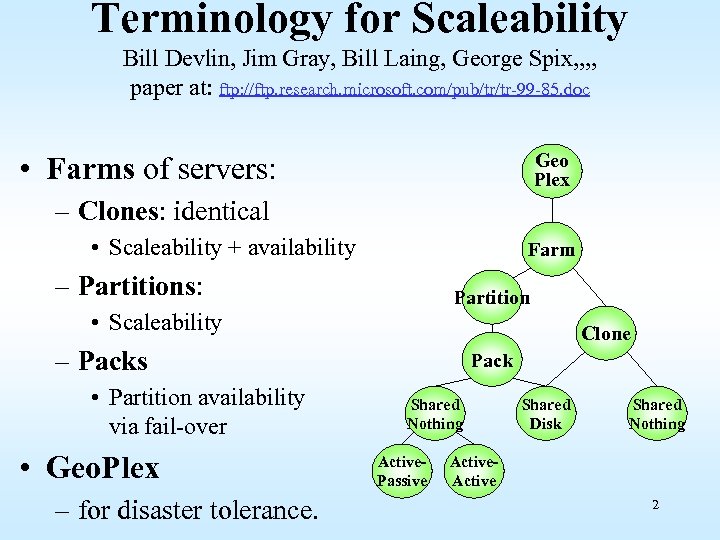

Terminology for Scalability
Bill Devlin, Jim Gray, Bill Laing, George Spix; paper at: ftp://ftp.research.microsoft.com/pub/tr/tr-99-85.doc
- Farms of servers:
  - Clones: identical
    - Scalability + availability
  - Partitions:
    - Scalability
  - Packs
    - Partition availability via fail-over
- GeoPlex for disaster tolerance.

Diagram labels: GeoPlex, Farm, Clone, Partition, Pack; Shared Nothing, Shared Disk; Active, Active-Passive.

Unpredictable Growth
- The TerraServer Story:
  - Expected 5 M hits per day
  - Got 50 M hits on day 1
  - Peak at 20 M hpd on a "hot" day
  - Average 5 M hpd over last 2 years
- Most of us cannot predict demand
  - Must be able to deal with NO demand
  - Must be able to deal with HUGE demand

Web Services Requirements
- Scalability: Need to be able to add capacity
  - New processing
  - New storage
  - New networking
- Availability: Need continuous service
  - Online change of all components (hardware and software)
  - Multiple service sites
  - Multiple network providers
- Agility: Need great tools
  - Manage the system
  - Change the application several times per year.
  - Add new services several times per year.

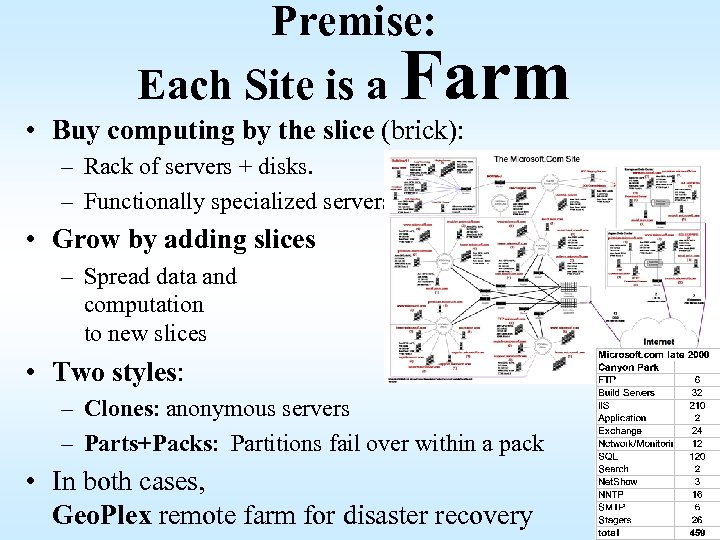

Premise: Each Site is a Farm
- Buy computing by the slice (brick):
  - Rack of servers + disks.
  - Functionally specialized servers
- Grow by adding slices
  - Spread data and computation to new slices
- Two styles:
  - Clones: anonymous servers
  - Parts+Packs: Partitions fail over within a pack
- In both cases, GeoPlex remote farm for disaster recovery

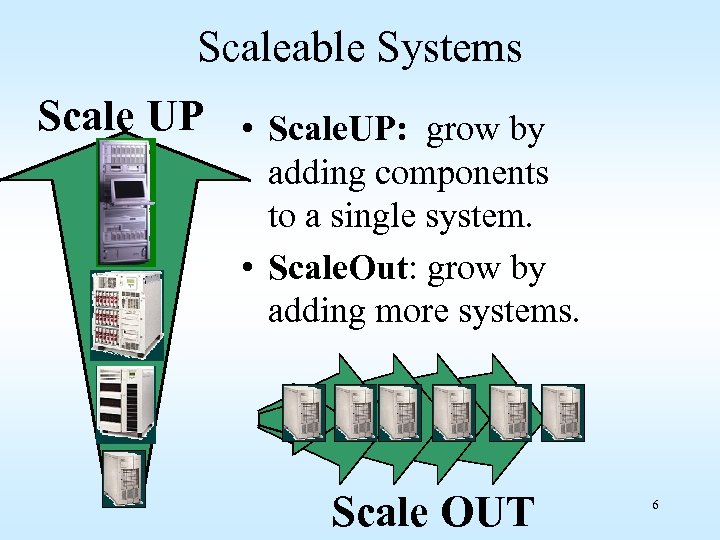

Scalable Systems
- ScaleUP: grow by adding components to a single system.
- ScaleOut: grow by adding more systems.

Diagram labels: Scale UP, Scale OUT.

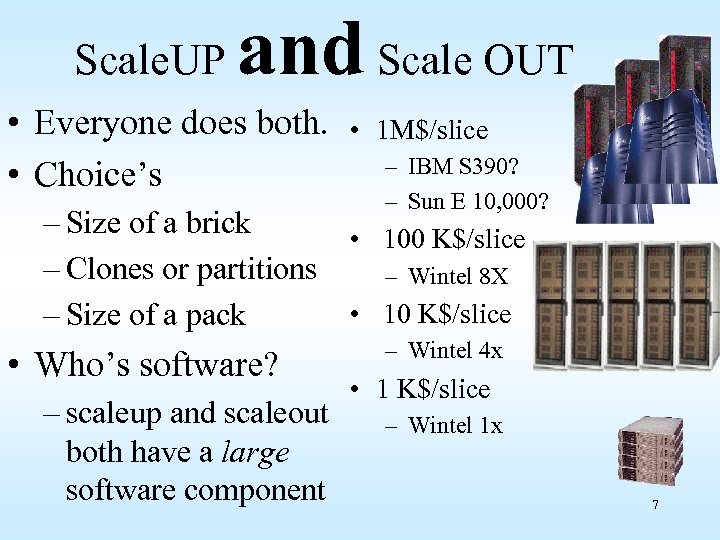

ScaleUP and ScaleOUT
- Everyone does both.
- Choices:
  - 1 M$/slice: IBM S/390? Sun E10,000?
  - 100 K$/slice: Wintel 8x
  - 10 K$/slice: Wintel 4x
  - 1 K$/slice: Wintel 1x
- Whose software?
  - ScaleUP and ScaleOut both have a large software component

Diagram annotations: size of a brick; clones or partitions; size of a pack.

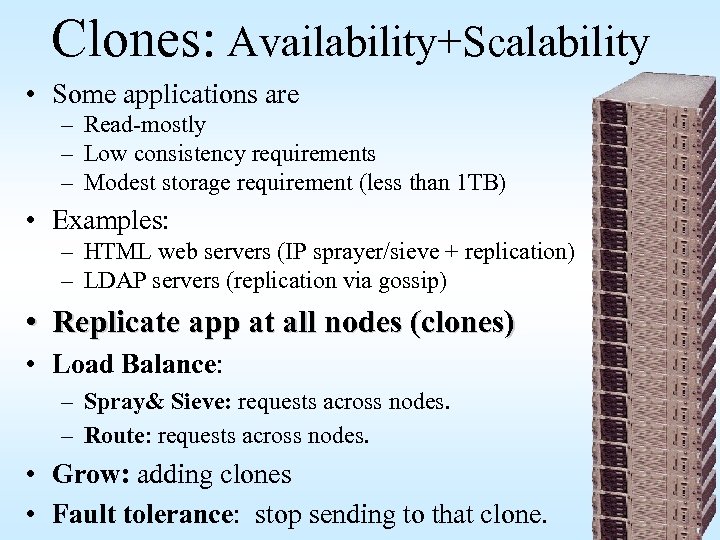

Clones: Availability+Scalability
- Some applications are
  - Read-mostly
  - Low consistency requirements
  - Modest storage requirement (less than 1 TB)
- Examples:
  - HTML web servers (IP sprayer/sieve + replication)
  - LDAP servers (replication via gossip)
- Replicate app at all nodes (clones)
- Load Balance:
  - Spray & Sieve: requests across nodes.
  - Route: requests across nodes.
- Grow: adding clones
- Fault tolerance: stop sending to that clone.
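
The "Spray", "Grow" and "Fault tolerance" bullets above can be sketched as a round-robin sprayer over clones. This is a minimal sketch under stated assumptions: the `CloneSprayer` class and its method names are hypothetical, every clone is assumed interchangeable, and how a clone gets detected as failed is left out.

```python
from itertools import count


class CloneSprayer:
    """Hypothetical round-robin sprayer over identical clones."""

    def __init__(self, clones):
        self.clones = list(clones)  # every clone runs the same app and data
        self.down = set()           # clones we have stopped sending to
        self._ticket = count()      # increasing counter used to rotate choices

    def add_clone(self, name):
        # Grow: add capacity by adding one more clone.
        self.clones.append(name)

    def mark_down(self, name):
        # Fault tolerance: stop sending requests to that clone.
        self.down.add(name)

    def mark_up(self, name):
        self.down.discard(name)

    def route(self, request):
        # Any clone can serve any request, so the request itself is not inspected.
        live = [c for c in self.clones if c not in self.down]
        if not live:
            raise RuntimeError("no live clones")
        return live[next(self._ticket) % len(live)]


sprayer = CloneSprayer(["web1", "web2", "web3"])
print([sprayer.route(i) for i in range(3)])  # ['web1', 'web2', 'web3']
```

Because clones hold no state that matters, removing one from rotation is the whole fail-over story; that is what makes the clone style cheap to manage.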

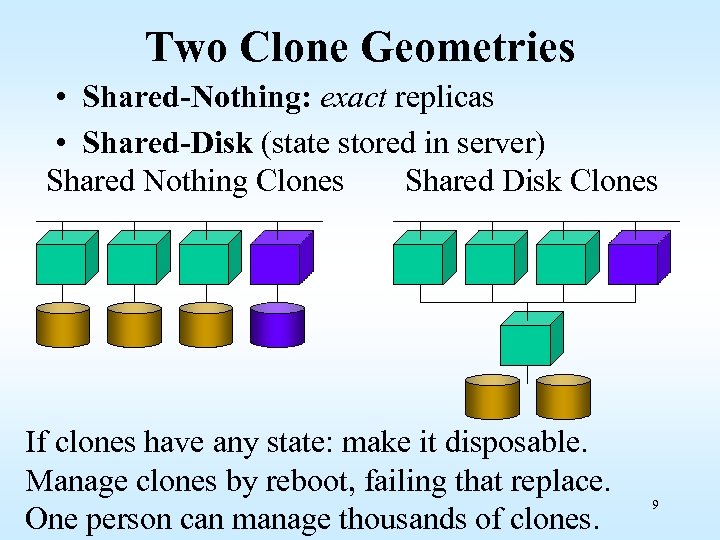

Two Clone Geometries
- Shared-Nothing: exact replicas
- Shared-Disk (state stored in server)

Diagram labels: Shared Nothing Clones, Shared Disk Clones.

If clones have any state: make it disposable. Manage clones by reboot; failing that, replace. One person can manage thousands of clones.

Clone Requirements
- Automatic replication (if they have any state)
  - Applications (and system software)
  - Data
- Automatic request routing
  - Spray or sieve
- Management:
  - Who is up?
  - Update management & propagation
  - Application monitoring.
- Clones are very easy to manage:
  - Rule of thumb: 100's of clones per admin.
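
For the "sieve" style of request routing, one way to picture it is that every clone sees every request and each keeps only the ones it owns. The sketch below assumes a hypothetical `owner()` rule (CRC-32 of the client address modulo the number of clones) that every clone computes identically; it is a simplified model, not the hashing or membership protocol of any real product.

```python
import zlib


def owner(client_ip: str, n_clones: int) -> int:
    # Hypothetical ownership rule: all clones compute the same deterministic hash,
    # so they agree on exactly one owner without talking to each other.
    return zlib.crc32(client_ip.encode("ascii")) % n_clones


class SieveClone:
    def __init__(self, index: int, n_clones: int):
        self.index = index
        self.n_clones = n_clones

    def accepts(self, client_ip: str) -> bool:
        # The request reached every clone; only its owner keeps it, the rest drop it.
        return owner(client_ip, self.n_clones) == self.index


def handlers(clones, client_ip):
    return [c.index for c in clones if c.accepts(client_ip)]


clones = [SieveClone(i, 4) for i in range(4)]
print(handlers(clones, "10.0.0.7"))  # exactly one index, the same one on every call
```

A side effect of hashing on the client address is that a given client keeps landing on the same clone; adding or removing a clone changes `n_clones` and reshuffles ownership, which a real system would have to coordinate.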

Partitions for Scalability
- Clones are not appropriate for some apps.
  - Stateful apps do not replicate well
  - High update rates do not replicate well
- Examples
  - Email
  - Databases
  - Read/write file server…
  - Cache managers
  - Chat
- Partition state among servers
- Partitioning:
  - must be transparent to client.
  - split & merge partitions online
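
The partitioning requirements (transparent to the client, split and merge online) can be illustrated with a key-range map. This is a minimal sketch assuming range partitioning on a string key such as a mailbox name; `RangePartitionMap` and its methods are hypothetical, and moving the actual data during a split or merge is not modeled.

```python
import bisect


class RangePartitionMap:
    """Hypothetical key-range partition map with online split and merge."""

    def __init__(self, first_server):
        self.bounds = []               # sorted split keys
        self.servers = [first_server]  # servers[i] owns bounds[i-1] <= key < bounds[i]

    def lookup(self, key):
        # Clients always go through the map, which keeps partitioning transparent.
        return self.servers[bisect.bisect_right(self.bounds, key)]

    def split(self, split_key, new_server):
        # Keys >= split_key in the partition containing split_key move to new_server.
        if split_key in self.bounds:
            raise ValueError("already a partition boundary")
        i = bisect.bisect_right(self.bounds, split_key)
        self.bounds.insert(i, split_key)
        self.servers.insert(i + 1, new_server)

    def merge(self, i):
        # Fold partition i + 1 back into partition i (removes boundary i).
        if not 0 <= i < len(self.bounds):
            raise IndexError("no such boundary")
        del self.bounds[i]
        del self.servers[i + 1]


m = RangePartitionMap("mail1")
m.split("m", "mail2")                      # keys >= "m" now live on mail2
print(m.lookup("alice"), m.lookup("zoe"))  # mail1 mail2
```

Range partitioning is only one choice; hashing the key would balance load more evenly but makes splitting a single hot partition harder.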

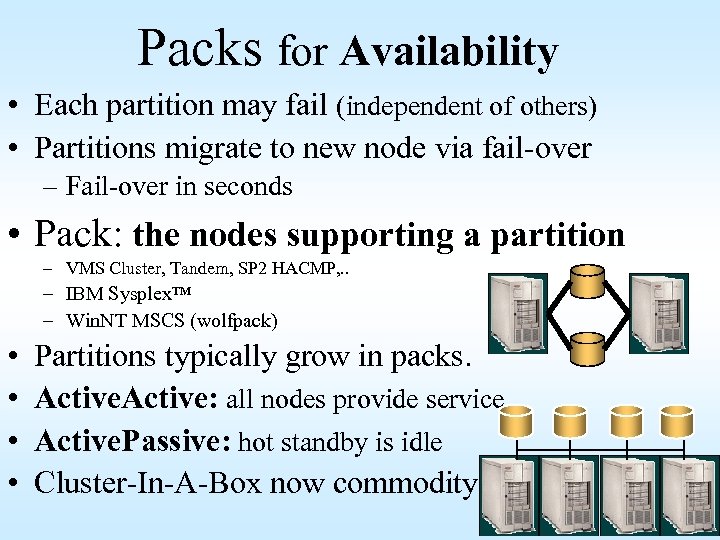

Packs for Availability
- Each partition may fail (independent of others)
- Partitions migrate to new node via fail-over
  - Fail-over in seconds
- Pack: the nodes supporting a partition
  - VMS Cluster, Tandem, SP2 HACMP, ...
  - IBM Sysplex™
  - WinNT MSCS (wolfpack)
- Partitions typically grow in packs.
- Active: all nodes provide service
- Active-Passive: hot standby is idle
- Cluster-In-A-Box now commodity
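
Fail-over within a pack can be sketched as reassigning each partition of a failed node to a surviving node of the same pack. This is a minimal sketch under assumptions: the `Pack` class is hypothetical, failure detection is given, and the "least-loaded survivor" placement rule is an illustrative choice, not how any particular cluster product decides.

```python
class Pack:
    """Hypothetical pack: nodes that can host a set of partitions."""

    def __init__(self, nodes, placement):
        self.up = set(nodes)               # nodes currently alive
        self.placement = dict(placement)   # partition -> node serving it

    def load(self, node):
        return sum(1 for host in self.placement.values() if host == node)

    def fail(self, node):
        # Each partition on the failed node migrates to a surviving pack member.
        self.up.discard(node)
        if not self.up:
            raise RuntimeError("every node in the pack is down")
        for part, host in sorted(self.placement.items()):
            if host == node:
                # Least-loaded survivor (ties broken by name), so an idle standby wins.
                self.placement[part] = min(sorted(self.up), key=self.load)


# Three active nodes plus one passive standby, one partition per active node.
pack = Pack(["n1", "n2", "n3", "n4"], {"p1": "n1", "p2": "n2", "p3": "n3"})
pack.fail("n2")
print(pack.placement["p2"])  # n4
```

The starting layout mirrors the "3 active, 1 passive" pack style: the first failure lands on the idle standby, and a second failure doubles up on an active node.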

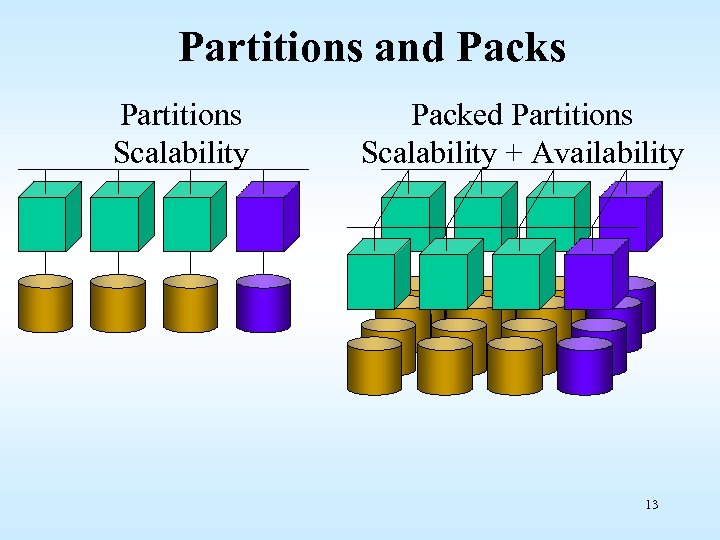

Partitions and Packs
- Partitions: Scalability
- Packed Partitions: Scalability + Availability

Parts+Packs Requirements
- Automatic partitioning (in dbms, mail, files, …)
  - Location transparent
  - Partition split/merge
  - Grow without limits (100 x 10 TB)
  - Application-centric request routing
- Simple fail-over model
  - Partition migration is transparent
  - MSCS-like model for services
- Management:
  - Automatic partition management (split/merge)
  - Who is up?
  - Application monitoring.

GeoPlex: Farm Pairs
- Two farms (or more)
- State (your mailbox, bank account) stored at both farms
- Changes from one sent to other
- When one farm fails, the other provides service
- Masks
  - Hardware/Software faults
  - Operations tasks (reorganize, upgrade, move)
  - Environmental faults (power fail, earthquake, fire)
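
A farm pair can be sketched as state held at both farms, with changes made at one farm shipped to the other. This sketch assumes asynchronous shipping (changes are batched and sent later), which the slide does not specify; `Farm`, `GeoPlex` and their methods are hypothetical.

```python
class Farm:
    def __init__(self, name):
        self.name = name
        self.state = {}    # e.g. mailbox or bank-account records, keyed by owner
        self.alive = True


class GeoPlex:
    """Hypothetical farm pair with asynchronous change shipping."""

    def __init__(self, primary, secondary):
        self.primary, self.secondary = primary, secondary
        self.pending = []  # changes applied at the primary but not yet shipped

    def _fail_over_if_needed(self):
        if not self.primary.alive and self.secondary.alive:
            # Unshipped changes die with the failed farm: the cost of async shipping.
            self.pending.clear()
            self.primary, self.secondary = self.secondary, self.primary

    def write(self, key, value):
        self._fail_over_if_needed()
        self.primary.state[key] = value
        self.pending.append((key, value))

    def ship(self):
        # Send changes from one farm to the other, in the order they were made.
        if self.secondary.alive:
            for key, value in self.pending:
                self.secondary.state[key] = value
            self.pending.clear()

    def read(self, key):
        self._fail_over_if_needed()
        return self.primary.state.get(key)


east, west = Farm("east"), Farm("west")
geo = GeoPlex(east, west)
geo.write("alice", 100)
geo.ship()
geo.write("alice", 90)   # not yet shipped
east.alive = False
print(geo.read("alice"), geo.primary.name)  # 100 west
```

Shipping synchronously (apply at both farms before acknowledging) would avoid losing the last change, at the price of adding inter-site latency to every write.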

Directory Fail-Over Load Balancing
- Routes request to right farm
  - Farm can be clone or partition
- At farm, routes request to right service
- At service, routes request to
  - Any clone
  - Correct partition.
- Routes around failures.
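
The three routing levels above (farm, then service, then any clone or the correct partition, skipping failures) can be sketched as one lookup. The `DIRECTORY` layout, farm and node names, and the CRC-32 choice of clone and partition are all hypothetical illustrations.

```python
import zlib

# Hypothetical directory: farms in preference order, each with clone or partition services.
DIRECTORY = {
    "farm_order": ["farm_a", "farm_b"],
    "farms": {
        "farm_a": {
            "web": {"kind": "clone", "nodes": ["a-web1", "a-web2"]},
            "mail": {"kind": "partition", "packs": [["a-m0", "a-m0s"], ["a-m1", "a-m1s"]]},
        },
        "farm_b": {
            "web": {"kind": "clone", "nodes": ["b-web1"]},
            "mail": {"kind": "partition", "packs": [["b-m0"], ["b-m1"]]},
        },
    },
}


def stable_hash(text):
    return zlib.crc32(text.encode("utf-8"))


def route(directory, farms_up, nodes_up, service, key):
    """Pick farm, then service, then any clone or the correct partition's live node."""
    for farm in directory["farm_order"]:
        if farm not in farms_up:
            continue  # route around a failed farm
        svc = directory["farms"][farm][service]
        if svc["kind"] == "clone":
            live = [n for n in svc["nodes"] if n in nodes_up]
            if live:
                return farm, live[stable_hash(key) % len(live)]  # any clone will do
        else:
            pack = svc["packs"][stable_hash(key) % len(svc["packs"])]  # correct partition
            for node in pack:  # first live member of that partition's pack
                if node in nodes_up:
                    return farm, node
    raise LookupError(f"no live farm can serve {service!r}")


print(route(DIRECTORY, {"farm_b"}, {"b-web1"}, "web", "bob"))  # ('farm_b', 'b-web1')
```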

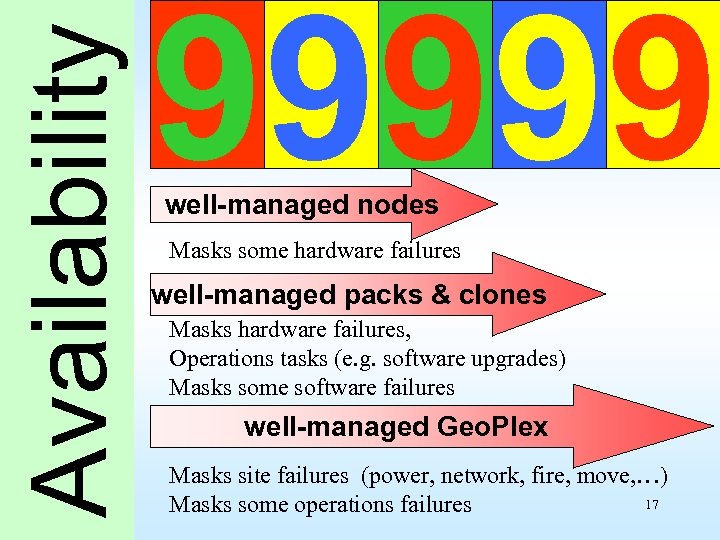

Availability: toward 99.999%
- Well-managed nodes
  - Mask some hardware failures
- Well-managed packs & clones
  - Mask hardware failures
  - Mask operations tasks (e.g. software upgrades)
  - Mask some software failures
- Well-managed GeoPlex
  - Masks site failures (power, network, fire, move, …)
  - Masks some operations failures
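
The arithmetic behind "five nines", and behind why redundancy helps, fits in a few lines. The redundancy formula assumes independent failures and instant, always-successful fail-over; real packs and clones share software and operators, which is one reading of why the slide says they mask only *some* software and operations failures.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60


def downtime_minutes_per_year(availability):
    return (1.0 - availability) * MINUTES_PER_YEAR


def redundant_availability(unit_availability, copies):
    # Down only if every copy is down at once; assumes independent failures
    # and instant, always-successful fail-over.
    return 1.0 - (1.0 - unit_availability) ** copies


print(round(downtime_minutes_per_year(0.99999), 2))  # 5.26 minutes per year
print(round(redundant_availability(0.999, 2), 6))     # 0.999999
```

The same formula applies one level up: two farms in a GeoPlex multiply their unavailabilities only as far as their failures (power, network, operators) really are independent.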

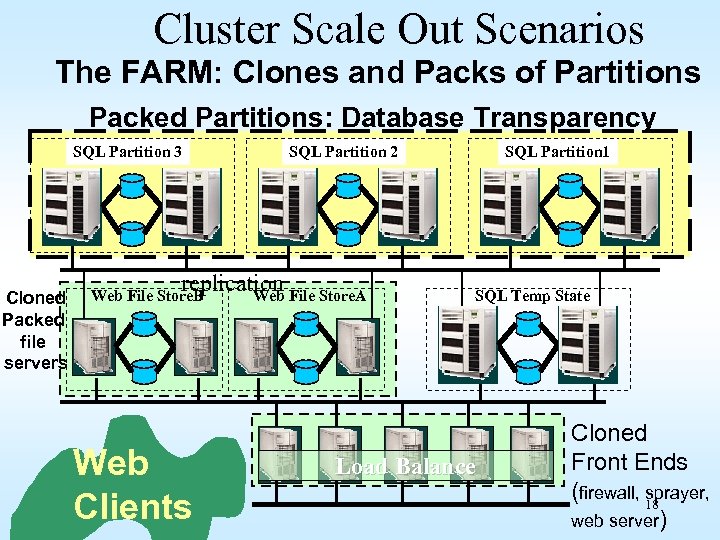

Cluster Scale Out Scenarios

The FARM: Clones and Packs of Partitions (diagram). Labels: Web Clients; Load Balance; Cloned Front Ends (firewall, sprayer, web server); Cloned Packed file servers (Web File Store A, Web File Store B, replication); Packed Partitions: Database Transparency (SQL Partition 1, SQL Partition 2, SQL Partition 3); SQL Database; SQL Temp State.

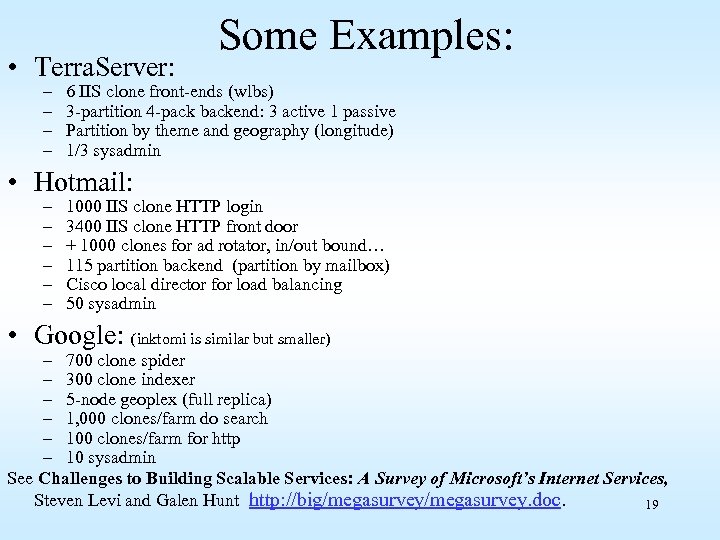

Some Examples:
- TerraServer:
  - 6 IIS clone front-ends (wlbs)
  - 3-partition 4-pack backend: 3 active, 1 passive
  - Partition by theme and geography (longitude)
  - 1/3 sysadmin
- Hotmail:
  - 1000 IIS clone HTTP login
  - 3400 IIS clone HTTP front door
  - + 1000 clones for ad rotator, in/out bound…
  - 115 partition backend (partition by mailbox)
  - Cisco local director for load balancing
  - 50 sysadmin
- Google: (inktomi is similar but smaller)
  - 700 clone spider
  - 300 clone indexer
  - 5-node geoplex (full replica)
  - 1,000 clones/farm do search
  - 100 clones/farm for http
  - 10 sysadmin

See Challenges to Building Scalable Services: A Survey of Microsoft's Internet Services, Steven Levi and Galen Hunt, http://big/megasurvey.doc.

Acronyms
- RACS: Reliable Arrays of Cloned Servers
- RAPS: Reliable Arrays of partitioned and Packed Servers (the first p is silent).

Emissaries and Fiefdoms
- Emissaries are stateless (nearly). Emissaries are easy to clone.
- Fiefdoms are stateful. Fiefdoms get partitioned.

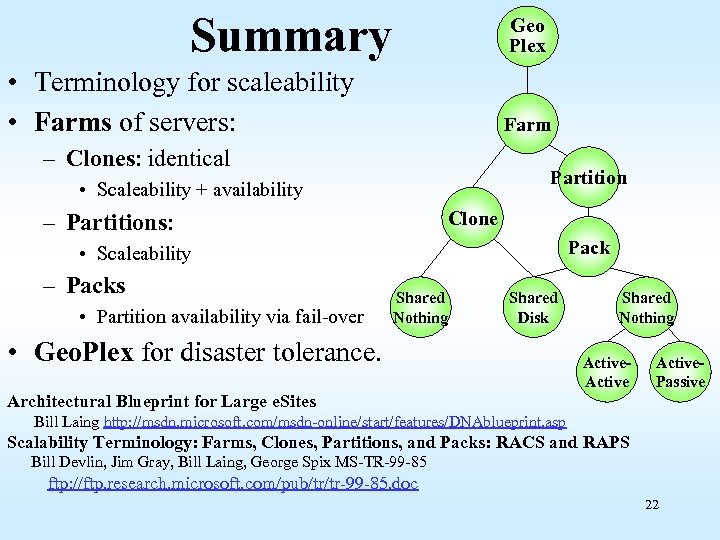

Summary
- Terminology for scalability
- Farms of servers:
  - Clones: identical
    - Scalability + availability
  - Partitions:
    - Scalability
  - Packs
    - Partition availability via fail-over
- GeoPlex for disaster tolerance.

Diagram labels: GeoPlex, Farm, Clone, Partition, Pack; Shared Nothing, Shared Disk; Active-Passive.

Architectural Blueprint for Large eSites, Bill Laing, http://msdn.microsoft.com/msdn-online/start/features/DNAblueprint.asp

Scalability Terminology: Farms, Clones, Partitions, and Packs: RACS and RAPS, Bill Devlin, Jim Gray, Bill Laing, George Spix, MS-TR-99-85, ftp://ftp.research.microsoft.com/pub/tr/tr-99-85.doc

Three Talks
- Scalability Terminology: Gray (with help from Devlin, Laing, Spix)
- What Windows is doing re this: Laing
- The M$ PetaByte (as time allows): Gray

What Windows is Doing
- Continued architecture and analysis work
- AppCenter, BizTalk, SQL Service Broker, ISA, … all key to Clones/Partitions
- Exchange is an archetype
  - Front ends, directory, partitioned, packs, transparent mobility.
- NLB (clones) and MSCS (Packs)
- High Performance Technical Computing
- Appliances and hardware trends
- Management of these kinds of systems
- Still need good ideas on…

Architecture and Design work
- Produced an architectural Blueprint for large eSites published on MSDN
  - http://msdn.microsoft.com/msdn-online/start/features/DNAblueprint.asp
- Creating and testing instances of the architecture
  - Team led by Per Vonge Neilsen
  - Actually building and testing examples of the architecture with partners (sometimes known as MICE)
- Built a scalability "Megalab" run by Robert Barnes
  - 1000-node cyber wall, 315 1U Compaq DL360s, 32 8-ways, 7000 disks


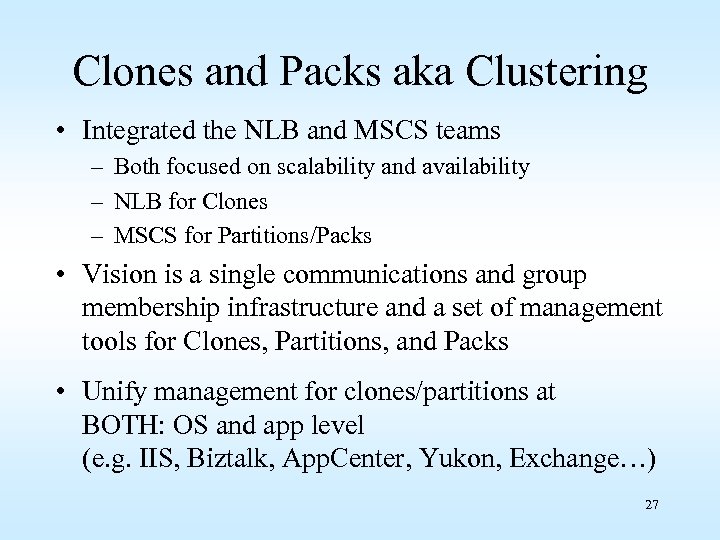

Clones and Packs aka Clustering
- Integrated the NLB and MSCS teams
  - Both focused on scalability and availability
  - NLB for Clones
  - MSCS for Partitions/Packs
- Vision is a single communications and group membership infrastructure and a set of management tools for Clones, Partitions, and Packs
- Unify management for clones/partitions at BOTH OS and app level (e.g. IIS, BizTalk, AppCenter, Yukon, Exchange…)

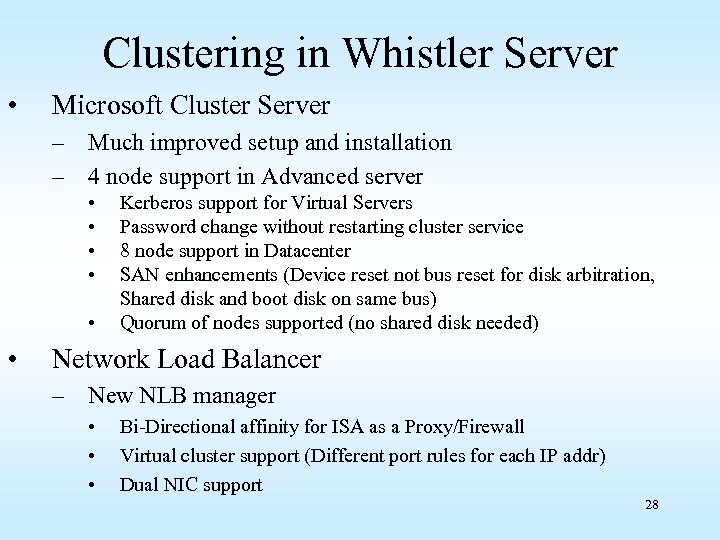

Clustering in Whistler Server
- Microsoft Cluster Server
  - Much improved setup and installation
  - 4-node support in Advanced Server
  - Kerberos support for Virtual Servers
  - Password change without restarting cluster service
  - 8-node support in Datacenter
  - SAN enhancements (device reset, not bus reset, for disk arbitration; shared disk and boot disk on same bus)
  - Quorum of nodes supported (no shared disk needed)
- Network Load Balancer
  - New NLB manager
  - Bi-directional affinity for ISA as a Proxy/Firewall
  - Virtual cluster support (different port rules for each IP addr)
  - Dual NIC support

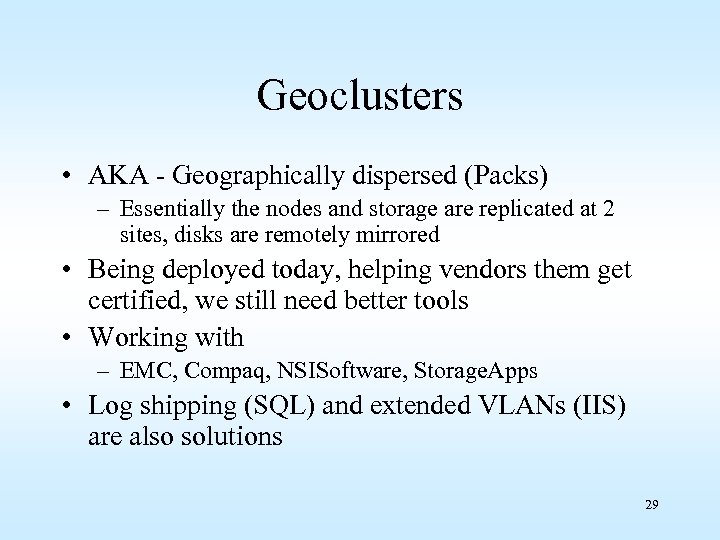

Geoclusters
- AKA geographically dispersed (Packs)
  - Essentially the nodes and storage are replicated at 2 sites; disks are remotely mirrored
- Being deployed today; helping vendors get certified; we still need better tools
- Working with
  - EMC, Compaq, NSISoftware, StorageApps
- Log shipping (SQL) and extended VLANs (IIS) are also solutions

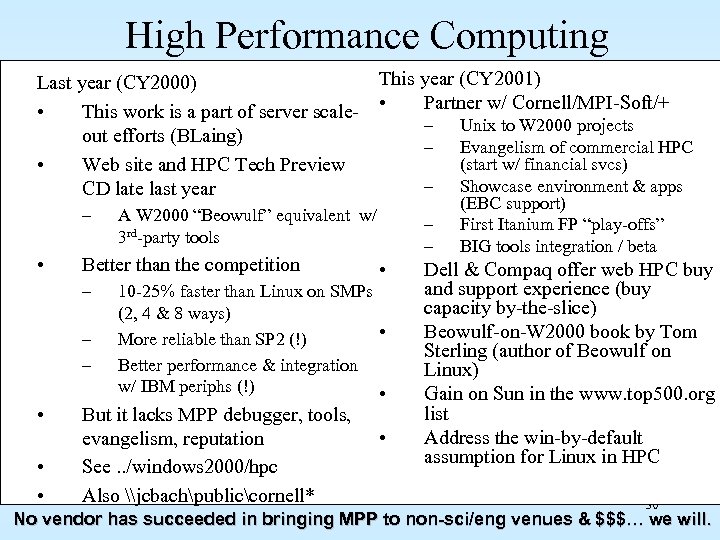

High Performance Computing
- This work is a part of server scaleout efforts (BLaing)
- Last year (CY 2000)
  - Web site and HPC Tech Preview CD late last year
  - A W2000 "Beowulf" equivalent w/ 3rd-party tools
  - Better than the competition
    - 10-25% faster than Linux on SMPs (2, 4 & 8 ways)
    - More reliable than SP2 (!)
    - Better performance & integration w/ IBM periphs (!)
  - But it lacks MPP debugger, tools, evangelism, reputation
  - See ../windows2000/hpc; also \jcbachpubliccornell*
- This year (CY 2001)
  - Partner w/ Cornell/MPI-Soft/+
  - Unix to W2000 projects
  - Evangelism of commercial HPC (start w/ financial svcs)
  - Showcase environment & apps (EBC support)
  - First Itanium FP "play-offs"
  - BIG tools integration / beta
  - Dell & Compaq offer web HPC buy and support experience (buy capacity by-the-slice)
  - Beowulf-on-W2000 book by Tom Sterling (author of Beowulf on Linux)
  - Gain on Sun in the www.top500.org list
  - Address the win-by-default assumption for Linux in HPC

No vendor has succeeded in bringing MPP to non-sci/eng venues & $$$… we will.

Appliances and Hardware Trends
- The appliances team under TomPh is focused on dramatically simplifying the user experience of installing this kind of device
  - Working with OEMs to adopt WindowsXP
- Ultradense servers are on the horizon
  - 100s of servers per rack
  - Manage the rack as one
- Infiniband and 10 Gbps Ethernet change things.

Operations and Management
- Great research work done in MSR on this topic
  - The Mega services paper by Levi and Hunt
  - The follow-on BIG project developed the ideas of
    - Scale Invariant Service Descriptions with
    - automated monitoring and
    - deployment of servers.
- Building on that work in Windows Server group
- AppCenter doing similar things at app level

Still Need Good Ideas on…
- Automatic partitioning
- Stateful load balancing
- Unified management of clones/partitions at both app and OS level

Three Talks
- Scalability Terminology: Gray (with help from Devlin, Laing, Spix)
- What Windows is doing re this: Laing
- The M$ PetaByte (as time allows): Gray

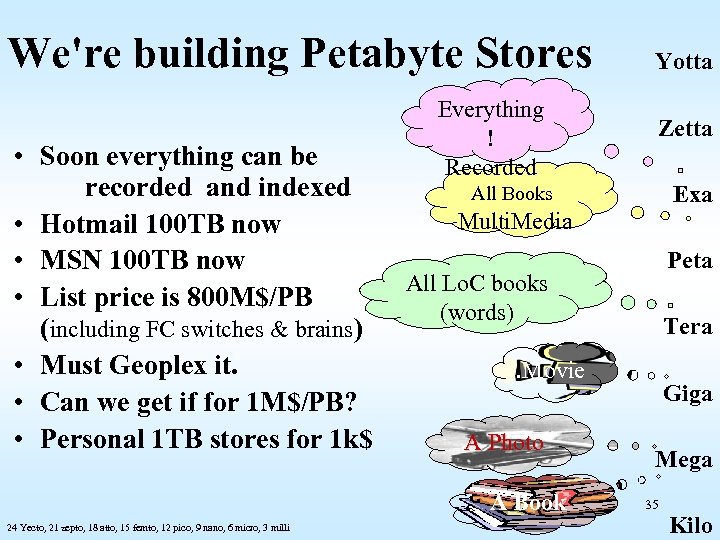

We're building Petabyte Stores
- Soon everything can be recorded and indexed
- Hotmail 100 TB now
- MSN 100 TB now
- List price is 800 M$/PB (including FC switches & brains)
- Must GeoPlex it.
- Can we get it for 1 M$/PB?
- Personal 1 TB stores for 1 k$

Diagram: a storage scale through Kilo, Mega, Giga, Tera, Peta, Exa, Zetta, Yotta, placing "A Book", "A Photo", "A Movie", "All LoC books (words)", "All Books MultiMedia" and "Everything! Recorded" along it. Small prefixes: 10^-24 yocto, 10^-21 zepto, 10^-18 atto, 10^-15 femto, 10^-12 pico, 10^-9 nano, 10^-6 micro, 10^-3 milli.

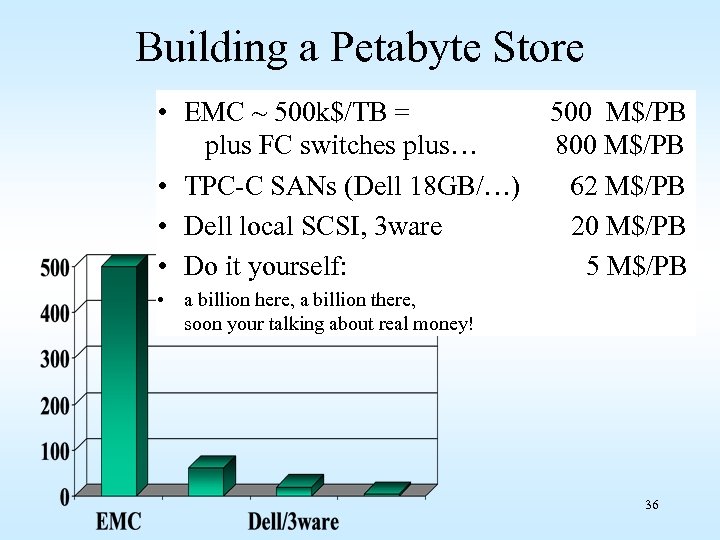

Building a Petabyte Store
- EMC ~500 k$/TB: 500 M$/PB
- plus FC switches plus…: 800 M$/PB
- TPC-C SANs (Dell 18 GB/…): 62 M$/PB
- Dell local SCSI, 3ware: 20 M$/PB
- Do it yourself: 5 M$/PB
- A billion here, a billion there, soon you're talking about real money!

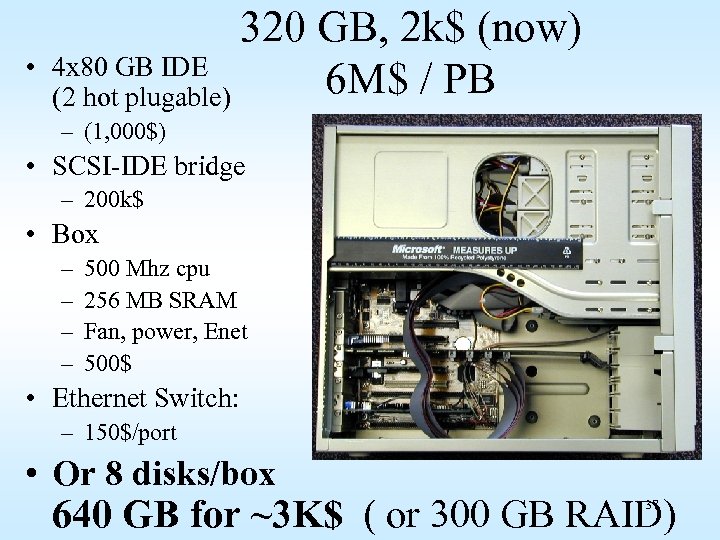

- 320 GB, 2 k$ (now): 6 M$/PB
  - 4 x 80 GB IDE (2 hot pluggable): 1,000$
  - SCSI-IDE bridge: 200$
  - Box: 500 MHz cpu, 256 MB SRAM, fan, power, Enet: 500$
  - Ethernet switch: 150$/port
- Or 8 disks/box: 640 GB for ~3 k$ (or 300 GB RAID)
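
The "6 M$/PB" figure follows from the box price and capacity. A small sketch of that arithmetic, assuming decimal units (1 PB = 1,000,000 GB) and ignoring racks, power and networking beyond what the box price already includes:

```python
GB_PER_PB = 1_000_000  # decimal units, as disk capacities are quoted


def dollars_per_pb(box_dollars, box_gb):
    return box_dollars / box_gb * GB_PER_PB


four_disk = dollars_per_pb(2_000, 320)   # 4 x 80 GB box at 2 k$
eight_disk = dollars_per_pb(3_000, 640)  # 8 x 80 GB box at ~3 k$
print(four_disk / 1e6, eight_disk / 1e6)  # 6.25 4.6875 (M$/PB)
print(800e6 / four_disk)                  # 128.0, vs. the 800 M$/PB list price
```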

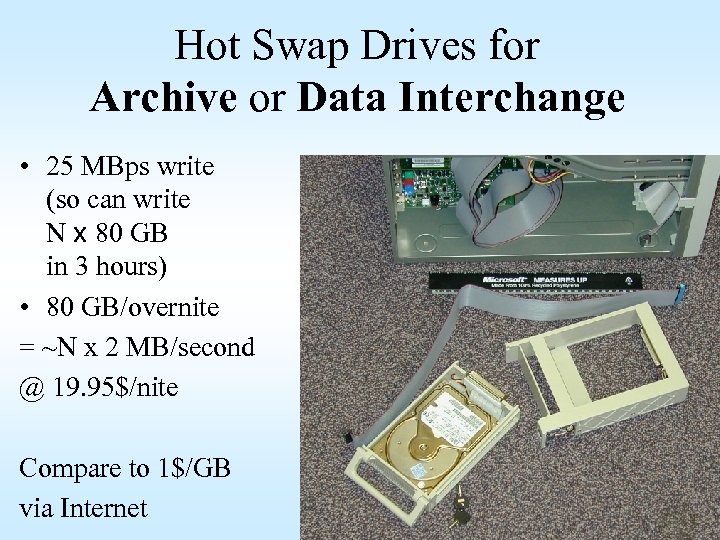

Hot Swap Drives for Archive or Data Interchange
- 25 MBps write (so can write N x 80 GB in 3 hours)
- 80 GB/overnight = ~N x 2 MB/second @ 19.95$/night
- Compare to 1$/GB via Internet
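
The bandwidth and cost comparison can be checked with a little arithmetic. This sketch assumes decimal units and takes "overnight" as about 12 hours door to door, which is an assumption, not a figure from the slide; N drives shipped together scale the bandwidth linearly.

```python
MB_PER_GB = 1000  # decimal units


def hours_to_write(gb, write_mb_per_s=25):
    return gb * MB_PER_GB / write_mb_per_s / 3600


def shipped_mb_per_s(gb, transit_hours=12):
    # Assumption: "overnight" is taken as about 12 hours door to door.
    return gb * MB_PER_GB / (transit_hours * 3600)


def dollars_per_gb(shipping_dollars, gb):
    return shipping_dollars / gb


print(round(hours_to_write(80), 2))         # 0.89 hours to fill one 80 GB drive
print(round(shipped_mb_per_s(80), 2))       # 1.85 MB/s per drive, i.e. ~2 MB/s
print(round(dollars_per_gb(19.95, 80), 3))  # 0.249 $/GB vs. 1 $/GB over the Internet
```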

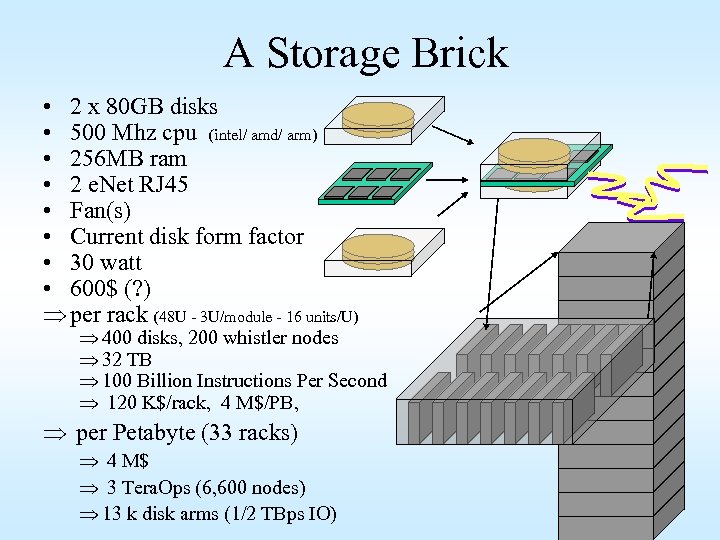

A Storage Brick
- 2 x 80 GB disks
- 500 MHz cpu (Intel/AMD/ARM)
- 256 MB RAM
- 2 eNet RJ45
- Fan(s)
- Current disk form factor
- 30 watt
- 600$ (?)

Per rack (48U, 3U/module, 16 units/U):
- 400 disks, 200 Whistler nodes
- 32 TB
- 100 Billion Instructions Per Second
- 120 K$/rack, 4 M$/PB

Per Petabyte (33 racks):
- 4 M$
- 3 TeraOps (6,600 nodes)
- 13 k disk arms (1/2 TBps IO)
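
The per-rack and per-petabyte figures can be reproduced from the brick's specification. This sketch assumes decimal units and roughly one instruction per clock (which is what makes 200 x 500 MHz come out as 100 billion instructions per second); the 33-rack count is taken from the slide.

```python
NODE = {"disks": 2, "disk_gb": 80, "mhz": 500, "dollars": 600}  # one storage brick
NODES_PER_RACK = 200


def per_rack(nodes=NODES_PER_RACK, node=NODE):
    return {
        "disks": nodes * node["disks"],
        "tb": nodes * node["disks"] * node["disk_gb"] / 1000,
        "bips": nodes * node["mhz"] / 1000,  # assumes ~1 instruction per clock
        "dollars": nodes * node["dollars"],
    }


def per_petabyte(racks=33):
    # The slide budgets 33 racks; 1000 TB / 32 TB per rack is 31.25.
    rack = per_rack()
    return {
        "nodes": racks * NODES_PER_RACK,
        "disk_arms": racks * rack["disks"],
        "dollars": racks * rack["dollars"],
        "tera_ops": racks * rack["bips"] / 1000,
    }


print(per_rack())      # {'disks': 400, 'tb': 32.0, 'bips': 100.0, 'dollars': 120000}
print(per_petabyte())  # {'nodes': 6600, 'disk_arms': 13200, 'dollars': 3960000, 'tera_ops': 3.3}
```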

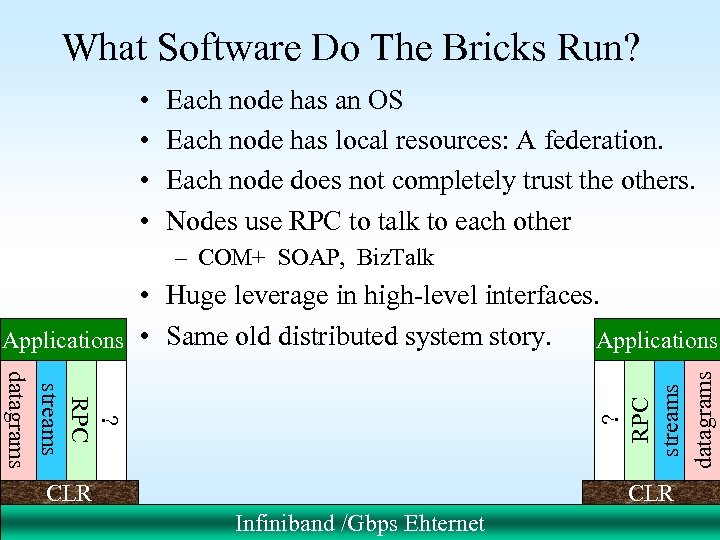

What Software Do The Bricks Run?
- Each node has an OS
- Each node has local resources: a federation.
- Each node does not completely trust the others.
- Nodes use RPC to talk to each other
  - COM+, SOAP, BizTalk
- Huge leverage in high-level interfaces.
- Same old distributed system story.

Diagram: Applications on each node, over CLR, RPC, streams and datagrams, connected by Infiniband/Gbps Ethernet.

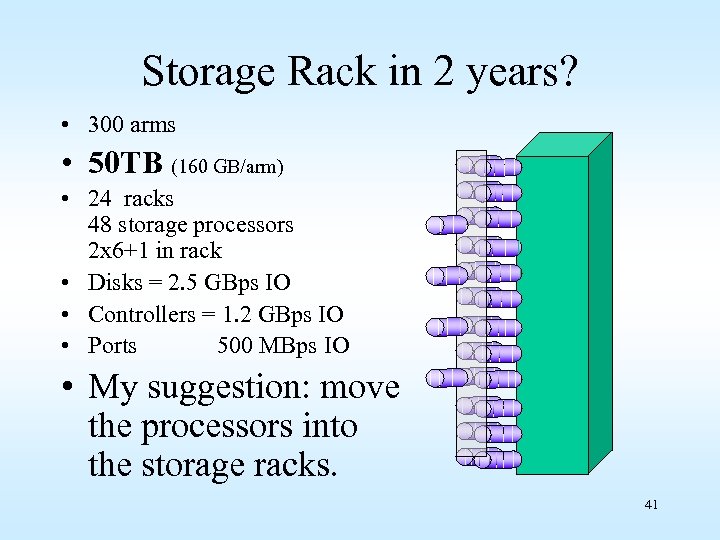

Storage Rack in 2 years?
- 300 arms
- 50 TB (160 GB/arm)
- 24 racks, 48 storage processors, 2 x 6+1 in rack
- Disks = 2.5 GBps IO
- Controllers = 1.2 GBps IO
- Ports = 500 MBps IO
- My suggestion: move the processors into the storage racks.
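
The IO figures above describe a pipeline whose throughput is capped by its slowest stage. A sketch of that reading, using the slide's numbers: with the ports as the bottleneck, an outside host sees only a fifth of the raw disk bandwidth, which is one way to read the suggestion to put processors in the storage rack.

```python
def bottleneck(stages):
    """Return the slowest stage; a pipeline moves data no faster than that."""
    name = min(stages, key=stages.get)
    return name, stages[name]


rack_mb_per_s = {"disks": 2500, "controllers": 1200, "ports": 500}  # from the slide
stage, rate = bottleneck(rack_mb_per_s)
print(stage, rate)                    # ports 500
print(rate / rack_mb_per_s["disks"])  # 0.2 of the raw disk bandwidth
print(300 * 160 / 1000)               # 48.0 TB, the slide's "50 TB"
```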

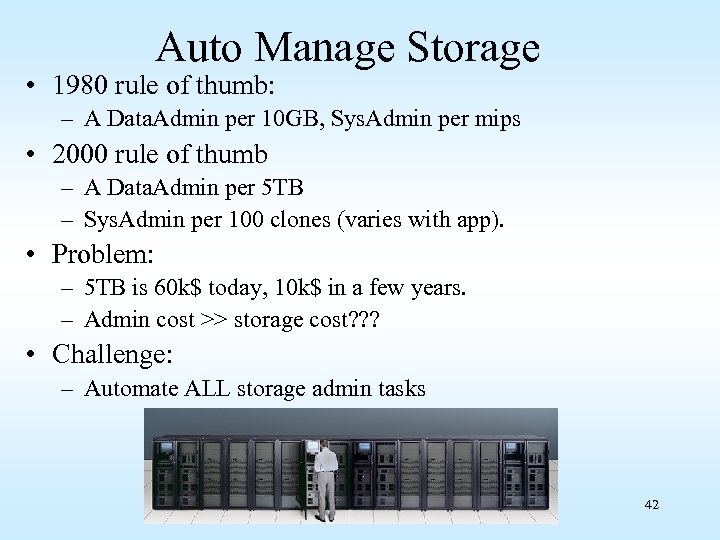

Auto Manage Storage
- 1980 rule of thumb:
  - A DataAdmin per 10 GB, SysAdmin per mips
- 2000 rule of thumb
  - A DataAdmin per 5 TB
  - SysAdmin per 100 clones (varies with app).
- Problem:
  - 5 TB is 60 k$ today, 10 k$ in a few years.
  - Admin cost >> storage cost???
- Challenge:
  - Automate ALL storage admin tasks
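
Applying the two rules of thumb to a petabyte shows the scale of the problem. This sketch uses only ratios from the slide (decimal units) and deliberately assumes no admin salary; it shows how many data admins a petabyte would need, and how the dollar value of storage each admin looks after shrinks.

```python
def admins_needed(total_gb, gb_per_admin):
    return total_gb / gb_per_admin


PB_IN_GB = 1_000_000
print(admins_needed(PB_IN_GB, 10))     # 100000.0 data admins at the 1980 ratio
print(admins_needed(PB_IN_GB, 5_000))  # 200.0 data admins at the 2000 ratio
print(60_000 / 5_000, 10_000 / 5_000)  # 12.0 2.0 $/GB of storage each admin looks after
```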

It's Hard to Archive a Petabyte

It takes a LONG time to restore it.
- At 1 GBps it takes 12 days!
- Store it in two (or more) places online (on disk?): a GeoPlex
- Scrub it continuously (look for errors)
- On failure,
  - use other copy until failure repaired,
  - refresh lost copy from safe copy.
- Can organize the two copies differently (e.g.: one by time, one by space)
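
The restore-time figure and the scrub-and-refresh loop can both be sketched briefly. Assumptions: decimal units; both copies share the same block layout (the slide's idea of organizing the copies differently is not modeled); per-block SHA-256 checksums are a hypothetical choice for detecting errors.

```python
import hashlib


def restore_days(total_bytes, bytes_per_s):
    return total_bytes / bytes_per_s / 86_400


def checksum(block: bytes) -> str:
    return hashlib.sha256(block).hexdigest()


def scrub(copy_a, copy_b, sums):
    """Check both copies block by block; refresh a bad block from the safe copy."""
    repaired = []
    for i, good in enumerate(sums):
        a_ok = checksum(copy_a[i]) == good
        b_ok = checksum(copy_b[i]) == good
        if a_ok and not b_ok:
            copy_b[i] = copy_a[i]
            repaired.append(("b", i))
        elif b_ok and not a_ok:
            copy_a[i] = copy_b[i]
            repaired.append(("a", i))
        elif not a_ok and not b_ok:
            raise ValueError(f"block {i} is bad in both copies")
    return repaired


print(round(restore_days(10**15, 10**9), 1))  # 11.6 days: 1 PB at 1 GBps

blocks = [b"jan", b"feb", b"mar"]
sums = [checksum(blk) for blk in blocks]
site1, site2 = list(blocks), list(blocks)
site2[1] = b"f?b"                               # simulate a latent error at site 2
print(scrub(site1, site2, sums), site2 == blocks)  # [('b', 1)] True
```

Scrubbing continuously matters because a latent error found only at restore time, when the other copy may already be gone, is exactly the double failure the two-copy design cannot survive.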

Call To Action
- Let's work together to make storage bricks
  - Low cost
  - High function
- NAS (network attached storage), not SAN (storage area network)
- Ship NT 8/CLR/IIS/SQL/Exchange/… with every disk drive

Three Talks
- Scalability Terminology: Gray (with help from Devlin, Laing, Spix)
- What Windows is doing re this: Laing
- The M$ PetaByte (as time allows): Gray

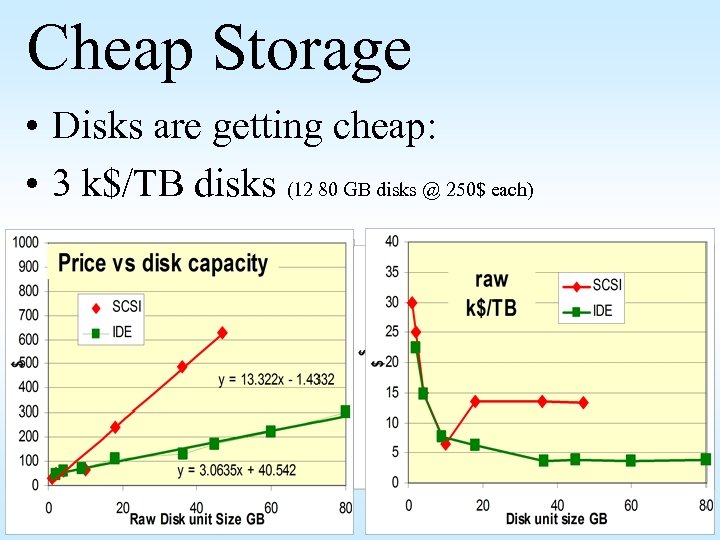

Cheap Storage
- Disks are getting cheap:
- 3 k$/TB disks (12 x 80 GB disks @ 250$ each)

All Device Controllers will be Super-Computers
- TODAY
  - Disk controller is 10 mips risc engine with 2 MB DRAM
  - NIC is similar power
- SOON
  - Will become 100 mips systems with 100 MB DRAM.
- They are nodes in a federation (can run Oracle on NT in disk controller).
- Advantages
  - Uniform programming model
  - Great tools
  - Security
  - Economics (cyberbricks)
  - Move computation to data (minimize traffic)

Diagram labels: Central Processor & Memory; Tera Byte Backplane.
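
"Move computation to data" can be illustrated with a toy selection query: filtering at the host ships every record across the backplane, while filtering on the controller ships only the matches. The record format and functions are hypothetical; the real saving depends on how selective the filter is.

```python
def scan_at_host(disk, pred):
    # Every record crosses the backplane; the host CPU does the filtering.
    moved = sum(len(rec) for rec in disk)
    return [rec for rec in disk if pred(rec)], moved


def scan_at_controller(disk, pred):
    # The filter runs on the controller next to the data; only matches travel.
    hits = [rec for rec in disk if pred(rec)]
    return hits, sum(len(rec) for rec in hits)


def is_error(rec):
    return rec.startswith(b"error")


disk = [b"error: x", b"ok", b"ok", b"error: y"]
print(scan_at_host(disk, is_error)[1], scan_at_controller(disk, is_error)[1])  # 20 16
```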
