- Number of slides: 25

The Relative Entropy Rate of Two Hidden Markov Processes

Or Zuk
Dept. of Physics of Complex Systems, Weizmann Institute of Science, Rehovot, Israel

Overview

- Introduction
- Distance Measures and Relative Entropy Rate
- Results: Generalization from Entropy Rate
- Future Directions

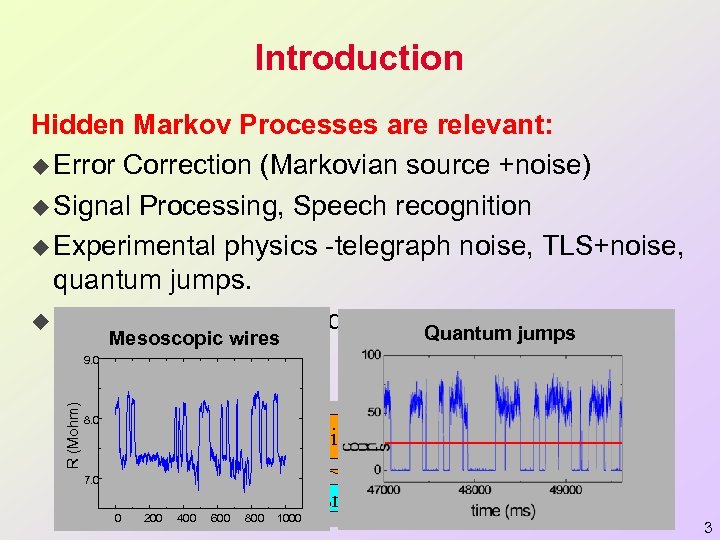

Introduction

Hidden Markov Processes are relevant in:

- Error correction (Markovian source + noise)
- Signal processing, speech recognition
- Experimental physics: telegraph noise, TLS + noise, quantum jumps
- Bioinformatics: biological sequences, gene expression

[Figure: examples of quantum jumps and of mesoscopic wires (resistance R in MΩ versus time t); a Markov chain sent through a transmission with 10% noise yields an HMP.]

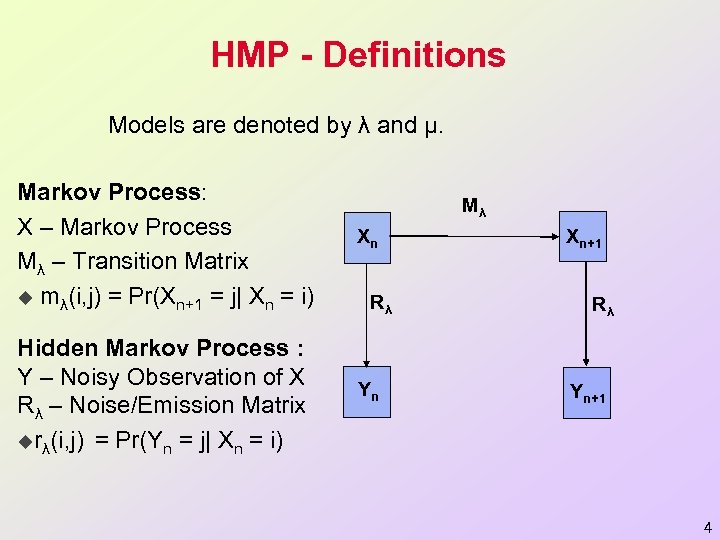

HMP - Definitions

Models are denoted by λ and µ.

Markov Process:

- X: Markov process
- $M_\lambda$: transition matrix, $m_\lambda(i, j) = \Pr(X_{n+1} = j \mid X_n = i)$

Hidden Markov Process:

- Y: noisy observation of X
- $R_\lambda$: noise/emission matrix, $r_\lambda(i, j) = \Pr(Y_n = j \mid X_n = i)$

[Diagram: $X_n \to X_{n+1}$ through $M_\lambda$; each $X_n$ emits $Y_n$ through $R_\lambda$.]
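
The two matrices above fully specify how a realization is generated. A minimal Python sketch of the sampling procedure follows; the function name `sample_hmp`, the uniform initial state, and the example matrices are assumptions made for illustration and do not come from the slides.

```python
import random


def sample_hmp(M, R, n, rng=None):
    """Sample n hidden states X and observations Y of an HMP.

    M[i][j] = Pr(X_{n+1} = j | X_n = i)   (transition matrix)
    R[i][j] = Pr(Y_n = j | X_n = i)       (noise/emission matrix)
    The first hidden state is drawn uniformly (an assumption of this sketch).
    """
    rng = rng or random.Random(0)
    states = range(len(M))
    symbols = range(len(R[0]))
    x = rng.randrange(len(M))
    xs, ys = [], []
    for _ in range(n):
        xs.append(x)
        # Emit Y_n from the current hidden state.
        ys.append(rng.choices(symbols, weights=R[x])[0])
        # Move the hidden chain one step.
        x = rng.choices(states, weights=M[x])[0]
    return xs, ys


# Hypothetical example parameters.
M = [[0.9, 0.1], [0.2, 0.8]]
R = [[0.95, 0.05], [0.05, 0.95]]
xs, ys = sample_hmp(M, R, 10)
```

Only `ys` would be visible to an observer; `xs` is returned here so the hidden path can be inspected.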

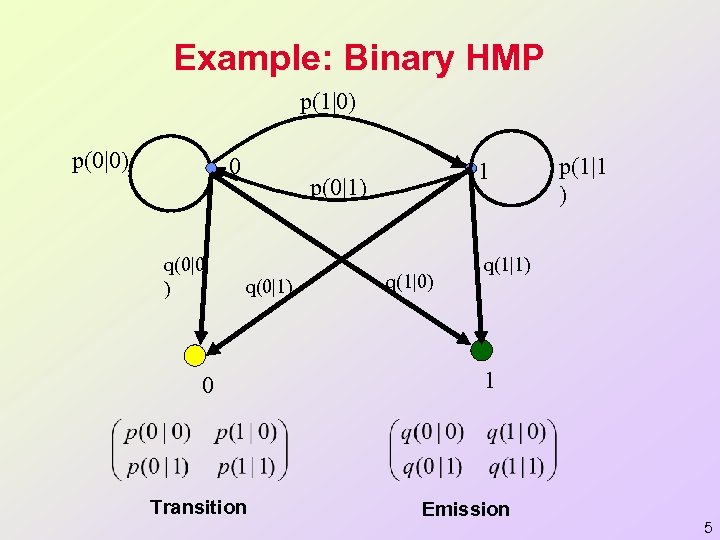

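Example: Binary HMP

[Diagram: two hidden states 0 and 1 with transition probabilities p(0|0), p(1|0), p(0|1), p(1|1), and emission probabilities q(0|0), q(1|0), q(0|1), q(1|1) to the observed symbols 0 and 1.]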

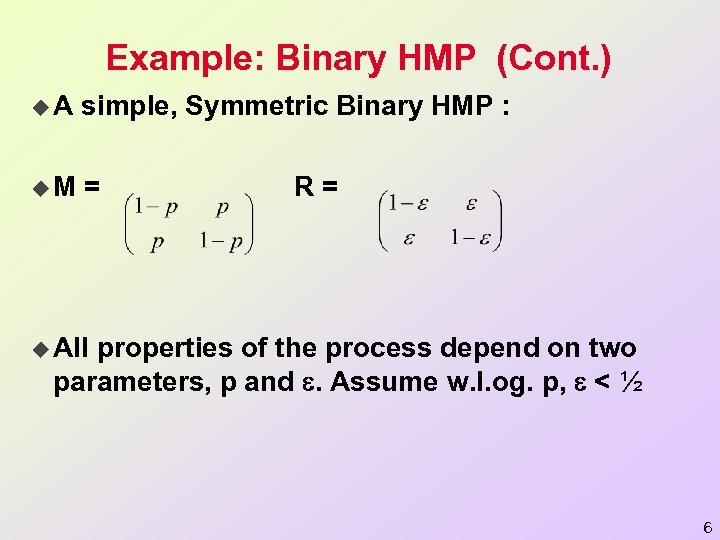

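Example: Binary HMP (Cont.)

- A simple, symmetric binary HMP:

  $$M = \begin{pmatrix} 1-p & p \\ p & 1-p \end{pmatrix}, \qquad R = \begin{pmatrix} 1-\varepsilon & \varepsilon \\ \varepsilon & 1-\varepsilon \end{pmatrix}$$

- All properties of the process depend on two parameters, p and ε. Assume w.l.o.g. p, ε < ½.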

Overview

- Introduction
- Distance Measures and Relative Entropy Rate
- Results: Generalization from Entropy Rate
- Future Directions

Distance Measures for Two HMPs

- Why is this important?
  - Often, one learns an HMP from data. It is important to know how different the learned model is from the true model.
  - Sometimes, many HMPs may represent different sources (e.g. different authors, different protein families, etc.), and we wish to know which sources are similar.
- What distance measure to use?
  - Look at the joint distributions of N consecutive Y symbols, $P_\lambda^{(N)}$ and $P_\mu^{(N)}$.

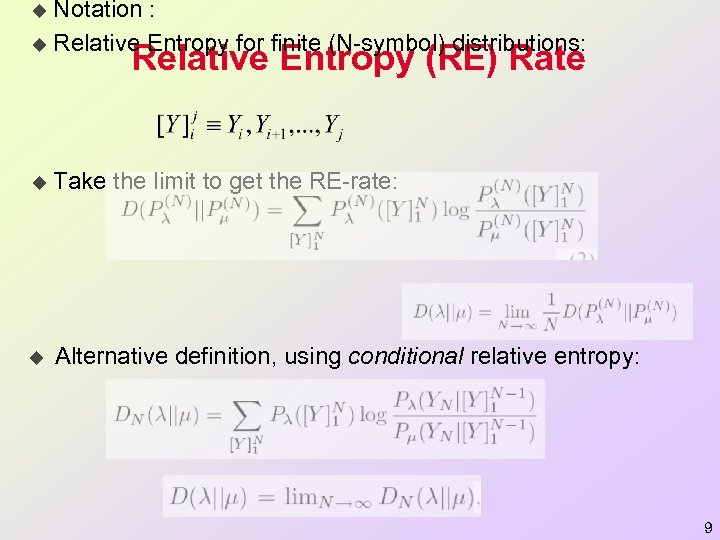

Notation:

- Relative entropy for finite (N-symbol) distributions:
- Relative Entropy (RE) Rate
- Take the limit to get the RE-rate:
- Alternative definition, using conditional relative entropy:
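
For reference, the standard textbook forms of these three quantities are written out below in terms of the joint distributions of N consecutive Y symbols under λ and µ. This is a sketch of the usual definitions and may differ in notation from the formulas on the original slide; the shorthand $y_1^N = (y_1, \dots, y_N)$ is introduced here.

$$
D\big(P_\lambda^{(N)} \,\|\, P_\mu^{(N)}\big) = \sum_{y_1^N} P_\lambda^{(N)}(y_1^N)\, \log \frac{P_\lambda^{(N)}(y_1^N)}{P_\mu^{(N)}(y_1^N)}
$$

$$
D(\lambda \,\|\, \mu) = \lim_{N \to \infty} \frac{1}{N}\, D\big(P_\lambda^{(N)} \,\|\, P_\mu^{(N)}\big)
$$

$$
D(\lambda \,\|\, \mu) = \lim_{N \to \infty} \sum_{y_1^N} P_\lambda^{(N)}(y_1^N)\, \log \frac{P_\lambda(y_N \mid y_1^{N-1})}{P_\mu(y_N \mid y_1^{N-1})}
$$

By the chain rule, the sum in the third expression equals $D(P_\lambda^{(N)} \| P_\mu^{(N)}) - D(P_\lambda^{(N-1)} \| P_\mu^{(N-1)})$, so whenever this increment converges, the second and third definitions give the same limit.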

Relative Entropy (RE) Rate

- First proposed for HMPs by [Juang&Rabiner 85].
- Not a norm (not symmetric, no triangle inequality).
- Still, it has several natural interpretations:
  - If one generates data from λ, and gives likelihood score to µ, th…
  - If one compresses data generated by λ, assuming erroneously it w…
- For Markov chains, D(λ || µ) is easily given by:
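
A standard closed form for two ergodic Markov chains, which may differ in notation from the slide's own formula, is $D(\lambda \| \mu) = \sum_i \pi_\lambda(i) \sum_j m_\lambda(i,j) \log \frac{m_\lambda(i,j)}{m_\mu(i,j)}$, where $\pi_\lambda$ is the stationary distribution of $M_\lambda$. The Python sketch below evaluates it; the function names and example matrices are hypothetical, and it assumes $m_\mu(i,j) > 0$ wherever $m_\lambda(i,j) > 0$.

```python
import math


def stationary(M, iters=1000):
    """Stationary distribution of an ergodic chain, by power iteration."""
    n = len(M)
    pi = [1.0 / n] * n
    for _ in range(iters):
        pi = [sum(pi[i] * M[i][j] for i in range(n)) for j in range(n)]
    return pi


def markov_re_rate(M_lam, M_mu):
    """Relative entropy rate (in nats) between two Markov chains.

    Assumes M_mu[i][j] > 0 wherever M_lam[i][j] > 0.
    """
    pi = stationary(M_lam)
    n = len(M_lam)
    total = 0.0
    for i in range(n):
        for j in range(n):
            if M_lam[i][j] > 0:
                total += pi[i] * M_lam[i][j] * math.log(M_lam[i][j] / M_mu[i][j])
    return total


# Hypothetical example chains.
M_lam = [[0.9, 0.1], [0.2, 0.8]]
M_mu = [[0.7, 0.3], [0.4, 0.6]]
d = markov_re_rate(M_lam, M_mu)
```

Swapping the arguments generally gives a different value, which illustrates the lack of symmetry noted above.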

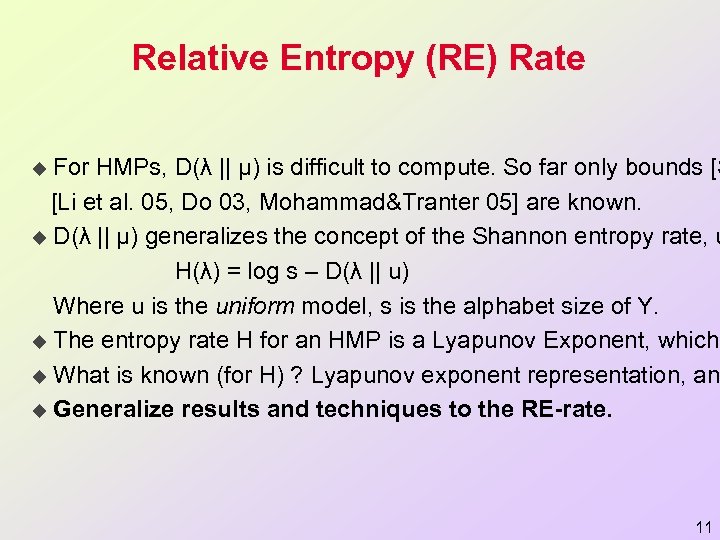

Relative Entropy (RE) Rate

- For HMPs, D(λ || µ) is difficult to compute. So far only bounds [S… [Li et al. 05, Do 03, Mohammad&Tranter 05] are known.
- D(λ || µ) generalizes the concept of the Shannon entropy rate: $H(\lambda) = \log s - D(\lambda \,\|\, u)$, where u is the uniform model and s is the alphabet size of Y.
- The entropy rate H for an HMP is a Lyapunov exponent, which…
- What is known (for H)? Lyapunov exponent representation, an…
- Generalize results and techniques to the RE-rate.
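
The relation between the entropy rate and the RE-rate to the uniform model follows in one step from the usual definitions. Under the uniform model every N-symbol sequence has probability $s^{-N}$, so (writing $H(P_\lambda^{(N)})$ for the Shannon entropy of the N-symbol distribution, a notation introduced here):

$$
D\big(P_\lambda^{(N)} \,\|\, P_u^{(N)}\big) = \sum_{y_1^N} P_\lambda^{(N)}(y_1^N) \log \frac{P_\lambda^{(N)}(y_1^N)}{s^{-N}} = N \log s - H\big(P_\lambda^{(N)}\big)
$$

Dividing by N and taking $N \to \infty$ gives $D(\lambda \| u) = \log s - H(\lambda)$, which is the identity stated above.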

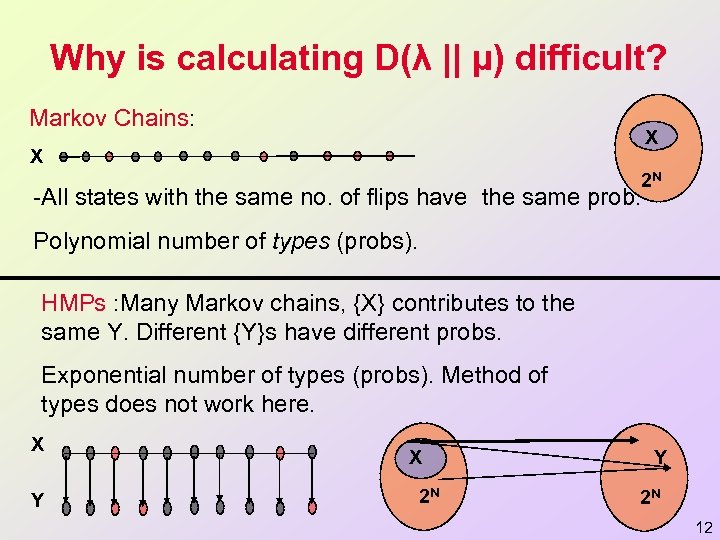

Why is calculating D(λ || µ) difficult?

Markov chains:

- All states with the same number of flips have the same probability.
- Polynomial number of types (probabilities).

HMPs:

- Many Markov chain realizations {X} contribute to the same Y.
- Different {Y}s have different probabilities.
- Exponential number of types (probabilities).
- The method of types does not work here.

[Diagram: the $2^N$ sequences X for a Markov chain; the mapping from the $2^N$ sequences X to the $2^N$ sequences Y for an HMP.]

Overview

- Introduction
- Distance Measures and Relative Entropy Rate
- Results: Generalization from Entropy Rate
- Future Directions

RE-Rate and Lyapunov Exponents

- What is a Lyapunov exponent?
  - Arises in dynamical systems, control theory, statistical physics…
  - Take two (square) matrices A, B. Choose each time at random A… and consider (1/N) log ||ABBBAABAB…BA||.
  - The limit:
    - exists a.s. [Furstenberg&Kesten 60];
    - is called the top Lyapunov exponent;
    - is independent of the matrix norm chosen.
- The HMP entropy rate is given as a Lyapunov exponent [Jacquet e…
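
The limit described above can be estimated numerically. The Python sketch below is one common way to do so, not necessarily the procedure used in the talk: it applies randomly chosen matrices to a vector, renormalizes at every step to avoid overflow, and averages the logarithms of the growth factors. Tracking a vector instead of the full matrix norm is an assumption that is adequate for generic cases such as matrices with positive entries; the function name and example matrices are hypothetical.

```python
import math
import random


def top_lyapunov(mats, probs, n_steps=20000, seed=0):
    """Estimate the top Lyapunov exponent of a random matrix product.

    At each step one matrix from `mats` is drawn with probabilities `probs`
    and applied to a vector, which is then renormalized in the 1-norm.
    """
    rng = random.Random(seed)
    d = len(mats[0])
    v = [1.0 / d] * d
    log_growth = 0.0
    for _ in range(n_steps):
        A = rng.choices(mats, weights=probs)[0]
        w = [sum(A[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(abs(x) for x in w)
        log_growth += math.log(norm)
        v = [x / norm for x in w]
    return log_growth / n_steps


# Hypothetical example: two positive 2x2 matrices chosen with equal probability.
A = [[0.9, 0.1], [0.1, 0.9]]
B = [[0.5, 0.3], [0.2, 0.6]]
estimate = top_lyapunov([A, B], [0.5, 0.5])
```

When the matrices commute the exponent reduces to an average of logarithms; for instance, drawing 2I and 3I with equal probability gives (log 2 + log 3)/2.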

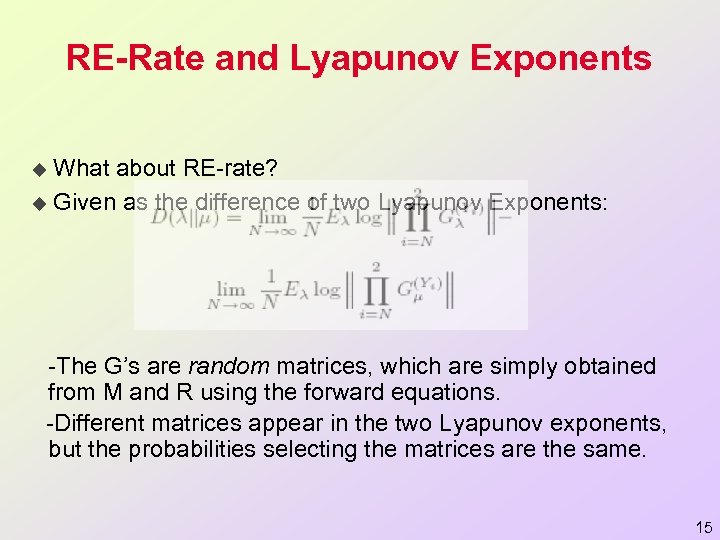

RE-Rate and Lyapunov Exponents

- What about the RE-rate?
  - Given as the difference of two Lyapunov exponents:
  - The G's are random matrices, which are simply obtained from M and R using the forward equations.
  - Different matrices appear in the two Lyapunov exponents, but the probabilities of selecting the matrices are the same.
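
This structure suggests a simple Monte Carlo estimate, sketched below in Python under stated assumptions: draw one long observation sequence Y from λ, evaluate log Pr(Y) under both models with the normalized forward recursion, and divide the difference by N. Both likelihoods are products of matrices selected by the same Y, which matches the remark that the selection probabilities are shared. The function names, the uniform initial hidden state, and the example parameters are hypothetical; this is an estimator, not the analytic result of the talk.

```python
import math
import random


def log_likelihood(ys, M, R):
    """log Pr(Y_1..Y_N) under (M, R) via the normalized forward recursion.

    The initial hidden-state distribution is uniform (an assumption).
    """
    n = len(M)
    alpha = [1.0 / n] * n  # Pr(X_1 = i)
    loglik = 0.0
    for y in ys:
        # Weight each hidden state by the probability of emitting y.
        w = [alpha[i] * R[i][y] for i in range(n)]
        norm = sum(w)  # Pr(Y_t = y | Y_1..Y_{t-1})
        loglik += math.log(norm)
        # Condition on y and propagate one step through M.
        alpha = [sum(w[i] * M[i][j] for i in range(n)) / norm for j in range(n)]
    return loglik


def sample_observations(M, R, n_steps, rng):
    """Draw Y_1..Y_N from the HMP (M, R), starting from a uniform hidden state."""
    x = rng.randrange(len(M))
    ys = []
    for _ in range(n_steps):
        ys.append(rng.choices(range(len(R[x])), weights=R[x])[0])
        x = rng.choices(range(len(M)), weights=M[x])[0]
    return ys


def re_rate_estimate(lam, mu, n_steps=20000, seed=0):
    """Estimate D(lam || mu) as (1/N) [log P_lam(Y) - log P_mu(Y)], with Y ~ lam."""
    ys = sample_observations(lam[0], lam[1], n_steps, random.Random(seed))
    return (log_likelihood(ys, *lam) - log_likelihood(ys, *mu)) / n_steps


# Hypothetical symmetric binary models, each given as (M, R).
lam = ([[0.9, 0.1], [0.1, 0.9]], [[0.95, 0.05], [0.05, 0.95]])
mu = ([[0.6, 0.4], [0.4, 0.6]], [[0.7, 0.3], [0.3, 0.7]])
estimate = re_rate_estimate(lam, mu)
```

The estimate fluctuates with the sampled sequence; longer sequences or averaging over several seeds would reduce the variance.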

Analyticity of the RE-Rate

- Is the RE-rate continuous, ‘smooth’, or even ana…
- For Lyapunov exponents: known analyticity in t…
- For the HMP entropy rate, analyticity was recently s…

Analyticity of the RE-Rate

- Using both results, we are able to show:

  Thm: The RE-rate is analytic in the HMPs paramete…

- Analyticity is shown only in the interior of the paramete…
- Behavior on the boundaries is more complicated. Som…

RE-Rate Taylor Series Expansion

- While in general the RE-rate is not known, there are specific pa…
- A similar approach was used for Lyapunov exponents [Derrida], a…

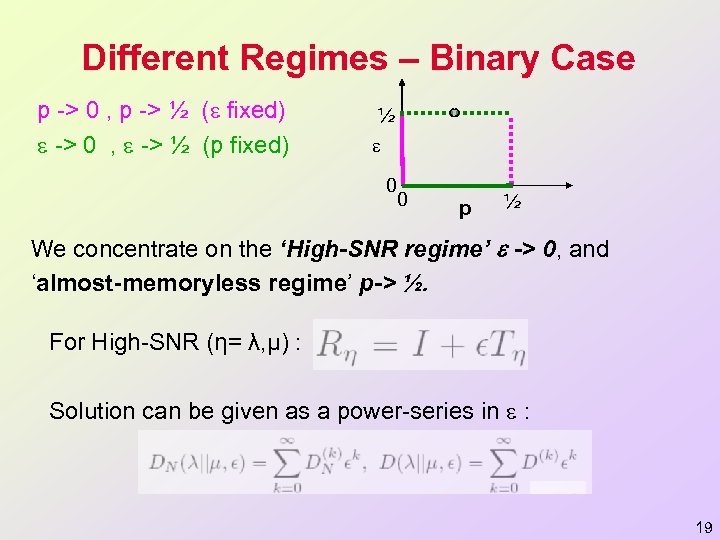

Different Regimes: Binary Case

- p → 0, p → ½ (noise ε fixed)
- ε → 0, ε → ½ (p fixed)

[Figure: the (p, ε) parameter square, with both axes running from 0 to ½.]

We concentrate on the ‘High-SNR regime’ ε → 0, and the ‘almost-memoryless regime’ p → ½.

For High-SNR (η = λ, µ): the solution can be given as a power series in ε:

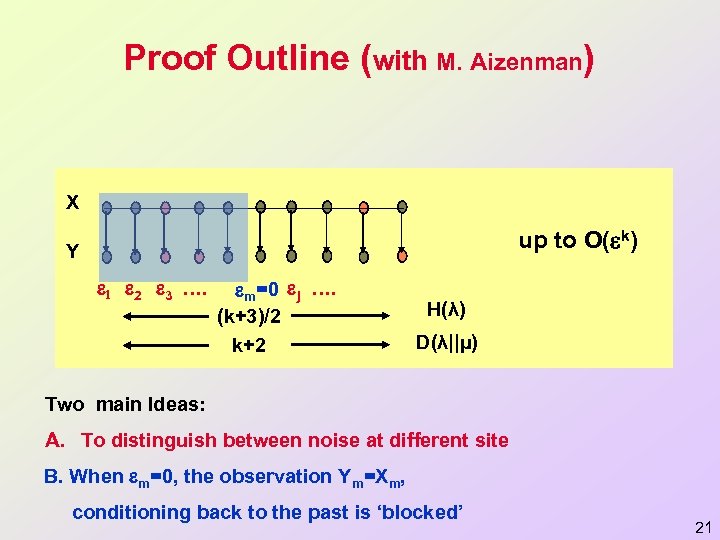

RE-Rate Taylor Series Expansion

- In [Zuk, Domany, Kanter&Aizenman 06] we give a procedure for…
- Main observation: finite systems give the correct RE rate up to…
- Was discovered using computer experiments (symbolic comput…
- A stronger result holds for the entropy rate (orders ‘settle’ for N ≥ (k+3)/2).
- Does not hold in every regime. For some regimes (e.g. p → 0), ev…

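Proof Outline (with M. Aizenman)

[Diagram: the X and Y sequences over sites 1, 2, 3, …, j, …, k+2, with one site marked $\varepsilon_m = 0$ and the position (k+3)/2 indicated; labels $H(p, \varepsilon)$ up to $O(\varepsilon^k)$, H(λ), D(λ||µ).]

Two main ideas:

A. To distinguish between noise at different site…

B. When $\varepsilon_m = 0$, the observation $Y_m = X_m$, so conditioning back to the past is ‘blocked’.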

Overview

- Introduction
- Distance Measures and Relative Entropy Rate
- Results: Generalization from Entropy Rate
- Future Directions

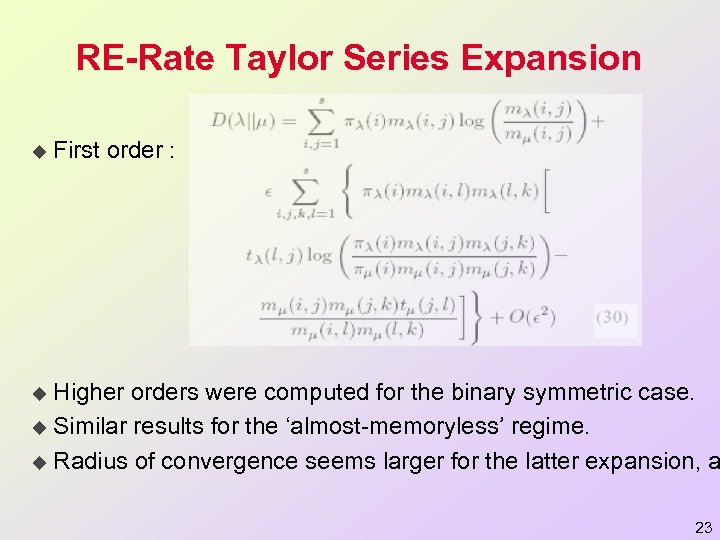

RE-Rate Taylor Series Expansion

- First order:
- Higher orders were computed for the binary symmetric case.
- Similar results hold for the ‘almost-memoryless’ regime.
- The radius of convergence seems larger for the latter expansion, a…

Future Directions

- Study other regimes (e.g. two ‘close’ models).
- Behavior of the EM algorithm.
- Generalizations (e.g. different alphabet sizes, continuous case).
- Physical realization of HMPs (mesoscopic systems, quantum jumps).
- Domain of analyticity, radius of convergence.

Thanks

- Eytan Domany (Weizmann Inst.)
- Ido Kanter (Bar-Ilan Univ.)
- Michael Aizenman (Princeton Univ.)
- Libi Hertzberg (Weizmann Inst.)