
The flight of the Condor
a decade of High Throughput Computing
Miron Livny
Computer Sciences Department
University of Wisconsin-Madison
miron@cs.wisc.edu

Remember!
› There are no silver bullets.
› Response time = Queuing Time + Execution Time.
› If you believe in parallel computing you need a very good reason for not using an idle resource.
› Debugging complex parallel applications is not fun.
www.cs.wisc.edu/condor

Background and motivation …

“… Since the early days of mankind the primary motivation for the establishment of communities has been the idea that by being part of an organized group the capabilities of an individual are improved. The great progress in the area of intercomputer communication led to the development of means by which standalone processing sub-systems can be integrated into multi-computer ‘communities’. …”
M. Livny, “Study of Load Balancing Algorithms for Decentralized Distributed Processing Systems”, Ph.D. thesis, July 1983.

The growing gap between what we own and what each of us can access

Distributed Ownership
Due to the dramatic decrease in the cost-performance ratio of hardware, powerful computing resources are owned today by individuals, groups, departments, universities…
› Huge increase in the computing capacity owned by the scientific community
› Moderate increase in the computing capacity accessible by a scientist

What kind of Computing?
› High Performance Computing
› Other

How about High Throughput Computing (HTC)?
I introduced the term HTC in a seminar at the NASA Goddard Flight Center in July of '96 and a month later at the European Laboratory for Particle Physics (CERN).
› HTC paper in HPCU News 1(2), June '97.
› HTC interview in HPCWire, July '97.
› HTC part of NCSA PACI proposal, Sept. '97.
› HTC chapter in “the Grid” book, July '98.

High Throughput Computing is a 24-7-365 activity
FLOPY ≠ (60*60*24*7*52)*FLOPS
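The factor in parentheses is the number of seconds in a 52-week year, so the product is what a machine would deliver if it ran at its FLOPS rating around the clock. The sketch below works that bound out and compares it with a deliberately simple model of what is delivered. The `availability` and `efficiency` factors, the function names and the sample numbers are assumptions made for illustration; none of them come from the slides.

```python
# Seconds in a 52-week year, exactly as written on the slide.
SECONDS_PER_YEAR = 60 * 60 * 24 * 7 * 52


def flopy_upper_bound(peak_flops: float) -> float:
    """Operations per year if the peak rate were sustained all year long."""
    return SECONDS_PER_YEAR * peak_flops


def flopy_delivered(peak_flops: float, availability: float, efficiency: float) -> float:
    """Hypothetical model: scale the bound by the fraction of the year the
    machine is usable and by the fraction of peak the application sustains."""
    return flopy_upper_bound(peak_flops) * availability * efficiency


if __name__ == "__main__":
    peak = 1e9  # assumed peak rate, for illustration only
    print("seconds per year:", SECONDS_PER_YEAR)
    print("upper bound     :", flopy_upper_bound(peak))
    print("delivered (50% available, 50% of peak):", flopy_delivered(peak, 0.5, 0.5))
```

Under these assumed factors the delivered figure is a quarter of the bound, which is one way to read the slide's emphasis on sustained, year-long throughput over peak speed.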

A simple scenario of a High Throughput Computing (HTC) user with a very simple application and one workstation on his/her desk

The HTC Application
Study the behavior of F(x, y, z) for 20 values of x, 10 values of y and 3 values of z (20*10*3 = 600)
› F takes on average 3 hours to compute on a “typical” workstation (total = 1800 hours)
› F requires a “moderate” (128 MB) amount of memory
› F performs “little” I/O - (x, y, z) is 15 MB and F(x, y, z) is 40 MB
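The arithmetic on this slide can be checked with a short sketch that enumerates the parameter sweep. The slide gives only the number of values per parameter, so the concrete values below (`range(20)` and so on) are placeholders, and the names are hypothetical.

```python
from itertools import product

# Placeholder parameter values: the slide specifies only how many there are.
X_VALUES = range(20)
Y_VALUES = range(10)
Z_VALUES = range(3)

HOURS_PER_EVALUATION = 3   # average compute time of F, from the slide
INPUT_MB = 15              # size of one (x, y, z) input, from the slide
OUTPUT_MB = 40             # size of one F(x, y, z) output, from the slide


def enumerate_tasks(xs, ys, zs):
    """Return every (x, y, z) combination; each one is an independent job."""
    return list(product(xs, ys, zs))


tasks = enumerate_tasks(X_VALUES, Y_VALUES, Z_VALUES)
total_hours = len(tasks) * HOURS_PER_EVALUATION
total_input_mb = len(tasks) * INPUT_MB
total_output_mb = len(tasks) * OUTPUT_MB

if __name__ == "__main__":
    print(len(tasks), "jobs,", total_hours, "CPU hours")
    print(total_input_mb, "MB of input,", total_output_mb, "MB of output")
```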

What we have here is a Master-Worker Application!

Master-Worker Paradigm
Many scientific, engineering and commercial applications (software builds and testing, sensitivity analysis, parameter space exploration, image and movie rendering, High Energy Physics event reconstruction, processing of optical DNA sequencing, training of neural networks, stochastic optimization, Monte Carlo ...) follow the Master-Worker (MW) paradigm where ...

Master-Worker Paradigm
… a heap or a Directed Acyclic Graph (DAG) of tasks is assigned to a master. The master looks for workers who can perform tasks that are “ready to go” and passes them a description (input) of the task. Upon the completion of a task, the worker passes the result (output) of the task back to the master.
› Master may execute some of the tasks.
› Master may be a worker of another master.
› Worker may require initialization data.
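A minimal sketch of the paradigm, assuming the simplest case: a heap of independent tasks with no DAG dependencies, so every task is “ready to go” from the start. Threads stand in for workers, and the `worker` function (squaring a number) is a hypothetical placeholder for real work. This illustrates the pattern only; it is not how Condor implements it.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def worker(task_input):
    """Stand-in for real work: receive an input, return a result."""
    return task_input * task_input


def master(task_inputs, num_workers=4):
    """Hand each ready task to a free worker and collect results as they finish."""
    results = {}
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # Pass the description (input) of each task to a worker.
        futures = {pool.submit(worker, t): t for t in task_inputs}
        # Workers pass results (output) back in completion order, not submit order.
        for future in as_completed(futures):
            results[futures[future]] = future.result()
    return results


if __name__ == "__main__":
    print(master(range(5)))
```

Supporting a DAG would mean submitting a task only after all of its parents have reported results, which is where the bookkeeping in the master starts to grow.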

Master-Worker computing is Naturally Parallel. It is by no means Embarrassingly Parallel. As you will see, doing it right is by no means trivial. Here are a few challenges ...

Dynamic or Static?
This is the key question one faces when building a MW application. How this question is answered has an impact on
› The algorithm
› Target architecture
› Resources availability
› Quality of results
› Complexity of implementation

How do the Master and Worker Communicate?
› Via a shared/distributed file/disk system using reads and writes, or
› Via a message passing system (PVM/MPI) using sends and receives, or
› Via a shared memory using loads, stores and semaphores.

How many workers?
› One per task?
› One per CPU allocated to the master?
› N(t) depending on the dynamic properties of the “ready to go” set of tasks?

Job Parallel MW
› Master and workers communicate via the file system.
› Workers are independent jobs that are submitted/started, suspended, resumed and cancelled by the master.
› Master may monitor progress of jobs and availability of resources or just collect results at the end.

Building a basic Job Parallel Application
1. Create n directories.
2. Write an input file in each directory.
3. Submit a cluster of n jobs.
4. Wait for the cluster to finish.
5. Read an output file from each directory.
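The five steps can be sketched in Python as follows. The directory name `worker_dir.<i>` and the file names `in` and `out` follow the submit file shown later in the deck. Steps 3 and 4 are replaced by a hypothetical local stand-in that runs each job in turn, because the actual submission and waiting depend on the installed batch system. All function names here are illustrative assumptions.

```python
import tempfile
from pathlib import Path


def local_stand_in(job_dir: Path) -> None:
    """Hypothetical worker: read a number from 'in', write its square to 'out'."""
    value = int((job_dir / "in").read_text())
    (job_dir / "out").write_text(str(value * value))


def run_job_parallel(base: Path, inputs, run_job=local_stand_in):
    # Steps 1 and 2: one directory per job, each holding its own input file.
    job_dirs = []
    for i, value in enumerate(inputs):
        job_dir = base / f"worker_dir.{i}"
        job_dir.mkdir(parents=True)
        (job_dir / "in").write_text(str(value))
        job_dirs.append(job_dir)

    # Steps 3 and 4: "submit" the cluster and wait for it to finish.
    # Here the jobs simply run one after another on the local machine.
    for job_dir in job_dirs:
        run_job(job_dir)

    # Step 5: read one output file from each directory.
    return [int((job_dir / "out").read_text()) for job_dir in job_dirs]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(run_job_parallel(Path(tmp), [1, 2, 3]))
```

Because master and workers share nothing but files, swapping the stand-in for a real batch submission leaves steps 1, 2 and 5 unchanged.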

Task Parallel MW
› Master and workers exchange data via messages delivered by a message passing system like PVM or MPI.
› Master monitors availability of resources and expands or shrinks the resource pool of the application accordingly.
› Master monitors the “health” of workers and redistributes tasks accordingly.

Our Answer to High Throughput MW Computing

“… Modern processing environments that consist of large collections of workstations interconnected by high capacity network raise the following challenging question: can we satisfy the needs of users who need extra capacity without lowering the quality of service experienced by the owners of under utilized workstations? … The Condor scheduling system is our answer to this question. …”
M. Litzkow, M. Livny and M. Mutka, “Condor - A Hunter of Idle Workstations”, IEEE 8th ICDCS, June 1988.

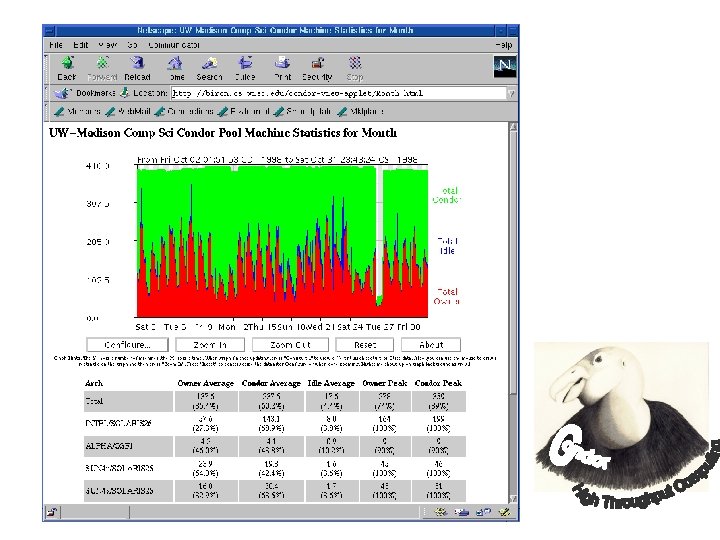

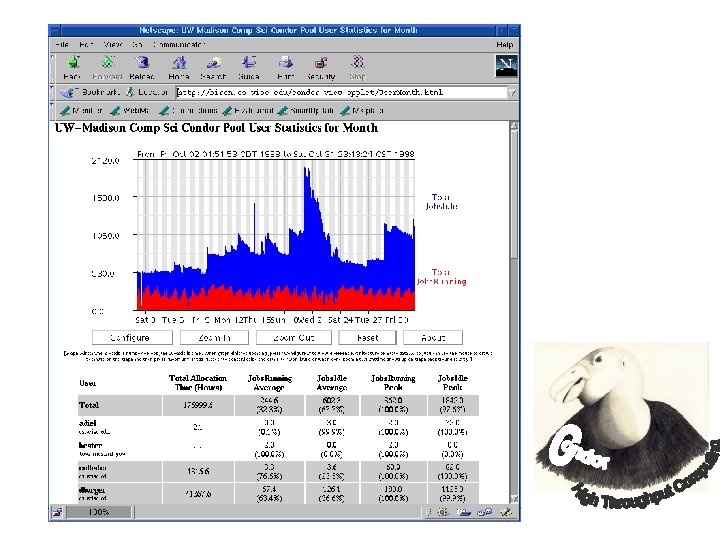

The Condor System
A High Throughput Computing system that supports large dynamic MW applications on large collections of distributively owned resources, developed, maintained and supported by the Condor Team at the University of Wisconsin - Madison since '86.
› Originally developed for UNIX workstations.
› Fully integrated NT version in advanced testing.
› Deployed world-wide by academia and industry.
› A 600 CPU system at U of Wisconsin
› Available at www.cs.wisc.edu/condor

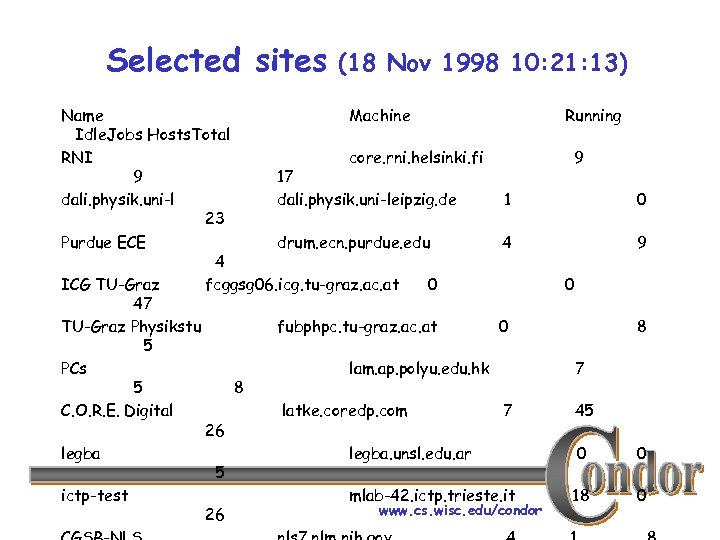

Selected sites (18 Nov 1998 10:21:13)
Name Machine IdleJobs HostsTotal
RNI core.rni.helsinki.fi 9 17
dali.physik.uni-leipzig.de 1 23
Purdue ECE drum.ecn.purdue.edu 4 4
ICG TU-Graz fcggsg06.icg.tu-graz.ac.at 0 47
TU-Graz Physikstu fubphpc.tu-graz.ac.at 0 5
PCs lam.ap.polyu.edu.hk 5 8
C.O.R.E. Digital latke.coredp.com 7 26
legba.unsl.edu.ar 5
ictp-test mlab-42.ictp.trieste.it 26
Running 9 0 8 7 45 0 0 18 0

“… Several principles have driven the design of Condor. First is that workstation owners should always have the resources of the workstation they own at their disposal. … The second principle is that access to remote capacity must be easy, and should approximate the local execution environment as closely as possible. Portability is the third principle behind the design of Condor. …”
M. Litzkow and M. Livny, “Experience With the Condor Distributed Batch System”, IEEE Workshop on Experimental Distributed Systems, Huntsville, AL, Oct. 1990.

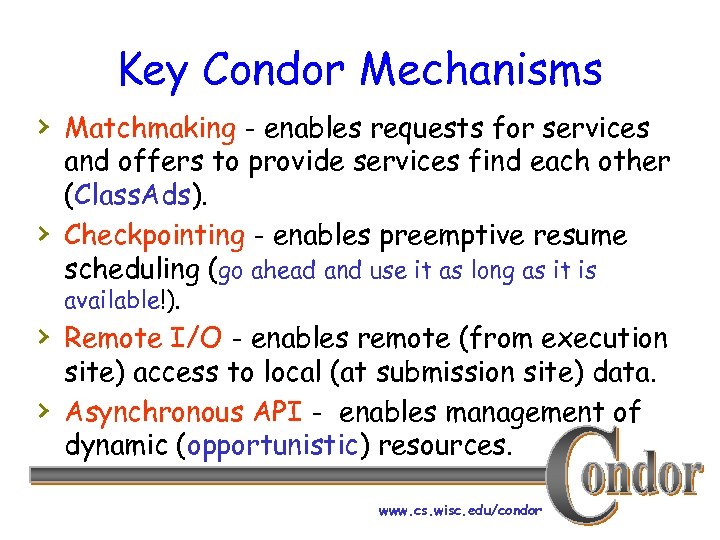

Key Condor Mechanisms
› Matchmaking - enables requests for services and offers to provide services to find each other (ClassAds).
› Checkpointing - enables preemptive-resume scheduling (go ahead and use it as long as it is available!).
› Remote I/O - enables remote (from execution site) access to local (at submission site) data.
› Asynchronous API - enables management of dynamic (opportunistic) resources.
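To make the matchmaking idea concrete, here is a toy sketch in which requests and offers are plain Python dictionaries, and a match requires each side's requirements to accept the other side. It does not use the ClassAd language or any Condor API. The attribute names, the greedy pairing policy and the sample ads are all assumptions made for illustration.

```python
def matches(request, offer):
    """Two-way match: each ad's requirements must accept the other ad."""
    return request["requirements"](offer) and offer["requirements"](request)


def matchmake(requests, offers):
    """Greedy pairing: give each request the first free offer that matches."""
    free = list(offers)
    pairs = []
    for request in requests:
        for offer in free:
            if matches(request, offer):
                pairs.append((request["name"], offer["name"]))
                free.remove(offer)  # an offer serves one request at a time
                break
    return pairs


# Hypothetical ads: a job that needs 64 MB, and two machines with owner policies.
job = {"name": "job1", "owner": "alice",
       "requirements": lambda machine: machine["memory"] >= 64}
small = {"name": "small", "memory": 32,
         "requirements": lambda j: True}
big = {"name": "big", "memory": 128,
       "requirements": lambda j: j["owner"] != "mallory"}

if __name__ == "__main__":
    print(matchmake([job], [small, big]))
```

The symmetry is the point of the sketch: the machine owner's policy has the same standing as the job's requirements, which fits the principle that owners keep control of their workstations.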

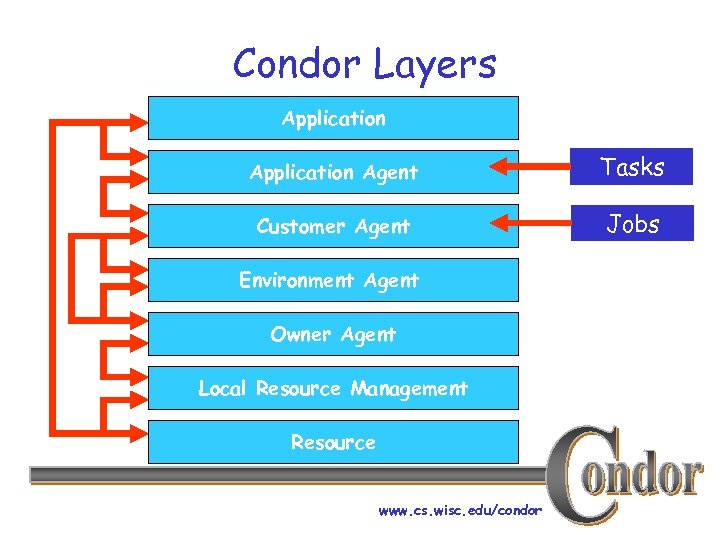

Condor Layers
Application Agent Tasks Customer Agent Jobs Environment Agent Owner Agent Local Resource Management Resource

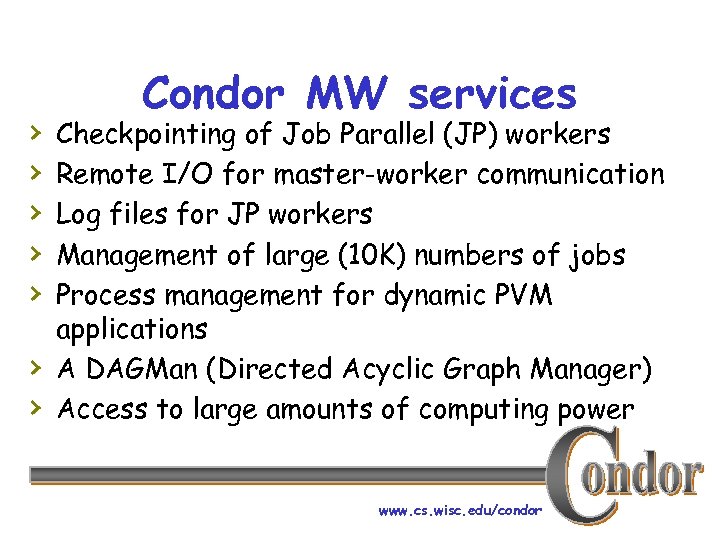

Condor MW services
› Checkpointing of Job Parallel (JP) workers
› Remote I/O for master-worker communication
› Log files for JP workers
› Management of large (10K) numbers of jobs
› Process management for dynamic PVM applications
› A DAGMan (Directed Acyclic Graph Manager)
› Access to large amounts of computing power

![Condor System Structure](https://present5.com/presentation/d4567a414b139c4011299b8e3ae810aa/image-30.jpg)
Condor System Structure: Central Manager (Negotiator N, Collector C); Submit Machine with Customer Agent (CA); Execution Machine with Resource Agent (RA); ads [...A], [...B], [...C]

![Advertising Protocol](https://present5.com/presentation/d4567a414b139c4011299b8e3ae810aa/image-31.jpg)
Advertising Protocol (diagram labels: N, C, CA, RA; ads [...A], [...B], [...C], [...M], [...N])

![Advertising Protocol](https://present5.com/presentation/d4567a414b139c4011299b8e3ae810aa/image-32.jpg)
Advertising Protocol, continued (diagram labels: N, C, CA, RA; ads [...A], [...B], [...C], [...M], [...N])

![Matching Protocol](https://present5.com/presentation/d4567a414b139c4011299b8e3ae810aa/image-33.jpg)
Matching Protocol (diagram labels: N, C, CA, RA; ads [...A], [...B], [...C], [...M], [...N])

![Claiming Protocol](https://present5.com/presentation/d4567a414b139c4011299b8e3ae810aa/image-34.jpg)
Claiming Protocol (diagram labels: N, C, CA, RA; ads [...A], [...C], [...S])

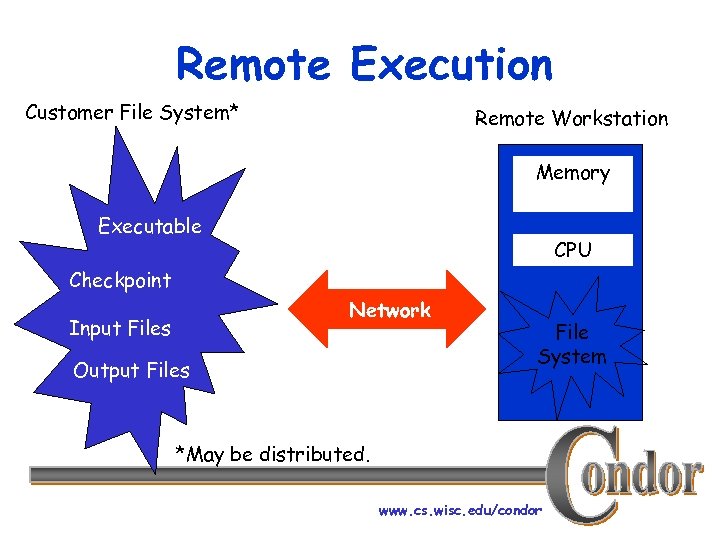

Remote Execution
Customer File System* Remote Workstation Memory Executable CPU Checkpoint Network Input Files Output Files File System
*May be distributed.

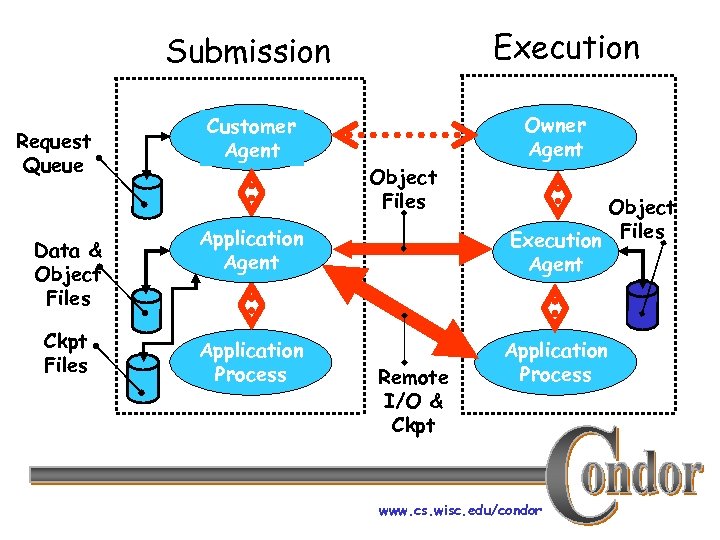

Execution Submission Request Queue Data & Object Files Ckpt Files Customer Agent Object Files Application Agent Application Process Remote I/O & Ckpt Owner Agent Object Execution Files Agent Application Process

Workstation Cluster Workshop December 1992

We have users that ...
› … have job parallel MW applications with more than 5000 jobs.
› … have task parallel MW applications with more than 100 tasks.
› … run their job parallel MW application for more than six months.
› … run their task parallel MW application for more than four weeks.

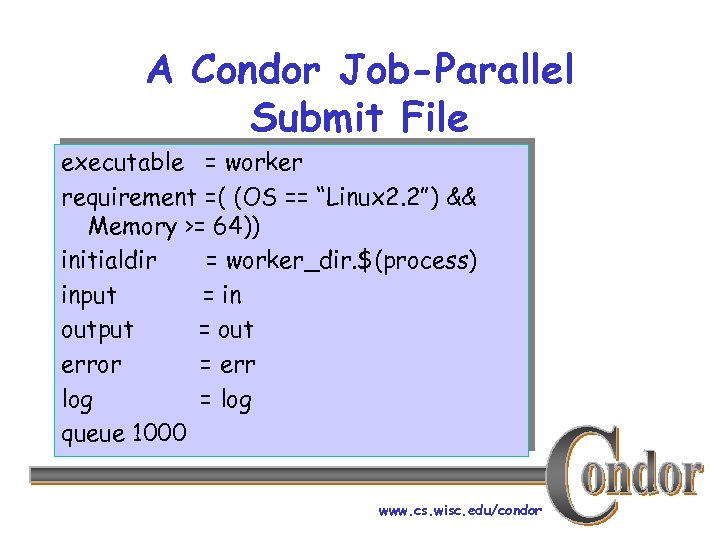

A Condor Job-Parallel Submit File

    executable = worker
    requirements = ((OS == "Linux2.2") && (Memory >= 64))
    initialdir = worker_dir.$(process)
    input = in
    output = out
    error = err
    log = log
    queue 1000

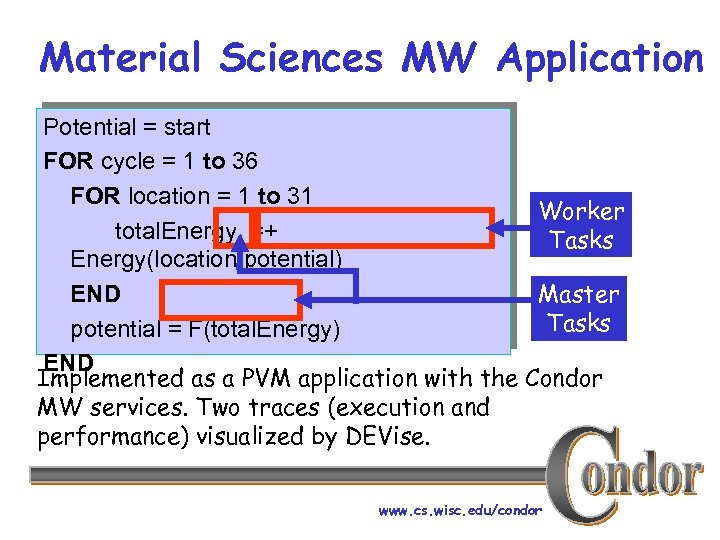

Material Sciences MW Application

    potential = start
    FOR cycle = 1 to 36
        FOR location = 1 to 31
            totalEnergy += Energy(location, potential)    (Worker Tasks)
        END
        potential = F(totalEnergy)    (Master Tasks)
    END

Implemented as a PVM application with the Condor MW services. Two traces (execution and performance) visualized by DEVise.
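The loop structure of this application can be sketched in Python with a thread pool standing in for the PVM workers. The slides do not define `Energy` or `F`, so the bodies of `energy` and `update_potential` below are hypothetical placeholders, chosen only so that the example runs. Resetting the total at the start of every cycle is also an assumption.

```python
from concurrent.futures import ThreadPoolExecutor

CYCLES = 36
LOCATIONS = 31


def energy(location, potential):
    """Worker task. Placeholder formula: the real Energy() is not given."""
    return potential * location


def update_potential(total_energy):
    """Master task F. Placeholder: normalise by the sum 1 + 2 + ... + 31."""
    return total_energy / sum(range(1, LOCATIONS + 1))


def run(start, num_workers=8):
    potential = start
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        for _cycle in range(CYCLES):
            # The 31 worker tasks of one cycle are independent of each other.
            energies = pool.map(energy,
                                range(1, LOCATIONS + 1),
                                [potential] * LOCATIONS)
            total_energy = sum(energies)  # assumed to restart at zero each cycle
            # The master task needs every worker result, so cycles run in sequence.
            potential = update_potential(total_energy)
    return potential


if __name__ == "__main__":
    print(run(2.0))
```

The sequential dependency between cycles is what caps the useful number of workers at 31 here, however many machines are available.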

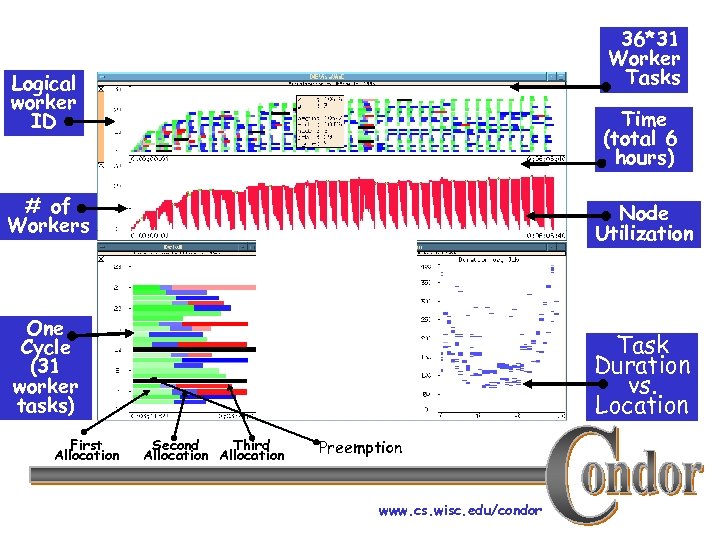

[Figure: trace of the 36*31 worker tasks over time (total 6 hours). Panels: Logical worker ID, # of Workers, Node Utilization, One Cycle (31 worker tasks), Task Duration vs. Location. Annotations: First Allocation, Second Allocation, Third Allocation, Preemption.]

… back to the user with the 600 jobs and only one workstation to run them

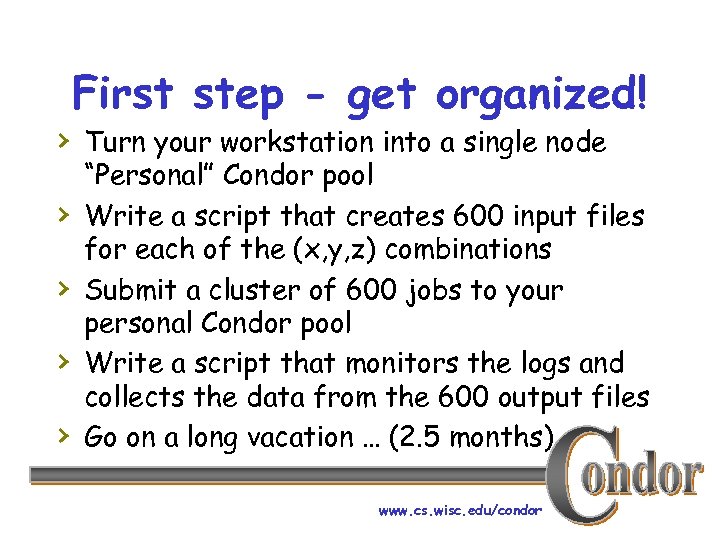

First step - get organized!
› Turn your workstation into a single-node “Personal” Condor pool
› Write a script that creates 600 input files, one for each of the (x, y, z) combinations
› Submit a cluster of 600 jobs to your personal Condor pool
› Write a script that monitors the logs and collects the data from the 600 output files
› Go on a long vacation … (2.5 months)
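The 2.5 months follow from the earlier figures: 600 jobs at 3 hours each is 1800 hours, or 75 days, on one workstation. The sketch below repeats that arithmetic for other pool sizes under idealized assumptions: every job takes exactly the average 3 hours, machines are always available, and there is no scheduling or I/O overhead. Pool sizes other than 1 are hypothetical examples.

```python
import math

JOBS = 600
HOURS_PER_JOB = 3  # average from the application slide


def vacation_days(machines: int) -> float:
    """Idealized wall-clock days to finish all jobs on `machines` machines."""
    rounds = math.ceil(JOBS / machines)  # each machine runs one job at a time
    return rounds * HOURS_PER_JOB / 24


if __name__ == "__main__":
    for machines in (1, 10, 100, 600):
        print(machines, "machine(s):", vacation_days(machines), "days")
```

With one machine this gives 75 days. With one machine per job it drops to 3 hours, a lower bound that opportunistic resources will approach but rarely reach.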

Your Personal Condor will ...
› ... keep an eye on your jobs and will keep you posted on their progress
› ... implement your policy on when the jobs can run on your workstation
› ... implement your policy on the execution order of the jobs
› ... add fault tolerance to your jobs
› … keep a log of your job activities

[Diagram: 600 Condor jobs, personal Condor, your workstation]

… and what about the underutilized workstation in the next office or the one in the classroom downstairs or the Linux cluster node in the other building or the O2K node at the other side of town or …


Second step - become a scavenger
› Install Condor on the machine next door.
› Install Condor on the machines in the classroom.
› Configure these machines to be part of your Condor pool
› Go on a shorter vacation ...

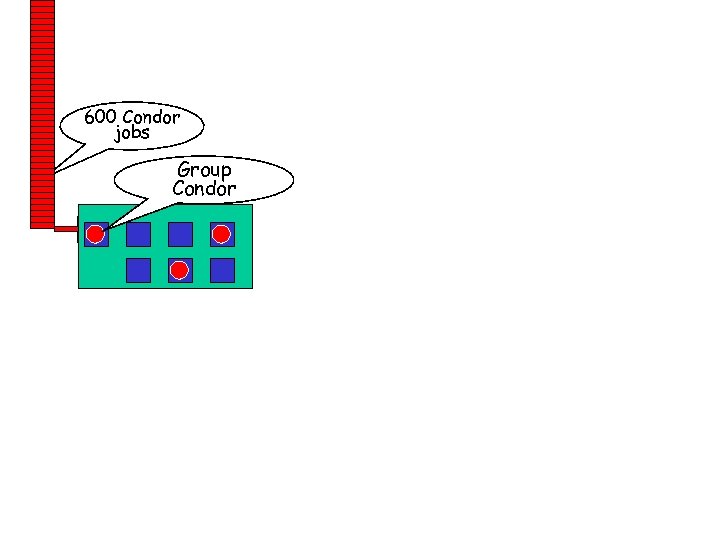

[Diagram: 600 Condor jobs, personal Condor, your workstation, Group Condor]

Third step - Take advantage of your friends
› Get permission from “friendly” Condor pools to access their resources
› Configure your personal Condor to “flock” to these pools
› Reconsider your vacation plans ...

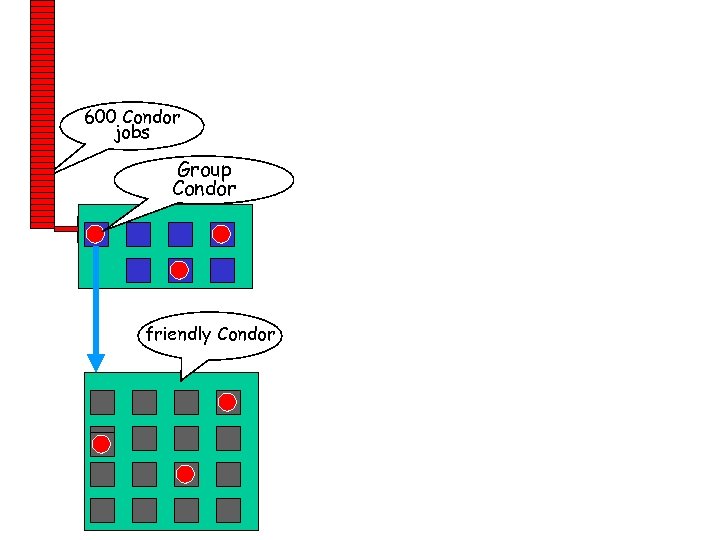

[Diagram: 600 Condor jobs, personal Condor, your workstation, Group Condor, friendly Condor]


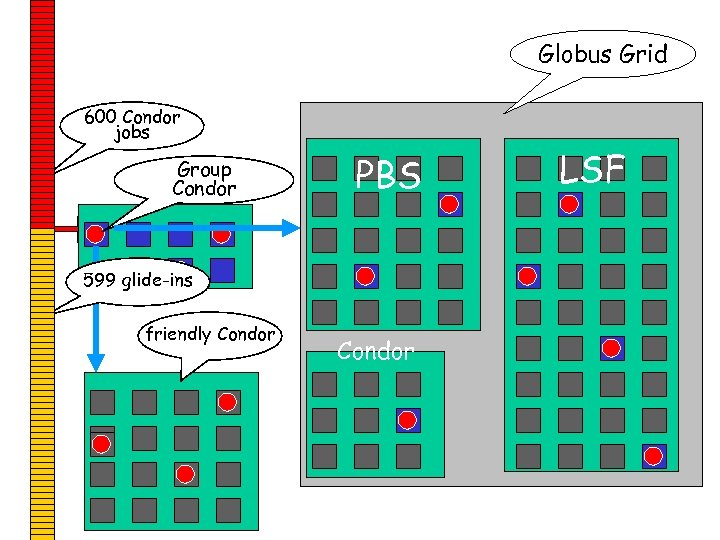

Fourth step - Think big!
› Get access (account(s) + certificate(s)) to a Globus managed Grid
› Submit 599 “To Globus” Condor glide-in jobs to your personal Condor
› When all your jobs are done, remove any pending glide-in jobs
› Take the rest of the afternoon off ...

[Diagram: 600 Condor jobs, personal Condor, your workstation, Group Condor, friendly Condor, 599 glide-ins, Globus Grid (PBS, LSF, Condor)]

Simple is not only beautiful it can be very effective