fb06294668c34bfe1729b3ae43844f8d.ppt

- Number of slides: 70

The F5402E 4G FC to SAS/SATA (August 2006, Xyratex Confidential)

Safe Harbor

This presentation includes statements that may constitute “forward-looking” statements, usually containing the words “believe”, “estimated”, “project”, “expect”, “anticipate”, or similar expressions. These statements are made pursuant to the safe harbor provisions of the Private Securities Litigation Reform Act of 1995. Forward-looking statements inherently involve risks and uncertainties that could cause actual results to differ materially from the forward-looking statements. Factors that would cause or contribute to such differences include, but are not limited to, the Company’s inability to increase sales to current customers and to expand its customer base, continued acceptance of the Company’s products in the marketplace, the Company’s inability to improve the gross margin on its products, competitive factors, dependence upon third party vendors, outcome of litigation, and other risks detailed in the Company’s periodic report filings with the Securities and Exchange Commission. By making these forward-looking statements, the Company undertakes no obligation to update these statements for revisions or changes after the date of this presentation.

Agenda - F5402E training

- Product introduction
- Product feature set
- Architecture
- Supported configurations
- Best practices for configuration
- Performance
- StorView demonstration
- Techsupport Viewer
- StorView Path Manager - MPIO
- Snapshot
- RAID 6

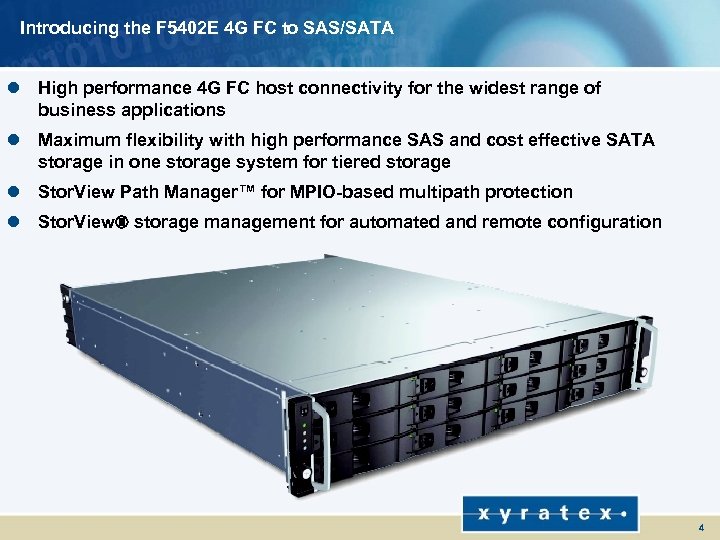

Introducing the F5402E 4G FC to SAS/SATA

- High performance 4G FC host connectivity for the widest range of business applications
- Maximum flexibility with high performance SAS and cost effective SATA storage in one storage system for tiered storage
- StorView Path Manager™ for MPIO-based multipath protection
- StorView® storage management for automated and remote configuration

F5402E 4G FC to SAS/SATA Main Features

- Performance and Throughput
  - 4G, 2G FC host connections/controller
  - 3G SAS & 3G SATA-II drive connections
  - Intel IOP 331 800 MHz (Lindsay)
  - Dedicated cache coherency channels
  - Up to 16 3G or 1.5G devices per array
  - 512 MB and 1 GB cache size/controller
  - Volume level cache settings
    - Read ahead (on/off)
    - Adaptive read ahead
    - Write-back/write through
    - Maximum cache allocated
  - Configurable strip size (64K and 256K)
  - Up to 16 MB I/O
- RAS
  - Dual-active HA configurations
  - Single stand-alone configurations
  - StorView Path Manager™ for MPIO-based multipathing
  - Hot sparing
  - Redundant power supplies/fans
  - Dynamic array expansion
- Certifications
  - RoHS compliant
  - UL, EC, Xyratex Eco Guidelines

F5402E 4G FC to SAS/SATA Main Features

- Management Abilities
  - StorView™ out-of-band/in-band
  - Served HTML GUI
  - Full function CLI
  - SNMP, e-mail alerts, paging
  - Outboard SMI-S CIMOM (subsequent release)
- System Capabilities
  - RAID 0, 1, 5, 10, 50, 6
  - Concatenated LUNs (x16)
  - 256 hosts/512 LUNs per host
  - Up to 60 drives
  - 512 logical drives
  - 512 byte sector support
  - Battery backup, removable
- LUN Management
  - LUN masking
  - Instant LUN availability (background initialization)
  - Supports > 2 TB
- Statistics
  - Host IOPS/bandwidth
  - Volume access patterns
  - Cache hit rates

RS-1220 Chassis

- Integrated packaging expertise (Havant, UK and Lake Mary, FL)
  - High-density drive packaging
  - Revolutionary airflow for maintained temperature control
- 5th Generation Enclosure Design
  - 2 EIA U (1.75 inch) high, total is 3.47 inches
  - 19 inch IEC rack compliant
  - Slide mounted
- Sheet metal riveted/projection welded construction
  - Rigid structure
- Integrated midplane (chassis level FRU)
- Moulded drive carrier runners
  - Die cast insert for rigidity
  - Integrated drive guidance features

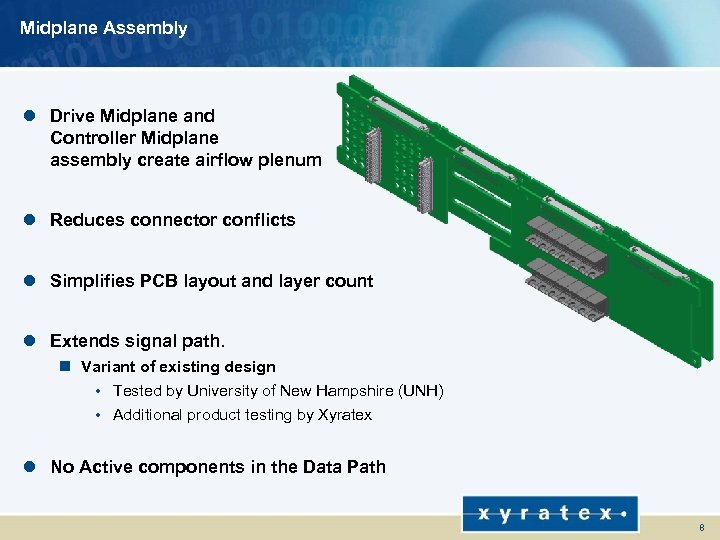

Midplane Assembly

- Drive midplane and controller midplane assembly create airflow plenum
- Reduces connector conflicts
- Simplifies PCB layout and layer count
- Extends signal path
  - Variant of existing design
    - Tested by University of New Hampshire (UNH)
    - Additional product testing by Xyratex
- No active components in the data path

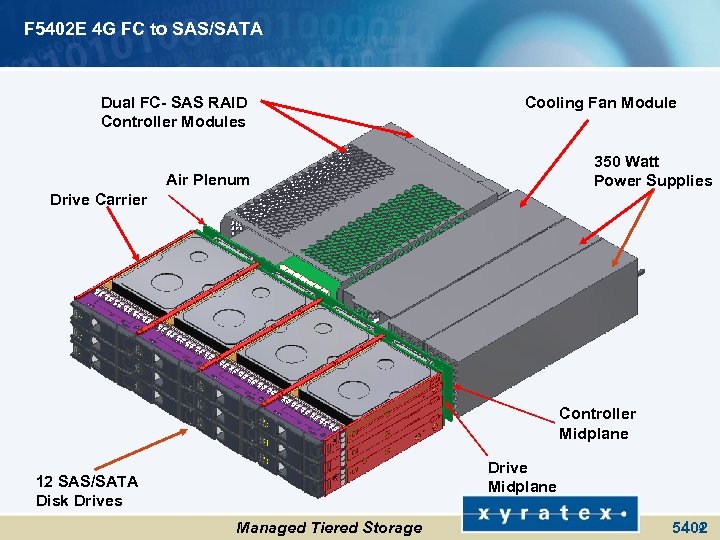

F5402E 4G FC to SAS/SATA

Figure labels: dual FC-SAS RAID controller modules, cooling fan module, 350 Watt power supplies, air plenum, drive carrier, controller midplane, drive midplane, 12 SAS/SATA disk drives, managed tiered storage.

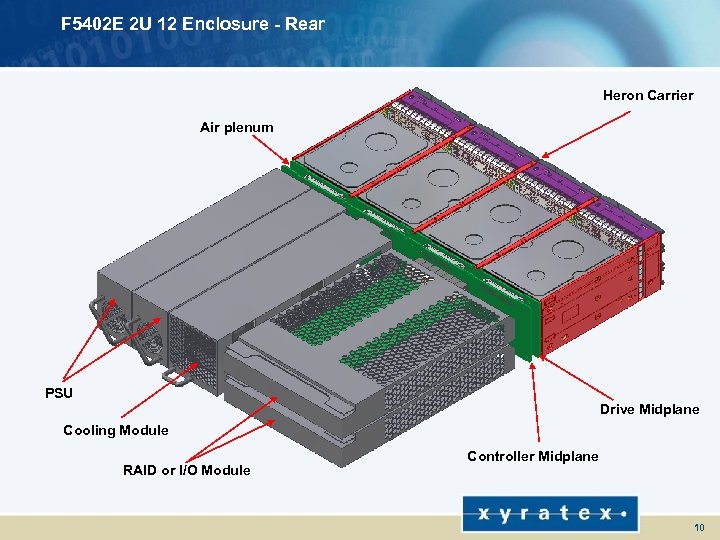

F5402E 2U12 Enclosure - Rear

Figure labels: Heron carrier, air plenum, PSU, drive midplane, cooling module, RAID or I/O module, controller midplane.

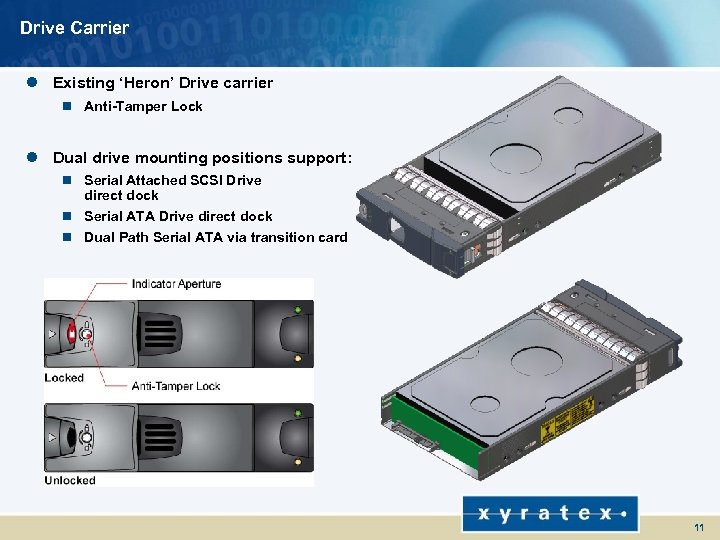

Drive Carrier

- Existing ‘Heron’ drive carrier
  - Anti-tamper lock
- Dual drive mounting positions support:
  - Serial Attached SCSI drive direct dock
  - Serial ATA drive direct dock
  - Dual path Serial ATA via transition card

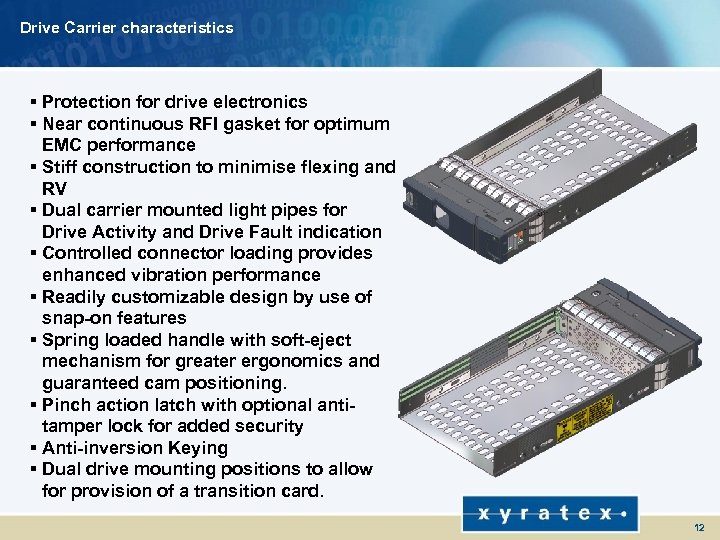

Drive Carrier characteristics

- Protection for drive electronics
- Near continuous RFI gasket for optimum EMC performance
- Stiff construction to minimise flexing and RV
- Dual carrier mounted light pipes for Drive Activity and Drive Fault indication
- Controlled connector loading provides enhanced vibration performance
- Readily customizable design by use of snap-on features
- Spring loaded handle with soft-eject mechanism for greater ergonomics and guaranteed cam positioning
- Pinch action latch with optional anti-tamper lock for added security
- Anti-inversion keying
- Dual drive mounting positions to allow for provision of a transition card

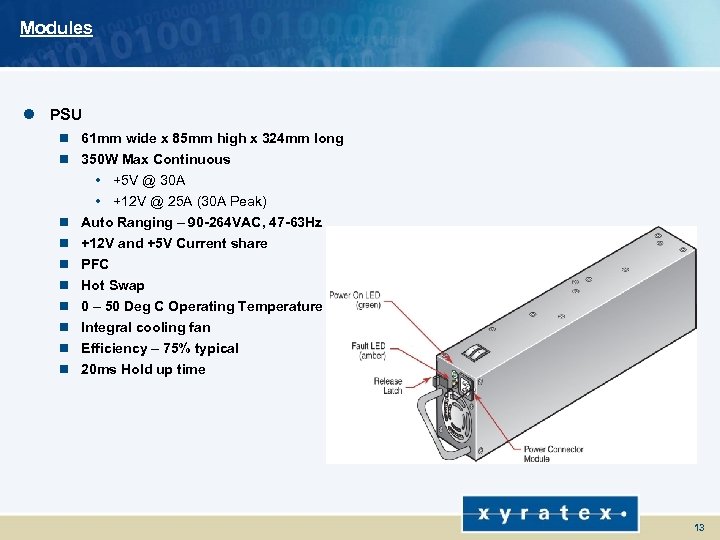

Modules

- PSU
  - 61 mm wide x 85 mm high x 324 mm long
  - 350 W max continuous
    - +5 V @ 30 A
    - +12 V @ 25 A (30 A peak)
  - Auto ranging: 90-264 VAC, 47-63 Hz
  - +12 V and +5 V current share
  - PFC
  - Hot swap
  - 0-50 °C operating temperature
  - Integral cooling fan
  - Efficiency: 75% typical
  - 20 ms hold-up time
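The rail ratings above can be cross-checked with a little arithmetic. The sketch below is illustrative only and is not part of the original slides. It shows that the two rails cannot both run at their continuous maximum within the 350 W rating, and it estimates AC input power on the assumption that the typical 75% efficiency applies at full load. All variable names are hypothetical.

```python
# Figures taken from the PSU slide; names are illustrative only.
MAX_CONTINUOUS_W = 350.0
RAIL_5V_W = 5.0 * 30.0    # +5 V @ 30 A
RAIL_12V_W = 12.0 * 25.0  # +12 V @ 25 A continuous
TYPICAL_EFFICIENCY = 0.75

rails_total_w = RAIL_5V_W + RAIL_12V_W
# The sum of the per-rail maxima exceeds the combined continuous rating,
# so the 350 W figure is the binding limit on total load.
exceeds_combined_rating = rails_total_w > MAX_CONTINUOUS_W

# Approximate AC input power at full continuous load, assuming the
# typical efficiency applies at that load point (an assumption).
input_power_w = MAX_CONTINUOUS_W / TYPICAL_EFFICIENCY

print(rails_total_w, exceeds_combined_rating, round(input_power_w, 1))
```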

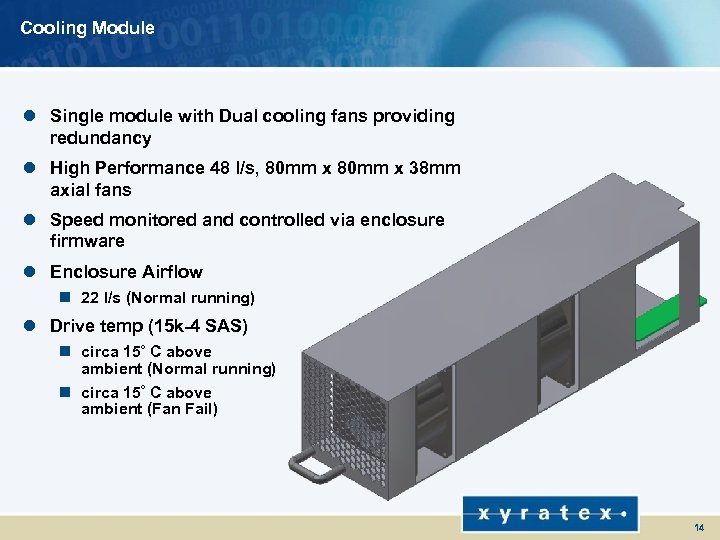

Cooling Module

- Single module with dual cooling fans providing redundancy
- High performance 48 l/s, 80 mm x 38 mm axial fans
- Speed monitored and controlled via enclosure firmware
- Enclosure airflow
  - 22 l/s (normal running)
- Drive temp (15k-4 SAS)
  - circa 15 °C above ambient (normal running)
  - circa 15 °C above ambient (fan fail)

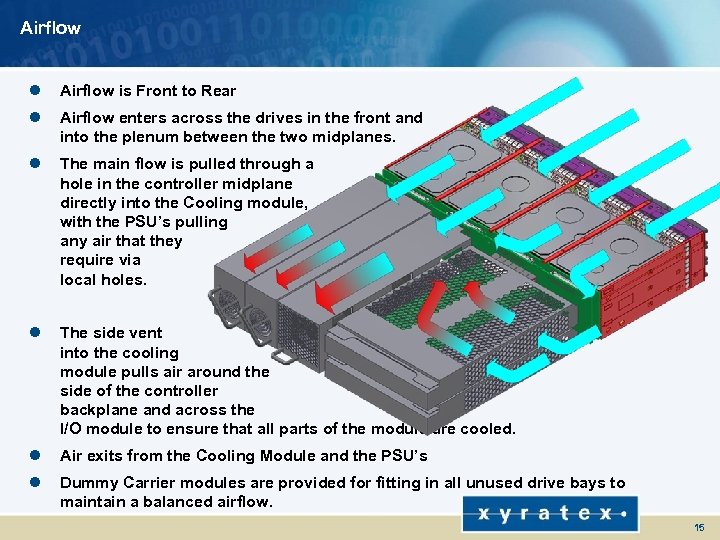

Airflow

- Airflow is front to rear.
- Air enters across the drives at the front and passes into the plenum between the two midplanes.
- The main flow is pulled through a hole in the controller midplane directly into the cooling module, with the PSUs pulling any air that they require via local holes.
- The side vent into the cooling module pulls air around the side of the controller backplane and across the I/O module to ensure that all parts of the module are cooled.
- Air exits from the cooling module and the PSUs.
- Dummy carrier modules are provided for fitting in all unused drive bays to maintain a balanced airflow.

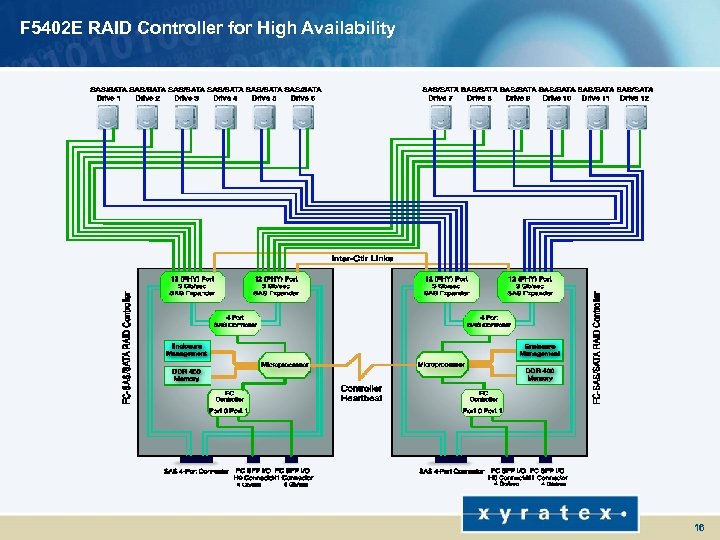

F5402E RAID Controller for High Availability

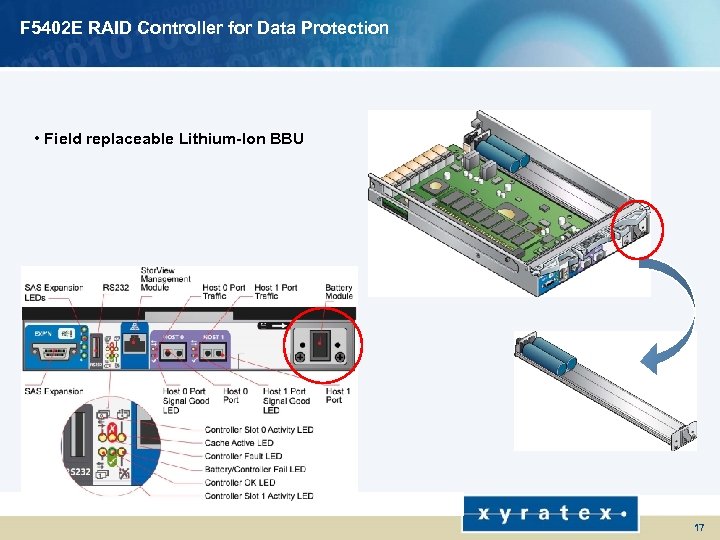

F5402E RAID Controller for Data Protection

- Field replaceable Lithium-Ion BBU

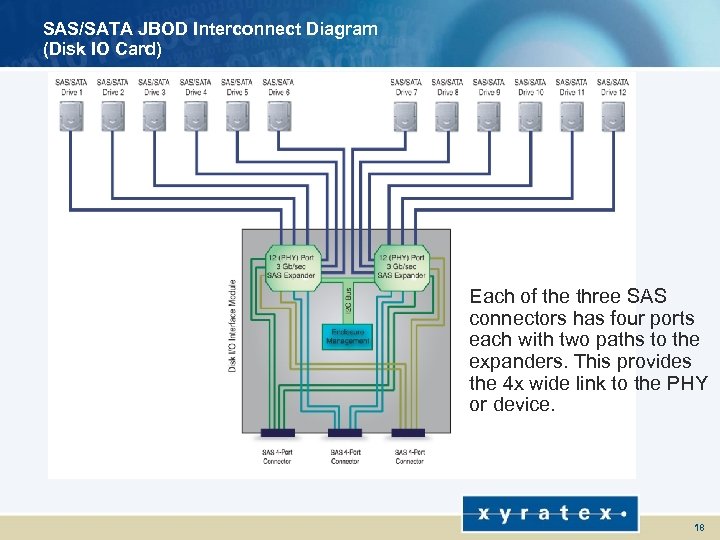

SAS/SATA JBOD Interconnect Diagram (Disk I/O Card)

Each of the three SAS connectors has four ports, each with two paths to the expanders. This provides the 4x wide link to the PHY or device.

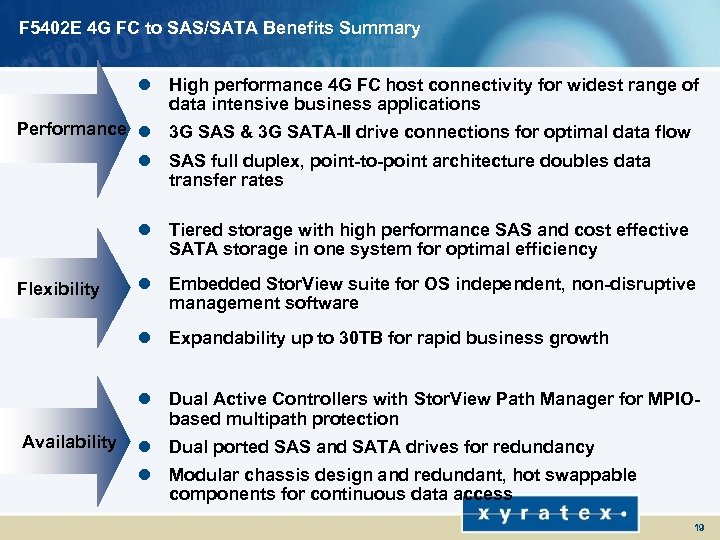

F5402E 4G FC to SAS/SATA Benefits Summary

- Performance
  - High performance 4G FC host connectivity for widest range of data intensive business applications
  - 3G SAS & 3G SATA-II drive connections for optimal data flow
  - SAS full duplex, point-to-point architecture doubles data transfer rates
- Flexibility
  - Tiered storage with high performance SAS and cost effective SATA storage in one system for optimal efficiency
  - Embedded StorView suite for OS independent, non-disruptive management software
  - Expandability up to 30 TB for rapid business growth
- Availability
  - Dual active controllers with StorView Path Manager for MPIO-based multipath protection
  - Dual ported SAS and SATA drives for redundancy
  - Modular chassis design and redundant, hot swappable components for continuous data access

F5402E Configurations

- F5402E Simplex Mode
  - Single host, single HBA
  - Dual host, single HBA
- F5402E Duplex Mode
  - Single host, dual HBA
  - Dual host, single HBAs
  - Dual host, dual HBAs
  - SAN attach, single switch
  - SAN attach, dual switches
- Daisy-chaining for expansion

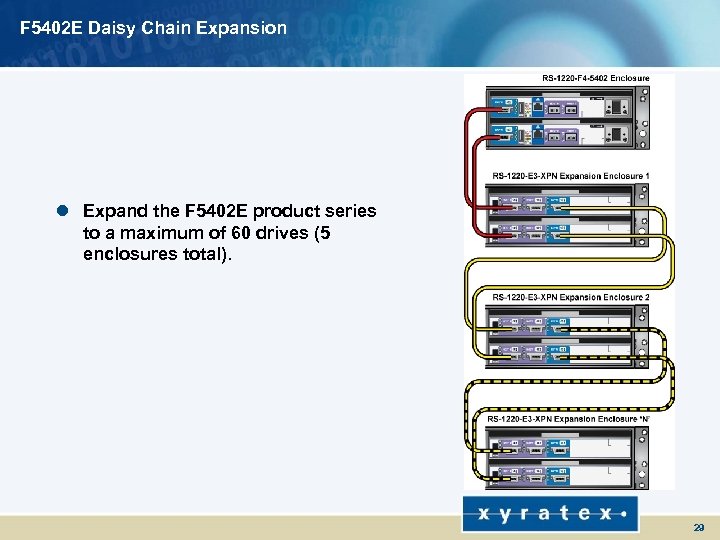

F5402E Daisy Chain Expansion

- Expand the F5402E product series to a maximum of 60 drives (5 enclosures total).
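The drive and capacity limits quoted in the deck are consistent with each other, as the short calculation below suggests. It is a sketch rather than a statement from the slides: the 500 GB drive size is an assumption taken from the SATA drives used in the performance tests, and the capacity is raw (before RAID overhead) in decimal terabytes.

```python
DRIVES_PER_ENCLOSURE = 12
MAX_ENCLOSURES = 5
# Assumed: the 500 GB SATA drives used in the performance tests.
LARGEST_DRIVE_GB = 500

max_drives = DRIVES_PER_ENCLOSURE * MAX_ENCLOSURES
raw_capacity_tb = max_drives * LARGEST_DRIVE_GB / 1000  # decimal TB, raw

print(max_drives, raw_capacity_tb)
```

Under these assumptions the result matches the 60-drive limit and the "up to 30 TB" expandability figure.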

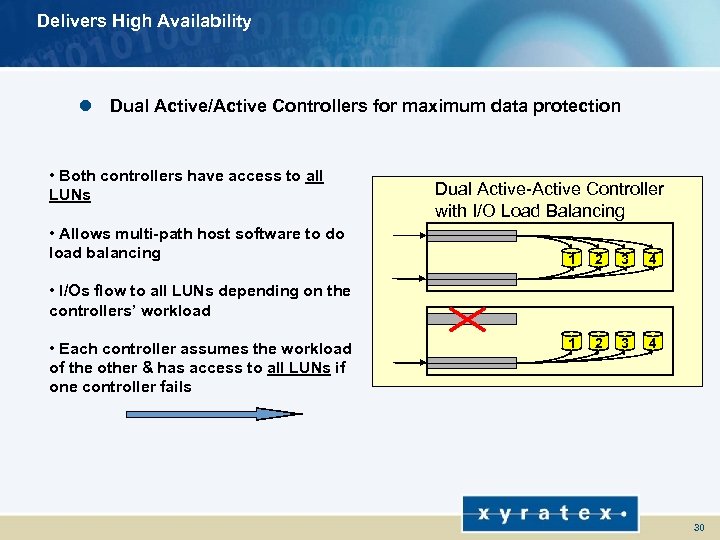

Delivers High Availability

- Dual Active/Active controllers for maximum data protection
  - Both controllers have access to all LUNs
  - Allows multi-path host software to do load balancing

Figure: Dual Active-Active Controller with I/O Load Balancing

- I/Os flow to all LUNs depending on the controllers’ workload
- Each controller assumes the workload of the other and has access to all LUNs if one controller fails

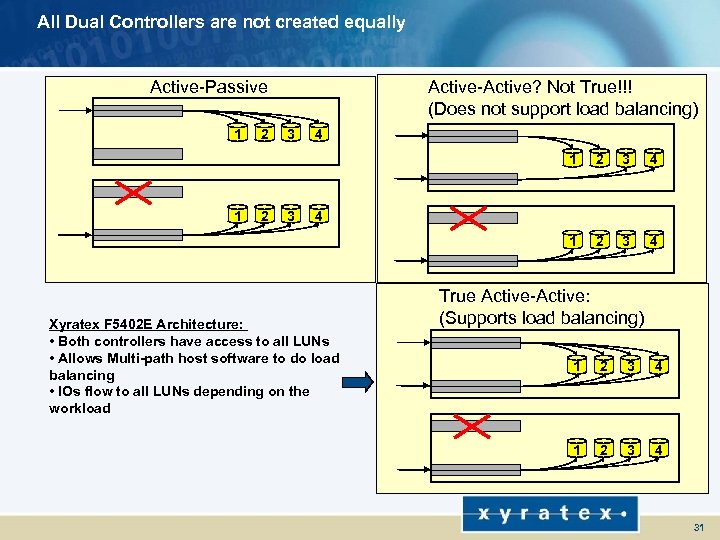

Not all dual controllers are created equal

Figure panels:

- Active-Passive
- Active-Active? Not true! (Does not support load balancing)
- True Active-Active (supports load balancing)

Xyratex F5402E architecture:

- Both controllers have access to all LUNs
- Allows multi-path host software to do load balancing
- I/Os flow to all LUNs depending on the workload

StorView demonstration

Standalone technical support viewer


F5402E 4G FC to SAS/SATA: Best Practices for Configuring the F5402E

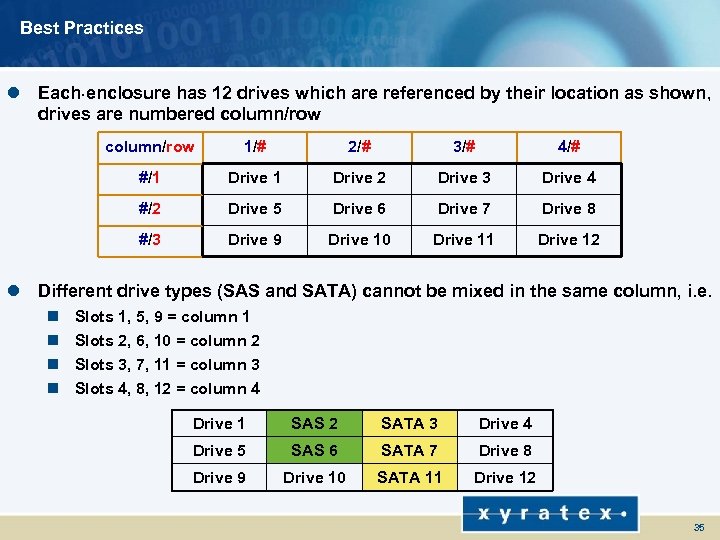

Best Practices

- Each enclosure has 12 drives, which are referenced by their location as shown. Drives are numbered column/row.

|     | 1/#     | 2/#      | 3/#      | 4/#      |
|-----|---------|----------|----------|----------|
| #/1 | Drive 1 | Drive 2  | Drive 3  | Drive 4  |
| #/2 | Drive 5 | Drive 6  | Drive 7  | Drive 8  |
| #/3 | Drive 9 | Drive 10 | Drive 11 | Drive 12 |

- Different drive types (SAS and SATA) cannot be mixed in the same column, i.e.
  - Slots 1, 5, 9 = column 1
  - Slots 2, 6, 10 = column 2
  - Slots 3, 7, 11 = column 3
  - Slots 4, 8, 12 = column 4

Example layout:

| Drive 1 | SAS 2    | SATA 3  | Drive 4  |
|---------|----------|---------|----------|
| Drive 5 | SAS 6    | SATA 7  | Drive 8  |
| Drive 9 | Drive 10 | SATA 11 | Drive 12 |
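The column rule above can be expressed as a small check. The following is a minimal sketch, not a Xyratex tool: the function names and the dictionary-based layout format are hypothetical, and slots are assumed to be numbered 1 to 12 row by row as in the slot map.

```python
from collections import defaultdict

COLUMNS = 4  # the enclosure is 4 columns by 3 rows, slots numbered 1..12


def column_of(slot: int) -> int:
    """Return the column (1-4) of a slot numbered 1-12, row by row."""
    if not 1 <= slot <= 12:
        raise ValueError(f"slot must be 1..12, got {slot}")
    return (slot - 1) % COLUMNS + 1


def mixed_columns(layout: dict[int, str]) -> list[int]:
    """Return the columns that contain more than one drive type.

    `layout` maps populated slot numbers to a drive type such as
    "SAS" or "SATA"; empty slots are simply omitted.
    """
    types_by_column: dict[int, set[str]] = defaultdict(set)
    for slot, drive_type in layout.items():
        types_by_column[column_of(slot)].add(drive_type)
    return sorted(c for c, types in types_by_column.items() if len(types) > 1)


# Layout from the slide: SAS in slots 2 and 6, SATA in slots 3, 7 and 11.
slide_layout = {2: "SAS", 6: "SAS", 3: "SATA", 7: "SATA", 11: "SATA"}
print(mixed_columns(slide_layout))           # []
print(mixed_columns({1: "SAS", 5: "SATA"}))  # [1]
```

An empty result means that no column mixes SAS and SATA drives.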

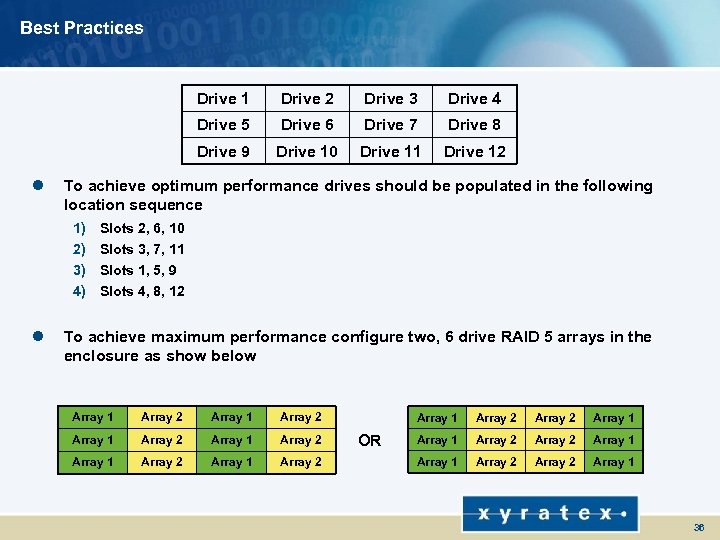

Best Practices

- To achieve optimum performance, drives should be populated in the following location sequence:
  1. Slots 2, 6, 10
  2. Slots 3, 7, 11
  3. Slots 1, 5, 9
  4. Slots 4, 8, 12
- To achieve maximum performance, configure two 6-drive RAID 5 arrays in the enclosure as shown in the figure.

Figure: two alternative slot layouts interleaving Array 1 and Array 2.
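The population sequence above can be turned into a simple lookup. This is a sketch under one stated assumption: within each column the slots are filled in the order they are listed on the slide, which the slide itself does not state. The function name is hypothetical.

```python
# Column fill order recommended on the slide: column 2, 3, 1, then 4.
POPULATION_SEQUENCE = [(2, 6, 10), (3, 7, 11), (1, 5, 9), (4, 8, 12)]


def slots_to_populate(drive_count: int) -> list[int]:
    """Return the slots to fill for `drive_count` drives, in sequence.

    Assumption: within a column, slots are filled in the order listed
    on the slide (top row first); the slide does not state this.
    """
    if not 0 <= drive_count <= 12:
        raise ValueError("an enclosure holds 0..12 drives")
    ordered = [slot for column in POPULATION_SEQUENCE for slot in column]
    return ordered[:drive_count]


print(slots_to_populate(6))  # [2, 6, 10, 3, 7, 11]
```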

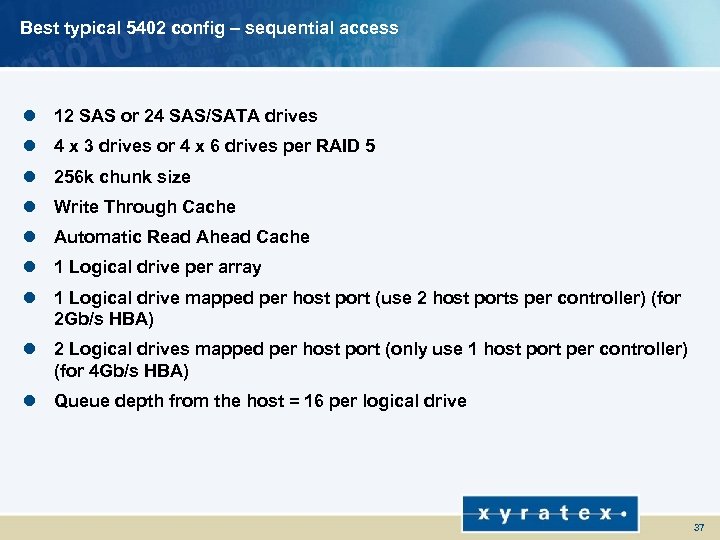

Best typical 5402 config: sequential access

- 12 SAS or 24 SAS/SATA drives
- 4 x 3 drives or 4 x 6 drives per RAID 5
- 256K chunk size
- Write Through Cache
- Automatic Read Ahead Cache
- 1 logical drive per array
- 1 logical drive mapped per host port (use 2 host ports per controller) (for 2 Gb/s HBA)
- 2 logical drives mapped per host port (only use 1 host port per controller) (for 4 Gb/s HBA)
- Queue depth from the host = 16 per logical drive

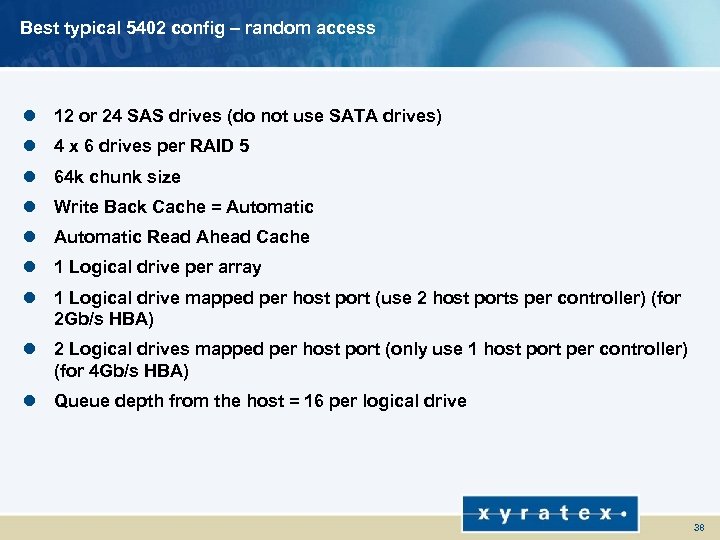

Best typical 5402 config: random access

- 12 or 24 SAS drives (do not use SATA drives)
- 4 x 6 drives per RAID 5
- 64K chunk size
- Write Back Cache = Automatic
- Automatic Read Ahead Cache
- 1 logical drive per array
- 1 logical drive mapped per host port (use 2 host ports per controller) (for 2 Gb/s HBA)
- 2 logical drives mapped per host port (only use 1 host port per controller) (for 4 Gb/s HBA)
- Queue depth from the host = 16 per logical drive
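The host-port recommendations for 2 Gb/s and 4 Gb/s HBAs can be compared with a small calculation. The sketch below assumes a dual-controller system, and the function name is hypothetical. Under that assumption both recommendations expose four logical drives, which is consistent with four arrays carrying one logical drive each.

```python
def host_mapping(hba_gbps: int) -> tuple[int, int]:
    """Return (host ports used per controller, logical drives per port)."""
    if hba_gbps == 2:
        return 2, 1   # spread across both ports of each controller
    if hba_gbps == 4:
        return 1, 2   # one 4 Gb/s port carries two logical drives
    raise ValueError("the slides only cover 2 Gb/s and 4 Gb/s HBAs")


CONTROLLERS = 2          # dual-controller configuration (assumed)
QUEUE_DEPTH_PER_LD = 16  # from the slide

for speed in (2, 4):
    ports, lds_per_port = host_mapping(speed)
    logical_drives = CONTROLLERS * ports * lds_per_port
    outstanding = logical_drives * QUEUE_DEPTH_PER_LD
    print(speed, logical_drives, outstanding)
```

In both cases the host keeps up to 64 I/Os outstanding in total (4 logical drives at a queue depth of 16).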

SAS dual controller performance

SAS dual controller performance

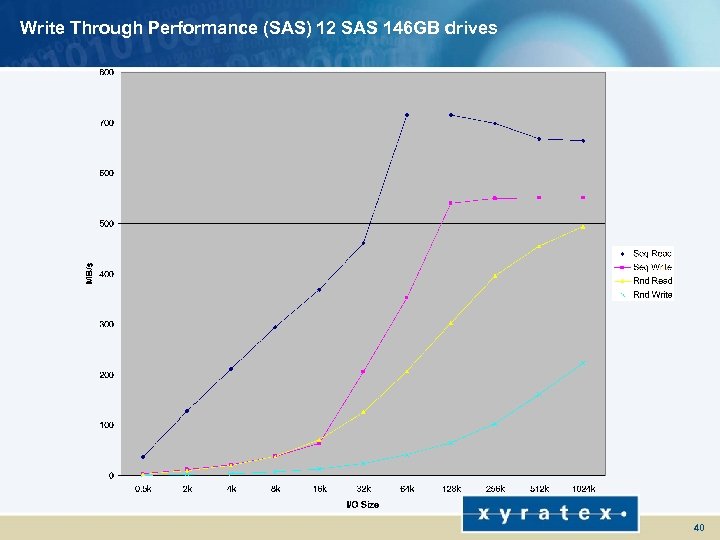

Write Through Performance (SAS) 12 SAS 146 GB drives 40

Write Through Performance (SAS) 12 SAS 146 GB drives 40

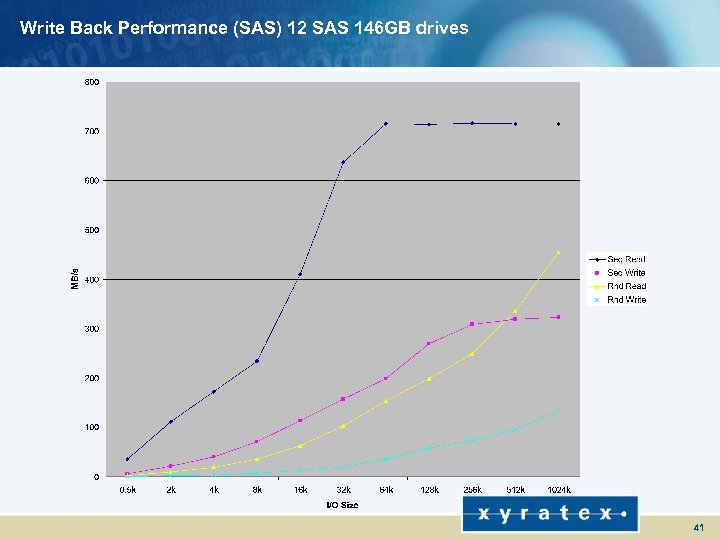

Write Back Performance (SAS) 12 SAS 146 GB drives 41

Write Back Performance (SAS) 12 SAS 146 GB drives 41

SATA dual controller performance

SATA dual controller performance

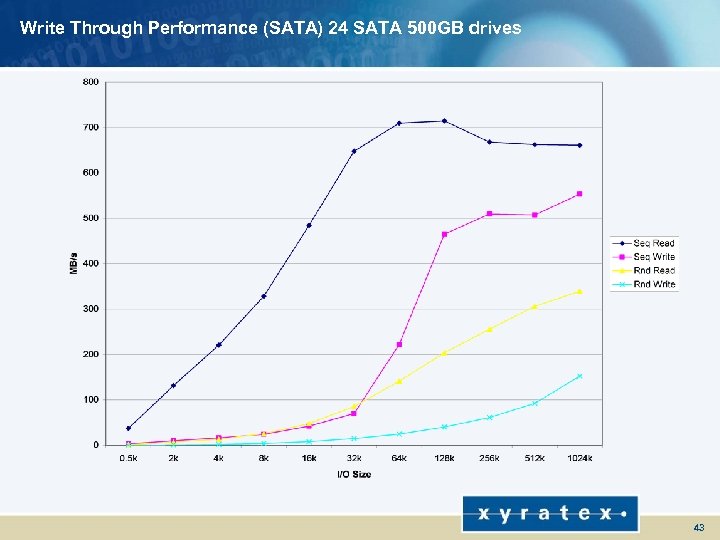

Write Through Performance (SATA) 24 SATA 500 GB drives 43

Write Through Performance (SATA) 24 SATA 500 GB drives 43

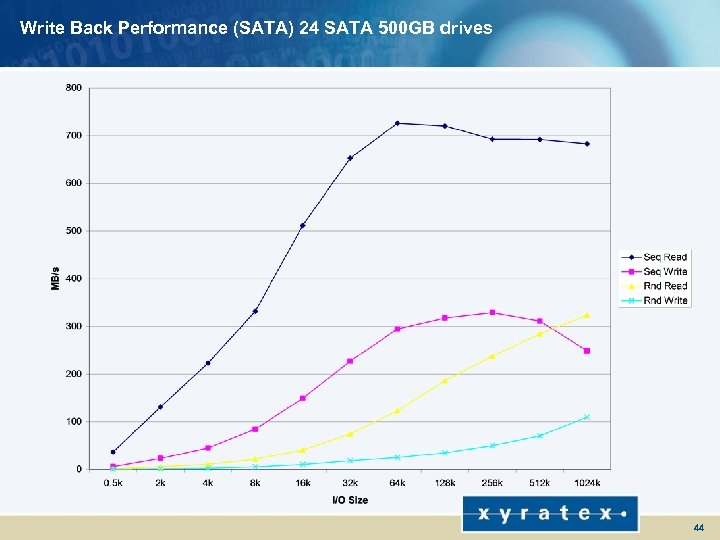

Write Back Performance (SATA) 24 SATA 500 GB drives 44

Write Back Performance (SATA) 24 SATA 500 GB drives 44

Queue depth SATA performance

Queue depth SATA performance

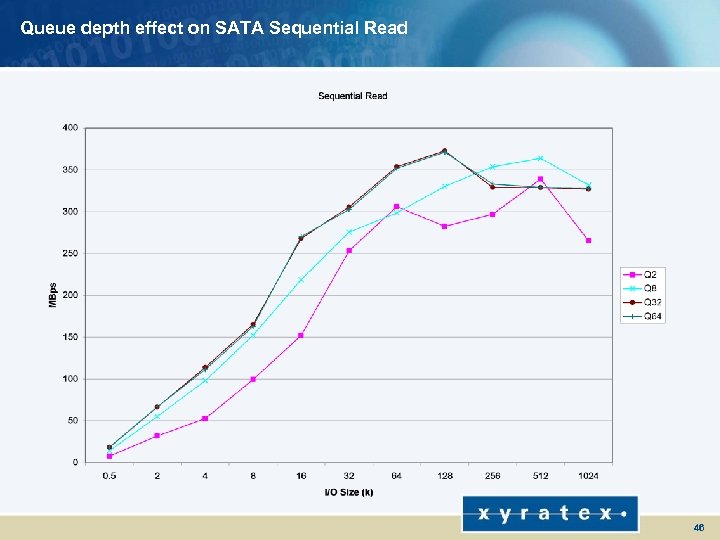

Queue depth effect on SATA Sequential Read 46

Queue depth effect on SATA Sequential Read 46

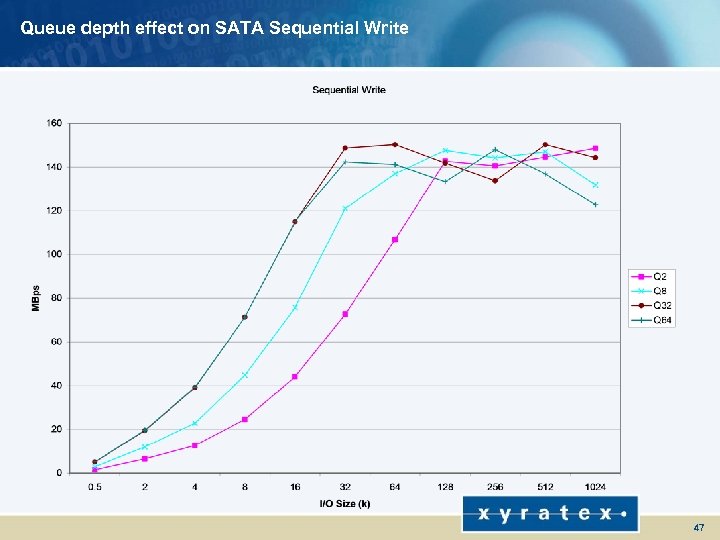

Queue depth effect on SATA Sequential Write 47

Queue depth effect on SATA Sequential Write 47

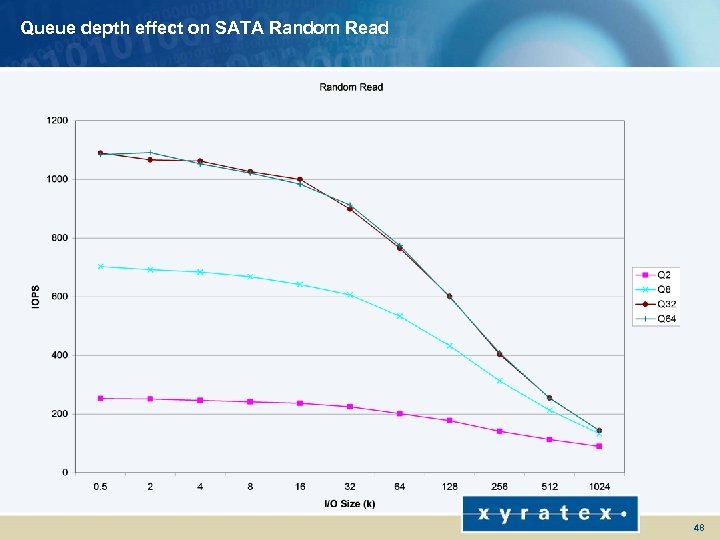

Queue depth effect on SATA Random Read 48

Queue depth effect on SATA Random Read 48

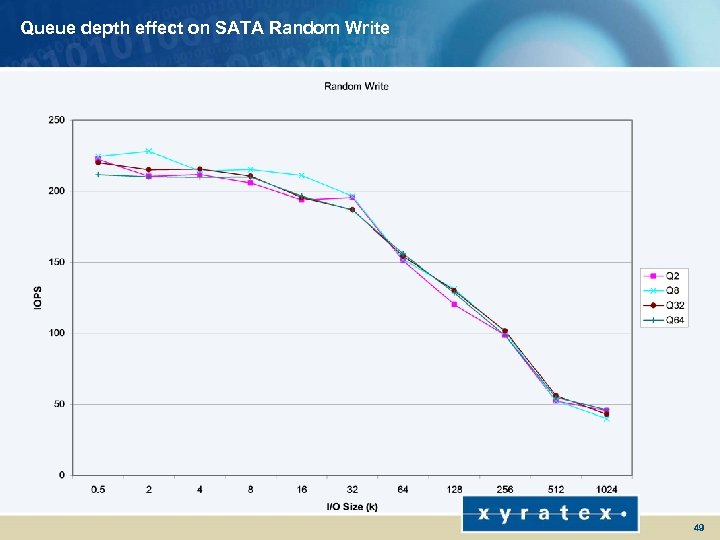

Queue depth effect on SATA Random Write 49

Queue depth effect on SATA Random Write 49

Queue depth SAS performance

Queue depth SAS performance

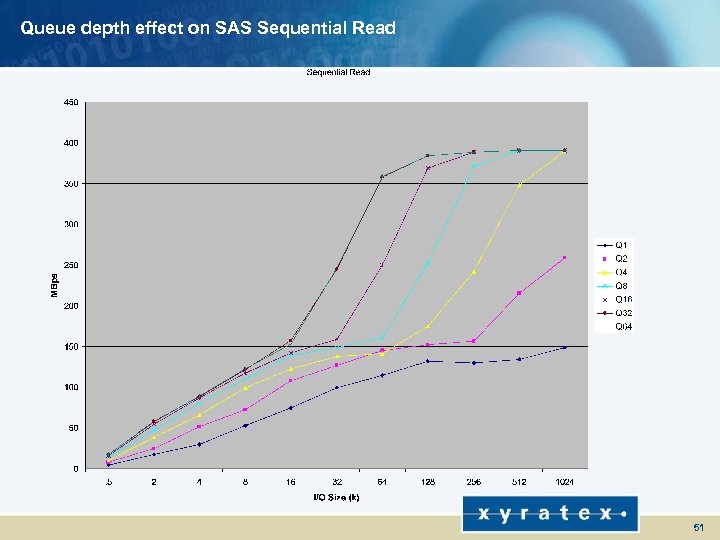

Queue depth effect on SAS Sequential Read 51

Queue depth effect on SAS Sequential Read 51

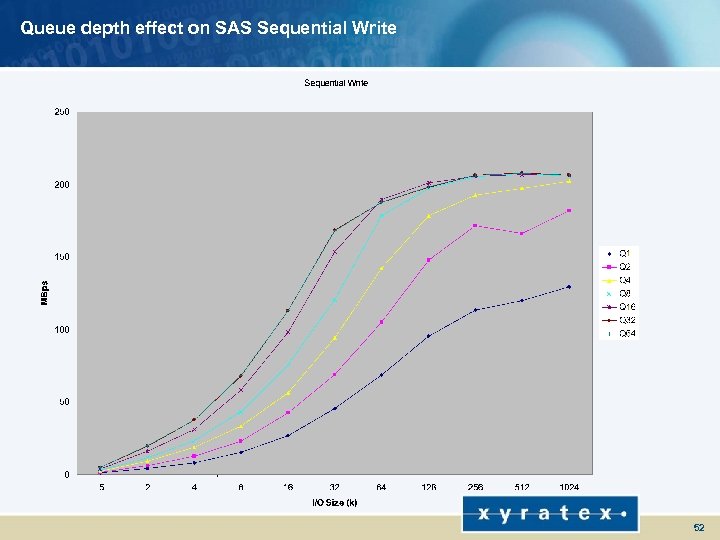

Queue depth effect on SAS Sequential Write 52

Queue depth effect on SAS Sequential Write 52

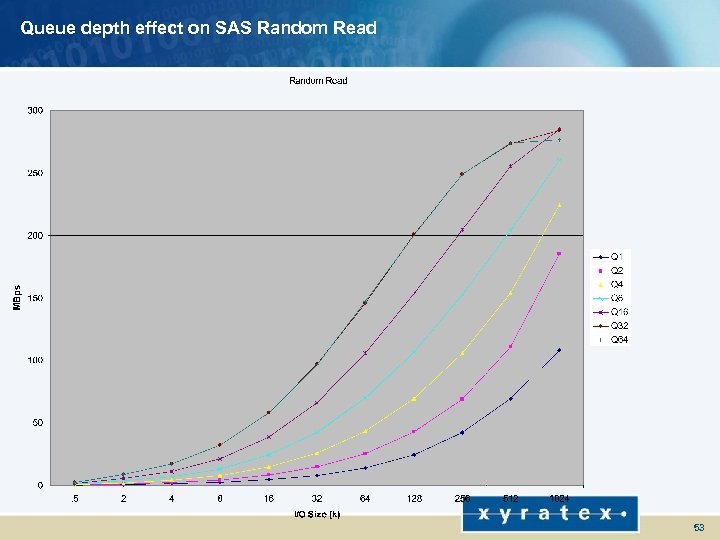

Queue depth effect on SAS Random Read 53

Queue depth effect on SAS Random Read 53

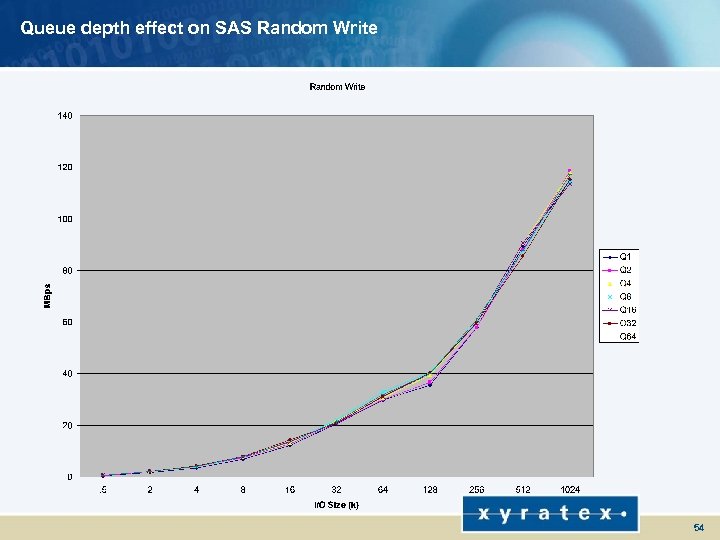

Queue depth effect on SAS Random Write 54

Queue depth effect on SAS Random Write 54

Single controller SAS performance

Single controller SAS performance

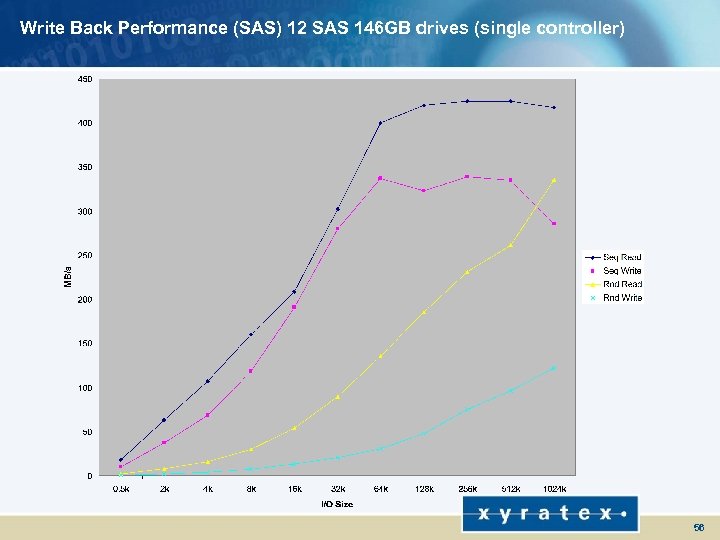

Write Back Performance (SAS) 12 SAS 146 GB drives (single controller) 56

Write Back Performance (SAS) 12 SAS 146 GB drives (single controller) 56

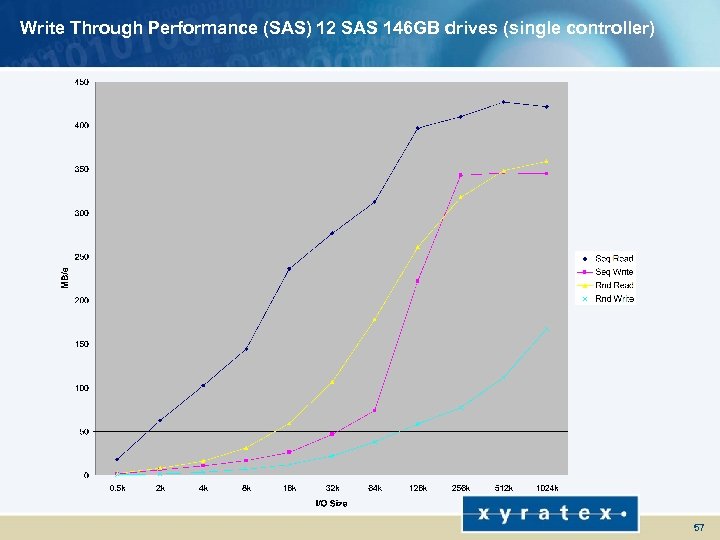

Write Through Performance (SAS) 12 SAS 146 GB drives (single controller) 57

Write Through Performance (SAS) 12 SAS 146 GB drives (single controller) 57

Factors which affect performance

- Queue depth
- Chunk size
- WB vs. WT cache
- FS write statistics
- Read Ahead Cache statistics
- Multiple LDs per array

StorView Path Manager - MPIO

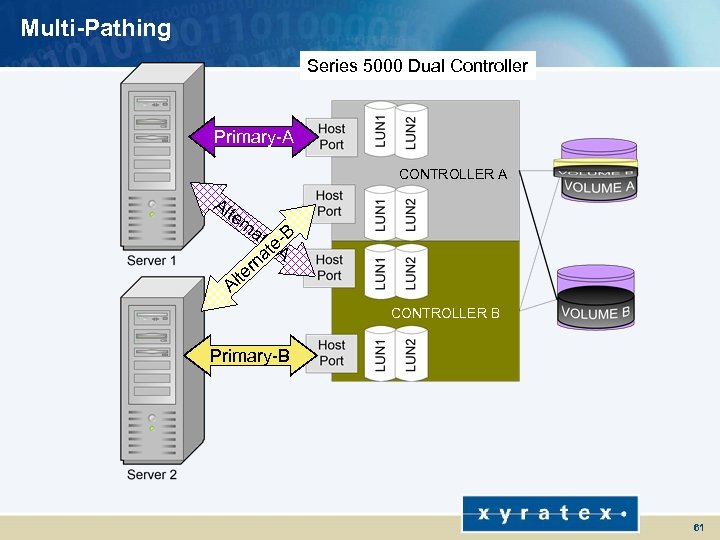

Multi-Pathing

- Increases availability to critical data and databases
- Protection from
  - Connection failures
  - Cable failures
  - Switch failures
  - HBA failures
  - Controller failures

Multi-Pathing: Series 5000 Dual Controller

Figure labels: Primary-A, Controller A, Alternate-B, Alternate-A, Controller B, Primary-B.

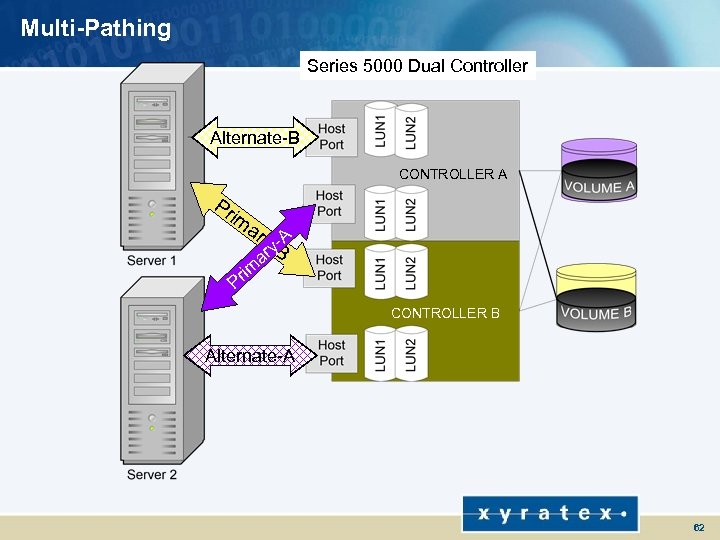

Multi-Pathing: Series 5000 Dual Controller

Figure labels: Alternate-B, Controller A, Primary-A, Primary-B, Controller B, Alternate-A.

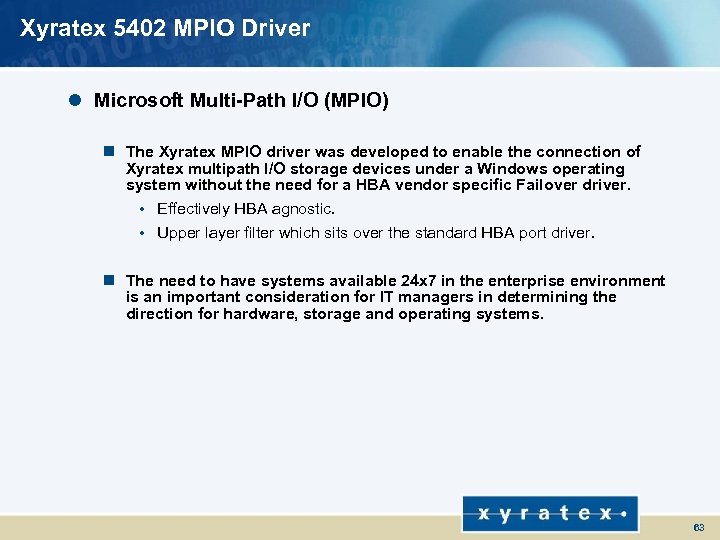

Xyratex 5402 MPIO Driver

- Microsoft Multi-Path I/O (MPIO)
  - The Xyratex MPIO driver was developed to enable the connection of Xyratex multipath I/O storage devices under a Windows operating system without the need for an HBA vendor-specific failover driver.
    - Effectively HBA agnostic.
    - Upper layer filter which sits over the standard HBA port driver.
  - The need to have systems available 24x7 in the enterprise environment is an important consideration for IT managers in determining the direction for hardware, storage and operating systems.

Xyratex MPIO Driver

The default MPIO behaviour requires no user intervention for standard operation. Several options are available and are described later.

The operating system automatically scans for all paths to the same LUN. The LUN is identified via SCSI Inquiry Page 0x80 or 0x83:

- SCSI Inquiry Page 0x80 is the Serial Number Page
- SCSI Inquiry Page 0x83 is the Device Identification Page

Because of this, the MPIO driver must look for the same SCSI Inquiry string as in the 5402 firmware.
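The idea that several paths resolve to one LUN through a shared identifier can be sketched as follows. This is not the Xyratex driver and it issues no SCSI commands. The `Path` type, its field names, and the identifier strings are all hypothetical stand-ins for the data a host would read from Inquiry page 0x83 or 0x80.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    hba: str          # hypothetical initiator name, e.g. "hba0"
    controller: str   # hypothetical target name, e.g. "A"
    lun: int
    device_id: str    # identifier as reported by Inquiry page 0x83 (or 0x80)


def group_paths(paths: list[Path]) -> dict[str, list[Path]]:
    """Group discovered paths by reported identifier.

    Paths that report the same identifier are treated as routes to the
    same logical unit.
    """
    groups: dict[str, list[Path]] = defaultdict(list)
    for path in paths:
        groups[path.device_id].append(path)
    return dict(groups)


discovered = [
    Path("hba0", "A", 0, "ID-0001"),
    Path("hba1", "B", 0, "ID-0001"),
    Path("hba0", "A", 1, "ID-0002"),
]
for device_id, routes in group_paths(discovered).items():
    print(device_id, len(routes))
```

Here the first two entries report the same identifier, so they are grouped as two paths to one logical unit.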

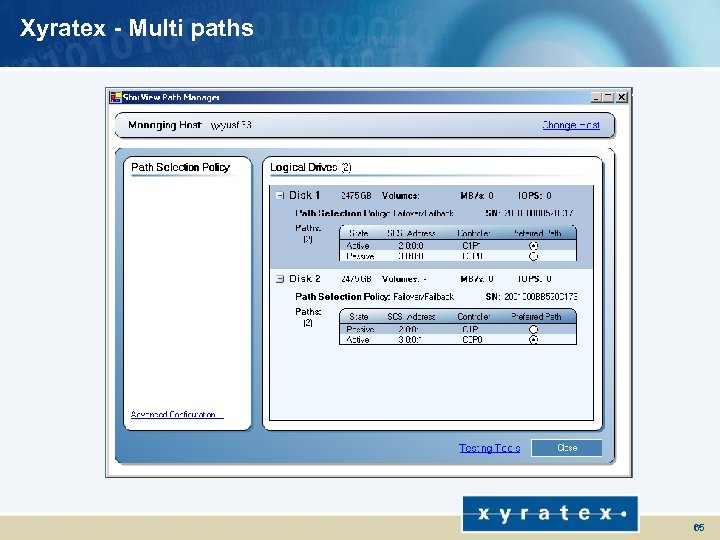

Xyratex - Multi paths

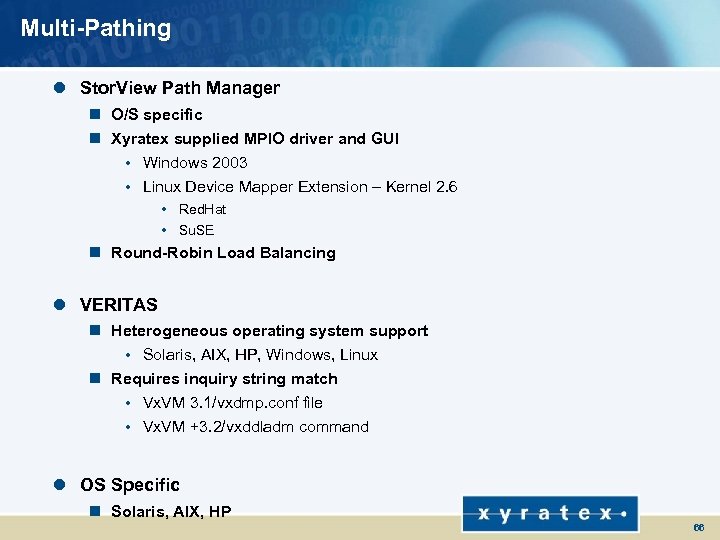

Multi-Pathing

- StorView Path Manager
  - O/S specific
  - Xyratex supplied MPIO driver and GUI
    - Windows 2003
    - Linux Device Mapper Extension, Kernel 2.6
    - Red Hat
    - SuSE
  - Round-robin load balancing
- VERITAS
  - Heterogeneous operating system support
    - Solaris, AIX, HP, Windows, Linux
  - Requires inquiry string match
    - VxVM 3.1/vxdmp.conf file
    - VxVM +3.2/vxddladm command
- OS Specific
  - Solaris, AIX, HP
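Round-robin load balancing with failover, as listed for StorView Path Manager, can be illustrated with a small selector. This is a generic sketch of the technique and not the product's implementation. The class name and the path labels are hypothetical.

```python
class RoundRobinPaths:
    """Rotate I/O across healthy paths and skip failed ones."""

    def __init__(self, paths: list[str]) -> None:
        if not paths:
            raise ValueError("at least one path is required")
        self._paths = list(paths)
        self._failed: set[str] = set()
        self._next = 0

    def mark_failed(self, path: str) -> None:
        self._failed.add(path)

    def mark_restored(self, path: str) -> None:
        self._failed.discard(path)

    def next_path(self) -> str:
        """Return the next healthy path in rotation."""
        for _ in range(len(self._paths)):
            candidate = self._paths[self._next]
            self._next = (self._next + 1) % len(self._paths)
            if candidate not in self._failed:
                return candidate
        raise RuntimeError("no healthy path available")


selector = RoundRobinPaths(["Primary-A", "Primary-B"])
print([selector.next_path() for _ in range(4)])
selector.mark_failed("Primary-A")
print([selector.next_path() for _ in range(2)])
```

While both paths are healthy the selector alternates between them. After one path is marked failed, all I/O is directed to the remaining path.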

Snapshot


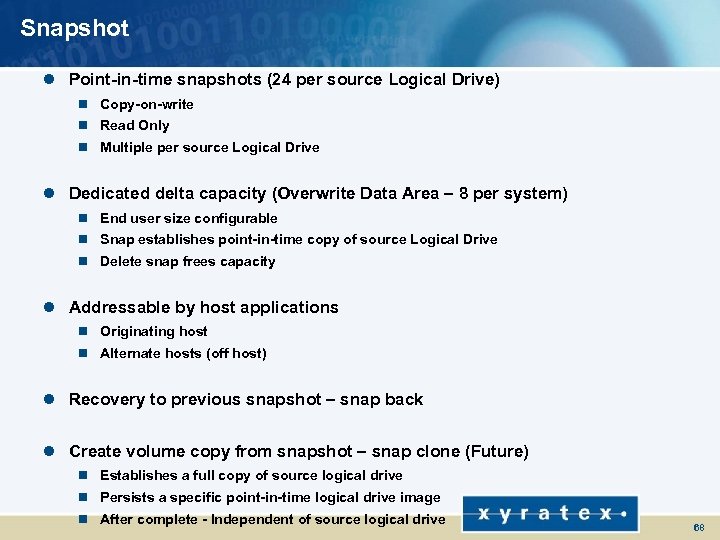

Snapshot

- Point-in-time snapshots (24 per source Logical Drive)
  - Copy-on-write
  - Read only
  - Multiple per source Logical Drive
- Dedicated delta capacity (Overwrite Data Area, 8 per system)
  - End user size configurable
  - Snap establishes point-in-time copy of source Logical Drive
  - Delete snap frees capacity
- Addressable by host applications
  - Originating host
  - Alternate hosts (off host)
- Recovery to previous snapshot: snap back
- Create volume copy from snapshot: snap clone (future)
  - Establishes a full copy of source logical drive
  - Persists a specific point-in-time logical drive image
  - After completion, independent of source logical drive

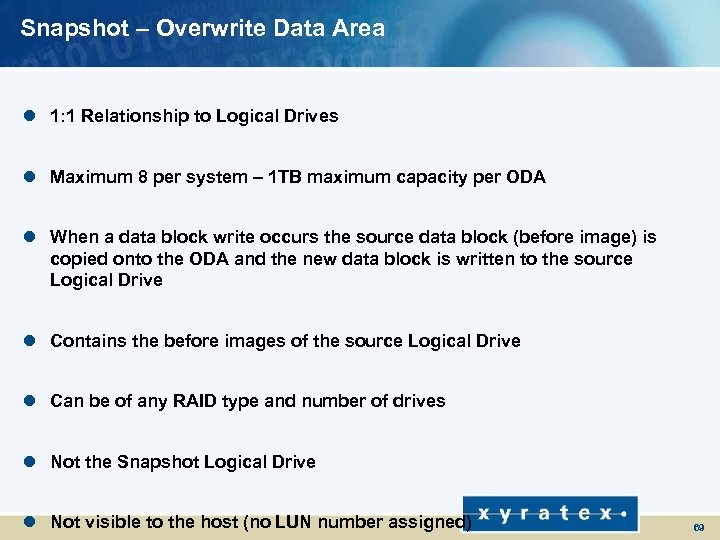

Snapshot: Overwrite Data Area

- 1:1 relationship to Logical Drives
- Maximum 8 per system, 1 TB maximum capacity per ODA
- When a data block write occurs, the source data block (before image) is copied onto the ODA and the new data block is written to the source Logical Drive
- Contains the before images of the source Logical Drive
- Can be of any RAID type and number of drives
- Not the Snapshot Logical Drive
- Not visible to the host (no LUN number assigned)
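The copy-on-write behaviour described above can be modelled in a few lines. This is a simplified sketch and not the controller firmware. The class and method names are hypothetical, blocks are held in memory, and each snapshot keeps its own before-image map, which a real implementation may organise differently.

```python
class CopyOnWriteVolume:
    """Source logical drive with one ODA and read-only snapshots."""

    def __init__(self, blocks: list[bytes]) -> None:
        self.source = list(blocks)
        # One before-image map per snapshot: block index -> old data.
        self.oda: list[dict[int, bytes]] = []

    def take_snapshot(self) -> int:
        """Start a new snapshot; no data is copied at this point."""
        self.oda.append({})
        return len(self.oda) - 1

    def write(self, index: int, data: bytes) -> None:
        """Save the before image for snapshots that still need it, then write."""
        for before_images in self.oda:
            if index not in before_images:
                before_images[index] = self.source[index]
        self.source[index] = data

    def read_snapshot(self, snap_id: int, index: int) -> bytes:
        """Read from the ODA if the block changed, else from the source."""
        return self.oda[snap_id].get(index, self.source[index])


vol = CopyOnWriteVolume([b"a", b"b", b"c"])
snap = vol.take_snapshot()
vol.write(1, b"B")
print(vol.source[1], vol.read_snapshot(snap, 1), vol.read_snapshot(snap, 0))
```

The snapshot view is a set of pointers: unchanged blocks are read from the source, and changed blocks are read from the saved before images.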

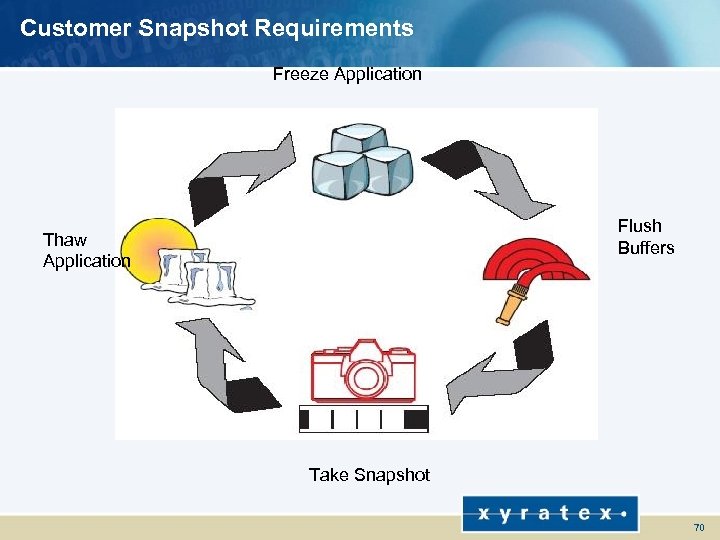

Customer Snapshot Requirements

Figure labels: Freeze Application, Flush Buffers, Thaw Application, Take Snapshot.

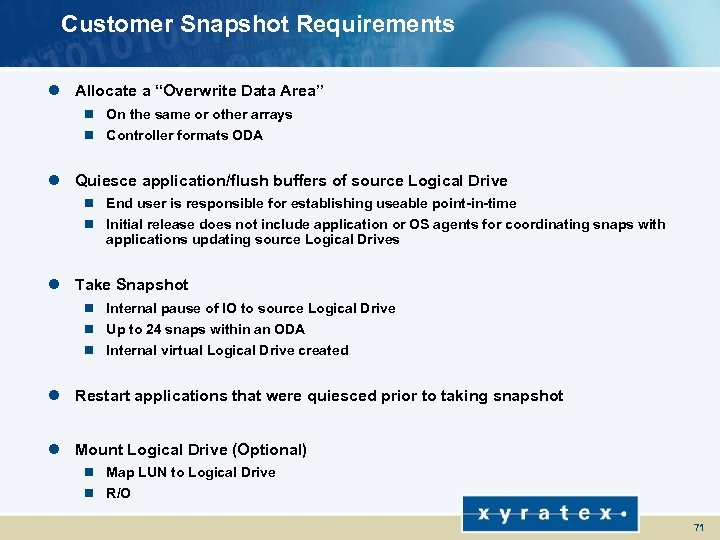

Customer Snapshot Requirements l Allocate a “Overwrite Data Area” n On the same or other arrays n Controller formats ODA l Quiesce application/flush buffers of source Logical Drive n End user is responsible for establishing useable point-in-time n Initial release does not include application or OS agents for coordinating snaps with applications updating source Logical Drives l Take Snapshot n Internal pause of IO to source Logical Drive n Up to 24 snaps within an ODA n Internal virtual Logical Drive created l Restart applications that were quiesced prior to taking snapshot l Mount Logical Drive (Optional) n Map LUN to Logical Drive n R/O 71

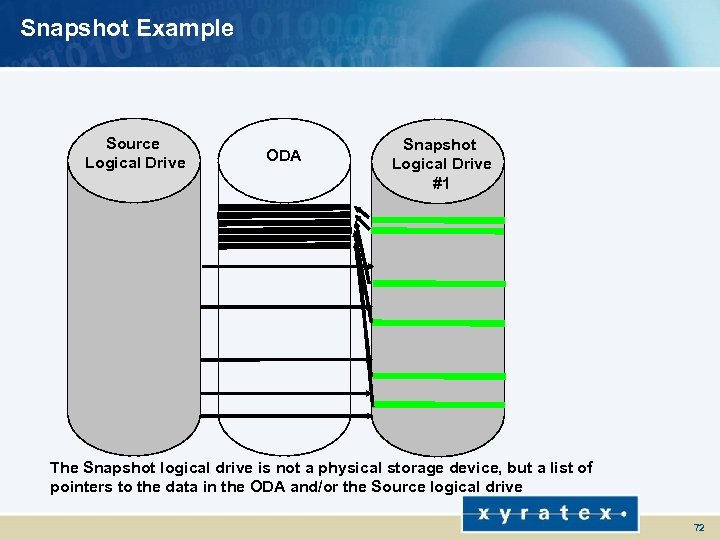
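Because the initial release ships no application or OS agents, the quiesce, snapshot, and restart sequence has to be scripted by the end user. A minimal sketch of that ordering follows. All names here (`quiesced`, `consistent_snapshot`, `Recorder`, and the `freeze`/`flush_buffers`/`thaw`/`take_snapshot` methods) are hypothetical stand-ins, not a real array or application API.

```python
from contextlib import contextmanager


@contextmanager
def quiesced(app):
    """Freeze the application and flush its buffers; always thaw afterwards."""
    app.freeze()
    try:
        app.flush_buffers()
        yield
    finally:
        app.thaw()  # restart the application even if the snapshot fails


def consistent_snapshot(app, array, logical_drive):
    # The snapshot is taken only while the application is quiesced.
    with quiesced(app):
        return array.take_snapshot(logical_drive)


class Recorder:
    """Hypothetical stand-in for both application and array; logs call order."""

    def __init__(self):
        self.calls = []

    def freeze(self):
        self.calls.append("freeze")

    def flush_buffers(self):
        self.calls.append("flush")

    def thaw(self):
        self.calls.append("thaw")

    def take_snapshot(self, logical_drive):
        self.calls.append("snapshot")
        return f"snap-of-{logical_drive}"


rec = Recorder()
snap = consistent_snapshot(rec, rec, "LD0")
```

Putting the thaw in a `finally` clause is a design choice of this sketch: it keeps the application from staying frozen if the snapshot step raises an error.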

Snapshot Example

Diagram labels: Source Logical Drive, ODA, Snapshot Logical Drive #1

The Snapshot logical drive is not a physical storage device, but a list of pointers to the data in the ODA and/or the Source logical drive.

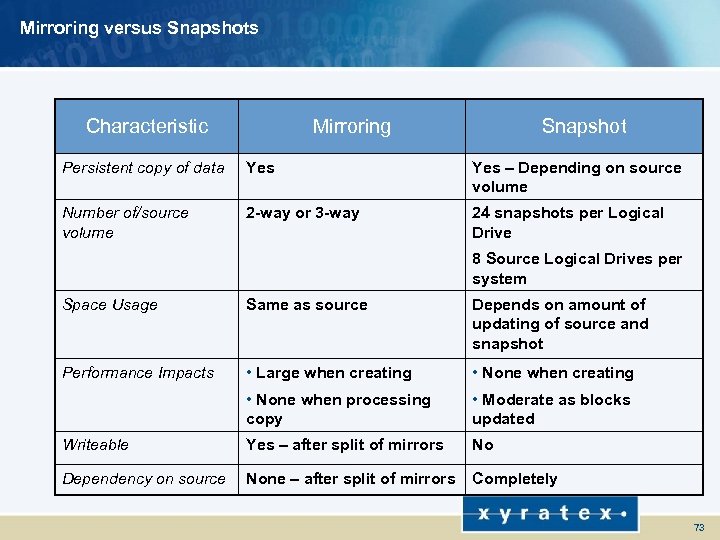

Mirroring versus Snapshots

| Characteristic | Mirroring | Snapshot |
| --- | --- | --- |
| Persistent copy of data | Yes | Depending on source volume |
| Number per source volume | 2-way or 3-way | 24 snapshots per Logical Drive; 8 source Logical Drives per system |
| Space usage | Same as source | Depends on amount of updating of source and snapshot |
| Performance impacts | Large when creating; none when processing copy | Moderate as blocks are updated |
| Writeable | Yes, after split of mirrors | No |
| Dependency on source | None, after split of mirrors | Completely |

RAID 6

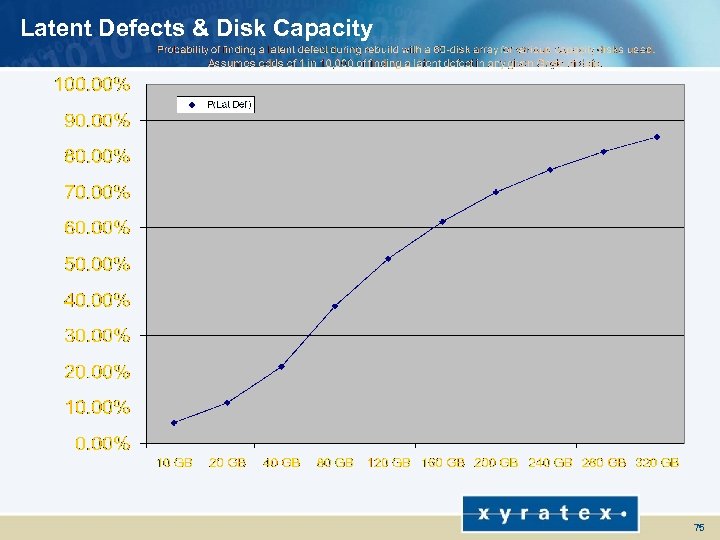

Latent Defects & Disk Capacity

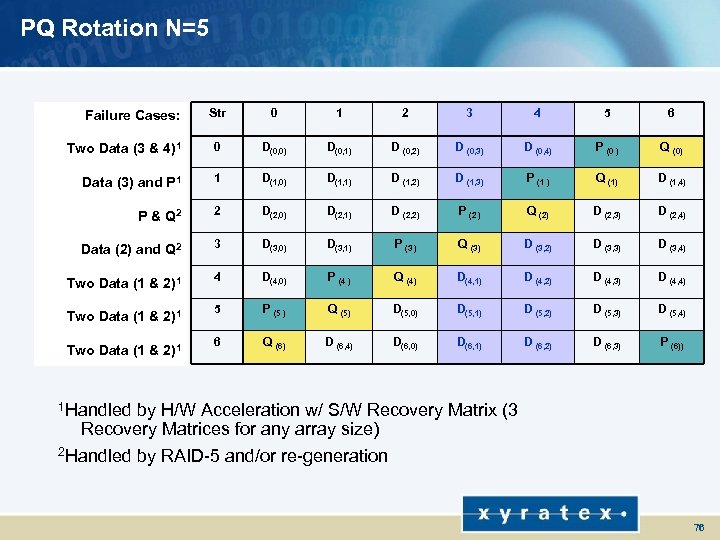

PQ Rotation (N=5)

| Str | 0 | 1 | 2 | 3 | 4 | 5 | 6 | Failure cases |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 0 | D(0,0) | D(0,1) | D(0,2) | D(0,3) | D(0,4) | P(0) | Q(0) | Two Data (3 & 4)¹ |
| 1 | D(1,0) | D(1,1) | D(1,2) | D(1,3) | P(1) | Q(1) | D(1,4) | Data (3) and P¹ |
| 2 | D(2,0) | D(2,1) | D(2,2) | P(2) | Q(2) | D(2,3) | D(2,4) | P & Q² |
| 3 | D(3,0) | D(3,1) | P(3) | Q(3) | D(3,2) | D(3,3) | D(3,4) | Data (2) and Q² |
| 4 | D(4,0) | P(4) | Q(4) | D(4,1) | D(4,2) | D(4,3) | D(4,4) | Two Data (1 & 2)¹ |
| 5 | P(5) | Q(5) | D(5,0) | D(5,1) | D(5,2) | D(5,3) | D(5,4) | Two Data (1 & 2)¹ |
| 6 | Q(6) | D(6,0) | D(6,1) | D(6,2) | D(6,3) | D(6,4) | P(6) | |

¹ Handled by H/W acceleration with S/W recovery matrix (3 recovery matrices for any array size)

² Handled by RAID-5 and/or re-generation

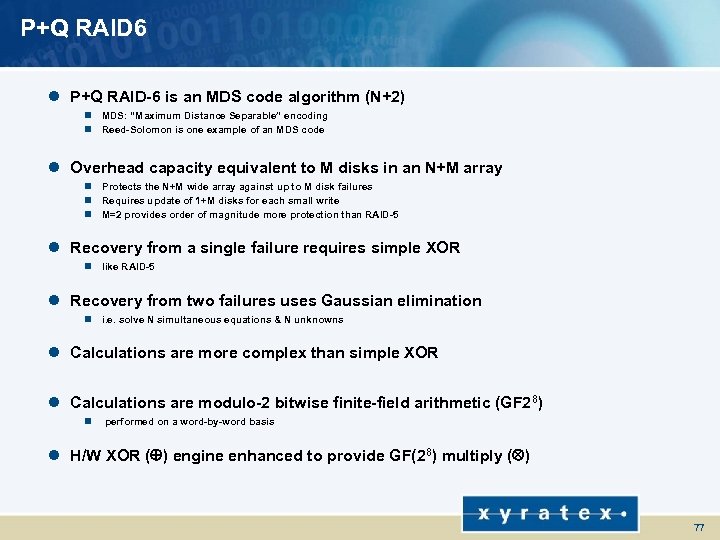
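The rotation in the PQ Rotation slide can be reproduced with a short Python sketch. The placement rule used here (P on disk `(N - stripe) mod (N + 2)`, Q on the next disk, data filling the remaining disks in ascending order) is inferred from the seven stripes shown and is an assumption, as are the function names. The failure-case labels on the slide appear consistent with disks 3 and 4 being lost, so the example classifies each stripe for that pair.

```python
def stripe_layout(stripe, n_data=5):
    """Return the block held by each disk for one stripe of an N+2 array."""
    width = n_data + 2
    p_disk = (n_data - stripe) % width  # P moves one disk left per stripe
    q_disk = (p_disk + 1) % width       # Q sits just after P, wrapping around
    layout, d = [], 0
    for disk in range(width):
        if disk == p_disk:
            layout.append(f"P({stripe})")
        elif disk == q_disk:
            layout.append(f"Q({stripe})")
        else:
            layout.append(f"D({stripe},{d})")
            d += 1
    return layout


def failure_case(stripe, failed, n_data=5):
    """Summarise what a stripe loses, e.g. 'DD', 'DP', 'DQ' or 'PQ'."""
    layout = stripe_layout(stripe, n_data)
    return "".join(sorted(layout[disk][0] for disk in failed))


cases = [failure_case(s, {3, 4}) for s in range(7)]
```

Only the `PQ` and `DQ` stripes can be repaired with RAID-5 style XOR and re-generation. The `DD` and `DP` stripes need the Q-based recovery path.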

P+Q RAID 6

- P+Q RAID-6 is an MDS code algorithm (N+2)
  - MDS: “Maximum Distance Separable” encoding
  - Reed-Solomon is one example of an MDS code
- Overhead capacity equivalent to M disks in an N+M array
  - Protects the N+M wide array against up to M disk failures
  - Requires update of 1+M disks for each small write
  - M=2 provides an order of magnitude more protection than RAID-5
- Recovery from a single failure requires simple XOR
  - Like RAID-5
- Recovery from two failures uses Gaussian elimination
  - i.e. solve N simultaneous equations in N unknowns
- Calculations are more complex than simple XOR
- Calculations are modulo-2 bitwise finite-field arithmetic, GF(2^8)
  - Performed on a word-by-word basis
- H/W XOR (⊕) engine enhanced to provide GF(2^8) multiply (⊗)

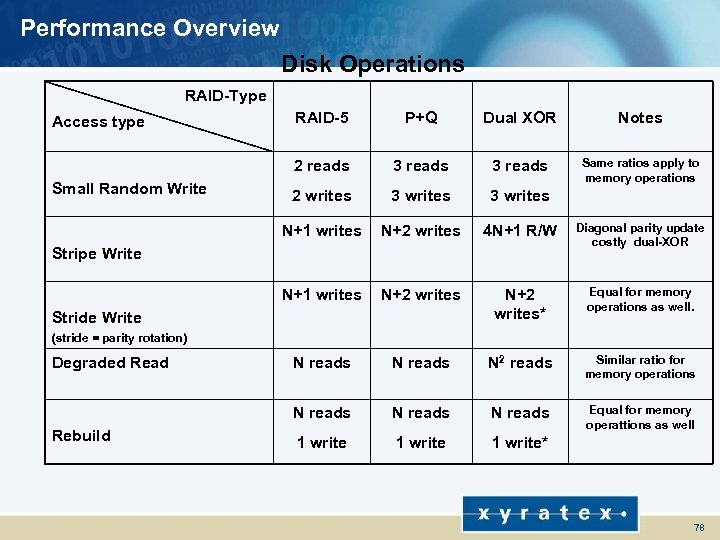
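The P+Q arithmetic can be illustrated on single bytes. The sketch below is not the product's hardware algorithm: the field polynomial `0x11D` and the generator 2 are a common choice for RAID-6 and are assumed here, and all function names are illustrative. For two lost data blocks the Gaussian elimination reduces to two equations in two unknowns, which the code solves directly.

```python
def gf_mul(a, b, poly=0x11D):
    """Multiply two bytes in GF(2^8): shift-and-XOR, reduced by `poly`."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= poly
        b >>= 1
    return result


def gf_pow(a, n):
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result


def gf_inv(a):
    # For nonzero a, a^255 = 1 in GF(2^8), so a^254 is the inverse.
    return gf_pow(a, 254)


def pq(data):
    """P is the XOR of the data bytes; Q weights byte i by 2^i in GF(2^8)."""
    p = q = 0
    for i, d in enumerate(data):
        p ^= d
        q ^= gf_mul(gf_pow(2, i), d)
    return p, q


def recover_two(data, x, y, p, q):
    """Rebuild data[x] and data[y] (x != y) from the survivors plus P and Q."""
    pxy, qxy = p, q
    for i, d in enumerate(data):
        if i not in (x, y):
            pxy ^= d                        # leaves Dx ^ Dy
            qxy ^= gf_mul(gf_pow(2, i), d)  # leaves 2^x*Dx ^ 2^y*Dy
    gx, gy = gf_pow(2, x), gf_pow(2, y)
    # Eliminate Dy: qxy ^ 2^y*pxy = (2^x ^ 2^y) * Dx
    dx = gf_mul(gf_inv(gx ^ gy), qxy ^ gf_mul(gy, pxy))
    return dx, pxy ^ dx


data = [0x12, 0x34, 0x56, 0x78, 0x9A]
p, q = pq(data)
damaged = list(data)
damaged[1] = damaged[3] = 0  # contents of the failed disks are unknown
rebuilt = recover_two(damaged, 1, 3, p, q)
```

A single data failure needs only P: XOR the surviving data bytes with P, exactly as in RAID-5. The multiply in `gf_mul` is the operation the slide attributes to the enhanced hardware XOR engine.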

Performance Overview

Disk Operations RAID-Type P+Q Dual XOR Notes 3 reads Same ratios apply to memory operations 2 writes 3 writes N+1 writes N+2 writes 4 N+1 R/W Diagonal parity update costly dual-XOR N+1 writes N+2 writes* Equal for memory operations as well. N reads N 2 reads Similar ratio for memory operations N reads Small Random Write RAID-5 2 reads Access type N reads Equal for memory operations as well 1 write* Stripe Write Stride Write (stride = parity rotation) Degraded Read Rebuild
