151a894f19279e1866e2b709cad283d8.ppt

- Number of slides: 37

The extension of the Anaphora Resolution Exercise (ARE) to Spanish and Catalan

Constantin Orasan, University of Wolverhampton, UK, and Marta Recasens, Universitat de Barcelona, Spain

Structure
1. Description of ARE 2007
2. English corpus used in ARE 2007
3. The AnCora corpora
4. Adapting the AnCora corpora for ARE 2009
5. Plans for ARE 2009

The Anaphora Resolution Exercises (AREs)
- the goal of ARE was to "develop discourse anaphora resolution methods and to evaluate them in a common and consistent manner"
- we organise them in conjunction with DAARC conferences
- conceived as multilingual evaluations
- not meant to be restricted to pronominal and NP coreference
- Do we need a roadmap?

ARE 2007
- organised in conjunction with DAARC 2007
- only English texts
- very short time to organise it
- can be considered a dry run for ARE 2009
- focused on 4 tasks
- 3 participants, 8 runs submitted
- we used the NP4E corpus, a corpus of newswire texts

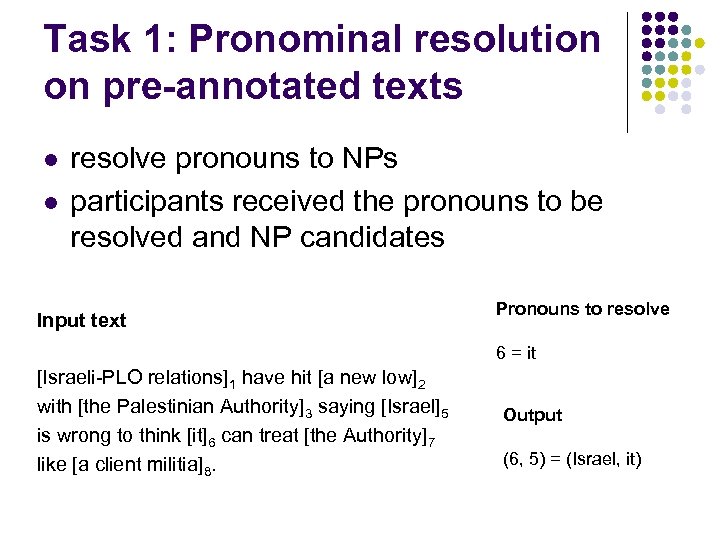

Task 1: Pronominal resolution on pre-annotated texts
- resolve pronouns to NPs
- participants received the pronouns to be resolved and the NP candidates

Input text: [Israeli-PLO relations]1 have hit [a new low]2 with [the Palestinian Authority]3 saying [Israel]5 is wrong to think [it]6 can treat [the Authority]7 like [a client militia]8.

Pronouns to resolve: 6 = it

Output: (6, 5) = (Israel, it)

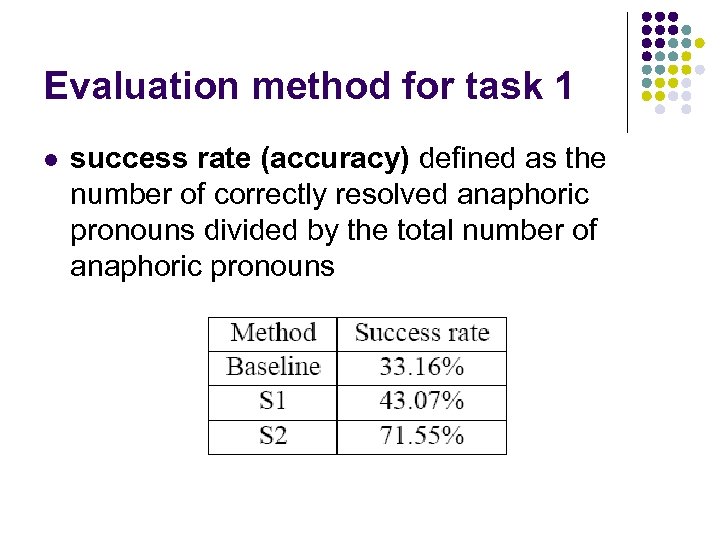

Evaluation method for task 1
- success rate (accuracy), defined as the number of correctly resolved anaphoric pronouns divided by the total number of anaphoric pronouns
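
The success rate defined above can be sketched in a few lines of Python. This is only an illustration: the function name `success_rate`, the dictionary layout, and the idea that a pronoun may have several acceptable antecedents are assumptions, not part of the ARE 2007 scorer.

```python
def success_rate(gold, predicted):
    """Fraction of anaphoric pronouns resolved to an acceptable antecedent.

    gold:      dict mapping pronoun id -> set of acceptable antecedent ids
               (hypothetical layout, chosen for this sketch)
    predicted: dict mapping pronoun id -> antecedent id chosen by the system
    """
    if not gold:
        raise ValueError("gold standard contains no anaphoric pronouns")
    # A pronoun the system left unresolved simply counts as incorrect.
    correct = sum(
        1 for pronoun, antecedents in gold.items()
        if predicted.get(pronoun) in antecedents
    )
    return correct / len(gold)


# The Task 1 example: pronoun 6 ("it") should be resolved to NP 5 ("Israel").
example_gold = {6: {5}}
example_system = {6: 5}
print(success_rate(example_gold, example_system))
```

Dividing by the number of gold pronouns, rather than by the number of pronouns the system attempted, follows the definition on the slide.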

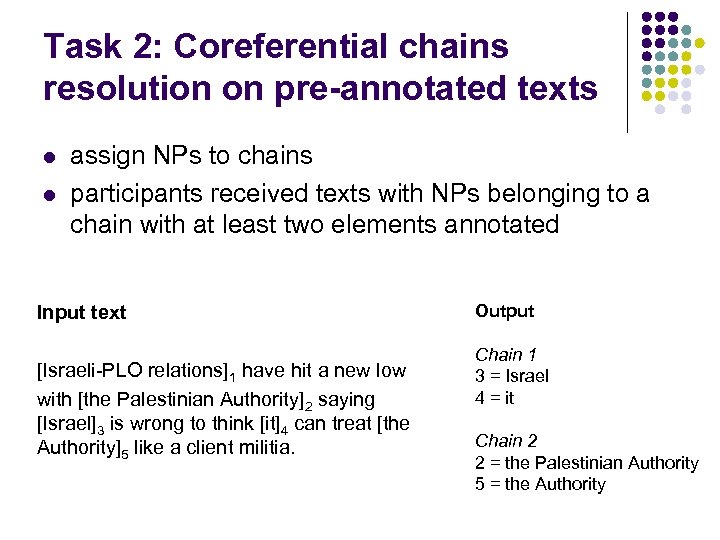

Task 2: Coreferential chains resolution on pre-annotated texts
- assign NPs to chains
- participants received texts in which the NPs belonging to a chain with at least two elements were annotated

Input text: [Israeli-PLO relations]1 have hit a new low with [the Palestinian Authority]2 saying [Israel]3 is wrong to think [it]4 can treat [the Authority]5 like a client militia.

Output:
- Chain 1: 3 = Israel, 4 = it
- Chain 2: 2 = the Palestinian Authority, 5 = the Authority

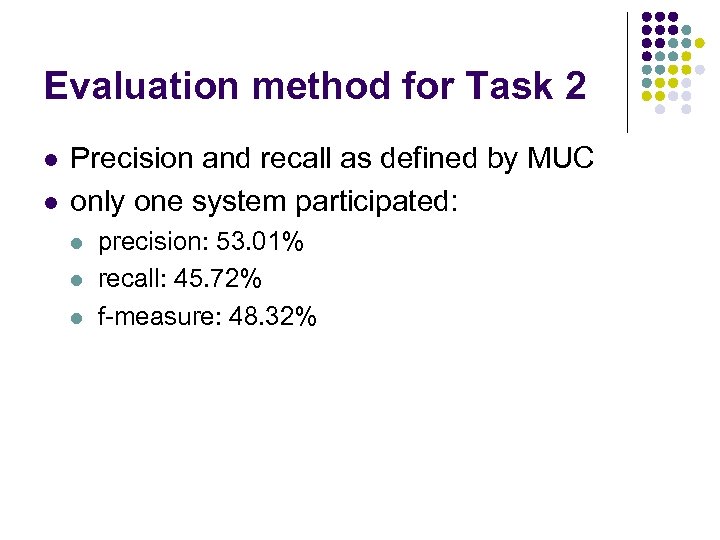

Evaluation method for Task 2
- precision and recall as defined by MUC
- only one system participated:
  - precision: 53.01%
  - recall: 45.72%
  - f-measure: 48.32%
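
For readers unfamiliar with the MUC measure, the sketch below implements the link-based recall and precision in the usual formulation (Vilain et al., 1995). It is a minimal illustration, not the ARE 2007 scorer: the function names and the representation of chains as sets of mention ids are assumptions, and the slides do not say how the reported figures were averaged across documents.

```python
def muc_recall(key, response):
    """MUC recall: sum(|K| - p(K)) / sum(|K| - 1) over the key chains K,
    where p(K) is the number of parts K is split into by the response."""
    numerator = denominator = 0
    for chain in key:
        matched_chains = set()  # response chains that contain a mention of this key chain
        unmatched = 0           # mentions absent from the response count as singletons
        for mention in chain:
            index = next((i for i, r in enumerate(response) if mention in r), None)
            if index is None:
                unmatched += 1
            else:
                matched_chains.add(index)
        numerator += len(chain) - (len(matched_chains) + unmatched)
        denominator += len(chain) - 1
    return numerator / denominator if denominator else 0.0


def muc_scores(key, response):
    """Return (precision, recall, f-measure); precision swaps the two roles."""
    recall = muc_recall(key, response)
    precision = muc_recall(response, key)
    total = precision + recall
    f_measure = 2 * precision * recall / total if total else 0.0
    return precision, recall, f_measure


# Gold chains from the Task 2 example; the system output is hypothetical.
key_chains = [{3, 4}, {2, 5}]
system_chains = [{3, 4}]
print(muc_scores(key_chains, system_chains))
```

In this toy case the system finds one of the two gold links and proposes no wrong ones, so precision is perfect while recall is one half.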

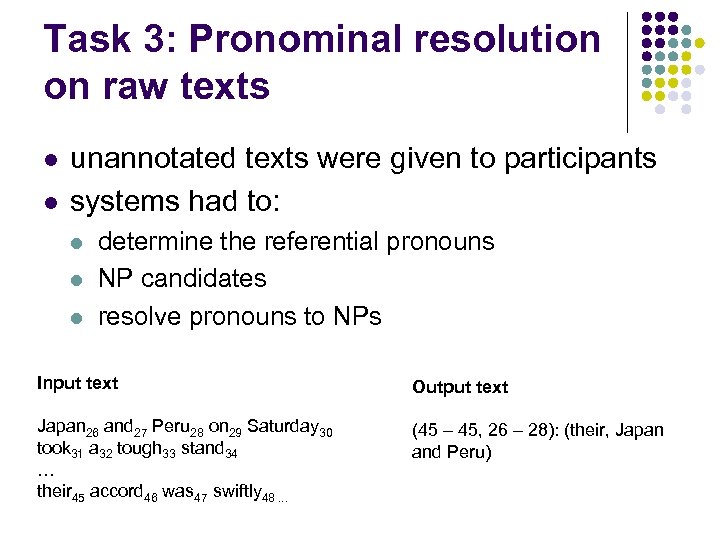

Task 3: Pronominal resolution on raw texts
- unannotated texts were given to participants
- systems had to:
  - determine the referential pronouns
  - determine the NP candidates
  - resolve pronouns to NPs

Input text: Japan 26 and 27 Peru 28 on 29 Saturday 30 took 31 a 32 tough 33 stand 34 … their 45 accord 46 was 47 swiftly 48 ...

Output text: (45 – 45, 26 – 28): (their, Japan and Peru)

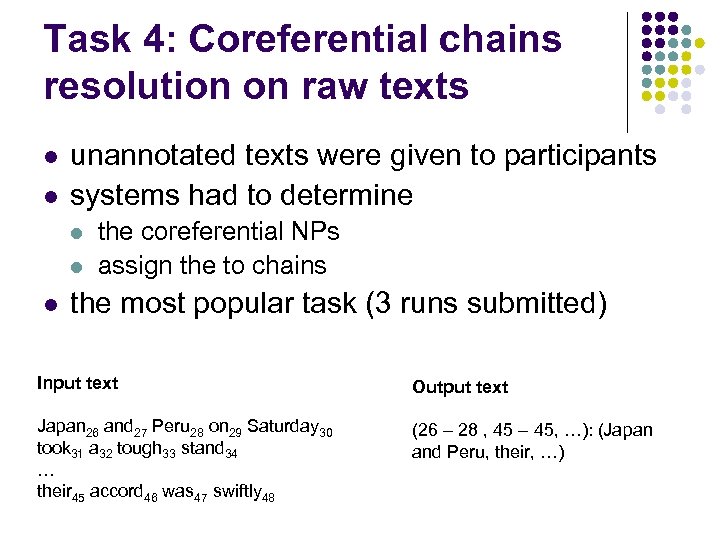

Task 4: Coreferential chains resolution on raw texts
- unannotated texts were given to participants
- systems had to:
  - determine the coreferential NPs
  - assign them to chains
- the most popular task (3 runs submitted)

Input text: Japan 26 and 27 Peru 28 on 29 Saturday 30 took 31 a 32 tough 33 stand 34 … their 45 accord 46 was 47 swiftly 48

Output text: (26 – 28, 45 – 45, …): (Japan and Peru, their, …)

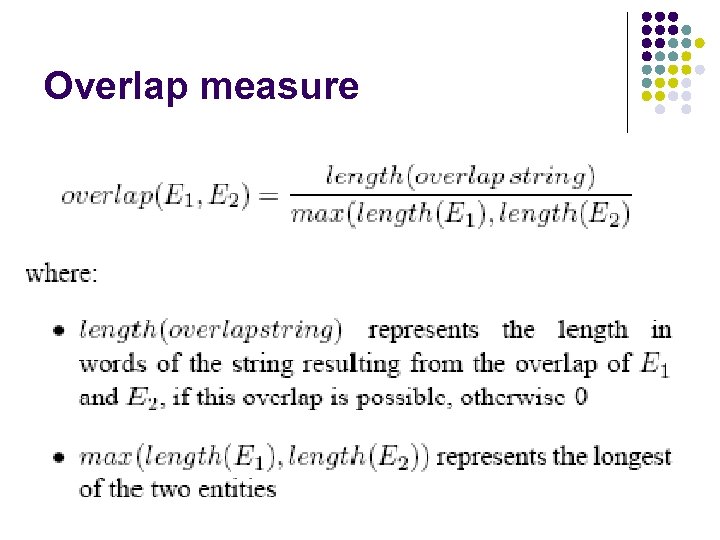

Overlap measure

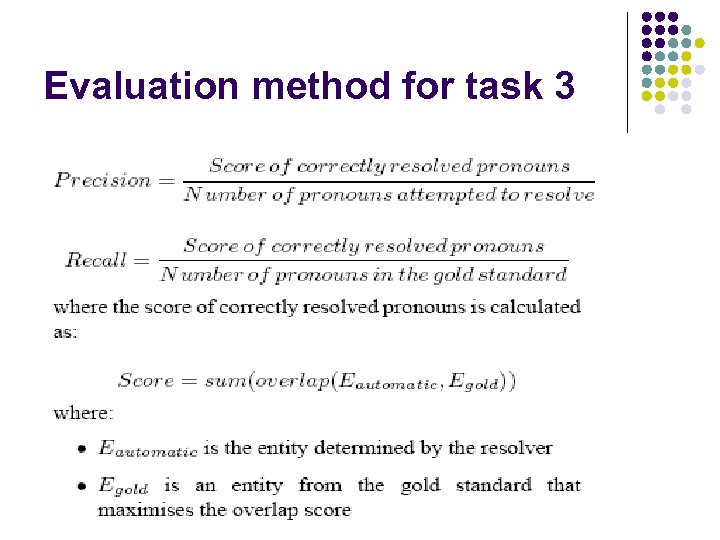

Evaluation method for task 3

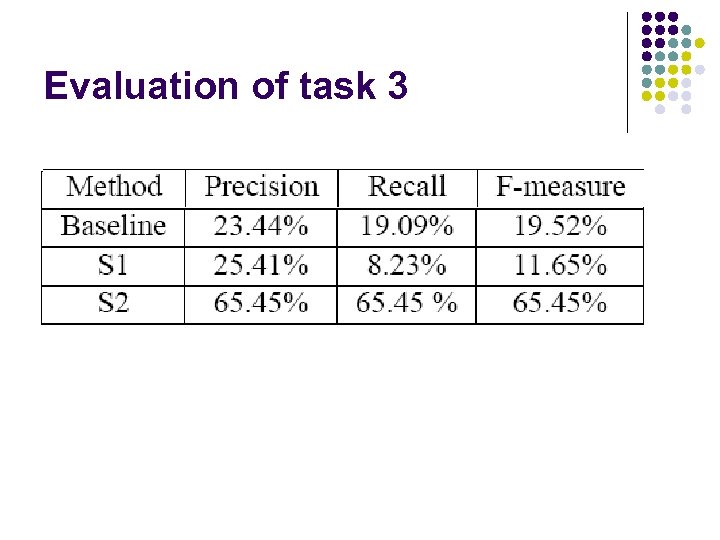

Evaluation of task 3

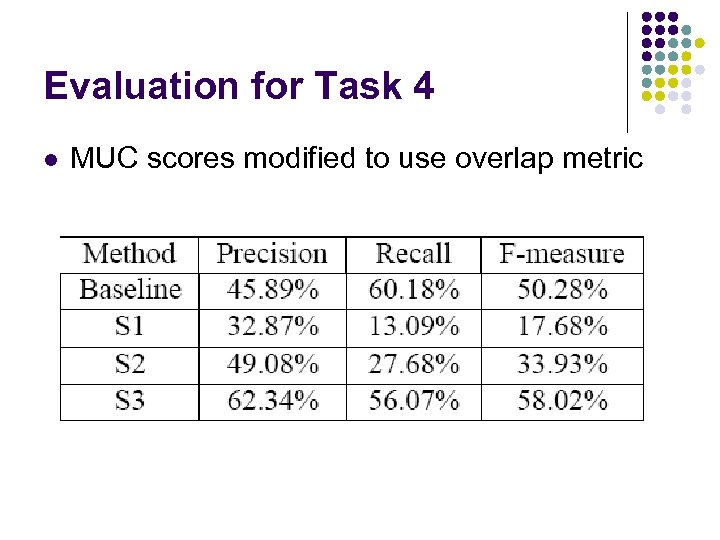

Evaluation for Task 4
- MUC scores modified to use the overlap metric

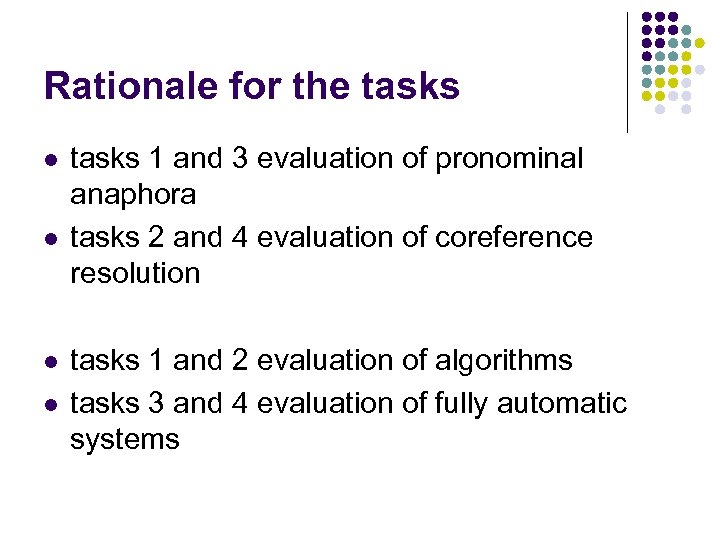

Rationale for the tasks
- tasks 1 and 3: evaluation of pronominal anaphora
- tasks 2 and 4: evaluation of coreference resolution
- tasks 1 and 2: evaluation of algorithms
- tasks 3 and 4: evaluation of fully automatic systems

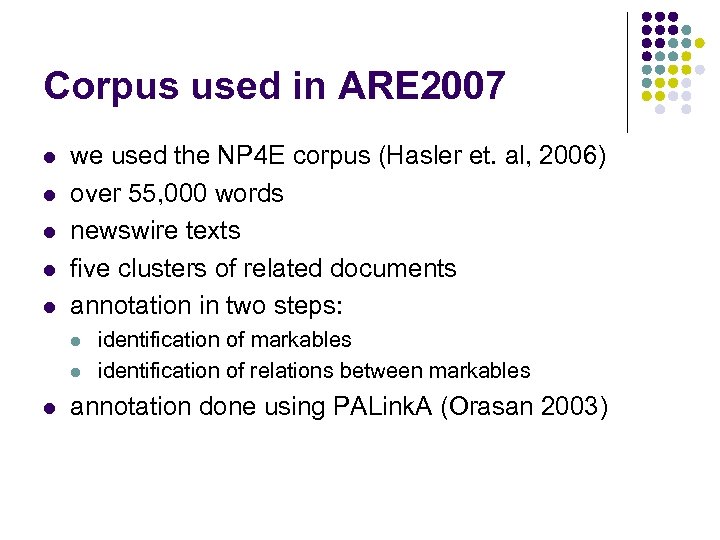

Corpus used in ARE 2007
- we used the NP4E corpus (Hasler et al., 2006)
- over 55,000 words
- newswire texts
- five clusters of related documents
- annotation in two steps:
  - identification of markables
  - identification of relations between markables
- annotation done using PALinkA (Orasan 2003)

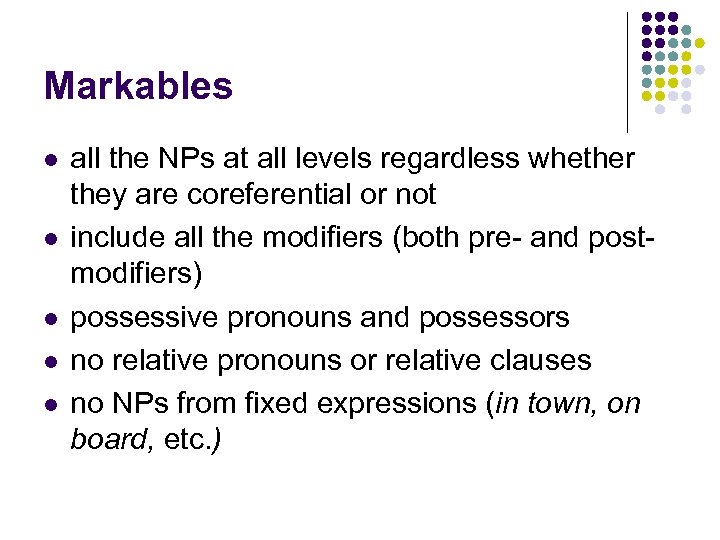

Markables
- all the NPs at all levels, regardless of whether they are coreferential or not
- include all the modifiers (both pre- and postmodifiers)
- possessive pronouns and possessors
- no relative pronouns or relative clauses
- no NPs from fixed expressions (in town, on board, etc.)

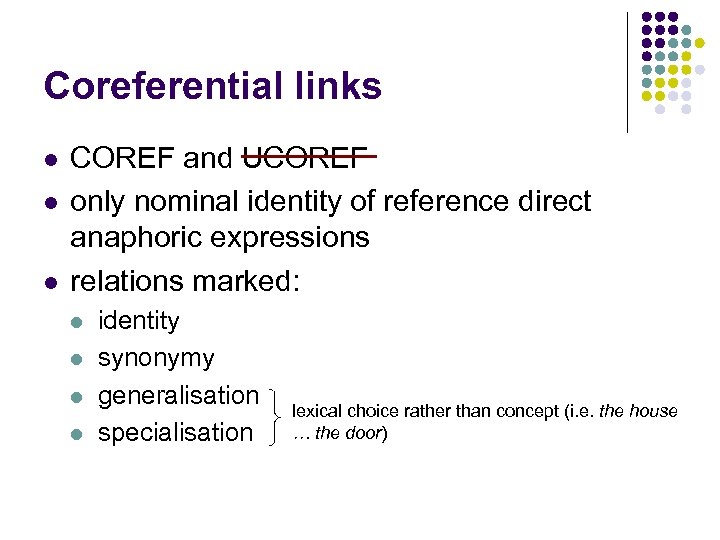

Coreferential links
- COREF and UCOREF
- only nominal identity of reference
- direct anaphoric expressions
- relations marked:
  - identity
  - synonymy
  - generalisation
  - specialisation
- lexical choice rather than concept (i.e. the house … the door)

Coreferential links
- definite NPs in copular relation: [the blast] was [the worst attack on [civilians] on [U.S. soil]]
- definite appositives: [Zaire Airlines, [the main commercial airline in [Zaire]]]
- text in brackets
- I, you, we in speech coreferential to their antecedents

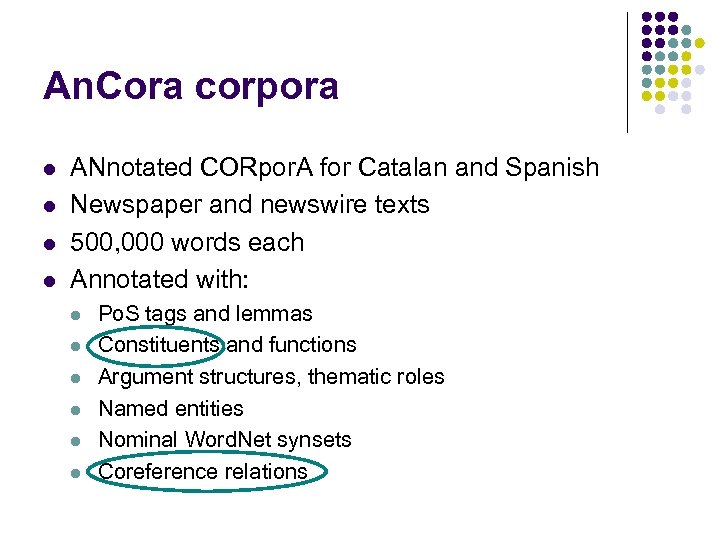

AnCora corpora
- ANnotated CORporA for Catalan and Spanish
- newspaper and newswire texts
- 500,000 words each
- annotated with:
  - PoS tags and lemmas
  - constituents and functions
  - argument structures, thematic roles
  - named entities
  - nominal WordNet synsets
  - coreference relations

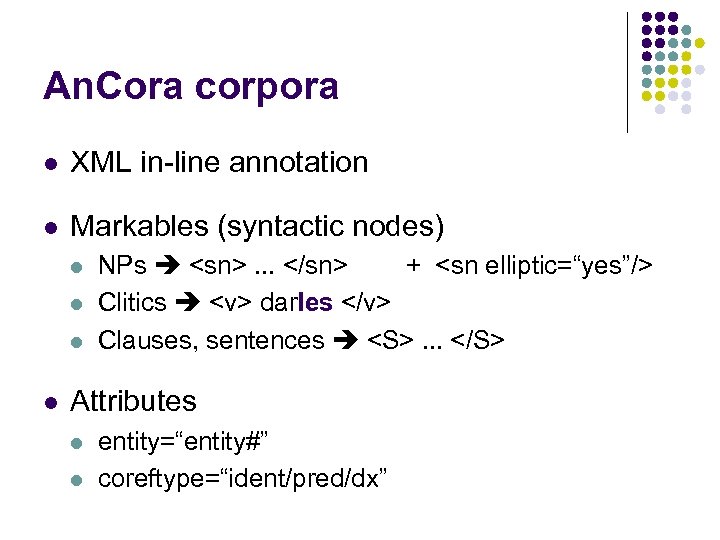

AnCora corpora
- XML in-line annotation
- Markables (syntactic nodes):
  - NPs
  - . . .
- Attributes:
  - entity="entity#"
  - coreftype="ident/pred/dx"
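
To make the in-line scheme concrete, the sketch below groups markables by their `entity` attribute using Python's standard `xml.etree.ElementTree`. Only the attribute names `entity` and `coreftype` come from the slide; the element names (`sentence`, `sn`, `w`), the sample fragment, and the function name `chains_by_entity` are illustrative assumptions and may not match the real AnCora files.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

# Hypothetical fragment: element names are invented for this sketch.
SAMPLE = """<sentence>
  <sn entity="entity1" coreftype="ident">l'Ajuntament de Tarragona</sn>
  <w>...</w>
  <sn entity="entity1" coreftype="ident">l'Ajuntament</sn>
  <sn>els sorolls</sn>
</sentence>"""


def chains_by_entity(xml_text):
    """Map each entity id to the list of (markable text, coreftype) pairs."""
    root = ET.fromstring(xml_text)
    chains = defaultdict(list)
    for node in root.iter():
        entity = node.get("entity")
        if entity is None:
            continue  # markable without a coreference annotation
        # Join nested text and normalise whitespace.
        text = " ".join("".join(node.itertext()).split())
        chains[entity].append((text, node.get("coreftype")))
    return dict(chains)


for entity, mentions in chains_by_entity(SAMPLE).items():
    print(entity, mentions)
```

Grouping by entity id yields coreference chains directly, which is the shape needed for the chain-based tasks.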

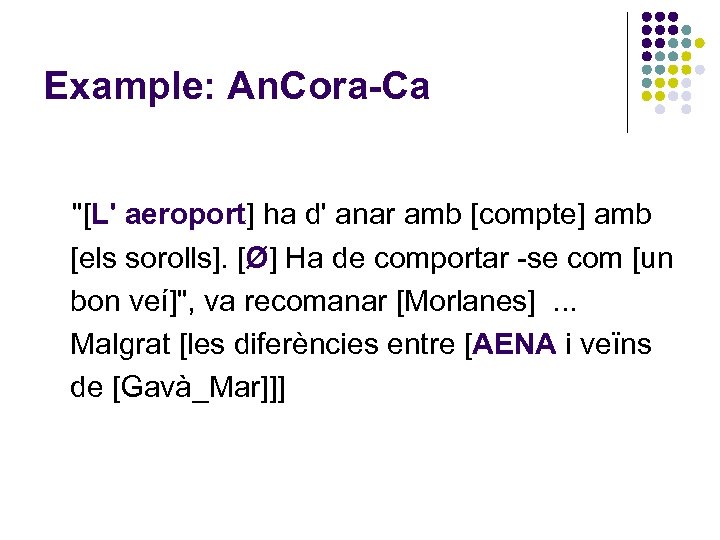

Example: AnCora-Ca

"[L' aeroport] ha d' anar amb [compte] amb [els sorolls]. [Ø] Ha de comportar -se com [un bon veí]", va recomanar [Morlanes]. . . Malgrat [les diferències entre [AENA i veïns de [Gavà_Mar]]]

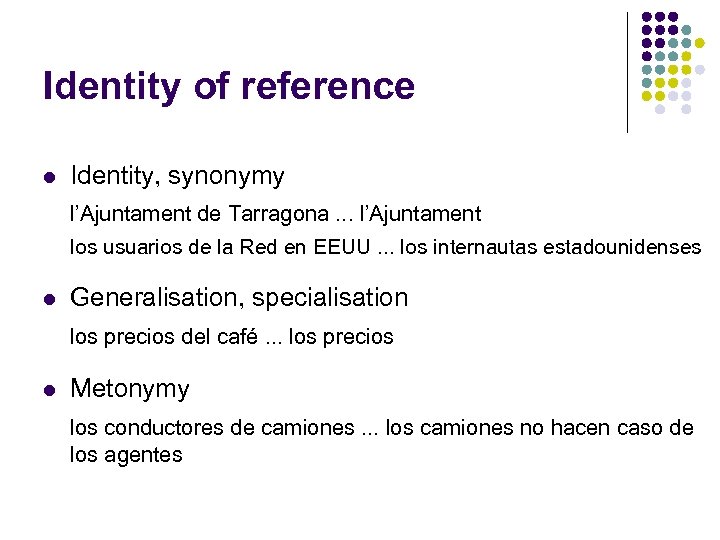

Identity of reference
- Identity, synonymy:
  - l'Ajuntament de Tarragona . . . l'Ajuntament
  - los usuarios de la Red en EEUU . . . los internautas estadounidenses
- Generalisation, specialisation:
  - los precios del café . . . los precios
- Metonymy:
  - los conductores de camiones . . . los camiones no hacen caso de los agentes

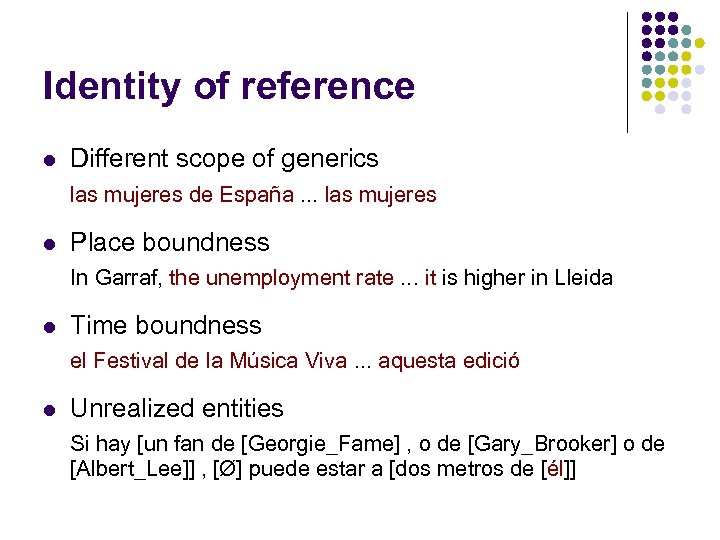

Identity of reference
- Different scope of generics: las mujeres de España . . . las mujeres
- Place boundness: In Garraf, the unemployment rate . . . it is higher in Lleida
- Time boundness: el Festival de la Música Viva . . . aquesta edició
- Unrealized entities: Si hay [un fan de [Georgie_Fame] , o de [Gary_Brooker] o de [Albert_Lee]] , [Ø] puede estar a [dos metros de [él]]

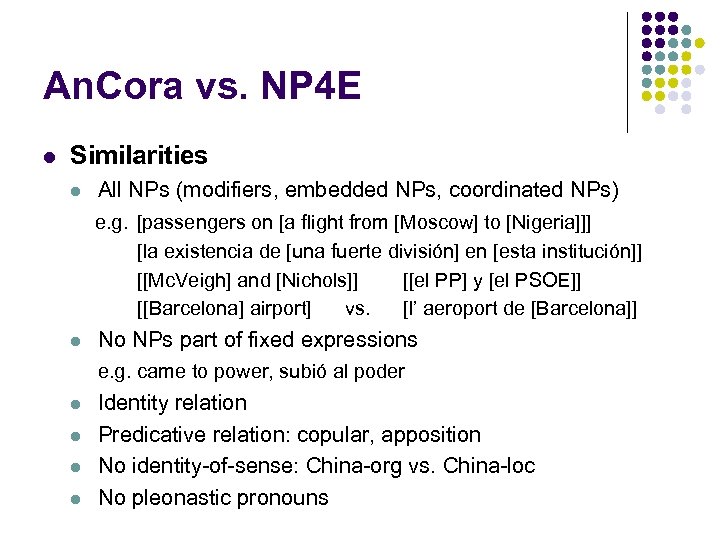

AnCora vs. NP4E
- Similarities:
  - All NPs (modifiers, embedded NPs, coordinated NPs), e.g.
    - [passengers on [a flight from [Moscow] to [Nigeria]]]
    - [la existencia de [una fuerte división] en [esta institución]]
    - [[McVeigh] and [Nichols]]
    - [[el PP] y [el PSOE]]
    - [[Barcelona] airport] vs. [l' aeroport de [Barcelona]]
  - No NPs that are part of fixed expressions, e.g. came to power, subió al poder
  - Identity relation
  - Predicative relation: copular, apposition
  - No identity-of-sense: China-org vs. China-loc
  - No pleonastic pronouns

AnCora vs. NP4E
- Differences:
  - Zero elements: elliptical subjects
  - Clitic pronouns, e.g. give them = (Spanish) darles / (Catalan) donar-les
  - Relative pronouns
  - Discourse deixis
  - No possessive pronouns
  - Split antecedents

Preparation of Catalan and Spanish data for ARE 2009
- there are many similarities between the guidelines used for the AnCora and NP4E corpora
- features that are too specific will be discarded
- AnCora will be converted to the light XML annotation used in ARE 2007
- we hope not to encounter major problems when we do the actual conversion

Lessons learnt from ARE 2007
- if possible, more evaluation methods and more baselines
- better overlap metric
- participants want more time (lots of interest, but the evaluation clashed with some major conferences)
- participants want to be able to publish

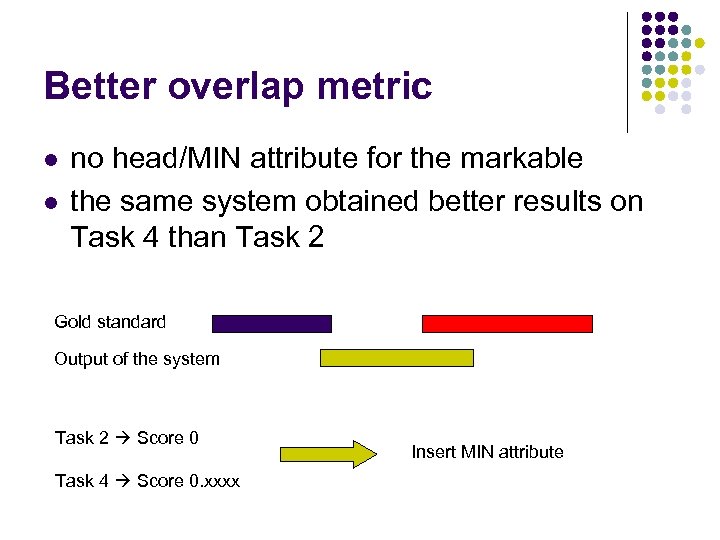

Better overlap metric
- no head/MIN attribute for the markable
- the same system obtained better results on Task 4 than Task 2
- gold standard vs. output of the system: Task 2 score 0, Task 4 score 0.xxxx
- insert MIN attribute

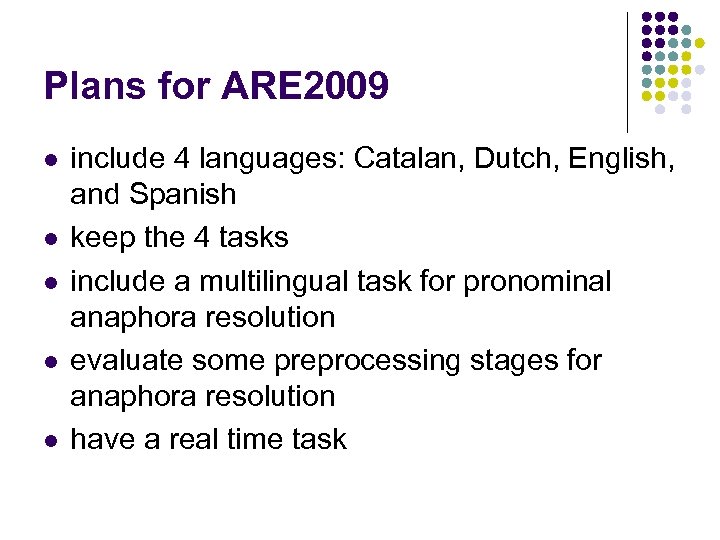

Plans for ARE 2009
- include 4 languages: Catalan, Dutch, English, and Spanish
- keep the 4 tasks
- include a multilingual task for pronominal anaphora resolution
- evaluate some preprocessing stages for anaphora resolution
- have a real-time task

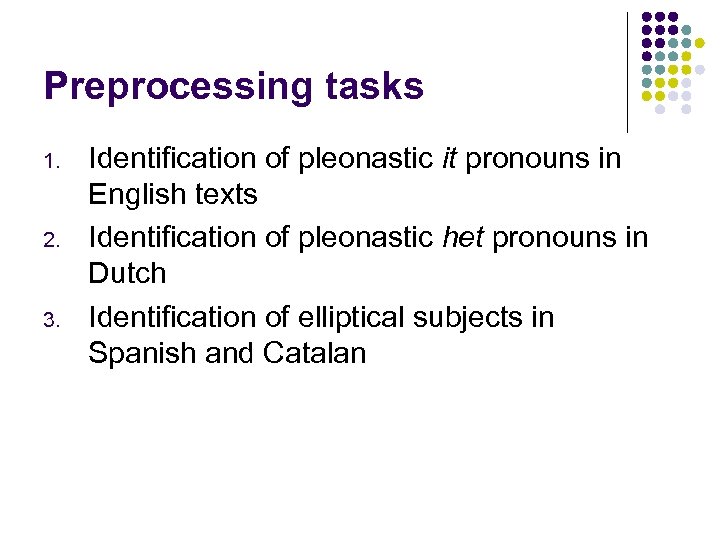

Preprocessing tasks
1. Identification of pleonastic it pronouns in English texts
2. Identification of pleonastic het pronouns in Dutch
3. Identification of elliptical subjects in Spanish and Catalan

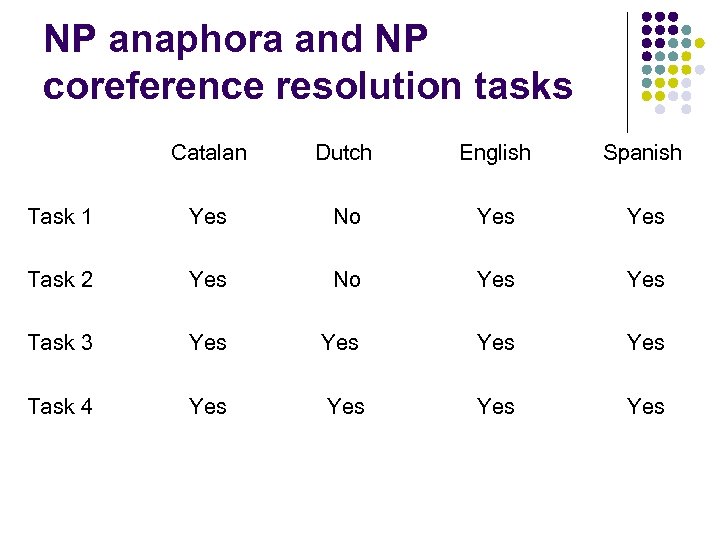

NP anaphora and NP coreference resolution tasks Catalan Dutch English Spanish Task 1 Yes No Yes Task 2 Yes No Yes Task 3 Yes Yes Task 4 Yes Yes

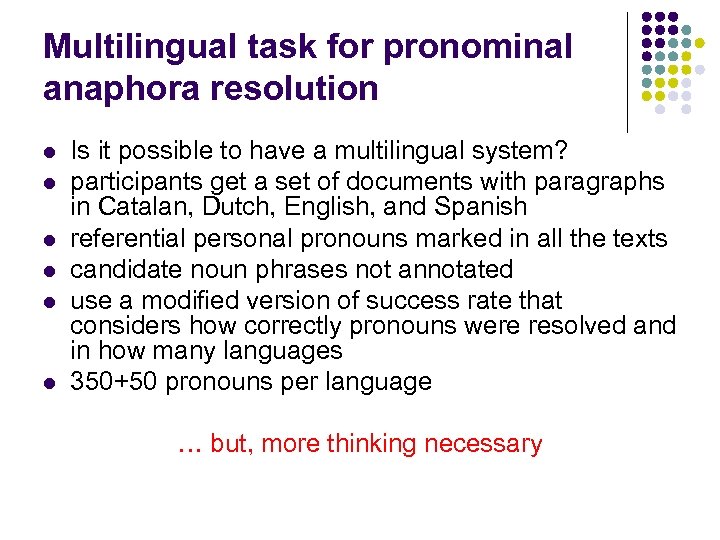

Multilingual task for pronominal anaphora resolution
- Is it possible to have a multilingual system?
- participants get a set of documents with paragraphs in Catalan, Dutch, English, and Spanish
- referential personal pronouns marked in all the texts
- candidate noun phrases not annotated
- use a modified version of success rate that considers how correctly pronouns were resolved and in how many languages
- 350+50 pronouns per language
- … but more thinking is necessary

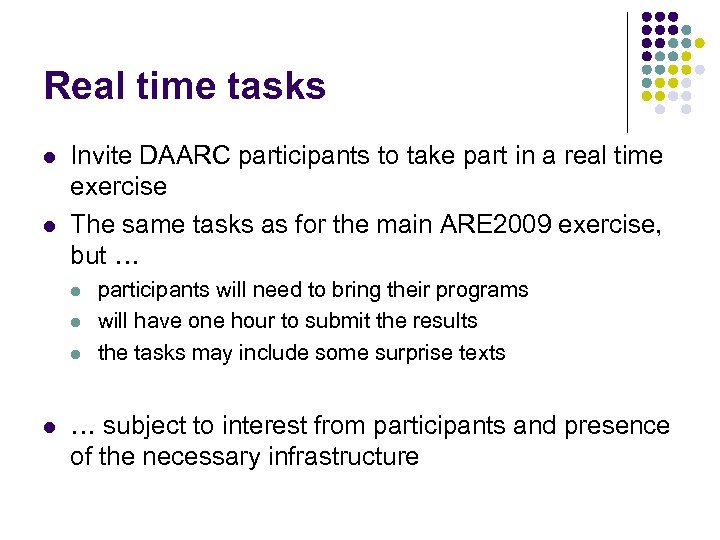

Real-time tasks
- invite DAARC participants to take part in a real-time exercise
- the same tasks as for the main ARE 2009 exercise, but …
  - participants will need to bring their programs
  - participants will have one hour to submit the results
  - the tasks may include some surprise texts
- … subject to interest from participants and presence of the necessary infrastructure

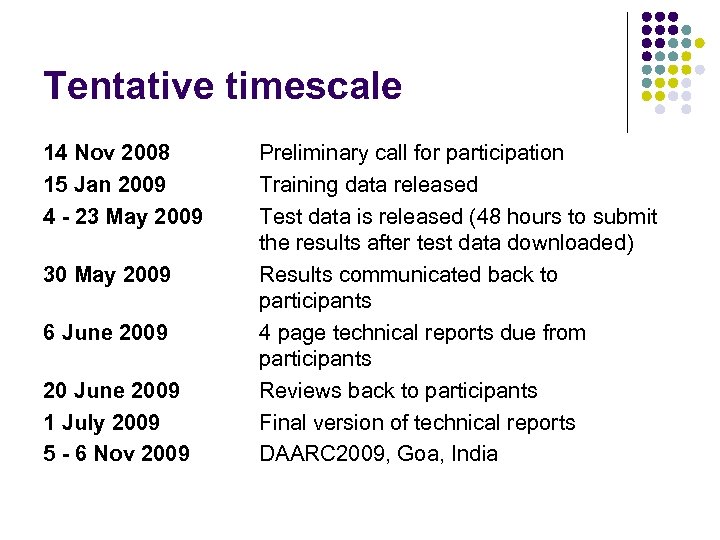

Tentative timescale
- 14 Nov 2008: Preliminary call for participation
- 15 Jan 2009: Training data released
- 4 - 23 May 2009: Test data released (48 hours to submit the results after the test data is downloaded)
- 30 May 2009: Results communicated back to participants
- 6 June 2009: 4-page technical reports due from participants
- 20 June 2009: Reviews back to participants
- 1 July 2009: Final version of technical reports
- 5 - 6 Nov 2009: DAARC 2009, Goa, India

- Webpage: http://www.anaphora-and-coreference.info/ARE2009
- Mailing list: ARE2009-list@anaphora-and-coreference.info
- Email address: ARE2009@anaphora-and-coreference.info

Thank you!