- Number of slides: 110

Text Mining
Dr Eamonn Keogh
Computer Science & Engineering Department
University of California - Riverside, CA 92521
eamonn@cs.ucr.edu

Text Mining/Information Retrieval
• Task Statement: Build a system that retrieves documents that users are likely to find relevant to their queries.
• This assumption underlies the field of Information Retrieval.

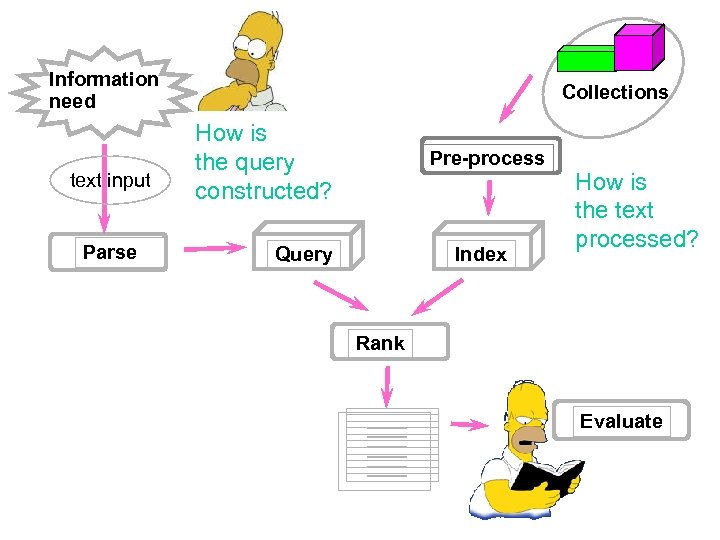

[Figure: overview of an IR system. Components: Information need, text input, Parse, Query, Collections, Pre-process, Index, Rank, Evaluate. Annotations: “How is the query constructed?” and “How is the text processed?”]

Terminology
Token: A natural language word: “Swim”, “Simpson”, “92513” etc.
Document: Usually a web page, but more generally any file.

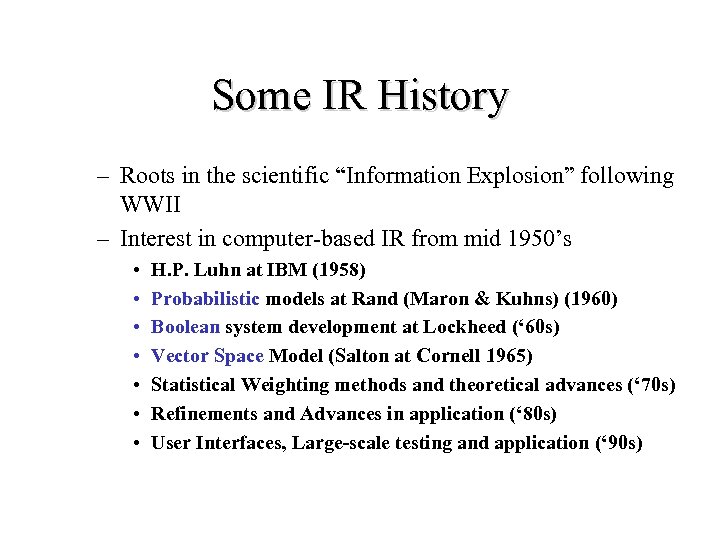

Some IR History
– Roots in the scientific “Information Explosion” following WWII
– Interest in computer-based IR from the mid-1950s
  • H. P. Luhn at IBM (1958)
  • Probabilistic models at Rand (Maron & Kuhns) (1960)
  • Boolean system development at Lockheed (’60s)
  • Vector Space Model (Salton at Cornell, 1965)
  • Statistical weighting methods and theoretical advances (’70s)
  • Refinements and advances in application (’80s)
  • User interfaces, large-scale testing and application (’90s)

Relevance
• In what ways can a document be relevant to a query?
  – Answer precise question precisely.
    Who is Homer’s Boss? Montgomery Burns.
  – Partially answer question.
    Where does Homer work? Power Plant.
  – Suggest a source for more information.
    What is Bart’s middle name? Look in Issue 234 of Fanzine.
  – Give background information.
  – Remind the user of other knowledge.
  – Others...

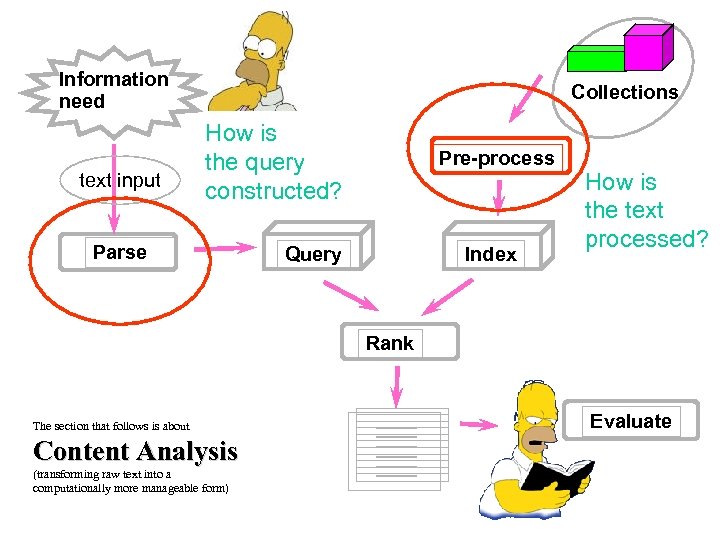

[Figure: the IR system overview diagram again (Information need, text input, Parse, Query, Collections, Pre-process, Index, Rank, Evaluate), annotated “How is the query constructed?” and “How is the text processed?”]
The section that follows is about Content Analysis (transforming raw text into a computationally more manageable form).

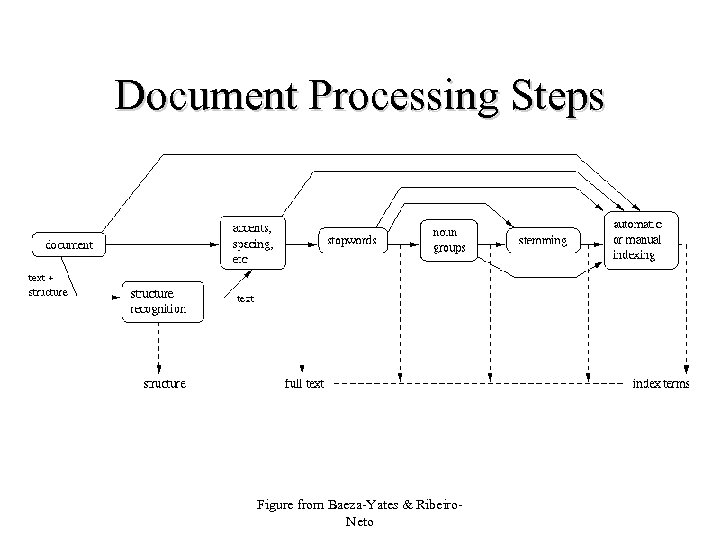

Document Processing Steps (figure from Baeza-Yates & Ribeiro-Neto)

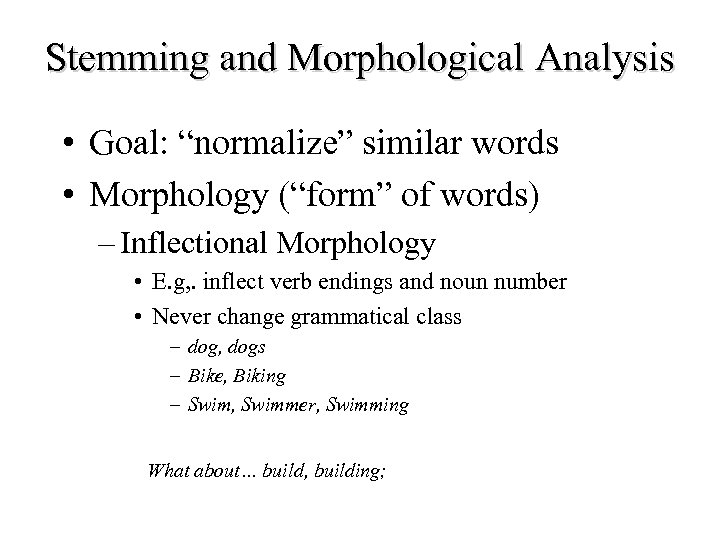

Stemming and Morphological Analysis
• Goal: “normalize” similar words
• Morphology (“form” of words)
  – Inflectional Morphology
    • E.g., inflect verb endings and noun number
    • Never change grammatical class
      – dog, dogs
      – Bike, Biking
      – Swim, Swimmer, Swimming
What about… build, building;

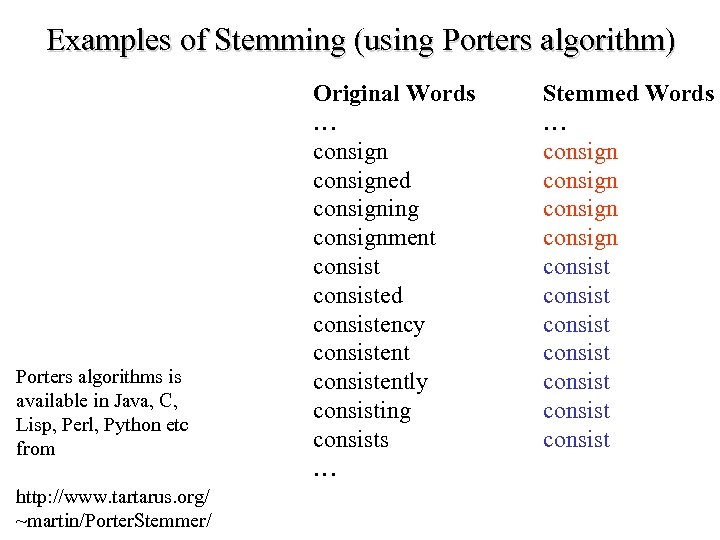

Examples of Stemming (using Porter’s algorithm)
Porter’s algorithm is available in Java, C, Lisp, Perl, Python etc. from http://www.tartarus.org/~martin/PorterStemmer/
Original Words: … consigned consigning consignment consisted consistency consistently consisting consists …
Stemmed Words: … consign consist consist
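To make the idea of stemming concrete, here is a minimal sketch of suffix stripping. It is a toy and is not Porter's algorithm, which applies several ordered rule phases with conditions on the stem; the suffix list and the `toy_stem` name are assumptions made up for this illustration.

```python
# Toy suffix stripper for illustration only; this is NOT Porter's algorithm.
# The suffix list is hypothetical and ordered longest first.
SUFFIXES = ["ently", "ency", "ment", "ing", "ed", "s"]

def toy_stem(word: str, min_stem_len: int = 3) -> str:
    """Strip the first matching suffix if enough of the word remains."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= min_stem_len:
            return word[: -len(suffix)]
    return word

words = ["consigned", "consigning", "consignment", "consisted",
         "consistency", "consistently", "consisting", "consists"]
stems = {w: toy_stem(w) for w in words}
```

On these eight words the toy rules collapse everything to two stems, but a list this naive would mangle many other words, which is the kind of error the next slide is about.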

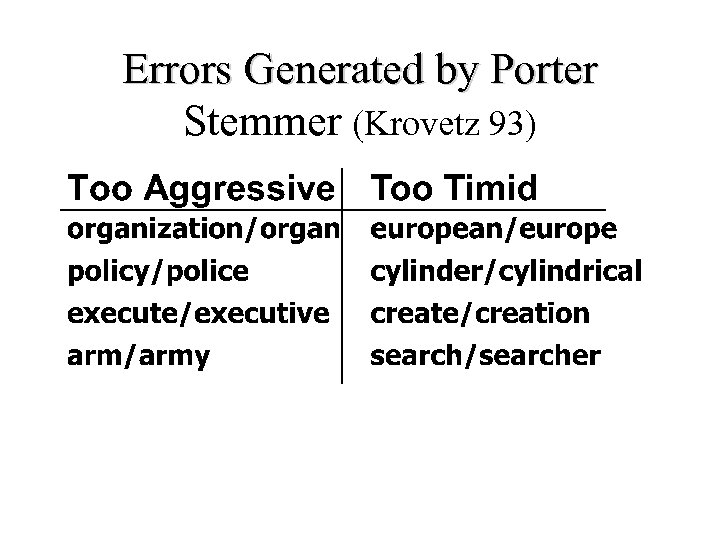

Errors Generated by Porter Stemmer (Krovetz 93)

Statistical Properties of Text
• Token occurrences in text are not uniformly distributed
• They are also not normally distributed
• They do exhibit a Zipf distribution

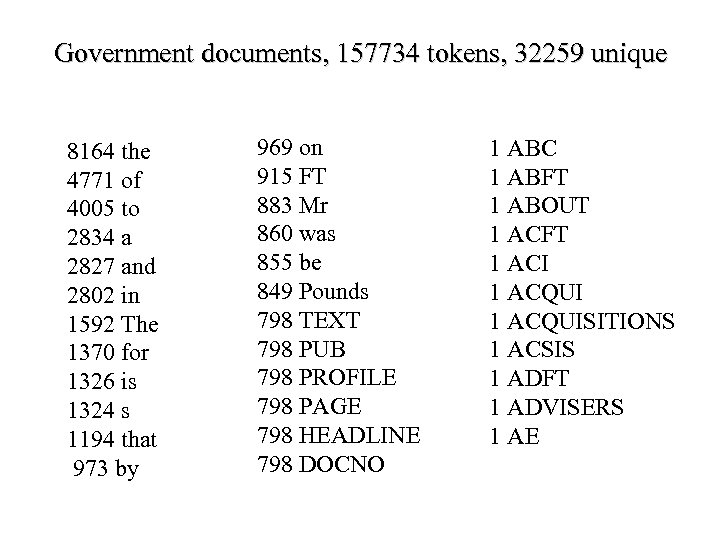

Government documents, 157734 tokens, 32259 unique
Most frequent tokens (count, token): 8164 the; 4771 of; 4005 to; 2834 a; 2827 and; 2802 in; 1592 The; 1370 for; 1326 is; 1324 s; 1194 that; 973 by; 969 on; 915 FT; 883 Mr; 860 was; 855 be; 849 Pounds; 798 TEXT; 798 PUB; 798 PROFILE; 798 PAGE; 798 HEADLINE; 798 DOCNO
Tokens occurring once (count, token): 1 ABC; 1 ABFT; 1 ABOUT; 1 ACFT; 1 ACI; 1 ACQUISITIONS; 1 ACSIS; 1 ADFT; 1 ADVISERS; 1 AE

Plotting Word Frequency by Rank
• Main idea: count
  – How many times tokens occur in the text
    • Over all texts in the collection
• Now order the tokens by how often they occur. A token's position in this ordering is called its rank.
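The counting and ranking step can be sketched in a few lines. The tokenizer (lowercase runs of letters and digits) and the two-sentence collection are assumptions for the example, not part of the slides.

```python
from collections import Counter
import re

def rank_frequency(texts):
    """Return (rank, token, count) triples, most frequent token first."""
    counts = Counter()
    for text in texts:
        # Assumed tokenizer: lowercase runs of letters and digits.
        counts.update(re.findall(r"[a-z0-9]+", text.lower()))
    return [(rank, tok, n)
            for rank, (tok, n) in enumerate(counts.most_common(), start=1)]

collection = ["the cat sat on the mat", "the dog sat"]  # made-up collection
table = rank_frequency(collection)
```

Plotting count against rank for a real collection gives the curve shown on the following slides.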

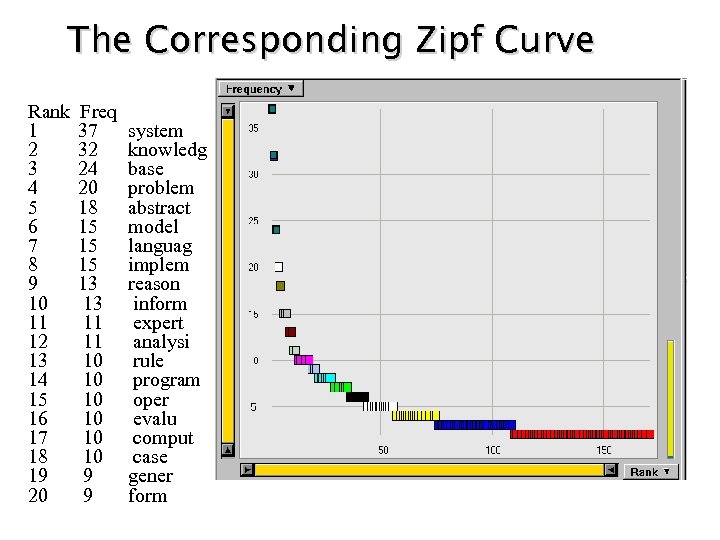

The Corresponding Zipf Curve
Rank Freq Term
1 37 system
2 32 knowledg
3 24 base
4 20 problem
5 18 abstract
6 15 model
7 15 languag
8 15 implem
9 13 reason
10 13 inform
11 expert
12 11 analysi
13 10 rule
14 10 program
15 10 oper
16 10 evalu
17 10 comput
18 10 case
19 9 gener
20 9 form

Zipf Distribution
• The Important Points:
  – a few elements occur very frequently
  – a medium number of elements have medium frequency
  – many elements occur very infrequently

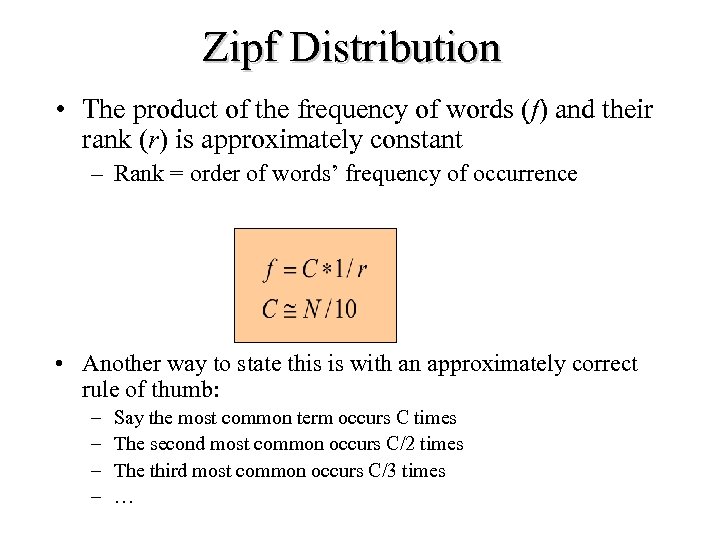

Zipf Distribution
• The product of the frequency of words (f) and their rank (r) is approximately constant
  – Rank = order of words’ frequency of occurrence
• Another way to state this is with an approximately correct rule of thumb:
  – Say the most common term occurs C times
  – The second most common occurs C/2 times
  – The third most common occurs C/3 times
  – …
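Written as a formula, the rule of thumb says that frequency falls off as one over rank. Here f(r) denotes the frequency of the token at rank r and C the frequency of the most common token; the notation is chosen for this note.

```latex
f(r) \approx \frac{C}{r}
\quad\Longleftrightarrow\quad
f(r) \cdot r \approx C,
\qquad r = 1, 2, 3, \dots
```

On log-log axes this is roughly a straight line with slope -1, since log f(r) ≈ log C - log r.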

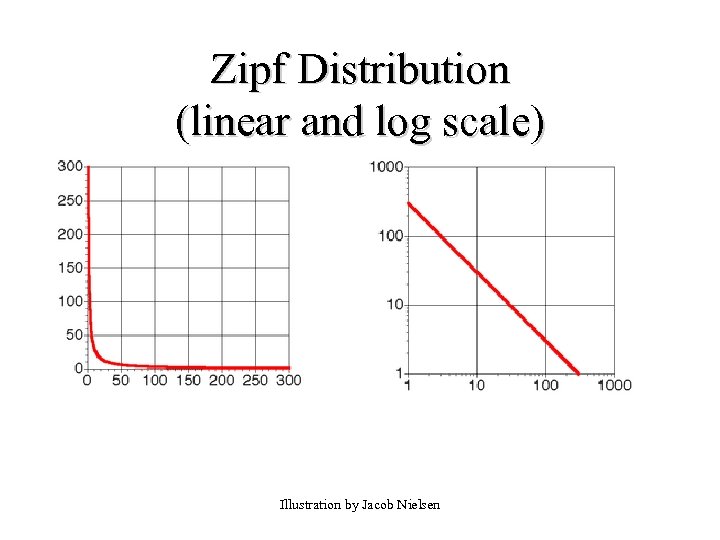

Zipf Distribution (linear and log scale). Illustration by Jakob Nielsen.

What Kinds of Data Exhibit a Zipf Distribution?
• Words in a text collection
  – Virtually any language usage
• Library book checkout patterns
• Incoming Web Page Requests
• Outgoing Web Page Requests
• Document Size on Web
• City Sizes
• …

Consequences of Zipf
• There are always a few very frequent tokens that are not good discriminators.
  – Called “stop words” in IR
    • English examples: to, from, on, and, the, ...
• There are always a large number of tokens that occur only once and can mess up algorithms.
• Medium-frequency words are the most descriptive.

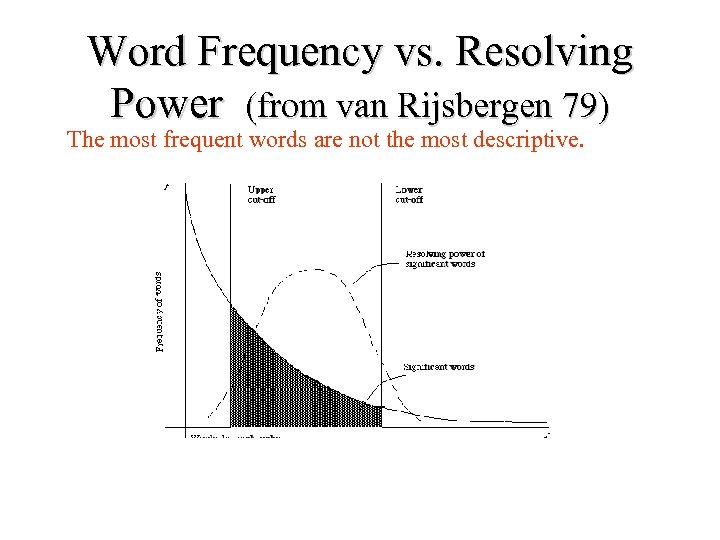

Word Frequency vs. Resolving Power (from van Rijsbergen 79) The most frequent words are not the most descriptive.

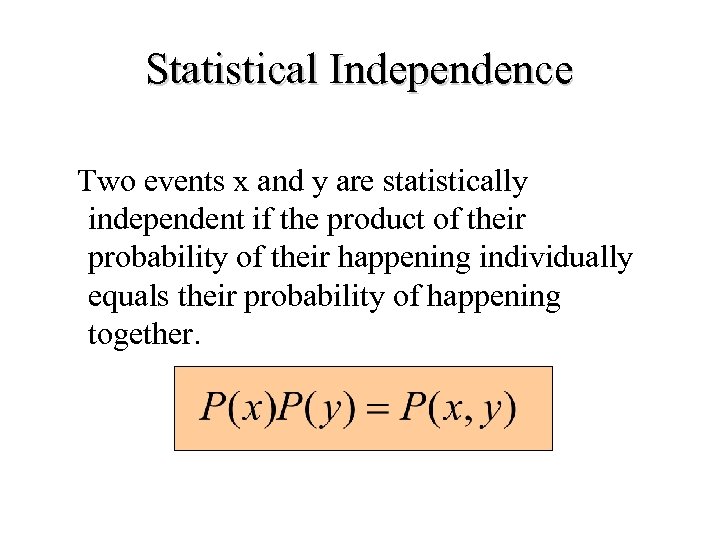

Statistical Independence
Two events x and y are statistically independent if the product of the probabilities of their happening individually equals the probability of their happening together.

Statistical Independence and Dependence • What are examples of things that are statistically independent? • What are examples of things that are statistically dependent?

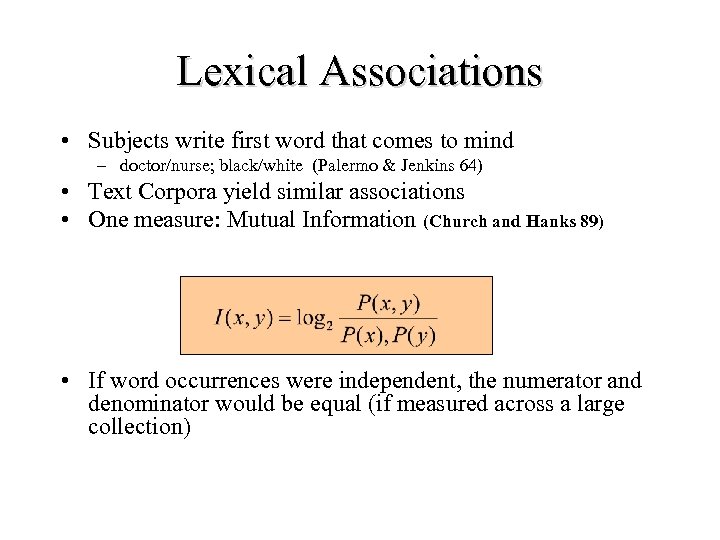

Lexical Associations
• Subjects write first word that comes to mind
  – doctor/nurse; black/white (Palermo & Jenkins 64)
• Text Corpora yield similar associations
• One measure: Mutual Information (Church and Hanks 89)
• If word occurrences were independent, the numerator and denominator would be equal (if measured across a large collection)
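The formula this slide refers to was an image. For reference, the mutual information measure associated with Church and Hanks is usually written as below, where P(x) and P(y) are the probabilities of the individual words and P(x, y) is the probability of seeing them together (the symbols are as commonly used, not taken from the slide).

```latex
I(x, y) = \log_2 \frac{P(x, y)}{P(x)\, P(y)}
```

If x and y are independent then P(x, y) = P(x) P(y), the ratio is 1, and I(x, y) = 0. Values well above 0 suggest the words co-occur more often than chance would predict.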

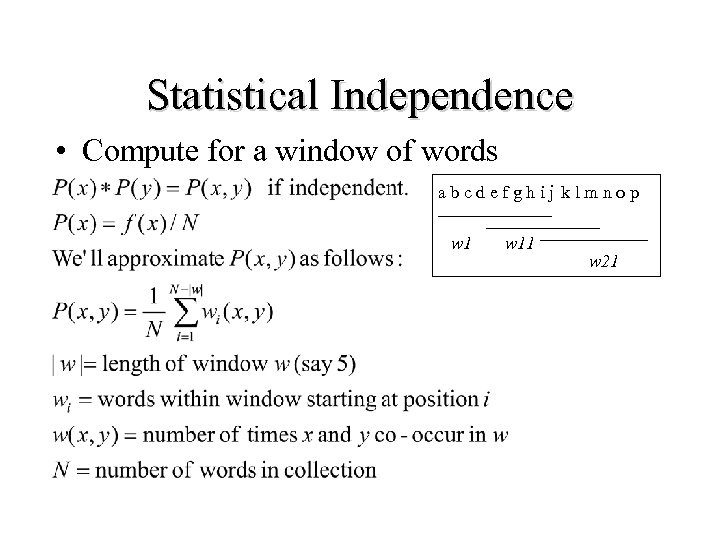

Statistical Independence
• Compute for a window of words
[Figure: a window sliding over the token sequence a b c d e f g h i j k l m n o p, with window labels w11 and w21]
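A rough sketch of computing such a score over a window of words follows. The function name, the `span` parameter, the use of simple relative frequencies as probability estimates, and the toy sentence are all assumptions for illustration; the estimate used in the cited paper differs in detail.

```python
import math
from collections import Counter

def pmi_table(tokens, span=3):
    """Score ordered pairs (x, y) where y is one of the `span` tokens after x.

    Probabilities are plain relative frequencies over N = len(tokens),
    which is an illustrative simplification.
    """
    n = len(tokens)
    unigram = Counter(tokens)
    pair = Counter()
    for i, x in enumerate(tokens):
        for y in tokens[i + 1 : i + 1 + span]:
            pair[(x, y)] += 1
    return {
        (x, y): math.log2((c / n) / ((unigram[x] / n) * (unigram[y] / n)))
        for (x, y), c in pair.items()
    }

tokens = "the doctor saw the nurse and the doctor called the nurse".split()
scores = pmi_table(tokens, span=3)
```

In this toy text ("doctor", "nurse") scores higher than ("the", "nurse") even though both pairs co-occur twice, because "the" is more frequent overall.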

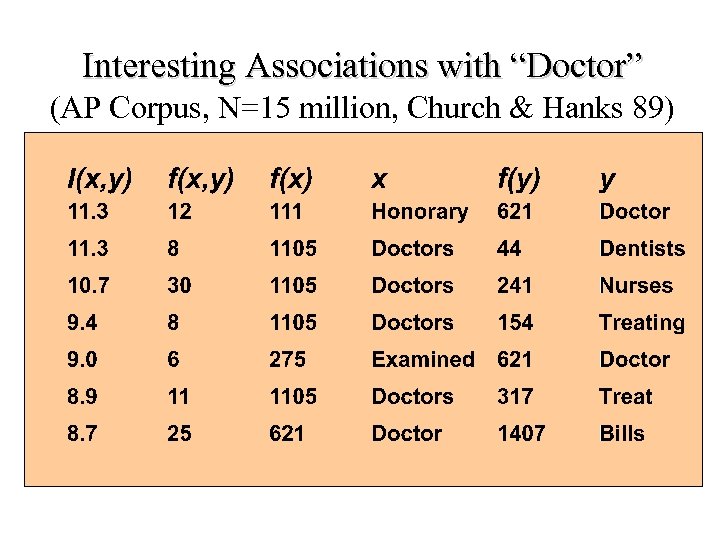

Interesting Associations with “Doctor” (AP Corpus, N=15 million, Church & Hanks 89)

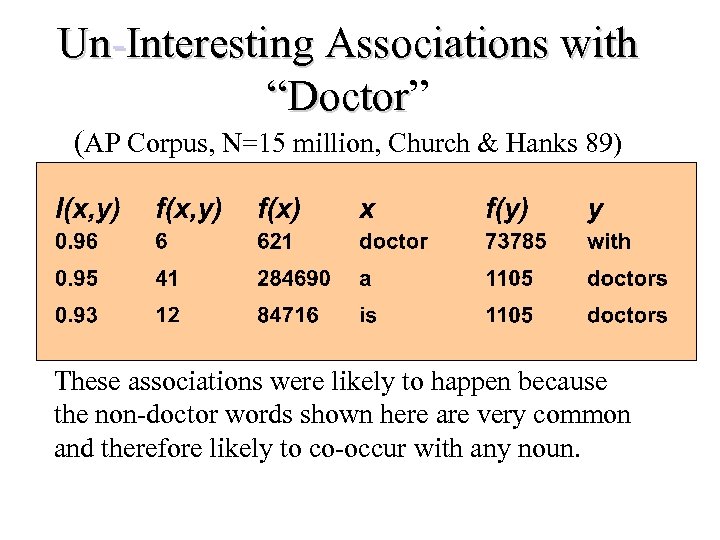

Un-Interesting Associations with “Doctor” (AP Corpus, N=15 million, Church & Hanks 89)
These associations were likely to happen because the non-doctor words shown here are very common and therefore likely to co-occur with any noun.

Associations Are Important Because…
• We may be able to discover phrases that should be treated as a single word, e.g. “data mining”.
• We may be able to automatically discover synonyms, e.g. “Bike” and “Bicycle”.

Content Analysis Summary
• Content Analysis: transforming raw text into more computationally useful forms
• Words in text collections exhibit interesting statistical properties
  – Word frequencies have a Zipf distribution
  – Word co-occurrences exhibit dependencies
• Text documents are transformed to vectors
  – Pre-processing includes tokenization, stemming, collocations/phrases

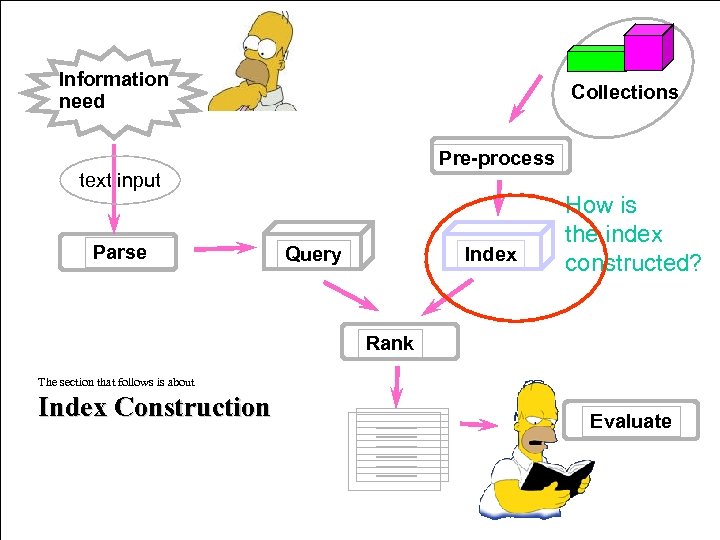

[Figure: the IR system overview diagram again (Information need, text input, Parse, Query, Collections, Pre-process, Index, Rank, Evaluate), annotated “How is the index constructed?”]
The section that follows is about Index Construction.

Inverted Index
• This is the primary data structure for text indexes
• Main Idea:
  – Invert documents into a big index
• Basic steps:
  – Make a “dictionary” of all the tokens in the collection
  – For each token, list all the docs it occurs in.
  – Do a few things to reduce redundancy in the data structure

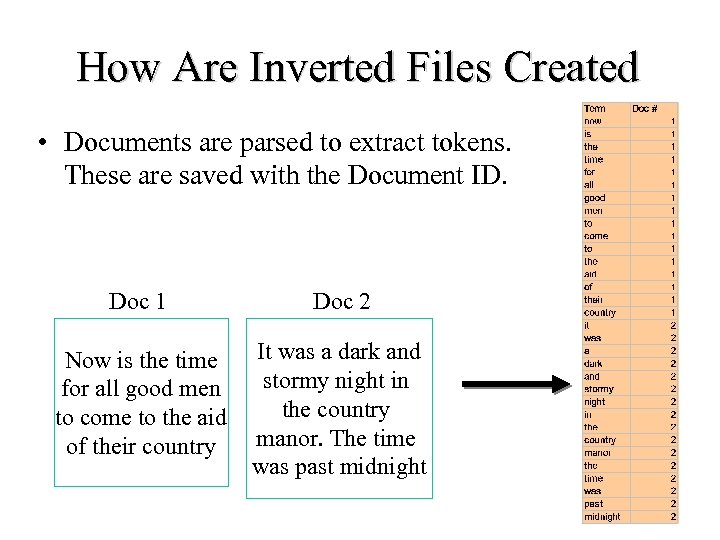

How Inverted Files are Created
• Documents are parsed to extract tokens. These are saved with the Document ID.
Doc 1: Now is the time for all good men to come to the aid of their country
Doc 2: It was a dark and stormy night in the country manor. The time was past midnight

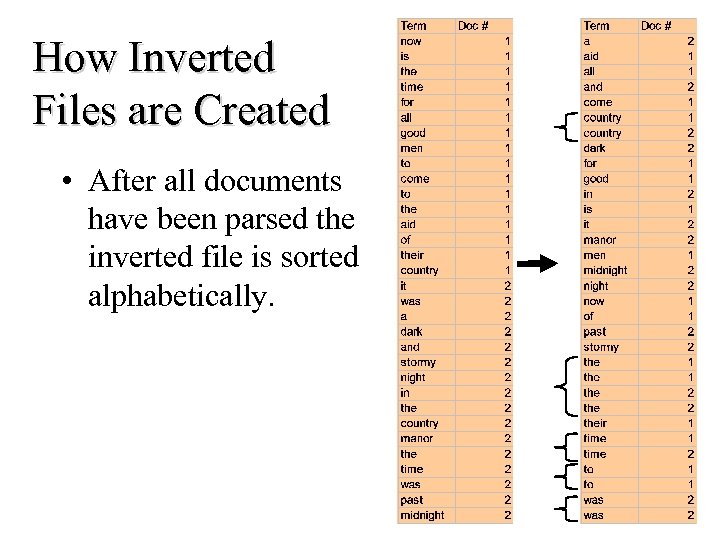

How Inverted Files are Created • After all documents have been parsed the inverted file is sorted alphabetically.

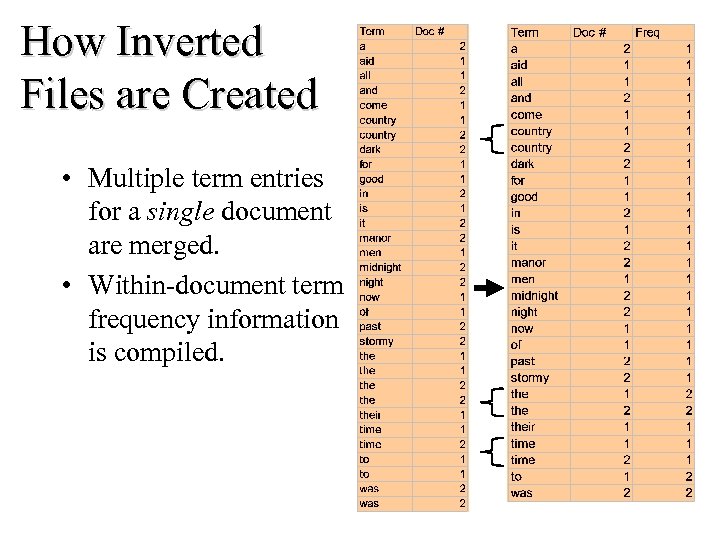

How Inverted Files are Created • Multiple term entries for a single document are merged. • Within-document term frequency information is compiled.

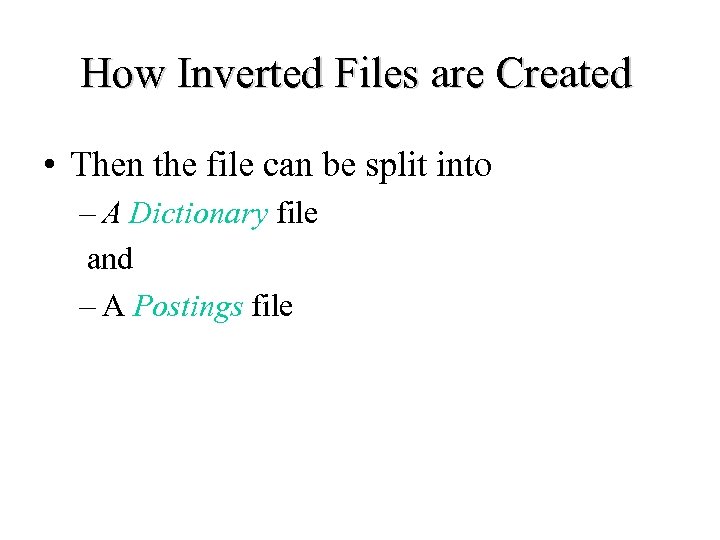

How Inverted Files are Created • Then the file can be split into – A Dictionary file and – A Postings file

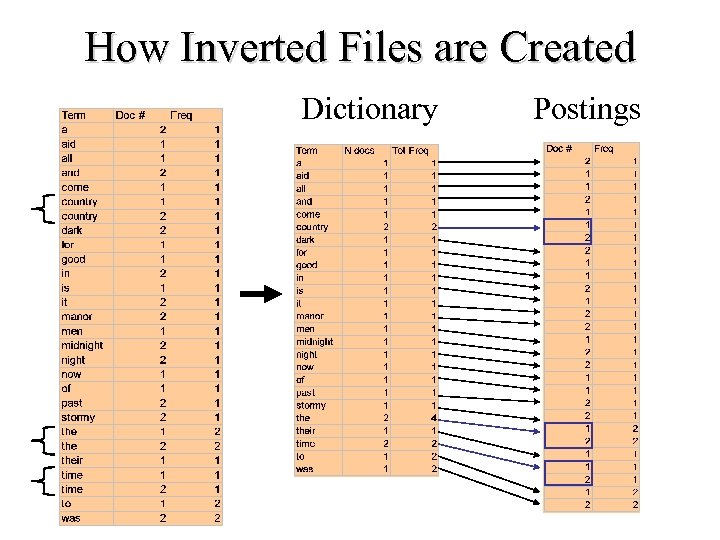

How Inverted Files are Created
[Figure: the resulting Dictionary and Postings files]
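The construction steps on the preceding slides can be sketched as follows, using the two example documents. The tokenizer (lowercase alphabetic runs) and the in-memory layout of the dictionary and postings are assumptions; a real system would store both on disk in a more compact form.

```python
from collections import Counter, defaultdict
import re

docs = {
    1: "Now is the time for all good men to come to the aid of their country",
    2: "It was a dark and stormy night in the country manor. "
       "The time was past midnight",
}

def build_inverted_index(docs):
    """Return (dictionary, postings) following the steps on the slides."""
    # Step 1: parse documents into (token, doc_id) pairs.
    pairs = [(tok, doc_id)
             for doc_id, text in docs.items()
             for tok in re.findall(r"[a-z]+", text.lower())]
    # Step 2: sort alphabetically (ties broken by doc_id).
    pairs.sort()
    # Step 3: merge repeated entries, keeping within-document frequency.
    tf = Counter(pairs)
    postings = defaultdict(list)
    for (tok, doc_id), freq in sorted(tf.items()):
        postings[tok].append((doc_id, freq))
    # Step 4: split off a dictionary holding each term's document count.
    dictionary = {tok: len(plist) for tok, plist in postings.items()}
    return dictionary, dict(postings)

dictionary, postings = build_inverted_index(docs)
```

Here `postings["country"]` holds one entry per document, and `postings["to"]` records that "to" occurs twice in Doc 1.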

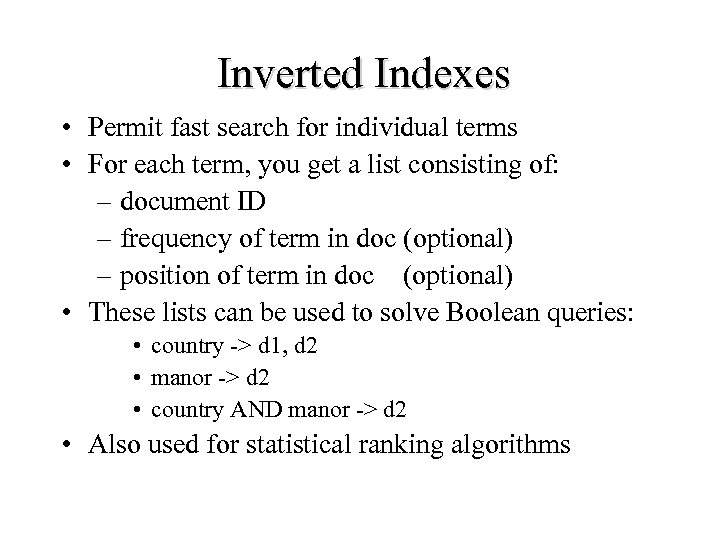

Inverted Indexes
• Permit fast search for individual terms
• For each term, you get a list consisting of:
  – document ID
  – frequency of term in doc (optional)
  – position of term in doc (optional)
• These lists can be used to solve Boolean queries:
  • country -> d1, d2
  • manor -> d2
  • country AND manor -> d2
• Also used for statistical ranking algorithms

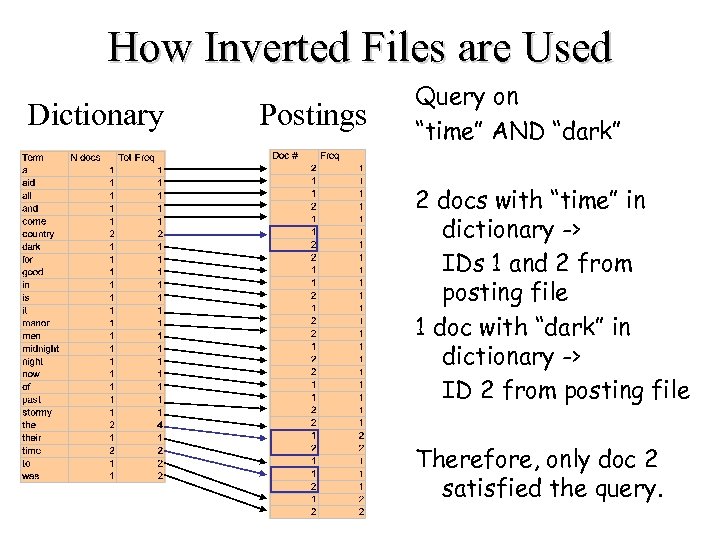

How Inverted Files are Used
Query on “time” AND “dark”
• 2 docs with “time” in dictionary -> IDs 1 and 2 from posting file
• 1 doc with “dark” in dictionary -> ID 2 from posting file
Therefore, only doc 2 satisfies the query.
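Answering an AND query amounts to intersecting two postings lists. A minimal sketch, assuming each postings list is a sorted list of document IDs (the hard-coded `postings` dict below is written by hand from the two example documents):

```python
def intersect(p1, p2):
    """Intersect two sorted lists of document IDs with a linear merge."""
    i = j = 0
    result = []
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            result.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1
        else:
            j += 1
    return result

# Hand-written postings for the two example documents.
postings = {"time": [1, 2], "dark": [2], "country": [1, 2], "manor": [2]}
hits = intersect(postings["time"], postings["dark"])
```

Because both lists are sorted, the merge touches each entry at most once, which is one reason postings are kept in document-ID order.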

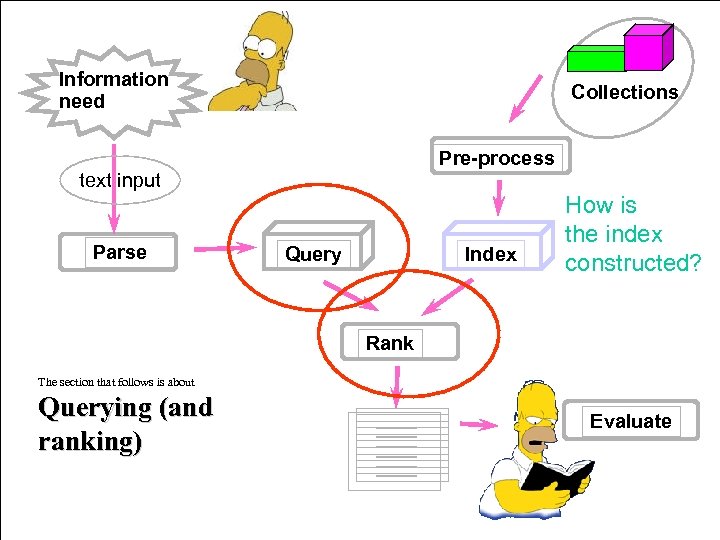

[Figure: the IR system overview diagram again (Information need, text input, Parse, Query, Collections, Pre-process, Index, Rank, Evaluate), annotated “How is the index constructed?”]
The section that follows is about Querying (and ranking).

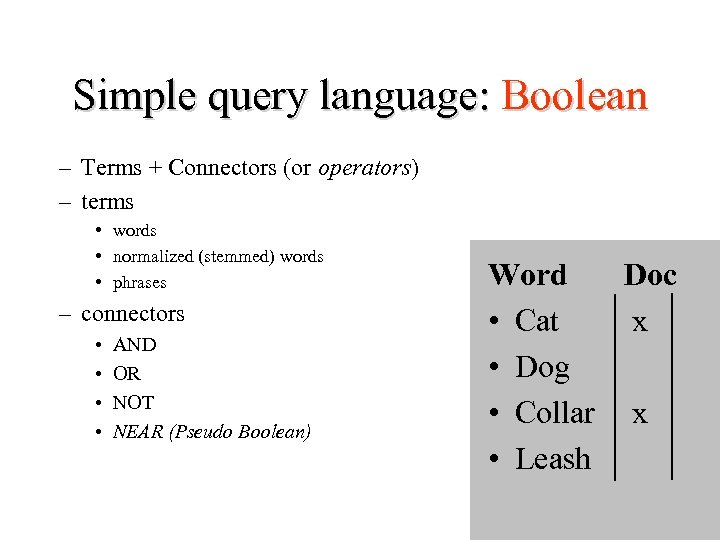

Simple query language: Boolean
– Terms + Connectors (or operators)
– terms
  • words
  • normalized (stemmed) words
  • phrases
– connectors
  • AND
  • OR
  • NOT
  • NEAR (Pseudo Boolean)
[Table: columns Word and Doc; rows Cat, Dog, Collar, Leash; Cat and Collar are marked x]

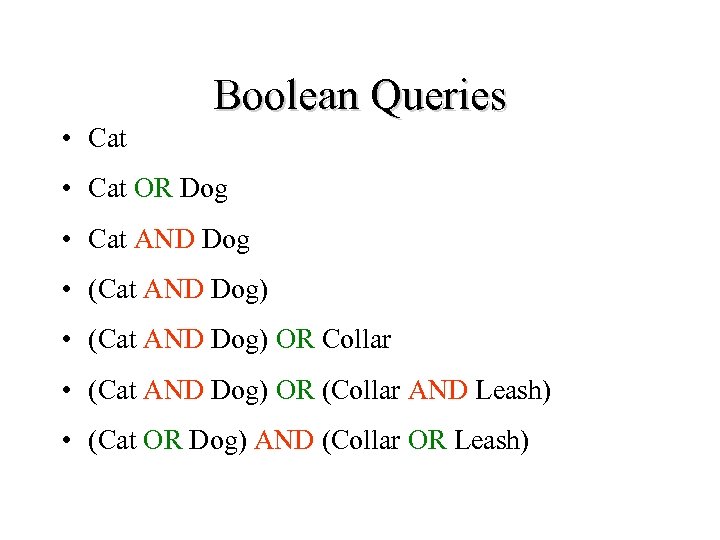

Boolean Queries
• Cat OR Dog
• Cat AND Dog
• (Cat AND Dog) OR Collar
• (Cat AND Dog) OR (Collar AND Leash)
• (Cat OR Dog) AND (Collar OR Leash)

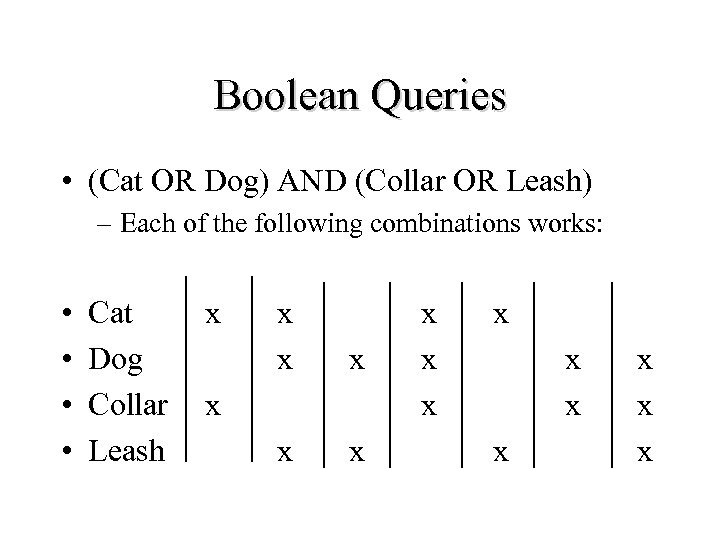

Boolean Queries
• (Cat OR Dog) AND (Collar OR Leash)
  – Each of the following combinations works:
[Table: combinations of Cat, Dog, Collar and Leash, marked with x]

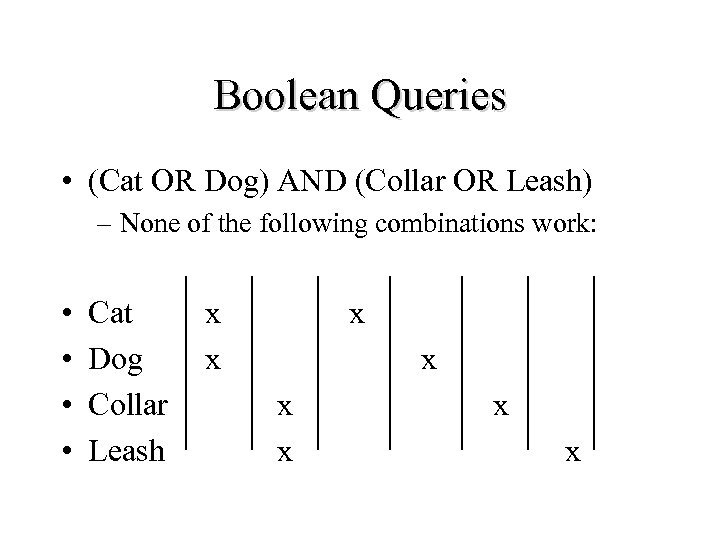

Boolean Queries
• (Cat OR Dog) AND (Collar OR Leash)
  – None of the following combinations work:
[Table: combinations of Cat, Dog, Collar and Leash, marked with x]
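Which combinations satisfy the query can be checked by brute force. The sketch below enumerates all sixteen presence/absence patterns of the four terms and evaluates (Cat OR Dog) AND (Collar OR Leash) on each; the function and variable names are made up for the example.

```python
from itertools import product

TERMS = ("cat", "dog", "collar", "leash")

def matches(doc_terms):
    """Evaluate (Cat OR Dog) AND (Collar OR Leash) on a set of terms."""
    return (("cat" in doc_terms or "dog" in doc_terms)
            and ("collar" in doc_terms or "leash" in doc_terms))

accepted, rejected = [], []
for flags in product([False, True], repeat=len(TERMS)):
    present = {t for t, f in zip(TERMS, flags) if f}
    (accepted if matches(present) else rejected).append(present)
```

A document needs at least one animal term and at least one accessory term, so a document containing only Cat and Dog is rejected while one containing Cat and Collar is accepted.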

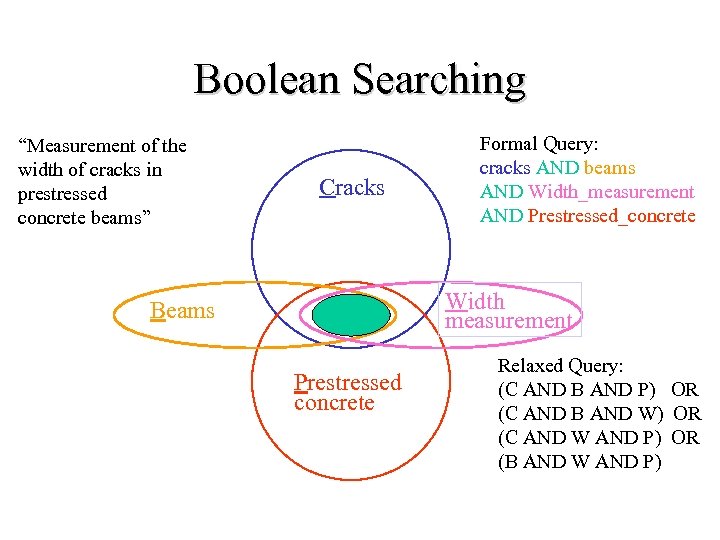

Boolean Searching
“Measurement of the width of cracks in prestressed concrete beams”
Concepts: Cracks (C), Beams (B), Width measurement (W), Prestressed concrete (P)
Formal Query: cracks AND beams AND Width_measurement AND Prestressed_concrete
Relaxed Query (any three of the four concepts): (C AND B AND P) OR (C AND B AND W) OR (C AND W AND P) OR (B AND W AND P)

Ordering of Retrieved Documents
• Pure Boolean has no ordering
• In practice:
  – order chronologically
  – order by total number of “hits” on query terms
    • What if one term has more hits than others?
    • Is it better to have one of each term or many of one term?

Boolean Model
• Advantages
  – simple queries are easy to understand
  – relatively easy to implement
• Disadvantages
  – difficult to specify what is wanted
  – too much returned, or too little
  – ordering not well determined
• Dominant language in commercial Information Retrieval systems until the WWW
Since the Boolean model is limited, let’s consider a generalization…

Vector Model
• Documents are represented as “bags of words”
• Represented as vectors when used computationally
  – A vector is like an array of floating-point numbers
  – Has direction and magnitude
  – Each vector holds a place for every term in the collection
  – Therefore, most vectors are sparse
• Smithers secretly loves Monty Burns
• Monty Burns secretly loves Smithers
Both map to… [Burns, loves, Monty, secretly, Smithers]
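A short sketch of the bag-of-words mapping, showing that the two sentences above become the same vector once word order is discarded. Lowercasing and whitespace tokenization are assumptions of the example.

```python
from collections import Counter

def bag_of_words(text):
    """Count tokens, ignoring order (lowercased, split on whitespace)."""
    return Counter(text.lower().split())

a = bag_of_words("Smithers secretly loves Monty Burns")
b = bag_of_words("Monty Burns secretly loves Smithers")

# One vector position per term in the (tiny) collection vocabulary.
vocabulary = sorted(set(a) | set(b))
vector_a = [a[t] for t in vocabulary]
vector_b = [b[t] for t in vocabulary]
```

With a realistic vocabulary of many thousands of terms, almost every position would be 0, which is why sparse representations are used in practice.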

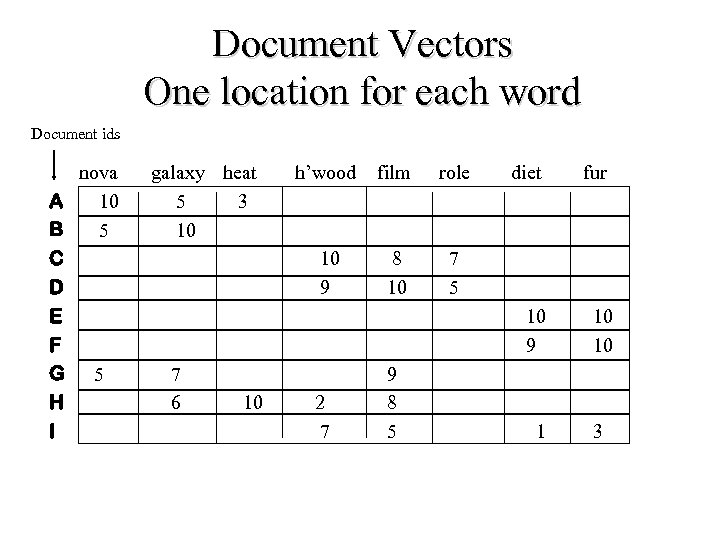

Document Vectors
One location for each word
[Table: document vectors for document ids A to I over the terms nova, galaxy, heat, h’wood, film, role, diet, fur; each cell holds a term count]

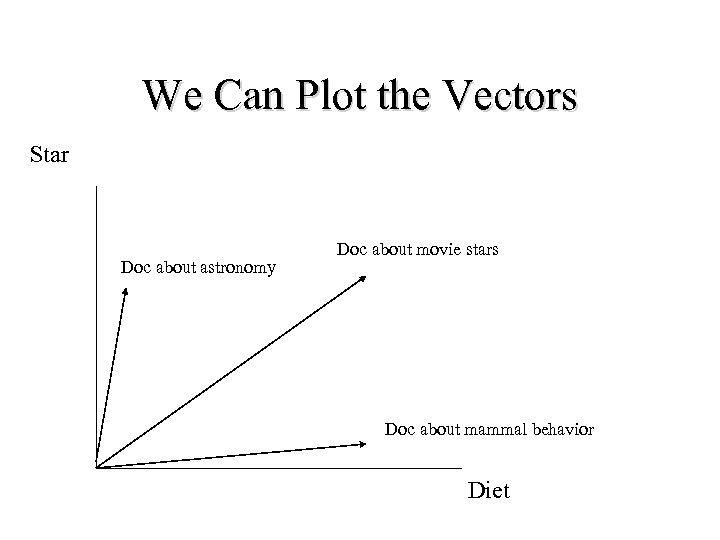

We Can Plot the Vectors
[Figure: documents plotted on the axes Star and Diet; points labelled “Doc about astronomy”, “Doc about movie stars”, “Doc about mammal behavior”]

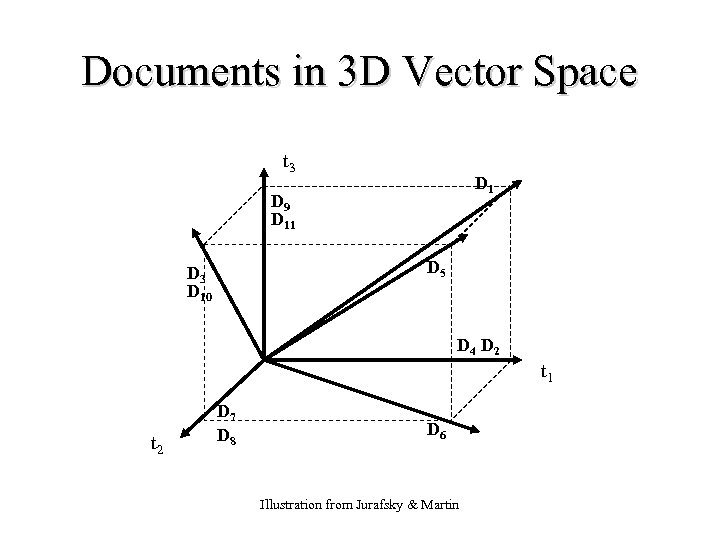

Documents in 3D Vector Space
[Figure: documents D1 to D11 plotted against the term axes t1, t2, t3. Illustration from Jurafsky & Martin]

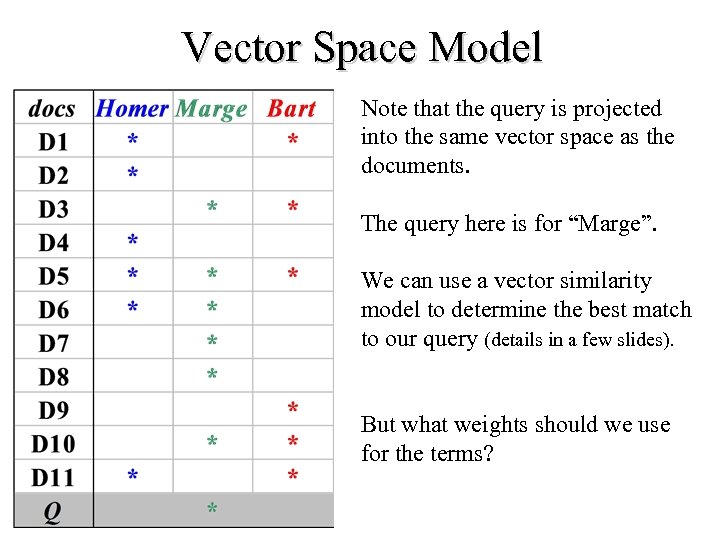

Vector Space Model Note that the query is projected into the same vector space as the documents. The query here is for “Marge”. We can use a vector similarity model to determine the best match to our query (details in a few slides). But what weights should we use for the terms?

Assigning Weights to Terms
• Binary Weights
• Raw term frequency
• tf x idf
  – Recall the Zipf distribution
  – Want to weight terms highly if they are
    • frequent in relevant documents … BUT
    • infrequent in the collection as a whole

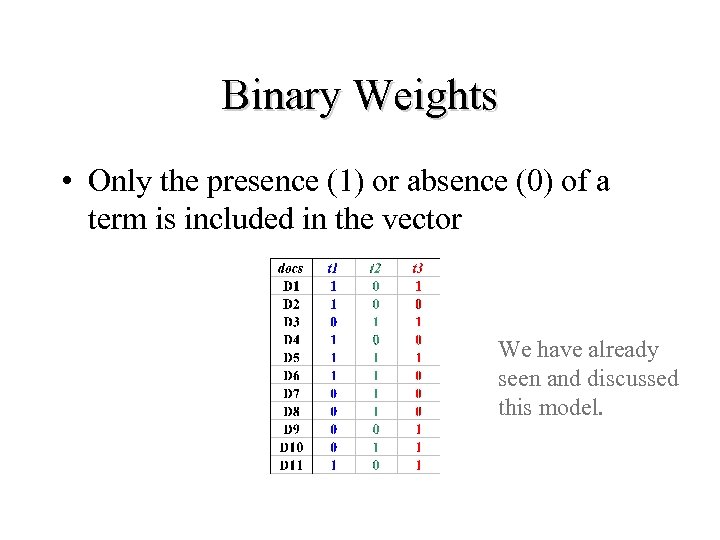

Binary Weights
• Only the presence (1) or absence (0) of a term is included in the vector
We have already seen and discussed this model.

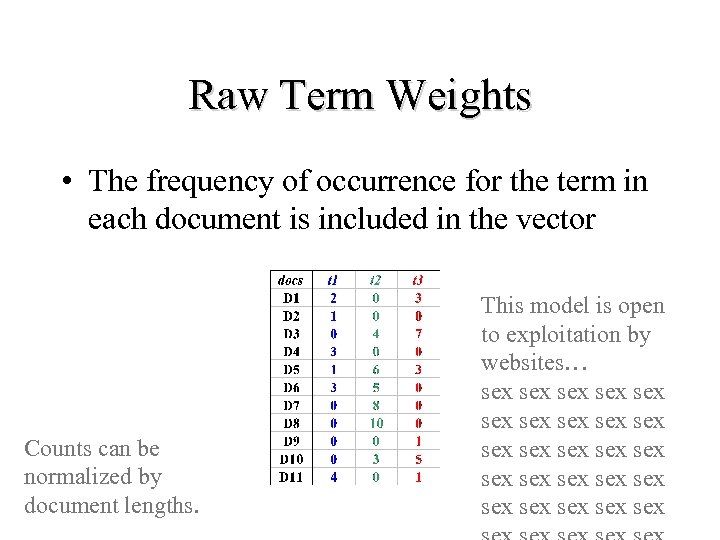

Raw Term Weights
• The frequency of occurrence for the term in each document is included in the vector
Counts can be normalized by document lengths.
This model is open to exploitation by websites… sex sex sex sex sex sex sex

tf * idf Weights
• tf * idf measure:
  – term frequency (tf)
  – inverse document frequency (idf): a way to deal with the problems of the Zipf distribution
• Goal: assign a tf * idf weight to each term in each document

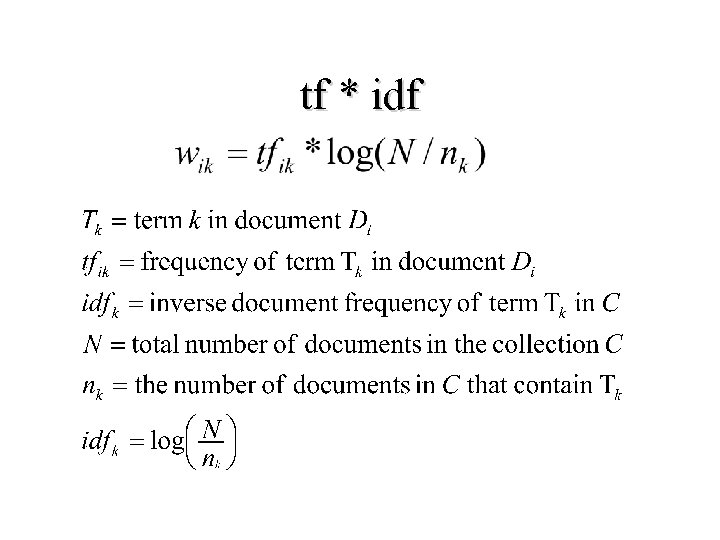

tf * idf
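The formula on this slide was an image. One common way of writing the tf * idf weight is given below as a reference point; it may differ in detail from the variant on the original slide. Here tf_ik is the frequency of term k in document i, N the number of documents in the collection, and n_k the number of documents that contain term k.

```latex
w_{ik} = tf_{ik} \cdot \log\!\left(\frac{N}{n_k}\right)
```

A term that appears in every document has n_k = N, so its idf factor is log(1) = 0 and it contributes nothing to the weight, however often it occurs.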

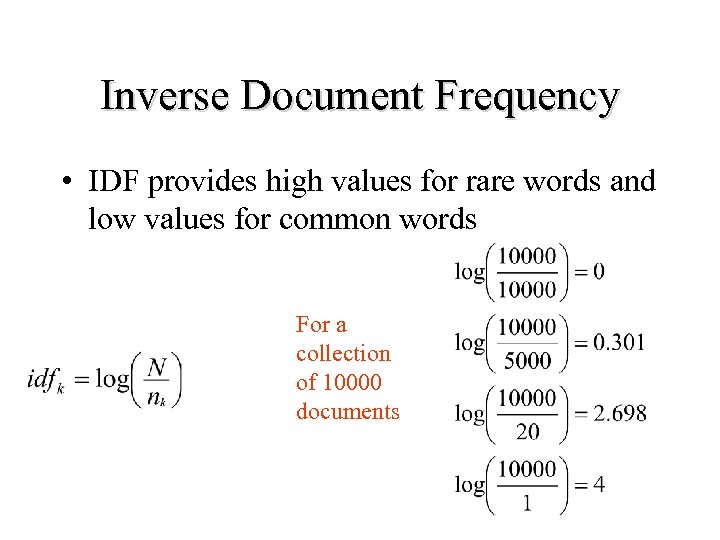

Inverse Document Frequency
• IDF provides high values for rare words and low values for common words
For a collection of 10000 documents
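To get a feel for the range of IDF values in a 10000-document collection, here is a small sketch. It assumes the idf = log(N / n) form with a base-10 logarithm; the four document frequencies are picked for illustration and are not taken from the slide.

```python
import math

def idf(n_docs, doc_freq):
    """Inverse document frequency, assuming a base-10 logarithm."""
    return math.log10(n_docs / doc_freq)

N = 10_000
# Document frequencies chosen for illustration: rare ... ubiquitous.
table = {df: round(idf(N, df), 3) for df in (1, 20, 5000, 10_000)}
```

A word found in a single document gets the maximum value of 4, while a word found in every document gets 0.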

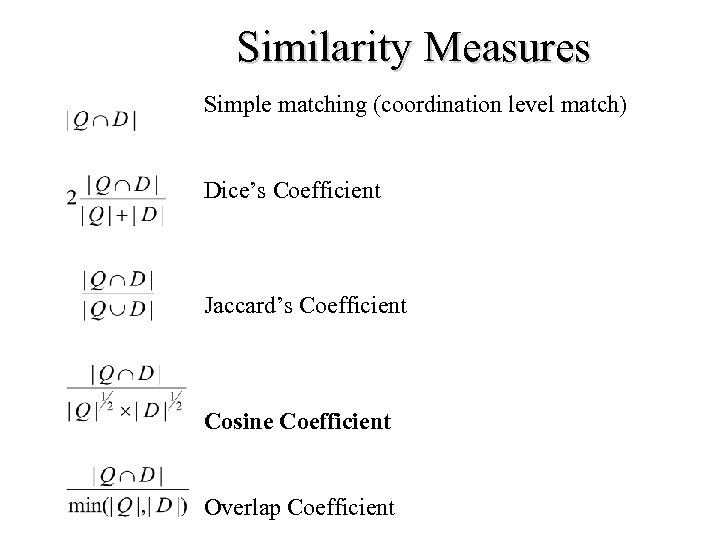

Similarity Measures
• Simple matching (coordination level match)
• Dice’s Coefficient
• Jaccard’s Coefficient
• Cosine Coefficient
• Overlap Coefficient
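The formulas for these measures were in the slide image. Their usual set-based forms, which correspond to binary term weights, can be sketched as follows; the `similarities` function and the example sets are made up for this note.

```python
import math

def similarities(x: set, y: set) -> dict:
    """Set-based forms of the five measures; assumes both sets are non-empty."""
    common = len(x & y)
    return {
        "simple_matching": common,
        "dice": 2 * common / (len(x) + len(y)),
        "jaccard": common / len(x | y),
        "cosine": common / math.sqrt(len(x) * len(y)),
        "overlap": common / min(len(x), len(y)),
    }

doc = {"cat", "dog", "collar"}   # terms in a made-up document
query = {"dog", "leash"}         # terms in a made-up query
scores = similarities(doc, query)
```

All five start from the number of shared terms and differ only in how they normalize for the sizes of the two sets.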

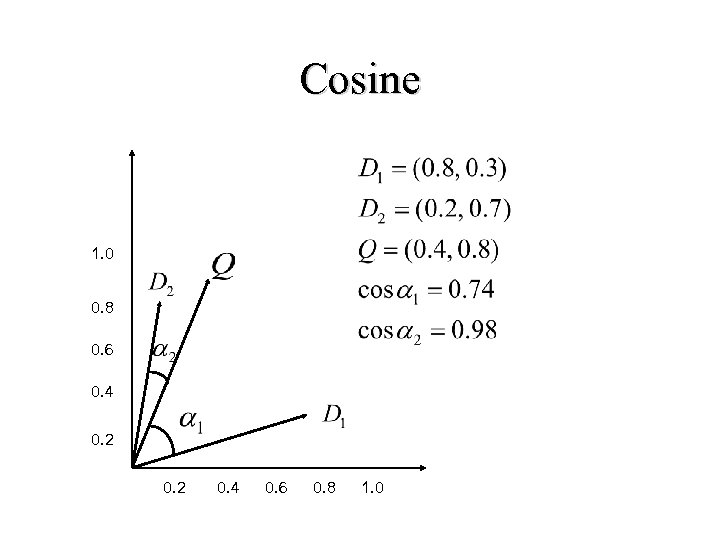

Cosine
[Figure: plot illustrating the cosine measure, with axis ticks from 0.2 to 1.0]
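For weighted vectors, the cosine measure is the dot product divided by the product of the vector lengths. A minimal sketch, with a two-term query and two documents whose weights are invented for the example:

```python
import math

def cosine(u, v):
    """Cosine of the angle between two equal-length weight vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0  # convention chosen here for all-zero vectors
    return dot / (norm_u * norm_v)

query = [0.4, 0.8]  # invented weights for two terms
d1 = [0.8, 0.3]
d2 = [0.2, 0.7]
ranking = sorted([("d1", cosine(query, d1)), ("d2", cosine(query, d2))],
                 key=lambda pair: pair[1], reverse=True)
```

Because the measure depends only on the angle, d2 ranks above d1 here: it points in nearly the same direction as the query even though its weights are smaller.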

Problems with Vector Space
• There is no real theoretical basis for the assumption of a term space
  – it is more for visualization than having any real basis
  – most similarity measures work about the same regardless of model
• Terms are not really orthogonal dimensions
  – Terms are not independent of all other terms

Probabilistic Models
• Rigorous formal model attempts to predict the probability that a given document will be relevant to a given query
• Ranks retrieved documents according to this probability of relevance (Probability Ranking Principle)
• Rely on accurate estimates of probabilities

Relevance Feedback
• Main Idea:
  – Modify existing query based on relevance judgements
    • Query Expansion: extract terms from relevant documents and add them to the query
    • Term Re-weighting: and/or re-weight the terms already in the query
  – Two main approaches:
    • Automatic (pseudo-relevance feedback)
    • Users select relevant documents
  – Users/system select terms from an automatically generated list

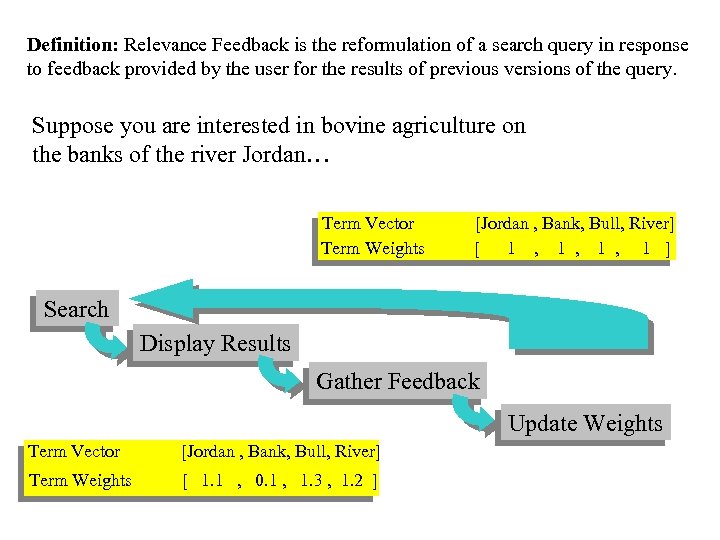

Definition: Relevance Feedback is the reformulation of a search query in response to feedback provided by the user for the results of previous versions of the query.
Suppose you are interested in bovine agriculture on the banks of the river Jordan…
Term Vector [Jordan, Bank, Bull, River]
Term Weights [1, 1, 1]
Loop: Search, Display Results, Gather Feedback, Update Weights
Term Vector [Jordan, Bank, Bull, River]
Term Weights [1.1, 0.1, 1.3, 1.2]

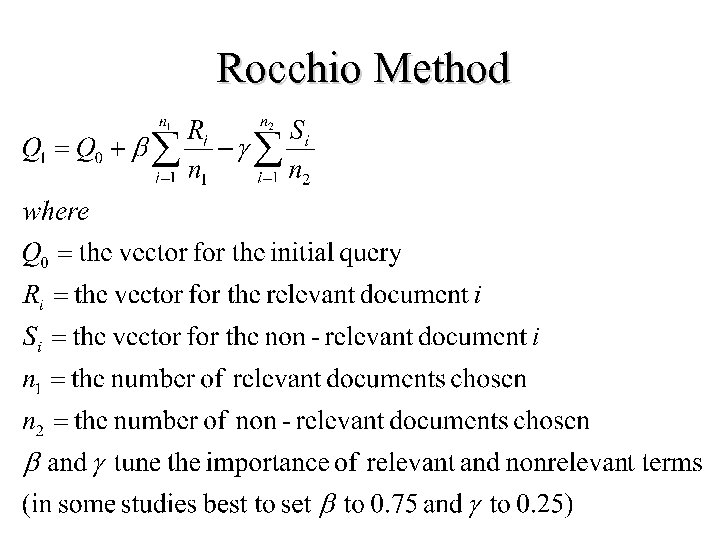

Rocchio Method
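The formula on this slide was an image. The Rocchio update is commonly written as below; the symbols are the usual ones and are assumed here rather than read from the slide. Q is the original query vector, R_i the vectors of the n_1 documents judged relevant, S_i the vectors of the n_2 documents judged non-relevant, and α, β, γ are tuning constants.

```latex
Q' = \alpha\, Q
   + \frac{\beta}{n_1} \sum_{i=1}^{n_1} R_i
   - \frac{\gamma}{n_2} \sum_{i=1}^{n_2} S_i
```

The new query moves toward the centroid of the relevant documents and away from the centroid of the non-relevant ones.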

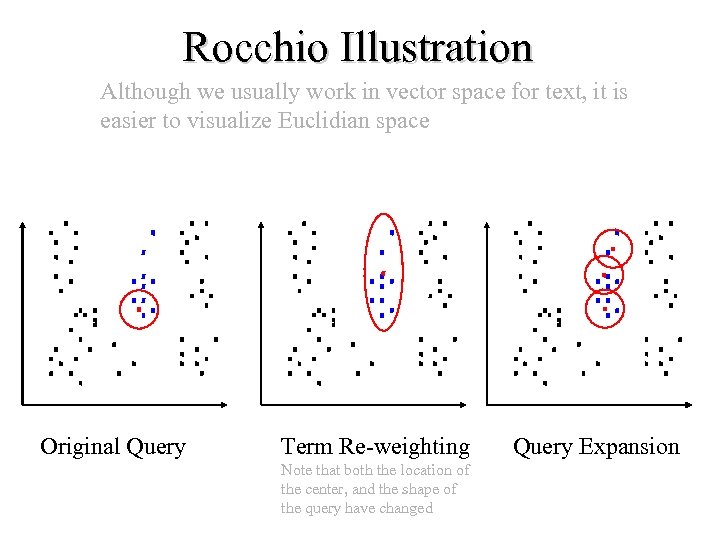

Rocchio Illustration
Although we usually work in vector space for text, it is easier to visualize Euclidean space.
[Figure panels: Original Query, Term Re-weighting, Query Expansion]
Note that both the location of the center and the shape of the query have changed.

Rocchio Method
• Rocchio automatically
  – re-weights terms
  – adds in new terms (from relevant docs)
    • have to be careful when using negative terms
    • Rocchio is not a machine learning algorithm
• Most methods perform similarly
  – results heavily dependent on test collection
• Machine learning methods are proving to work better than standard IR approaches like Rocchio
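One Rocchio-style update can be sketched on plain lists of term weights. This is a sketch under stated assumptions: the update used is new = alpha * query + beta * (centroid of relevant docs) - gamma * (centroid of non-relevant docs), the default constants are commonly quoted values rather than anything from the slides, clipping negative weights to zero is one way of being careful with negative terms, and the document vectors are invented.

```python
def rocchio(query, relevant, nonrelevant, alpha=1.0, beta=0.75, gamma=0.15):
    """Return the re-weighted query after one round of feedback."""
    def centroid(vectors):
        if not vectors:
            return [0.0] * len(query)
        return [sum(col) / len(vectors) for col in zip(*vectors)]

    rel, non = centroid(relevant), centroid(nonrelevant)
    # Clip at zero so no term ends up with a negative weight.
    return [max(0.0, alpha * q + beta * r - gamma * s)
            for q, r, s in zip(query, rel, non)]

# Term order: [Jordan, Bank, Bull, River]; document vectors are invented.
query = [1.0, 1.0, 1.0, 1.0]
relevant = [[1.0, 0.0, 2.0, 1.0], [1.0, 0.0, 1.0, 2.0]]
nonrelevant = [[1.0, 3.0, 0.0, 0.0]]
new_query = rocchio(query, relevant, nonrelevant)
```

In this made-up round of feedback the weight of "Bank" drops below its starting value while "Bull" and "River" rise, the same direction of change as in the river Jordan example earlier.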

Using Relevance Feedback • Known to improve results • People don’t seem to like giving feedback!

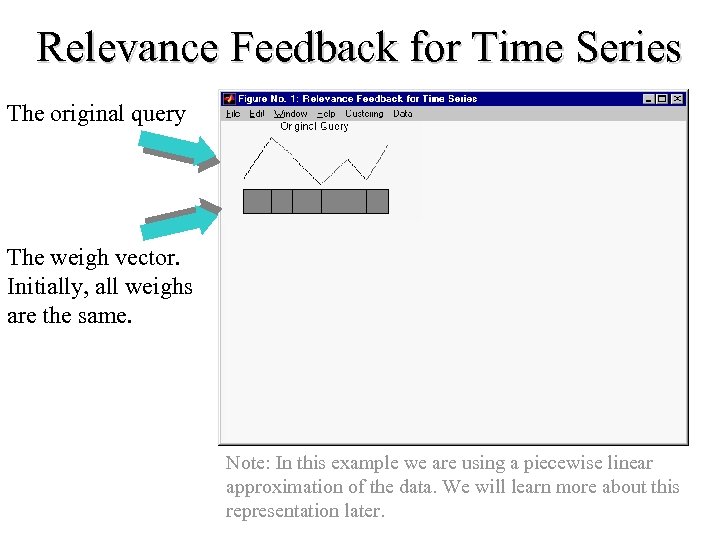

Relevance Feedback for Time Series
[Figure labels: the original query; the weight vector. Initially, all weights are the same.]
Note: In this example we are using a piecewise linear approximation of the data. We will learn more about this representation later.

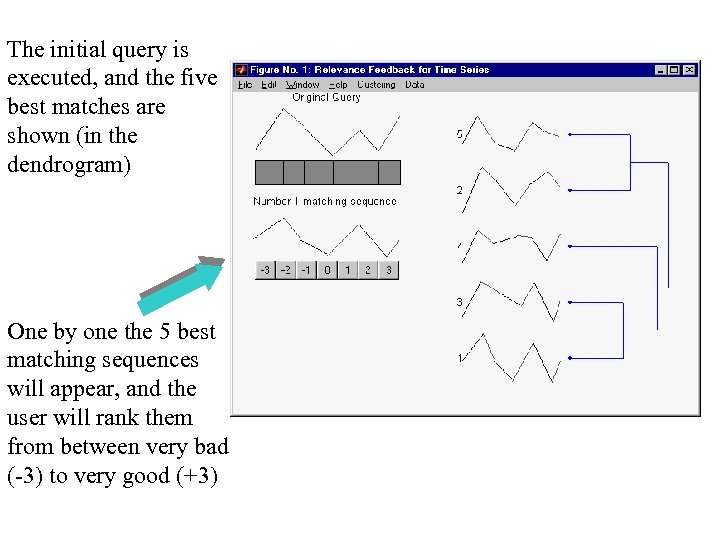

The initial query is executed, and the five best matches are shown (in the dendrogram).
One by one the five best-matching sequences will appear, and the user will rate them on a scale from very bad (-3) to very good (+3).

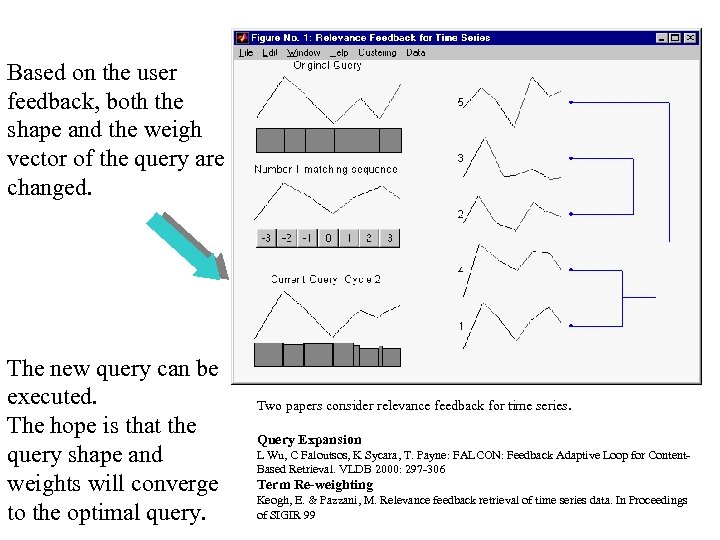

Based on the user feedback, both the shape and the weight vector of the query are changed. The new query can be executed. The hope is that the query shape and weights will converge to the optimal query.
Two papers consider relevance feedback for time series.
Query Expansion: L. Wu, C. Faloutsos, K. Sycara, T. Payne: FALCON: Feedback Adaptive Loop for Content-Based Retrieval. VLDB 2000: 297-306
Term Re-weighting: Keogh, E. & Pazzani, M. Relevance feedback retrieval of time series data. In Proceedings of SIGIR 99

Document Space has High Dimensionality
• What happens beyond 2 or 3 dimensions?
• Similarity still depends on how many tokens two documents share.
• More terms make it harder to understand which subsets of words are shared among similar documents.
• One approach to handling high dimensionality: Clustering

Text Clustering
• Finds overall similarities among groups of documents.
• Finds overall similarities among groups of tokens.
• Picks out some themes, ignores others.

Scatter/Gather (Hearst & Pedersen 95)
• Cluster sets of documents into general “themes”, like a table of contents (using K-means)
• Display the contents of the clusters by showing topical terms and typical titles
• User chooses subsets of the clusters and re-clusters the documents within
• Resulting new groups have different “themes”
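
The scatter and gather steps can be sketched on a toy collection. This is a minimal illustration, not the Scatter/Gather implementation: it assumes raw term counts as document vectors, plain K-means seeded with the first k documents (to keep the run deterministic), and hypothetical helper names (`tf_vector`, `kmeans`, `top_terms`).

```python
from collections import Counter


def tf_vector(text, vocab):
    """Raw term-frequency vector of a document over a fixed vocabulary."""
    counts = Counter(text.lower().split())
    return [counts[term] for term in vocab]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(vectors, k, iters=20):
    """Plain K-means; the first k vectors serve as initial centroids."""
    centroids = [list(v) for v in vectors[:k]]
    labels = [0] * len(vectors)
    for _ in range(iters):
        # Assignment step: each document goes to its nearest centroid.
        labels = [min(range(k), key=lambda c: sq_dist(v, centroids[c]))
                  for v in vectors]
        # Update step: each centroid becomes the mean of its members.
        for c in range(k):
            members = [v for v, l in zip(vectors, labels) if l == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids


def top_terms(centroid, vocab, n=3):
    """Topical terms of a cluster: the n heaviest centroid dimensions."""
    ranked = sorted(range(len(vocab)), key=lambda i: -centroid[i])
    return [vocab[i] for i in ranked[:n]]


docs = [
    "star film actor movie",
    "galaxy star telescope",
    "movie star film award",
    "star galaxy astronomy telescope",
]
vocab = sorted({w for d in docs for w in d.lower().split()})
vectors = [tf_vector(d, vocab) for d in docs]

# Scatter: cluster the whole collection and show topical terms per cluster.
labels, centroids = kmeans(vectors, k=2)
for c, centroid in enumerate(centroids):
    print(c, top_terms(centroid, vocab))

# Gather: the user picks cluster 1; only its documents are re-clustered.
chosen = {1}
subset = [v for v, l in zip(vectors, labels) if l in chosen]
sub_labels, _ = kmeans(subset, k=2)
print(sub_labels)
```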

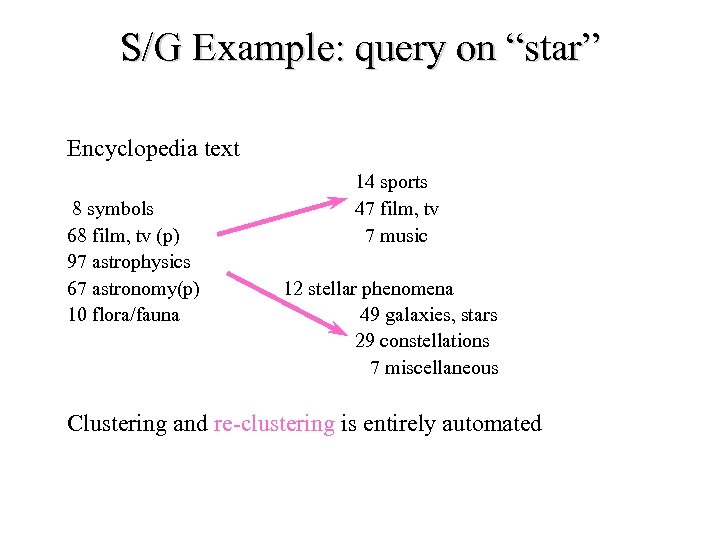

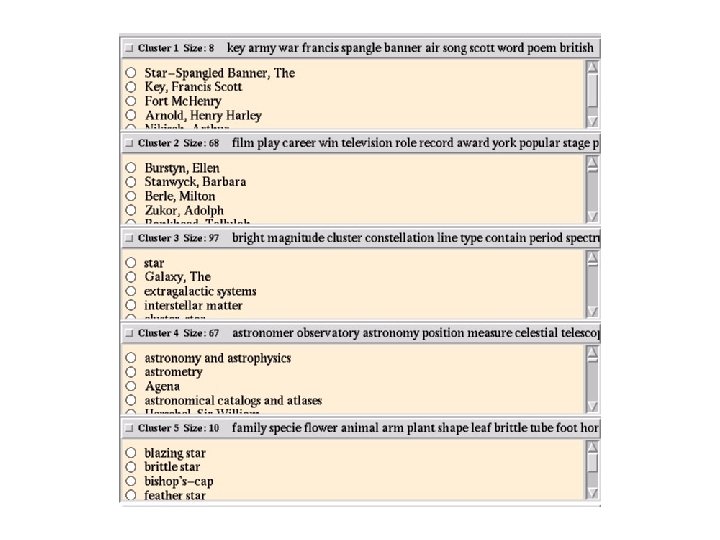

S/G Example: query on “star” (encyclopedia text)
Cluster sizes and labels: 8 symbols; 68 film, tv (p); 97 astrophysics; 67 astronomy (p); 10 flora/fauna; 14 sports; 47 film, tv; 7 music; 12 stellar phenomena; 49 galaxies, stars; 29 constellations; 7 miscellaneous.
Clustering and re-clustering is entirely automated.

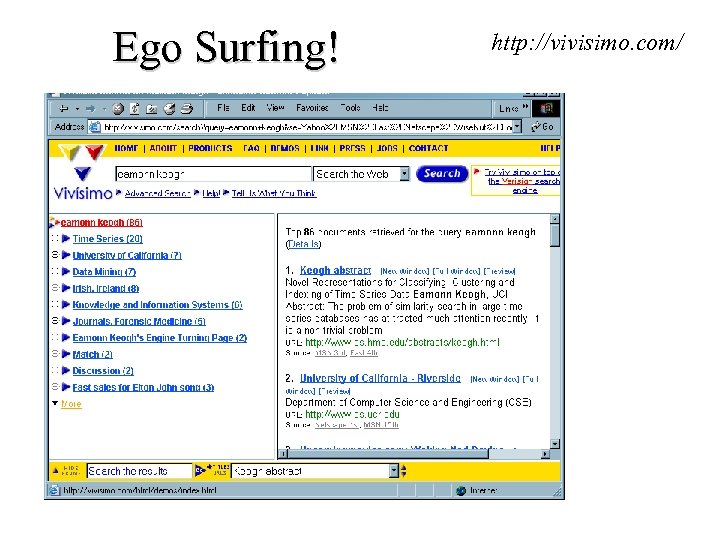

Ego Surfing! http://vivisimo.com/

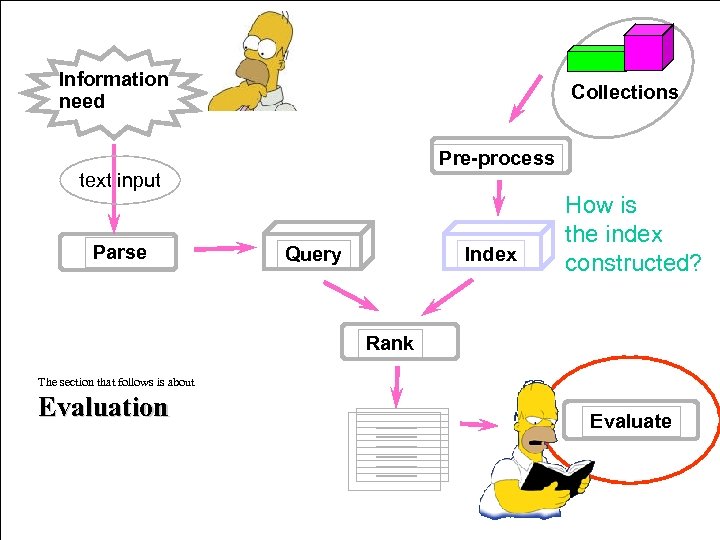

[Figure: IR pipeline diagram with the stages Information need, text input, Parse, Query, Collections, Pre-process, Index, Rank, Evaluate.] How is the index constructed? The section that follows is about Evaluation.

Evaluation
• Why Evaluate?
• What to Evaluate?
• How to Evaluate?

Why Evaluate?
• Determine if the system is desirable
• Make comparative assessments
• Others?

What to Evaluate?
• How much of the information need is satisfied.
• How much was learned about a topic.
• Incidental learning:
– How much was learned about the collection.
– How much was learned about other topics.
• How inviting the system is.

What to Evaluate?
What can be measured that reflects users’ ability to use the system? (Cleverdon 66)
– Coverage of Information
– Form of Presentation
– Effort required/Ease of Use
– Time and Space Efficiency
– Effectiveness:
• Recall: proportion of relevant material actually retrieved
• Precision: proportion of retrieved material actually relevant
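
The two definitions translate directly into set arithmetic. A minimal sketch, assuming documents are identified by hashable IDs; the function name and the sample IDs are made up for illustration.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for one query.

    precision = |retrieved AND relevant| / |retrieved|
    recall    = |retrieved AND relevant| / |relevant|
    """
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Four documents retrieved, five relevant in the collection, two in common.
p, r = precision_recall({"d1", "d2", "d3", "d4"}, {"d2", "d4", "d7", "d8", "d9"})
print(p, r)  # 0.5 0.4
```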

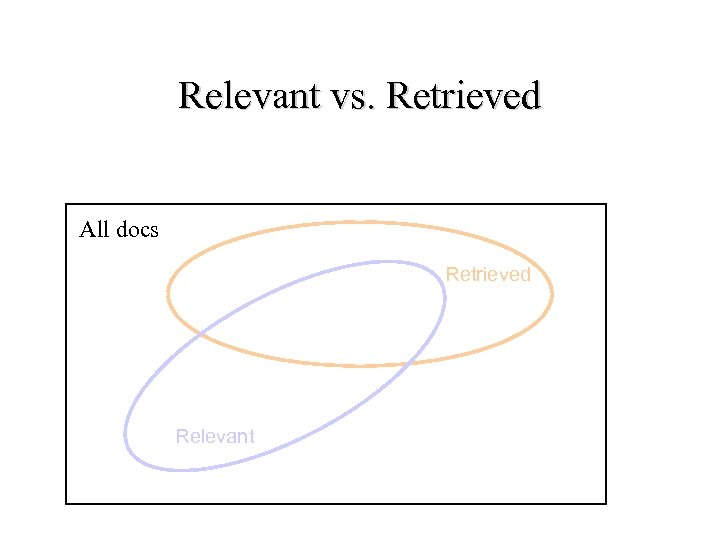

Relevant vs. Retrieved
[Figure labels: All docs, Retrieved, Relevant]

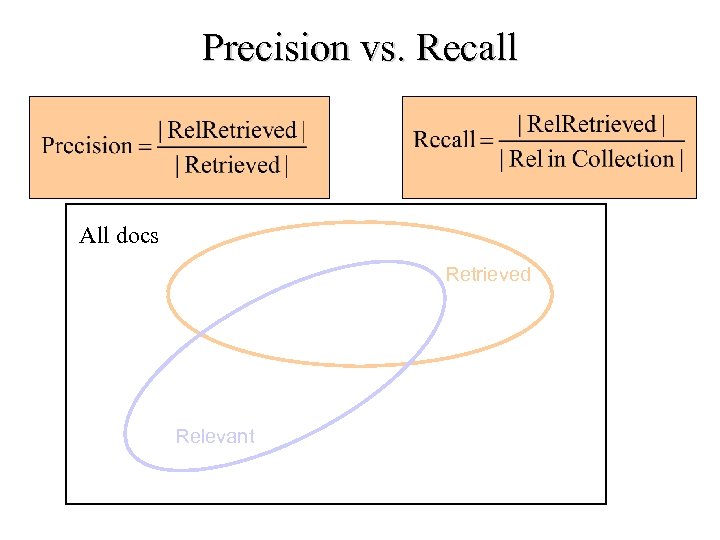

Precision vs. Recall
[Figure labels: All docs, Retrieved, Relevant]

Why Precision and Recall?
Intuition: Get as much good stuff as possible while at the same time getting as little junk as possible.

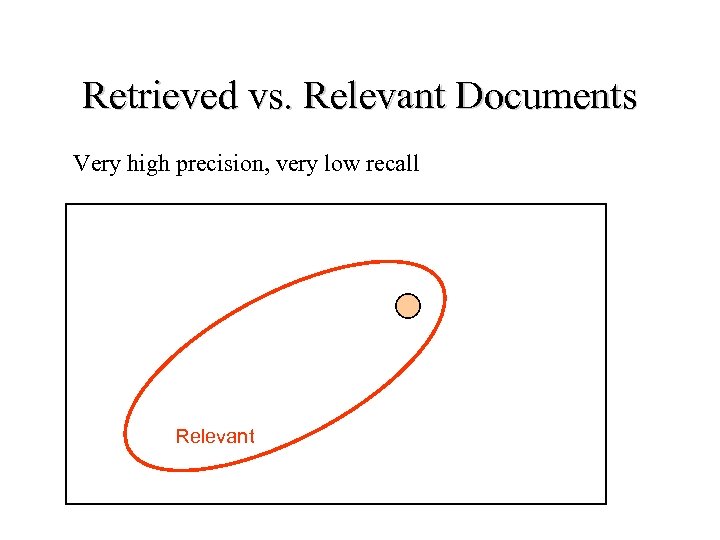

Retrieved vs. Relevant Documents
Very high precision, very low recall

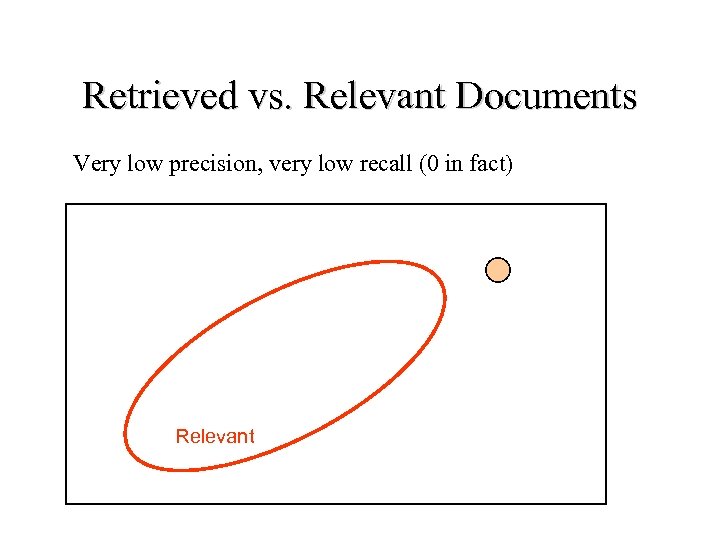

Retrieved vs. Relevant Documents
Very low precision, very low recall (0 in fact)

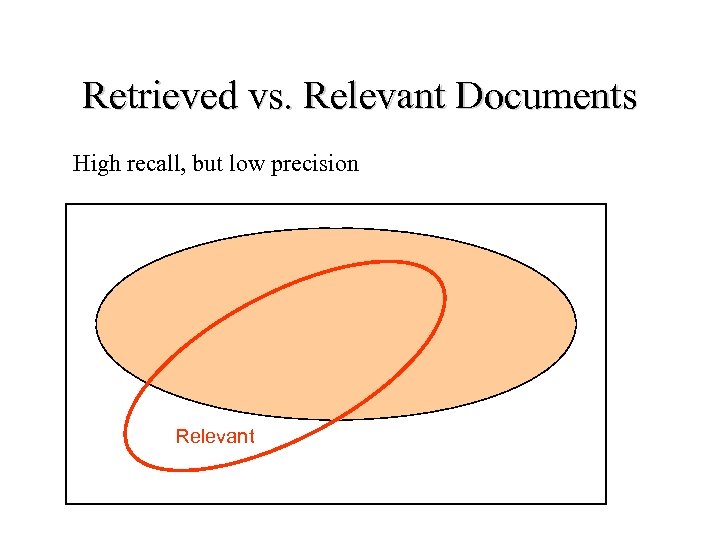

Retrieved vs. Relevant Documents
High recall, but low precision

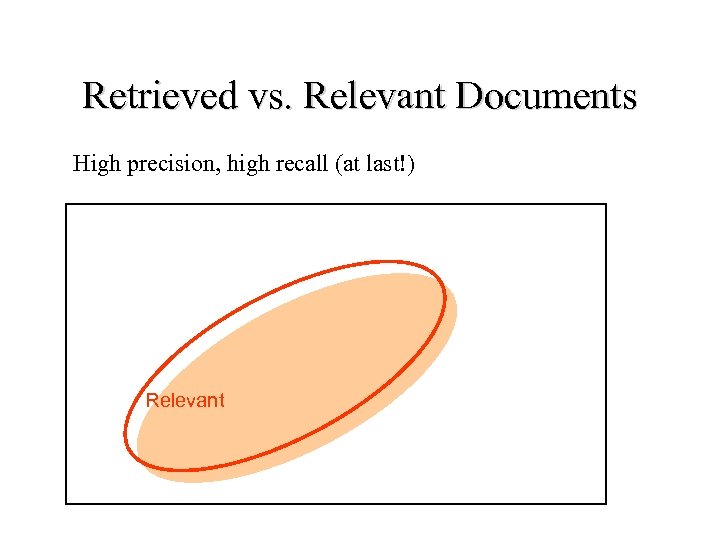

Retrieved vs. Relevant Documents
High precision, high recall (at last!)

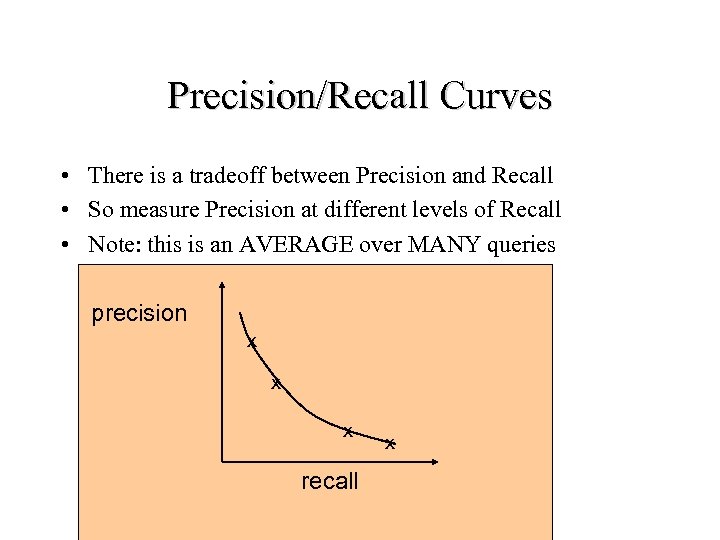

Precision/Recall Curves
• There is a tradeoff between Precision and Recall
• So measure Precision at different levels of Recall
• Note: this is an AVERAGE over MANY queries
[Plot: precision (y-axis) against recall (x-axis), sample points marked x.]
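
One common way to measure precision at fixed recall levels and average over queries is sketched below. The interpolation rule (take the best precision at any recall at or above the level) and the 11 levels 0.0 to 1.0 are assumptions, as are the function names and toy rankings; the slides do not say which convention was used.

```python
def pr_points(ranking, relevant):
    """(recall, precision) after each relevant document in a ranked list."""
    relevant = set(relevant)
    points, hits = [], 0
    for rank, doc in enumerate(ranking, start=1):
        if doc in relevant:
            hits += 1
            points.append((hits / len(relevant), hits / rank))
    return points


def interpolated_precision(points, levels):
    """Best precision at any recall >= level (0.0 if the level is never reached)."""
    return [max((p for r, p in points if r >= level), default=0.0)
            for level in levels]


def average_curve(runs, levels):
    """Average the interpolated curves of many (ranking, relevant) queries."""
    curves = [interpolated_precision(pr_points(ranking, relevant), levels)
              for ranking, relevant in runs]
    return [sum(col) / len(curves) for col in zip(*curves)]


LEVELS = [i / 10 for i in range(11)]  # recall 0.0, 0.1, ..., 1.0
runs = [
    (["a", "x", "b", "y", "c"], {"a", "b", "c"}),
    (["x", "a"], {"a"}),
]
for level, precision in zip(LEVELS, average_curve(runs, LEVELS)):
    print(f"recall {level:.1f}: precision {precision:.3f}")
```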

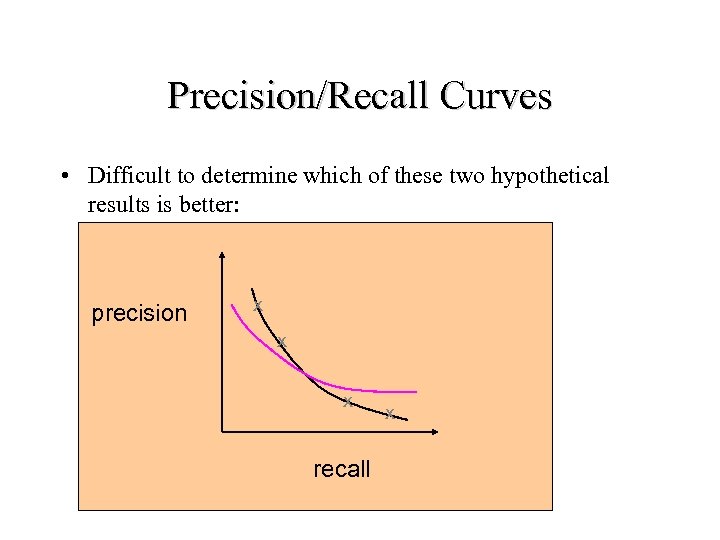

Precision/Recall Curves
• Difficult to determine which of these two hypothetical results is better:
[Plot: precision (y-axis) against recall (x-axis), sample points marked x.]

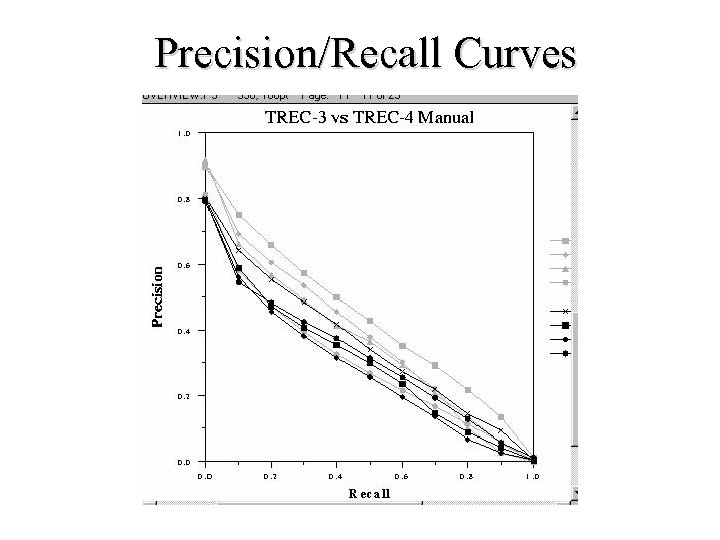

Precision/Recall Curves

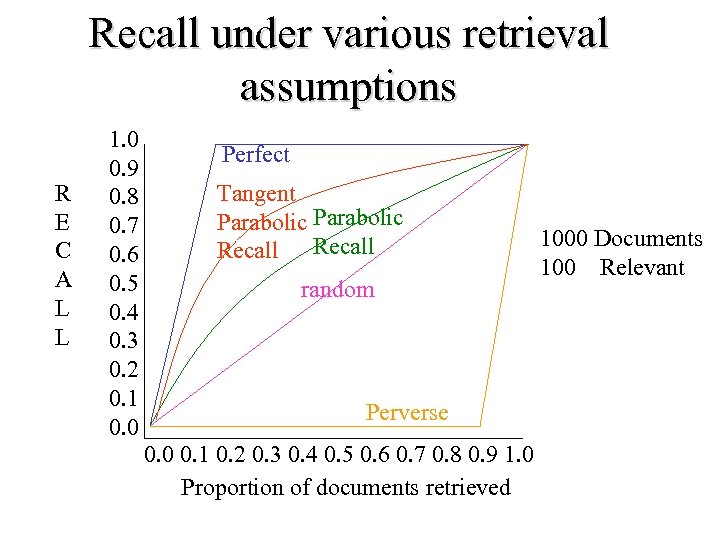

Recall under various retrieval assumptions (1000 documents, 100 relevant)
[Plot: recall (y-axis, 0.0 to 1.0) against proportion of documents retrieved (x-axis, 0.0 to 1.0); curves: Perfect, Tangent, Parabolic Recall, random, Perverse.]

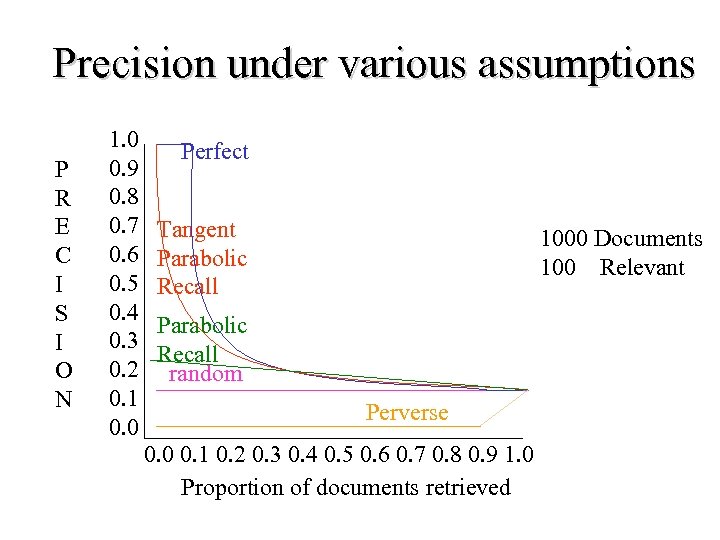

Precision under various assumptions (1000 documents, 100 relevant)
[Plot: precision (y-axis, 0.0 to 1.0) against proportion of documents retrieved (x-axis, 0.0 to 1.0); curves: Perfect, Tangent, Parabolic Recall, random, Perverse.]
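
Three of the curves can be reproduced from first principles for the 1000-document, 100-relevant setting. This sketch assumes that "perfect" means all relevant documents are ranked first, "random" means relevant documents are spread uniformly, and "perverse" means all relevant documents are ranked last; the tangent and parabolic curves are left out because their definitions are not given here.

```python
N_DOCS, N_RELEVANT = 1000, 100


def expected_hits(n, strategy):
    """Expected number of relevant documents among the first n retrieved."""
    if strategy == "perfect":    # every relevant document is ranked first
        return min(n, N_RELEVANT)
    if strategy == "random":     # relevant documents are spread uniformly
        return n * N_RELEVANT / N_DOCS
    if strategy == "perverse":   # every relevant document is ranked last
        return max(0, n - (N_DOCS - N_RELEVANT))
    raise ValueError(f"unknown strategy: {strategy}")


def recall_precision(n, strategy):
    hits = expected_hits(n, strategy)
    return hits / N_RELEVANT, (hits / n if n else 0.0)


for strategy in ("perfect", "random", "perverse"):
    for n in (100, 500, 1000):
        recall, precision = recall_precision(n, strategy)
        print(f"{strategy:8s} retrieved={n / N_DOCS:.1f} "
              f"recall={recall:.2f} precision={precision:.2f}")
```

Under every assumption both measures meet at the same point once the whole collection is retrieved: recall 1.0 and precision 0.1.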

Document Cutoff Levels
• Another way to evaluate:
– Fix the number of documents retrieved at several levels: top 5, top 10, top 20, top 50, top 100, top 500
– Measure precision at each of these levels
– Take (weighted) average over results
• This is a way to focus on how well the system ranks the first k documents.
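
Precision at a document cutoff can be sketched as follows. The cutoffs and the uniform default weights are illustrative assumptions (the toy ranking is too short for the top-5 to top-500 levels above), and the function names are hypothetical.

```python
def precision_at_k(ranking, relevant, k):
    """Fraction of the top k ranked documents that are relevant."""
    relevant = set(relevant)
    return sum(1 for doc in ranking[:k] if doc in relevant) / k


def average_over_cutoffs(ranking, relevant, cutoffs, weights=None):
    """(Weighted) average of precision over several cutoff levels."""
    weights = weights or [1.0] * len(cutoffs)
    scores = [precision_at_k(ranking, relevant, k) for k in cutoffs]
    return sum(w * s for w, s in zip(weights, scores)) / sum(weights)


ranking = ["d3", "d9", "d1", "d7", "d2", "d8"]
relevant = {"d3", "d1", "d2"}
for k in (1, 3, 5):
    print(k, precision_at_k(ranking, relevant, k))
print(average_over_cutoffs(ranking, relevant, cutoffs=(1, 3, 5)))
```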

Problems with Precision/Recall
• Can’t know true recall value
– except in small collections
• Precision/Recall are related
– A combined measure sometimes more appropriate
• Assumes batch mode
– Interactive IR is important and has different criteria for successful searches
– Assumes a strict rank ordering matters.

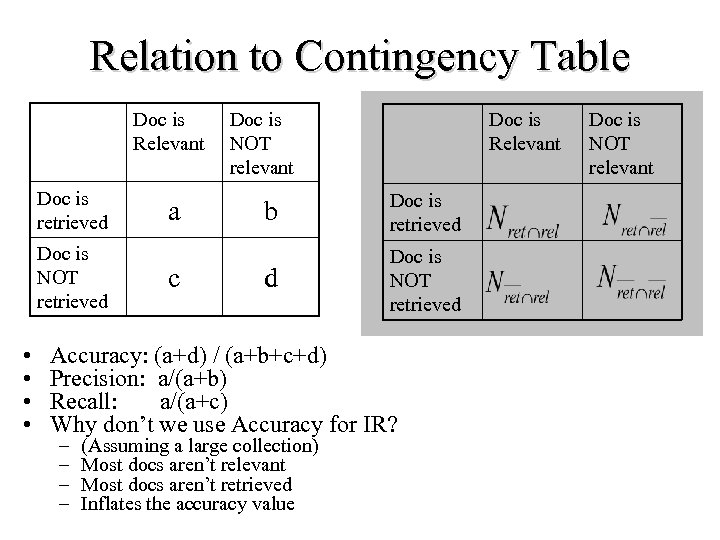

Relation to Contingency Table

| | Doc is Relevant | Doc is NOT relevant |
|---|---|---|
| Doc is retrieved | a | b |
| Doc is NOT retrieved | c | d |

• Accuracy: (a+d) / (a+b+c+d)
• Precision: a/(a+b)
• Recall: a/(a+c)

Why don’t we use Accuracy for IR?
– (Assuming a large collection)
– Most docs aren’t relevant
– Most docs aren’t retrieved
– Inflates the accuracy value
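
A small worked example makes the inflation concrete. The cell counts are invented for illustration: a collection of 1,000,000 documents with 100 relevant, and a system that retrieves 50 documents, 10 of them relevant.

```python
def contingency_measures(a, b, c, d):
    """Accuracy, precision and recall from the four contingency cells.

    a: retrieved and relevant        b: retrieved, not relevant
    c: not retrieved, but relevant   d: not retrieved, not relevant
    """
    accuracy = (a + d) / (a + b + c + d)
    precision = a / (a + b) if a + b else 0.0
    recall = a / (a + c) if a + c else 0.0
    return accuracy, precision, recall


# Invented numbers: 1,000,000 docs, 100 relevant, 50 retrieved, 10 hits.
accuracy, precision, recall = contingency_measures(a=10, b=40, c=90, d=999_860)
print(f"accuracy={accuracy:.5f} precision={precision:.2f} recall={recall:.2f}")
```

The huge d cell dominates: accuracy is above 0.999 even though the system finds only a tenth of the relevant documents and most of what it returns is junk.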

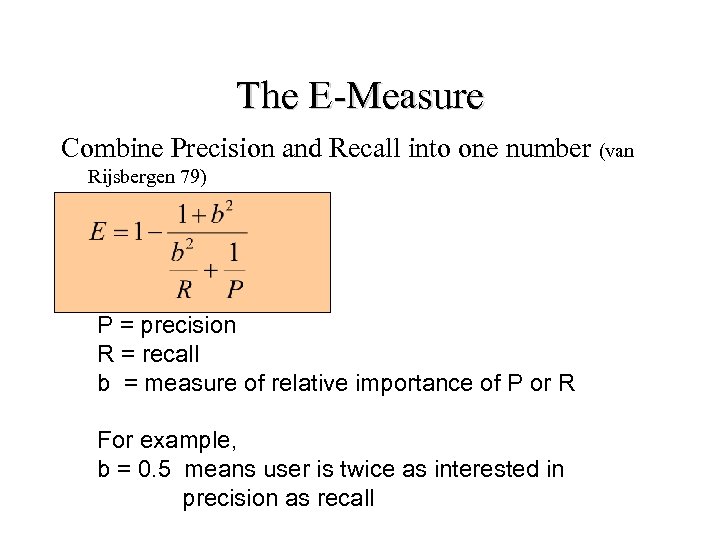

The E-Measure
Combine Precision and Recall into one number (van Rijsbergen 79)
P = precision
R = recall
b = measure of relative importance of P or R
For example, b = 0.5 means user is twice as interested in precision as recall
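
In the symbols above, van Rijsbergen's E-measure is usually written as

$$E = 1 - \frac{(1 + b^2)\,P\,R}{b^2 P + R}$$

so lower E is better, and 1 - E is the quantity now commonly called the F-measure. The sketch below evaluates it; the function name and the choice to return 1.0 when P and R are both zero are assumptions.

```python
def e_measure(precision, recall, b=1.0):
    """van Rijsbergen's E: 0 is best, 1 is worst; b < 1 favors precision."""
    denominator = b * b * precision + recall
    if denominator == 0:
        return 1.0  # nothing relevant was retrieved (assumed convention)
    return 1.0 - (1.0 + b * b) * precision * recall / denominator


# With b = 0.5, a high-precision result scores better (lower E) than a
# high-recall result with the two values swapped.
print(e_measure(1.0, 0.5, b=0.5))
print(e_measure(0.5, 1.0, b=0.5))
```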

How to Evaluate? Test Collections

Test Collections
• Cranfield 2
– 1400 Documents, 221 Queries
– 200 Documents, 42 Queries
• INSPEC – 542 Documents, 97 Queries
• UKCIS – >10000 Documents, multiple sets, 193 Queries
• ADI – 82 Documents, 35 Queries
• CACM – 3204 Documents, 50 Queries
• CISI – 1460 Documents, 35 Queries
• MEDLARS (Salton) – 273 Documents, 18 Queries

TREC
• Text REtrieval Conference/Competition
– Run by NIST (National Institute of Standards & Technology)
– 2002 (November) will be 11th year
• Collection: >6 Gigabytes (5 CD-ROMs), >1.5 Million Docs
– Newswire & full text news (AP, WSJ, Ziff, FT)
– Government documents (Federal Register, Congressional Record)
– Radio Transcripts (FBIS)
– Web “subsets”

TREC (cont.)
• Queries + Relevance Judgments
– Queries devised and judged by “Information Specialists”
– Relevance judgments done only for those documents retrieved -- not entire collection!
• Competition
– Various research and commercial groups compete (TREC 6 had 51, TREC 7 had 56, TREC 8 had 66)
– Results judged on precision and recall, going up to a recall level of 1000 documents
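
Judging only retrieved documents is usually done by pooling: the top-ranked documents of every participating system are merged, and only that pool is assessed. A minimal sketch, assuming a hypothetical pool depth and treating unjudged documents as not relevant; the system names, rankings, and function names are made up.

```python
def build_pool(runs, depth):
    """Union of the top `depth` documents from every system's ranking."""
    pool = set()
    for ranking in runs.values():
        pool.update(ranking[:depth])
    return pool


def is_relevant(doc, judgments):
    """Documents outside the judged pool count as not relevant (assumption)."""
    return judgments.get(doc, False)


runs = {
    "sysA": ["d1", "d2", "d3"],
    "sysB": ["d2", "d4", "d5"],
}
pool = build_pool(runs, depth=2)
print(sorted(pool))  # only these documents go to the assessors

judgments = {"d1": True, "d2": False, "d4": True}  # hypothetical verdicts
print(is_relevant("d5", judgments))  # never judged, so treated as not relevant
```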

TREC
• Benefits:
– made research systems scale to large collections (pre-WWW)
– allows for somewhat controlled comparisons
• Drawbacks:
– emphasis on high recall, which may be unrealistic for what most users want
– very long queries, also unrealistic
– comparisons still difficult to make, because systems are quite different on many dimensions
– focus on batch ranking rather than interaction
– no focus on the WWW

TREC is changing
• Emphasis on specialized “tracks”
– Interactive track
– Natural Language Processing (NLP) track
– Multilingual tracks (Chinese, Spanish)
– Filtering track
– High-Precision
– High-Performance
• http://trec.nist.gov/

What to Evaluate?
• Effectiveness
– Difficult to measure
– Recall and Precision are one way
– What might be others?