
- Number of slides: 60

Techniques for Developing Efficient Petascale Applications
Laxmikant (Sanjay) Kale, http://charm.cs.uiuc.edu
Parallel Programming Laboratory, Department of Computer Science, University of Illinois at Urbana-Champaign

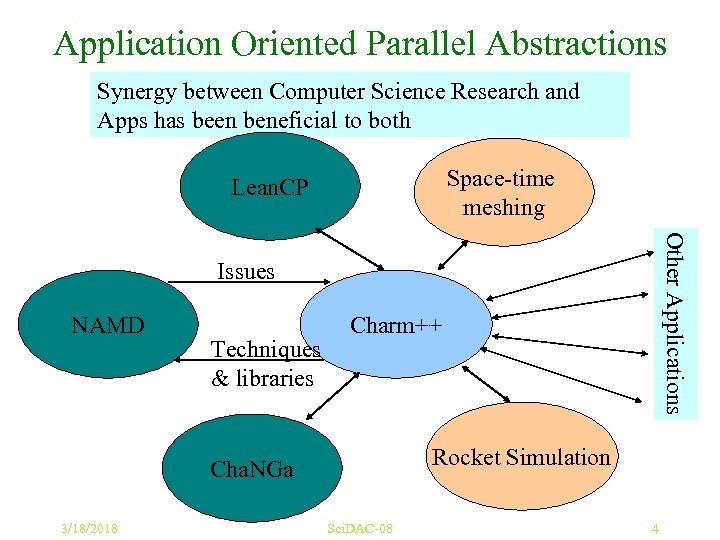

Application Oriented Parallel Abstractions
Synergy between computer science research and applications has been beneficial to both.
Figure labels: NAMD, LeanCP, ChaNGa, space-time meshing, rocket simulation, other applications; issues; techniques & libraries; Charm++.
3/18/2018 SciDAC-08

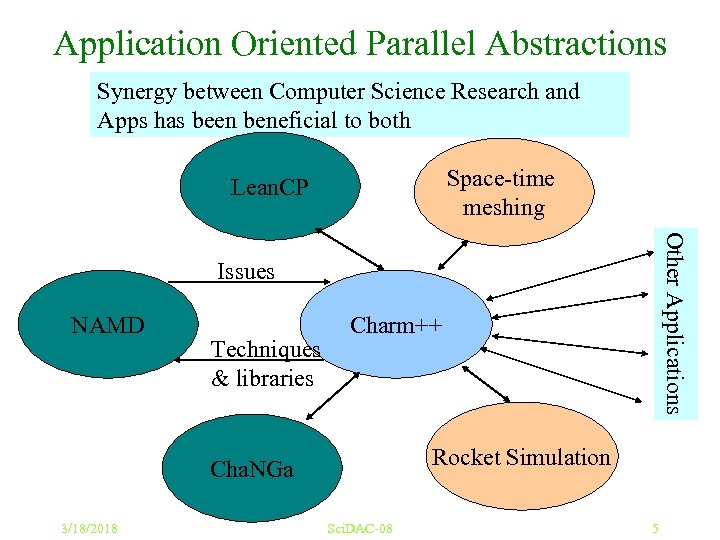


Enabling CS technology of parallel objects and intelligent runtime systems (Charm++ and AMPI) has led to several collaborative applications in CSE.

Outline
- Techniques for petascale programming
  - My biases: isoefficiency analysis, overdecomposition, automated resource management, sophisticated performance analysis tools
- BigSim: early development of applications on future machines
- Dealing with multicore processors and SMP nodes
- Novel parallel programming models

Techniques for Scaling to Multi-PetaFLOP/s

1. Analyze Scalability of Algorithms (say, via the isoefficiency metric)

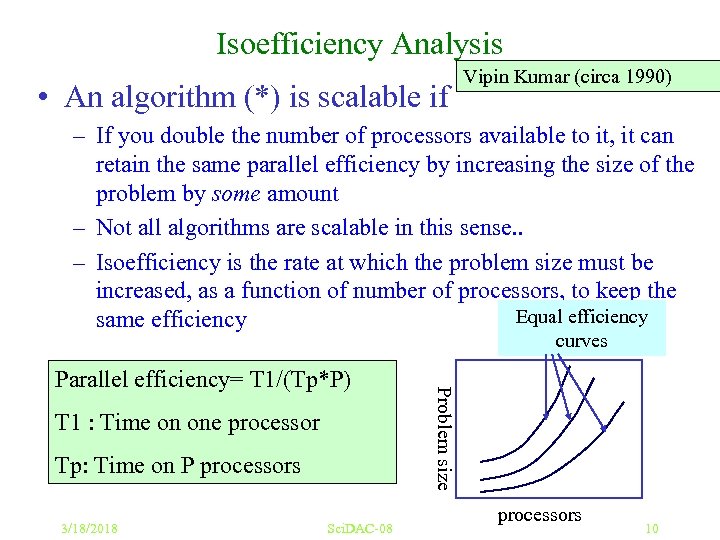

Isoefficiency Analysis (Vipin Kumar, circa 1990)
- An algorithm (*) is scalable if, when you double the number of processors available to it, it can retain the same parallel efficiency by increasing the size of the problem by some amount
  - Not all algorithms are scalable in this sense
- Isoefficiency is the rate at which the problem size must be increased, as a function of the number of processors, to keep the same efficiency
- Parallel efficiency = $T_1 / (T_p \cdot P)$, where $T_1$ is the time on one processor and $T_p$ is the time on $P$ processors

Figure: equal-efficiency curves, problem size vs. number of processors.
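The efficiency definition above can be made concrete with a small sketch. The cost model here is an assumption chosen only for illustration (perfectly divisible work plus a `c * log2(P)` communication term per run); it is not taken from the talk. Under that model, holding efficiency fixed requires the problem size to grow in proportion to P log P, which is the isoefficiency function for this hypothetical algorithm.

```python
import math

def parallel_efficiency(t1, tp, p):
    """Parallel efficiency as defined on the slide: T1 / (Tp * P)."""
    return t1 / (tp * p)

def modeled_time(work, p, c=1.0):
    """Hypothetical cost model (an assumption, not from the talk):
    perfectly divisible work plus a c*log2(P) communication term."""
    return work / p + (c * math.log2(p) if p > 1 else 0.0)

def work_for_efficiency(target, p, c=1.0):
    """Problem size W that gives the target efficiency on P processors.
    Under the model above, E = W / (W + c*P*log2(P))."""
    overhead = c * p * math.log2(p)
    return target / (1.0 - target) * overhead

if __name__ == "__main__":
    for p in (2, 4, 8, 16, 1024):
        w = work_for_efficiency(0.8, p)
        e = parallel_efficiency(modeled_time(w, 1), modeled_time(w, p), p)
        print(f"P={p:5d}  required work={w:10.1f}  efficiency={e:.3f}")
```

Swapping in a different overhead term (for example one proportional to P squared) shows how quickly the required problem size grows for a less scalable algorithm.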

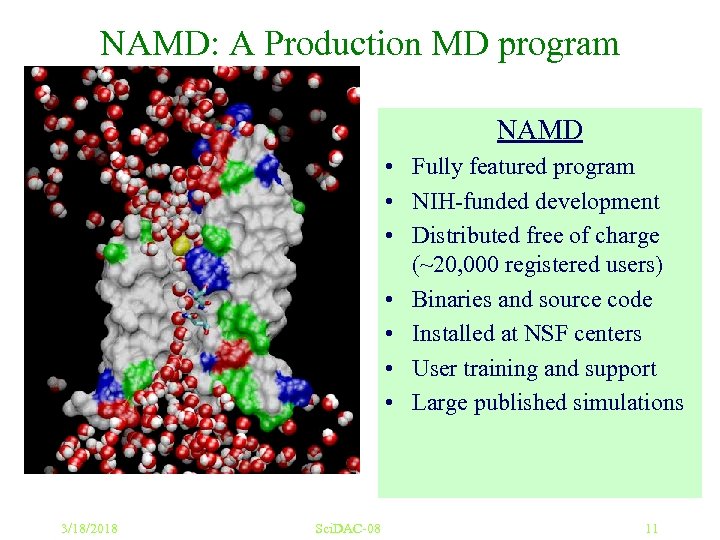

NAMD: A Production MD Program
- Fully featured program
- NIH-funded development
- Distributed free of charge (~20,000 registered users)
- Binaries and source code
- Installed at NSF centers
- User training and support
- Large published simulations

Molecular Dynamics in NAMD
- Collection of [charged] atoms, with bonds
  - Newtonian mechanics
  - Thousands of atoms (10,000 – 5,000,000)
- At each timestep
  - Calculate forces on each atom
    - Bonds
    - Non-bonded: electrostatic and van der Waals
      - Short-distance: every timestep
      - Long-distance: using PME (3D FFT)
      - Multiple time stepping: PME every 4 timesteps
  - Calculate velocities and advance positions
- Challenge: femtosecond timestep, millions needed!

Collaboration with K. Schulten, R. Skeel, and coworkers
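The per-timestep schedule described above can be sketched as follows. This shows only which force terms are evaluated on which step, plus the arithmetic behind "millions needed"; it is not an integrator and not NAMD code, and the function names are hypothetical.

```python
def force_schedule(num_steps, pme_interval=4):
    """For each timestep, list the force terms evaluated on that step.
    Short-range terms run every step; long-range PME runs every
    pme_interval steps (multiple time stepping)."""
    schedule = []
    for step in range(num_steps):
        terms = ["bonded", "short_range_nonbonded"]
        if step % pme_interval == 0:
            terms.append("long_range_pme")
        schedule.append(terms)
    return schedule

def steps_needed(simulated_ns, timestep_fs=1.0):
    """Timesteps required to cover simulated_ns nanoseconds (1 ns = 1e6 fs)."""
    return round(simulated_ns * 1_000_000 / timestep_fs)

if __name__ == "__main__":
    for step, terms in enumerate(force_schedule(8)):
        print(step, terms)
    print("steps for 1 ns at 1 fs:", steps_needed(1.0))
```

With a femtosecond timestep, even one nanosecond of simulated time takes a million steps, so each step has to complete in milliseconds for a run to finish in reasonable time.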

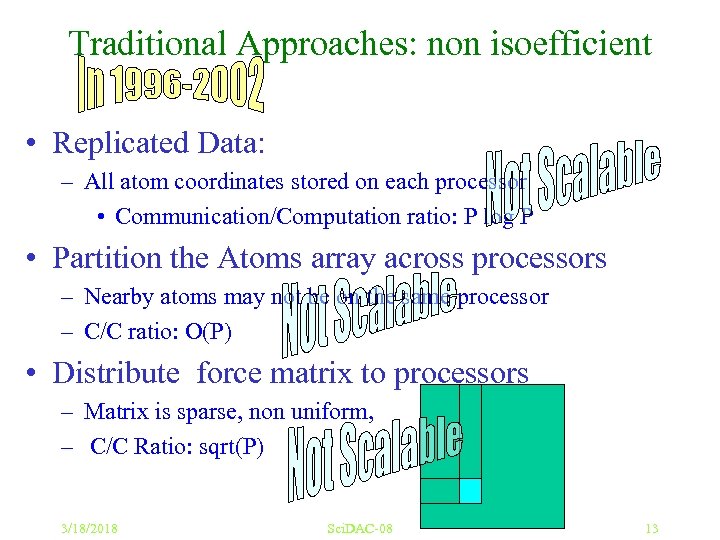

Traditional Approaches: Not Isoefficient
- Replicated data
  - All atom coordinates stored on each processor
  - Communication/computation ratio: P log P
- Partition the atoms array across processors
  - Nearby atoms may not be on the same processor
  - C/C ratio: O(P)
- Distribute force matrix to processors
  - Matrix is sparse, non-uniform
  - C/C ratio: sqrt(P)

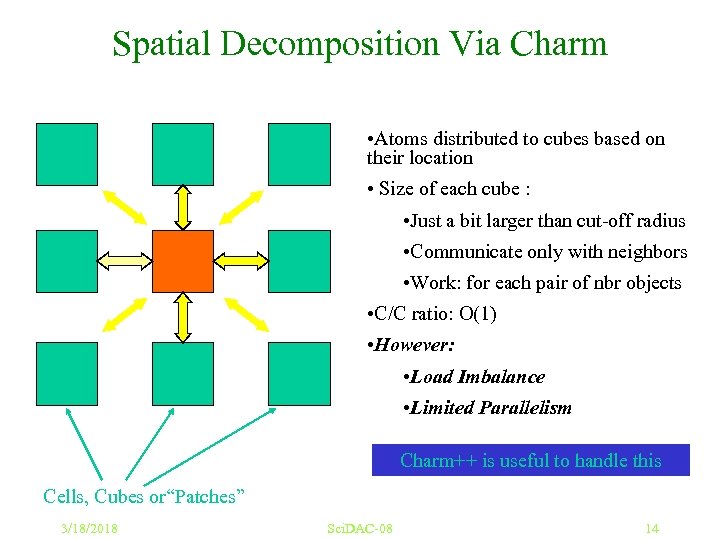

Spatial Decomposition via Charm
- Atoms distributed to cubes (cells, or “patches”) based on their location
- Size of each cube: just a bit larger than the cut-off radius
- Communicate only with neighbors
- Work: for each pair of neighboring objects
- C/C ratio: O(1)
- However:
  - Load imbalance
  - Limited parallelism
- Charm++ is useful to handle this
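A minimal sketch of the binning step described above, assuming a non-periodic box and a hypothetical 10% margin over the cutoff for the patch side; the function names are illustrative and not part of NAMD or Charm++.

```python
from collections import defaultdict
from itertools import product

def assign_to_patches(positions, cutoff, margin=0.1):
    """Bin atoms into cubic patches whose side is slightly larger than the
    cutoff radius, so interacting atoms sit in the same or adjacent patches."""
    side = cutoff * (1.0 + margin)
    patches = defaultdict(list)
    for idx, (x, y, z) in enumerate(positions):
        key = (int(x // side), int(y // side), int(z // side))
        patches[key].append(idx)
    return patches, side

def neighbor_keys(key):
    """Keys of the 26 adjacent patches (no periodic wrap in this sketch)."""
    return [tuple(k + d for k, d in zip(key, delta))
            for delta in product((-1, 0, 1), repeat=3)
            if delta != (0, 0, 0)]

if __name__ == "__main__":
    atoms = [(0.5, 0.5, 0.5), (12.5, 0.5, 0.5), (13.5, 0.2, 0.1)]
    patches, side = assign_to_patches(atoms, cutoff=12.0)
    for key, members in sorted(patches.items()):
        print(key, members)
```

Because each patch talks only to a fixed number of neighbors regardless of machine size, communication per patch stays constant, which is where the O(1) ratio comes from.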

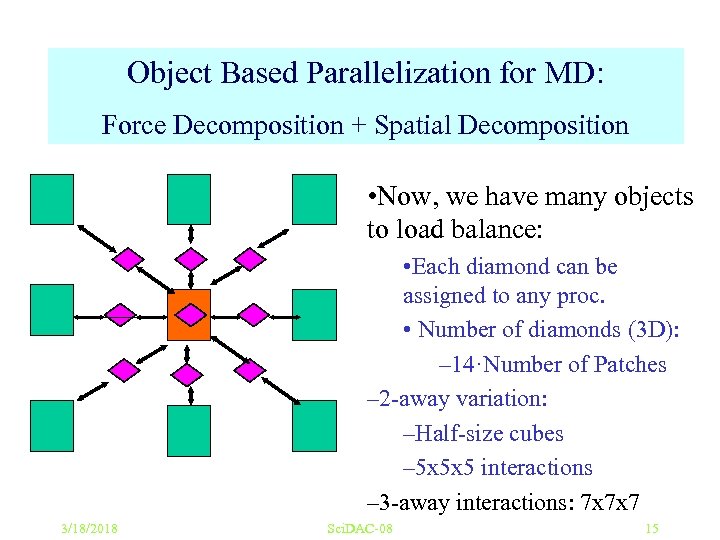

Object-Based Parallelization for MD: Force Decomposition + Spatial Decomposition
- Now we have many objects to load balance
  - Each diamond can be assigned to any processor
  - Number of diamonds (3D): 14 × number of patches
- 2-away variation
  - Half-size cubes
  - 5 x 5 x 5 interactions
- 3-away interactions: 7 x 7 x 7
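The factor of 14 can be checked by counting: each patch has 26 neighbors in 3D, each neighbor pair is shared by two patches, and one self-interaction object is added, giving 26/2 + 1 = 14. The sketch below verifies this by brute force on a periodic grid. The k-away counts it prints for k = 2 and k = 3 follow from the same counting rule and are not stated in the talk; a production code may prune pairs that are out of range.

```python
from itertools import product

def computes_per_patch(k=1):
    """Pairwise compute objects per patch for a k-away scheme: half of the
    (2k+1)^3 - 1 surrounding patches, plus one self-interaction."""
    neighbors = (2 * k + 1) ** 3 - 1
    return neighbors // 2 + 1

def count_computes(nx, ny, nz, k=1):
    """Brute-force count of unordered patch pairs (including self pairs)
    on a periodic grid. Each dimension must be at least 2k+1."""
    pairs = set()
    for p in product(range(nx), range(ny), range(nz)):
        for d in product(range(-k, k + 1), repeat=3):
            q = ((p[0] + d[0]) % nx, (p[1] + d[1]) % ny, (p[2] + d[2]) % nz)
            pairs.add((min(p, q), max(p, q)))
    return len(pairs)

if __name__ == "__main__":
    for k in (1, 2, 3):
        print(f"{k}-away: {computes_per_patch(k)} compute objects per patch")
    print("4x4x4 grid, 1-away:", count_computes(4, 4, 4), "objects for 64 patches")
```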

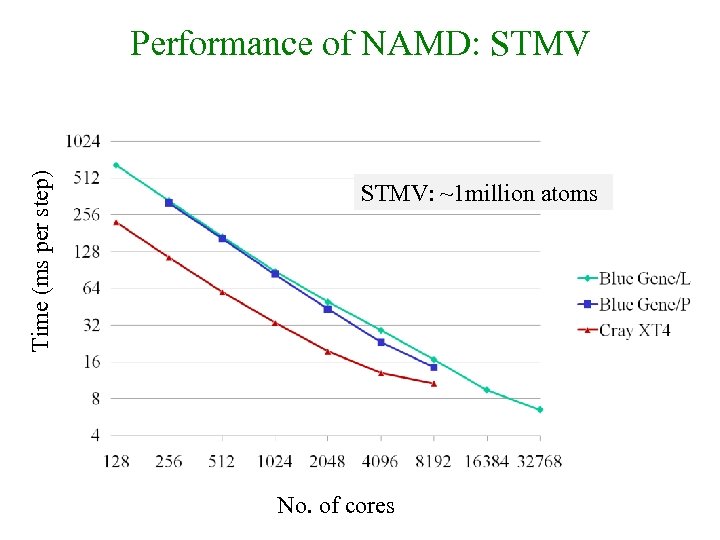

Performance of NAMD on STMV (~1 million atoms). Plot: time (ms per step) vs. number of cores.

2. Decouple Decomposition from Physical Processors

Migratable Objects (aka Processor Virtualization)
- Programmer: [over]decomposition into virtual processors (VPs)
- Runtime: assigns VPs to processors
- Enables adaptive runtime strategies
- Implementations: Charm++, AMPI

Benefits
- Software engineering
  - Number of virtual processors can be independently controlled
  - Separate VPs for different modules
- Message-driven execution
  - Adaptive overlap of communication
  - Predictability:
    - Automatic out-of-core
    - Prefetch to local stores
  - Asynchronous reductions
- Dynamic mapping
  - Heterogeneous clusters: vacate, adjust to speed, share
  - Automatic checkpointing
  - Change set of processors used
  - Automatic dynamic load balancing
  - Communication optimization

Figure: user view vs. system implementation.

Migratable Objects (aka Processor Virtualization): AMPI
Same programmer/runtime split and benefits as on the previous slide. Figure: MPI processes implemented as virtual processors (user-level migratable threads), mapped onto real processors.

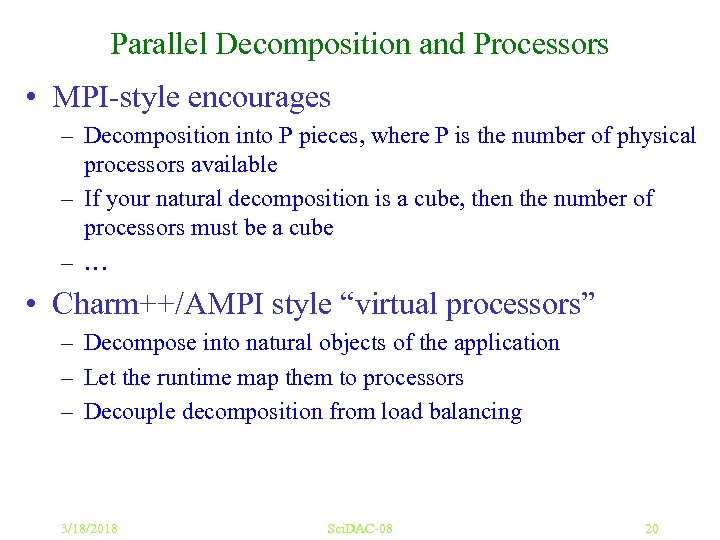

Parallel Decomposition and Processors
- MPI style encourages
  - Decomposition into P pieces, where P is the number of physical processors available
  - If your natural decomposition is a cube, then the number of processors must be a cube
  - …
- Charm++/AMPI style “virtual processors”
  - Decompose into natural objects of the application
  - Let the runtime map them to processors
  - Decouple decomposition from load balancing
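As a rough illustration of the second style, the sketch below decomposes a domain into a cube of blocks chosen by the application and then maps those blocks onto an arbitrary processor count. The round-robin mapping is only a stand-in for whatever the runtime would do; both function names are hypothetical.

```python
from collections import Counter
from itertools import product

def decompose(blocks_per_side):
    """Natural cubic decomposition: blocks_per_side^3 blocks, chosen to suit
    the application rather than the machine."""
    return list(product(range(blocks_per_side), repeat=3))

def map_blocks(blocks, num_procs):
    """Placeholder mapping (round-robin). A real runtime would use measured
    load and communication; this only shows that P need not be a cube."""
    return {block: i % num_procs for i, block in enumerate(blocks)}

if __name__ == "__main__":
    blocks = decompose(4)                 # 64 blocks
    placement = map_blocks(blocks, 10)    # 10 is not a cube
    print(sorted(Counter(placement.values()).items()))
```

With 64 blocks on 10 processors, each processor holds 6 or 7 blocks, so the imbalance is bounded by one block rather than forcing the processor count to match the decomposition.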

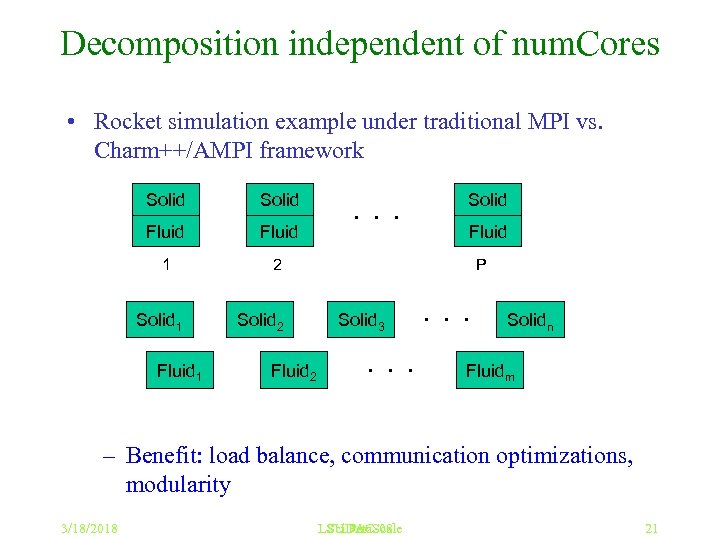

Decomposition Independent of Number of Cores
- Rocket simulation example under traditional MPI vs. the Charm++/AMPI framework
  - Traditional MPI: each of the P processors holds one Solid piece and one Fluid piece (Solid 1 / Fluid 1, Solid 2 / Fluid 2, ..., Solid P / Fluid P)
  - Charm++/AMPI: Solid 1 ... Solid n and Fluid 1 ... Fluid m are decomposed independently
- Benefit: load balance, communication optimizations, modularity

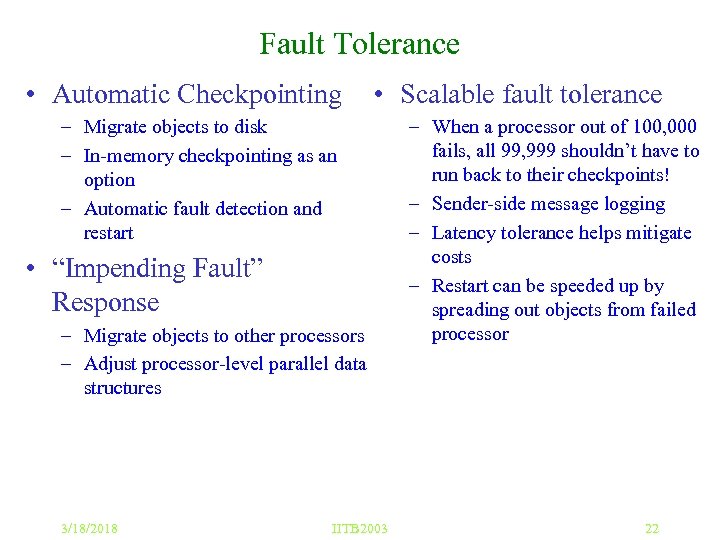

Fault Tolerance
- Automatic checkpointing
  - Migrate objects to disk
  - In-memory checkpointing as an option
  - Automatic fault detection and restart
- “Impending fault” response
  - Migrate objects to other processors
  - Adjust processor-level parallel data structures
- Scalable fault tolerance
  - When one processor out of 100,000 fails, the other 99,999 shouldn’t have to roll back to their checkpoints!
  - Sender-side message logging
  - Latency tolerance helps mitigate costs
  - Restart can be sped up by spreading out objects from the failed processor

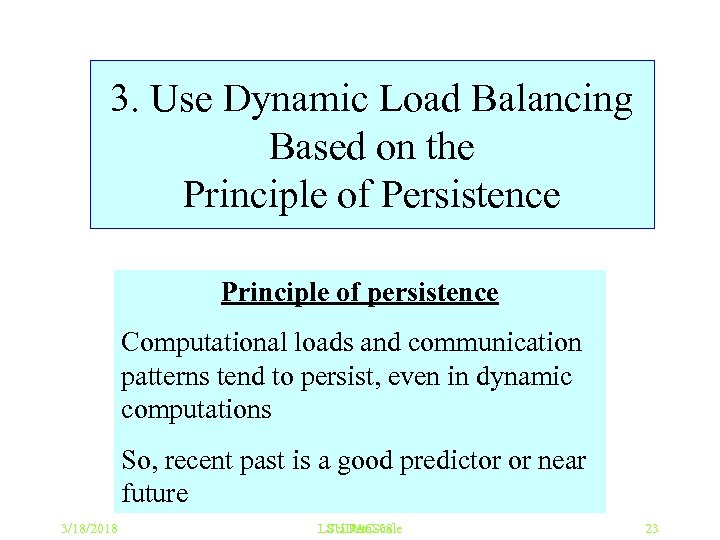

3. Use Dynamic Load Balancing Based on the Principle of Persistence
Principle of persistence: computational loads and communication patterns tend to persist, even in dynamic computations. So the recent past is a good predictor of the near future.

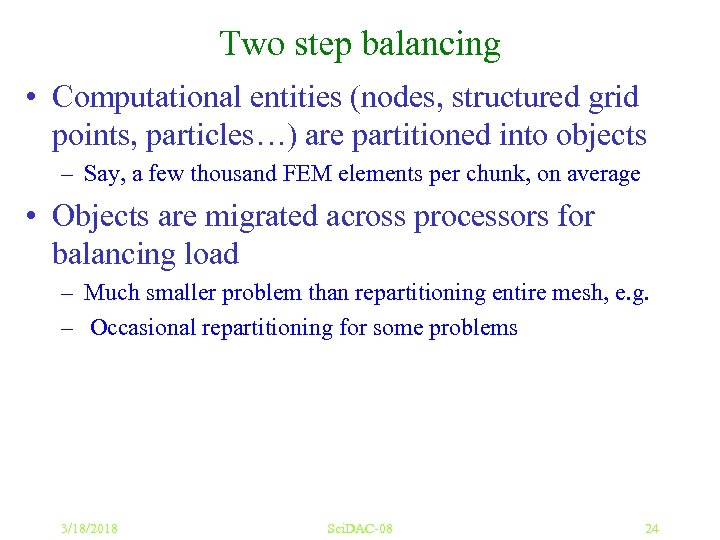

Two-Step Balancing
- Computational entities (nodes, structured grid points, particles…) are partitioned into objects
  - Say, a few thousand FEM elements per chunk, on average
- Objects are migrated across processors for balancing load
  - A much smaller problem than, e.g., repartitioning the entire mesh
  - Occasional repartitioning for some problems
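A measurement-based balancer in this spirit can be sketched as a greedy assignment: take the per-object loads observed in recent timesteps as the prediction, then place objects heaviest first on the currently least-loaded processor. This is a generic greedy heuristic written for illustration, not the implementation of any particular Charm++ balancer, and it ignores communication.

```python
import heapq

def greedy_balance(measured_loads, num_procs):
    """Assign objects to processors, heaviest first, each to the least-loaded
    processor so far. measured_loads maps object id -> load observed in
    recent timesteps, used as the prediction for upcoming ones."""
    heap = [(0.0, proc) for proc in range(num_procs)]
    heapq.heapify(heap)
    assignment = {}
    ordered = sorted(measured_loads.items(), key=lambda kv: kv[1], reverse=True)
    for obj, load in ordered:
        total, proc = heapq.heappop(heap)
        assignment[obj] = proc
        heapq.heappush(heap, (total + load, proc))
    return assignment

def processor_loads(assignment, measured_loads, num_procs):
    """Total predicted load per processor for a given assignment."""
    totals = [0.0] * num_procs
    for obj, proc in assignment.items():
        totals[proc] += measured_loads[obj]
    return totals

if __name__ == "__main__":
    loads = {"a": 5, "b": 4, "c": 3, "d": 3, "e": 2, "f": 1}
    placement = greedy_balance(loads, 2)
    print(placement)
    print(processor_loads(placement, loads, 2))
```

Because the balancer works on a few objects per processor rather than on individual mesh elements or particles, the assignment problem stays small even when the underlying data set is large.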

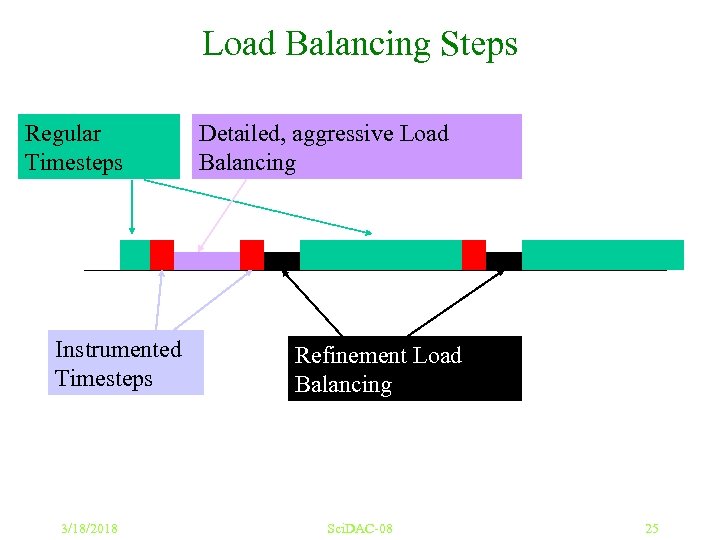

Load Balancing Steps
Figure labels: regular timesteps; instrumented timesteps; detailed, aggressive load balancing; refinement load balancing.

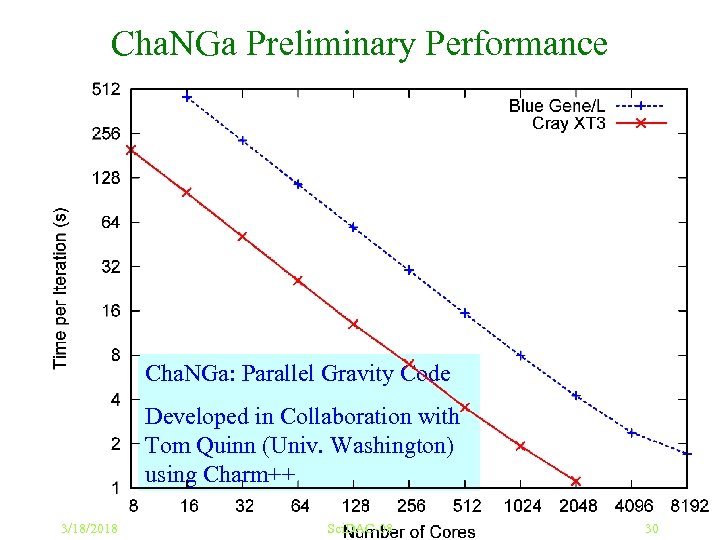

ChaNGa: Parallel Gravity
- Collaborative project (NSF ITR) with Prof. Tom Quinn, Univ. of Washington
- Components: gravity, gas dynamics
- Barnes-Hut tree codes
  - Oct-tree is the natural decomposition
    - Geometry has better aspect ratios, so you “open” fewer nodes
    - But it is not used because it leads to bad load balance
    - Assumption: one-to-one map between subtrees and processors
    - Binary trees are considered better load balanced
  - With Charm++: use the oct-tree, and let Charm++ map subtrees to processors

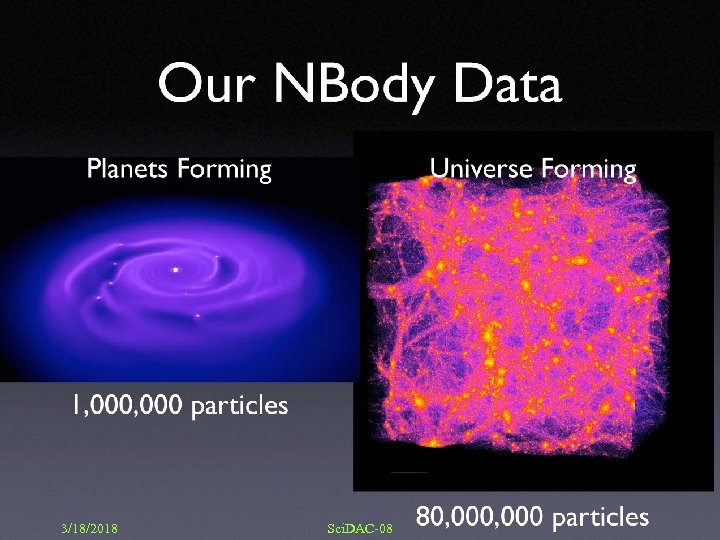


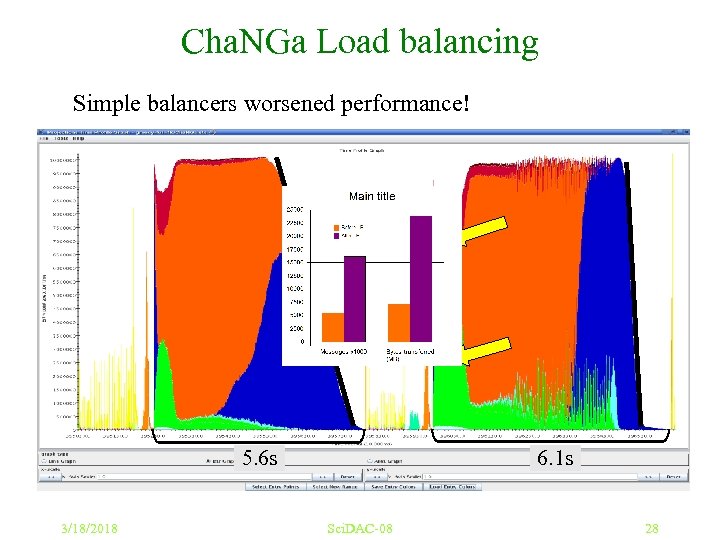

ChaNGa Load Balancing
Simple balancers worsened performance! dwarf 5M on 1,024 BlueGene/L processors: 5.6 s → 6.1 s

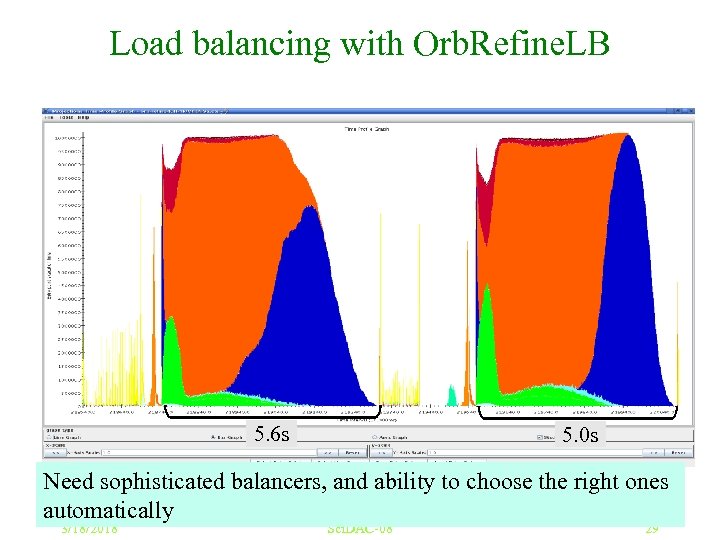

Load Balancing with OrbRefineLB
dwarf 5M on 1,024 BlueGene/L processors: 5.6 s → 5.0 s
Need sophisticated balancers, and the ability to choose the right ones automatically.

ChaNGa Preliminary Performance
ChaNGa: parallel gravity code developed in collaboration with Tom Quinn (Univ. of Washington) using Charm++

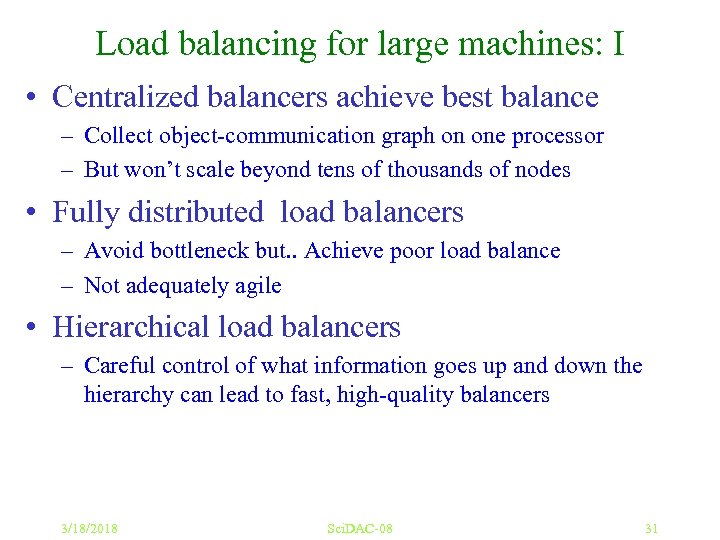

Load Balancing for Large Machines: I
- Centralized balancers achieve the best balance
  - Collect the object-communication graph on one processor
  - But won’t scale beyond tens of thousands of nodes
- Fully distributed load balancers
  - Avoid the bottleneck, but achieve poor load balance
  - Not adequately agile
- Hierarchical load balancers
  - Careful control of what information goes up and down the hierarchy can lead to fast, high-quality balancers

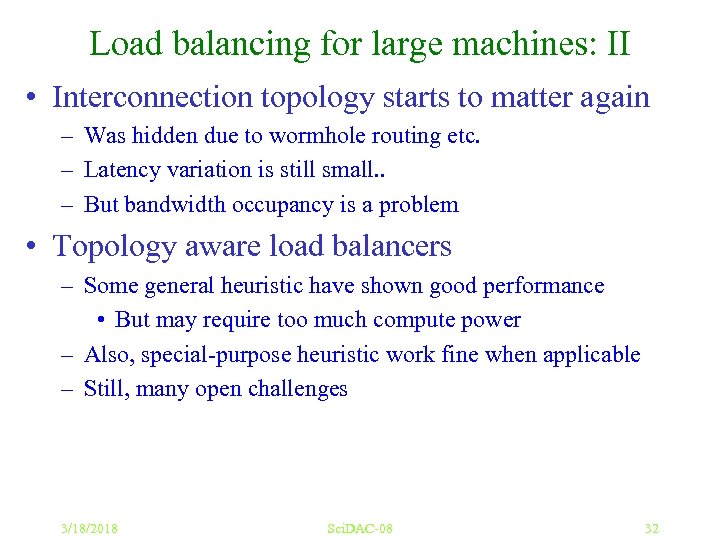

Load Balancing for Large Machines: II
- Interconnection topology starts to matter again
  - Was hidden due to wormhole routing etc.
  - Latency variation is still small
  - But bandwidth occupancy is a problem
- Topology-aware load balancers
  - Some general heuristics have shown good performance
    - But may require too much compute power
  - Also, special-purpose heuristics work fine when applicable
  - Still, many open challenges
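One way to compare mappings with respect to bandwidth occupancy is a hop-bytes style metric: for every pair of communicating objects, multiply the bytes exchanged by the number of network hops between their processors, and sum. The talk does not name a metric, so treating hop-bytes as the objective is an assumption here, as is the 3D torus with wraparound links.

```python
def torus_hops(a, b, dims):
    """Hop count between coordinates a and b on a torus with wraparound."""
    return sum(min(abs(x - y), d - abs(x - y)) for x, y, d in zip(a, b, dims))

def hop_bytes(comm_graph, placement, dims):
    """Sum of bytes * hops over all communicating object pairs.
    comm_graph: {(obj_a, obj_b): bytes}; placement: obj -> torus coordinate."""
    return sum(nbytes * torus_hops(placement[a], placement[b], dims)
               for (a, b), nbytes in comm_graph.items())

if __name__ == "__main__":
    dims = (4, 4, 4)
    comm = {("a", "b"): 100, ("b", "c"): 50}
    near = {"a": (0, 0, 0), "b": (1, 0, 0), "c": (1, 1, 0)}
    far = {"a": (0, 0, 0), "b": (2, 2, 2), "c": (0, 3, 1)}
    print("topology-aware placement:", hop_bytes(comm, near, dims))
    print("oblivious placement:     ", hop_bytes(comm, far, dims))
```

A topology-aware balancer would search for placements that lower this total while keeping compute load even; the cost of that search is the "too much compute power" concern noted above.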

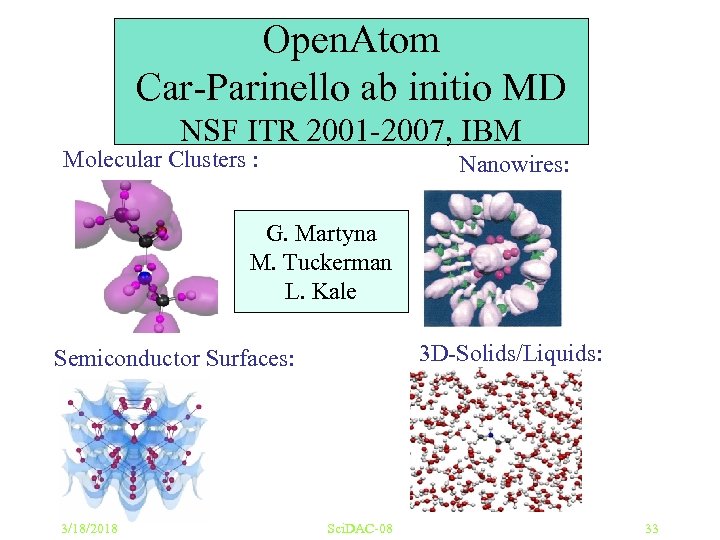

OpenAtom: Car-Parrinello ab initio MD
NSF ITR 2001-2007, IBM
G. Martyna, M. Tuckerman, L. Kale
Figure labels: molecular clusters, nanowires, 3D solids/liquids, semiconductor surfaces

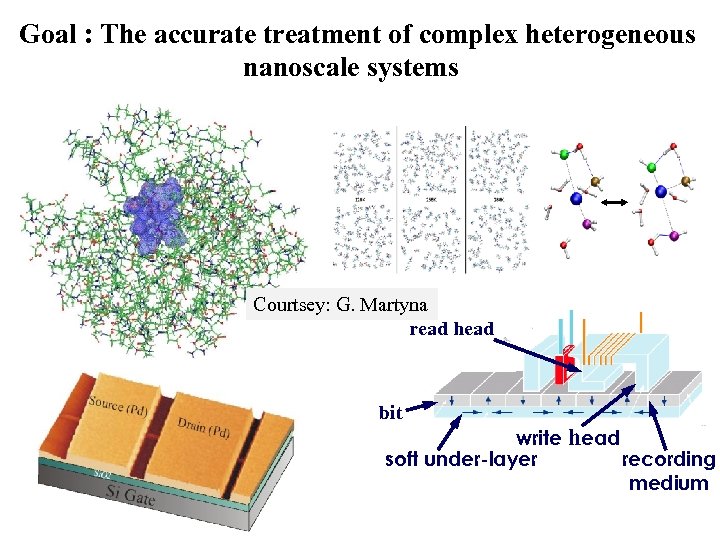

Goal: the accurate treatment of complex heterogeneous nanoscale systems
Courtesy: G. Martyna
Figure labels: read head, write head, bit, soft under-layer, recording medium

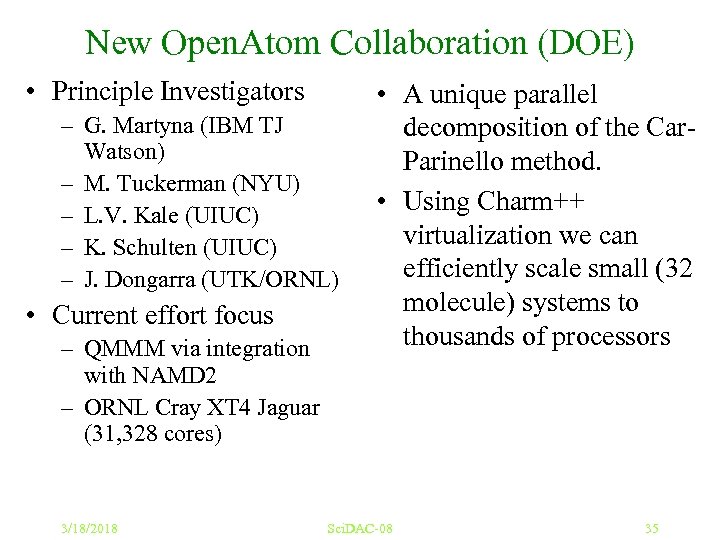

New OpenAtom Collaboration (DOE)
- Principal investigators
  - G. Martyna (IBM TJ Watson)
  - M. Tuckerman (NYU)
  - L. V. Kale (UIUC)
  - K. Schulten (UIUC)
  - J. Dongarra (UTK/ORNL)
- Current effort focus
  - QM/MM via integration with NAMD2
  - ORNL Cray XT4 Jaguar (31,328 cores)
- A unique parallel decomposition of the Car-Parrinello method
- Using Charm++ virtualization, we can efficiently scale small (32-molecule) systems to thousands of processors

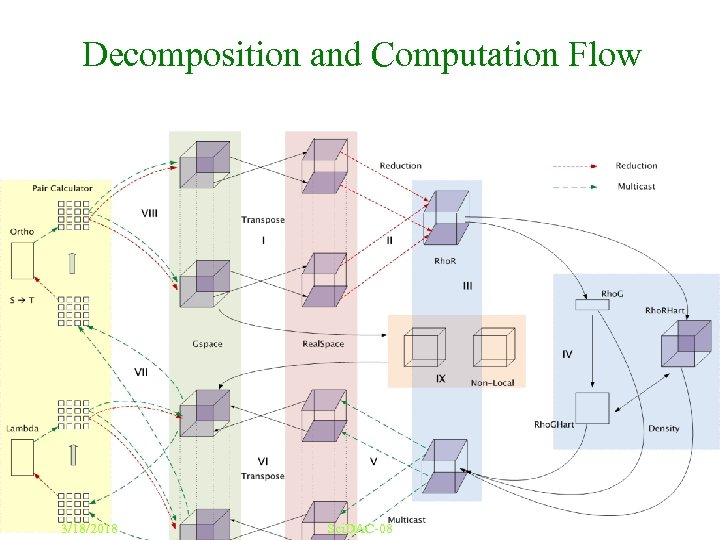

Decomposition and Computation Flow

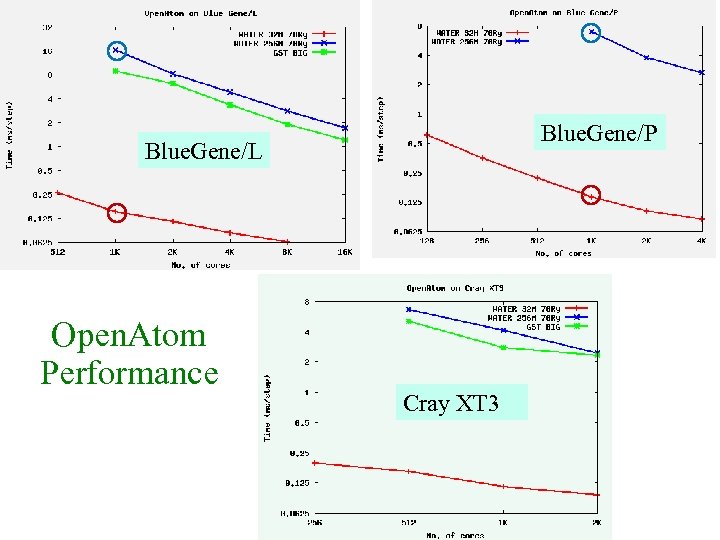

OpenAtom Performance
Figure panels: BlueGene/L, BlueGene/P, Cray XT3

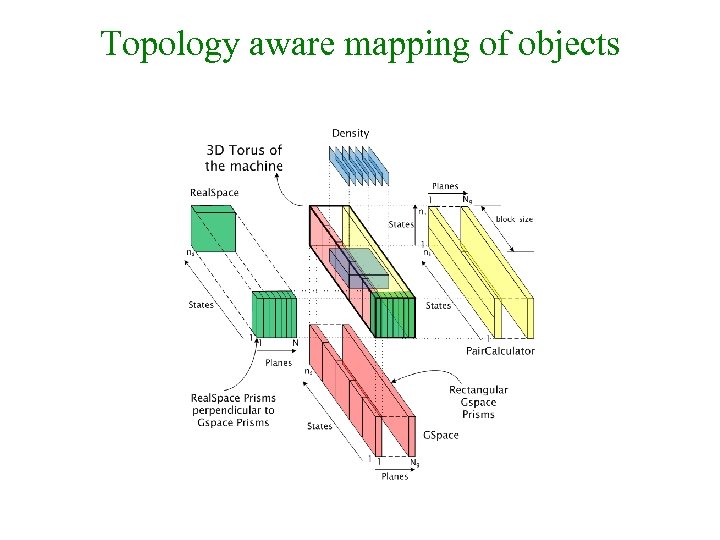

Topology-Aware Mapping of Objects

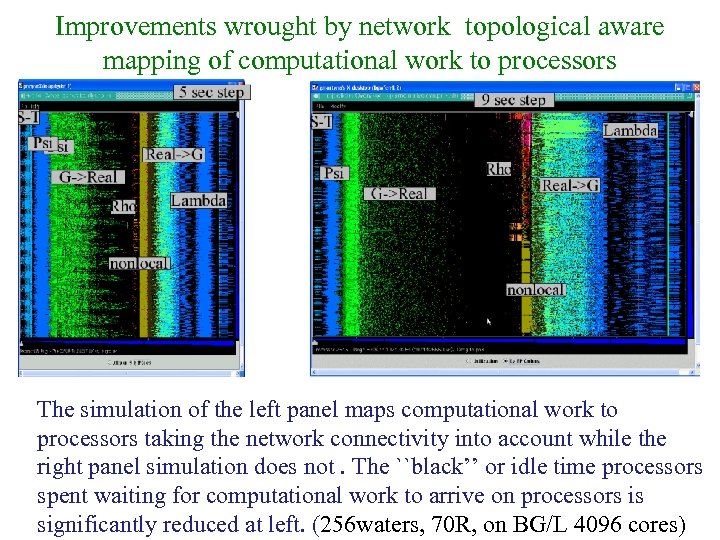

Improvements wrought by network-topology-aware mapping of computational work to processors
The simulation in the left panel maps computational work to processors taking the network connectivity into account, while the simulation in the right panel does not. The “black” (idle) time that processors spend waiting for computational work to arrive is significantly reduced at left. (256 waters, 70 R, on BG/L, 4096 cores)

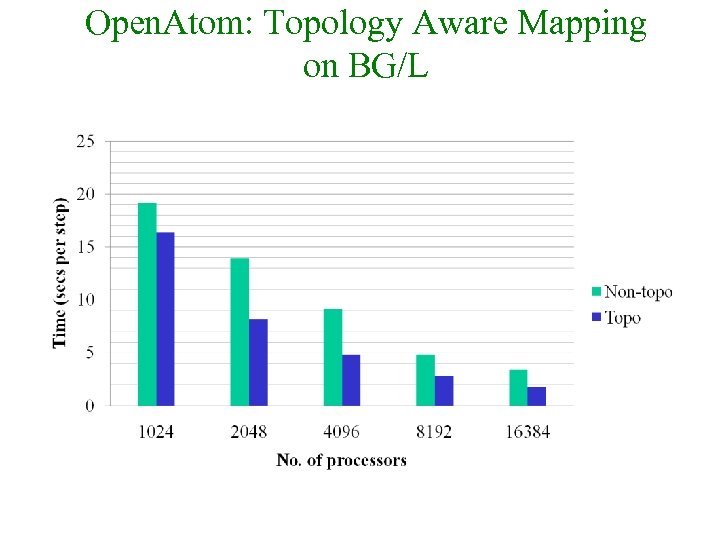

OpenAtom: Topology-Aware Mapping on BG/L

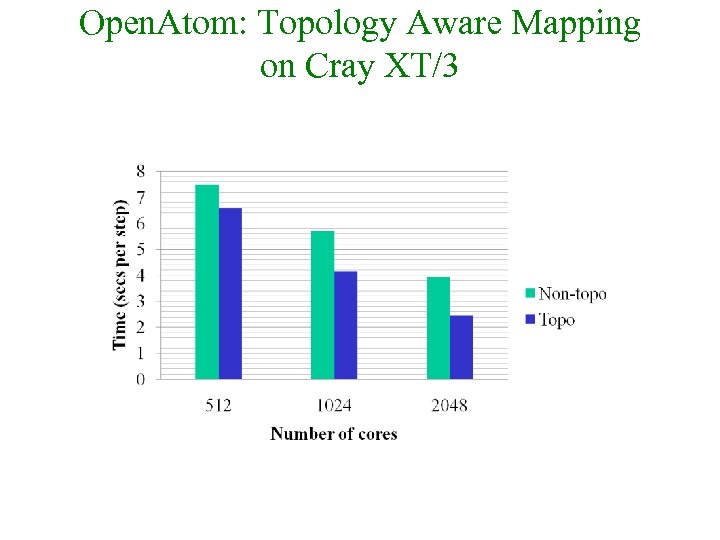

OpenAtom: Topology-Aware Mapping on Cray XT3

4. Analyze Performance with Sophisticated Tools

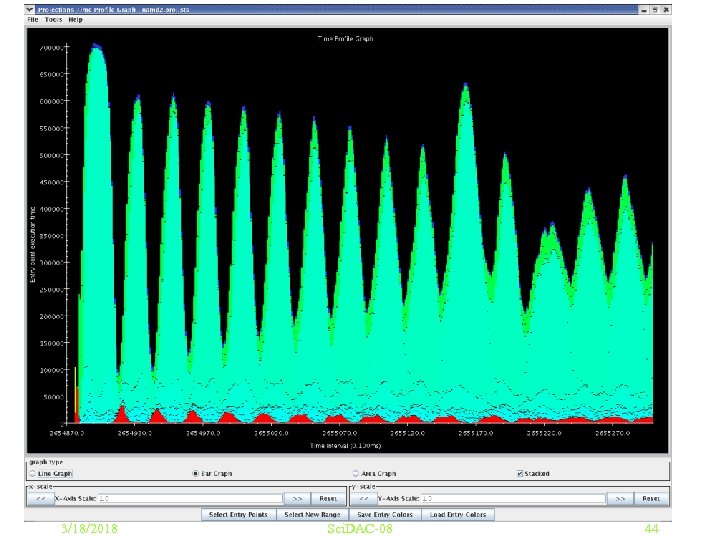

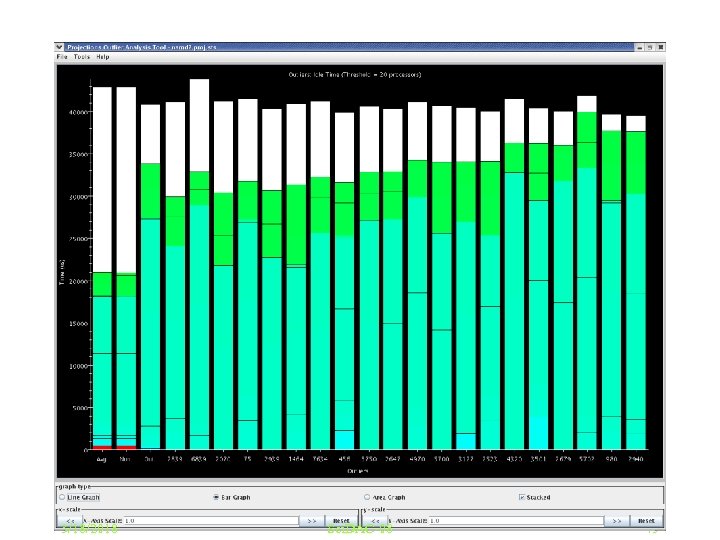

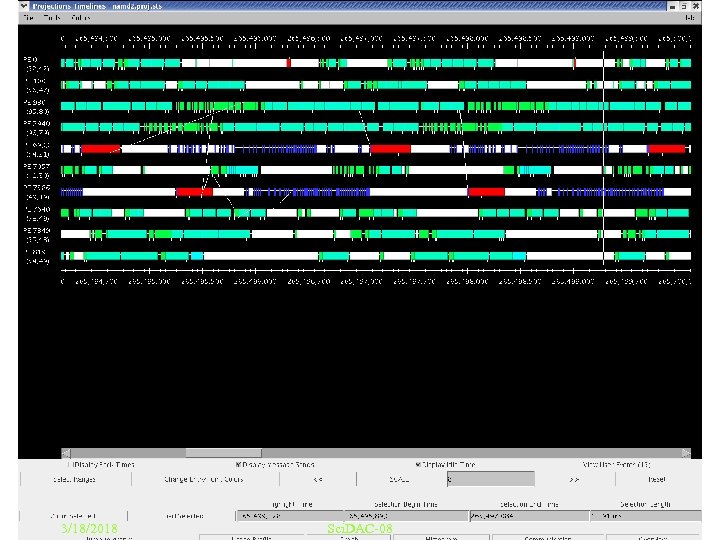

Exploit Sophisticated Performance Analysis Tools
- We use a tool called “Projections”
- Many other tools exist
- Need for scalable analysis
- A recent example:
  - Trying to identify the next performance obstacle for NAMD
    - Running on 8192 processors, with a 92,000-atom simulation
    - Test scenario: without PME
    - Time is 3 ms per step, but the lower bound is 1.6 ms or so


5. Model and Predict Performance via Simulation

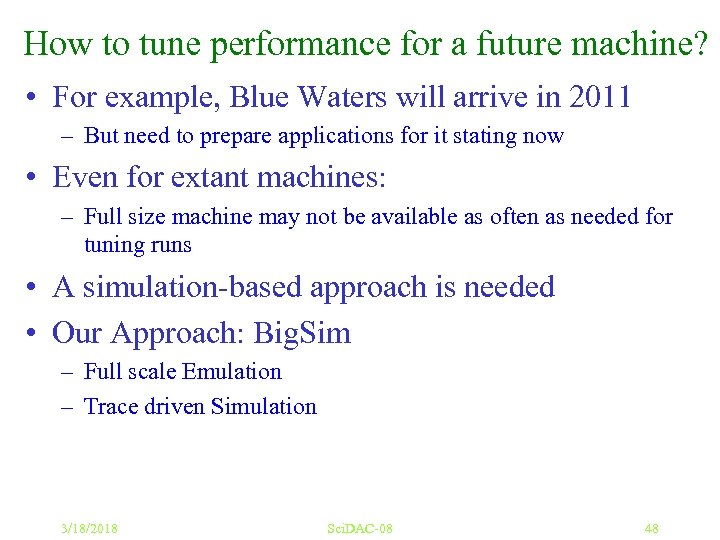

How to Tune Performance for a Future Machine?
- For example, Blue Waters will arrive in 2011
  - But we need to prepare applications for it starting now
- Even for extant machines:
  - The full-size machine may not be available as often as needed for tuning runs
- A simulation-based approach is needed
- Our approach: BigSim
  - Full-scale emulation
  - Trace-driven simulation

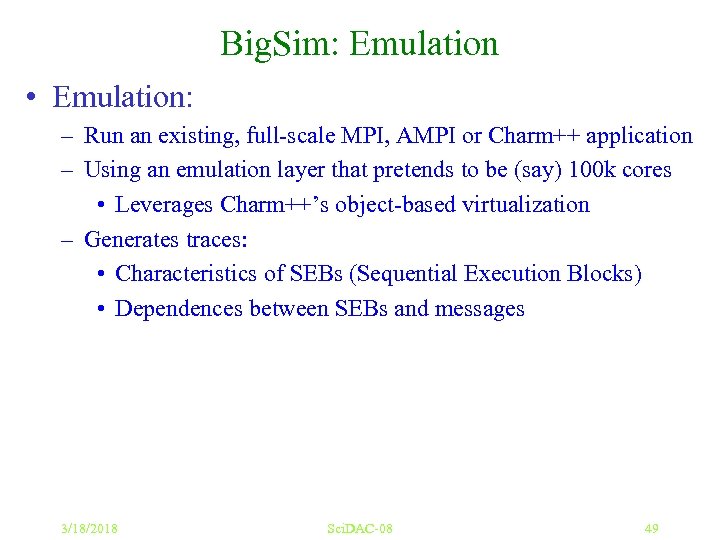

BigSim: Emulation
- Emulation:
  - Run an existing, full-scale MPI, AMPI or Charm++ application
  - Using an emulation layer that pretends to be (say) 100k cores
    - Leverages Charm++’s object-based virtualization
  - Generates traces:
    - Characteristics of SEBs (Sequential Execution Blocks)
    - Dependences between SEBs and messages

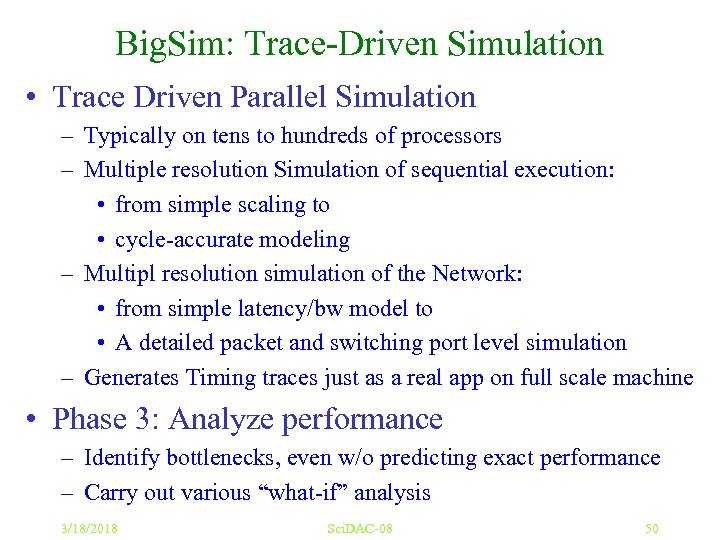

BigSim: Trace-Driven Simulation
- Trace-driven parallel simulation
  - Typically on tens to hundreds of processors
  - Multiple-resolution simulation of sequential execution: from simple scaling to cycle-accurate modeling
  - Multiple-resolution simulation of the network: from a simple latency/bandwidth model to a detailed packet- and switching-port-level simulation
  - Generates timing traces just as a real app would on the full-scale machine
- Phase 3: analyze performance
  - Identify bottlenecks, even without predicting exact performance
  - Carry out various “what-if” analyses
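At the coarsest resolution mentioned above (simple scaling for sequential execution, a latency/bandwidth model for the network), trace replay can be sketched in a few lines. The trace format, class name, and parameters are hypothetical and are not BigSim's; the sketch also assumes the trace is listed in dependency order.

```python
from dataclasses import dataclass, field

@dataclass
class SEB:
    """A sequential execution block in a hypothetical trace."""
    name: str
    proc: int
    duration: float                           # time measured during emulation
    deps: list = field(default_factory=list)  # (predecessor name, message bytes)

def simulate(trace, latency, bandwidth, cpu_scale=1.0):
    """Replay SEBs in trace order and return each block's finish time.
    Sequential time is multiplied by cpu_scale; a message between different
    processors costs latency + bytes / bandwidth."""
    by_name = {seb.name: seb for seb in trace}
    finish, proc_free = {}, {}
    for seb in trace:
        start = proc_free.get(seb.proc, 0.0)
        for pred, nbytes in seb.deps:
            arrival = finish[pred]
            if by_name[pred].proc != seb.proc:
                arrival += latency + nbytes / bandwidth
            start = max(start, arrival)
        finish[seb.name] = start + seb.duration * cpu_scale
        proc_free[seb.proc] = finish[seb.name]
    return finish

if __name__ == "__main__":
    trace = [
        SEB("a", 0, 2.0),
        SEB("b", 1, 1.0, [("a", 1000)]),
        SEB("c", 0, 1.0, [("b", 1000)]),
    ]
    print(simulate(trace, latency=0.5, bandwidth=1000.0))
    # "What-if": a target CPU twice as fast as the emulating host.
    print(simulate(trace, latency=0.5, bandwidth=1000.0, cpu_scale=0.5))
```

Rerunning the same trace with different `latency`, `bandwidth`, or `cpu_scale` values is the kind of "what-if" analysis the slide refers to, here in its simplest possible form.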

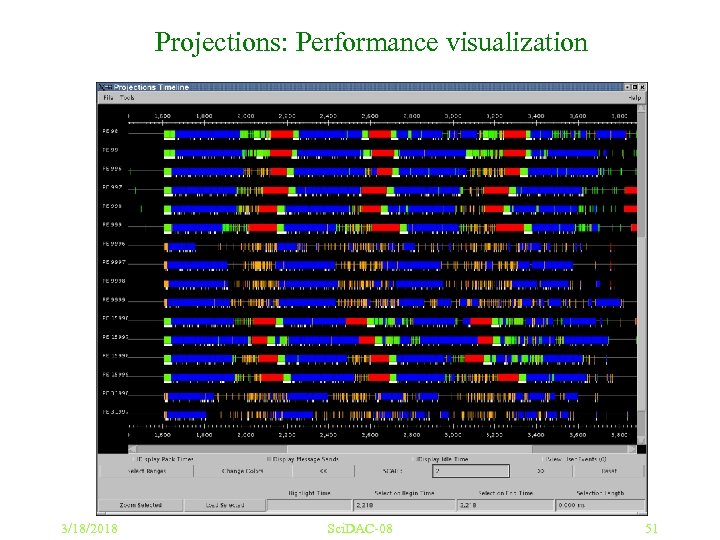

Projections: Performance Visualization

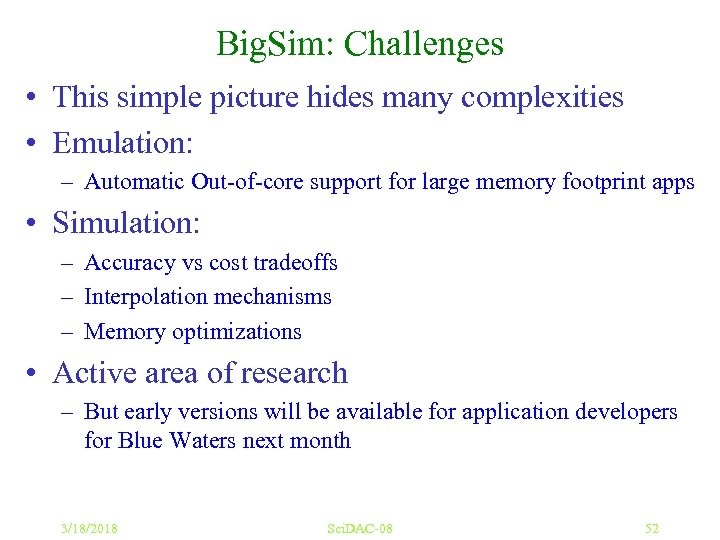

BigSim: Challenges
- This simple picture hides many complexities
- Emulation:
  - Automatic out-of-core support for applications with a large memory footprint
- Simulation:
  - Accuracy vs. cost tradeoffs
  - Interpolation mechanisms
  - Memory optimizations
- Active area of research
  - But early versions will be available for application developers for Blue Waters next month

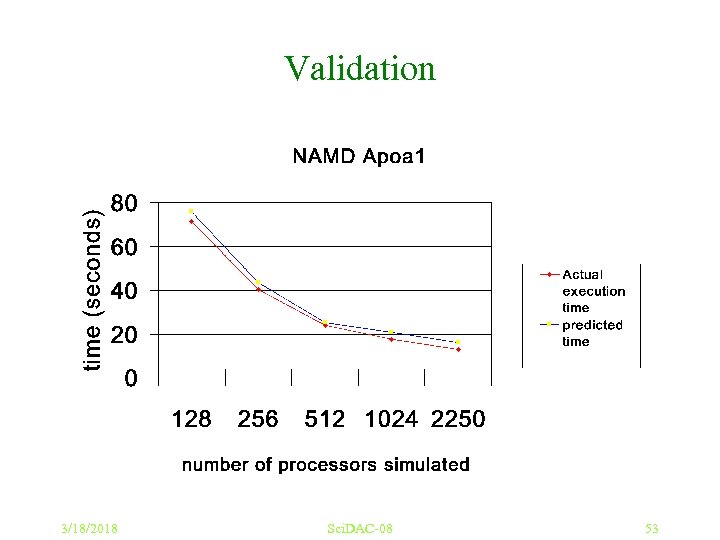

Validation

Challenges of Multicore Chips and SMP Nodes

Challenges of Multicore Chips
- SMP nodes have been around for a while
  - Typically, users just run separate MPI processes on each core
  - Seen to be faster (OpenMP + MPI has not succeeded much)
- Yet, you cannot ignore the shared memory
  - E.g., for gravity calculations (cosmology), a processor may request a set of particles from remote nodes
    - With shared memory, one can maintain a node-level cache, minimizing communication
- Need a model without the pitfalls of Pthreads/OpenMP
  - Costly locks, false sharing, no respect for locality
  - E.g., Charm++ avoids these pitfalls, IF its RTS can avoid them

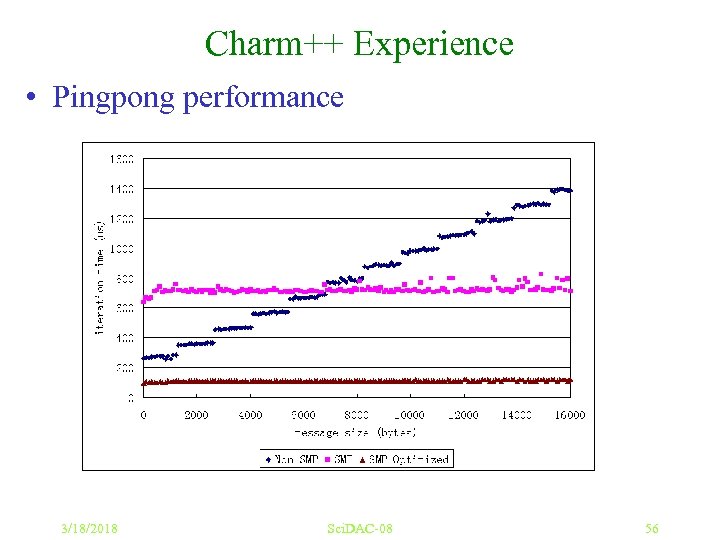

Charm++ Experience
- Pingpong performance

Raising the Level of Abstraction

Raising the Level of Abstraction
- Clearly (?) we need new programming models
  - Many opinions and ideas exist
- Two metapoints:
  - Need for exploration (don’t standardize too soon)
  - Interoperability
    - Allows a “beachhead” for novel paradigms
    - Long-term: allow each module to be coded using the paradigm best suited for it
  - Interoperability requires concurrent composition
    - This may require message-driven execution

Simplifying Parallel Programming
- By giving up completeness!
  - A paradigm may be simple, but not suitable for expressing all parallel patterns
  - Yet, if it can cover a significant class of patterns (applications, modules), it is useful
  - A collection of incomplete models, backed by a few complete ones, will do the trick
- Our own examples: both outlaw non-determinism
  - Multiphase Shared Arrays (MSA): restricted shared memory (LCPC ’04)
  - Charisma: static data flow among collections of objects (LCR ’04, HPDC ’07)

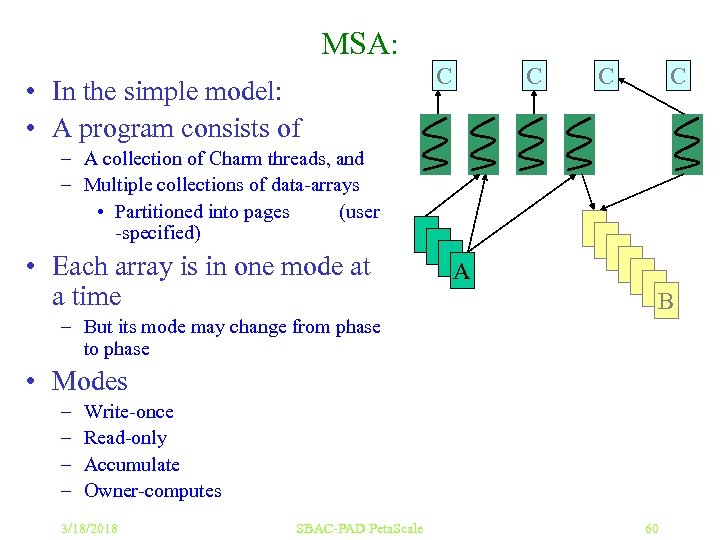

MSA
- In the simple model, a program consists of
  - A collection of Charm threads, and
  - Multiple collections of data arrays
    - Partitioned into pages (user-specified)
- Each array is in one mode at a time
  - But its mode may change from phase to phase
- Modes
  - Write-once
  - Read-only
  - Accumulate
  - Owner-computes

Figure labels: C, C, A, B.
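The one-mode-per-phase discipline can be illustrated with a toy, single-threaded class. This is not the MSA library or its API: the class and method names are hypothetical, paging and threads are left out, and the owner-computes mode is omitted for brevity. It only shows how restricting each phase to one access mode rules out mixed reads and writes.

```python
class PhasedArray:
    """Toy array that is in exactly one access mode per phase.
    Illustrative only; not the MSA library API."""

    MODES = {"write_once", "read_only", "accumulate"}

    def __init__(self, size):
        self._data = [0] * size
        self._written = [False] * size
        self.mode = "write_once"

    def sync(self, new_mode):
        """Phase boundary: in a parallel setting all threads would
        synchronize here before the array switches mode."""
        if new_mode not in self.MODES:
            raise ValueError(f"unknown mode: {new_mode}")
        self._written = [False] * len(self._data)
        self.mode = new_mode

    def write(self, i, value):
        if self.mode != "write_once" or self._written[i]:
            raise RuntimeError("write not allowed in this phase")
        self._data[i] = value
        self._written[i] = True

    def accumulate(self, i, value):
        if self.mode != "accumulate":
            raise RuntimeError("accumulate not allowed in this phase")
        self._data[i] += value  # commutative update, so order does not matter

    def read(self, i):
        if self.mode != "read_only":
            raise RuntimeError("read not allowed in this phase")
        return self._data[i]

if __name__ == "__main__":
    a = PhasedArray(2)
    a.write(0, 3)
    a.sync("accumulate")
    a.accumulate(0, 2)
    a.accumulate(0, 5)
    a.sync("read_only")
    print(a.read(0))
```

Since reads never overlap with updates and accumulation is order-independent, the result does not depend on thread interleaving, which is the sense in which such a model outlaws non-determinism.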

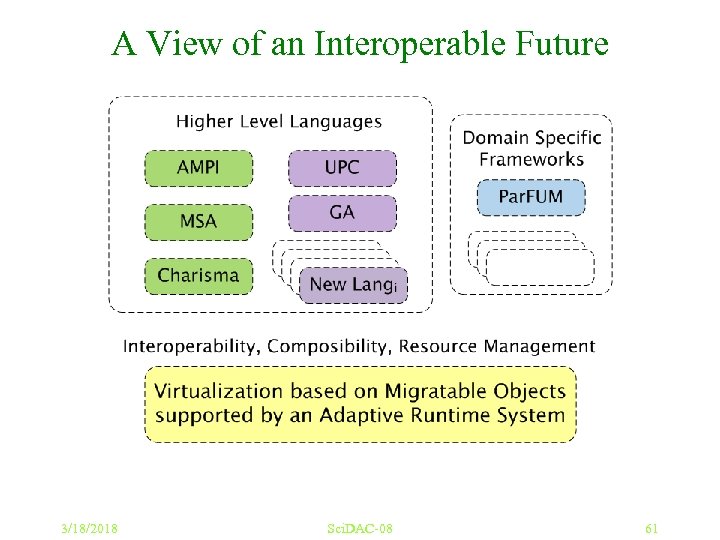

A View of an Interoperable Future

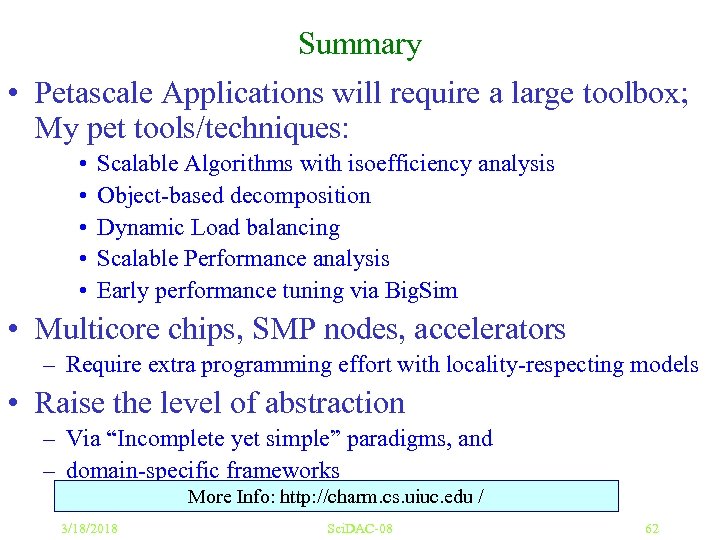

Summary
- Petascale applications will require a large toolbox; my pet tools/techniques:
  - Scalable algorithms with isoefficiency analysis
  - Object-based decomposition
  - Dynamic load balancing
  - Scalable performance analysis
  - Early performance tuning via BigSim
- Multicore chips, SMP nodes, accelerators
  - Require extra programming effort with locality-respecting models
- Raise the level of abstraction
  - Via “incomplete yet simple” paradigms, and
  - Domain-specific frameworks

More info: http://charm.cs.uiuc.edu/