a2aaa136dd7c6b14f70ad356dac96252.ppt

- Number of slides: 50

Take-Home
• Handed out Friday 12/12, 2:30 pm
• Due Monday 12/15, 2:30 pm
• Points: worth 2 problem sets
© Daniel S. Weld

Tournament
• Wednesday: 3 rounds each (unless requested, or in the finals)
• Before each battle, teams describe their project:
  – 6 min per group
  – Both people should talk
  – Focus on your specific approach, surprises, and lessons
  – 2 slides max (print on transparencies)

Report
• 8-page limit; 12 pt font; 1” margins
• Model it on a conference paper:
  – Include an abstract and conclusions, but no introduction
  – Online and offline aspects of your agent
  – Describe your use of search (if you used it)
  – Section on lessons learned / experiments
  – Short section explaining who did what
• Due 12/18, 1 pm
• Points: worth 40-60% of the project score

Outline
• Review of topics
• Hot applications
  – Internet “search”
  – Ubiquitous computation
  – Crosswords

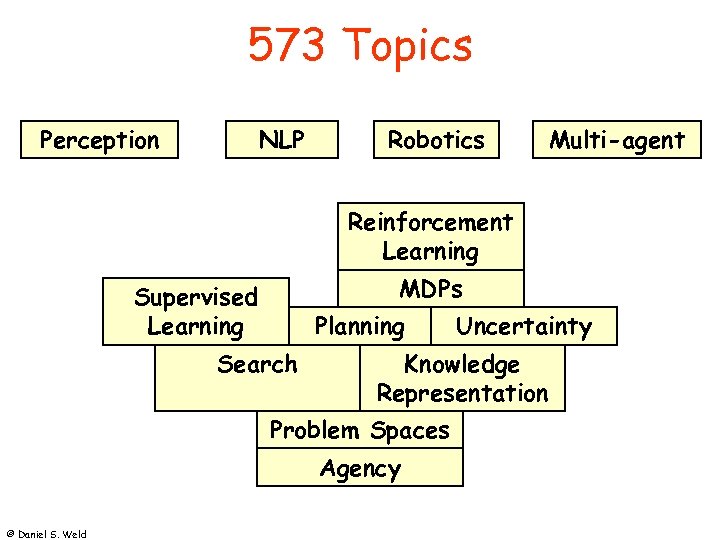

573 Topics: Perception, NLP, Robotics, Multi-agent, Reinforcement Learning, MDPs, Supervised Learning, Planning, Search, Knowledge Representation, Problem Spaces, Agency, Uncertainty

State Space Search
• Input:
  – Set of states
  – Operators [and costs]
  – Start state
  – Goal state test
• Output:
  – Path from the start state to an end (goal) state
  – May require the shortest path
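
The input/output contract above can be made concrete with breadth-first search, the first method in the list that follows. This is a minimal sketch, assuming unit operator costs (so "shortest" means fewest operator applications) and hashable states; the function name `bfs` and the integer toy problem are illustrative, not from the slides.

```python
from collections import deque
from typing import Callable, Dict, Hashable, Iterable, List, Optional

def bfs(start: Hashable,
        successors: Callable[[Hashable], Iterable[Hashable]],
        is_goal: Callable[[Hashable], bool]) -> Optional[List[Hashable]]:
    """Return a path from start to the first goal state found, using the
    fewest operator applications, or None if no goal is reachable."""
    frontier = deque([start])
    parent: Dict[Hashable, Optional[Hashable]] = {start: None}  # doubles as the visited set
    while frontier:
        state = frontier.popleft()
        if is_goal(state):                     # goal state test
            path = []
            node: Optional[Hashable] = state
            while node is not None:            # follow parent links back to start
                path.append(node)
                node = parent[node]
            return path[::-1]
        for nxt in successors(state):          # apply every operator
            if nxt not in parent:
                parent[nxt] = state
                frontier.append(nxt)
    return None

# Hypothetical toy problem: states are integers, operators are "+1" and "*2".
print(bfs(1, lambda s: [s + 1, s * 2], lambda s: s == 10))  # [1, 2, 4, 5, 10]
```

With non-uniform operator costs, BFS no longer returns the cheapest path; that is where best-first methods such as A* come in.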

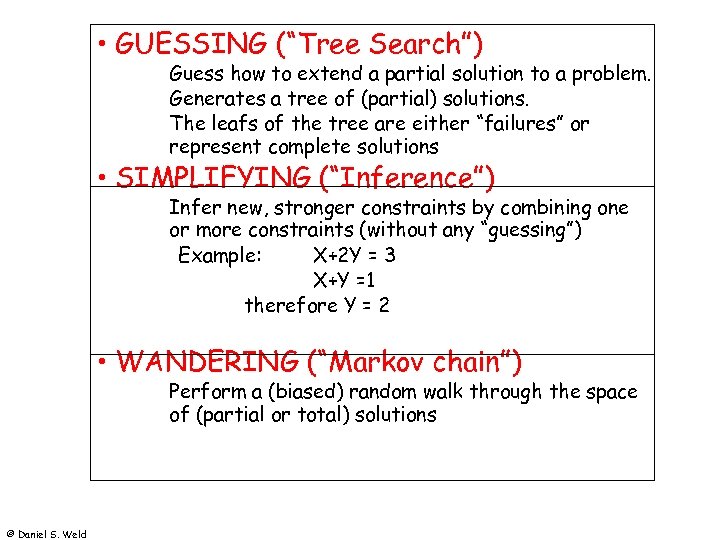

• GUESSING (“Tree Search”)
  – Guess how to extend a partial solution to a problem.
  – Generates a tree of (partial) solutions.
  – The leaves of the tree are either “failures” or represent complete solutions.
• SIMPLIFYING (“Inference”)
  – Infer new, stronger constraints by combining one or more constraints (without any “guessing”).
  – Example: X + 2Y = 3 and X + Y = 1, therefore Y = 2.
• WANDERING (“Markov chain”)
  – Perform a (biased) random walk through the space of (partial or total) solutions.
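
The SIMPLIFYING example derives Y = 2 without any guessing by combining the two constraints: subtracting the second from the first eliminates X, and substituting back then fixes X as well.

```latex
\begin{aligned}
(X + 2Y) - (X + Y) &= 3 - 1 \\
Y &= 2 \\
X &= 1 - Y = -1
\end{aligned}
```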

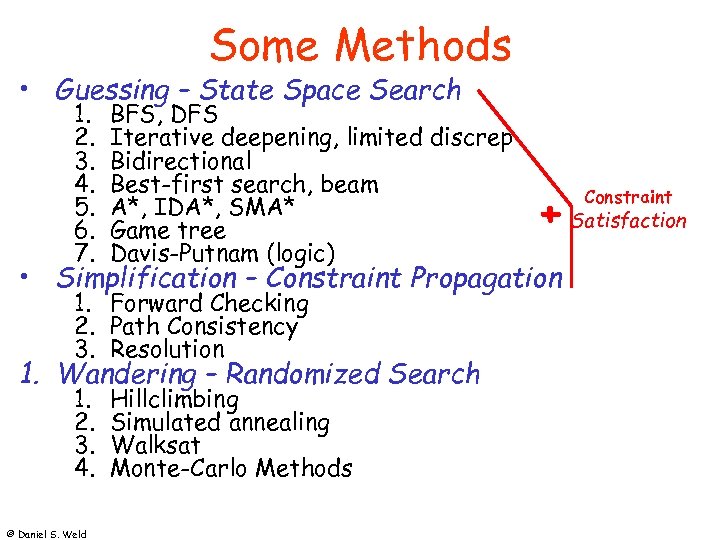

Some Methods
• Guessing – State Space Search
  1. BFS, DFS
  2. Iterative deepening, limited discrepancy
  3. Bidirectional
  4. Best-first search, beam
  5. A*, IDA*, SMA*
  6. Game tree
  7. Davis-Putnam (logic) + Constraint Satisfaction
• Simplification – Constraint Propagation
  1. Forward Checking
  2. Path Consistency
  3. Resolution
• Wandering – Randomized Search
  1. Hillclimbing
  2. Simulated annealing
  3. Walksat
  4. Monte-Carlo Methods
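
As an example of wandering, here is a sketch of WalkSAT in the textbook form (as in Russell & Norvig): pick an unsatisfied clause, then either flip a random variable from it (random-walk step, probability `p`) or flip the variable from it that maximizes the number of satisfied clauses (greedy step). The DIMACS-style clause encoding, the parameter defaults, and the name `walksat` are assumptions for illustration; the original WalkSAT scores flips by "break count", which this simplified version does not.

```python
import random
from typing import Dict, List, Optional

Clause = List[int]  # DIMACS-style literals: 3 means x3, -3 means (not x3)

def walksat(clauses: List[Clause], n_vars: int, p: float = 0.5,
            max_flips: int = 10_000, seed: Optional[int] = None) -> Optional[Dict[int, bool]]:
    """Return a satisfying assignment found by local search, or None."""
    rng = random.Random(seed)
    assign = {v: rng.random() < 0.5 for v in range(1, n_vars + 1)}  # random start

    def satisfied(clause: Clause) -> bool:
        return any(assign[abs(lit)] == (lit > 0) for lit in clause)

    def score_after_flip(var: int) -> int:
        # Number of satisfied clauses if var were flipped (flip, count, undo)
        assign[var] = not assign[var]
        count = sum(satisfied(c) for c in clauses)
        assign[var] = not assign[var]
        return count

    for _ in range(max_flips):
        unsat = [c for c in clauses if not satisfied(c)]
        if not unsat:
            return dict(assign)
        clause = rng.choice(unsat)
        if rng.random() < p:
            var = abs(rng.choice(clause))                               # random-walk step
        else:
            var = max((abs(lit) for lit in clause), key=score_after_flip)  # greedy step
        assign[var] = not assign[var]
    return None  # gave up: formula may still be satisfiable

clauses = [[1, 2], [-1, 3], [-2, -3], [1, -3]]
print(walksat(clauses, 3, seed=0))
```

Because it never proves unsatisfiability, a `None` result only means "no model found within `max_flips`"; systematic methods such as Davis-Putnam are needed for a definite "unsatisfiable".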

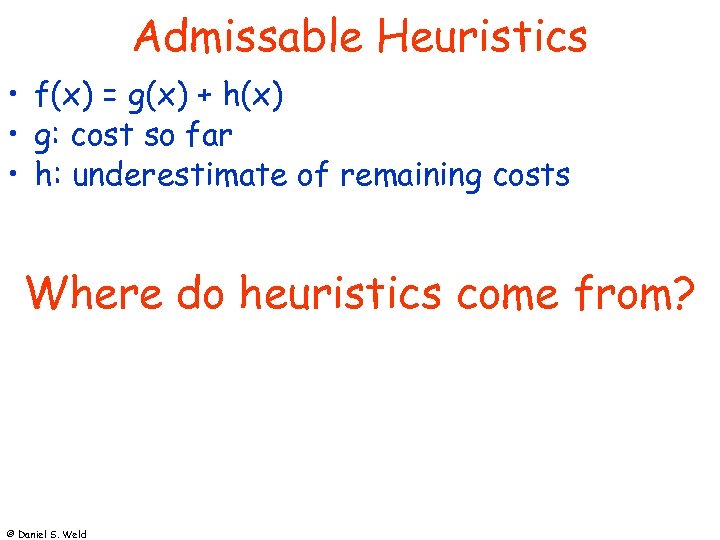

Admissible Heuristics
• f(x) = g(x) + h(x)
• g: cost so far
• h: underestimate of remaining costs
• Where do heuristics come from?
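
A minimal A* sketch showing where f = g + h is used: the frontier is a priority queue ordered by f, and with an admissible h the first goal popped lies on a cheapest path. The graph, its costs, and the heuristic values below are hypothetical; `successors` is assumed to yield `(next_state, step_cost)` pairs with positive costs.

```python
import heapq
import itertools
from typing import Callable, Dict, Hashable, Iterable, List, Optional, Tuple

def astar(start: Hashable,
          successors: Callable[[Hashable], Iterable[Tuple[Hashable, float]]],
          is_goal: Callable[[Hashable], bool],
          h: Callable[[Hashable], float]) -> Optional[Tuple[List[Hashable], float]]:
    """Expand nodes in order of f = g + h; return (path, cost) or None."""
    tie = itertools.count()                        # tie-breaker: never compare states
    frontier = [(h(start), next(tie), 0, start)]   # entries are (f, tie, g, state)
    best_g: Dict[Hashable, float] = {start: 0}     # g: cheapest cost found so far
    parent: Dict[Hashable, Optional[Hashable]] = {start: None}
    while frontier:
        _f, _, g, state = heapq.heappop(frontier)
        if g > best_g[state]:
            continue                               # stale entry: a cheaper path was found
        if is_goal(state):
            path: List[Hashable] = []
            node: Optional[Hashable] = state
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1], g
        for nxt, cost in successors(state):
            g2 = g + cost
            if g2 < best_g.get(nxt, float("inf")):  # found a cheaper way to nxt
                best_g[nxt] = g2
                parent[nxt] = state
                heapq.heappush(frontier, (g2 + h(nxt), next(tie), g2, nxt))
    return None

# Hypothetical graph; h never exceeds the true remaining cost (S:4, A:3, B:1, G:0).
graph = {"S": [("A", 1), ("B", 4)], "A": [("B", 2), ("G", 6)], "B": [("G", 1)], "G": []}
h = {"S": 3, "A": 2, "B": 1, "G": 0}
print(astar("S", graph.__getitem__, lambda s: s == "G", h.__getitem__))  # (['S', 'A', 'B', 'G'], 4)
```

If h overestimated (say h("A") = 10), A* could return the direct S, B, G path of cost 5 first, which is why admissibility matters.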

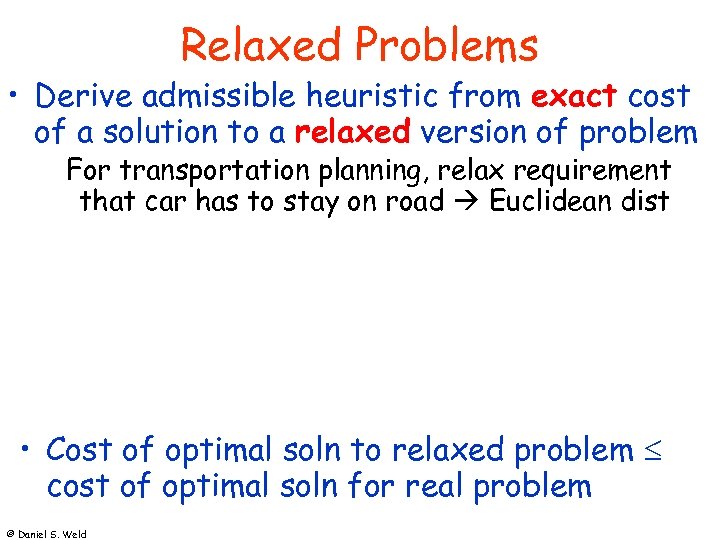

Relaxed Problems
• Derive an admissible heuristic from the exact cost of a solution to a relaxed version of the problem
  – For transportation planning, relax the requirement that the car has to stay on the road: Euclidean distance
  – For blocks world, distance = # move operations; heuristic = number of misplaced blocks. What is the relaxed problem? (# out of place = 2, true distance to goal = 3)
• Cost of optimal solution to relaxed problem ≤ cost of optimal solution for real problem
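
A sketch of the misplaced-blocks heuristic. It assumes a state is a hypothetical mapping from each block to what it rests on; the block configuration in the usage is illustrative. The count is admissible because every misplaced block must be moved at least once; one relaxation that yields exactly this count (an assumption, not necessarily the one intended on the slide) lets a block jump to its goal position in one move regardless of what is stacked on it or on its destination.

```python
from typing import Dict

# A blocks-world state maps each block to what it rests on: another block or "table".
State = Dict[str, str]

def misplaced_blocks(state: State, goal: State) -> int:
    """Number of blocks not resting on their goal support.

    Every such block must be moved at least once, so this never exceeds
    the true number of moves: an admissible heuristic."""
    return sum(1 for block, support in goal.items() if state.get(block) != support)

start = {"B": "A", "A": "table", "C": "table"}   # B is stacked on A
goal = {"B": "A", "A": "C", "C": "table"}        # A must end up on C, with B still on A
print(misplaced_blocks(start, goal))  # 1
# Yet any real plan needs 3 moves: B to table, A onto C, B back onto A.
```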

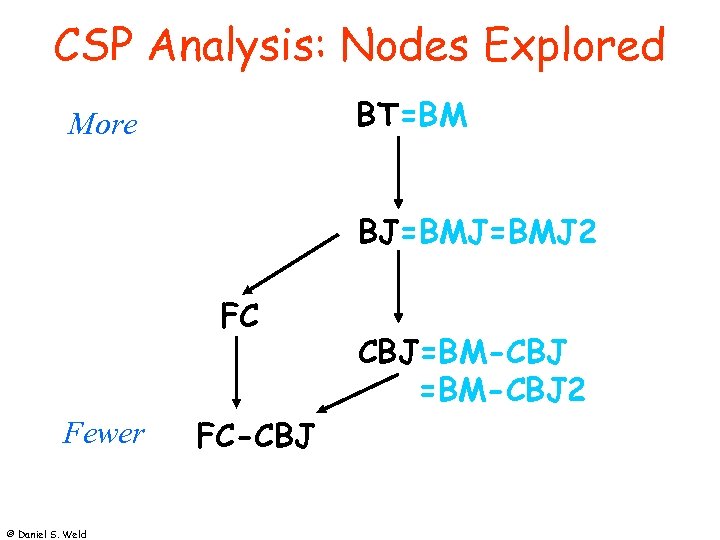

CSP Analysis: Nodes Explored
[Figure: hierarchy of backtracking variants by nodes explored, from More (top) to Fewer (bottom); labels: BT = BM, BJ = BMJ2, FC, CBJ = BM-CBJ2, FC-CBJ]

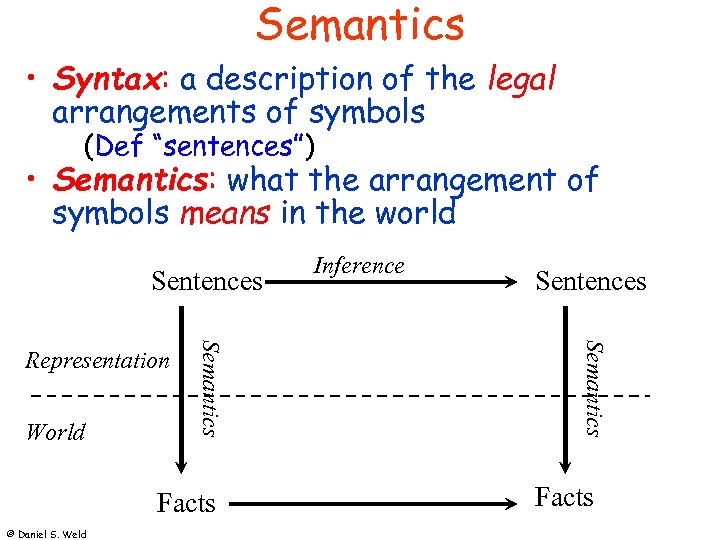

Semantics
• Syntax: a description of the legal arrangements of symbols (defines “sentences”)
• Semantics: what the arrangement of symbols means in the world
[Figure: in the representation, inference derives sentences from sentences; in the world, facts follow from facts; semantics links each sentence to a fact]

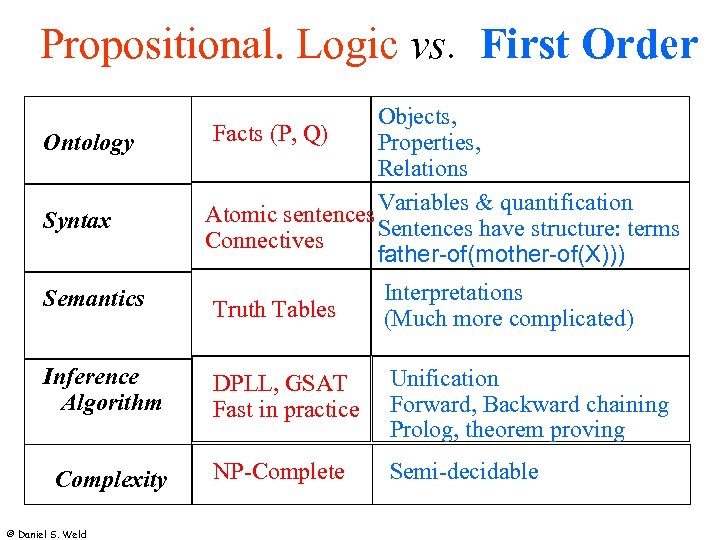

Propositional Logic vs. First-Order Logic

| | Propositional | First Order |
|---|---|---|
| Ontology | Facts (P, Q) | Objects, Properties, Relations |
| Syntax | Atomic sentences, Connectives | Variables & quantification; sentences have structure: terms, e.g. father-of(mother-of(X)) |
| Semantics | Truth Tables | Interpretations (much more complicated) |
| Inference Algorithm | DPLL, GSAT; fast in practice | Unification; forward and backward chaining; Prolog, theorem proving |
| Complexity | NP-Complete | Semi-decidable |
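
Unification, the core step of first-order inference in the table, can be sketched in a few lines. The term encoding is an assumption for illustration: variables are strings starting with an uppercase letter (`"X"`), constants are other strings, and compound terms are tuples `(functor, arg1, ..., argN)`, so father-of(mother-of(X)) becomes `("father-of", ("mother-of", "X"))`.

```python
from typing import Dict, Optional, Union

Term = Union[str, tuple]
Subst = Dict[str, Term]

def is_var(t: Term) -> bool:
    return isinstance(t, str) and t[:1].isupper()

def walk(t: Term, s: Subst) -> Term:
    # Follow variable bindings until reaching a non-variable or an unbound variable
    while is_var(t) and t in s:
        t = s[t]
    return t

def occurs(v: str, t: Term, s: Subst) -> bool:
    # Occurs check: does variable v appear inside term t?
    t = walk(t, s)
    if t == v:
        return True
    return isinstance(t, tuple) and any(occurs(v, a, s) for a in t[1:])

def unify(a: Term, b: Term, s: Optional[Subst] = None) -> Optional[Subst]:
    """Return a most general unifier extending s, or None if none exists."""
    s = {} if s is None else s
    a, b = walk(a, s), walk(b, s)
    if a == b:
        return s
    if is_var(a):
        return None if occurs(a, b, s) else {**s, a: b}
    if is_var(b):
        return None if occurs(b, a, s) else {**s, b: a}
    if isinstance(a, tuple) and isinstance(b, tuple) and a[0] == b[0] and len(a) == len(b):
        for x, y in zip(a[1:], b[1:]):   # unify arguments left to right
            s = unify(x, y, s)
            if s is None:
                return None
        return s
    return None                          # clash: different constants or functors

print(unify(("father-of", ("mother-of", "X")), ("father-of", "Y")))  # {'Y': ('mother-of', 'X')}
print(unify("X", ("f", "X")))                                        # None (occurs check)
```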

Computational Cliff
• Description logics
• Knowledge representation systems

Machine Learning Overview
• Inductive learning: definition, need for bias, …
• One method: decision tree induction
  – Hill climbing through the space of DTs
  – Missing attributes
  – Multivariate attributes
  – Overfitting
• Ensembles
• Naïve Bayes classifier
• Co-learning

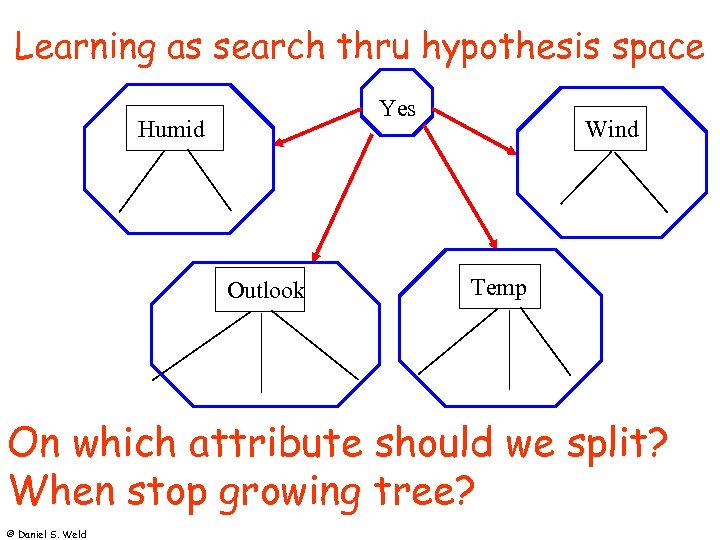

Learning as Search through Hypothesis Space
[Figure: partial decision trees splitting on Outlook, Humid, Wind, or Temp, with a “Yes” leaf]
• On which attribute should we split?
• When should we stop growing the tree?
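
One common answer to "on which attribute should we split?" is ID3-style information gain: the greedy hill-climbing step picks the attribute whose split most reduces label entropy. The slide does not name a criterion, so this is an assumption; the four examples are a hypothetical toy set, not the standard PlayTennis data.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence

def entropy(labels: Sequence[str]) -> float:
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def information_gain(examples: List[Dict[str, str]], attr: str, target: str) -> float:
    """Entropy of the target minus the weighted entropy after splitting on attr."""
    remainder = 0.0
    for value in set(e[attr] for e in examples):
        subset = [e[target] for e in examples if e[attr] == value]
        remainder += len(subset) / len(examples) * entropy(subset)
    return entropy([e[target] for e in examples]) - remainder

examples = [  # hypothetical toy data
    {"Outlook": "Sunny", "Wind": "Weak", "Play": "No"},
    {"Outlook": "Sunny", "Wind": "Strong", "Play": "No"},
    {"Outlook": "Overcast", "Wind": "Weak", "Play": "Yes"},
    {"Outlook": "Overcast", "Wind": "Strong", "Play": "Yes"},
]
gains = {a: information_gain(examples, a, "Play") for a in ("Outlook", "Wind")}
print(gains)                       # {'Outlook': 1.0, 'Wind': 0.0}
print(max(gains, key=gains.get))   # Outlook
```

A gain near zero for every remaining attribute is one possible signal for "when to stop growing the tree", though pruning against held-out data is the usual guard against overfitting.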

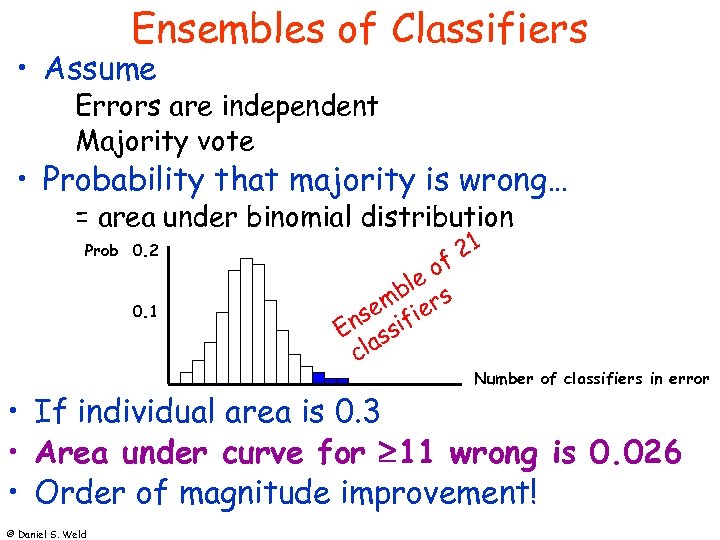

Ensembles of Classifiers
• Assume errors are independent; combine by majority vote
• Probability that the majority is wrong = area under the binomial distribution
[Figure: probability (y-axis, 0 to 0.2) vs. number of classifiers in error (x-axis) for an ensemble of 21 classifiers]
• If each individual classifier's error rate is 0.3
• Area under the curve for 11 or more wrong is 0.026
• Order-of-magnitude improvement!
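
The 0.026 figure can be checked directly: under the independence assumption, the number of classifiers in error is Binomial(n, p), and the majority of 21 is wrong when 11 or more err. The function name is illustrative; `math.comb` needs Python 3.8 or later.

```python
from math import comb

def majority_error(n_classifiers: int, p_error: float) -> float:
    """P(a strict majority of n independent classifiers errs), each with error p."""
    k_min = n_classifiers // 2 + 1
    return sum(comb(n_classifiers, k) * p_error**k * (1 - p_error)**(n_classifiers - k)
               for k in range(k_min, n_classifiers + 1))

print(round(majority_error(21, 0.3), 3))  # 0.026
```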

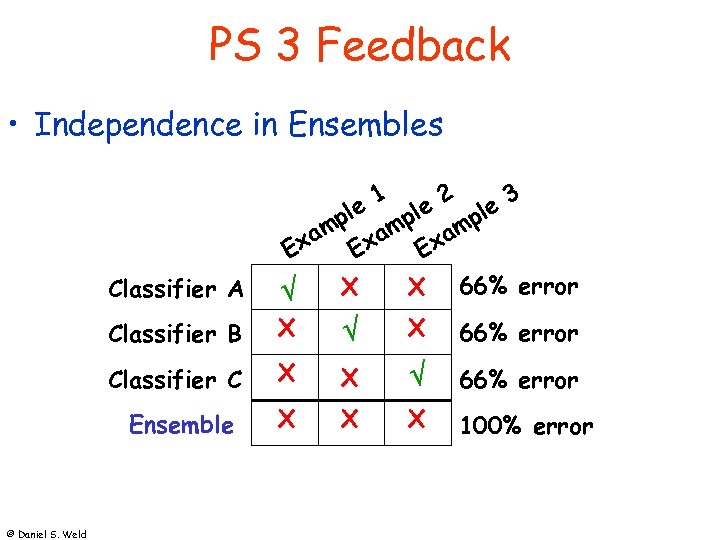

PS 3 Feedback: Independence in Ensembles
[Figure: three examples (1, 2, 3); classifiers A, B, and C each err (X) on two of the three examples (66% error), and the majority-vote ensemble errs on all three (100% error)]
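
The feedback slide's point is that the binomial argument breaks when errors are correlated. Below is one hypothetical error pattern consistent with the figure: each classifier misses two of the three examples, but the misses overlap so that every example is missed by two of the three classifiers, and majority vote then gets everything wrong.

```python
# Hypothetical error pattern: the set of examples each classifier gets wrong.
errors = {"A": {1, 2}, "B": {2, 3}, "C": {1, 3}}
examples = [1, 2, 3]

def majority_wrong(example: int) -> bool:
    # The ensemble errs when more than half of the classifiers err on the example
    return sum(example in wrong for wrong in errors.values()) > len(errors) / 2

ensemble_errors = {x for x in examples if majority_wrong(x)}

for name, wrong in errors.items():
    print(name, f"{len(wrong)}/{len(examples)} wrong")            # each: 2/3 wrong
print("ensemble", f"{len(ensemble_errors)}/{len(examples)} wrong")  # 3/3 wrong
```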

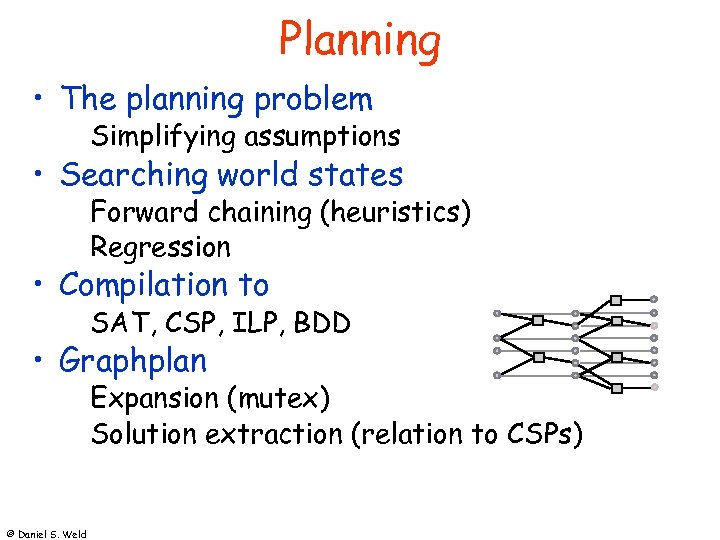

Planning
• The planning problem
  – Simplifying assumptions
• Searching world states
  – Forward chaining (heuristics)
  – Regression
• Compilation to SAT, CSP, ILP, BDD
• Graphplan
  – Expansion (mutex)
  – Solution extraction (relation to CSPs)

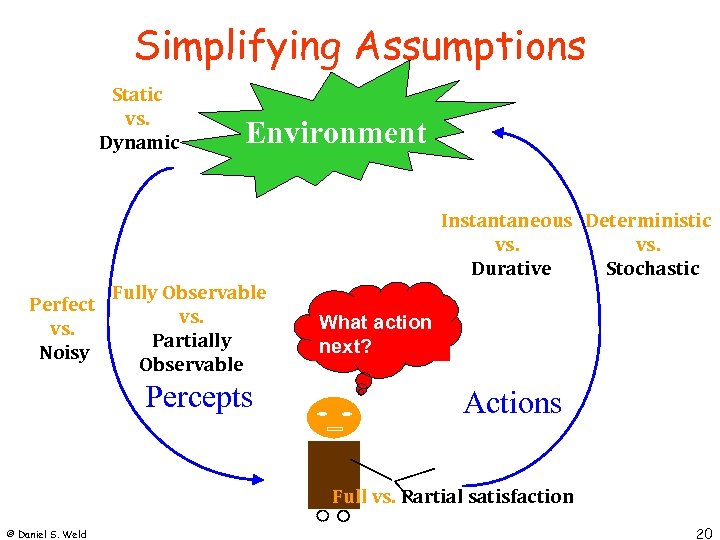

Simplifying Assumptions
[Figure: an agent receives percepts from the environment, decides “What action next?”, and emits actions]
• Static vs. Dynamic
• Instantaneous vs. Durative
• Deterministic vs. Stochastic
• Fully Observable vs. Partially Observable
• Perfect vs. Noisy
• Full vs. Partial satisfaction

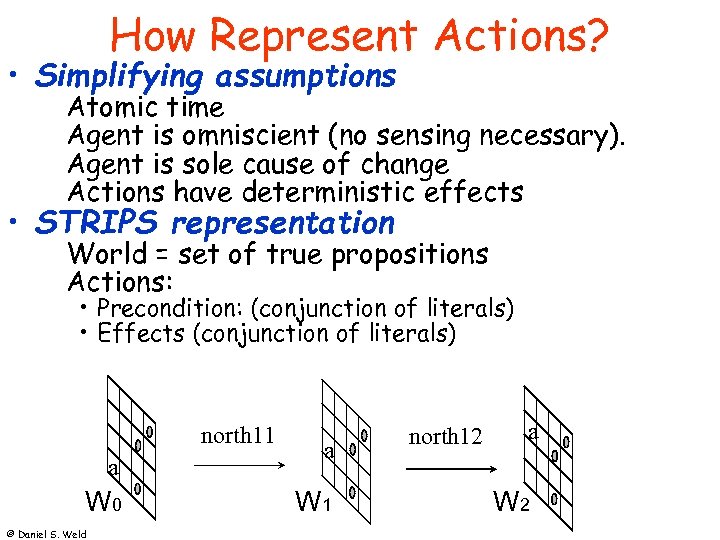

How to Represent Actions?
• Simplifying assumptions
  – Atomic time
  – Agent is omniscient (no sensing necessary)
  – Agent is the sole cause of change
  – Actions have deterministic effects
• STRIPS representation
  – World = set of true propositions
  – Actions: precondition (conjunction of literals); effects (conjunction of literals)
[Figure: world states W0 → W1 → W2, reached by actions north11 and north12]

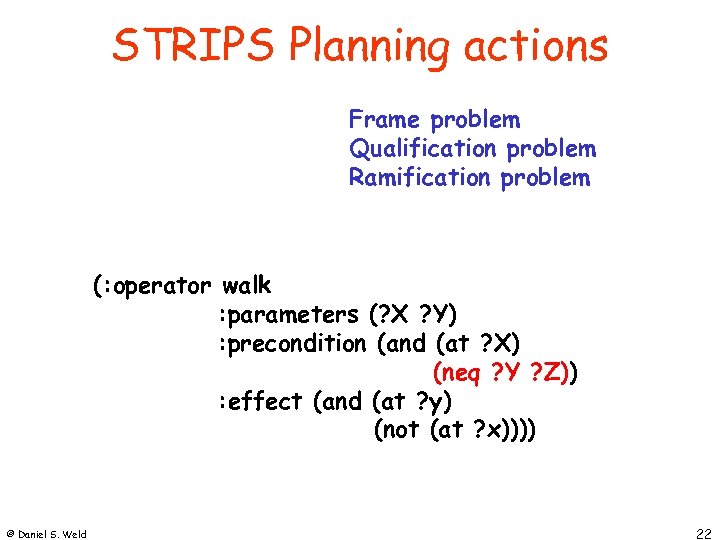

STRIPS Planning Actions
• Frame problem
• Qualification problem
• Ramification problem

  (:operator walk
   :parameters (?x ?y)
   :precondition (and (at ?x) (neq ?x ?y))
   :effect (and (at ?y) (not (at ?x))))
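
A sketch of how a STRIPS operator such as `walk` acts on a world represented as a set of true propositions: an action applies when its preconditions are a subset of the state, and the successor state is (state minus delete effects) plus add effects. Everything not mentioned persists, which is how STRIPS sidesteps the frame problem. The `Action` class, the ground-instance constructor `walk`, and the location names are hypothetical; only positive preconditions are modeled, and `neq` is checked when grounding.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

Prop = Tuple[str, ...]  # e.g. ("at", "home")

@dataclass(frozen=True)
class Action:
    name: str
    pre: FrozenSet[Prop]     # positive preconditions
    add: FrozenSet[Prop]     # add effects
    delete: FrozenSet[Prop]  # delete effects (the "not" literals)

def walk(x: str, y: str) -> Action:
    """Ground instance of the walk operator; enforces (neq ?x ?y) at grounding time."""
    if x == y:
        raise ValueError("walk requires distinct locations")
    return Action(f"walk({x},{y})",
                  pre=frozenset({("at", x)}),
                  add=frozenset({("at", y)}),
                  delete=frozenset({("at", x)}))

def applicable(state: FrozenSet[Prop], a: Action) -> bool:
    return a.pre <= state

def apply(state: FrozenSet[Prop], a: Action) -> FrozenSet[Prop]:
    # STRIPS assumption: propositions not mentioned in the effects persist unchanged
    if not applicable(state, a):
        raise ValueError(f"{a.name} is not applicable")
    return (state - a.delete) | a.add

s0 = frozenset({("at", "home"), ("has", "keys")})
s1 = apply(s0, walk("home", "office"))
print(sorted(s1))  # [('at', 'office'), ('has', 'keys')]
```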

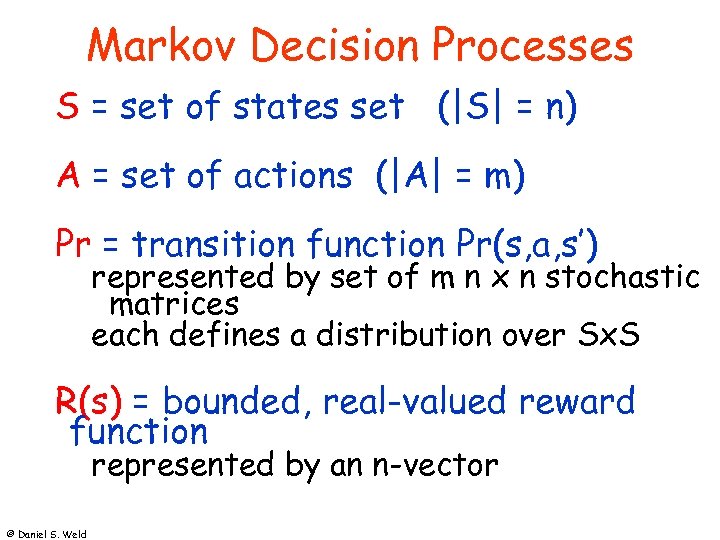

Markov Decision Processes
• S = set of states (|S| = n)
• A = set of actions (|A| = m)
• Pr = transition function Pr(s, a, s’), represented by a set of m n × n stochastic matrices; for each state s, row s of a matrix is a distribution over successor states s’
• R(s) = bounded, real-valued reward function, represented by an n-vector

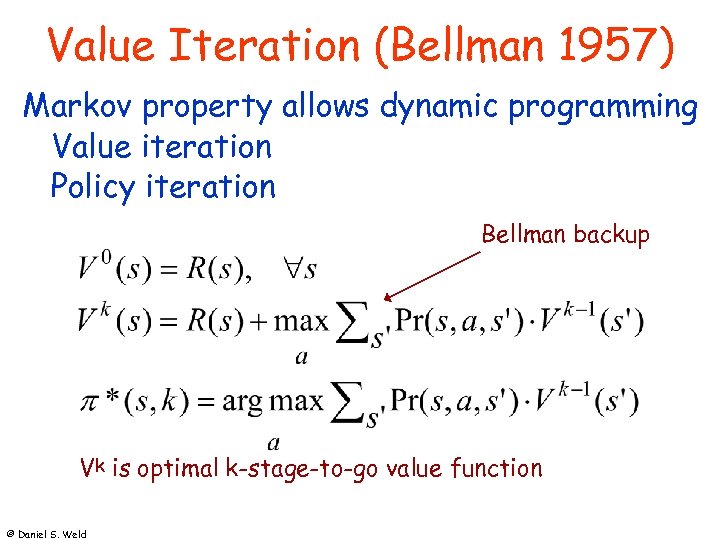

Value Iteration (Bellman 1957)
• Markov property allows dynamic programming
• Value iteration
• Policy iteration
• Bellman backup: V^k is the optimal k-stage-to-go value function
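
A value-iteration sketch using exactly the representation from the MDP definition: m stochastic n × n matrices `P[a]` and an n-vector `R`. It assumes the undiscounted finite-horizon backup V^k(s) = R(s) + max_a Σ_s' Pr(s, a, s') V^(k-1)(s') with V^0 = R; the optional discount `gamma` and the two-state example are additions for illustration.

```python
from typing import List, Optional, Tuple

Matrix = List[List[float]]

def value_iteration(P: List[Matrix], R: List[float], k: int,
                    gamma: float = 1.0) -> Tuple[List[float], List[Optional[int]]]:
    """P[a][s][t] = Pr(s, a, t). Returns (V^k, policy), where policy[s] is the
    best action in state s with k stages to go."""
    n, m = len(R), len(P)
    V = list(R)                               # V^0 = R: no stages left to go
    policy: List[Optional[int]] = [None] * n
    for _ in range(k):
        # Bellman backup: Q[s][a] = R(s) + gamma * sum_t Pr(s, a, t) * V(t)
        Q = [[R[s] + gamma * sum(P[a][s][t] * V[t] for t in range(n))
              for a in range(m)] for s in range(n)]
        policy = [max(range(m), key=lambda a: Q[s][a]) for s in range(n)]
        V = [Q[s][policy[s]] for s in range(n)]
    return V, policy

# Hypothetical 2-state MDP: action 0 = stay, action 1 = switch; only state 1 is rewarding.
P = [[[1, 0], [0, 1]],   # stay
     [[0, 1], [1, 0]]]   # switch
R = [0, 1]
print(value_iteration(P, R, 2))  # ([2.0, 3.0], [1, 0])
```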

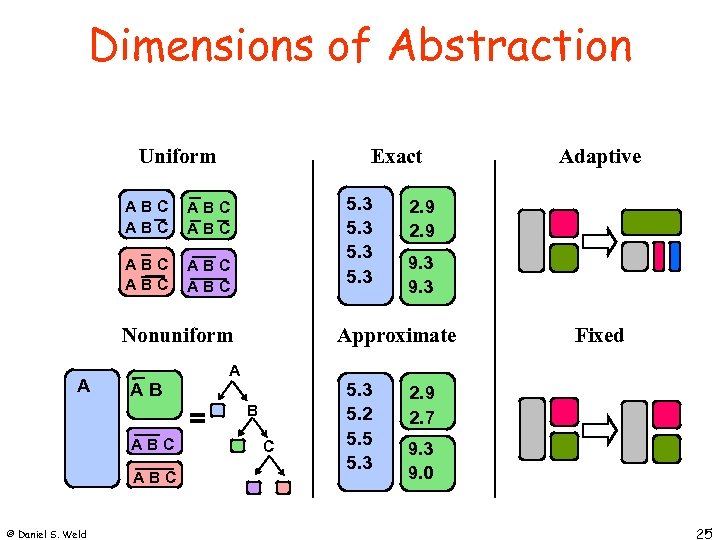

Dimensions of Abstraction
[Figure: value tables over state variables A, B, C illustrating three dimensions of abstraction: Uniform vs. Nonuniform, Exact vs. Approximate, Adaptive vs. Fixed]

Partial Observability
• Belief states
• POMDP

Reinforcement Learning
• Adaptive dynamic programming
  – Learns a utility function on states
• Temporal-difference learning
  – Doesn’t update the value of every state; adjusts only the states actually experienced
• Exploration functions
  – Balance exploration / exploitation
• Function approximation
  – Compress a large state space into a small one
  – Linear function approximation, neural nets, …
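
A sketch of the TD(0) update, V(s) ← V(s) + α (R(s) + γ V(s') - V(s)), applied only to the state just left; no other state's value changes. It follows the slides' state-reward R(s) convention; the state names, α, γ, and the terminal value are hypothetical.

```python
from typing import Dict, Hashable

def td0_update(V: Dict[Hashable, float], s: Hashable, r: float, s_next: Hashable,
               alpha: float, gamma: float) -> None:
    """One TD(0) update after observing s (with reward r) followed by s_next."""
    v_s, v_next = V.get(s, 0.0), V.get(s_next, 0.0)
    V[s] = v_s + alpha * (r + gamma * v_next - v_s)  # move V(s) toward the TD target

V = {"A": 0.0, "B": 0.0, "T": 1.0}       # T: terminal state with fixed value 1
td0_update(V, "B", 0.0, "T", alpha=0.5, gamma=1.0)
td0_update(V, "A", 0.0, "B", alpha=0.5, gamma=1.0)
print(V)  # {'A': 0.25, 'B': 0.5, 'T': 1.0}
```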

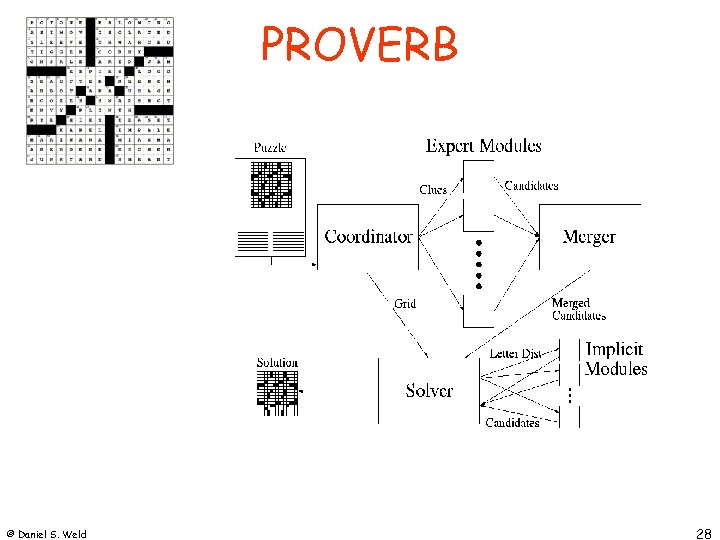

PROVERB

PROVERB
• Weaknesses

PROVERB
• Future Work

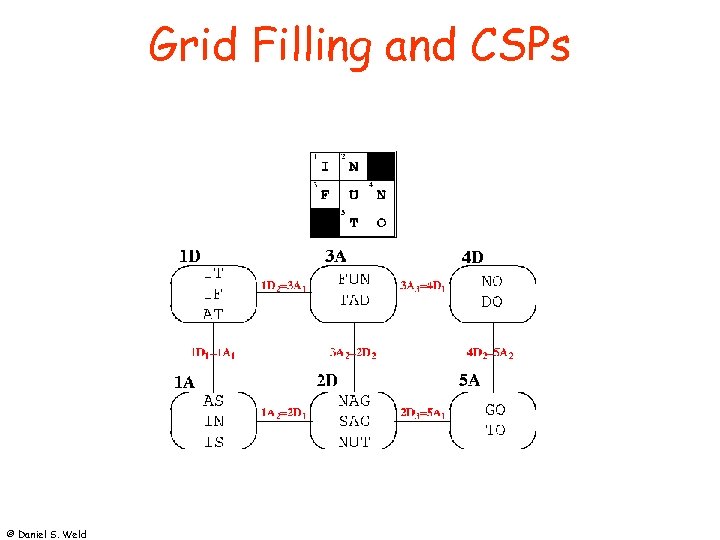

Grid Filling and CSPs

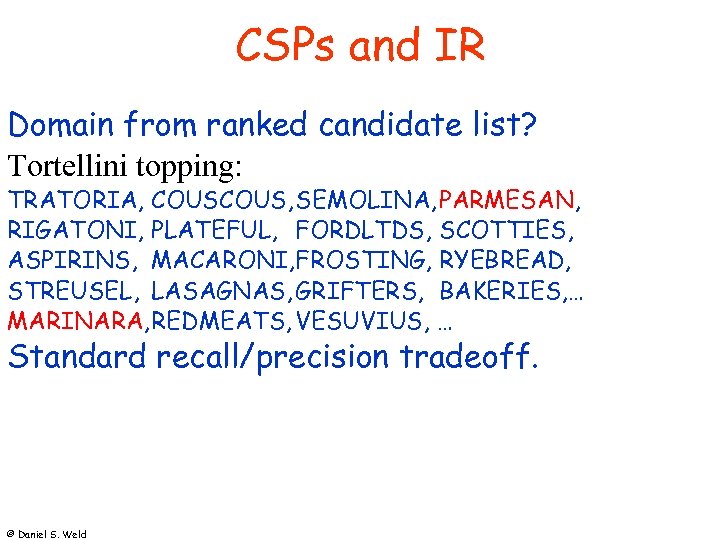

CSPs and IR
• Domain from ranked candidate list?
• Tortellini topping: TRATORIA, COUS, SEMOLINA, PARMESAN, RIGATONI, PLATEFUL, FORDLTDS, SCOTTIES, ASPIRINS, MACARONI, FROSTING, RYEBREAD, STREUSEL, LASAGNAS, GRIFTERS, BAKERIES, … MARINARA, REDMEATS, VESUVIUS, …
• Standard recall/precision tradeoff.

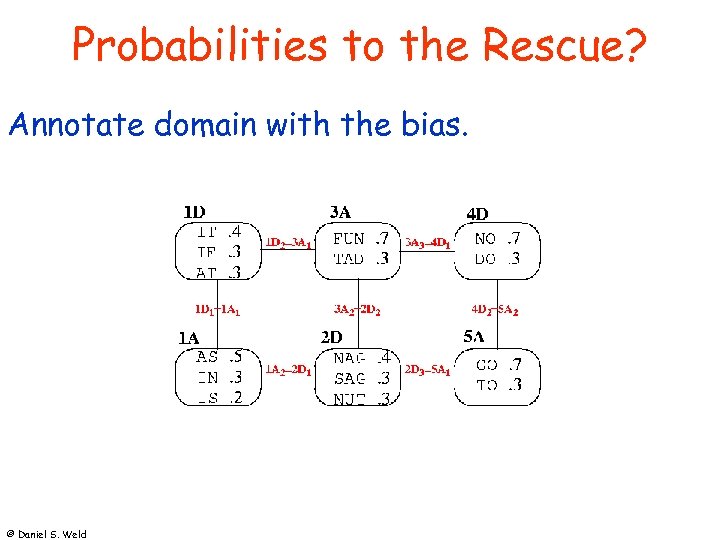

Probabilities to the Rescue?
• Annotate domain with the bias.

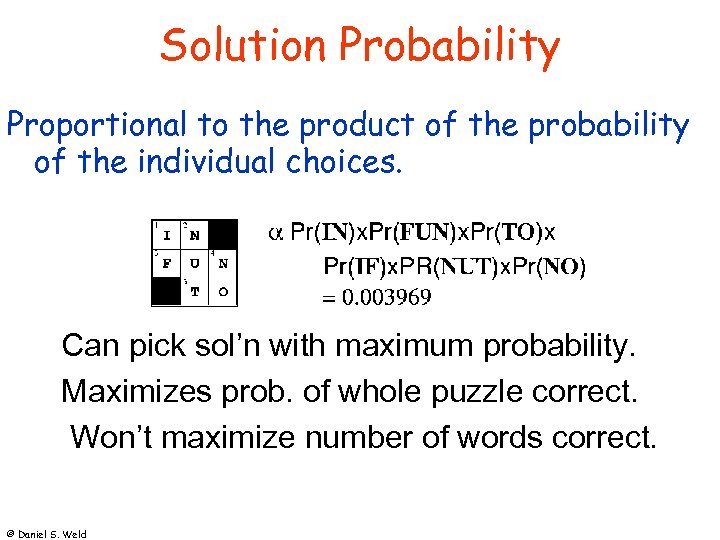

Solution Probability
• Proportional to the product of the probabilities of the individual choices.
• Can pick the solution with maximum probability.
  – Maximizes the probability that the whole puzzle is correct.
  – Won’t maximize the number of words correct.
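
A toy illustration of why the maximum-probability grid need not maximize the expected number of correct words. The two slots, their candidate lists, the probabilities, and the crossing rule (the across word's last letter is the down word's first letter) are all hypothetical; exact fractions avoid rounding noise.

```python
from fractions import Fraction as F
from itertools import product

# Hypothetical candidate lists with per-word probabilities.
across = {"CAT": F(4, 10), "COD": F(3, 10), "HID": F(3, 10)}
down = {"TEN": F(5, 10), "DEN": F(5, 10)}

def consistent(a: str, d: str) -> bool:
    # Assumed geometry: across word's last letter is the down word's first letter
    return a[-1] == d[0]

# A full solution's probability is proportional to the product of its choices.
weights = {(a, d): across[a] * down[d]
           for a, d in product(across, down) if consistent(a, d)}
total = sum(weights.values())
posterior = {sol: w / total for sol, w in weights.items()}

# Marginal probability that each individual word is correct.
p_across = {a: sum(p for (x, _), p in posterior.items() if x == a) for a in across}
p_down = {d: sum(p for (_, y), p in posterior.items() if y == d) for d in down}
expected_words = {(a, d): p_across[a] + p_down[d] for a, d in posterior}

map_solution = max(posterior, key=posterior.get)
best_words = max(expected_words, key=expected_words.get)
print(map_solution, posterior[map_solution], expected_words[map_solution])  # ('CAT', 'TEN') 2/5 4/5
print(best_words, posterior[best_words], expected_words[best_words])        # ('COD', 'DEN') 3/10 9/10
```

The maximum-probability grid is entirely right 40% of the time but gets only 0.8 words right on average; (COD, DEN) is entirely right less often yet scores 0.9 expected words.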

Trivial Pursuit™
• Race around the board, answering questions.
• Categories: Geography, Entertainment, History, Literature, Science, Sports

Wigwam
• QA via AQUA (Abney et al. 00)
  – Back off: word match in order helps score.
  – “When was Amelia Earhart's last flight?”
  – 1937, 1897 (birth), 1997 (reenactment)
  – Named entities only, 100 G of web pages
• Move selection via MDP (Littman 00)
  – Estimate category accuracy.
  – Minimize expected turns to finish.

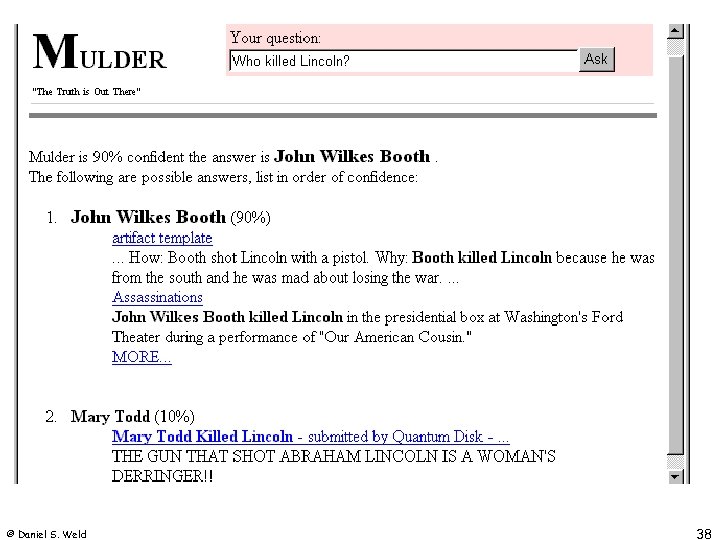

Mulder
• Question answering system
  – User asks a natural language question: “Who killed Lincoln?”
  – Mulder answers: “John Wilkes Booth”
• KB = Web/search engines
• Domain-independent
• Fully automated

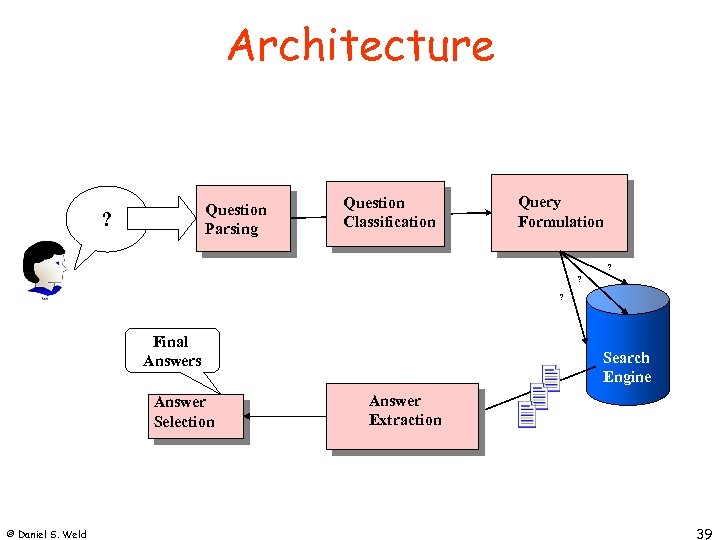

Architecture
[Figure: Mulder pipeline: Question Parsing → Question Classification → Query Formulation → Search Engine → Answer Extraction → Answer Selection → Final Answers]

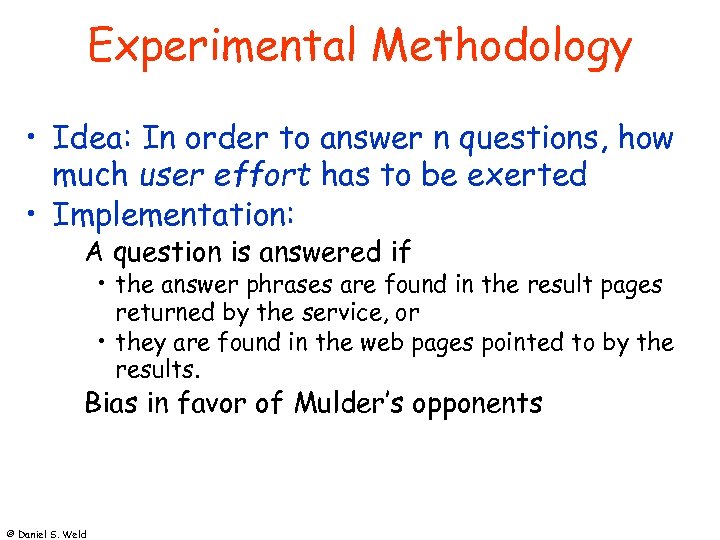

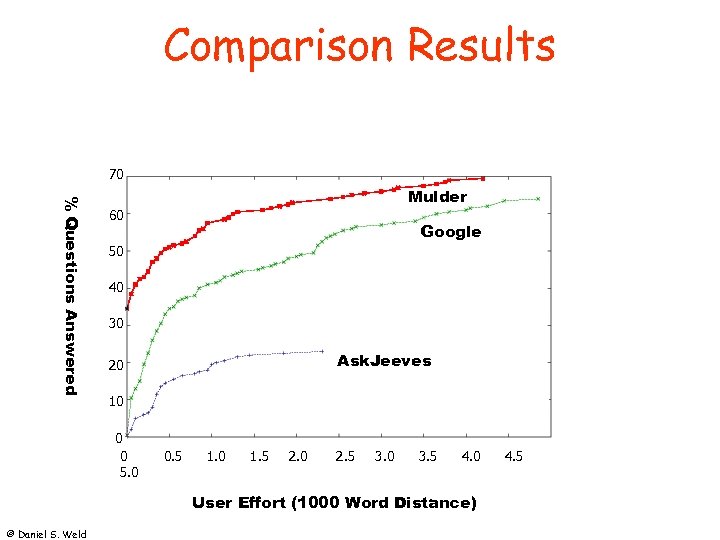

Experimental Methodology
• Idea: to answer n questions, how much effort must the user exert?
• Implementation: a question counts as answered if
  – the answer phrases are found in the result pages returned by the service, or
  – they are found in the web pages pointed to by the results.
• This biases the comparison in favor of Mulder’s opponents

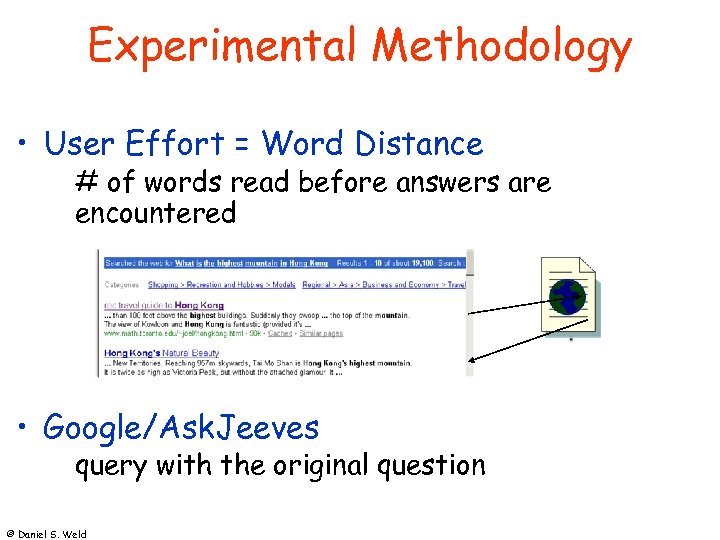

Experimental Methodology
• User effort = word distance: # of words read before the answers are encountered
• Google and Ask Jeeves are queried with the original question
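
A sketch of the word-distance measure: the number of words a user reads, in order, before the answer phrase first appears. The tokenization (a simple regex, case-insensitive, exact phrase match), the assumption that each page is read in full before the next, and the sample text are all assumptions; the methodology's step of following pages pointed to by results is not modeled.

```python
import re
from typing import List, Optional

TOKEN = r"[\w']+"

def word_distance(result_texts: List[str], answer: str) -> Optional[int]:
    """Words read before the answer phrase first appears, or None if it never does."""
    answer_words = re.findall(TOKEN, answer.lower())
    read = 0
    for text in result_texts:                 # results are read in ranked order
        words = re.findall(TOKEN, text.lower())
        for i in range(len(words) - len(answer_words) + 1):
            if words[i:i + len(answer_words)] == answer_words:
                return read + i
        read += len(words)                    # whole result read without finding it
    return None

pages = ["Lincoln was shot in 1865.",
         "He was killed by John Wilkes Booth at Ford's Theatre."]
print(word_distance(pages, "John Wilkes Booth"))  # 9
```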

Comparison Results
[Figure: % questions answered (0 to 70) vs. user effort (1000 word distance, 0 to 5.0) for Mulder, Google, and Ask Jeeves]

Know It All
• Research project started June 2003
• Large-scale information extraction
  – Domain-independent extraction
  – PMI-IR
  – Completeness of web

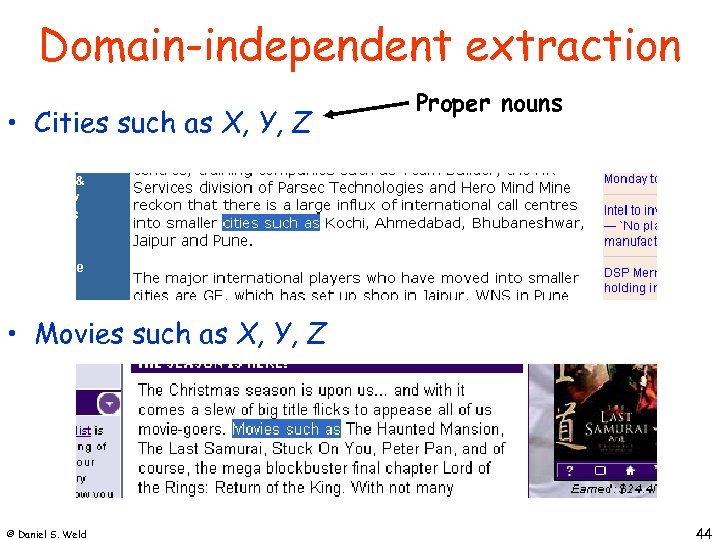

Domain-independent Extraction
• Cities such as X, Y, Z
  – Proper nouns
• Movies such as X, Y, Z
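
A sketch of the "&lt;class&gt; such as X, Y, Z" extraction pattern. Approximating proper nouns as runs of capitalized words, and the regex itself, are assumptions for illustration; a real extractor would use a part-of-speech tagger or NP chunker rather than capitalization alone.

```python
import re
from typing import List

PROPER_NOUN = r"[A-Z][a-z]+(?:\s+[A-Z][a-z]+)*"  # crude proxy: capitalized word run

def extract_instances(class_name: str, text: str) -> List[str]:
    """Extract proper nouns listed after '<class_name> such as ...'."""
    pattern = re.compile(
        rf"\b{re.escape(class_name)}\s+such\s+as\s+"
        rf"({PROPER_NOUN}(?:\s*,\s*(?:and\s+)?{PROPER_NOUN}|\s+and\s+{PROPER_NOUN})*)")
    found: List[str] = []
    for match in pattern.finditer(text):
        found.extend(re.findall(PROPER_NOUN, match.group(1)))  # split the list
    return found

text = ("She has lived in cities such as Boston, Seattle and New York, "
        "but prefers movies such as Casablanca.")
print(extract_instances("cities", text))  # ['Boston', 'Seattle', 'New York']
print(extract_instances("movies", text))  # ['Casablanca']
```

The same two patterns work for any class name, which is what makes the approach domain-independent; the proper-noun filter is what keeps "but" or "last year" out of the results.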

TOEFL Synonyms
• Used in college applications.
• fish: (a) scale (b) angle (c) swim (d) dredge
• Turney: PMI-IR

Comprehensive Coverage
• Search on: boston seattle paris chicago london

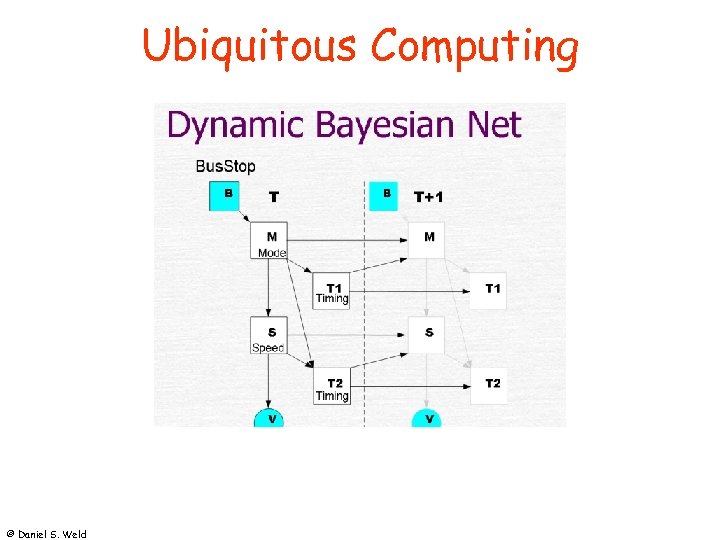

Ubiquitous Computing

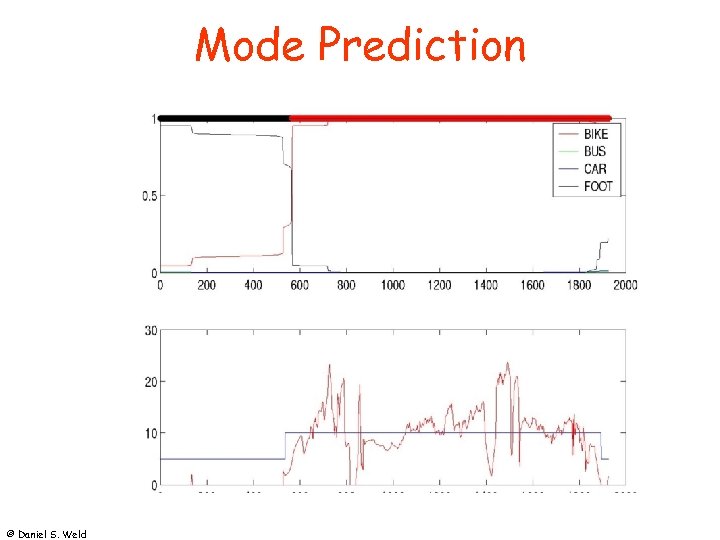

Mode Prediction

Placelab
• Location-aware computing

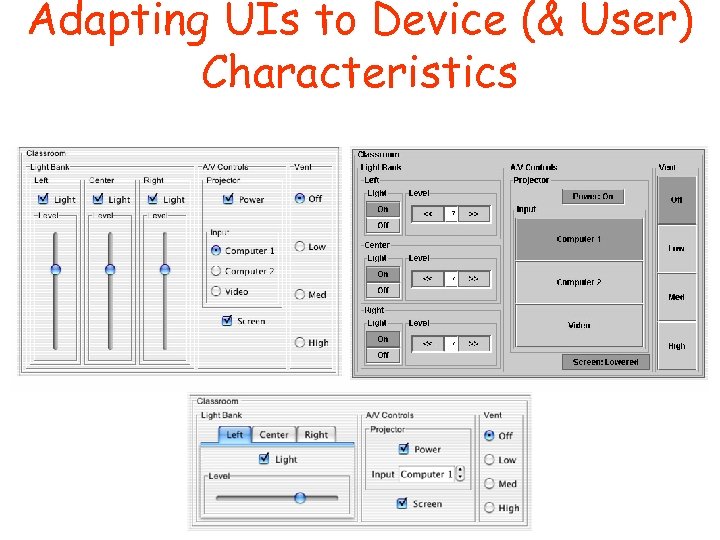

Adapting UIs to Device (& User) Characteristics
