2ca18f6e50841b927b58192a8631230f.ppt

- Number of slides: 30

Systems I: Pipelining I

Topics
- Pipelining principles
- Pipeline overheads
- Pipeline registers and stages

Overview

What’s wrong with the sequential (SEQ) Y86?
- It’s slow!
- Each piece of hardware is used only a small fraction of the time
- We would like to find a way to get more performance with only a little more hardware

General Principles of Pipelining
- Goal
- Difficulties

Creating a Pipelined Y86 Processor
- Rearranging SEQ
- Inserting pipeline registers
- Problems with data and control hazards

Real-World Pipelines: Car Washes

[Figure: three car wash layouts: sequential, parallel, pipelined]

Idea
- Divide process into independent stages
- Move objects through stages in sequence
- At any given time, multiple objects are being processed

Laundry example

Ann, Brian, Cathy, and Dave (A, B, C, D) each have one load of clothes to wash, dry, and fold
- Washer takes 30 minutes
- Dryer takes 30 minutes
- “Folder” takes 30 minutes
- “Stasher” takes 30 minutes to put clothes into drawers

Slide courtesy of D. Patterson

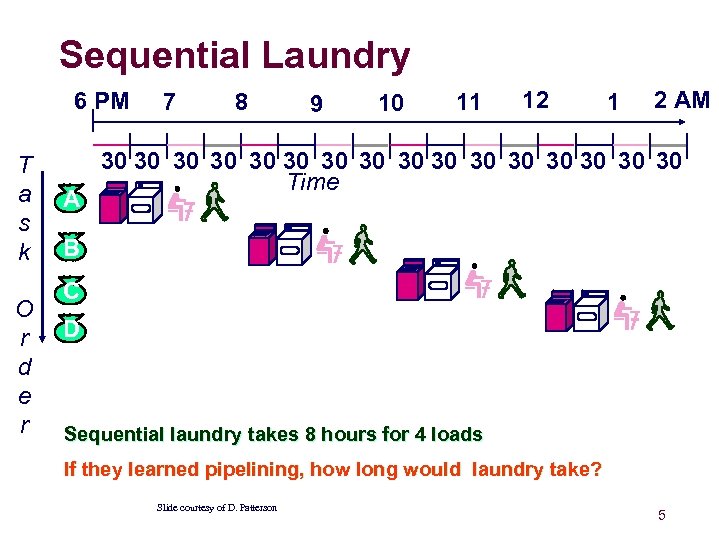

Sequential Laundry

[Figure: timeline from 6 PM to 2 AM; task order A, B, C, D on the vertical axis, time on the horizontal axis; each load occupies four consecutive 30-minute stages]

- Sequential laundry takes 8 hours for 4 loads
- If they learned pipelining, how long would laundry take?

Slide courtesy of D. Patterson

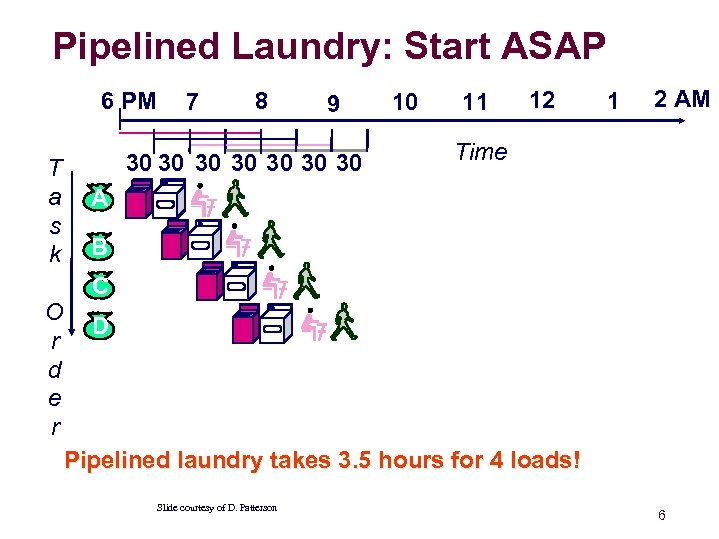

Pipelined Laundry: Start ASAP

[Figure: timeline from 6 PM to 2 AM; task order A, B, C, D on the vertical axis, time on the horizontal axis; loads overlap, with stages marked in 30-minute steps]

- Pipelined laundry takes 3.5 hours for 4 loads!

Slide courtesy of D. Patterson

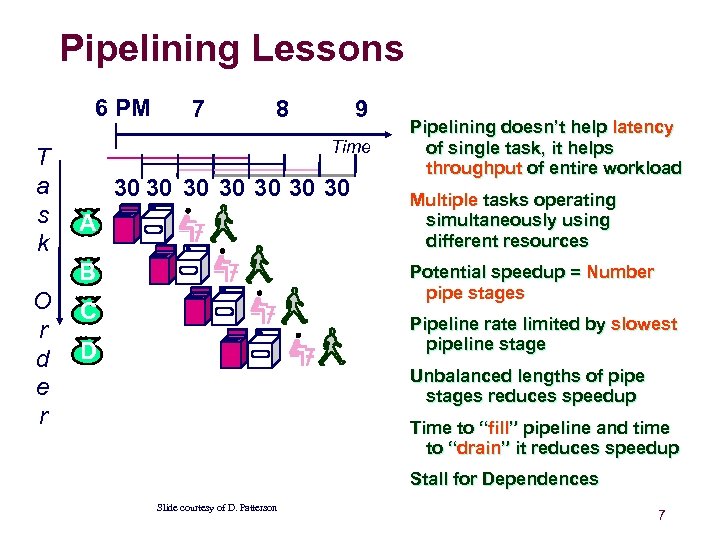
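The 8-hour and 3.5-hour figures can be checked with a short calculation. This is a minimal sketch, assuming four equal 30-minute stages (washer, dryer, folder, stasher) and that a new load starts as soon as the previous one leaves the first stage; the function names are illustrative, not from the slides.

```python
def sequential_minutes(loads: int, stages: int, stage_minutes: int) -> int:
    """Each load finishes every stage before the next load starts."""
    return loads * stages * stage_minutes


def pipelined_minutes(loads: int, stages: int, stage_minutes: int) -> int:
    """A new load enters the first stage every stage_minutes."""
    # The first load needs `stages` steps; each later load adds one more step.
    return (stages + loads - 1) * stage_minutes


seq = sequential_minutes(loads=4, stages=4, stage_minutes=30)
pipe = pipelined_minutes(loads=4, stages=4, stage_minutes=30)
print(seq / 60, pipe / 60)  # 8.0 3.5
```

The latency of a single load is the same 2 hours in both cases; only the total time for the whole workload shrinks.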

Pipelining Lessons

[Figure: pipelined laundry timeline from 6 PM to 9 PM; task order A, B, C, D on the vertical axis, time on the horizontal axis]

- Pipelining doesn’t help the latency of a single task; it helps the throughput of the entire workload
- Multiple tasks operate simultaneously using different resources
- Potential speedup = number of pipe stages
- Pipeline rate is limited by the slowest pipeline stage
- Unbalanced lengths of pipe stages reduce speedup
- Time to “fill” the pipeline and time to “drain” it reduce speedup
- Stall for dependences

Slide courtesy of D. Patterson

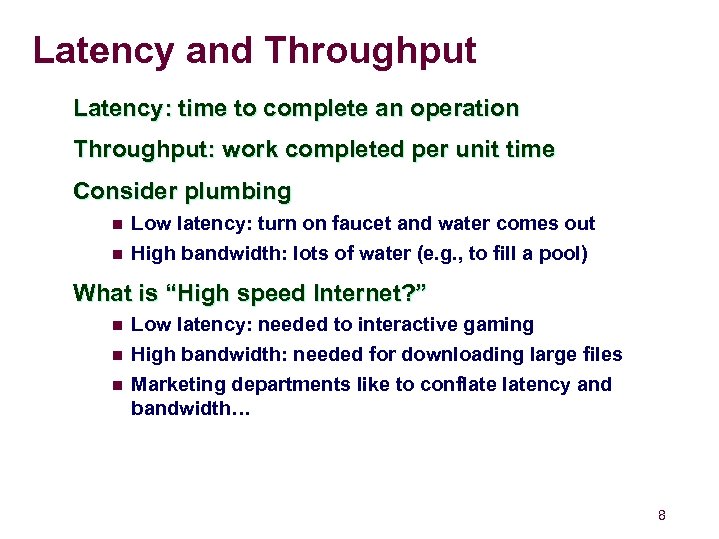

Latency and Throughput

- Latency: time to complete an operation
- Throughput: work completed per unit time

Consider plumbing
- Low latency: turn on faucet and water comes out
- High bandwidth: lots of water (e.g., to fill a pool)

What is “high-speed Internet”?
- Low latency: needed for interactive gaming
- High bandwidth: needed for downloading large files
- Marketing departments like to conflate latency and bandwidth…

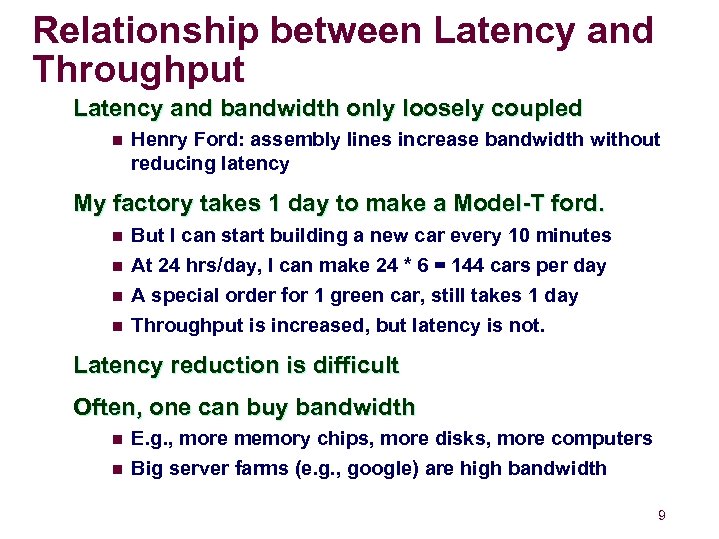

Relationship between Latency and Throughput

Latency and bandwidth are only loosely coupled
- Henry Ford: assembly lines increase bandwidth without reducing latency

My factory takes 1 day to make a Model T Ford.
- But I can start building a new car every 10 minutes
- At 24 hrs/day, I can make 24 * 6 = 144 cars per day
- A special order for 1 green car still takes 1 day
- Throughput is increased, but latency is not.

Latency reduction is difficult

Often, one can buy bandwidth
- E.g., more memory chips, more disks, more computers
- Big server farms (e.g., Google) are high bandwidth

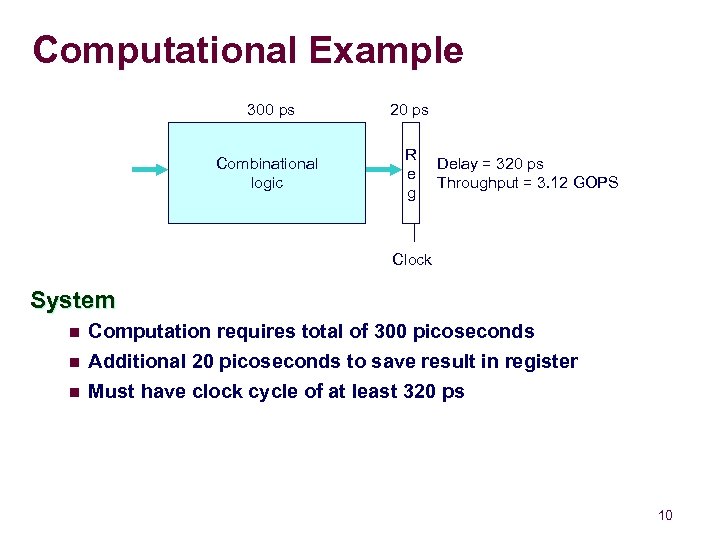

Computational Example

[Figure: combinational logic (300 ps) followed by a register (20 ps), driven by a clock. Delay = 320 ps, throughput = 3.12 GOPS]

System
- Computation requires a total of 300 picoseconds
- Additional 20 picoseconds to save the result in the register
- Must have a clock cycle of at least 320 ps

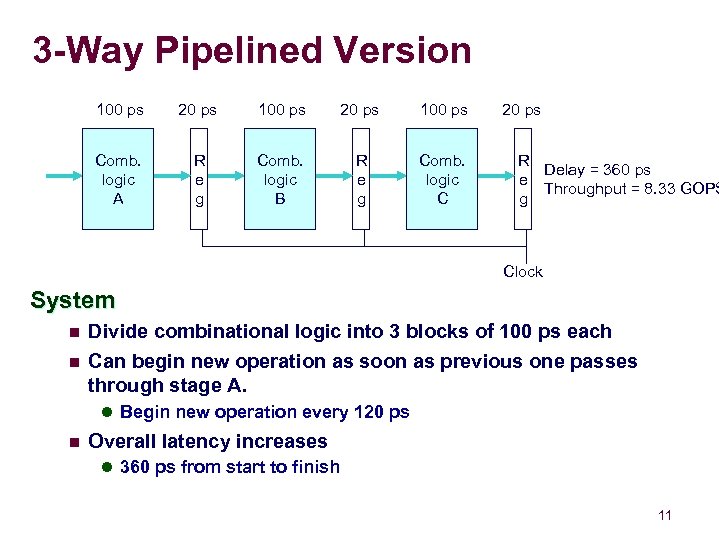

3-Way Pipelined Version

[Figure: three stages, each with 100 ps of combinational logic (A, B, C) followed by a 20 ps register, driven by a clock. Delay = 360 ps, throughput = 8.33 GOPS]

System
- Divide combinational logic into 3 blocks of 100 ps each
- Can begin a new operation as soon as the previous one passes through stage A
  - Begin a new operation every 120 ps
- Overall latency increases
  - 360 ps from start to finish

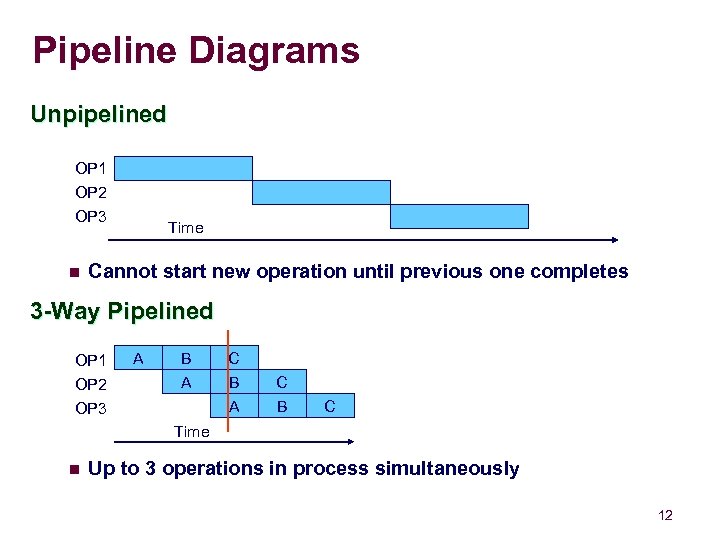
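The delay and throughput numbers for the unpipelined and 3-way pipelined systems follow from the same two inputs. A minimal sketch, assuming the logic divides evenly across stages and every stage ends in a 20 ps register; `pipeline_metrics` is an illustrative name, not something defined in the slides.

```python
def pipeline_metrics(logic_ps: float, reg_ps: float, stages: int):
    """Return (clock period in ps, latency in ps, throughput in GOPS)."""
    # Assumption: the combinational logic splits into equal-delay stages.
    clock_ps = logic_ps / stages + reg_ps
    # An operation must pass through every stage, one clock period each.
    latency_ps = clock_ps * stages
    # One operation completes per clock; 1 op per ps equals 1000 GOPS.
    throughput_gops = 1000.0 / clock_ps
    return clock_ps, latency_ps, throughput_gops


for stages in (1, 3):
    clock, latency, gops = pipeline_metrics(300, 20, stages)
    print(f"{stages}-stage: clock {clock:.0f} ps, "
          f"latency {latency:.0f} ps, throughput {gops:.3f} GOPS")
```

Going from 1 to 3 stages raises latency from 320 ps to 360 ps while the clock period drops from 320 ps to 120 ps, which is where the throughput gain comes from.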

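Pipeline Diagrams

Unpipelined
- [Figure: OP1, OP2, OP3 drawn one after another along the time axis]
- Cannot start a new operation until the previous one completes

3-Way Pipelined
- [Figure: OP1, OP2, OP3 each pass through stages A, B, C, staggered along the time axis]
- Up to 3 operations in process simultaneously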

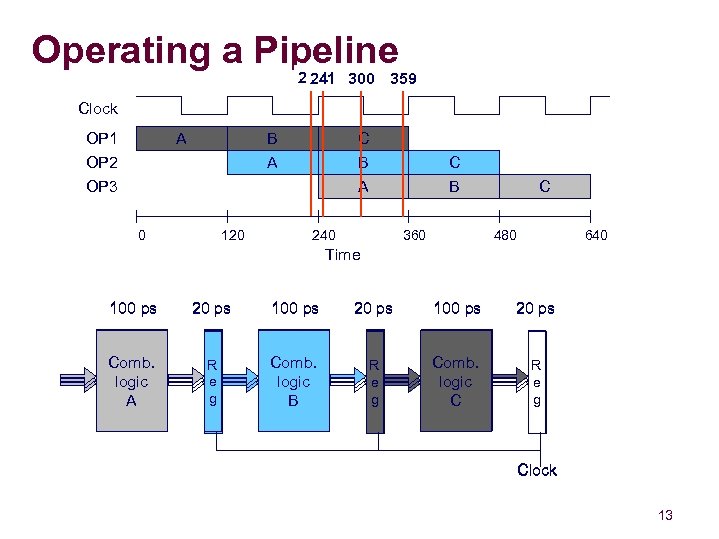
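A pipeline diagram of this kind can be generated as text. The sketch below assumes an ideal pipeline with no stalls, where operation k enters the first stage in clock cycle k; the function name and the `.` filler for idle cycles are illustrative choices.

```python
def pipeline_diagram(num_ops: int, stage_names: str = "ABC") -> list[str]:
    """Return one text row per operation; each column is one clock cycle."""
    rows = []
    total_cycles = num_ops + len(stage_names) - 1
    for op in range(num_ops):
        # Operation `op` enters the first stage in cycle `op`.
        cells = ["."] * total_cycles
        for s, name in enumerate(stage_names):
            cells[op + s] = name
        rows.append(f"OP{op + 1} " + " ".join(cells))
    return rows


print("\n".join(pipeline_diagram(3)))
# OP1 A B C . .
# OP2 . A B C .
# OP3 . . A B C
```

Reading down any column shows which operations are in flight during that cycle; the middle column holds all three.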

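Operating a Pipeline

[Figure: clock waveform and pipeline diagram for OP1, OP2, OP3 passing through stages A, B, C; time axis marked at 0, 120, 240, 360, 480, 640 ps, with snapshots at 239, 241, 300, and 359 ps. Below: the three-stage circuit of 100 ps combinational logic blocks A, B, C, each followed by a 20 ps register, driven by a clock]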

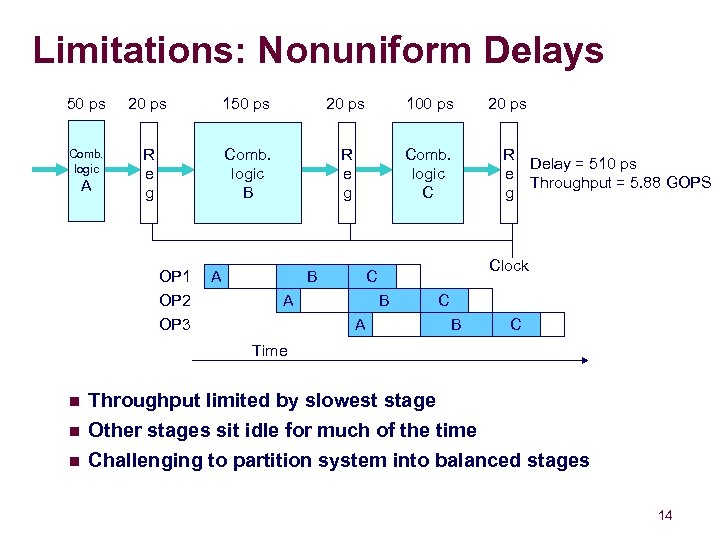

Limitations: Nonuniform Delays

[Figure: three-stage pipeline with unequal combinational logic blocks A (50 ps), B, and C (100 ps), each followed by a 20 ps register, driven by a clock; pipeline diagram of OP1, OP2, OP3 along the time axis. Delay = 510 ps, throughput = 5.88 GOPS]

- Throughput limited by slowest stage
- Other stages sit idle for much of the time
- Challenging to partition system into balanced stages

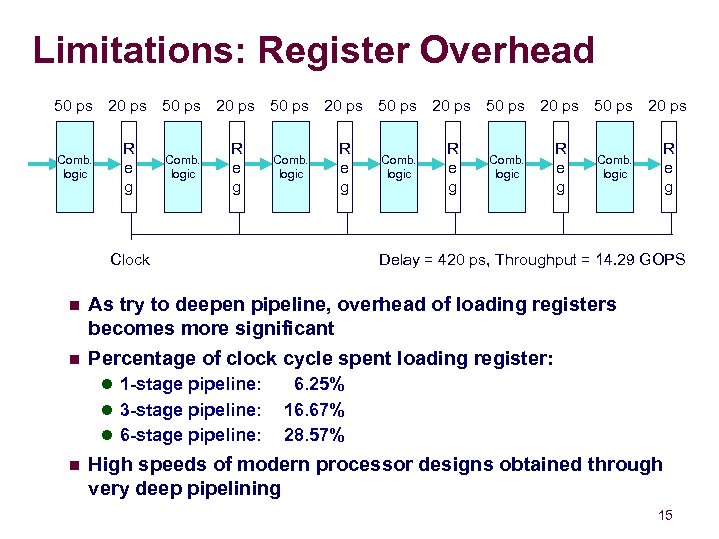
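The effect of unbalanced stages can be sketched numerically. The stage delays below are partly an assumption: 50 ps and 100 ps are legible in the slide text, while 150 ps for stage B is inferred from the stated 510 ps total delay (three cycles of 170 ps, minus the 20 ps register). The function name is illustrative.

```python
def unbalanced_pipeline(stage_logic_ps: list[float], reg_ps: float):
    """Return (clock in ps, latency in ps, GOPS, idle fraction per stage)."""
    # The clock must accommodate the slowest stage plus the register load.
    clock_ps = max(stage_logic_ps) + reg_ps
    latency_ps = clock_ps * len(stage_logic_ps)
    throughput_gops = 1000.0 / clock_ps
    # Fraction of each cycle during which a stage has nothing left to do.
    idle = [1 - (d + reg_ps) / clock_ps for d in stage_logic_ps]
    return clock_ps, latency_ps, throughput_gops, idle


# Assumed delays for A, B, C; the 150 ps value for B is inferred, not quoted.
clock, latency, gops, idle = unbalanced_pipeline([50, 150, 100], 20)
print(clock, latency, round(gops, 2), [round(f, 2) for f in idle])
```

Under these assumptions stage A is idle for more than half of every cycle, which is the cost of not being able to split the logic evenly.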

Limitations: Register Overhead

[Figure: six-stage pipeline, each stage with 50 ps of combinational logic followed by a 20 ps register, driven by a clock. Delay = 420 ps, throughput = 14.29 GOPS]

- As we try to deepen the pipeline, the overhead of loading registers becomes more significant
- Percentage of clock cycle spent loading register:
  - 1-stage pipeline: 6.25%
  - 3-stage pipeline: 16.67%
  - 6-stage pipeline: 28.57%
- High speeds of modern processor designs are obtained through very deep pipelining

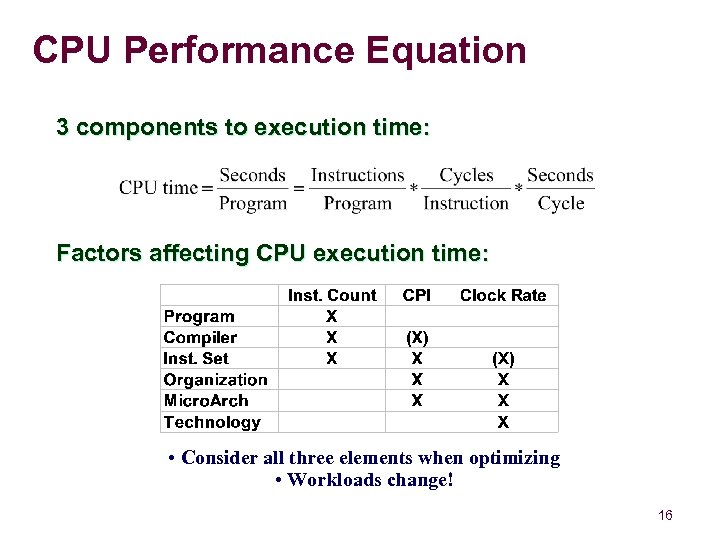
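The overhead percentages can be reproduced from the 300 ps logic delay and 20 ps register delay used throughout. A minimal sketch, assuming the logic divides evenly among the stages; `register_overhead` is an illustrative name.

```python
def register_overhead(logic_ps: float, reg_ps: float, stages: int) -> float:
    """Fraction of each clock cycle spent loading the pipeline register."""
    clock_ps = logic_ps / stages + reg_ps
    return reg_ps / clock_ps


for stages in (1, 3, 6):
    print(f"{stages}-stage pipeline: {register_overhead(300, 20, stages):.2%}")
```

Because the 20 ps register cost is fixed while the logic per stage shrinks, the fraction grows with depth and each additional stage buys less throughput.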

CPU Performance Equation

3 components to execution time:

Factors affecting CPU execution time:
- Consider all three elements when optimizing
- Workloads change!

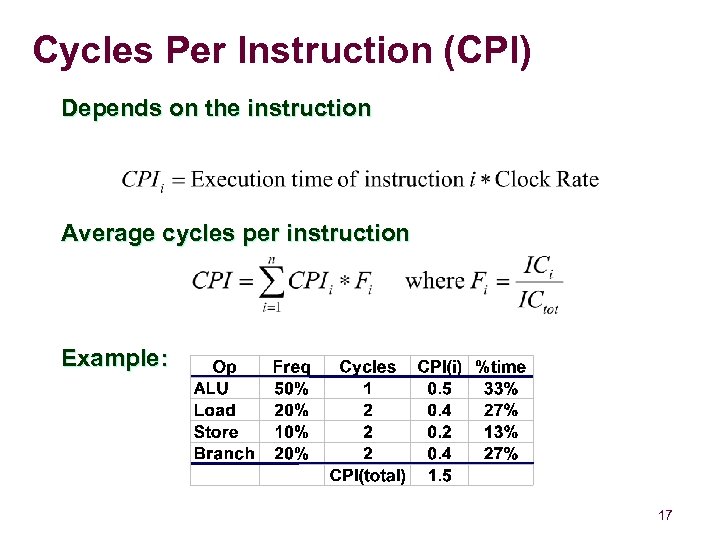
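The equation itself lives in the slide image and did not survive text extraction. The standard form, which matches the three factors this deck names later (instruction count, cycles per instruction, clock cycle time), is given here as a reference rather than as a transcription of the slide:

$$
\text{CPU time} = \frac{\text{instructions}}{\text{program}} \times \frac{\text{cycles}}{\text{instruction}} \times \frac{\text{seconds}}{\text{cycle}}
$$

The units cancel to seconds per program, so improving one factor only helps if the other two do not get worse by more.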

Cycles Per Instruction (CPI)

- Depends on the instruction
- Average cycles per instruction
- Example:

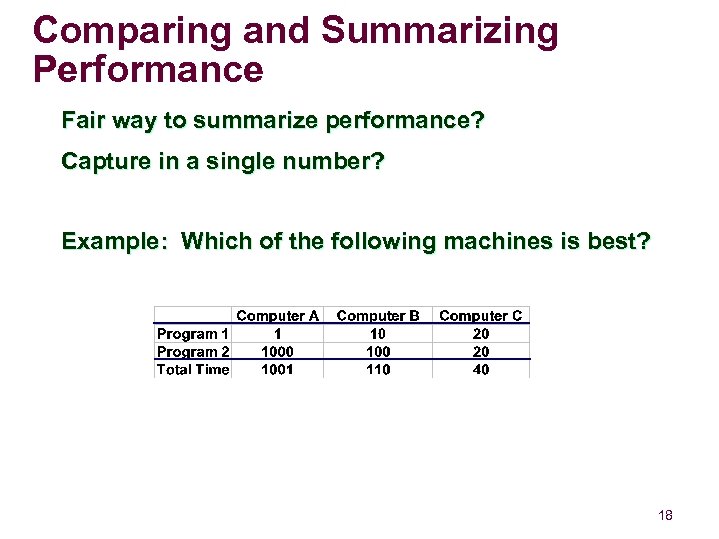
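The slide's own example is in the image and was not extracted. As a stand-in, here is a minimal sketch of how an average CPI is computed as a frequency-weighted sum; the instruction mix and cycle counts are hypothetical, not taken from the slides.

```python
def average_cpi(mix: dict[str, tuple[float, float]]) -> float:
    """mix maps instruction class -> (fraction of instructions, cycles)."""
    total_fraction = sum(frac for frac, _ in mix.values())
    if abs(total_fraction - 1.0) > 1e-9:
        raise ValueError("instruction fractions must sum to 1")
    # Weight each class's cycle count by how often that class occurs.
    return sum(frac * cycles for frac, cycles in mix.values())


# Hypothetical instruction mix, chosen only for illustration.
mix = {
    "alu": (0.5, 1),
    "load": (0.2, 2),
    "store": (0.1, 2),
    "branch": (0.2, 3),
}
print(round(average_cpi(mix), 3))
```

With this made-up mix, branches are only 20% of instructions but contribute more cycles than the ALU instructions, which shows why the average depends on the workload.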

Comparing and Summarizing Performance

- Fair way to summarize performance?
- Capture in a single number?
- Example: Which of the following machines is best?

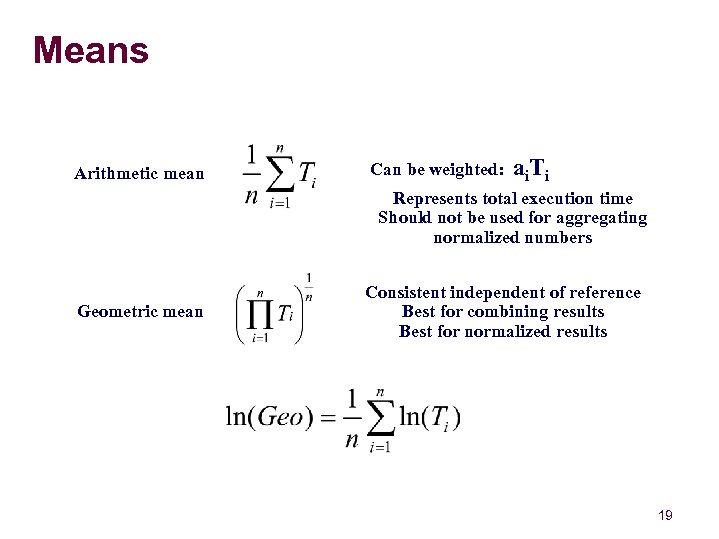

Means

Arithmetic mean
- Can be weighted: a_i T_i
- Represents total execution time
- Should not be used for aggregating normalized numbers

Geometric mean
- Consistent independent of reference
- Best for combining results
- Best for normalized results

What is the geometric mean of 2 and 8?
- A. 5
- B. 4
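Both the quiz and the claims on the Means slide can be checked numerically. This is a minimal sketch; the run times for machines X and Y are hypothetical and chosen only to show why arithmetic means of normalized numbers mislead.

```python
import math


def arithmetic_mean(values: list[float]) -> float:
    return sum(values) / len(values)


def geometric_mean(values: list[float]) -> float:
    # n-th root of the product, computed through logarithms.
    return math.exp(sum(math.log(v) for v in values) / len(values))


print(arithmetic_mean([2, 8]))           # 5.0
print(round(geometric_mean([2, 8]), 6))  # 4.0

# Hypothetical run times (seconds) of two programs on machines X and Y.
x_times, y_times = [10.0, 40.0], [20.0, 10.0]
y_over_x = [y / x for x, y in zip(x_times, y_times)]  # normalized to X
x_over_y = [x / y for x, y in zip(x_times, y_times)]  # normalized to Y

# Arithmetic means make each machine look slower than the other.
print(arithmetic_mean(y_over_x), arithmetic_mean(x_over_y))
# Geometric means are reciprocals, so the verdict does not depend on the reference.
print(geometric_mean(y_over_x) * geometric_mean(x_over_y))
```

With these numbers the arithmetic means of the normalized times are both above 1, a contradiction, while the two geometric means multiply to 1.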

Is Speed the Last Word in Performance?

Depends on the application!

Cost
- Not just the processor, but other components (e.g., memory)

Power consumption
- Trade power for performance in many applications

Capacity
- Many database applications are I/O bound, and disk bandwidth is the precious commodity

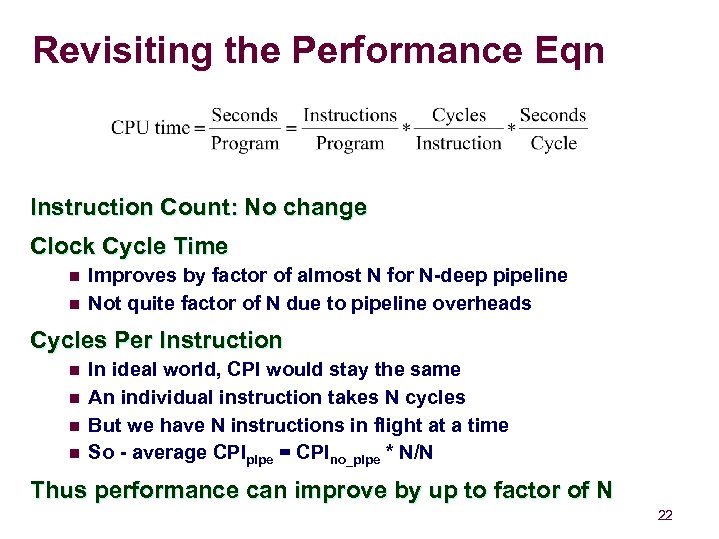

Revisiting the Performance Eqn

Instruction Count: No change

Clock Cycle Time
- Improves by a factor of almost N for an N-deep pipeline
- Not quite a factor of N due to pipeline overheads

Cycles Per Instruction
- In an ideal world, CPI would stay the same
- An individual instruction takes N cycles
- But we have N instructions in flight at a time
- So average CPI_pipe = CPI_no_pipe * N/N

Thus performance can improve by up to a factor of N

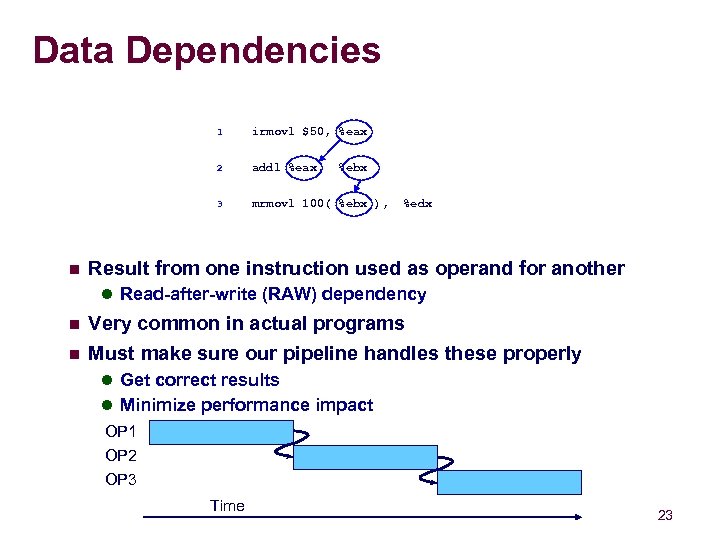
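To see what "almost N" means, here is a worked estimate. It assumes instruction count and CPI are unchanged, the logic divides evenly across N stages, and each stage adds a fixed register delay; the symbols $T_\text{logic}$ and $T_\text{reg}$ are introduced here for illustration, with the 300 ps and 20 ps values borrowed from the Computational Example slide.

$$
\text{speedup}(N) = \frac{T_\text{logic} + T_\text{reg}}{T_\text{logic}/N + T_\text{reg}},
\qquad
\text{speedup}(3) = \frac{320}{120} \approx 2.67,
\qquad
\text{speedup}(6) = \frac{320}{70} \approx 4.57
$$

Under these assumptions the speedup approaches N only when $T_\text{reg}$ is small compared with $T_\text{logic}/N$, and the gap widens as the pipeline gets deeper.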

Data Dependencies

    irmovl $50, %eax
    addl %eax, %ebx
    mrmovl 100(%ebx), %edx

- Result from one instruction used as operand for another
  - Read-after-write (RAW) dependency
- Very common in actual programs
- Must make sure our pipeline handles these properly
  - Get correct results
  - Minimize performance impact

[Figure: OP1, OP2, OP3 along the time axis]

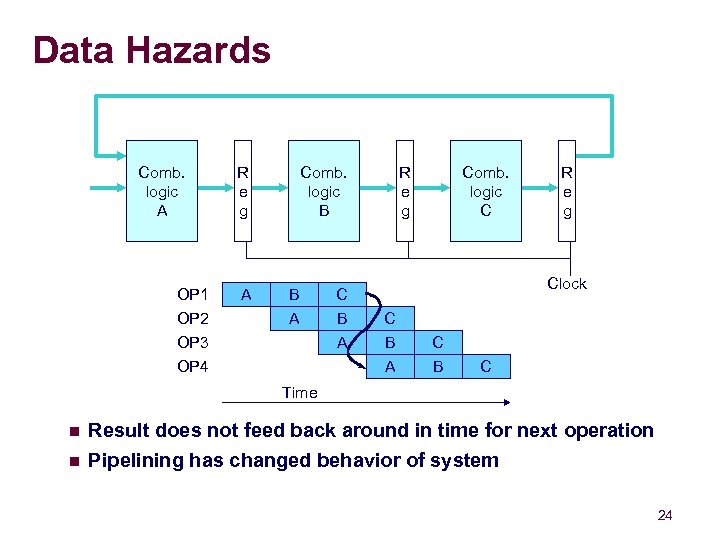

Data Hazards

[Figure: three-stage pipeline of combinational logic A, B, C, each followed by a register, driven by a clock; pipeline diagram of OP1 through OP4 passing through stages A, B, C along the time axis]

- Result does not feed back around in time for next operation
- Pipelining has changed behavior of system

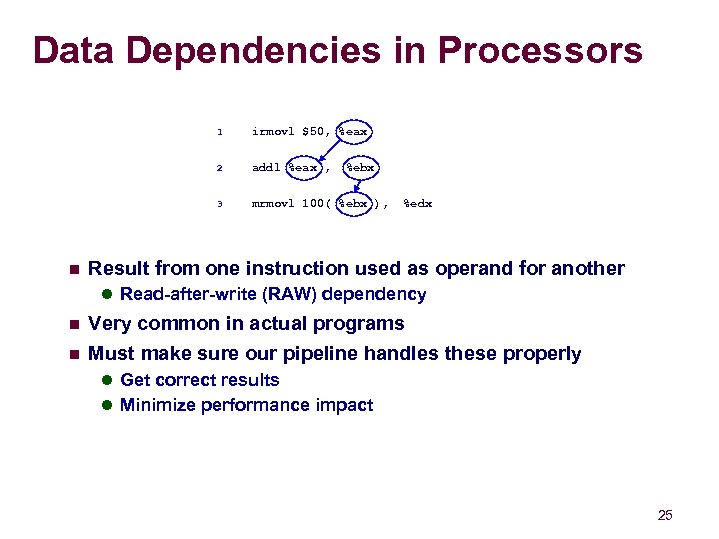

Data Dependencies in Processors

    irmovl $50, %eax
    addl %eax, %ebx
    mrmovl 100(%ebx), %edx

- Result from one instruction used as operand for another
  - Read-after-write (RAW) dependency
- Very common in actual programs
- Must make sure our pipeline handles these properly
  - Get correct results
  - Minimize performance impact

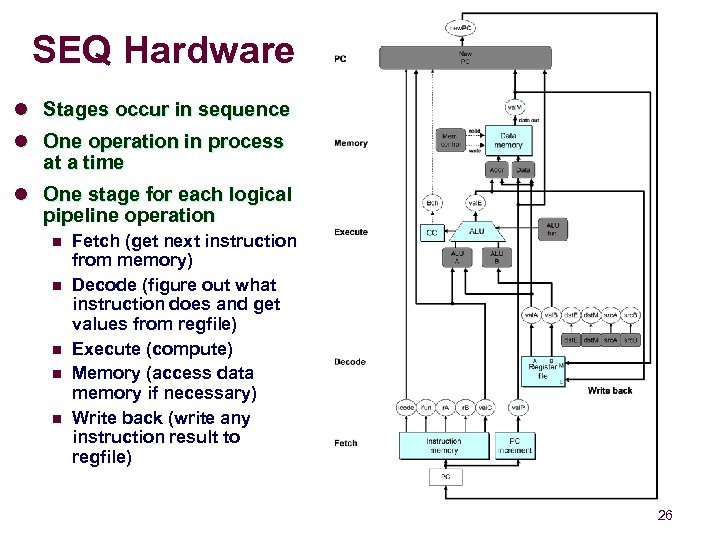
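RAW dependencies can be found mechanically by tracking the most recent writer of each register. The sketch below is an illustration, not how the Y86 hardware detects hazards. It assumes the slide's three instructions read as listed in the code, and the read and write sets are filled in by hand rather than decoded from the instruction text; `Instr` and `raw_dependencies` are hypothetical names.

```python
from typing import NamedTuple


class Instr(NamedTuple):
    text: str
    reads: frozenset[str]
    writes: frozenset[str]


def raw_dependencies(program: list[Instr]) -> list[tuple[int, int, str]]:
    """Return (producer index, consumer index, register) for each RAW pair."""
    deps = []
    last_writer: dict[str, int] = {}
    for i, instr in enumerate(program):
        # A read of a register that an earlier instruction wrote is a RAW dependency.
        for reg in sorted(instr.reads):
            if reg in last_writer:
                deps.append((last_writer[reg], i, reg))
        for reg in instr.writes:
            last_writer[reg] = i
    return deps


# Register read/write sets are written out by hand for this sketch.
program = [
    Instr("irmovl $50, %eax", frozenset(), frozenset({"%eax"})),
    Instr("addl %eax, %ebx", frozenset({"%eax", "%ebx"}), frozenset({"%ebx"})),
    Instr("mrmovl 100(%ebx), %edx", frozenset({"%ebx"}), frozenset({"%edx"})),
]
for producer, consumer, reg in raw_dependencies(program):
    print(f"{program[consumer].text!r} reads {reg} "
          f"written by {program[producer].text!r}")
```

Each instruction here depends on the one immediately before it, which is the hardest case for a pipeline because the producer has not reached write back when the consumer wants to read.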

SEQ Hardware

- Stages occur in sequence
- One operation in process at a time
- One stage for each logical pipeline operation
  - Fetch (get next instruction from memory)
  - Decode (figure out what instruction does and get values from regfile)
  - Execute (compute)
  - Memory (access data memory if necessary)
  - Write back (write any instruction result to regfile)

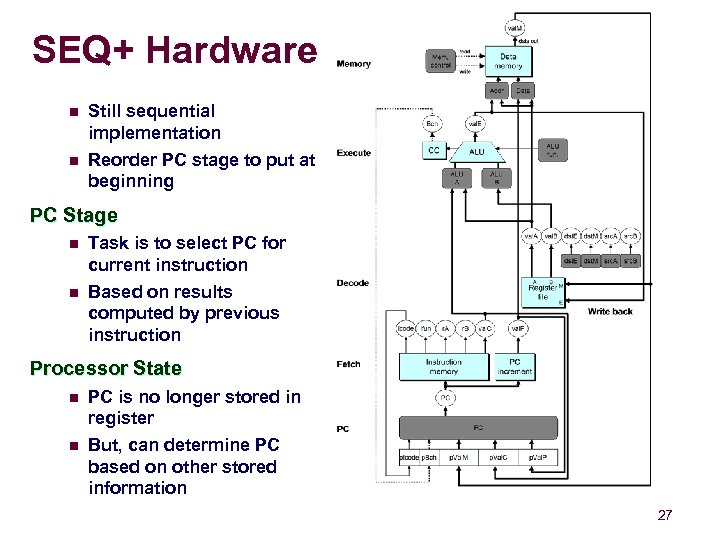

SEQ+ Hardware

- Still sequential implementation
- Reorder PC stage to put at beginning

PC Stage
- Task is to select PC for current instruction
- Based on results computed by previous instruction

Processor State
- PC is no longer stored in register
- But, can determine PC based on other stored information

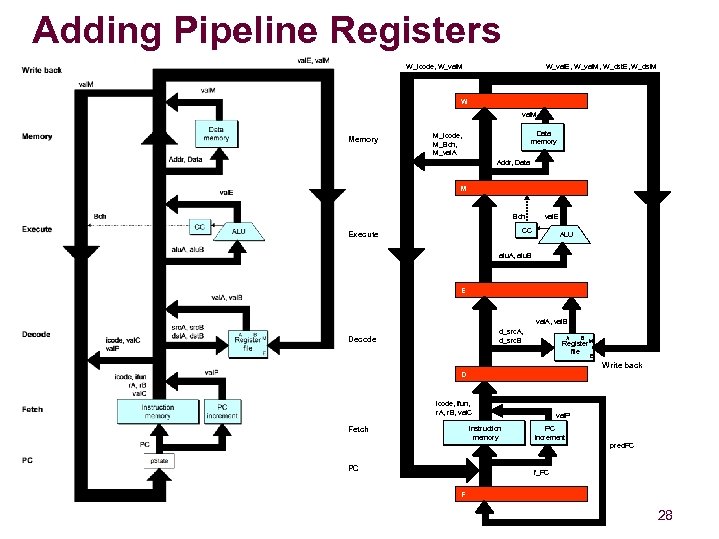

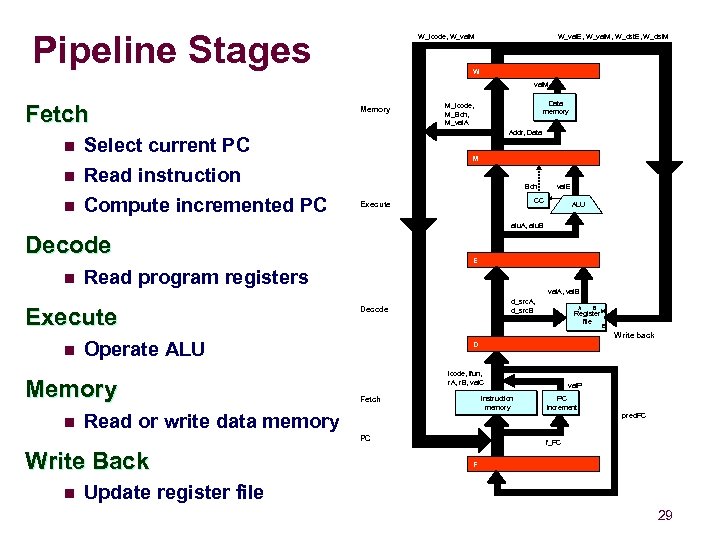

Adding Pipeline Registers

[Figure: datapath with pipeline registers F, D, E, M, W inserted between the Fetch, Decode, Execute, Memory, and Write back stages. Blocks: PC increment, instruction memory, register file, ALU, CC, data memory. Labeled signals include f_PC, predPC, valP, icode, ifun, rA, rB, valC, d_srcA, d_srcB, valA, valB, aluA, aluB, valE, Bch, Addr, Data, valM, M_icode, M_Bch, M_valA, W_icode, W_valE, W_valM, W_dstE, W_dstM]

Pipeline Stages

Fetch
- Select current PC
- Read instruction
- Compute incremented PC

Decode
- Read program registers

Execute
- Operate ALU

Memory
- Read or write data memory

Write Back
- Update register file

[Figure: the pipelined datapath with registers F, D, E, M, W from the “Adding Pipeline Registers” slide]

Summary

Today
- Pipelining principles (assembly line)
- Overheads due to imperfect pipelining
- Breaking instruction execution into sequence of stages

Next Time
- Pipelining hardware: registers and feedback paths
- Difficulties with pipelines: hazards
- Method of mitigating hazards
