da57888b70033fc6622f1f93717714f0.ppt

- Number of slides: 71

Synchronization Chapter 5

Synchronization

- Synchronization in distributed systems is harder than in centralized systems because it requires distributed algorithms.
- Distributed algorithms have the following properties:
  - No machine has complete information about the system state.
  - Machines make decisions based only on local information.
  - Failure of one machine does not ruin the algorithm.
  - There is no implicit assumption that a global clock exists.
- Clocks are needed to synchronize in a distributed system.

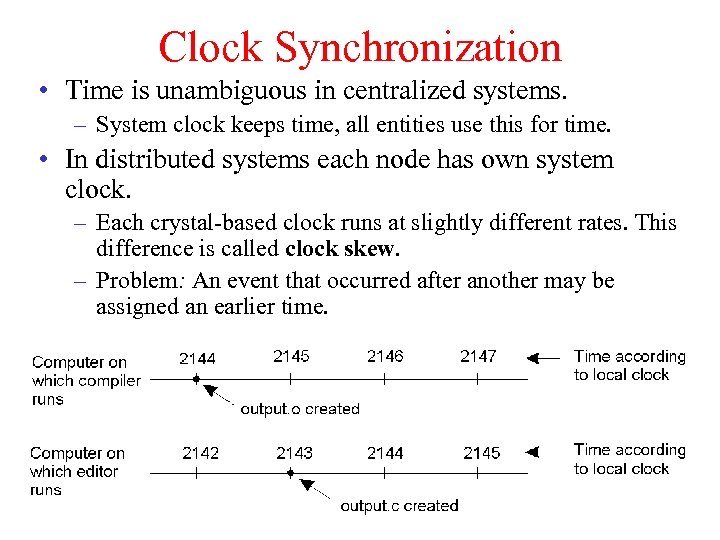

Clock Synchronization

- Time is unambiguous in centralized systems.
  - The system clock keeps time, and all entities use it.
- In distributed systems each node has its own system clock.
  - Each crystal-based clock runs at a slightly different rate. The resulting difference between clock readings is called clock skew.
  - Problem: an event that occurred after another may be assigned an earlier time.

Physical Clocks: A Primer

- The most accurate clocks are atomic oscillators.
- Most clocks are less accurate (e.g., mechanical watches).
  - Computers use crystal-based clocks.
  - This results in clock drift.
- How do you tell time?
  - Use astronomical metrics (the solar day).
- Coordinated Universal Time (UTC)
  - International standard based on atomic time; it has replaced Greenwich Mean Time as the reference.
  - Leap seconds are added to stay consistent with astronomical time.
  - UTC is broadcast by radio (satellite and terrestrial stations).
  - Receivers are accurate to 0.1-10 ms.
- The goal is to synchronize machines with a master.

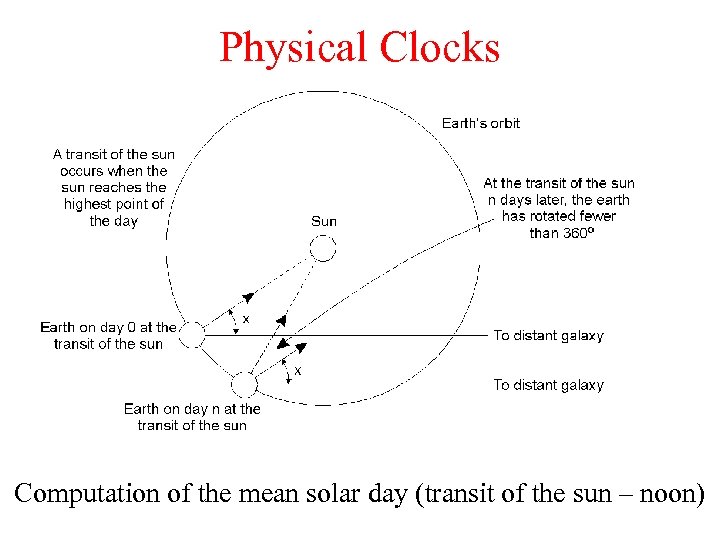

Physical Clocks

Computation of the mean solar day (transit of the sun: noon).

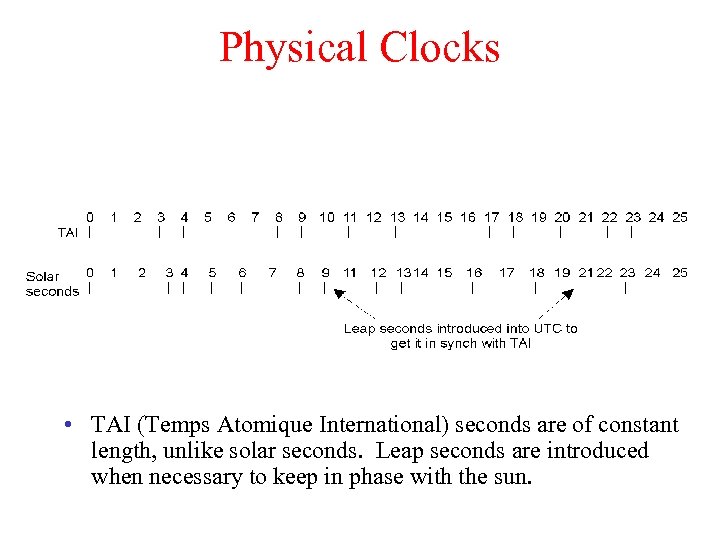

Physical Clocks

- TAI (Temps Atomique International) seconds are of constant length, unlike solar seconds. Leap seconds are introduced when necessary to keep in phase with the sun.

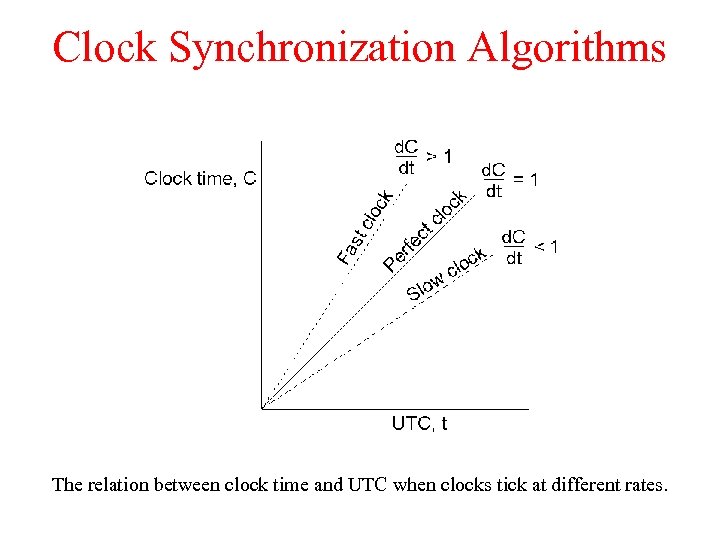

Clock Synchronization Algorithms

The relation between clock time and UTC when clocks tick at different rates.

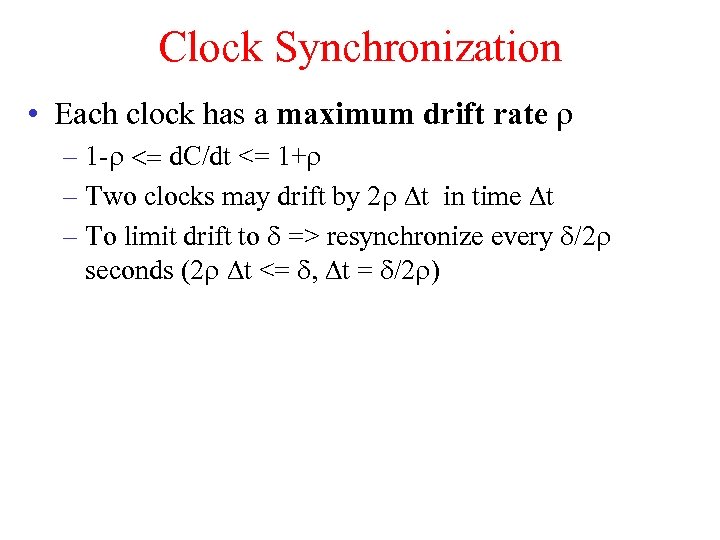

Clock Synchronization

- Each clock has a maximum drift rate $\rho$:
  - $1 - \rho \le dC/dt \le 1 + \rho$
  - Two clocks may drift apart by up to $2\rho\,\Delta t$ in time $\Delta t$.
  - To limit the skew to $\delta$, resynchronize at least every $\delta/(2\rho)$ seconds (from $2\rho\,\Delta t \le \delta$ it follows that $\Delta t \le \delta/(2\rho)$).
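
The resynchronization bound above (the tolerated skew divided by twice the maximum drift rate) can be checked with a few lines of Python. This is only a sketch: the function name `resync_interval` and the example numbers are assumptions chosen for illustration.

```python
def resync_interval(max_skew, drift_rate):
    """Longest time (in seconds) two clocks may run unsynchronized.

    max_skew   -- largest tolerated difference between two clocks, in seconds
    drift_rate -- maximum drift of a single clock, in seconds per second
    """
    if max_skew <= 0 or drift_rate <= 0:
        raise ValueError("max_skew and drift_rate must be positive")
    # Two clocks drifting in opposite directions separate at 2 * drift_rate.
    return max_skew / (2 * drift_rate)


# Hypothetical values: drift of 1e-5 s/s and a tolerated skew of 1 ms.
interval = resync_interval(max_skew=1e-3, drift_rate=1e-5)
print(f"resynchronize at least every {interval:.0f} s")
```

Halving the tolerated skew or doubling the drift rate halves the interval, which is why cheap oscillators need more frequent resynchronization.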

Cristian's Algorithm

- Synchronize machines to a time server that has a UTC receiver.
- Machine P requests the time from the server every $\delta/(2\rho)$ seconds, where $\delta$ is the tolerated skew and $\rho$ the maximum drift rate.
  - P receives time $t$ ($C_{UTC}$) from the server and sets its clock to $t + t_{reply}$, where $t_{reply}$ is the time taken to deliver the reply to P.
  - Use $(t_{req} + t_{reply})/2$, that is, half the measured round-trip time, as an estimate of $t_{reply}$.
  - Improve accuracy by making a series of measurements.
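
A minimal Python sketch of the estimate above, assuming the client reads its own clock when the request leaves and when the reply arrives. The function names and the policy of keeping the sample with the shortest round trip (one possible way to use a series of measurements) are illustrative assumptions, not something the slides prescribe.

```python
def cristian_adjusted_time(t_sent, t_received, server_time):
    """Estimate the true time at the moment the reply arrived.

    t_sent, t_received -- local clock readings around the request
    server_time        -- time value carried in the server's reply
    """
    round_trip = t_received - t_sent
    if round_trip < 0:
        raise ValueError("reply cannot arrive before the request was sent")
    # Assume the reply took half of the round trip to reach the client.
    return server_time + round_trip / 2


def best_estimate(samples):
    """Pick the (t_sent, t_received, server_time) sample with the
    shortest round trip; it has the smallest uncertainty."""
    t_sent, t_received, server_time = min(samples, key=lambda s: s[1] - s[0])
    return cristian_adjusted_time(t_sent, t_received, server_time)


# Hypothetical readings, all in seconds.
print(cristian_adjusted_time(10.0, 10.5, 100.0))
```

The error of the estimate is bounded by half the round-trip time, which is why the shortest round trip is the most trustworthy sample.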

Cristian's Algorithm

Getting the current time from a time server.

Berkeley Algorithm

- Used in systems without a UTC receiver.
  - Keep clocks synchronized with one another.
  - One computer is the master; the others are slaves.
  - The master periodically polls the slaves for their times.
    - It averages the times and returns the differences to the slaves.
    - Communication delays are compensated for as in Cristian's algorithm.
  - Failure of the master leads to the election of a new master.

The Berkeley Algorithm

a) The time daemon asks all the other machines for their clock values.
b) The machines answer.
c) The time daemon tells everyone how to adjust their clock.
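
The averaging step of the Berkeley algorithm can be sketched as follows. The function name, the `"master"` key, and the clock values (minutes since midnight) are assumptions for illustration; delay compensation is left out to keep the sketch short.

```python
def berkeley_adjustments(master_time, slave_times):
    """Return the correction each machine should apply to its clock.

    master_time -- the time daemon's own clock reading
    slave_times -- mapping of machine name to reported clock reading
    """
    all_times = {"master": master_time}
    all_times.update(slave_times)
    average = sum(all_times.values()) / len(all_times)
    # Each machine is told a difference, not an absolute time.
    return {name: average - t for name, t in all_times.items()}


# Hypothetical readings: 3:00, 2:50 and 3:25 expressed in minutes.
adjustments = berkeley_adjustments(180.0, {"A": 170.0, "B": 205.0})
print(adjustments)
```

Sending differences rather than the average itself means the transmission delay of the final message does not distort the correction.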

Distributed Approaches

- Both approaches studied thus far are centralized.
- Decentralized algorithms use resynchronization intervals:
  - Broadcast the local time at the start of the interval.
  - Collect all other broadcasts that arrive in a period S.
  - Use the average value of all reported times.
  - Optionally discard the few highest and lowest values.
- Approaches in use today:
  - rdate: synchronizes a machine with a specified machine.
  - Network Time Protocol (NTP): uses advanced clock synchronization to achieve accuracy of 1-50 ms.
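
The decentralized averaging step, including the option of throwing away the extreme values, might look like this in Python. The function name `trimmed_average` and the `discard` parameter are hypothetical.

```python
def trimmed_average(times, discard=1):
    """Average the reported times after dropping the `discard` highest
    and `discard` lowest values, which limits the effect of faulty clocks."""
    if discard < 0 or len(times) <= 2 * discard:
        raise ValueError("not enough reports to discard that many values")
    kept = sorted(times)[discard:len(times) - discard]
    return sum(kept) / len(kept)


# Hypothetical reports collected during one period S; two are outliers.
print(trimmed_average([10.0, 12.0, 11.0, 500.0, -3.0], discard=1))
```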

Logical Clocks

- For many problems, only the internal consistency of clocks matters.
  - Absolute (real) time is less important.
  - Use logical clocks.
- Key idea:
  - Clock synchronization need not be absolute.
  - If two machines do not interact, there is no need to synchronize them.
  - More importantly, processes need to agree on the order in which events occur rather than the time at which they occurred.

Event Ordering

- Problem: define a total ordering of all events that occur in a system.
- Events in a single-processor machine are totally ordered.
- In a distributed system:
  - There is no global clock, and local clocks may be unsynchronized.
  - Events on different machines cannot be ordered using local times.
- Key idea [Lamport]:
  - Processes exchange messages.
  - A message must be sent before it is received.
  - Send/receive is used to order events (and synchronize clocks).

Happens-Before Relation

- The expression A → B is read "A happens before B".
- If A and B are events in the same process and A executed before B, then A → B.
- If A represents the sending of a message and B is the receipt of this message, then A → B.
- The relation is transitive:
  - A → B and B → C imply A → C.
- The relation is undefined across processes that do not exchange messages.
  - It is therefore a partial ordering on events.

Event Ordering Using HB

- Goal: define the notion of time of an event such that
  - If A → B then C(A) < C(B).
  - If A and B are concurrent, then C(A) <, =, or > C(B).
- Solution:
  - Each processor i maintains a logical clock $LC_i$.
  - Whenever an event occurs locally at i, $LC_i = LC_i + 1$.
  - When i sends a message to j, it piggybacks $LC_i$.
  - When j receives a message from i, $LC_j = \max(LC_j, LC_i) + 1$, so the receive is always stamped later than the send and later than every earlier event at j.
  - This algorithm meets the above goals.
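
The logical clock rules can be sketched as a small Python class. The class and method names are assumptions, and the receive rule is written in the common "maximum plus one" form, which guarantees that a receive is stamped later than the matching send.

```python
class LamportClock:
    """Logical clock of a single process."""

    def __init__(self):
        self.time = 0

    def local_event(self):
        """Any local event advances the clock by one."""
        self.time += 1
        return self.time

    def send(self):
        """Sending is an event; the returned value is piggybacked."""
        return self.local_event()

    def receive(self, sender_time):
        """Jump past the sender's timestamp if our clock is behind."""
        self.time = max(self.time, sender_time) + 1
        return self.time


p, q = LamportClock(), LamportClock()
p.local_event()
p.local_event()
ts = p.send()              # timestamp piggybacked on the message
received = q.receive(ts)   # q's clock jumps ahead of the send
print(ts, received)
```

Concurrent events may still receive arbitrary relative timestamps; the clock only promises that causally ordered events are stamped in order.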

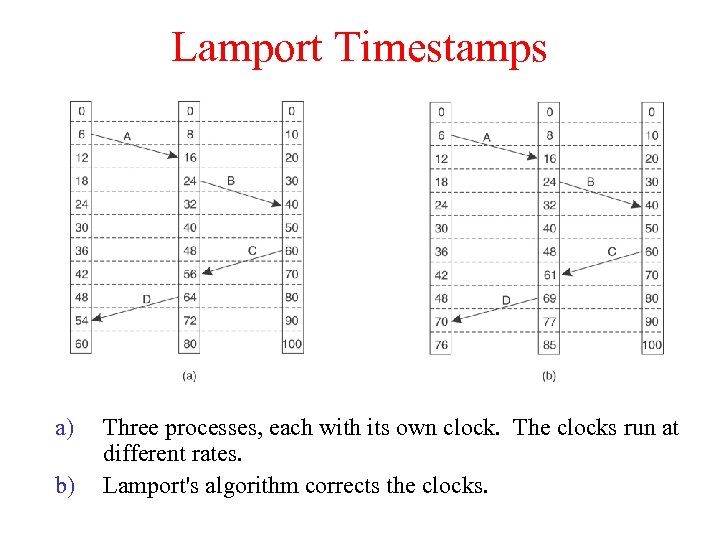

Lamport Timestamps

a) Three processes, each with its own clock. The clocks run at different rates.
b) Lamport's algorithm corrects the clocks.

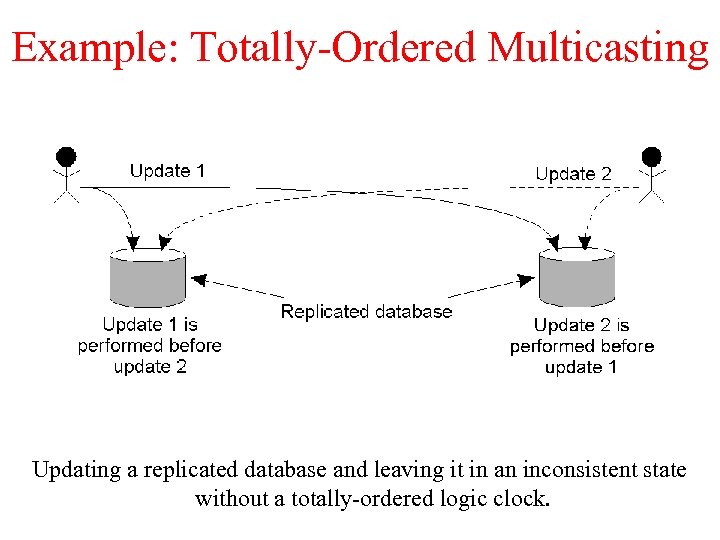

Example: Totally-Ordered Multicasting

Updating a replicated database and leaving it in an inconsistent state, which is what happens without a totally-ordered logical clock.

Causality

- Lamport's logical clocks:
  - If A → B then C(A) < C(B).
  - The reverse is not true!
    - Nothing can be said about the causal order of events by comparing timestamps alone.
    - If C(A) < C(B), then ???
- Need to maintain causality:
  - Causal delivery: if send(m) → send(n), then deliver(m) → deliver(n).
  - Capture causal relationships between groups of processes.
  - Need a timestamping mechanism such that:
    - If T(A) < T(B) then A should have causally preceded B.

Vector Clocks

- Causality can be captured by means of vector timestamps.
- Each process i maintains a vector $V_i$:
  - $V_i[i]$: number of events that have occurred at i.
  - $V_i[j]$: number of events that i knows have occurred at process j.
- Update vector clocks as follows:
  - Local event: increment $V_i[i]$.
  - Sending a message: piggyback the entire vector $V_i$.
  - Receipt of a message from i at j: $V_j[k] = \max(V_j[k], V_i[k])$ for every k.
    - The receiver is told how many events the sender knows occurred at another process k.
    - Also $V_j[j] = V_j[j] + 1$, since the receipt is itself an event at j.
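
A short Python sketch of vector clocks, including the comparison that recovers causality. All names are hypothetical, and the sketch assumes the common formulation in which the receiver counts the receipt as one of its own events and therefore increments its own entry.

```python
class VectorClock:
    """Vector clock of process `pid` in a system of `n` processes."""

    def __init__(self, pid, n):
        self.pid = pid
        self.v = [0] * n

    def local_event(self):
        self.v[self.pid] += 1
        return list(self.v)

    def send(self):
        """Sending is a local event; the whole vector is piggybacked."""
        return self.local_event()

    def receive(self, other):
        """Merge element-wise, then count the receipt as a local event."""
        self.v = [max(a, b) for a, b in zip(self.v, other)]
        self.v[self.pid] += 1
        return list(self.v)


def happened_before(a, b):
    """True if timestamp a causally precedes timestamp b."""
    return a != b and all(x <= y for x, y in zip(a, b))


def concurrent(a, b):
    return a != b and not happened_before(a, b) and not happened_before(b, a)


p0, p1, p2 = VectorClock(0, 3), VectorClock(1, 3), VectorClock(2, 3)
m = p0.send()        # p0 sends a message
r = p1.receive(m)    # p1 receives it
e = p2.local_event() # unrelated event at p2
print(m, r, e)
```

Unlike Lamport timestamps, comparing two vectors tells us whether the events are causally related or concurrent.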

Global State

- The global state of a distributed system consists of
  - The local state of each process.
  - Messages sent but not received (the state of the queues).
- Many applications need to know the state of the system.
  - Failure recovery, distributed deadlock detection.
- Problem: how can you figure out the state of a distributed system?
  - Each process is independent.
  - There is no global clock or synchronization.
- A distributed snapshot reflects a consistent global state.

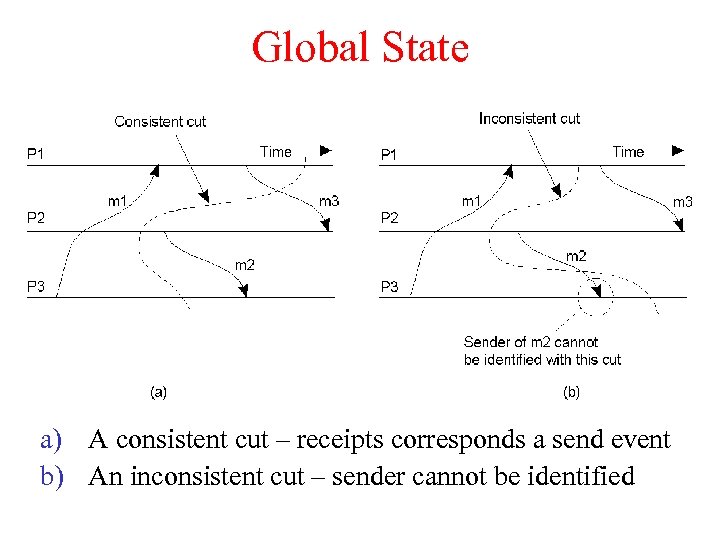

Global State

a) A consistent cut: every receipt corresponds to a send event.
b) An inconsistent cut: the sender of a received message cannot be identified.

Distributed Snapshot Algorithm

- Assume each process communicates with another process using unidirectional point-to-point channels (e.g., TCP connections).
- Any process can initiate the algorithm:
  - Checkpoint the local state.
  - Send a marker on every outgoing channel.
- On receiving a marker:
  - If it is the first marker: checkpoint the local state, send a marker on every outgoing channel, and start saving the messages that arrive on all other incoming channels.
  - If it is a subsequent marker on a channel: stop saving state for that channel.

Distributed Snapshot

- A process finishes when
  - It has received a marker on each incoming channel and processed them all.
  - Its recorded state is the local state plus the state of all channels.
  - It sends this state to the initiator.
- Any process can initiate a snapshot.
  - Multiple snapshots may be in progress.
  - Each is separate, and each is distinguished by tagging the marker with the initiator ID (and sequence number).
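
The marker rules can be sketched from the point of view of a single process. This is a simplified illustration, not a full implementation: the class name `SnapshotProcess`, the integer "state", and the channel names are assumptions, and sending markers on outgoing channels is left to the caller.

```python
class SnapshotProcess:
    """Marker handling for one process taking part in a snapshot."""

    def __init__(self, incoming, state=0):
        self.state = state              # application state (a counter here)
        self.incoming = list(incoming)  # names of the incoming channels
        self.checkpointed = False
        self.recorded_state = None      # checkpoint of the local state
        self.channel_state = {}         # channel -> messages caught in transit
        self.recording = set()          # channels still being recorded

    def start_snapshot(self):
        """Checkpoint; the caller must now send markers on all outgoing channels."""
        self.checkpointed = True
        self.recorded_state = self.state
        self.channel_state = {c: [] for c in self.incoming}
        self.recording = set(self.incoming)

    def on_message(self, channel, value):
        if channel in self.recording:
            self.channel_state[channel].append(value)  # in-flight message
        self.state += value                            # normal processing

    def on_marker(self, channel):
        """Return True if this was the first marker (markers must be forwarded)."""
        first = not self.checkpointed
        if first:
            self.start_snapshot()
        self.recording.discard(channel)  # this channel's state is complete
        return first

    def finished(self):
        return self.checkpointed and not self.recording


q = SnapshotProcess(incoming=["P", "R"], state=10)
q.on_message("P", 5)           # processed before the snapshot reaches Q
first = q.on_marker("P")       # first marker: checkpoint local state
q.on_message("R", 2)           # still in flight on R: recorded
q.on_message("P", 1)           # after P's marker: not part of the snapshot
q.on_marker("R")               # last marker: Q is done
print(q.recorded_state, q.channel_state, q.finished())
```

The channel on which the first marker arrived is recorded as empty, because everything sent before the marker on that channel had already been processed when the checkpoint was taken.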

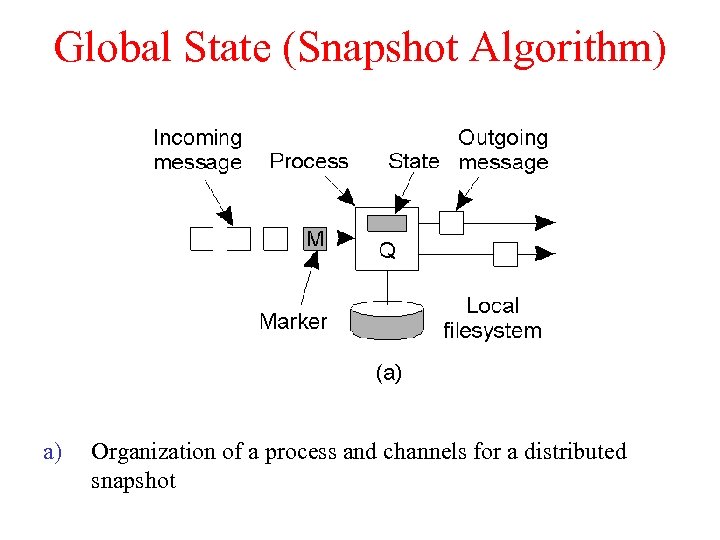

Global State (Snapshot Algorithm)

a) Organization of a process and channels for a distributed snapshot.

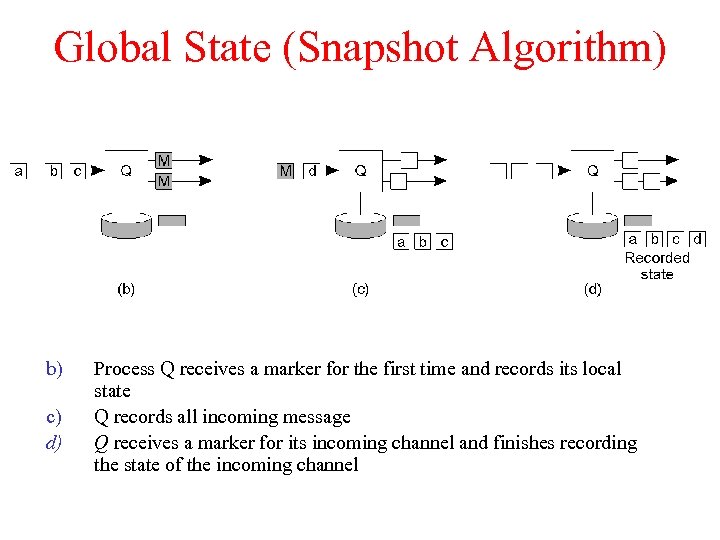

Global State (Snapshot Algorithm)

b) Process Q receives a marker for the first time and records its local state.
c) Q records all incoming messages.
d) Q receives a marker for its incoming channel and finishes recording the state of the incoming channel.

Termination Detection

- Detecting the end of a distributed computation.
- Notation: let the sender be the predecessor and the receiver be the successor.
- Two types of markers: Done and Continue.
- After finishing its part of the snapshot, process Q sends a Done or a Continue to its predecessor.
- Send a Done only when
  - All of Q's successors have sent a Done.
  - Q has not received any message since it checkpointed its local state and received a marker on all incoming channels.
  - Otherwise send a Continue.
- The computation has terminated if the initiator receives Done messages from everyone.

Election Algorithms

- Many distributed algorithms need one process to act as coordinator.
  - It does not matter which process does the job; one just needs to be picked.
- Election algorithms: techniques to pick a unique coordinator (also known as leader election).
- Examples: taking over the role of a failed process, picking a master in the Berkeley clock synchronization algorithm.
- Types of election algorithms: Bully and Ring algorithms.

Bully Algorithm

- Each process has a unique numerical ID.
- Processes know the IDs and addresses of every other process.
- Communication is assumed reliable.
- Key idea: select the process with the highest ID.
- A process initiates an election if it has just recovered from a failure or if the coordinator has failed.
- Three message types: Election, OK, I won.
- Several processes can initiate an election simultaneously.
  - A consistent result is needed.
- $O(n^2)$ messages are required with n processes.

Bully Algorithm Details

- Any process P can initiate an election.
- P sends Election messages to all processes with higher IDs and awaits OK messages.
- If no OK messages arrive, P becomes coordinator and sends I won messages to all processes with lower IDs.
- If P receives an OK, it drops out and waits for an I won.
- If a process receives an Election message, it returns an OK and starts an election.
- If a process receives an I won, it treats the sender as coordinator.
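
The steps above can be simulated in a few lines of Python. This is a sequential sketch under simplifying assumptions: the function name `bully_election` is hypothetical, crashed processes simply never answer, elections triggered by Election messages are run one after another, and messages addressed to crashed processes are still counted as sent.

```python
def bully_election(initiator, alive, all_ids):
    """Return (coordinator, total number of messages sent)."""
    if initiator not in alive:
        raise ValueError("a crashed process cannot start an election")
    messages = 0
    coordinator = None
    pending = [initiator]   # processes that still have to hold an election
    started = set()
    while pending:
        p = pending.pop(0)
        if p in started:
            continue        # each process holds at most one election here
        started.add(p)
        higher = [q for q in all_ids if q > p]
        messages += len(higher)          # Election messages
        responders = [q for q in higher if q in alive]
        messages += len(responders)      # OK messages
        if responders:
            pending.extend(responders)   # p drops out; responders take over
        else:
            coordinator = p              # nobody higher answered
            messages += len([q for q in all_ids if q < p])  # I won messages
    return coordinator, messages


# Hypothetical system: processes 0..7, process 7 has crashed, 4 notices.
winner, count = bully_election(4, alive={0, 1, 2, 3, 4, 5, 6},
                               all_ids=list(range(8)))
print(winner, count)
```

Only the highest-numbered live process finds no responder, so exactly one coordinator emerges regardless of who starts the election.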

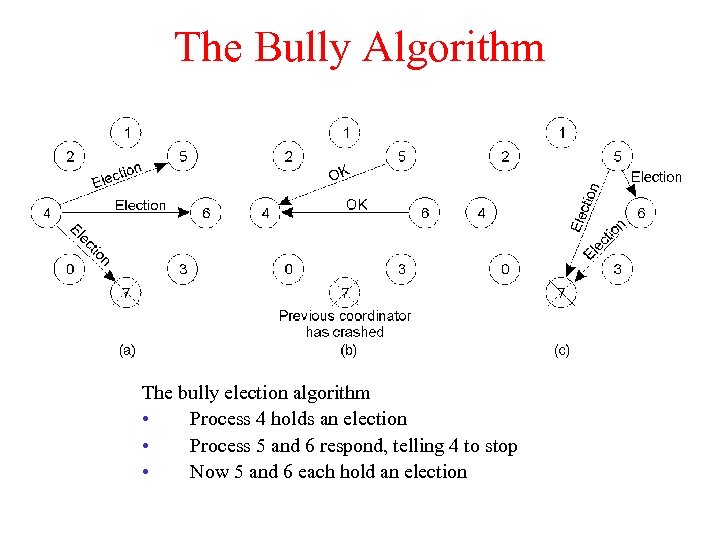

The Bully Algorithm

The bully election algorithm:

- Process 4 holds an election.
- Processes 5 and 6 respond, telling 4 to stop.
- Now 5 and 6 each hold an election.

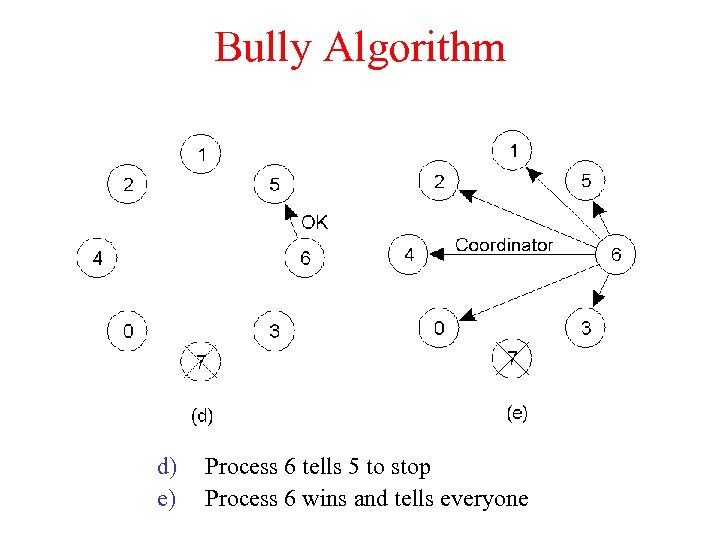

Bully Algorithm

d) Process 6 tells 5 to stop.
e) Process 6 wins and tells everyone.

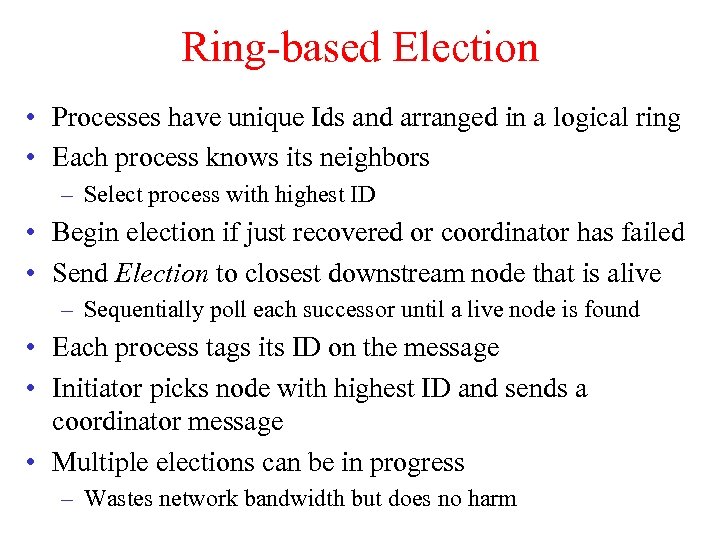

Ring-based Election

- Processes have unique IDs and are arranged in a logical ring.
- Each process knows its neighbors.
  - Select the process with the highest ID.
- Begin an election if just recovered or if the coordinator has failed.
- Send an Election message to the closest downstream node that is alive.
  - Sequentially poll each successor until a live node is found.
- Each process tags its ID on the message.
- The initiator picks the node with the highest ID and sends a coordinator message.
- Multiple elections can be in progress.
  - This wastes network bandwidth but does no harm.
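
A compact Python sketch of one pass around the ring. The function name `ring_election` and the example ring are assumptions; the sketch models only the Election message collecting IDs, not the final coordinator message.

```python
def ring_election(ring, alive, initiator):
    """Return (coordinator, IDs collected on the Election message).

    ring      -- process IDs in ring order
    alive     -- set of IDs that are currently up
    initiator -- the live process that starts the election
    """
    if initiator not in alive:
        raise ValueError("a crashed process cannot start an election")
    start = ring.index(initiator)
    # Walk the ring once, beginning at the initiator's successor.
    downstream = ring[start + 1:] + ring[:start]
    # Crashed nodes are skipped; every live node appends its own ID.
    collected = [initiator] + [p for p in downstream if p in alive]
    return max(collected), collected


# Hypothetical ring in which the old coordinator 7 has crashed.
coordinator, ids = ring_election([2, 5, 1, 7, 4, 6, 3],
                                 alive={1, 2, 3, 4, 5, 6}, initiator=5)
print(coordinator, ids)
```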

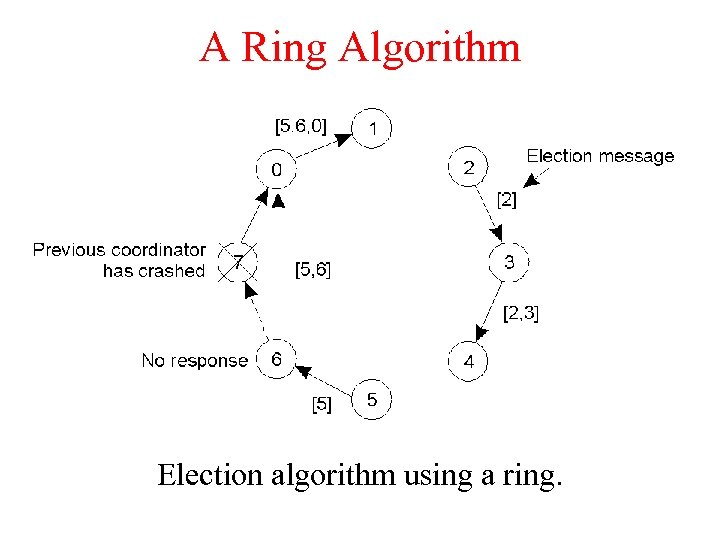

A Ring Algorithm

Election algorithm using a ring.

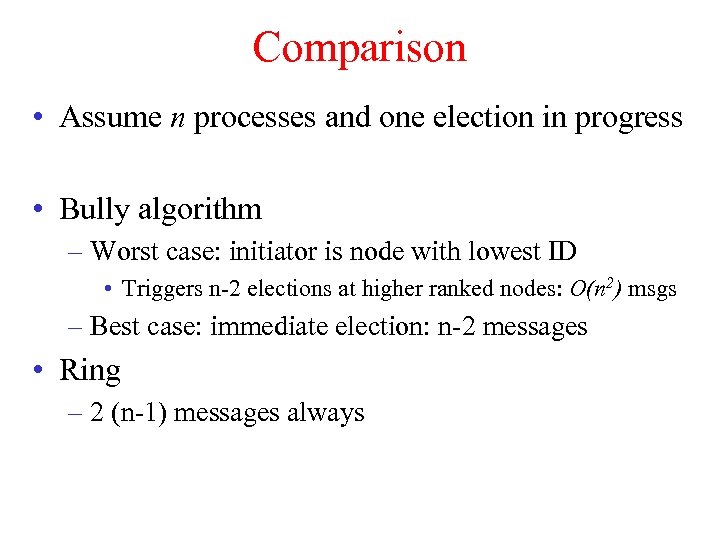

Comparison

- Assume n processes and one election in progress.
- Bully algorithm:
  - Worst case: the initiator is the node with the lowest ID.
    - This triggers n-2 elections at higher-ranked nodes: $O(n^2)$ messages.
  - Best case: immediate election: n-2 messages.
- Ring:
  - 2(n-1) messages always.

Distributed Synchronization

- A distributed system with multiple processes may need to share data or access shared data structures.
  - Use critical sections with mutual exclusion.
- Single process with multiple threads:
  - Semaphores, locks, monitors.
- How do you do this for multiple processes in a distributed system?
  - Processes may be running on different machines.
- Solution: a lock mechanism for a distributed environment.
  - It can be centralized or distributed.

Centralized Mutual Exclusion

- Assume processes are numbered.
- One process is elected coordinator (the process with the highest ID).
- Every process needs to check with the coordinator before entering the critical section.
- To obtain exclusive access: send a request, await the reply.
- To release: send a release message.
- Coordinator:
  - On a request: if the lock is available and the queue is empty, send a grant; if not, queue the request.
  - On a release: remove the next request from the queue and send a grant.

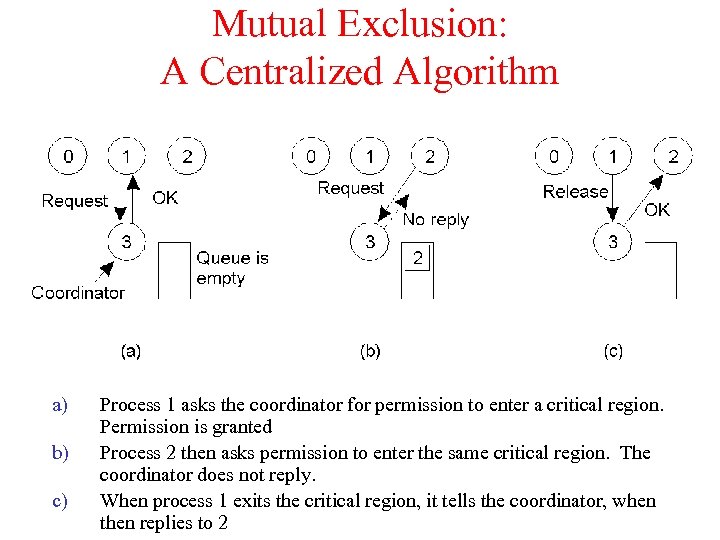

Mutual Exclusion: A Centralized Algorithm

a) Process 1 asks the coordinator for permission to enter a critical region. Permission is granted.
b) Process 2 then asks permission to enter the same critical region. The coordinator does not reply.
c) When process 1 exits the critical region, it tells the coordinator, which then replies to 2.
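
The coordinator's bookkeeping for the centralized algorithm fits in a small Python class. The class name `Coordinator` and its method names are assumptions; message passing is replaced by direct method calls, and "no reply" is modeled as returning `False`.

```python
from collections import deque


class Coordinator:
    """Grants one critical region to one process at a time, in FIFO order."""

    def __init__(self):
        self.holder = None     # process currently in the critical region
        self.queue = deque()   # waiting requests, oldest first

    def request(self, pid):
        """Return True if the grant is sent at once, False if the request is queued."""
        if self.holder is None and not self.queue:
            self.holder = pid
            return True
        self.queue.append(pid)
        return False

    def release(self, pid):
        """Return the process that receives the next grant, or None."""
        if pid != self.holder:
            raise ValueError("only the current holder may release")
        self.holder = self.queue.popleft() if self.queue else None
        return self.holder


c = Coordinator()
granted_1 = c.request(1)   # region is free: granted
granted_2 = c.request(2)   # region is busy: queued, no reply
next_holder = c.release(1) # process 1 leaves: 2 is granted
print(granted_1, granted_2, next_holder)
```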

Properties

- Simulates a centralized lock using blocking calls.
- Fair: requests are granted the lock in the order they were received.
- Simple: three messages per use of a critical section (request, grant, release).
- Shortcomings:
  - Single point of failure.
  - How do you detect a dead coordinator?
    - A process cannot distinguish "lock in use" from a dead coordinator.
    - There is no response from the coordinator in either case.
  - Performance bottleneck in large distributed systems.

Distributed Algorithm

- [Ricart and Agrawala]: needs 2(n-1) messages.
- Based on event ordering and timestamps.
- Process k enters the critical section as follows:
  - Generate a new timestamp $TS_k = TS_k + 1$.
  - Send request(k, $TS_k$) to all other n-1 processes.
  - Wait until reply(j) has been received from all other processes.
  - Enter the critical section.
- Upon receiving a request message, process j
  - Sends a reply if there is no contention.
  - If already in the critical section, does not reply and queues the request.
  - If it wants to enter itself, compares $TS_j$ with $TS_k$ and sends a reply if $TS_k < TS_j$ (the lowest timestamp wins); otherwise it queues the request.

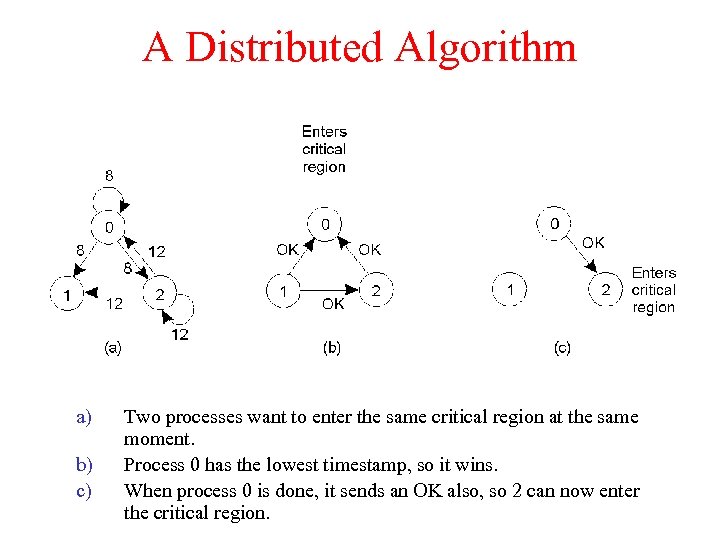

A Distributed Algorithm

a) Two processes want to enter the same critical region at the same moment.
b) Process 0 has the lowest timestamp, so it wins.
c) When process 0 is done, it sends an OK also, so 2 can now enter the critical region.
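
The reply rule of the distributed algorithm can be sketched as follows. This is an illustration under stated assumptions: the names `RAProcess` and `State` are hypothetical, timestamps are `(clock, pid)` pairs so that ties are broken by process ID (a common convention the slides do not spell out), and the lower timestamp wins as in the figure.

```python
from enum import Enum


class State(Enum):
    RELEASED = 1   # not interested in the critical section
    WANTED = 2     # has sent a request and is waiting for replies
    HELD = 3       # inside the critical section


class RAProcess:
    def __init__(self, pid):
        self.pid = pid
        self.state = State.RELEASED
        self.clock = 0
        self.request_ts = None
        self.deferred = []   # senders whose requests are queued

    def want_critical_section(self):
        """Return the timestamp to send to all other processes."""
        self.clock += 1
        self.request_ts = (self.clock, self.pid)
        self.state = State.WANTED
        return self.request_ts

    def on_request(self, ts):
        """Return True to reply at once, False if the request is queued."""
        self.clock = max(self.clock, ts[0])
        mine_first = self.state is State.WANTED and self.request_ts < ts
        if self.state is State.HELD or mine_first:
            self.deferred.append(ts[1])
            return False
        return True

    def enter(self):
        self.state = State.HELD

    def exit_critical_section(self):
        """Return the processes that now receive their delayed reply."""
        self.state = State.RELEASED
        self.request_ts = None
        replies, self.deferred = self.deferred, []
        return replies


p0, p1, p2 = RAProcess(0), RAProcess(1), RAProcess(2)
ts0 = p0.want_critical_section()
ts2 = p2.want_critical_section()
idle_replies = (p1.on_request(ts0), p1.on_request(ts2))  # no contention
p2_reply = p2.on_request(ts0)   # p0's request is older: reply
p0_reply = p0.on_request(ts2)   # p0 wins: p2's request is queued
p0.enter()
released_to = p0.exit_critical_section()
print(idle_replies, p2_reply, p0_reply, released_to)
```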

Properties

- Fully decentralized.
- N points of failure!
- All processes are involved in all decisions.
  - Any overloaded process can become a bottleneck.
- A Token Ring Algorithm:
  - Use a token to arbitrate access to the critical section.
  - A process must wait for the token before entering the critical section.
  - Pass the token to the neighbor once done, or if not interested.
  - Detecting token loss is non-trivial.

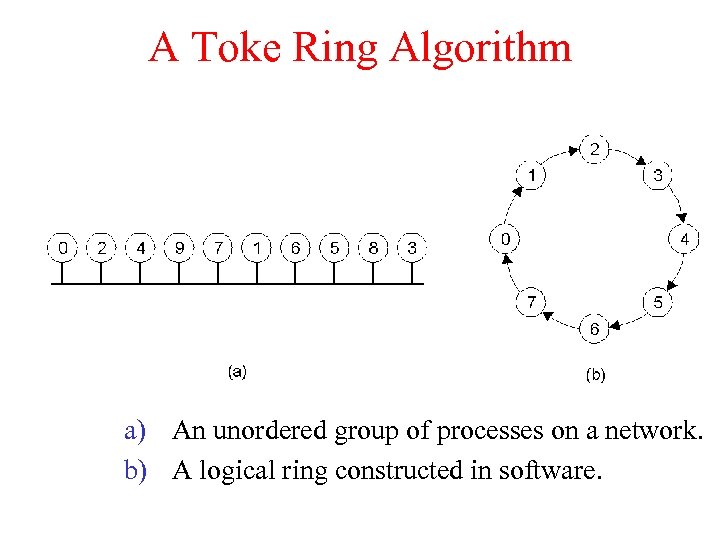

A Token Ring Algorithm

a) An unordered group of processes on a network.
b) A logical ring constructed in software.

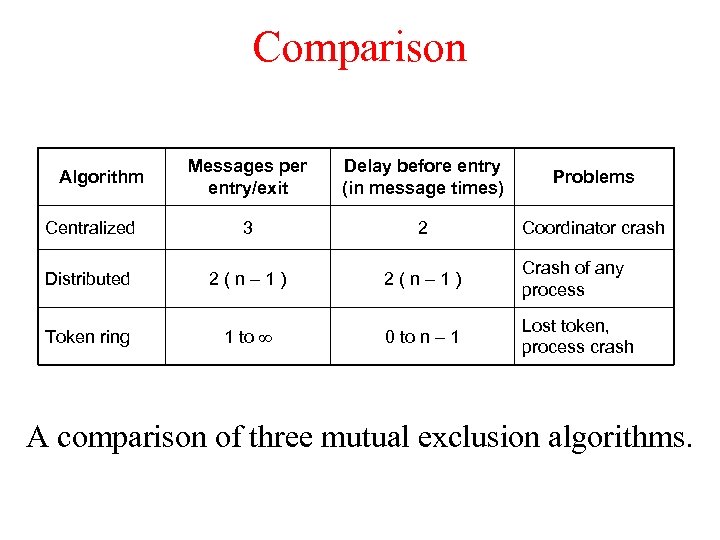

Comparison

| Algorithm | Messages per entry/exit | Delay before entry (in message times) | Problems |
|---|---|---|---|
| Centralized | 3 | 2 | Coordinator crash |
| Distributed | 2(n-1) | 2(n-1) | Crash of any process |
| Token ring | 1 to ∞ | 0 to n-1 | Lost token, process crash |

A comparison of three mutual exclusion algorithms.

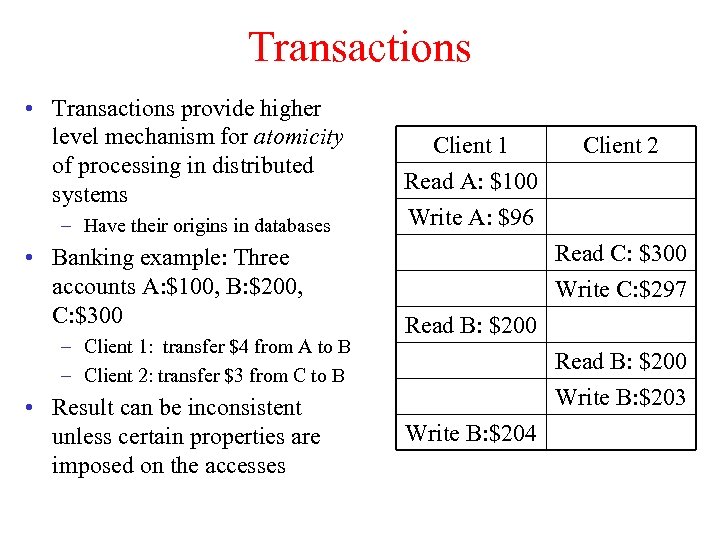

Transactions

- Transactions provide a higher-level mechanism for atomicity of processing in distributed systems.
  - They have their origins in databases.
- Banking example: three accounts, A: $100, B: $200, C: $300.
  - Client 1: transfer $4 from A to B.
  - Client 2: transfer $3 from C to B.
- The result can be inconsistent unless certain properties are imposed on the accesses:
  - Client 1: Read A: $100, Write A: $96, Read B: $200, Write B: $204
  - Client 2: Read C: $300, Write C: $297, Read B: $200, Write B: $203
- Both clients read B as $200, so whichever write to B comes last overwrites the other and one deposit is lost.
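
The lost update in the banking example can be reproduced in plain Python. The function names are hypothetical; the interleaving is the one in which both clients read B before either writes it.

```python
def interleaved_transfers():
    """Both clients read B before either of them writes it back."""
    bal = {"A": 100, "B": 200, "C": 300}
    bal["A"] = bal["A"] - 4   # client 1: read A, write A = 96
    bal["C"] = bal["C"] - 3   # client 2: read C, write C = 297
    b_seen_by_1 = bal["B"]    # client 1 reads B = 200
    b_seen_by_2 = bal["B"]    # client 2 reads B = 200
    bal["B"] = b_seen_by_2 + 3  # client 2 writes B = 203
    bal["B"] = b_seen_by_1 + 4  # client 1 writes B = 204, losing the $3
    return bal


def serial_transfers():
    """Client 1 runs to completion before client 2 starts."""
    bal = {"A": 100, "B": 200, "C": 300}
    for source, amount in (("A", 4), ("C", 3)):
        bal[source] -= amount
        bal["B"] += amount
    return bal


print(interleaved_transfers(), sum(interleaved_transfers().values()))
print(serial_transfers(), sum(serial_transfers().values()))
```

The interleaved run ends with $597 in total instead of $600: money has disappeared, which is exactly the kind of inconsistency transactions are meant to rule out.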

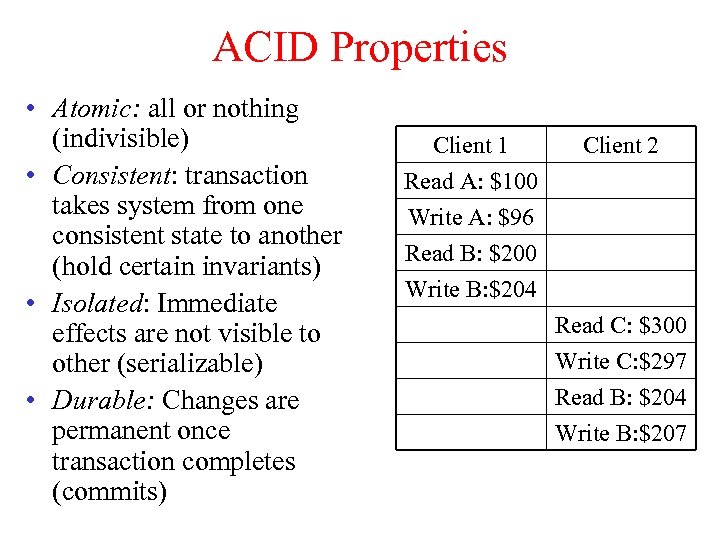

ACID Properties

- Atomic: all or nothing (indivisible).
- Consistent: a transaction takes the system from one consistent state to another (certain invariants hold).
- Isolated: intermediate effects are not visible to other transactions (serializable).
- Durable: changes are permanent once the transaction completes (commits).

With these properties the two transfers behave as if executed one after the other:

- Client 1: Read A: $100, Write A: $96, Read B: $200, Write B: $204
- Client 2: Read C: $300, Write C: $297, Read B: $204, Write B: $207

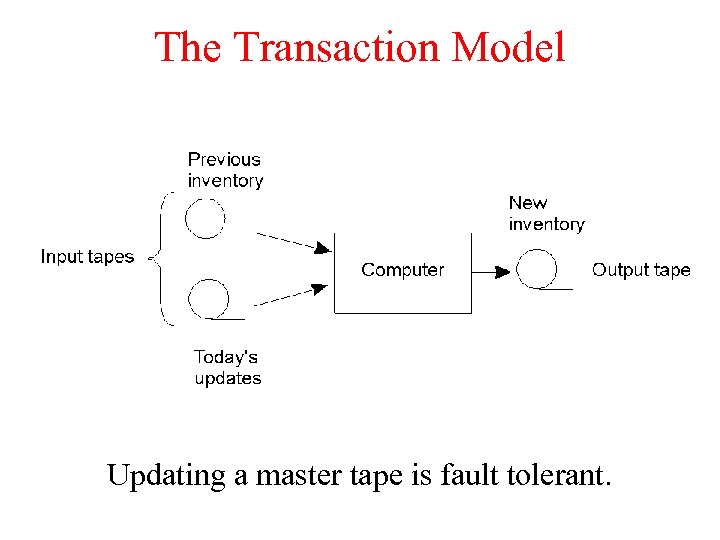

The Transaction Model

Updating a master tape is fault tolerant.

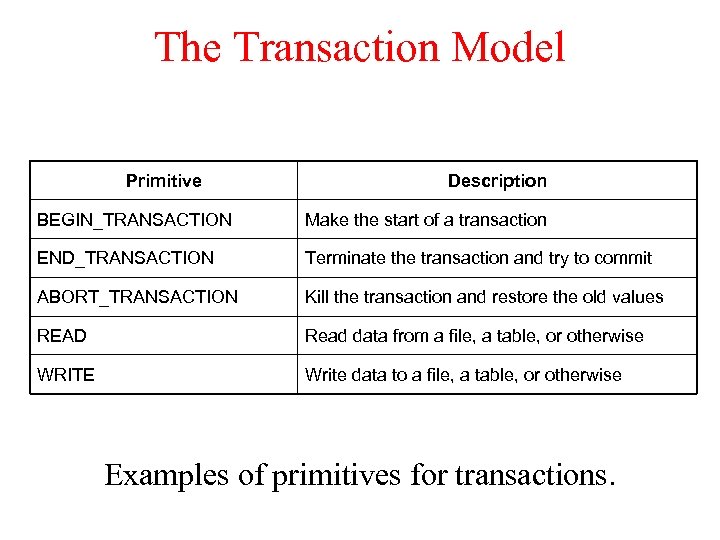

The Transaction Model

| Primitive | Description |
|---|---|
| BEGIN_TRANSACTION | Mark the start of a transaction |
| END_TRANSACTION | Terminate the transaction and try to commit |
| ABORT_TRANSACTION | Kill the transaction and restore the old values |
| READ | Read data from a file, a table, or otherwise |
| WRITE | Write data to a file, a table, or otherwise |

Examples of primitives for transactions.

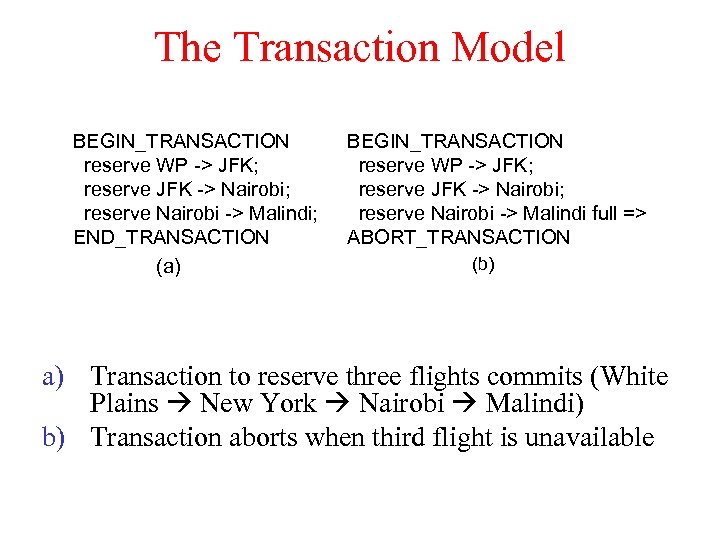

The Transaction Model
(a)
BEGIN_TRANSACTION
  reserve WP -> JFK;
  reserve JFK -> Nairobi;
  reserve Nairobi -> Malindi;
END_TRANSACTION
(b)
BEGIN_TRANSACTION
  reserve WP -> JFK;
  reserve JFK -> Nairobi;
  reserve Nairobi -> Malindi full => ABORT_TRANSACTION
a) The transaction to reserve three flights commits (White Plains -> New York -> Nairobi -> Malindi)
b) The transaction aborts when the third flight is unavailable
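
The flight reservation can be sketched in a few lines. The class `FlightTransaction`, the function `book`, and the seat counts are hypothetical names and data chosen for illustration. The sketch updates seat counts in place and remembers the old values so that an abort can restore them, mirroring ABORT_TRANSACTION.

```python
class FlightTransaction:
    """Updates seat counts in place and keeps old values for a possible abort."""

    def __init__(self, seats):
        self.seats = seats       # shared state: free seats per leg
        self.old_values = {}     # original seat counts of the legs touched so far

    def reserve(self, leg):
        if self.seats[leg] == 0:
            self.abort()
            raise RuntimeError(f"{leg} is full")
        self.old_values.setdefault(leg, self.seats[leg])
        self.seats[leg] -= 1

    def abort(self):
        """ABORT_TRANSACTION: restore every value changed so far."""
        self.seats.update(self.old_values)
        self.old_values.clear()

    def commit(self):
        """END_TRANSACTION: keep the changes and forget the old values."""
        self.old_values.clear()


def book(seats, legs):
    tx = FlightTransaction(seats)    # BEGIN_TRANSACTION
    try:
        for leg in legs:
            tx.reserve(leg)
    except RuntimeError:
        return False                 # reserve() has already rolled back
    tx.commit()
    return True


legs = ["WP->JFK", "JFK->Nairobi", "Nairobi->Malindi"]
seats = {"WP->JFK": 2, "JFK->Nairobi": 1, "Nairobi->Malindi": 0}

first_try = book(seats, legs)        # third leg is full, so nothing is reserved
after_abort = dict(seats)

seats["Nairobi->Malindi"] = 1        # a seat becomes available
second_try = book(seats, legs)       # now all three legs are reserved together
```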

Classification of Transactions
• A flat transaction is a series of operations that satisfies the ACID properties.
– It does not allow partial results to be committed or aborted.
– Examples: flight reservation, Web link update.
• A nested transaction is constructed from a number of subtransactions.
• A distributed transaction is logically a flat, indivisible transaction that operates on distributed data.

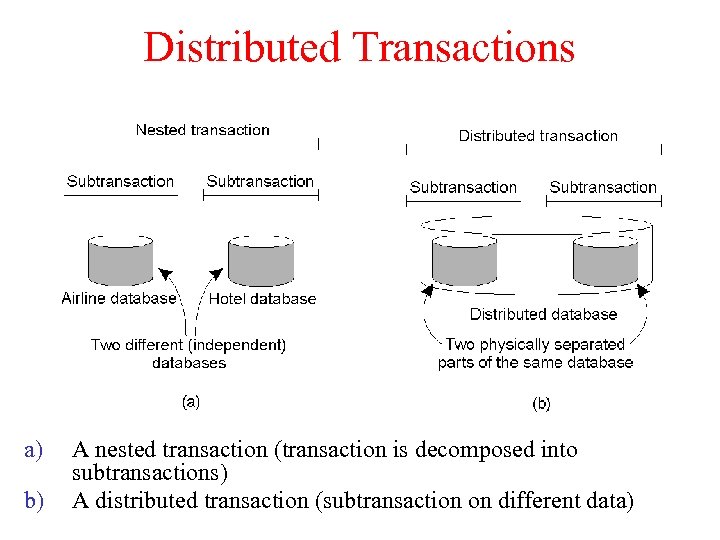

Distributed Transactions
a) A nested transaction (the transaction is decomposed into subtransactions)
b) A distributed transaction (subtransactions on different data)

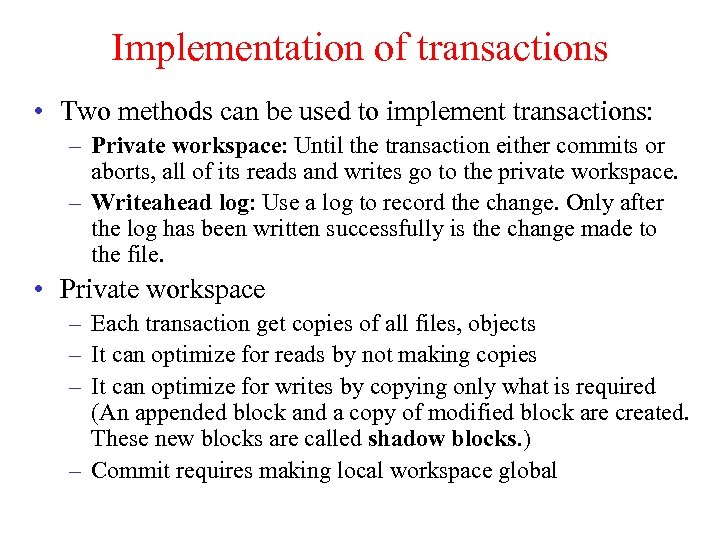

Implementation of Transactions
• Two methods can be used to implement transactions:
– Private workspace: until the transaction either commits or aborts, all of its reads and writes go to the private workspace.
– Write-ahead log: a log records each change. Only after the log has been written successfully is the change made to the file.
• Private workspace
– Each transaction gets copies of all files and objects
– Reads can be optimized by not making copies
– Writes can be optimized by copying only what is required (an appended block and a copy of each modified block are created; these new blocks are called shadow blocks)
– Commit requires making the local workspace global
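
A minimal sketch of the private-workspace idea with shadow blocks follows. The class `PrivateWorkspace`, the dictionary used as a disk, and the block contents are assumptions made for illustration. Only the file index is copied; reads fall through to the shared blocks, and writes create shadow blocks that become visible only on commit.

```python
class PrivateWorkspace:
    def __init__(self, disk, public_index):
        self.disk = disk                    # shared block store: block id -> data
        self.public_index = public_index    # shared file index: list of block ids
        self.index = list(public_index)     # private copy of the index only
        self.shadow = {}                    # shadow blocks created by this transaction
        self.next_id = max(disk, default=-1) + 1

    def _new_shadow(self, data):
        block_id = self.next_id
        self.next_id += 1
        self.shadow[block_id] = data
        return block_id

    def read(self, i):
        block_id = self.index[i]
        if block_id in self.shadow:
            return self.shadow[block_id]
        return self.disk[block_id]          # unmodified blocks are never copied

    def write(self, i, data):
        self.index[i] = self._new_shadow(data)

    def append(self, data):
        self.index.append(self._new_shadow(data))

    def commit(self):
        """Make the private workspace global: publish blocks, then the index."""
        self.disk.update(self.shadow)
        self.public_index[:] = self.index

    def abort(self):
        """Drop the shadow blocks; the shared file was never touched."""
        self.shadow.clear()
        self.index = list(self.public_index)


disk = {0: "old-0", 1: "old-1", 2: "old-2"}
index = [0, 1, 2]                           # a three-block file

ws = PrivateWorkspace(disk, index)
ws.write(0, "new-0")                        # modify block 0
ws.append("new-3")                          # append a fourth block

index_before_commit = list(index)
private_view = [ws.read(i) for i in range(4)]
ws.commit()
public_view = [disk[b] for b in index]
```

The old copy of block 0 remains on the simulated disk after commit; a real implementation would free it.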

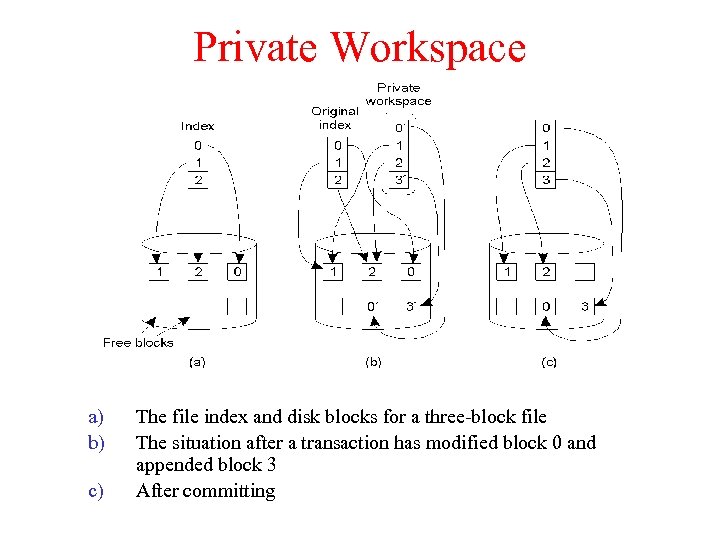

Private Workspace
a) The file index and disk blocks for a three-block file
b) The situation after a transaction has modified block 0 and appended block 3
c) After committing

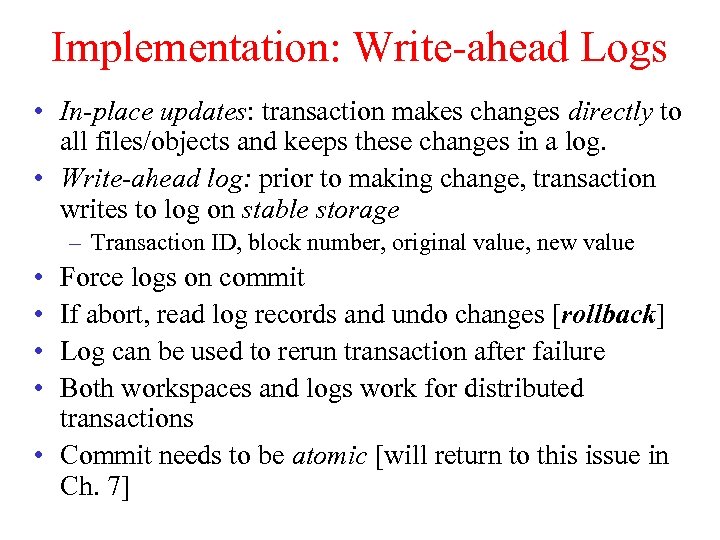

Implementation: Write-ahead Logs
• In-place updates: the transaction makes changes directly to all files/objects and keeps these changes in a log.
• Write-ahead log: prior to making a change, the transaction writes to a log on stable storage
– Transaction ID, block number, original value, new value
• Force logs on commit
• If abort, read log records and undo changes [rollback]
• Log can be used to rerun the transaction after a failure
• Both workspaces and logs work for distributed transactions
• Commit needs to be atomic [will return to this issue in Ch. 7]

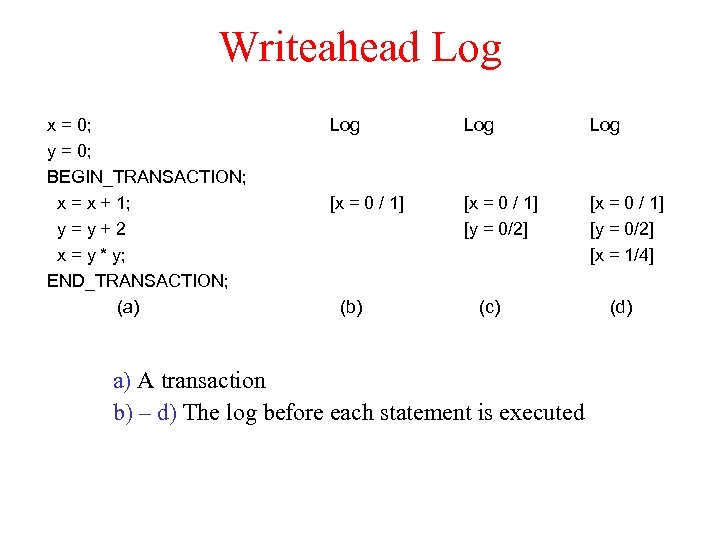

Write-ahead Log
(a)
x = 0;
y = 0;
BEGIN_TRANSACTION;
  x = x + 1;
  y = y + 2;
  x = y * y;
END_TRANSACTION;
(b) Log: [x = 0/1]
(c) Log: [x = 0/1] [y = 0/2]
(d) Log: [x = 0/1] [y = 0/2] [x = 1/4]
a) A transaction
b) – d) The log before each statement is executed
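
The same transaction can be traced with a small write-ahead log sketch. The function `run_with_log` and its `fail_after` parameter are hypothetical, and the log is an in-memory list standing in for stable storage. Each record holds the variable, its old value, and its new value, and is appended before the in-place update, so an abort can undo the changes in reverse order.

```python
def run_with_log(state, updates, fail_after=None):
    """Apply updates in place, logging (name, old, new) before each change.

    If fail_after == n, the transaction aborts just before update n
    and rolls back using the log.
    """
    log = []
    for n, (name, compute) in enumerate(updates):
        if fail_after is not None and n == fail_after:
            for var, old, _new in reversed(log):   # rollback: undo newest first
                state[var] = old
            return log, False
        old = state[name]
        new = compute(state)
        log.append((name, old, new))               # write the log record first
        state[name] = new                          # then make the change
    return log, True


updates = [
    ("x", lambda s: s["x"] + 1),
    ("y", lambda s: s["y"] + 2),
    ("x", lambda s: s["y"] * s["y"]),
]

committed_state = {"x": 0, "y": 0}
full_log, committed = run_with_log(committed_state, updates)

aborted_state = {"x": 0, "y": 0}
partial_log, survived = run_with_log(aborted_state, updates, fail_after=2)
```

The records in `full_log` correspond to the log entries [x = 0/1], [y = 0/2], and [x = 1/4].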

Concurrency Control
• Goal: allow several transactions to execute simultaneously such that
– The collection of manipulated data items is left in a consistent state
• Achieve consistency by ensuring that data items are accessed in a specific order
– The final result should be the same as if the transactions had run sequentially

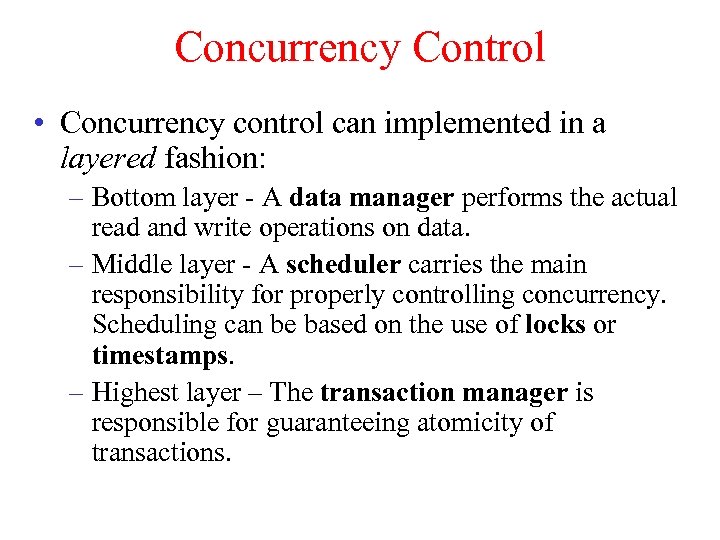

Concurrency Control
• Concurrency control can be implemented in a layered fashion:
– Bottom layer: a data manager performs the actual read and write operations on data.
– Middle layer: a scheduler carries the main responsibility for properly controlling concurrency. Scheduling can be based on the use of locks or timestamps.
– Highest layer: the transaction manager is responsible for guaranteeing the atomicity of transactions.

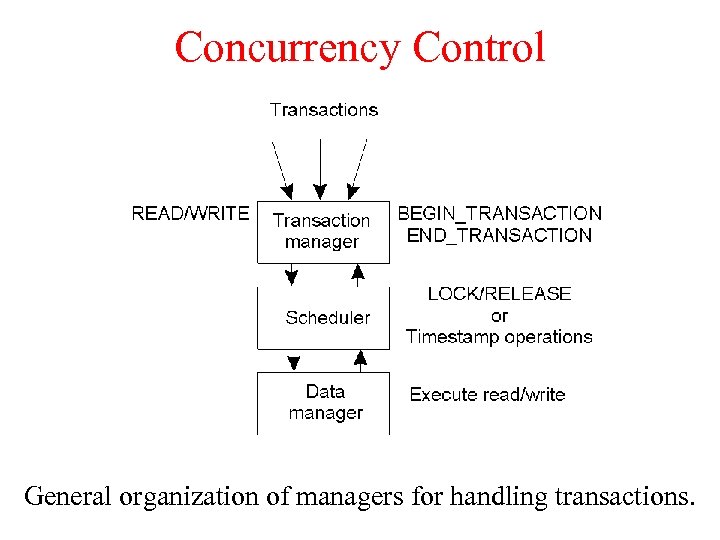

Concurrency Control
General organization of managers for handling transactions.

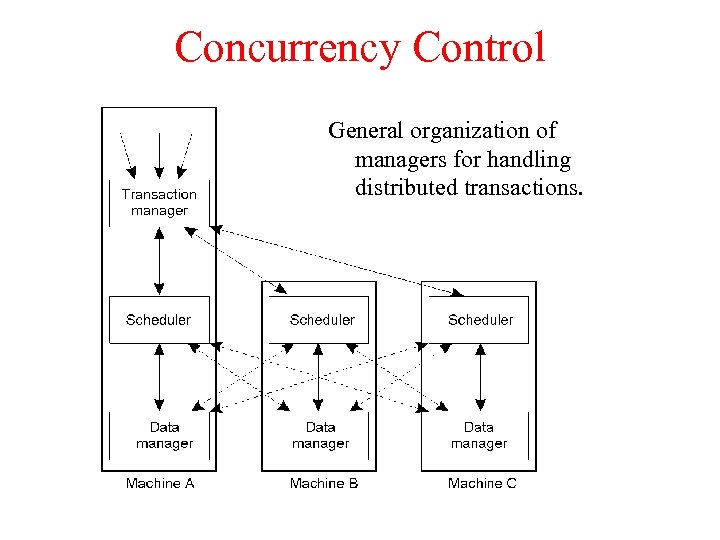

Concurrency Control
General organization of managers for handling distributed transactions.

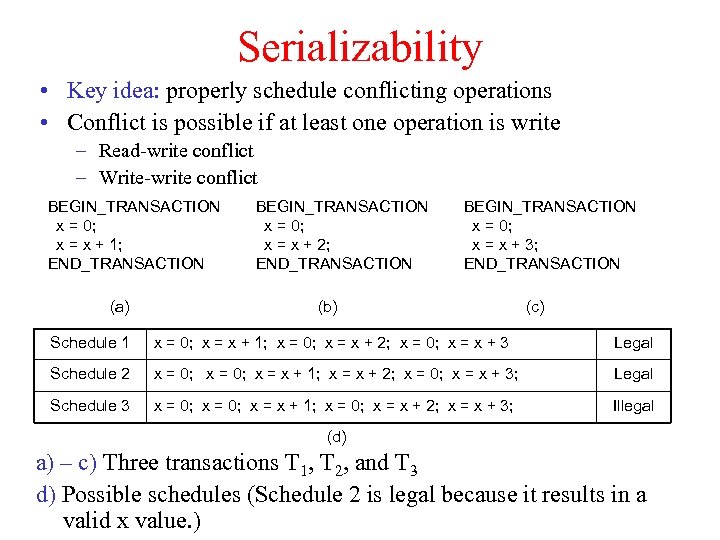

Serializability
• Key idea: properly schedule conflicting operations
• A conflict is possible if at least one operation is a write
– Read-write conflict
– Write-write conflict

(a) BEGIN_TRANSACTION x = 0; x = x + 1; END_TRANSACTION
(b) BEGIN_TRANSACTION x = 0; x = x + 2; END_TRANSACTION
(c) BEGIN_TRANSACTION x = 0; x = x + 3; END_TRANSACTION

(d)
Schedule 1: x = 0; x = x + 1; x = 0; x = x + 2; x = 0; x = x + 3; (Legal)
Schedule 2: x = 0; x = 0; x = x + 1; x = x + 2; x = 0; x = x + 3; (Legal)
Schedule 3: x = 0; x = 0; x = x + 1; x = 0; x = x + 2; x = x + 3; (Illegal)

a) – c) Three transactions T1, T2, and T3
d) Possible schedules (Schedule 2 is legal because it leaves x with the same value, 3, as a serial execution; Schedule 3 is illegal because it leaves x = 5, which no serial execution produces.)
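
The legality of the three schedules can be checked mechanically. The sketch below uses the simple criterion suggested on the slide: a schedule is accepted if its final value of x matches the result of some serial order of T1, T2, and T3. This final-value test is an assumption made for illustration and is weaker than a full conflict-serializability check. Each schedule is written with all six operations, two per transaction, and the tuple encoding of operations is hypothetical.

```python
from itertools import permutations

SET0 = ("set", 0)


def add(n):
    return ("add", n)


# Each transaction resets x and then adds its own constant.
T = {1: [SET0, add(1)], 2: [SET0, add(2)], 3: [SET0, add(3)]}


def run(ops):
    """Execute a sequence of operations on x and return the final value."""
    x = 0
    for kind, value in ops:
        x = value if kind == "set" else x + value
    return x


# Final values reachable by running the three transactions one after another.
serial_results = {run(T[a] + T[b] + T[c]) for a, b, c in permutations(T)}

schedules = {
    1: [SET0, add(1), SET0, add(2), SET0, add(3)],
    2: [SET0, SET0, add(1), add(2), SET0, add(3)],
    3: [SET0, SET0, add(1), SET0, add(2), add(3)],
}

results = {name: run(ops) for name, ops in schedules.items()}
legal = {name: value in serial_results for name, value in results.items()}
```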

Serializability
• Two approaches are used in concurrency control:
– Pessimistic approaches: operations are synchronized before they are carried out.
– Optimistic approaches: operations are carried out, and synchronization takes place at the end of the transaction. If a conflict is detected at that point, one or more transactions are aborted.

Two-phase Locking (2PL)
• Widely used concurrency control technique
• The scheduler acquires all necessary locks in the growing phase and releases them in the shrinking phase
– Check whether the operation on data item x conflicts with existing locks
  • If so, delay the transaction. If not, grant a lock on x
– Never release a lock until the data manager finishes the operation on x
– Once a lock has been released on behalf of a transaction, no further locks are granted to that transaction
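
The two-phase rule can be sketched as a small guard around a lock table. The class `TwoPhaseTransaction` and the shared dictionary `lock_table` are hypothetical, and the sketch assumes exclusive locks only, with the caller responsible for delaying and retrying when a lock is refused.

```python
class TwoPhaseTransaction:
    def __init__(self, name, lock_table):
        self.name = name
        self.lock_table = lock_table   # shared: data item -> name of the lock holder
        self.held = set()
        self.shrinking = False         # becomes True after the first release

    def lock(self, item):
        """Growing phase: return True if granted, False if the caller must wait."""
        if self.shrinking:
            raise RuntimeError("2PL violation: lock requested after a release")
        owner = self.lock_table.get(item)
        if owner is not None and owner != self.name:
            return False               # conflicts with an existing lock
        self.lock_table[item] = self.name
        self.held.add(item)
        return True

    def unlock(self, item):
        """Shrinking phase: after the first release no new locks are allowed."""
        if item in self.held:
            self.shrinking = True
            self.held.remove(item)
            del self.lock_table[item]


lock_table = {}
t1 = TwoPhaseTransaction("T1", lock_table)
t2 = TwoPhaseTransaction("T2", lock_table)

t1_gets_x = t1.lock("x")            # granted
t2_gets_x = t2.lock("x")            # refused: T1 holds x, so T2 is delayed
t1_gets_y = t1.lock("y")            # T1 is still growing
t1.unlock("x")                      # T1 enters its shrinking phase
t2_gets_x_later = t2.lock("x")      # now granted to T2

try:
    t1.lock("z")                    # not allowed once T1 has released a lock
    violation_detected = False
except RuntimeError:
    violation_detected = True
```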

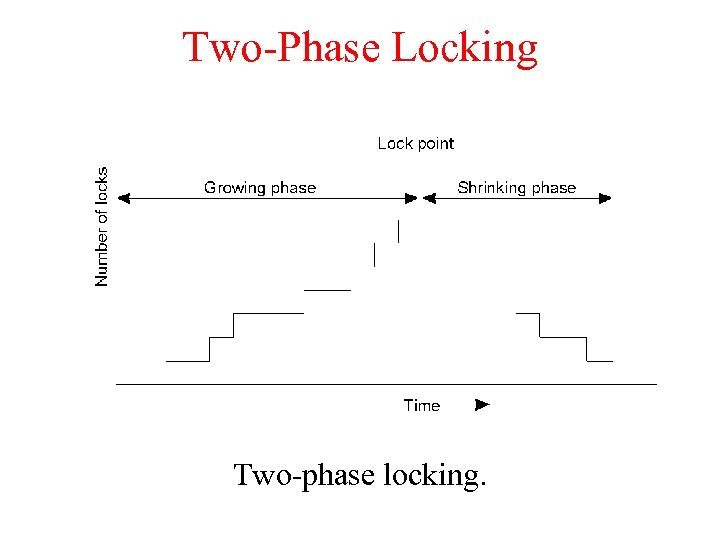

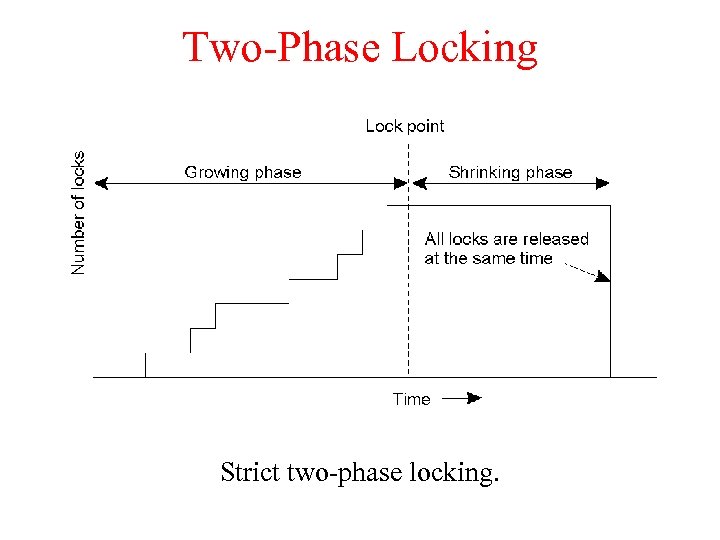

Two-Phase Locking
Two-phase locking.

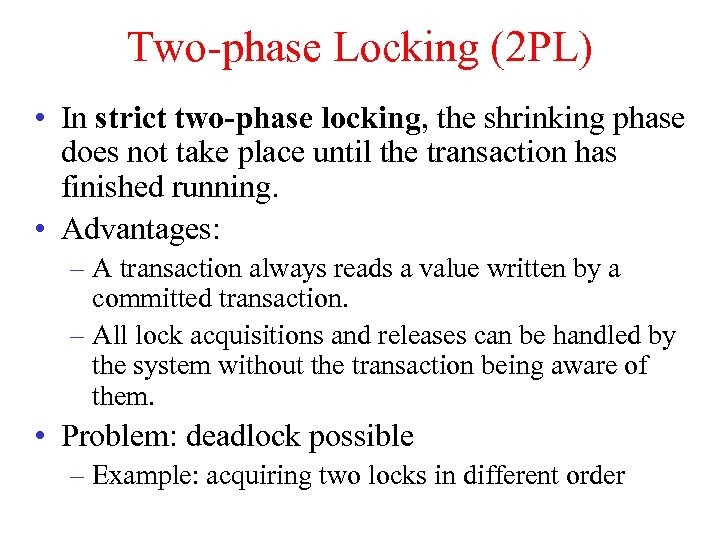

Two-phase Locking (2PL)
• In strict two-phase locking, the shrinking phase does not take place until the transaction has finished running.
• Advantages:
– A transaction always reads a value written by a committed transaction.
– All lock acquisitions and releases can be handled by the system without the transaction being aware of them.
• Problem: deadlock is possible
– Example: acquiring two locks in different order
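
The deadlock example can be made concrete with a wait-for relation, which is an illustrative device not taken from the slide. The sketch assumes exclusive locks, so each blocked transaction waits for exactly one lock holder; the function `find_deadlock` is a hypothetical helper that follows these edges and reports a cycle if one exists.

```python
def find_deadlock(wait_for):
    """Return the transactions forming a cycle in wait_for, or None.

    wait_for maps a blocked transaction to the transaction holding
    the lock it is waiting for.
    """
    for start in wait_for:
        seen = [start]
        nxt = wait_for.get(start)
        while nxt is not None and nxt not in seen:
            seen.append(nxt)
            nxt = wait_for.get(nxt)
        if nxt is not None:                 # walked back into the chain: a cycle
            return seen[seen.index(nxt):]
    return None


# Different order: T1 holds x and wants y, while T2 holds y and wants x.
different_order = {"T1": "T2", "T2": "T1"}

# Same order: both ask for x first, so T2 simply waits for T1.
same_order = {"T2": "T1"}

cycle = find_deadlock(different_order)
no_cycle = find_deadlock(same_order)
```

Acquiring locks in one agreed order is a common way to rule out such cycles.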

Two-Phase Locking
Strict two-phase locking.

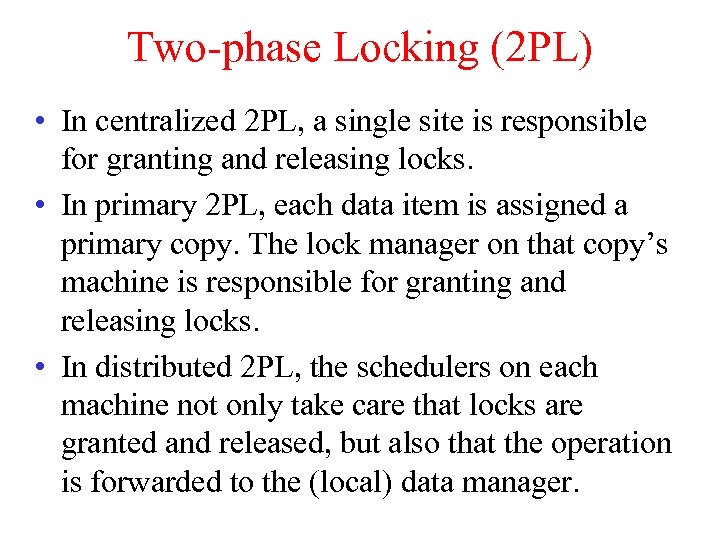

Two-phase Locking (2PL)
• In centralized 2PL, a single site is responsible for granting and releasing locks.
• In primary 2PL, each data item is assigned a primary copy. The lock manager on that copy's machine is responsible for granting and releasing locks.
• In distributed 2PL, the schedulers on each machine not only take care that locks are granted and released, but also that the operation is forwarded to the (local) data manager.

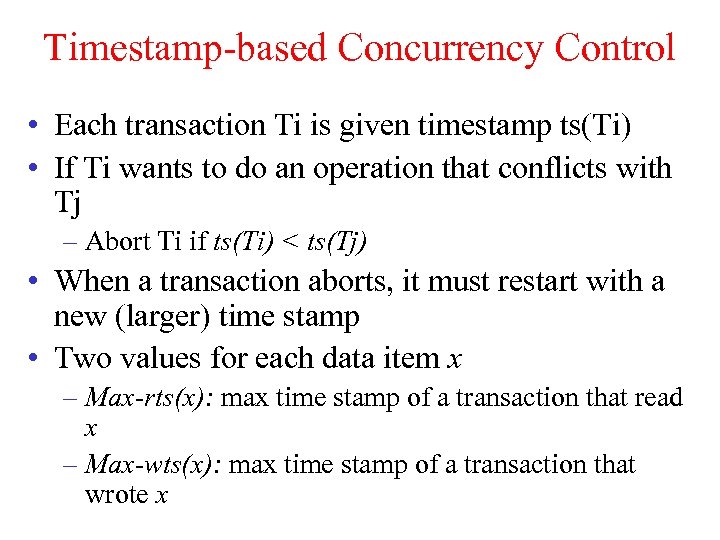

Timestamp-based Concurrency Control
• Each transaction Ti is given a timestamp ts(Ti)
• If Ti wants to do an operation that conflicts with Tj
– Abort Ti if ts(Ti) < ts(Tj)
• When a transaction aborts, it must restart with a new (larger) timestamp
• Two values are kept for each data item x
– max-rts(x): the maximum timestamp of a transaction that read x
– max-wts(x): the maximum timestamp of a transaction that wrote x

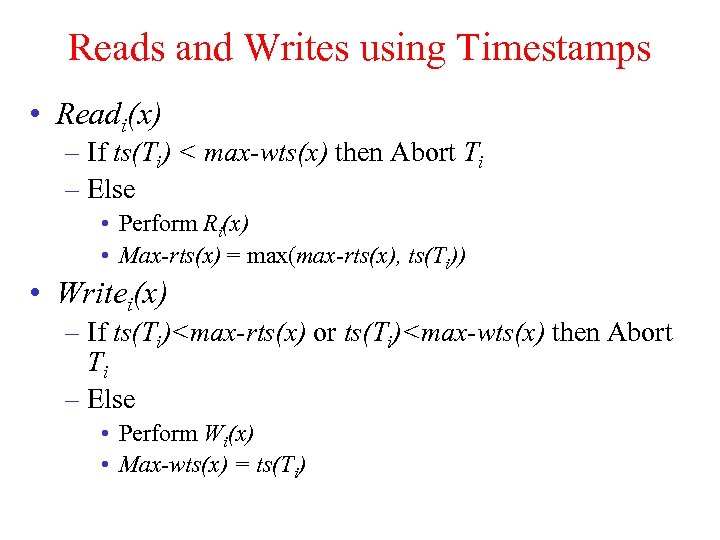

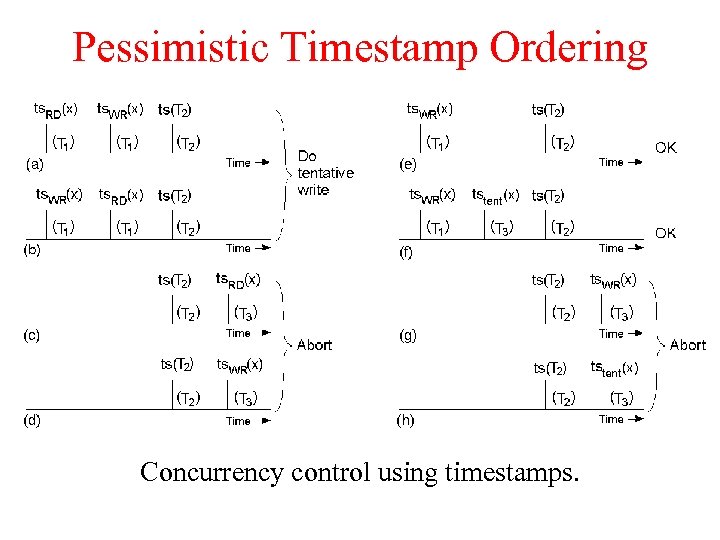

Reads and Writes using Timestamps
• Readi(x)
– If ts(Ti) < max-wts(x) then abort Ti
– Else
  • Perform Ri(x)
  • max-rts(x) = max(max-rts(x), ts(Ti))
• Writei(x)
– If ts(Ti)

Pessimistic Timestamp Ordering
Concurrency control using timestamps.
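
The timestamp rules can be sketched as follows. The read rule is the one given for Readi(x). The write rule is cut off in the slide text, so the version here is an assumption based on the usual basic timestamp-ordering rule: abort if ts(Ti) is smaller than max-rts(x) or max-wts(x), otherwise write and set max-wts(x) to ts(Ti). The names `Item`, `Abort`, `read`, and `write` are hypothetical.

```python
class Abort(Exception):
    """Raised when a transaction must abort and restart with a larger timestamp."""


class Item:
    def __init__(self, value):
        self.value = value
        self.max_rts = 0    # largest timestamp of any transaction that read the item
        self.max_wts = 0    # largest timestamp of any transaction that wrote the item


def read(item, ts):
    if ts < item.max_wts:                   # a younger transaction already wrote x
        raise Abort(f"read by ts={ts} is too late")
    item.max_rts = max(item.max_rts, ts)
    return item.value


def write(item, ts, value):
    # Assumed rule (truncated in the source): a younger transaction has
    # already read or written x, so this write arrives too late.
    if ts < item.max_rts or ts < item.max_wts:
        raise Abort(f"write by ts={ts} is too late")
    item.value = value
    item.max_wts = ts


x = Item(0)
write(x, ts=2, value=10)       # accepted: max_wts becomes 2
seen_by_t3 = read(x, ts=3)     # accepted: max_rts becomes 3

try:
    read(x, ts=1)              # T1 is older than the last writer
    late_read_aborted = False
except Abort:
    late_read_aborted = True

try:
    write(x, ts=2, value=20)   # a transaction with ts=3 has already read x
    late_write_aborted = False
except Abort:
    late_write_aborted = True
```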

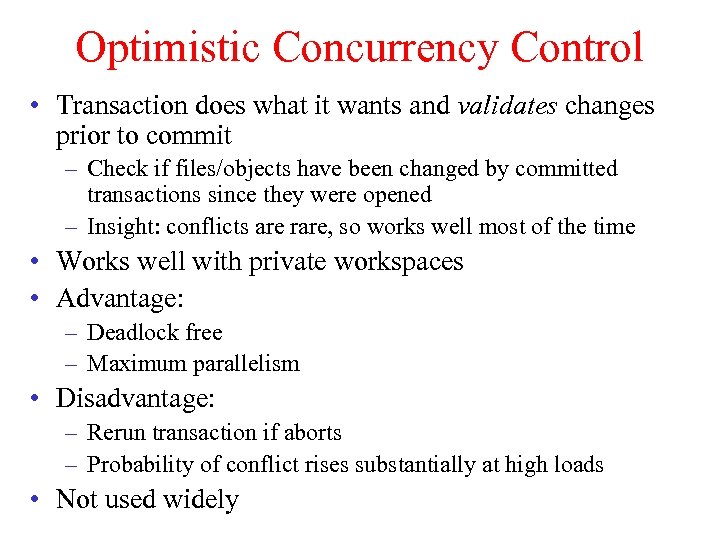

Optimistic Concurrency Control
• A transaction does what it wants and validates its changes prior to commit
– Check whether files/objects have been changed by committed transactions since they were opened
– Insight: conflicts are rare, so this works well most of the time
• Works well with private workspaces
• Advantages:
– Deadlock free
– Maximum parallelism
• Disadvantages:
– A transaction must be rerun if it aborts
– The probability of conflict rises substantially at high loads
• Not widely used
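
Validation at commit time can be sketched with per-item version counters. The version counters, the class names `Store` and `OptimisticTransaction`, and the retry at the end are assumptions chosen for illustration; the slide only states that a transaction checks whether the data it used was changed by committed transactions. Writes are buffered privately, in the spirit of a private workspace.

```python
class Store:
    def __init__(self, data):
        self.data = dict(data)
        self.version = {key: 0 for key in data}   # bumped on every committed write


class OptimisticTransaction:
    def __init__(self, store):
        self.store = store
        self.read_versions = {}   # version of each item when first read
        self.writes = {}          # private, uncommitted writes

    def read(self, key):
        if key in self.writes:
            return self.writes[key]
        self.read_versions.setdefault(key, self.store.version[key])
        return self.store.data[key]

    def write(self, key, value):
        self.writes[key] = value

    def commit(self):
        """Validate, then publish. Returns False if the transaction must be rerun."""
        for key, seen in self.read_versions.items():
            if self.store.version[key] != seen:
                return False      # someone committed a change after we read
        for key, value in self.writes.items():
            self.store.data[key] = value
            self.store.version[key] = self.store.version.get(key, 0) + 1
        return True


store = Store({"B": 200})
t1 = OptimisticTransaction(store)
t2 = OptimisticTransaction(store)

t1.write("B", t1.read("B") + 4)   # both transactions read B = 200
t2.write("B", t2.read("B") + 3)

t1_committed = t1.commit()        # first committer wins
t2_committed = t2.commit()        # fails validation: B changed since t2 read it

retry = OptimisticTransaction(store)
retry.write("B", retry.read("B") + 3)
retry_committed = retry.commit()
```

No transaction ever waits for another here, which is why the scheme is deadlock free; the cost is the rerun of the second transfer.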
