451f15ade444690dd09d979ac99da1b7.ppt

- Number of slides: 34

Subject Name: Data Warehousing & Data Mining
Subject Code: 10 IS 74 & 10 MCA 542
Prepared By: Atul Talukder & V. Srikanth
Department: ISE & MCA
Date: 3/19/2018 19/9/2014

UNIT 4 - Association Analysis: Basic Concepts and Algorithms
- Frequent Itemset Generation
- Rule Generation
- Compact Representation of Frequent Itemsets
- Alternative Methods for Generating Frequent Itemsets
- FP-Growth Algorithm
- Evaluation of Association Patterns

Frequent Itemset Generation

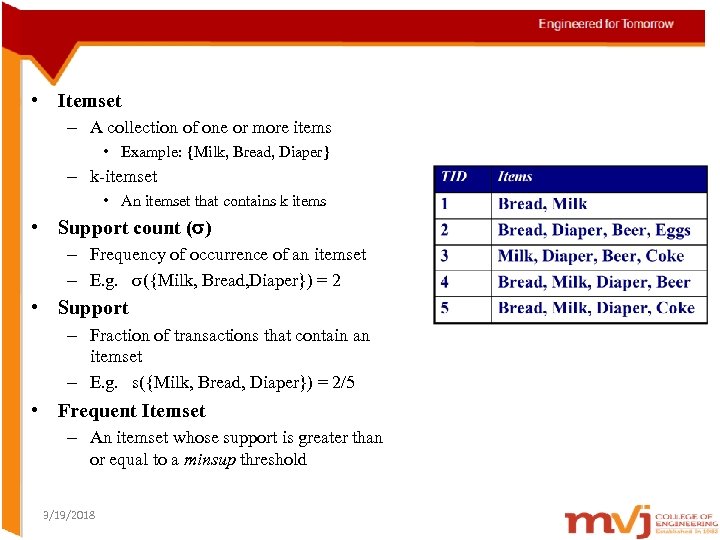

- Itemset
  - A collection of one or more items, e.g. {Milk, Bread, Diaper}
  - k-itemset: an itemset that contains k items
- Support count (σ)
  - Frequency of occurrence of an itemset, e.g. σ({Milk, Bread, Diaper}) = 2
- Support (s)
  - Fraction of transactions that contain an itemset, e.g. s({Milk, Bread, Diaper}) = 2/5
- Frequent itemset
  - An itemset whose support is greater than or equal to a minsup threshold
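
A minimal Python sketch of the support count σ and support s defined above. The five transactions are a hypothetical market-basket table, chosen only so that σ({Milk, Bread, Diaper}) = 2 as on the slide; the helper names `support_count`, `support` and `is_frequent` are illustrative.

```python
# Hypothetical five-transaction market-basket table (illustrative only).
TRANSACTIONS = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def support_count(itemset, transactions):
    """sigma(X): number of transactions containing every item of X."""
    return sum(1 for t in transactions if set(itemset) <= t)

def support(itemset, transactions):
    """s(X) = sigma(X) / N, where N is the number of transactions."""
    return support_count(itemset, transactions) / len(transactions)

def is_frequent(itemset, transactions, minsup):
    """An itemset is frequent if its support reaches the minsup threshold."""
    return support(itemset, transactions) >= minsup

x = {"Milk", "Bread", "Diaper"}
print(support_count(x, TRANSACTIONS))  # 2
print(support(x, TRANSACTIONS))        # 0.4
```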

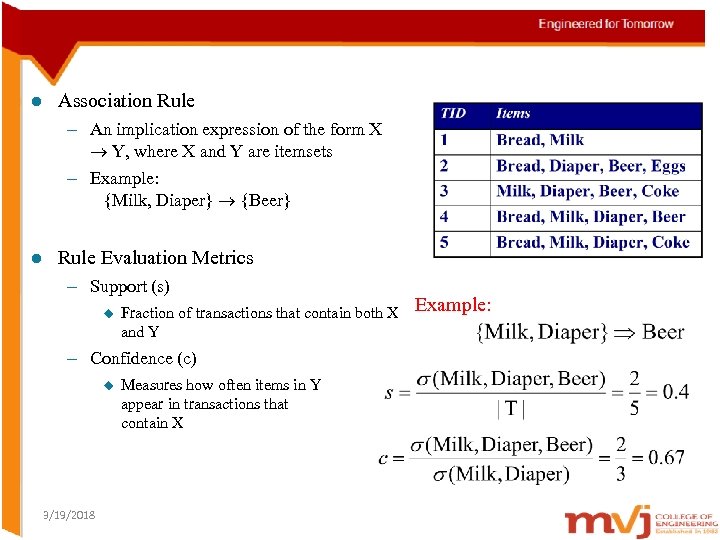

- Association rule
  - An implication expression of the form X → Y, where X and Y are disjoint itemsets
  - Example: {Milk, Diaper} → {Beer}
- Rule evaluation metrics (N = number of transactions)
  - Support (s): fraction of transactions that contain both X and Y, $s(X \to Y) = \sigma(X \cup Y) / N$
  - Confidence (c): measures how often items in Y appear in transactions that contain X, $c(X \to Y) = \sigma(X \cup Y) / \sigma(X)$
- Example:
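
The two rule metrics can be checked with a short sketch. It assumes the same kind of hypothetical five-transaction table (not taken from the slide's figure) and returns exact fractions so the ratios σ(X ∪ Y)/N and σ(X ∪ Y)/σ(X) stay readable; `rule_metrics` is an illustrative name.

```python
from fractions import Fraction

# Hypothetical five-transaction table (illustrative only).
TRANSACTIONS = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def sigma(itemset, transactions):
    """Support count: transactions that contain every item of the itemset."""
    return sum(1 for t in transactions if itemset <= t)

def rule_metrics(x, y, transactions):
    """Return (support, confidence) of the rule X -> Y as exact fractions."""
    both = sigma(x | y, transactions)
    s = Fraction(both, len(transactions))
    c = Fraction(both, sigma(x, transactions))
    return s, c

s, c = rule_metrics({"Milk", "Diaper"}, {"Beer"}, TRANSACTIONS)
print(s, c)  # 2/5 2/3
```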

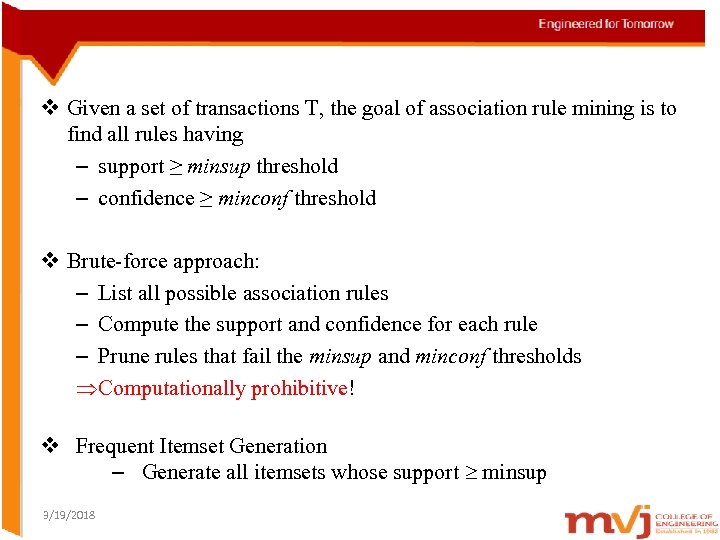

Given a set of transactions T, the goal of association rule mining is to find all rules having
- support ≥ minsup threshold
- confidence ≥ minconf threshold

Brute-force approach:
- List all possible association rules
- Compute the support and confidence for each rule
- Prune rules that fail the minsup and minconf thresholds

⇒ Computationally prohibitive!

Frequent Itemset Generation
- Generate all itemsets whose support ≥ minsup
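
To make the cost concrete, here is a brute-force frequent itemset generator: it enumerates all 2^d − 1 non-empty itemsets over d items and keeps those with support ≥ minsup. This is only an illustration of the naive approach on a hypothetical table, not an efficient algorithm such as Apriori or FP-growth.

```python
from itertools import combinations

# Hypothetical five-transaction table (illustrative only).
TRANSACTIONS = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def frequent_itemsets_brute_force(transactions, minsup):
    """Check every non-empty itemset (exponential in the number of items)."""
    items = sorted(set().union(*transactions))
    n = len(transactions)
    result = {}
    for k in range(1, len(items) + 1):
        for cand in combinations(items, k):
            count = sum(1 for t in transactions if set(cand) <= t)
            if count / n >= minsup:
                result[frozenset(cand)] = count
    return result

for itemset, count in frequent_itemsets_brute_force(TRANSACTIONS, 0.6).items():
    print(sorted(itemset), count)
```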

Rule Generation

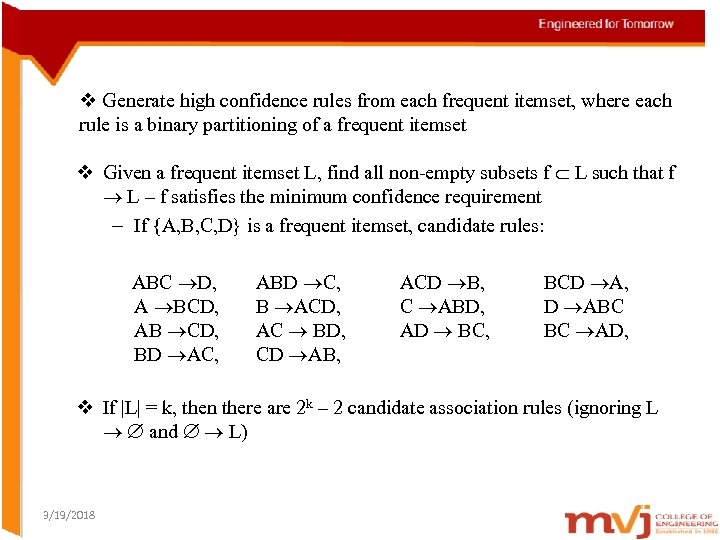

- Generate high-confidence rules from each frequent itemset, where each rule is a binary partitioning of a frequent itemset
- Given a frequent itemset L, find all non-empty subsets f ⊂ L such that f → L − f satisfies the minimum confidence requirement
  - If {A, B, C, D} is a frequent itemset, the candidate rules are:
    ABC → D, ABD → C, ACD → B, BCD → A,
    A → BCD, B → ACD, C → ABD, D → ABC,
    AB → CD, AC → BD, AD → BC, BC → AD, BD → AC, CD → AB
- If |L| = k, then there are $2^k - 2$ candidate association rules (ignoring L → ∅ and ∅ → L)
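
The count 2^k − 2 follows from choosing every non-empty proper subset f of L as the antecedent. A small sketch that enumerates these binary partitions (the function name `candidate_rules` is illustrative):

```python
from itertools import combinations

def candidate_rules(itemset):
    """All rules f -> L - f where f is a non-empty proper subset of L."""
    items = sorted(itemset)
    rules = []
    for r in range(1, len(items)):          # antecedent sizes 1 .. k-1
        for lhs in combinations(items, r):
            rhs = tuple(i for i in items if i not in lhs)
            rules.append((lhs, rhs))
    return rules

rules = candidate_rules({"A", "B", "C", "D"})
print(len(rules))  # 14, i.e. 2**4 - 2
for lhs, rhs in rules:
    print("".join(lhs), "->", "".join(rhs))
```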

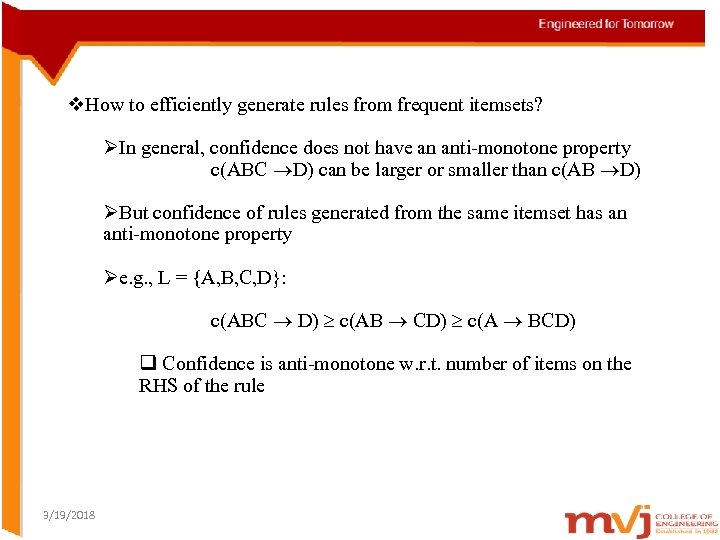

How can rules be generated efficiently from frequent itemsets?
- In general, confidence does not have an anti-monotone property: c(ABC → D) can be larger or smaller than c(AB → D)
- But the confidence of rules generated from the same itemset does have an anti-monotone property
- E.g., for L = {A, B, C, D}: c(ABC → D) ≥ c(AB → CD) ≥ c(A → BCD), because all three rules share the numerator σ(ABCD) while σ(ABC) ≤ σ(AB) ≤ σ(A)
- Confidence is anti-monotone w.r.t. the number of items on the RHS of the rule
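
The anti-monotone property can be checked numerically. The six transactions below are hypothetical; any data would do, since moving items from the LHS to the RHS keeps the numerator σ(ABCD) fixed and can only grow the denominator.

```python
from fractions import Fraction

# Hypothetical transactions over items A-D (illustrative only).
T = [set("ABCD"), set("ABC"), set("AB"), set("A"), set("ABCD"), set("BCD")]

def sigma(itemset):
    """Support count of an itemset given as a string of item letters."""
    return sum(1 for t in T if set(itemset) <= t)

def confidence(lhs, rhs):
    """c(lhs -> rhs) = sigma(lhs U rhs) / sigma(lhs)."""
    return Fraction(sigma(set(lhs) | set(rhs)), sigma(lhs))

c1 = confidence("ABC", "D")
c2 = confidence("AB", "CD")
c3 = confidence("A", "BCD")
print(c1, c2, c3)  # 2/3 1/2 2/5
```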

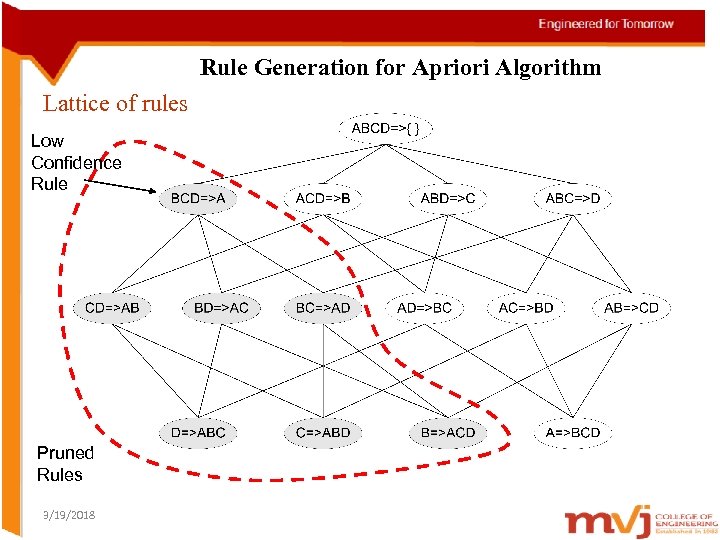

Rule Generation for Apriori Algorithm
Figure: lattice of rules, highlighting a low-confidence rule and the pruned rules

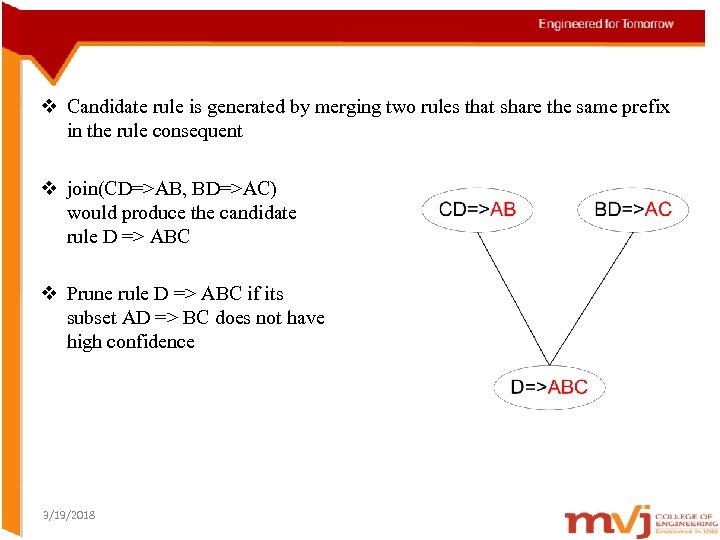

- A candidate rule is generated by merging two rules that share the same prefix in the rule consequent
- join(CD → AB, BD → AC) would produce the candidate rule D → ABC
- Prune rule D → ABC if the rule AD → BC, whose consequent BC is a subset of ABC, does not have high confidence
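
A minimal sketch of the merge-and-prune step, assuming rules are written as (antecedent, consequent) strings with items in sorted order and that all rules come from the same frequent itemset. `merge_rules` and `should_prune` are hypothetical helper names, and the set of high-confidence consequents passed in is made up for the example.

```python
def merge_rules(rule1, rule2):
    """Join two rules from the same itemset whose sorted consequents share
    all but their last item; return the merged rule or None."""
    (lhs1, rhs1), (lhs2, rhs2) = rule1, rule2
    itemset = set(lhs1) | set(rhs1)
    if itemset != set(lhs2) | set(rhs2):
        return None                       # rules come from different itemsets
    c1, c2 = sorted(rhs1), sorted(rhs2)
    if c1[:-1] != c2[:-1] or c1[-1] == c2[-1]:
        return None                       # consequents do not share a prefix
    consequent = sorted(set(c1) | set(c2))
    antecedent = sorted(itemset - set(consequent))
    if not antecedent:
        return None                       # would give the empty-LHS rule
    return "".join(antecedent), "".join(consequent)

def should_prune(candidate, high_conf_consequents):
    """Prune if dropping any one consequent item yields a rule that is not
    high-confidence (within one itemset, the consequent fixes the rule)."""
    _, rhs = candidate
    return any(rhs[:i] + rhs[i + 1:] not in high_conf_consequents
               for i in range(len(rhs)))

cand = merge_rules(("CD", "AB"), ("BD", "AC"))
print(cand)  # ('D', 'ABC')
# Hypothetical: AB and AC passed minconf, but AD -> BC (consequent BC) did not.
print(should_prune(cand, {"AB", "AC"}))  # True
```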

Compact Representation of Frequent Itemsets

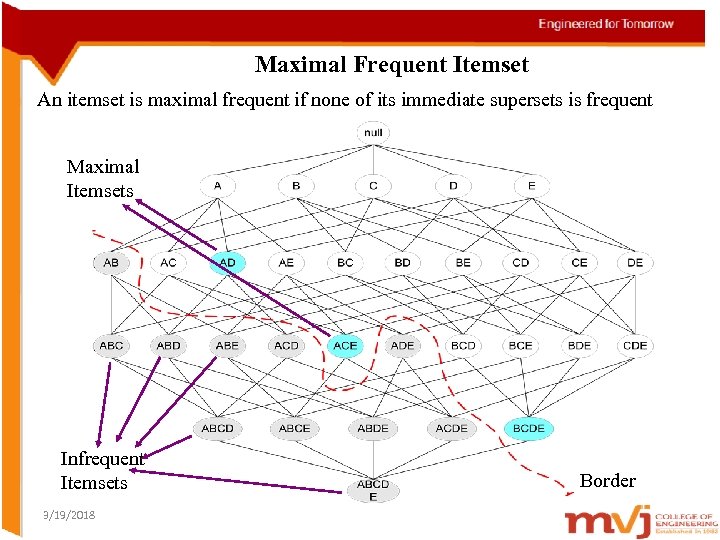

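Maximal Frequent Itemset
- An itemset is maximal frequent if it is frequent and none of its immediate supersets is frequent
Figure: itemset lattice marking the maximal itemsets, the infrequent itemsets, and the border between frequent and infrequent itemsets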
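
Given the collection of frequent itemsets, the maximal ones can be picked out by checking each immediate superset. This sketch assumes the input is downward closed (every subset of a frequent itemset is also listed); the frequent itemsets and item universe are hypothetical.

```python
def maximal_itemsets(frequent, items):
    """Keep frequent X such that no immediate superset X | {i} is frequent."""
    return {x for x in frequent
            if not any(x | {i} in frequent for i in items - x)}

# Hypothetical frequent itemsets over the items A-E.
frequent = {frozenset(s) for s in ["A", "B", "C", "D", "AB", "AC", "BC", "ABC", "AD"]}
items = set("ABCDE")
for x in maximal_itemsets(frequent, items):
    print("".join(sorted(x)))  # ABC and AD, in some order
```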

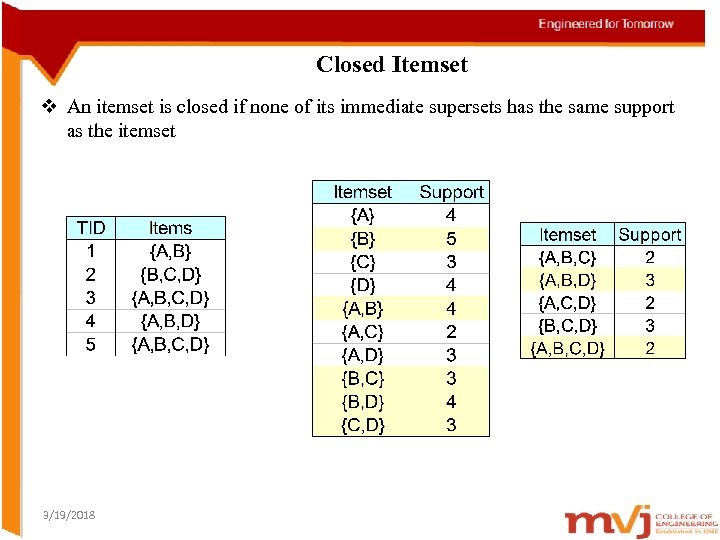

Closed Itemset
- An itemset is closed if none of its immediate supersets has the same support as the itemset
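
A closedness check needs support counts, not just a frequent/infrequent flag. The sketch below, over a hypothetical five-transaction table, compares σ(X) with σ(X ∪ {i}) for every immediate superset; itemsets not supported by any transaction are skipped.

```python
from itertools import combinations

# Hypothetical transactions (illustrative only).
T = [set("ABC"), set("ABCD"), set("BCE"), set("ACDE"), set("DE")]
ITEMS = set().union(*T)

def sigma(x):
    """Support count of itemset x."""
    return sum(1 for t in T if x <= t)

def is_closed(x):
    """Closed: no immediate superset has the same support as x."""
    s = sigma(x)
    return all(sigma(x | {i}) != s for i in ITEMS - x)

def closed_itemsets():
    out = []
    for k in range(1, len(ITEMS) + 1):
        for c in combinations(sorted(ITEMS), k):
            x = frozenset(c)
            if sigma(x) > 0 and is_closed(x):   # skip unsupported itemsets
                out.append("".join(sorted(x)))
    return out

print(closed_itemsets())
```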

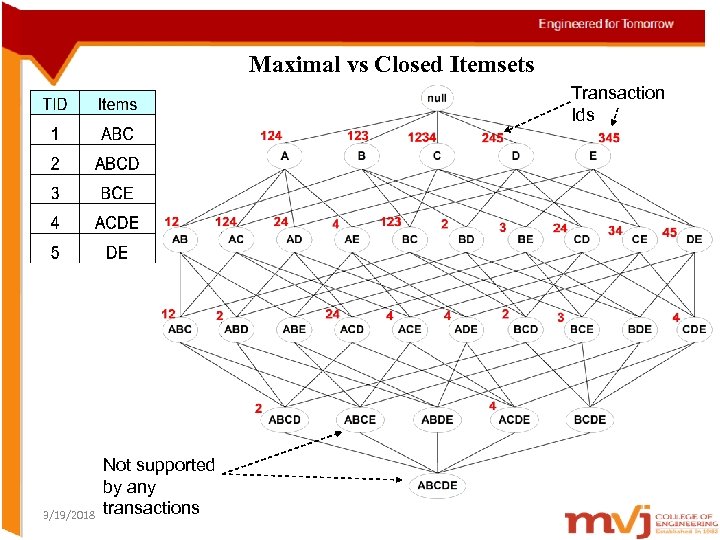

Maximal vs Closed Itemsets
Figure: itemset lattice annotated with transaction IDs; some itemsets are not supported by any transactions

Alternative Methods for Generating Frequent Itemsets

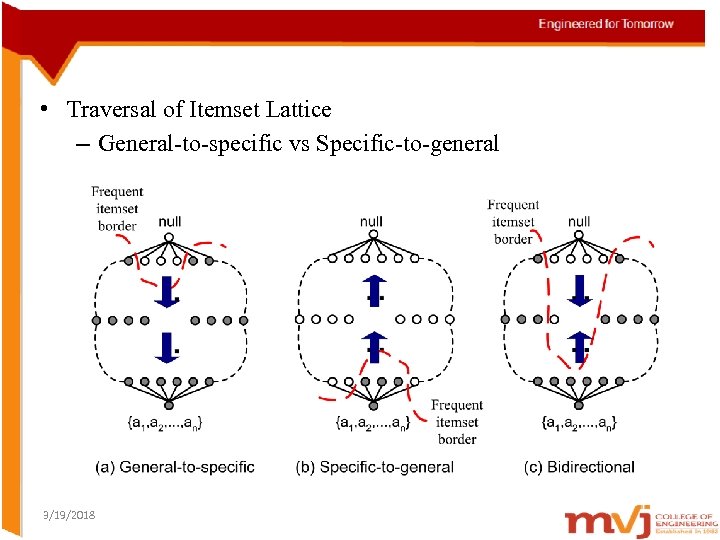

- Traversal of itemset lattice: general-to-specific vs specific-to-general

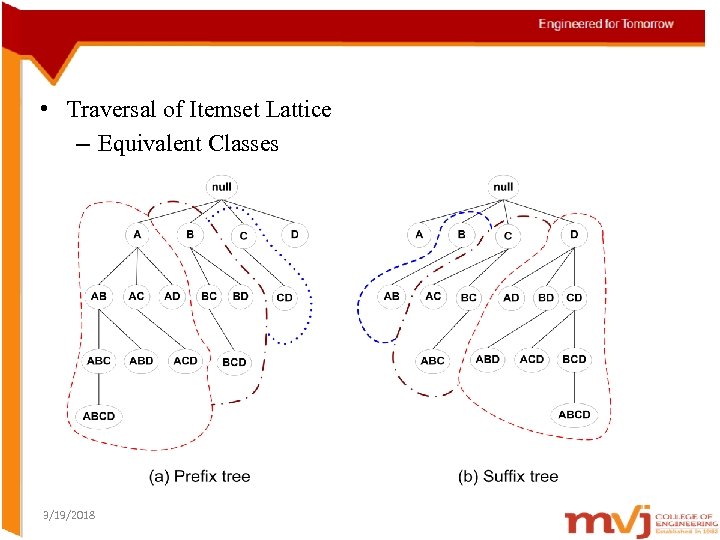

- Traversal of itemset lattice: equivalence classes

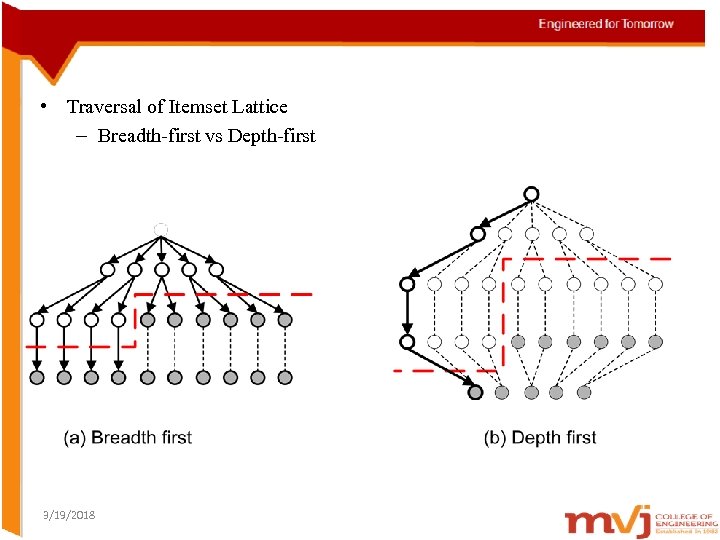

- Traversal of itemset lattice: breadth-first vs depth-first
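
The difference between the two traversal orders can be seen on the lattice over {A, B, C}. In this sketch the breadth-first order visits the lattice level by level, while the depth-first order extends one prefix as far as possible before backtracking; the itemsets visited under a common prefix correspond to prefix-based equivalence classes. Both functions are illustrative and ignore support pruning.

```python
from itertools import combinations

def bfs_order(items):
    """Level by level: all 1-itemsets, then all 2-itemsets, and so on."""
    items = sorted(items)
    return ["".join(c) for k in range(1, len(items) + 1)
            for c in combinations(items, k)]

def dfs_order(items):
    """Depth-first over the prefix tree of the lattice."""
    items = sorted(items)
    order = []

    def visit(prefix, start):
        for j in range(start, len(items)):
            node = prefix + items[j]
            order.append(node)
            visit(node, j + 1)      # explore every extension of this prefix

    visit("", 0)
    return order

print(bfs_order("ABC"))  # ['A', 'B', 'C', 'AB', 'AC', 'BC', 'ABC']
print(dfs_order("ABC"))  # ['A', 'AB', 'ABC', 'AC', 'B', 'BC', 'C']
```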

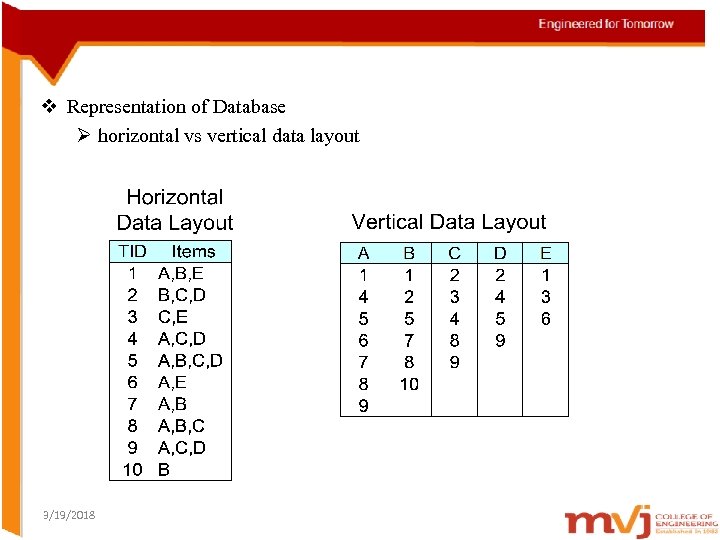

- Representation of database: horizontal vs vertical data layout
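
A sketch of converting a horizontal layout (TID → items) into a vertical layout (item → TID-list), using a hypothetical five-transaction database. In the vertical layout, the support count of an itemset is the size of the intersection of its items' TID-lists.

```python
from collections import defaultdict

# Hypothetical horizontal layout: TID -> set of items.
horizontal = {1: {"A", "B", "E"}, 2: {"B", "C", "D"}, 3: {"C", "E"},
              4: {"A", "C", "D"}, 5: {"A", "B", "C", "D"}}

def to_vertical(h):
    """Item -> set of TIDs containing that item."""
    v = defaultdict(set)
    for tid, items in h.items():
        for item in items:
            v[item].add(tid)
    return dict(v)

def sigma_vertical(itemset, v):
    """Support count = size of the intersection of the TID-lists."""
    return len(set.intersection(*(v[i] for i in itemset)))

vertical = to_vertical(horizontal)
print(sorted(vertical["A"]))                    # [1, 4, 5]
print(sigma_vertical({"A", "C", "D"}, vertical))  # 2
```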

FP Tree

FP-growth Algorithm
- Uses a compressed representation of the database called an FP-tree
- Once an FP-tree has been constructed, it uses a recursive divide-and-conquer approach to mine the frequent itemsets

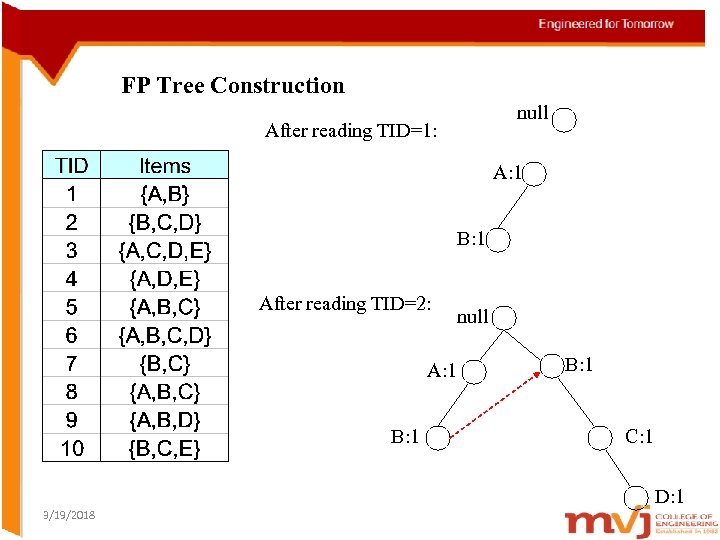

FP Tree Construction
- After reading TID=1: null → A:1 → B:1
- After reading TID=2: the root null has two branches, A:1 → B:1 and B:1 → C:1 → D:1
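
The two construction steps above can be reproduced with a small prefix tree. This is a sketch that assumes the items of each transaction are already sorted in the global frequency order (the first pass of FP-tree construction) and that TID 1 and TID 2 are {A, B} and {B, C, D}, as the node labels suggest. The `header` dictionary plays the role of the header table's node-link pointers; `FPNode`, `insert` and `show` are illustrative names.

```python
class FPNode:
    def __init__(self, item, parent):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}

def insert(root, transaction, header):
    """Insert one transaction whose items are already in frequency order."""
    node = root
    for item in transaction:
        child = node.children.get(item)
        if child is None:                       # start a new branch here
            child = FPNode(item, node)
            node.children[item] = child
            header.setdefault(item, []).append(child)  # node-link pointer
        child.count += 1                        # shared prefix: bump count
        node = child

def show(node, depth=0):
    for child in node.children.values():
        print("  " * depth + f"{child.item}:{child.count}")
        show(child, depth + 1)

root, header = FPNode(None, None), {}
for t in [["A", "B"], ["B", "C", "D"]]:        # TID=1, TID=2
    insert(root, t, header)
show(root)
```

Running it prints the two branches A:1 → B:1 and B:1 → C:1 → D:1, with B appearing in two nodes linked from the header table.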

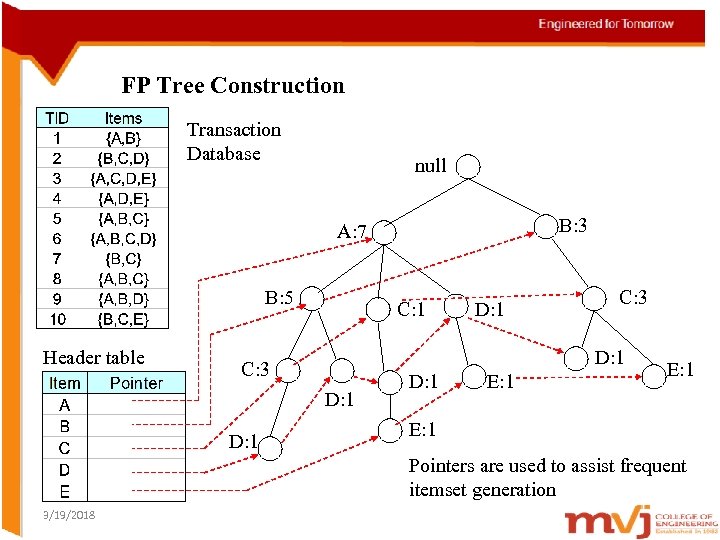

FP Tree Construction
Figure: transaction database, the resulting FP-tree, and its header table
- Pointers are used to assist frequent itemset generation

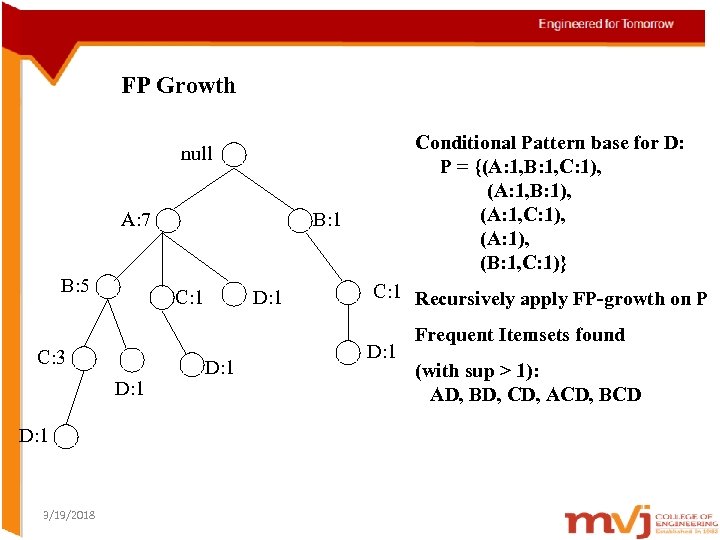

FP Growth
- Conditional pattern base for D: P = {(A:1, B:1, C:1), (A:1, B:1), (A:1, C:1), (A:1), (B:1, C:1)}
- Recursively apply FP-growth on P
- Frequent itemsets found (with sup > 1): AD, BD, CD, ABD, ACD, BCD
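
The result can be double-checked from the conditional pattern base alone. Instead of building the conditional FP-tree recursively, this simplified sketch enumerates the sub-patterns of each prefix path and adds up their counts, which is practical only for a pattern base this small. P is copied from the slide, and "sup > 1" is taken as a minimum count of 2.

```python
from collections import Counter
from itertools import combinations

# Conditional pattern base for D: (prefix path, count), from the slide.
P = [(("A", "B", "C"), 1), (("A", "B"), 1), (("A", "C"), 1),
     (("A",), 1), (("B", "C"), 1)]
MIN_COUNT = 2  # "sup > 1"

def frequent_with_suffix(pattern_base, suffix, min_count):
    """Count every sub-pattern of every prefix path, then append the suffix."""
    counts = Counter()
    for path, n in pattern_base:
        for k in range(1, len(path) + 1):
            for combo in combinations(path, k):
                counts[combo] += n
    return {"".join(c) + suffix: n for c, n in counts.items() if n >= min_count}

print(frequent_with_suffix(P, "D", MIN_COUNT))
# {'AD': 4, 'BD': 3, 'CD': 3, 'ABD': 2, 'ACD': 2, 'BCD': 2}
```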

Evaluation of Association Patterns

Subjective Interestingness
- Visualization
  - Lets domain experts interact with the data mining system by interpreting and verifying the discovered patterns
- Template-based approach
  - Instead of reporting all the extracted rules, only rules that satisfy a user-specified template are returned
- Subjective interestingness measures
  - Defined based on domain information, such as the profit margin of items. The measures can then be used to filter out patterns that are obvious and non-actionable.

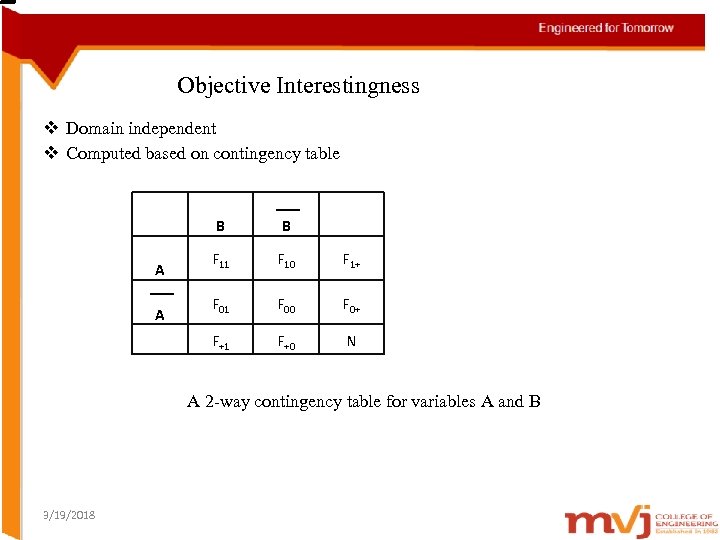

Objective Interestingness
- Domain independent
- Computed based on a contingency table

2-way contingency table for variables A and B (¬A, ¬B denote absence):

|      | B    | ¬B   |      |
|------|------|------|------|
| A    | f11  | f10  | f1+  |
| ¬A   | f01  | f00  | f0+  |
|      | f+1  | f+0  | N    |
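
A short sketch of how the cells and margins of the 2-way table are filled from records. Each record is assumed to be a pair of booleans (A present, B present); the records themselves are hypothetical.

```python
def contingency_2x2(records):
    """records: iterable of (a, b) booleans -> cell counts and margins."""
    f = {"f11": 0, "f10": 0, "f01": 0, "f00": 0}
    for a, b in records:
        f[f"f{int(a)}{int(b)}"] += 1
    f["f1+"] = f["f11"] + f["f10"]   # row total where A is present
    f["f0+"] = f["f01"] + f["f00"]
    f["f+1"] = f["f11"] + f["f01"]   # column total where B is present
    f["f+0"] = f["f10"] + f["f00"]
    f["N"] = f["f1+"] + f["f0+"]
    return f

# Hypothetical records (illustrative only).
records = [(True, True), (True, False), (False, True), (True, True), (False, False)]
print(contingency_2x2(records))
```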

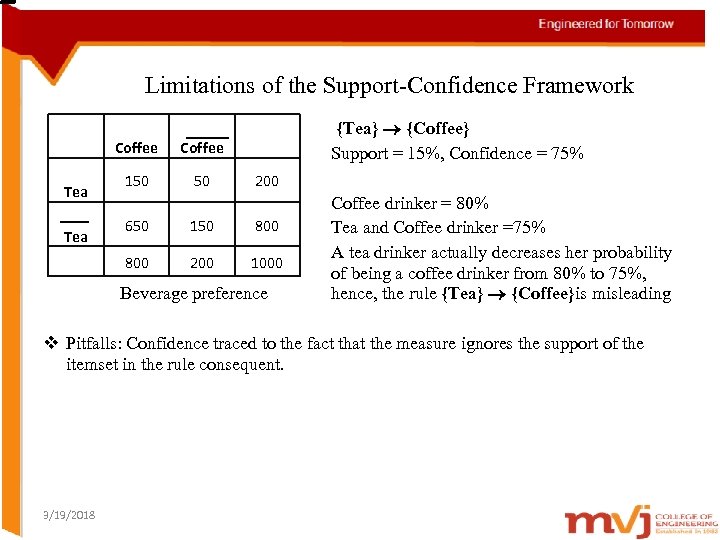

Limitations of the Support-Confidence Framework

Beverage preference:

|       | Coffee | ¬Coffee |      |
|-------|--------|---------|------|
| Tea   | 150    | 50      | 200  |
| ¬Tea  | 650    | 150     | 800  |
|       | 800    | 200     | 1000 |

- Rule {Tea} → {Coffee}: support = 15%, confidence = 75%
- But P(Coffee) = 80%, while P(Coffee | Tea) = 75%
- Knowing that a person drinks tea actually decreases her probability of being a coffee drinker from 80% to 75%; hence, the rule {Tea} → {Coffee} is misleading
- Pitfall: confidence ignores the support of the itemset in the rule consequent
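
The percentages follow directly from the table cells; a short check with exact fractions:

```python
from fractions import Fraction

# Cells of the Tea/Coffee table: Tea&Coffee, Tea&¬Coffee, ¬Tea&Coffee, ¬Tea&¬Coffee.
f11, f10, f01, f00 = 150, 50, 650, 150
N = f11 + f10 + f01 + f00

support = Fraction(f11, N)             # s({Tea} -> {Coffee})
confidence = Fraction(f11, f11 + f10)  # P(Coffee | Tea)
p_coffee = Fraction(f11 + f01, N)      # P(Coffee)
print(float(support), float(confidence), float(p_coffee))  # 0.15 0.75 0.8
```

Since the confidence (0.75) is below the baseline P(Coffee) (0.8), a high-looking confidence alone does not indicate a positive association.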

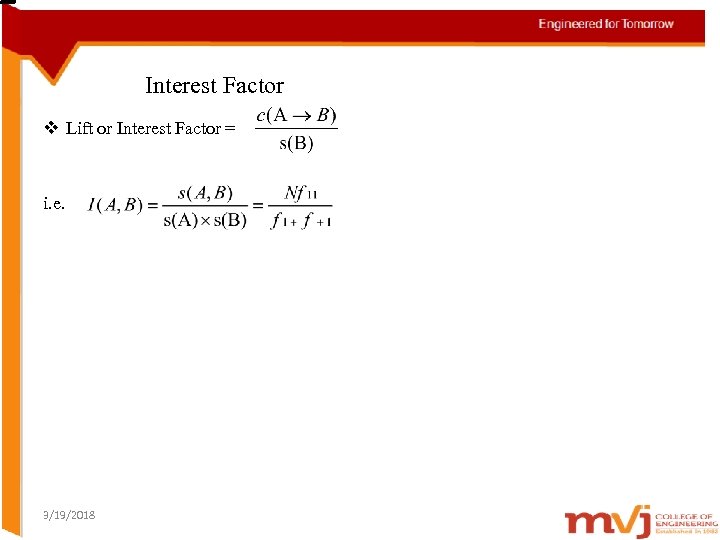

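Interest Factor
- Lift, or interest factor, compares the confidence of a rule with the support of its consequent:

$$\text{Lift} = \frac{c(A \to B)}{s(B)}, \quad \text{i.e.} \quad I(A, B) = \frac{s(A, B)}{s(A)\, s(B)} = \frac{N f_{11}}{f_{1+}\, f_{+1}}$$

- I(A, B) = 1 if A and B are independent, > 1 if they are positively correlated, and < 1 if they are negatively correlated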
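
The interest factor can be computed from the four cells of the 2-way contingency table. Applied to the Tea/Coffee table, it confirms the negative association that confidence alone hides (0.75 / 0.8 = 0.9375 < 1):

```python
def lift(f11, f10, f01, f00):
    """I(A, B) = N * f11 / (f1+ * f+1) = s(A, B) / (s(A) * s(B))."""
    n = f11 + f10 + f01 + f00
    return n * f11 / ((f11 + f10) * (f11 + f01))

print(lift(150, 50, 650, 150))  # 0.9375
```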

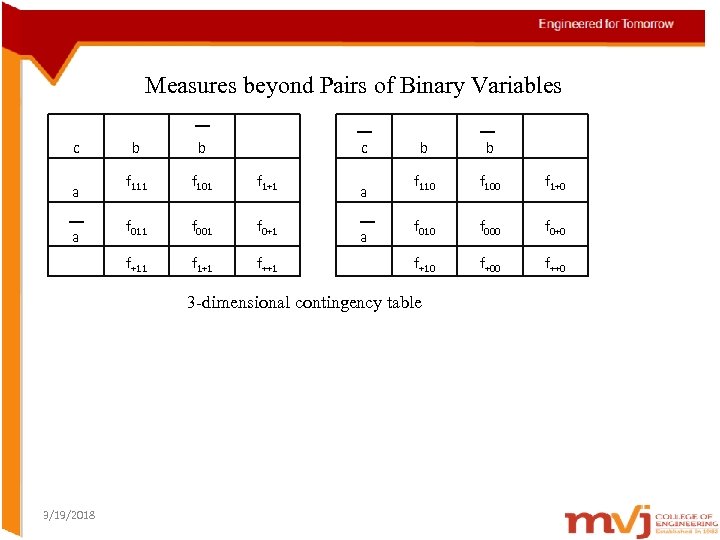

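Measures beyond Pairs of Binary Variables

3-dimensional contingency table for variables a, b and c:

c present:

|      | b    | ¬b   |      |
|------|------|------|------|
| a    | f111 | f101 | f1+1 |
| ¬a   | f011 | f001 | f0+1 |
|      | f+11 | f+01 | f++1 |

c absent (¬c):

|      | b    | ¬b   |      |
|------|------|------|------|
| a    | f110 | f100 | f1+0 |
| ¬a   | f010 | f000 | f0+0 |
|      | f+10 | f+00 | f++0 |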

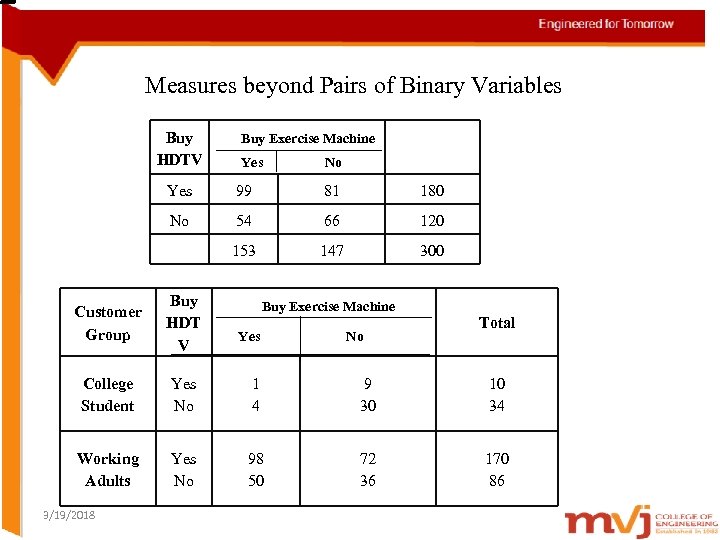

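Measures beyond Pairs of Binary Variables

All customers:

| Buy HDTV | Buy Exercise Machine: Yes | Buy Exercise Machine: No | Total |
|----------|---------------------------|--------------------------|-------|
| Yes      | 99                        | 81                       | 180   |
| No       | 54                        | 66                       | 120   |
| Total    | 153                       | 147                      | 300   |

Stratified by customer group:

| Customer Group   | Buy HDTV | Buy Exercise Machine: Yes | Buy Exercise Machine: No | Total |
|------------------|----------|---------------------------|--------------------------|-------|
| College Students | Yes      | 1                         | 9                        | 10    |
|                  | No       | 4                         | 30                       | 34    |
| Working Adults   | Yes      | 98                        | 72                       | 170   |
|                  | No       | 50                        | 36                       | 86    |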

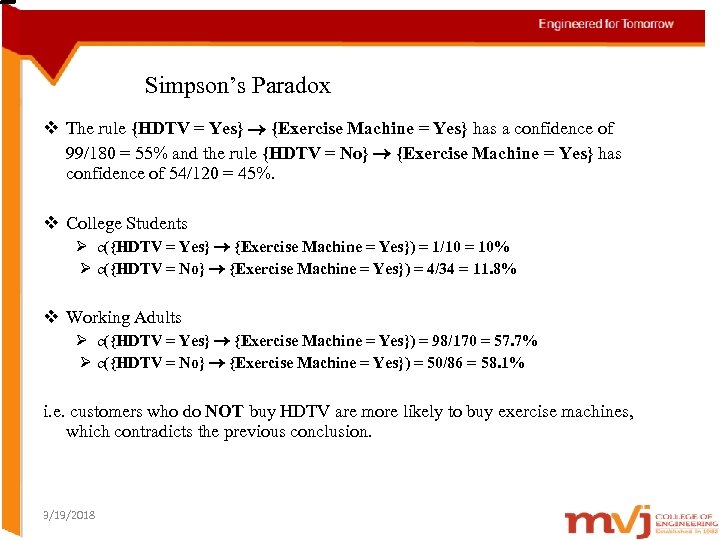

Simpson’s Paradox
- The rule {HDTV = Yes} → {Exercise Machine = Yes} has a confidence of 99/180 = 55%, and the rule {HDTV = No} → {Exercise Machine = Yes} has a confidence of 54/120 = 45%.
- College Students
  - c({HDTV = Yes} → {Exercise Machine = Yes}) = 1/10 = 10%
  - c({HDTV = No} → {Exercise Machine = Yes}) = 4/34 = 11.8%
- Working Adults
  - c({HDTV = Yes} → {Exercise Machine = Yes}) = 98/170 = 57.6%
  - c({HDTV = No} → {Exercise Machine = Yes}) = 50/86 = 58.1%
- i.e., within each group, customers who do NOT buy an HDTV are more likely to buy exercise machines, which contradicts the conclusion drawn from the combined data.
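
A short check of the paradox: the sketch computes the rule confidences within each customer group, then pools the counts. The counts are taken from the tables above; the dictionary layout and names are illustrative.

```python
# (buy exercise machine = Yes, total) per HDTV value, from the tables above.
strata = {
    "College Students": {"HDTV=Yes": (1, 10), "HDTV=No": (4, 34)},
    "Working Adults": {"HDTV=Yes": (98, 170), "HDTV=No": (50, 86)},
}

def confidence(yes, total):
    """c({HDTV = v} -> {Exercise Machine = Yes}) within one group."""
    return yes / total

combined = {}
for group, cells in strata.items():
    for hdtv, (yes, total) in cells.items():
        print(f"{group:16} {hdtv:8} c = {confidence(yes, total):.1%}")
        y, t = combined.get(hdtv, (0, 0))
        combined[hdtv] = (y + yes, t + total)   # pool the strata

for hdtv, (yes, total) in combined.items():
    print(f"{'Combined':16} {hdtv:8} c = {confidence(yes, total):.1%}")
```

Within each group the HDTV=No confidence is higher, yet after pooling the HDTV=Yes confidence (55%) exceeds the HDTV=No confidence (45%).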

Simpson’s Paradox
- Most customers who buy HDTVs are working adults.
- Working adults are also the largest group of customers who buy exercise machines.
- Because 85% of customers are working adults, the observed relationship between HDTV and exercise machine purchases turns out to be stronger in the combined data than it would have been if the data were stratified.
