- Number of slides: 17

Storage at the ATLAS Great Lakes Tier-2
Storage Administrator Talks
Shawn McKee / University of Michigan
OSG Storage Forum

AGLT2 Storage: Outline
- Overview of AGLT2
  - Description, hardware and configuration
- Storage Implementation
  - Original options: AFS, NFS, Lustre, dCache
  - Chose dCache (broad support in USATLAS/OSG)
- Issues
  - Monitoring needed
  - Manpower to maintain (including creating custom cron scripts)
  - Reliability and performance
- Future?
  - NFSv4(.x), Lustre (1.8.1+), Hadoop

The ATLAS Great Lakes Tier-2
- Within the US there are five Tier-2 computing centers, most split over two physical locations. They support “production” and user analysis tasks.
- The ATLAS Great Lakes Tier-2 (AGLT2) is hosted at the University of Michigan and Michigan State University.
- AGLT2 design goals:
  - Incorporate 10GE networks for high-performance data transfers
  - Utilize custom 2.6 kernels (UltraLight) and SL[C]4 OS (soon to SL[C]5)
  - Deploy inexpensive high-capacity storage systems with large partitions using the XFS filesystem (still must address SRM)
  - Take advantage of MiLR to the extent possible

AGLT2 Storage Node Information
- AGLT2 has 13 dCache storage nodes distributed between MSU and UM, with either 40 or 52 useable TB each.
- Total dCache production storage is 500 TB.
- We use space-tokens to manage space allocations for ATLAS and currently have 7 space-token areas.
- We use XFS for the filesystem on our 50 pools. Each pool varies from 10 to 20 TB in size.
- dCache version is 1.9.2.5 and we are running Chimera.
- We also use AFS (1.4.10) and NFSv3 for storage.

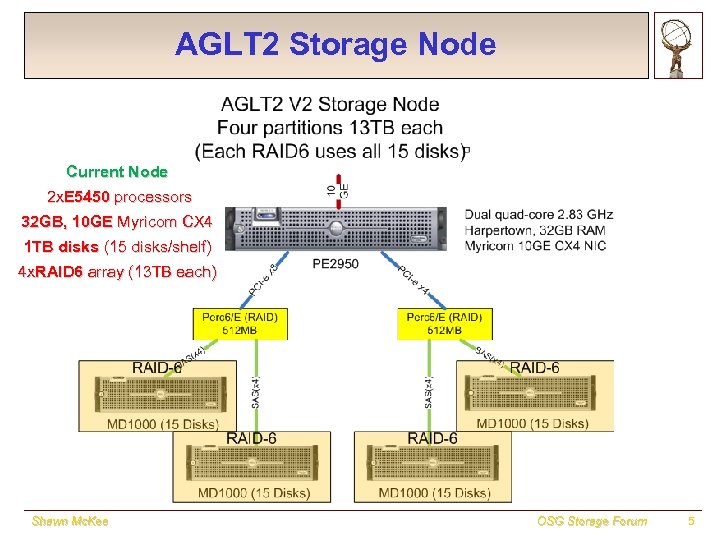

AGLT2 Storage Node
UMFS05, current node:
- 2 x E5450 processors
- 32 GB, 10GE Myricom CX4
- 1 TB disks (15 disks/shelf)
- 4 x RAID6 array (13 TB each)
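
The 13 TB per-array figure is consistent with RAID6 arithmetic on a 15-disk shelf of 1 TB disks, since RAID6 reserves two disks' worth of capacity for parity. The following is a minimal sketch, assuming one RAID6 array per shelf (the slide does not state this) and ignoring filesystem overhead; the function name is illustrative only.

```python
def raid6_usable_tb(disks: int, disk_tb: float) -> float:
    """Usable capacity of a RAID6 array: two disks' worth of space holds parity."""
    if disks < 4:
        raise ValueError("RAID6 needs at least 4 disks")
    return (disks - 2) * disk_tb

# Assumption: each 15-disk shelf of 1 TB disks forms one RAID6 array.
per_array = raid6_usable_tb(disks=15, disk_tb=1.0)
per_node = 4 * per_array  # four arrays per storage node, as on the slide
print(per_array, per_node)  # 13.0 52.0
```

Under these assumptions the result matches the 52 useable TB quoted for the larger storage nodes.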

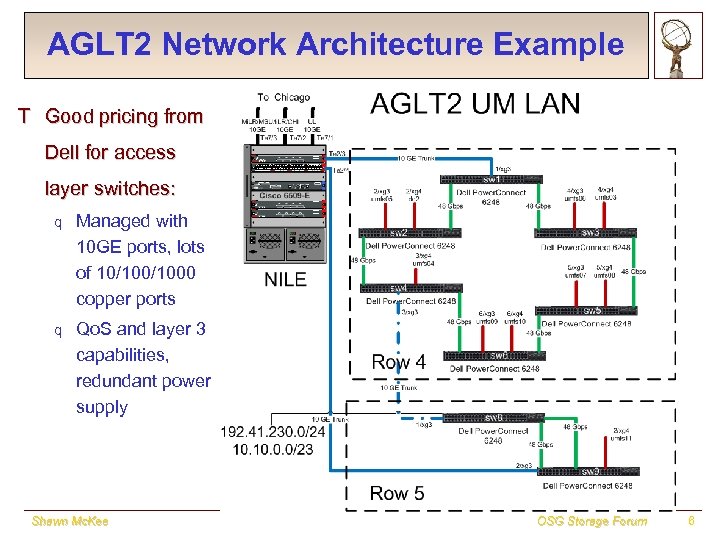

AGLT2 Network Architecture Example
- Good pricing from Dell for access layer switches:
  - Managed, with 10GE ports and lots of 10/1000 copper ports
  - QoS and layer 3 capabilities, redundant power supply

dCache at AGLT2
- We chose dCache because BNL and OSG both supported it.
- We are really using a system outside of its original design purpose
  - We wanted a way to tie multiple storage locations into a single user-accessible namespace providing unified storage.
  - dCache was intended as a front end for an HSM system
- Lots of manpower and attention have been required
  - Significant effort required to “watch for” and debug problems early
  - For a while we felt like the little Dutch boy… every time we fixed a problem, another popped up!
  - 1.9.2.5/Chimera has improved the situation

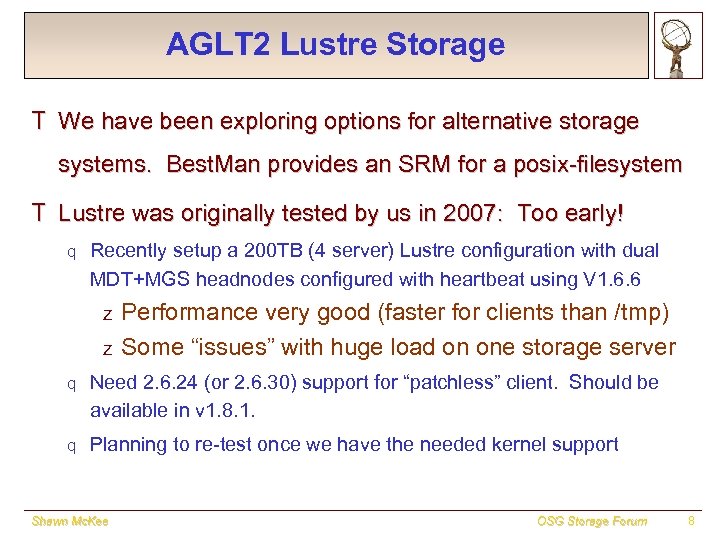

AGLT2 Lustre Storage
- We have been exploring options for alternative storage systems. BestMan provides an SRM for a POSIX filesystem.
- Lustre was originally tested by us in 2007: too early!
  - Recently set up a 200 TB (4 server) Lustre configuration with dual MDT+MGS headnodes configured with heartbeat, using v1.6.6
    - Performance very good (faster for clients than /tmp)
    - Some “issues” with huge load on one storage server
  - Need 2.6.24 (or 2.6.30) support for “patchless” client. Should be available in v1.8.1.
  - Planning to re-test once we have the needed kernel support

Monitoring Storage
- Monitoring our Tier-2 is a critical part of our work, and storage monitoring is one of the most important aspects.
- We use a number of tools to track status:
  - Cacti
  - Ganglia
  - Custom web pages
  - Brian’s dCache billing web interface
  - Email alerting (low space, failed auto-tests, specific errors noted)
- See http://head02.aglt2.org/cgi-bin/dashboard or https://hep.pa.msu.edu/twiki/bin/view/AGLT2/Monitors
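
As an illustration of the low-space alerting mentioned above, here is a minimal sketch of a check that a cron job could run. It is not the AGLT2 implementation: the function name, the 5% threshold and the mount point are all assumptions, and the actual mail delivery is left out.

```python
import shutil

def low_space_report(mount_points, min_free_fraction=0.05):
    """Return warning lines for filesystems whose free fraction is below the threshold."""
    warnings = []
    for path in mount_points:
        usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
        if usage.total == 0:
            continue  # skip pseudo-filesystems that report no capacity
        free_fraction = usage.free / usage.total
        if free_fraction < min_free_fraction:
            warnings.append(f"{path}: only {free_fraction:.1%} free")
    return warnings

# Hypothetical usage: a cron job could mail this report whenever it is non-empty.
for line in low_space_report(["/"], min_free_fraction=0.05):
    print(line)
```

Returning the warnings as a list, rather than sending mail directly, keeps the check easy to test and lets the same report feed a web page or an alert.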

Scripts to Manage Storage
- As we have found problems, we have sometimes needed to automate the solution
  - Rephot: automated replica creation, developed by Wenjing Wu, that scans for “hot” files and automatically adds/removes replicas
  - Pool-balancer: rebalances pools within a group
- Numerous scripts (cron) for maintenance
  - Directory ownership
  - Consistency checking, including Adler32 checksum verification
  - Repairing inconsistencies
  - Tracking usage (real space and space-token)
  - Auto-loading PNFSID into Chimera DB for faster access
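
The Adler32 verification step can be sketched with Python's standard `zlib` module. This is only an illustration of the technique, not the AGLT2 cron script: the function names are hypothetical, and where the expected checksum comes from (for example a catalogue entry) is an assumption.

```python
import zlib

def adler32_of_file(path, chunk_size=1024 * 1024):
    """Compute the Adler-32 checksum of a file as 8 hex digits, reading in chunks."""
    checksum = 1  # Adler-32 starting value
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            checksum = zlib.adler32(chunk, checksum)
    return f"{checksum & 0xFFFFFFFF:08x}"

def verify(path, expected_hex):
    """True when the file on disk matches the expected (catalogue) checksum."""
    return adler32_of_file(path) == expected_hex.lower().zfill(8)
```

Reading in chunks keeps memory use flat for multi-gigabyte files; a consistency checker would call `verify` for each file on a pool and flag any mismatch for repair.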

AGLT2 Storage Issues
- Information is scattered across server nodes and log files
- Java-based components have verbose output, which makes it hard to find the real problem
- With dCache, the large number of components creates a large phase space for problems
- Errors are not always indicative of the real problem…
- Is syslog-ng a part of the solution?
- Can error messages be significantly improved?

Benchmarking and Optimization
- We have spent some time trying to optimize our storage performance.
- See https://hep.pa.msu.edu/twiki/bin/view/AGLT2/IOTestOnRaidSystems
- Explored system/kernel/network and I/O tunings for our hardware. Have achieved good performance for single read/write (>700 MB/sec) per partition. Multiple readers/writers at a few hundred MB/sec.

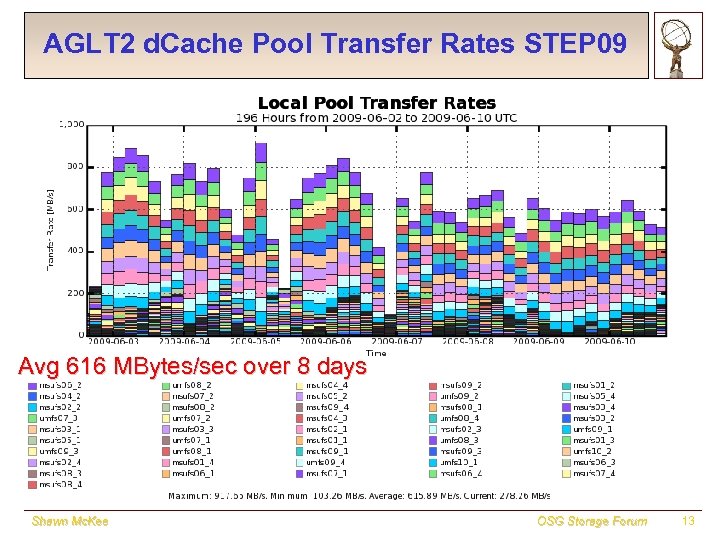

AGLT2 dCache Pool Transfer Rates
- STEP09: average 616 MBytes/sec over 8 days
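
To put the STEP09 average in perspective, the total volume moved can be estimated from the rate and duration. This is a back-of-the-envelope sketch, assuming the 616 MBytes/sec average holds over the full 8 days and using decimal units (1 TB = 10^6 MB).

```python
avg_rate_mb_per_s = 616           # average rate from the slide, MBytes/sec
days = 8
seconds = days * 24 * 60 * 60     # 691200 seconds in 8 days
total_mb = avg_rate_mb_per_s * seconds
total_tb = total_mb / 1_000_000   # decimal units: 1 TB = 10^6 MB
print(f"{total_tb:.0f} TB")       # 426 TB
```

Under these assumptions, roughly 426 TB passed through the pools during the exercise, which is close to the 500 TB of total dCache production storage.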

Future AGLT2 Storage
- We are interested in exploring BestMan+X, where ‘X’ is
  - NFSv4[.x]
  - Lustre 1.8.x
  - Hadoop
- We are interested in the following characteristics for each option:
  - Expertise required to install/configure
  - Manpower required to maintain
  - Robustness over time
  - Performance (single and multiple readers/writers)
- Our plan is to test these during the rest of 2009

Questions?

Backup Slides

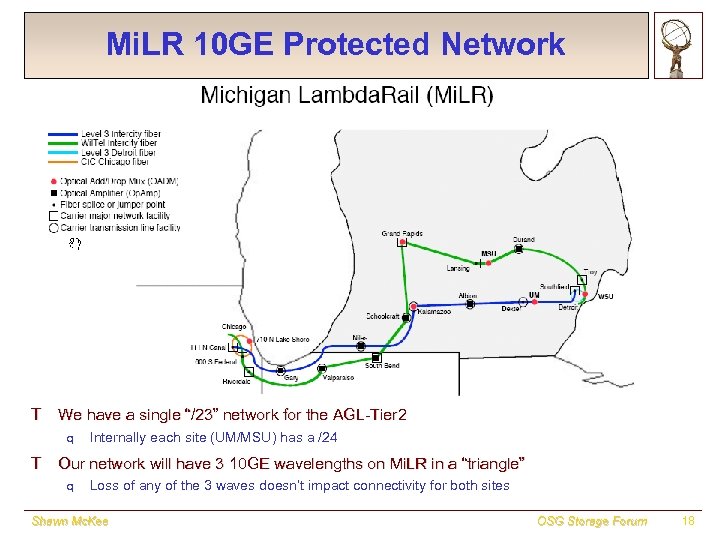

MiLR 10GE Protected Network
- We have a single “/23” network for the AGL-Tier2
  - Internally each site (UM/MSU) has a /24
- Our network will have three 10GE wavelengths on MiLR in a “triangle”
  - Loss of any one of the 3 waves doesn’t impact connectivity for either site
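
The addressing scheme, one /23 split into a /24 per site, can be illustrated with Python's standard `ipaddress` module. The prefix below is a hypothetical private block, since the slides do not give the real AGLT2 addresses, and which half belongs to which site is likewise an assumption.

```python
import ipaddress

# Hypothetical address block; the slides do not state the real AGLT2 prefix.
tier2_block = ipaddress.ip_network("10.10.0.0/23")

# A /23 splits into exactly two /24 networks, one per site.
site_a, site_b = tier2_block.subnets(new_prefix=24)
print(site_a, site_b)             # 10.10.0.0/24 10.10.1.0/24
print(tier2_block.num_addresses)  # 512
```

Both /24 networks remain inside the single /23, so the Tier-2 can be announced externally as one network while each site manages its own half.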
