1017e15e065fc491386419a7d95e863e.ppt

- Number of slides: 55

Steps Towards Understanding Common Sense

Kenneth D. Forbus, Northwestern University

Our Working Hypotheses (Forbus & Gentner, 1997)

- Common sense = a combination of analogical reasoning from experience and first-principles reasoning
  - Within-domain analogies provide robustness and rapid predictions
  - First-principles reasoning emerges slowly, as generalizations from examples
- Qualitative representations are central
  - They provide the appropriate level of understanding for communication, action, and generalization

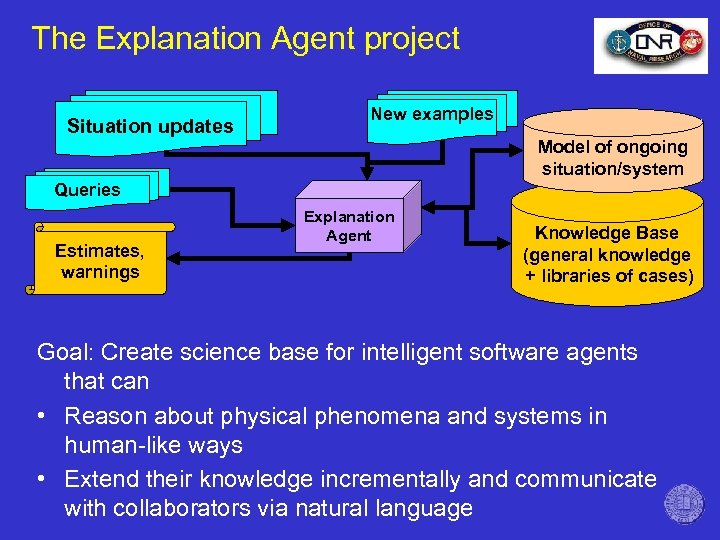

The Explanation Agent project

Diagram: the Explanation Agent receives situation updates, new examples, and queries; it maintains a model of the ongoing situation/system, uses a knowledge base (general knowledge + libraries of cases), and returns estimates and warnings.

Goal: create a science base for intelligent software agents that can
- reason about physical phenomena and systems in human-like ways
- extend their knowledge incrementally and communicate with collaborators via natural language

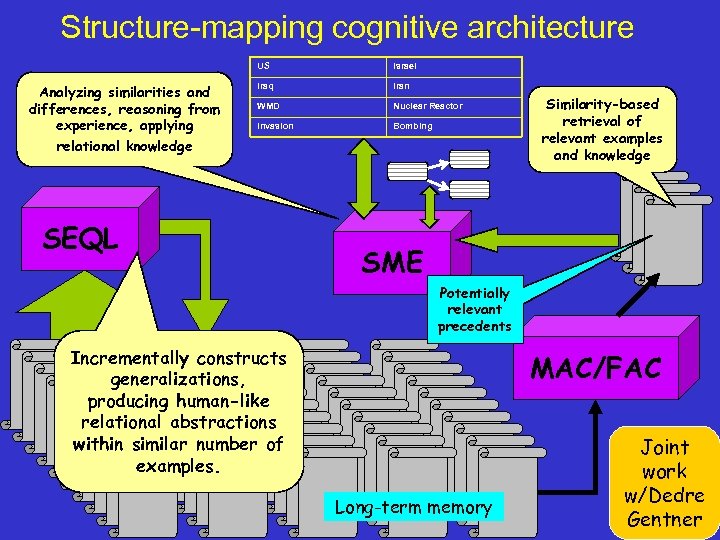

Structure-mapping cognitive architecture (joint work with Dedre Gentner)

- SME: analyzing similarities and differences, reasoning from experience, applying relational knowledge
- MAC/FAC: similarity-based retrieval of relevant examples and knowledge, returning potentially relevant precedents from long-term memory
- SEQL: incrementally constructs generalizations, producing human-like relational abstractions within a similar number of examples

Diagram example: descriptions involving the US, Israel, Iraq, Iran, WMD, a nuclear reactor, invasion, and bombing.

Overview

- Back of the envelope reasoning
  - How do we capture the feeling for quantity people have?
  - Ph.D. work of Praveen Paritosh, in progress
- Rerepresentation
  - How do we achieve human-like flexibility in analogical matching?
  - Ph.D. work of Jin Yan, in progress
- Qualitative representations as part of natural language semantics
  - How do we communicate about the physical world?
  - Ph.D. work of Sven Kuehne, just finished
- Next steps

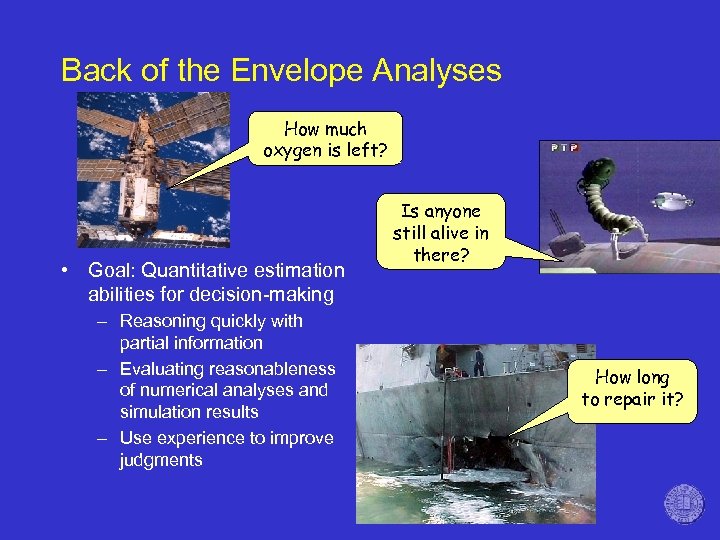

Back of the Envelope Analyses

- Goal: quantitative estimation abilities for decision-making
  - Reasoning quickly with partial information
  - Evaluating the reasonableness of numerical analyses and simulation results
  - Using experience to improve judgments
- Example questions: How much oxygen is left? Is anyone still alive in there? How long will it take to repair it?

Some everyday examples

- How much money is spent on newspapers in the USA per year?
- How many K-8 elementary school teachers are there in the USA?
- How much time would be saved per year nationwide by increasing the speed limit from 55 to 65 mph?
- What is the annual cost of healthcare in the USA?
- How much tea (by weight) is there in China?
- Last summer, the US Army bought Microsoft Windows/Office/Server software for 500,000 computers. The deal included the software and six years of support. How much did the Army pay for this?

How much money is spent on newspapers in the USA per year?

1. Total money spent = money spent per buyer × number of buyers
2. Annual expense per buyer = units bought per year × cost per unit
3. Annual expense per buyer = 365 × $0.75 = $273.75, rounded to about $250
4. Number of buyers = 300 million people in the US × ¼ = 75 million
5. Total money spent = 75 million × $250 = $18.75 billion, i.e., roughly $20 billion

For comparison: $26 billion (source: Statistical Abstracts)
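The chain of estimates is plain arithmetic, so it can be replayed in a few lines. This is a minimal Python sketch; the function names are illustrative, and the inputs are the slide's own assumptions (one $0.75 paper per buyer per day, a quarter of 300 million people buying). It also shows what the rounding in step 3 costs.

```python
# Back-of-the-envelope estimate of annual US newspaper spending,
# following the five-step decomposition on the slide.

def annual_expense_per_buyer(units_per_year: float, cost_per_unit: float) -> float:
    """Step 2: units bought per year * cost per unit."""
    return units_per_year * cost_per_unit


def number_of_buyers(population: float, fraction_buying: float) -> float:
    """Step 4: population * fraction of people who buy a paper."""
    return population * fraction_buying


def total_spent(expense_per_buyer: float, buyers: float) -> float:
    """Step 1: money spent per buyer * number of buyers."""
    return expense_per_buyer * buyers


exact_per_buyer = annual_expense_per_buyer(365, 0.75)  # one $0.75 paper per day
buyers = number_of_buyers(300e6, 0.25)                 # a quarter of 300 million people

slide_estimate = total_spent(250.0, buyers)            # per-buyer cost rounded to $250
unrounded_estimate = total_spent(exact_per_buyer, buyers)

print(f"per buyer: ${exact_per_buyer:.2f}, buyers: {buyers / 1e6:.0f} million")
print(f"estimate (rounded per-buyer cost): ${slide_estimate / 1e9:.2f} billion")
print(f"estimate (unrounded): ${unrounded_estimate / 1e9:.2f} billion")
```

Both results have the same order of magnitude as the reported $26 billion, which is the kind of reasonableness check back-of-the-envelope reasoning is meant to support.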

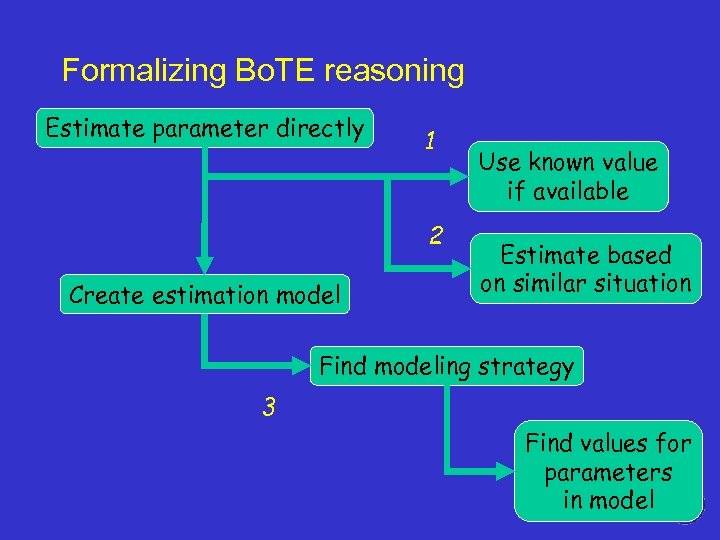

Formalizing BoTE reasoning

1. Estimate the parameter directly:
   - use a known value, if available, or
   - estimate it based on a similar situation.
2. Otherwise, create an estimation model: find a modeling strategy.
3. Find values for the parameters in the model.

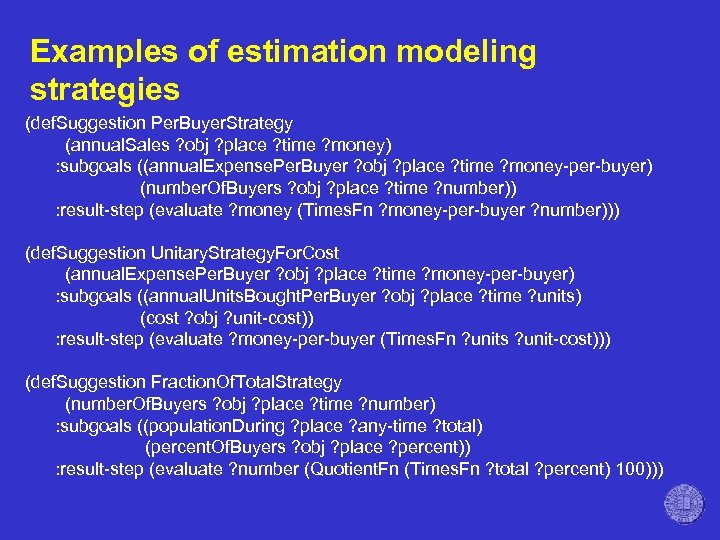

Examples of estimation modeling strategies

    (defSuggestion PerBuyerStrategy
      (annualSales ?obj ?place ?time ?money)
      :subgoals ((annualExpensePerBuyer ?obj ?place ?time ?money-per-buyer)
                 (numberOfBuyers ?obj ?place ?time ?number))
      :result-step (evaluate ?money (TimesFn ?money-per-buyer ?number)))

    (defSuggestion UnitaryStrategyForCost
      (annualExpensePerBuyer ?obj ?place ?time ?money-per-buyer)
      :subgoals ((annualUnitsBoughtPerBuyer ?obj ?place ?time ?units)
                 (cost ?obj ?unit-cost))
      :result-step (evaluate ?money-per-buyer (TimesFn ?units ?unit-cost)))

    (defSuggestion FractionOfTotalStrategy
      (numberOfBuyers ?obj ?place ?time ?number)
      :subgoals ((populationDuring ?place ?any-time ?total)
                 (percentOfBuyers ?obj ?place ?percent))
      :result-step (evaluate ?number (QuotientFn (TimesFn ?total ?percent) 100)))
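To make the control flow concrete, here is a Python sketch of how strategies like the three above might drive estimation. It is not the reasoner that interprets defSuggestion; `Strategy`, `estimate`, and the `known`/`similar` dictionaries are hypothetical stand-ins, with `similar` playing the role of estimating from a similar situation. The order of attempts (known value, similar situation, modeling strategy with recursively estimated parameters) follows the formalization slide.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Strategy:
    """Hypothetical Python analogue of a defSuggestion: estimate `goal` by
    estimating each subgoal, then combining the results."""
    name: str
    goal: str
    subgoals: List[str]
    combine: Callable[..., float]


STRATEGIES = [
    Strategy("PerBuyerStrategy", "annualSales",
             ["annualExpensePerBuyer", "numberOfBuyers"],
             lambda money_per_buyer, number: money_per_buyer * number),
    Strategy("UnitaryStrategyForCost", "annualExpensePerBuyer",
             ["annualUnitsBoughtPerBuyer", "cost"],
             lambda units, unit_cost: units * unit_cost),
    Strategy("FractionOfTotalStrategy", "numberOfBuyers",
             ["populationDuring", "percentOfBuyers"],
             lambda total, percent: total * percent / 100),
]


def estimate(goal: str, known: Dict[str, float],
             similar: Dict[str, float]) -> Optional[float]:
    """Use a known value; else a value borrowed from a similar situation;
    else try each modeling strategy for `goal`, estimating its parameters
    recursively. Returns None if no route succeeds."""
    if goal in known:
        return known[goal]
    if goal in similar:  # stand-in for analogical estimation
        return similar[goal]
    for strategy in STRATEGIES:
        if strategy.goal != goal:
            continue
        values = [estimate(sub, known, similar) for sub in strategy.subgoals]
        if all(v is not None for v in values):
            return strategy.combine(*values)
    return None


known = {"annualUnitsBoughtPerBuyer": 365, "cost": 0.75,
         "populationDuring": 300e6, "percentOfBuyers": 25}
print(estimate("annualSales", known, similar={}))
```

With no known or similar values, `estimate` returns None. The sketch assumes no strategy recurses back into its own goal; a real solver would need cycle checks.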

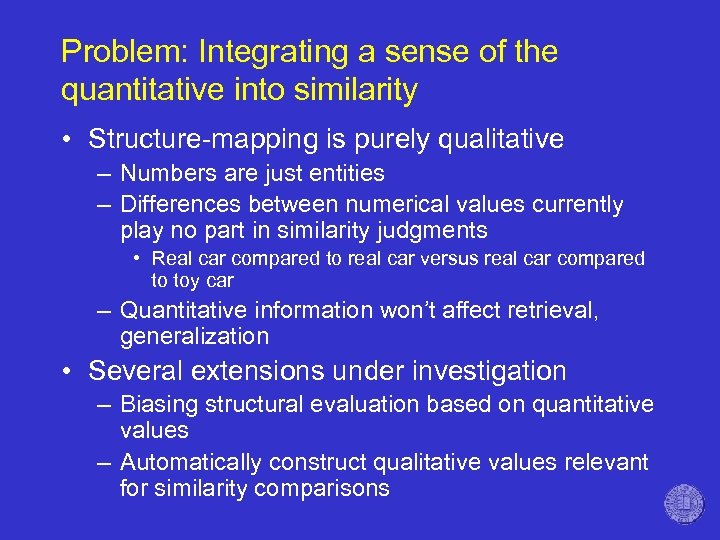

Problem: integrating a sense of the quantitative into similarity

- Structure-mapping is purely qualitative
  - Numbers are just entities
  - Differences between numerical values currently play no part in similarity judgments
    - e.g., a real car compared to a real car versus a real car compared to a toy car
  - Quantitative information won’t affect retrieval or generalization
- Several extensions are under investigation
  - Biasing structural evaluation based on quantitative values
  - Automatically constructing qualitative values relevant for similarity comparisons

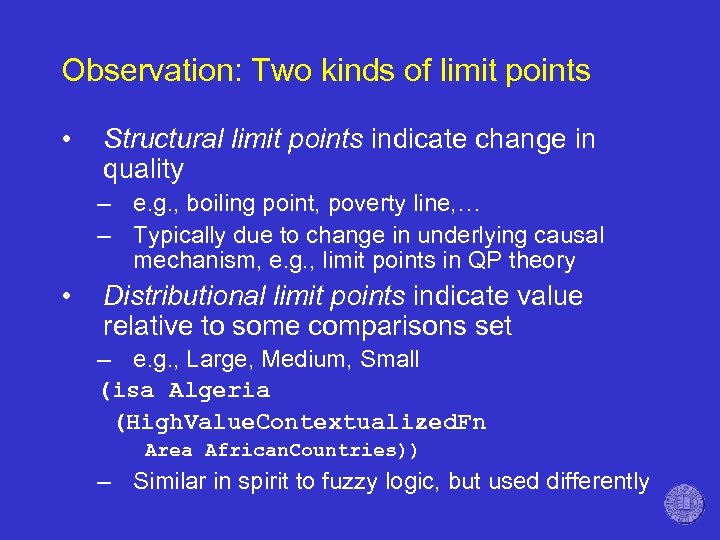

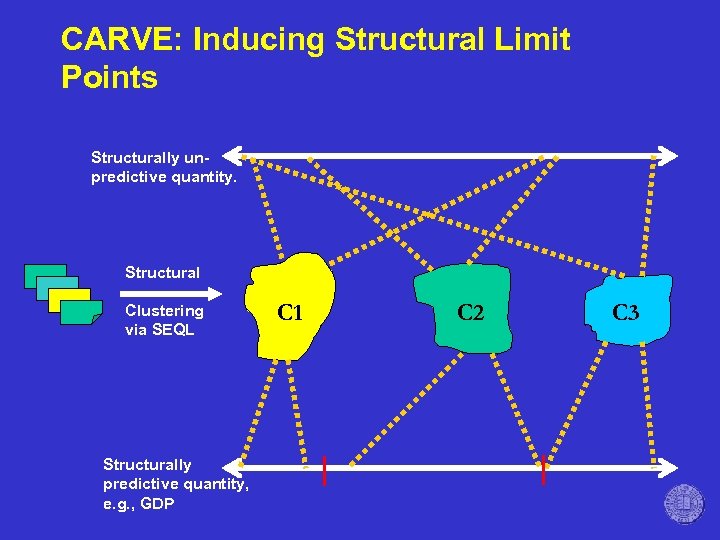

Observation: two kinds of limit points

- Structural limit points indicate a change in quality
  - e.g., boiling point, poverty line, …
  - Typically due to a change in the underlying causal mechanism, e.g., limit points in QP theory
- Distributional limit points indicate a value relative to some comparison set
  - e.g., Large, Medium, Small: (isa Algeria (HighValueContextualizedFn Area AfricanCountries))
  - Similar in spirit to fuzzy logic, but used differently
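Distributional limit points can be computed from the comparison set alone. A minimal sketch, assuming tertiles as the partition (the slide does not say how the partition is chosen): the two cut points split the set into Low, Medium, and High. Only HighValueContextualizedFn appears on the slide; the Low/Medium function names and the toy data are hypothetical.

```python
from bisect import bisect_right
from statistics import quantiles
from typing import Dict, Tuple

LABELS = ("Low", "Medium", "High")


def distributional_label(value: float, comparison_set: Dict[str, float]) -> str:
    """Place `value` in the lower, middle, or upper third of the comparison
    set, using the set's tertiles as distributional limit points."""
    limit_points = quantiles(comparison_set.values(), n=3)  # two cut points
    return LABELS[bisect_right(limit_points, value)]


def contextualized_fact(entity: str, quantity: str, set_name: str,
                        comparison_set: Dict[str, float]) -> Tuple:
    """Build an assertion shaped like
    (isa Algeria (HighValueContextualizedFn Area AfricanCountries))."""
    label = distributional_label(comparison_set[entity], comparison_set)
    return ("isa", entity, (f"{label}ValueContextualizedFn", quantity, set_name))


# Toy values, not real data.
toy_set = {"A": 10, "B": 20, "C": 30, "D": 40, "E": 50, "F": 60}
print(contextualized_fact("F", "Area", "ToySet", toy_set))
```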

Paritosh’s CARVE model (in progress)

- Idea: use distributional information to provide simple qualitative representations for bootstrapping
  - Interleave qualitative representation and categorization (via SEQL) to induce more sophisticated qualitative representations

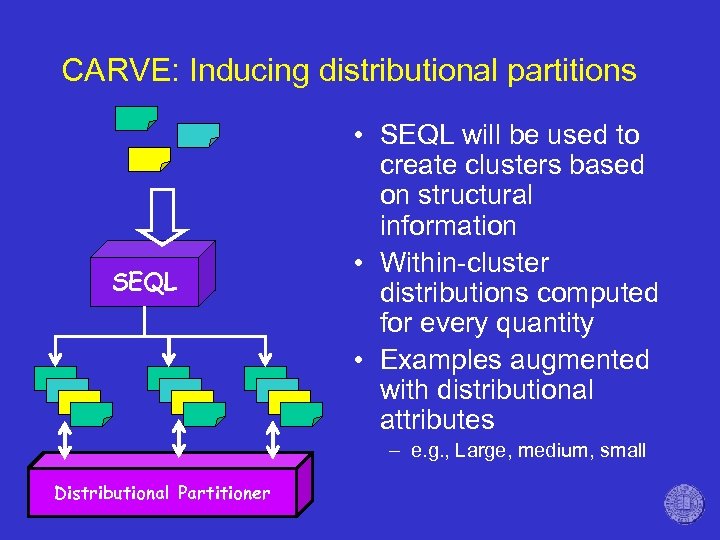

CARVE: inducing distributional partitions

- SEQL will be used to create clusters based on structural information
- Within-cluster distributions are computed for every quantity (by a distributional partitioner)
- Examples are augmented with distributional attributes
  - e.g., Large, Medium, Small

CARVE: inducing structural limit points

Diagram: structural clustering via SEQL produces clusters C1, C2, C3; one panel shows a structurally unpredictive quantity, another a structurally predictive quantity (e.g., GDP).
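One way to make "structurally predictive" operational, offered only as an assumption about the idea rather than CARVE's actual test: a quantity is predictive if, once the structural clusters are ordered by their median value, their value ranges do not overlap; the gaps between adjacent clusters then give candidate structural limit points. The cluster names follow the diagram; the numbers are toy data.

```python
from statistics import median
from typing import Dict, List, Optional


def structural_limit_points(values_by_cluster: Dict[str, List[float]]) -> Optional[List[float]]:
    """Hypothetical test: order clusters by the median of the quantity; if
    adjacent clusters' value ranges never overlap, return the midpoints of
    the gaps as candidate limit points, else None (unpredictive quantity)."""
    ordered = sorted(values_by_cluster.values(), key=median)
    points = []
    for lower, upper in zip(ordered, ordered[1:]):
        if max(lower) >= min(upper):
            return None  # ranges overlap: no clean boundary between clusters
        points.append((max(lower) + min(upper)) / 2)
    return points


predictive = {"C1": [1.0, 2.0, 3.0], "C2": [10.0, 12.0], "C3": [40.0, 55.0]}
unpredictive = {"C1": [1.0, 30.0], "C2": [5.0, 20.0], "C3": [2.0, 50.0]}
print(structural_limit_points(predictive))
print(structural_limit_points(unpredictive))
```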

Rerepresentation

- Rerepresentation reconstrues parts of compared situations in order to improve a match.
- Rerepresentation is important in analogical reasoning and learning.
  - Kotovsky & Gentner (1996) found that children are better able to make cross-dimensional analogies when they have been induced to rerepresent the two situations so that they notice the common magnitude increase.
  - Gentner et al. (1997) argue that rerepresentation played a crucial role in Kepler’s working through his analogy between the vis motrix and light.

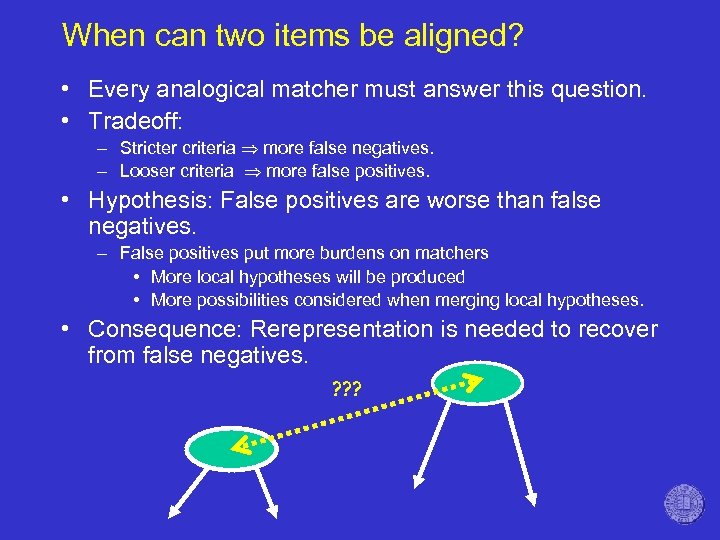

When can two items be aligned?

- Every analogical matcher must answer this question.
- Tradeoff:
  - Stricter criteria produce more false negatives.
  - Looser criteria produce more false positives.
- Hypothesis: false positives are worse than false negatives.
  - False positives put more burden on matchers:
    - more local hypotheses are produced;
    - more possibilities are considered when merging local hypotheses.
- Consequence: rerepresentation is needed to recover from false negatives.

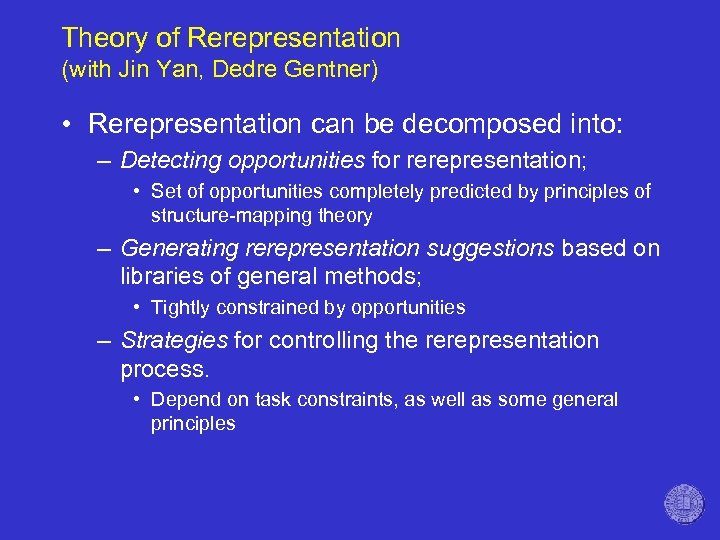

Theory of Rerepresentation (with Jin Yan, Dedre Gentner)

Rerepresentation can be decomposed into:
- Detecting opportunities for rerepresentation
  - The set of opportunities is completely predicted by the principles of structure-mapping theory
- Generating rerepresentation suggestions based on libraries of general methods
  - Tightly constrained by the opportunities
- Strategies for controlling the rerepresentation process
  - Depend on task constraints, as well as some general principles

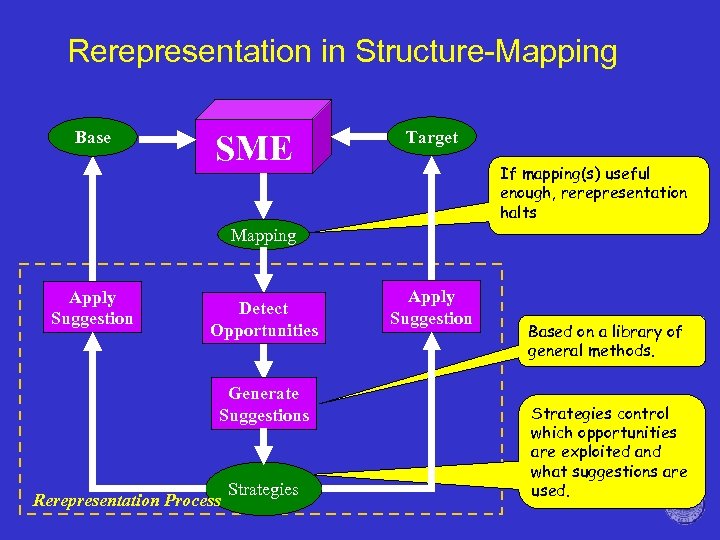

Rerepresentation in structure-mapping

Diagram: SME matches the base and target to produce mappings. If the mapping(s) are useful enough, rerepresentation halts; otherwise the rerepresentation process detects opportunities, generates suggestions, and applies a suggestion to the base and/or target before matching again.
- Suggestions are based on a library of general methods.
- Strategies control which opportunities are exploited and which suggestions are used.

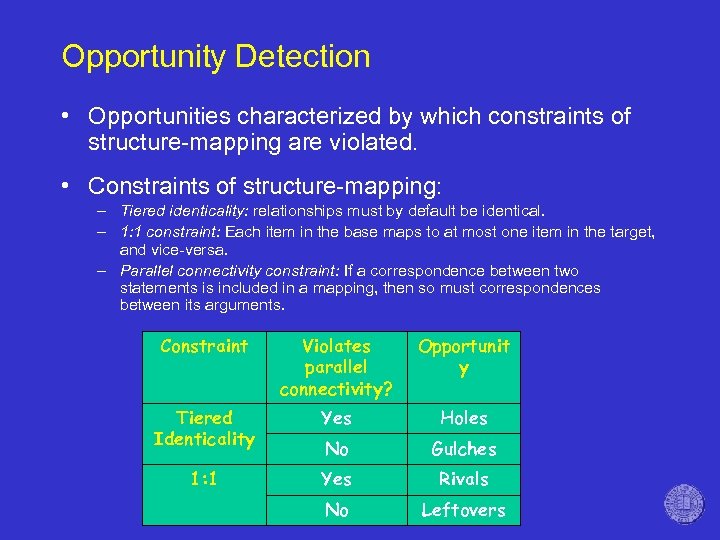

Opportunity detection

- Opportunities are characterized by which constraints of structure-mapping are violated.
- Constraints of structure-mapping:
  - Tiered identicality: relations must by default be identical.
  - 1:1 constraint: each item in the base maps to at most one item in the target, and vice versa.
  - Parallel connectivity constraint: if a correspondence between two statements is included in a mapping, then so must be the correspondences between their arguments.

| Constraint violated | Violates parallel connectivity? | Opportunity |
|---|---|---|
| Tiered identicality | Yes | Holes |
| Tiered identicality | No | Gulches |
| 1:1 | Yes | Rivals |
| 1:1 | No | Leftovers |
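The opportunity classification on this slide is a complete two-by-two table, so it can be encoded directly as a lookup. A small Python sketch; the enum and function names are illustrative.

```python
from enum import Enum


class Constraint(Enum):
    TIERED_IDENTICALITY = "tiered identicality"
    ONE_TO_ONE = "1:1"


# (violated constraint, also violates parallel connectivity?) -> opportunity
OPPORTUNITIES = {
    (Constraint.TIERED_IDENTICALITY, True): "hole",
    (Constraint.TIERED_IDENTICALITY, False): "gulch",
    (Constraint.ONE_TO_ONE, True): "rival",
    (Constraint.ONE_TO_ONE, False): "leftover",
}


def classify_opportunity(violated: Constraint, breaks_parallel_connectivity: bool) -> str:
    """Name the rerepresentation opportunity for a detected constraint violation."""
    return OPPORTUNITIES[(violated, breaks_parallel_connectivity)]


print(classify_opportunity(Constraint.ONE_TO_ONE, True))
```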

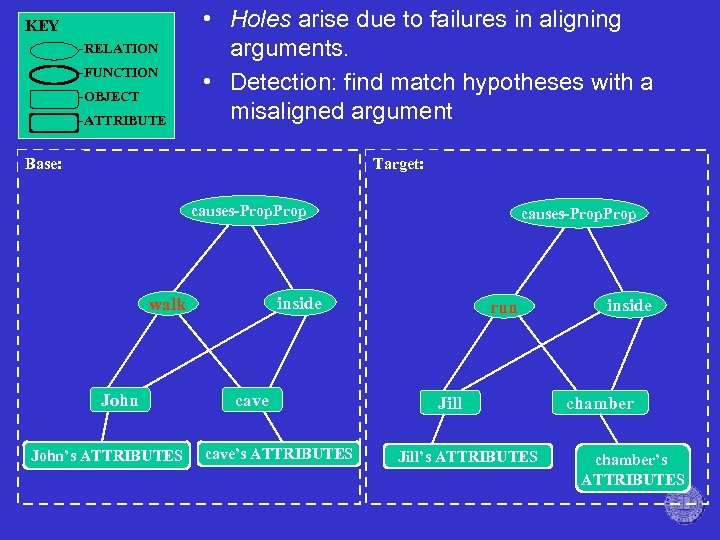

Holes

- Holes arise from failures in aligning arguments.
- Detection: find match hypotheses with a misaligned argument.

Diagram (key: relation, function, object, attribute): the base has causes-Prop relating inside and walk, over John and the cave (plus their attributes); the target has causes-Prop relating inside and run, over Jill and the chamber (plus their attributes).
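Hole detection can be sketched over nested tuples. This is a hypothetical, much-simplified version of what a matcher would do: it walks two statements whose predicates are identical and reports argument pairs whose predicates differ, which is where tiered identicality blocks the alignment. The tuple encoding and the argument order of causes-Prop are assumptions.

```python
from typing import List, Tuple, Union

Expr = Union[str, Tuple]  # an entity name, or (predicate, arg1, arg2, ...)


def find_holes(base: Expr, target: Expr) -> List[Tuple[Expr, Expr]]:
    """Return argument pairs that block an otherwise identical match: the
    parents share a predicate, but a pair of statement arguments does not."""
    holes: List[Tuple[Expr, Expr]] = []
    if not (isinstance(base, tuple) and isinstance(target, tuple)):
        return holes
    if base[0] != target[0] or len(base) != len(target):
        return holes
    for b_arg, t_arg in zip(base[1:], target[1:]):
        if isinstance(b_arg, tuple) and isinstance(t_arg, tuple):
            if b_arg[0] != t_arg[0]:
                holes.append((b_arg, t_arg))  # misaligned argument
            else:
                holes.extend(find_holes(b_arg, t_arg))
    return holes


base = ("causes-Prop", ("inside", "John", "cave"), ("walk", "John"))
target = ("causes-Prop", ("inside", "Jill", "chamber"), ("run", "Jill"))
print(find_holes(base, target))
```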

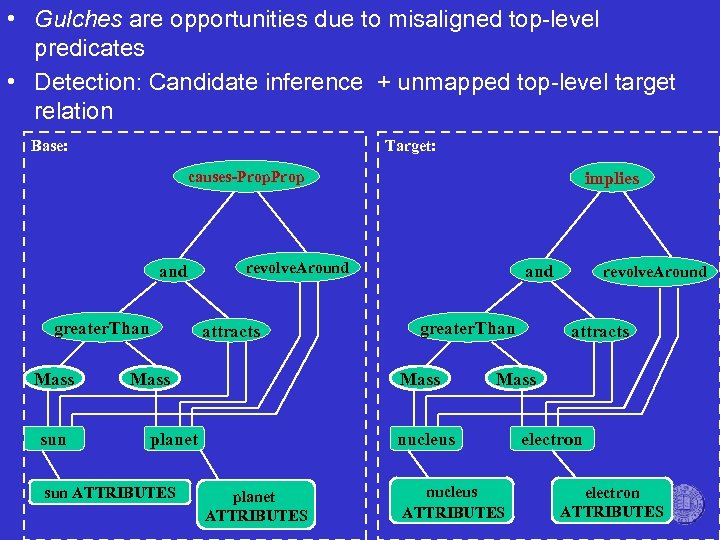

Gulches

- Gulches are opportunities due to misaligned top-level predicates.
- Detection: a candidate inference plus an unmapped top-level target relation.

Diagram (solar system vs. atom): the descriptions involve the sun, planet, nucleus, and electron, with the relations implies, causes-Prop, and, greaterThan, attracts, and revolveAround, the function Mass, and each entity’s attributes.

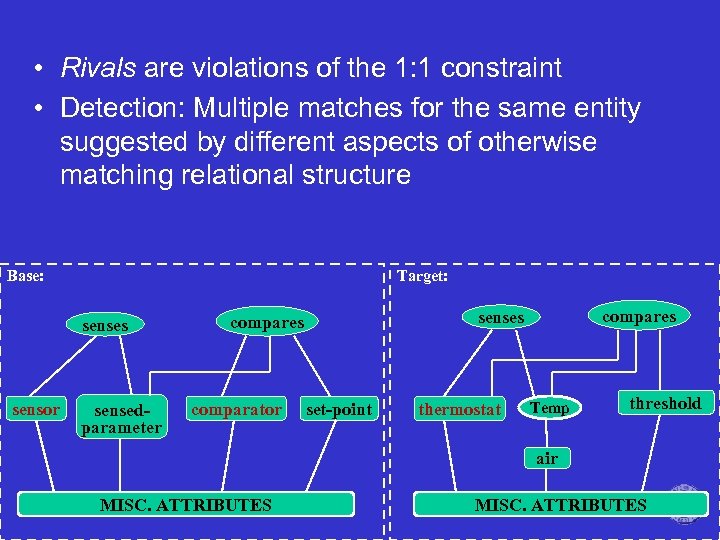

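Rivals

- Rivals are violations of the 1:1 constraint.
- Detection: multiple matches for the same entity, suggested by different aspects of otherwise matching relational structure.

Diagram (feedback system vs. thermostat): the base involves a sensor, a sensed parameter, a comparator, and a set-point, related by senses and compares; the target involves the thermostat, Temp, a threshold, and air, also related by senses and compares, plus miscellaneous attributes.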
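Rival detection reduces to looking for an entity that receives more than one partner across kernels (internally consistent sub-mappings). A minimal Python sketch, assuming each kernel is given as a set of (base, target) entity correspondences; the two kernels below are a hypothetical paraphrase of the diagram, in which the senses structure and the compares structure both map a base entity onto the thermostat.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

Correspondence = Tuple[str, str]  # (base entity, target entity)


def find_rivals(kernels: Iterable[Set[Correspondence]]) -> Dict[str, Set[str]]:
    """Collect every partner proposed for each entity across all kernels and
    return the entities with more than one (1:1 constraint violated)."""
    partners: Dict[str, Set[str]] = defaultdict(set)
    for kernel in kernels:
        for base_entity, target_entity in kernel:
            partners["base:" + base_entity].add(target_entity)
            partners["target:" + target_entity].add(base_entity)
    return {entity: found for entity, found in partners.items() if len(found) > 1}


senses_kernel = {("sensor", "thermostat"), ("sensed-parameter", "Temp")}
compares_kernel = {("comparator", "thermostat"), ("set-point", "threshold")}
print(find_rivals([senses_kernel, compares_kernel]))
```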

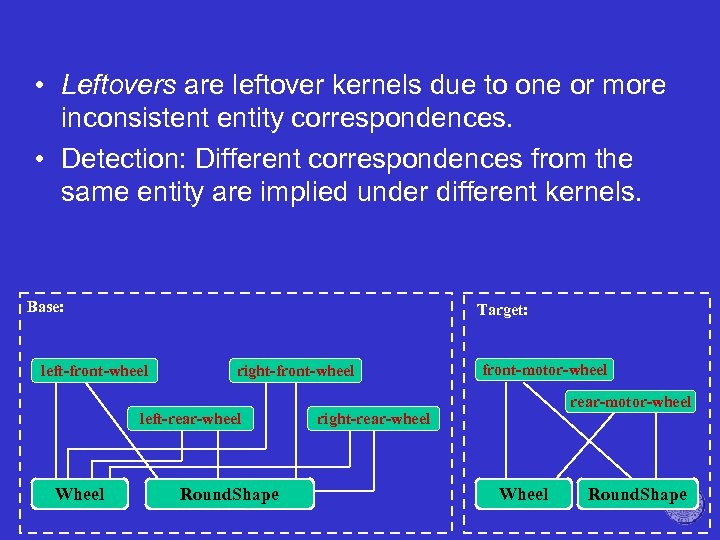

Leftovers

- Leftovers are kernels left over because of one or more inconsistent entity correspondences.
- Detection: different correspondences for the same entity are implied under different kernels.

Diagram (car vs. motorcycle): the base has left-front-wheel, right-front-wheel, left-rear-wheel, and right-rear-wheel; the target has front-motor-wheel and rear-motor-wheel; every wheel has the attributes Wheel and RoundShape.

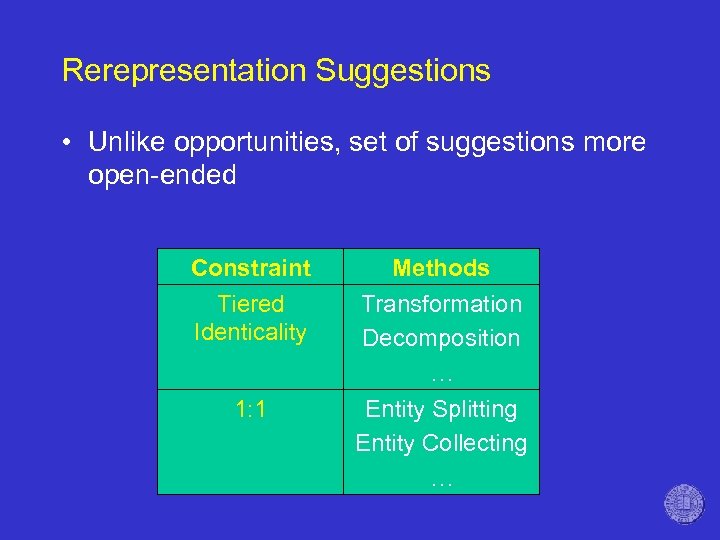

Rerepresentation suggestions

- Unlike opportunities, the set of suggestions is more open-ended.

| Constraint | Methods |
|---|---|
| Tiered identicality | Transformation, Decomposition, … |
| 1:1 | Entity splitting, Entity collecting, … |

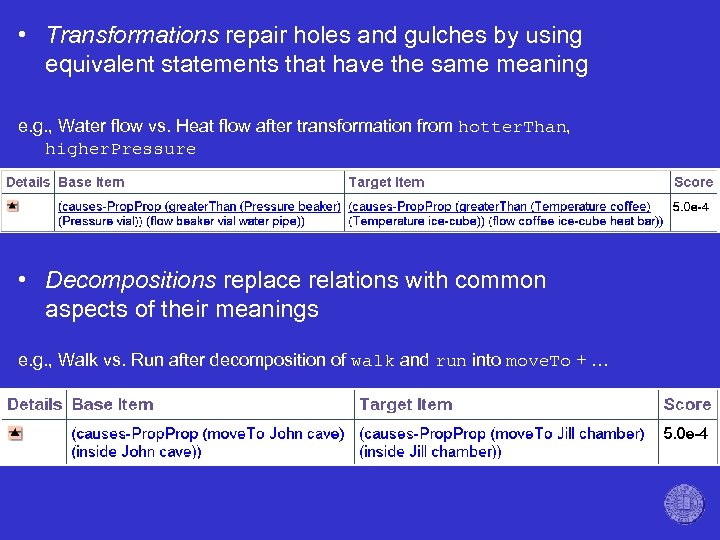

Transformations and decompositions

- Transformations repair holes and gulches by using equivalent statements that have the same meaning.
  - e.g., water flow vs. heat flow, after transforming hotterThan and higherPressure
- Decompositions replace relations with common aspects of their meanings.
  - e.g., walk vs. run, after decomposing walk and run into moveTo + …
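Both methods are rewrites of statements. A Python sketch with hypothetical rules: the transformation turns hotterThan and higherPressure into greaterThan over a quantity function, and the decomposition splits walk and run into a shared moveTo part and a differing manner part. The rule tables, including the manner predicate, are illustrative and not the project's library of general methods.

```python
from typing import List, Tuple

Expr = Tuple  # (predicate, arg1, arg2, ...)

# Hypothetical transformation rules: a comparative relation is rewritten as an
# equivalent greaterThan statement over a quantity function.
COMPARATIVES = {"hotterThan": "Temperature", "higherPressure": "Pressure"}

# Hypothetical decomposition rules: a relation is replaced by parts of its
# meaning; ("moveTo",) is shared by walk and run, the manner part is not.
DECOMPOSITIONS = {
    "walk": [("moveTo",), ("manner", "walking")],
    "run": [("moveTo",), ("manner", "running")],
}


def transform(stmt: Expr) -> Expr:
    pred, *args = stmt
    if pred in COMPARATIVES and len(args) == 2:
        quantity = COMPARATIVES[pred]
        return ("greaterThan", (quantity, args[0]), (quantity, args[1]))
    return stmt


def decompose(stmt: Expr) -> List[Expr]:
    pred, *args = stmt
    if pred not in DECOMPOSITIONS:
        return [stmt]
    # Each part keeps the original arguments, followed by any extra constants.
    return [(part[0], *args, *part[1:]) for part in DECOMPOSITIONS[pred]]


print(transform(("higherPressure", "beaker", "vial")))
print(transform(("hotterThan", "coffee", "ice-cube")))
print(decompose(("walk", "John")), decompose(("run", "Jill")))
```

After either rewrite, both sides share a top-level predicate (greaterThan or moveTo), so the statements can align where they previously could not.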

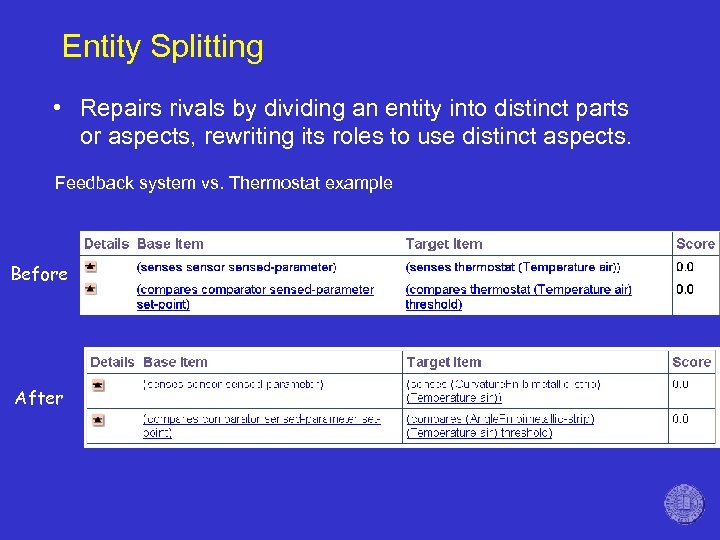

Entity splitting

- Repairs rivals by dividing an entity into distinct parts or aspects, and rewriting its roles to use the distinct aspects.
- Example: feedback system vs. thermostat (before/after diagrams)

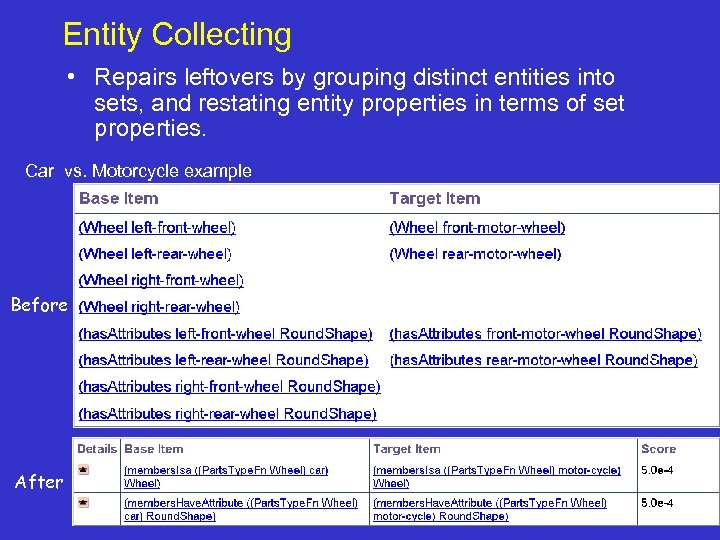

Entity collecting

- Repairs leftovers by grouping distinct entities into sets, and restating entity properties in terms of set properties.
- Example: car vs. motorcycle (before/after diagrams)
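Entity collecting can be sketched as a rewrite over attribute facts. A minimal Python version; the allMembersHave predicate and the set names are hypothetical. After collecting, each description contains a single wheel-set entity with the same attributes, so the four car wheels no longer compete for the two motorcycle wheels.

```python
from typing import Iterable, Set, Tuple

Fact = Tuple[str, ...]  # (attribute, entity) facts, plus derived set facts


def collect_entities(facts: Set[Fact], members: Iterable[str], set_name: str) -> Set[Fact]:
    """Replace the attribute facts about `members` with facts about one set
    entity: each attribute shared by every member becomes an
    ("allMembersHave", set_name, attribute) fact."""
    members = set(members)
    kept = {f for f in facts if not (len(f) == 2 and f[1] in members)}
    per_member = [{f[0] for f in facts if len(f) == 2 and f[1] == m} for m in members]
    shared = set.intersection(*per_member) if per_member else set()
    return kept | {("allMembersHave", set_name, attr) for attr in shared}


car_wheels = ["left-front-wheel", "right-front-wheel", "left-rear-wheel", "right-rear-wheel"]
bike_wheels = ["front-motor-wheel", "rear-motor-wheel"]
car = {(attr, w) for w in car_wheels for attr in ("Wheel", "RoundShape")}
motorcycle = {(attr, w) for w in bike_wheels for attr in ("Wheel", "RoundShape")}

print(sorted(collect_entities(car, car_wheels, "car-wheels")))
print(sorted(collect_entities(motorcycle, bike_wheels, "motorcycle-wheels")))
```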

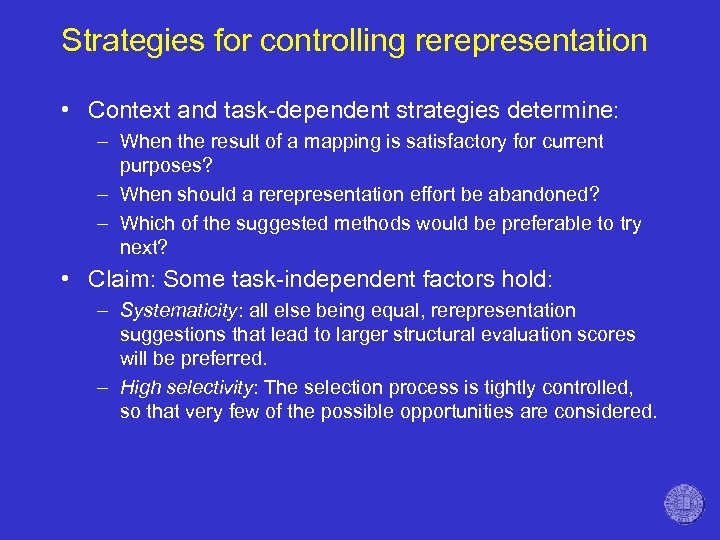

Strategies for controlling rerepresentation

- Context- and task-dependent strategies determine:
  - When is the result of a mapping satisfactory for current purposes?
  - When should a rerepresentation effort be abandoned?
  - Which of the suggested methods would be preferable to try next?
- Claim: some task-independent factors hold:
  - Systematicity: all else being equal, rerepresentation suggestions that lead to larger structural evaluation scores are preferred.
  - High selectivity: the selection process is tightly controlled, so that very few of the possible opportunities are considered.
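A hedged sketch of how the two task-independent factors could shape a control loop. `score` stands in for SME's structural evaluation (here, simply the number of shared predicates), `propose` for suggestion generation, and `good_enough` for the task-dependent satisfaction test; all three names are hypothetical. Selectivity is modeled as a cap on the candidates tried per round, systematicity as keeping the candidate with the highest resulting score.

```python
from typing import Callable, List, Tuple, TypeVar

D = TypeVar("D")  # a description of a base or target


def rerepresent(base: D, target: D,
                score: Callable[[D, D], float],
                propose: Callable[[D, D], List[Tuple[str, D, D]]],
                good_enough: Callable[[float], bool],
                max_rounds: int = 3, max_candidates: int = 2):
    """Stop when the mapping is good enough, when no suggestion improves it,
    or after max_rounds. Only the first max_candidates suggestions are tried
    (selectivity); the best-scoring one is applied (systematicity)."""
    current = score(base, target)
    applied: List[str] = []
    for _ in range(max_rounds):
        if good_enough(current):
            break
        candidates = propose(base, target)[:max_candidates]
        if not candidates:
            break
        best_score, name, new_base, new_target = max(
            ((score(b, t), n, b, t) for n, b, t in candidates), key=lambda c: c[0])
        if best_score <= current:
            break  # abandon: nothing improves the match
        base, target, current = new_base, new_target, best_score
        applied.append(name)
    return base, target, current, applied


def overlap(b, t):  # stand-in for SME's structural evaluation score
    return len(b & t)


def propose(b, t):  # one hypothetical decomposition suggestion
    if "walk" in b and "run" in t:
        return [("decompose walk/run", (b - {"walk"}) | {"moveTo"}, (t - {"run"}) | {"moveTo"})]
    return []


base = frozenset({"causes", "inside", "walk"})
target = frozenset({"causes", "inside", "run"})
print(rerepresent(base, target, overlap, propose, good_enough=lambda s: s >= 3))
```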


Related work: rerepresentation

- Most models of analogical matching (cf. IAM [Keane 1990], LISA [Hummel & Holyoak, 1997]) have never been used as components in larger simulations.
  - By contrast, SME has been used as a module in various larger simulations and performance systems.
  - SME has demonstrated the ability to work with descriptions created automatically from large-scale knowledge bases built by others (cf. [Mostek et al. 2000], [Forbus, 2001], [Forbus et al. 2002]).
- Copycat [Hofstadter & Mitchell, 1994] and Tabletop [French, 1995] combined matching with inference systems to construct representations.
  - The matchers in both systems were domain-specific.
  - In contrast, SME is domain-independent.
- AMBR [Kokinov & Petrov, 2000] adapts the base and target representations during mapping by instantiating additional knowledge from its semantic and episodic memories.
- Similar representation transformation methods are used in case-based reasoning systems that rely on structured representations (cf. [Kolodner, 1994], [Leake, 1996]).

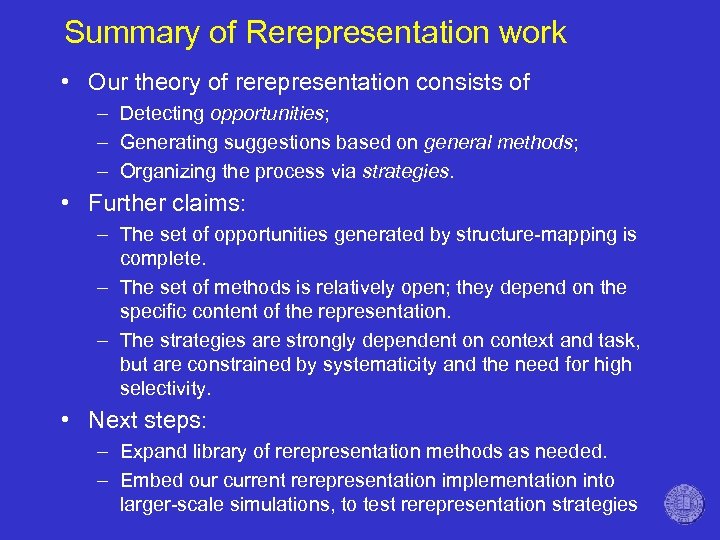

Summary of rerepresentation work

- Our theory of rerepresentation consists of
  - detecting opportunities;
  - generating suggestions based on general methods;
  - organizing the process via strategies.
- Further claims:
  - The set of opportunities generated by structure-mapping is complete.
  - The set of methods is relatively open; methods depend on the specific content of the representation.
  - The strategies are strongly dependent on context and task, but are constrained by systematicity and the need for high selectivity.
- Next steps:
  - Expand the library of rerepresentation methods as needed.
  - Embed our current rerepresentation implementation into larger-scale simulations, to test rerepresentation strategies.

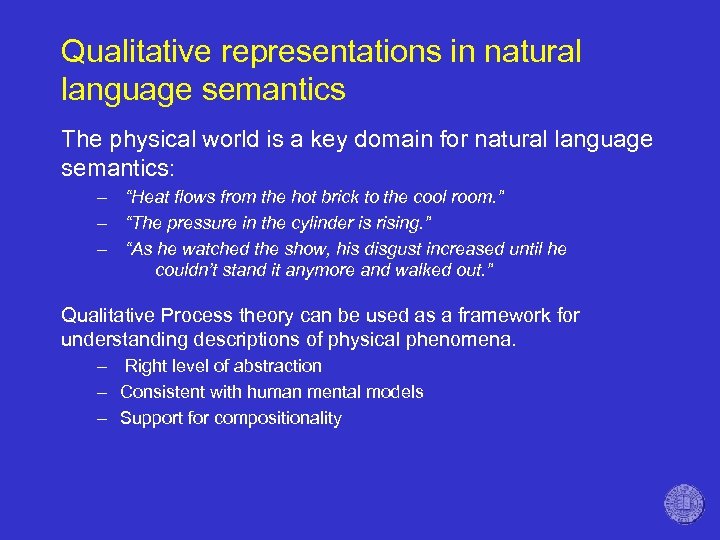

Qualitative representations in natural language semantics

- The physical world is a key domain for natural language semantics:
  - “Heat flows from the hot brick to the cool room.”
  - “The pressure in the cylinder is rising.”
  - “As he watched the show, his disgust increased until he couldn’t stand it anymore and walked out.”
- Qualitative Process theory can be used as a framework for understanding descriptions of physical phenomena:
  - Right level of abstraction
  - Consistent with human mental models
  - Support for compositionality

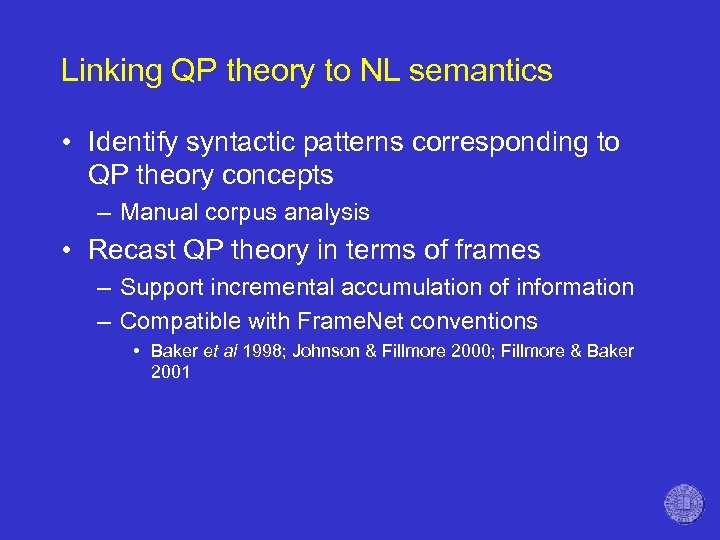

Linking QP theory to NL semantics

- Identify syntactic patterns corresponding to QP theory concepts
  - Manual corpus analysis
- Recast QP theory in terms of frames
  - Support incremental accumulation of information
  - Compatible with FrameNet conventions
    - Baker et al. 1998; Johnson & Fillmore 2000; Fillmore & Baker 2001

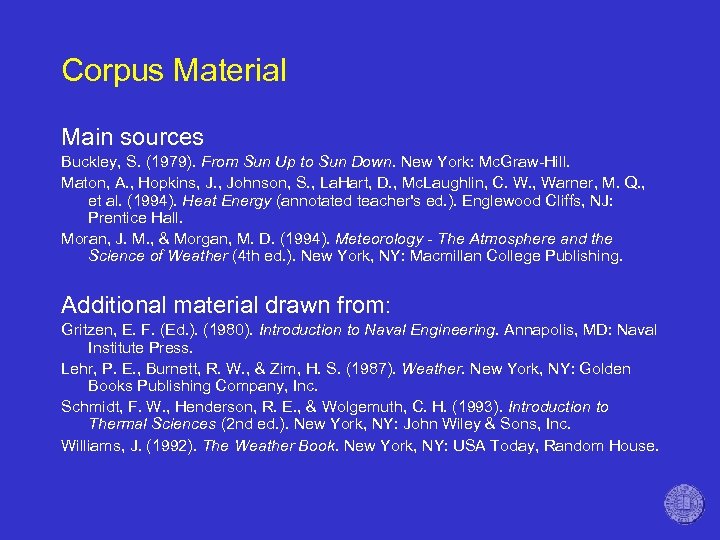

Corpus material

Main sources:
- Buckley, S. (1979). From Sun Up to Sun Down. New York: McGraw-Hill.
- Maton, A., Hopkins, J., Johnson, S., LaHart, D., McLaughlin, C. W., Warner, M. Q., et al. (1994). Heat Energy (annotated teacher's ed.). Englewood Cliffs, NJ: Prentice Hall.
- Moran, J. M., & Morgan, M. D. (1994). Meteorology: The Atmosphere and the Science of Weather (4th ed.). New York, NY: Macmillan College Publishing.

Additional material drawn from:
- Gritzen, E. F. (Ed.). (1980). Introduction to Naval Engineering. Annapolis, MD: Naval Institute Press.
- Lehr, P. E., Burnett, R. W., & Zim, H. S. (1987). Weather. New York, NY: Golden Books Publishing Company, Inc.
- Schmidt, F. W., Henderson, R. E., & Wolgemuth, C. H. (1993). Introduction to Thermal Sciences (2nd ed.). New York, NY: John Wiley & Sons, Inc.
- Williams, J. (1992). The Weather Book. New York, NY: USA Today, Random House.

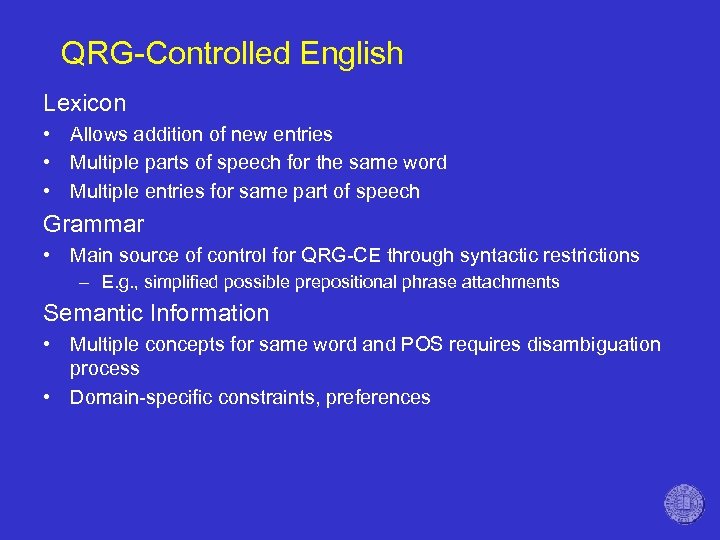

QRG-Controlled English

- Lexicon
  - Allows addition of new entries
  - Multiple parts of speech for the same word
  - Multiple entries for the same part of speech
- Grammar
  - Main source of control for QRG-CE, through syntactic restrictions
    - e.g., simplified possible prepositional phrase attachments
- Semantic information
  - Multiple concepts for the same word and POS require a disambiguation process
  - Domain-specific constraints, preferences

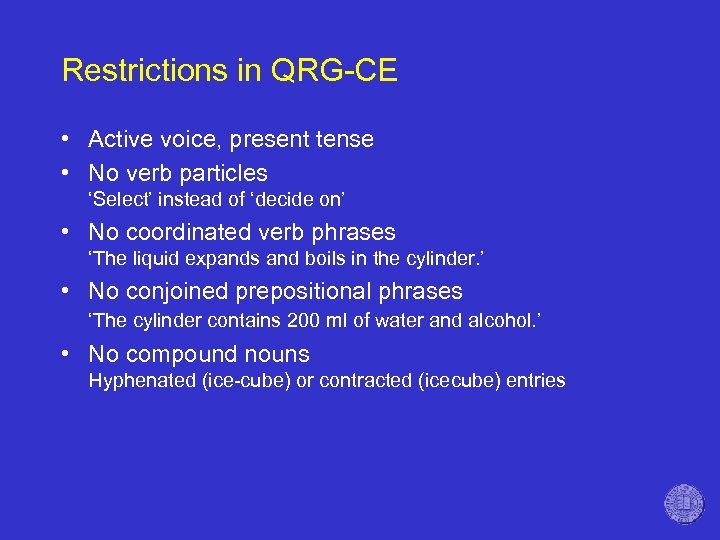

Restrictions in QRG-CE

- Active voice, present tense
- No verb particles
  - ‘Select’ instead of ‘decide on’
- No coordinated verb phrases
  - e.g., not ‘The liquid expands and boils in the cylinder.’
- No conjoined prepositional phrases
  - e.g., not ‘The cylinder contains 200 ml of water and alcohol.’
- No compound nouns
  - Use hyphenated (ice-cube) or contracted (icecube) entries instead

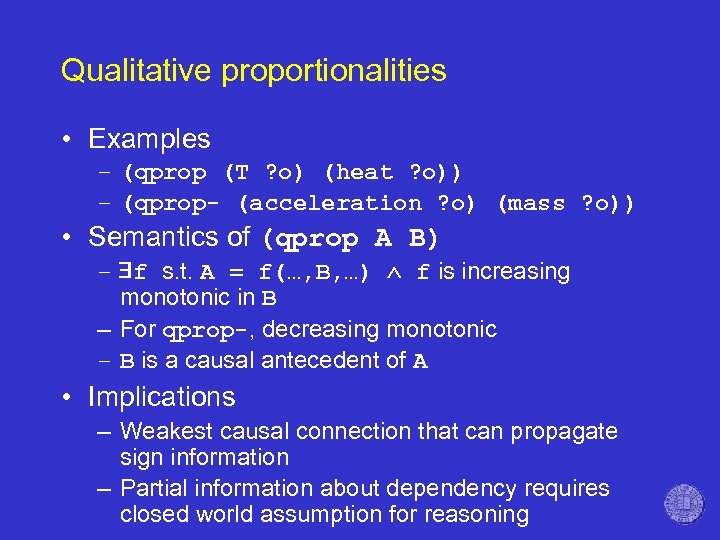

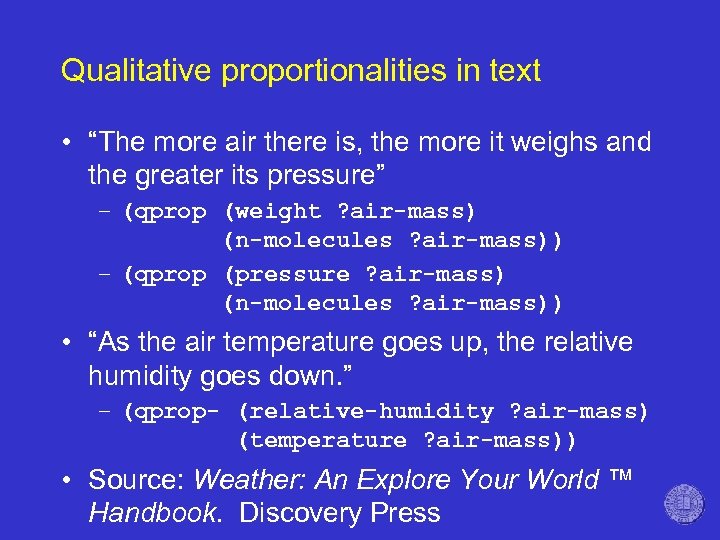

Qualitative proportionalities

- Examples
  - (qprop (T ?o) (heat ?o))
  - (qprop- (acceleration ?o) (mass ?o))
- Semantics of (qprop A B)
  - There exists a function f such that A = f(…, B, …), and f is increasing monotonic in B
  - For qprop-, f is decreasing monotonic in B
  - B is a causal antecedent of A
- Implications
  - Weakest causal connection that can propagate sign information
  - Partial information about the dependency requires a closed-world assumption for reasoning
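Sign propagation through qualitative proportionalities, under the closed-world assumption, can be sketched in a few lines. This Python version performs a single propagation step and reports "ambiguous" when influences conflict, since qprops carry no magnitude information. The quantity names echo the two-container example later in the talk; the tuple encoding is hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple, Union

# (constrained quantity A, +1 for qprop or -1 for qprop-, antecedent quantity B)
QProp = Tuple[str, int, str]


def propagate_signs(qprops: List[QProp], ds: Dict[str, int]) -> Dict[str, Union[int, str]]:
    """Infer the sign of the derivative (Ds) of each constrained quantity from
    the Ds of its antecedents. Closed-world assumption: the listed qprops are
    the only influences on each constrained quantity."""
    contributions = defaultdict(set)
    for a, sign, b in qprops:
        contributions[a].add(sign * ds[b])
    result: Dict[str, Union[int, str]] = {}
    for a, signs in contributions.items():
        nonzero = signs - {0}
        if not nonzero:
            result[a] = 0
        elif len(nonzero) == 1:
            result[a] = next(iter(nonzero))
        else:
            result[a] = "ambiguous"  # opposing influences: sign not determined
    return result


flow = [("flowrate", +1, "pressure(C1)"), ("flowrate", -1, "pressure(C2)")]
print(propagate_signs(flow, {"pressure(C1)": +1, "pressure(C2)": 0}))
print(propagate_signs(flow, {"pressure(C1)": +1, "pressure(C2)": +1}))
```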

Qualitative proportionalities in text

- “The more air there is, the more it weighs and the greater its pressure.”
  - (qprop (weight ?air-mass) (n-molecules ?air-mass))
  - (qprop (pressure ?air-mass) (n-molecules ?air-mass))
- “As the air temperature goes up, the relative humidity goes down.”
  - (qprop- (relative-humidity ?air-mass) (temperature ?air-mass))
- Source: Weather: An Explore Your World™ Handbook. Discovery Press.

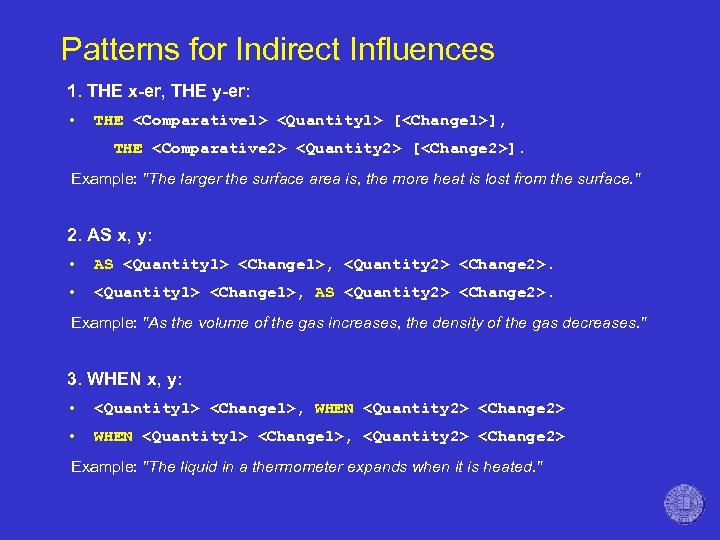

Patterns for indirect influences

1. THE x-er, THE y-er:
   - `THE <Comparative 1> <Quantity 1> [<Change 1>], THE <Comparative 2> <Quantity 2> [<Change 2>].`
   - Example: "The larger the surface area is, the more heat is lost from the surface."
2. AS x, y:
   - `AS <Quantity 1> <Change 1>, <Quantity 2> <Change 2>.`
   - `<Quantity 1> <Change 1>, AS <Quantity 2> <Change 2>.`
   - Example: "As the volume of the gas increases, the density of the gas decreases."
3. WHEN x, y:
   - `<Quantity 1> <Change 1>, WHEN <Quantity 2> <Change 2>`
   - `WHEN <Quantity 1> <Change 1>, <Quantity 2> <Change 2>`
   - Example: "The liquid in a thermometer expands when it is heated."
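The AS pattern can be approximated with a regular expression. This is a hypothetical sketch, not the QRG-CE grammar rules: it handles only the `AS <Quantity 1> <Change 1>, <Quantity 2> <Change 2>.` form with a small, assumed list of change verbs, and treats Quantity 1 as the antecedent (same direction gives qprop, opposite directions give qprop-), which agrees with the relative-humidity example on the previous slide.

```python
import re
from typing import Optional, Tuple

UP = {"increases", "rises", "goes up"}       # assumed change vocabulary
DOWN = {"decreases", "falls", "goes down"}
CHANGE = "|".join(sorted(UP | DOWN, key=len, reverse=True))

AS_PATTERN = re.compile(
    rf"^As (?:the )?(?P<q1>.+?) (?P<c1>{CHANGE}), (?:the )?(?P<q2>.+?) (?P<c2>{CHANGE})\.$",
    re.IGNORECASE)


def direction(change: str) -> int:
    return 1 if change.lower() in UP else -1


def parse_as_sentence(sentence: str) -> Optional[Tuple[str, str, str]]:
    """Return (qprop or qprop-, constrained quantity, antecedent quantity),
    or None if the sentence does not fit the AS pattern."""
    m = AS_PATTERN.match(sentence.strip())
    if m is None:
        return None
    same = direction(m["c1"]) == direction(m["c2"])
    return ("qprop" if same else "qprop-", m["q2"], m["q1"])


print(parse_as_sentence("As the volume of the gas increases, the density of the gas decreases."))
print(parse_as_sentence("As the air temperature goes up, the relative humidity goes down."))
```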


Patterns for indirect influences (cont’d)

4. Verb-based patterns:
   - `<Quantity 1> [<Entity 1>] DEPENDS ON <Quantity 2> [<Entity 2>]`
     - Example: "The amount of heat produced depends on the amount of motion."
   - `<Quantity 1> [Sign] AFFECTS <Quantity 2>`
   - `<Quantity 1> AFFECTS <Quantity 2> [Sign]`
     - Example: "The area of the path affects the volume flow rate."
   - `<Quantity 1> [Sign] INFLUENCES <Quantity 2>`
   - `<Quantity 1> INFLUENCES <Quantity 2> [Sign]`
     - Example: "The speed of the airflow affects how quickly the heat flows."
   - `<Change 1> <Quantity 1> CAUSES <Change 2> <Quantity 2>`
     - Example: "Heat gain causes air temperature to rise."

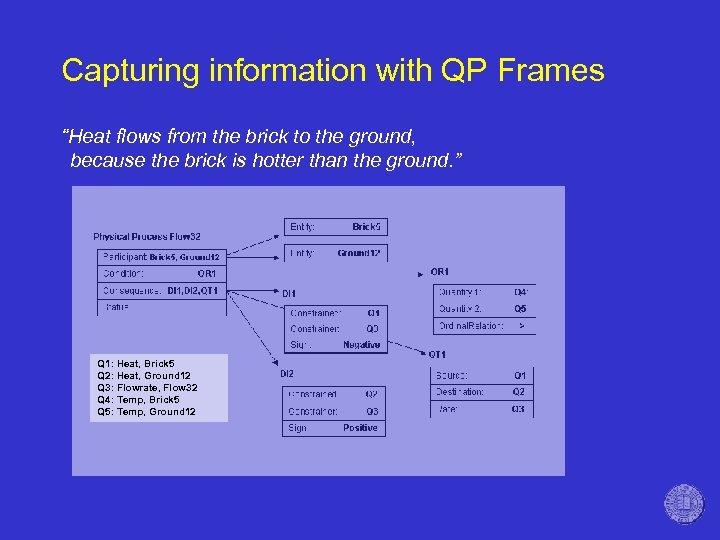

Capturing information with QP frames

“Heat flows from the brick to the ground, because the brick is hotter than the ground.”

Diagram: quantity frames Q1 (Heat, Brick), Q2 (Heat, Ground), Q3 (Flowrate, Flow), Q4 (Temp, Brick), Q5 (Temp, Ground).

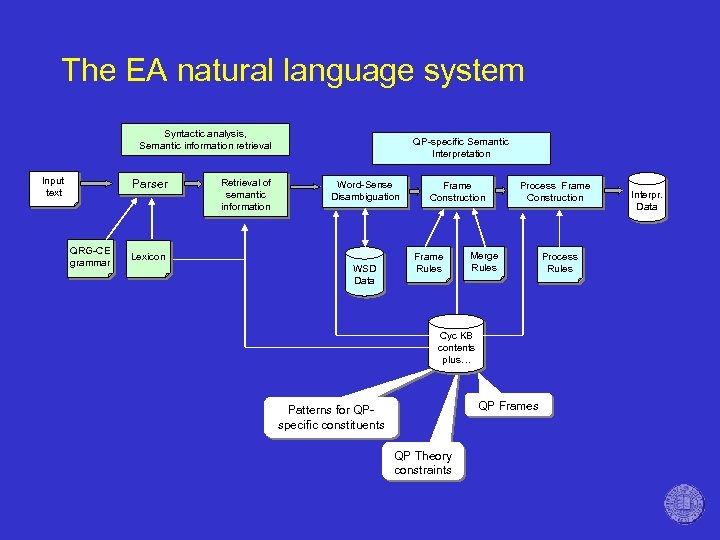

The EA natural language system

Diagram components:
- Syntactic analysis and semantic information retrieval: input text, parser, QRG-CE grammar, lexicon, retrieval of semantic information
- QP-specific semantic interpretation: word-sense disambiguation (WSD data), frame construction (frame rules, merge rules), process frame construction (process rules)
- Knowledge base: Cyc KB contents plus… QP frames, patterns for QP-specific constituents, QP theory constraints
- Output: interpretation data

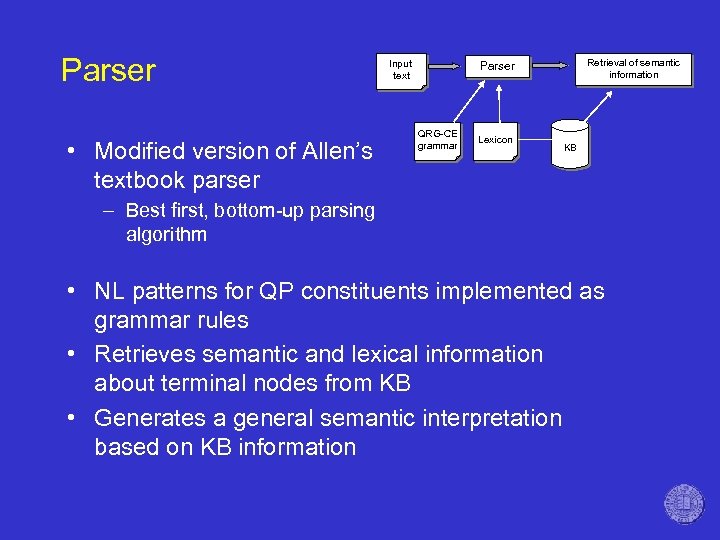

Parser

- Modified version of Allen’s textbook parser
  - Best-first, bottom-up parsing algorithm
- NL patterns for QP constituents implemented as grammar rules
- Retrieves semantic and lexical information about terminal nodes from the KB
- Generates a general semantic interpretation based on KB information

Diagram: input text → parser (QRG-CE grammar, lexicon, KB), with retrieval of semantic information.

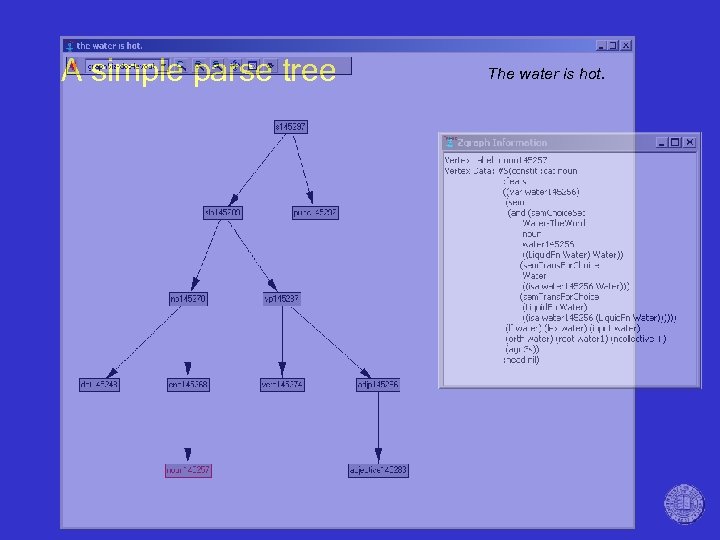

A simple parse tree: “The water is hot.”

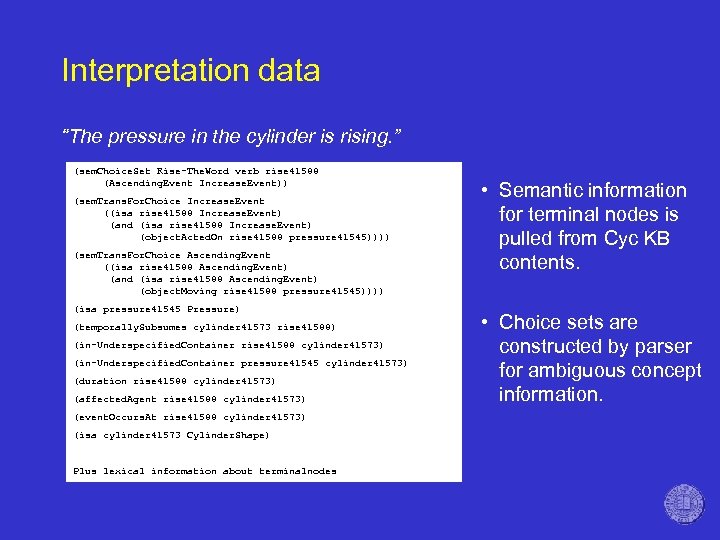

Interpretation data

“The pressure in the cylinder is rising.”

    (semChoiceSet Rise-TheWord verb rise41588 (AscendingEvent IncreaseEvent))
    (semTransForChoice IncreaseEvent
      ((isa rise41588 IncreaseEvent)
       (and (isa rise41588 IncreaseEvent)
            (objectActedOn rise41588 pressure41545))))
    (semTransForChoice AscendingEvent
      ((isa rise41588 AscendingEvent)
       (and (isa rise41588 AscendingEvent)
            (objectMoving rise41588 pressure41545))))
    (isa pressure41545 Pressure)
    (temporallySubsumes cylinder41573 rise41588)
    (in-UnderspecifiedContainer rise41588 cylinder41573)
    (in-UnderspecifiedContainer pressure41545 cylinder41573)
    (duration rise41588 cylinder41573)
    (affectedAgent rise41588 cylinder41573)
    (eventOccursAt rise41588 cylinder41573)
    (isa cylinder41573 CylinderShape)

Plus lexical information about terminal nodes.
- Semantic information for terminal nodes is pulled from Cyc KB contents.
- Choice sets are constructed by the parser for ambiguous concept information.

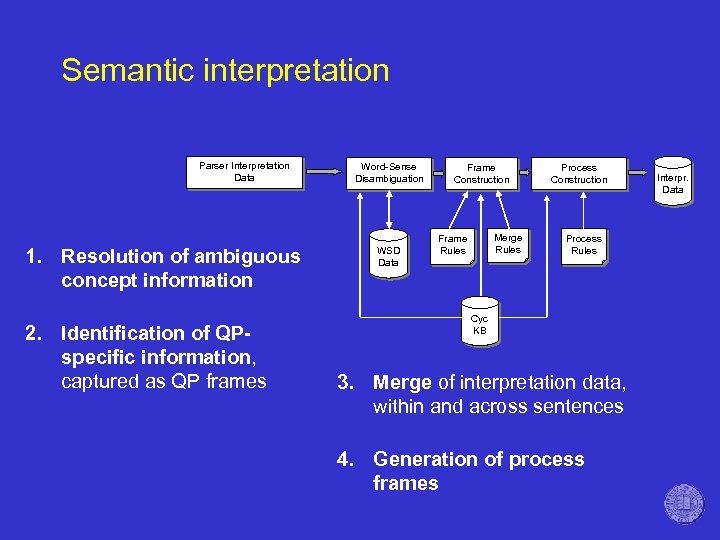

Semantic interpretation

Starting from the parser’s interpretation data and drawing on the Cyc KB, interpretation proceeds in four steps:
1. Resolution of ambiguous concept information (word-sense disambiguation, using WSD data)
2. Identification of QP-specific information, captured as QP frames (frame construction, using frame rules)
3. Merge of interpretation data, within and across sentences (merge rules)
4. Generation of process frames (process construction, using process rules)

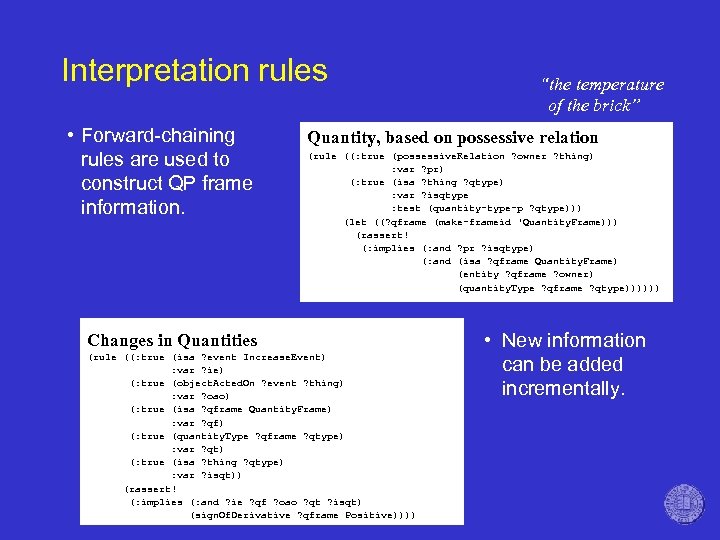

Interpretation rules

- Forward-chaining rules are used to construct QP frame information.
- New information can be added incrementally.

Quantity, based on a possessive relation (e.g., “the temperature of the brick”):

    (rule ((:true (possessiveRelation ?owner ?thing) :var ?pr)
           (:true (isa ?thing ?qtype) :var ?isqtype
                  :test (quantity-type-p ?qtype)))
      (let ((?qframe (make-frameid 'QuantityFrame)))
        (rassert! (:implies (:and ?pr ?isqtype)
                            (:and (isa ?qframe QuantityFrame)
                                  (entity ?qframe ?owner)
                                  (quantityType ?qframe ?qtype))))))

Changes in quantities:

    (rule ((:true (isa ?event IncreaseEvent) :var ?ie)
           (:true (objectActedOn ?event ?thing) :var ?oao)
           (:true (isa ?qframe QuantityFrame) :var ?qf)
           (:true (quantityType ?qframe ?qtype) :var ?qt)
           (:true (isa ?thing ?qtype) :var ?isqt))
      (rassert! (:implies (:and ?ie ?qf ?oao ?qt ?isqt)
                          (signOfDerivative ?qframe Positive))))
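The two rules can be mimicked by a naive forward chainer in Python. This is a sketch under simplifying assumptions: facts are 3-tuples, `QUANTITY_TYPES` stands in for `quantity-type-p`, frame ids are deterministic instead of coming from `make-frameid`, and the :implies justifications that rassert! records are not tracked. The input facts are a hypothetical interpretation of one sentence.

```python
from typing import Set, Tuple

Fact = Tuple[str, str, str]  # every fact here is (predicate, arg1, arg2)
QUANTITY_TYPES = {"Temperature", "Pressure"}  # stand-in for quantity-type-p


def quantity_frame_rule(facts: Set[Fact]) -> Set[Fact]:
    """possessiveRelation(owner, thing) + isa(thing, quantity type) -> QuantityFrame."""
    out = set()
    for pred, owner, thing in facts:
        if pred != "possessiveRelation":
            continue
        for pred2, subj, qtype in facts:
            if pred2 == "isa" and subj == thing and qtype in QUANTITY_TYPES:
                qframe = f"qframe-{owner}-{qtype}"  # deterministic id: reruns add nothing new
                out |= {("isa", qframe, "QuantityFrame"),
                        ("entity", qframe, owner),
                        ("quantityType", qframe, qtype)}
    return out


def increase_rule(facts: Set[Fact]) -> Set[Fact]:
    """IncreaseEvent acting on a thing of a frame's quantity type -> positive derivative."""
    events = {a for p, a, b in facts if p == "isa" and b == "IncreaseEvent"}
    frames = {a for p, a, b in facts if p == "isa" and b == "QuantityFrame"}
    out = set()
    for pred, event, thing in facts:
        if pred != "objectActedOn" or event not in events:
            continue
        for pred2, qframe, qtype in facts:
            if pred2 == "quantityType" and qframe in frames and ("isa", thing, qtype) in facts:
                out.add(("signOfDerivative", qframe, "Positive"))
    return out


def forward_chain(facts: Set[Fact]) -> Set[Fact]:
    """Apply both rules until no new facts appear (a fixed point)."""
    facts = set(facts)
    while True:
        new = (quantity_frame_rule(facts) | increase_rule(facts)) - facts
        if not new:
            return facts
        facts |= new


# "The temperature of the brick is rising." (hypothetical interpretation data)
facts = {("possessiveRelation", "brick1", "temp1"),
         ("isa", "temp1", "Temperature"),
         ("isa", "rise1", "IncreaseEvent"),
         ("objectActedOn", "rise1", "temp1")}
for fact in sorted(forward_chain(facts)):
    print(fact)
```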

Examples

- The system has been tested on a dozen paragraph-sized descriptions of heat and water flow scenarios.
- The descriptions were based on corpus material, rewritten in controlled language.

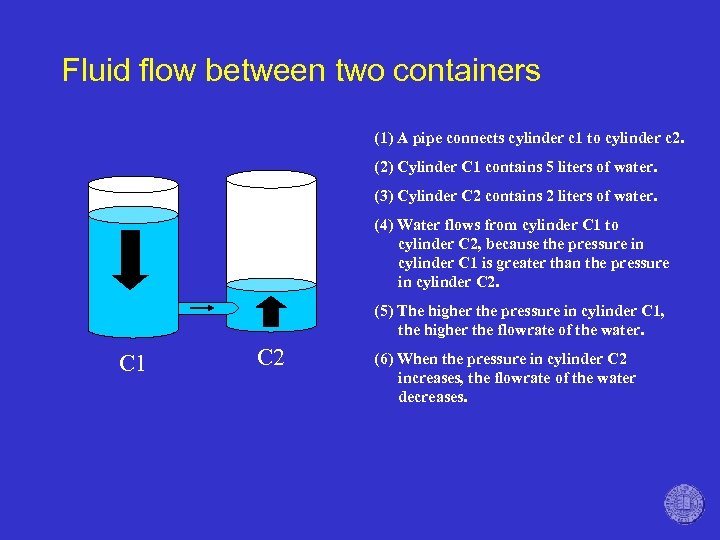

Fluid flow between two containers (C1, C2)

1. A pipe connects cylinder C1 to cylinder C2.
2. Cylinder C1 contains 5 liters of water.
3. Cylinder C2 contains 2 liters of water.
4. Water flows from cylinder C1 to cylinder C2, because the pressure in cylinder C1 is greater than the pressure in cylinder C2.
5. The higher the pressure in cylinder C1, the higher the flowrate of the water.
6. When the pressure in cylinder C2 increases, the flowrate of the water decreases.

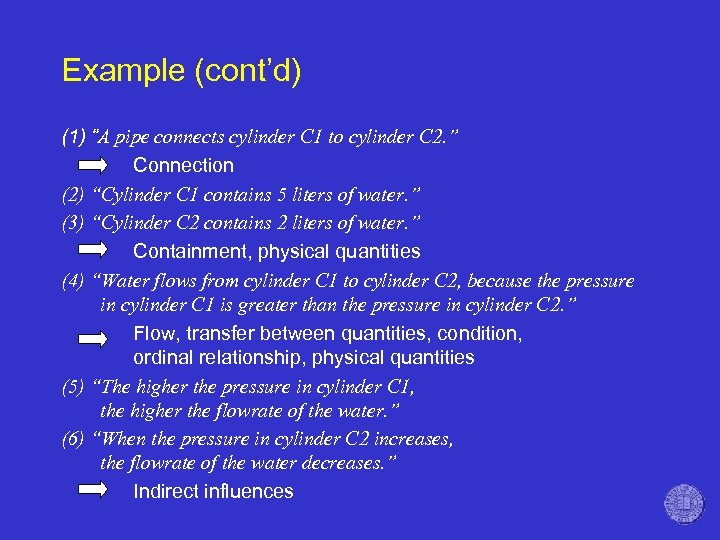

Example (cont’d)

- (1) “A pipe connects cylinder C1 to cylinder C2.”
  - Connection
- (2) “Cylinder C1 contains 5 liters of water.” (3) “Cylinder C2 contains 2 liters of water.”
  - Containment, physical quantities
- (4) “Water flows from cylinder C1 to cylinder C2, because the pressure in cylinder C1 is greater than the pressure in cylinder C2.”
  - Flow, transfer between quantities, condition, ordinal relationship, physical quantities
- (5) “The higher the pressure in cylinder C1, the higher the flowrate of the water.” (6) “When the pressure in cylinder C2 increases, the flowrate of the water decreases.”
  - Indirect influences

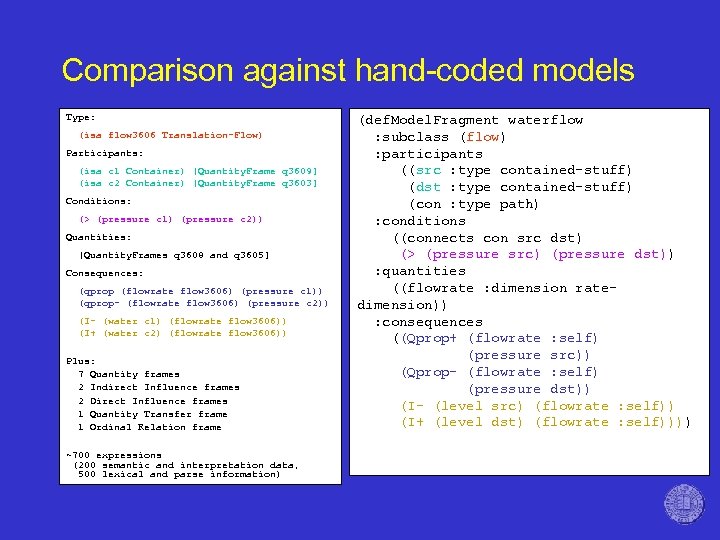

Comparison against hand-coded models

Generated process frame:

    Type:          (isa flow3606 Translation-Flow)
    Participants:  (isa c1 Container) [QuantityFrame q3609]
                   (isa c2 Container) [QuantityFrame q3603]
    Conditions:    (> (pressure c1) (pressure c2))
    Quantities:    [QuantityFrames q3608 and q3605]
    Consequences:  (qprop (flowrate flow3606) (pressure c1))
                   (qprop- (flowrate flow3606) (pressure c2))
                   (I- (water c1) (flowrate flow3606))
                   (I+ (water c2) (flowrate flow3606))

Plus: 7 quantity frames, 2 indirect influence frames, 2 direct influence frames, 1 quantity transfer frame, and 1 ordinal relation frame; about 700 expressions in total (200 semantic and interpretation data, 500 lexical and parse information).

Hand-coded model fragment:

    (defModelFragment waterflow
      :subclass (flow)
      :participants ((src :type contained-stuff)
                     (dst :type contained-stuff)
                     (con :type path))
      :conditions ((connects con src dst)
                   (> (pressure src) (pressure dst)))
      :quantities ((flowrate :dimension ratedimension))
      :consequences ((Qprop+ (flowrate :self) (pressure src))
                     (Qprop- (flowrate :self) (pressure dst))
                     (I- (level src) (flowrate :self))
                     (I+ (level dst) (flowrate :self))))

Related work

- Deep semantic NL approaches
  - Systems of the Yale school (Schank, Riesbeck, …)
  - KANT (Mitamura, Nyberg)
  - TANKA (Barker, Delisle)
- IE approaches
  - Use of extraction patterns (FASTUS, AutoSlog, etc.)
  - Automated discovery of extraction patterns (Grishman & Yangarber)

Many limitations to be overcome

- Limited to a single paragraph about an occurrence of a physical process
  - No past or future tense
  - No descriptions of sequences of qualitative states
  - Only coreference via explicitly named entities (e.g., C1)
  - No way to state general principles or laws
  - Doesn’t handle function or teleology
  - Doesn’t handle spatial language

Future work

- Eliminate the limitations
- Develop knowledge assimilation strategies for accumulating domain knowledge across multiple textual inputs
  - Conjecture: rerepresentation will play a central role
- Use BoTE reasoning to help with semantic interpretation
  - “The potato is on the ship. It weighs 16 tons.”
- Extend coverage via machine-assisted corpus analyses
- Learn a physical domain by reading about it

Acknowledgements

- Praveen Paritosh’s BoTE work and Sven Kuehne’s NLP work are supported by the Artificial Intelligence Program of the Office of Naval Research.
- Jin Yan’s rerepresentation work is supported by the Cognitive Science Program of the Office of Naval Research.
