- Number of slides: 18

State of ESMF
Cecelia DeLuca, NOAA ESRL/University of Colorado
ESMF Executive Board/Interagency Meeting, June 12, 2014

Common Model Infrastructure
Community reports and papers calling for common modeling infrastructure in the Earth System sciences span more than a decade, for example:
• NRC 1998: Capacity of U.S. Climate Modeling to Support Climate Change Assessment Activities
• NRC 2001: Improving the Effectiveness of U.S. Climate Modeling
• Dickinson et al. 2002: How Can We Advance Our Weather and Climate Models as a Community?
• NRC 2012: A National Strategy for Advancing Climate Modeling
Goals:
• Foster collaborative model development and knowledge transfer
• Lessen redundant code development
• Improve available infrastructure capabilities
• Support controlled experimentation
• Enable the creation of flexible ensembles for research and prediction

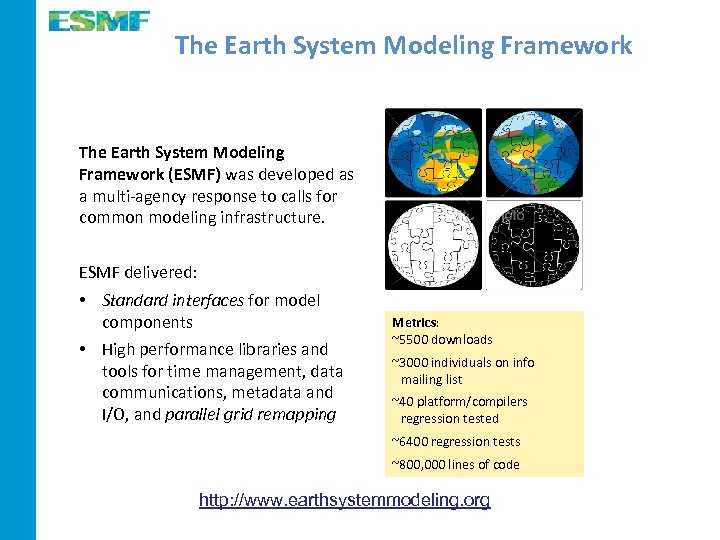

The Earth System Modeling Framework (ESMF) was developed as a multi-agency response to calls for common modeling infrastructure.
ESMF delivered:
• Standard interfaces for model components
• High performance libraries and tools for time management, data communications, metadata and I/O, and parallel grid remapping
Metrics:
• ~5500 downloads
• ~3000 individuals on info mailing list
• ~40 platform/compiler combinations regression tested
• ~6400 regression tests
• ~800,000 lines of code
http://www.earthsystemmodeling.org

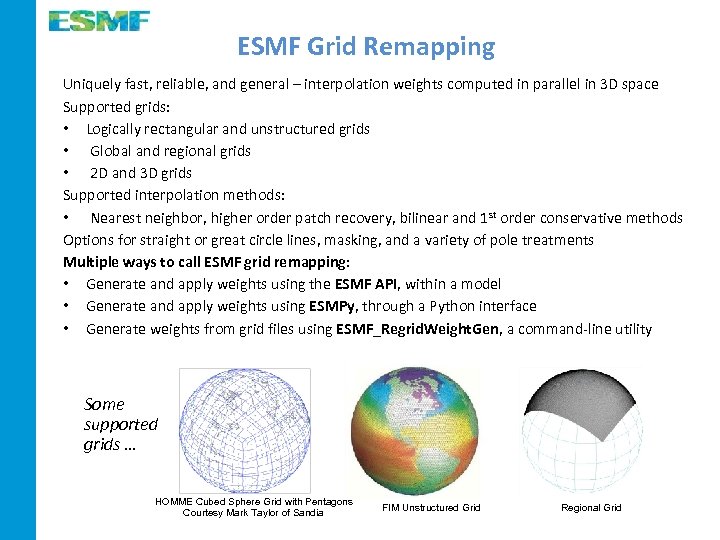

ESMF Grid Remapping
Uniquely fast, reliable, and general: interpolation weights are computed in parallel in 3D space.
Supported grids:
• Logically rectangular and unstructured grids
• Global and regional grids
• 2D and 3D grids
Supported interpolation methods:
• Nearest neighbor, higher order patch recovery, bilinear, and 1st order conservative methods
Options for straight or great circle lines, masking, and a variety of pole treatments.
Multiple ways to call ESMF grid remapping:
• Generate and apply weights using the ESMF API, within a model
• Generate and apply weights using ESMPy, through a Python interface
• Generate weights from grid files using ESMF_RegridWeightGen, a command-line utility
Some supported grids (figures): HOMME Cubed Sphere Grid with Pentagons (courtesy Mark Taylor of Sandia), FIM Unstructured Grid, Regional Grid
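The split between generating weights and applying them can be illustrated with a small, self-contained sketch. This is not ESMF or ESMPy code: it is a toy 1D version of first-order conservative remapping in plain Python, and the function names `conservative_weights` and `apply_weights` are hypothetical. Each weight is the fraction of a destination cell covered by a source cell, so the integral of the field is preserved.

```python
def conservative_weights(src_edges, dst_edges):
    """Generate sparse weights {(dst_cell, src_cell): fraction of dst cell covered}.

    Toy 1D illustration only; ESMF computes weights in parallel in 3D space.
    """
    weights = {}
    for j in range(len(dst_edges) - 1):
        d_lo, d_hi = dst_edges[j], dst_edges[j + 1]
        for i in range(len(src_edges) - 1):
            s_lo, s_hi = src_edges[i], src_edges[i + 1]
            # Length of the overlap between source cell i and destination cell j.
            overlap = min(d_hi, s_hi) - max(d_lo, s_lo)
            if overlap > 0:
                weights[(j, i)] = overlap / (d_hi - d_lo)
    return weights


def apply_weights(weights, src_values, n_dst):
    """Apply precomputed weights: a sparse matrix-vector multiply."""
    dst_values = [0.0] * n_dst
    for (j, i), w in weights.items():
        dst_values[j] += w * src_values[i]
    return dst_values


# Four unit-width source cells remapped onto two cells of width 2.
src_edges = [0.0, 1.0, 2.0, 3.0, 4.0]
dst_edges = [0.0, 2.0, 4.0]
src_values = [1.0, 3.0, 5.0, 7.0]

weights = conservative_weights(src_edges, dst_edges)
dst_values = apply_weights(weights, src_values, len(dst_edges) - 1)
print(dst_values)
```

Because the weights depend only on the two grids, they can be generated once (for example offline from grid files) and then applied repeatedly to new field values, which is the pattern the three calling options above share.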

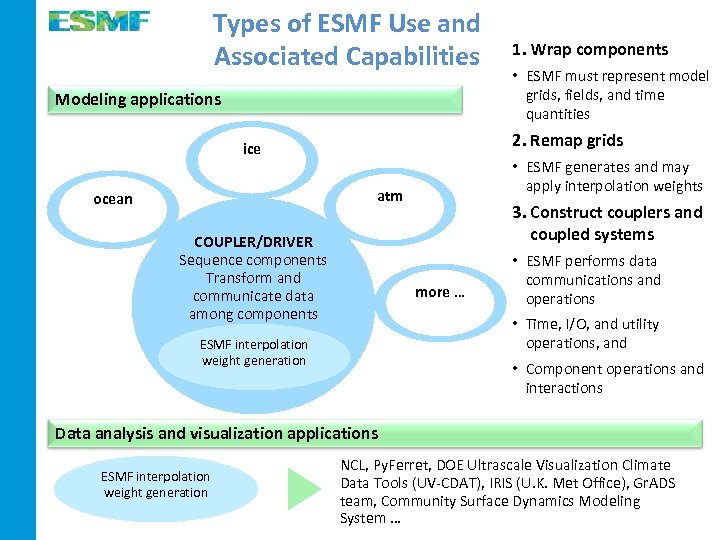

Types of ESMF Use and Associated Capabilities
Modeling applications
1. Wrap components
• ESMF must represent model grids, fields, and time quantities
2. Remap grids
• ESMF generates and may apply interpolation weights
3. Construct couplers and coupled systems
• ESMF performs data communications and operations
• Time, I/O, and utility operations, and ESMF interpolation weight generation
• Component operations and interactions
Diagram: atm, ocean, ice, and more … components under a COUPLER/DRIVER, which sequences components and transforms and communicates data among components
Data analysis and visualization applications
• ESMF interpolation weight generation
• NCL, PyFerret, DOE Ultrascale Visualization Climate Data Tools (UV-CDAT), IRIS (U.K. Met Office), GrADS team, Community Surface Dynamics Modeling System …
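The coupler/driver pattern described on this slide can be sketched in a few lines. The sketch below is a hypothetical Python illustration, not the ESMF API: the `Component` class, the `drive` function, and the toy "physics" are all assumptions made for the example. It only shows the idea of wrapping models behind a common initialize/run/finalize interface so that a driver can sequence them and pass data between them.

```python
class Component:
    """Hypothetical stand-in for a wrapped model component (not an ESMF class)."""

    def __init__(self, name, exports):
        self.name = name
        self.exports = exports  # names of the fields this component produces
        self.state = {}
        self.log = []

    def initialize(self):
        self.state = {field: 0.0 for field in self.exports}
        self.log.append("initialize")

    def run(self, imports, step):
        # Toy "physics": every export becomes the step number plus the sum of imports.
        total = sum(imports.values())
        for field in self.exports:
            self.state[field] = step + total
        self.log.append(f"run {step}")

    def finalize(self):
        self.log.append("finalize")


def drive(components, connections, n_steps):
    """Sequence components and move data among them.

    connections: list of (source component, field name, destination component).
    """
    by_name = {c.name: c for c in components}
    for c in components:
        c.initialize()
    for step in range(1, n_steps + 1):
        for c in components:
            # Gather the fields that other components export to this one.
            imports = {
                field: by_name[src].state[field]
                for src, field, dst in connections
                if dst == c.name
            }
            c.run(imports, step)
    for c in components:
        c.finalize()


atm = Component("atm", exports=["wind"])
ocean = Component("ocean", exports=["sst"])
drive([atm, ocean], [("atm", "wind", "ocean"), ("ocean", "sst", "atm")], n_steps=2)
print(atm.state, ocean.state)
```

In a real coupled system the data transfer step would also include grid remapping between the component grids; here the fields are plain scalars to keep the sequencing visible.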

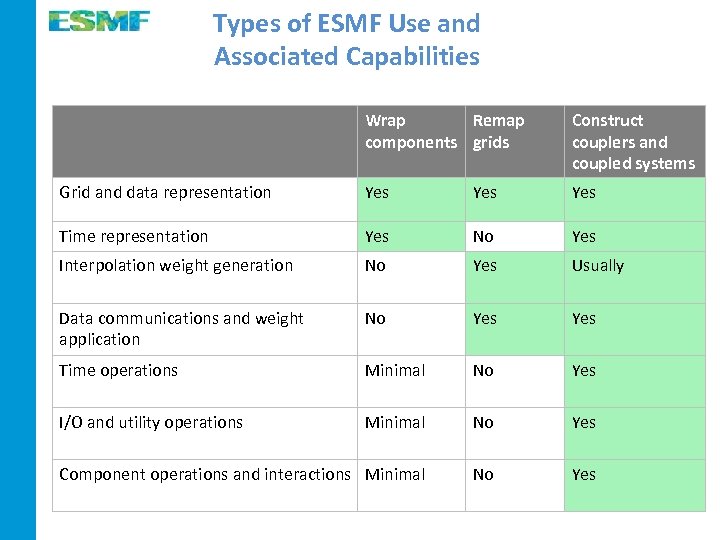

Types of ESMF Use and Associated Capabilities
Columns: Wrap components | Remap grids | Construct couplers and coupled systems
• Grid and data representation: Yes | Yes
• Time representation: Yes | No | Yes
• Interpolation weight generation: No | Yes | Usually
• Data communications and weight application: No | Yes
• Time operations: Minimal | No | Yes
• I/O and utility operations: Minimal | No | Yes
• Component operations and interactions: Minimal | No | Yes

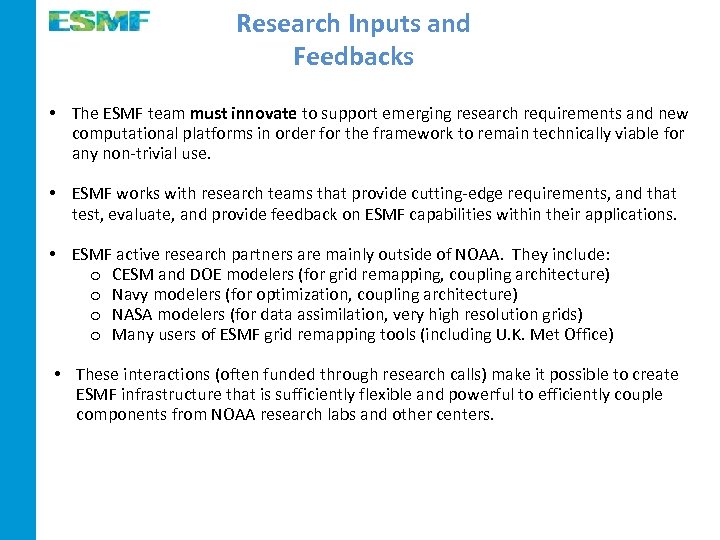

Research Inputs and Feedbacks
• The ESMF team must innovate to support emerging research requirements and new computational platforms in order for the framework to remain technically viable for any non-trivial use.
• ESMF works with research teams that provide cutting-edge requirements, and that test, evaluate, and provide feedback on ESMF capabilities within their applications.
• ESMF active research partners are mainly outside of NOAA. They include:
  o CESM and DOE modelers (for grid remapping, coupling architecture)
  o Navy modelers (for optimization, coupling architecture)
  o NASA modelers (for data assimilation, very high resolution grids)
  o Many users of ESMF grid remapping tools (including U.K. Met Office)
• These interactions (often funded through research calls) make it possible to create ESMF infrastructure that is sufficiently flexible and powerful to efficiently couple components from NOAA research labs and other centers.

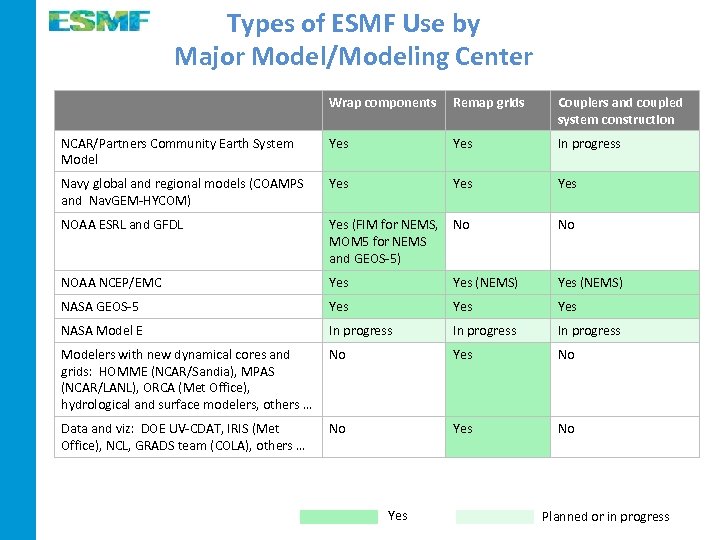

Types of ESMF Use by Major Model/Modeling Center
Columns: Wrap components | Remap grids | Couplers and coupled system construction
• NCAR/Partners Community Earth System Model: Yes | In progress
• Navy global and regional models (COAMPS and NavGEM-HYCOM): Yes | Yes
• NOAA ESRL and GFDL: Yes (FIM for NEMS, MOM5 for NEMS and GEOS-5) | No | No
• NOAA NCEP/EMC: Yes (NEMS)
• NASA GEOS-5: Yes | Yes
• NASA ModelE: In progress
• Modelers with new dynamical cores and grids: HOMME (NCAR/Sandia), MPAS (NCAR/LANL), ORCA (Met Office), hydrological and surface modelers, others …: No | Yes | No
• Data and viz: DOE UV-CDAT, IRIS (Met Office), NCL, GrADS team (COLA), others …: No | Yes | Planned or in progress

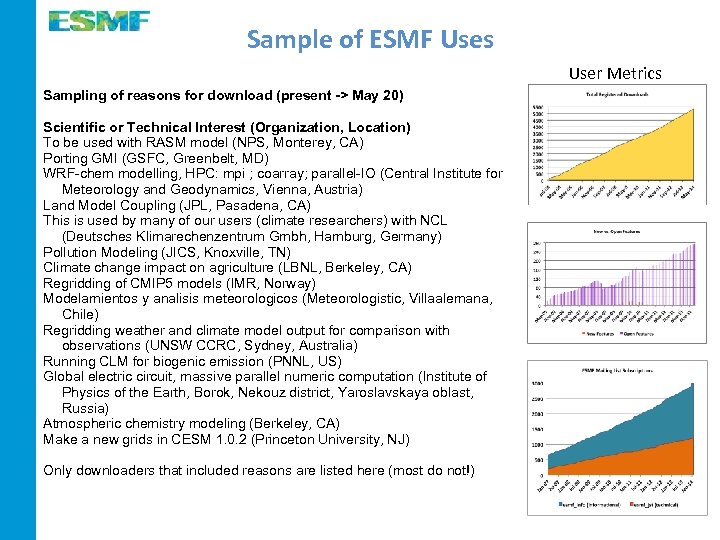

Sample of ESMF Uses
User Metrics: sampling of reasons for download (present -> May 20)
Scientific or Technical Interest (Organization, Location)
• To be used with RASM model (NPS, Monterey, CA)
• Porting GMI (GSFC, Greenbelt, MD)
• WRF-chem modelling, HPC: MPI; coarray; parallel-IO (Central Institute for Meteorology and Geodynamics, Vienna, Austria)
• Land Model Coupling (JPL, Pasadena, CA)
• This is used by many of our users (climate researchers) with NCL (Deutsches Klimarechenzentrum GmbH, Hamburg, Germany)
• Pollution Modeling (JICS, Knoxville, TN)
• Climate change impact on agriculture (LBNL, Berkeley, CA)
• Regridding of CMIP5 models (IMR, Norway)
• Meteorological modeling and analysis (Meteorologistic, Villa Alemana, Chile)
• Regridding weather and climate model output for comparison with observations (UNSW CCRC, Sydney, Australia)
• Running CLM for biogenic emission (PNNL, US)
• Global electric circuit, massive parallel numeric computation (Institute of Physics of the Earth, Borok, Nekouz district, Yaroslavskaya oblast, Russia)
• Atmospheric chemistry modeling (Berkeley, CA)
• Make a new grids in CESM 1.0.2 (Princeton University, NJ)
Only downloaders that included reasons are listed here (most do not!)

ESMF Public Release 6.3.0r: Jan 2014
Many features and improvements were added since the last public release, including:
• Grids that have elements with an arbitrary number of sides can be represented and remapped in parallel during a model run
  Requested by U.K. Met Office modelers and others to support during-run grid remapping of model components on icosahedral and pentagonal-hexagonal grids
• Generate dual grids so that non-conservative remapping methods can be performed on grids that are defined on cell centers (already supported on corners)
  Requested by NASA GMAO modelers and others as a convenience
• Add parallel nearest neighbor interpolation methods
  Requested by the Community Earth System Model group to support downscaling
• Users can select between great circle and Cartesian line paths when calculating interpolation weights on a sphere
  Requested by NRL radar apps and NOAA SWPC for accurate grid remapping involving the long, narrow grid cells in flux tubes, and others
• Component interfaces support fault tolerance through a user-controlled “timeout” mechanism
  Requested by NCEP EMC to support fault tolerance in ensembles
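To see why the choice of line path matters, the following sketch compares the two path lengths between a pair of points on a unit sphere. It is a plain-Python illustration, not ESMF code, and it assumes that a "Cartesian" path means a straight line through 3D space while a great circle path follows the sphere's surface. The helper names are hypothetical.

```python
import math


def to_cartesian(lon_deg, lat_deg):
    """Convert longitude/latitude in degrees to a point on the unit sphere."""
    lon, lat = math.radians(lon_deg), math.radians(lat_deg)
    return (
        math.cos(lat) * math.cos(lon),
        math.cos(lat) * math.sin(lon),
        math.sin(lat),
    )


def cartesian_length(p, q):
    """Length of the straight 3D line (chord) between two lon/lat points."""
    return math.dist(to_cartesian(*p), to_cartesian(*q))


def great_circle_length(p, q):
    """Length of the great circle arc between two lon/lat points."""
    a, b = to_cartesian(*p), to_cartesian(*q)
    dot = sum(x * y for x, y in zip(a, b))
    # Clamp to guard against round-off pushing the dot product outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot)))


# Two points on the equator, 90 degrees of longitude apart.
p, q = (0.0, 0.0), (90.0, 0.0)
print(cartesian_length(p, q), great_circle_length(p, q))
```

For nearby points the two lengths are almost identical, but they diverge as cell edges get longer, which is consistent with the request above coming from users with long, narrow grid cells.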

Coming Next in ESMF and NUOPC
• Extend ESMF data classes to recognize accelerators and extend component classes to negotiate for resources
  Advance toward a long-term goal of more automated and optimized mapping of multi-component models to hardware platforms
• Integrate the MOAB finite element mesh library into ESMF, compare it with ESMF’s original finite element mesh library, and potentially replace the original
  Infrastructure development in collaboration with DOE and other ESPC partners
• Enable grid remapping to have a point cloud or observational data stream destination
  Requested by surface modelers for remapping to irregular regions, and by NASA GMAO and other groups engaged in data assimilation
• Introduce higher order conservative grid remapping methods
  Requested by multiple climate modeling groups

What Users Say About ESMF …
User feedback from NCAR CGD, NCAR MMM, COLA, the Navy, NASA, and other organizations is collected here (grid remapping or coupled systems): https://earthsystemcog.org/projects/esmf/user_feedback
"This task would have been impossible without the regridding capability that ESMF has provided …"
"… made it possible to access a large class of data from ocean and sea ice models that could not otherwise be handled …"
"… this ESMF interface is allowing us to provide users with much more functionality than we could before …"
"… the technical support was exemplary and invaluable …"
Themes in these statements:
• ESMF grid remapping allows users to work with grids that they could not work with before (e.g. some CMIP5 model grids).
• The tools are fast and reliable.
• Customer support is excellent.

New Directions
The initial ESMF software fell short of the vision for common infrastructure in several ways:
1. Implementations of ESMF could vary widely and did not guarantee a minimum level of technical interoperability among sites – creation of the NUOPC Layer
2. It was difficult to track who was using ESMF and how they were using it – initiation of the Earth System Prediction Suite
3. There was a significant learning curve for implementing ESMF in a modeling code – Cupid Integrated Development Environment
New development directions address these gaps…

The Earth System Prediction Suite
2. It was difficult to track who was using ESMF and how they were using it
• The Earth System Prediction Suite (ESPS) is a collection of weather and climate modeling codes that use ESMF with the NUOPC conventions.
• The ESPS makes clear which codes are available as ESMF components and modeling systems.
Draft inclusion criteria for ESPS: https://www.earthsystemcog.org/projects/esps/strawman_criteria
• Individual components and coupled modeling systems may be included in ESPS.
• ESPS components and coupled modeling systems are NUOPC-compliant.
• A minimal, prescribed set of model documentation is provided for each version of the ESPS component or modeling system.
• ESPS codes must have an unambiguous public domain, open source license, or proprietary status. If the code is proprietary, a process must be identified that allows credentialed collaborators to request access.
• Tests for correct operation are provided for each component and modeling system.
• There is a commitment to continued NUOPC compliance and ESPS participation for new versions of the code.

Model Codes in the ESPS
Currently, components in the ESPS can be of the following types: coupled system, atmosphere, ocean, wave, sea ice
Target codes include:
• The Community Earth System Model (CESM)
• The NOAA Environmental Modeling System (NEMS) and Climate Forecast System version 3 (CFSv3)
• The MOM5 and HYCOM oceans
• The Navy Global Environmental Model (NavGEM)-HYCOM-CICE coupled system
• The Navy Coupled Ocean Atmosphere Mesoscale Prediction System (COAMPS) and COAMPS Tropical Cyclone (COAMPS-TC)
• NASA GEOS-5
• NASA ModelE

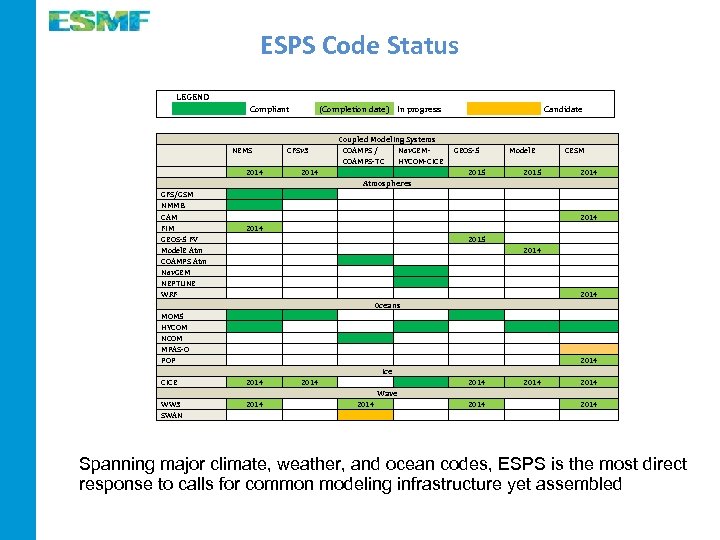

ESPS Code Status
Chart legend: Compliant (completion date), In progress. Completion dates shown in the chart: 2014 and 2015.
• Candidate coupled modeling systems: NEMS, CFSv3, COAMPS / COAMPS-TC, NavGEM-HYCOM-CICE, GEOS-5, ModelE, CESM
• Atmospheres: GFS/GSM, NMMB, CAM, FIM, GEOS-5 FV, ModelE Atm, COAMPS Atm, NavGEM, NEPTUNE, WRF
• Oceans: MOM5, HYCOM, NCOM, MPAS-O, POP
• Ice: CICE
• Wave: WW3, SWAN
Spanning major climate, weather, and ocean codes, ESPS is the most direct response to calls for common modeling infrastructure yet assembled.

New Directions
The initial ESMF software fell short of the vision for common infrastructure in several ways:
1. Implementations of ESMF could vary widely and did not guarantee a minimum level of technical interoperability among sites – creation of the NUOPC Layer
2. It was difficult to track who was using ESMF and how they were using it – initiation of the Earth System Prediction Suite
3. There was a significant learning curve for implementing ESMF in a modeling code – Cupid Integrated Development Environment