- Number of slides: 63

Speling Korecksion: A Survey of Techniques from Past to Present

A UCSD Research Exam by Dustin Boswell
September 20th, 2004

Presentation Outline

• Introduction to Spelling Correction
• Techniques
• Difficulties
• Noisy Channel Model
• My Implementation
• Demonstration
• Conclusions

Introduction to Spelling Correction

Goal of Spelling Correction

To assist humans in the detection of mistaken tokens of text, and their replacement with corrected tokens.

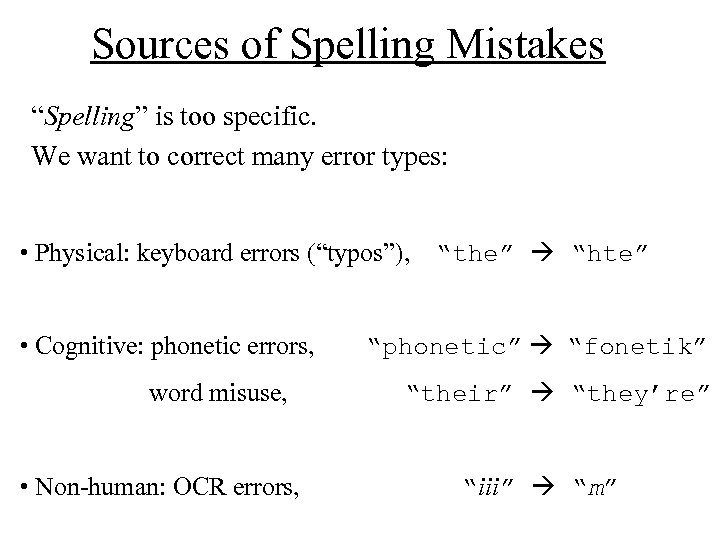

Sources of Spelling Mistakes

“Spelling” is too specific. We want to correct many error types:
• Physical: keyboard errors (“typos”), e.g. “the” -> “hte”
• Cognitive: phonetic errors, e.g. “phonetic” -> “fonetik”; word misuse, e.g. “their” -> “they’re”
• Non-human: OCR errors, e.g. “iii” -> “m”

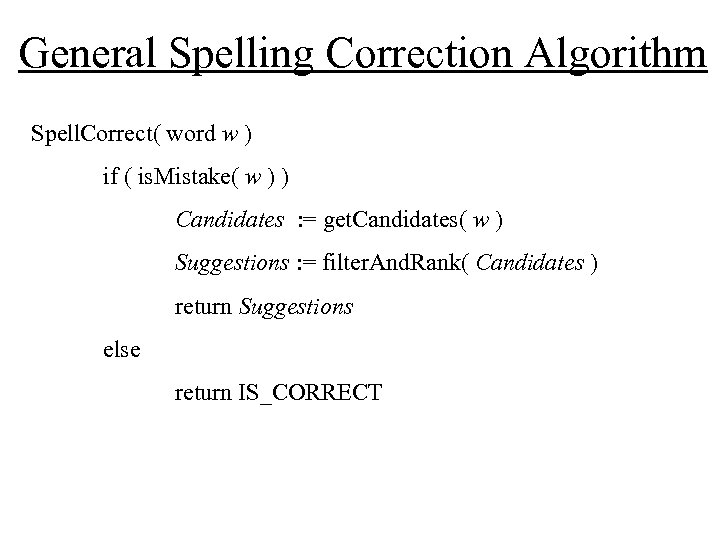

General Spelling Correction Algorithm

    SpellCorrect( word w )
        if ( isMistake( w ) )
            Candidates := getCandidates( w )
            Suggestions := filterAndRank( Candidates )
            return Suggestions
        else
            return IS_CORRECT
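The general algorithm above can be sketched in Python as follows. This is only an illustration: the tiny `DICTIONARY`, the anagram-based `get_candidates`, and the alphabetical `filter_and_rank` are placeholder assumptions, not techniques taken from the slides, and `None` stands in for IS_CORRECT.

```python
# Toy lexicon; a real system would load a large word list (assumption).
DICTIONARY = {"the", "then", "hat"}

def is_mistake(word):
    """A word is treated as a mistake if it is not in the lexicon."""
    return word not in DICTIONARY

def get_candidates(word):
    """Placeholder similarity: dictionary words made of the same letters."""
    return [d for d in DICTIONARY if sorted(d) == sorted(word)]

def filter_and_rank(candidates):
    """Placeholder ranking: alphabetical order."""
    return sorted(candidates)

def spell_correct(word):
    """Return ranked suggestions, or None when the word is already correct."""
    if is_mistake(word):
        candidates = get_candidates(word)
        return filter_and_rank(candidates)
    return None  # stands in for IS_CORRECT

print(spell_correct("hte"))
print(spell_correct("the"))
```

Each of the three helper functions corresponds to one stage discussed later in the talk (recognizing mistakes, generating candidates, ranking candidates), so any of them can be swapped out independently.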

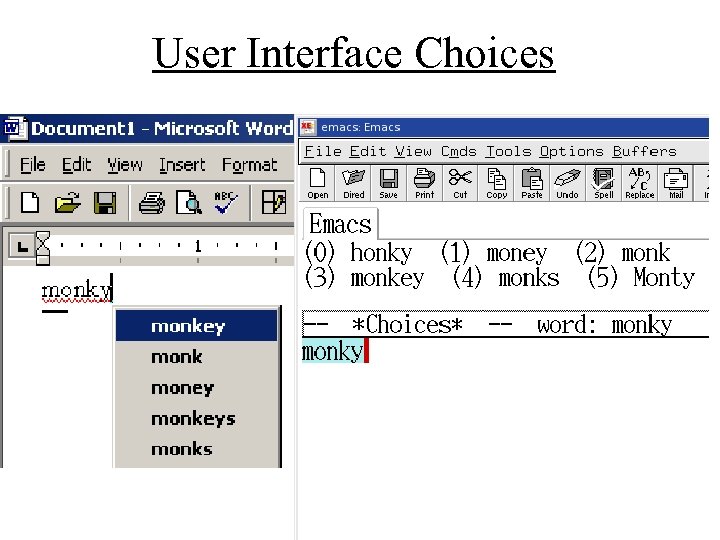

User Interface Choices

Techniques used in Spelling Correction

Recognizing Mistakes (Dictionaries)

• Use a dictionary (lexicon) to define valid words
• Recognizing a string in a language is an old CS problem
• Hash tables or letter tries are reasonably efficient implementations
• Earlier work like (Peterson 80) focused on compression techniques (stemming, caching, etc.). For hardware like cell phones this may still be relevant.
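As a rough illustration of the two data structures mentioned above, the sketch below stores the same small lexicon in a hash table (a Python `set`) and in a letter trie. The `Trie` class and the three sample words are assumptions made for this example.

```python
class Trie:
    """Minimal letter trie: one node per prefix, flagged when a word ends there."""

    def __init__(self):
        self.children = {}
        self.is_word = False

    def insert(self, word):
        node = self
        for ch in word:
            node = node.children.setdefault(ch, Trie())
        node.is_word = True

    def contains(self, word):
        node = self
        for ch in word:
            node = node.children.get(ch)
            if node is None:
                return False  # fell off the trie: no word has this prefix
        return node.is_word   # a prefix alone does not count as a word

words = ["standard", "stand", "spell"]

lexicon_set = set(words)      # hash-table lexicon
lexicon_trie = Trie()         # trie lexicon
for w in words:
    lexicon_trie.insert(w)

print("standrd" in lexicon_set, lexicon_trie.contains("standrd"))
print("stand" in lexicon_set, lexicon_trie.contains("stand"))
```

The hash set gives the simplest membership test; the trie shares common prefixes between words and also supports prefix queries, which can help when generating candidates.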

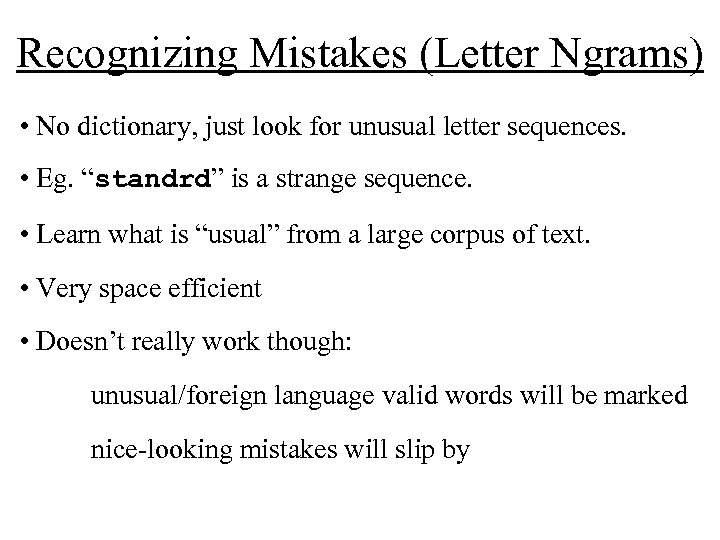

Recognizing Mistakes (Letter Ngrams)

• No dictionary; just look for unusual letter sequences
• E.g. “standrd” is a strange sequence
• Learn what is “usual” from a large corpus of text
• Very space efficient
• Doesn’t really work, though:
  - unusual or foreign-language valid words will be marked
  - nice-looking mistakes will slip by
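A minimal sketch of the letter n-gram idea, assuming trigrams, `^`/`$` boundary markers, and a six-word toy corpus (all of these are choices made for illustration). A word is flagged when it contains a trigram never seen in training.

```python
from collections import Counter

def letter_ngrams(word, n=3):
    """Letter n-grams of a word, with ^ and $ marking the word boundaries."""
    padded = "^" + word.lower() + "$"
    return [padded[i:i + n] for i in range(len(padded) - n + 1)]

def train(corpus_words, n=3):
    """Count every letter n-gram seen in the training words."""
    counts = Counter()
    for w in corpus_words:
        counts.update(letter_ngrams(w, n))
    return counts

def looks_unusual(word, counts, n=3, min_count=1):
    """Flag a word if any of its n-grams was seen fewer than min_count times."""
    return any(counts[g] < min_count for g in letter_ngrams(word, n))

# Toy corpus; a real system would train on a large body of text.
corpus = ["standard", "stand", "hard", "and", "sand", "card"]
counts = train(corpus)

print(looks_unusual("standrd", counts))   # contains the unseen trigram "ndr"
print(looks_unusual("standard", counts))
print(looks_unusual("sandard", counts))   # a mistake built only from seen trigrams
```

The last call shows the weakness listed above: “sandard” is a mistake, but every trigram in it occurs in the training words, so it is not flagged.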

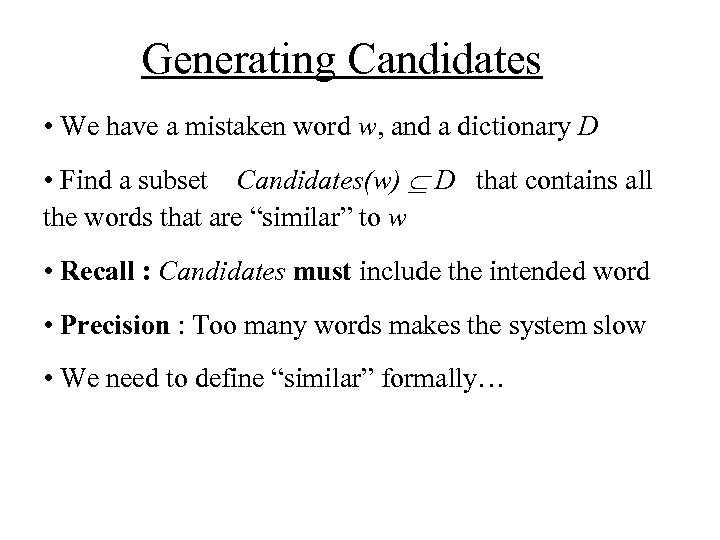

Generating Candidates

• We have a mistaken word w and a dictionary D
• Find a subset Candidates(w) ⊆ D that contains all the words that are “similar” to w
• Recall: Candidates must include the intended word
• Precision: too many words make the system slow
• We need to define “similar” formally…

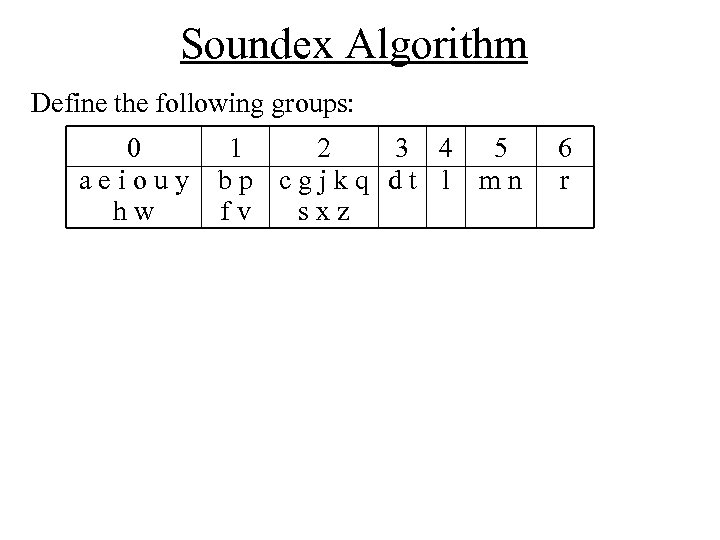

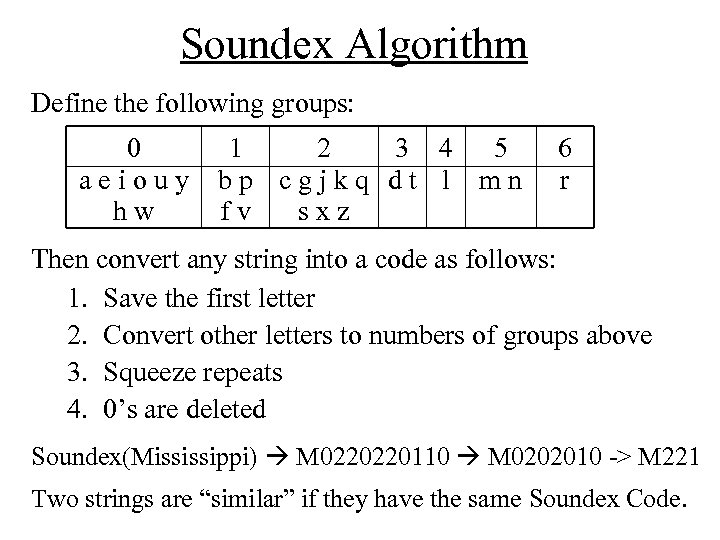

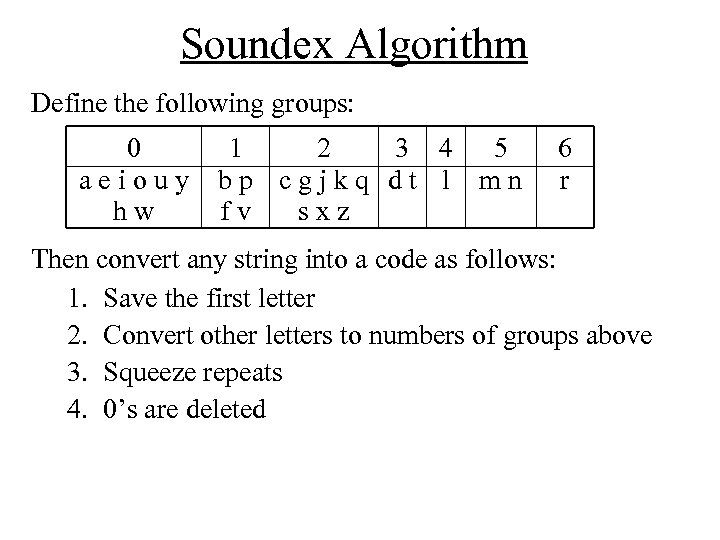

Soundex Algorithm

Define the following groups:

0: a e i o u y h w
1: b p f v
2: c g j k q s x z
3: d t
4: l
5: m n
6: r

Then convert any string into a code as follows:
1. Save the first letter
2. Convert the other letters to the numbers of the groups above
3. Squeeze repeats
4. Delete the 0’s

Soundex(Mississippi): M0220220110 -> M0202010 -> M221

Two strings are “similar” if they have the same Soundex code.
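The four steps above can be sketched directly in Python. This follows the simplified procedure on the slide (no padding or truncation to a fixed length, as some Soundex variants do), and assumes a non-empty alphabetic input; the names `GROUPS`, `CODE`, and `soundex` are chosen for this example.

```python
# Letter groups from the slide, keyed by their digit.
GROUPS = {
    "0": "aeiouyhw",
    "1": "bpfv",
    "2": "cgjkqsxz",
    "3": "dt",
    "4": "l",
    "5": "mn",
    "6": "r",
}
CODE = {ch: digit for digit, letters in GROUPS.items() for ch in letters}

def soundex(word):
    """Simplified Soundex code following the four steps on the slide."""
    word = word.lower()
    first, rest = word[0], word[1:]                      # 1. save the first letter
    digits = [CODE[ch] for ch in rest if ch in CODE]     # 2. letters -> group numbers
    squeezed = [d for i, d in enumerate(digits)
                if i == 0 or d != digits[i - 1]]         # 3. squeeze repeats
    kept = [d for d in squeezed if d != "0"]             # 4. delete the 0's
    return first.upper() + "".join(kept)

print(soundex("Mississippi"))
print(soundex("Misisipi"))
```

Both calls produce the same code, so under this definition the misspelling “Misisipi” counts as similar to “Mississippi”.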

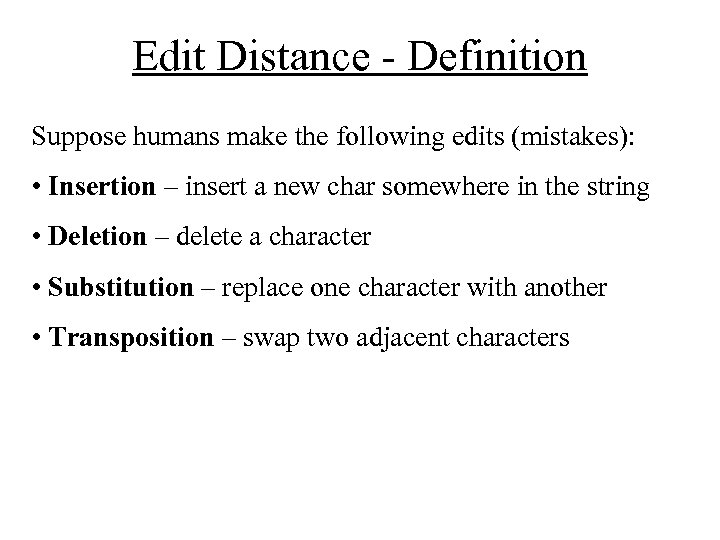

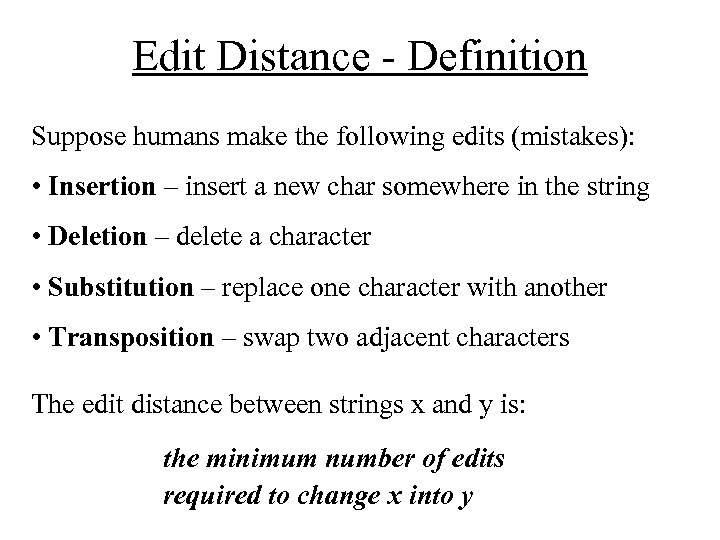

Edit Distance - Definition

Suppose humans make the following edits (mistakes):
• Insertion – insert a new character somewhere in the string
• Deletion – delete a character
• Substitution – replace one character with another
• Transposition – swap two adjacent characters

The edit distance between strings x and y is the minimum number of edits required to change x into y.

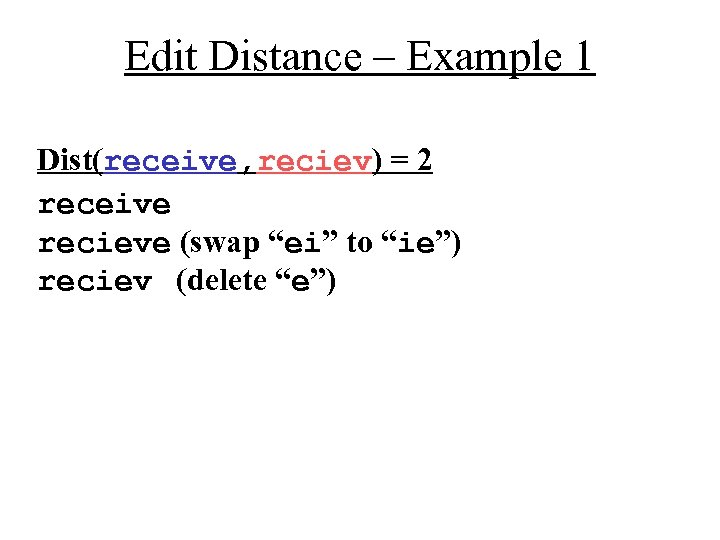

Edit Distance – Example 1

Dist(receive, reciev) = 2

receive -> recieve (swap “ei” to “ie”) -> reciev (delete “e”)

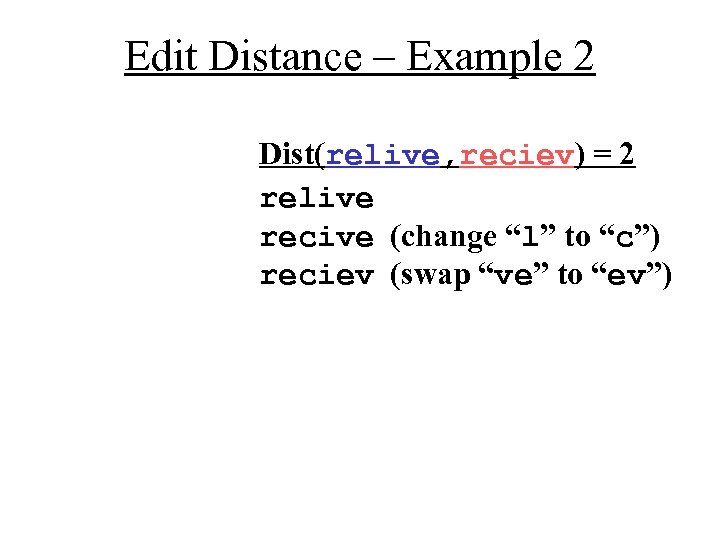

Edit Distance – Example 2

Dist(relive, reciev) = 2

relive -> recive (change “l” to “c”) -> reciev (swap “ve” to “ev”)

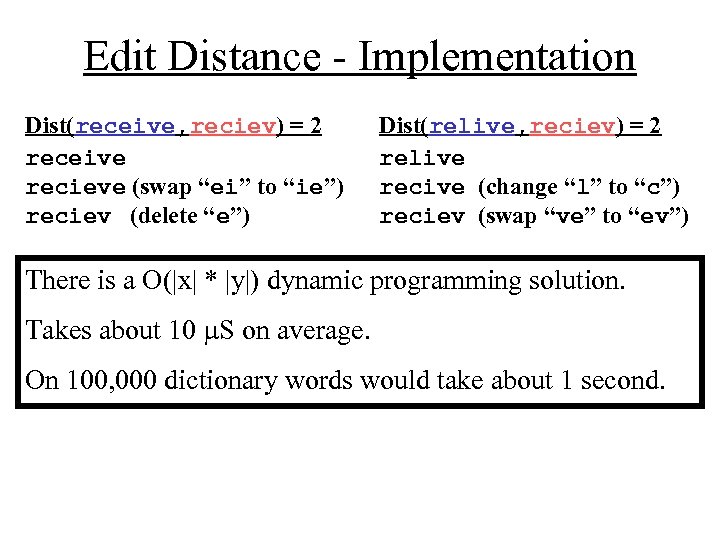

Edit Distance - Implementation

There is an O(|x| * |y|) dynamic programming solution. It takes about 10 μs per comparison on average, so comparing a word against 100,000 dictionary words would take about 1 second.
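One common way to write the O(|x| * |y|) dynamic program is the “optimal string alignment” variant of Damerau-Levenshtein distance, sketched below. Whether the talk used exactly this variant is an assumption; it does cover the four edit types from the definition and reproduces both worked examples.

```python
def edit_distance(x, y):
    """Edit distance with insertion, deletion, substitution, and adjacent
    transposition (optimal string alignment variant), in O(|x| * |y|) time."""
    m, n = len(x), len(y)
    # d[i][j] = distance between the first i chars of x and first j chars of y
    d = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(m + 1):
        d[i][0] = i  # delete all i characters
    for j in range(n + 1):
        d[0][j] = j  # insert all j characters
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            cost = 0 if x[i - 1] == y[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution (or match)
            )
            # transposition of two adjacent characters
            if i > 1 and j > 1 and x[i - 1] == y[j - 2] and x[i - 2] == y[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[m][n]

print(edit_distance("receive", "reciev"))
print(edit_distance("relive", "reciev"))
```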

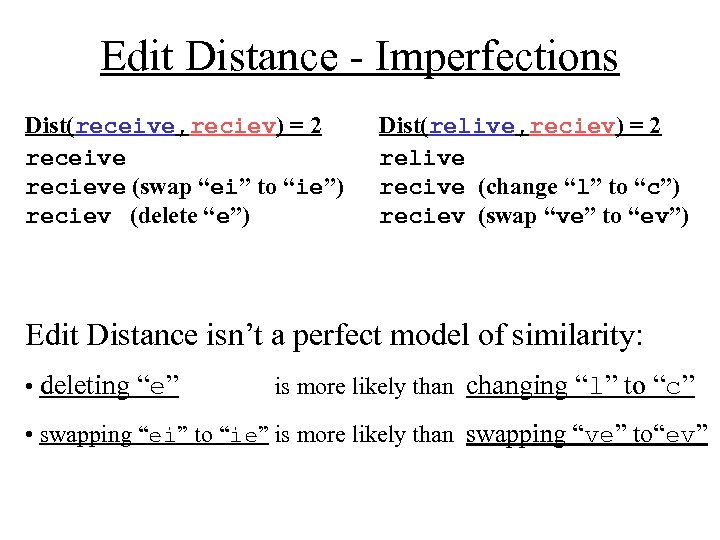

Edit Distance - Imperfections

Dist(receive, reciev) = 2: receive -> recieve (swap “ei” to “ie”) -> reciev (delete “e”)
Dist(relive, reciev) = 2: relive -> recive (change “l” to “c”) -> reciev (swap “ve” to “ev”)

Edit distance isn’t a perfect model of similarity:
• deleting “e” is more likely than changing “l” to “c”
• swapping “ei” to “ie” is more likely than swapping “ve” to “ev”

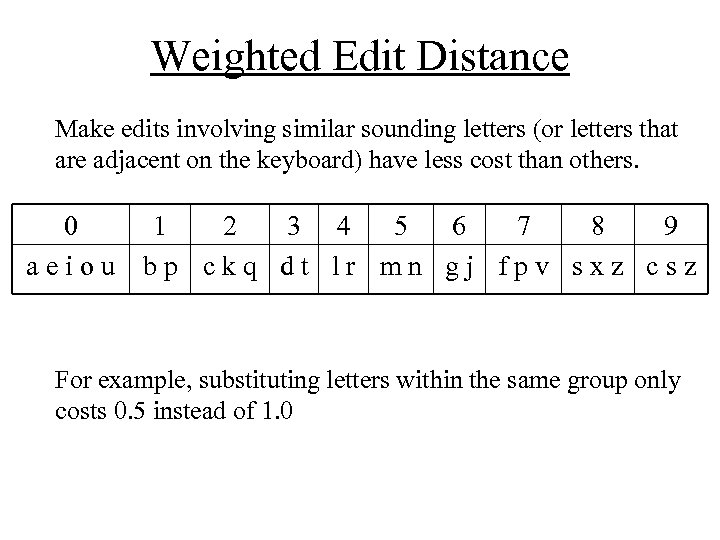

Weighted Edit Distance

Make edits involving similar-sounding letters (or letters that are adjacent on the keyboard) cost less than others.

0: aeiou
1: bp
2: ckq
3: dt
4: lr
5: mn
6: gj
7: fpv
8: sxz
9: csz

For example, substituting letters within the same group costs only 0.5 instead of 1.0.
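A small sketch of how such a group table could feed into the dynamic program. It assumes insertions and deletions keep a cost of 1.0 and leaves out transpositions and keyboard adjacency for brevity; only the 0.5 within-group substitution cost comes from the slide.

```python
# Letter groups from the slide; a letter may belong to more than one group.
GROUPS = ["aeiou", "bp", "ckq", "dt", "lr", "mn", "gj", "fpv", "sxz", "csz"]

def sub_cost(a, b):
    """0 for identical letters, 0.5 within a shared group, 1.0 otherwise."""
    if a == b:
        return 0.0
    if any(a in g and b in g for g in GROUPS):
        return 0.5
    return 1.0

def weighted_edit_distance(x, y):
    """Levenshtein-style DP with group-aware substitution costs."""
    m, n = len(x), len(y)
    d = [[0.0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        d[i][0] = float(i)
    for j in range(1, n + 1):
        d[0][j] = float(j)
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            d[i][j] = min(
                d[i - 1][j] + 1.0,                               # deletion
                d[i][j - 1] + 1.0,                               # insertion
                d[i - 1][j - 1] + sub_cost(x[i - 1], y[j - 1]),  # substitution
            )
    return d[m][n]

print(weighted_edit_distance("kat", "cat"))  # k and c share a group
print(weighted_edit_distance("hat", "cat"))  # h and c do not
```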

Ranking Candidates

• The single-edit assumption (true about 80% of the time)
  - all candidates have an edit distance <= 1
  - assign weights/probabilities to each edit
  - (Kernighan 90 learns these probabilities automatically)
• Ranking multiple-edit candidates
  - weighted edit distance is the most popular (used by aspell on Unix)

Difficulties for Spelling Correction

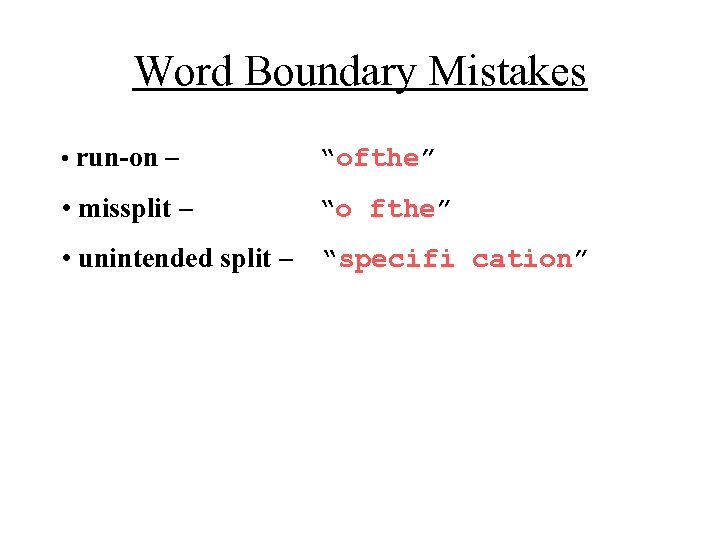

Word Boundary Mistakes

• run-on – “ofthe”
• missplit – “o fthe”
• unintended split – “specifi cation”

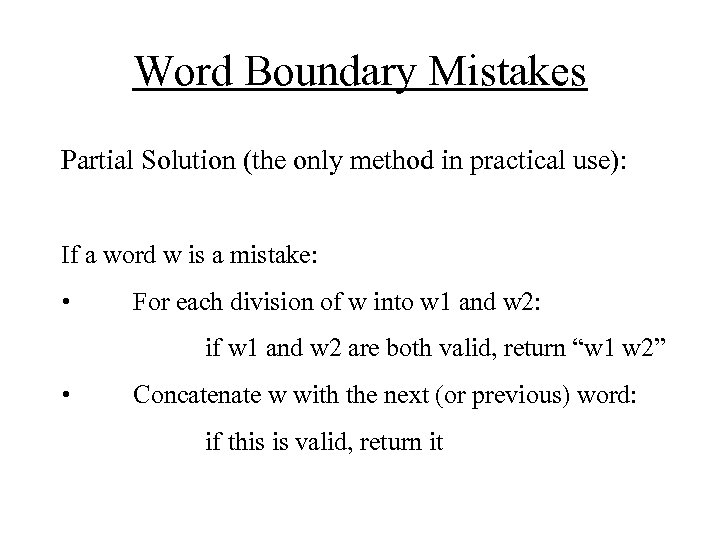

Word Boundary Mistakes

Partial solution (the only method in practical use). If a word w is a mistake:
• For each division of w into w1 and w2: if w1 and w2 are both valid, return “w1 w2”
• Concatenate w with the next (or previous) word: if this is valid, return it
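The partial solution above might look like this in Python. The function name `fix_word_boundary`, the toy dictionary, and the choice to collect all suggestions (rather than return the first) are assumptions for illustration; only joining with the next word is shown, and the previous word would be handled the same way.

```python
def fix_word_boundary(w, next_word, dictionary):
    """Suggest fixes for a mistaken word w by splitting it or joining it
    with the following word. Returns a list of suggestions (possibly empty)."""
    suggestions = []
    # Run-ons: try every division of w into two non-empty parts.
    for i in range(1, len(w)):
        w1, w2 = w[:i], w[i:]
        if w1 in dictionary and w2 in dictionary:
            suggestions.append(w1 + " " + w2)
    # Unintended splits: try concatenating w with the next word.
    if next_word is not None and (w + next_word) in dictionary:
        suggestions.append(w + next_word)
    return suggestions

D = {"of", "the", "specification"}  # toy dictionary
print(fix_word_boundary("ofthe", None, D))
print(fix_word_boundary("specifi", "cation", D))
```

This costs only O(|w|) dictionary lookups per mistaken word, which is part of why it is practical, but it assumes the boundary error is the only error in the word.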

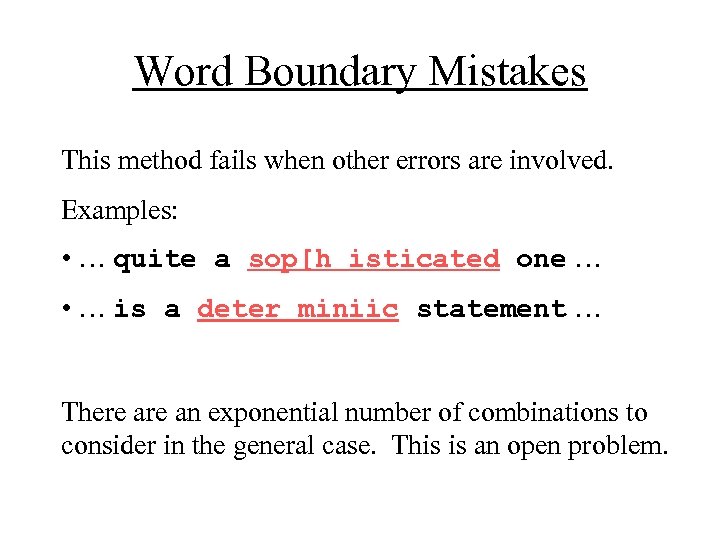

Word Boundary Mistakes

This method fails when other errors are involved. Examples:
• … quite a sop[h isticated one …
• … is a deter miniic statement …

There are an exponential number of combinations to consider in the general case. This is an open problem.

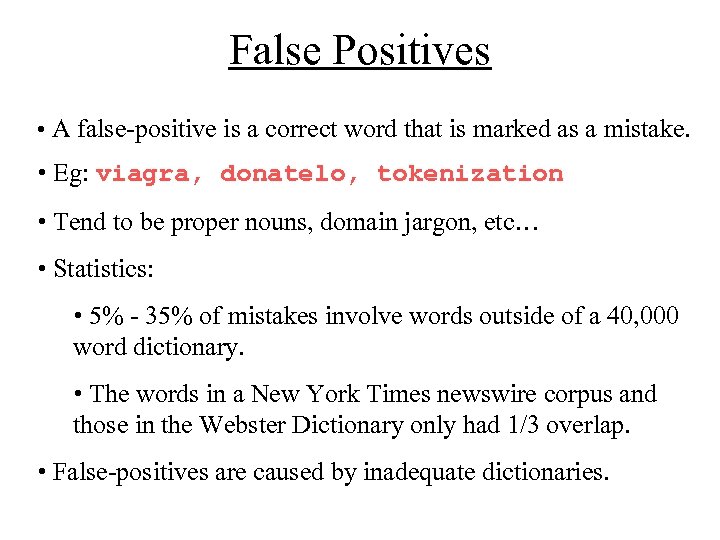

False Positives

• A false positive is a correct word that is marked as a mistake
• E.g. viagra, donatelo, tokenization
• They tend to be proper nouns, domain jargon, etc.
• Statistics:
  - 5% - 35% of mistakes involve words outside of a 40,000-word dictionary
  - The words in a New York Times newswire corpus and those in the Webster dictionary had only 1/3 overlap
• False positives are caused by inadequate dictionaries

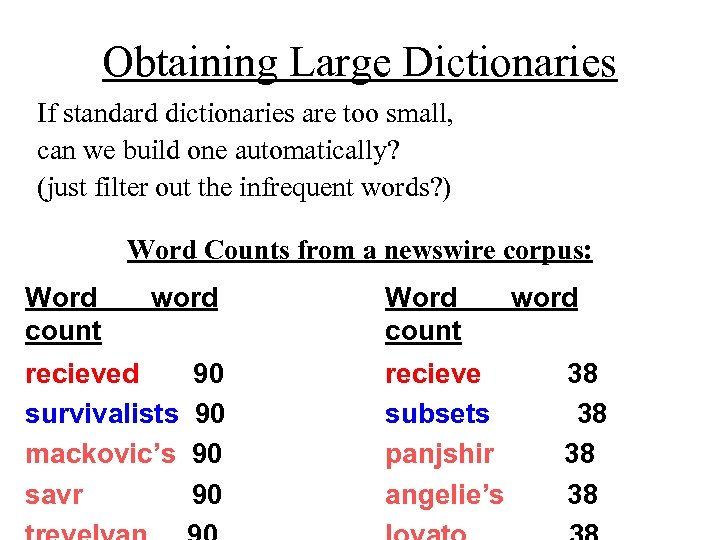

Obtaining Large Dictionaries

If standard dictionaries are too small, can we build one automatically? (Just filter out the infrequent words?)

Word counts from a newswire corpus:
• Count 90: recieved, survivalists, mackovic’s, savr
• Count 38: recieve, subsets, panjshir, angelie’s

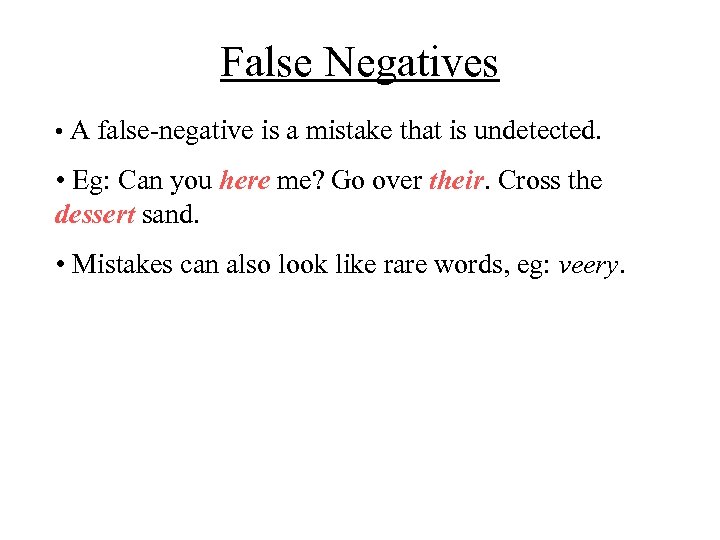

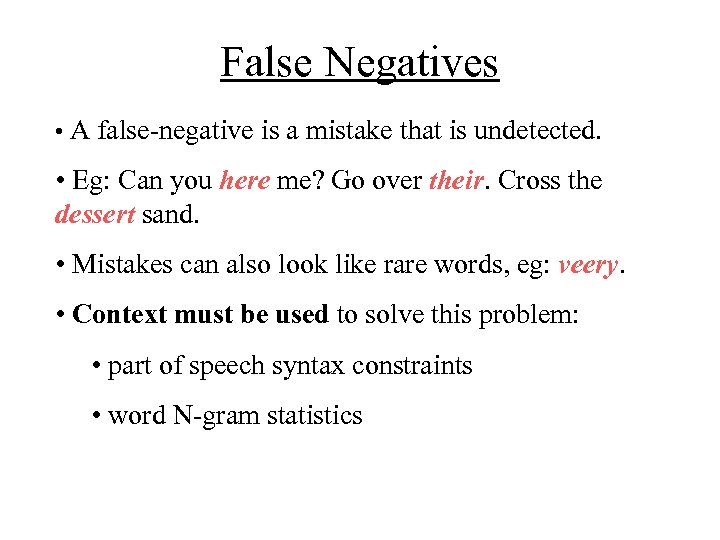

False Negatives

• A false negative is a mistake that goes undetected
• E.g. “Can you here me?”, “Go over their.”, “Cross the dessert sand.”
• Mistakes can also look like rare words, e.g. veery
• Context must be used to solve this problem:
  - part-of-speech syntax constraints
  - word N-gram statistics

Lacking Features of Earlier Work

• Handling multiple errors / quantifying “error likelihood”
• Use of large dictionaries
• Use of word statistics
• Use of word context
• Quantitative ways of combining all of the above!

The Noisy Channel Model of Spelling Correction

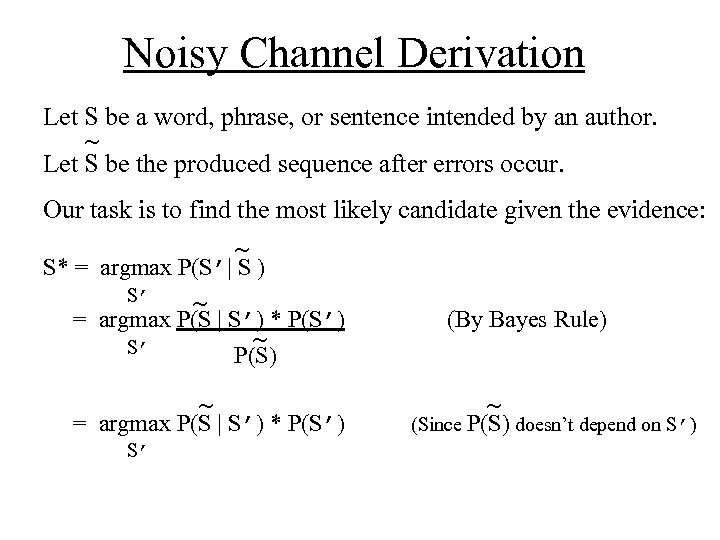

Noisy Channel Derivation

Let $S$ be a word, phrase, or sentence intended by an author.
Let $\tilde{S}$ be the produced sequence after errors occur.

Our task is to find the most likely candidate given the evidence:

$$
\begin{aligned}
S^* &= \arg\max_{S'} P(S' \mid \tilde{S}) \\
    &= \arg\max_{S'} \frac{P(\tilde{S} \mid S') \, P(S')}{P(\tilde{S})} && \text{(by Bayes' rule)} \\
    &= \arg\max_{S'} P(\tilde{S} \mid S') \, P(S') && \text{(since } P(\tilde{S}) \text{ doesn't depend on } S'\text{)}
\end{aligned}
$$

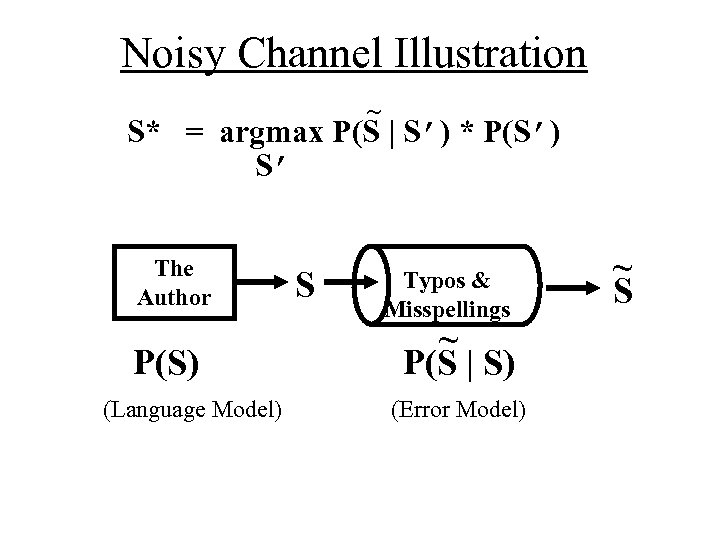

Noisy Channel Illustration

$$S^* = \arg\max_{S'} P(\tilde{S} \mid S') \, P(S')$$

The Author, $P(S)$ (Language Model) -> $S$ -> Typos & Misspellings, $P(\tilde{S} \mid S)$ (Error Model) -> $\tilde{S}$

Noisy Channel Systems (1)

First application to spelling correction: Kernighan, Church, Gale ’90.
• Language model used: unigram model
• Error model used: learned probabilities of single edits automatically

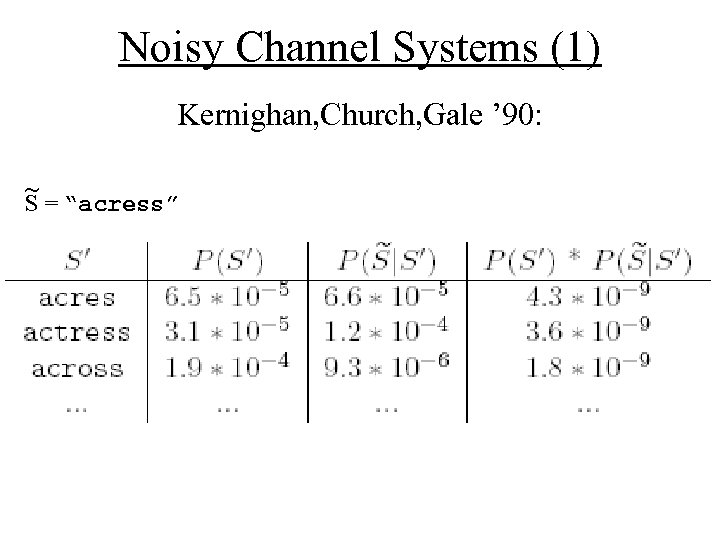

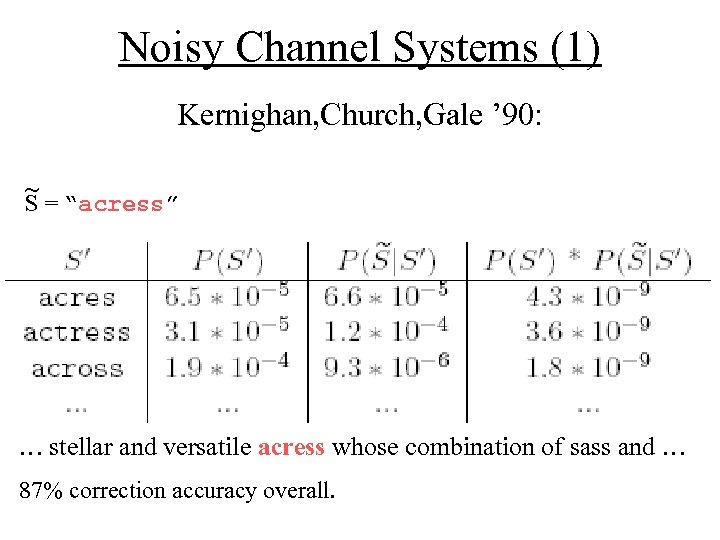

Noisy Channel Systems (1)

Kernighan, Church, Gale ’90:

$\tilde{S}$ = “acress”

… stellar and versatile acress whose combination of sass and …

87% correction accuracy overall.

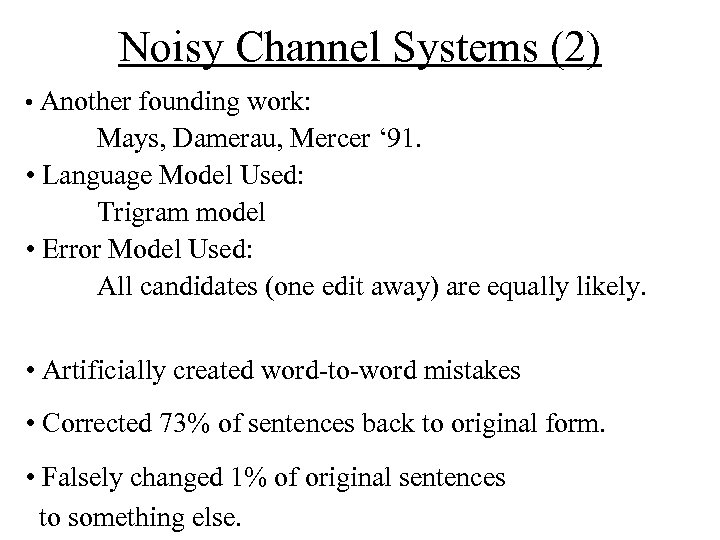

Noisy Channel Systems (2)

• Another founding work: Mays, Damerau, Mercer ’91
• Language model used: trigram model
• Error model used: all candidates (one edit away) are equally likely
• Artificially created word-to-word mistakes
• Corrected 73% of sentences back to their original form
• Falsely changed 1% of original sentences to something else

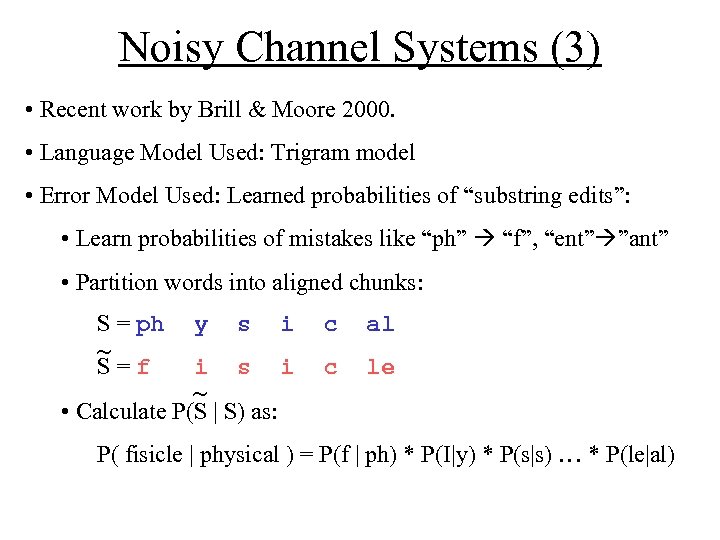

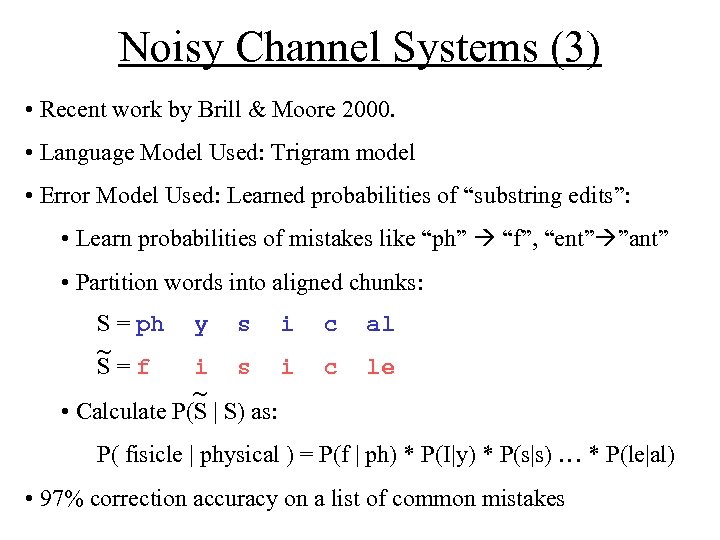

Noisy Channel Systems (3)

• Recent work by Brill & Moore 2000
• Language model used: trigram model
• Error model used: learned probabilities of “substring edits”:
  - Learn probabilities of mistakes like “ph” -> “f”, “ent” -> “ant”
  - Partition words into aligned chunks:
      $S$ = ph | y | s | i | c | al
      $\tilde{S}$ = f | i | s | i | c | le
  - Calculate $P(\tilde{S} \mid S)$ as:
      P( fisicle | physical ) = P(f | ph) * P(i | y) * P(s | s) … * P(le | al)
• 97% correction accuracy on a list of common mistakes
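The product over aligned chunks can be illustrated as below for one fixed alignment. The probability values in `CHUNK_PROB` are invented placeholders, not numbers from Brill & Moore; a real model would learn them from pairs of misspellings and corrections.

```python
import math

# Hypothetical P(typed chunk | intended chunk) values, keyed as
# (intended, typed). These numbers are made up for illustration only.
CHUNK_PROB = {
    ("ph", "f"): 0.1,
    ("y", "i"): 0.2,
    ("s", "s"): 0.9,
    ("i", "i"): 0.9,
    ("c", "c"): 0.9,
    ("al", "le"): 0.05,
}

def error_prob(aligned_chunks):
    """P(typed | intended) for one alignment: the product of chunk probabilities."""
    return math.prod(CHUNK_PROB[pair] for pair in aligned_chunks)

# physical -> fisicle, partitioned as on the slide.
alignment = [("ph", "f"), ("y", "i"), ("s", "s"),
             ("i", "i"), ("c", "c"), ("al", "le")]
p = error_prob(alignment)
print(p)
```

In practice the products become very small, so implementations usually sum log-probabilities instead of multiplying raw probabilities.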

Noisy Channel Systems (4)

• More recent work by Toutanova & Moore 2002
• Language model: unigram model
• Error model: phonetic model combined with previous work
  - Convert all letter strings to phone strings first
  - Learn probabilities of phonetic mistakes like “EH” -> “AH”
  - Linearly combine this model with the previous model
• Reduced the error rate by 24%

My Implementation

My Noisy Channel System

• Language model: bigram model
• Error model: two-phase model
  1. First the user spells the intended word (using a phonetic model, similar to Brill & Moore 2000)
  2. Then the user types the spelled word (using a typographical model of my own)

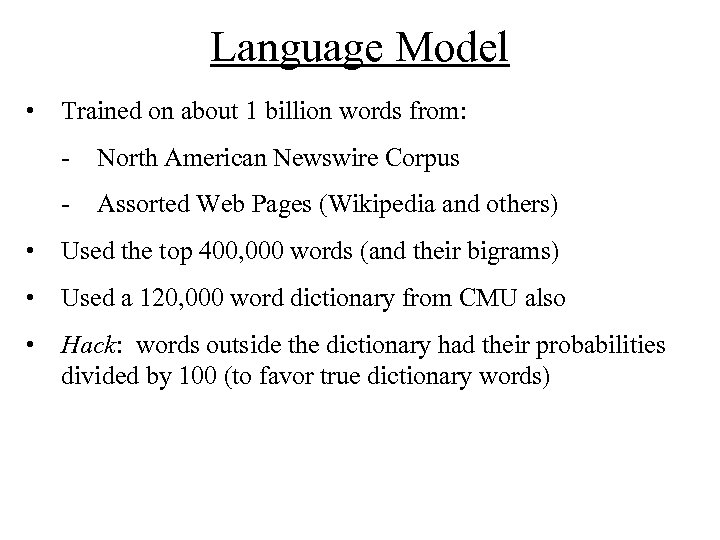

Language Model

• Trained on about 1 billion words from:
  - North American Newswire Corpus
  - Assorted web pages (Wikipedia and others)
• Used the top 400,000 words (and their bigrams)
• Also used a 120,000-word dictionary from CMU
• Hack: words outside the dictionary had their probabilities divided by 100 (to favor true dictionary words)

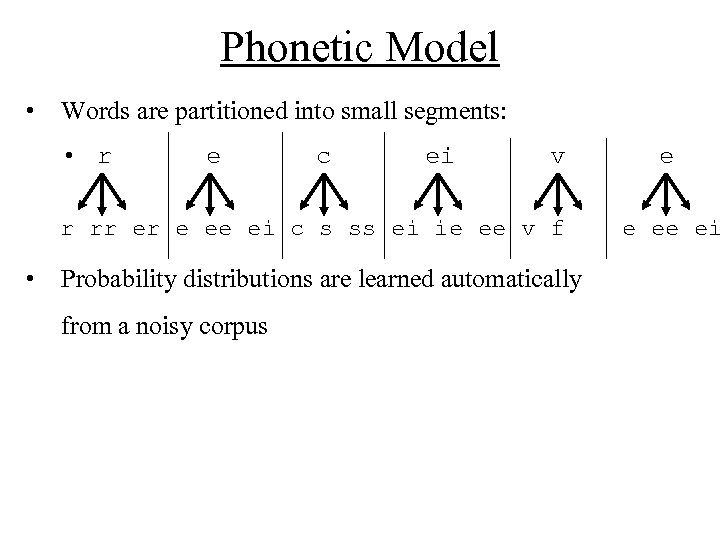

Phonetic Model

• Words are partitioned into small segments, each with alternative spellings:
    r  -> r, rr, er
    e  -> e, ee, ei
    c  -> c, s, ss
    ei -> ei, ie, ee
    v  -> v, f
• Probability distributions over these alternatives are learned automatically from a noisy corpus

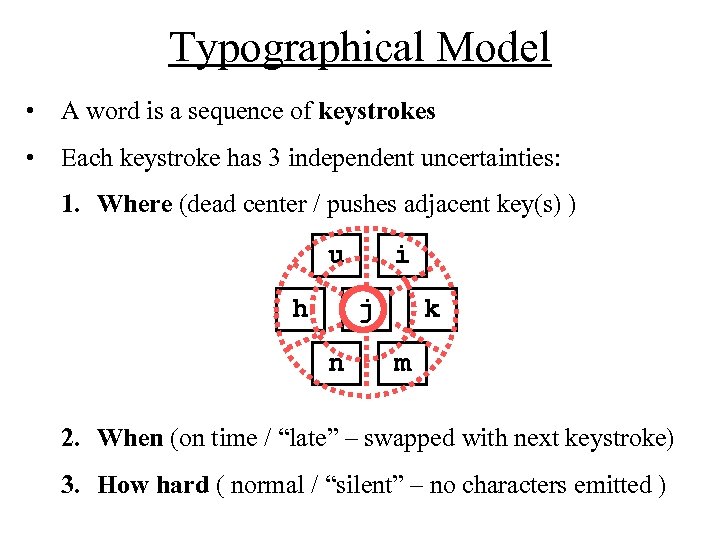

Typographical Model

• A word is a sequence of keystrokes
• Each keystroke has 3 independent uncertainties:
  1. Where (dead center / pushes adjacent key(s); e.g. the keys around “j” are u, i, h, k, n, m)
  2. When (on time / “late” – swapped with next keystroke)
  3. How hard (normal / “silent” – no characters emitted)
• There are 3 corresponding probabilities to set:
  1. P( OFF_CENTER )
  2. P( LATE_STROKE )
  3. P( SILENT_STROKE )

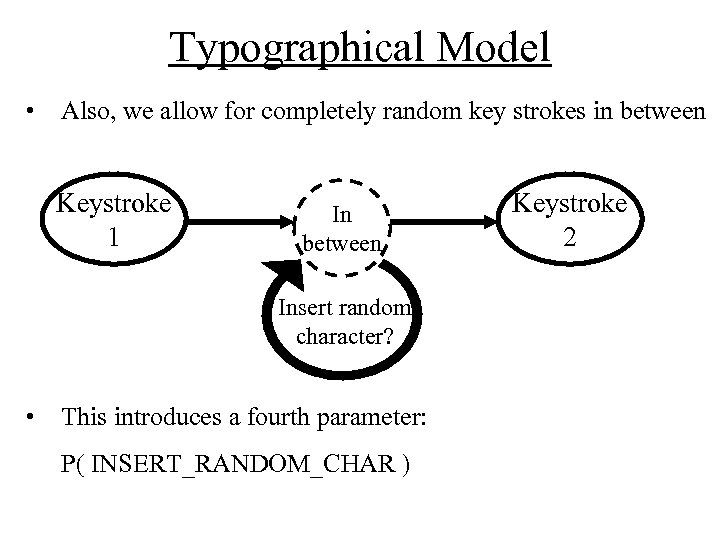

Typographical Model

• Also, we allow for completely random keystrokes in between:
    Keystroke 1 -> (in between: insert random character?) -> Keystroke 2
• This introduces a fourth parameter: P( INSERT_RANDOM_CHAR )
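To make the four parameters concrete, here is a sketch that runs the model forward as a typo simulator. It is a simplified reading of the slides, not the talk's implementation: an off-center stroke is replaced by a single adjacent key, the `ADJACENT` map only covers the “j” key named above, and the function name and argument order are assumptions.

```python
import random
import string

# Keyboard neighbours; only "j" is filled in, other keys would need entries.
ADJACENT = {"j": "uihknm"}
ALPHABET = string.ascii_lowercase

def noisy_type(word, p_off_center, p_late, p_silent, p_insert, rng):
    """Simulate typing `word` under the four-parameter typographical model."""
    keys = list(word)
    # "When": a late keystroke is swapped with the next one.
    i = 0
    while i < len(keys) - 1:
        if rng.random() < p_late:
            keys[i], keys[i + 1] = keys[i + 1], keys[i]
            i += 2
        else:
            i += 1
    out = []
    for idx, key in enumerate(keys):
        # A random character may be inserted in between two keystrokes.
        if idx > 0 and rng.random() < p_insert:
            out.append(rng.choice(ALPHABET))
        # "How hard": a silent keystroke emits nothing.
        if rng.random() < p_silent:
            continue
        # "Where": an off-center keystroke hits an adjacent key instead.
        if key in ADJACENT and rng.random() < p_off_center:
            key = rng.choice(ADJACENT[key])
        out.append(key)
    return "".join(out)

rng = random.Random(0)
print(noisy_type("white", 0.0, 0.0, 0.0, 0.0, rng))  # no noise: unchanged
print(noisy_type("the", 0.0, 1.0, 0.0, 0.0, rng))    # every stroke late
```

A spelling corrector needs the reverse direction, the probability of an observed string given the intended one, but sampling from the model is a quick way to check that the parameters produce plausible typos.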

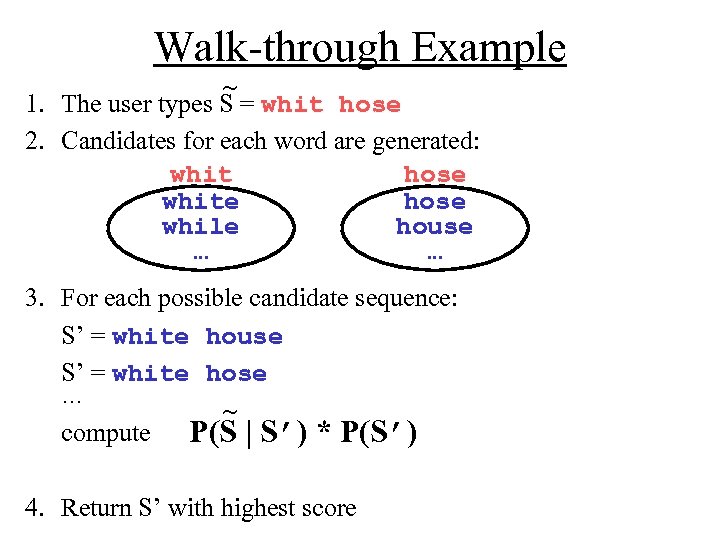

Walk-through Example

1. The user types $\tilde{S}$ = whit hose
2. Candidates for each word are generated:
     whit: white, while, …
     hose: hose, house, …
3. For each possible candidate sequence (S’ = white house, S’ = white hose, …), compute $P(\tilde{S} \mid S') \, P(S')$
4. Return the S’ with the highest score
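The four steps can be sketched as a brute-force search over candidate sequences. All probabilities below are invented for illustration, and the sketch assumes that errors in different words are independent, so the error model is a product of per-word terms; the bigram table stands in for a trained language model.

```python
from itertools import product

typed = ["whit", "hose"]

# Step 2: candidates per typed word (keeping the typed word itself as an option).
CANDIDATES = {"whit": ["whit", "white", "while"], "hose": ["hose", "house"]}

# Hypothetical error model P(typed | intended); made-up numbers.
P_ERR = {
    ("whit", "whit"): 0.9, ("whit", "white"): 0.05, ("whit", "while"): 0.01,
    ("hose", "hose"): 0.9, ("hose", "house"): 0.05,
}

# Hypothetical bigram language model P(S'); made-up numbers.
P_LM = {
    ("whit", "hose"): 1e-9, ("whit", "house"): 1e-9,
    ("white", "hose"): 1e-7, ("white", "house"): 1e-4,
    ("while", "hose"): 1e-8, ("while", "house"): 1e-7,
}

def score(candidate_seq):
    """P(typed | S') * P(S') for one candidate sequence S'."""
    p_err = 1.0
    for t, c in zip(typed, candidate_seq):
        p_err *= P_ERR[(t, c)]
    return p_err * P_LM[tuple(candidate_seq)]

# Steps 3 and 4: score every combination and keep the best one.
sequences = list(product(*(CANDIDATES[t] for t in typed)))
best = max(sequences, key=score)
print(best)
```

Enumerating every combination grows exponentially with sentence length, which is one reason the conclusions describe the approach as needing effort to run efficiently.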

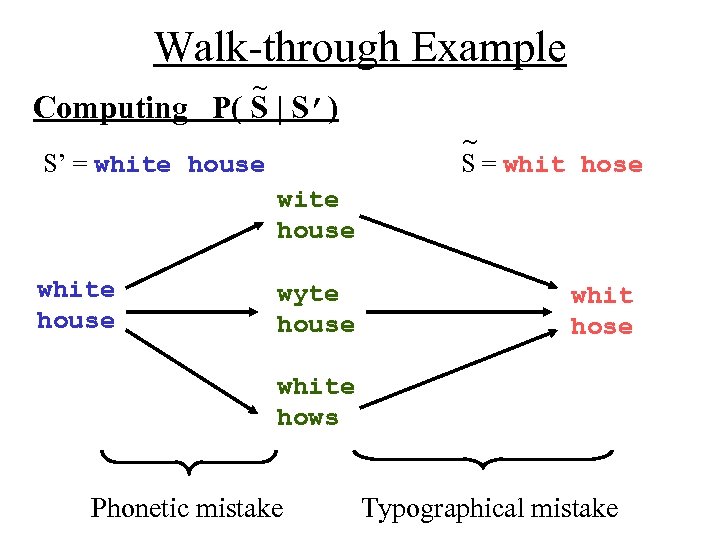

Walk-through Example: Computing $P(\tilde{S} \mid S')$

S’ = white house -> (phonetic mistake) -> spelled form, e.g. wyte house, whit hose, white hows -> (typographical mistake) -> $\tilde{S}$ = whit hose

Demonstration

Conclusions

Open problems:
• word boundary mistakes
• construction of dictionaries from noisy corpora
• clean algorithms for candidate generation/ranking

Noisy channel model:
• breaks the problem down into a language model and an error model
• requires influences to be computed as probabilities
• inherently inefficient – takes effort to reduce the runtime

Thank You

Sanjoy – for advising me throughout this project
Russell and Amin – for reviewing my paper and being on my committee

Questions?
