- Number of slides: 31

Speech-to-Speech MT: JANUS, C-STAR/Nespole!

Lori Levin, Alon Lavie, Bob Frederking

LTI Immigration Course, September 11, 2000

Outline

- Problems in Speech-to-Speech MT
- The JANUS Approach
- The C-STAR/NESPOLE! Interlingua (IF)
- System Design and Engineering
- Evaluation and User Studies
- Open Problems, Current and Future Research

JANUS Speech Translation

- Translation via an interlingua representation
- Main translation engine is rule-based
- Semantic grammars
- Modular grammar design
- System engineered for multiple domains
- Incorporate alternative translation engines

The C-STAR Travel Planning Domain

General Scenario:

- Dialogue between one traveler and one or more travel agents
- Focus on making travel arrangements for a personal leisure trip (not business)
- Free spontaneous speech

The C-STAR Travel Planning Domain

Natural breakdown into several sub-domains:

- Hotel Information and Reservation
- Transportation Information and Reservation
- Information about Sights and Events
- General Travel Information
- Cross Domain

Semantic Grammars

- Describe structure of semantic concepts instead of syntactic constituency of phrases
- Well suited for task-oriented dialogue containing many fixed expressions
- Appropriate for spoken language - often disfluent and syntactically ill-formed
- Faster to develop reasonable coverage for limited domains

![Semantic Grammars: Hotel Reservation Example](https://present5.com/presentation/fddb21bbd571a05ef68566c4ad3ca20d/image-7.jpg)

Semantic Grammars

Hotel Reservation Example:

- Input: we have two hotels available
- Parse Tree: [give-information+availability+hotel] (we have [hotel-type] ([quantity=] (two) [hotel] (hotels)) available)
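
The parse tree in the example above can be held as a small nested data structure. The following is only a sketch: the `Node` class and its method names are assumptions made for illustration, and the nesting of `[quantity=]` and `[hotel]` under `[hotel-type]` is inferred from the bracket notation rather than taken from the JANUS code.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Node:
    """A semantic-grammar parse node (hypothetical class): a concept label plus children."""
    label: str
    children: List[Union["Node", str]] = field(default_factory=list)

    def render(self) -> str:
        """Render the node in the bracketed notation used on the slide."""
        parts = [c.render() if isinstance(c, Node) else c for c in self.children]
        return f"{self.label} ({' '.join(parts)})"

    def words(self) -> List[str]:
        """Return the input words covered by this node, left to right."""
        out: List[str] = []
        for c in self.children:
            out.extend(c.words() if isinstance(c, Node) else [c])
        return out


# The hotel reservation example, with the nesting assumed as described above.
tree = Node("[give-information+availability+hotel]", [
    "we", "have",
    Node("[hotel-type]", [
        Node("[quantity=]", ["two"]),
        Node("[hotel]", ["hotels"]),
    ]),
    "available",
])

print(tree.render())
print(tree.words())
```

Note that the node labels are semantic concepts (a quantity, a hotel type) rather than syntactic categories such as NP or VP, which is the point the Semantic Grammars slide makes.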

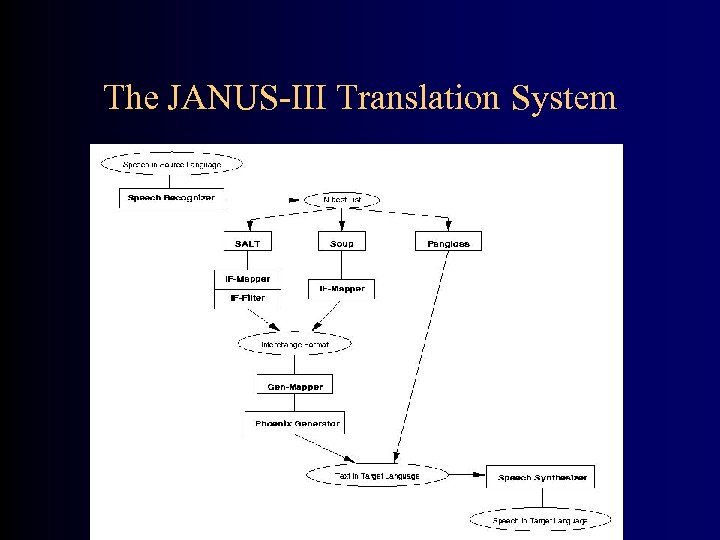

The JANUS-III Translation System

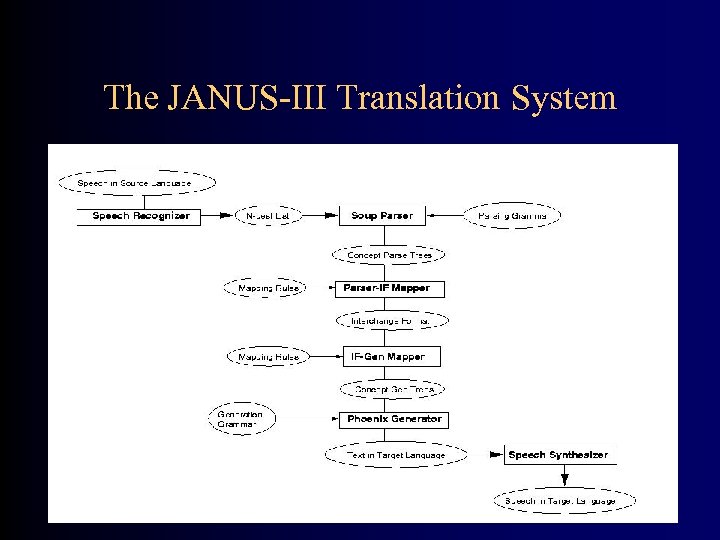

The JANUS-III Translation System

The SOUP Parser

- Specifically designed to parse spoken language using domain-specific semantic grammars
- Robust - can skip over disfluencies in input
- Stochastic - probabilistic CFG encoded as a collection of RTNs with arc probabilities
- Top-Down - parses from top-level concepts of the grammar down to matching of terminals
- Chart-based - dynamic matrix of parse DAGs indexed by start and end positions and head category
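
Two of the properties listed above, a chart indexed by start position, end position and head category, and the ability to skip over disfluencies, can be illustrated with a toy example. This is not SOUP's algorithm: it does no top-down RTN traversal and uses no probabilities. The grammar contents and the function names `build_chart` and `robust_parse` are assumptions made for this sketch.

```python
from typing import Dict, List, Tuple

# Hypothetical toy grammar: each concept maps to the word sequences it matches.
GRAMMAR: Dict[str, List[List[str]]] = {
    "[quantity=]": [["two"], ["three"]],
    "[hotel]": [["hotel"], ["hotels"]],
    "[available]": [["available"]],
}

Chart = Dict[Tuple[int, int, str], List[str]]


def build_chart(tokens: List[str]) -> Chart:
    """Record every concept match, keyed by (start, end, concept)."""
    chart: Chart = {}
    for concept, patterns in GRAMMAR.items():
        for pattern in patterns:
            n = len(pattern)
            for start in range(len(tokens) - n + 1):
                if tokens[start:start + n] == pattern:
                    chart[(start, start + n, concept)] = pattern
    return chart


def robust_parse(tokens: List[str]) -> Tuple[List[str], List[str]]:
    """Scan left to right, keeping matched concepts and skipping other words."""
    chart = build_chart(tokens)
    concepts: List[str] = []
    skipped: List[str] = []
    pos = 0
    while pos < len(tokens):
        # Prefer the longest match that starts at this position, if any.
        spans = [(end, cat) for (start, end, cat) in chart if start == pos]
        if spans:
            end, cat = max(spans)
            concepts.append(cat)
            pos = end
        else:
            skipped.append(tokens[pos])  # uncovered word, e.g. a filler
            pos += 1
    return concepts, skipped


concepts, skipped = robust_parse("uh we have um two hotels available".split())
print(concepts)
print(skipped)
```

In this toy run the fillers "uh" and "um" are skipped along with the words the tiny grammar does not cover, while the covered concepts are still recovered.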

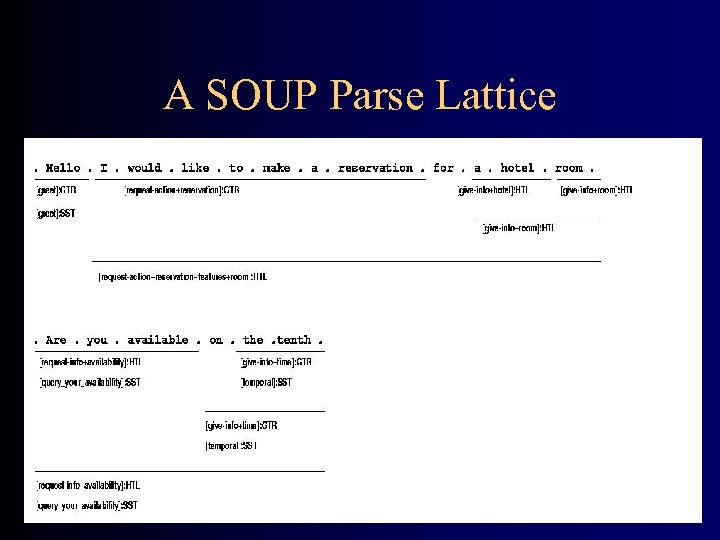

The SOUP Parser

- Supports parsing with large multiple domain grammars
- Produces a lattice of parse analyses headed by top-level concepts
- Disambiguation heuristics rank the analyses in the parse lattice and select a single best path through the lattice
- Graphical grammar editor

SOUP Disambiguation Heuristics

- Maximize coverage (of input)
- Minimize number of parse trees (fragmentation)
- Minimize number of parse tree nodes
- Minimize the number of wild-card matches
- Maximize the probability of parse trees
- Find sequence of domain tags with maximal probability given the input words: $P(T \mid W)$, where $T = t_1, t_2, \ldots, t_n$ is a sequence of domain tags
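
One way to combine the first five heuristics is as a lexicographic sort key, so that each criterion only breaks ties left by the previous one. The slide lists the heuristics but does not say how they are combined, so the strict priority order used here is an assumption, as are the `Analysis` fields and the sample numbers. The domain-tag criterion, choosing the tag sequence $T$ that maximizes $P(T \mid W)$, is left out of this sketch.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Analysis:
    """Summary statistics for one path through a parse lattice (hypothetical fields)."""
    name: str
    words_covered: int
    num_trees: int
    num_nodes: int
    num_wildcards: int
    log_prob: float


def rank_key(a: Analysis) -> Tuple[int, int, int, int, float]:
    """Sort key where smaller is better, so maximized quantities are negated."""
    return (-a.words_covered, a.num_trees, a.num_nodes, a.num_wildcards, -a.log_prob)


def best_analysis(candidates: List[Analysis]) -> Analysis:
    """Pick the candidate that wins under the assumed priority order."""
    return min(candidates, key=rank_key)


# Invented candidates for a five-word input.
candidates = [
    Analysis("fragmented", words_covered=5, num_trees=3, num_nodes=9, num_wildcards=0, log_prob=-4.0),
    Analysis("single-tree", words_covered=5, num_trees=1, num_nodes=6, num_wildcards=0, log_prob=-5.5),
    Analysis("partial", words_covered=3, num_trees=1, num_nodes=4, num_wildcards=0, log_prob=-2.0),
]
print(best_analysis(candidates).name)  # single-tree
```

Here "partial" loses on coverage despite its higher probability, and "fragmented" loses to "single-tree" on the number of parse trees.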

JANUS Generation Modules

Two alternative generation modules:

- Top-down context-free based generator - fast, used for English and Japanese
- GenKit - unification-based generator augmented with Morphe morphology module - used for German

Modular Grammar Design

- Grammar development separated into modules corresponding to sub-domains (Hotel, Transportation, Sights, General Travel, Cross Domain)
- Shared core grammar for lower-level concepts that are common to the various sub-domains (e.g. times, prices)
- Grammars can be developed independently (using shared core grammar)
- Shared and Cross-Domain grammars significantly reduce effort in expanding to new domains
- Separate grammar modules facilitate associating parses with domain tags - useful for multi-domain integration within the parser

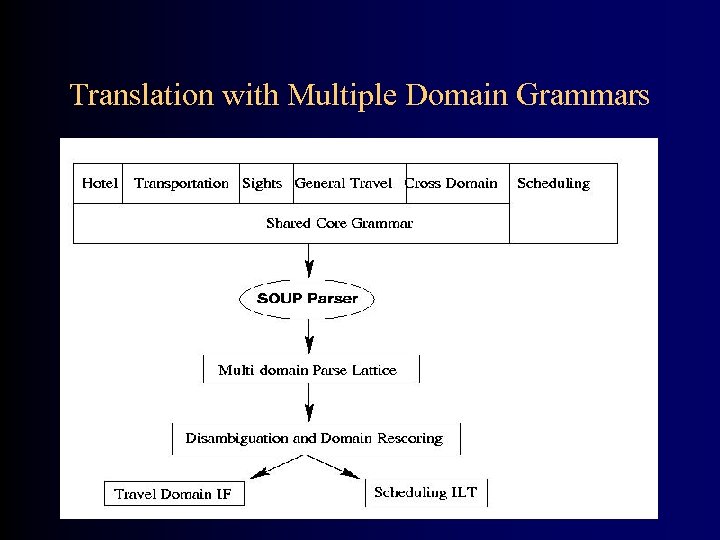

Translation with Multiple Domain Grammars

- Parser is loaded with all domain grammars
- Domain tag attached to grammar rules of each domain
- Previously developed grammars for other domains can also be incorporated
- Parser creates a parse lattice consisting of multiple analyses of the input into sequences of top-level domain concepts
- Parser disambiguation heuristics rank the analyses in the parse lattice and select a single best sequence of concepts
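
The combination of separate grammar modules, a shared core, and domain tags attached at load time can be sketched as follows. The module contents, the `Rule` type, the rule patterns, and the `[request-information+departure]` concept name are all hypothetical. Only the general arrangement follows the slides.

```python
from typing import Dict, List, NamedTuple


class Rule(NamedTuple):
    domain: str    # domain tag attached when the grammar is loaded
    concept: str   # top-level or shared concept
    pattern: str   # right-hand side, shown as an illustrative string only


# Hypothetical grammar modules, named after sub-domains from the slides.
MODULES: Dict[str, Dict[str, str]] = {
    "shared": {"[time]": "at NUMBER o'clock", "[price]": "NUMBER dollars"},
    "hotel": {"[give-information+availability+hotel]": "we have [hotel-type] available"},
    "transportation": {"[request-information+departure]": "when does [vehicle] leave"},
}


def load_grammars(modules: Dict[str, Dict[str, str]]) -> List[Rule]:
    """Merge all modules into one rule list, tagging each rule with its domain."""
    return [
        Rule(domain, concept, pattern)
        for domain, rules in modules.items()
        for concept, pattern in rules.items()
    ]


def rules_for(rules: List[Rule], domain: str) -> List[Rule]:
    """Rules relevant to `domain`: its own rules plus the shared core."""
    return [r for r in rules if r.domain in (domain, "shared")]


all_rules = load_grammars(MODULES)
for rule in rules_for(all_rules, "hotel"):
    print(rule.domain, rule.concept)
```

Because every rule carries its tag, an analysis built from those rules can be labelled with a domain, which is what the domain-tag disambiguation step needs.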

Translation with Multiple Domain Grammars

A SOUP Parse Lattice

Alternative Approaches: SALT - Statistical Analyzer for Language Translation

- Combines ML trainable and rule-based analysis methods for robustness and portability
- Rule-based parsing restricted to well-defined set of argument-level phrases and fragments
- Trainable classifiers (NN, Decision Trees, etc.) used to derive the DA (speech-act and concepts) from the sequence of argument concepts
- Phrase-level grammars are more robust and portable to new domains

![SALT Approach: Example](https://present5.com/presentation/fddb21bbd571a05ef68566c4ad3ca20d/image-19.jpg)

SALT Approach

- Example:
  - Input: we have two hotels available
  - Arg-SOUP: [exist] [hotel-type] [available]
  - SA-Predictor: give-information
  - Concept-Predictor: availability+hotel
- Predictors using SOUP argument concepts and input words
- Preliminary results are encouraging
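
The two-predictor arrangement in the example can be sketched with a deliberately simple stand-in classifier. The slides mention neural networks and decision trees. The voting scheme below is neither of those and is used only to keep the sketch self-contained. The training pairs, the `[desire]` argument, and the `request-action` and `reservation+hotel` labels are invented for illustration, and input words are not used as features here.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

ArgSeq = Tuple[str, ...]

# Hypothetical training pairs: argument-concept sequence -> (speech act, concepts).
TRAINING: List[Tuple[ArgSeq, str, str]] = [
    (("[exist]", "[hotel-type]", "[available]"), "give-information", "availability+hotel"),
    (("[hotel-type]", "[available]"), "give-information", "availability+hotel"),
    (("[desire]", "[hotel-type]"), "request-action", "reservation+hotel"),
]


class VotePredictor:
    """Stand-in classifier: each argument concept votes for labels it co-occurred with."""

    def __init__(self) -> None:
        self.votes: Dict[str, Counter] = defaultdict(Counter)

    def fit(self, sequences: List[ArgSeq], labels: List[str]) -> "VotePredictor":
        for seq, label in zip(sequences, labels):
            for arg in seq:
                self.votes[arg][label] += 1
        return self

    def predict(self, seq: ArgSeq) -> str:
        tally: Counter = Counter()
        for arg in seq:
            tally.update(self.votes.get(arg, Counter()))
        if not tally:
            raise ValueError("no known argument concepts in input")
        return tally.most_common(1)[0][0]


seqs = [s for s, _, _ in TRAINING]
sa_predictor = VotePredictor().fit(seqs, [sa for _, sa, _ in TRAINING])
concept_predictor = VotePredictor().fit(seqs, [c for _, _, c in TRAINING])


def analyze(args: ArgSeq) -> str:
    """Combine both predictions into a single domain-action label."""
    return f"{sa_predictor.predict(args)}+{concept_predictor.predict(args)}"


print(analyze(("[exist]", "[hotel-type]", "[available]")))
```

For the slide's input, the argument sequence produced by the phrase-level parser yields the same label that the full semantic grammar assigns, without needing a rule that covers the whole sentence.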

Alternative Approaches: MEMT Glossary-based Translation

- Translates directly into target language (no IF)
- Based on Pangloss translation system developed at CMU
- Uses a combination of EBMT, phrase glossaries and a bilingual dictionary
- English/German system operational
- Good fall-back for uncovered utterances
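
The fall-back role described in the last bullet can be sketched as a wrapper that tries the interlingua engine first and uses direct glossary and dictionary lookup only when the engine returns no result. The glossary and dictionary entries and the function names are hypothetical, and the EBMT component is omitted entirely. This shows the control flow only, not the Pangloss system.

```python
from typing import Callable, Dict, Optional

# Hypothetical English-to-German phrase glossary and word dictionary.
GLOSSARY: Dict[str, str] = {"thank you": "danke", "good morning": "guten Morgen"}
DICTIONARY: Dict[str, str] = {"hotel": "Hotel", "two": "zwei"}


def glossary_translate(text: str) -> str:
    """Direct translation: whole-phrase glossary first, then word by word."""
    if text in GLOSSARY:
        return GLOSSARY[text]
    # Unknown words are passed through unchanged.
    return " ".join(DICTIONARY.get(w, w) for w in text.split())


def translate(text: str, interlingua_engine: Callable[[str], Optional[str]]) -> str:
    """Use the interlingua engine when it covers the input, else fall back."""
    result = interlingua_engine(text)
    return result if result is not None else glossary_translate(text)


# An engine stub that covers nothing, so every input takes the fall-back path.
def covers_nothing(text: str) -> Optional[str]:
    return None


print(translate("thank you", covers_nothing))
```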

User Studies

- We conducted three sets of user tests
- Travel agent played by experienced system user
- Traveler is played by a novice and given five minutes of instruction
- Traveler is given a general scenario - e.g., plan a trip to Heidelberg
- Communication only via ST system, multi-modal interface and muted video connection
- Data collected used for system evaluation, error analysis and then grammar development

System Evaluation Methodology

- End-to-end evaluations conducted at the SDU (sentence) level
- Multiple bilingual graders compare the input with translated output and assign a grade of: Perfect, OK or Bad
- OK = meaning of SDU comes across
- Perfect = OK + fluent output
- Bad = translation incomplete or incorrect
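
The grading scheme above could be tallied along the following lines. The slide does not say how the graders' labels are combined, so the majority vote, the rule that ties go to the worse grade, and the derived "Acceptable" figure (Perfect plus OK) are assumptions of this sketch. The sample grades are invented.

```python
from collections import Counter
from typing import Dict, List

GRADES = ("Perfect", "OK", "Bad")  # ordered best to worst


def majority_grade(grades: List[str]) -> str:
    """Combine several graders' labels for one SDU; ties go to the worse grade."""
    counts = Counter(grades)
    top = max(counts.values())
    tied = [g for g in GRADES if counts[g] == top]
    return tied[-1]


def summarize(sdu_grades: List[List[str]]) -> Dict[str, float]:
    """Percentage of SDUs per grade, plus 'Acceptable' = Perfect + OK."""
    finals = Counter(majority_grade(g) for g in sdu_grades)
    total = len(sdu_grades)
    pct = {g: 100.0 * finals[g] / total for g in GRADES}
    pct["Acceptable"] = pct["Perfect"] + pct["OK"]
    return pct


# Four SDUs, each graded by three graders (invented data).
sample = [
    ["Perfect", "Perfect", "OK"],
    ["OK", "Bad", "OK"],
    ["Bad", "Bad", "OK"],
    ["Perfect", "OK", "Bad"],
]
print(summarize(sample))
```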

August-99 Evaluation

- Data from latest user study - traveler planning a trip to Japan
- 132 utterances containing one or more SDUs, from six different users
- SR word error rate 14.7%
- 40.2% of utterances contain recognition error(s)
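
For reference, word error rate is conventionally computed as the word-level edit distance between the reference transcript and the recognizer output, divided by the reference length. The sketch below uses that standard definition. The slide does not describe the scoring tool actually used, and the example sentence pair is invented. The final line simply converts the reported 40.2% of 132 utterances into a count.

```python
from typing import List


def word_error_rate(reference: List[str], hypothesis: List[str]) -> float:
    """Word-level edit distance divided by the (non-empty) reference length."""
    rows, cols = len(reference) + 1, len(hypothesis) + 1
    dist = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        dist[i][0] = i  # delete all reference words
    for j in range(cols):
        dist[0][j] = j  # insert all hypothesis words
    for i in range(1, rows):
        for j in range(1, cols):
            substitution = dist[i - 1][j - 1] + (reference[i - 1] != hypothesis[j - 1])
            dist[i][j] = min(substitution, dist[i - 1][j] + 1, dist[i][j - 1] + 1)
    return dist[-1][-1] / len(reference)


ref = "we have two hotels available".split()
hyp = "we have to hotels available".split()
print(word_error_rate(ref, hyp))   # 0.2

# 40.2% of the 132 utterances corresponds to about 53 utterances.
print(round(0.402 * 132))          # 53
```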

Evaluation Results

Evaluation - Progress Over Time

- Speech-to-speech translation for eCommerce
  - CMU, Karlsruhe, IRST, CLIPS, 2 commercial partners
- Improved limited-domain speech translation
- Experiment with multimodality and with MEMT
- EU-side has strict scheduling and deliverables
  - First test domain: Italian travel agency
  - Second "showcase": international Help desk
- Tied in to C-STAR-III

C-STAR-III

- Partners: ATR, CMU, CLIPS, ETRI, IRST, UKA
- Main Research Goals:
  - Expandability - towards unlimited domains
  - Accessibility - Speech Translation over wireless phone
  - Usability - real service for real users

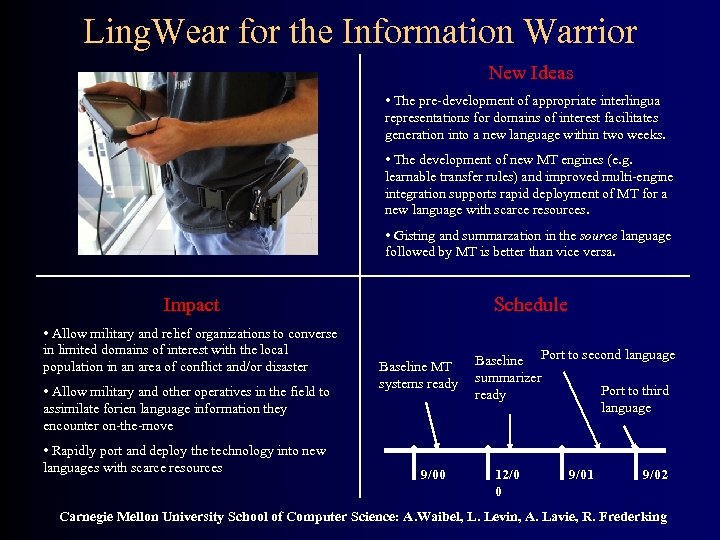

LingWear for the Information Warrior

New Ideas

- The pre-development of appropriate interlingua representations for domains of interest facilitates generation into a new language within two weeks.
- The development of new MT engines (e.g. learnable transfer rules) and improved multi-engine integration supports rapid deployment of MT for a new language with scarce resources.
- Gisting and summarization in the source language followed by MT is better than vice versa.

Impact

- Allow military and relief organizations to converse in limited domains of interest with the local population in an area of conflict and/or disaster
- Allow military and other operatives in the field to assimilate foreign language information they encounter on the move
- Rapidly port and deploy the technology into new languages with scarce resources

Schedule

- Baseline MT systems ready: 9/00
- Baseline summarizer ready: 12/00
- Port to second language: 9/01
- Port to third language: 9/02

Carnegie Mellon University School of Computer Science: A. Waibel, L. Levin, A. Lavie, R. Frederking

Current and Future Work

- Expanding the travel domain: covering descriptive as well as task-oriented sentences
- Development of the SALT statistical approach and expanding it to other domains
- Full integration of multiple MT approaches: SOUP, SALT, Pangloss
- Task-based evaluation
- Disambiguation: improved sentence-level disambiguation; applying discourse contextual information for disambiguation

Students Working on the Project

- Chad Langley: improved SALT approach
- Dorcas Wallace: DA disambiguation using decision trees, English grammars
- Taro Watanabe: DA correction and disambiguation using Transformation-based Learning, Japanese grammars
- Ariadna Font-Llitjos: Spanish Generation

The JANUS/C-STAR/Nespole! Team

- Project Leaders: Lori Levin, Alon Lavie, Alex Waibel, Bob Frederking
- Grammar and Component Developers: Donna Gates, Dorcas Wallace, Kay Peterson, Chad Langley, Taro Watanabe, Celine Morel, Susie Burger, Vicky Maclaren, Dan Schneider
