5e35a570687674912c639e01b45abf10.ppt

- Number of slides: 59

Sparse Representations of Signals: Theory and Applications*

Michael Elad, The CS Department, The Technion – Israel Institute of Technology, Haifa 32000, Israel

IPAM – MGA Program, September 20th, 2004

*Joint work with: Alfred M. Bruckstein (CS, Technion), David L. Donoho (Statistics, Stanford), Vladimir Temlyakov (Math, University of South Carolina), and Jean-Luc Starck (CEA - Service d’Astrophysique, CEA-Saclay, France)

Sparse representations for Signals – Theory and Applications

Collaborators

- Dave Donoho (Statistics, Stanford)
- Vladimir Temlyakov (Math, USC)
- Jean-Luc Starck (CEA-Saclay, France)
- Freddy Bruckstein (CS, Technion)

Agenda

1. Introduction: sparse and overcomplete representations, pursuit algorithms
2. Success of BP/MP as Forward Transforms: uniqueness, equivalence of BP and MP
3. Success of BP/MP for Inverse Problems: uniqueness, stability of BP and MP
4. Applications: image separation and inpainting

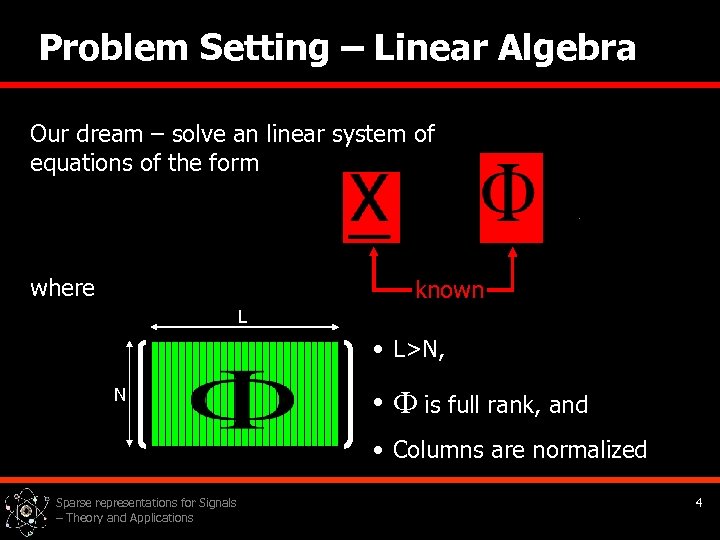

Problem Setting – Linear Algebra

Our dream: solve a linear system of equations of the form $\Phi\alpha = x$, with $\Phi$ and $x$ known, where

- $\Phi$ is an $N \times L$ matrix with $L > N$,
- $\Phi$ is full rank, and
- the columns of $\Phi$ are normalized.

Can We Solve This?

Generally NO*.

*Unless additional information is introduced.

Our assumption for today: the sparsest possible solution is preferred.

Great … But,

- Why look at this problem at all? What is it good for? Why sparseness?
- Is the problem now well defined? Does it lead to a unique solution?
- How shall we numerically solve this problem?

These and related questions will be discussed in today’s talk.

Addressing the First Question

We will use the linear relation $x = \Phi\alpha$ as the core idea for modeling signals.

Signals’ Origin in Sparse-Land

We shall assume that our signals of interest emerge from a random generator machine M.

(Diagram: the random signal generator M outputs a signal $x$.)

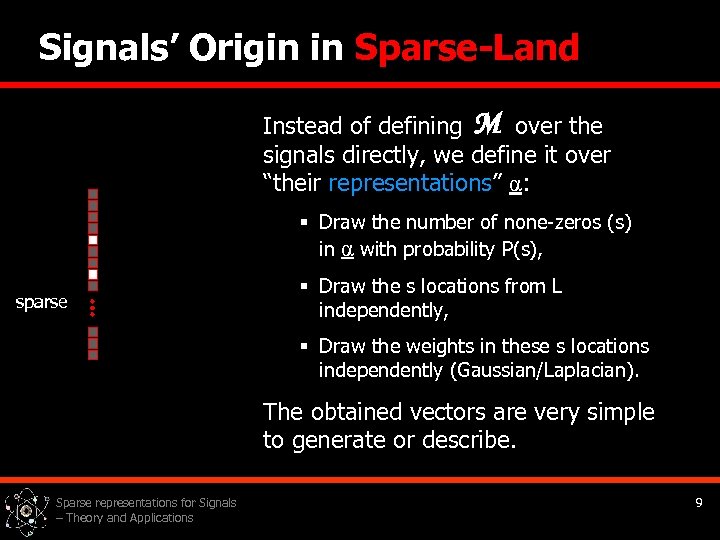

Signals’ Origin in Sparse-Land

Instead of defining M over the signals directly, we define it over their representations $\alpha$:

- Draw the number of non-zeros ($s$) in $\alpha$ with probability $P(s)$,
- Draw the $s$ locations from $L$ independently,
- Draw the weights in these $s$ locations independently (Gaussian/Laplacian).

The obtained sparse vectors are very simple to generate or describe.
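The three drawing steps on this slide can be sketched in a few lines of NumPy. This is only an illustrative sketch: the random Gaussian dictionary, the particular `p_s` table, and the helper names `make_dictionary` and `draw_representation` are assumptions made here, not part of the talk. The support is drawn without replacement so that exactly `s` distinct locations are used.

```python
import numpy as np

def make_dictionary(n, l, rng):
    """Hypothetical N x L dictionary: random Gaussian columns scaled to unit l2 norm."""
    phi = rng.standard_normal((n, l))
    return phi / np.linalg.norm(phi, axis=0)

def draw_representation(l, p_s, rng):
    """Draw a sparse alpha: cardinality s ~ P(s), random support, Gaussian weights."""
    s = rng.choice(len(p_s), p=p_s)                 # p_s[k] = probability of k non-zeros
    support = rng.choice(l, size=s, replace=False)  # s distinct locations out of L
    alpha = np.zeros(l)
    alpha[support] = rng.standard_normal(s)         # independent Gaussian weights
    return alpha

rng = np.random.default_rng(0)
N, L = 20, 50
phi = make_dictionary(N, L, rng)
p_s = np.array([0.0, 0.4, 0.3, 0.2, 0.1])  # assumed P(s), favouring small s
alpha = draw_representation(L, p_s, rng)
x = phi @ alpha                            # the generated Sparse-Land signal
```

Swapping `rng.standard_normal` for `rng.laplace` in the weight draw would give the Laplacian variant mentioned on the slide.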

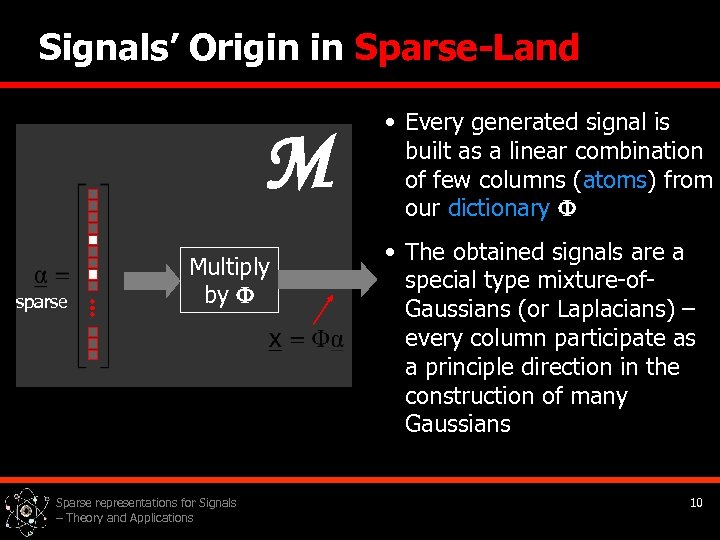

Signals’ Origin in Sparse-Land

(Diagram: M draws a sparse $\alpha$, which is multiplied by $\Phi$ to give $x$.)

- Every generated signal is built as a linear combination of a few columns (atoms) from our dictionary $\Phi$.
- The obtained signals are a special type of mixture of Gaussians (or Laplacians): every column participates as a principal direction in the construction of many Gaussians.

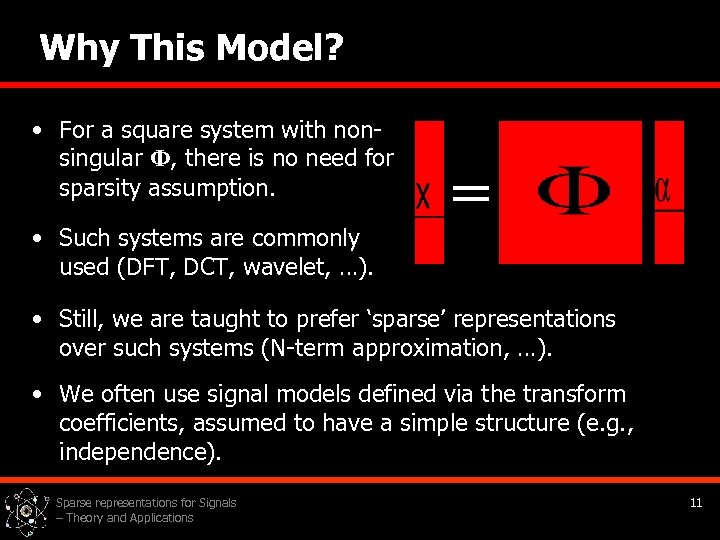

Why This Model?

- For a square system with nonsingular $\Phi$, there is no need for a sparsity assumption.
- Such systems are commonly used (DFT, DCT, wavelet, …).
- Still, we are taught to prefer ‘sparse’ representations over such systems ($N$-term approximation, …).
- We often use signal models defined via the transform coefficients, assumed to have a simple structure (e.g., independence).

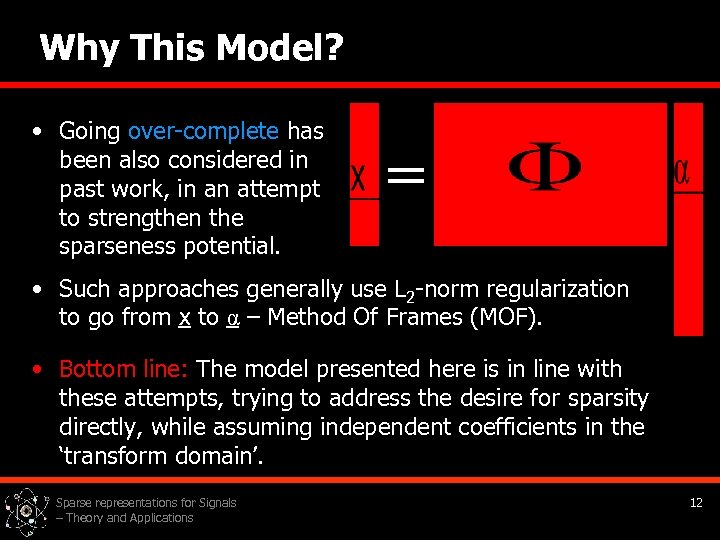

Why This Model?

- Going over-complete has also been considered in past work, in an attempt to strengthen the sparseness potential.
- Such approaches generally use $\ell_2$-norm regularization to go from $x$ to $\alpha$: the Method Of Frames (MOF).
- Bottom line: the model presented here is in line with these attempts, trying to address the desire for sparsity directly, while assuming independent coefficients in the ‘transform domain’.
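To see why $\ell_2$-norm regularization does not serve the desire for sparsity directly, the following sketch computes the minimum $\ell_2$-norm solution of $\Phi\alpha = x$ via the pseudo-inverse. The random dictionary and the three-atom test signal are assumptions chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
N, L = 20, 50
phi = rng.standard_normal((N, L))
phi /= np.linalg.norm(phi, axis=0)       # unit-norm columns

alpha0 = np.zeros(L)
alpha0[[3, 17, 41]] = [1.0, -2.0, 0.5]   # a 3-sparse "true" representation
x = phi @ alpha0

# Minimum l2-norm solution of phi @ alpha = x (the MOF-style answer).
alpha_mof = np.linalg.pinv(phi) @ x

residual = np.linalg.norm(phi @ alpha_mof - x)
nnz = np.count_nonzero(np.abs(alpha_mof) > 1e-8)
```

The result reproduces `x` exactly and has a smaller $\ell_2$ norm than `alpha0`, yet it typically spreads energy over nearly all `L` coefficients instead of the three that generated the signal.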

What to Do With Such a Model?

- Signal Transform: given the signal, its sparsest (over-complete) representation $\alpha$ is its forward transform. Consider this for compression, feature extraction, analysis/synthesis of signals, …
- Signal Prior: in inverse problems, seek a solution that has a sparse representation over a predetermined dictionary, and in this way regularize the problem (just as TV, bilateral, Beltrami flow, wavelet, and other priors are used).

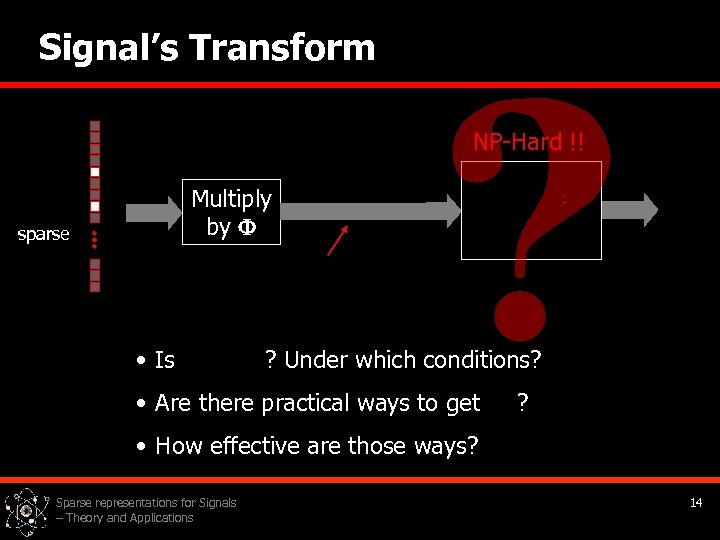

Signal’s Transform

(Diagram: a sparse $\alpha$ is multiplied by $\Phi$ to give $x$; the transform then seeks $\hat\alpha$ from $x$.)

$(P_0)$: $\hat\alpha = \arg\min_\alpha \|\alpha\|_0$ subject to $\Phi\alpha = x$. This problem is NP-hard!

- Is $\hat\alpha = \alpha$? Under which conditions?
- Are there practical ways to get $\hat\alpha$?
- How effective are those ways?

Practical Pursuit Algorithms

The exact problem $(P_0)$ is NP-hard. Two practical alternatives:

- Basis Pursuit (BP) [Chen, Donoho, Saunders (’95)]: $(P_1)$: $\min_\alpha \|\alpha\|_1$ subject to $\Phi\alpha = x$.
- Matching Pursuit (MP) [Mallat & Zhang (’93)]: greedily minimize the residual $\|x - \Phi\alpha\|_2$, one atom at a time.

These algorithms work well in many cases (but not always).
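A greedy pursuit can be sketched as below. Note that this is the orthogonal variant (OMP), which re-fits all selected coefficients by least squares after each pick, rather than the plain Matching Pursuit of Mallat & Zhang; it is swapped in here because it terminates after exactly as many steps as there are atoms. The two-ortho dictionary (identity plus a normalized Hadamard matrix) and the helper names are assumptions for the demo.

```python
import numpy as np

def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n a power of 2)."""
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.kron(h, np.array([[1.0, 1.0], [1.0, -1.0]]))
    return h

def omp(phi, x, max_atoms, tol=1e-10):
    """Orthogonal matching pursuit: pick the best-correlated atom, then re-fit."""
    support, coef = [], np.zeros(0)
    residual = x.astype(float).copy()
    for _ in range(max_atoms):
        if np.linalg.norm(residual) <= tol:
            break
        k = int(np.argmax(np.abs(phi.T @ residual)))  # atom most correlated with residual
        support.append(k)
        coef, *_ = np.linalg.lstsq(phi[:, support], x, rcond=None)
        residual = x - phi[:, support] @ coef
    alpha = np.zeros(phi.shape[1])
    if support:
        alpha[support] = coef
    return alpha

N = 64
phi = np.hstack([np.eye(N), hadamard(N) / np.sqrt(N)])  # unit-norm columns
alpha0 = np.zeros(2 * N)
alpha0[[2, 70, 100]] = [1.5, -2.0, 0.7]                 # 3-sparse representation
x = phi @ alpha0
alpha_hat = omp(phi, x, max_atoms=10)
```

For this dictionary the largest inner product between distinct atoms is 1/8, so a 3-sparse representation is well inside the regime where greedy selection is known to pick the right atoms.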

Signal Prior

- Assume that $x$ is known to emerge from M, i.e., there exists a sparse $\alpha$ such that $x = \Phi\alpha$.
- Suppose we observe $y = x + v$, a noisy version of $x$ with $\|v\|_2 \le \epsilon$.
- We denoise the signal by solving $(P_0(\epsilon))$: $\min_\alpha \|\alpha\|_0$ subject to $\|\Phi\alpha - y\|_2 \le \epsilon$.
- This way we see that sparse representations can serve in inverse problems (denoising is the simplest example).

To Summarize …

- Given a dictionary $\Phi$ and a signal $x$, we want to find the sparsest “atom decomposition” of the signal by solving either $(P_0)$ or $(P_0(\epsilon))$.
- The Basis/Matching Pursuit algorithms propose alternative tractable methods to compute the desired solution.
- Our focus today:
  - Why should this work?
  - Under what conditions could we claim success of BP/MP?
  - What can we do with such results?

Due to the Time Limit …

(and the speaker’s limited knowledge) we will NOT discuss today:

- Proofs (and there are beautiful and painful proofs).
- Numerical considerations in the pursuit algorithms.
- Exotic results (e.g., $\ell_p$-norm results, amalgam of ortho-bases, uncertainty principles).
- Average performance (probabilistic) bounds.
- How to train on data to obtain the best dictionary $\Phi$.
- Relation to other fields (Machine Learning, ICA, …).

Agenda, part 2: Success of BP/MP as Forward Transforms (uniqueness, equivalence of BP and MP)

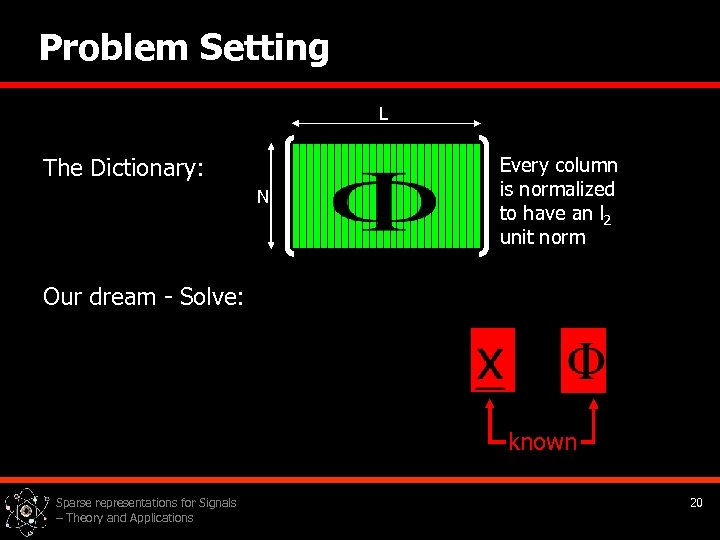

Problem Setting

The dictionary: $\Phi$ is an $N \times L$ matrix. Every column is normalized to have unit $\ell_2$ norm.

Our dream: solve $(P_0)$: $\min_\alpha \|\alpha\|_0$ subject to $\Phi\alpha = x$, with $\Phi$ and $x$ known.

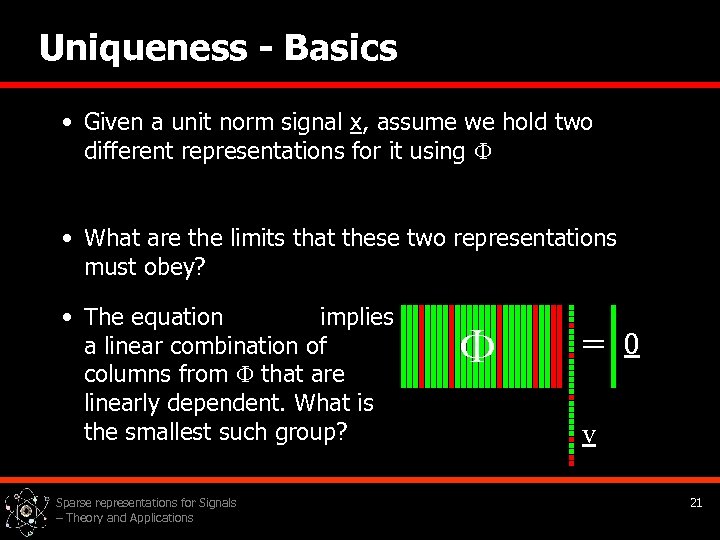

Uniqueness – Basics

- Given a unit norm signal $x$, assume we hold two different representations for it using $\Phi$: $x = \Phi\alpha_1 = \Phi\alpha_2$.
- What are the limits that these two representations must obey?
- The equation $\Phi(\alpha_1 - \alpha_2) = \Phi v = 0$ implies a linear combination of columns from $\Phi$ that are linearly dependent. What is the smallest such group?

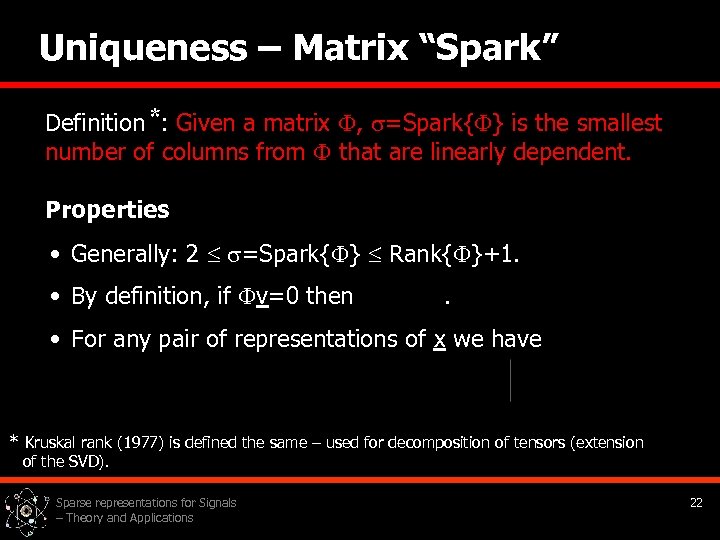

Uniqueness – Matrix “Spark”

Definition*: Given a matrix $\Phi$, $\sigma = \mathrm{Spark}\{\Phi\}$ is the smallest number of columns from $\Phi$ that are linearly dependent.

Properties:

- Generally: $2 \le \sigma = \mathrm{Spark}\{\Phi\} \le \mathrm{Rank}\{\Phi\} + 1$.
- By definition, if $\Phi v = 0$ with $v \ne 0$, then $\|v\|_0 \ge \sigma$.
- For any pair of different representations of $x$ we have $x = \Phi\alpha_1 = \Phi\alpha_2 \Rightarrow \|\alpha_1\|_0 + \|\alpha_2\|_0 \ge \sigma$.

*The Kruskal rank (1977) is defined in nearly the same way (it equals $\mathrm{Spark}\{\Phi\} - 1$) and is used for decomposition of tensors (an extension of the SVD).
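The definition of the spark translates directly into an exhaustive search over column subsets. The sketch below is exponential in $L$ and only meant for tiny matrices; the function name `spark` and the 3×4 example are assumptions made for illustration.

```python
import itertools
import numpy as np

def spark(phi, tol=1e-10):
    """Smallest number of linearly dependent columns of phi (brute force)."""
    n, l = phi.shape
    for k in range(1, l + 1):
        for cols in itertools.combinations(range(l), k):
            sub = phi[:, list(cols)]
            if np.linalg.matrix_rank(sub, tol=tol) < k:  # these k columns are dependent
                return k
    return np.inf  # all columns independent (only possible when L <= N)

r = 1.0 / np.sqrt(2.0)
phi = np.array([[1.0, 0.0, r, 0.0],
                [0.0, 1.0, r, 0.0],
                [0.0, 0.0, 0.0, 1.0]])  # third column = (col0 + col1) / sqrt(2)

sigma = spark(phi)
rank_plus_one = np.linalg.matrix_rank(phi) + 1
```

Here no two columns are parallel but the first three are dependent, so the spark is 3, strictly below the generic upper bound Rank + 1 = 4.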

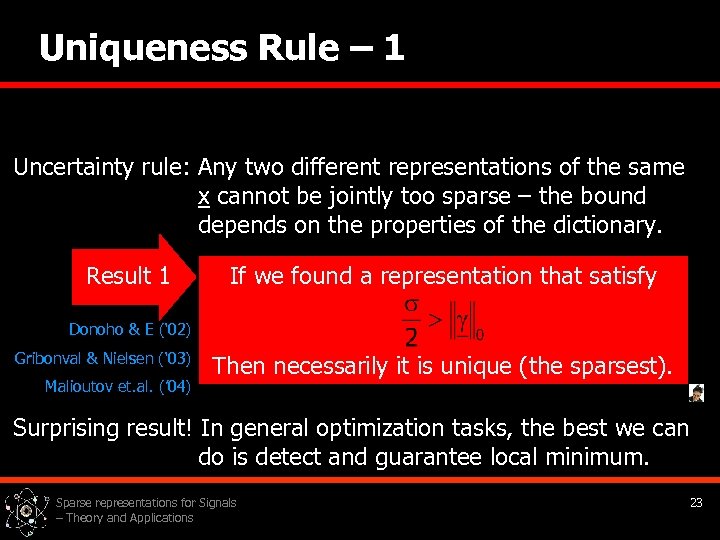

Uniqueness Rule – 1

Uncertainty rule: any two different representations of the same $x$ cannot be jointly too sparse; the bound depends on the properties of the dictionary.

Result 1 (Donoho & Elad ’02; Gribonval & Nielsen ’03; Malioutov et al. ’04): If we found a representation that satisfies $\|\alpha\|_0 < \sigma/2$, then necessarily it is unique (the sparsest).

Surprising result! In general optimization tasks, the best we can do is detect and guarantee a local minimum.

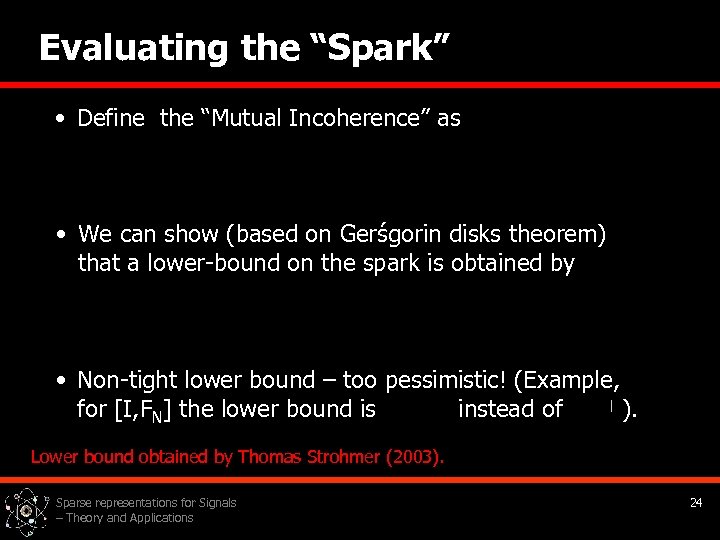

Evaluating the “Spark”

- Define the “Mutual Incoherence” as $M = \max_{k \ne j} |\phi_k^H \phi_j|$, where $\phi_k$ denotes the $k$-th column of $\Phi$.
- We can show (based on the Geršgorin disk theorem) that a lower bound on the spark is obtained by $\sigma \ge 1 + 1/M$.
- This lower bound is not tight; it is too pessimistic! (Example: for $[I, F_N]$ the lower bound is $1 + \sqrt{N}$ instead of $2\sqrt{N}$.)

Lower bound obtained by Thomas Strohmer (2003).
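Unlike the spark, the mutual incoherence is cheap to compute from the Gram matrix. The sketch below evaluates it for the slide's $[I, F_N]$ example with $N = 16$; the function name `mutual_coherence` is an assumption, and the columns are assumed to be unit-norm already.

```python
import numpy as np

def mutual_coherence(phi):
    """Largest absolute inner product between two distinct (unit-norm) columns."""
    gram = np.abs(phi.conj().T @ phi)
    np.fill_diagonal(gram, 0.0)  # ignore each column's product with itself
    return gram.max()

N = 16
fourier = np.fft.fft(np.eye(N)) / np.sqrt(N)  # unitary DFT matrix F_N
phi = np.hstack([np.eye(N), fourier])         # the two-ortho dictionary [I, F_N]

M = mutual_coherence(phi)
spark_lower_bound = 1.0 + 1.0 / M
```

For $N = 16$ this gives $M = 1/\sqrt{N} = 1/4$ and a lower bound of $1 + \sqrt{N} = 5$, whereas the slide quotes the true spark of this dictionary as $2\sqrt{N} = 8$.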

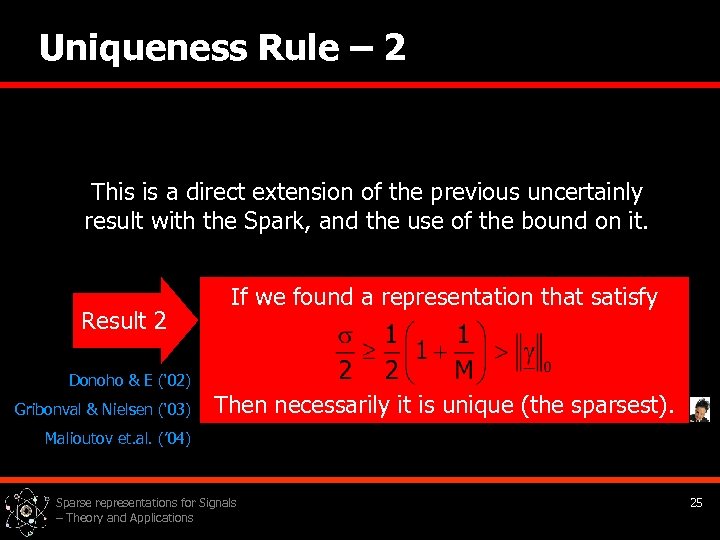

Uniqueness Rule – 2

This is a direct extension of the previous uncertainty result with the Spark, and the use of the bound on it.

Result 2 (Donoho & Elad ’02; Gribonval & Nielsen ’03; Malioutov et al. ’04): If we found a representation that satisfies $\|\alpha\|_0 < \frac{1}{2}\left(1 + \frac{1}{M}\right)$, then necessarily it is unique (the sparsest).

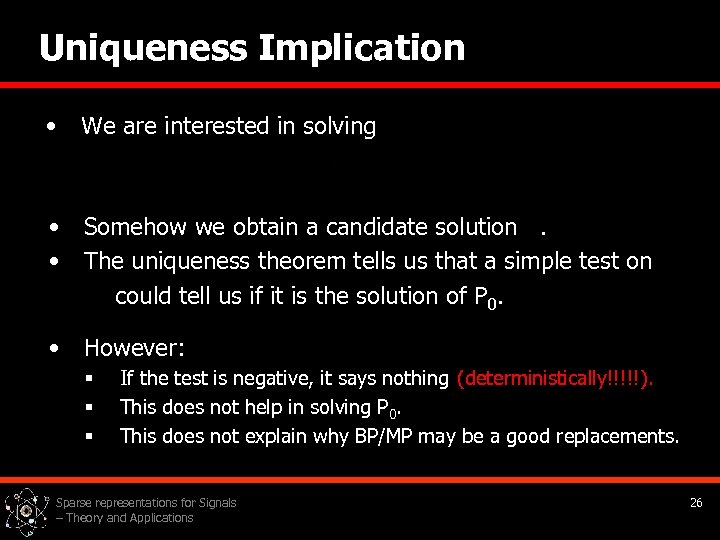

Uniqueness Implication

- We are interested in solving $(P_0)$: $\min_\alpha \|\alpha\|_0$ subject to $\Phi\alpha = x$.
- Somehow we obtain a candidate solution $\hat\alpha$.
- The uniqueness theorem tells us that a simple test on $\hat\alpha$ could tell us if it is the solution of $P_0$.
- However:
  - If the test is negative, it says nothing (deterministically!).
  - This does not help in solving $P_0$.
  - This does not explain why BP/MP may be good replacements.
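The "simple test" on a candidate solution amounts to counting its non-zeros and comparing against the coherence-based threshold $\frac{1}{2}(1 + 1/M)$. A minimal sketch, assuming unit-norm columns and using the hypothetical helper names `mutual_coherence` and `certifies_sparsest`:

```python
import numpy as np

def mutual_coherence(phi):
    """Largest absolute inner product between two distinct (unit-norm) columns."""
    gram = np.abs(phi.conj().T @ phi)
    np.fill_diagonal(gram, 0.0)
    return gram.max()

def certifies_sparsest(alpha, phi, tol=1e-12):
    """True if ||alpha||_0 < (1 + 1/M) / 2, which certifies alpha as the sparsest.

    False is inconclusive: the candidate may or may not be the sparsest.
    """
    nnz = np.count_nonzero(np.abs(alpha) > tol)
    return bool(nnz < 0.5 * (1.0 + 1.0 / mutual_coherence(phi)))

N = 16
phi = np.hstack([np.eye(N), np.fft.fft(np.eye(N)) / np.sqrt(N)])  # [I, F_N], M = 1/4

two_sparse = np.zeros(2 * N)
two_sparse[[1, 20]] = [1.0, -0.5]
three_sparse = np.zeros(2 * N)
three_sparse[[1, 20, 30]] = [1.0, -0.5, 2.0]

ok_two = certifies_sparsest(two_sparse, phi)      # 2 < 2.5
ok_three = certifies_sparsest(three_sparse, phi)  # 3 >= 2.5, so no certificate
```

The asymmetry in the return value mirrors the slide's caveat: a passed test is a proof of optimality, while a failed test carries no information.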

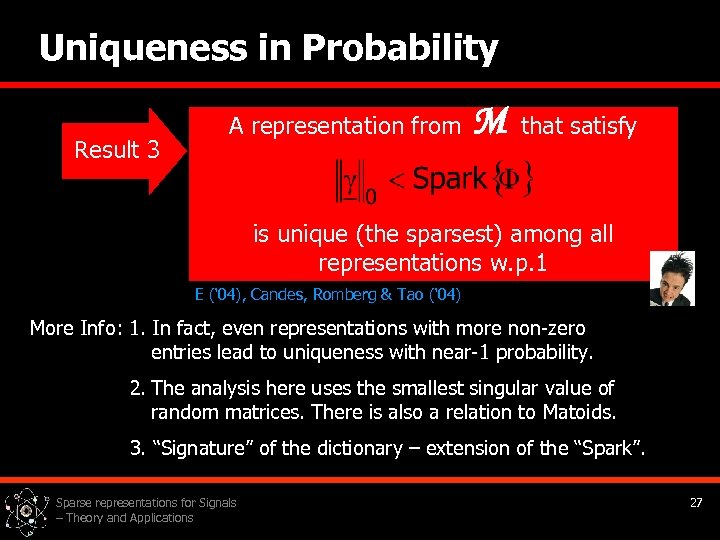

Uniqueness in Probability

Result 3 (Elad ’04; Candes, Romberg & Tao ’04): A representation from M that satisfies [condition not preserved in this transcript] is unique (the sparsest) among all representations with probability 1.

More info:

1. In fact, even representations with more non-zero entries lead to uniqueness with near-1 probability.
2. The analysis here uses the smallest singular value of random matrices. There is also a relation to matroids.
3. “Signature” of the dictionary: an extension of the “Spark”.

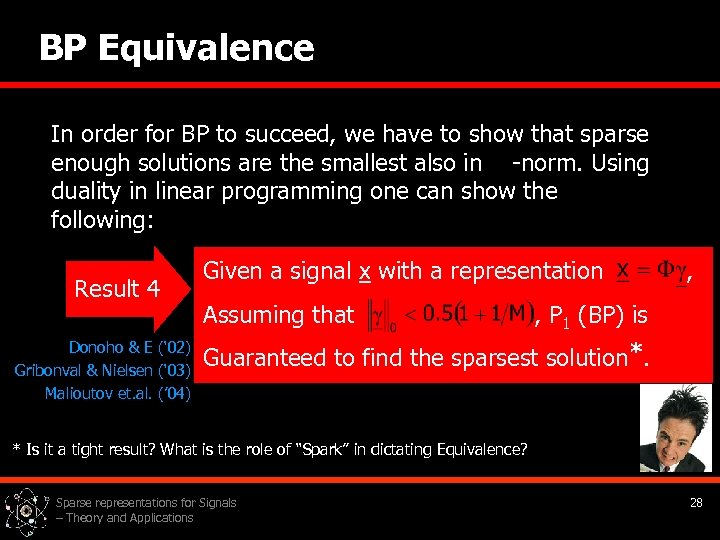

BP Equivalence

In order for BP to succeed, we have to show that sparse enough solutions are also the smallest in $\ell_1$-norm. Using duality in linear programming one can show the following:

Result 4 (Donoho & Elad ’02; Gribonval & Nielsen ’03; Malioutov et al. ’04): Given a signal $x$ with a representation $x = \Phi\alpha$, and assuming that $\|\alpha\|_0 < \frac{1}{2}\left(1 + \frac{1}{M}\right)$, $P_1$ (BP) is guaranteed to find the sparsest solution*.

*Is it a tight result? What is the role of the “Spark” in dictating equivalence?
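For a real-valued dictionary, $P_1$ can be posed as a linear program by splitting $\alpha = u - v$ with $u, v \ge 0$ and minimizing $\sum_i (u_i + v_i)$. The sketch below uses SciPy's `linprog`; the two-ortho test dictionary (identity plus normalized Hadamard) and the helper names are assumptions for the demo, not the solver used in the talk.

```python
import numpy as np
from scipy.optimize import linprog

def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n a power of 2)."""
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.kron(h, np.array([[1.0, 1.0], [1.0, -1.0]]))
    return h

def basis_pursuit(phi, x):
    """Solve min ||alpha||_1 s.t. phi @ alpha = x as an LP over (u, v) >= 0."""
    l = phi.shape[1]
    cost = np.ones(2 * l)              # sum(u) + sum(v) equals ||alpha||_1 at the optimum
    a_eq = np.hstack([phi, -phi])      # phi @ (u - v) = x
    res = linprog(cost, A_eq=a_eq, b_eq=x, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x[:l] - res.x[l:]

N = 64
phi = np.hstack([np.eye(N), hadamard(N) / np.sqrt(N)])  # mutual incoherence M = 1/8
alpha0 = np.zeros(2 * N)
alpha0[[2, 70, 100]] = [1.5, -2.0, 0.7]                 # 3 non-zeros < (1 + 8) / 2
x = phi @ alpha0
alpha_bp = basis_pursuit(phi, x)
```

Because the sparsity level satisfies the condition of the equivalence result, the LP solution coincides with `alpha0` up to solver tolerance.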

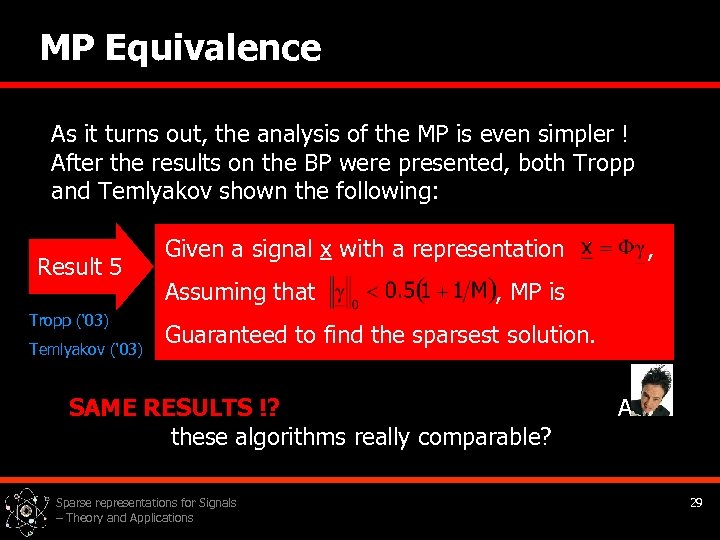

MP Equivalence

As it turns out, the analysis of the MP is even simpler! After the results on the BP were presented, both Tropp and Temlyakov showed the following:

Result 5 (Tropp ’03; Temlyakov ’03): Given a signal $x$ with a representation $x = \Phi\alpha$, and assuming that $\|\alpha\|_0 < \frac{1}{2}\left(1 + \frac{1}{M}\right)$, MP is guaranteed to find the sparsest solution.

SAME RESULTS!? Are these algorithms really comparable?

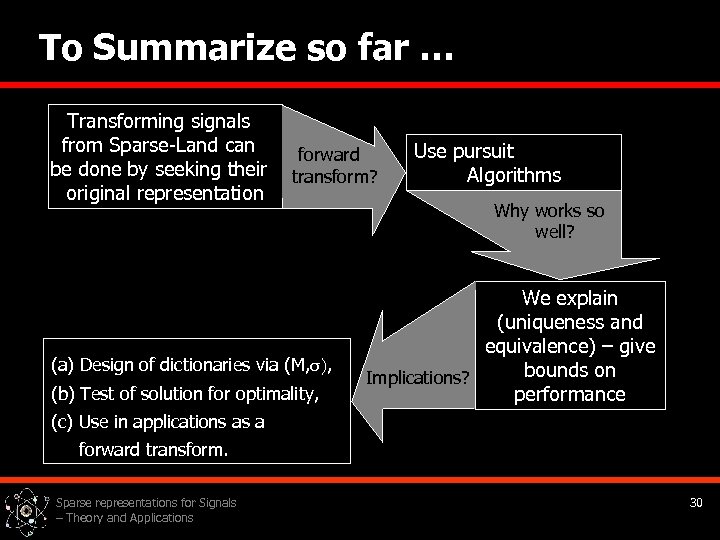

To Summarize So Far …

- Forward transform? Transforming signals from Sparse-Land can be done by seeking their original representation: use pursuit algorithms.
- Why does this work so well? We explain (uniqueness and equivalence) and give bounds on performance.
- Implications? (a) Design of dictionaries via $(M, \sigma)$, (b) test of a solution for optimality, (c) use in applications as a forward transform.

Agenda, part 3: Success of BP/MP for Inverse Problems (uniqueness, stability of BP and MP)

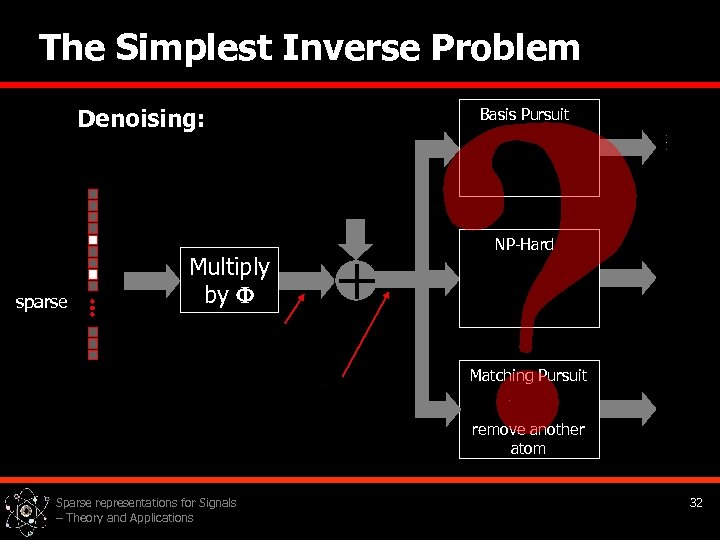

The Simplest Inverse Problem

Denoising.

(Diagram: a sparse $\alpha$ is multiplied by $\Phi$ and noise is added. The exact recovery problem is NP-hard; the practical alternatives are Basis Pursuit and Matching Pursuit, which removes another atom at each step.)

Questions We Should Ask

- Reconstruction of the signal:
  - What is the relation between this and other Bayesian alternative methods (e.g., TV, wavelet denoising, …)?
  - What is the role of over-completeness and sparsity here?
  - How about other, more general inverse problems?

  These are topics of our current research with P. Milanfar, D. L. Donoho, and R. Rubinstein.
- Reconstruction of the representation:
  - Why does the denoising work with $P_0(\epsilon)$?
  - Why should the pursuit algorithms succeed?

  These questions are generalizations of the previous treatment.

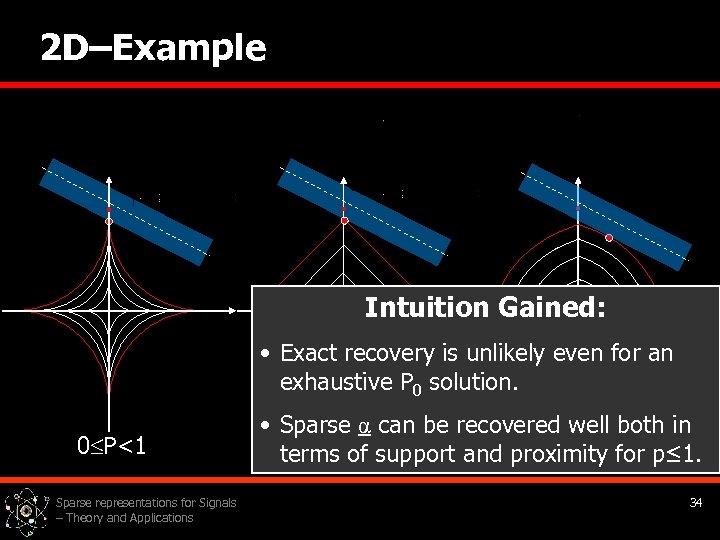

2D Example

(Figure panels: $0 \le p < 1$, $p = 1$, $p > 1$.)

Intuition gained:

- Exact recovery is unlikely even for an exhaustive $P_0$ solution.
- Sparse $\alpha$ can be recovered well both in terms of support and proximity for $p \le 1$.

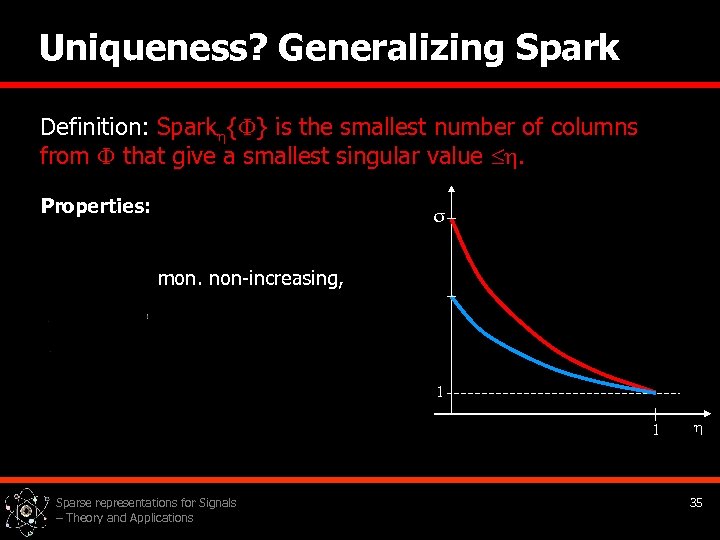

Uniqueness? Generalizing Spark

Definition: $\mathrm{Spark}_\eta\{\Phi\}$ is the smallest number of columns from $\Phi$ that give a smallest singular value $\le \eta$.

Properties: $\sigma_\eta = \mathrm{Spark}_\eta\{\Phi\}$ is monotonically non-increasing in $\eta$.
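The generalized spark can be explored with the same brute-force search as the ordinary spark, replacing the rank test by a threshold on the smallest singular value. This sketch assumes the threshold is applied as "smallest singular value ≤ η" and uses a hypothetical function name `spark_eta`; it is practical only for tiny matrices.

```python
import itertools
import numpy as np

def spark_eta(phi, eta, tol=1e-10):
    """Smallest number of columns whose submatrix has smallest singular value <= eta."""
    n, l = phi.shape
    for k in range(1, l + 1):
        for cols in itertools.combinations(range(l), k):
            if k > n:
                return k  # more columns than rows: always linearly dependent
            s_min = np.linalg.svd(phi[:, list(cols)], compute_uv=False)[-1]
            if s_min <= eta + tol:
                return k
    return np.inf

r = 1.0 / np.sqrt(2.0)
phi = np.array([[1.0, 0.0, r, 0.0],
                [0.0, 1.0, r, 0.0],
                [0.0, 0.0, 0.0, 1.0]])  # unit-norm columns, third = (col0 + col1)/sqrt(2)

values = [spark_eta(phi, eta) for eta in (0.0, 0.6, 1.0)]
```

With η = 0 the function reduces to the ordinary spark (3 for this matrix); raising η to 0.6 admits the nearly parallel pair of columns (giving 2), and at η = 1 any single unit-norm column qualifies (giving 1), which illustrates the monotone non-increasing behaviour.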

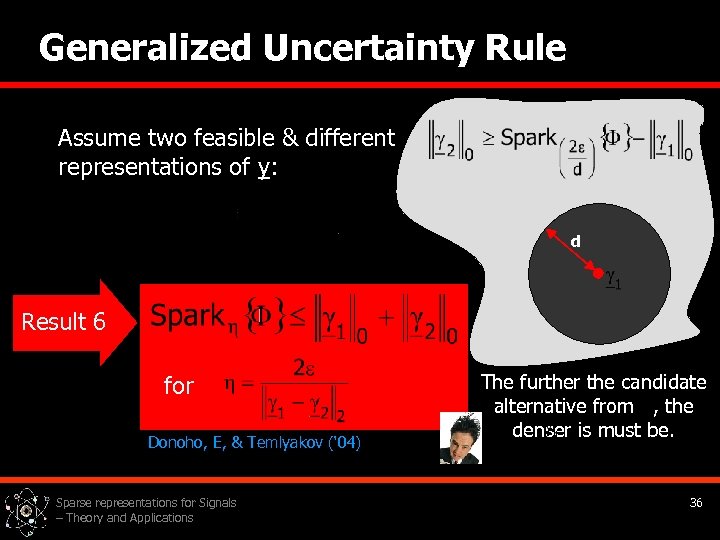

Generalized Uncertainty Rule

Assume two feasible and different representations of $y$: $\|\Phi\alpha_1 - y\|_2 \le \epsilon$ and $\|\Phi\alpha_2 - y\|_2 \le \epsilon$, with $d = \alpha_1 - \alpha_2$.

Result 6 (Donoho, Elad & Temlyakov ’04): $\|\alpha_1\|_0 + \|\alpha_2\|_0 \ge \mathrm{Spark}_\eta\{\Phi\}$ for $\eta = 2\epsilon / \|d\|_2$.

The further the candidate alternative is from $\alpha$, the denser it must be.

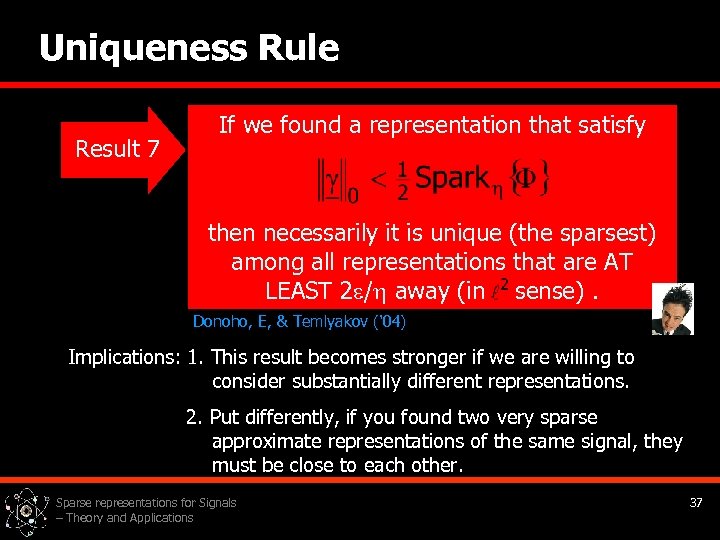

Uniqueness Rule

Result 7 (Donoho, Elad & Temlyakov ’04): If we found a representation that satisfies $\|\alpha\|_0 < \frac{1}{2}\mathrm{Spark}_\eta\{\Phi\}$, then necessarily it is unique (the sparsest) among all representations that are AT LEAST $2\epsilon/\eta$ away (in the $\ell_2$ sense).

Implications:

1. This result becomes stronger if we are willing to consider substantially different representations.
2. Put differently, if you found two very sparse approximate representations of the same signal, they must be close to each other.

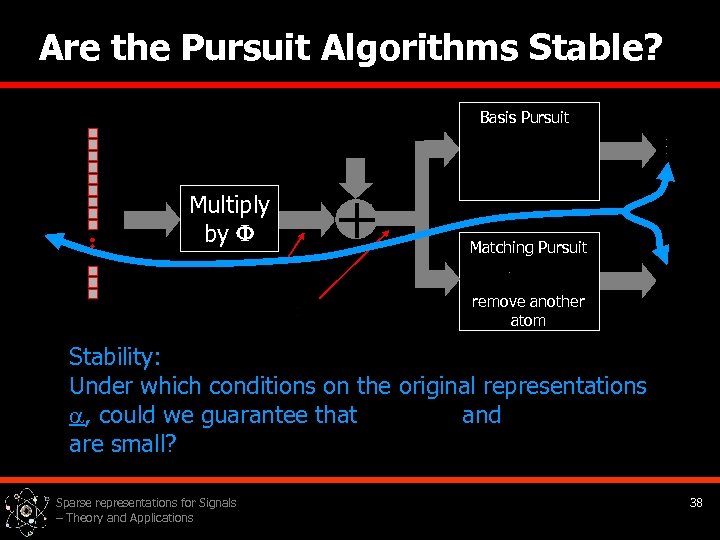

Are the Pursuit Algorithms Stable?

(Diagram: a sparse $\alpha$ is multiplied by $\Phi$ and noise is added; the result is fed to Basis Pursuit and to Matching Pursuit, which removes another atom at each step.)

Stability: under which conditions on the original representation $\alpha$ could we guarantee that $\|\hat\alpha_{BP} - \alpha\|$ and $\|\hat\alpha_{MP} - \alpha\|$ are small?

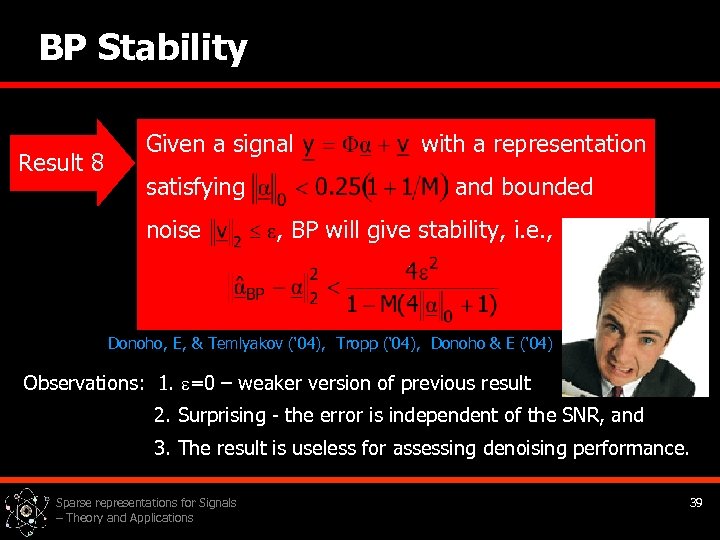

BP Stability

Result 8 (Donoho, Elad & Temlyakov ’04; Tropp ’04; Donoho & Elad ’04): Given a signal $y = \Phi\alpha + v$ with a representation satisfying [sparsity condition not preserved in this transcript] and bounded noise $\|v\|_2 \le \epsilon$, BP will give stability, i.e., [error bound not preserved in this transcript].

Observations:

1. $\epsilon = 0$: a weaker version of the previous result.
2. Surprising: the error is independent of the SNR.
3. The result is useless for assessing denoising performance.

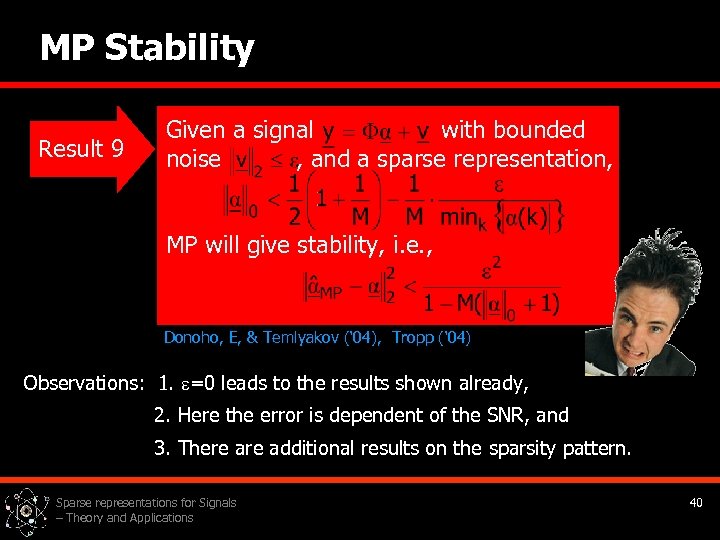

MP Stability

Result 9 (Donoho, Elad & Temlyakov ’04; Tropp ’04): Given a signal $y = \Phi\alpha + v$ with bounded noise $\|v\|_2 \le \epsilon$ and a sparse representation, MP will give stability, i.e., [error bound not preserved in this transcript].

Observations:

1. $\epsilon = 0$ leads to the results shown already.
2. Here the error depends on the SNR.
3. There are additional results on the sparsity pattern.

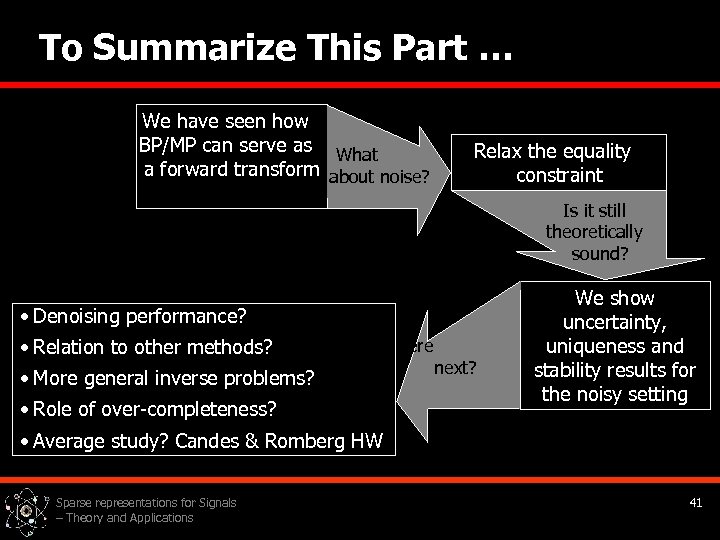

To Summarize This Part …

- We have seen how BP/MP can serve as a forward transform.
- What about noise? Relax the equality constraint.
- Is it still theoretically sound? We show uncertainty, uniqueness and stability results for the noisy setting.
- Where next?
  - Denoising performance?
  - Relation to other methods?
  - More general inverse problems?
  - Role of over-completeness?
  - Average study? (Candes & Romberg HW)

Agenda, part 4: Applications (image separation and inpainting)

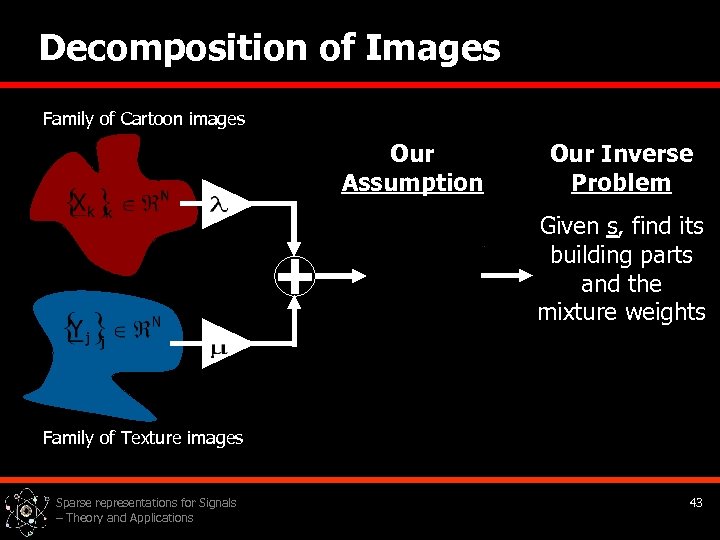

Decomposition of Images

• Our assumption: the image s is a mixture of one member from the family of cartoon images and one member from the family of texture images.
• Our inverse problem: given s, find its building parts and the mixture weights.

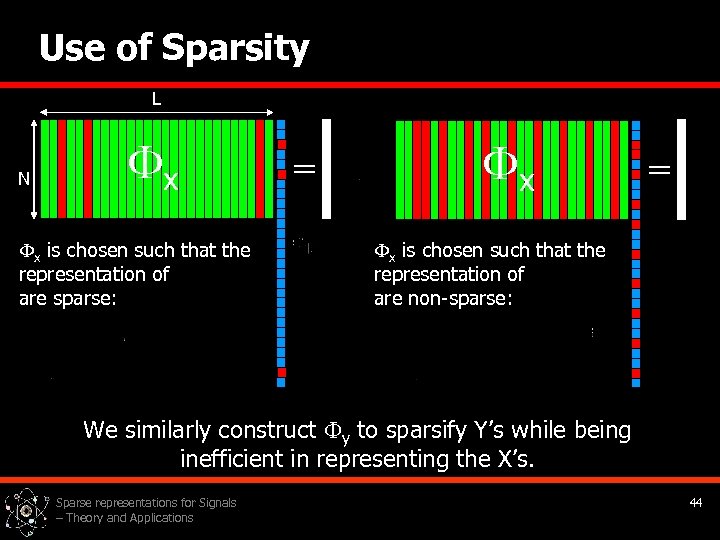

Use of Sparsity

• Φx (drawn on the slide with dimensions N and L) is chosen such that the representations of the X's are sparse.
• Φx is also chosen such that the representations of the Y's are non-sparse.
• We similarly construct Φy to sparsify the Y's while being inefficient in representing the X's.
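To make the sparse versus non-sparse contrast concrete, here is a small sketch under stated assumptions: the identity basis and an orthonormal DCT basis stand in for the two dictionaries, and a few isolated spikes and a sum of two cosines stand in for the two signal families. None of these choices come from the slides; they are merely the simplest pair with the required property.

```python
import numpy as np

def dct_atoms(n):
    """Columns are orthonormal DCT-II atoms (unit-norm cosines)."""
    t = np.arange(n)[:, None]
    k = np.arange(n)[None, :]
    atoms = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    atoms[:, 0] /= np.sqrt(2.0)
    return atoms

def significant(coeffs, rel_tol=1e-6):
    """Count coefficients above a small fraction of the largest magnitude."""
    mags = np.abs(coeffs)
    return int(np.sum(mags > rel_tol * mags.max()))

n = 64
bases = {"identity": np.eye(n), "dct": dct_atoms(n)}   # assumed toy dictionaries

spiky = np.zeros(n)
spiky[[5, 20, 41]] = [1.0, -2.0, 1.5]                  # toy member of one family
oscillating = 3.0 * bases["dct"][:, 7] + 1.0 * bases["dct"][:, 12]
signals = {"spiky": spiky, "oscillating": oscillating}

# For an orthonormal basis the representation is simply basis.T @ signal.
counts = {
    (sig_name, basis_name): significant(basis.T @ sig)
    for sig_name, sig in signals.items()
    for basis_name, basis in bases.items()
}
for key, value in counts.items():
    print(key, value)
```

Each signal needs only a handful of coefficients in its matching basis and most of the available coefficients in the other one, which is the asymmetry that the separation relies on.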

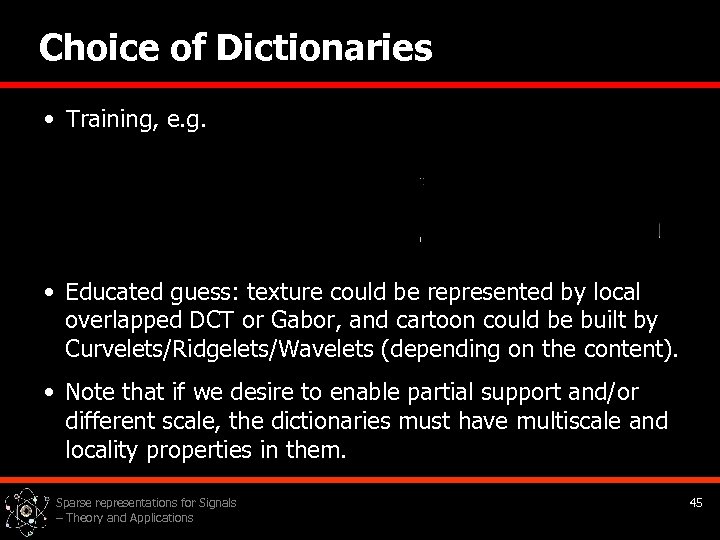

Choice of Dictionaries

• Training, e.g.
• Educated guess: texture could be represented by local overlapped DCT or Gabor, and cartoon could be built by Curvelets/Ridgelets/Wavelets (depending on the content).
• Note that if we want to enable partial support and/or different scales, the dictionaries must have multiscale and locality properties.

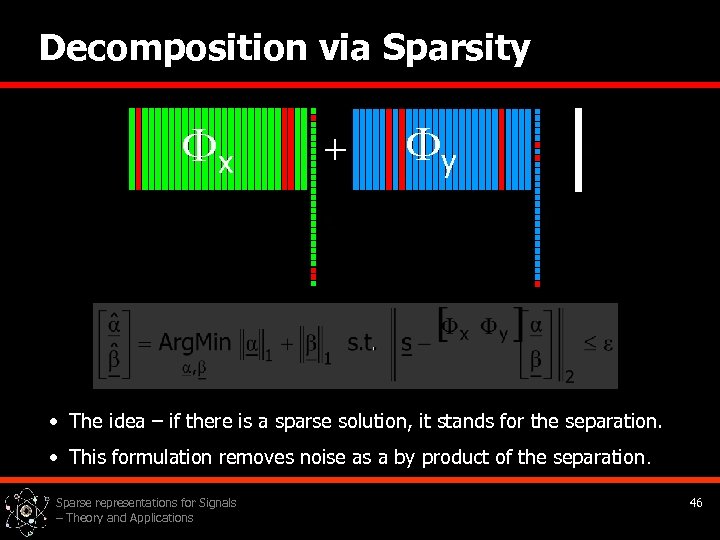

Decomposition via Sparsity

• The image is modeled as Φxα + Φyβ.
• The idea: if there is a sparse solution, it stands for the separation.
• This formulation removes noise as a by-product of the separation.
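The optimization problem on this slide did not survive extraction, so the following is only a sketch of one common way to pose it, not the authors' implementation. It assumes an l1-penalized least-squares objective over the concatenated dictionary, solved with plain iterative soft thresholding (ISTA), and uses toy dictionaries (identity for spikes, DCT for oscillations) in place of the cartoon and texture dictionaries. The weight `lam` and all function names are illustrative.

```python
import numpy as np

def dct_atoms(n):
    """Columns are orthonormal DCT-II atoms (unit-norm cosines)."""
    t = np.arange(n)[:, None]
    k = np.arange(n)[None, :]
    atoms = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    atoms[:, 0] /= np.sqrt(2.0)
    return atoms

def soft_threshold(v, t):
    """Proximal operator of t * ||.||_1."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def separate(s, phi_x, phi_y, lam, n_iter=3000):
    """ISTA for 0.5*||s - phi_x a - phi_y b||^2 + lam*(||a||_1 + ||b||_1)."""
    A = np.hstack([phi_x, phi_y])
    step = 1.0 / np.linalg.norm(A, 2) ** 2     # inverse Lipschitz constant
    z = np.zeros(A.shape[1])
    for _ in range(n_iter):
        z = soft_threshold(z + step * (A.T @ (s - A @ z)), step * lam)
    a, b = z[: phi_x.shape[1]], z[phi_x.shape[1]:]
    return phi_x @ a, phi_y @ b                # the two separated parts

n = 128
phi_x = np.eye(n)          # assumed stand-in: sparsifies isolated spikes
phi_y = dct_atoms(n)       # assumed stand-in: sparsifies oscillations

part_x = np.zeros(n)
part_x[[10, 50, 100]] = [2.0, -3.0, 2.5]
part_y = 4.0 * phi_y[:, 9] - 3.0 * phi_y[:, 21]
rng = np.random.default_rng(0)
s = part_x + part_y + 0.01 * rng.standard_normal(n)   # mixture plus noise

est_x, est_y = separate(s, phi_x, phi_y, lam=0.05)
rel_err_x = np.linalg.norm(est_x - part_x) / np.linalg.norm(part_x)
rel_err_y = np.linalg.norm(est_y - part_y) / np.linalg.norm(part_y)
print(f"relative error, part x: {rel_err_x:.3f}, part y: {rel_err_y:.3f}")
```

The residual `s - est_x - est_y` is what the penalty refuses to represent sparsely, which is how noise removal falls out as a by-product in this kind of formulation.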

Theoretical Justification

Several layers of study:
1. The uniqueness/stability results shown above apply directly, but are ineffective in handling the realistic scenario where there are many non-zero coefficients.
2. Average performance analysis (Candes & Romberg HW) could remove this shortcoming.
3. Our numerical implementation is done in the "analysis domain"; Donoho's results apply here.
4. Everything rests on a model of images as a sparse combination Φxα + Φyβ.

What About This Model?

• Coifman's dream: The concept of combining transforms to efficiently represent different signal contents was advocated by R. Coifman as early as the early 90's.
• Compression: Compression algorithms based on separate transforms for cartoon and texture were proposed by F. Meyer et al. (2002) and Wakin et al. (2002).
• Variational attempts: Modeling of texture and cartoon, and variational separation algorithms: Yves Meyer (2002), Vese & Osher (2003), Aujol et al. (2003, 2004).
• Sketchability: A recent work by Guo, Zhu, and Wu (2003) on MP and MRF modeling for sketch images.

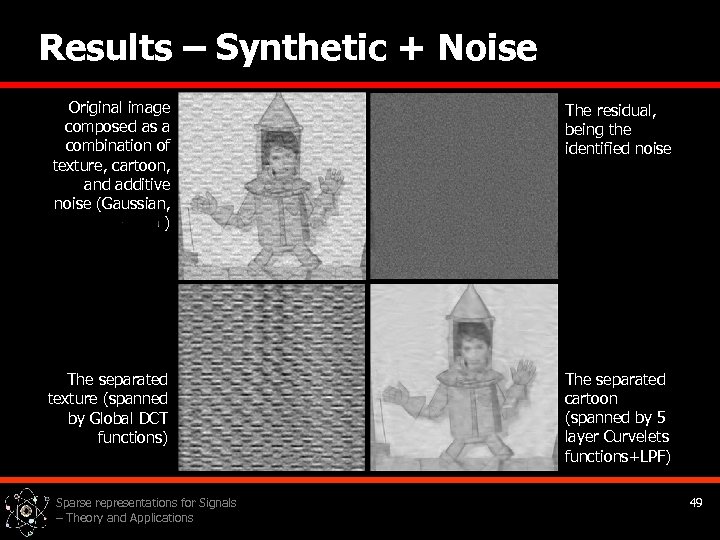

Results – Synthetic + Noise

• Original image, composed as a combination of texture, cartoon, and additive Gaussian noise
• The separated texture (spanned by global DCT functions)
• The separated cartoon (spanned by 5-layer curvelet functions + LPF)
• The residual, being the identified noise

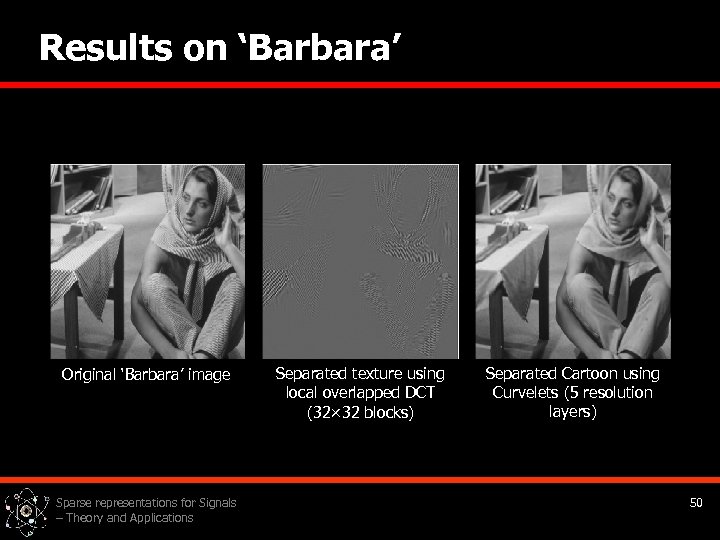

Results on 'Barbara'

• Original 'Barbara' image
• Separated texture using local overlapped DCT (32×32 blocks)
• Separated cartoon using Curvelets (5 resolution layers)

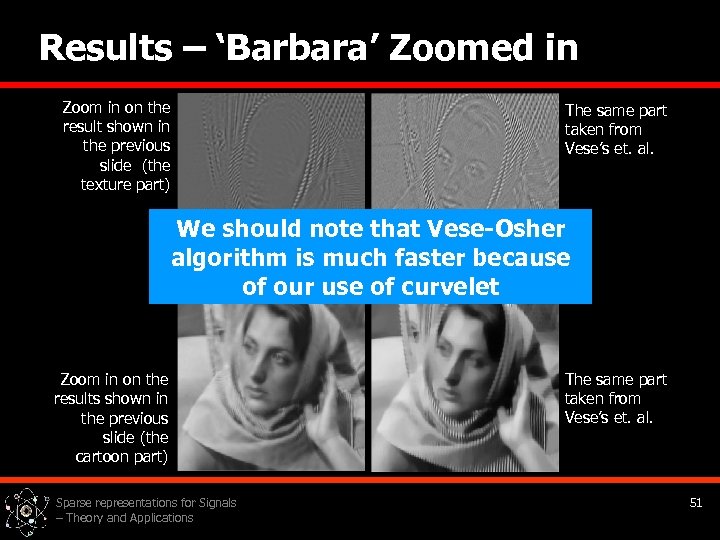

Results – 'Barbara' Zoomed In

• Zoom in on the result shown in the previous slide (the texture part), next to the same part taken from Vese et al.
• Zoom in on the result shown in the previous slide (the cartoon part), next to the same part taken from Vese et al.
• We should note that the Vese-Osher algorithm is much faster, because of our use of curvelets.

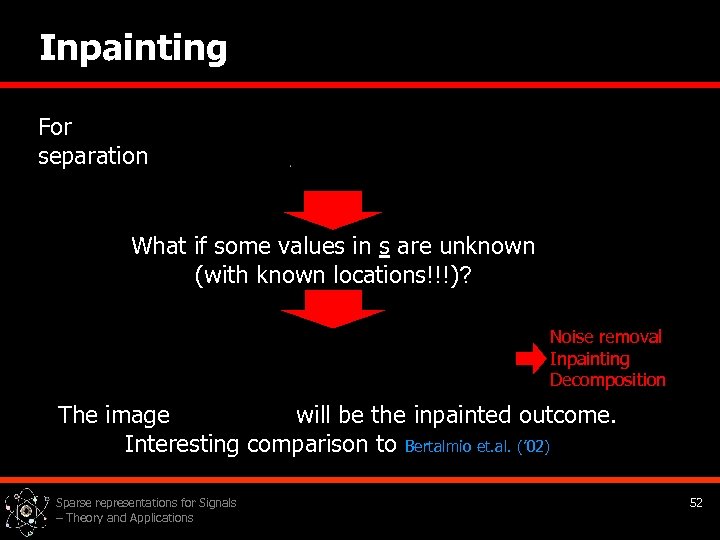

Inpainting

• The starting point is the formulation used for separation.
• What if some values in s are unknown (with known locations!)?
• The slide annotates the resulting formulation with three roles: noise removal, inpainting, and decomposition.
• The resulting image will be the inpainted outcome.
• Interesting comparison to Bertalmio et al. ('02).
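The inpainting formula itself is missing from the extracted text. As a hedged illustration of the idea, the sketch below assumes the same l1-penalized objective as a generic separation scheme, with the data-fit term restricted to the known samples through a binary mask. The ISTA solver, the toy identity/DCT dictionaries, the weight `lam`, and every name in the code are assumptions made for this example, not the authors' method.

```python
import numpy as np

def dct_atoms(n):
    """Columns are orthonormal DCT-II atoms (unit-norm cosines)."""
    t = np.arange(n)[:, None]
    k = np.arange(n)[None, :]
    atoms = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    atoms[:, 0] /= np.sqrt(2.0)
    return atoms

def soft_threshold(v, t):
    """Proximal operator of t * ||.||_1."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def inpaint(s_observed, mask, phi_x, phi_y, lam, n_iter=3000):
    """ISTA for 0.5*||mask*(s - A z)||^2 + lam*||z||_1, with A = [phi_x, phi_y]."""
    A = np.hstack([phi_x, phi_y])
    step = 1.0 / np.linalg.norm(A, 2) ** 2     # masking cannot increase the norm
    z = np.zeros(A.shape[1])
    for _ in range(n_iter):
        residual = mask * (s_observed - A @ z)  # unknown samples contribute nothing
        z = soft_threshold(z + step * (A.T @ residual), step * lam)
    a, b = z[: phi_x.shape[1]], z[phi_x.shape[1]:]
    return phi_x @ a, phi_y @ b

n = 128
phi_x, phi_y = np.eye(n), dct_atoms(n)         # assumed toy dictionaries

clean = np.zeros(n)
clean[[20, 90]] = [2.5, -3.0]                  # spike-like part
clean += 4.0 * phi_y[:, 9] - 3.0 * phi_y[:, 21]   # oscillating part

mask = np.ones(n, dtype=bool)
mask[[3, 17, 30, 46, 59, 77, 95, 110]] = False    # known locations of missing samples

rng = np.random.default_rng(0)
s_observed = np.where(mask, clean + 0.01 * rng.standard_normal(n), 0.0)

est_x, est_y = inpaint(s_observed, mask, phi_x, phi_y, lam=0.05)
restored = est_x + est_y                       # sum of the two parts fills the holes
fill_err = np.linalg.norm(restored[~mask] - clean[~mask])
fill_ref = np.linalg.norm(clean[~mask])
print(f"relative error on the missing samples: {fill_err / fill_ref:.3f}")
```

Because the sparse coefficients are fitted only to the known samples, the synthesized signal extends naturally into the holes. In this toy setup a spike located exactly on a missing sample could not be recovered, so the example keeps the spikes on observed positions.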

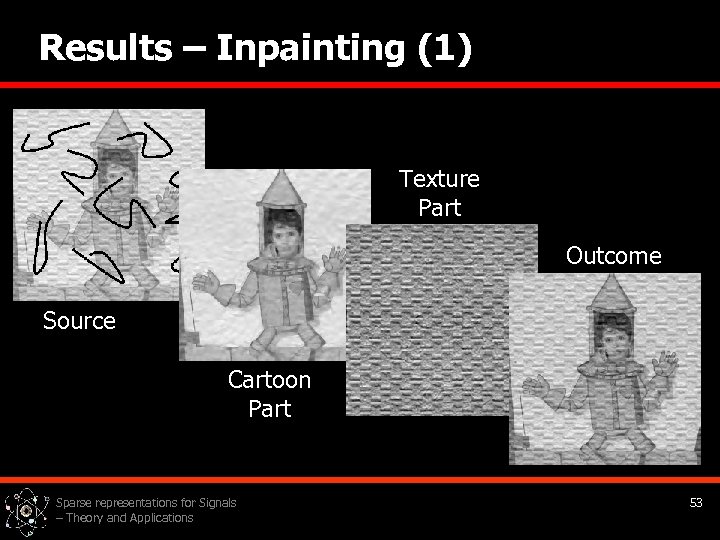

Results – Inpainting (1)

Panels: Source, Cartoon Part, Texture Part, Outcome

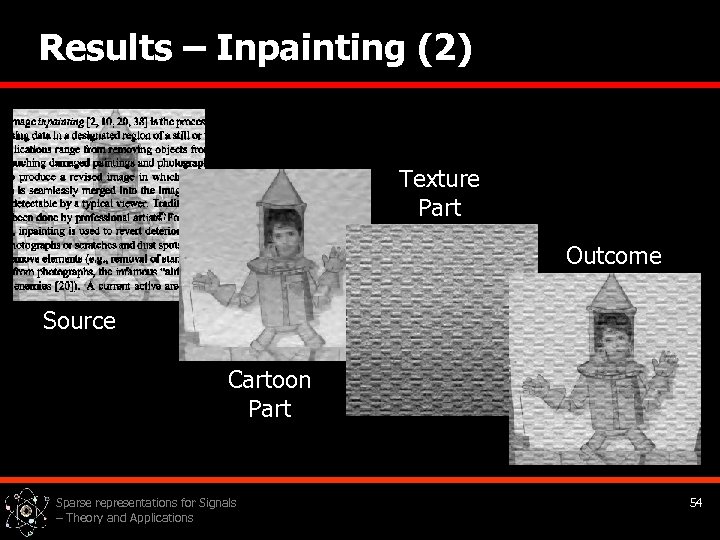

Results – Inpainting (2)

Panels: Source, Cartoon Part, Texture Part, Outcome

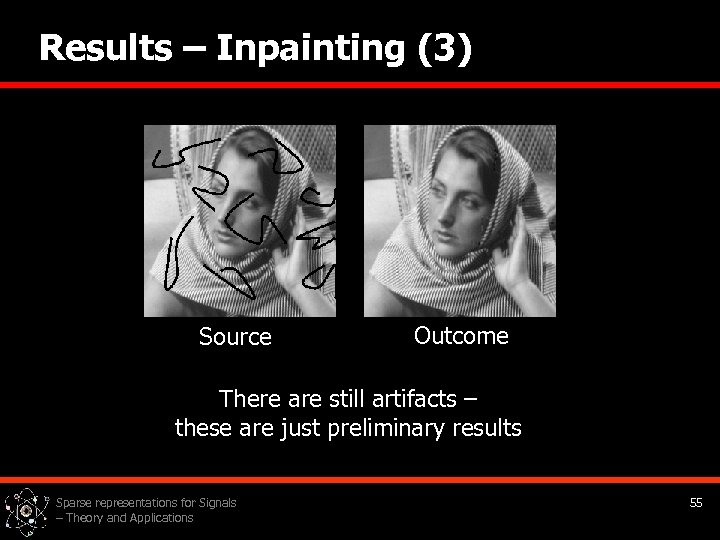

Results – Inpainting (3)

Panels: Source, Outcome

There are still artifacts; these are just preliminary results.

Today We Have Discussed

1. Introduction: Sparse & overcomplete representations, pursuit algorithms
2. Success of BP/MP as Forward Transforms: Uniqueness, equivalence of BP and MP
3. Success of BP/MP for Inverse Problems: Uniqueness, stability of BP and MP
4. Applications: Image separation and inpainting

Summary

• Pursuit algorithms are successful as
  § a forward transform: we shed light on this behavior.
  § a regularization scheme in inverse problems: we have shown that the noiseless results extend nicely to treat this case as well.
• The dream: the over-completeness and sparseness ideas are highly effective, and should replace existing methods in signal representations and inverse problems.
• We would like to contribute to this change by
  § supplying clear(er) explanations of the BP/MP behavior,
  § improving the numerical tools involved, and then
  § deploying them to applications.

Future Work

• Many intriguing questions:
  § What dictionary to use? Relation to learning? SVM?
  § Improved bounds: average performance assessments?
  § Relaxed notion of sparsity? When is zero really zero?
  § How to speed up the BP solver (accurate/approximate)?
  § Applications: Coding? Restoration? …
• More information (including these slides) is available at http://www.cs.technion.ac.il/~elad

Some of the People Involved

Donoho, Stanford; Mallat, Paris; Coifman, Yale; Daubechies, Princeton; Temlyakov, USC; Gribonval, INRIA; Nielsen, Aalborg; Gilbert, Michigan; Tropp, Michigan; Strohmer, UC-Davis; Candes, Caltech; Romberg, Caltech; Tao, UCLA; Huo, Georgia Tech; Rao, UCSD; Saunders, Stanford; Starck, Paris; Zibulevsky, Technion; Nemirovski, Technion; Feuer, Technion; Bruckstein, Technion
