- Number of slides: 25

Site Throughput Review and Issues
Shawn McKee / University of Michigan
US ATLAS Tier 2/Tier 3 Workshop, May 27th, 2008

US ATLAS Throughput Working Group

- In Fall 2007 a “Throughput” working group was put together to study our current Tier-1 and Tier-2 configurations and tunings and see where we could improve.
- First steps were identifying current levels of performance.
- Typically sites performed significantly below expectations.
- During weekly calls we would schedule Tier-1 to Tier-2 testing for the following week. Gather a set of experts on a chat line or phone line and debug the setup.
- See http://www.usatlas.bnl.gov/twiki/bin/view/Admins/LoadTests.html

Roadmap for Data Transfer Tuning

- Our first goal is to achieve ~200 MB (bytes) per second from the Tier-1 to each Tier-2. This can be achieved by either or both of:
  - A small number of high-performance systems (1-5) at each site transferring data via GridFTP, FDT or similar applications.
    - Single systems benchmarked with I/O > 200 MB/sec
    - 10GE NICs (“back-to-back” tests achieved 9981 Mbps)
  - A large number of “average” systems (10-50), each transferring to a corresponding system at the other site. This is a good match to an SRM/dCache transfer of many files between sites.
    - Typical current disks are 40-70 MB/sec
    - Gigabit network is 125 MB/sec
    - Doesn’t require great individual performance per host: 5-25 MB/sec
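The "many average systems" option is mostly arithmetic, so a rough sketch may help check the quoted figures. The function names below are illustrative only (they are not part of any working-group tooling), and the sketch assumes decimal units (1 MB = 10^6 bytes) and ignores protocol overhead:

```python
import math

TARGET_MB_S = 200  # Tier-1 -> Tier-2 goal quoted on the slide


def hosts_needed(per_host_mb_s, target_mb_s=TARGET_MB_S):
    """Number of parallel host pairs needed to reach the target rate."""
    return math.ceil(target_mb_s / per_host_mb_s)


def mbps_to_mb_s(mbps):
    """Convert megabits per second to megabytes per second."""
    return mbps / 8


if __name__ == "__main__":
    # Per-host rates taken from the slide's 5-25 MB/sec range.
    for rate in (5, 25):
        print(f"{rate} MB/s per host -> {hosts_needed(rate)} host pairs")
    print(f"1 Gbps    = {mbps_to_mb_s(1000):.0f} MB/s")
    print(f"9981 Mbps = {mbps_to_mb_s(9981):.1f} MB/s")
```

At 5 to 25 MB/sec per host, between 40 and 8 host pairs would reach the 200 MB/sec goal, which is consistent with the 10-50 system range quoted above.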

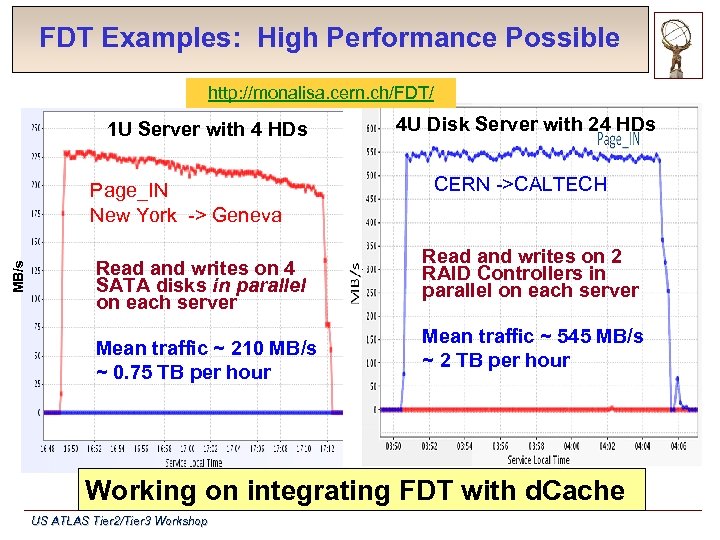

FDT Examples: High Performance Possible (http://monalisa.cern.ch/FDT/)

- 1U server with 4 HDs, New York -> Geneva: reads and writes on 4 SATA disks in parallel on each server. Mean traffic ~210 MB/s, ~0.75 TB per hour.
- 4U disk server with 24 HDs, CERN -> CALTECH: reads and writes on 2 RAID controllers in parallel on each server. Mean traffic ~545 MB/s, ~2 TB per hour.
- Working on integrating FDT with dCache.
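As a quick sanity check on the per-hour volumes quoted for FDT, the conversion from a mean rate to TB per hour can be sketched as below. This assumes decimal units (1 TB = 10^6 MB); the helper name is hypothetical:

```python
def tb_per_hour(mean_mb_s):
    """Convert a mean rate in MB/s to TB moved per hour (1 TB = 1e6 MB)."""
    return mean_mb_s * 3600 / 1_000_000


if __name__ == "__main__":
    # Mean rates quoted on the slide for the two FDT examples.
    for label, rate in (("New York -> Geneva", 210), ("CERN -> CALTECH", 545)):
        print(f"{label}: {rate} MB/s is about {tb_per_hour(rate):.2f} TB/hour")
```

Both results (roughly 0.76 and 1.96 TB per hour) agree with the rounded figures on the slide.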

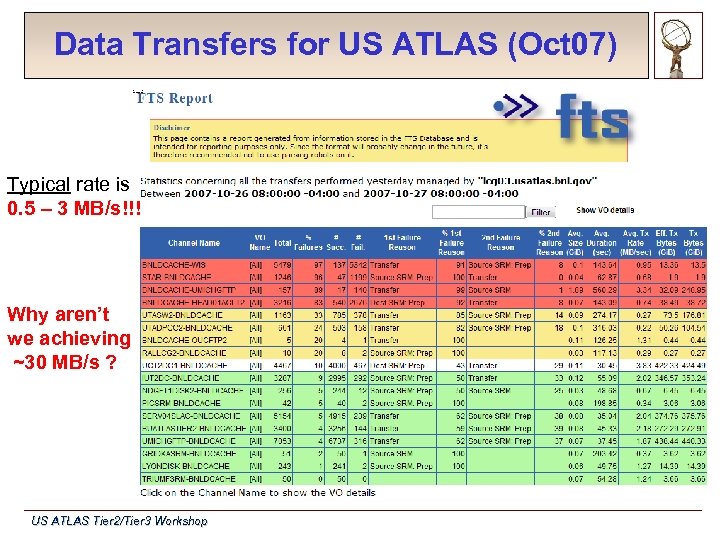

Data Transfers for US ATLAS (Oct 07)

Typical rate is 0.5 – 3 MB/s!!! Why aren’t we achieving ~30 MB/s?

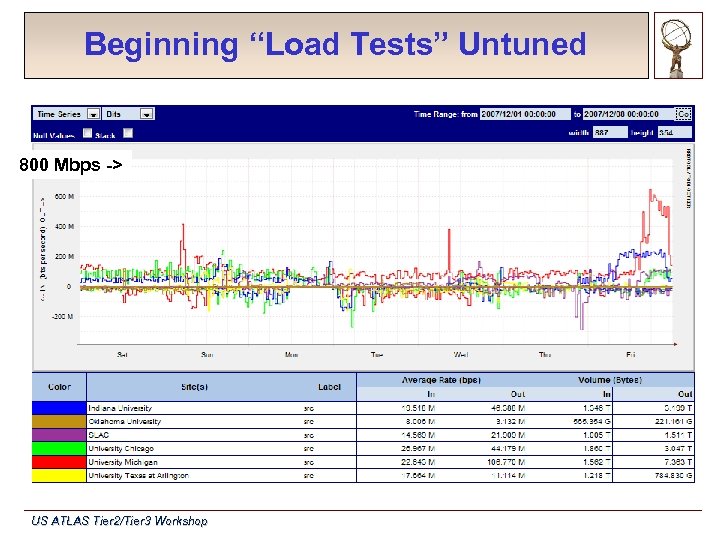

Beginning “Load Tests”

Untuned: 800 Mbps

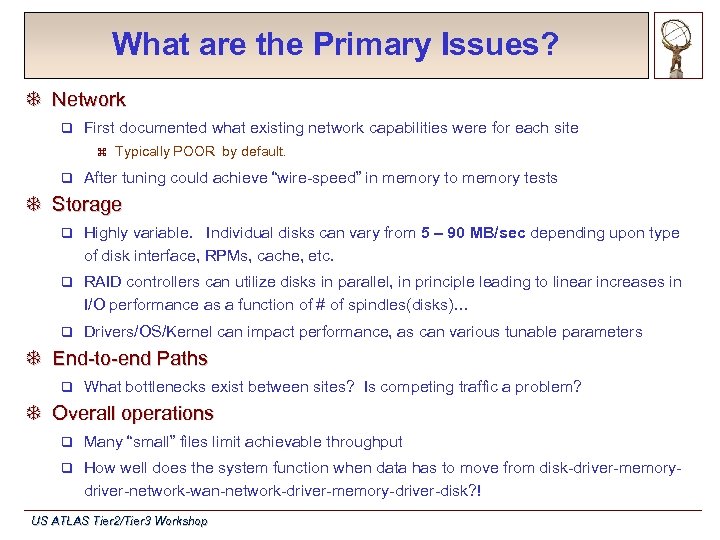

What are the Primary Issues?

- Network
  - First documented what existing network capabilities were for each site
    - Typically POOR by default.
  - After tuning could achieve “wire-speed” in memory-to-memory tests
- Storage
  - Highly variable. Individual disks can vary from 5 – 90 MB/sec depending upon type of disk interface, RPMs, cache, etc.
  - RAID controllers can utilize disks in parallel, in principle leading to linear increases in I/O performance as a function of the number of spindles (disks)…
  - Drivers/OS/Kernel can impact performance, as can various tunable parameters
- End-to-end Paths
  - What bottlenecks exist between sites? Is competing traffic a problem?
- Overall operations
  - Many “small” files limit achievable throughput
  - How well does the system function when data has to move from disk-driver-memory-driver-network-WAN-network-driver-memory-driver-disk?!

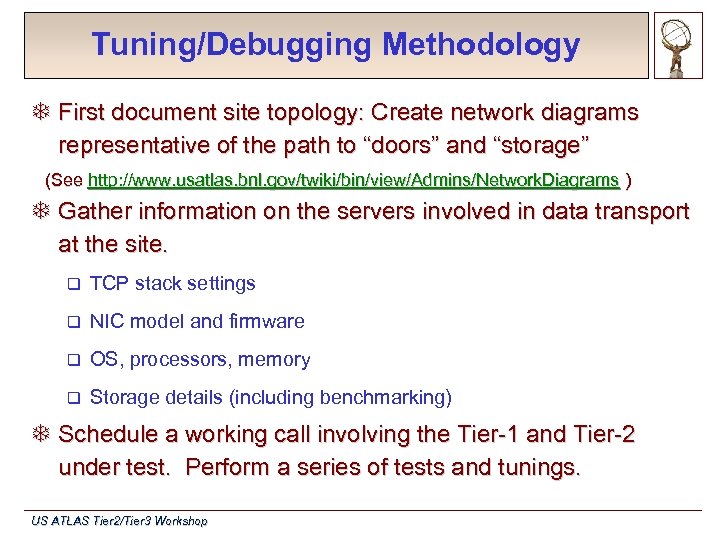

Tuning/Debugging Methodology

- First document site topology: create network diagrams representative of the path to “doors” and “storage” (see http://www.usatlas.bnl.gov/twiki/bin/view/Admins/NetworkDiagrams)
- Gather information on the servers involved in data transport at the site.
  - TCP stack settings
  - NIC model and firmware
  - OS, processors, memory
  - Storage details (including benchmarking)
- Schedule a working call involving the Tier-1 and Tier-2 under test. Perform a series of tests and tunings.

Network Tools and Tunings

- The network stack is the 1st candidate for optimization
  - The amount of memory allocated for data “in-flight” determines the maximum achievable bandwidth for a given source-destination pair
  - Parameters (some example settings):
    - net.core.rmem_max = 20000000
    - net.core.wmem_max = 20000000
    - net.ipv4.tcp_rmem = 4096 87380 20000000
    - net.ipv4.tcp_wmem = 4096 87380 20000000
- Other useful tools: Iperf, NDT, wireshark, tracepath, ethtool, ifconfig, sysctl, netperf, FDT.
- Lots more info/results in this area available online…
  - http://www.usatlas.bnl.gov/twiki/bin/view/Admins/NetworkPerformanceP2.html
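The link between "in-flight" memory and achievable bandwidth is the bandwidth-delay product: a single TCP stream can move at most one window of data per round trip. The sketch below illustrates that relationship using the buffer sizes from the example settings. The round-trip times are assumptions chosen purely for illustration (they are not measured values for any US ATLAS path), and the model is simplified: it treats the whole buffer as usable window and ignores loss and kernel overhead.

```python
def max_throughput_mb_s(window_bytes, rtt_s):
    """Upper bound on single-stream TCP throughput: one window per RTT."""
    return window_bytes / rtt_s / 1e6


def required_window_bytes(target_mb_s, rtt_s):
    """Bandwidth-delay product: bytes in flight needed to sustain target."""
    return target_mb_s * 1e6 * rtt_s


if __name__ == "__main__":
    default_buf = 87380      # middle value of tcp_rmem in the example
    tuned_buf = 20_000_000   # maximum value in the example settings

    # Hypothetical round-trip times, in seconds.
    for rtt in (0.02, 0.07, 0.10):
        print(
            f"RTT {rtt * 1000:.0f} ms: "
            f"default <= {max_throughput_mb_s(default_buf, rtt):.2f} MB/s, "
            f"tuned <= {max_throughput_mb_s(tuned_buf, rtt):.0f} MB/s, "
            f"gigabit needs {required_window_bytes(125, rtt) / 1e6:.2f} MB of buffer"
        )
```

Under these assumptions, a stream held near the 87380-byte default is limited to a few MB/s on a wide-area path, while a 20 MB maximum leaves room for hundreds of MB/s.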

Achieving Good Networking Results

- Test system-pairs with Iperf (TCP) to determine achievable bandwidth
- Check ‘ifconfig

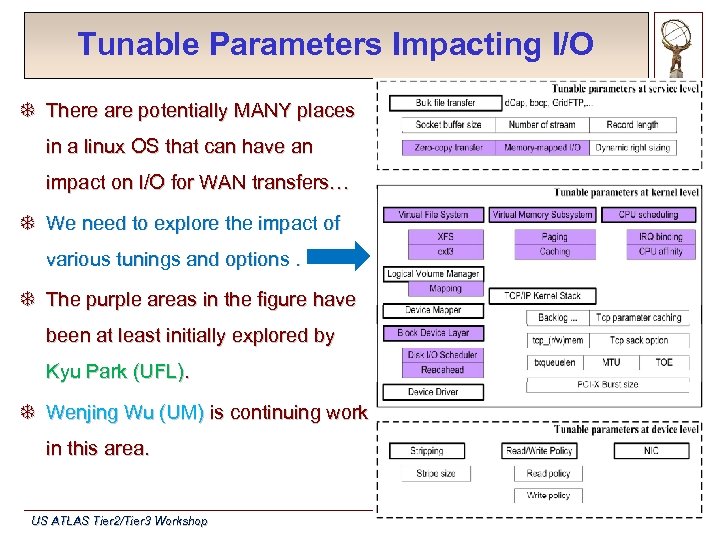

Tunable Parameters Impacting I/O

- There are potentially MANY places in a Linux OS that can have an impact on I/O for WAN transfers…
- We need to explore the impact of various tunings and options.
- The purple areas in the figure have been at least initially explored by Kyu Park (UFL).
- Wenjing Wu (UM) is continuing work in this area.

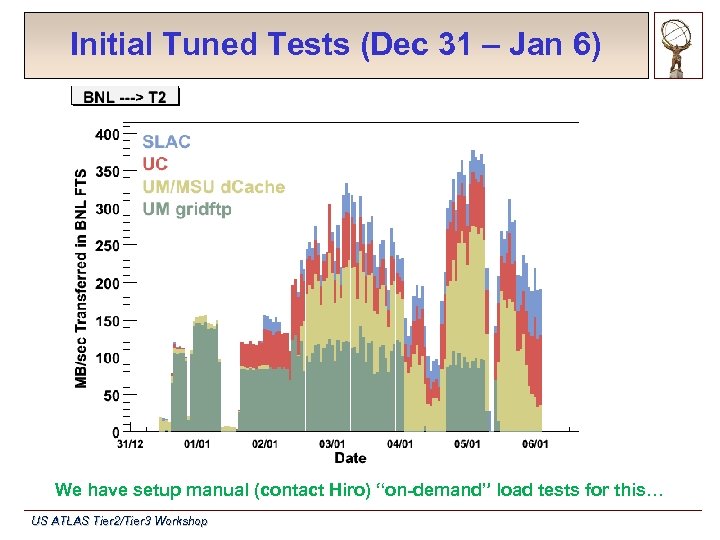

Initial Tuned Tests (Dec 31 – Jan 6)

We have set up manual “on-demand” load tests for this (contact Hiro)…

Initial Findings (1)

- Most sites had at least a gigabit-capable path in principle.
  - Some sites had < 1 Gbit/sec bottlenecks that weren’t discovered until we started trying to document and test sites
- Many sites had 10GE “in principle” but getting end-to-end connections clear at 10GE required changes
- Most sites were un-tuned or not properly tuned to achieve high throughput
- Sometimes flaky hardware was the issue:
  - Bad NICs, bad cables, underpowered CPU, insufficient memory, etc.
- BNL was limited by the GridFTP doors to around 700 MB/s

Initial Findings (2)

- The network is not the bottleneck for properly tuned hosts
- Memory-to-disk tests are interesting in that they can expose problematic I/O systems (or give confidence in them)
- Disk-to-disk tests do poorly. Still a lot of work required in this area. Possible issues:
  - Wrongly tuned parameters for this task (driver, kernel, OS)
  - Competing I/O interfering
  - Conflicts/inefficiency in the Linux “data path” (bus-driver-memory)
  - Badly organized hardware, e.g., network and storage cards sharing the same bus
  - Underpowered hardware or bad applications for driving gigabit links

T1/T2 Host Throughput Monitoring

- How it works
  - Control plugin for MonALISA runs iperf and gridftp tests twice a day from select Tier 1 (BNL) to Tier 2 hosts and from each Tier 2 host to Tier 1 (production SE hosts).
  - Results are logged to file.
  - Monitoring plugin for MonALISA reads log and graphs results.
- What it currently provides
  - Network throughput to determine if network TCP parameters need to be tuned.
  - Gridftp throughput to determine degradation due to gridftp software and disk.
  - Easy configuration to add or remove tests.
- What else can be added within this framework
  - Gridftp memory to memory and memory to disk (and vice-versa) tests to isolate disk and software degradation separately.
  - SRM tests to isolate SRM degradation
  - FTS tests to isolate FTS degradation
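The reasoning behind pairing the two tests can be sketched in a few lines: if the memory-to-memory (iperf) rate is already low, the network or TCP tuning is suspect; if iperf is fine but the gridftp rate is much lower, the loss lies in the gridftp software or the disks. Everything in this sketch is hypothetical, including the function name, the 80% threshold, and the sample numbers. It is not the logic of the actual MonALISA plugin, whose log format is not described here.

```python
def diagnose(iperf_mb_s, gridftp_mb_s, expected_mb_s, tolerance=0.8):
    """Attribute a shortfall to the network or to the gridftp/disk layer.

    tolerance is an assumed threshold: a rate below tolerance * reference
    counts as degraded.
    """
    if iperf_mb_s < tolerance * expected_mb_s:
        return "network"          # memory-to-memory path already too slow
    if gridftp_mb_s < tolerance * iperf_mb_s:
        return "gridftp-or-disk"  # network fine, loss is above it
    return "ok"


if __name__ == "__main__":
    # Made-up sample measurements against a gigabit (125 MB/s) expectation.
    samples = [(40, 30, 125), (115, 35, 125), (115, 100, 125)]
    for iperf, gridftp, expected in samples:
        print(iperf, gridftp, "->", diagnose(iperf, gridftp, expected))
```

Adding the memory-to-disk, SRM and FTS tests listed above would extend the same idea, with each extra layer compared against the one beneath it.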

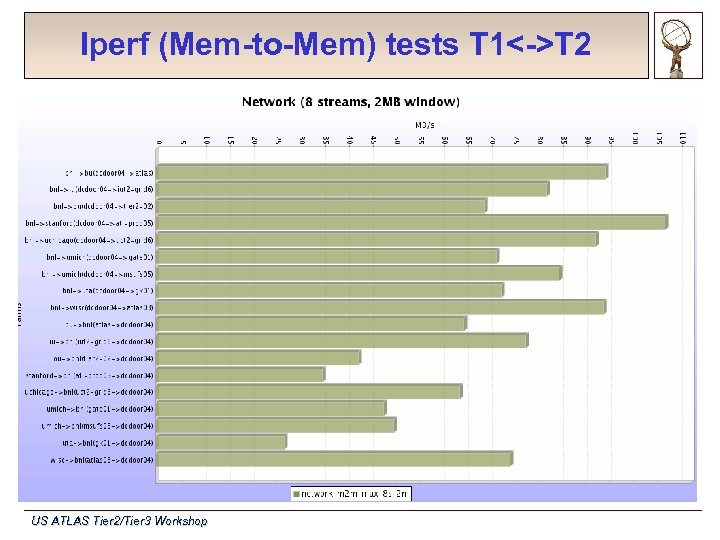

Iperf (Mem-to-Mem) tests T1<->T2

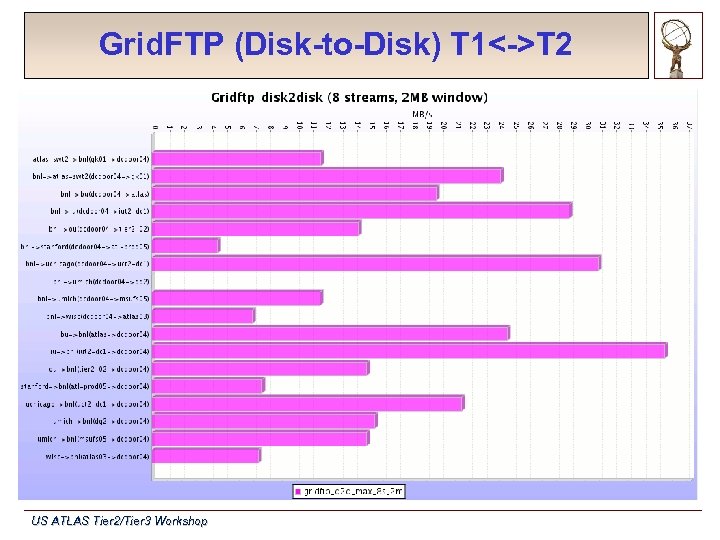

GridFTP (Disk-to-Disk) T1<->T2

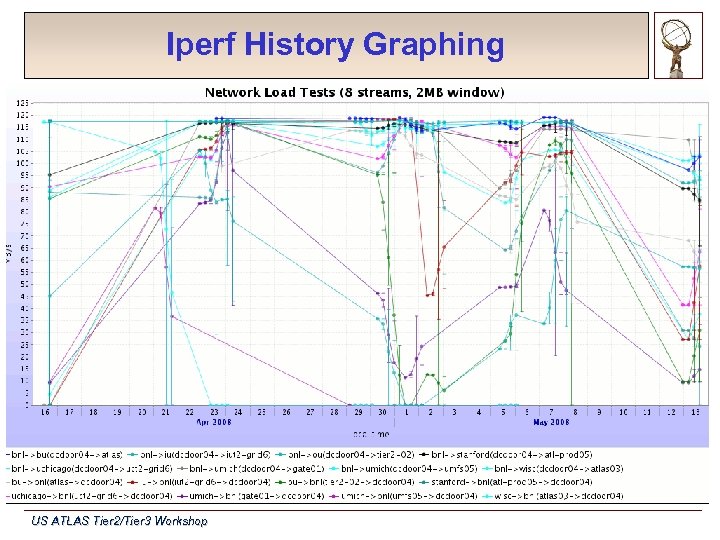

Iperf History Graphing

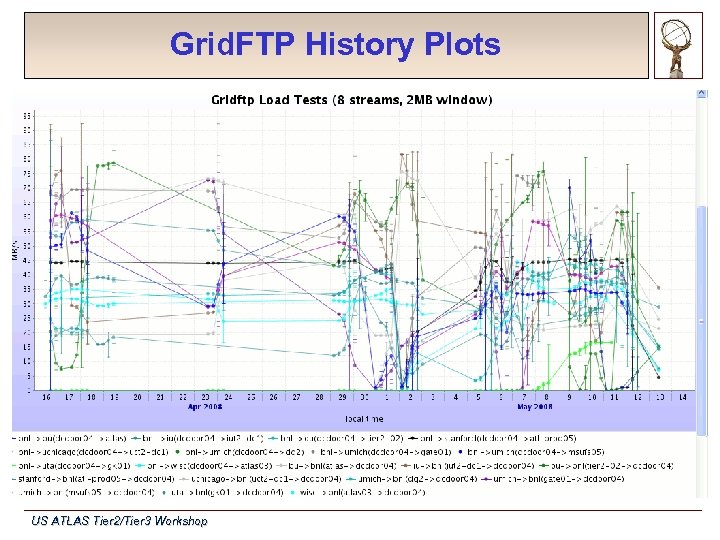

GridFTP History Plots

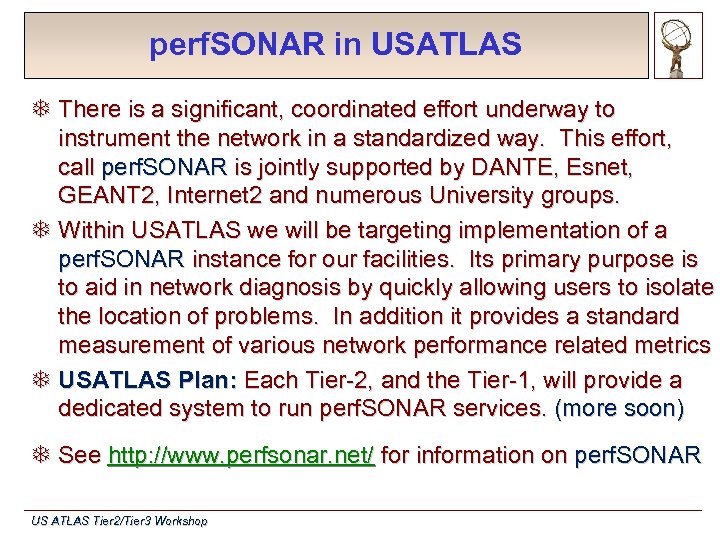

perfSONAR in USATLAS

- There is a significant, coordinated effort underway to instrument the network in a standardized way. This effort, called perfSONAR, is jointly supported by DANTE, ESnet, GEANT2, Internet2 and numerous university groups.
- Within USATLAS we will be targeting implementation of a perfSONAR instance for our facilities. Its primary purpose is to aid in network diagnosis by quickly allowing users to isolate the location of problems. In addition it provides a standard measurement of various network performance related metrics.
- USATLAS Plan: Each Tier-2, and the Tier-1, will provide a dedicated system to run perfSONAR services. (more soon)
- See http://www.perfsonar.net/ for information on perfSONAR

Near-term Steps for Working Group

- Lots of work is needed for our Tier-2s in end-to-end throughput.
- Each site will want to explore options for system/architecture optimization specific to their hardware.
- Most reasonably powerful storage systems should be able to exceed 200 MB/s. Getting this consistently across the WAN is the challenge!
- For each Tier-2 we need to tune GSIFTP (or FDT) transfers between “powerful” storage systems to achieve “bottleneck” limited performance
  - Implies we document the bottleneck: I/O subsystem, network, processor?
  - Include 10GE connected pairs where possible… target 400 MB/s per host-pair
- For those sites supporting SRM, try many host-pair transfers to “go wide” in achieving high-bandwidth transfers.
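"Bottleneck limited" here means the transfer runs at the rate of its slowest stage, so documenting the bottleneck amounts to measuring each stage and taking the minimum. A minimal sketch of that bookkeeping follows. The stage names and rates are invented for illustration and do not describe any particular site:

```python
def find_bottleneck(stage_rates_mb_s):
    """Return (stage name, rate) for the slowest stage of a transfer path."""
    name = min(stage_rates_mb_s, key=stage_rates_mb_s.get)
    return name, stage_rates_mb_s[name]


if __name__ == "__main__":
    # Hypothetical per-stage benchmarks for one host pair, in MB/s.
    host_pair = {
        "source disk read": 300,
        "10GE network (iperf)": 1100,
        "destination disk write": 180,
    }
    stage, rate = find_bottleneck(host_pair)
    print(f"Expected ceiling: {rate} MB/s, limited by {stage}")
    print("Meets 400 MB/s host-pair target:", rate >= 400)
```

If the measured end-to-end rate falls well below this ceiling, the shortfall points at something not yet benchmarked, such as the processor or the transfer application itself.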

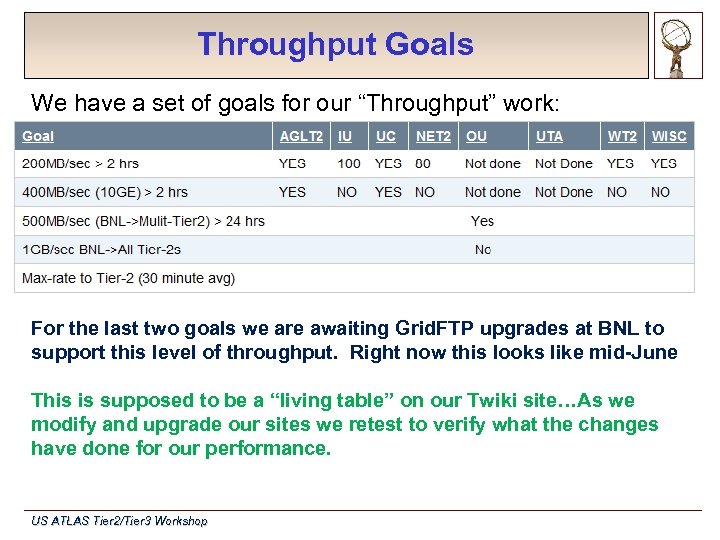

Throughput Goals

We have a set of goals for our “Throughput” work:

For the last two goals we are awaiting GridFTP upgrades at BNL to support this level of throughput. Right now this looks like mid-June.

This is supposed to be a “living table” on our Twiki site… As we modify and upgrade our sites we retest to verify what the changes have done for our performance.

Plans and Future Goals

- We still have a significant amount of work to do to reach consistently high throughput between our sites.
- Performance analysis is central to identifying bottlenecks and achieving high performance.
- Automated testing via BNL’s MonALISA plugin will be maintained to provide both baseline and near-term monitoring.
- perfSONAR will be deployed in the next month or so.
- Continue “on-demand” load-tests to verify burst capacity and change impact
- Finish all sites in our goal table & document our methodology

Questions?

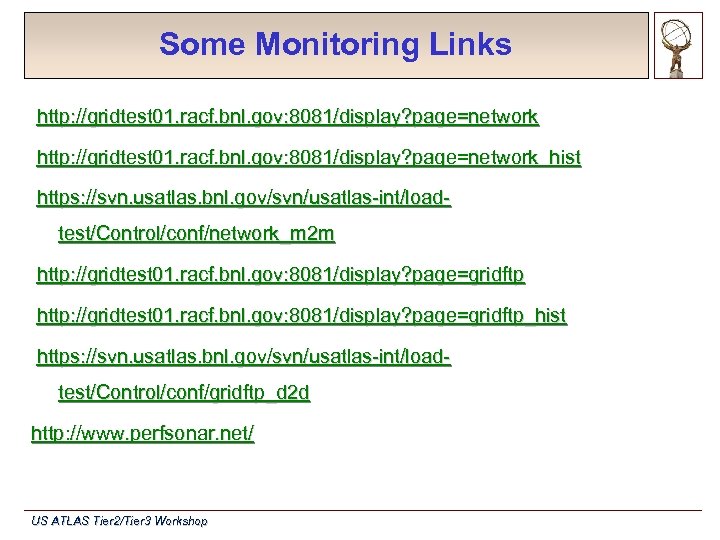

Some Monitoring Links

- http://gridtest01.racf.bnl.gov:8081/display?page=network_hist
- https://svn.usatlas.bnl.gov/svn/usatlas-int/loadtest/Control/conf/network_m2m
- http://gridtest01.racf.bnl.gov:8081/display?page=gridftp_hist
- https://svn.usatlas.bnl.gov/svn/usatlas-int/loadtest/Control/conf/gridftp_d2d
- http://www.perfsonar.net/
