660f29491ac9cf84f5503c285d122c0a.ppt

- Number of slides: 59

Simulation Semantics Ben Bergen Tuesday Seminar October 7, 2003

Thanks: Jerome Feldman, Susanne Gahl, Art Glenberg, Teenie Matlock, Shweta Narayan, Srini Narayanan, Terry Regier, Amy Schafer

The basic question What is meaning?

The logical solution One popular answer is that meaning is like a formal logic system • Words map onto pieces of logical statements • Verbs map onto predicates • Nouns identify arguments of those predicates

The logical solution Language: John climbed the tree Logical Form: climbed(John, tree)
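
The mapping on this slide can be sketched in a few lines of Python. This is only an illustration of the idea that the verb supplies the predicate and the nouns supply its arguments; the names `LogicalForm`, `to_logical_form`, and `render` are hypothetical and do not come from the talk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogicalForm:
    """A predicate applied to an ordered tuple of arguments."""
    predicate: str
    arguments: tuple


def to_logical_form(subject, verb, obj):
    # Assumption: a simple subject-verb-object clause.
    # The verb maps onto the predicate; the nouns fill its argument slots.
    return LogicalForm(predicate=verb, arguments=(subject, obj))


def render(lf):
    # Produce the conventional predicate(arg1, arg2) notation.
    return f"{lf.predicate}({', '.join(lf.arguments)})"


lf = to_logical_form("John", "climbed", "tree")
print(render(lf))  # climbed(John, tree)
```

Note that the output is just another string of symbols, which is the point of Problem 5 on the following slide.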

The logical solution
• Problem 1: We’re terrible at logic
• Problem 2: We understand language before logic
• Problem 3: What does “Hi” mean, logically?
• Problem 4: No empirical evidence that language users perform logical operations during language use
• Problem 5: Even if meaning involves logic, this just pushes back the question of meaning: what does it mean for climbed(John, tree) to be meaningful?

Situating meaning One partial solution to the problem of what the logic means is to say that it’s about the world Once you have a logical representation of the meaning of a sentence, you can interpret it in terms of the world John climbed the tree is true if and only if some particular John climbed some particular tree

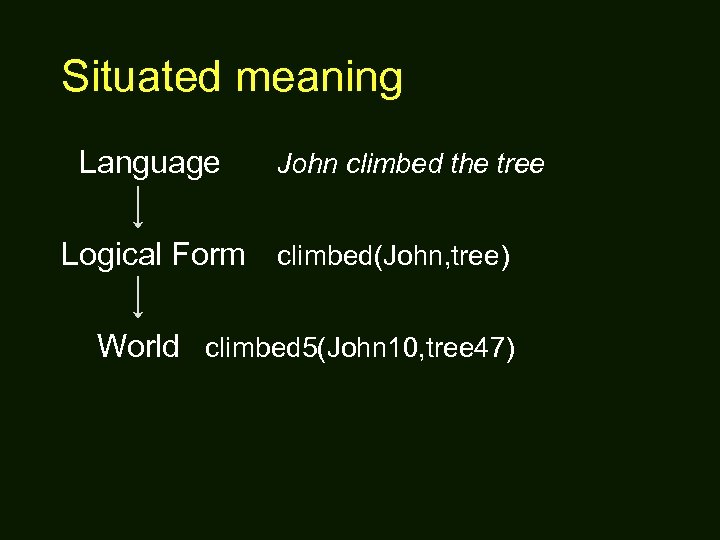

Situated meaning Language: John climbed the tree Logical Form: climbed(John, tree) World: climbed_5(John_10, tree_47)
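
A minimal sketch of this "interpret it in terms of the world" step, assuming a toy world model. The entity table, the fact set, and the function names below are hypothetical. The entity identifiers echo the indexed individuals in the World line, and the event index on the predicate is left out for simplicity.

```python
# Hypothetical world model: each entity id is tagged with the noun that names it.
WORLD_ENTITIES = {
    "John_10": "John",
    "John_11": "John",
    "tree_47": "tree",
    "tree_48": "tree",
}

# Hypothetical facts: (predicate, agent id, patient id).
WORLD_FACTS = {("climbed", "John_10", "tree_47")}


def referents(noun):
    """All particular individuals in the world that the noun could pick out."""
    return [eid for eid, kind in WORLD_ENTITIES.items() if kind == noun]


def is_true(predicate, arg1, arg2):
    # The sentence is true iff SOME particular arg1 stands in the predicate
    # relation to SOME particular arg2.
    return any(
        (predicate, a, b) in WORLD_FACTS
        for a in referents(arg1)
        for b in referents(arg2)
    )


print(is_true("climbed", "John", "tree"))
print(is_true("climbed", "tree", "John"))
```

The sketch only works because the world model is complete and given in advance, which is what the problems on the next slide call into question.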

Situating meaning
• Problem 1: We have incomplete knowledge of the world
• Problem 2: Some of our world knowledge is created through language, and thus cannot presuppose it. (What’s a Jabberwocky?)
• Problem 3: Many to most of the semantic distinctions we can make have to do with our interpretation of the world, not the world itself
– The dresser runs along the wall vs. The dresser leans against the wall.
– John isn’t stingy, he’s thrifty.
• Problem 4: When we say that meaning is about the world, what does that mean is going on inside a language user’s head?

How about embodiment? One candidate solution is the idea of embodiment (Lakoff 1987, Langacker 1991, Talmy 2000) Language gains meaning through embodied experiences that the language user has had, that the language relates to So language is meaningful by evoking recollection of past experiences, often combined in new ways

Simulation Understanding language requires the language user to simulate, that is, mentally imagine, the content of the utterance If I say “The pink elephants danced across the stage”, you have to imagine the scene If I say “John climbed the tree”, you imagine a human male climbing a tree and experience motor and perceptual content of performing and/or observing the action That’s what it means for that sentence to be meaningful - communication is mind control

“I don’t buy it” Brain areas that control specific motor actions are used to perceive, imagine, and process verbs and sentences about those very same actions Brain areas responsible for specific parts of the visual field are also used to process sentences that describe events occurring in those same regions Motor and perceptual imagery are components of meaningful language understanding

Simulated meaning Language ? Simulation World John climbed the tree

Mirror neurons Parts of the motor systems are used for things other than action (mirror neurons) • Pre-motor and parietal cortex are used in action perception (Gallese et al. 1996, Rizzolatti et al. 1996, Buccino 2002) • Imagination of motor action (Jeannerod 1996, Lotze et al 1999) • Recall of motor action (Nyberg et al 2001)

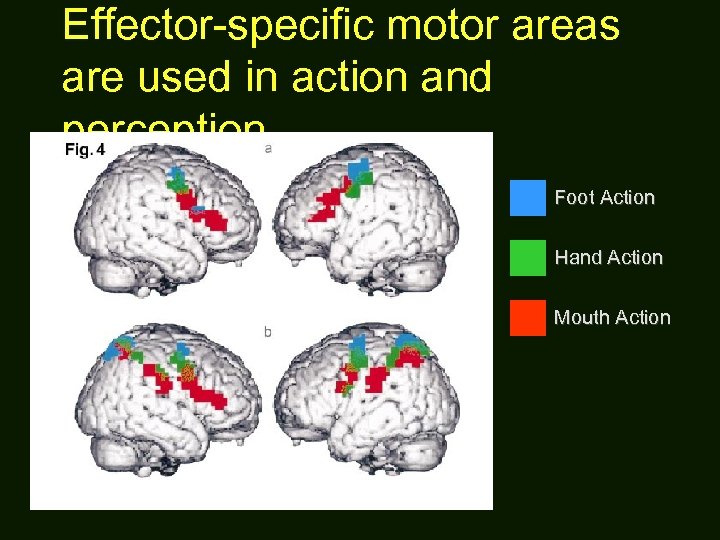

Effector-specific motor areas are used in action and perception Foot Action Hand Action Mouth Action

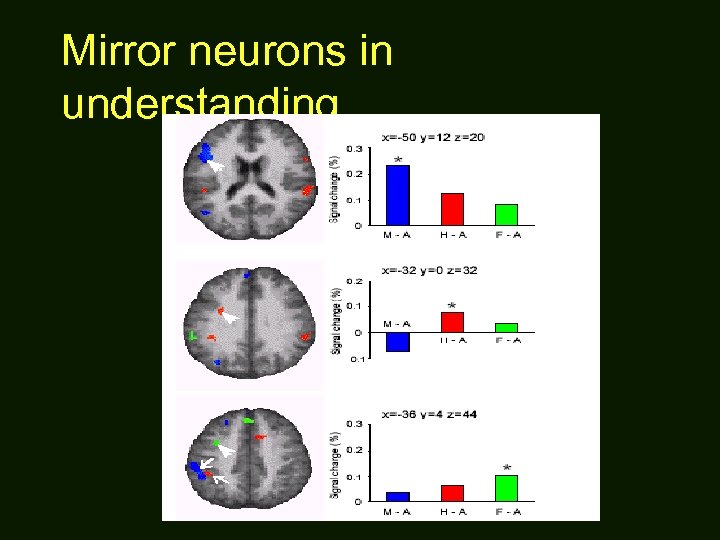

Mirror neurons Processing motor action language • Differential motor area activation to mouth, leg verbs in lexical decision (Pulvermüller et al 2001) • Differential motor area activation in passive listening to hand, mouth, leg sentences (Tettamanti et al ms)

Mirror neurons in understanding

Mirror neurons In other words, understanding the meaning of words such as walk or grasp involves mental simulation, executed by the same functional clusters used in action and perception That is, in understanding action language like walk or grasp, one imagines oneself walking or grasping an object, for example

Study 1 - A behavioral study Are the same structures used for perceiving motor actions also used for understanding motor language? If so, processing of action images and action language should interfere with each other

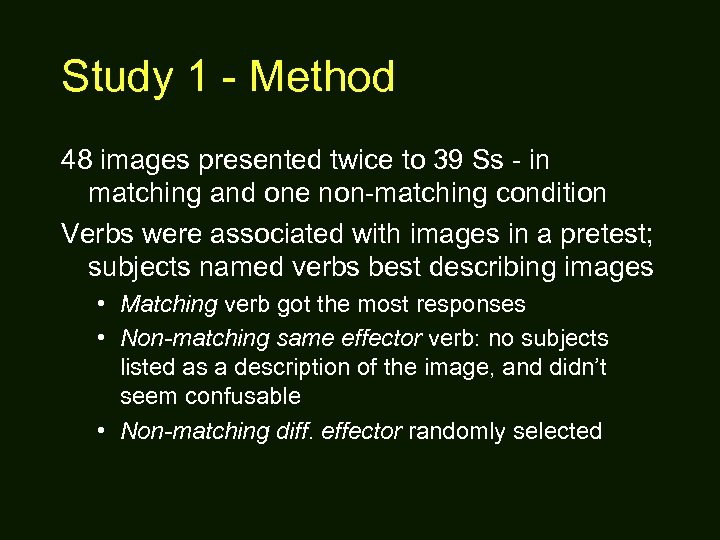

Study 1 - Method An image-verb matching task • A visual image (1 s) representing an action using one of three effectors - mouth, hand, or foot. • A mask, for 450 msec (50 msec blank) • A written verb, either a good descriptor of the image or not • Ss decided quickly if verb described image well

Study 1 - Method Conditions • Matching: verb matched image (1/2 trials) • Non-matching, same effector (1/4 trials) • Non-matching, different effector (1/4 trials)

Study 1 - Method The perception and verbal meaning sub-tasks might use the same (mirror) circuitry If so, non-matching images and verbs should activate different circuits In general, the more similar representations are (e.g. if they share an effector) the more mutual inhibition they should exhibit Hypothesis: Interference when verb and image don’t match but use same effector, thus slower reactions than with different effectors

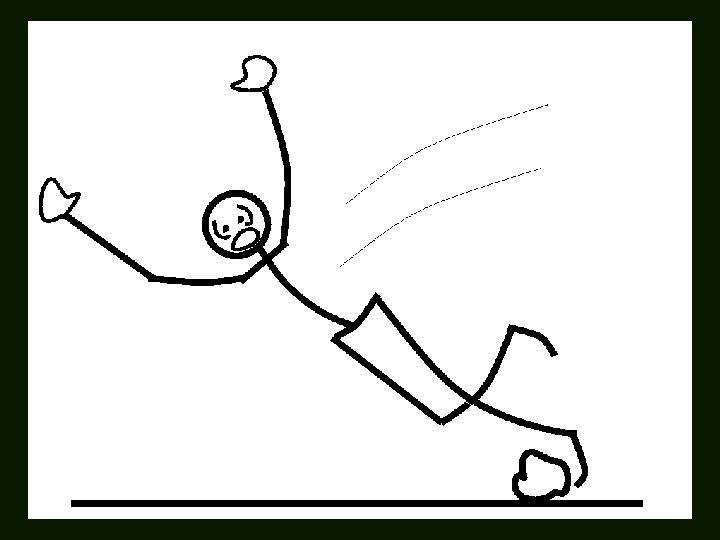

Matching Condition

trip

Non-Matching, Same Effector Condition

punch

Non-Matching, Different Effector Condition

reach

Study 1 - Method 48 images presented twice to 39 Ss - in matching and one non-matching condition Verbs were associated with images in a pretest; subjects named verbs best describing images • Matching verb got the most responses • Non-matching same effector verb: no subjects listed as a description of the image, and didn’t seem confusable • Non-matching diff. effector randomly selected
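
The trial arithmetic implied by the method slides can be checked with a short sketch. It assumes that the 48 images are split evenly between the two non-matching conditions, which is inferred from the stated 1/2, 1/4, 1/4 proportions and is not stated outright in the talk. The function name and condition labels are hypothetical.

```python
import random
from collections import Counter


def build_trials(n_images=48, seed=0):
    """Each image appears twice: once matching, once in ONE non-matching condition."""
    rng = random.Random(seed)
    images = list(range(n_images))
    rng.shuffle(images)
    # Assumption: half of the images get a same-effector foil,
    # the other half a different-effector foil.
    same_effector_images = set(images[: n_images // 2])

    trials = []
    for img in range(n_images):
        trials.append((img, "matching"))
        if img in same_effector_images:
            trials.append((img, "nonmatching_same_effector"))
        else:
            trials.append((img, "nonmatching_different_effector"))
    rng.shuffle(trials)  # randomize presentation order
    return trials


trials = build_trials()
counts = Counter(condition for _, condition in trials)
print(len(trials), dict(counts))
```

Under these assumptions each subject sees 96 trials: 48 matching (1/2), 24 non-matching same effector (1/4), and 24 non-matching different effector (1/4).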

Study 1 - Results p<0.01 in subject and item analyses

Study 1 - Interpretation Explanation 1: overall similarity between the two actions, not shared effector Ss might take longer to reject verbs using the same effector because those verbs had meanings that were globally more similar to the action depicted

Study 1 - Interpretation Measured semantic similarity between nonmatching and matching verbs for each image using LSA (Landauer et al 1998) • Method for extracting & representing similarity among texts, based on the contexts they appear in • Two words or texts will be rated as more similar the more alike their distributions are • LSA performs like humans in a range of behaviors, e.g. synonym and multiple-choice tasks • The pairwise comparison function produces a similarity rating from -1 to 1 for any pair of texts
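
The -1 to 1 rating is a cosine between vectors, which can be sketched as follows. The three-dimensional vectors are made-up toy values and not real LSA output. A real LSA space is derived from a large corpus via a reduced-rank decomposition, which is not shown here.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors; ranges from -1 to 1."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Hypothetical toy vectors standing in for verb representations.
toy_vectors = {
    "kick": [0.9, 0.1, 0.2],
    "punch": [0.8, 0.3, 0.1],
    "whisper": [0.1, 0.9, 0.4],
}

print(cosine_similarity(toy_vectors["kick"], toy_vectors["punch"]))
print(cosine_similarity(toy_vectors["kick"], toy_vectors["whisper"]))
```

With a measure like this, Explanation 1 can be tested by asking whether same-effector foils are simply closer to the matching verb than different-effector foils are.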

Study 1 - Interpretation

Study 1 - Interpretation Explanation 2: images in the non-matching, same effector condition might be more interpretable as the non-matching verb Indication: non-matching same effector verbs selected from among those that no subjects in the pretest offered as descriptors of the image Follow-up experiment using verbal stimuli yielded the same effect (but stronger!)

Study 1 - Discussion Mirror circuits used in executing and recognizing actions are specific (Gallese et al. 1996), e.g. to gesture type, like precision grip Neural structures encoding similar actions are more likely to become co-active (in action or perception), leading to confusion Therefore, the more similar two actions are, the more strongly neural structures encoding them must mutually inhibit each other

Study 1 - Discussion The matching task asked subjects to determine whether two inputs were the same • In non-matching conditions, this could be strong and stable activation of two mirror circuits • When the two mirror structures share an effector, they will strongly inhibit each other • It will thus take longer for two distinct mirror structures to become active, and for a subject to arrive at two distinct action perceptions • Unlike actions have less lateral inhibition, so two mirror structures become co-active more quickly
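
The reasoning on this slide can be illustrated with a toy model of two mutually inhibiting units. This is a deliberately simplified sketch and not a model proposed in the talk: the linear dynamics, the parameter values, and the function name are all assumptions chosen for illustration.

```python
def steps_until_coactive(inhibition, drive=1.0, threshold=0.4,
                         dt=0.01, max_steps=10_000):
    """Euler-integrate two units that each receive the same input drive,
    decay toward zero, and inhibit each other with the given weight.
    Returns the number of steps until BOTH exceed the threshold, or None."""
    a = b = 0.0
    for step in range(1, max_steps + 1):
        da = drive - a - inhibition * b  # input, leak, lateral inhibition
        db = drive - b - inhibition * a
        a, b = a + dt * da, b + dt * db
        if a >= threshold and b >= threshold:
            return step
    return None  # the units never became co-active within max_steps


weak = steps_until_coactive(inhibition=0.2)    # stands in for different effectors
strong = steps_until_coactive(inhibition=1.0)  # stands in for a shared effector
print(weak, strong)
```

In this sketch the strongly inhibiting pair takes more steps to become co-active than the weakly inhibiting pair, which parallels the slower reactions predicted for the same-effector condition.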

Study 1 - Conclusions Understanding motion verbs seems to use resources overlapping with those used in perceiving and executing actions This supports an embodied view of human semantics, where linguistic meaning is tightly linked to detailed motor knowledge

Study 2 - Introduction Motor imagery seems to be activated for language understanding. How about perceptual imagery? For example, when processing motion sentences involving upwards or downwards motion, language users might internally imagine an object moving upwards or downwards

Study 2 - Background
Richardson, Spivey, Barsalou, and McRae (2003) tested whether processing sentences with up or down meanings interferes with visual processing
Visual imagery interferes with visual perception (the “Perky effect”), so visual imagery when understanding a sentence could impede object categorization
(1) a spoken sentence, then (2) a visual categorization task - a circle or square appeared up, down, right or left, and (3) an interleaved comprehension task
Four types of sentence (determined by a rating test)
• Concrete Horizontal: The miner pushes the cart.
• Concrete Vertical: The plane bombs the city.
• Abstract Horizontal: The husband argues with the wife.
• Abstract Vertical: The storeowner increases the price.

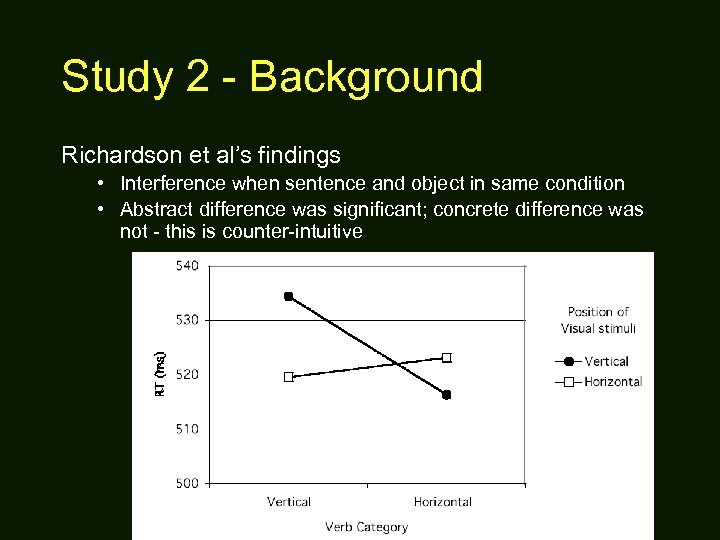

Study 2 - Background Richardson et al’s findings • Interference when sentence and object in same condition • Abstract difference was significant; concrete difference was not - this is counter-intuitive

Study 2 - Background Problems with the experiment
• No way to tell whether it’s the sentences in their entirety or some component of them (e.g. nouns, verbs, clausal constructions) that yields the effect
– E.g. The balloon floats through the cloud
• Conflates up and down, left and right
– The results may be the product of combined facilitatory and inhibitory effects
• Conflates sentences with very different semantics
– The ship sinks in the ocean & The strongman lifts the barbell
– The storeowner increases the price & The girl hopes for a pony

Study 2 - Experiment Idea: redo the Richardson et al experiment, fixing problems • Sentences are all intransitive, and differ only in the direction of their verb (only concrete sentences discussed here) – The chair toppled vs. The mule climbed • Separate conditions for sentences encoding up vs. down

Study 2 - Norming Sentences in each pair of conditions were closely balanced along two factors (no signif. diff.) • Sentence reading (push the button as soon as you understand the sentence) • Sentence meaningfulness rating (1-7) Verbs in each condition were rated as strongly related to up or down • up/down rating (1-7) Subject nouns were controlled for their upness or downness in each pair of conditions So any differences would derive from

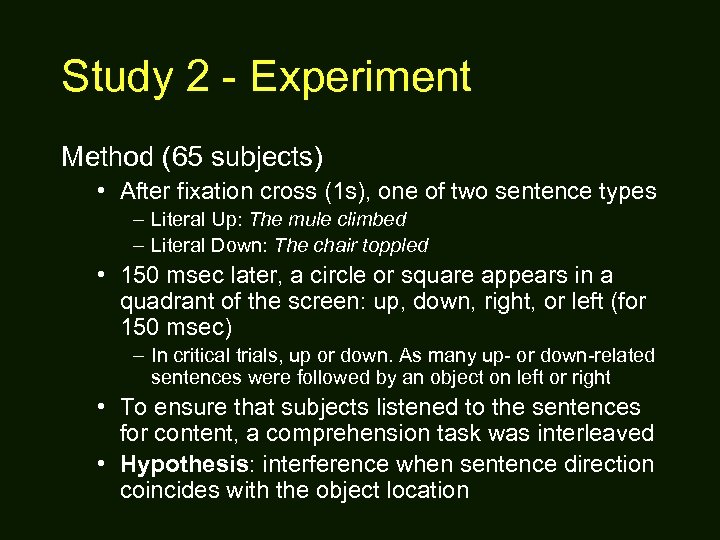

Study 2 - Experiment Method (65 subjects) • After fixation cross (1 s), one of two sentence types – Literal Up: The mule climbed – Literal Down: The chair toppled • 150 msec later, a circle or square appears in a quadrant of the screen: up, down, right, or left (for 150 msec) – In critical trials, up or down. As many up- or down-related sentences were followed by an object on left or right • To ensure that subjects listened to the sentences for content, a comprehension task was interleaved • Hypothesis: interference when sentence direction coincides with the object location
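
The design logic of the method above can be written out as a small classifier over trials. The function name and the labels are hypothetical. The only content taken from the slide is that up/down locations are critical, left/right locations are fillers, and interference is predicted when sentence direction and object location coincide.

```python
def classify_trial(sentence_direction, object_location):
    """Label a trial given the sentence's direction and the shape's location."""
    if sentence_direction not in ("up", "down"):
        raise ValueError("sentence_direction must be 'up' or 'down'")
    if object_location in ("left", "right"):
        return "filler"  # non-critical trial
    if object_location not in ("up", "down"):
        raise ValueError("object_location must be up, down, left, or right")
    # Critical trial: the hypothesis predicts slower categorization
    # when the two coincide.
    if sentence_direction == object_location:
        return "same_location"
    return "different_location"


# "The mule climbed" (up) followed by a shape in the upper part of the screen:
print(classify_trial("up", "up"))    # same_location
# "The chair toppled" (down) followed by a shape on the left:
print(classify_trial("down", "left"))  # filler
```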

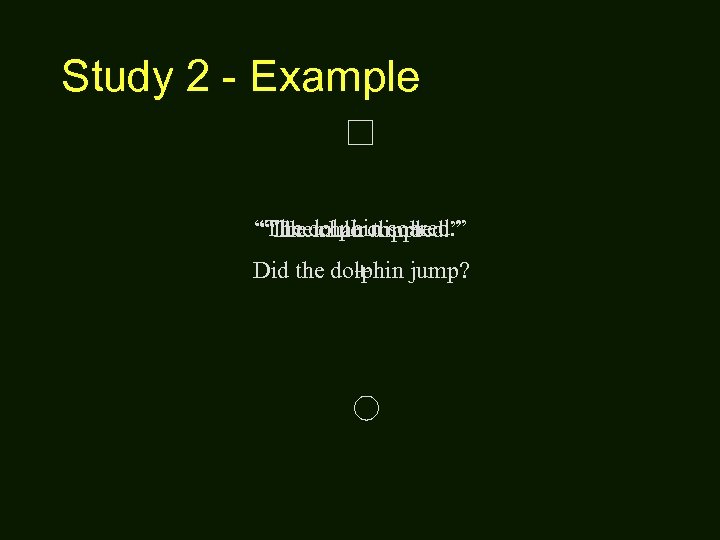

Study 2 - Example “The dolphin soared.” “The mule climbed.” “The chair toppled.” Did the dolphin jump? +

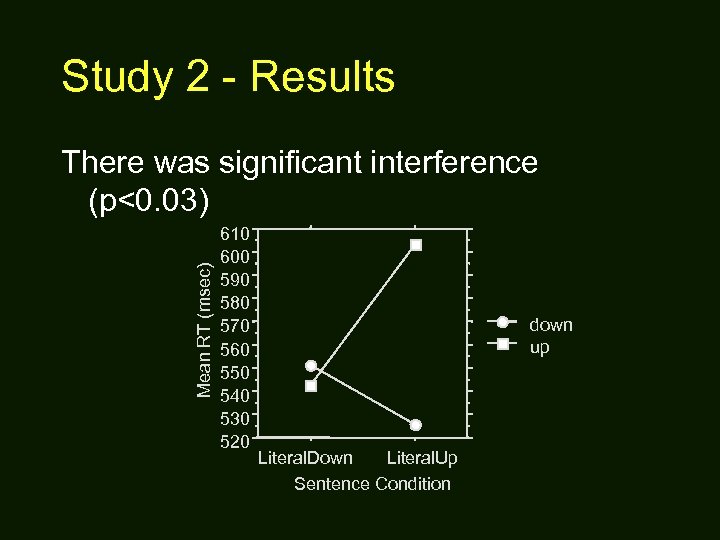

Study 2 - Results There was significant interference (p<0.03) [Figure: mean RT (msec) by Sentence Condition (Literal Down, Literal Up); legend: down, up]

Study 2 - Results Strong effect in up condition but not in down condition • Possible explanation: intransitive up motion is more unusual, requiring more imagery • Up-related sentences were not rated significantly less meaningful in the norming study, but: – Literal up (ave): Meaningfulness=5.9 – Literal down (ave): Meaningfulness=6.1 • Intransitive motion tends downwards (gravity)

Study 2 - Conclusions Results • When processing literal motion sentences, subjects are slower performing a perception task if the two tasks use the same area of the visual field • This effect is specific to quadrants, not just axes • The effect results from verb differences, when clausal and nominal effects are controlled for

Summary of results Understanding language automatically and unconsciously leads individuals to activate internal simulations of the described scenes Simulation makes use of different modalities, including vision and motor control How exactly these different dimensions relate in simulation remains an important question

Other convergent evidence Language yields visual imagery (Spivey, Richardson, and colleagues) Language yields motor imagery (Glenberg 2001) Figurative language yields manner imagery (Matlock 1999)

Abstract language What about abstract language? Metaphorical language might make use of the same types of mechanism Stock prices rose. I kicked my licorice habit. Follow-ups coming soon to a Tuesday seminar near you!

Meaningful language Language is meaningful by evoking perceptual and motor experiences Language can be communicative since experiences are shared Linguistic structures are a conventionalized system for constraining simulation Any intermediate representation between form and simulation won’t look much like logic Words don’t “have” meanings, rather, they contribute to a blueprint for the construction of a meaningful simulation

What is meaning? Meaning is what you make of it
