- Number of slides: 181

SIMILARITY SEARCH: The Metric Space Approach

Pavel Zezula, Giuseppe Amato, Vlastislav Dohnal, Michal Batko

Similarity Search: Part I, Chapter 1

Table of Contents

- Part I: Metric searching in a nutshell
  - Foundations of metric space searching
  - Survey of existing approaches
- Part II: Metric searching in large collections
  - Centralized index structures
  - Approximate similarity search
  - Parallel and distributed indexes

Foundations of metric space searching

1. distance searching problem in metric spaces
2. metric distance measures
3. similarity queries
4. basic partitioning principles
5. principles of similarity query execution
6. policies to avoid distance computations
7. metric space transformations
8. principles of approximate similarity search
9. advanced issues

Distance searching problem

- Search problem:
  - Data type
  - The method of comparison
  - Query formulation
- Extensibility:
  - A single indexing technique applied to many specific search problems quite different in nature

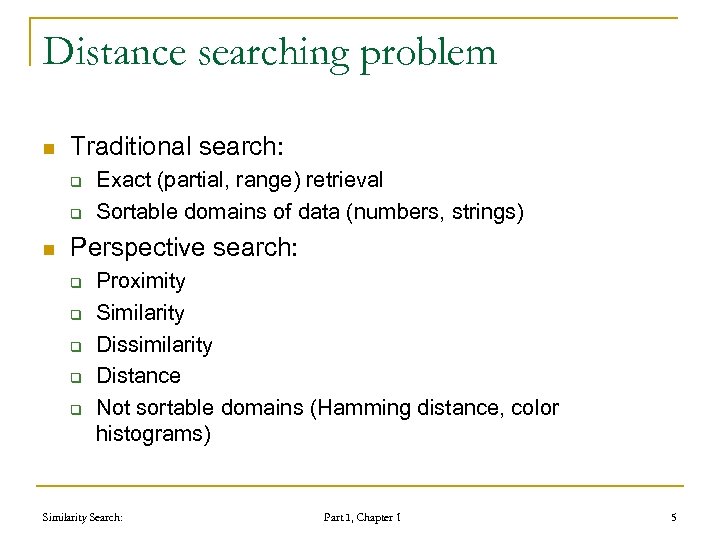

Distance searching problem

- Traditional search:
  - Exact (partial, range) retrieval
  - Sortable domains of data (numbers, strings)
- Perspective search:
  - Proximity
  - Similarity, dissimilarity, distance
  - Not sortable domains (Hamming distance, color histograms)

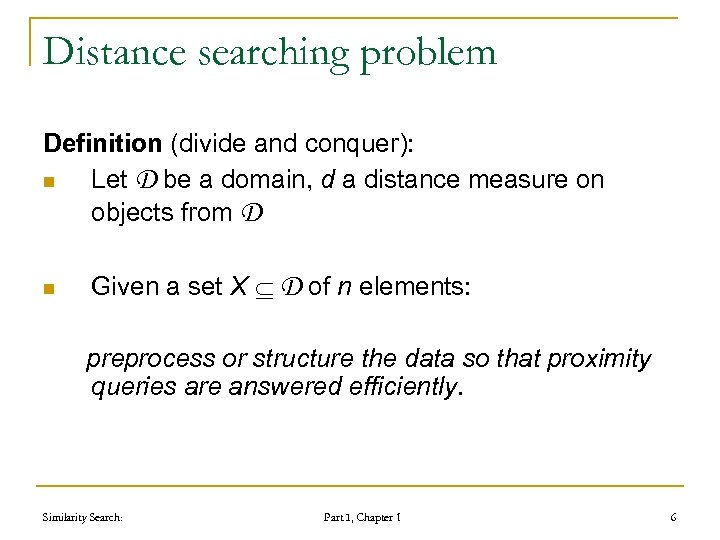

Distance searching problem

Definition (divide and conquer):

- Let D be a domain and d a distance measure on objects from D.
- Given a set X ⊆ D of n elements: preprocess or structure the data so that proximity queries are answered efficiently.

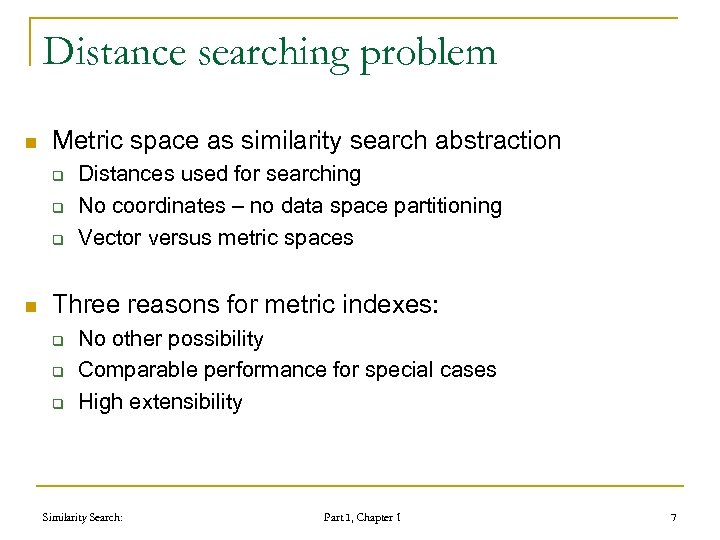

Distance searching problem

- Metric space as similarity search abstraction:
  - Distances used for searching
  - No coordinates, so no data space partitioning
  - Vector versus metric spaces
- Three reasons for metric indexes:
  - No other possibility
  - Comparable performance for special cases
  - High extensibility

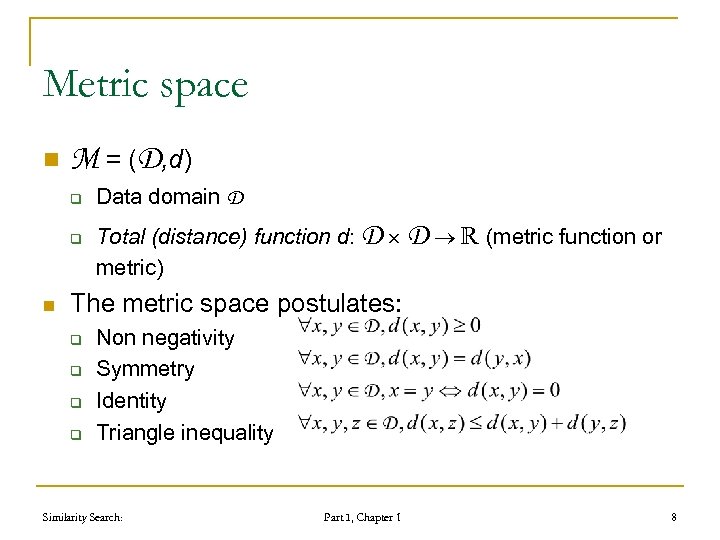

Metric space

- M = (D, d)
  - Data domain D
  - Total (distance) function d: D × D → ℝ (metric function or metric)
- The metric space postulates, for all x, y, z ∈ D:
  - Non-negativity: d(x, y) ≥ 0
  - Symmetry: d(x, y) = d(y, x)
  - Identity: x = y ⇔ d(x, y) = 0
  - Triangle inequality: d(x, z) ≤ d(x, y) + d(y, z)
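
The four postulates can be checked mechanically on a finite sample of objects. The sketch below is only an illustration: the function name `violates_metric_postulates`, the tolerance `tol`, and the sample points are assumptions, and passing the check on a sample does not prove that a function is a metric on the whole domain.

```python
from itertools import product


def violates_metric_postulates(points, d, tol=1e-9):
    """Return the name of the first postulate violated on `points`, or None."""
    for x, y in product(points, repeat=2):
        if d(x, y) < 0:
            return "non-negativity"
        if abs(d(x, y) - d(y, x)) > tol:
            return "symmetry"
        # identity: the distance is zero exactly when the objects are equal
        if (x == y) != (d(x, y) <= tol):
            return "identity"
    for x, y, z in product(points, repeat=3):
        if d(x, z) > d(x, y) + d(y, z) + tol:
            return "triangle inequality"
    return None


# Hypothetical sample: four points in the plane.
points = [(0, 0), (3, 4), (1, 1), (-2, 5)]
euclid = lambda a, b: ((a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2) ** 0.5
squared = lambda a, b: (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2

print(violates_metric_postulates(points, euclid))   # None
print(violates_metric_postulates(points, squared))  # triangle inequality
```

The squared Euclidean distance fails because, for example, going from (0, 0) to (3, 4) costs 25, while the detour through (1, 1) costs only 2 + 13 = 15.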

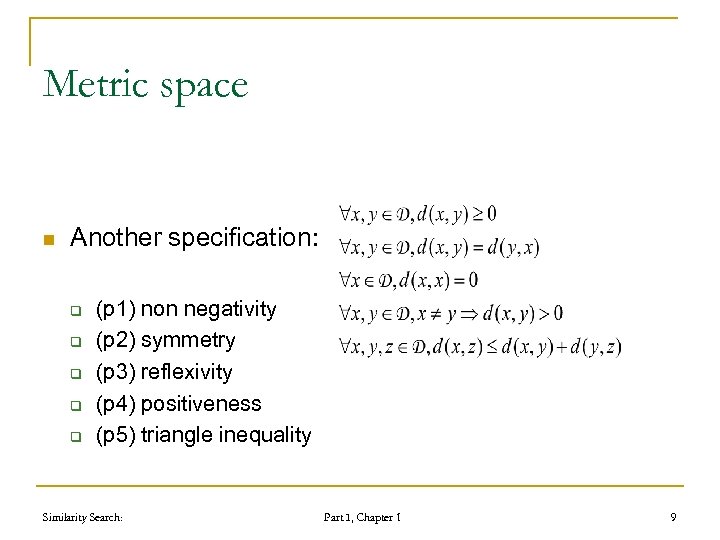

Metric space

- Another specification, for all x, y, z ∈ D:
  - (p1) non-negativity: d(x, y) ≥ 0
  - (p2) symmetry: d(x, y) = d(y, x)
  - (p3) reflexivity: d(x, x) = 0
  - (p4) positiveness: x ≠ y ⇒ d(x, y) > 0
  - (p5) triangle inequality: d(x, z) ≤ d(x, y) + d(y, z)

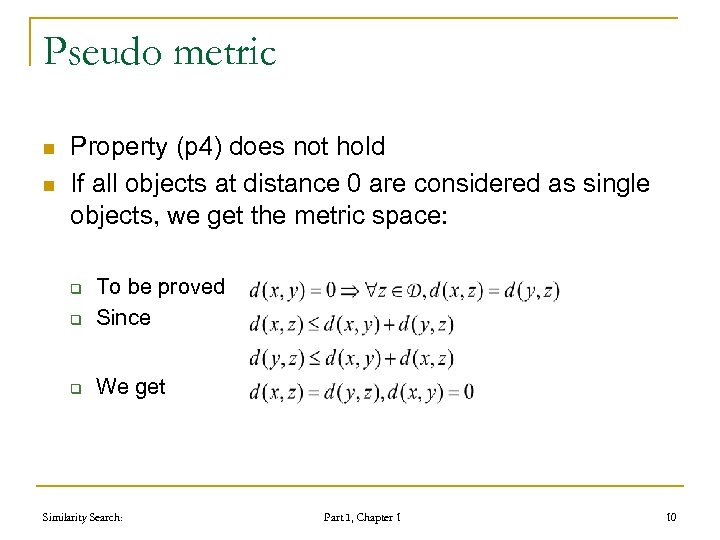

Pseudo metric

- Property (p4) does not hold.
- If all objects at distance 0 are considered as a single object, we get a metric space:
  - To be proved: d(x, y) = 0 ⇒ ∀z ∈ D, d(x, z) = d(y, z)
  - Since d(x, z) ≤ d(x, y) + d(y, z) and d(y, z) ≤ d(y, x) + d(x, z)
  - We get d(x, z) = d(y, z) whenever d(x, y) = 0

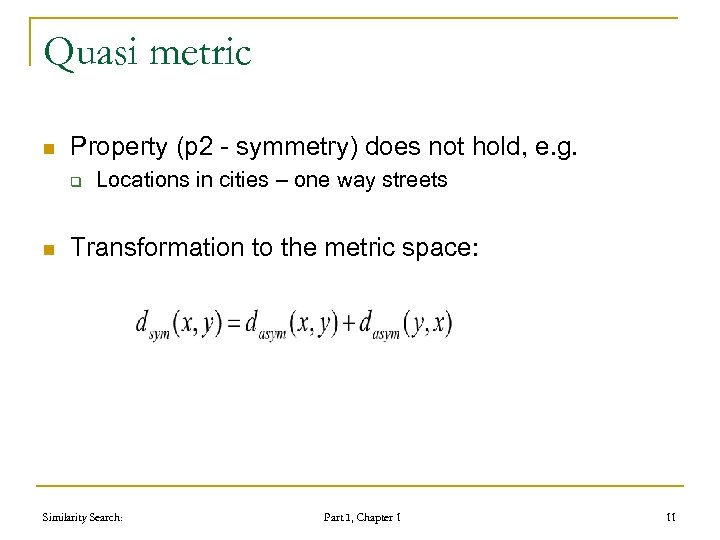

Quasi metric

- Property (p2, symmetry) does not hold, e.g.:
  - Locations in cities with one-way streets
- Transformation to the metric space by symmetrization: d_sym(x, y) = d_asym(x, y) + d_asym(y, x)

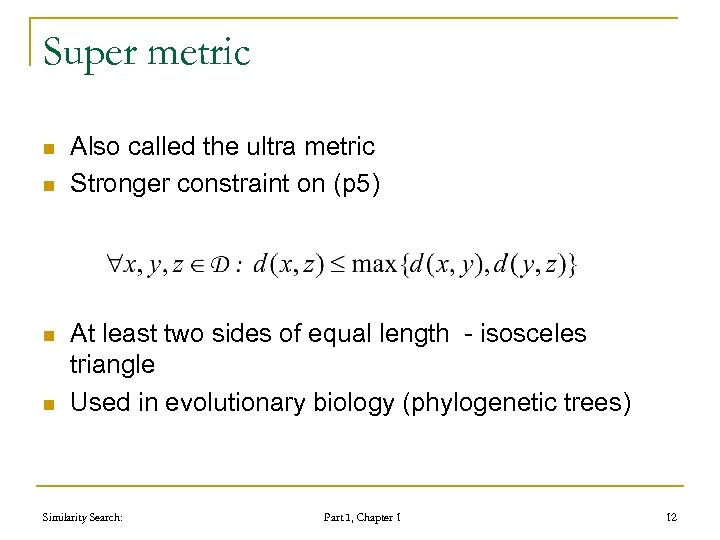

Super metric

- Also called the ultra metric
- Stronger constraint on (p5): d(x, z) ≤ max{d(x, y), d(y, z)}
- At least two sides of equal length: every triangle is isosceles
- Used in evolutionary biology (phylogenetic trees)

Foundations of metric space searching, topic 2: metric distance measures

Distance measures

- Discrete
  - functions which return only a small (predefined) set of values
- Continuous
  - functions in which the cardinality of the set of values returned is very large or infinite

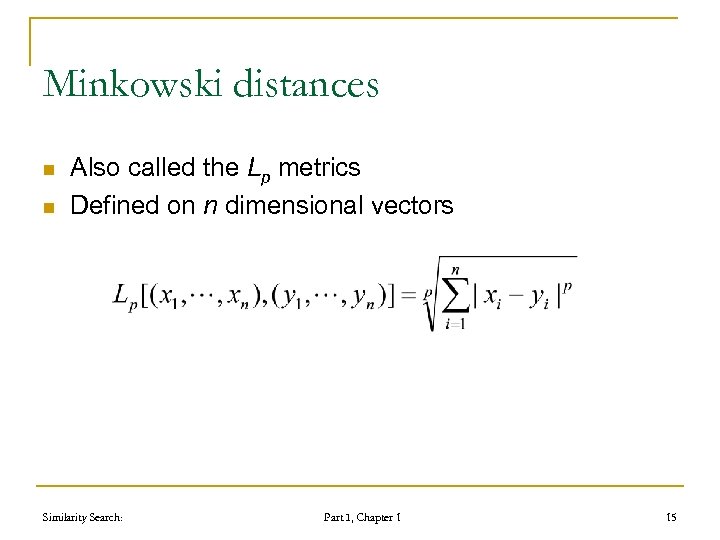

Minkowski distances

- Also called the L_p metrics
- Defined on n-dimensional vectors: L_p[(x1, …, xn), (y1, …, yn)] = (Σ_{i=1..n} |xi − yi|^p)^(1/p)

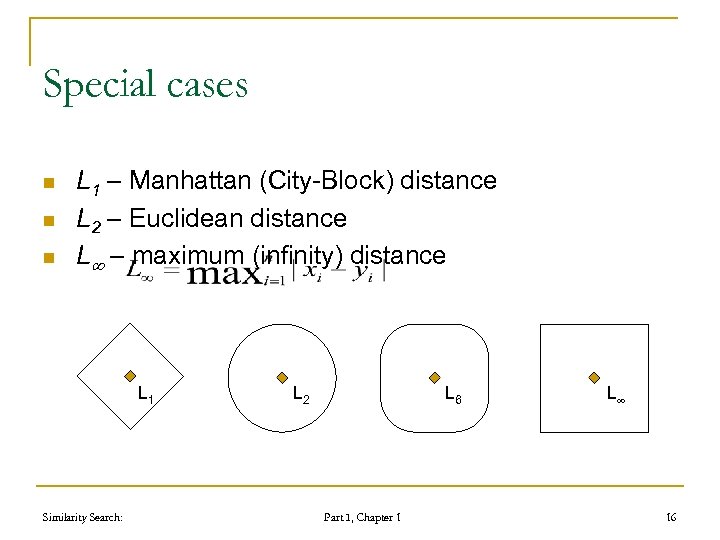

Special cases

- L1: Manhattan (City-Block) distance
- L2: Euclidean distance
- L∞: maximum (infinity) distance

(Figure: shapes labeled L1, L2, L6, and L∞.)
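
A minimal sketch of the L_p family, assuming plain Python sequences as vectors. The function name `minkowski` and the use of `math.inf` to select the maximum distance are choices made for this illustration.

```python
import math


def minkowski(x, y, p):
    """L_p distance between equal-length vectors; p = math.inf gives L_infinity."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same dimension")
    diffs = [abs(a - b) for a, b in zip(x, y)]
    if p == math.inf:
        return max(diffs)          # limit of the L_p formula for p -> infinity
    return sum(v ** p for v in diffs) ** (1.0 / p)


x, y = (0.0, 0.0), (3.0, 4.0)
print(minkowski(x, y, 1))         # 7.0  (Manhattan)
print(minkowski(x, y, 2))         # 5.0  (Euclidean)
print(minkowski(x, y, math.inf))  # 4.0  (maximum)
```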

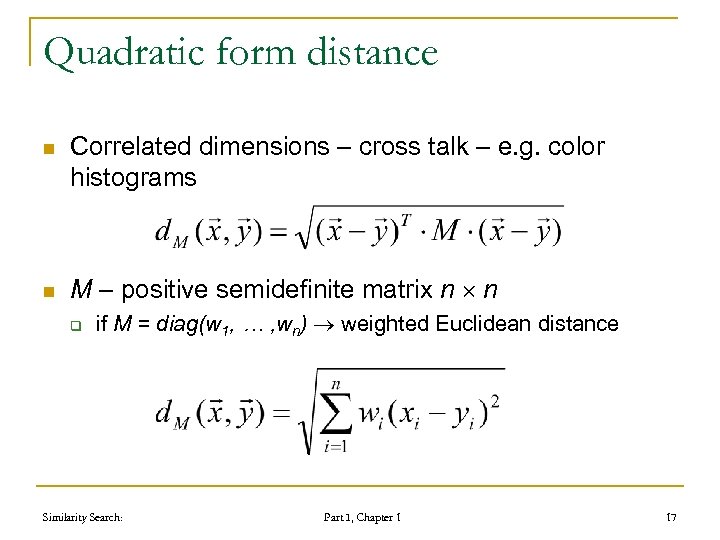

Quadratic form distance

- Correlated dimensions (cross talk), e.g. color histograms
- d_M(x, y) = sqrt((x − y)^T · M · (x − y))
- M is a positive semidefinite n × n matrix
  - if M = diag(w1, …, wn), we get the weighted Euclidean distance: d_M(x, y) = sqrt(Σ_{i=1..n} wi (xi − yi)^2)

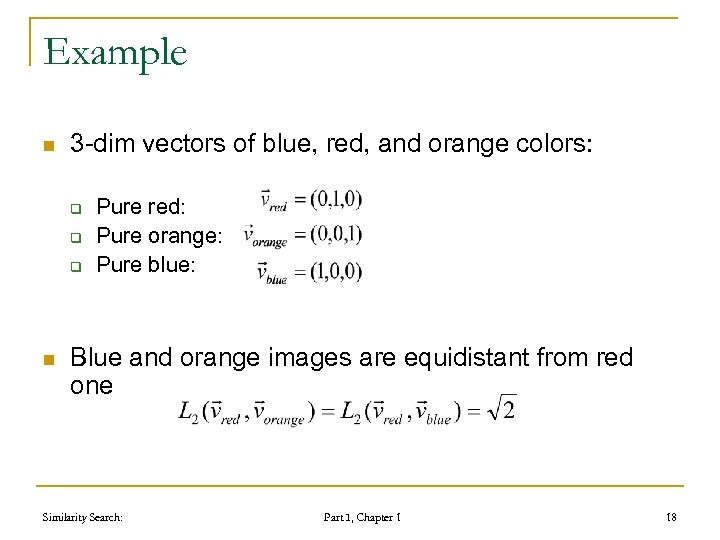

Example

- 3-dim vectors of blue, red, and orange colors:
  - Pure red: (0, 1, 0)
  - Pure orange: (0, 0, 1)
  - Pure blue: (1, 0, 0)
- Under the Euclidean distance, the blue and orange images are equidistant from the red one.

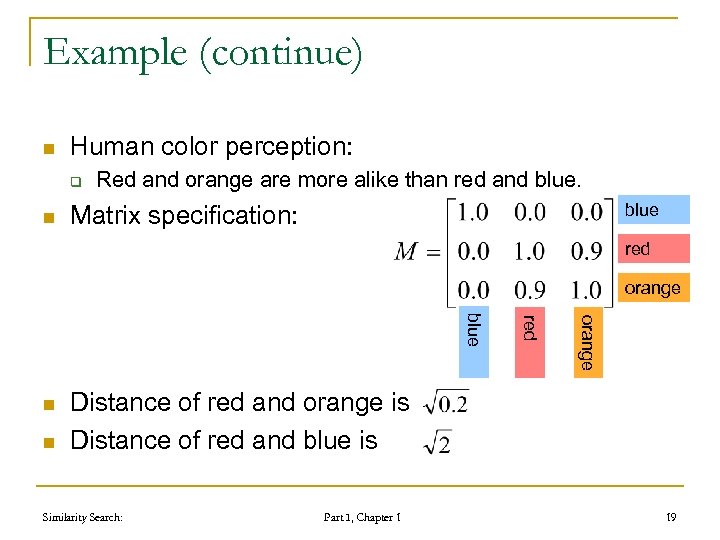

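Example (continued)

- Human color perception:
  - Red and orange are more alike than red and blue.
- Matrix specification: a matrix M that correlates the red and orange dimensions (the matrix itself is not preserved in the extracted text).
- The distance of red and orange and the distance of red and blue are then computed with M (the values are not preserved in the extracted text).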
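
The color example can be replayed numerically. In the sketch below the matrix `M`, and in particular the cross-talk weight 0.9 between red and orange, is an assumed illustrative value, not one recovered from the slides. The dimension order (blue, red, orange) follows the example above.

```python
import math


def quadratic_form_distance(x, y, M):
    """sqrt((x - y)^T M (x - y)) for a positive semidefinite matrix M."""
    diff = [a - b for a, b in zip(x, y)]
    n = len(diff)
    Md = [sum(M[i][j] * diff[j] for j in range(n)) for i in range(n)]
    return math.sqrt(sum(diff[i] * Md[i] for i in range(n)))


# Dimension order: blue, red, orange.
blue, red, orange = (1, 0, 0), (0, 1, 0), (0, 0, 1)

identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]   # plain Euclidean distance
M = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.9],                           # assumed red/orange cross talk
     [0.0, 0.9, 1.0]]

print(quadratic_form_distance(red, orange, identity))  # about 1.414
print(quadratic_form_distance(red, blue, identity))    # about 1.414
print(quadratic_form_distance(red, orange, M))         # about 0.447
print(quadratic_form_distance(red, blue, M))           # about 1.414
```

With the identity matrix both distances equal √2. With the assumed cross-talk entry, red and orange move closer (about √0.2) while red and blue stay at √2, which matches the perceptual ordering described above.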

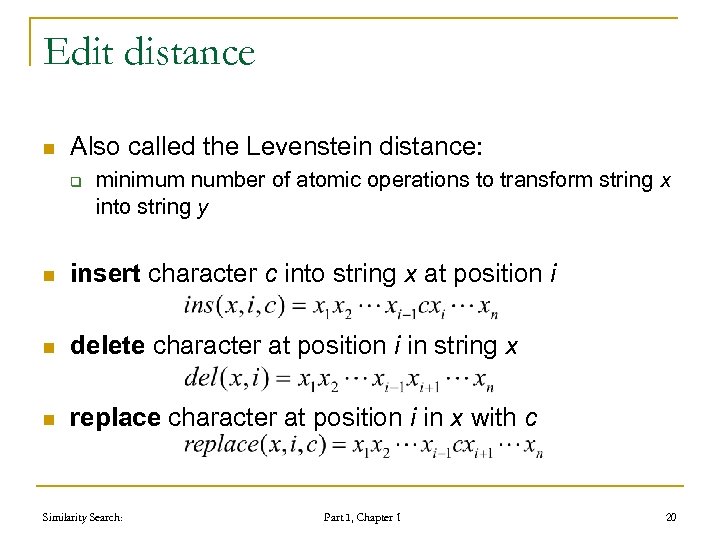

Edit distance

- Also called the Levenshtein distance:
  - minimum number of atomic operations to transform string x into string y:
    - insert character c into string x at position i
    - delete the character at position i in string x
    - replace the character at position i in x with c

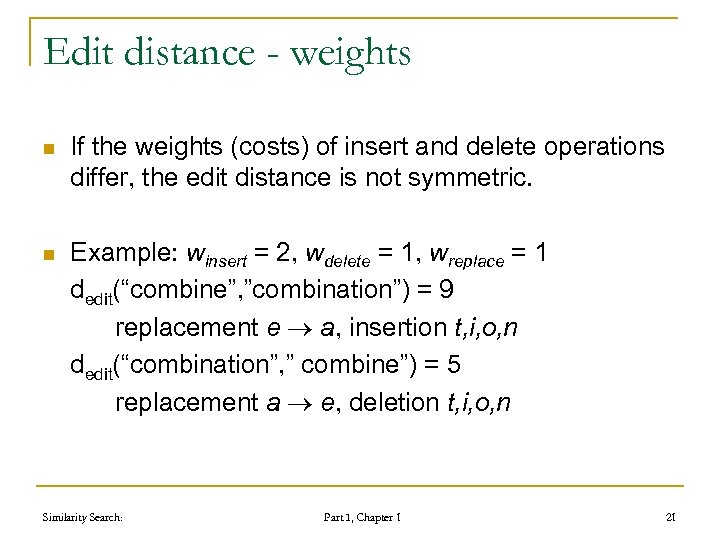

Edit distance - weights

- If the weights (costs) of insert and delete operations differ, the edit distance is not symmetric.
- Example: w_insert = 2, w_delete = 1, w_replace = 1
  - d_edit("combine", "combination") = 9: replacement e→a, insertion of t, i, o, n
  - d_edit("combination", "combine") = 5: replacement a→e, deletion of t, i, o, n
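
The weighted example can be reproduced with the standard dynamic-programming formulation of the edit distance. This is a sketch under the assumption that each operation type has a single fixed cost; the function and parameter names are illustrative.

```python
def edit_distance(x, y, w_insert=1, w_delete=1, w_replace=1):
    """Minimum total cost of inserts, deletes and replacements turning x into y."""
    # prev[j] = cost of transforming the processed prefix of x into y[:j]
    prev = [j * w_insert for j in range(len(y) + 1)]
    for i in range(1, len(x) + 1):
        curr = [i * w_delete]                 # turning x[:i] into the empty string
        for j in range(1, len(y) + 1):
            same = x[i - 1] == y[j - 1]
            curr.append(min(
                prev[j] + w_delete,                        # delete x[i-1]
                curr[j - 1] + w_insert,                    # insert y[j-1]
                prev[j - 1] + (0 if same else w_replace),  # keep or replace
            ))
        prev = curr
    return prev[-1]


print(edit_distance("combine", "combination", w_insert=2))  # 9
print(edit_distance("combination", "combine", w_insert=2))  # 5
print(edit_distance("combine", "combination"))              # 5 (unit weights)
```

With unit weights the function is symmetric; with w_insert = 2 the two directions differ, as on the slide.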

Edit distance - generalizations

- The cost of a replacement can depend on the characters involved: a→b can cost something different from a→c.
- If the replacement costs are symmetric (a→b costs the same as b→a), the distance is still a metric.
- Edit distance can be generalized to tree structures.

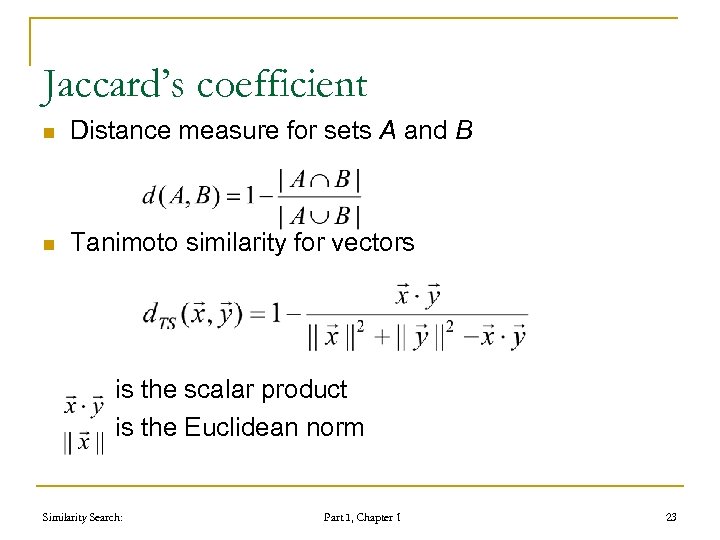

Jaccard's coefficient

- Distance measure for sets A and B: d(A, B) = 1 − |A ∩ B| / |A ∪ B|
- Tanimoto similarity for vectors: d_TS(x, y) = 1 − (x · y) / (‖x‖^2 + ‖y‖^2 − x · y)
  - x · y is the scalar product
  - ‖x‖ is the Euclidean norm
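
A minimal sketch of the set-based distance. Returning 0 for two empty sets is a convention chosen here, since the ratio is undefined in that case.

```python
def jaccard_distance(a, b):
    """1 - |A ∩ B| / |A ∪ B| for two finite collections treated as sets."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0  # assumed convention: two empty sets are identical
    return 1.0 - len(a & b) / len(union)


print(jaccard_distance({1, 2, 3}, {2, 3, 4}))  # 0.5
print(jaccard_distance({1, 2}, {1, 2}))        # 0.0
print(jaccard_distance({1}, {2}))              # 1.0
```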

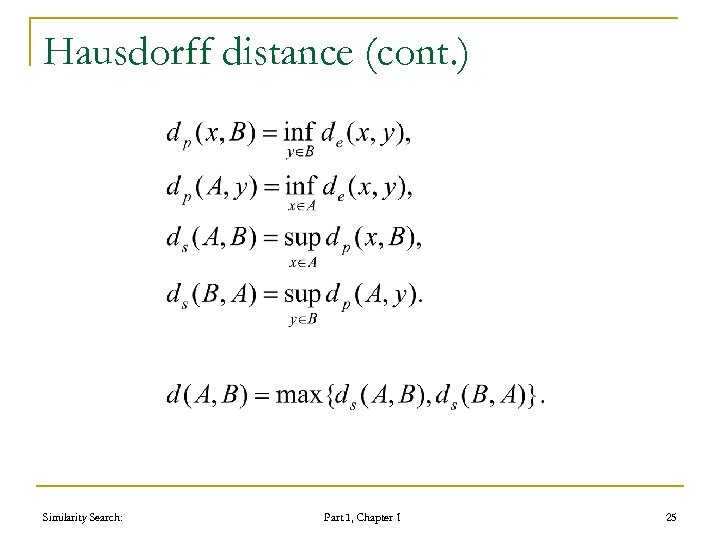

Hausdorff distance

- Distance measure for sets
- Compares elements by a distance d_e
- Measures the extent to which each point of the "model" set A lies near some point of the "image" set B and vice versa.
- Two sets are within Hausdorff distance r from each other if and only if any point of one set is within the distance r from some point of the other set.

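Hausdorff distance (cont.)

- Distance from a point to a set: d_p(x, B) = inf_{y ∈ B} d_e(x, y)
- Directed distance between sets: d_s(A, B) = sup_{x ∈ A} d_p(x, B)
- Hausdorff distance: d(A, B) = max{d_s(A, B), d_s(B, A)}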
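
For finite, non-empty sets the supremum and infimum become max and min, so the Hausdorff distance can be sketched directly. The function name and the one-dimensional example data are assumptions for illustration.

```python
def hausdorff(A, B, d_e):
    """Hausdorff distance between finite non-empty collections A and B."""
    def directed(S, T):
        # the worst case, over points of S, of the distance to the nearest point of T
        return max(min(d_e(s, t) for t in T) for s in S)
    return max(directed(A, B), directed(B, A))


A = [0.0, 1.0]
B = [0.0, 5.0]
d_e = lambda x, y: abs(x - y)
print(hausdorff(A, B, d_e))  # 4.0
```

Every point of A is within 1 of some point of B, but the point 5.0 of B is 4 away from the nearest point of A, so the symmetric distance is 4.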

Foundations of metric space searching, topic 3: similarity queries

Similarity Queries

- Range query
- Nearest neighbor query
- Reverse nearest neighbor query
- Similarity join
- Combined queries
- Complex queries

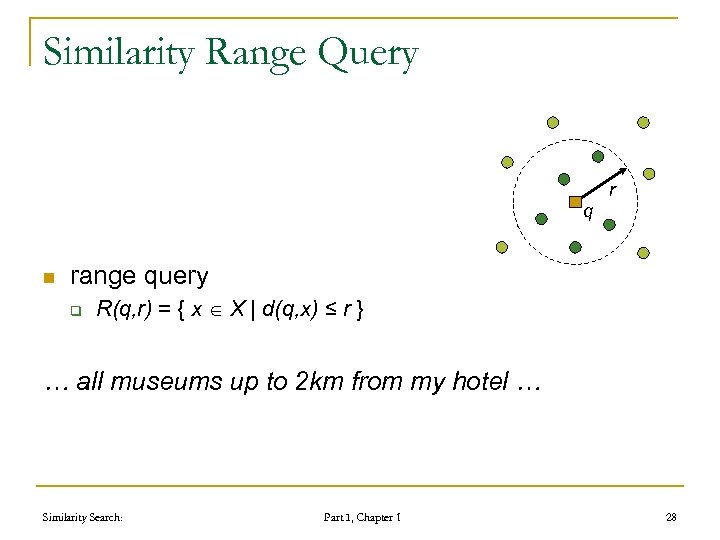

Similarity Range Query

- range query
  - R(q, r) = { x ∈ X | d(q, x) ≤ r }

… all museums up to 2 km from my hotel …

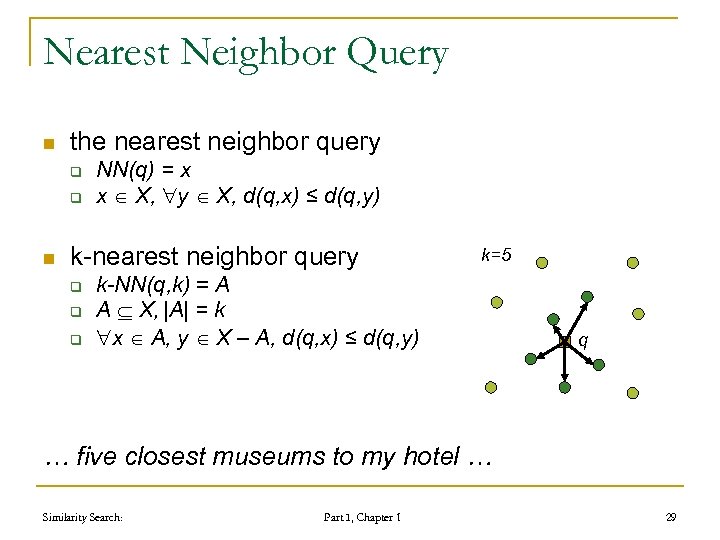

Nearest Neighbor Query

- the nearest neighbor query
  - NN(q) = x
  - x ∈ X, ∀y ∈ X, d(q, x) ≤ d(q, y)
- k-nearest neighbor query
  - k-NN(q, k) = A
  - A ⊆ X, |A| = k
  - ∀x ∈ A, y ∈ X − A, d(q, x) ≤ d(q, y)

… five closest museums to my hotel … (k = 5)

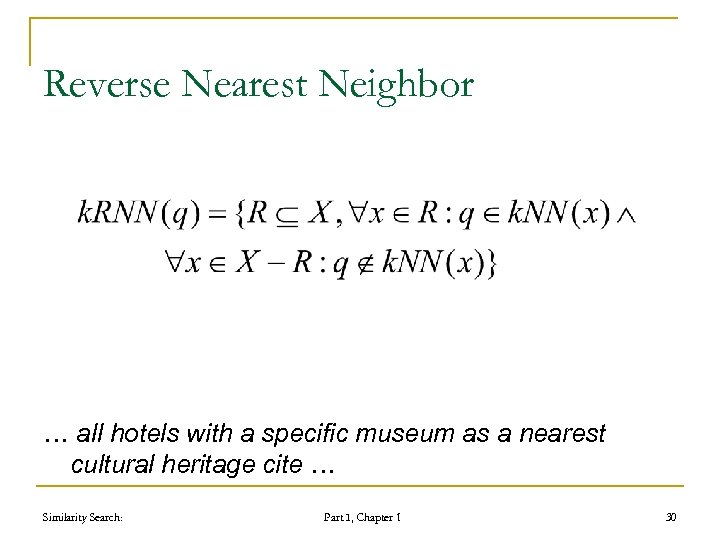

Reverse Nearest Neighbor

- kRNN(q) = { x ∈ X | q ∈ kNN(x) }

… all hotels with a specific museum as the nearest cultural heritage site …

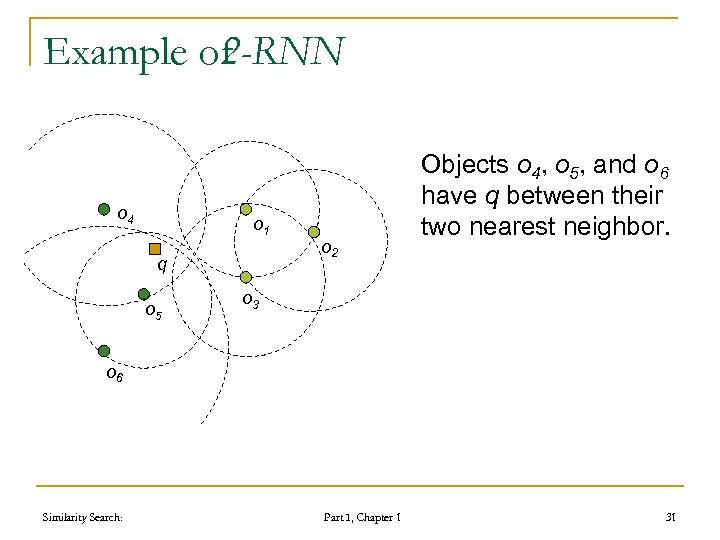

Example of 2-RNN

Objects o4, o5, and o6 have q among their two nearest neighbors.

(Figure: query object q and objects o1 to o6.)
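
A brute-force sketch of the reverse k-nearest-neighbor query: an object x is reported if fewer than k other database objects are strictly closer to x than q is. The tie handling (ties count in favor of q) and the one-dimensional example data are assumptions of this illustration.

```python
def reverse_knn(q, X, d, k):
    """Objects of X that have q among their k nearest neighbors (brute force)."""
    result = []
    for i, x in enumerate(X):
        dq = d(x, q)
        # count database objects, other than x itself, strictly closer to x than q
        closer = sum(1 for j, y in enumerate(X) if j != i and d(x, y) < dq)
        if closer < k:
            result.append(x)
    return result


# Hypothetical 1-D data set with absolute difference as the distance.
X = [0, 1, 2, 10]
dist = lambda a, b: abs(a - b)
print(reverse_knn(4, X, dist, k=1))  # [10]
print(reverse_knn(4, X, dist, k=2))  # [2, 10]
```

The query is not symmetric to k-NN: the nearest neighbor of q = 4 is the object 2, yet 2 is not in the 1-RNN answer because its own nearest neighbor is 1.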

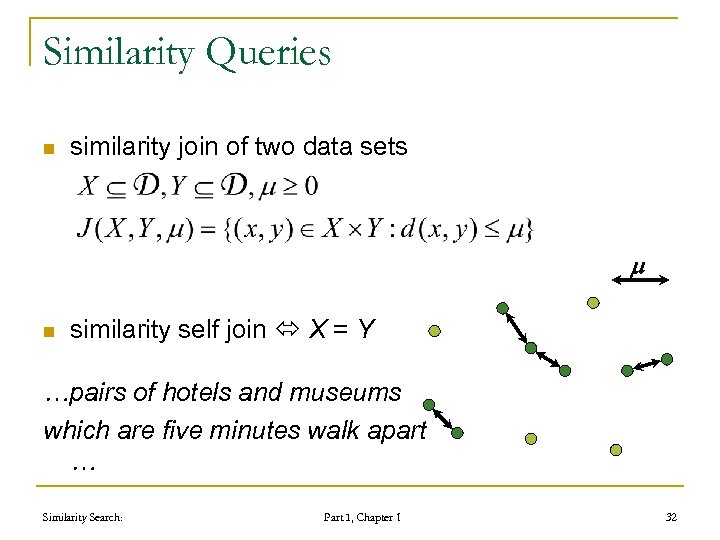

Similarity Queries

- similarity join of two data sets X ⊆ D and Y ⊆ D with a threshold μ ≥ 0:
  - J(X, Y, μ) = { (x, y) ∈ X × Y | d(x, y) ≤ μ }
- similarity self join: X = Y

… pairs of hotels and museums which are a five-minute walk apart …
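
A nested-loop sketch of the similarity join and self join. Reporting each unordered pair once and skipping the trivial pairs (x, x) in the self join is a design choice of this example, not something the slide prescribes; the data are hypothetical positions on a line.

```python
def similarity_join(X, Y, d, mu):
    """All pairs (x, y) from X × Y with d(x, y) <= mu."""
    return [(x, y) for x in X for y in Y if d(x, y) <= mu]


def similarity_self_join(X, d, mu):
    """Pairs of distinct positions in X within distance mu, each pair reported once."""
    return [(X[i], X[j])
            for i in range(len(X))
            for j in range(i + 1, len(X))
            if d(X[i], X[j]) <= mu]


dist = lambda a, b: abs(a - b)
hotels, museums = [0, 5, 9], [1, 6, 20]
print(similarity_join(hotels, museums, dist, mu=1))   # [(0, 1), (5, 6)]
print(similarity_self_join([0, 1, 5, 6], dist, mu=1)) # [(0, 1), (5, 6)]
```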

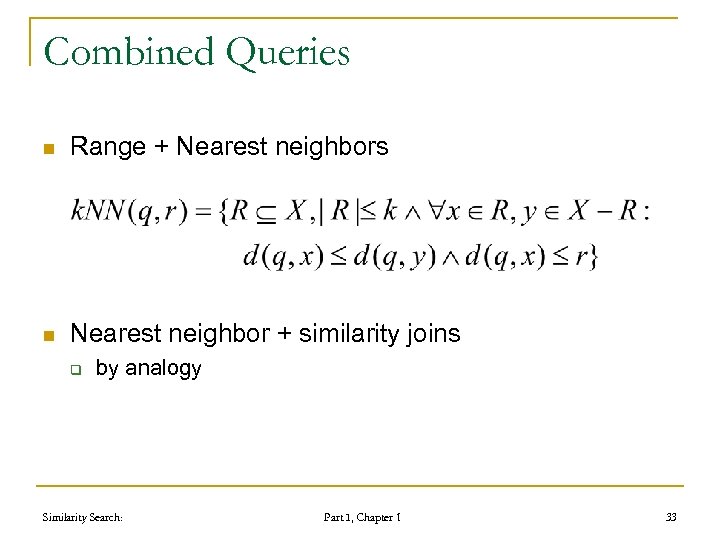

Combined Queries

- Range + nearest neighbors: at most k nearest neighbors of q that also lie within distance r of q
- Nearest neighbor + similarity joins
  - by analogy

Complex Queries

- Find the best matches of circular shape objects with red color.
- The best match for circular shape or for red color need not be the best combined match.

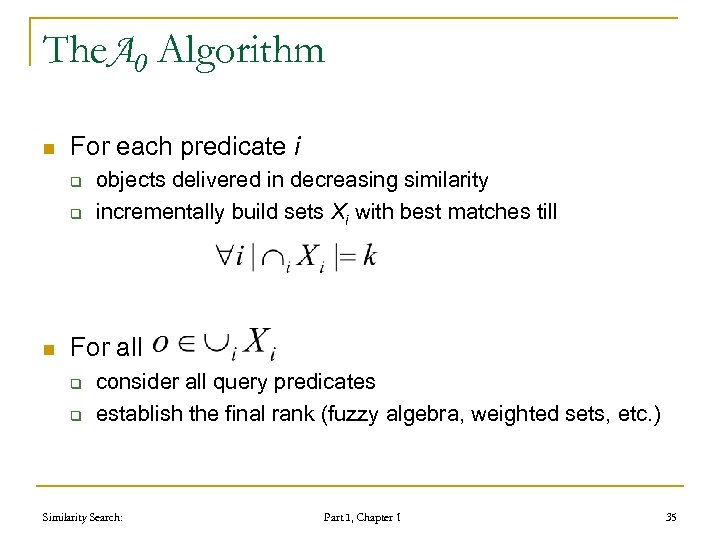

The A0 Algorithm

- For each predicate i:
  - objects are delivered in decreasing similarity
  - incrementally build sets X_i with best matches till the intersection of all X_i contains at least k objects
- For all objects in the union of the X_i:
  - consider all query predicates
  - establish the final rank (fuzzy algebra, weighted sets, etc.)
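
The two phases can be sketched as follows. This is an illustration under several assumptions: each predicate is modeled as a dictionary from object to similarity, every object has a score for every predicate, and the final rank uses `min` as the aggregation function (one common fuzzy-logic choice for conjunction). The names `fagin_a0`, `shape`, and `color` are hypothetical.

```python
def fagin_a0(scores, k, aggregate=min):
    """Top-k objects under `aggregate`, given one object -> similarity dict per predicate."""
    # Sorted access: each predicate delivers objects in decreasing similarity.
    ranked = [sorted(s, key=s.get, reverse=True) for s in scores]
    seen = [set() for _ in scores]          # the sets X_i
    depth = 0
    limit = min(len(r) for r in ranked)
    # Phase 1: grow all X_i in parallel until k objects appear in every X_i.
    while depth < limit and len(set.intersection(*seen)) < k:
        for i, r in enumerate(ranked):
            seen[i].add(r[depth])
        depth += 1
    # Phase 2: for every object seen anywhere, look up all predicates and rank.
    candidates = set.union(*seen)
    final = {o: aggregate(s[o] for s in scores) for o in candidates}
    return sorted(final, key=final.get, reverse=True)[:k]


shape = {"a": 0.9, "b": 0.8, "c": 0.3, "d": 0.1}   # similarity to "circular"
color = {"a": 0.2, "b": 0.7, "c": 0.95, "d": 0.6}  # similarity to "red"
print(fagin_a0([shape, color], k=1))  # ['b']
```

Object a is the best shape match and c is the best color match, but b is the best combined match, which is the point made on the Complex Queries slide.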

Foundations of metric space searching, topic 4: basic partitioning principles

Partitioning Principles

- Given a set X ⊆ D in M = (D, d), three basic partitioning principles have been defined:
  - Ball partitioning
  - Generalized hyper-plane partitioning
  - Excluded middle partitioning

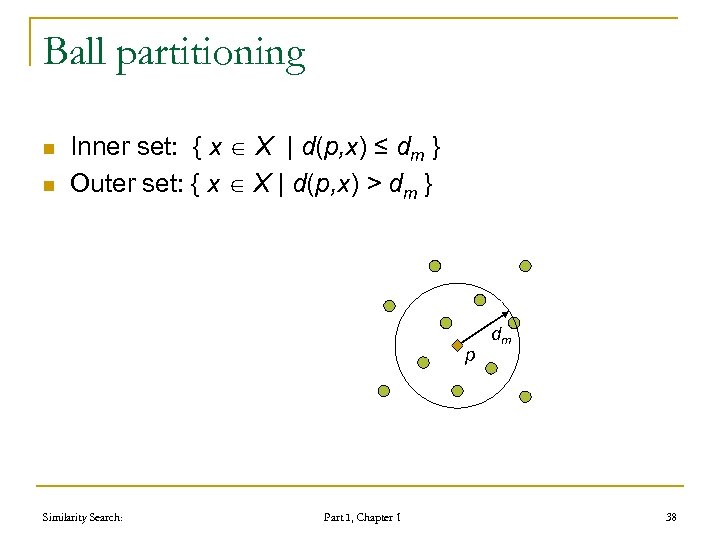

Ball partitioning

- Inner set: { x ∈ X | d(p, x) ≤ d_m }
- Outer set: { x ∈ X | d(p, x) > d_m }

(Figure: pivot p with a ball of radius d_m.)

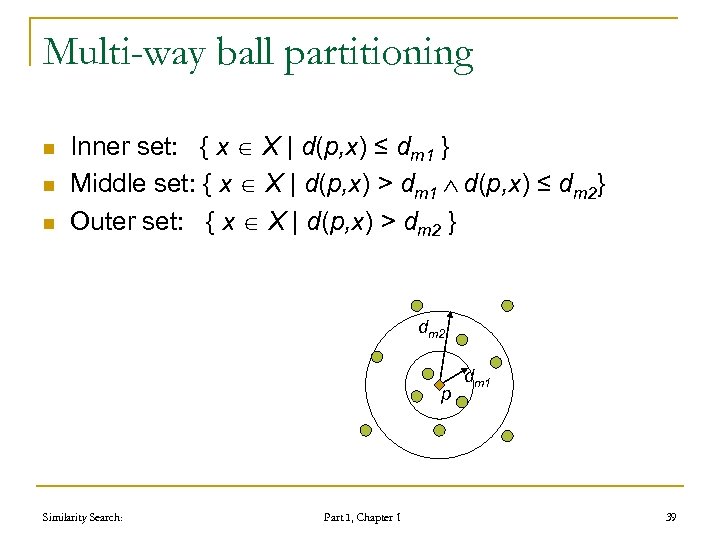

Multi-way ball partitioning

- Inner set: { x ∈ X | d(p, x) ≤ d_m1 }
- Middle set: { x ∈ X | d(p, x) > d_m1 ∧ d(p, x) ≤ d_m2 }
- Outer set: { x ∈ X | d(p, x) > d_m2 }

(Figure: pivot p with concentric balls of radii d_m1 and d_m2.)

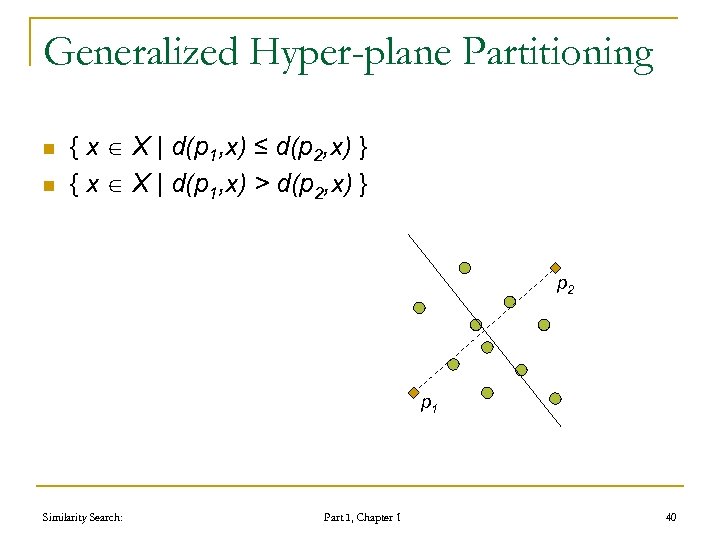

Generalized Hyper-plane Partitioning

- { x ∈ X | d(p1, x) ≤ d(p2, x) }
- { x ∈ X | d(p1, x) > d(p2, x) }

(Figure: pivots p1 and p2 with the dividing hyper-plane.)

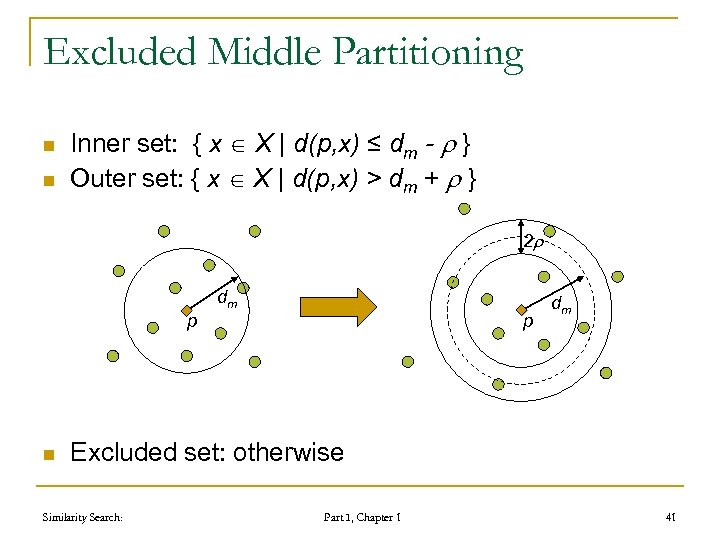

Excluded Middle Partitioning

- Inner set: { x ∈ X | d(p, x) ≤ d_m − ρ }
- Outer set: { x ∈ X | d(p, x) > d_m + ρ }
- Excluded set: otherwise

(Figure: pivot p, radius d_m, and an excluded ring of width 2ρ.)
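
The three partitioning principles translate almost literally into code. The sketch below assumes the data set is a Python list and the pivots and radii are given; how to choose them is a separate question that it does not address. The function names and the one-dimensional data are illustrative.

```python
def ball_partition(X, d, p, dm):
    """Split X into objects inside and outside the ball of radius dm around pivot p."""
    inner = [x for x in X if d(p, x) <= dm]
    outer = [x for x in X if d(p, x) > dm]
    return inner, outer


def hyperplane_partition(X, d, p1, p2):
    """Assign each object to the closer of two pivots (ties go to p1)."""
    first = [x for x in X if d(p1, x) <= d(p2, x)]
    second = [x for x in X if d(p1, x) > d(p2, x)]
    return first, second


def excluded_middle_partition(X, d, p, dm, rho):
    """Like ball partitioning, but a ring of width 2 * rho around dm is set aside."""
    inner = [x for x in X if d(p, x) <= dm - rho]
    outer = [x for x in X if d(p, x) > dm + rho]
    excluded = [x for x in X if dm - rho < d(p, x) <= dm + rho]
    return inner, outer, excluded


X = list(range(1, 9))
dist = lambda a, b: abs(a - b)
print(ball_partition(X, dist, p=0, dm=4))                    # ([1, 2, 3, 4], [5, 6, 7, 8])
print(hyperplane_partition(X, dist, p1=0, p2=9))             # ([1, 2, 3, 4], [5, 6, 7, 8])
print(excluded_middle_partition(X, dist, p=0, dm=4, rho=1))  # ([1, 2, 3], [6, 7, 8], [4, 5])
```

Multi-way ball partitioning follows the same pattern with a sorted list of radii in place of the single d_m.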

Foundations of metric space searching, topic 5: principles of similarity query execution

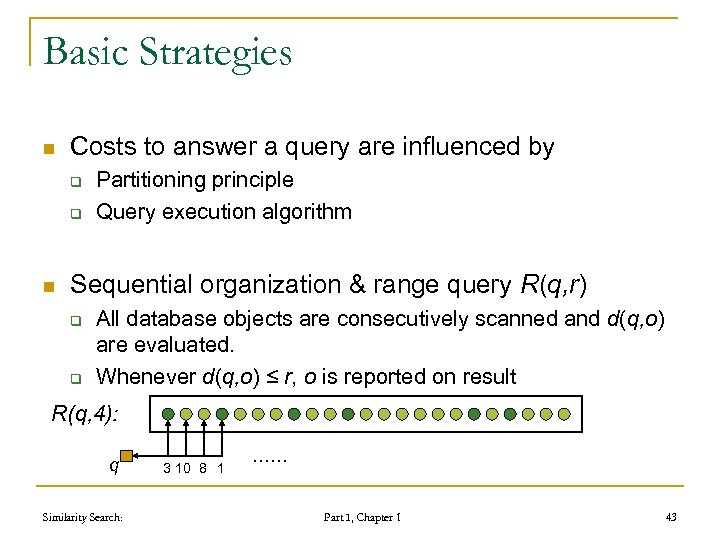

Basic Strategies

- Costs to answer a query are influenced by:
  - Partitioning principle
  - Query execution algorithm
- Sequential organization & range query R(q, r):
  - All database objects are consecutively scanned and d(q, o) are evaluated.
  - Whenever d(q, o) ≤ r, o is reported in the result.

(Figure: R(q, 4) evaluated over a sequence of objects with distances 3, 10, 8, 1, … from q.)
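
A sequential-scan range query is a single pass over the data. In the sketch below the data are hypothetical one-dimensional values chosen so that their distances from q = 0 are 3, 10, 8, and 1, as in the figure; the counter only makes the cost (one distance computation per object) visible.

```python
def range_scan(db, q, r, d):
    """Sequential scan: evaluate d(q, o) for every object and keep those within r."""
    result = []
    computations = 0
    for o in db:
        computations += 1
        if d(q, o) <= r:
            result.append(o)
    return result, computations


db = [3, 10, 8, 1]
result, computations = range_scan(db, q=0, r=4, d=lambda a, b: abs(a - b))
print(result, computations)  # [3, 1] 4
```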

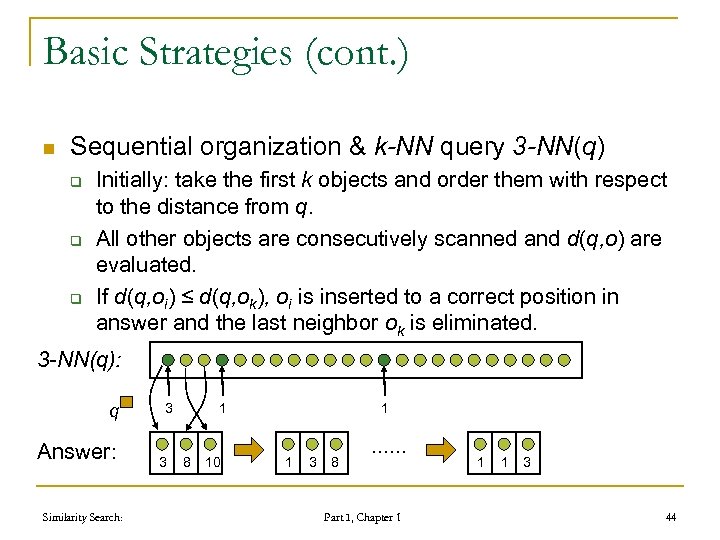

Basic Strategies (cont.)

- Sequential organization & k-NN query 3-NN(q):
  - Initially: take the first k objects and order them with respect to the distance from q.
  - All other objects are consecutively scanned and d(q, o) are evaluated.
  - If d(q, oi) ≤ d(q, ok), oi is inserted at the correct position in the answer and the last neighbor ok is eliminated.

(Figure: animated 3-NN(q) run over a sequential scan; the answer list is kept sorted by distance and ends with distances 1, 1, 3.)
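
The scan-based k-NN strategy can be sketched with a sorted answer list. Two details are choices of this example rather than of the slide: a new object replaces the current k-th neighbor only when it is strictly closer (so earlier objects win ties), and the data are hypothetical one-dimensional values.

```python
import bisect


def knn_scan(db, q, k, d):
    """Sequential k-NN: keep the k closest objects seen so far, sorted by distance."""
    answer = []                               # sorted list of (distance, position)
    for pos, o in enumerate(db):
        entry = (d(q, o), pos)
        if len(answer) < k:
            bisect.insort(answer, entry)      # still filling the first k slots
        elif entry[0] < answer[-1][0]:
            bisect.insort(answer, entry)      # insert at the correct position
            answer.pop()                      # eliminate the previous k-th neighbor
    return [(dist, db[pos]) for dist, pos in answer]


db = [3, 9, 1, 8, 10, 1]
print(knn_scan(db, q=0, k=3, d=lambda a, b: abs(a - b)))  # [(1, 1), (1, 1), (3, 3)]
```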

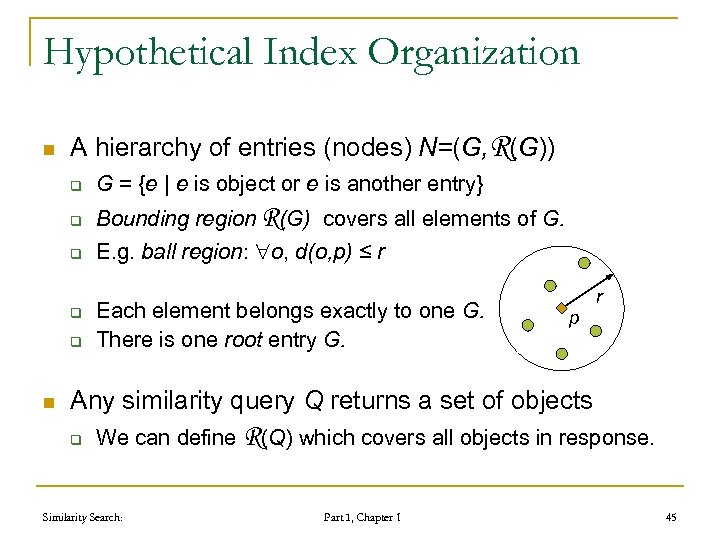

Hypothetical Index Organization

- A hierarchy of entries (nodes) N = (G, R(G)):
  - G = { e | e is an object or e is another entry }
  - Bounding region R(G) covers all elements of G.
  - E.g. ball region: ∀o ∈ G, d(o, p) ≤ r
  - Each element belongs to exactly one G.
  - There is one root entry G.
- Any similarity query Q returns a set of objects:
  - We can define R(Q) which covers all objects in the response.

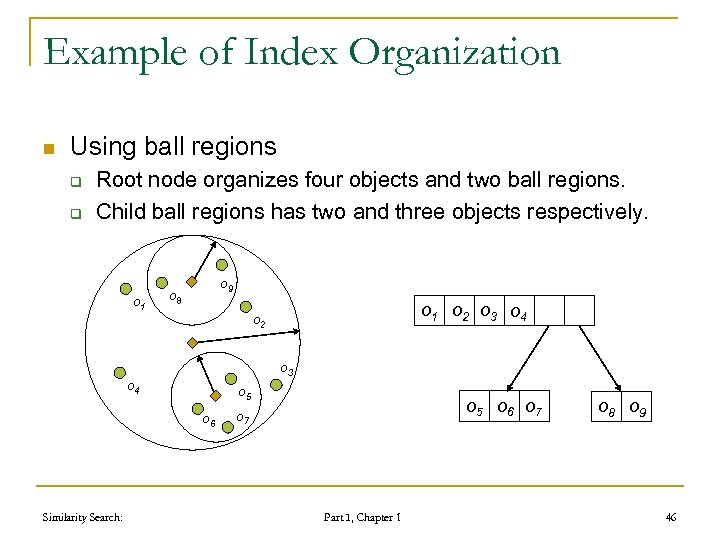

Example of Index Organization

- Using ball regions:
  - The root node organizes four objects and two ball regions.
  - The child ball regions have two and three objects respectively.

(Figure: root node with objects o1 to o4 and two child ball regions, one holding o8, o9 and the other holding o5, o6, o7.)

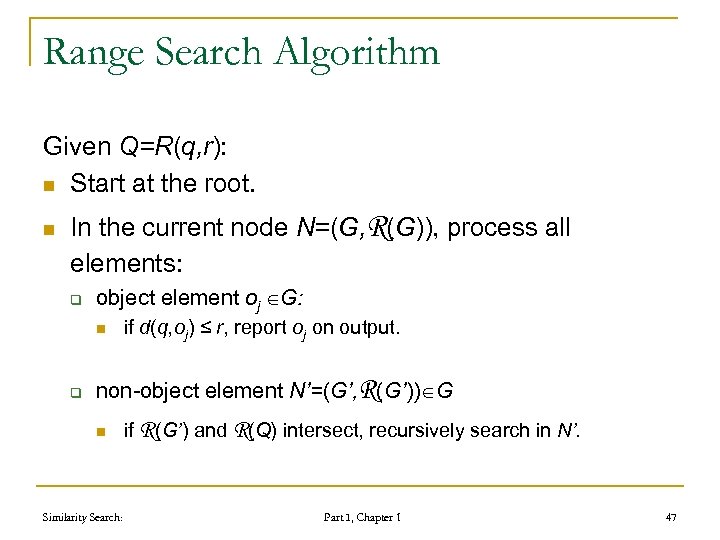

Range Search Algorithm

Given Q = R(q, r):

- Start at the root.
- In the current node N = (G, R(G)), process all elements:
  - object element oj ∈ G:
    - if d(q, oj) ≤ r, report oj on output.
  - non-object element N' = (G', R(G')) ∈ G:
    - if R(G') and R(Q) intersect, recursively search in N'.
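
The recursion can be sketched for the ball-region case, where two regions intersect exactly when the distance between their centers is at most the sum of their radii. The `Ball` class, the one-dimensional objects, and the concrete pivots and radii are assumptions made for this illustration; the tree only mimics the shape of the example index (a root with four objects and two child regions).

```python
from dataclasses import dataclass, field


@dataclass
class Ball:
    pivot: float
    radius: float
    elements: list = field(default_factory=list)  # objects or nested Ball entries


def range_search(node, q, r, d, out=None):
    """Collect all objects within distance r of q, pruning non-intersecting regions."""
    out = [] if out is None else out
    for e in node.elements:
        if isinstance(e, Ball):
            # R(G') and R(Q) intersect iff the centers are at most r + radius apart
            if d(q, e.pivot) <= r + e.radius:
                range_search(e, q, r, d, out)
        elif d(q, e) <= r:
            out.append(e)
    return out


dist = lambda a, b: abs(a - b)
B2 = Ball(10.0, 1.0, [9.5, 10.5])
B3 = Ball(20.0, 2.0, [19.0, 20.0, 21.5])
B1 = Ball(12.0, 12.0, [1.0, 3.0, 5.0, 7.0, B2, B3])   # root region

print(range_search(B1, q=10.0, r=1.0, d=dist))  # [9.5, 10.5]
```

In this run B3 is never opened, because its region lies at distance 10 from q, which exceeds r plus its radius.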

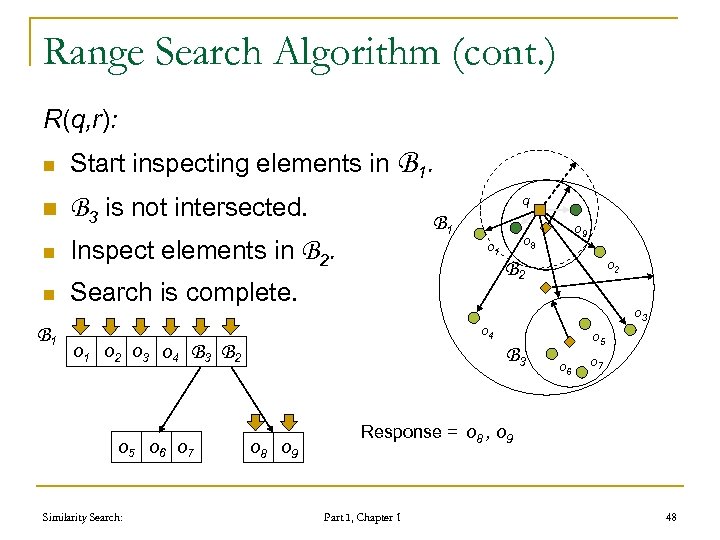

Range Search Algorithm (cont.)

R(q, r):

- Start inspecting elements in B1.
- B3 is not intersected.
- Inspect elements in B2.
- Search is complete.

Response = o8, o9

(Figure: the example index with regions B1, B2, B3 and the query region around q.)

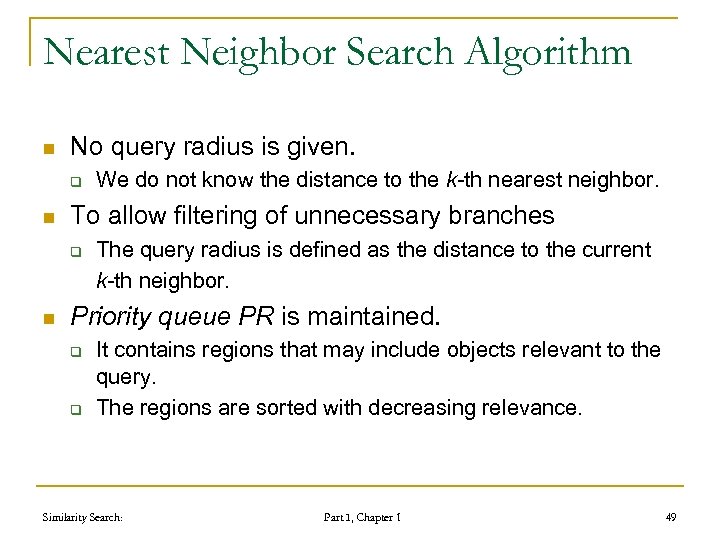

Nearest Neighbor Search Algorithm

- No query radius is given.
  - We do not know the distance to the k-th nearest neighbor.
- To allow filtering of unnecessary branches:
  - The query radius is defined as the distance to the current k-th neighbor.
- Priority queue PR is maintained.
  - It contains regions that may include objects relevant to the query.
  - The regions are sorted with decreasing relevance.

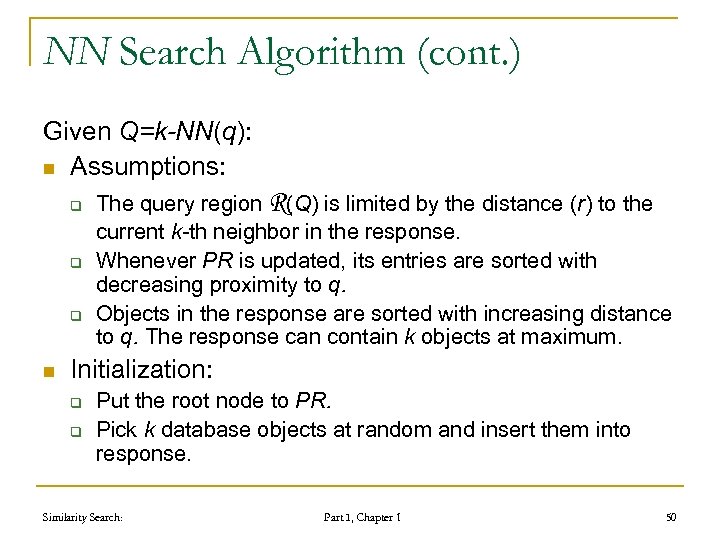

NN Search Algorithm (cont.)

Given Q = k-NN(q):

- Assumptions:
  - The query region R(Q) is limited by the distance (r) to the current k-th neighbor in the response.
  - Whenever PR is updated, its entries are sorted with decreasing proximity to q.
  - Objects in the response are sorted with increasing distance to q. The response can contain at most k objects.
- Initialization:
  - Put the root node into PR.
  - Pick k database objects at random and insert them into the response.

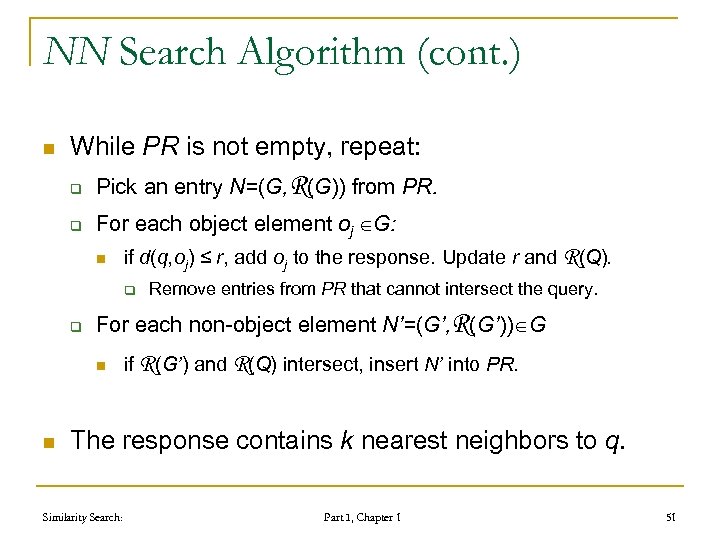

NN Search Algorithm (cont.)

- While PR is not empty, repeat:
  - Pick an entry N = (G, R(G)) from PR.
  - For each object element oj ∈ G:
    - if d(q, oj) ≤ r, add oj to the response. Update r and R(Q).
  - Remove entries from PR that cannot intersect the query.
  - For each non-object element N' = (G', R(G')) ∈ G:
    - if R(G') and R(Q) intersect, insert N' into PR.
- The response contains the k nearest neighbors of q.
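
A sketch of the loop for ball regions, using a binary heap as PR. It deviates from the slides in one labeled way: instead of seeding the response with k random objects, it starts with an empty response and r = ∞, which avoids handling duplicates when the seeded objects are met again. The `Ball` class and the one-dimensional example tree are the same hypothetical illustration as before, not data from the slides.

```python
import heapq
import math
from dataclasses import dataclass, field


@dataclass
class Ball:
    pivot: float
    radius: float
    elements: list = field(default_factory=list)  # objects or nested Ball entries


def knn_search(root, q, k, d):
    """k nearest neighbors of q as a list of (distance, object), closest first."""
    response = []                 # at most k entries, sorted by distance
    r = math.inf                  # distance to the current k-th neighbor
    counter = 0                   # tie-breaker so the heap never compares Ball objects
    pr = [(0.0, counter, root)]   # PR keyed by a lower bound on the distance to q
    while pr:
        bound, _, node = heapq.heappop(pr)
        if bound > r:
            break                 # no remaining region can intersect the query
        for e in node.elements:
            if isinstance(e, Ball):
                lower = max(d(q, e.pivot) - e.radius, 0.0)
                if lower <= r:    # R(G') and R(Q) intersect
                    counter += 1
                    heapq.heappush(pr, (lower, counter, e))
            else:
                dist = d(q, e)
                if dist < r:
                    response.append((dist, e))
                    response.sort(key=lambda t: t[0])
                    del response[k:]
                    if len(response) == k:
                        r = response[-1][0]
    return response


dist = lambda a, b: abs(a - b)
B2 = Ball(10.0, 1.0, [9.5, 10.5])
B3 = Ball(20.0, 2.0, [19.0, 20.0, 21.5])
B1 = Ball(12.0, 12.0, [1.0, 3.0, 5.0, 7.0, B2, B3])

print(knn_search(B1, q=10.0, k=3, d=dist))  # [(0.5, 9.5), (0.5, 10.5), (3.0, 7.0)]
```

In this run the root is processed first, B3 is never inserted because its lower bound (8) already exceeds the current radius (7), and B2 is processed last.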

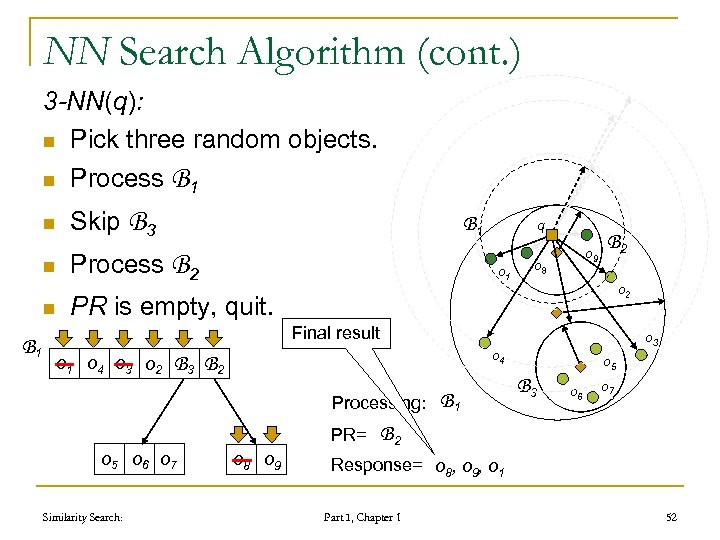

NN Search Algorithm (cont.)

3-NN(q):

- Pick three random objects.
- Process B1.
- Skip B3.
- Process B2.
- PR is empty, quit. The response holds the final result.

(Figure: animated run on the example index with regions B1, B2, B3, showing PR and the response after each step.)

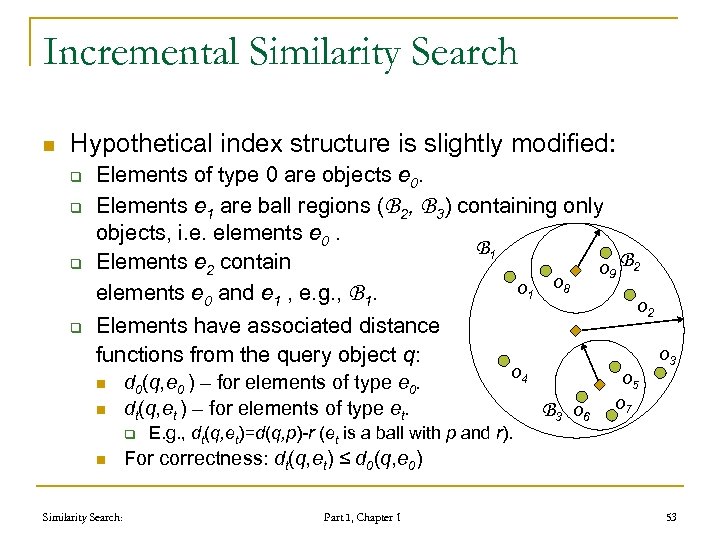

Incremental Similarity Search

- The hypothetical index structure is slightly modified:
  - Elements of type 0 are objects e0.
  - Elements e1 are ball regions (B2, B3) containing only objects, i.e. elements e0.
  - Elements e2 contain elements e0 and e1, e.g., B1.
- Elements have associated distance functions from the query object q:
  - d0(q, e0) for elements of type e0.
  - dt(q, et) for elements of type et.
    - E.g., dt(q, et) = d(q, p) − r (et is a ball with p and r).
- For correctness: dt(q, et) ≤ d0(q, e0)

(Figure: the example index with regions B1, B2, B3 and objects o1 to o9.)

Incremental NN Search

- Based on priority queue PR again:
  - Each element et in PR also knows the distance dt(q, et).
  - Entries in the queue are sorted with respect to these distances.
- Initialization:
  - Insert the root element with the distance 0 into PR.

Incremental NN Search (cont.)

- While PR is not empty do:
  - et ← the first element from PR
  - if t = 0 (et is an object) then report et as the next nearest neighbor.
  - else insert each child element el of et with the distance dl(q, el) into PR.
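
The loop above maps naturally onto a Python generator, which yields neighbors one at a time so that the caller decides when to stop. The sketch assumes ball regions whose radius covers every object nested below them, so that d(q, p) − r (clamped at 0) is a valid lower bound; the `Ball` class and the example tree are hypothetical.

```python
import heapq
from dataclasses import dataclass, field
from itertools import islice


@dataclass
class Ball:
    pivot: float
    radius: float
    elements: list = field(default_factory=list)  # objects or nested Ball entries


def incremental_nn(root, q, d):
    """Yield (distance, object) pairs in order of non-decreasing distance from q."""
    counter = 0                       # tie-breaker so the heap never compares elements
    pr = [(0.0, counter, root)]       # the root enters PR with distance 0
    while pr:
        key, _, e = heapq.heappop(pr)
        if not isinstance(e, Ball):
            yield key, e              # an object at the head of PR is the next neighbor
            continue
        for child in e.elements:
            if isinstance(child, Ball):
                child_key = max(d(q, child.pivot) - child.radius, 0.0)
            else:
                child_key = d(q, child)
            counter += 1
            heapq.heappush(pr, (child_key, counter, child))


dist = lambda a, b: abs(a - b)
B2 = Ball(10.0, 1.0, [9.5, 10.5])
B3 = Ball(20.0, 2.0, [19.0, 20.0, 21.5])
B1 = Ball(12.0, 12.0, [1.0, 3.0, 5.0, 7.0, B2, B3])

first_three = [o for _, o in islice(incremental_nn(B1, 10.0, dist), 3)]
print(first_three)  # [9.5, 10.5, 7.0]
```

Because the generator is lazy, asking for three neighbors here never opens B3; consuming it fully would eventually report all nine objects.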

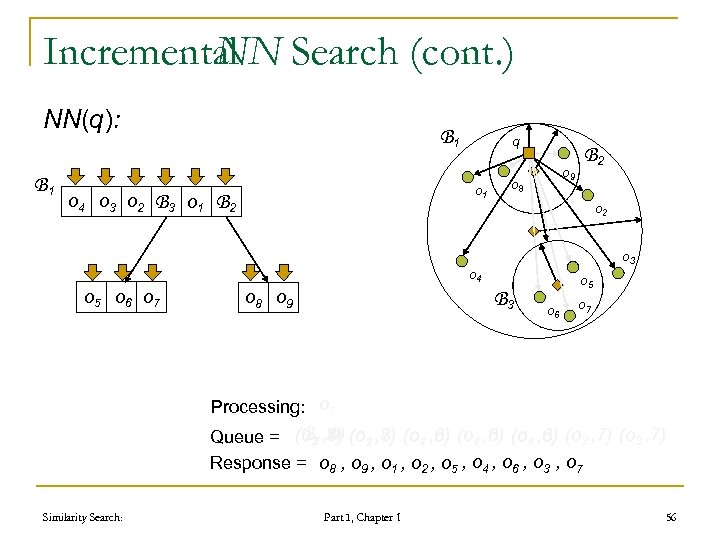

Incremental NN Search (cont.)

NN(q) on the example index with regions B1, B2, B3 and objects o1 to o9.

(Figure: animated processing steps and queue contents; the individual queue states are not recoverable from the extracted text.)

Response = o8, o9, o1, o2, o5, o4, o6, o3, o7

Foundations of metric space searching
1. distance searching problem in metric spaces
2. metric distance measures
3. similarity queries
4. basic partitioning principles
5. principles of similarity query execution
6. policies to avoid distance computations
7. metric space transformations
8. principles of approximate similarity search
9. advanced issues

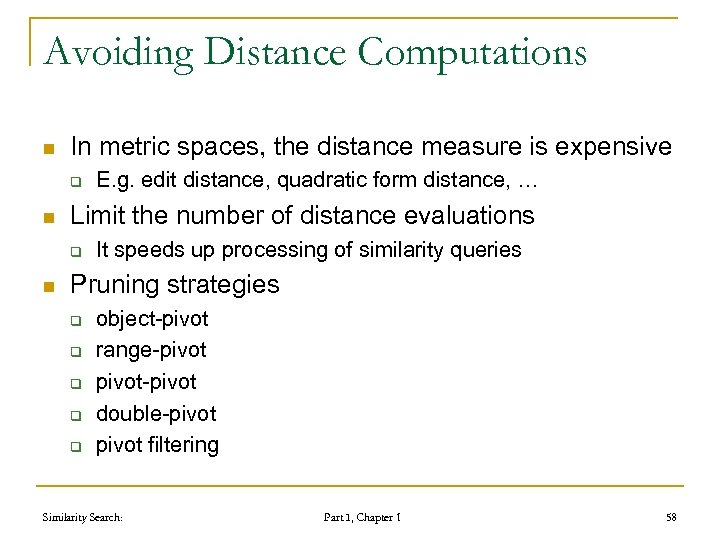

Avoiding Distance Computations
- In metric spaces, the distance measure is expensive
  - E.g. edit distance, quadratic form distance, …
- Limit the number of distance evaluations
  - It speeds up processing of similarity queries
- Pruning strategies
  - object-pivot
  - range-pivot
  - pivot-pivot
  - double-pivot
  - pivot filtering

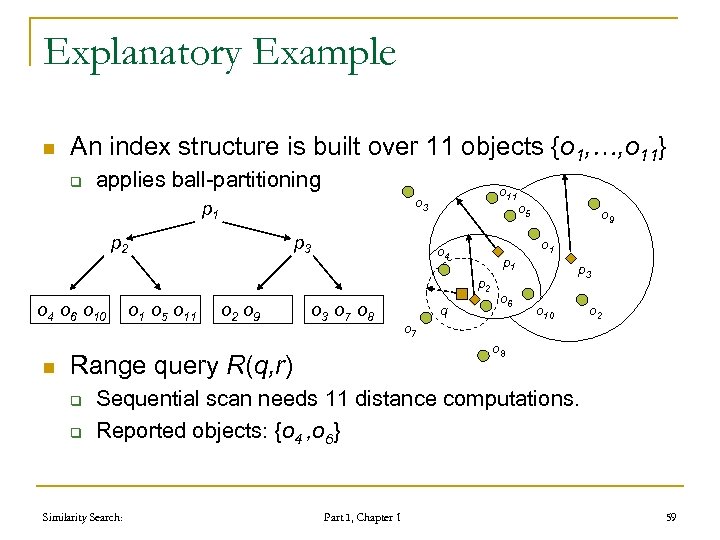

Explanatory Example
- An index structure is built over 11 objects {o1, …, o11}
  - applies ball-partitioning
- Range query R(q, r)
  - Sequential scan needs 11 distance computations.
  - Reported objects: {o4, o6}

[Figure: the objects, the query q and the pivots p1, p2, p3 in the plane, next to a tree with pivots p1, p2, p3 and leaf nodes {o4, o6, o10}, {o1, o5, o11}, {o2, o9}, {o3, o7, o8}.]

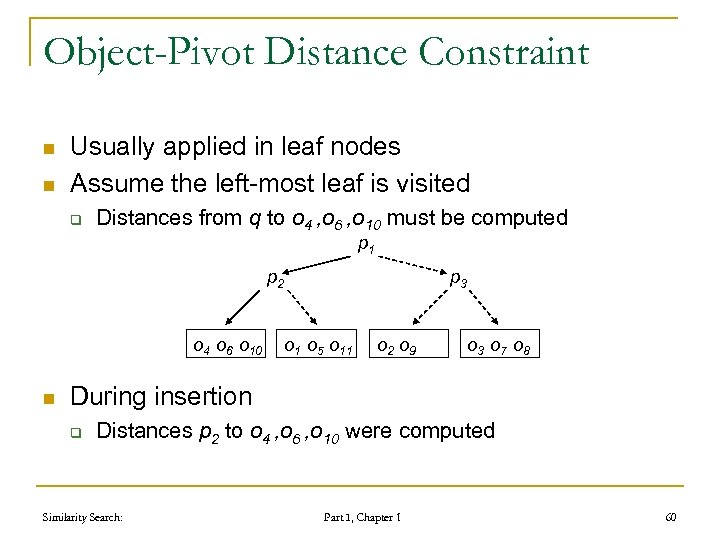

Object-Pivot Distance Constraint
- Usually applied in leaf nodes
- Assume the left-most leaf is visited
  - Distances from q to o4, o6, o10 must be computed
- During insertion
  - Distances from p2 to o4, o6, o10 were computed

[Figure: the example tree with the left-most leaf {o4, o6, o10} visited.]

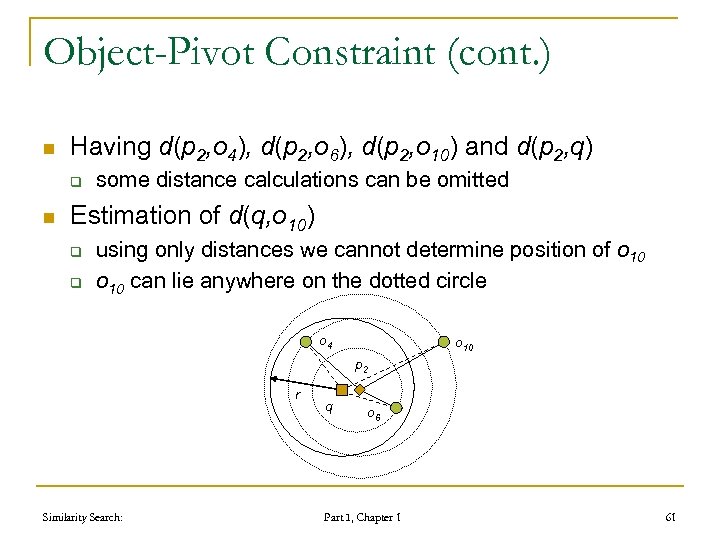

Object-Pivot Constraint (cont.)
- Having d(p2, o4), d(p2, o6), d(p2, o10) and d(p2, q)
  - some distance calculations can be omitted
- Estimation of d(q, o10)
  - using only distances we cannot determine the position of o10
  - o10 can lie anywhere on the dotted circle

[Figure: q with query radius r, pivot p2, and objects o4, o6, o10; a dotted circle around p2 passes through o10.]

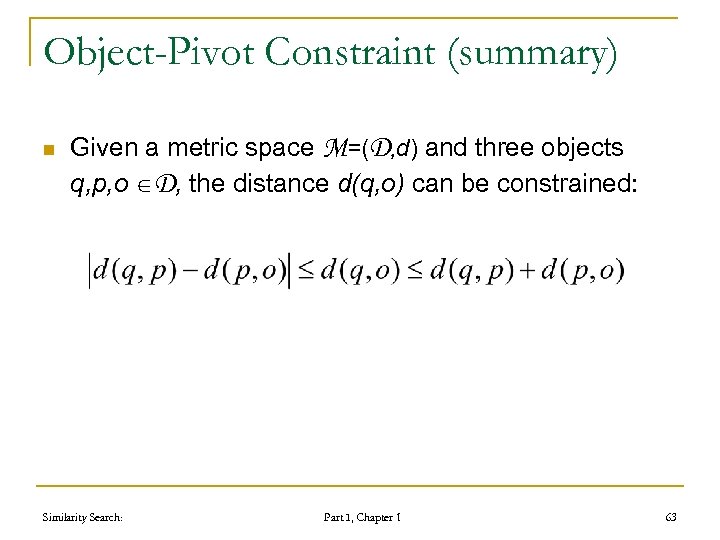

Object-Pivot Constraint (summary)
- Given a metric space M = (D, d) and three objects q, p, o ∈ D, the distance d(q, o) can be constrained:
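The bounds follow directly from the triangle inequality and the symmetry of d. Written out with the symbols of this slide (a reconstruction from the metric postulates, not a copy of the slide's formula):

```latex
\[
  \lvert d(q,p) - d(p,o) \rvert \;\le\; d(q,o) \;\le\; d(q,p) + d(p,o)
\]
```

For a range query R(q, r), an object o can be skipped without computing d(q, o) when the lower bound exceeds r, and included directly when the upper bound is at most r.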

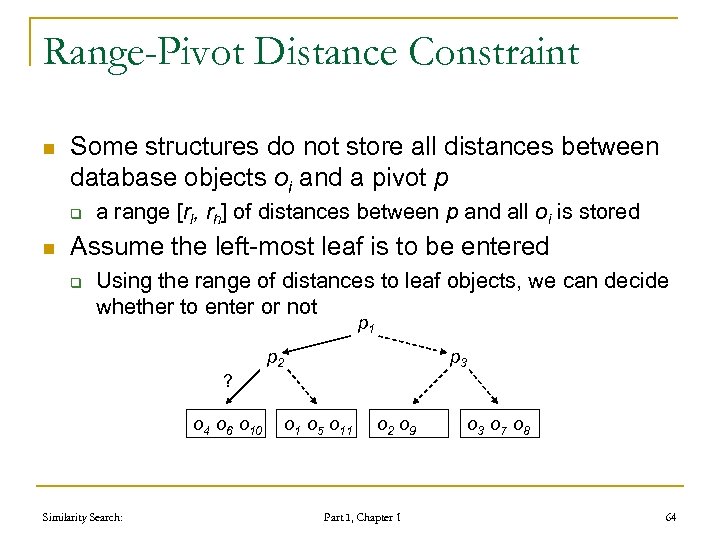

Range-Pivot Distance Constraint
- Some structures do not store all distances between database objects o_i and a pivot p
  - a range [r_l, r_h] of distances between p and all o_i is stored
- Assume the left-most leaf is to be entered
  - Using the range of distances to leaf objects, we can decide whether to enter or not

[Figure: the example tree with the left-most leaf {o4, o6, o10} marked "?".]

![Range-Pivot Constraint (cont.)](https://present5.com/presentation/f94e73748b39cb24d3fe7bb9e7d35aa5/image-64.jpg)

Range-Pivot Constraint (cont.)
- Knowing the interval [r_l, r_h] of distances in the leaf, we can optimize
- Lower bound is r_l - d(q, p2)
  - If greater than the query radius r, no object can qualify
- Upper bound is r_h + d(q, p2)
  - If less than the query radius r, all objects qualify!

[Figure: three views of the ring [r_l, r_h] around p2 containing o4, o6, o10, together with q and the query radius r.]

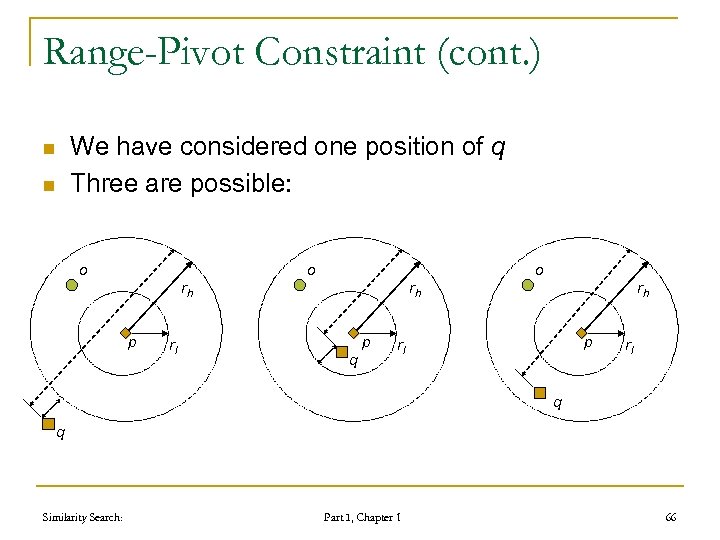

Range-Pivot Constraint (cont.)
- We have considered one position of q
- Three are possible:

[Figure: three positions of q relative to the ring [r_l, r_h] around p, with an object o on the ring.]

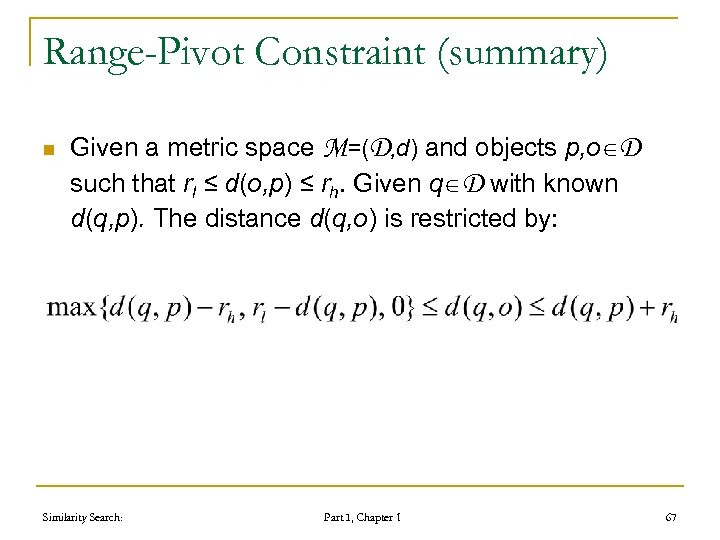

Range-Pivot Constraint (summary)
- Given a metric space M = (D, d) and objects p, o ∈ D such that r_l ≤ d(o, p) ≤ r_h. Given q ∈ D with known d(q, p). The distance d(q, o) is restricted by:
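Combining the three possible positions of q with the triangle inequality gives the following bounds (a reconstruction consistent with the lower bound r_l - d(q, p) and the upper bound r_h + d(q, p) stated earlier, not a copy of the slide's formula):

```latex
\[
  \max\{\, d(q,p) - r_h,\; r_l - d(q,p),\; 0 \,\} \;\le\; d(q,o) \;\le\; d(q,p) + r_h
\]
```

The first term covers q lying outside the ring, the second covers q lying inside the inner radius, and the bound is 0 when q falls within the ring itself.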

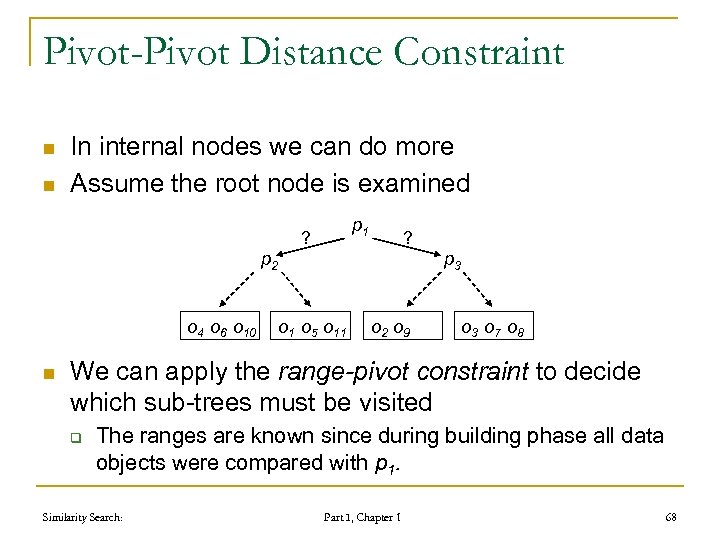

Pivot-Pivot Distance Constraint
- In internal nodes we can do more
- Assume the root node is examined
- We can apply the range-pivot constraint to decide which sub-trees must be visited
  - The ranges are known since during the building phase all data objects were compared with p1.

[Figure: the example tree with both branches below the root p1 marked "?".]

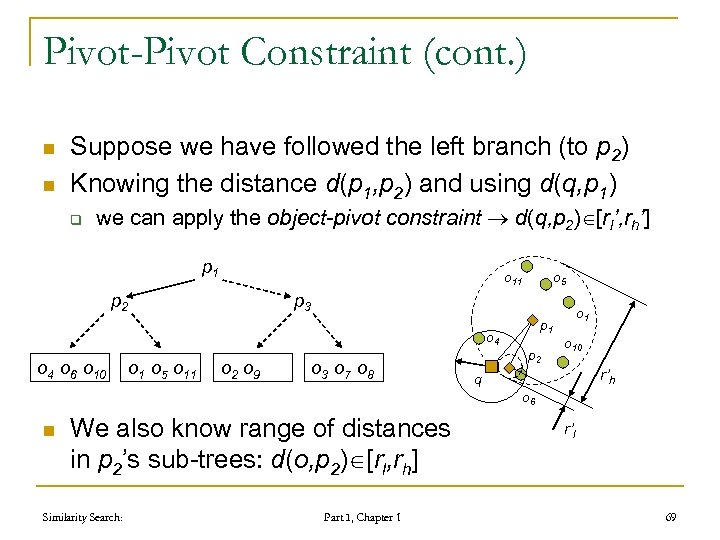

Pivot-Pivot Constraint (cont.)
- Suppose we have followed the left branch (to p2)
- Knowing the distance d(p1, p2) and using d(q, p1)
  - we can apply the object-pivot constraint: d(q, p2) ∈ [r_l', r_h']
- We also know the range of distances in p2's sub-trees: d(o, p2) ∈ [r_l, r_h]

[Figure: the example tree, and the pivots p1, p2 with q lying between the radii r_l' and r_h' around p2.]

![Pivot-Pivot Constraint (cont.)](https://present5.com/presentation/f94e73748b39cb24d3fe7bb9e7d35aa5/image-69.jpg)

Pivot-Pivot Constraint (cont.)
- Having
  - d(q, p2) ∈ [r_l', r_h']
  - d(o, p2) ∈ [r_l, r_h]
- Both ranges intersect ⇒ lower bound on d(q, o) is 0!
- Upper bound is r_h + r_h'

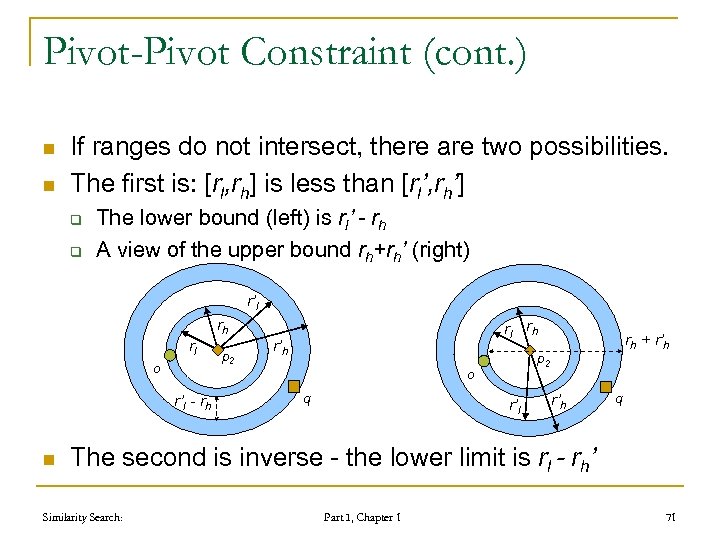

Pivot-Pivot Constraint (cont.)
- If the ranges do not intersect, there are two possibilities.
- The first is: [r_l, r_h] is less than [r_l', r_h']
  - The lower bound (left) is r_l' - r_h
  - A view of the upper bound r_h + r_h' (right)
- The second is the inverse: the lower limit is r_l - r_h'

[Figure: two views of the rings around p2, illustrating the lower bound r_l' - r_h (left) and the upper bound r_h + r_h' (right).]

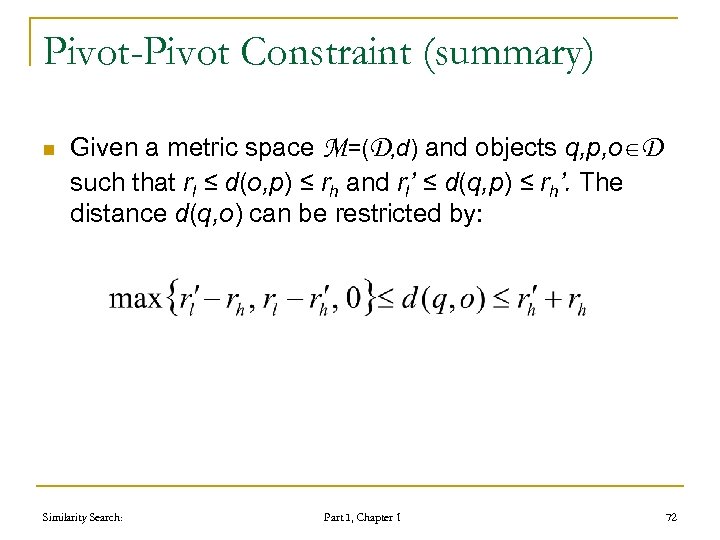

Pivot-Pivot Constraint (summary)
- Given a metric space M = (D, d) and objects q, p, o ∈ D such that r_l ≤ d(o, p) ≤ r_h and r_l' ≤ d(q, p) ≤ r_h'. The distance d(q, o) can be restricted by:
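Collecting the cases discussed on the preceding slides (intersecting ranges, [r_l, r_h] below [r_l', r_h'], and the inverse), the restriction can be written as follows. This is a reconstruction from those cases, not a copy of the slide's formula:

```latex
\[
  \max\{\, r_l' - r_h,\; r_l - r_h',\; 0 \,\} \;\le\; d(q,o) \;\le\; r_h' + r_h
\]
```

At most one of the first two terms can be positive, and both are non-positive exactly when the two ranges intersect.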

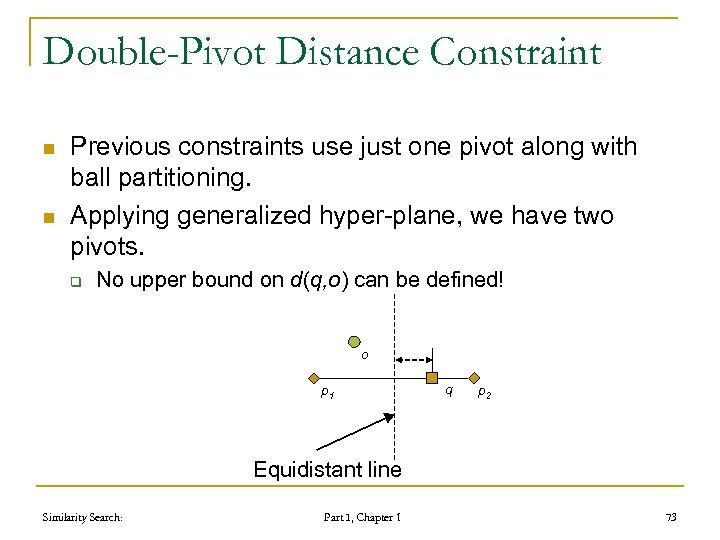

Double-Pivot Distance Constraint
- Previous constraints use just one pivot along with ball partitioning.
- Applying generalized hyper-plane partitioning, we have two pivots.
  - No upper bound on d(q, o) can be defined!

[Figure: pivots p1 and p2, the equidistant line between them, and objects q and o.]

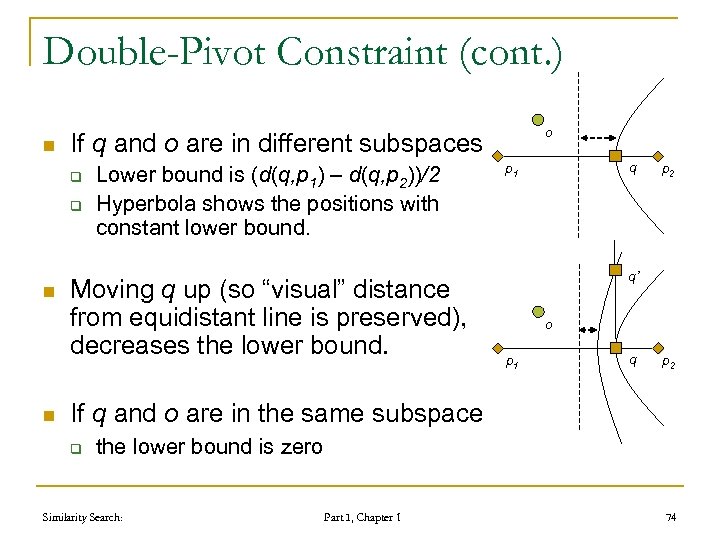

Double-Pivot Constraint (cont.)
- If q and o are in different subspaces
  - Lower bound is (d(q, p1) - d(q, p2))/2
  - Hyperbola shows the positions with constant lower bound.
  - Moving q up (so that the "visual" distance from the equidistant line is preserved) decreases the lower bound.
- If q and o are in the same subspace
  - the lower bound is zero

[Figure: p1, p2, o, q and a shifted query position q', with the hyperbola of constant lower bound.]

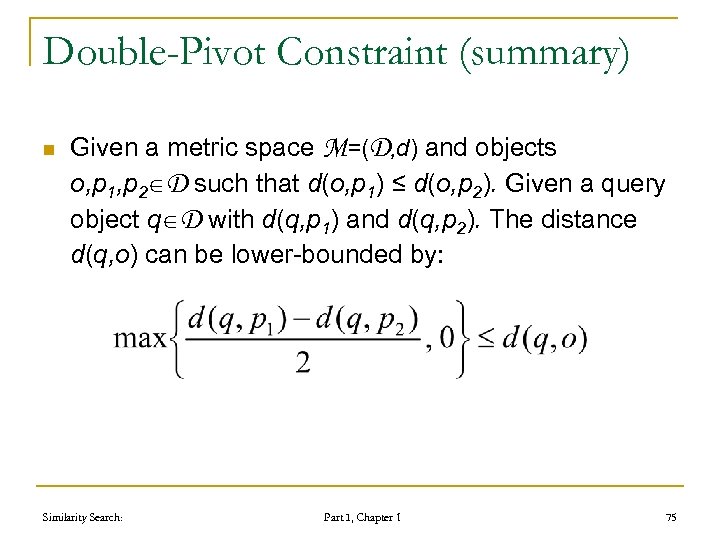

Double-Pivot Constraint (summary)
- Given a metric space M = (D, d) and objects o, p1, p2 ∈ D such that d(o, p1) ≤ d(o, p2). Given a query object q ∈ D with d(q, p1) and d(q, p2). The distance d(q, o) can be lower-bounded by:
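Merging the two cases from the previous slide (different subspaces and same subspace) gives a single expression. It is a reconstruction from those cases, not a copy of the slide's formula:

```latex
\[
  \max\left\{ \frac{d(q,p_1) - d(q,p_2)}{2},\; 0 \right\} \;\le\; d(q,o)
\]
```

The derivation uses the triangle inequality twice: d(q, p1) ≤ d(q, o) + d(o, p1) and d(q, p2) ≥ d(o, p2) - d(q, o) ≥ d(o, p1) - d(q, o); subtracting the second from the first leaves d(q, p1) - d(q, p2) ≤ 2·d(q, o).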

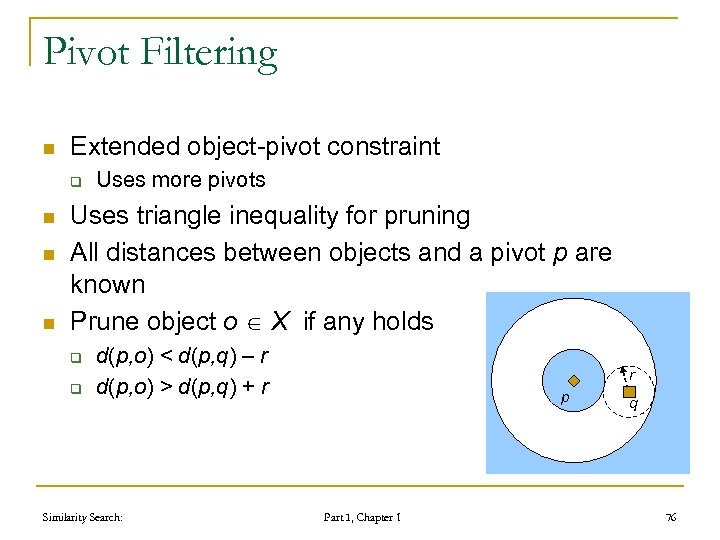

Pivot Filtering
- Extended object-pivot constraint
  - Uses more pivots
- Uses triangle inequality for pruning
- All distances between objects and a pivot p are known
- Prune object o ∈ X if any holds
  - d(p, o) < d(p, q) - r
  - d(p, o) > d(p, q) + r

[Figure: pivot p, query q and the query radius r.]

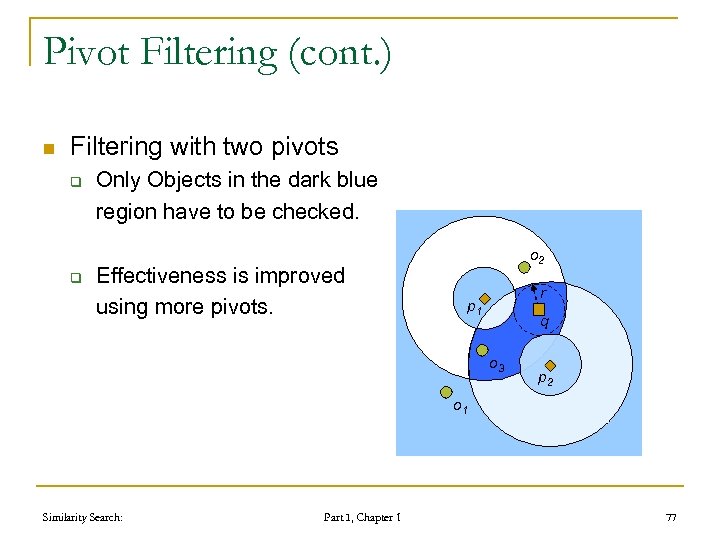

Pivot Filtering (cont.)
- Filtering with two pivots
  - Only objects in the dark blue region have to be checked.
  - Effectiveness is improved using more pivots.

[Figure: pivots p1 and p2, query q with radius r, and objects o1, o2, o3.]

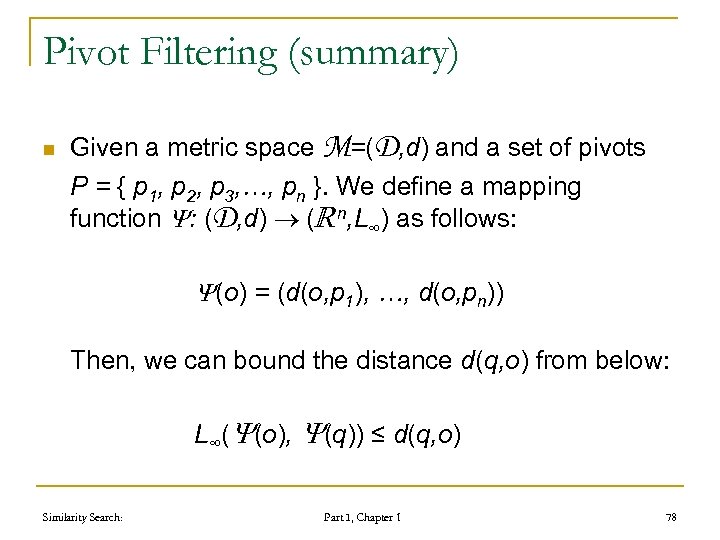

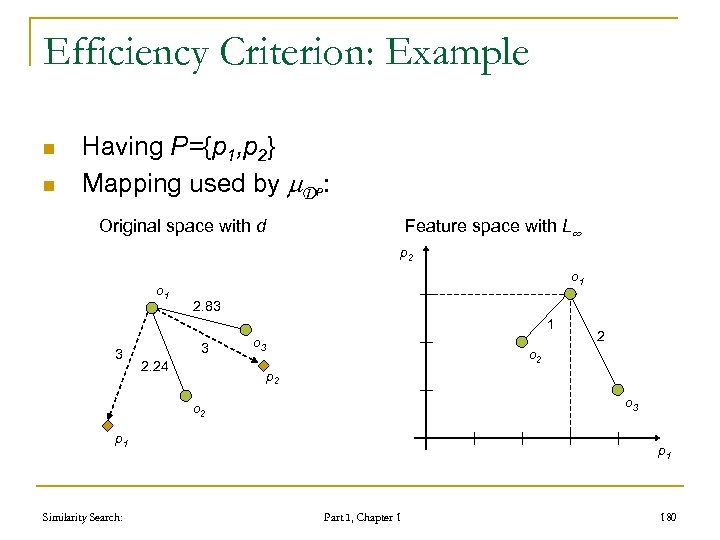

Pivot Filtering (summary)
- Given a metric space M = (D, d) and a set of pivots P = {p1, p2, p3, …, pn}. We define a mapping function Ψ: (D, d) → (ℝ^n, L∞) as follows:
  - Ψ(o) = (d(o, p1), …, d(o, pn))
- Then, we can bound the distance d(q, o) from below:
  - L∞(Ψ(o), Ψ(q)) ≤ d(q, o)

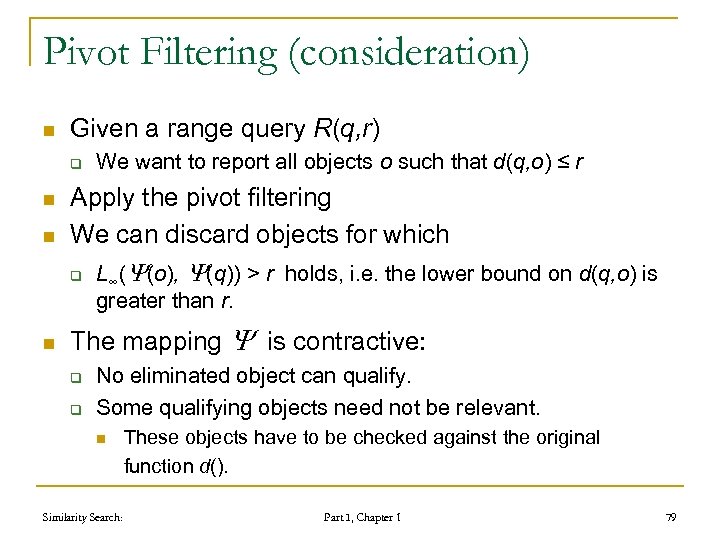

Pivot Filtering (consideration)
- Given a range query R(q, r)
  - We want to report all objects o such that d(q, o) ≤ r
- Apply the pivot filtering
- We can discard objects for which
  - L∞(Ψ(o), Ψ(q)) > r holds, i.e. the lower bound on d(q, o) is greater than r.
- The mapping Ψ is contractive:
  - No eliminated object can qualify.
  - Some qualifying objects need not be relevant.
    - These objects have to be checked against the original function d().
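A minimal sketch of this filter-then-verify procedure is shown below. The function names (`build_table`, `range_query`, `euclid`) and the flat list standing in for an index are assumptions made for illustration; only the pruning test against r comes from the slide.

```python
import math

euclid = math.dist  # example metric for points given as tuples (Python 3.8+)


def linf(u, v):
    """L-infinity (maximum) distance between two equal-length vectors."""
    return max(abs(a - b) for a, b in zip(u, v))


def build_table(objects, pivots, d):
    """Precompute the mapped vector (d(o, p1), ..., d(o, pn)) for every object."""
    return [tuple(d(o, p) for p in pivots) for o in objects]


def range_query(objects, table, pivots, d, q, r):
    """Answer R(q, r); returns (result, number of distances computed to data objects)."""
    mapped_q = tuple(d(q, p) for p in pivots)   # n distance computations to the pivots
    result, computed = [], 0
    for o, mapped_o in zip(objects, table):
        if linf(mapped_o, mapped_q) > r:        # lower bound exceeds r: discard o
            continue
        computed += 1                           # candidate: verify with the original d
        if d(q, o) <= r:
            result.append(o)
    return result, computed
```

The precomputed table trades storage (n distances per object) for fewer evaluations of d at query time; objects that survive the filter are still verified, so the result is exact.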

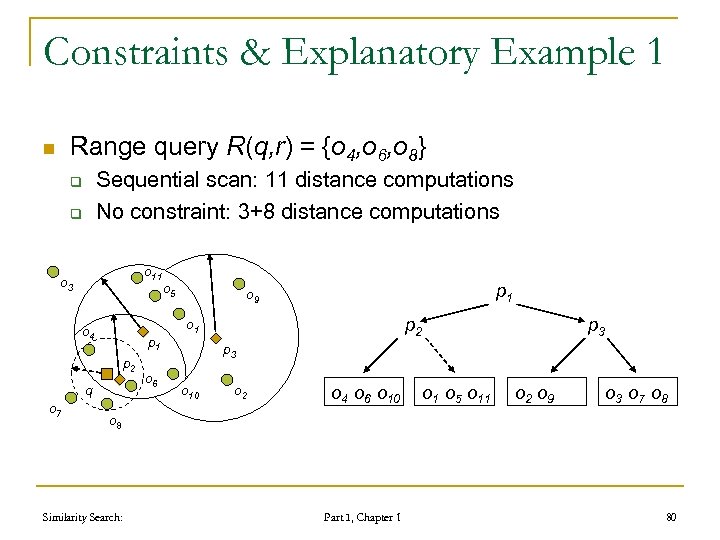

Constraints & Explanatory Example 1
- Range query R(q, r) = {o4, o6, o8}
  - Sequential scan: 11 distance computations
  - No constraint: 3+8 distance computations

[Figure: the objects, query and pivots in the plane, next to the example tree with pivots p1, p2, p3 and leaves {o4, o6, o10}, {o1, o5, o11}, {o2, o9}, {o3, o7, o8}.]

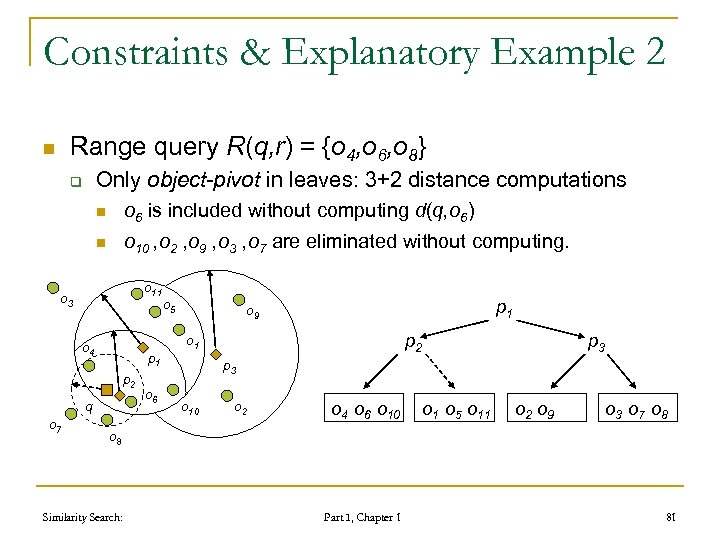

Constraints & Explanatory Example 2
- Range query R(q, r) = {o4, o6, o8}
  - Only object-pivot in leaves: 3+2 distance computations
    - o6 is included without computing d(q, o6)
    - o10, o2, o9, o3, o7 are eliminated without computing.

[Figure: the same objects and example tree as in Example 1.]

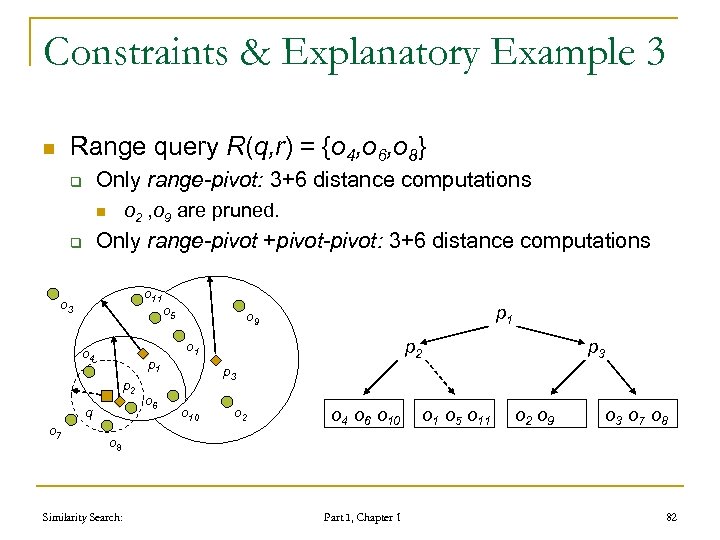

Constraints & Explanatory Example 3
- Range query R(q, r) = {o4, o6, o8}
  - Only range-pivot: 3+6 distance computations
    - o2, o9 are pruned.
  - Only range-pivot + pivot-pivot: 3+6 distance computations

[Figure: the same objects and example tree as in Example 1.]

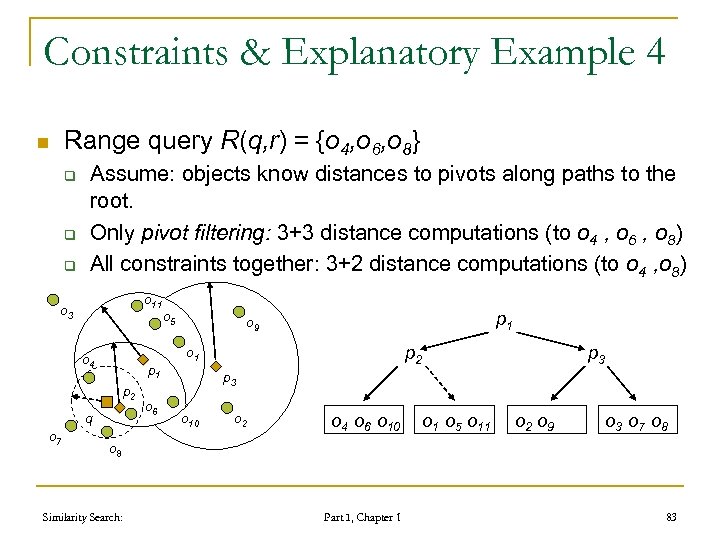

Constraints & Explanatory Example 4
- Range query R(q, r) = {o4, o6, o8}
  - Assume: objects know distances to pivots along paths to the root.
  - Only pivot filtering: 3+3 distance computations (to o4, o6, o8)
  - All constraints together: 3+2 distance computations (to o4, o8)

[Figure: the same objects and example tree as in Example 1.]

Foundations of metric space searching
1. distance searching problem in metric spaces
2. metric distance measures
3. similarity queries
4. basic partitioning principles
5. principles of similarity query execution
6. policies to avoid distance computations
7. metric space transformations
8. principles of approximate similarity search
9. advanced issues

Metric Space Transformation
- Change one metric space into another
  - Transformation of the original objects
  - Changing the metric function
  - Transforming both the function and the objects
- Metric space embedding
  - Cheaper distance function
- User-defined search functions

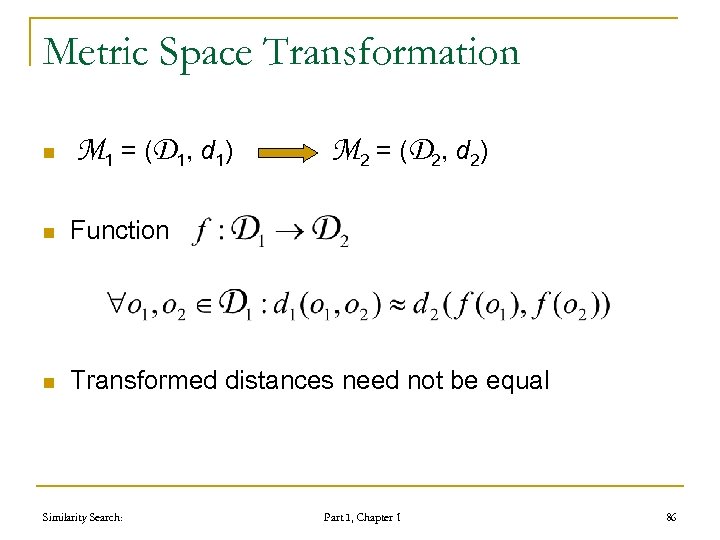

Metric Space Transformation
- M1 = (D1, d1) → M2 = (D2, d2)
- Function f: D1 → D2
- Transformed distances need not be equal

Lower Bounding Metric Functions
- Bounds on transformations
- Exploitable by index structures
- Having functions d1, d2: D × D → ℝ
- d1 is a lower-bounding distance function of d2
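Spelled out, the lower-bounding property is the usual pointwise condition on the two functions. The object names o_i, o_j are introduced here for illustration:

```latex
\[
  \forall\, o_i, o_j \in D:\quad d_1(o_i, o_j) \;\le\; d_2(o_i, o_j)
\]
```

An index can then evaluate the cheaper d_1 first: whenever d_1(q, o) > r, the object o cannot satisfy d_2(q, o) ≤ r and the expensive evaluation is skipped.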

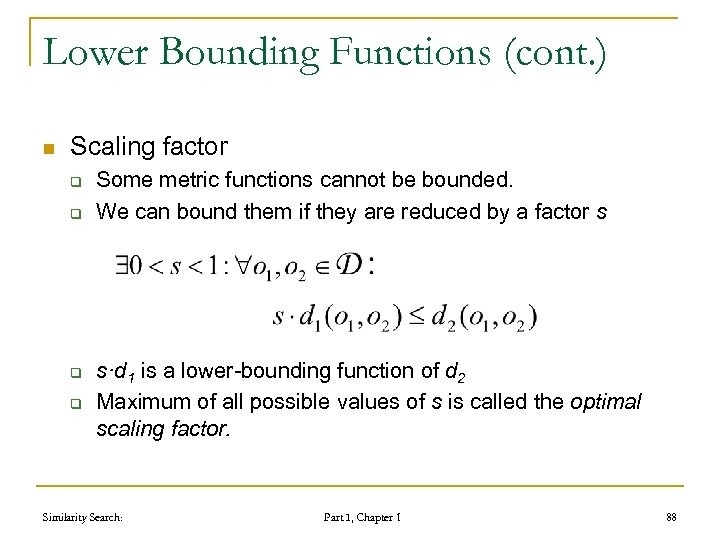

Lower Bounding Functions (cont.)
- Scaling factor
  - Some metric functions cannot be bounded.
  - We can bound them if they are reduced by a factor s
  - s·d1 is a lower-bounding function of d2
  - Maximum of all possible values of s is called the optimal scaling factor.

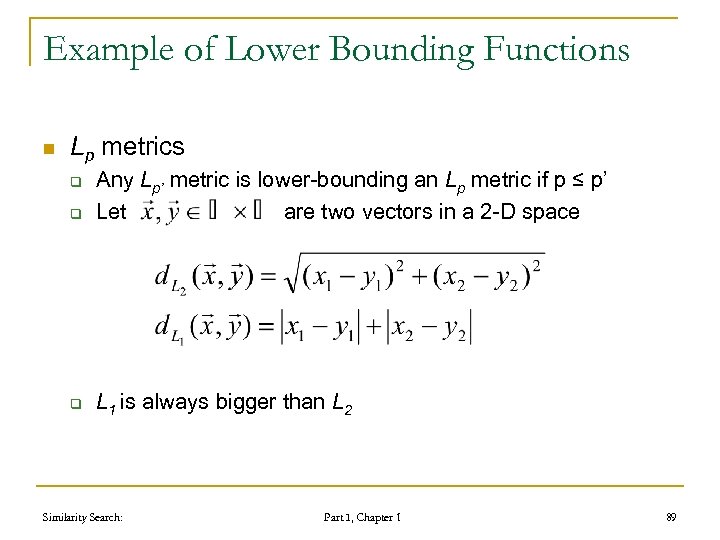

Example of Lower Bounding Functions
- Lp metrics
  - Any Lp' metric is lower-bounding an Lp metric if p ≤ p'
  - Consider two vectors in a 2-D space
  - L1 is always greater than or equal to L2
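The ordering of the Lp metrics can be checked numerically. The snippet below is an illustrative sketch; the helper names `lp` and `linf` and the sample vectors are chosen here and do not come from the slides.

```python
def lp(x, y, p):
    """Minkowski L_p distance for 1 <= p < infinity."""
    return sum(abs(a - b) ** p for a, b in zip(x, y)) ** (1.0 / p)


def linf(x, y):
    """L-infinity (maximum) distance, the limit of L_p as p grows."""
    return max(abs(a - b) for a, b in zip(x, y))


x, y = (0.0, 0.0), (3.0, 4.0)
l1, l2, lmax = lp(x, y, 1), lp(x, y, 2), linf(x, y)
# For p <= p' the L_p' distance never exceeds the L_p distance.
assert lmax <= l2 <= l1
```

For these two vectors the differences per coordinate are 3 and 4, so L1 adds them, L2 takes the Euclidean length, and L∞ keeps only the larger one.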

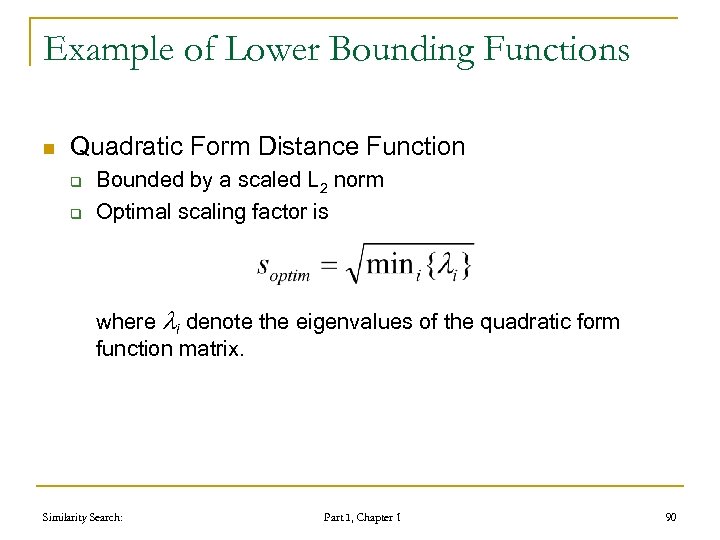

Example of Lower Bounding Functions
- Quadratic Form Distance Function
  - Bounded by a scaled L2 norm
  - Optimal scaling factor is √(min_i λ_i), where λ_i denote the eigenvalues of the quadratic form function matrix.

User-defined Metric Functions
- Different users have different preferences
  - Some people prefer a car's speed
  - Others prefer lower prices
  - etc.
- Preferences might be complex
  - Color histograms, data-mining systems
  - Can be learnt automatically
    - from the previous behavior of a user

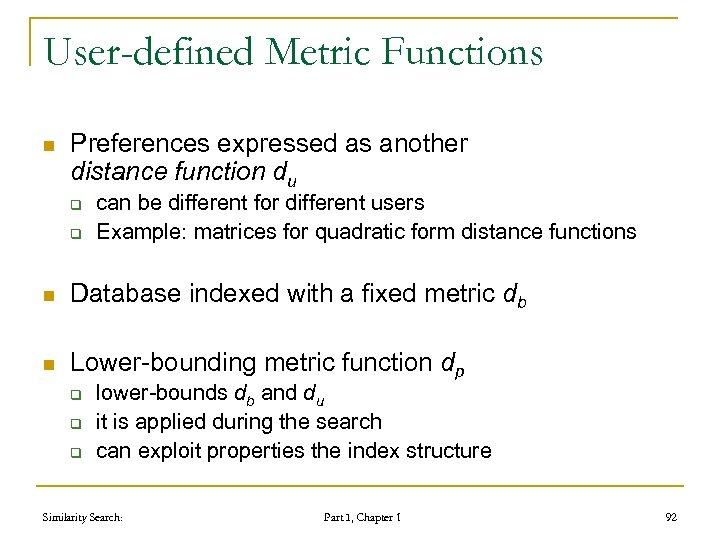

User-defined Metric Functions
- Preferences expressed as another distance function d_u
  - can be different for different users
  - Example: matrices for quadratic form distance functions
- Database indexed with a fixed metric d_b
- Lower-bounding metric function d_p
  - lower-bounds d_b and d_u
  - it is applied during the search
  - can exploit properties of the index structure

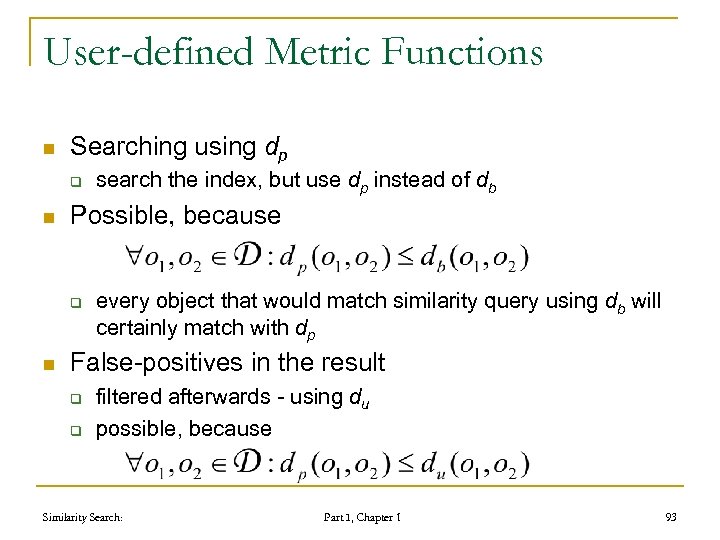

User-defined Metric Functions
- Searching using d_p
  - search the index, but use d_p instead of d_b
- Possible, because
  - every object that would match the similarity query using d_b will certainly match with d_p
- False-positives in the result
  - filtered afterwards using d_u
  - possible, because d_p also lower-bounds d_u
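The two-step search described above can be sketched as follows. Everything concrete in this example is an assumption made for illustration: the weighted Euclidean distances standing in for quadratic form distances, the function names, and the linear scan that takes the place of a real index traversal.

```python
import math


def weighted_l2(weights):
    """Return a weighted Euclidean distance (a quadratic form with a diagonal matrix)."""
    def d(x, y):
        return math.sqrt(sum(w * (a - b) ** 2 for w, a, b in zip(weights, x, y)))
    return d


def search_with_user_metric(db, q, r, d_p, d_u):
    """Range search R(q, r) with respect to d_u, filtered through the lower bound d_p."""
    # Step 1: search with the lower-bounding d_p (a scan stands in for the index).
    candidates = [o for o in db if d_p(q, o) <= r]
    # Step 2: remove false positives with the user's own metric d_u.
    result = [o for o in candidates if d_u(q, o) <= r]
    return result, len(candidates)


# Taking the smaller weight per coordinate yields a d_p that lower-bounds both metrics.
d_b = weighted_l2((2, 1))   # metric the database is assumed to be indexed with
d_u = weighted_l2((1, 3))   # one user's preferences
d_p = weighted_l2((1, 1))   # lower-bounds d_b and d_u
```

Since d_p never exceeds d_u, step 1 cannot lose an object that qualifies under d_u; it can only admit extra candidates, which step 2 removes.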

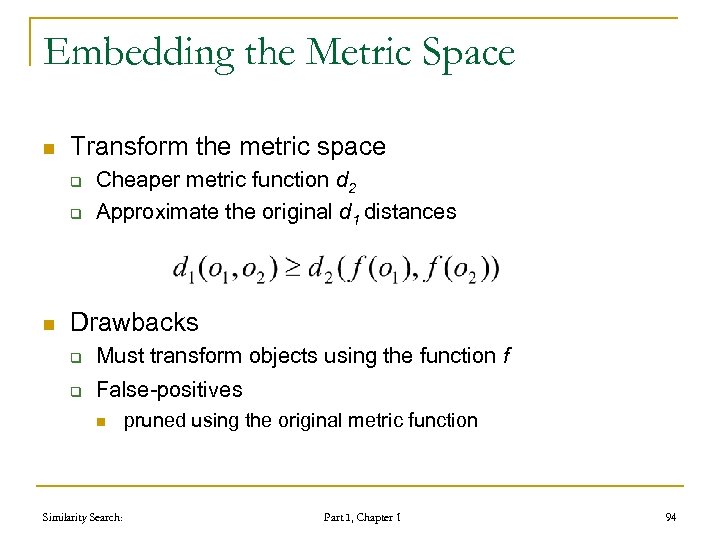

Embedding the Metric Space
- Transform the metric space
  - Cheaper metric function d2
  - Approximate the original d1 distances
- Drawbacks
  - Must transform objects using the function f
  - False-positives
    - pruned using the original metric function

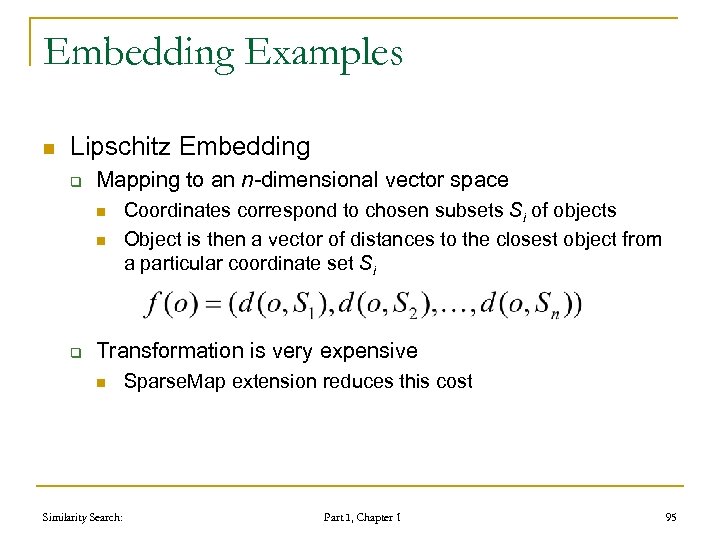

Embedding Examples
- Lipschitz Embedding
  - Mapping to an n-dimensional vector space
    - Coordinates correspond to chosen subsets S_i of objects
    - Object is then a vector of distances to the closest object from a particular coordinate set S_i
  - Transformation is very expensive
    - SparseMap extension reduces this cost
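A small sketch of the mapping follows. The function names, the numeric objects and the choice of subsets are illustrative assumptions; how the subsets S_i should be chosen is not covered here.

```python
def lipschitz_embed(o, subsets, d):
    """Map o to the vector of its distances to the closest member of each subset S_i."""
    return tuple(min(d(o, s) for s in S) for S in subsets)


def linf(u, v):
    """L-infinity (maximum) distance between two equal-length vectors."""
    return max(abs(a - b) for a, b in zip(u, v))


def d(a, b):
    # Stand-in metric on numbers for the example.
    return abs(a - b)


subsets = [[0, 10], [4]]                 # two coordinate sets S_1, S_2
u = lipschitz_embed(3, subsets, d)       # distances from 3 to S_1 and S_2
v = lipschitz_embed(8, subsets, d)
# Each coordinate changes by at most d(3, 8), so the L-infinity distance
# between the embedded vectors lower-bounds the original distance.
assert linf(u, v) <= d(3, 8)
```

Each coordinate costs |S_i| distance computations per object, which illustrates why the slide calls the transformation very expensive.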

Embedding Examples
- Karhunen-Loeve transformation
  - Linear transformation of vector spaces
  - Dimensionality reduction technique
    - Similar to Principal Component Analysis
  - Projects object o onto the first k < n basis vectors
  - Transformation is contractive
  - Used in the FastMap technique

Foundations of metric space searching
1. distance searching problem in metric spaces
2. metric distance measures
3. similarity queries
4. basic partitioning principles
5. principles of similarity query execution
6. policies to avoid distance computations
7. metric space transformations
8. principles of approximate similarity search
9. advanced issues

Principles of Approx. Similarity Search
- Approximate similarity search overcomes problems of exact similarity search when using traditional access methods.
  - Moderate improvement of performance with respect to the sequential scan.
  - Dimensionality curse
- Similarity search returns mathematically precise result sets.
  - Similarity is often subjective, so in some cases approximate result sets also satisfy the user's needs.

Principles of Approx. Similarity Search (cont.)
- Approximate similarity search processes a query faster at the price of imprecision in the returned result sets.
  - Useful, for instance, in interactive systems:
    - Similarity search is typically an iterative process
    - Users submit several search queries before being satisfied
  - Fast approximate similarity search in intermediate queries can be useful.
  - Improvements up to two orders of magnitude

Approx. Similarity Search: Basic Strategies
- Space transformation
  - Distance preserving transformations
    - Distances in the transformed space are smaller than in the original space.
    - Possible false hits
  - Example:
    - Dimensionality reduction techniques such as KLT, DFT, DCT, DWT
    - VA-files
  - We will not discuss this approximation strategy in detail.

Basic Strategies (cont.)
- Reducing subsets of data to be examined
  - Unpromising data is not accessed.
  - False dismissals can occur.
  - This strategy will be discussed in more depth in the following slides.

Reducing Volume of Examined Data
- Possible strategies:
  - Early termination strategies
    - A search algorithm might stop before all the needed data has been accessed.
  - Relaxed branching strategies
    - Data regions overlapping the query region can be discarded depending on a specific relaxed pruning strategy.

Early Termination Strategies
- Exact similarity search algorithms are
  - Iterative processes, where
  - Current result set is improved at each step.
- Exact similarity search algorithms stop
  - When no further improvement is possible.

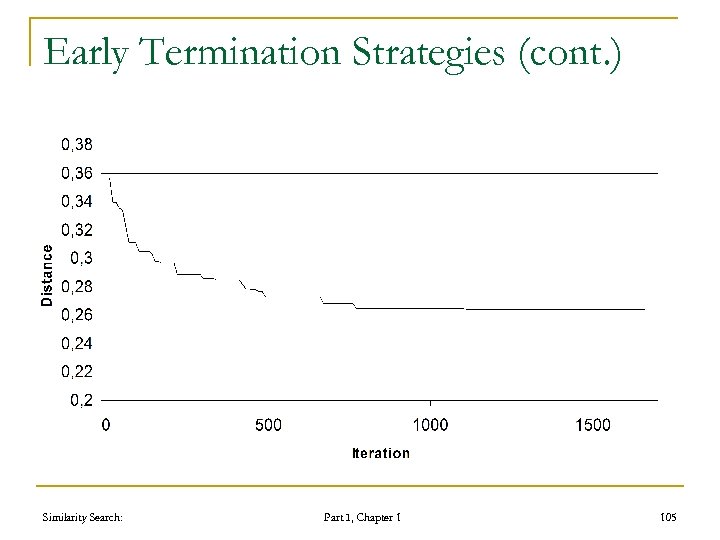

Early Termination Strategies (cont.)
- Approximate similarity search algorithms
  - Use a "relaxed" stop condition
  - that stops the algorithm when little chance of improving the current results is detected.
- The hypothesis is that
  - A good approximation is obtained after a few iterations.
  - Further steps would consume most of the total search costs and would only marginally improve the result set.
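One very simple relaxed stop condition is a fixed budget of regions to visit. The sketch below uses that rule purely as an illustration: the budget-based condition, the flat list of (center, objects) regions, and the name `approx_knn` are assumptions of this example, not a strategy defined in the slides.

```python
import heapq


def approx_knn(regions, q, k, d, max_regions):
    """Approximate k-NN: visit regions closest to q first, stop after max_regions.

    regions is a list of (center, objects) pairs.
    """
    order = sorted(regions, key=lambda reg: d(q, reg[0]))
    seen = []
    for center, objects in order[:max_regions]:   # relaxed stop: budget exhausted
        seen.extend((d(q, o), o) for o in objects)
    return [o for _, o in heapq.nsmallest(k, seen)]


def d(a, b):
    # Stand-in metric on numbers for the example.
    return abs(a - b)


regions = [(1, [0, 1, 2]), (5, [4, 5, 6]), (9, [8, 9, 10])]
approx = approx_knn(regions, 3.2, 2, d, max_regions=1)             # one region only
exact = approx_knn(regions, 3.2, 2, d, max_regions=len(regions))   # all regions
```

With a budget of one region the search misses object 2, which lies in a neighboring region; visiting all regions reproduces the exact answer at a higher cost.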

Early Termination Strategies (cont.)

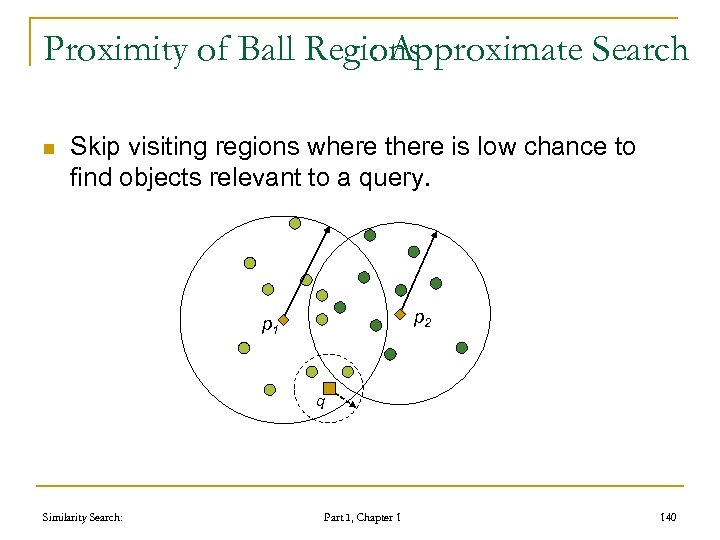
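
A relaxed stop condition can be sketched as follows. This is only an illustration under stated assumptions, not the method from the slides: the function name `knn_early_termination` and the `patience` parameter (the number of consecutive non-improving objects tolerated before stopping) are hypothetical, and the data is scanned sequentially rather than through an index.

```python
import math
from typing import Callable, List, Sequence, Tuple


def knn_early_termination(
    query: Sequence[float],
    data: Sequence[Sequence[float]],
    k: int,
    patience: int,
    dist: Callable[[Sequence[float], Sequence[float]], float] = math.dist,
) -> Tuple[List[Tuple[float, int]], int]:
    """Scan `data` in order, keeping the k closest objects seen so far.

    The scan stops once `patience` consecutive objects have failed to
    improve the current result (the assumed relaxed stop condition).
    Returns the result as (distance, index) pairs and the number of
    distance computations performed.
    """
    result: List[Tuple[float, int]] = []  # kept sorted by distance
    since_improvement = 0
    computed = 0
    for i, obj in enumerate(data):
        d = dist(query, obj)
        computed += 1
        if len(result) < k or d < result[-1][0]:
            # The current result set improves at this step.
            result.append((d, i))
            result.sort()
            del result[k:]
            since_improvement = 0
        else:
            since_improvement += 1
            if since_improvement >= patience:
                break  # little chance of further improvement: stop early
    return result, computed
```

Setting `patience` to at least the size of the data turns the scan back into an exact search, which makes the trade-off between saved distance computations and missed objects easy to observe.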

Relaxed Branching Strategies
- Exact similarity search algorithms
  - access all data regions overlapping the query region and discard all the others.
- Approximate similarity search algorithms
  - use a "relaxed" pruning condition that
  - rejects regions overlapping the query region when it detects a low likelihood that data objects are contained in the intersection.
- In particular, useful and effective with access methods based on hierarchical decomposition of the space.

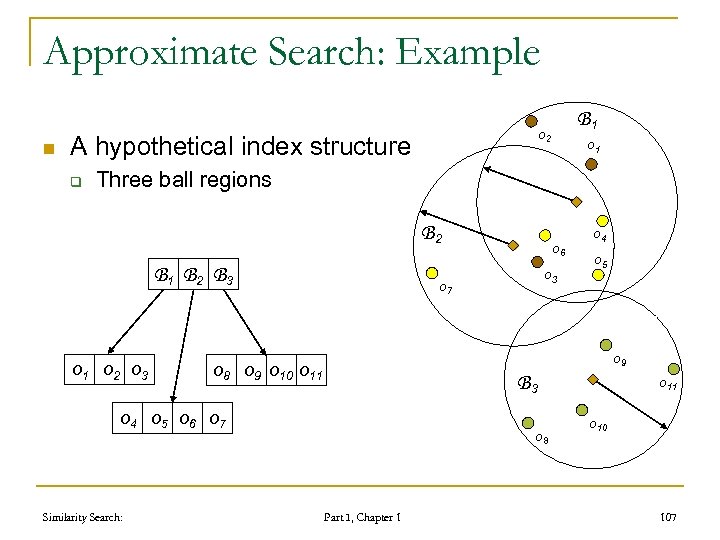
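
The difference between exact and relaxed pruning can be sketched on ball regions. The slides do not specify how the likelihood of finding objects in the intersection is estimated, so this example assumes a crude proxy: a region is rejected when the query ball penetrates it by less than a threshold. The names `BallRegion`, `range_search` and `min_overlap` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class BallRegion:
    pivot: Sequence[float]
    radius: float
    objects: List[Sequence[float]]


def range_search(
    query: Sequence[float],
    radius: float,
    regions: List[BallRegion],
    min_overlap: float = 0.0,
) -> Tuple[List[Sequence[float]], int]:
    """Range query over ball regions.

    With min_overlap = 0 this is the exact rule: every region that
    intersects the query ball is visited. A positive min_overlap is the
    assumed relaxed rule: regions whose intersection with the query ball
    is shallower than min_overlap are rejected as well.
    Returns the qualifying objects and the number of regions visited.
    """
    result: List[Sequence[float]] = []
    visited = 0
    for region in regions:
        # Depth by which the query ball reaches into the region
        # (negative when the two balls are disjoint).
        overlap = radius + region.radius - math.dist(query, region.pivot)
        if overlap < max(0.0, min_overlap):
            continue
        visited += 1
        result.extend(o for o in region.objects if math.dist(query, o) <= radius)
    return result, visited
```

A threshold on overlap depth ignores how objects are actually distributed inside a region, so it should be read as a placeholder for whatever likelihood estimate a real access method uses.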

Approximate Search: Example
- A hypothetical index structure
  - Three ball regions B1, B2, B3 holding objects o1 to o11 (B1: o1, o2, o3; B2: o4, o5, o6, o7; B3: o8, o9, o10, o11)

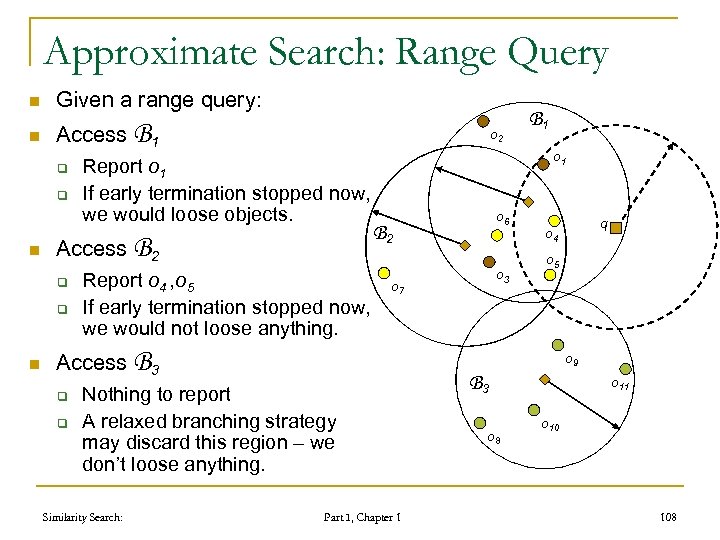

Approximate Search: Range Query
- Given a range query:
- Access B1
  - Report o1
  - If early termination stopped now, we would lose objects.
- Access B2
  - Report o4, o5
  - If early termination stopped now, we would not lose anything.
- Access B3
  - Nothing to report
  - A relaxed branching strategy may discard this region; we don't lose anything.

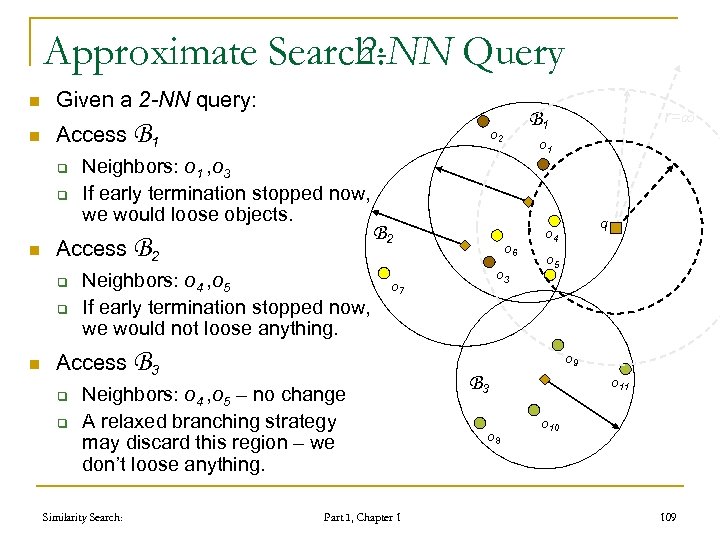

Approximate Search: 2-NN Query
- Given a 2-NN query:
- Access B1
  - Neighbors: o1, o3
  - If early termination stopped now, we would lose objects.
- Access B2
  - Neighbors: o4, o5
  - If early termination stopped now, we would not lose anything.
- Access B3
  - Neighbors: o4, o5 (no change)
  - A relaxed branching strategy may discard this region; we don't lose anything.

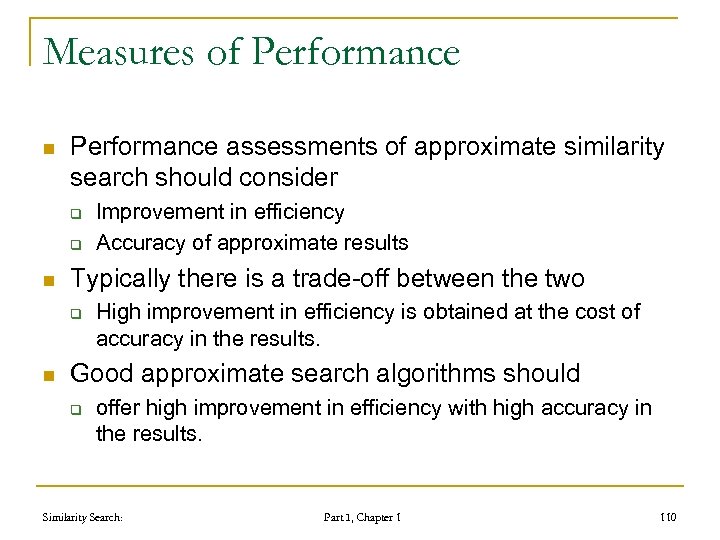

Measures of Performance
- Performance assessments of approximate similarity search should consider
  - improvement in efficiency
  - accuracy of approximate results
- Typically there is a trade-off between the two
  - High improvement in efficiency is obtained at the cost of accuracy in the results.
- Good approximate search algorithms should
  - offer high improvement in efficiency with high accuracy in the results.

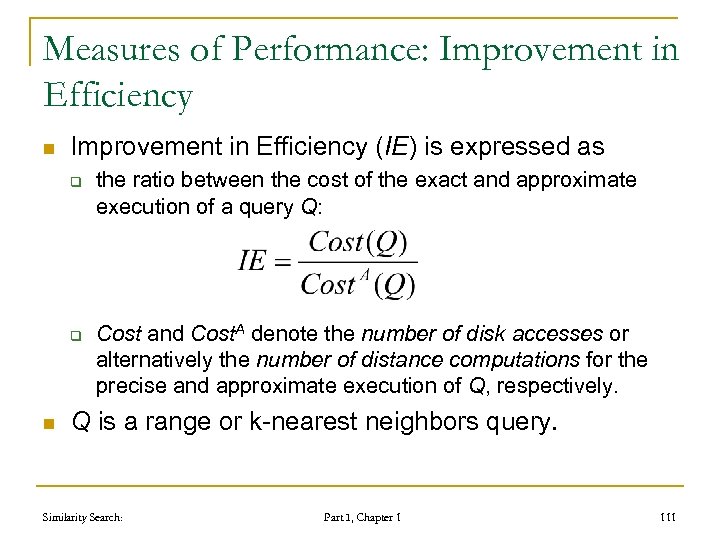

Measures of Performance: Improvement in Efficiency
- Improvement in Efficiency (IE) is expressed as
  - the ratio between the cost of the exact and approximate execution of a query Q:
    $$IE = \frac{Cost(Q)}{Cost^A(Q)}$$
  - $Cost$ and $Cost^A$ denote the number of disk accesses or, alternatively, the number of distance computations for the precise and approximate execution of Q, respectively.
- Q is a range or k-nearest neighbors query.

Improvement in Efficiency (cont.)
- IE = 10 means that approximate execution is 10 times faster.
- Example:
  - exact execution: 6 minutes
  - approximate execution: 36 seconds
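
The arithmetic of this example can be checked with a few lines of Python, taking IE to be the exact cost divided by the approximate cost. The function name `improvement_in_efficiency` is hypothetical, and elapsed seconds stand in for the cost here; disk accesses or distance computations would be used the same way.

```python
def improvement_in_efficiency(exact_cost: float, approx_cost: float) -> float:
    """IE: cost of the exact execution divided by the cost of the approximate one."""
    if approx_cost <= 0:
        raise ValueError("approximate cost must be positive")
    return exact_cost / approx_cost


# Exact execution takes 6 minutes, approximate execution takes 36 seconds.
ie = improvement_in_efficiency(6 * 60, 36)
print(ie)
```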

Measures of Performance: Precision and Recall
- Widely used in Information Retrieval as a performance assessment.
- Precision: ratio between the retrieved qualifying objects and the total objects retrieved.
- Recall: ratio between the retrieved qualifying objects and the total qualifying objects.

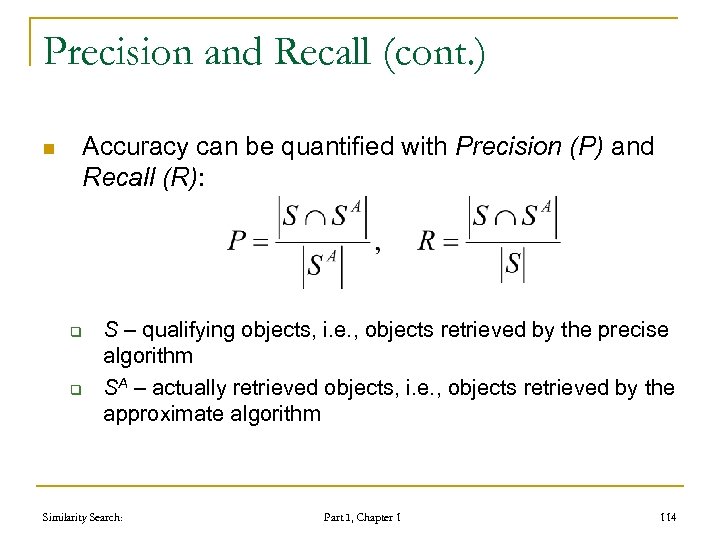

Precision and Recall (cont.)
- Accuracy can be quantified with Precision (P) and Recall (R):
  $$P = \frac{|S \cap S^A|}{|S^A|}, \qquad R = \frac{|S \cap S^A|}{|S|}$$
  - $S$: qualifying objects, i.e., objects retrieved by the precise algorithm
  - $S^A$: actually retrieved objects, i.e., objects retrieved by the approximate algorithm

Precision and Recall (cont.)
- They are very intuitive, but in our context
  - their interpretation is not obvious and can be misleading.
- For approximate range search we typically have $S^A \subseteq S$
  - Therefore, precision is always 1 in this case.
- Results of k-NN(q) always have size k
  - Therefore, precision is always equal to recall in this case.
- Every element has the same importance
  - Losing the first object rather than the 1000th one is the same.

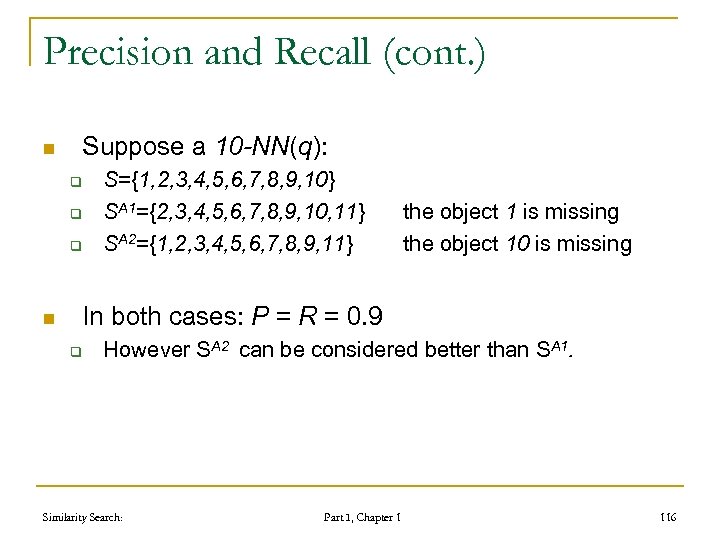

Precision and Recall (cont.)
- Suppose a 10-NN(q):
  - $S$ = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}
  - $S^A_1$ = {2, 3, 4, 5, 6, 7, 8, 9, 10, 11}: the object 1 is missing
  - $S^A_2$ = {1, 2, 3, 4, 5, 6, 7, 8, 9, 11}: the object 10 is missing
- In both cases: P = R = 0.9
  - However, $S^A_2$ can be considered better than $S^A_1$.

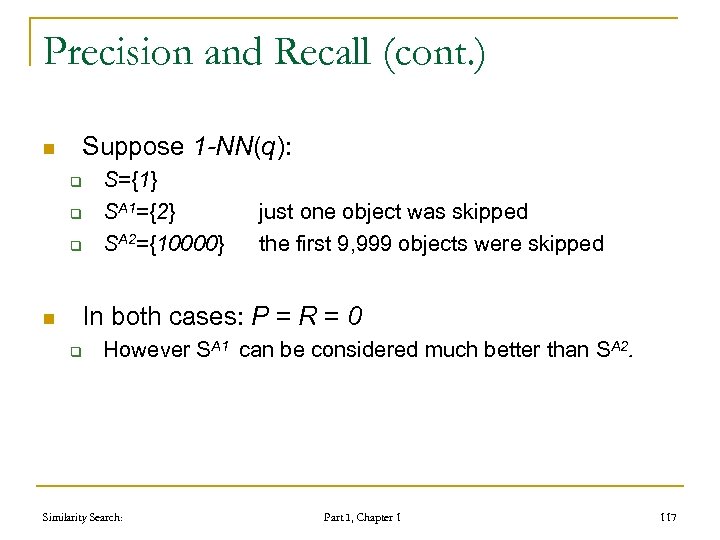

Precision and Recall (cont.)
- Suppose 1-NN(q):
  - $S$ = {1}
  - $S^A_1$ = {2}: just one object was skipped
  - $S^A_2$ = {10000}: the first 9,999 objects were skipped
- In both cases: P = R = 0
  - However, $S^A_1$ can be considered much better than $S^A_2$.

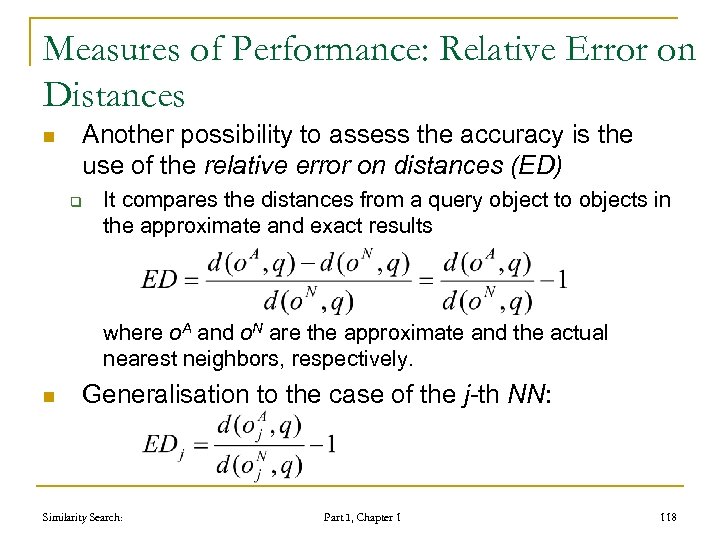
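
The two k-NN examples can be reproduced with a short sketch that takes precision as the share of retrieved objects that qualify and recall as the share of qualifying objects that were retrieved. The helper name `precision_recall` is hypothetical, objects are identified by their rank in the exact ordering, and returning 1.0 for an empty denominator is a convention assumed here, not something the slides state.

```python
from typing import Iterable, Tuple


def precision_recall(exact: Iterable[int], approx: Iterable[int]) -> Tuple[float, float]:
    """Precision and recall of an approximate result against the exact one."""
    exact_set, approx_set = set(exact), set(approx)
    hits = len(exact_set & approx_set)
    # Assumed convention: an empty denominator yields 1.0.
    precision = hits / len(approx_set) if approx_set else 1.0
    recall = hits / len(exact_set) if exact_set else 1.0
    return precision, recall


# 10-NN example: both approximate results miss exactly one object.
s = list(range(1, 11))
sa1 = list(range(2, 12))           # object 1 is missing
sa2 = list(range(1, 10)) + [11]    # object 10 is missing
print(precision_recall(s, sa1), precision_recall(s, sa2))

# 1-NN example: neither result contains the true nearest neighbor.
print(precision_recall([1], [2]), precision_recall([1], [10000]))
```

Both pairs of results receive identical scores, which is the weakness the slides point out: the measures cannot tell which of the two approximations is closer to the exact answer.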

Measures of Performance: Relative Error on Distances
- Another possibility to assess the accuracy is the use of the relative error on distances (ED)
  - It compares the distances from a query object to objects in the approximate and exact results:
    $$ED = \frac{d(o^A, q) - d(o^N, q)}{d(o^N, q)} = \frac{d(o^A, q)}{d(o^N, q)} - 1$$
    where $o^A$ and $o^N$ are the approximate and the actual nearest neighbors, respectively.
- Generalisation to the case of the j-th NN:
  $$ED_j = \frac{d(o^A_j, q)}{d(o^N_j, q)} - 1$$

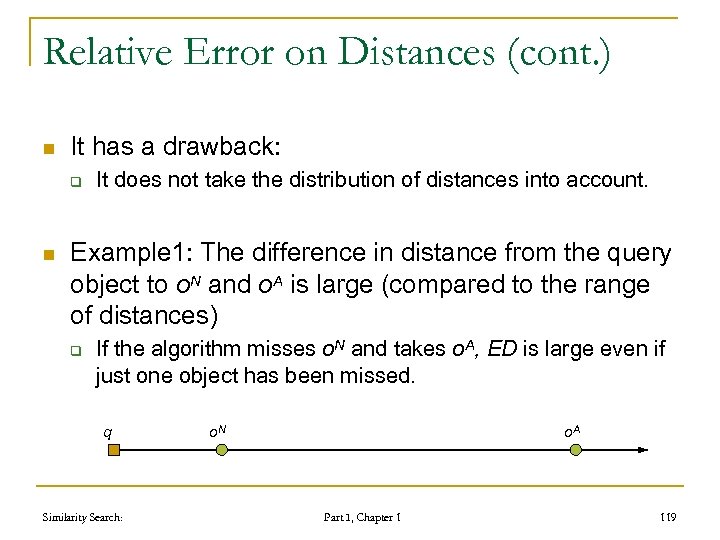

Relative Error on Distances (cont.)
- It has a drawback:
  - It does not take the distribution of distances into account.
- Example 1: The difference in distance from the query object to $o^N$ and $o^A$ is large (compared to the range of distances)
  - If the algorithm misses $o^N$ and takes $o^A$, ED is large even if just one object has been missed.

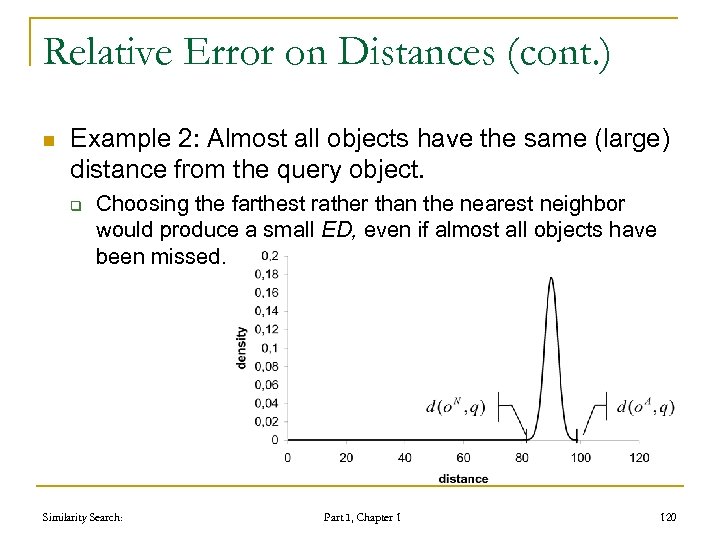

Relative Error on Distances (cont.)
- Example 2: Almost all objects have the same (large) distance from the query object.
  - Choosing the farthest rather than the nearest neighbor would produce a small ED, even if almost all objects have been missed.

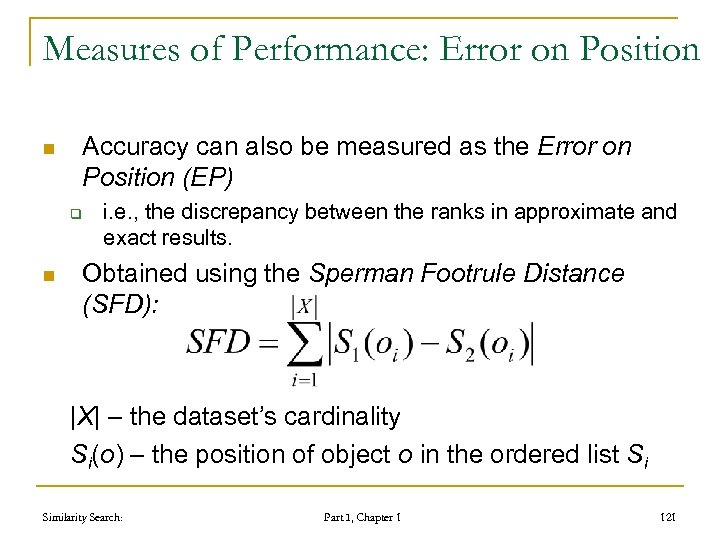
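
The two drawbacks can be made concrete with a small sketch, assuming ED is the approximate neighbor's distance divided by the true neighbor's distance, minus one. The function name `relative_error_on_distance` and the distance values are made up for illustration only.

```python
def relative_error_on_distance(d_approx: float, d_exact: float) -> float:
    """ED: relative increase of the approximate neighbor's distance
    over the true nearest neighbor's distance."""
    if d_exact <= 0:
        raise ValueError("the exact nearest-neighbor distance must be positive")
    return d_approx / d_exact - 1.0


# Example 1 (hypothetical distances): only the nearest object is missed,
# but the second nearest one happens to lie far away.
ed_one_missed = relative_error_on_distance(d_approx=9.0, d_exact=1.0)

# Example 2 (hypothetical distances): nearly all objects sit at about the
# same distance, so even the farthest object yields a small error.
ed_all_missed = relative_error_on_distance(d_approx=101.0, d_exact=100.0)

print(ed_one_missed, ed_all_missed)
```

With these numbers the result that misses a single object is penalised far more heavily than the one that misses almost the whole dataset, which is why the measure is hard to interpret without knowing the distance distribution.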

Measures of Performance: Error on Position
- Accuracy can also be measured as the Error on Position (EP)
  - i.e., the discrepancy between the ranks in approximate and exact results.
- Obtained using the Spearman Footrule Distance (SFD):
  $$SFD(S_1, S_2) = \sum_{o \in X} |S_1(o) - S_2(o)|$$
  - $|X|$: the dataset's cardinality
  - $S_i(o)$: the position of object o in the ordered list $S_i$

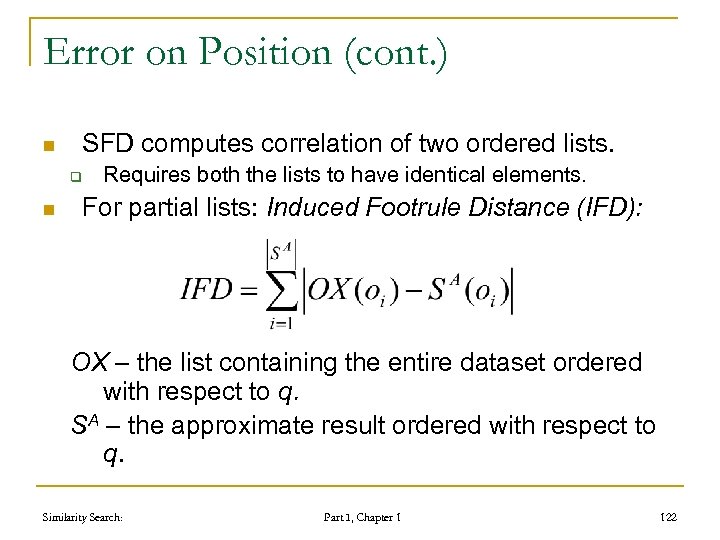
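
The Spearman Footrule Distance sums, over all objects, the absolute difference between an object's positions in two orderings of the same set. A minimal sketch, with the hypothetical function name `spearman_footrule` and objects assumed to be hashable:

```python
from typing import Hashable, Sequence


def spearman_footrule(list1: Sequence[Hashable], list2: Sequence[Hashable]) -> int:
    """Sum of absolute position differences between two orderings
    of the same set of objects."""
    if len(list1) != len(list2) or set(list1) != set(list2):
        raise ValueError("both lists must contain the same elements")
    position2 = {obj: pos for pos, obj in enumerate(list2)}
    return sum(abs(pos - position2[obj]) for pos, obj in enumerate(list1))
```

Identical orderings give a distance of 0, and each adjacent swap adds 2, since both swapped objects move by one position.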

Error on Position (cont.)
- SFD computes correlation of two ordered lists.
  - Requires both the lists to have identical elements.
- For partial lists: Induced Footrule Distance (IFD):
  $$IFD = \sum_{o \in S^A} |OX(o) - S^A(o)|$$
  - $OX$: the list containing the entire dataset ordered with respect to q.
  - $S^A$: the approximate result ordered with respect to q.

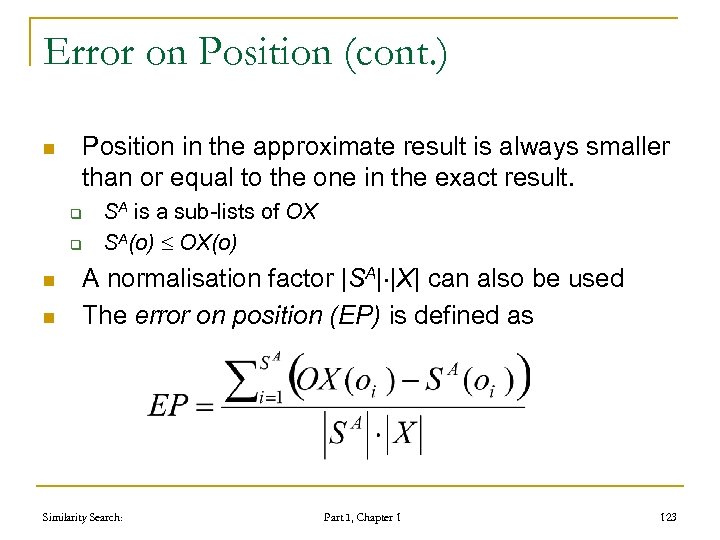

Error on Position (cont.)
- Position in the approximate result is always smaller than or equal to the one in the exact result.
  - $S^A$ is a sub-list of $OX$
  - $S^A(o) \le OX(o)$
- A normalisation factor $|S^A| \cdot |X|$ can also be used
- The error on position (EP) is defined as
  $$EP = \frac{\sum_{o \in S^A} \left(OX(o) - S^A(o)\right)}{|S^A| \cdot |X|}$$

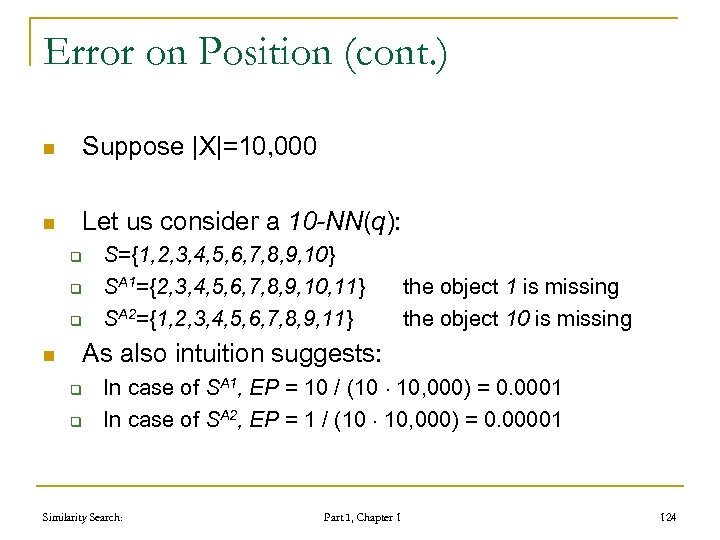

Error on Position (cont.)
- Suppose $|X|$ = 10,000
- Let us consider a 10-NN(q):
  - $S$ = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}
  - $S^A_1$ = {2, 3, 4, 5, 6, 7, 8, 9, 10, 11}: the object 1 is missing
  - $S^A_2$ = {1, 2, 3, 4, 5, 6, 7, 8, 9, 11}: the object 10 is missing
- As intuition also suggests:
  - In case of $S^A_1$, EP = 10 / (10 · 10,000) = 0.0001
  - In case of $S^A_2$, EP = 1 / (10 · 10,000) = 0.00001

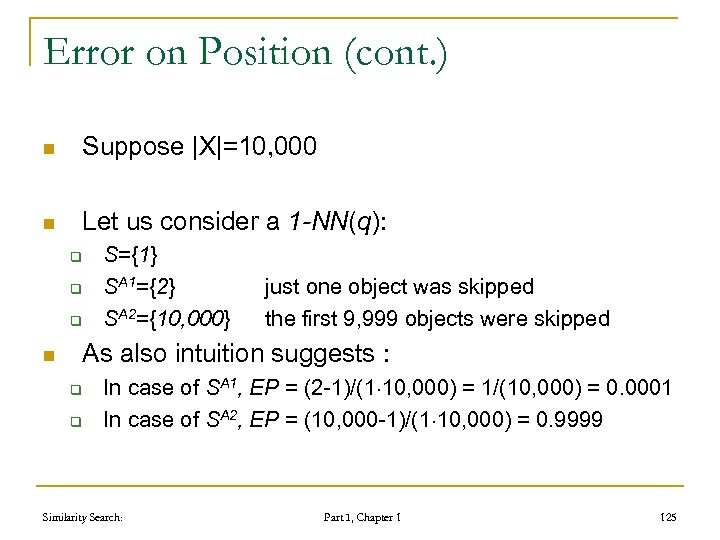

Error on Position (cont.)
- Suppose $|X|$ = 10,000
- Let us consider a 1-NN(q):
  - $S$ = {1}
  - $S^A_1$ = {2}: just one object was skipped
  - $S^A_2$ = {10,000}: the first 9,999 objects were skipped
- As intuition also suggests:
  - In case of $S^A_1$, EP = (2 - 1) / (1 · 10,000) = 1 / 10,000 = 0.0001
  - In case of $S^A_2$, EP = (10,000 - 1) / (1 · 10,000) = 0.9999
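
The EP values of the 10-NN and 1-NN examples can be recomputed with a short sketch. It assumes EP is the sum, over the objects of the approximate result, of the difference between an object's position in the fully ordered dataset and its position in the approximate result, divided by the product of the result size and the dataset size. The function name `error_on_position` is hypothetical, and objects are identified by their rank in the exact ordering, as in the slides.

```python
from typing import Hashable, Sequence


def error_on_position(
    approx_result: Sequence[Hashable],
    ordered_dataset: Sequence[Hashable],
) -> float:
    """EP of an approximate result.

    Both lists must be ordered by increasing distance from the query,
    and every object of approx_result must occur in ordered_dataset.
    """
    rank = {obj: pos for pos, obj in enumerate(ordered_dataset, start=1)}
    induced_footrule = sum(
        rank[obj] - pos for pos, obj in enumerate(approx_result, start=1)
    )
    return induced_footrule / (len(approx_result) * len(ordered_dataset))


ox = list(range(1, 10001))  # the whole dataset, |X| = 10,000

# 10-NN examples
ep_missing_first = error_on_position(list(range(2, 12)), ox)
ep_missing_tenth = error_on_position(list(range(1, 10)) + [11], ox)

# 1-NN examples
ep_skipped_one = error_on_position([2], ox)
ep_skipped_most = error_on_position([10000], ox)

print(ep_missing_first, ep_missing_tenth, ep_skipped_one, ep_skipped_most)
```

Unlike precision and recall, the measure separates the cases: missing the closest object costs more than missing the tenth, and returning the farthest object is scored as nearly the worst possible outcome.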

Foundations of metric space searching
1. distance searching problem in metric spaces
2. metric distance measures
3. similarity queries
4. basic partitioning principles
5. principles of similarity query execution
6. policies to avoid distance computations
7. metric space transformations
8. principles of approximate similarity search
9. advanced issues

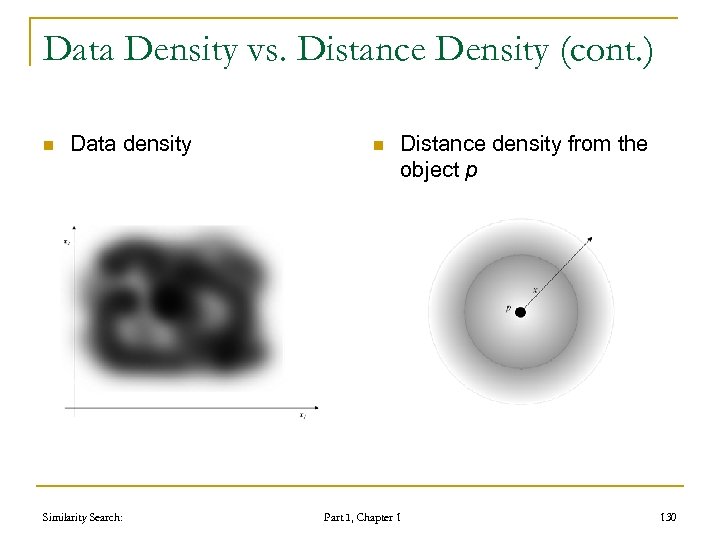

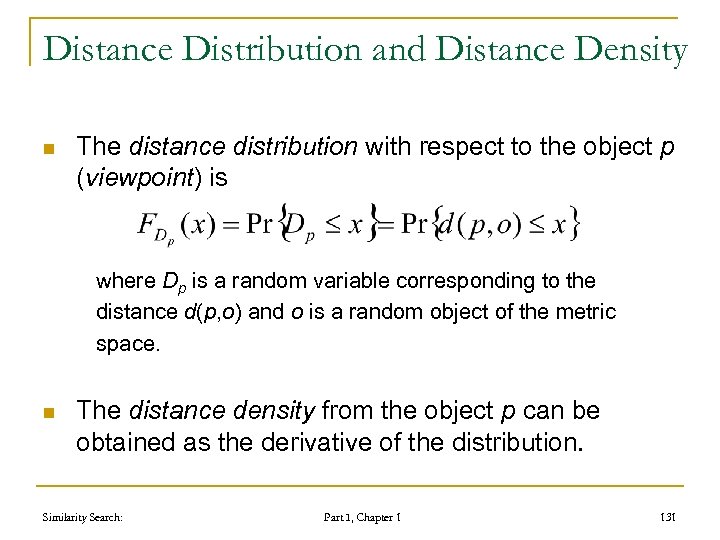

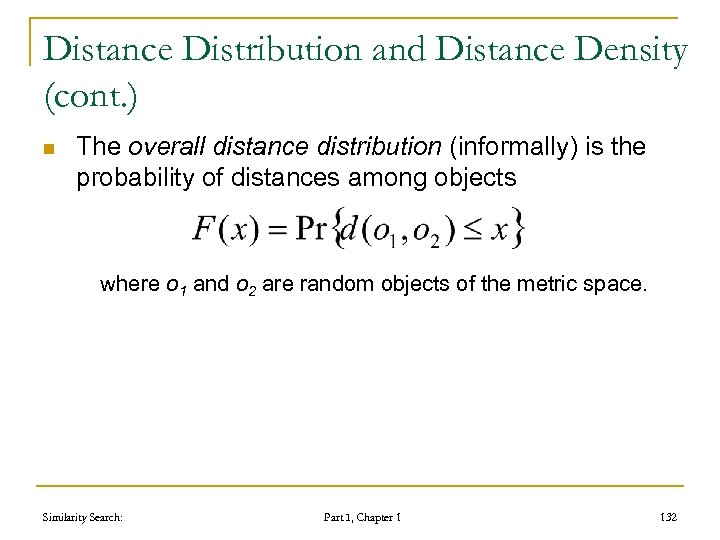

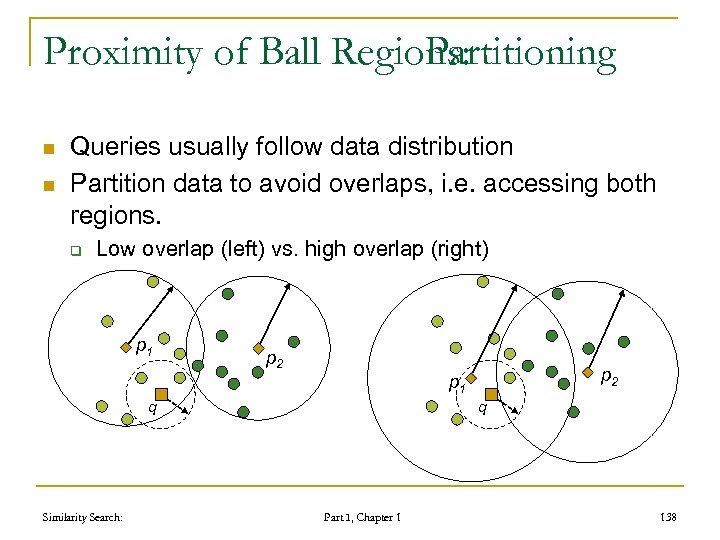

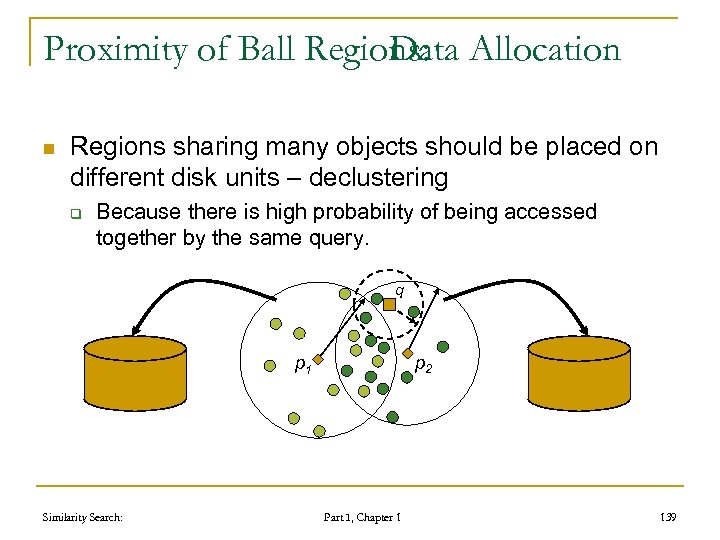

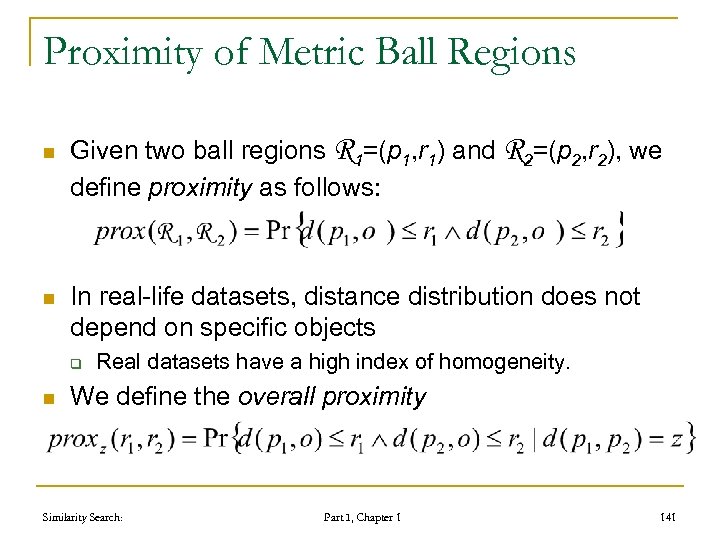

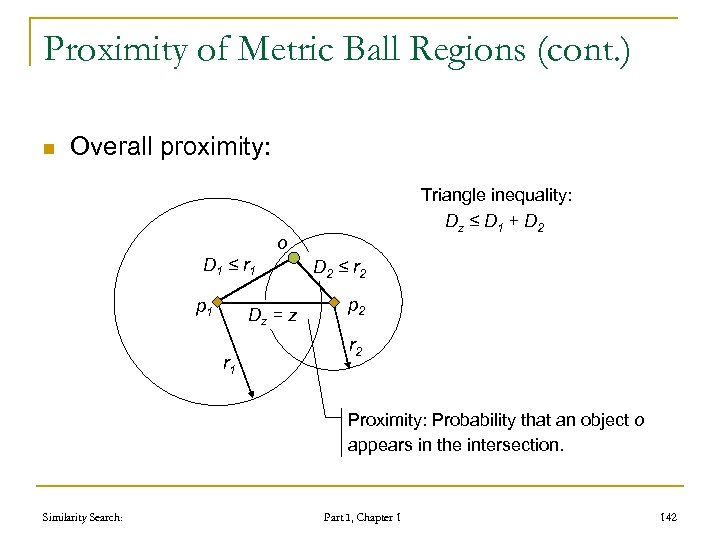

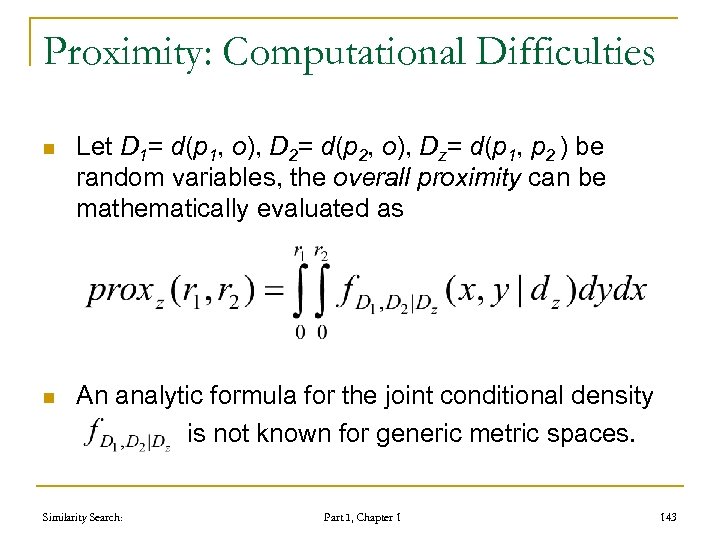

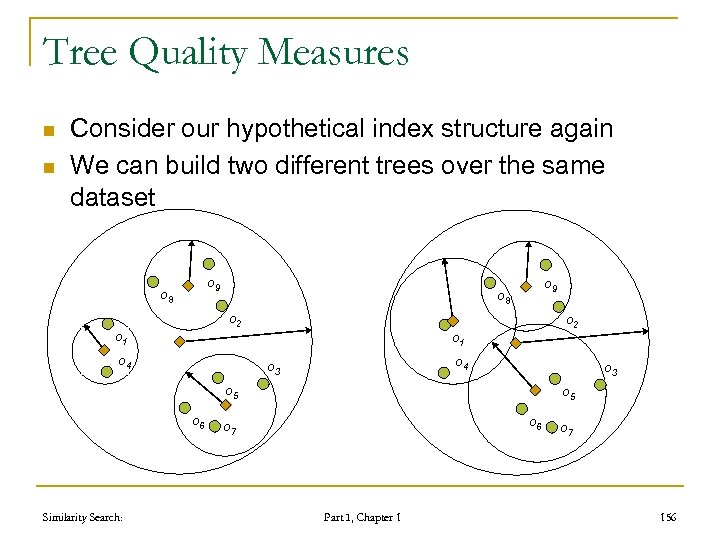

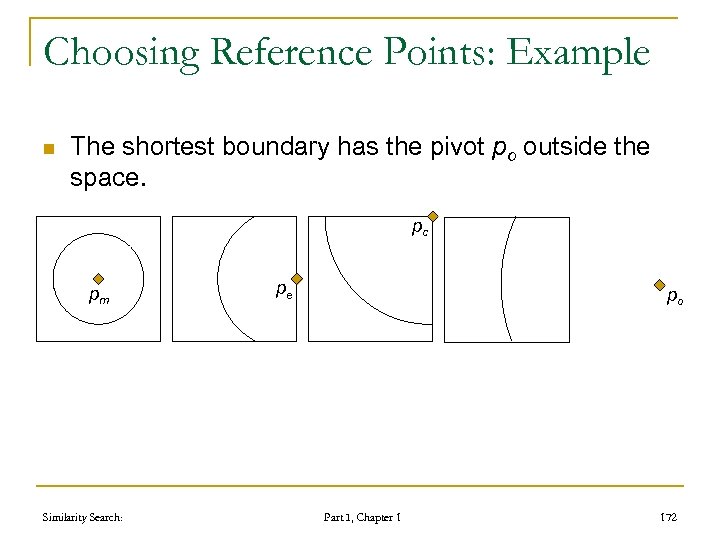

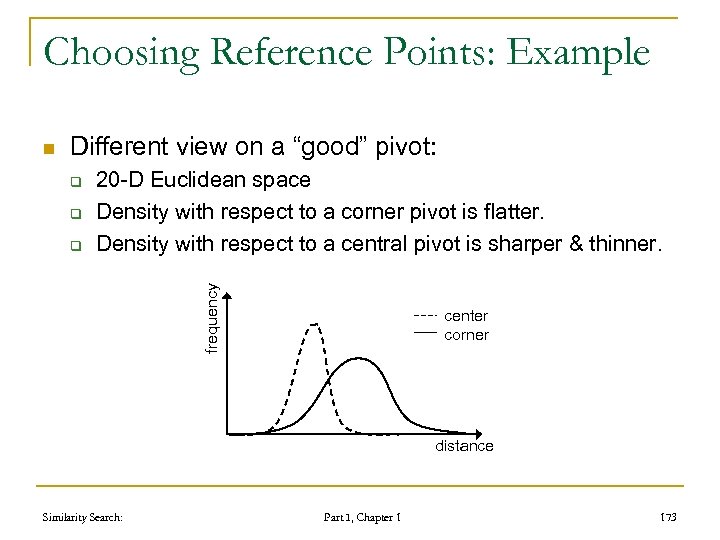

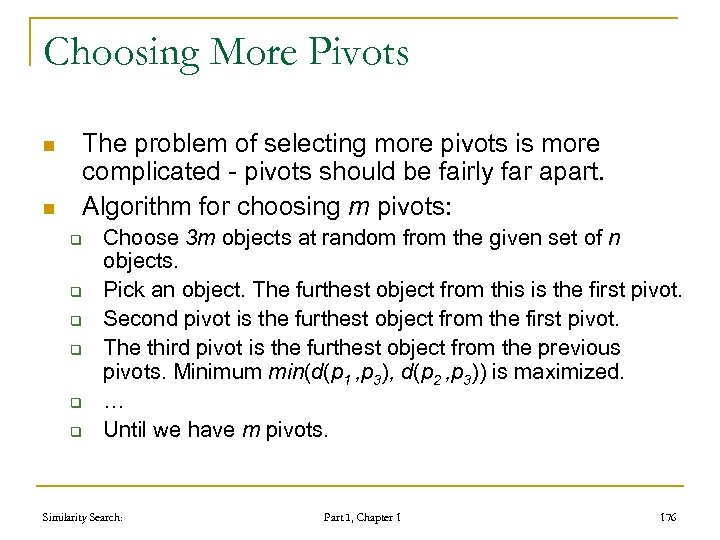

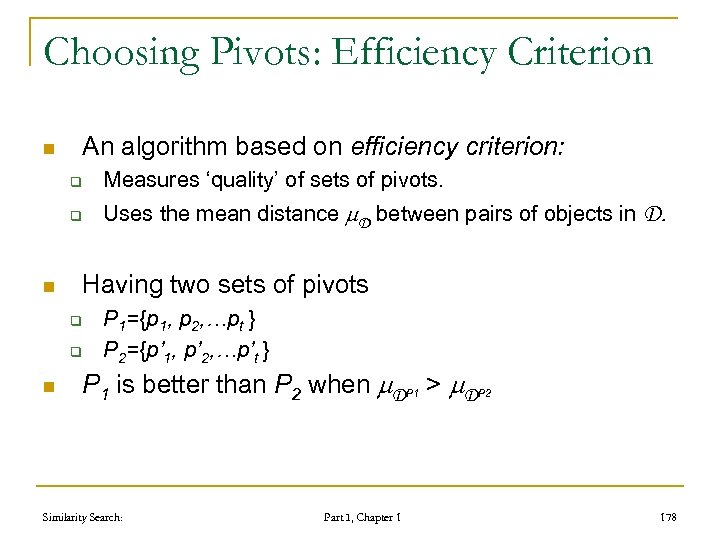

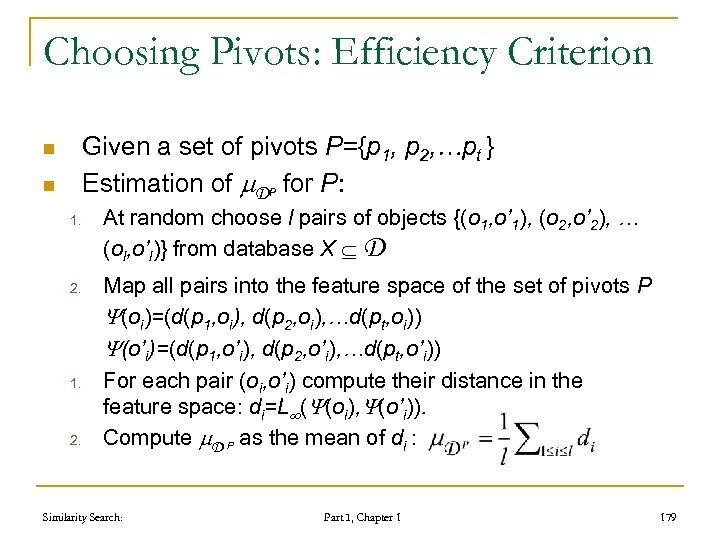
