015eb837265d59d22c135219f3d17eb4.ppt

- Number of slides: 48

Scalable, Fault-tolerant Management of Grid Services: Application to Messaging Middleware
Harshawardhan Gadgil (hgadgil@cs.indiana.edu)
Ph.D. Defense Exam (Practice Talk)
Advisor: Prof. Geoffrey Fox

Talk Outline
- Motivation
- Architecture
  - Service-oriented Management
- Performance Evaluation
- Application: Managing Grid Messaging Middleware
- Related Work
- Conclusion

Motivation: Characteristics of Today's (Grid) Applications
- Increasing application complexity
  - Applications are distributed and composed of multiple resources
  - In future, much larger systems will be built
- Components are widely dispersed and disparate in nature and access
  - Span different administrative domains
  - Under differing network / security policies
  - Limited access to resources due to the presence of firewalls, NATs, etc.
- Components are in flux
  - Components (nodes, network, processes) may fail
- Services must meet
  - General QoS and life-cycle features
  - (User-defined) application-specific criteria
- Need to "manage" services to provide these capabilities

Motivation: Key Challenges in Management of Resources
- Scalability
  - With growing application complexity, the number of resources that require management increases
    - E.g., the LHC Grid consists of a large number of CPUs, disks and mass-storage servers
    - Web Service-based applications such as Amazon's EC2 dynamically resize compute capacity, so the number of resources is NOT ONLY large BUT ALSO in a constant state of flux
  - The management framework MUST cope with a large number of resources in terms of
    - Additional components required

Motivation: Key Challenges in Management of Resources
- Scalability
- Performance
  - Performance is important in terms of
    - Initialization cost
    - Recovery from failure
    - Responding to run-time events
  - Performance should not suffer with increasing resources and additional system components

Motivation: Key Challenges in Management of Resources
- Scalability
- Performance
- Fault-tolerance
  - Failures are normal
  - Resources may fail, but so may components of the management framework
  - The framework MUST recover from failure
  - The recovery period must not increase drastically with an increasing number of resources

Motivation: Key Challenges in Management of Resources
- Scalability
- Performance
- Fault-tolerance
- Interoperability
  - Resources exist on different platforms
  - Written in different languages
  - Typically managed using system-specific protocols and hence not INTEROPERABLE
  - Investigate the use of a service-oriented architecture for management

Motivation: Key Challenges in Management of Resources
- Scalability
- Performance
- Fault-tolerance
- Interoperability
- Generality
  - The management framework must be a generic framework
  - It should manage any type of resource (hardware / software)

Motivation: Key Challenges in Management of Resources
- Scalability
- Performance
- Fault-tolerance
- Interoperability
- Generality
- Usability
  - Simple to deploy; built in terms of simple components (services)
  - Autonomous operation (as much as possible)

Summary: Research Issues
- Building a fault-tolerant management architecture
- Making the architecture SCALABLE
- Investigate the overhead in terms of
  - Additional components required
  - Typical response time
  - Recovery time
- Interoperable and extensible management framework
- General and usable system

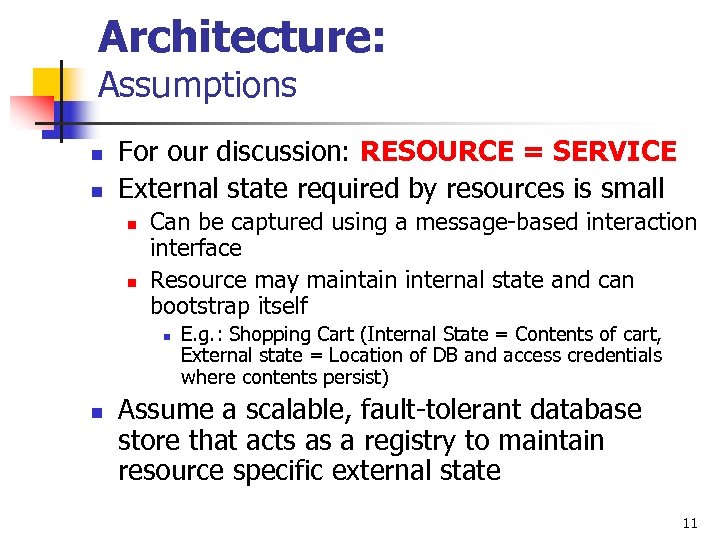

Architecture: Assumptions
- For our discussion: RESOURCE = SERVICE
- External state required by resources is small
  - Can be captured using a message-based interaction interface
  - A resource may maintain internal state and can bootstrap itself
    - E.g., a shopping cart (internal state = contents of the cart; external state = location of the DB where the contents persist, and its access credentials)
- Assume a scalable, fault-tolerant database store that acts as a registry to maintain resource-specific external state
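
The shopping-cart example separates a small external state (kept in the registry) from internal state that the resource rebuilds itself. A minimal sketch, assuming an in-memory dict as a stand-in for the fault-tolerant registry; the class names, field names and the `db://` location are hypothetical:

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class ExternalState:
    """Small state kept in the registry (hypothetical fields)."""
    db_location: str   # where the cart contents persist
    credentials: str   # access credentials for that DB


@dataclass
class ShoppingCart:
    """Resource that bootstraps itself from its external state."""
    external: ExternalState
    items: List[str] = field(default_factory=list)  # internal state

    def add(self, item: str) -> None:
        self.items.append(item)


# Hypothetical stand-in for the scalable, fault-tolerant registry.
registry: Dict[str, ExternalState] = {
    "cart-42": ExternalState("db://carts.example/cart-42", "token-abc"),
}


def respawn(resource_id: str) -> ShoppingCart:
    """Bring up a replacement resource using only its registry entry.

    The new instance starts with empty internal state; a real cart would
    reload its contents from db_location using the credentials.
    """
    return ShoppingCart(external=registry[resource_id])
```

Because only the external state must survive in the registry, a replacement instance can be started from a single small record.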

Definition: The Process of Management
- E.g., consider a printer as a resource that will be managed
  - It generates events (e.g., low ink level, out of paper)
  - The Resource Manager handles these events appropriately, as defined by resource-specific policies
- Job-queue management is NOT the responsibility of our management architecture
  - We imagine the existence of a separate job-management process, which itself can be managed by our framework (e.g., make sure it is always up and running)
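
The printer example can be sketched as a lookup from event name to a resource-specific policy action. This is a hypothetical illustration: the event names, the `handle_event` function and the fallback of deferring to a user decision are assumptions, not the framework's API. Job-queue handling is deliberately absent, matching the slide.

```python
from typing import Callable, Dict

# Hypothetical resource-specific policy: event name -> action.
Policy = Dict[str, Callable[[str], str]]

PRINTER_POLICY: Policy = {
    "LOW_INK": lambda res: f"notify admin: order ink for {res}",
    "OUT_OF_PAPER": lambda res: f"notify operator: refill paper in {res}",
}


def handle_event(resource_id: str, event: str, policy: Policy) -> str:
    """Resource Manager: handle a managee's event as its policy defines."""
    action = policy.get(event)
    if action is None:
        # No rule for this event in the policy: defer to the user.
        return f"await user decision on {event} from {resource_id}"
    return action(resource_id)
```

Keeping the policy as data means a different resource type only needs a different mapping, not a different manager.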

Management Architecture built in terms of
- Hierarchical Bootstrap System: robust itself by replication
  - Resources in different domains can be managed with separate policies for each domain
  - Periodically spawns a System Health Check that ensures components are up and running
- Registry for metadata (distributed database): robust by standard database techniques, and by our system itself for service interfaces
  - Stores resource-specific information (user-defined configuration / policies, external state required to properly manage a resource)
- Messaging Nodes form a scalable messaging substrate
  - Message delivery between managers and managees
  - Provides secure delivery of messages
- Managers: active, stateless agents that manage resources
  - A resource-specific management thread performs the actual management
  - Multi-threaded to improve scalability
- Managees: what you are managing (resource / service to manage); our system makes them robust
  - There is NO assumption that the managed system uses messaging nodes
  - Wrapped by a Service Adapter, which provides a Web Service interface

Architecture: Scalability: Hierarchical Distribution
(Figure: bootstrap hierarchy ROOT → US, EUROPE, … → leaf domains CGL, FSU, CARDIFF, …; e.g., /ROOT/EUROPE/CARDIFF)
- ROOT: spawns child bootstrap nodes if not present and ensures they are up and running
- Passive bootstrap nodes (e.g., US, EUROPE)
  - Only ensure that all child bootstrap nodes are always up and running
- Active bootstrap nodes (e.g., CGL, FSU, CARDIFF)
  - Responsible for maintaining a working set of management components in the domain
  - Always the leaf nodes in the hierarchy
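
A minimal sketch of the hierarchical health check, assuming each bootstrap node keeps an in-memory list of children and that "restarting" a node can be modeled by flipping an `alive` flag (the real system would re-spawn a process). Passive nodes only watch their children; leaves are the active nodes. The class and method names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BootstrapNode:
    path: str                                   # e.g. "/ROOT/EUROPE/CARDIFF"
    children: List["BootstrapNode"] = field(default_factory=list)
    alive: bool = True

    @property
    def is_active(self) -> bool:
        """Active bootstrap nodes are always the leaves of the hierarchy."""
        return not self.children

    def health_check(self, restarted: List[str]) -> None:
        """Ensure every child bootstrap node is up, then recurse downwards.

        An active (leaf) node would instead check its domain's registry,
        messaging node and managers; that part is not modeled here.
        """
        for child in self.children:
            if not child.alive:
                child.alive = True              # stand-in for re-spawning
                restarted.append(child.path)
            child.health_check(restarted)
```

Each level only supervises the level directly below it, so no single node has to track every component in the system.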

Architecture: Conceptual Idea (Internals)
- The bootstrap node always ensures that the other components are up and running, and periodically spawns manager processes
- Manager processes periodically check the available resources to manage; they also read/write resource-specific external state from/to the registry
- Components connect to a messaging node for sending and receiving messages
- The user writes the system configuration to the registry

Architecture: User Component
- Characteristics are determined by the user
- Events generated by the managees are handled by the manager
- Event processing is determined via WS-Policy constructs
  - E.g., wait for the user's decision on handling specific conditions
  - The event handler has been specified, so execute the default policy, etc.
- Note: managers will set up services if the registry indicates that is appropriate, so writing information to the registry can be used to start up a set of services
- Generic and application-specific policies are written to the registry, where they will be picked up by a manager process

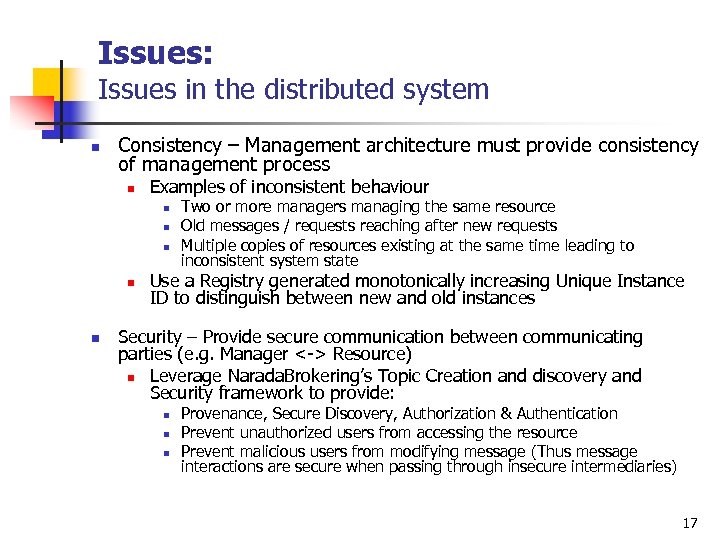

Issues in the Distributed System
- Consistency: the management architecture must provide consistency of the management process
  - Examples of inconsistent behaviour
    - Two or more managers managing the same resource
    - Old messages / requests arriving after new requests
    - Multiple copies of resources existing at the same time, leading to inconsistent system state
  - Use a registry-generated, monotonically increasing unique Instance ID to distinguish between new and old instances
- Security: provide secure communication between communicating parties (e.g., Manager <-> Resource)
  - Leverage NaradaBrokering's topic creation and discovery and its security framework to provide:
    - Provenance, secure discovery, authorization & authentication
    - Prevent unauthorized users from accessing the resource
    - Prevent malicious users from modifying messages (thus message interactions are secure when passing through insecure intermediaries)

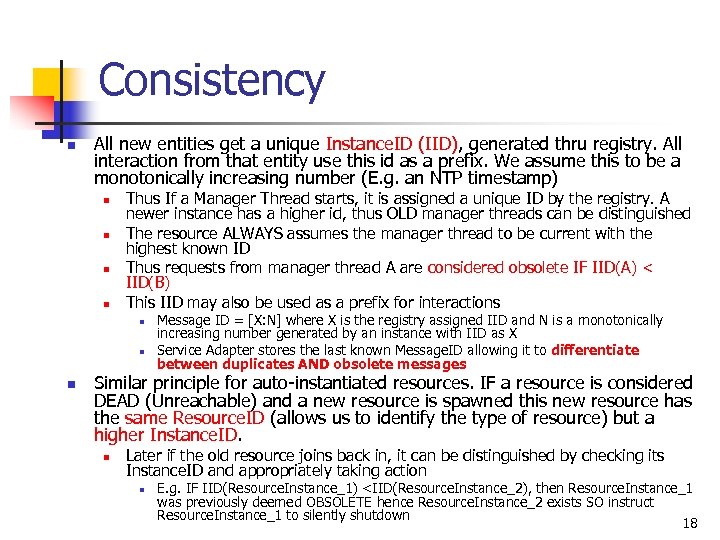

Consistency
- All new entities get a unique Instance ID (IID), generated through the registry. All interactions from that entity use this ID as a prefix. We assume this to be a monotonically increasing number (e.g., an NTP timestamp)
  - Thus, if a manager thread starts, it is assigned a unique ID by the registry. A newer instance has a higher ID, so OLD manager threads can be distinguished
  - The resource ALWAYS assumes the manager thread with the highest known ID to be current
  - Thus, requests from manager thread A are considered obsolete IF IID(A) < IID(B)
- This IID may also be used as a prefix for interactions
  - Message ID = [X:N], where X is the registry-assigned IID and N is a monotonically increasing number generated by the instance with IID X
  - The Service Adapter stores the last known Message ID, allowing it to differentiate between duplicate AND obsolete messages
- A similar principle applies to auto-instantiated resources. IF a resource is considered DEAD (unreachable) and a new resource is spawned, this new resource has the same Resource ID (which allows us to identify the type of resource) but a higher Instance ID
  - Later, if the old resource joins back in, it can be distinguished by checking its Instance ID, and appropriate action can be taken
  - E.g., IF IID(Resource.Instance_1) < IID(Resource.Instance_2), then Resource.Instance_1 was previously deemed OBSOLETE and Resource.Instance_2 exists, SO instruct Resource.Instance_1 to silently shut down
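
The Instance ID rules can be sketched as follows, assuming a simple in-process counter in place of the registry's ID generator (the slide suggests, e.g., an NTP timestamp). `Registry`, `ServiceAdapter.accept` and `reconcile` are hypothetical names; the sketch only shows the ordering logic on Message IDs of the form [X:N].

```python
import itertools
from typing import Optional, Tuple


class Registry:
    """Hypothetical registry issuing monotonically increasing Instance IDs."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)

    def new_iid(self) -> int:
        return next(self._ids)


class ServiceAdapter:
    """Tracks the last known Message ID [X:N] to filter stale messages."""

    def __init__(self) -> None:
        self.last: Optional[Tuple[int, int]] = None  # (IID X, sequence N)

    def accept(self, iid: int, seq: int) -> str:
        if self.last is not None:
            last_iid, last_seq = self.last
            if (iid, seq) == (last_iid, last_seq):
                return "duplicate"
            # Older manager instance, or an older message from the current one.
            if iid < last_iid or (iid == last_iid and seq < last_seq):
                return "obsolete"
        self.last = (iid, seq)
        return "accepted"


def reconcile(current_iid: int, rejoining_iid: int) -> str:
    """A rejoining instance with a lower IID was replaced: shut it down; else keep it."""
    return "shutdown" if rejoining_iid < current_iid else "keep"
```

Comparing (IID, sequence) pairs lexicographically is what lets a single stored value separate duplicates from obsolete messages.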

Interoperability: Service-Oriented Management
- Existing systems: SNMP, JMX, WMI
  - Specific to particular platforms and languages
  - Quite successful, but not interoperable
- Move to a Web Service-based, service-oriented architecture that uses
  - XML-based interactions that facilitate implementation in different languages, running on different platforms and over multiple transports

Interoperability: WS-Distributed Management vs. WS-Management
- Both systems provide a Web service model for building application management solutions
- WSDM: MOWS (Management Of Web Services) & MUWS (Management Using Web Services)
  - MUWS: a unifying layer on top of existing management specifications such as SNMP and OMI (Object Management Interface)
  - MOWS: provides support for management functions such as deployment, auditing, metering, SLA management, life-cycle management, etc.
- WS-Management identifies a core set of specifications and usage requirements
  - E.g., CREATE, DELETE, GET / PUT, ENUMERATE + any number of resource-specific management methods (if applicable)
- We selected WS-Management primarily due to its simplicity, and also to leverage the WS-Eventing implementation recently added for Web Service support in NaradaBrokering


Implemented:
- WS-Specifications
  - WS-Addressing [Aug 2004] and SOAP v1.2 used (needed for WS-Management)
  - Used XmlBeans 2.0.0 for manipulating XML in a custom container
  - WS-Management (June 2005) parts (WS-Transfer [Sep 2004], WS-Enumeration [Sep 2004] and WS-Eventing) (could use WS-DM)
  - WS-Eventing (leveraged from the WS-Eventing capability implemented in OMII)
- Security framework for NB
  - Provides secure end-to-end delivery of messages
- Broker discovery mechanism
  - May be leveraged to discover messaging nodes
- Currently implemented using JDK 1.4.2 (expect better performance moving to JDK 1.5 or later)

Performance Evaluation: Measurement Model (Test Setup)
- A multithreaded manager process spawns a resource-specific management thread (a single manager can manage multiple different types of resources)
- Limit on the maximum number of resources that can be managed
  - Limited by the response time obtained
  - Limited by the maximum number of threads possible per JVM (memory constraints)
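
A minimal sketch of the test setup's manager, assuming Python threads in place of JVM threads: one resource-specific management thread per resource, with a hypothetical `max_resources` cap standing in for the response-time and threads-per-JVM limits. The names are illustrative only.

```python
import threading
from typing import Callable, Dict, List


class Manager:
    """Hypothetical multithreaded manager: one thread per managed resource."""

    def __init__(self, max_resources: int) -> None:
        self.max_resources = max_resources   # stands in for the practical limit
        self.threads: Dict[str, threading.Thread] = {}
        self.results: List[str] = []
        self._lock = threading.Lock()

    def manage(self, resource_id: str, task: Callable[[str], str]) -> bool:
        """Spawn a management thread for resource_id unless at capacity."""
        # Finished threads are not removed in this sketch.
        if len(self.threads) >= self.max_resources:
            return False                     # another manager must take it
        t = threading.Thread(target=self._run, args=(resource_id, task))
        self.threads[resource_id] = t
        t.start()
        return True

    def _run(self, resource_id: str, task: Callable[[str], str]) -> None:
        outcome = task(resource_id)
        with self._lock:                     # results list is shared
            self.results.append(outcome)

    def join(self) -> None:
        for t in self.threads.values():
            t.join()
```

The per-resource behavior lives in `task`, which is how one manager can handle different resource types at once.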

Performance Evaluation: Results
- Response time increases with an increasing number of resources
- Response time is RESOURCE-DEPENDENT, and the times shown are typical
  - MAY involve 1 or more registry accesses, which will increase the overall response time
- Response time increases rapidly once the number of resources exceeds 150–200

Performance Evaluation Results: Increasing Managers on the Same Machine

Performance Evaluation Results: Increasing Managers on Different Machines

How to Scale Locally
(Figure: domain hierarchy ROOT → US → CGL, where the CGL domain is served by a cluster of messaging nodes Node-1, Node-2, …, Node-N.)

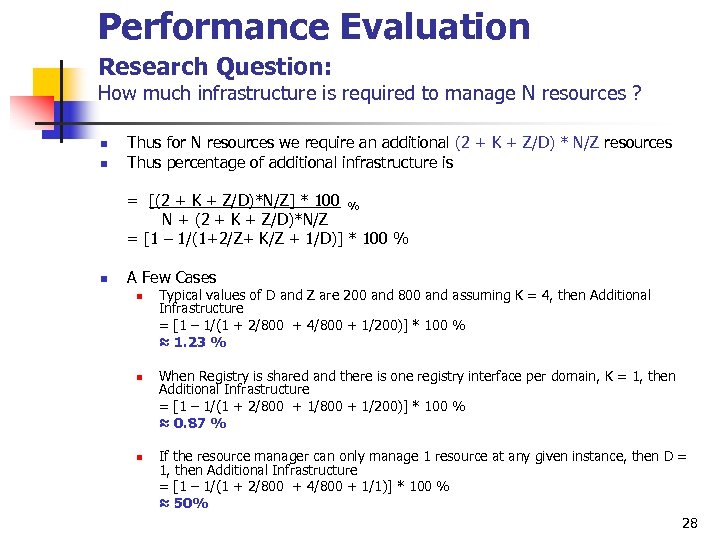

Performance Evaluation: Research Question: How much infrastructure is required to manage N resources?
- N = number of resources to manage
- Z = max. number of entities connected to a single messaging node
- D = max. number of resources managed by a single manager process
- K = min. number of registry database instances required to provide fault-tolerance
- Assume every leaf domain has 1 messaging node; hence we have N/Z leaf domains
- Further, the number of managers required per leaf domain is Z/D
- Thus, total components at the lowest level = (components per domain) × (number of domains), where each domain has K registry instances, 1 messaging node, 1 bootstrap node and Z/D managers:

$$\left(K + 1 + 1 + \frac{Z}{D}\right)\cdot\frac{N}{Z} = \left(2 + K + \frac{Z}{D}\right)\cdot\frac{N}{Z}$$

- Note: other (passive) bootstrap nodes are not counted here, since the number of passive nodes ≪ N
- E.g., if it is a shared registry, then K = 1 for each domain, which represents the service interface

Performance Evaluation: Research Question (continued)
- Thus, for N resources we require an additional (2 + K + Z/D) · N/Z resources
- Thus, the percentage of additional infrastructure is

$$\frac{\left(2 + K + \frac{Z}{D}\right)\frac{N}{Z}}{N + \left(2 + K + \frac{Z}{D}\right)\frac{N}{Z}} \times 100\% = \left[1 - \frac{1}{1 + \frac{2}{Z} + \frac{K}{Z} + \frac{1}{D}}\right] \times 100\%$$

- A few cases:
  - Typical values of D and Z are 200 and 800; assuming K = 4, additional infrastructure = $[1 - 1/(1 + 2/800 + 4/800 + 1/200)] \times 100\% \approx 1.23\%$
  - When the registry is shared and there is one registry interface per domain, K = 1, so additional infrastructure = $[1 - 1/(1 + 2/800 + 1/800 + 1/200)] \times 100\% \approx 0.87\%$
  - If a resource manager can only manage 1 resource at any given instant, then D = 1, and additional infrastructure = $[1 - 1/(1 + 2/800 + 4/800 + 1/1)] \times 100\% \approx 50\%$
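
The formulas can be checked numerically. This small sketch (the function names are hypothetical) evaluates both the absolute count of extra components and the percentage for the three cases on the slide:

```python
def extra_components(n: float, z: float, d: float, k: float) -> float:
    """(2 + K + Z/D) * N/Z extra components for N resources.

    Per leaf domain: K registry instances, 1 messaging node, 1 bootstrap
    node and Z/D managers; there are N/Z leaf domains.
    """
    return (2 + k + z / d) * n / z


def overhead_percent(z: float, d: float, k: float) -> float:
    """[1 - 1/(1 + 2/Z + K/Z + 1/D)] * 100; note it does not depend on N."""
    return (1 - 1 / (1 + 2 / z + k / z + 1 / d)) * 100


CASES = {
    "typical (K=4)": (800, 200, 4),
    "shared registry (K=1)": (800, 200, 1),
    "one resource per manager (D=1)": (800, 1, 4),
}

for name, (z, d, k) in CASES.items():
    print(f"{name}: {overhead_percent(z, d, k):.2f}%")
# typical (K=4): 1.23%
# shared registry (K=1): 0.87%
# one resource per manager (D=1): 50.19%
```

Because N cancels, the overhead is set entirely by how many entities a messaging node and a manager can each handle.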

Performance Evaluation: XML Processing Overhead
- XML processing overhead is measured as the total marshalling and un-marshalling time required
- For broker management interactions, the typical processing time (including validation against the schema) ≈ 5 ms
  - Broker management operations are invoked only during initialization and recovery from failure
  - Reading broker state using a GET operation involves 5 ms overhead and is invoked periodically (e.g., every 1 minute, depending on policy)
  - Further, for most operations that change broker state, the actual operation processing time ≫ 5 ms, and hence the XML overhead of 5 ms is acceptable

Prototype: Managing Grid Messaging Middleware
- We illustrate the architecture by managing the distributed messaging middleware NaradaBrokering
  - This example is motivated by the presence of a large number of dynamic peers (brokers) that need configuration and deployment in specific topologies
  - Runtime metrics provide dynamic hints on improving routing, which leads to redeployment of the messaging system, possibly using a different configuration and topology
  - Can use (dynamically) optimized protocols (UDP vs. TCP vs. Parallel TCP) and go through firewalls, but there is no good way to make these choices dynamically
- Broker Service Adapter
  - Note: NB illustrates an electronic entity that did not start off with an administrative service interface
  - So we add a wrapper over the basic NB BrokerNode object that provides a WS-Management front-end
  - Allows CREATION, CONFIGURATION and MODIFICATION of broker topologies
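
The Broker Service Adapter is an instance of the adapter (wrapper) pattern. A minimal sketch, assuming a hypothetical `BrokerNode` stand-in (not the real NaradaBrokering class) and plain Python methods in place of WS-Management SOAP messages:

```python
from typing import Dict, Optional, Set


class BrokerNode:
    """Hypothetical stand-in for a broker object with no management interface."""

    def __init__(self, config: Dict[str, str]) -> None:
        self.config = dict(config)
        self.links: Set[str] = set()


class BrokerServiceAdapter:
    """Wraps a broker and exposes management operations on it.

    Methods loosely mirror WS-Management verbs (Create, Get, Put, Delete);
    the SOAP/WS-Addressing layer a real adapter would use is omitted.
    """

    def __init__(self) -> None:
        self._broker: Optional[BrokerNode] = None

    def _require(self) -> BrokerNode:
        if self._broker is None:
            raise LookupError("no broker has been created")
        return self._broker

    def create(self, config: Dict[str, str]) -> None:
        self._broker = BrokerNode(config)

    def get(self) -> Dict[str, str]:
        return dict(self._require().config)

    def put(self, key: str, value: str) -> None:
        self._require().config[key] = value

    def create_link(self, peer: str) -> None:
        self._require().links.add(peer)

    def delete_link(self, peer: str) -> None:
        self._require().links.discard(peer)

    def links(self) -> Set[str]:
        return set(self._require().links)

    def delete(self) -> None:
        self._broker = None
```

The wrapped broker stays unchanged; only the adapter knows about management operations.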

Prototype: Use Cases
- Use Case I: Audio-video conferencing (the GlobalMMCS project, which uses NaradaBrokering as an event-delivery substrate)
  - Consider a scenario with a teacher and 10,000 students. One would typically form a TREE-shaped hierarchy of brokers
  - One broker can support up to 400 simultaneous video clients and 1500 simultaneous audio clients with acceptable quality\*. So one would need 10,000 / 400 = 25 broker nodes
  - May also require additional links between brokers for fault-tolerance purposes
- Use Case II: Sensor network
- Both use cases need high-QoS streams of messages
- Use Case III: The management system itself

(Figure: a single participant sends audio / video, which reaches groups of 400 participants through the broker tree.)

\* "Scalable Service Oriented Architecture for Audio/Video Conferencing", Ahmet Uyar, Ph.D. Thesis, May 2005

Failure Handling: WS-Policy
- The policy defines resource failure handling
- Implemented 2 policies (based on WS-Policy)
  - Require User Input: no action is taken against the failure; user interaction is required to handle it
  - Auto Instantiate: tries auto-instantiation of the failed broker
    - The location of a fork process is required
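
The two implemented policies can be sketched as a dispatch on a policy value. The enum, the function name and the returned messages are hypothetical, and a real Auto Instantiate action would contact the fork process rather than return a string.

```python
from enum import Enum
from typing import Optional


class FailurePolicy(Enum):
    REQUIRE_USER_INPUT = "require-user-input"
    AUTO_INSTANTIATE = "auto-instantiate"


def on_broker_failure(broker_id: str, policy: FailurePolicy,
                      fork_process: Optional[str] = None) -> str:
    """Decide how to react to a failed broker according to its policy."""
    if policy is FailurePolicy.REQUIRE_USER_INPUT:
        # No automatic action: a user must decide how to handle the failure.
        return f"{broker_id}: waiting for user input"
    if fork_process is None:
        raise ValueError("Auto Instantiate requires the location of a fork process")
    return f"{broker_id}: re-instantiating via fork process at {fork_process}"
```

Failing loudly when the fork-process location is missing mirrors the slide's requirement for Auto Instantiate.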

Prototype: Costs (Individual Resources: Brokers)

Average time (msec):

| Operation | Un-initialized (first time) | Initialized (later modifications) |
|---|---|---|
| Set Configuration | 777 | 46 |
| Create Broker | 459 | 132 |
| Create Link | 175 | 43 |
| Delete Link | 109 | 35 |
| Delete Broker | 110 | 187 |

Recovery Time

Recovery Time = T(Read State From Registry) + T(Bring Resource up to Speed) = T(Read State) + T[SetConfig + Create Broker + CreateLink(s)]

| Topology | Number of resource-specific configuration entries | Recovery time |
|---|---|---|
| Ring: N nodes, N links (1 outgoing link per node) | 2 resource objects per node | 10 + (777 + 459 + 175) ≈ 1.4 sec |
| Cluster: N nodes, links per broker vary from 0 to 3 | 1 to 4 resource objects per node | Min: 5 + (777 + 459) ≈ 1.2 sec; Max: 20 + {777 + 459 + (175 × 1 + 43 × 2)} ≈ 1.5 sec |

Assuming a 5 ms read time from the registry per resource object.
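
The recovery-time estimates can be reproduced with a short calculation. This sketch assumes, as the cluster estimate does, that the first link incurs the first-time Create Link cost and each further link the initialized cost; the function name is hypothetical.

```python
# Average costs in msec from the costs table: (first time, later modifications).
SET_CONFIG = (777, 46)
CREATE_BROKER = (459, 132)
CREATE_LINK = (175, 43)
READ_MS_PER_OBJECT = 5  # assumed registry read time per resource object


def estimated_recovery_ms(links: int, resource_objects: int) -> int:
    """T(Read State) + T[SetConfig + Create Broker + CreateLink(s)].

    The first link pays the first-time cost; each additional link pays the
    initialized cost, as in the cluster estimate.
    """
    read = READ_MS_PER_OBJECT * resource_objects
    restore = SET_CONFIG[0] + CREATE_BROKER[0]
    if links > 0:
        restore += CREATE_LINK[0] + CREATE_LINK[1] * (links - 1)
    return read + restore
```

This gives 1421 ms for a ring node (1 link, 2 resource objects), and 1241 ms and 1517 ms for the cluster minimum (0 links, 1 object) and maximum (3 links, 4 objects).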

Prototype: Observed Recovery Cost per Resource

| Operation | Average (msec) |
|---|---|
| \*Spawn Process | 2362 ± 18 |
| Read State | 8 ± 1 |
| Restore (1 Broker + 1 Link) | 1421 ± 9 |
| Restore (1 Broker + 3 Links) | 1616 ± 82 |

- The time for Create Broker depends on the number & type of transports opened by the broker
  - E.g., an SSL transport requires negotiation of keys and would require more time than simply opening a TCP connection
- If brokers connect to other brokers, the destination broker MUST be ready to accept connections, else topology recovery takes more time

Management Console: Creating Nodes and Setting Properties 36

Management Console: Creating Links 37

Management Console: Policies 38

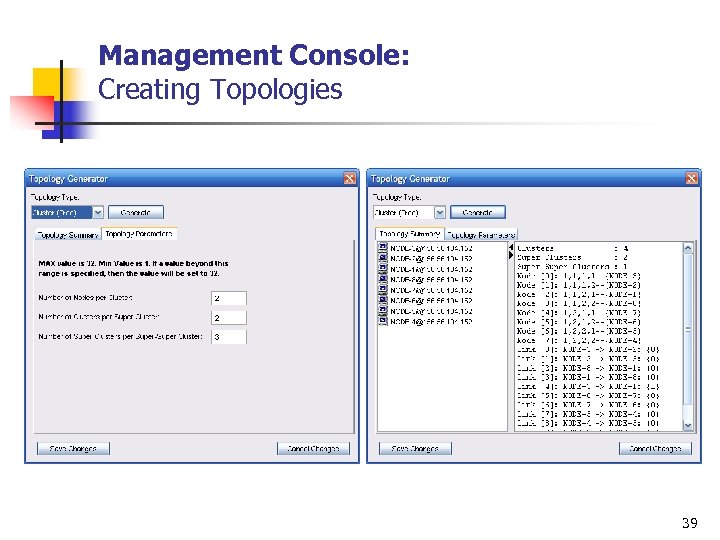

Management Console: Creating Topologies 39

Related Work
- Fault-tolerance strategies
  - Replication
    - Provides transfer of control to a new or existing backup service instance on failure
    - Passive (primary / backup) OR active
    - E.g., distributed databases, agent-based systems

Related Work
- Fault-tolerance strategies
  - Replication
  - Check-pointing
    - Allows computation to continue from the point of failure, OR enables process migration
    - E.g., MPI-based systems (Open MPI)
    - Can be done independently (easier to do, but complicates recovery) OR coordinated (a performance issue, but recovery is easy)

Related Work
- Fault-tolerance strategies
  - Replication
  - Check-pointing
  - Request-retry
  - Logging
  - Checksum

Related Work
- Fault-tolerance strategies
- Failure detection
  - Via periodic heartbeats (e.g., Globus Heartbeat Monitor)
- Scalability
  - Hierarchical organization (e.g., DNS)
- Resource management (monitoring / scheduling)
  - E.g., MonALISA, Globus GRAM

Conclusion
- We have presented a scalable, fault-tolerant management framework that
  - Adds acceptable cost in terms of extra resources required (about 1%)
  - Provides a general framework for management of distributed resources
  - Is compatible with existing Web Service standards
- We have applied our framework to manage resources that have modest external state
  - This assumption is important to improve the scalability of the management process

Summary of Contributions
- Designed and implemented a resource management framework that is:
  - Tolerant to failures in the management framework as well as to resource failures, by implementing resource-specific policies
  - Scalable in terms of the number of additional resources required to provide fault-tolerance and performance
  - Implements Web Service Management to manage resources
  - Implements global management by leveraging a scalable messaging substrate to traverse firewalls
- Detailed evaluation of the system components to show that the proposed architecture has acceptable costs
  - The architecture adds approx. 1% extra resources
- Implemented a prototype to illustrate management of a distributed messaging middleware system: NaradaBrokering

Future Work
- Current work assumes a SMALL runtime state that needs to be maintained
  - Apply the management framework and evaluate the system when this assumption does not hold
    - More messages / larger messages
    - XML processing overhead becomes significant
- Apply the framework to broader domains

Publications
- On the proposed work:
  - Scalable, Fault-tolerant Management in a Service Oriented Architecture. Harshawardhan Gadgil, Geoffrey Fox, Shrideep Pallickara, Marlon Pierce. Submitted to IPDPS 2007.
  - Managing Grid Messaging Middleware. Harshawardhan Gadgil, Geoffrey Fox, Shrideep Pallickara, Marlon Pierce. In Proceedings of "Challenges of Large Applications in Distributed Environments" (CLADE), pp. 83–91, June 19, 2006, Paris, France.
- Relevant to the proposed work:
  - A Scripting based Architecture for Management of Streams and Services in Real-time Grid Applications. Harshawardhan Gadgil, Geoffrey Fox, Shrideep Pallickara, Marlon Pierce, Robert Granat. In Proceedings of the IEEE/ACM Cluster Computing and Grid 2005 Conference (CCGrid 2005), Vol. 2, pp. 710–717, Cardiff, UK.
  - On the Discovery of Brokers in Distributed Messaging Infrastructure. Shrideep Pallickara, Harshawardhan Gadgil, Geoffrey Fox. In Proceedings of the IEEE Cluster 2005 Conference, Boston, MA.
  - On the Discovery of Topics in Distributed Publish/Subscribe Systems. Shrideep Pallickara, Geoffrey Fox, Harshawardhan Gadgil. In Proceedings of the 6th IEEE/ACM International Workshop on Grid Computing (Grid 2005), pp. 25–32, Seattle, WA. (Selected as one of six Best Papers.)
  - A Framework for Secure End-to-End Delivery of Messages in Publish/Subscribe Systems. Shrideep Pallickara, Marlon Pierce, Harshawardhan Gadgil, Geoffrey Fox, Yan, Yi Huang. (To appear) In Proceedings of the 7th IEEE/ACM International Conference on Grid Computing (Grid 2006), Barcelona, September 28–29, 2006.

Publications: Others
- On the Secure Creation, Organization and Discovery of Topics in Distributed Publish/Subscribe Systems. Shrideep Pallickara, Geoffrey Fox, Harshawardhan Gadgil. (To appear) International Journal of High Performance Computing and Networking (IJHPCN), 2006. Special issue of extended versions of the 6 best papers at the ACM/IEEE Grid 2005 Workshop.
- Building Messaging Substrates for Web and Grid Applications. Geoffrey Fox, Shrideep Pallickara, Marlon Pierce, Harshawardhan Gadgil. In Special Issue on Scientific Applications of Grid Computing, Philosophical Transactions of the Royal Society, London, Volume 363, Number 1833, pp. 1757–1773, August 2005.
- Management of Real-Time Streaming Data Grid Services. Geoffrey Fox, Galip Aydin, Harshawardhan Gadgil, Shrideep Pallickara, Marlon Pierce, Wenjun Wu. Invited talk at the Fourth International Conference on Grid and Cooperative Computing (GCC 2005), Beijing, China, Nov 30–Dec 3, 2005. Lecture Notes in Computer Science, Volume 3795, Nov 2005, pp. 3–12.
- SERVOGrid Complexity Computational Environments (CCE) Integrated Performance Analysis. Galip Aydin, Mehmet S. Aktas, Geoffrey C. Fox, Harshawardhan Gadgil, Marlon Pierce, Ahmet Sayar. Poster and in Proceedings of the 6th IEEE/ACM International Workshop on Grid Computing (Grid 2005), pp. 256–261, Seattle, WA, Nov 13–14, 2005.
