b12815a69cba54b1617eadc978b721bc.ppt

- Number of slides: 35

Scalability Tools: Automated Testing (30 minutes)

- Overview
- Hooking up your game
  - external tools
  - internal game changes
- Applications & Gotchas
  - engineering, QA, operations
  - production & management
- Summary & Questions

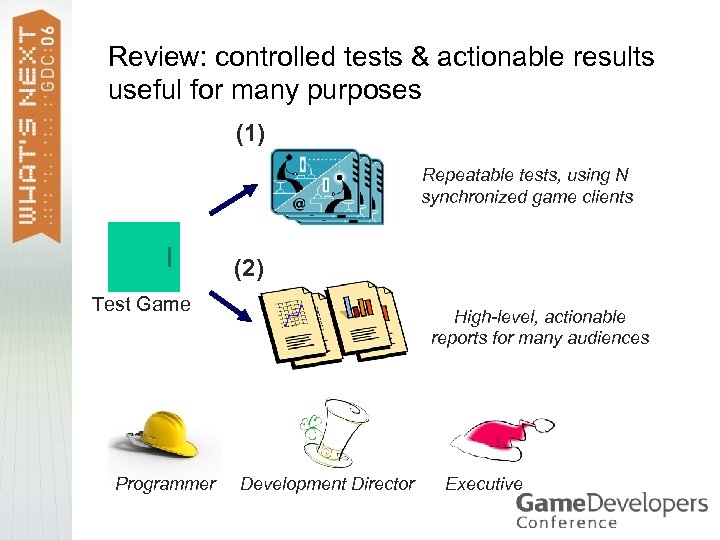

Review: controlled tests & actionable results useful for many purposes

- (1) Repeatable tests, using N synchronized game clients (diagram labels: Test, Game)
- (2) High-level, actionable reports for many audiences: Programmer, Development Director, Executive

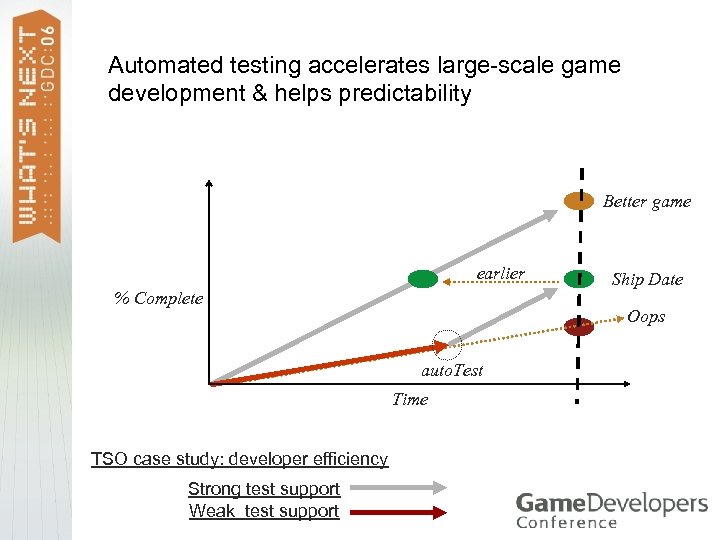

Automated testing accelerates large-scale game development & helps predictability

- Better game earlier
- Chart: % Complete vs. Time, from Project Start to Target Launch / Ship Date; curves labeled "autoTest" and "Oops"
- TSO case study: developer efficiency with strong test support vs. weak test support

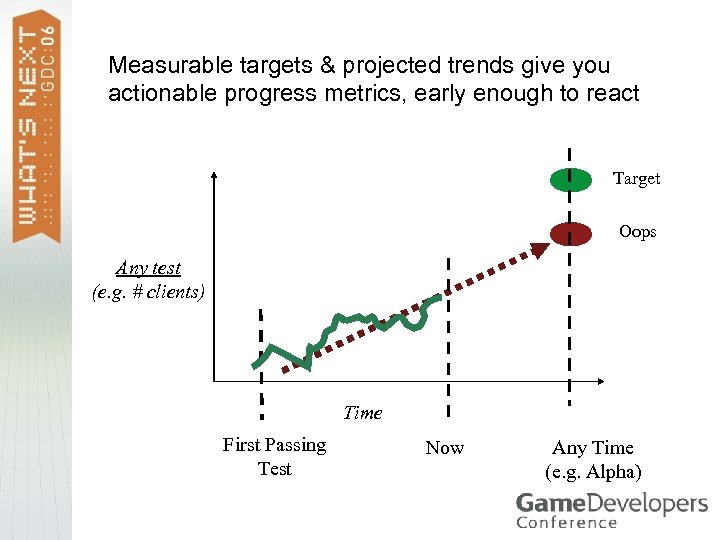

Measurable targets & projected trends give you actionable progress metrics, early enough to react

- Chart: any test metric (e.g. # clients) vs. Time, with "Target" and "Oops" levels
- Time axis marks: First Passing Test, Now, Any Time (e.g. Alpha)
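A projected trend of this kind can be sketched with a plain least-squares line. This is a minimal illustration, not the method used on TSO: the function name `project_target_day` and the sample data (day number vs. number of clients a test sustained) are hypothetical.

```python
def project_target_day(samples, target):
    """Fit a least-squares line through (day, value) samples and return the
    day on which the line reaches `target`, or None if the trend is flat or
    falling (the target would never be reached)."""
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in samples)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    if sxx == 0:
        return None  # all samples taken on the same day: no trend to project
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    return (target - intercept) / slope


# Hypothetical history: (day, max concurrent clients the load test sustained)
history = [(0, 100), (10, 200), (20, 300), (30, 400)]
projected_day = project_target_day(history, target=1000)
print(f"Target projected to be reached on day {projected_day:.0f}")
```

Comparing the projected day with a milestone date (e.g. Alpha) is what turns the raw metric into an early warning.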

Success stories

- Many game teams work with automated testing
  - EA, Microsoft, any MMO, …
- Automated testing has many highly successful applications outside of game development
- Caveat: there are many ways to fail…

How to succeed

- Plan for testing early
  - Non-trivial system
  - Architectural implications
- Fast, cheap test coverage is a major change in production; be willing to adapt your processes
  - Make sure the entire team is on board
  - Deeper integration leads to greater value
- Kearneyism: “make it easier to use than not to use”

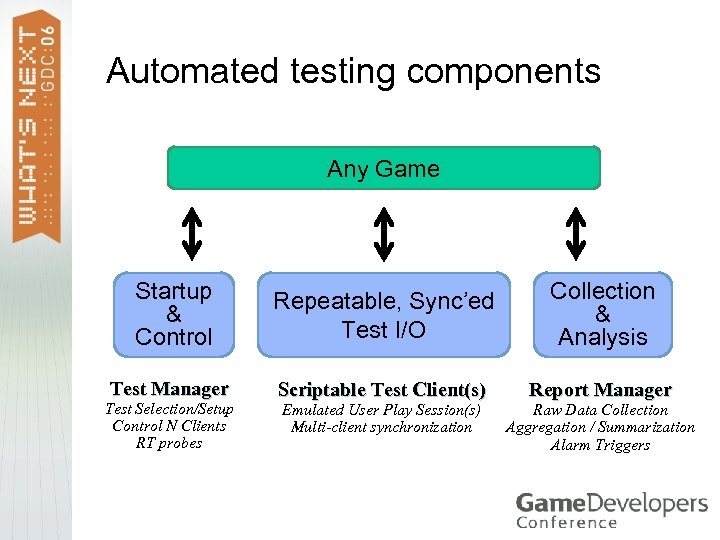

Automated testing components (any game)

- Startup & Control: Test Manager
  - Test Selection/Setup
  - Control N Clients
  - RT probes
- Repeatable, Sync’ed Test I/O: Scriptable Test Client(s)
  - Emulated User Play Session(s)
  - Multi-client synchronization
- Collection & Analysis: Report Manager
  - Raw Data Collection
  - Aggregation / Summarization
  - Alarm Triggers

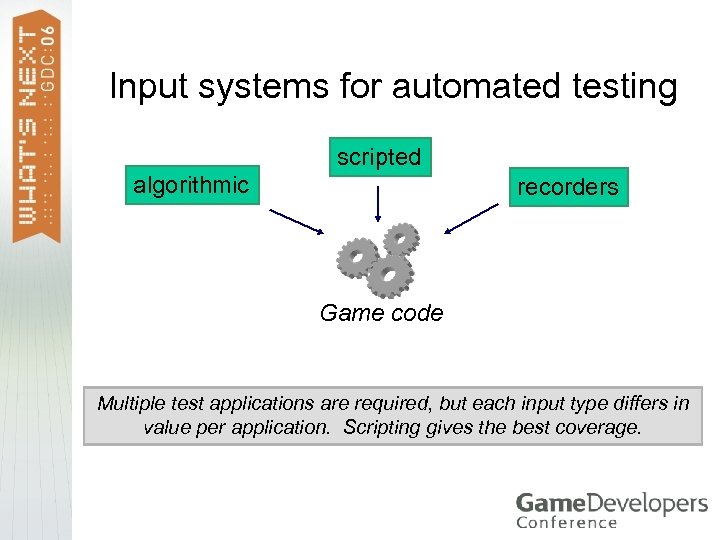

Input systems for automated testing

- Input types driving the game code: scripted, algorithmic, recorders
- Multiple test applications are required, but each input type differs in value per application.
- Scripting gives the best coverage.

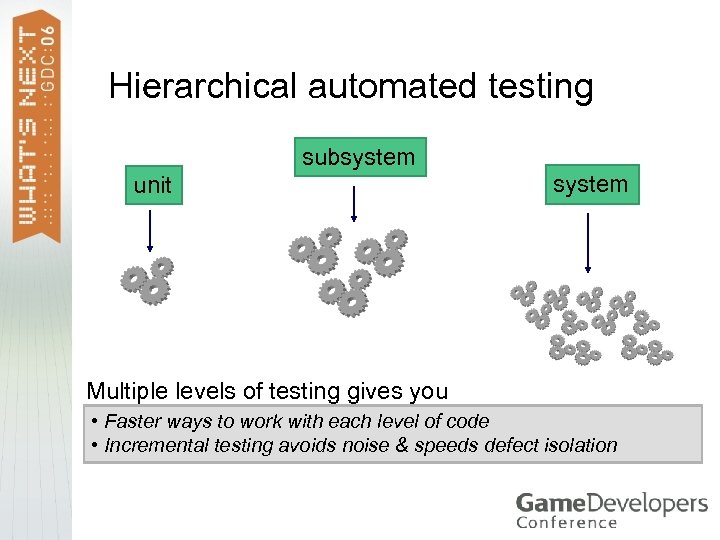

Hierarchical automated testing

- Levels: unit, subsystem, system
- Multiple levels of testing give you
  - Faster ways to work with each level of code
  - Incremental testing, which avoids noise & speeds defect isolation

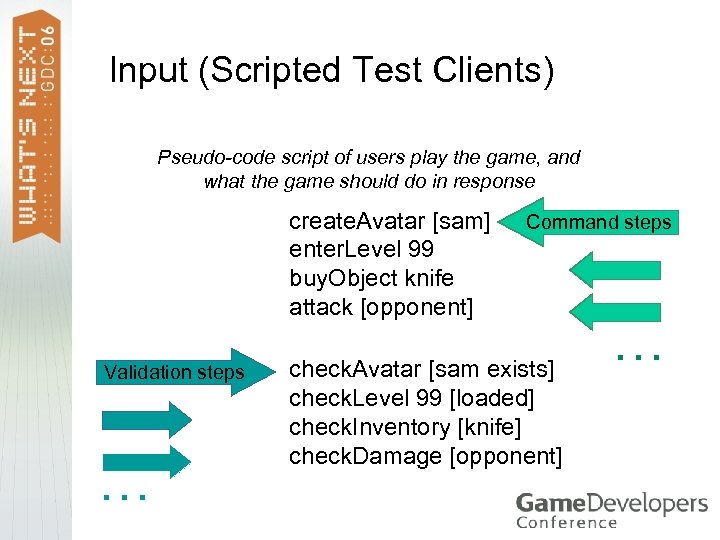

Input (Scripted Test Clients)

Pseudo-code script of how users play the game, and what the game should do in response

- Command steps
  - createAvatar [sam]
  - enterLevel 99
  - buyObject knife
  - attack [opponent]
  - …
- Validation steps
  - checkAvatar [sam exists]
  - checkLevel 99 [loaded]
  - checkInventory [knife]
  - checkDamage [opponent]
  - …

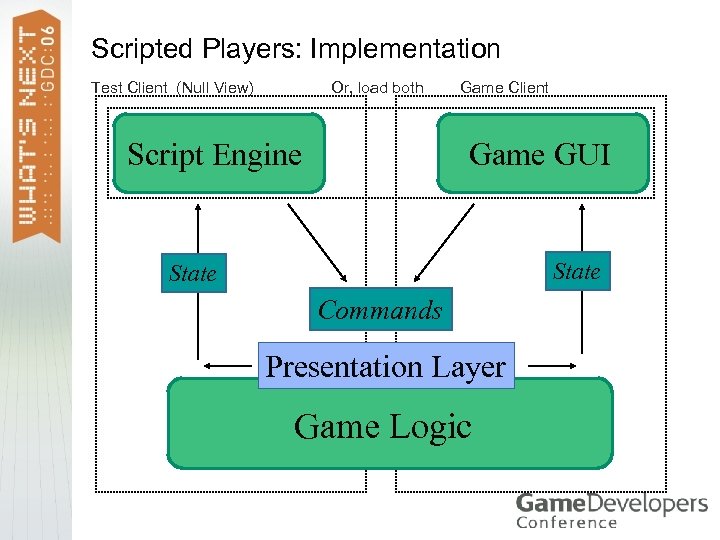
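The pairing of command steps with validation steps on the slide above can be sketched as a tiny script runner. Everything here is an assumption made for illustration: `FakeGame` is a hypothetical stand-in for real game logic, and the method names are Python-style renderings of the slide's pseudo-code.

```python
class FakeGame:
    """Hypothetical stand-in for the game logic a test client would drive."""

    def __init__(self):
        self.avatars = {}         # avatar name -> inventory list
        self.loaded_level = None
        self.damage = {}          # target name -> accumulated damage

    def create_avatar(self, name):
        self.avatars[name] = []

    def enter_level(self, number):
        self.loaded_level = number

    def buy_object(self, avatar, item):
        self.avatars[avatar].append(item)

    def attack(self, avatar, target):
        # In this toy model only an avatar holding a knife deals damage.
        if "knife" in self.avatars[avatar]:
            self.damage[target] = self.damage.get(target, 0) + 10


def run_script(game, steps):
    """Run each command step, then its validation step; return failed labels."""
    failures = []
    for label, command, check in steps:
        command(game)
        if not check(game):
            failures.append(label)
    return failures


# Each entry: (label, command step, validation step)
SCRIPT = [
    ("create avatar sam", lambda g: g.create_avatar("sam"),
     lambda g: "sam" in g.avatars),
    ("enter level 99", lambda g: g.enter_level(99),
     lambda g: g.loaded_level == 99),
    ("buy knife", lambda g: g.buy_object("sam", "knife"),
     lambda g: "knife" in g.avatars["sam"]),
    ("attack opponent", lambda g: g.attack("sam", "opponent"),
     lambda g: g.damage.get("opponent", 0) > 0),
]

failures = run_script(FakeGame(), SCRIPT)
print("PASS" if not failures else f"FAIL: {failures}")
```

Keeping the validation next to the command that triggers it means a failure report names the exact step that broke.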

Scripted Players: Implementation

- Test Client (Null View): Script Engine
- Game Client: Game GUI
- Or, load both
- Diagram: both sit on the Presentation Layer, which exchanges State and Commands with the Game Logic

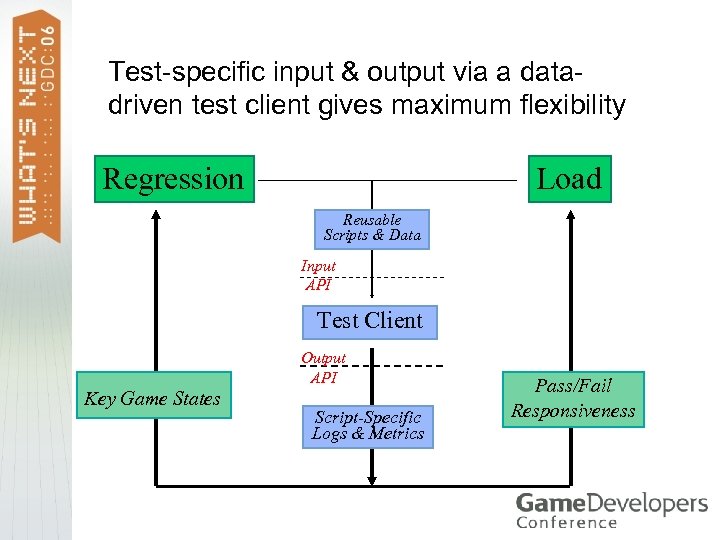

Test-specific input & output via a data-driven test client gives maximum flexibility

- Input: Reusable Scripts & Data (Load, Regression), fed through the Input API
- Test Client
- Output (via the Output API): Key Game States, Script-Specific Logs & Metrics, Pass/Fail, Responsiveness

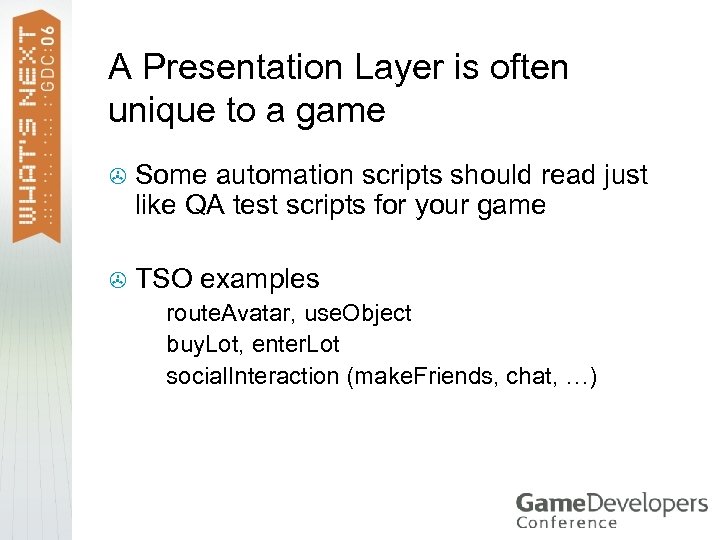
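A data-driven test client of this shape can be sketched as follows. This is an illustrative assumption, not the TSO implementation: `PresentationLayer`, its commands, and the JSON script format are all hypothetical. The script is plain data, and the client records pass/fail plus a responsiveness measurement for each step.

```python
import json
import time


class PresentationLayer:
    """Hypothetical command surface shared by a game GUI and a test client."""

    def __init__(self):
        self.state = {"lot": None, "friends": []}

    def enter_lot(self, lot_id):
        self.state["lot"] = lot_id
        return True

    def make_friends(self, name):
        if self.state["lot"] is None:
            return False  # social interactions require being on a lot
        self.state["friends"].append(name)
        return True


def run_data_driven(layer, script_json):
    """Input API: a JSON list of {"command", "args"} steps.
    Output API: one record per step with pass/fail and elapsed time."""
    results = []
    for step in json.loads(script_json):
        command = getattr(layer, step["command"])
        start = time.perf_counter()
        ok = command(*step["args"])
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        results.append({
            "command": step["command"],
            "passed": bool(ok),
            "elapsed_ms": elapsed_ms,
        })
    return results


SCRIPT = """[
    {"command": "enter_lot", "args": [7]},
    {"command": "make_friends", "args": ["alice"]}
]"""

for record in run_data_driven(PresentationLayer(), SCRIPT):
    print(record["command"], "PASS" if record["passed"] else "FAIL")
```

Because the script is data rather than code, the same file can in principle be reused for both load and regression runs.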

A Presentation Layer is often unique to a game (NullView Client)

- Some automation scripts should read just like QA test scripts for your game
- TSO examples
  - routeAvatar, useObject
  - buyLot, enterLot
  - socialInteraction (makeFriends, chat, …)

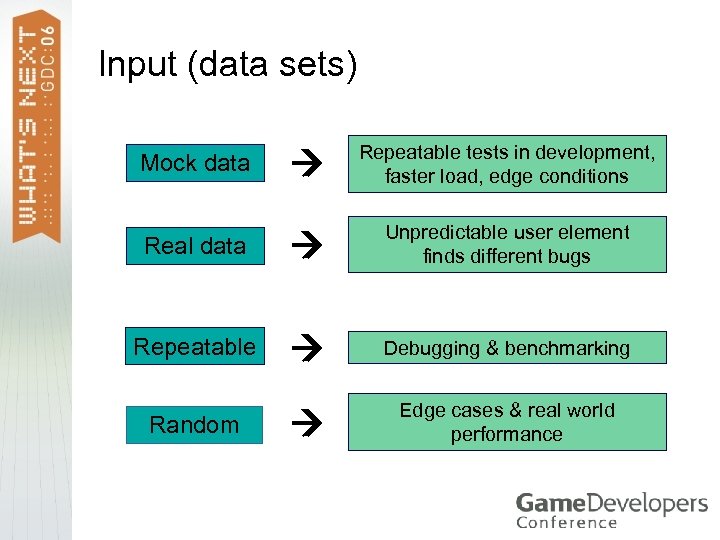

Input (data sets)

- Mock data: repeatable tests in development, faster load, edge conditions
- Real data: unpredictable user element finds different bugs
- Repeatable: debugging & benchmarking
- Random: edge cases & real world performance

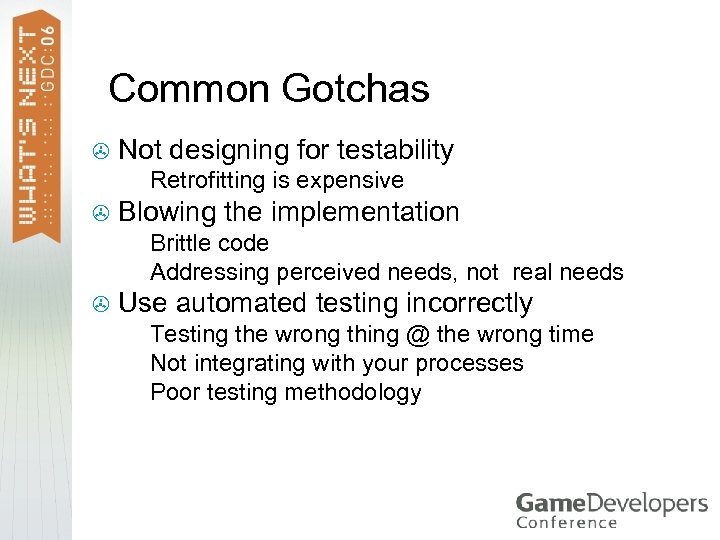
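One common way to get random input that is still repeatable is to seed the generator and log the seed. The sketch below assumes a hypothetical action list and function name; it only illustrates the seeding idea and is not taken from the talk.

```python
import random

# Hypothetical player actions a random test client could issue.
ACTIONS = ["enter_lot", "buy_object", "use_object", "chat"]


def make_random_session(seed, length=5):
    """Generate a pseudo-random play session. The same seed always yields the
    same session, so a failing random run can be replayed for debugging."""
    rng = random.Random(seed)  # private generator; global state is untouched
    return [rng.choice(ACTIONS) for _ in range(length)]


session = make_random_session(seed=1234)
replay = make_random_session(seed=1234)
print(session == replay)  # the replay matches the original run
```

Recording the seed alongside each test result is what makes a random failure reproducible later.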

Common Gotchas

- Not designing for testability
  - Retrofitting is expensive
- Blowing the implementation
  - Brittle code
  - Addressing perceived needs, not real needs
- Using automated testing incorrectly
  - Testing the wrong thing @ the wrong time
  - Not integrating with your processes
  - Poor testing methodology

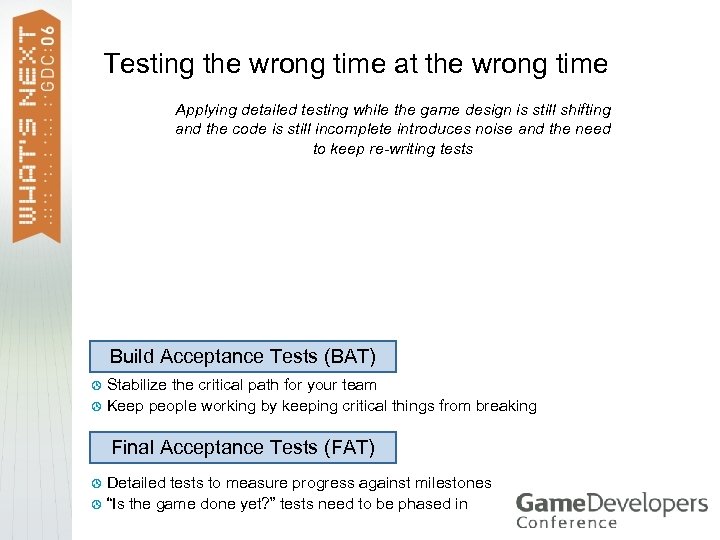

Testing the wrong thing at the wrong time

Applying detailed testing while the game design is still shifting and the code is still incomplete introduces noise and the need to keep rewriting tests.

- Build Acceptance Tests (BAT)
  - Stabilize the critical path for your team
  - Keep people working by keeping critical things from breaking
- Final Acceptance Tests (FAT)
  - Detailed tests to measure progress against milestones
  - “Is the game done yet?” tests need to be phased in

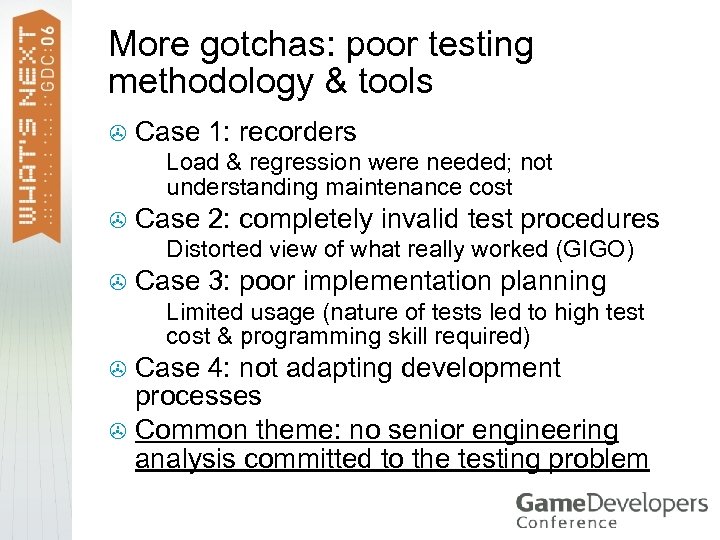

More gotchas: poor testing methodology & tools

- Case 1: recorders
  - Load & regression were needed; not understanding maintenance cost
- Case 2: completely invalid test procedures
  - Distorted view of what really worked (GIGO)
- Case 3: poor implementation planning
  - Limited usage (nature of tests led to high test cost & programming skill required)
- Case 4: not adapting development processes
- Common theme: no senior engineering analysis committed to the testing problem

Automated Testing for Online Games

- Overview
- Hooking up your game
  - external tools
  - internal game changes
- Applications
  - engineering, QA, operations
  - production & management
- Summary & Questions

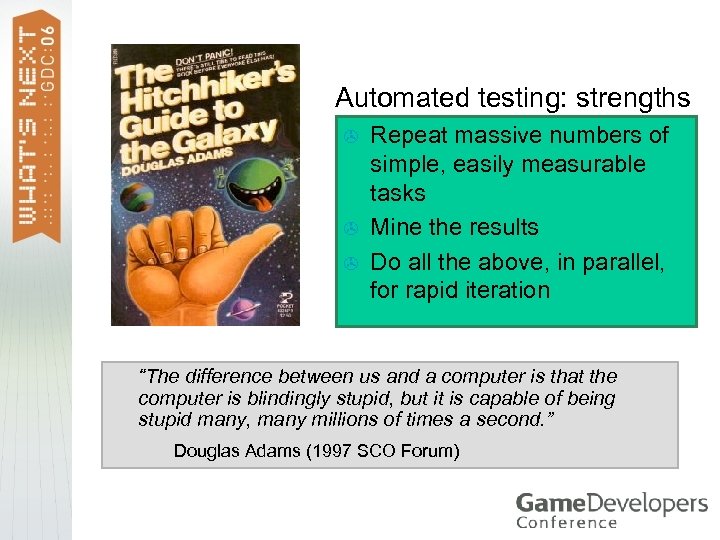

Automated testing: strengths

- Repeat massive numbers of simple, easily measurable tasks
- Mine the results
- Do all the above, in parallel, for rapid iteration

“The difference between us and a computer is that the computer is blindingly stupid, but it is capable of being stupid many, many millions of times a second.” Douglas Adams (1997 SCO Forum)

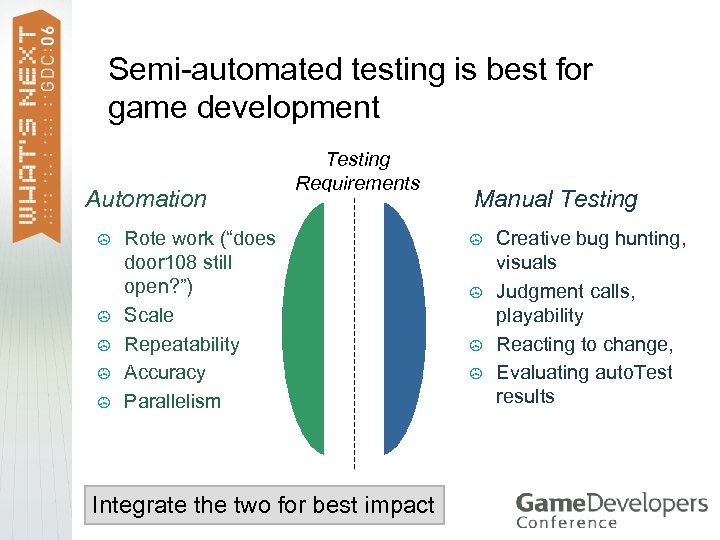

Semi-automated testing is best for game development

- Testing requirements
- Automation
  - Rote work (“does door 108 still open?”)
  - Scale
  - Repeatability
  - Accuracy
  - Parallelism
- Manual Testing
  - Creative bug hunting, visuals
  - Judgment calls, playability
  - Reacting to change
  - Evaluating autoTest results
- Integrate the two for best impact

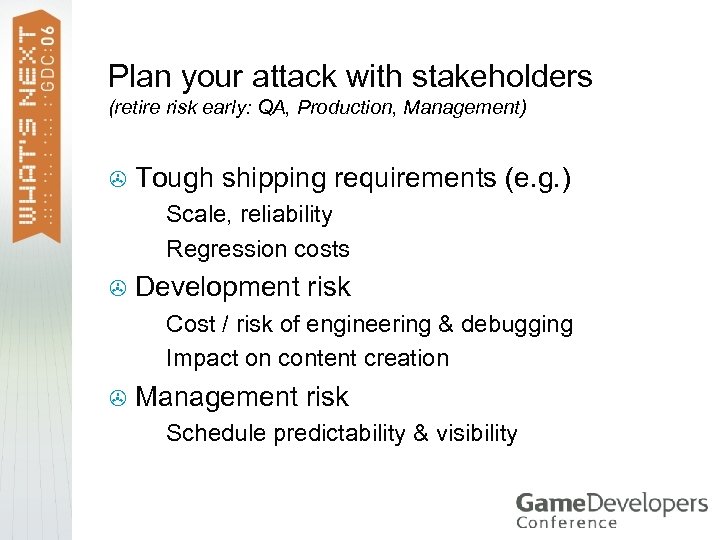

Plan your attack with stakeholders (retire risk early: QA, Production, Management)

- Tough shipping requirements (e.g.)
  - Scale, reliability
  - Regression costs
- Development risk
  - Cost / risk of engineering & debugging
  - Impact on content creation
- Management risk
  - Schedule predictability & visibility

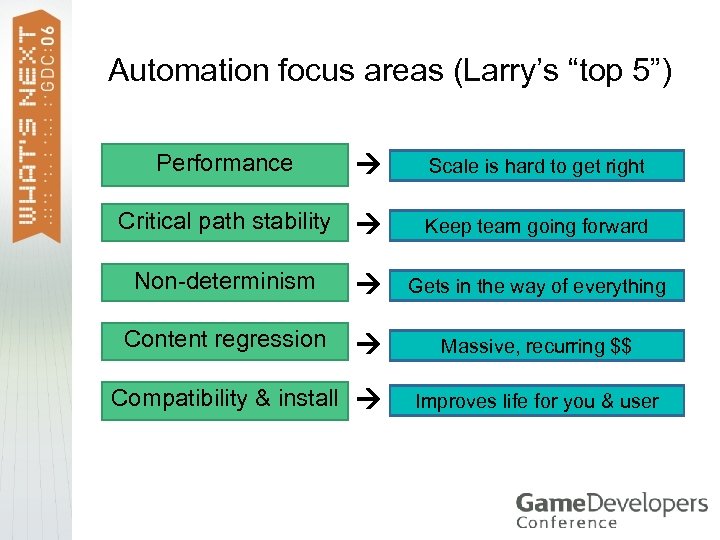

Automation focus areas (Larry’s “top 5”)

- Performance: scale is hard to get right
- Critical path stability: keep team going forward
- Non-determinism: gets in the way of everything
- Content regression: massive, recurring $$
- Compatibility & install: improves life for you & user

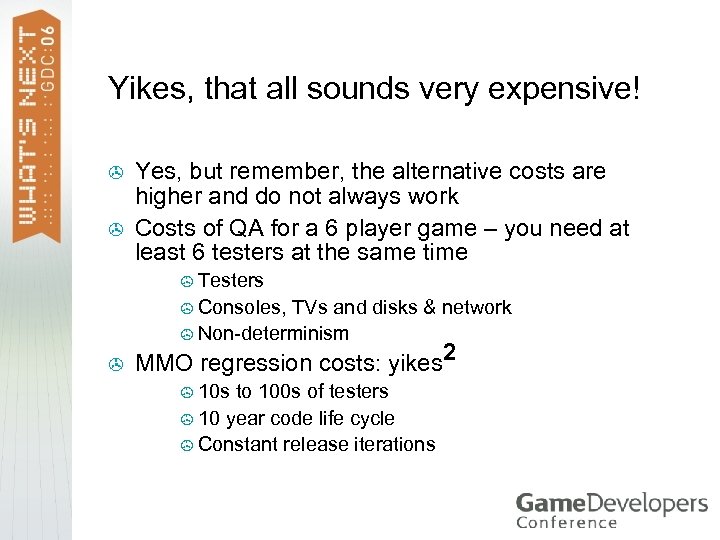

Yikes, that all sounds very expensive!

- Yes, but remember, the alternative costs are higher and do not always work
- Costs of QA for a 6 player game: you need at least 6 testers at the same time
  - Testers
  - Consoles, TVs and disks & network
  - Non-determinism
- MMO regression costs: yikes²
  - 10s to 100s of testers
  - 10 year code life cycle
  - Constant release iterations

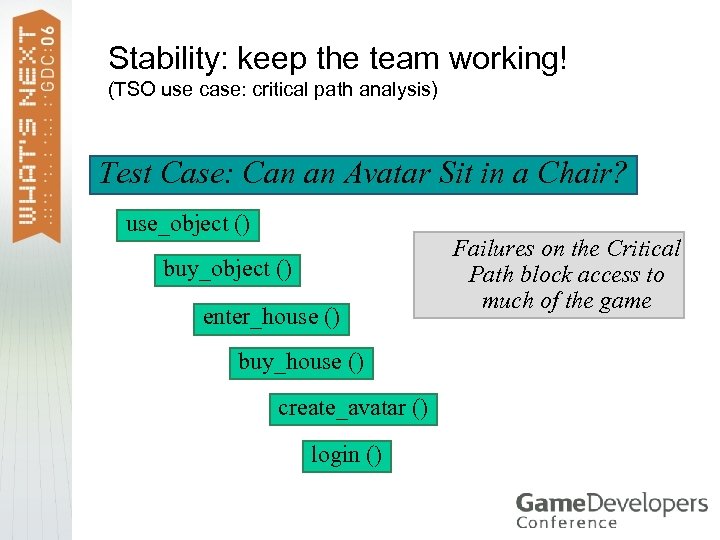

Stability: keep the team working! (TSO use case: critical path analysis)

Test Case: Can an Avatar Sit in a Chair?

- Call stack, top to bottom: use_object(), buy_object(), enter_house(), buy_house(), create_avatar(), login()
- Failures on the Critical Path block access to much of the game

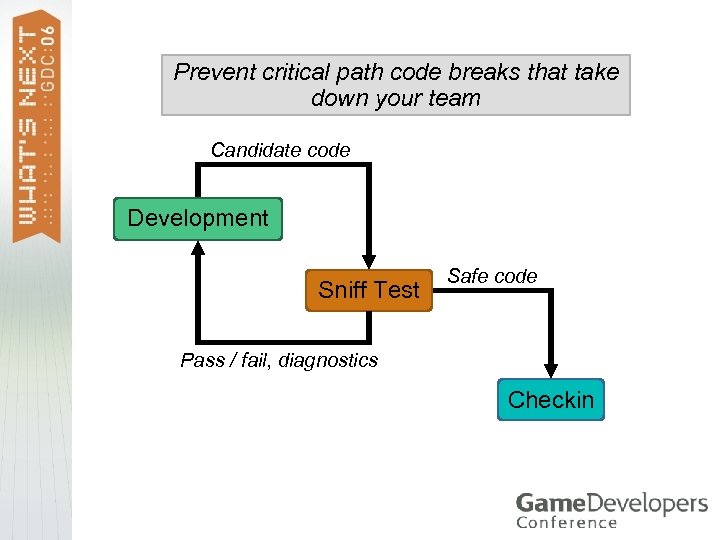
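The critical path idea can be sketched as an ordered chain where the first failure blocks everything after it. The step names come from the slide; the runner, its return shape, and the simulated break in `buy_house` are assumptions for illustration only.

```python
# Steps in execution order: each one depends on all the steps before it.
CRITICAL_PATH = [
    "login", "create_avatar", "buy_house",
    "enter_house", "buy_object", "use_object",
]


def run_critical_path(steps, execute):
    """Run steps in order and stop at the first failure.
    Returns (passed, failed, blocked): `failed` is the step that broke (or
    None), and `blocked` lists the steps that could not be tested at all."""
    passed = []
    for index, step in enumerate(steps):
        if not execute(step):
            return passed, step, steps[index + 1:]
        passed.append(step)
    return passed, None, []


# Hypothetical scenario: a checkin broke buy_house.
passed, failed, blocked = run_critical_path(
    CRITICAL_PATH, execute=lambda step: step != "buy_house"
)
print(f"failed at {failed}; blocked: {blocked}")
```

The `blocked` list is the point of the slide: one break early in the chain hides the state of everything downstream, including the chair test itself.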

Prevent critical path code breaks that take down your team

- Flow: Development → Candidate code → Sniff Test → Safe code → Checkin
- The Sniff Test returns pass / fail and diagnostics to the developer

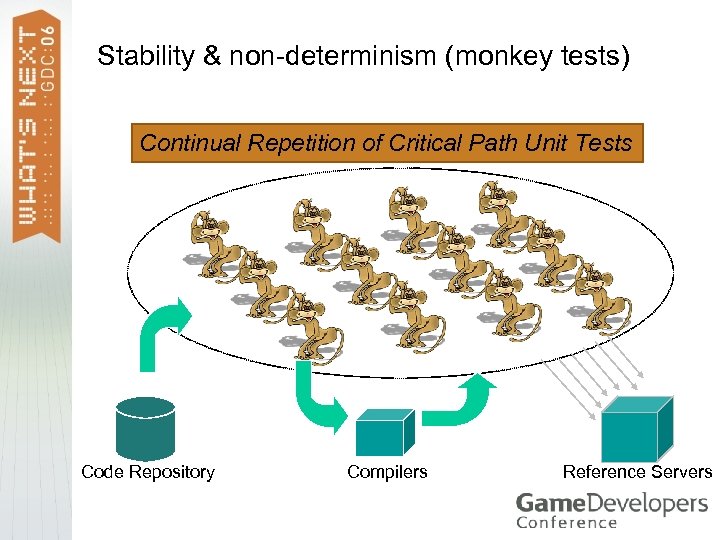
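A pre-checkin gate of this kind can be sketched as a function that runs a short list of checks and reports pass/fail with diagnostics. The check names and the `sniff_test` signature are hypothetical; a real gate would run critical path scripts against a build of the candidate code.

```python
def sniff_test(checks):
    """Run every (name, callable) check against the candidate code.
    Returns (allowed, diagnostics); checkin is allowed only if all pass."""
    diagnostics = []
    for name, check in checks:
        try:
            ok = bool(check())
            detail = "" if ok else "check returned False"
        except Exception as exc:  # a crash counts as a failure, with details
            ok = False
            detail = f"{type(exc).__name__}: {exc}"
        diagnostics.append((name, ok, detail))
    allowed = all(ok for _, ok, _ in diagnostics)
    return allowed, diagnostics


def broken_login():
    raise RuntimeError("server refused connection")


# Hypothetical checks for a candidate change.
allowed, report = sniff_test([
    ("build starts", lambda: True),
    ("login works", broken_login),
])
print("checkin allowed" if allowed else "checkin blocked")
for name, ok, detail in report:
    print(f"  {name}: {'pass' if ok else 'fail'} {detail}")
```

Running every check even after a failure gives the developer the full diagnostic picture in one pass.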

Stability & non-determinism (monkey tests)

- Continual repetition of critical path unit tests
- Diagram labels: Code Repository, Compilers, Reference Servers

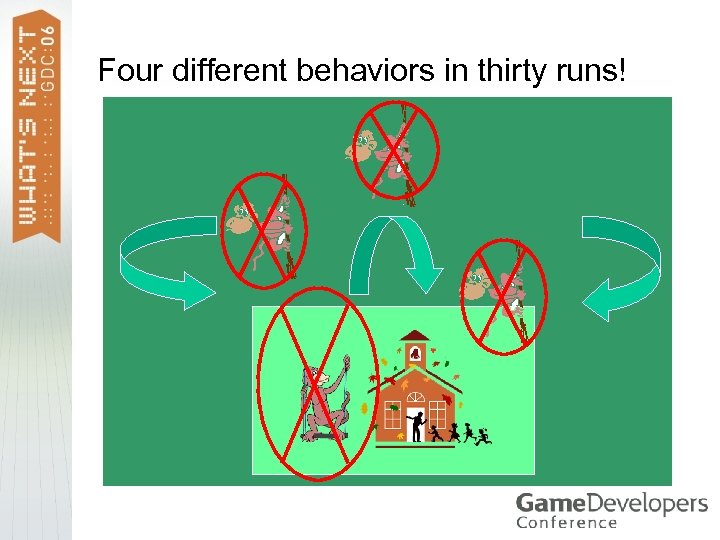

AutoTest addresses non-determinism

- Detection & reproduction of race condition defects
  - Even low probability errors are exposed with sufficient testing (random, structured, load, aging)
- Measurability of race condition defects
  - Occurs x% of the time, over 400x test runs

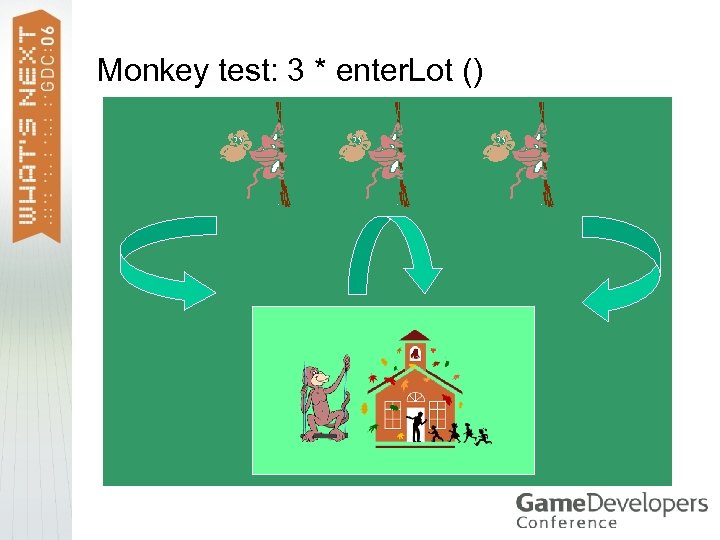

Monkey test: enterLot()

Monkey test: 3 * enterLot()

Four different behaviors in thirty runs!
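Counting distinct behaviors across repeated runs, as in the result above, can be sketched as follows. The flaky operation here is a deterministic stand-in (a hypothetical `flaky_enter_lot` that cycles through canned results) so the example is reproducible; a real monkey test would call the game.

```python
from collections import Counter
from itertools import cycle


def monkey_test(operation, runs):
    """Repeat one operation many times and tally each distinct outcome."""
    outcomes = Counter()
    for _ in range(runs):
        try:
            outcomes[repr(operation())] += 1
        except Exception as exc:
            outcomes[f"raised {type(exc).__name__}"] += 1
    return outcomes


def failure_rate(outcomes, expected):
    """Fraction of runs whose outcome differed from the expected result."""
    total = sum(outcomes.values())
    if total == 0:
        return 0.0
    return 1.0 - outcomes[repr(expected)] / total


# Hypothetical stand-in for a non-deterministic enterLot(): fails 1 run in 3.
_canned = cycle(["entered", "entered", "stuck at door"])


def flaky_enter_lot():
    return next(_canned)


outcomes = monkey_test(flaky_enter_lot, runs=30)
print(f"{len(outcomes)} distinct behaviors in 30 runs")
print(f"failure rate: {failure_rate(outcomes, 'entered'):.0%}")
```

The tally makes a race condition measurable: a fix can be judged by whether the failure rate drops over the same number of runs.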

Content testing (areas)

- Regression
- Error detection
- Balancing / tuning
- This topic is a tutorial in and of itself
  - Content regression is a huge cost problem
  - Many ways to automate it (algorithmic, scripted & combined, …)
  - Differs wildly across game genres

Content testing (more examples)

- Light mapping, shadow detection
- Asset correctness / sameness
- Compatibility testing
- Armor / damage
- Class balances
- Validating against old userData
- … (unique to each game)
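As one illustration of the "asset correctness / sameness" item, a content regression check can compare fingerprints of the current assets against a known-good baseline. This is a sketch under assumptions: the asset names and bytes are made up, and hashing is only one of several possible sameness checks.

```python
import hashlib


def fingerprint(data):
    """Content hash of one asset's raw bytes."""
    return hashlib.sha256(data).hexdigest()


def compare_assets(baseline, current):
    """Compare two {asset name: bytes} snapshots and report differences."""
    base = {name: fingerprint(blob) for name, blob in baseline.items()}
    cur = {name: fingerprint(blob) for name, blob in current.items()}
    return {
        "changed": sorted(n for n in base if n in cur and base[n] != cur[n]),
        "missing": sorted(n for n in base if n not in cur),
        "added": sorted(n for n in cur if n not in base),
    }


# Hypothetical snapshots of a content build.
baseline = {"chair.mesh": b"v1", "knife.mesh": b"v1", "lot.map": b"v1"}
current = {"chair.mesh": b"v1", "knife.mesh": b"v2", "door.mesh": b"v1"}

print(compare_assets(baseline, current))
```

An unexpected entry in any of the three lists flags a content change that nobody intended to ship.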

Automated Testing for Online Games (One Hour)

- Overview
- Hooking up your game
  - external tools
  - internal game changes
- Applications
  - engineering, QA, operations
  - production & management
- Summary & Questions

Summary: automated testing

- Start early & make it easy to use
  - Strongly impacts your success
- The bigger & more complex your game, the more automated testing you need
- You need commitment across the team
  - Engineering, QA, management, content creation

Q&A & other resources

- My email: larry.mellon_@_emergent.net
- More material on automated testing for games
  - http://www.maggotranch.com/mmp.html
    - Last year’s online engineering slides
    - This year’s slides
    - Talks on automated testing & scaling the development process
  - www.amazon.com: “Massively Multiplayer Game Development II”
    - Chapters on automated testing and automated metrics systems
  - www.gamasutra.com: Dag Frommhold, Fabian Röken
    - Lengthy article on applying automated testing in games
  - Microsoft: various groups & writings
- From outside the gaming world
  - Kent Beck: anything on test-driven development
  - http://www.martinfowler.com/articles/continuousIntegration.html#id108619: Continual integration testing
  - Amazon & Google: inside & outside our industry
