- Number of slides: 24

S-Matrix and the Grid
Geoffrey Fox
Professor of Computer Science, Informatics, Physics
Pervasive Technology Laboratories
Indiana University, Bloomington IN 47401
December 12, 2003
gcf@indiana.edu
http://www.infomall.org

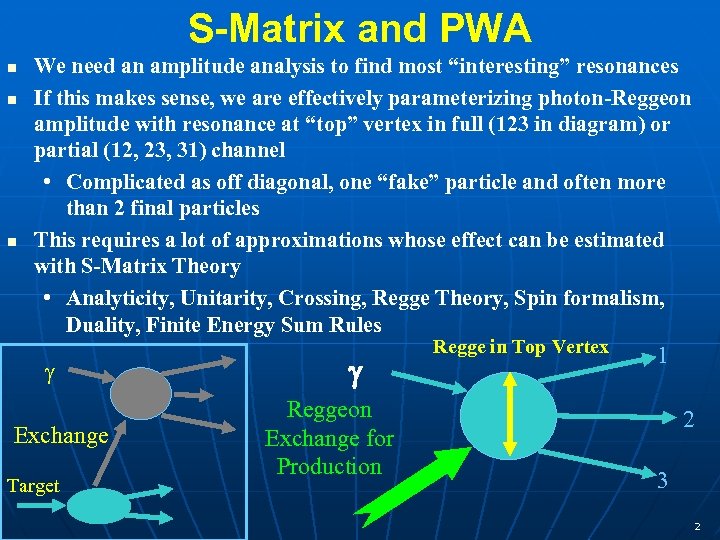

S-Matrix and PWA
- We need an amplitude analysis to find the most “interesting” resonances.
- If this makes sense, we are effectively parameterizing the photon-Reggeon amplitude with a resonance at the “top” vertex, in the full (123 in the diagram) or partial (12, 23, 31) channel.
  - This is complicated: the amplitude is off-diagonal, involves one “fake” particle, and often has more than 2 final-state particles.
- This requires a lot of approximations whose effect can be estimated with S-Matrix theory:
  - Analyticity, unitarity, crossing, Regge theory, spin formalism, duality, finite energy sum rules
- Diagram labels: Exchange; Target; Reggeon Exchange for Production; Regge in Top Vertex; final particles 1, 2, 3
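A partial-wave analysis expands the amplitude in Legendre polynomials of the production angle. As a minimal sketch (not the author's code; the normalization convention and the toy amplitude are assumptions), the projection can be done with Gauss-Legendre quadrature in NumPy. The toy amplitude is a single exchange pole just outside the physical region. Its partial waves are the Legendre functions of the second kind, Q_l(z0), which fall off with l. That fall-off is why an exchange model is a natural estimate of the high partial waves.

```python
import numpy as np
from numpy.polynomial import legendre


def partial_waves(amplitude, l_max, n_points=64):
    """Project A(z), with z = cos(theta), onto Legendre partial waves a_l.

    Assumed convention: A(z) = sum_l (2l + 1) a_l P_l(z), so that
    a_l = (1/2) * integral_{-1}^{1} P_l(z) A(z) dz.
    """
    z, w = legendre.leggauss(n_points)  # Gauss-Legendre nodes and weights on [-1, 1]
    values = amplitude(z)
    waves = np.empty(l_max + 1)
    for l in range(l_max + 1):
        coeffs = np.zeros(l + 1)
        coeffs[l] = 1.0  # coefficient vector that selects P_l alone
        waves[l] = 0.5 * np.sum(w * legendre.legval(z, coeffs) * values)
    return waves


# Toy t-channel exchange: a pole at z0 = 1.5, just outside the physical region.
z0 = 1.5


def exchange(z):
    return 1.0 / (z0 - z)


a = partial_waves(exchange, l_max=6)
print(a)  # these equal Q_l(z0) and shrink steadily as l grows
```

The same function applied to a pure P_2(z) amplitude returns a_2 = 1/5 and zero elsewhere, which is a quick check of the normalization.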

Some Lessons from the past I
- All the confusing effects exist, and there is no fundamental (correct) way to remove them. So one should:
  - Minimize the effect of the hard (insoluble) problems, such as “particles from the wrong vertex” and “unestimatable exchange effects” that are sensitive to the slope of unclear Regge trajectories, absorption, etc.
  - Carefully identify where effects are “additive” and where they overlap confusingly.
- Note that many of these effects are intrinsically MORE important in the multiparticle case than in the relatively well-studied πN case.
- Try to estimate the impact of the uncertainties from each effect on the results.
  - It would be very helpful to have systematic, very high statistics studies of relatively clean cases, where the spectroscopy may be less interesting but one can examine the uncertainties.
  - Possibilities are peripherally produced A1, A2, A3 and B1, and even πN → ππN; K or π beams are good.
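The sensitivity to poorly known trajectory slopes can be made concrete with the textbook single-pole form dσ/dt ∝ (s/s0)^(2α(t)−2) and a linear trajectory α(t) = α0 + α′t. This is a toy sketch: the residue is held fixed and the intercept, slopes and s are illustrative values, not fits. The ratio between two slope choices is (s/s0)^(2(α′a − α′b)t), so with the numbers below it is 1 at t = 0 but only about 0.3 at t = −1 GeV².

```python
def regge_dsigma_dt(s, t, alpha0, alpha_prime, s0=1.0, beta=1.0):
    """Toy single-Regge-pole exchange, dsigma/dt ~ beta * (s/s0)**(2*alpha(t) - 2).

    Linear trajectory alpha(t) = alpha0 + alpha_prime * t; s, t, s0 in GeV^2,
    alpha_prime in GeV^-2. The residue beta is held fixed (an assumption).
    """
    alpha = alpha0 + alpha_prime * t
    return beta * (s / s0) ** (2.0 * alpha - 2.0)


def slope_ratio(s, t, alpha0, slope_a, slope_b):
    """Ratio of predictions for two assumed trajectory slopes at the same (s, t)."""
    return regge_dsigma_dt(s, t, alpha0, slope_a) / regge_dsigma_dt(s, t, alpha0, slope_b)


# Illustrative values only: intercept 0.5, slopes 0.9 and 0.7 GeV^-2, s = 20 GeV^2.
for t in (0.0, -0.5, -1.0):
    print(f"t = {t:5.2f} GeV^2  ratio = {slope_ratio(20.0, t, 0.5, 0.9, 0.7):.3f}")
```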

S-Matrix Approach
- S-Matrix ideas that work reasonably well include:
  - Regge theory for the production process
  - Two-component duality, adding resonances (dual to Regge exchange) to background (dual to the Pomeron)
    - Can help to identify whether a resonance is a classic qq̄ state or exotic
  - Use of Regge exchange at the top vertex to estimate the high partial waves in the amplitude analysis
  - Finite energy sum rules for the top vertex, as constraints on low-mass amplitudes and as the most quantitative way of linking high and low masses
- Ignore Regge cuts in production.
- Unitarity effects are not included directly, because with duality that would be double counting.
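For reference, the finite energy sum rules take the following usual textbook form. This is quoted as background and is not taken from the slide. It assumes that above a cutoff N the imaginary part of the amplitude is well described by a sum of Regge terms β_i(t) ν^{α_i(t)}:

```latex
\int_0^{N} \nu^{n}\,\operatorname{Im} A(\nu,t)\,d\nu
  \;=\; \sum_i \frac{\beta_i(t)\,N^{\alpha_i(t)+n+1}}{\alpha_i(t)+n+1}
```

Here ν is the crossing-symmetric energy variable, and the moment n is chosen to match the crossing property of A. The left side is dominated by low-mass resonances and the right side by high-energy Regge parameters. This is what makes the sum rules a quantitative link between low and high masses.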

Investigate Uncertainties
- There are several possible sources of error:
  - Errors in the quasi-two-body and limited-number-of-amplitudes approximation
  - Unitarity (final-state interactions)
  - Errors in the two-component duality picture
  - Exotic particles are produced and are just different
  - Photon beams, π exchange or some other “classic effect” not present in the original πN analyses behaves unexpectedly
  - Failure of the quasi-two-body approximation
  - Regge cuts cannot be ignored
  - Background from other channels
- Develop tests for these both in “easy” cases (such as “old” meson beam data) and in photon beam data at Jefferson Laboratory.
  - Investigate all effects on any interesting result from PWA.
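One way to organize these tests is to refit with each modelling assumption switched on or off in turn and record how far the result moves. The sketch below assumes a hypothetical `fit` callable that takes a dictionary of switches. The switch names and the toy fit are placeholders, not part of any existing PWA code.

```python
from typing import Callable, Dict

Config = Dict[str, bool]


def scan_systematics(fit: Callable[[Config], float], nominal: Config) -> Dict[str, float]:
    """Refit with each model assumption toggled in turn and return the shift it causes."""
    baseline = fit(nominal)
    shifts = {}
    for name in nominal:
        variant = dict(nominal)  # copy so the nominal configuration is untouched
        variant[name] = not variant[name]
        shifts[name] = fit(variant) - baseline
    return shifts


# Hypothetical toy "fit": a fitted quantity that responds to each switch.
def toy_fit(cfg: Config) -> float:
    value = 1.0
    if cfg["include_regge_cuts"]:
        value += 0.10
    if cfg["final_state_interactions"]:
        value += 0.02
    if cfg["background_from_other_channels"]:
        value -= 0.05
    return value


nominal = {
    "include_regge_cuts": False,
    "final_state_interactions": False,
    "background_from_other_channels": False,
}
shifts = scan_systematics(toy_fit, nominal)
for name, shift in sorted(shifts.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:32s} {shift:+.3f}")
```

Toggling one switch at a time cannot reveal effects that overlap confusingly. Pairwise toggles would be the natural next step.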

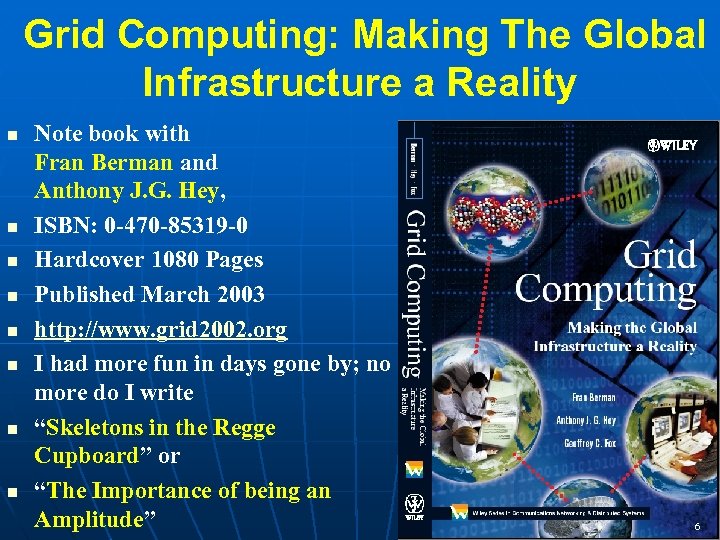

Grid Computing: Making The Global Infrastructure a Reality
- Note: book with Fran Berman and Anthony J. G. Hey, ISBN 0-470-85319-0
- Hardcover, 1080 pages
- Published March 2003
- http://www.grid2002.org
- I had more fun in days gone by; no more do I write “Skeletons in the Regge Cupboard” or “The Importance of being an Amplitude”.

Some Further Links
- A talk on Grid and e-Science was webcast in an Oracle technology series: http://webevents.broadcast.com/techtarget/Oracle/100303/index.asp?loc=10
- See also the “Gap Analysis” survey of Grid technology: http://grids.ucs.indiana.edu/ptliupages/publications/GapAnalysis30June03v2.pdf
- This presentation is at http://grids.ucs.indiana.edu/ptliupages/presentations
- Next semester: a course on “e-Science and the Grid”, given by Access Grid
- The write-up for the May conference describes the proposed physics strategy:
  - http://grids.ucs.indiana.edu/ptliupages/publications/gluonic_gcf.pdf
  - http://grids.ucs.indiana.edu/ptliupages/presentations/pwamay03.ppt

e-Business, e-Science and the Grid
- e-Business captures an emerging view of corporations as dynamic virtual organizations linking employees, customers and stakeholders across the world.
  - The growing use of outsourcing is one example.
- e-Science is the similar vision for scientific research, with international participation in large accelerators, satellites or distributed gene analyses.
- The Grid integrates the best of the Web, traditional enterprise software, high performance computing and peer-to-peer systems to provide the information technology infrastructure for e-moreorlessanything.
- A deluge of data of unprecedented and inevitable size must be managed and understood.
- People, computers, data and instruments must be linked.
- On-demand assignment of experts, computers, networks and storage resources must be supported.

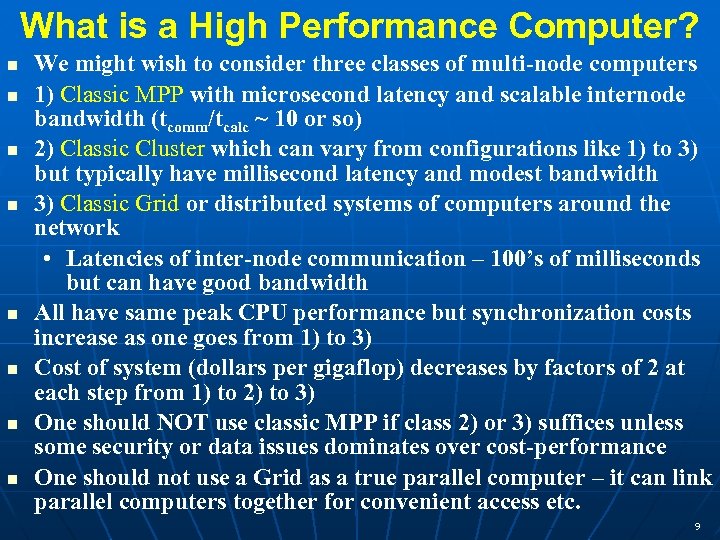

What is a High Performance Computer?
- We might wish to consider three classes of multi-node computers:
  1) Classic MPP with microsecond latency and scalable internode bandwidth (t_comm/t_calc ~ 10 or so)
  2) Classic cluster, which can vary from configurations like 1) to 3) but typically has millisecond latency and modest bandwidth
  3) Classic Grid, or distributed systems of computers around the network
     - Latencies of inter-node communication are 100s of milliseconds, but bandwidth can be good
- All have the same peak CPU performance, but synchronization costs increase as one goes from 1) to 3).
- The cost of the system (dollars per gigaflop) decreases by a factor of 2 at each step from 1) to 2) to 3).
- One should NOT use a classic MPP if class 2) or 3) suffices, unless some security or data issue dominates over cost-performance.
- One should not use a Grid as a true parallel computer; it can link parallel computers together for convenient access, etc.
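The trade-off on this slide can be made concrete with a deliberately crude model. This model is an assumption, not taken from the talk: every synchronization costs one network latency, and bandwidth and load imbalance are ignored. The latencies follow the three classes above. The relative prices apply the "factor of 2 per step" rule, and the job parameters are hypothetical.

```python
def efficiency(compute_s: float, syncs: int, latency_s: float) -> float:
    """Fraction of wall-clock time spent computing when every synchronization
    costs one network latency (bandwidth and load imbalance ignored)."""
    return compute_s / (compute_s + syncs * latency_s)


# (latency in seconds, relative cost per peak gigaflop): MPP = 4, cluster = 2, Grid = 1.
classes = {
    "1) MPP": (1e-6, 4.0),
    "2) cluster": (1e-3, 2.0),
    "3) Grid": (0.1, 1.0),
}

# Hypothetical job: 1 s of computation per node with 1000 synchronizations.
results = {}
for name, (latency, cost) in classes.items():
    eff = efficiency(1.0, 1000, latency)
    results[name] = (eff, cost / eff)  # cost per *delivered* gigaflop
    print(f"{name:12s} efficiency {eff:6.3f}  relative cost {cost / eff:7.1f}")
```

With these made-up parameters, the cluster delivers roughly the same cost per useful gigaflop as the MPP, while the Grid is about 25 times worse. This is consistent with the advice not to use a Grid as a true parallel computer. A job that synchronizes rarely would shift the balance toward classes 2) and 3).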

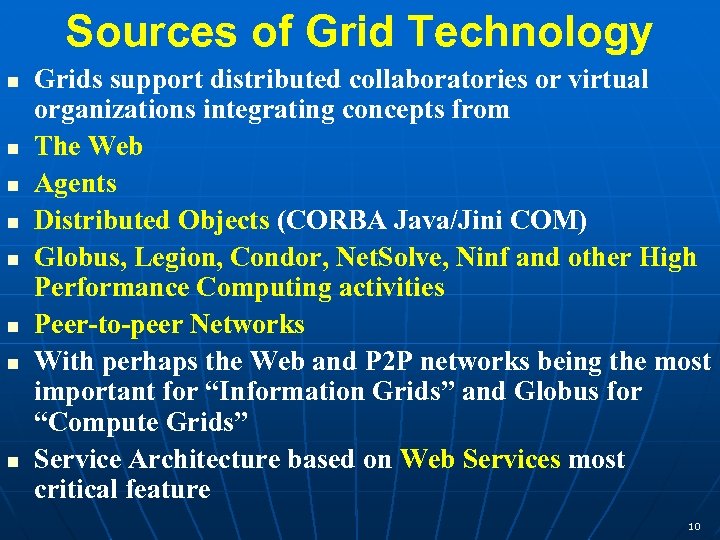

Sources of Grid Technology
- Grids support distributed collaboratories or virtual organizations, integrating concepts from:
  - The Web
  - Agents
  - Distributed objects (CORBA, Java/Jini, COM)
  - Globus, Legion, Condor, NetSolve, Ninf and other high performance computing activities
  - Peer-to-peer networks
- The Web and P2P networks are perhaps the most important for “Information Grids”, and Globus for “Compute Grids”.
- A service architecture based on Web Services is the most critical feature.

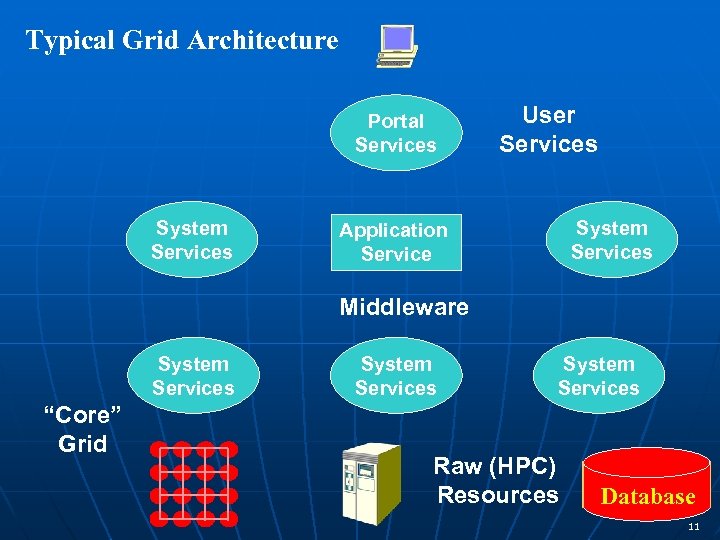

Typical Grid Architecture
- Diagram layers, top to bottom: Portal Services; User Services; Application Service; Middleware; “Core” Grid; Raw (HPC) Resources and Database
- System Services appear alongside the layers.

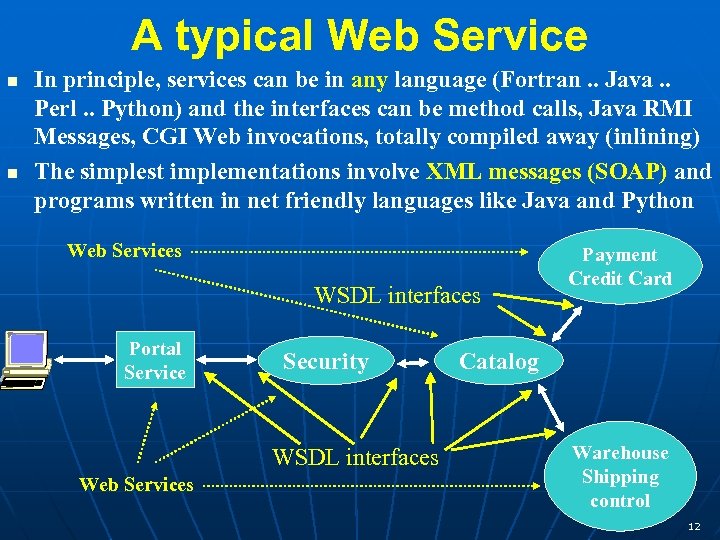

A typical Web Service
- In principle, services can be in any language (Fortran .. Java .. Perl .. Python), and the interfaces can be method calls, Java RMI messages, CGI Web invocations, or totally compiled away (inlining).
- The simplest implementations involve XML messages (SOAP) and programs written in net-friendly languages like Java and Python.
- Diagram labels: Portal Service; Security; Web Services with WSDL interfaces; Payment; Credit Card; Catalog; Warehouse; Shipping control
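To make “XML messages (SOAP)” concrete, here is a minimal sketch that uses only Python's standard library. It builds a SOAP 1.1 envelope for a call to a hypothetical Catalog service operation and parses it back as the receiving service would. The operation `checkStock`, its parameters and the `urn:example:catalog` namespace are invented for illustration. A real deployment would derive the message layout from the service's WSDL and send it over HTTP.

```python
import xml.etree.ElementTree as ET

SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
CATALOG_NS = "urn:example:catalog"  # hypothetical service namespace


def build_request(operation: str, **params: str) -> bytes:
    """Wrap one operation call and its parameters in a SOAP 1.1 envelope."""
    ET.register_namespace("soap", SOAP_ENV)
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    call = ET.SubElement(body, f"{{{CATALOG_NS}}}{operation}")
    for name, value in params.items():
        ET.SubElement(call, f"{{{CATALOG_NS}}}{name}").text = value
    return ET.tostring(envelope, encoding="utf-8")


def parse_request(message: bytes):
    """Recover the operation name and parameters on the receiving side."""
    envelope = ET.fromstring(message)
    call = envelope.find(f"{{{SOAP_ENV}}}Body")[0]  # first child of Body is the call
    operation = call.tag.split("}", 1)[1]  # strip the "{namespace}" prefix
    params = {child.tag.split("}", 1)[1]: child.text for child in call}
    return operation, params


message = build_request("checkStock", itemId="A-1001", quantity="3")
print(message.decode())
```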

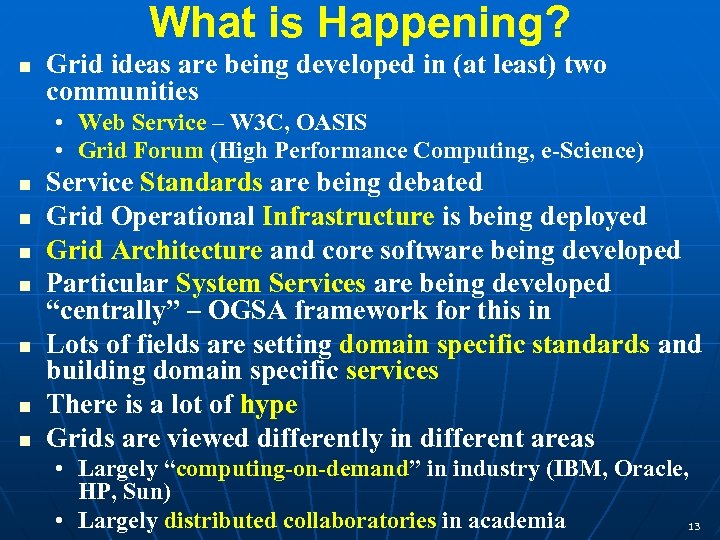

What is Happening?
- Grid ideas are being developed in (at least) two communities:
  - Web Services: W3C, OASIS
  - Grid Forum (high performance computing, e-Science)
- Service standards are being debated.
- Grid operational infrastructure is being deployed.
- Grid architecture and core software are being developed.
- Particular system services are being developed “centrally”; OGSA is the framework for this.
- Lots of fields are setting domain-specific standards and building domain-specific services.
- There is a lot of hype.
- Grids are viewed differently in different areas:
  - Largely “computing-on-demand” in industry (IBM, Oracle, HP, Sun)
  - Largely distributed collaboratories in academia

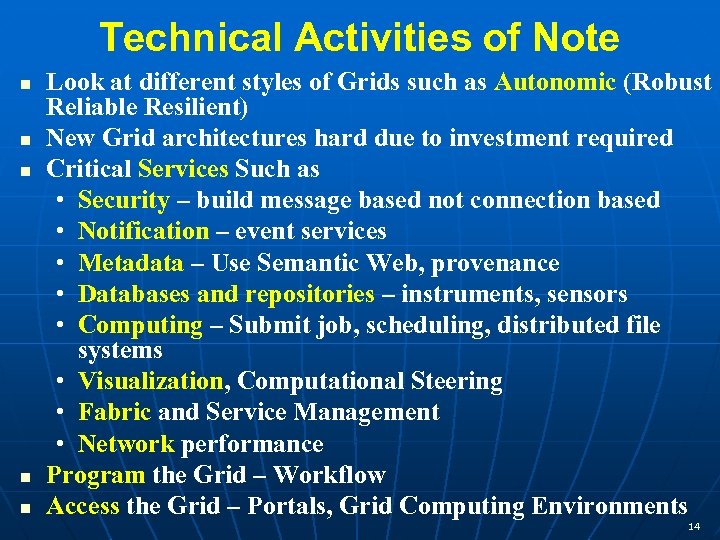

Technical Activities of Note
- Look at different styles of Grids, such as Autonomic (Robust, Reliable, Resilient)
  - New Grid architectures are hard because of the investment required
- Critical services, such as:
  - Security: build message-based, not connection-based
  - Notification: event services
  - Metadata: use the Semantic Web, provenance
  - Databases and repositories: instruments, sensors
  - Computing: submit job, scheduling, distributed file systems
  - Visualization, computational steering
  - Fabric and service management
  - Network performance
- Program the Grid: workflow
- Access the Grid: portals, Grid Computing Environments
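Notification services rest on publish/subscribe. Producers publish events to a topic, and a broker delivers them to whoever subscribed, so producers and consumers never hold a connection to each other. That decoupling fits the "message-based, not connection-based" theme. A minimal in-process sketch follows. The topic names and event fields are hypothetical, and a real Grid notification service would carry the events as network messages.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[str, dict], None]


class NotificationBroker:
    """Minimal in-process topic-based publish/subscribe broker (a sketch, not a Grid standard)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber of the topic; return how many received it."""
        handlers = list(self._subscribers.get(topic, []))  # .get does not create empty topics
        for handler in handlers:
            handler(topic, event)
        return len(handlers)


received = []
broker = NotificationBroker()
broker.subscribe("job.status", lambda topic, event: received.append(event))
broker.publish("job.status", {"job": "fit-42", "state": "DONE"})
broker.publish("data.replica", {"file": "run1234.evt"})  # no subscribers: dropped
print(received)
```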

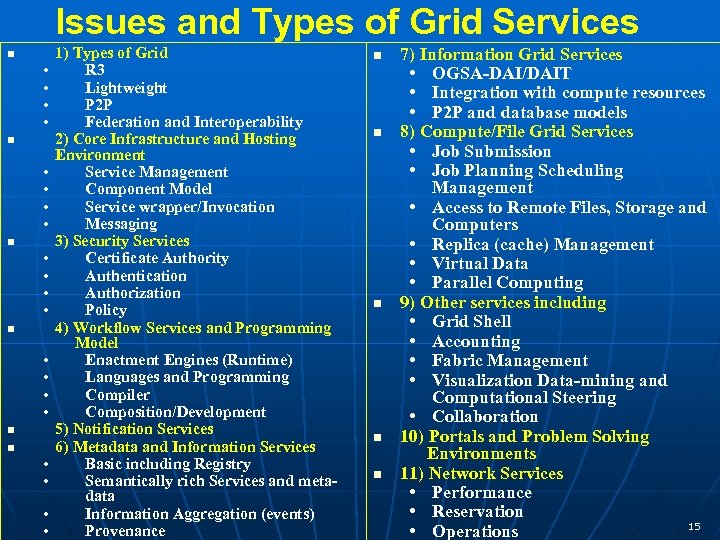

Issues and Types of Grid Services
1) Types of Grid
   - R3
   - Lightweight
   - P2P
   - Federation and Interoperability
2) Core Infrastructure and Hosting Environment
   - Service Management
   - Component Model
   - Service wrapper/Invocation
   - Messaging
3) Security Services
   - Certificate Authority
   - Authentication
   - Authorization
   - Policy
4) Workflow Services and Programming Model
   - Enactment Engines (Runtime)
   - Languages and Programming
   - Compiler
   - Composition/Development
5) Notification Services
6) Metadata and Information Services
   - Basic, including Registry
   - Semantically rich Services and metadata
   - Information Aggregation (events)
   - Provenance
7) Information Grid Services
   - OGSA-DAI/DAIT
   - Integration with compute resources
   - P2P and database models
8) Compute/File Grid Services
   - Job Submission
   - Job Planning, Scheduling, Management
   - Access to Remote Files, Storage and Computers
   - Replica (cache) Management
   - Virtual Data
   - Parallel Computing
9) Other services, including
   - Grid Shell
   - Accounting
   - Fabric Management
   - Visualization, Data-mining and Computational Steering
   - Collaboration
10) Portals and Problem Solving Environments
11) Network Services
    - Performance
    - Reservation
    - Operations
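At its core, a workflow enactment engine (item 4) runs each task once everything it depends on has finished. As a minimal sketch, assuming Python 3.9+ for the standard-library `graphlib`, the dependency graph can be ordered topologically and the tasks run in that order. The task names and toy numbers below are hypothetical. A real engine would invoke remote services, run independent tasks in parallel and handle failures.

```python
from graphlib import TopologicalSorter  # Python 3.9+


def enact(workflow, tasks):
    """Run each task once all of its predecessors have finished.

    workflow maps a task name to the set of task names it depends on;
    tasks maps a task name to a callable taking the dict of earlier results.
    """
    results = {}
    for name in TopologicalSorter(workflow).static_order():  # raises CycleError on cycles
        results[name] = tasks[name](results)
    return results


# Hypothetical PWA-style workflow with toy numbers: fetch data, simulate, fit, then plot.
workflow = {
    "fetch_events": set(),
    "monte_carlo": set(),
    "fit": {"fetch_events", "monte_carlo"},
    "plot": {"fit"},
}
tasks = {
    "fetch_events": lambda r: [1.2, 1.4, 1.3],
    "monte_carlo": lambda r: 0.5,  # toy acceptance
    "fit": lambda r: sum(r["fetch_events"]) / len(r["fetch_events"]) / r["monte_carlo"],
    "plot": lambda r: f"fit result {r['fit']:.2f}",
}
results = enact(workflow, tasks)
print(results["plot"])
```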

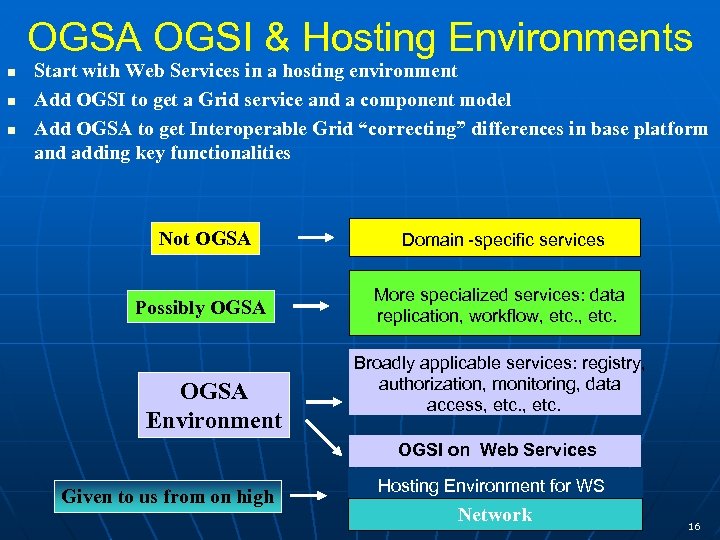

OGSA, OGSI & Hosting Environments
- Start with Web Services in a hosting environment.
- Add OGSI to get a Grid service and a component model.
- Add OGSA to get an interoperable Grid, “correcting” differences in the base platform and adding key functionalities.
- Diagram layers, top to bottom:
  - Not OGSA: domain-specific services
  - Possibly OGSA: more specialized services (data replication, workflow, etc.)
  - OGSA environment: broadly applicable services (registry, authorization, monitoring, data access, etc.)
  - OGSI on Web Services (“given to us from on high”)
  - Hosting environment for WS
  - Network
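The OGSA environment layer lists a registry among its broadly applicable services. Grid registries commonly use soft state: a registration expires unless the service refreshes it, so crashed services disappear without explicit cleanup. A minimal sketch of that idea follows. The class, service names and properties are hypothetical and do not reproduce the OGSI interfaces. An injectable clock keeps the demonstration deterministic.

```python
import time
from typing import Callable, Dict, List, Tuple


class SoftStateRegistry:
    """Registry whose entries expire unless refreshed before their lifetime runs out."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: Dict[str, Tuple[dict, float]] = {}  # name -> (properties, expiry time)

    def register(self, name: str, properties: dict, lifetime_s: float) -> None:
        """Add or refresh an entry; it stays visible for lifetime_s seconds."""
        self._entries[name] = (properties, self._clock() + lifetime_s)

    def lookup(self, **wanted) -> List[str]:
        """Names of live services whose properties match every wanted key/value."""
        now = self._clock()
        self._entries = {n: e for n, e in self._entries.items() if e[1] > now}  # drop expired
        return sorted(n for n, (props, _) in self._entries.items()
                      if all(props.get(k) == v for k, v in wanted.items()))


fake_now = [0.0]
registry = SoftStateRegistry(clock=lambda: fake_now[0])
registry.register("fitter-a", {"type": "fitting"}, lifetime_s=60)
registry.register("db-1", {"type": "data-access"}, lifetime_s=600)
print(registry.lookup(type="fitting"))  # ['fitter-a']
fake_now[0] = 120.0  # fitter-a was never refreshed, so its entry has lapsed
print(registry.lookup(type="fitting"))  # []
```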

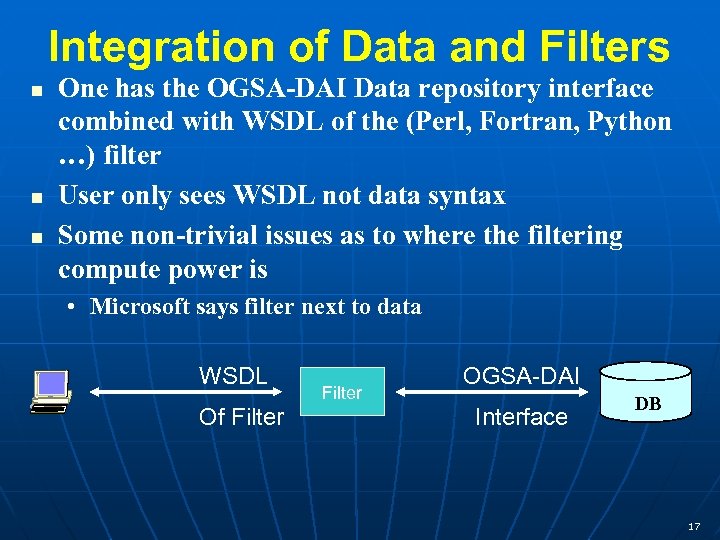

Integration of Data and Filters
- One has the OGSA-DAI data repository interface combined with the WSDL of the (Perl, Fortran, Python …) filter.
- The user sees only WSDL, not the data syntax.
- There are some non-trivial issues as to where the filtering compute power is.
  - Microsoft says: filter next to the data.
- Diagram labels: WSDL of Filter; OGSA-DAI Interface; DB
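Where the filtering happens can be illustrated with an in-memory SQLite table standing in for the database behind the OGSA-DAI interface. This is an assumption; OGSA-DAI itself is not used here, and the table and values are synthetic. Both functions return the same selection, but filtering next to the data ships only the matching rows to the caller.

```python
import sqlite3

# Hypothetical event table standing in for the "DB" box on the slide.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, mass REAL)")
conn.executemany("INSERT INTO events (mass) VALUES (?)",
                 [(0.5 + 0.001 * i,) for i in range(2000)])


def filter_at_client(lo, hi):
    """Ship every row to the caller, then filter there."""
    rows = conn.execute("SELECT id, mass FROM events ORDER BY id").fetchall()
    return [r for r in rows if lo <= r[1] <= hi], len(rows)


def filter_next_to_data(lo, hi):
    """Run the filter inside the database, so only matching rows are shipped."""
    rows = conn.execute(
        "SELECT id, mass FROM events WHERE mass BETWEEN ? AND ? ORDER BY id", (lo, hi)
    ).fetchall()
    return rows, len(rows)


selected_a, shipped_a = filter_at_client(1.2, 1.4)
selected_b, shipped_b = filter_next_to_data(1.2, 1.4)
print(len(selected_a), "selected;", shipped_a, "rows shipped vs", shipped_b)
```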

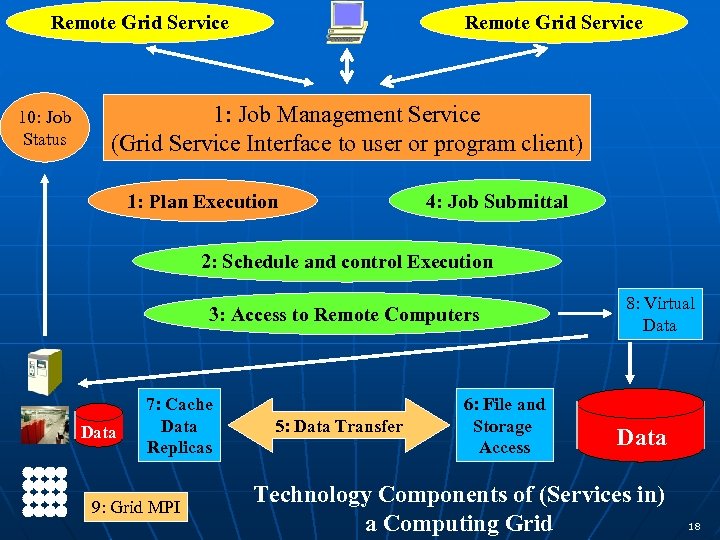

Technology Components of (Services in) a Computing Grid
- Job Management Service (Grid Service interface to user or program client)
- Numbered components:
  - 1: Plan Execution
  - 2: Schedule and control Execution
  - 3: Access to Remote Computers
  - 4: Job Submittal
  - 5: Data Transfer
  - 6: File and Storage Access
  - 7: Cache Data Replicas
  - 8: Virtual Data
  - 9: Grid MPI
  - 10: Job Status
- Other diagram labels: Remote Grid Service (two instances); Data
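The Job Management Service ties these components together, and the job status it reports (component 10) is naturally modelled as a state machine. The sketch below maps planning (1), scheduling (2), submittal (4) and status (10) onto states. The state names and allowed transitions are assumptions, not part of any Grid toolkit.

```python
from enum import Enum


class JobState(Enum):
    PLANNED = "planned"
    SCHEDULED = "scheduled"
    SUBMITTED = "submitted"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


# Allowed transitions (an assumption for illustration, not a Grid standard).
TRANSITIONS = {
    JobState.PLANNED: {JobState.SCHEDULED, JobState.FAILED},
    JobState.SCHEDULED: {JobState.SUBMITTED, JobState.FAILED},
    JobState.SUBMITTED: {JobState.RUNNING, JobState.FAILED},
    JobState.RUNNING: {JobState.DONE, JobState.FAILED},
    JobState.DONE: set(),
    JobState.FAILED: set(),
}


class Job:
    """Tracks one job's status so that a client can query it."""

    def __init__(self, job_id: str) -> None:
        self.job_id = job_id
        self.state = JobState.PLANNED
        self.history = [self.state]

    def advance(self, new_state: JobState) -> None:
        """Move to new_state, refusing transitions the table does not allow."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.job_id}: cannot go from {self.state.value} to {new_state.value}")
        self.state = new_state
        self.history.append(new_state)


job = Job("pwa-fit-001")  # hypothetical job identifier
for step in (JobState.SCHEDULED, JobState.SUBMITTED, JobState.RUNNING, JobState.DONE):
    job.advance(step)
print([s.value for s in job.history])
```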

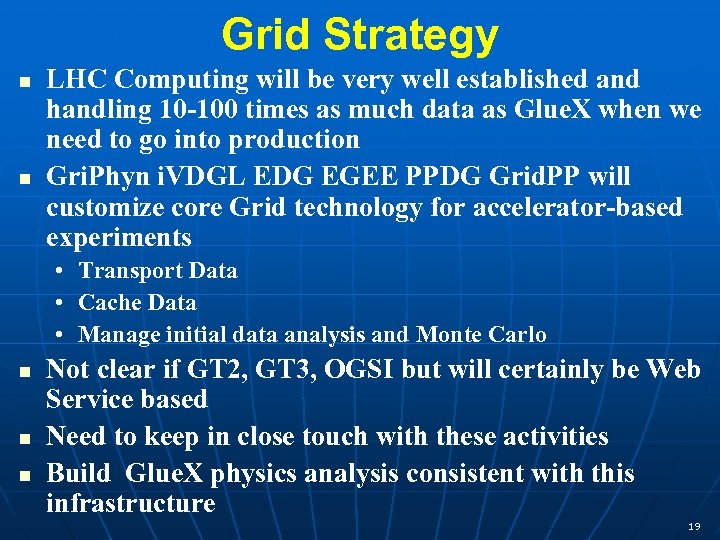

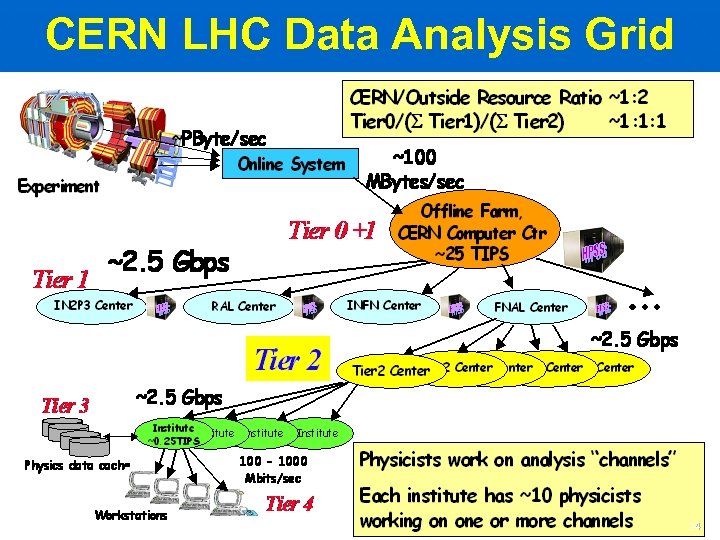

Grid Strategy
- LHC Computing will be very well established, and handling 10-100 times as much data as GlueX, by the time we need to go into production.
- GriPhyN, iVDGL, EDG, EGEE, PPDG and GridPP will customize core Grid technology for accelerator-based experiments:
  - Transport data
  - Cache data
  - Manage initial data analysis and Monte Carlo
- It is not clear whether this will be GT2, GT3 or OGSI, but it will certainly be Web Service based.
- We need to keep in close touch with these activities.
- Build GlueX physics analysis consistent with this infrastructure.

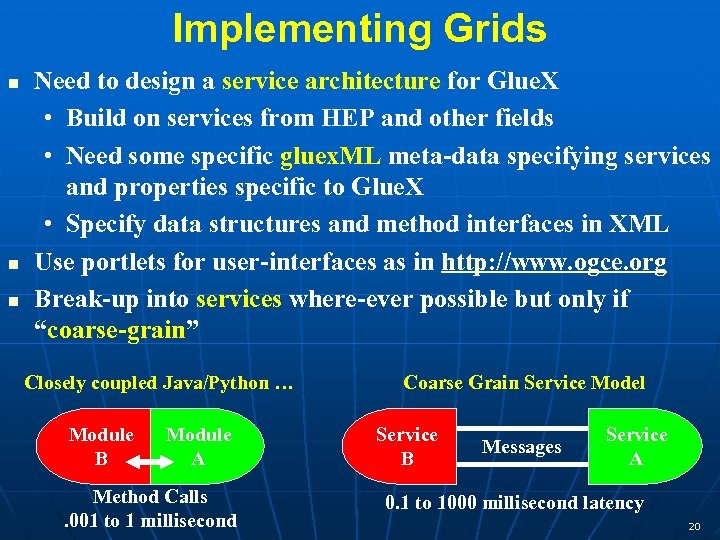

Implementing Grids
- We need to design a service architecture for GlueX:
  - Build on services from HEP and other fields
  - Need some specific gluexML metadata specifying services and properties specific to GlueX
  - Specify data structures and method interfaces in XML
- Use portlets for user interfaces, as in http://www.ogce.org
- Break up into services wherever possible, but only if “coarse-grain”
- Diagram: closely coupled Java/Python … modules (Module A, Module B) communicate by method calls with .001 to 1 millisecond latency; in the coarse-grain service model, services (Service A, Service B) communicate by messages with 0.1 to 1000 millisecond latency.
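The “coarse-grain” rule can be quantified with a simple latency model, assumed here rather than taken from the talk. If each call costs one fixed latency and carries n items of work, the lost fraction is latency / (latency + n × work). Solving for n gives the smallest batch that keeps the overhead under a target. The two latencies come from the ends of the ranges on the slide. The 3 ms of work per item and the 5% target are hypothetical.

```python
import math


def overhead_fraction(latency_s: float, work_per_item_s: float, batch: int) -> float:
    """Fraction of time lost to latency when one call carries `batch` items of work."""
    return latency_s / (latency_s + batch * work_per_item_s)


def min_batch(latency_s: float, work_per_item_s: float, max_overhead: float) -> int:
    """Smallest batch that keeps latency overhead at or below max_overhead.

    Solves latency / (latency + n * work) <= f for n, i.e.
    n >= latency * (1 - f) / (f * work).
    """
    n = latency_s * (1.0 - max_overhead) / (max_overhead * work_per_item_s)
    return max(1, math.ceil(n))


# 1 microsecond method call vs 100 ms service message; 3 ms work per item, 5% target.
for label, latency in (("method call", 1e-6), ("service message", 0.1)):
    n = min_batch(latency, 0.003, 0.05)
    print(f"{label:16s} batch >= {n:4d}  overhead {overhead_fraction(latency, 0.003, n):.3f}")
```

With these made-up numbers, a method call is cheap enough to invoke per item, while a 100 ms service message needs several hundred items per call. This is why services should wrap only coarse-grain work.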

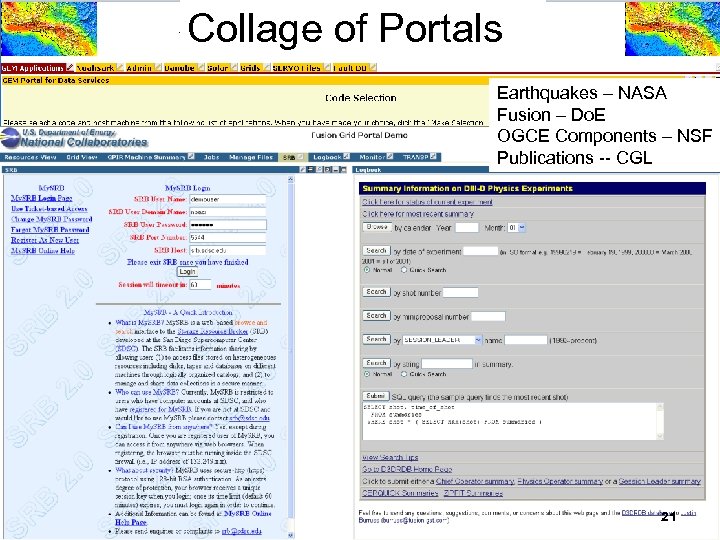

Collage of Portals
- Earthquakes: NASA
- Fusion: DoE
- OGCE Components: NSF
- Publications: CGL

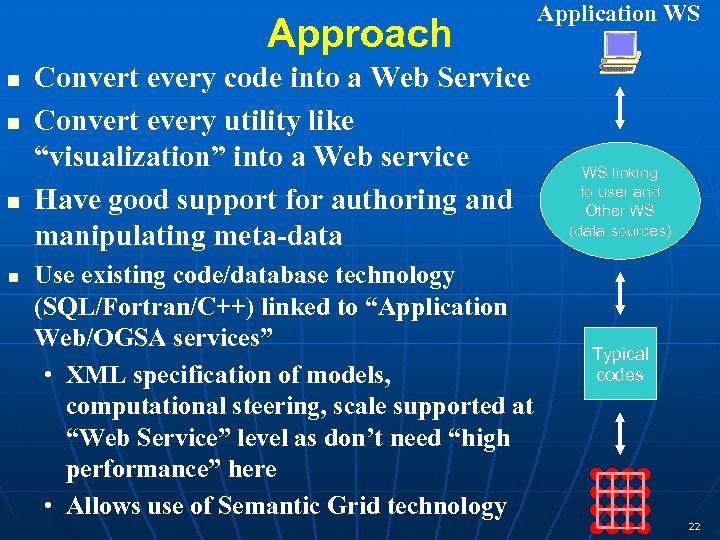

Approach
- Convert every code into a Web Service.
- Convert every utility, like “visualization”, into a Web Service.
- Have good support for authoring and manipulating metadata.
- Use existing code/database technology (SQL/Fortran/C++) linked to “Application Web/OGSA services”:
  - XML specification of models, computational steering and scale are supported at the “Web Service” level, since “high performance” is not needed there
  - This allows use of Semantic Grid technology
- Diagram labels: Typical codes; Application WS; WS linking to user and other WS (data sources)
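“Convert every code into a Web Service” amounts to putting a message interface in front of existing code without touching its internals. A minimal sketch follows, in which a hypothetical legacy routine is wrapped so that it is driven entirely by XML request and reply messages. The message layout and all names are assumptions. A real service would also publish WSDL and receive the messages over HTTP/SOAP.

```python
import xml.etree.ElementTree as ET
from typing import Callable, List


def legacy_chi_square(observed: List[float], expected: List[float]) -> float:
    """Stand-in for an existing numerical code (hypothetical)."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


def as_xml_service(func: Callable[..., float]) -> Callable[[bytes], bytes]:
    """Wrap a function so that it is driven entirely by XML messages.

    Each child element of the request is one keyword argument holding a
    whitespace-separated list of numbers; the reply is a <result> element.
    """
    def handle(request: bytes) -> bytes:
        root = ET.fromstring(request)
        kwargs = {child.tag: [float(x) for x in (child.text or "").split()] for child in root}
        reply = ET.Element("result", operation=func.__name__)
        reply.text = repr(func(**kwargs))  # repr round-trips the float exactly
        return ET.tostring(reply)
    return handle


service = as_xml_service(legacy_chi_square)
response = service(b"<request><observed>10 20</observed><expected>12 18</expected></request>")
print(response.decode())
```

The legacy routine is unchanged. Only the thin `handle` layer knows about XML, which is the point of wrapping at the “Web Service” level where high performance is not needed.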

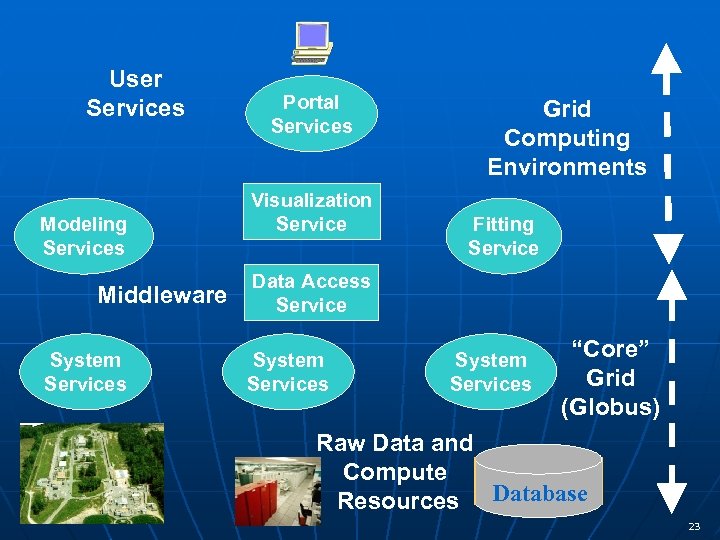

Diagram labels: User Services; Modeling Services; Middleware; System Services; Portal Services; Visualization Service; Grid Computing Environments; Fitting Service; Data Access Service; “Core” Grid (Globus); Raw Data and Compute Resources; Database
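This diagram places visualization, fitting and data access services between a portal and the core Grid. One way to express that separation in code is to make the portal depend only on service interfaces. A minimal sketch using Python protocols follows. All class names and the synthetic data are hypothetical. In the Grid setting, each implementation would sit behind a Web Service interface rather than run in-process.

```python
from statistics import mean, stdev
from typing import List, Protocol, Tuple


class DataAccessService(Protocol):
    def events(self, run: int) -> List[float]: ...


class FittingService(Protocol):
    def fit(self, values: List[float]) -> Tuple[float, float]: ...


class VisualizationService(Protocol):
    def render(self, estimate: Tuple[float, float]) -> str: ...


class ToyData:
    def events(self, run: int) -> List[float]:
        return [1.0 + 0.1 * ((run + i) % 5) for i in range(50)]  # synthetic values


class MeanFitter:
    def fit(self, values: List[float]) -> Tuple[float, float]:
        # Sample mean and its standard error
        return mean(values), stdev(values) / len(values) ** 0.5


class TextPlot:
    def render(self, estimate: Tuple[float, float]) -> str:
        value, error = estimate
        return f"estimate = {value:.3f} +/- {error:.3f}"


class Portal:
    """User-facing layer that composes the services without knowing their internals."""

    def __init__(self, data: DataAccessService, fitter: FittingService,
                 viz: VisualizationService) -> None:
        self.data, self.fitter, self.viz = data, fitter, viz

    def analyse(self, run: int) -> str:
        return self.viz.render(self.fitter.fit(self.data.events(run)))


summary = Portal(ToyData(), MeanFitter(), TextPlot()).analyse(run=7)
print(summary)
```

Swapping in a different fitting or data access implementation requires no change to `Portal`, as long as the replacement offers the same methods.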

CERN LHC Data Analysis Grid
