041146cebc9379b4f5f658d79deed81e.ppt

- Number of slides: 53

Review of Daresbury Workshop. MICE DAQ Workshop, Fermilab, February 10, 2006. A. Bross

Daresbury MICE DAQ WS

- The first MICE DAQ Workshop was held at Daresbury Lab in September 2005
- Focus was to give an overview of the requirements of the experiment and to discuss possible hardware and software implementations
- Included front-end, monitoring and controls

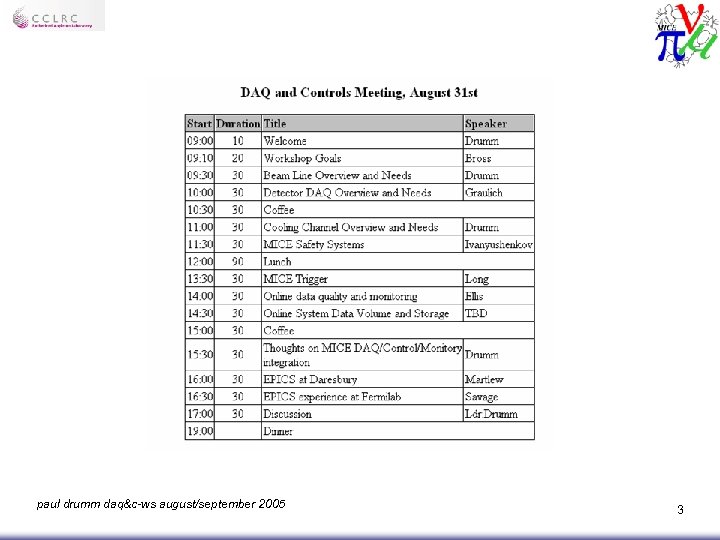

paul drumm daq&c-ws august/september 2005 3

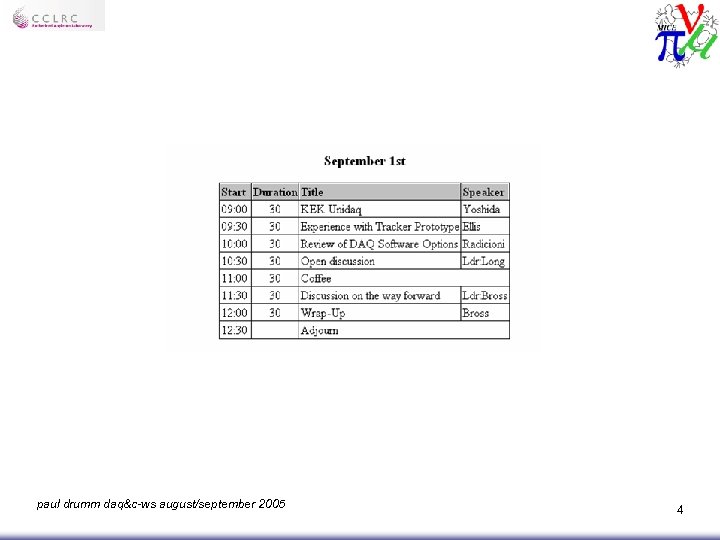


DAQ Concepts

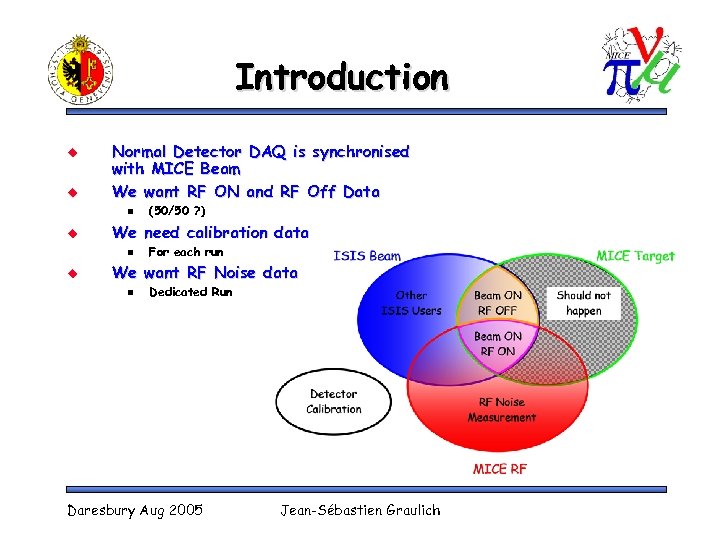

Introduction

- Normal detector DAQ is synchronised with the MICE beam
- We want RF On and RF Off data
  - (50/50?)
- We need calibration data
  - For each run
- We want RF noise data
  - Dedicated run

Daresbury Aug 2005, Jean-Sébastien Graulich

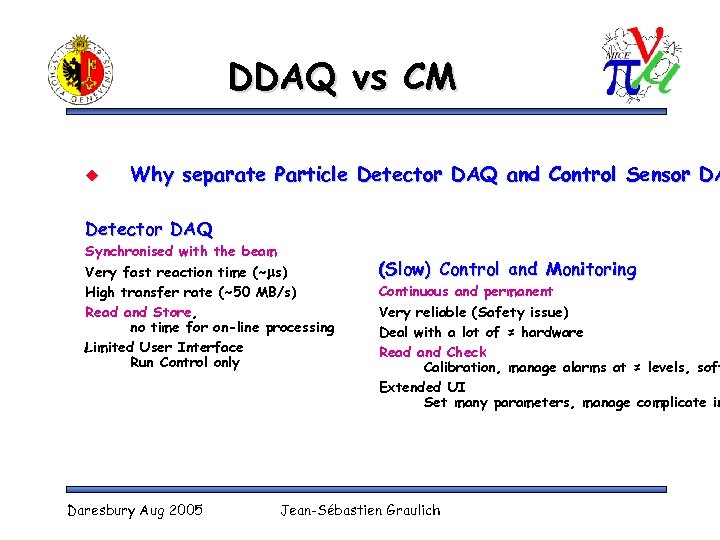

DDAQ vs CM

Why separate particle detector DAQ and control sensor DAQ?

Detector DAQ

- Synchronised with the beam
- Very fast reaction time (~ms)
- High transfer rate (~50 MB/s)
- Read and store, no time for on-line processing
- Limited user interface: run control only

(Slow) Control and Monitoring

- Continuous and permanent
- Very reliable (safety issue)
- Deal with a lot of different hardware
- Read and check: calibration, manage alarms at different levels, soft…
- Extended UI: set many parameters, manage complicate in…

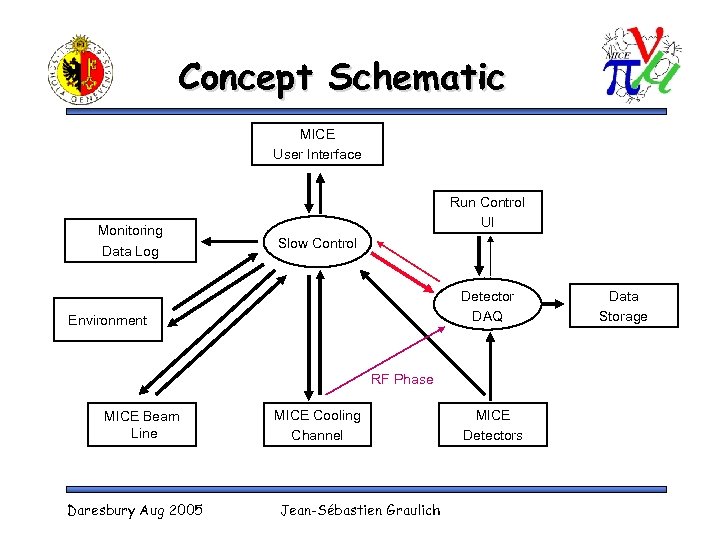

Concept Schematic

Diagram labels: MICE User Interface, Monitoring, Data Log, Run Control UI, Slow Control, Detector DAQ, Environment, RF Phase, MICE Beam Line, MICE Cooling Channel, MICE Detectors, Data Storage.

The Parts of MICE Parts is Parts

Beam Line Overview and Controls Needs

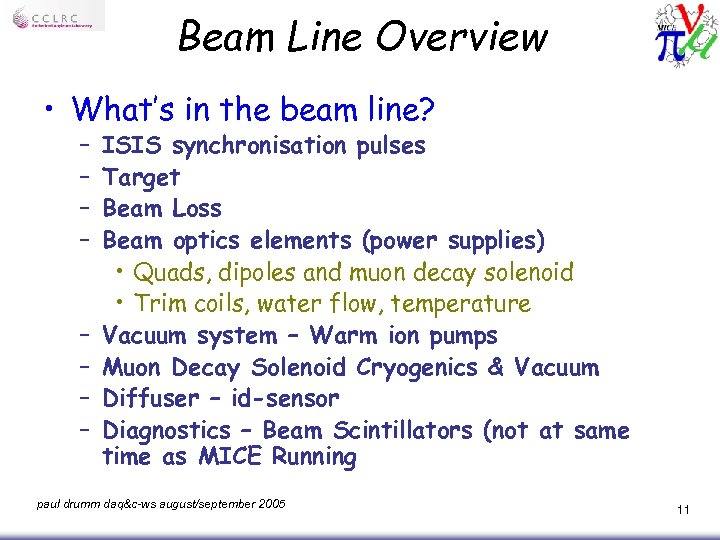

Beam Line Overview

- What's in the beam line?
  - ISIS synchronisation pulses
  - Target
  - Beam loss
  - Beam optics elements (power supplies)
    - Quads, dipoles and muon decay solenoid
    - Trim coils, water flow, temperature
  - Vacuum system: warm ion pumps
  - Muon decay solenoid cryogenics & vacuum
  - Diffuser: ID sensor
  - Diagnostics: beam scintillators (not at same time as MICE running)

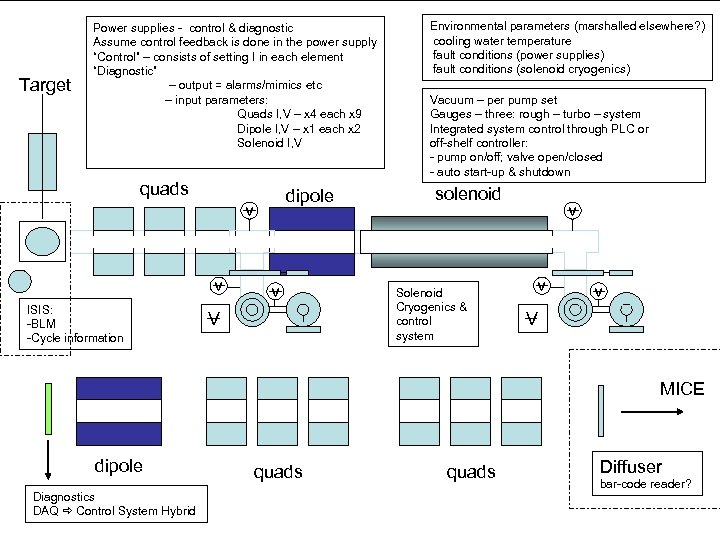

Power supplies: control & diagnostic

- Assume control feedback is done in the power supply
- "Control" consists of setting I in each element
- "Diagnostic": output = alarms/mimics etc.
- Input parameters:
  - Quads: I, V (x 4 each, x 9)
  - Dipole: I, V (x 1 each, x 2)
  - Solenoid: I, V
- ISIS: BLM, cycle information
- Environmental parameters (marshalled elsewhere?): cooling water, temperature, fault conditions (power supplies), fault conditions (solenoid cryogenics)
- Vacuum, per pump set
  - Gauges, three: rough, turbo, system
  - Integrated system control through PLC or off-the-shelf controller: pump on/off, valve open/closed, auto start-up & shutdown
- Solenoid cryogenics & control system

Diagram labels: Target, quads, dipole, solenoid, dipole, quads, MICE, Hybrid quads, Diffuser (bar-code reader?), Diagnostics, DAQ, Control System.

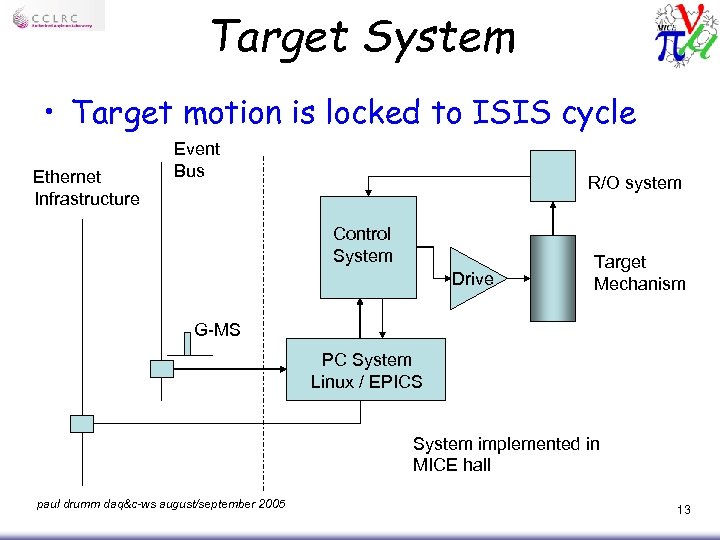

Target System

- Target motion is locked to ISIS cycle
- System implemented in MICE hall

Diagram labels: Ethernet, Infrastructure, Event Bus, R/O system, Control System, Drive, Target Mechanism, G-MS, PC System (Linux / EPICS).

- Mix of levels of monitoring & control
  - Dedicated hardware
    - To drive the motors
    - Read the position
    - Control the current demand
  - Control system
    - Used to set parameters in hardware: amplitude, delays, speed
    - Used to read & analyse diagnostic info: position…
  - GUI: simplified user interaction
    - Used to display diagnostics info

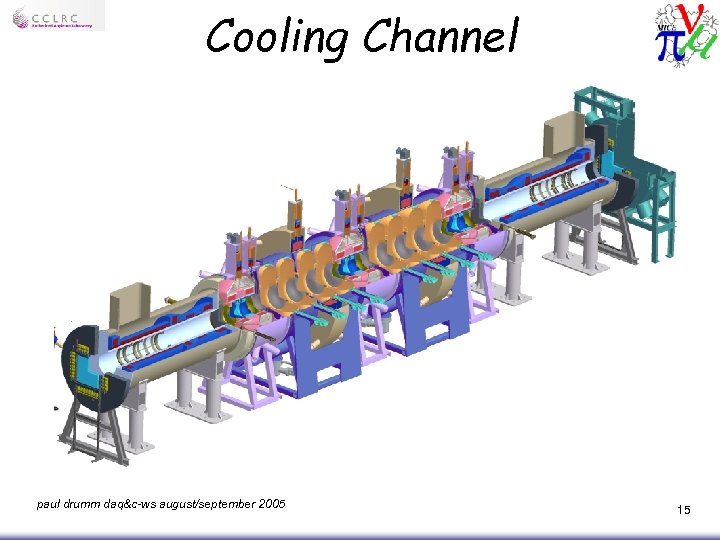

Cooling Channel

Physics Parameters

- Monitoring system can record many parameters, but:
- What are the critical parameters?
- Is the following sufficient?
  - Beam Line & Target
    - Almost irrelevant
    - Dipole one = momentum of pions
    - Dipole two = momentum setting of muon beam
    - Diffuser = energy loss = momentum into MICE
      - Poor measurement & p measured by TOF and Tracker
    - Use table of settings: record table-ID when loaded
    - "All ok" should be a gate condition
  - Magnets/Absorber
    - Table of settings as above
  - RF: more dynamic information is needed
    - High Q system: what does this mean for V & f?
    - Tuning mechanism: no details yet
    - Pulse to pulse
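One way to read the "table of settings" idea is sketched below in Python. Each subsystem loads a named table, the table ID is recorded with the run, and data taking is gated on every subsystem reporting "all ok". The class, the field names (`table_id`, `all_ok`) and the table IDs are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Subsystem:
    """Hypothetical view of one monitored subsystem (beam line, magnets, ...)."""
    name: str
    table_id: str   # ID of the settings table that was loaded
    all_ok: bool    # True when no fault is raised for this subsystem


def gate_open(subsystems):
    """The gate is open only if every subsystem reports 'all ok'."""
    return all(s.all_ok for s in subsystems)


def run_record(subsystems):
    """Record which table each subsystem loaded, instead of every parameter."""
    return {s.name: s.table_id for s in subsystems}


subsystems = [
    Subsystem("beamline", "BL-007", True),
    Subsystem("magnets", "MAG-012", True),
    Subsystem("absorber", "ABS-003", False),  # e.g. a fault warning is active
]

print(run_record(subsystems))
print("gate open:", gate_open(subsystems))
```

Recording only the table ID keeps the data stream small, at the cost of trusting that the hardware really matches the table; the "all ok" flag is what carries that guarantee in this sketch.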

Superconducting magnets

- Current setting
  - Process control: turn on/off/adjust
  - Passive monitoring: status
- Power supply = lethargic parameters
- Transducers = V & I
- Temperature monitoring
- Magnetic field measurements
  - 3D Hall probes
  - CANBus
  - PCI/USB/VME interfaces available
- Feedback system or…
- Fault warnings
  - Temperatures / operational features
- MLS: record settings, report faults, "All ok" in gate

Absorber (and Focus Coils)

- Absorber & Focus Coil Module
  - Mike Courthold's talk on Hydrogen & Absorber Systems
- Process control
- Passive monitoring
  - Suite of instruments: temperature, fluid level, thickness (tricky)
- Fault warnings
- MLS: record settings, report faults, "All ok" in gate

RF (& Coupling Coil)

- RF cavity
  - Tuning
  - Cavity power level (amplitude)
  - Cavity phase
- RF power
  - LL RF (~kW)
  - Driver RF (300 kW)
  - Power RF (4 MW)

MICE has separate tasks to develop cavity and power systems, but they are closely linked in a closed loop.

The Parts of MICE Parts is Parts

MICE Safety System: DE Baynham, TW Bradshaw, MJD Courthold, Y Ivanyushenkov

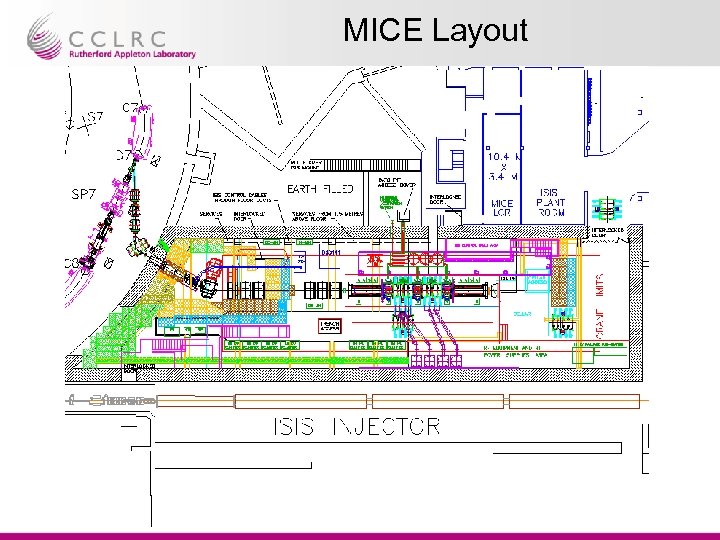

MICE Layout

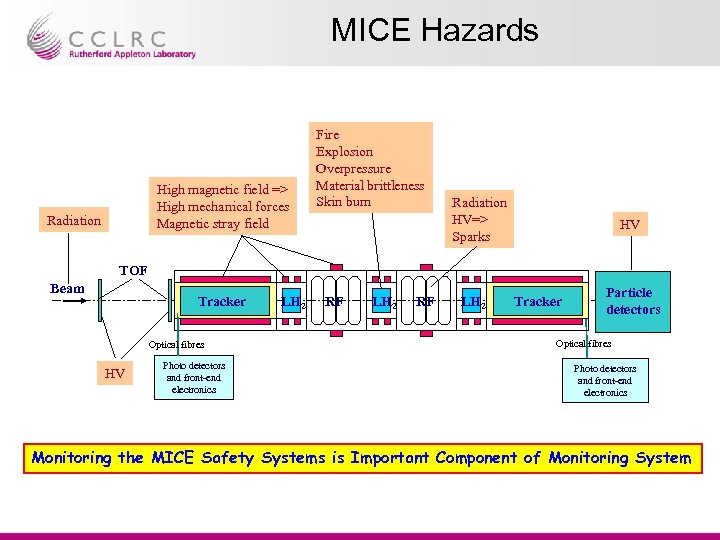

MICE Hazards

Hazards listed: high magnetic field => high mechanical forces, magnetic stray field, radiation, fire, explosion, overpressure, material brittleness, skin burn, HV => sparks.

Diagram labels: Beam, TOF, Tracker, particle detectors, optical fibres, photo detectors and front-end electronics, LH2, RF, HV.

Monitoring the MICE safety systems is an important component of the monitoring system.

Controls and Monitoring Architecture

EPICS Experience at Fermilab. Geoff Savage, August 2005, Controls and Monitoring Group

What is EPICS?

- Experimental Physics and Industrial Control System
  - Collaboration
  - Control system architecture
  - Software toolkit
- Integrated set of software building blocks for implementing a distributed control system
- www.aps.anl.gov/epics

Why EPICS?

- Availability of device interfaces that match or are similar to our hardware
- Ease with which the system can be extended to include our experiment-specific devices
- Existence of a large and enthusiastic user community that understands our problems and is willing to offer advice and guidance

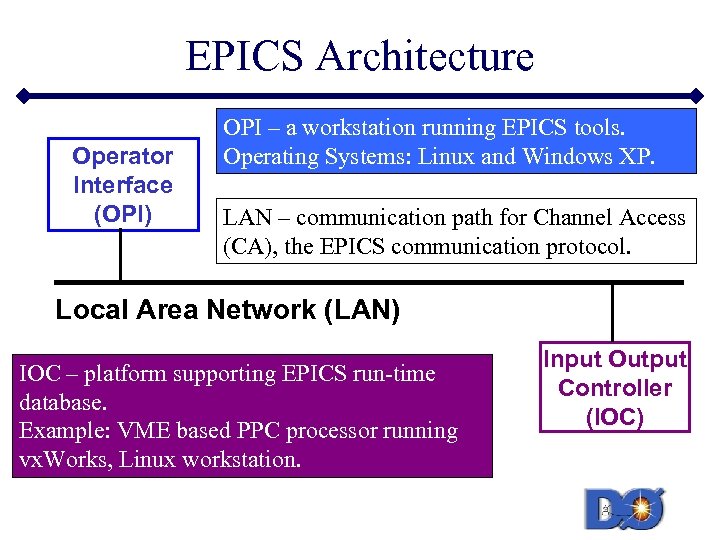

EPICS Architecture

- Operator Interface (OPI): a workstation running EPICS tools. Operating systems: Linux and Windows XP.
- Local Area Network (LAN): communication path for Channel Access (CA), the EPICS communication protocol.
- Input Output Controller (IOC): platform supporting the EPICS run-time database. Examples: VME-based PPC processor running VxWorks, Linux workstation.

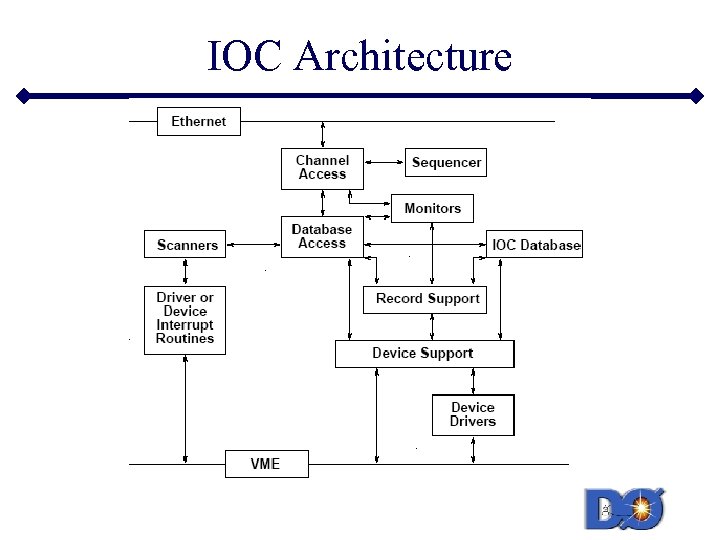

IOC Architecture

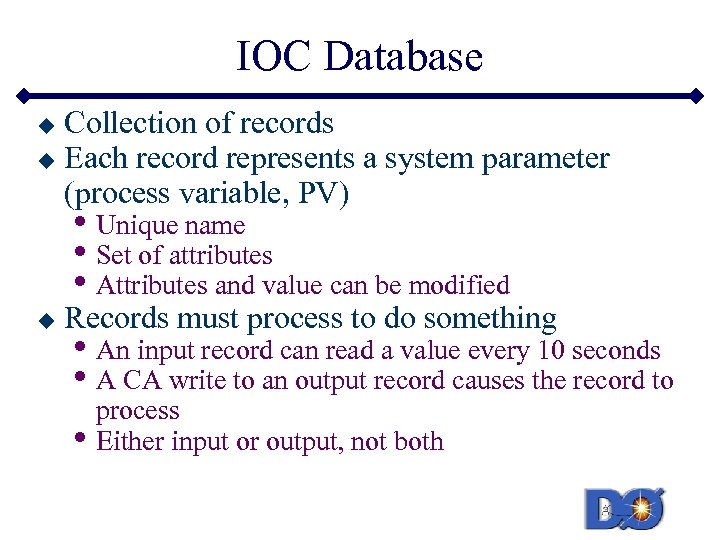

IOC Database

- Collection of records
- Each record represents a system parameter (process variable, PV)
  - Unique name
  - Set of attributes
  - Attributes and value can be modified
- Records must process to do something
  - An input record can read a value every 10 seconds
  - A CA write to an output record causes the record to process
  - Either input or output, not both
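The record behaviour described on this slide can be illustrated with a toy model in Python. This is not EPICS code and uses no EPICS API: the classes, the PV names and the fake hardware dictionary are assumptions made for illustration, and the attribute names `VAL` and `SCAN` only loosely echo EPICS field names. It shows the two ways a record gets processed: an input record on a periodic scan, and an output record when a value is written to it.

```python
class Record:
    """Toy stand-in for an IOC record: a unique name plus a set of attributes."""

    def __init__(self, name, **attributes):
        self.name = name
        self.attributes = {"VAL": None, **attributes}


class InputRecord(Record):
    """Reads a value from (simulated) hardware each time it processes."""

    def __init__(self, name, read_hw, scan_period_s):
        super().__init__(name, SCAN=scan_period_s)
        self._read_hw = read_hw

    def process(self):
        self.attributes["VAL"] = self._read_hw()


class OutputRecord(Record):
    """Writes its value to (simulated) hardware each time it processes."""

    def __init__(self, name, write_hw):
        super().__init__(name)
        self._write_hw = write_hw

    def put(self, value):
        # A write to an output record causes the record to process.
        self.attributes["VAL"] = value
        self.process()

    def process(self):
        self._write_hw(self.attributes["VAL"])


class Database:
    """Collection of records, keyed by their unique names."""

    def __init__(self):
        self.records = {}

    def add(self, record):
        if record.name in self.records:
            raise ValueError(f"duplicate record name: {record.name}")
        self.records[record.name] = record

    def scan(self, elapsed_s):
        """Process every scanned record whose period divides elapsed_s."""
        for rec in self.records.values():
            period = rec.attributes.get("SCAN")
            if period and elapsed_s % period == 0:
                rec.process()


hardware = {"temperature": 4.2, "current_setpoint": 0.0}  # fake device state

db = Database()
db.add(InputRecord("MAG1:TEMP", lambda: hardware["temperature"], scan_period_s=10))
db.add(OutputRecord("MAG1:ISET", lambda v: hardware.update(current_setpoint=v)))

db.scan(elapsed_s=10)                # the 10-second scan fires
db.records["MAG1:ISET"].put(150.0)   # a write processes the output record
print(db.records["MAG1:TEMP"].attributes["VAL"], hardware["current_setpoint"])
```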

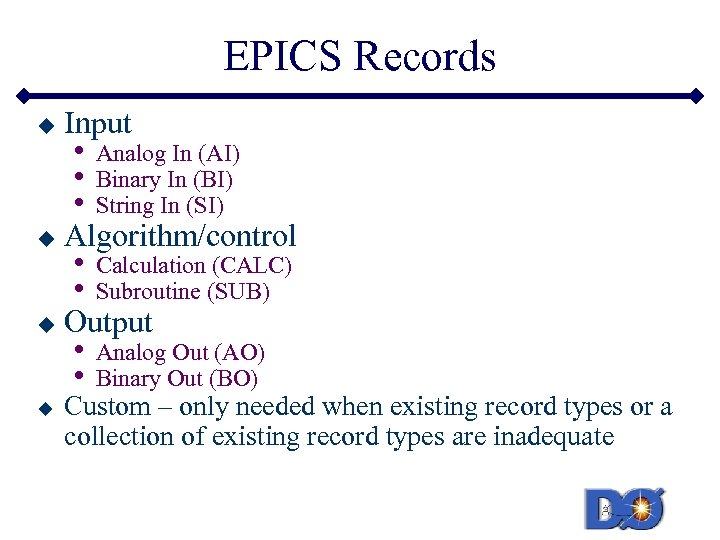

EPICS Records

- Input
  - Analog In (AI)
  - Binary In (BI)
  - String In (SI)
- Algorithm/control
  - Calculation (CALC)
  - Subroutine (SUB)
- Output
  - Analog Out (AO)
  - Binary Out (BO)
- Custom: only needed when existing record types or a collection of existing record types are inadequate

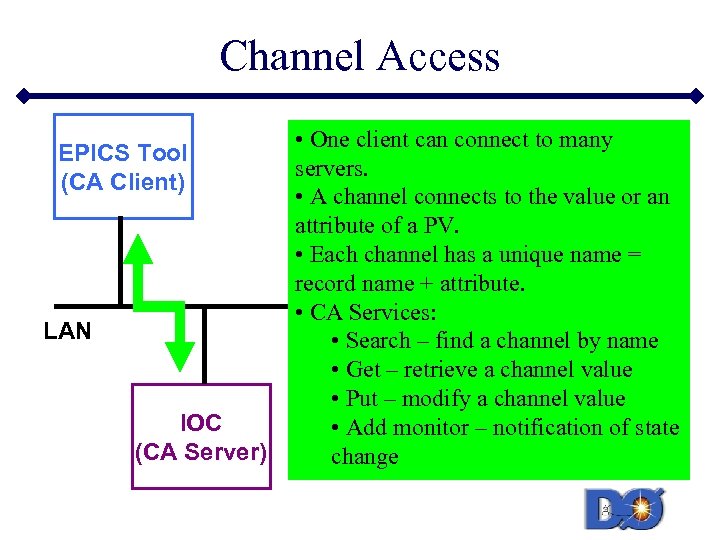

Channel Access

Diagram: EPICS tool (CA client), LAN, IOC (CA server).

- One client can connect to many servers.
- A channel connects to the value or an attribute of a PV.
- Each channel has a unique name = record name + attribute.
- CA services:
  - Search: find a channel by name
  - Get: retrieve a channel value
  - Put: modify a channel value
  - Add monitor: notification of state change
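The four CA services can be mimicked with a small in-memory model. The sketch below is plain Python, not the Channel Access protocol and not any EPICS client library; `ToyServer`, `ToyClient` and the channel names are hypothetical. It illustrates one client talking to several servers, channel names built from record name plus attribute, and monitor callbacks fired on a change.

```python
class ToyServer:
    """Stands in for an IOC: holds channels named '<record>.<attribute>'."""

    def __init__(self, channels):
        self.channels = dict(channels)
        self.monitors = {}  # channel name -> list of callbacks

    def put(self, name, value):
        changed = self.channels[name] != value
        self.channels[name] = value
        if changed:
            for callback in self.monitors.get(name, []):
                callback(name, value)


class ToyClient:
    """Stands in for a CA client; it can connect to many servers."""

    def __init__(self, servers):
        self.servers = servers

    def search(self, name):
        """Search: find which server hosts a channel, by name."""
        for server in self.servers:
            if name in server.channels:
                return server
        raise KeyError(f"channel not found: {name}")

    def get(self, name):
        """Get: retrieve a channel value."""
        return self.search(name).channels[name]

    def put(self, name, value):
        """Put: modify a channel value."""
        self.search(name).put(name, value)

    def add_monitor(self, name, callback):
        """Add monitor: be notified when the channel value changes."""
        self.search(name).monitors.setdefault(name, []).append(callback)


ioc_a = ToyServer({"RF:CAVITY1.VAL": 0.0})
ioc_b = ToyServer({"VAC:PUMP1.VAL": "off"})
client = ToyClient([ioc_a, ioc_b])

events = []
client.add_monitor("VAC:PUMP1.VAL", lambda name, value: events.append((name, value)))
client.put("VAC:PUMP1.VAL", "on")
print(client.get("VAC:PUMP1.VAL"), events)
```

In this sketch the client never needs to know which server hosts a channel; the search step resolves that from the name alone, which is the property the slide highlights.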

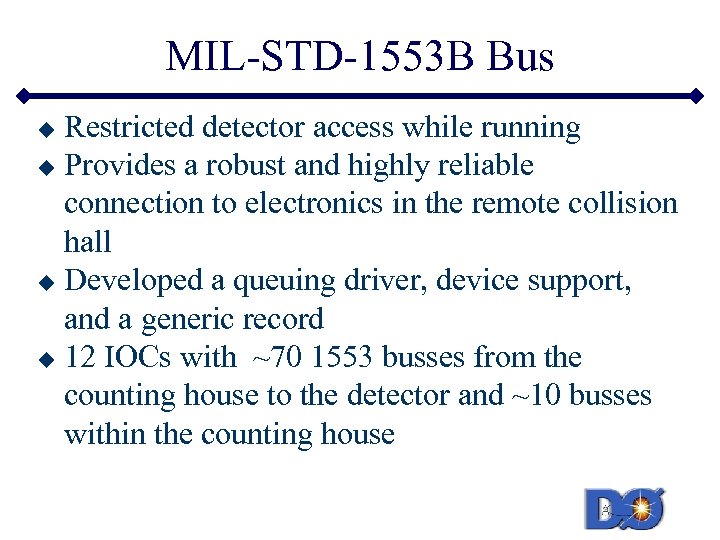

MIL-STD-1553B Bus

- Restricted detector access while running
- Provides a robust and highly reliable connection to electronics in the remote collision hall
- Developed a queuing driver, device support, and a generic record
- 12 IOCs with ~70 1553 busses from the counting house to the detector and ~10 busses within the counting house

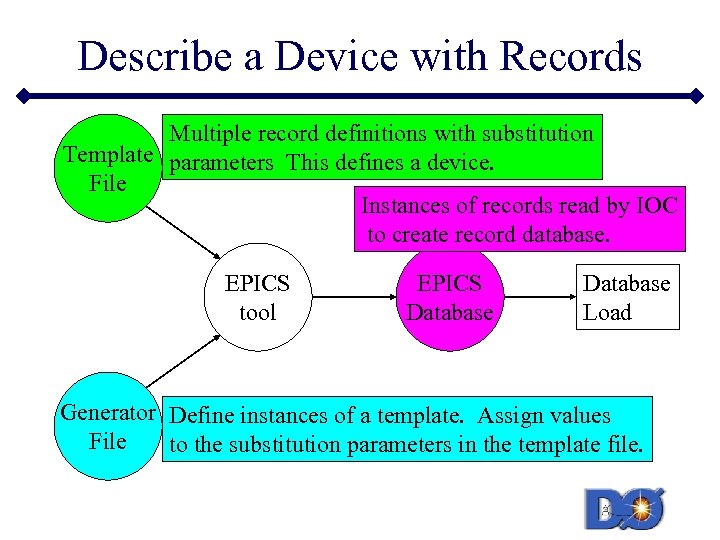

Describe a Device with Records

- Template file: multiple record definitions with substitution parameters. This defines a device.
- Generator file: define instances of a template. Assign values to the substitution parameters in the template file.
- EPICS database file: ASCII file. Instances of records read by IOC to create record database.

Diagram labels: Template File, Generator File, EPICS tool, Load, EPICS Database.
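The template-plus-instances idea can be sketched in a few lines of Python. The template text, the `$(NAME)` macro style, the record fields and the instance values below are illustrative assumptions, and the `expand` helper is hypothetical; it is not the EPICS tool shown on the slide.

```python
import re

# One template defines a device as several records with substitution parameters.
TEMPLATE = """\
record(ai, "$(DEV):TEMP") { field(SCAN, "$(SCAN)") }
record(ao, "$(DEV):ISET") { field(DRVH, "$(IMAX)") }
"""

# Each instance assigns values to the template's substitution parameters.
INSTANCES = [
    {"DEV": "QUAD1", "SCAN": "10 second", "IMAX": "200"},
    {"DEV": "QUAD2", "SCAN": "10 second", "IMAX": "250"},
]


def expand(template, values):
    """Replace every $(NAME) in the template; fail loudly on a missing name."""
    def lookup(match):
        key = match.group(1)
        if key not in values:
            raise KeyError(f"no value for substitution parameter {key}")
        return values[key]
    return re.sub(r"\$\((\w+)\)", lookup, template)


# The expanded text plays the role of the ASCII database file read by the IOC.
database_text = "".join(expand(TEMPLATE, inst) for inst in INSTANCES)
print(database_text)
```

Adding a third quadrupole then means adding one entry to `INSTANCES`, with no change to the template.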

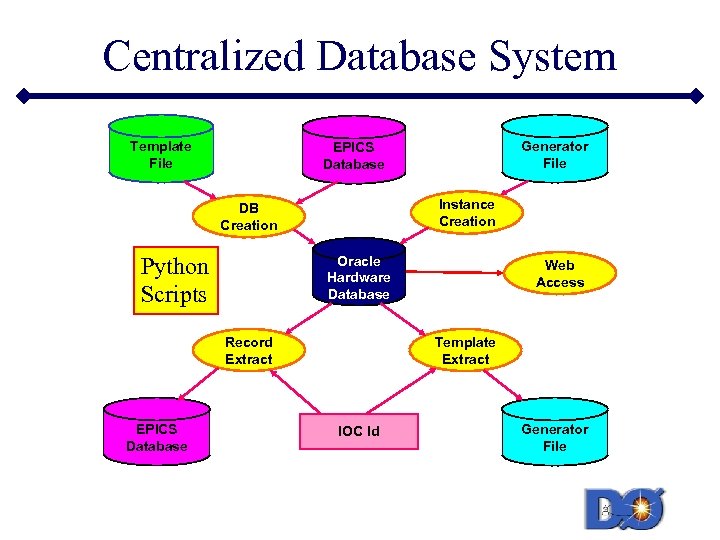

Centralized Database System

Diagram labels: Oracle Hardware Database, Python Scripts, Web Access, Template Extract, Record Extract, Instance Creation, DB Creation, Template File, Generator File, EPICS Database, IOC Id.

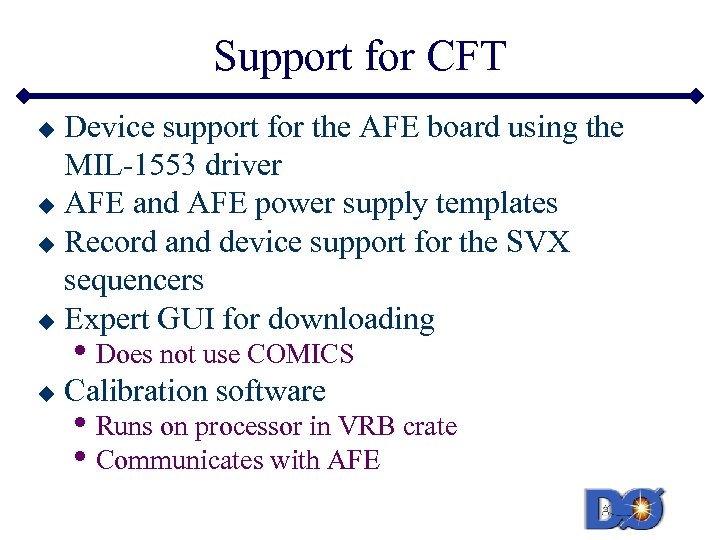

Support for CFT

- Device support for the AFE board using the MIL-1553 driver
- AFE and AFE power supply templates
- Record and device support for the SVX sequencers
- Expert GUI for downloading
  - Does not use COMICS
- Calibration software
  - Runs on processor in VRB crate
  - Communicates with AFE

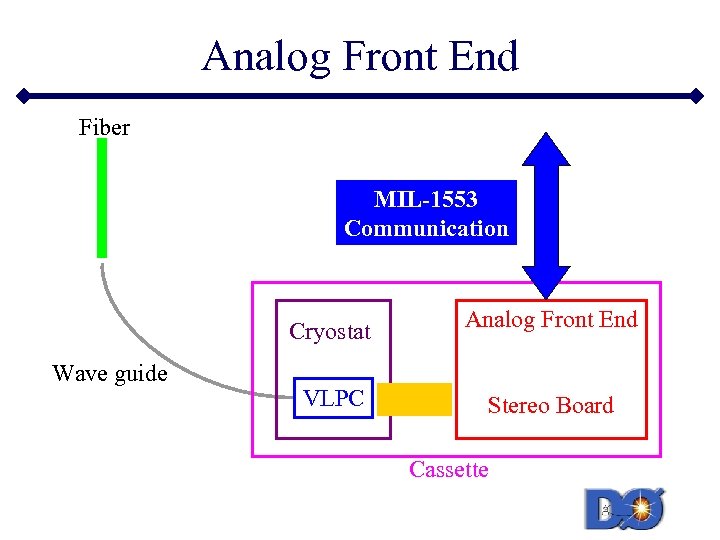

Analog Front End

Diagram labels: Fiber, Wave guide, Cryostat, Cassette, VLPC, Analog Front End, Stereo Board, MIL-1553 Communication.

EPICS at Fermilab

- SMTF
  - Superconducting module test facility
  - Cavity test in October
  - DOOCS for LLRF: speaks CA
  - EPICS for everything else
  - No ACNET (beams division controls system)
- ILC
  - Control system to be decided
  - Management structure now forming

EPICS Lessons

- Three layers: Applications, Tools, Development
  - EPICS tools
  - OPI development: CA library
  - Build base
  - Build application: combine existing pieces
  - Develop device templates
  - Records, device, and driver support
- EPICS is not simple to use
  - Need expertise in each layer
  - Support is an email away
- IOC operating system selection

Integration of DAQ & Control systems

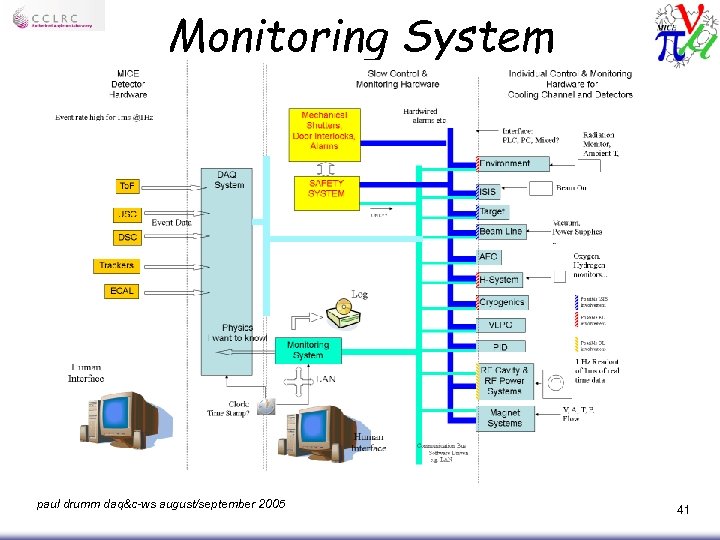

Monitoring System

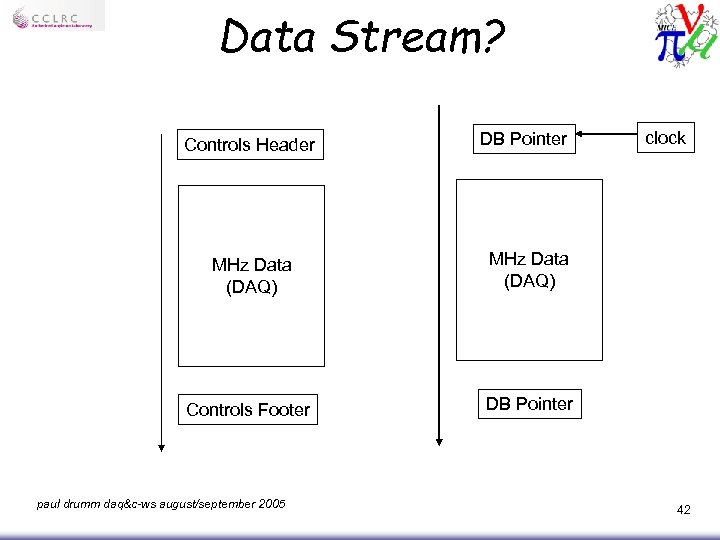

Data Stream?

Diagram labels: Controls Header, DB Pointer, MHz Data (DAQ), Controls Footer, DB Pointer, clock.

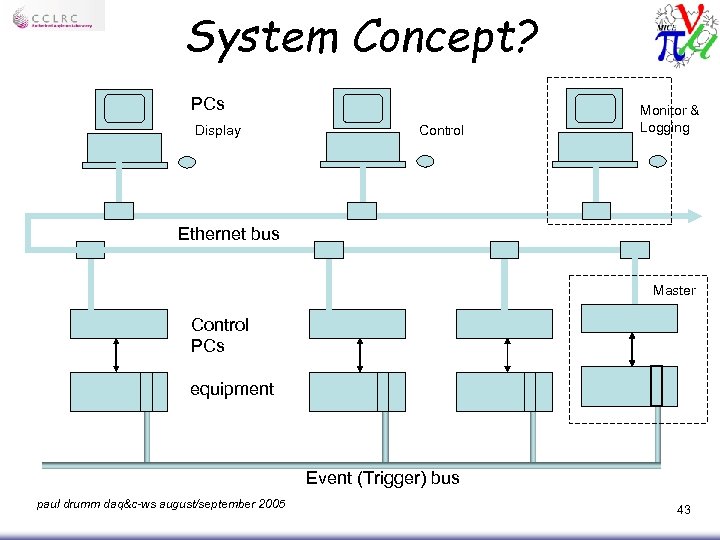

System Concept?

Diagram labels: PCs, Display, Control, Monitor & Logging, Ethernet bus, Master Control, PCs, equipment, Event (Trigger) bus.

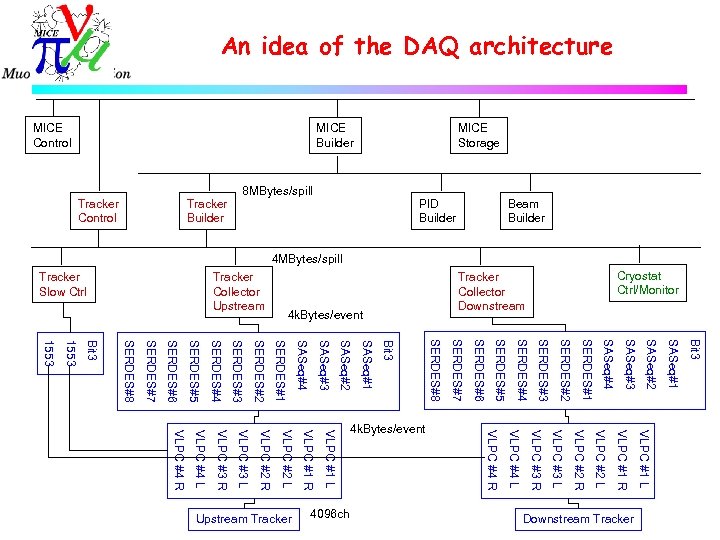

An idea of the DAQ architecture

Diagram labels: Upstream Tracker, Downstream Tracker, 4096 ch, 4 kBytes/event (twice), VLPC #1 to #4 (L and R), SERDES #1 to #8, SASeq #1 to #4, Bit3 (twice), 1553, Cryostat Ctrl/Monitor, Tracker Slow Ctrl, Tracker Collector Upstream, Tracker Collector Downstream, Tracker Builder, Tracker Control, Beam Builder, PID Builder, MICE Builder, MICE Control, MICE Storage, 8 MBytes/spill, 4 MBytes/spill.

Online System

UniDaq

- Makoto described the KEK Unidaq system
  - Used for Fiber Tracker test at KEK
    - Worked well; interface to tracker readout (AFEII-VLSB) would be the same as in MICE
  - Could be used as MICE online system
  - Needs event builder
    - Significant issue

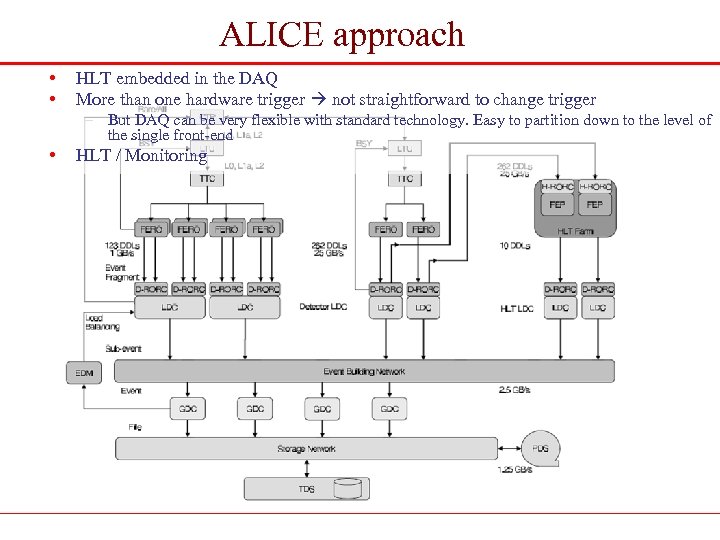

ALICE approach

- HLT embedded in the DAQ
- More than one hardware trigger: not straightforward to change trigger
  - But DAQ can be very flexible with standard technology. Easy to partition down to the level of the single front-end
- HLT / Monitoring

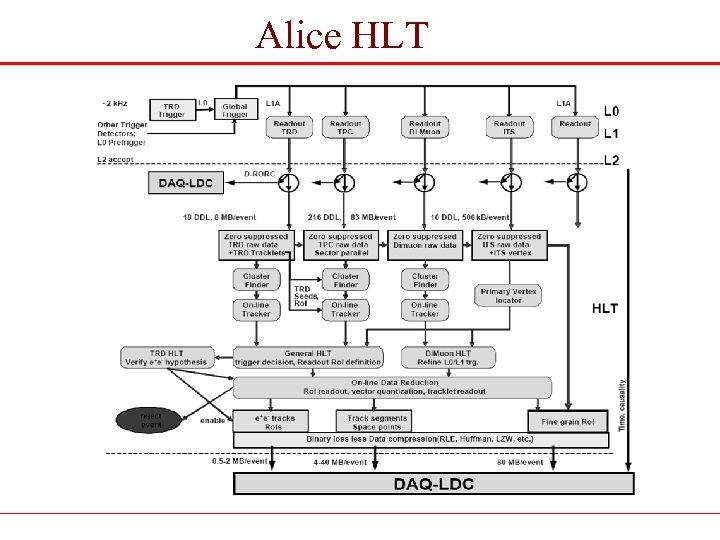

ALICE HLT

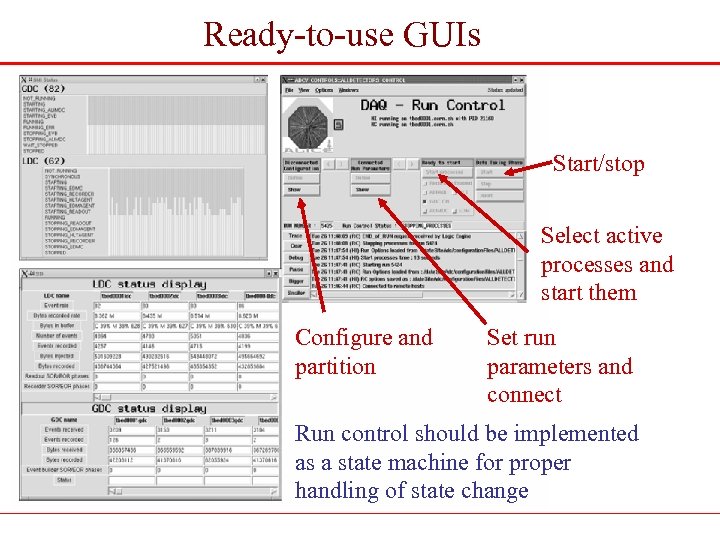

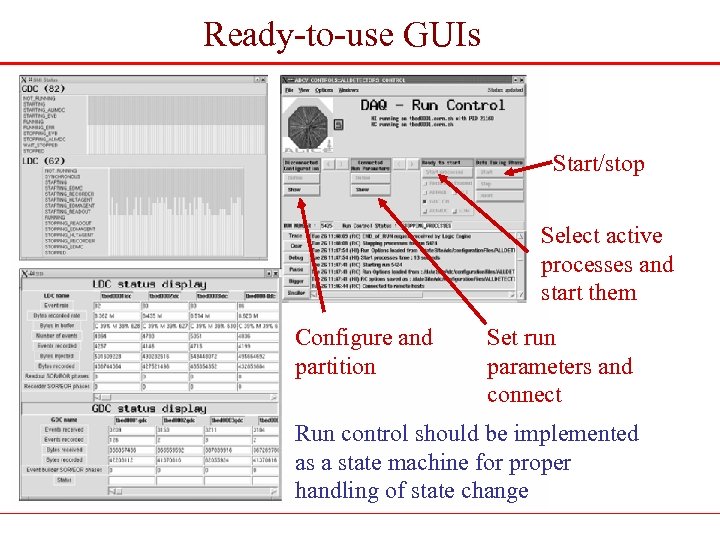

Ready-to-use GUIs

- Start/stop
- Select active processes and start them
- Configure and partition
- Set run parameters and connect

Run control should be implemented as a state machine for proper handling of state change.
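A run control built as a state machine might look like the minimal Python sketch below. The state names, the command names and the transition table are assumptions loosely based on the GUI labels above (configure, connect, start, stop); they are not taken from the ALICE software.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    CONFIGURED = auto()
    READY = auto()
    RUNNING = auto()


# Allowed transitions: (current state, command) -> next state.
TRANSITIONS = {
    (State.IDLE, "configure"): State.CONFIGURED,
    (State.CONFIGURED, "connect"): State.READY,
    (State.READY, "start"): State.RUNNING,
    (State.RUNNING, "stop"): State.READY,
    (State.READY, "reset"): State.IDLE,
    (State.CONFIGURED, "reset"): State.IDLE,
}


class RunControl:
    def __init__(self):
        self.state = State.IDLE
        self.log = []

    def command(self, name):
        """Apply a command, rejecting it if it is not valid in this state."""
        key = (self.state, name)
        if key not in TRANSITIONS:
            raise ValueError(f"'{name}' is not allowed in state {self.state.name}")
        previous, self.state = self.state, TRANSITIONS[key]
        self.log.append((previous.name, name, self.state.name))
        return self.state


rc = RunControl()
for cmd in ("configure", "connect", "start", "stop"):
    rc.command(cmd)
print(rc.state.name, rc.log)

try:
    rc.command("configure")   # not valid while READY: a reset is needed first
except ValueError as err:
    print("rejected:", err)
```

Keeping every legal transition in one table means an out-of-sequence request from a non-expert user is refused with a clear message rather than leaving the DAQ in an undefined state.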


Conclusions: never underestimate …

- Users are not experts: provide them the tools to work and to report problems effectively.
- Flexible partitioning.
- Event-building with accurate fragment alignment and validity checks, state reporting and reliability.
- Redundancy / fault tolerance.
- A proper run control with state machine
  - Simplifying for the users the tasks of partition, configure, trigger selection, start, stop
- A good monitoring framework, with clear-cut separation between DAQ services and monitoring clients.
- Extensive and informative logging.
- GUIs

This represents a significant amount of work. Not yet started!
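As an illustration of event building with fragment alignment and validity checks, the Python sketch below groups fragments by event number and accepts an event only when the set of contributing sources is exactly the expected one. The source names, the tuple layout of a fragment and the rejection report are hypothetical; a real event builder would also deal with timeouts, ordering and buffering.

```python
from collections import defaultdict

EXPECTED_SOURCES = {"tracker_up", "tracker_down", "tof"}  # hypothetical sources


def build_events(fragments):
    """Group fragments by event number and validate each candidate event.

    Each fragment is a (source, event_number, payload) tuple. An event is
    accepted only if each expected source contributed exactly one fragment
    and no unexpected source contributed.
    """
    by_event = defaultdict(list)
    for source, event_number, payload in fragments:
        by_event[event_number].append((source, payload))

    built, rejected = {}, {}
    for event_number in sorted(by_event):
        parts = by_event[event_number]
        sources = [source for source, _ in parts]
        missing = EXPECTED_SOURCES - set(sources)
        unexpected = set(sources) - EXPECTED_SOURCES
        duplicated = len(sources) != len(set(sources))
        if missing or unexpected or duplicated:
            rejected[event_number] = {
                "missing": sorted(missing),
                "unexpected": sorted(unexpected),
                "duplicated": duplicated,
            }
        else:
            built[event_number] = dict(parts)
    return built, rejected


fragments = [
    ("tracker_up", 1, b"\x01"), ("tof", 1, b"\x02"), ("tracker_down", 1, b"\x03"),
    ("tracker_up", 2, b"\x04"), ("tof", 2, b"\x05"),   # tracker_down fragment lost
]
built, rejected = build_events(fragments)
print(sorted(built), rejected)
```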

Conclusions Coming out of Daresbury

- Made decisions!
  - EPICS
  - Linux
  - VME/PC
    - Ethernet backbone
  - Event Bus (maybe)
- The start of a specifications document
  - Has had one iteration

Next

- Specify/evaluate as much of the hardware as possible
  - Front-end electronics needs to be fully specified since it can affect many aspects of the DAQ
  - Crate specs
    - PCs
    - Various interface cards
- Assemble test system
  - Core system
    - Must make a decision here (UNIDAQ, ALICE-like TB system, ?)
      - I think we need to make a decision here relatively soon, and it will likely involve some arbitrariness.
      - Who does the work will present a particular "POV"
  - VME backbone
  - Trigger architecture
  - Front-end electronics
  - EPICS interface
    - Need experts to be involved
