- Number of slides: 81

Resources for multilingual processing Georgiana Puşcaşu University of Wolverhampton, UK

Outline
- Motivation and goals
- NLP Methods, Resources and Applications
  - Text Segmentation
  - Part of Speech Tagging
  - Stemming
  - Lemmatization
  - Syntactic Parsing
  - Named Entity Recognition
  - Term Extraction and Terminology Data Management Tools
  - Text Summarization
  - Language Identification
  - Statistical Language Modeling Toolkits
  - Corpora
- Conclusions

Motivation and goals
Motivation
- Most NLP research and resources deal with English
- The Web is multilingual, and ideally the current NLP state of the art should be attained for all languages
Goals
- To present already available textual methods that can support multilingual NLP
- To offer an inventory of existing tools and resources that can be exploited in order to avoid reinventing the wheel

Text Segmentation
- Electronic text is essentially just a sequence of characters
- Before any real processing, text needs to be segmented
- Text segmentation involves:
  - Low-level text segmentation (performed at the initial stages of text processing)
    - Tokenization
    - Sentence splitting
  - High-level text segmentation
    - Intra-sentential: segmentation of linguistic groups such as Named Entities and Noun Phrases, and splitting sentences into clauses
    - Inter-sentential: grouping sentences and paragraphs into discourse topics

Tokenization
- Tokenization is the process of segmenting text into linguistic units such as words, punctuation, numbers, alphanumerics, etc.
- It is normally the first step in the majority of text processing applications
- Tokenization in languages that are:
  - segmented: considered a relatively easy and uninteresting part of text processing (words are delimited by blank spaces and punctuation)
  - non-segmented: more challenging (no explicit boundaries between words)

Tokenization in segmented languages
- Segmented languages: all modern languages that use a Latin-, Cyrillic- or Greek-based writing system
- Traditionally, tokenization rules are written using regular expressions
- Problems:
  - Abbreviations: solved by lists of abbreviations (pre-compiled or automatically extracted from a corpus) and guessing rules
  - Hyphenated words: "One word or two?"
  - Numerical and special expressions (email addresses, URLs, telephone numbers, etc.) are handled by specialized tokenizers (preprocessors)
  - Apostrophe: (they're => they + 're; don't => do + n't) solved by language-specific rules
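
The problems listed on this slide can be illustrated with a small regular-expression tokenizer. This is only a sketch under stated assumptions: the abbreviation list, the clitic list and the pattern itself are hypothetical choices made for illustration and are not taken from any of the tools in this inventory.

```python
import re

# Hypothetical, tiny abbreviation list; a real one would be pre-compiled
# or extracted automatically from a corpus.
ABBREVIATIONS = {"etc.", "e.g.", "Dr.", "Mr."}

# Alternatives are tried left to right, so special expressions come first.
TOKEN_PATTERN = re.compile(
    r"""
    https?://\S+                        # URLs
    | [\w.+-]+@[\w-]+(?:\.[\w-]+)+      # email addresses
    | \d+(?:[.,]\d+)*                   # numbers such as 3.14 or 1,000
    | \w+(?=n['’]t\b)                   # "do" in "don't"
    | n['’]t\b                          # the negation clitic "n't"
    | ['’](?:re|s|ve|ll|d|m)\b          # English clitics such as 're
    | \w+(?:-\w+)*                      # words; hyphenated words stay whole
    | [^\w\s]                           # any other punctuation mark
    """,
    re.VERBOSE,
)


def tokenize(text):
    """Split on blanks, then apply the pattern to each chunk."""
    tokens = []
    for chunk in text.split():
        if chunk in ABBREVIATIONS:
            tokens.append(chunk)  # keep the abbreviation with its period
        else:
            tokens.extend(TOKEN_PATTERN.findall(chunk))
    return tokens
```

Keeping hyphenated words whole is one possible answer to "one word or two?"; splitting at the hyphen would be an equally defensible design choice.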

Tokenization in non-segmented languages
- Non-segmented languages: Oriental languages (e.g. Chinese, Japanese, Thai)
- Problems:
  - Tokens are written directly adjacent to each other
  - Almost all characters can be one-character words by themselves but can also form multi-character words
- Solutions:
  - Pre-existing lexico-grammatical knowledge
  - Machine learning employed to extract segmentation regularities from pre-segmented data
  - Statistical methods: character n-grams
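
One simple way to use pre-existing lexical knowledge is greedy forward maximum matching against a word list. The slide does not name this technique, so treat it as an assumed illustration; the lexicon and the Latin-script input below are hypothetical stand-ins for a real dictionary and real unsegmented text.

```python
def max_match(text, lexicon):
    """Greedy forward maximum matching: at each position take the longest
    lexicon entry that matches, falling back to a single character."""
    max_len = max(len(word) for word in lexicon)
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shorter ones.
        for j in range(min(len(text), i + max_len), i, -1):
            if text[i:j] in lexicon:
                break
        else:
            j = i + 1  # unknown character: emit it as its own token
        tokens.append(text[i:j])
        i = j
    return tokens


# Hypothetical toy lexicon.
LEXICON = {"multi", "lingual", "multilingual", "text", "processing"}
segmented = max_match("multilingualtextprocessing", LEXICON)
```

Because the method is greedy, it can commit to a long match that blocks the correct segmentation later in the string, which is one reason the machine learning and statistical solutions listed above are used.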

Tokenizers (1)
ALEMBIC
- Author(s): M. Vilain, J. Aberdeen, D. Day, J. Burger, The MITRE Corporation
- Purpose: Alembic is a multi-lingual text processing system. Among other tools, it incorporates tokenizers for English, Spanish, Japanese, Chinese, French and Thai.
- Access: Free by contacting day@mitre.org
ELLOGON
- Author(s): George Petasis, Vangelis Karkaletsis, National Center for Scientific Research, Greece
- Purpose: Ellogon is a multi-lingual, cross-platform, general-purpose language engineering environment. One of the provided components, which can be adapted to various languages, performs tokenization. Supported languages: Unicode.
- Access: Free at http://www.ellogon.org/
GATE (General Architecture for Text Engineering)
- Author(s): NLP Group, University of Sheffield, UK
- Access: Free but requires registration at http://gate.ac.uk/
HEART OF GOLD
- Author(s): Ulrich Schäfer, DFKI Language Technology Lab, Germany
- Supported languages: Unicode, Spanish, Polish, Norwegian, Japanese, Italian, Greek, German, French, English, Chinese.
- Access: Free at http://heartofgold.dfki.de/

Tokenizers (2)
LT TTT
- Author(s): Language Technology Group, University of Edinburgh, UK
- Purpose: LT TTT is a text tokenization system and toolset which enables users to produce a swift and individually tailored tokenisation of text.
- Access: Free at http://www.ltg.ed.ac.uk/software/ttt/
MXTERMINATOR
- Author(s): Adwait Ratnaparkhi
- Platforms: Platform independent
- Access: Free at http://www.cis.upenn.edu/~adwait/statnlp.html
QTOKEN
- Author(s): Oliver Mason, Birmingham University, UK
- Platforms: Platform independent
- Access: Free at http://www.english.bham.ac.uk/staff/omason/software/qtoken.html
SProUT
- Author(s): Feiyu Xu, Tim vor der Brück, LT-Lab, DFKI GmbH, Germany
- Purpose: SProUT provides tokenization for Unicode, Spanish, Japanese, German, French, English, Chinese.
- Access: Not free. More information at http://sprout.dfki.de/

Tokenizers (3)
THE QUIPU GROK LIBRARY
- Author(s): Gann Bierner and Jason Baldridge, University of Edinburgh, UK
- Access: Free at https://sourceforge.net/project/showfiles.php?group_id=4083
TWOL
- Author(s): Lingsoft
- Supported languages: Swedish, Norwegian, German, Finnish, English, Danish.
- Access: Not free. More information at http://www.lingsoft.fi/

Sentence splitting
- Sentence splitting is the task of segmenting text into sentences
- In the majority of cases it is a simple task: . ? ! usually signal a sentence boundary
- However, a period that denotes a decimal point or is part of an abbreviation does not always signal a sentence break
- The simplest algorithm is known as "period-space-capital letter" (not very good performance). It can be improved with lists of abbreviations, a lexicon of frequent sentence-initial words and/or machine learning techniques
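
A minimal sketch of the "period-space-capital letter" heuristic, improved only with an abbreviation list, might look as follows. The abbreviation set is a hypothetical example; the lexicon of sentence-initial words and the machine learning refinements mentioned above are not implemented here.

```python
import re

# Hypothetical abbreviation list; periods after these never end a sentence here.
ABBREVIATIONS = {"Dr.", "Mr.", "Mrs.", "etc.", "e.g."}

# A boundary candidate: . ? or ! followed by whitespace and a capital letter.
BOUNDARY = re.compile(r"[.?!]\s+(?=[A-Z])")


def split_sentences(text):
    sentences = []
    start = 0
    for match in BOUNDARY.finditer(text):
        end = match.start() + 1  # include the punctuation mark itself
        last_token = text[start:end].split()[-1]
        if last_token in ABBREVIATIONS:
            continue  # the period belongs to an abbreviation
        sentences.append(text[start:end].strip())
        start = match.end()
    tail = text[start:].strip()
    if tail:
        sentences.append(tail)
    return sentences
```

Decimal points are never split because the pattern requires whitespace after the period. An abbreviation that really does end a sentence is still missed, which is the kind of case the learned approaches are meant to handle.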

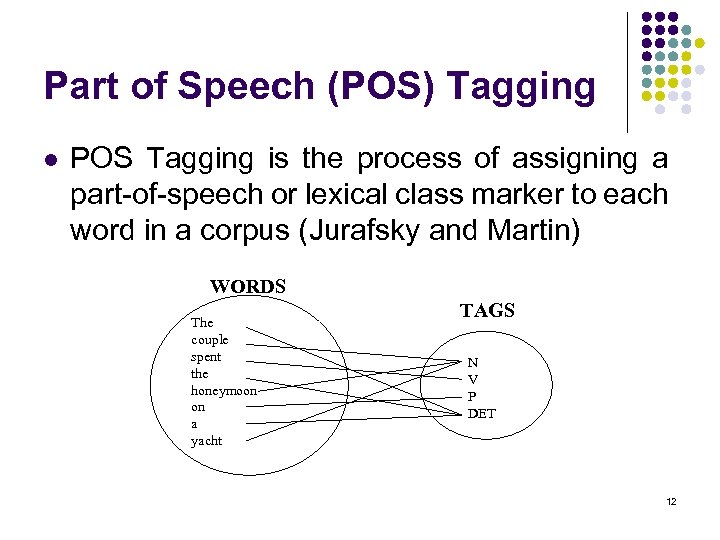

Part of Speech (POS) Tagging
- POS tagging is the process of assigning a part-of-speech or lexical class marker to each word in a corpus (Jurafsky and Martin)
- WORDS: The couple spent the honeymoon on a yacht
- TAGS: N, V, P, DET

POS Tagger Prerequisites
- Lexicon of words
- For each word in the lexicon, information about all its possible tags according to a chosen tagset
- Different methods for choosing the correct tag for a word:
  - Rule-based methods
  - Statistical methods
  - Transformation Based Learning (TBL) methods

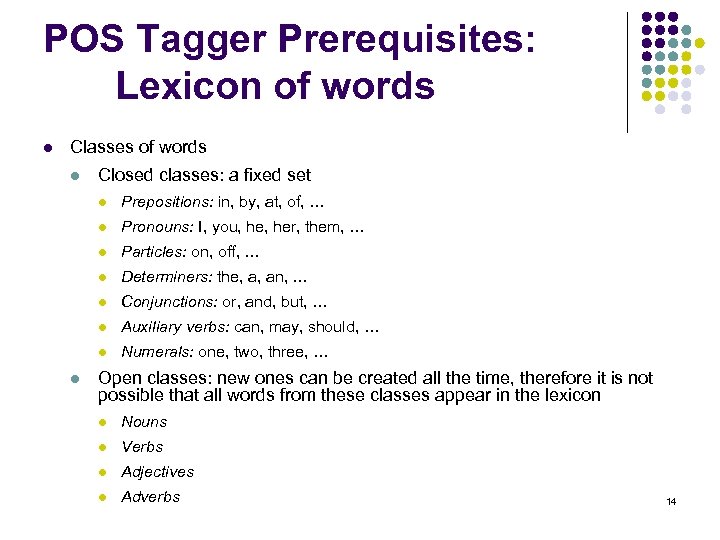

POS Tagger Prerequisites: Lexicon of words
- Classes of words
  - Closed classes: a fixed set
    - Prepositions: in, by, at, of, …
    - Pronouns: I, you, her, them, …
    - Particles: on, off, …
    - Determiners: the, a, an, …
    - Conjunctions: or, and, but, …
    - Auxiliary verbs: can, may, should, …
    - Numerals: one, two, three, …
  - Open classes: new words can be created all the time, so the lexicon cannot contain every word from these classes
    - Nouns
    - Verbs
    - Adjectives
    - Adverbs

POS Tagger Prerequisites: Tagsets
- To do POS tagging, one needs to choose a standard set of tags to work with
- A tagset is normally sophisticated and linguistically well grounded
- One could pick a very coarse tagset
  - N, V, Adj, Adv
- A more commonly used set is finer grained: the "UPenn TreeBank tagset", 48 tags
- Even more fine-grained tagsets exist

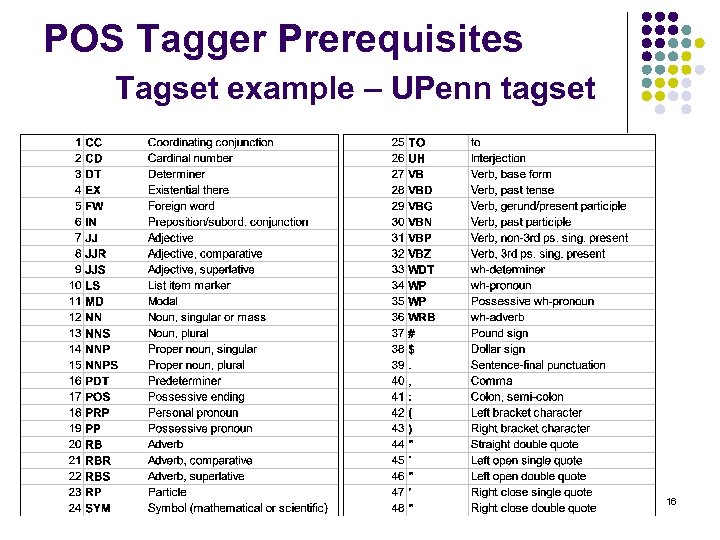

POS Tagger Prerequisites: Tagset example (UPenn tagset)

POS Tagging: Rule-based methods
- Start with a dictionary
- Assign all possible tags to words from the dictionary
- Write rules by hand to selectively remove tags
- Leaving the correct tag for each word
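
The four steps above can be sketched as a tag-elimination loop. The dictionary and both hand-written rules below are hypothetical examples invented for illustration; real rule-based taggers use far larger lexicons and rule sets.

```python
# Hypothetical dictionary: every word with all of its possible tags.
LEXICON = {
    "the": {"DT"},
    "to": {"TO"},
    "queue": {"NN", "VB"},
}


def no_verb_after_determiner(prev_tags, tags):
    """Hand-written rule: drop VB when the previous word can only be DT."""
    if prev_tags == {"DT"} and len(tags) > 1:
        return tags - {"VB"}
    return tags


def no_noun_after_to(prev_tags, tags):
    """Hand-written rule: drop NN when the previous word can only be TO."""
    if prev_tags == {"TO"} and len(tags) > 1:
        return tags - {"NN"}
    return tags


RULES = [no_verb_after_determiner, no_noun_after_to]


def tag(words):
    # Assign all possible tags from the dictionary.
    candidates = [set(LEXICON[word]) for word in words]
    # Apply the rules left to right to remove tags selectively.
    for i in range(1, len(candidates)):
        for rule in RULES:
            candidates[i] = rule(candidates[i - 1], candidates[i])
    return candidates
```

Each rule refuses to remove the last remaining tag, so every word keeps at least one candidate.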

POS Tagging: Statistical methods (1)
The Most Frequent Tag Algorithm
- Training
  - Take a tagged corpus
  - Create a dictionary containing every word in the corpus together with all its possible tags
  - Count the number of times each tag occurs for a word and compute the probability P(tag|word); then save all probabilities
- Tagging
  - Given a new sentence, for each word, pick the most frequent tag for that word from the corpus
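
The training and tagging steps can be sketched in a few lines. The toy corpus and the default tag for unknown words are assumptions made for illustration; the slide does not say how unseen words should be handled.

```python
from collections import Counter, defaultdict


def train(tagged_sentences):
    """Return P(tag|word) for every word seen in the tagged corpus."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word][tag] += 1
    return {
        word: {tag: n / sum(tag_counts.values()) for tag, n in tag_counts.items()}
        for word, tag_counts in counts.items()
    }


def tag(words, probabilities, default="NN"):
    """Pick the most probable tag per word; unknown words get `default`
    (an assumption, not part of the algorithm as stated)."""
    tagged = []
    for word in words:
        if word in probabilities:
            best = max(probabilities[word], key=probabilities[word].get)
        else:
            best = default
        tagged.append((word, best))
    return tagged


# Hypothetical toy corpus.
CORPUS = [
    [("the", "DT"), ("race", "NN")],
    [("to", "TO"), ("race", "VB")],
    [("a", "DT"), ("race", "NN")],
]
model = train(CORPUS)
```

Note that the tagger ignores context entirely, so "race" after "to" is still tagged NN here; that weakness motivates the context-sensitive methods that follow.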

POS Tagging: Statistical methods (2)
Bigram HMM Tagger
- Training
  - Create a dictionary containing every word in the corpus together with all its possible tags
  - Compute the probability of each tag generating a certain word, and the probability that each tag is preceded by a specific tag (bigram HMM tagger => the probability depends only on the previous tag)
- Tagging
  - Given a new sentence, for each word, pick the most likely tag for that word using the parameters obtained after training
  - HMM taggers choose the tag sequence that maximizes the product, over the words of the sentence, of P(word|tag) * P(tag|previous tag)
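
Finding the tag sequence that maximizes this product is usually done with the Viterbi algorithm, which the slide does not name. The sketch below assumes the probabilities are already estimated and stored in dictionaries; the start symbol `<s>` and the small `floor` value used for unseen events are illustrative assumptions.

```python
def viterbi(words, tags, trans, emit, start="<s>", floor=1e-12):
    """Return the tag sequence maximizing the product of
    P(word|tag) * P(tag|previous tag) over the sentence.

    trans maps (previous_tag, tag) to P(tag|previous_tag);
    emit maps (tag, word) to P(word|tag)."""
    # best[i][t] = (probability of the best path ending in t at word i, previous tag)
    best = [{}]
    for t in tags:
        best[0][t] = (trans.get((start, t), floor) * emit.get((t, words[0]), floor), None)
    for i in range(1, len(words)):
        best.append({})
        for t in tags:
            best[i][t] = max(
                (best[i - 1][p][0] * trans.get((p, t), floor) * emit.get((t, words[i]), floor), p)
                for p in tags
            )
    # Follow the back-pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t][0])]
    for i in range(len(words) - 1, 0, -1):
        path.append(best[i][path[-1]][1])
    return path[::-1]


# Hypothetical parameters for a two-word input.
TAGS = ["TO", "VB", "NN"]
TRANS = {("<s>", "TO"): 1.0, ("TO", "VB"): 0.34, ("TO", "NN"): 0.021}
EMIT = {("TO", "to"): 1.0, ("VB", "queue"): 0.00003, ("NN", "queue"): 0.00041}
best_path = viterbi(["to", "queue"], TAGS, TRANS, EMIT)
```

In practice the products are computed as sums of log probabilities to avoid numerical underflow on long sentences.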

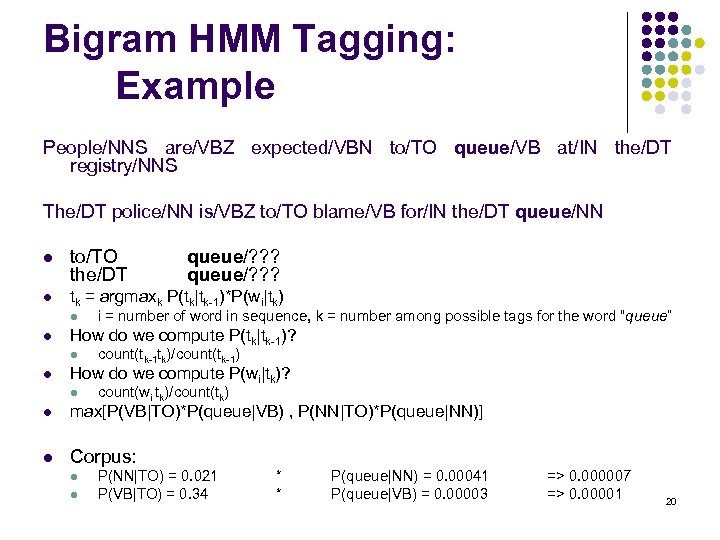

Bigram HMM Tagging: Example
- Tagged sentences:
  - People/NNS are/VBP expected/VBN to/TO queue/VB at/IN the/DT registry/NN
  - The/DT police/NN is/VBZ to/TO blame/VB for/IN the/DT queue/NN
- To be tagged: to/TO queue/??? and the/DT queue/???
- t_k = argmax_k P(t_k | t_{k-1}) * P(w_i | t_k)
  - i = index of the word in the sequence, k = index among the possible tags for the word "queue"
- How do we compute P(t_k | t_{k-1})?
  - count(t_{k-1} t_k) / count(t_{k-1})
- How do we compute P(w_i | t_k)?
  - count(w_i t_k) / count(t_k)
- max[P(VB|TO) * P(queue|VB), P(NN|TO) * P(queue|NN)]
- Corpus:
  - P(NN|TO) = 0.021, P(queue|NN) = 0.00041 => 0.021 * 0.00041 ≈ 0.0000086
  - P(VB|TO) = 0.34, P(queue|VB) = 0.00003 => 0.34 * 0.00003 ≈ 0.00001
- The VB product is larger, so "queue" after to/TO is tagged VB
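
The comparison on this slide can be checked numerically. The sketch below simply plugs in the four corpus probabilities quoted above; the dictionary layout and function name are illustrative choices.

```python
# Probabilities quoted on the slide.
P_TRANSITION = {("TO", "VB"): 0.34, ("TO", "NN"): 0.021}       # P(tag | previous tag)
P_EMISSION = {("VB", "queue"): 0.00003, ("NN", "queue"): 0.00041}  # P(word | tag)


def score(previous_tag, tag, word):
    """P(tag | previous tag) * P(word | tag) for one candidate tag."""
    return P_TRANSITION[(previous_tag, tag)] * P_EMISSION[(tag, word)]


scores = {tag: score("TO", tag, "queue") for tag in ("VB", "NN")}
best_tag = max(scores, key=scores.get)
```

Although "queue" is more often a noun in the emission probabilities, the much higher chance of a verb following TO tips the decision to VB.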

POS Tagging: Transformation Based Tagging (1)
- A combination of the rule-based and stochastic tagging methodologies
  - Like the rule-based approach, because rule templates are used to learn transformations
  - Like the stochastic approach, because machine learning is used, with a tagged corpus as input
- Input:
  - tagged corpus
  - lexicon (with all possible tags for each word)

POS Tagging: Transformation Based Tagging (2)
- Basic idea:
  - Set the most probable tag for each word as a start value
  - Change tags according to rules of the type "if word-1 is a determiner and word is a verb then change the tag to noun", in a specific order
- Training is done on a tagged corpus:
  1. Write a set of rule templates
  2. Among the set of rules, find the one with the highest score
  3. Continue from 2 until the lowest score threshold is passed
  4. Keep the ordered set of rules
- Rules make errors that are corrected by later rules

Transformation Based Tagging: Example
- The tagger labels every word with its most likely tag
  - For example, race has the following probabilities in the Brown corpus:
    - P(NN|race) = 0.98
    - P(VB|race) = 0.02
- Transformation rules make changes to tags
  - "Change NN to VB when previous tag is TO"
  - … is/VBZ expected/VBN to/TO race/NN tomorrow/NN
  - becomes
  - … is/VBZ expected/VBN to/TO race/VB tomorrow/NN
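
The rule on this slide and the greedy training loop that could learn it can be sketched together. This is a simplified illustration, not Brill's implementation: it assumes a single rule template ("change tag A to B when the previous tag is P") and scores a rule by the net number of tags it corrects against the gold standard.

```python
def apply_rule(tags, rule):
    """Apply 'change from_tag to to_tag when the previous tag is prev_tag'."""
    from_tag, to_tag, prev_tag = rule
    new_tags = list(tags)
    for i in range(1, len(tags)):
        if tags[i] == from_tag and tags[i - 1] == prev_tag:
            new_tags[i] = to_tag
    return new_tags


def score(rule, current, gold):
    """Net gain in correctly tagged words if the rule is applied."""
    after = apply_rule(current, rule)
    return sum(a == g for a, g in zip(after, gold)) - sum(c == g for c, g in zip(current, gold))


def learn(current, gold, tagset, threshold=1):
    """Greedily pick the best-scoring rule until no rule reaches the threshold."""
    candidates = [(a, b, p) for a in tagset for b in tagset if a != b for p in tagset]
    rules = []
    while True:
        best = max(candidates, key=lambda rule: score(rule, current, gold))
        if score(best, current, gold) < threshold:
            break
        rules.append(best)
        current = apply_rule(current, best)
    return rules


# "... is expected to race tomorrow", initially tagged with most likely tags.
initial = ["VBZ", "VBN", "TO", "NN", "NN"]
gold = ["VBZ", "VBN", "TO", "VB", "NN"]
learned = learn(initial, gold, ["NN", "TO", "VB", "VBN", "VBZ"])
```

The returned list is ordered, so at tagging time the rules are replayed in the order in which they were learned.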

POS Taggers (1)
ACOPOST
- Author(s): Jochen Hagenstroem, Kilian Foth, Ingo Schröder, Parantu Shah
- Purpose: ACOPOST is a collection of POS taggers. It implements and extends well-known machine learning techniques and provides a uniform environment for testing.
- Platforms: All POSIX (Linux/BSD/UNIX-like OSes)
- Access: Free at http://sourceforge.net/projects/acopost/
BRILL'S TAGGER
- Author(s): Eric Brill
- Purpose: Transformation Based Learning POS Tagger
- Access: Free at http://www.cs.jhu.edu/~brill
fnTBL
- Author(s): Radu Florian and Grace Ngai, Johns Hopkins University, USA
- Purpose: fnTBL is a customizable, portable and free source machine-learning toolkit primarily oriented towards Natural Language-related tasks (POS tagging, base NP chunking, text chunking, end-of-sentence detection). It is currently trained for English and Swedish.
- Platforms: Linux, Solaris, Windows
- Access: Free at http://nlp.cs.jhu.edu/~rflorian/fntbl/

POS Taggers (2)
LINGSOFT
- Author(s): LINGSOFT, Finland
- Purpose: Among the services offered by Lingsoft one can find POS taggers for Danish, English, German, Norwegian, Swedish.
- Access: Not free. Demos at http://www.lingsoft.fi/demos.html
LT POS (LT TTT)
- Author(s): Language Technology Group, University of Edinburgh, UK
- Purpose: The LT POS part of speech tagger uses a Hidden Markov Model disambiguation strategy. It is currently trained only for English.
- Access: Free but requires registration at http://www.ltg.ed.ac.uk/software/pos/index.html
MACHINESE PHRASE TAGGER
- Author(s): Connexor
- Purpose: Machinese Phrase Tagger is a set of program components that perform basic linguistic analysis tasks at very high speed and provide relevant information about words and concepts to volume-intensive applications. Available for: English, French, Spanish, German, Dutch, Italian, Finnish.
- Access: Not free. Free access to online demo at http://www.connexor.com/demo/tagger/

POS Taggers (3)
MXPOST
- Author(s): Adwait Ratnaparkhi
- Purpose: MXPOST is a maximum entropy POS tagger. The downloadable version includes a Wall St. Journal tagging model for English, but can also be trained for different languages.
- Platforms: Platform independent
- Access: Free at http://www.cis.upenn.edu/~adwait/statnlp.html
MEMORY BASED TAGGER
- Author(s): ILK - Tilburg University, CNTS - University of Antwerp
- Purpose: Memory-based tagging is based on the idea that words occurring in similar contexts will have the same POS tag. The idea is implemented using the memory-based learning software package TiMBL.
- Access: Usable by email or on the Web at http://ilk.uvt.nl/software.html#mbt
µ-TBL
- Author(s): Torbjörn Lager
- Purpose: The µ-TBL system is a powerful environment in which to experiment with transformation-based learning.
- Platforms: Windows
- Access: Free at http://www.ling.gu.se/~lager/mutbl.html

POS Taggers (4)
QTAG
- Author(s): Oliver Mason, Birmingham University, UK
- Purpose: QTag is a probabilistic parts-of-speech tagger. Resource files for English and German can be downloaded together with the tool.
- Platforms: Platform independent
- Access: Free at http://www.english.bham.ac.uk/staff/omason/software/qtag.html
STANFORD POS TAGGER
- Author(s): Kristina Toutanova, Stanford University, USA
- Purpose: The Stanford POS tagger is a log-linear tagger written in Java. The downloadable package includes components for command-line invocation and a Java API both for training and for running a trained tagger.
- Platforms: Platform independent
- Access: Free at http://nlp.stanford.edu/software/tagger.shtml
SVM TOOL
- Author(s): TALP Research Center, Technical University of Catalonia (UPC), Spain
- Purpose: The SVMTool is a simple and effective part-of-speech tagger based on Support Vector Machines. The SVMLight software implementation of Vapnik's Support Vector Machine by Thorsten Joachims has been used to train the models for Catalan, English and Spanish.
- Access: Free. SVMTool at http://www.lsi.upc.es/~nlp/SVMTool/ and SVMLight at http://svmlight.joachims.org/

POS Taggers (5)
TnT
- Author(s): Thorsten Brants, Saarland University, Germany
- Purpose: TnT, the short form of Trigrams'n'Tags, is a very efficient statistical part-of-speech tagger that is trainable on different languages and virtually any tagset. The tagger is an implementation of the Viterbi algorithm for second order Markov models. TnT comes with two language models, one for German and one for English.
- Platforms: Platform independent
- Access: Free but requires registration at http://www.coli.uni-saarland.de/~thorsten/tnt/
TREETAGGER
- Author(s): Helmut Schmid, Institute for Computational Linguistics, University of Stuttgart, Germany
- Purpose: The TreeTagger has been successfully used to tag German, English, French, Italian, Spanish, Greek and old French texts and is easily adaptable to other languages if a lexicon and a manually tagged training corpus are available.
- Access: Free at http://www.ims.uni-stuttgart.de/projekte/corplex/TreeTagger/DecisionTreeTagger.html

POS Taggers (6)
Xerox XRCE MLTT Part Of Speech Taggers
- Author(s): Xerox Research Centre Europe
- Purpose: Xerox has developed morphological analysers and part-of-speech disambiguators for various languages including Dutch, English, French, German, Italian, Portuguese, Spanish. More recent developments include Czech, Hungarian, Polish and Russian.
- Access: Not free. Demos at http://www.xrce.xerox.com/competencies/content-analysis/fsnlp/tagger.en.html
YAMCHA
- Author(s): Taku Kudo
- Purpose: YamCha is a generic, customizable, open-source text chunker oriented toward many NLP tasks, such as POS tagging, Named Entity Recognition, base NP chunking, and text chunking. YamCha uses Support Vector Machines (SVMs), first introduced by Vapnik in 1995. YamCha is the same system that performed best in the CoNLL 2000 Shared Task (Chunking and BaseNP Chunking).
- Platforms: Linux, Windows
- Access: Free at http://www2.chasen.org/~taku/software/yamcha/

Stemming
- Stemmers are used in IR to reduce as many related words and word forms as possible to a common canonical form (not necessarily the base form), which can then be used in the retrieval process.
- Frequently, the performance of an IR system will be improved if term groups such as CONNECT, CONNECTED, CONNECTING, CONNECTIONS are conflated into a single term (by removal of the various suffixes -ED, -ING, -IONS to leave the single term CONNECT). The suffix stripping process will reduce the total number of terms in the IR system, and hence reduce the size and complexity of the data in the system, which is always advantageous.
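The conflation described above can be sketched in a few lines of Python. This is only an illustration of naive suffix stripping, not any of the stemmers listed in this deck: the suffix list, the minimum-stem-length guard and the function names `strip_suffix` and `conflate` are assumptions made for the example.

```python
# Suffixes from the slide, longest first so that "-ions" is tried before "-ed"/"-ing".
SUFFIXES = ("ions", "ing", "ed")


def strip_suffix(term):
    """Remove the first matching suffix, keeping at least three stem characters."""
    term = term.lower()
    for suffix in SUFFIXES:
        if term.endswith(suffix) and len(term) > len(suffix) + 2:
            return term[: -len(suffix)]
    return term


def conflate(terms):
    """Group terms by their stripped form, as an IR index would."""
    groups = {}
    for term in terms:
        groups.setdefault(strip_suffix(term), []).append(term)
    return groups


groups = conflate(["CONNECT", "CONNECTED", "CONNECTING", "CONNECTIONS"])
```

Here all four word forms end up under the single index term `connect`, while short words such as `red` or `sing` are left untouched by the length guard.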

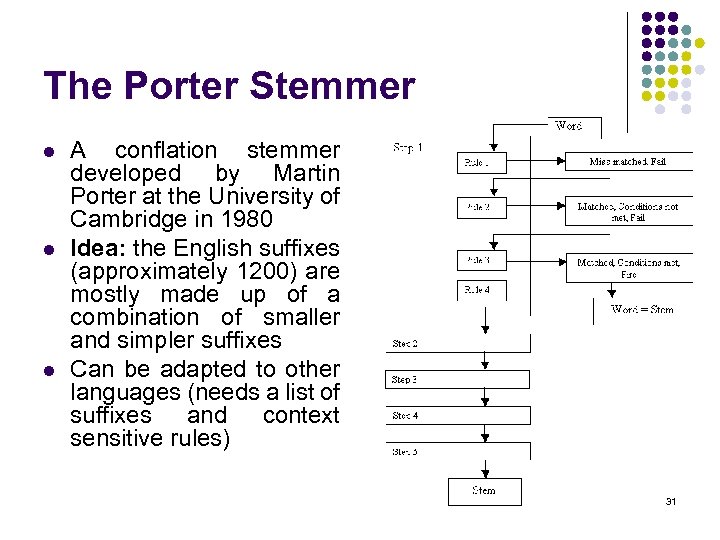

The Porter Stemmer
- A conflation stemmer developed by Martin Porter at the University of Cambridge in 1980
- Idea: the English suffixes (approximately 1200) are mostly made up of a combination of smaller and simpler suffixes
- Can be adapted to other languages (needs a list of suffixes and context-sensitive rules)
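To make "context-sensitive rules" concrete, the sketch below implements one rule in the style of Porter's algorithm: the suffix -EED is rewritten to -EE only when the remaining stem has measure m > 0, where m counts vowel-consonant sequences in the stem. It covers a single rule, not the full stemmer, and the helper names are chosen for this example.

```python
def is_consonant(word, i):
    """Porter's convention: a, e, i, o, u are vowels; y is a vowel after a consonant."""
    ch = word[i]
    if ch in "aeiou":
        return False
    if ch == "y":
        return i == 0 or not is_consonant(word, i - 1)
    return True


def measure(stem):
    """Number of VC sequences in the stem, i.e. m in the form [C](VC)^m[V]."""
    pattern = ""
    for i in range(len(stem)):
        tag = "C" if is_consonant(stem, i) else "V"
        if not pattern or pattern[-1] != tag:
            pattern += tag  # collapse runs of consonants or vowels
    return pattern.count("VC")


def apply_eed_rule(word):
    """Rule (m > 0) EED -> EE: the condition is checked on the stem before the suffix."""
    if word.endswith("eed") and measure(word[:-3]) > 0:
        return word[:-1]
    return word
```

With this rule, `agreed` becomes `agree` (stem `agr` has m = 1) while `feed` is left alone (stem `f` has m = 0). Adapting the approach to another language would mean replacing both the suffix list and the conditions.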

Stemmers (1)

ELLOGON
- Author(s): George Petasis, Vangelis Karkaletsis, National Center for Scientific Research, Greece
- Access: Free at http://www.ellogon.org/

FSA
- Author(s): Jan Daciuk, Rijksuniversiteit Groningen and Technical University of Gdansk, Poland
- Purpose: Supported languages: German, English, French, Polish.
- Access: Free at http://juggernaut.eti.pg.gda.pl/~jandac/fsa.html

HEART OF GOLD
- Author(s): Ulrich Schäfer, DFKI Language Technology Lab, Germany
- Purpose: Supported languages: Unicode, Spanish, Polish, Norwegian, Japanese, Italian, Greek, German, French, English, Chinese.
- Access: Free at http://heartofgold.dfki.de/

Stemmers (2)

LANGSUITE
- Author(s): PetaMem
- Purpose: Supported languages: Unicode, Spanish, Polish, Italian, Hungarian, German, French, English, Dutch, Danish, Czech.
- Access: Not free. More information at http://www.petamem.com/

SNOWBALL
- Purpose: Presentation of stemming algorithms, and Snowball stemmers, for English, Russian, Romance languages (French, Spanish, Portuguese and Italian), German, Dutch, Swedish, Norwegian, Danish and Finnish.
- Access: Free at http://www.snowball.tartarus.org/

SProUT
- Author(s): Feiyu Xu, Tim vor der Brück, LT-Lab, DFKI GmbH, Germany
- Purpose: Available for: Unicode, Spanish, Japanese, German, French, English, Chinese.
- Access: Not free. More information at http://sprout.dfki.de/

TWOL
- Author(s): Lingsoft
- Purpose: Supported languages: Swedish, Norwegian, German, Finnish, English, Danish.
- Access: Not free. More information at http://www.lingsoft.fi/

Lemmatization
- The process of grouping the inflected forms of a word together under a base form, or of recovering the base form from an inflected form, e.g. grouping the inflected forms COME, COMES, COMING, CAME under the base form COME
- Dictionary based
  - Input: token + POS
  - Output: lemma
  - Note: needs POS information
  - Example: left+v -> leave, left+a -> left
- It is the same as looking for a transformation to apply on a word to get its normalized form (word endings: what word suffix should be removed and/or added to get the normalized form) => lemmatization can be modeled as a machine learning problem
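A minimal sketch of both views of lemmatization follows: a dictionary keyed on (token, POS), with a fallback that applies "remove suffix / add suffix" transformations. The dictionary entries and rules are tiny hand-written assumptions for illustration; in the machine learning setting mentioned above, such transformations would be learned from annotated data rather than listed by hand.

```python
# Hypothetical toy dictionary: (token, POS) -> lemma.
LEMMA_DICT = {
    ("left", "v"): "leave",
    ("left", "a"): "left",
    ("came", "v"): "come",
    ("comes", "v"): "come",
    ("coming", "v"): "come",
}

# Hypothetical fallback rules per POS: (suffix to remove, suffix to add).
SUFFIX_RULES = {
    "n": [("ies", "y"), ("s", "")],
    "v": [("ing", ""), ("ed", ""), ("s", "")],
}


def lemmatize(token, pos):
    """Return the lemma for a token given its POS tag."""
    token = token.lower()
    if (token, pos) in LEMMA_DICT:
        return LEMMA_DICT[(token, pos)]
    for remove, add in SUFFIX_RULES.get(pos, []):
        if token.endswith(remove) and len(token) > len(remove) + 1:
            return token[: len(token) - len(remove)] + add
    return token  # unknown word: leave it unchanged
```

The POS argument is what separates `lemmatize("left", "v")` (giving `leave`) from `lemmatize("left", "a")` (giving `left`).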

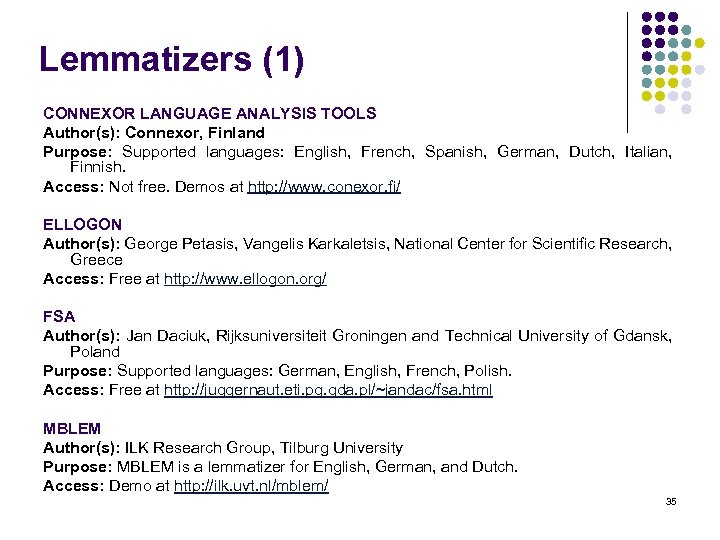

Lemmatizers (1)

CONNEXOR LANGUAGE ANALYSIS TOOLS
- Author(s): Connexor, Finland
- Purpose: Supported languages: English, French, Spanish, German, Dutch, Italian, Finnish.
- Access: Not free. Demos at http://www.conexor.fi/

ELLOGON
- Author(s): George Petasis, Vangelis Karkaletsis, National Center for Scientific Research, Greece
- Access: Free at http://www.ellogon.org/

FSA
- Author(s): Jan Daciuk, Rijksuniversiteit Groningen and Technical University of Gdansk, Poland
- Purpose: Supported languages: German, English, French, Polish.
- Access: Free at http://juggernaut.eti.pg.gda.pl/~jandac/fsa.html

MBLEM
- Author(s): ILK Research Group, Tilburg University
- Purpose: MBLEM is a lemmatizer for English, German, and Dutch.
- Access: Demo at http://ilk.uvt.nl/mblem/

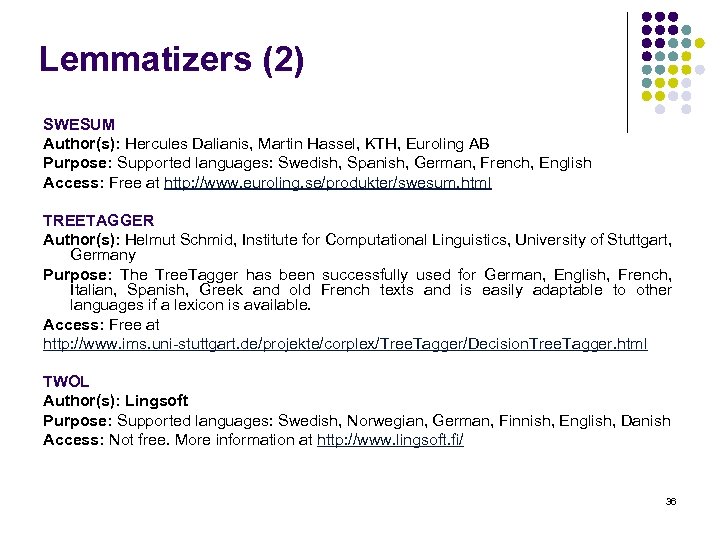

Lemmatizers (2)

SWESUM
- Author(s): Hercules Dalianis, Martin Hassel, KTH, Euroling AB
- Purpose: Supported languages: Swedish, Spanish, German, French, English.
- Access: Free at http://www.euroling.se/produkter/swesum.html

TREETAGGER
- Author(s): Helmut Schmid, Institute for Computational Linguistics, University of Stuttgart, Germany
- Purpose: The TreeTagger has been successfully used for German, English, French, Italian, Spanish, Greek and Old French texts and is easily adaptable to other languages if a lexicon is available.
- Access: Free at http://www.ims.uni-stuttgart.de/projekte/corplex/TreeTagger/DecisionTreeTagger.html

TWOL
- Author(s): Lingsoft
- Purpose: Supported languages: Swedish, Norwegian, German, Finnish, English, Danish.
- Access: Not free. More information at http://www.lingsoft.fi/

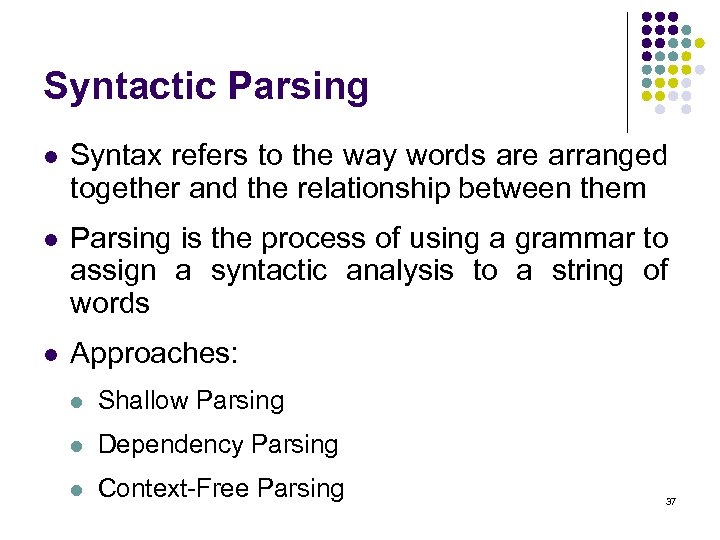

Syntactic Parsing
- Syntax refers to the way words are arranged together and the relationship between them
- Parsing is the process of using a grammar to assign a syntactic analysis to a string of words
- Approaches:
  - Shallow Parsing
  - Dependency Parsing
  - Context-Free Parsing

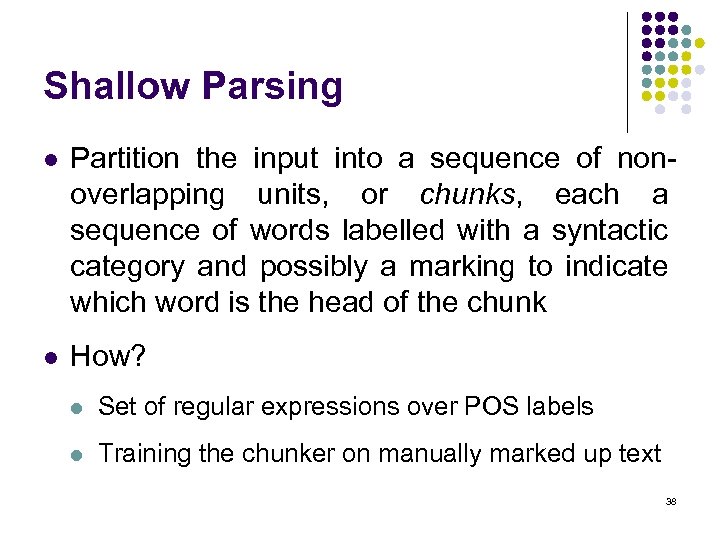

Shallow Parsing
- Partition the input into a sequence of non-overlapping units, or chunks, each a sequence of words labelled with a syntactic category and possibly a marking to indicate which word is the head of the chunk
- How?
  - Set of regular expressions over POS labels
  - Training the chunker on manually marked up text
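The first option, regular expressions over POS labels, can be sketched as follows. The Penn-Treebank-style tags (DT, JJ, NN), the single noun phrase pattern and the function name are assumptions for this example; a practical chunker would use a much richer rule set or a trained model.

```python
import re

# An NP chunk here is: optional determiner, any number of adjectives, one or more nouns.
# \b keeps a match from starting in the middle of a longer tag.
NP_PATTERN = re.compile(r"\b(?:DT )?(?:JJ )*(?:NN )+")


def chunk_noun_phrases(tagged):
    """Return the NP chunks of a POS-tagged sentence given as (word, tag) pairs."""
    tags = "".join(tag + " " for _, tag in tagged)
    # Map the character offset where each tag starts to its token index.
    start_to_index = {}
    offset = 0
    for index, (_, tag) in enumerate(tagged):
        start_to_index[offset] = index
        offset += len(tag) + 1
    chunks = []
    for match in NP_PATTERN.finditer(tags):
        first = start_to_index[match.start()]
        length = match.group().count(" ")  # one trailing space per matched tag
        chunks.append([word for word, _ in tagged[first:first + length]])
    return chunks


sentence = [("the", "DT"), ("little", "JJ"), ("dog", "NN"),
            ("chased", "VBD"), ("a", "DT"), ("cat", "NN")]
chunks = chunk_noun_phrases(sentence)
```

Because `finditer` returns non-overlapping matches, the resulting chunks are non-overlapping by construction; the verb `chased` falls outside every chunk.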

Dependency Parsing
- Based on dependency grammars, where a syntactic analysis takes the form of a set of head-modifier dependency links between words, each link labelled with the grammatical function of the modifying word with respect to the head
- Parser first labels each word with all possible function types and then applies handwritten rules to introduce links between specific types and remove other function-type readings
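As a sketch of the output representation only (not of the rule-based parser itself), a dependency analysis can be stored as a set of labelled head-modifier links. The `Link` type, the function labels `subj` and `det`, and the well-formedness check are assumptions for illustration; the example also assumes every word occurs once, whereas a real system would use token positions.

```python
from typing import NamedTuple


class Link(NamedTuple):
    head: str
    modifier: str
    function: str  # grammatical function of the modifier with respect to the head


def roots(words, links):
    """Words that modify nothing; a complete analysis has exactly one."""
    modifiers = [link.modifier for link in links]
    return [word for word in words if word not in modifiers]


def is_well_formed(words, links):
    """Every word has at most one head and the sentence has a single root."""
    modifiers = [link.modifier for link in links]
    single_head = all(modifiers.count(word) <= 1 for word in words)
    return single_head and len(roots(words, links)) == 1


words = ["a", "flight", "left"]
links = [Link("left", "flight", "subj"), Link("flight", "a", "det")]
```

For this sentence the verb `left` is the root, `flight` is its subject and `a` is the determiner of `flight`.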

Context-Free (CF) Parsing
- CF parsing algorithms form the basis for almost all approaches to parsing that build hierarchical phrase structure
- CFG example:
  - S -> NP VP
  - NP -> Det NOMINAL
  - NOMINAL -> Noun
  - VP -> Verb
  - Det -> a
  - Noun -> flight
  - Verb -> left
- A derivation is a sequence of rules applied to a string that accounts for that string (derivation tree)
- Parsing is the process of taking a string and a grammar and returning one (or more?) parse tree(s) for that string
- Treebanks = parsed corpora in the form of trees
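The toy grammar above is small enough to parse with a naive top-down, backtracking parser. The sketch below is one possible way to do it, assuming the grammar has no left-recursive rules (true of this example); it is not an efficient algorithm such as CKY or Earley. Trees are represented as nested `(label, children)` tuples, which is a choice made for this example.

```python
GRAMMAR = {
    "S": [["NP", "VP"]],
    "NP": [["Det", "NOMINAL"]],
    "NOMINAL": [["Noun"]],
    "VP": [["Verb"]],
    "Det": [["a"]],
    "Noun": [["flight"]],
    "Verb": [["left"]],
}


def parse(symbol, words, pos):
    """Yield (tree, next_pos) for every way `symbol` can cover words[pos:next_pos]."""
    if symbol not in GRAMMAR:  # terminal: must match the next word
        if pos < len(words) and words[pos] == symbol:
            yield symbol, pos + 1
        return
    for rhs in GRAMMAR[symbol]:
        yield from expand(symbol, rhs, words, pos, [])


def expand(symbol, rhs, words, pos, children):
    """Match the right-hand side symbols one after another, left to right."""
    if not rhs:
        yield (symbol, children), pos
        return
    for child, next_pos in parse(rhs[0], words, pos):
        yield from expand(symbol, rhs[1:], words, next_pos, children + [child])


def parse_sentence(sentence):
    """Return all parse trees rooted in S that cover the whole sentence."""
    words = sentence.split()
    return [tree for tree, end in parse("S", words, 0) if end == len(words)]


trees = parse_sentence("a flight left")
```

The string "a flight left" has exactly one parse tree under this grammar, while "flight a left" has none because no derivation accounts for it.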

Probabilistic CFGs
- Assigning probabilities to parse trees
  - Attach probabilities to grammar rules
  - The expansions for a given non-terminal sum to 1
  - A derivation (tree) consists of the set of grammar rules that are in the tree
  - The probability of a tree is just the product of the probabilities of the rules in the derivation
- Needed: grammar, dictionary with POS, parser
- Task is to find the max probability tree for an input
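The two constraints above (expansions sum to 1, tree probability is a product of rule probabilities) can be checked with a short sketch. The rule probabilities and the extra rules `NP -> NOMINAL` and `Noun -> trip` are invented for illustration; in practice they would be estimated from a treebank. Trees use a nested `(label, children)` tuple representation chosen for this example.

```python
from collections import defaultdict
from math import isclose

# Hypothetical probabilities: (left-hand side, right-hand side) -> P(rule).
RULE_PROBS = {
    ("S", ("NP", "VP")): 1.0,
    ("NP", ("Det", "NOMINAL")): 0.6,
    ("NP", ("NOMINAL",)): 0.4,
    ("NOMINAL", ("Noun",)): 1.0,
    ("VP", ("Verb",)): 1.0,
    ("Det", ("a",)): 1.0,
    ("Noun", ("flight",)): 0.5,
    ("Noun", ("trip",)): 0.5,
    ("Verb", ("left",)): 1.0,
}


def is_normalized(rule_probs):
    """Check that the expansions of every non-terminal sum to 1."""
    totals = defaultdict(float)
    for (lhs, _), prob in rule_probs.items():
        totals[lhs] += prob
    return all(isclose(total, 1.0) for total in totals.values())


def tree_probability(tree):
    """Product of the probabilities of all rules used in the tree."""
    if isinstance(tree, str):  # a word contributes no rule of its own
        return 1.0
    label, children = tree
    rhs = tuple(c if isinstance(c, str) else c[0] for c in children)
    prob = RULE_PROBS[(label, rhs)]
    for child in children:
        prob *= tree_probability(child)
    return prob


tree = ("S", [("NP", [("Det", ["a"]), ("NOMINAL", [("Noun", ["flight"])])]),
              ("VP", [("Verb", ["left"])])])
probability = tree_probability(tree)
```

For this tree the only rules with probability below 1 are `NP -> Det NOMINAL` (0.6) and `Noun -> flight` (0.5), so the tree probability is 0.6 × 0.5 = 0.3. Finding the maximum probability tree means computing this quantity for every candidate parse and keeping the largest.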

Noun Phrase (NP) Chunkers

fnTBL
- Author(s): Radu Florian and Grace Ngai, Johns Hopkins University, USA
- Purpose: fnTBL is a customizable, portable and free source machine-learning toolkit primarily oriented towards Natural Language-related tasks (POS tagging, base NP chunking, text chunking, end-of-sentence detection, word sense disambiguation). It is currently trained for English and Swedish.
- Platforms: Linux, Solaris, Windows
- Access: Free at http://nlp.cs.jhu.edu/~rflorian/fntbl/

YAMCHA
- Author(s): Taku Kudo
- Purpose: YamCha is a generic, customizable, open source text chunker oriented toward many NLP tasks, such as POS tagging, Named Entity Recognition, base NP chunking, and text chunking. YamCha uses Support Vector Machines (SVMs), first introduced by Vapnik in 1995. YamCha is exactly the same system that performed best in the CoNLL 2000 Shared Task (Chunking and BaseNP Chunking).
- Platforms: Linux, Windows
- Access: Free at http://www2.chasen.org/~taku/software/yamcha/

Syntactic parsers

MACHINESE PHRASE TAGGER
- Author(s): Connexor
- Purpose: Machinese Phrase Tagger is a set of program components that perform basic linguistic analysis tasks at very high speed and provide relevant information about words and concepts to volume-intensive applications. Available for: English, French, Spanish, German, Dutch, Italian, Finnish.
- Access: Not free. Free access to online demo at http://www.connexor.com/demo/tagger/

Named Entity Recognition
- Identification of proper names in texts, and their classification into a set of predefined categories of interest:
  - entities: organizations, persons, locations
  - temporal expressions: time, date
  - quantities: monetary values, percentages, numbers
- Two kinds of approaches

Knowledge Engineering
- rule based
- developed by experienced language engineers
- make use of human intuition
- small amount of training data
- very time consuming
- some changes may be hard to accommodate

Learning Systems
- use statistics or other machine learning
- developers do not need LE expertise
- require large amounts of annotated training data
- some changes may require re-annotation of the entire training corpus

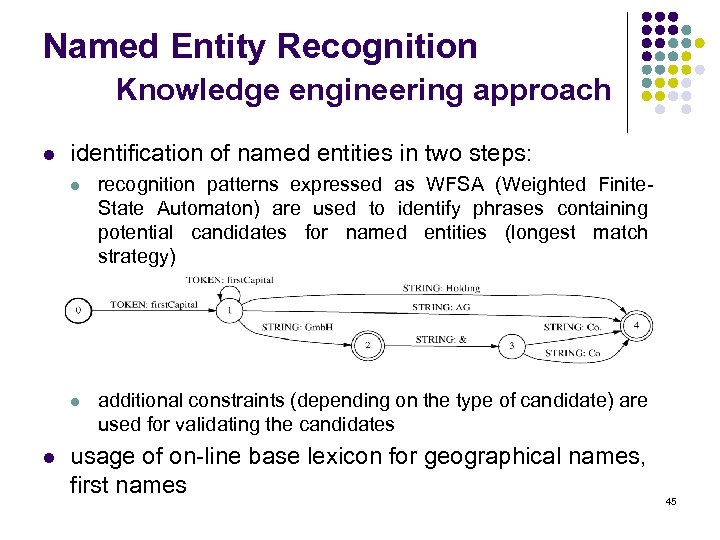

Named Entity Recognition: Knowledge engineering approach
- Identification of named entities in two steps:
  - recognition patterns expressed as WFSA (Weighted Finite-State Automata) are used to identify phrases containing potential candidates for named entities (longest match strategy)
  - additional constraints (depending on the type of candidate) are used for validating the candidates
- Usage of on-line base lexicon for geographical names, first names

Named Entity Recognition: Problems
- Variation of NEs, e.g. John Smith, Mr. Smith, John
- Since named entities may appear without designators (companies, persons), a dynamic lexicon for storing such named entities is used. Example: "Mars Ltd is a wholly-owned subsidiary of Food Manufacturing Ltd, a nontrading company registered in England. Mars is controlled by members of the Mars family."
- Resolution of type ambiguity using the dynamic lexicon: if an expression can be a person name or a company name (Martin Marietta Corp.), then use the type of the last entry inserted into the dynamic lexicon to make the decision.
- Issues of style, structure, domain, genre etc.
- Punctuation, spelling, spacing, formatting
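The dynamic lexicon idea can be sketched with plain regular expressions standing in for the weighted finite-state patterns. Everything here is an assumption made for illustration: the designator list, the capitalized-word pattern and the function name. The first pass finds names with a designator and stores the bare name; the second pass uses the stored names to tag later mentions that appear without a designator.

```python
import re

DESIGNATORS = r"(?:Ltd|Corp\.|Inc\.)"
# One or more capitalized words followed by a company designator (longest match).
FULL_NAME = re.compile(r"\b((?:[A-Z][a-z]+ )+)" + DESIGNATORS)


def recognize_companies(text):
    """Return the dynamic lexicon and all company mentions in text order."""
    dynamic_lexicon = {}
    mentions = []
    # Pass 1: names with a designator; remember the bare name for later.
    for match in FULL_NAME.finditer(text):
        bare_name = match.group(1).strip()
        dynamic_lexicon[bare_name] = "COMPANY"
        mentions.append((match.start(), match.group(0)))
    # Pass 2: bare mentions of names already in the dynamic lexicon.
    for bare_name in dynamic_lexicon:
        bare = re.compile(r"\b" + re.escape(bare_name) + r"\b(?! " + DESIGNATORS + ")")
        for match in bare.finditer(text):
            mentions.append((match.start(), match.group(0)))
    return dynamic_lexicon, [name for _, name in sorted(mentions)]


TEXT = ("Mars Ltd is a wholly-owned subsidiary of Food Manufacturing Ltd, "
        "a nontrading company registered in England. "
        "Mars is controlled by members of the Mars family.")
lexicon, mentions = recognize_companies(TEXT)
```

On the example text this tags both later occurrences of "Mars" as a company, including the one in "the Mars family". That is the type ambiguity described above: the sketch simply applies the type stored in the lexicon, and a fuller system would add validation constraints for such cases.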

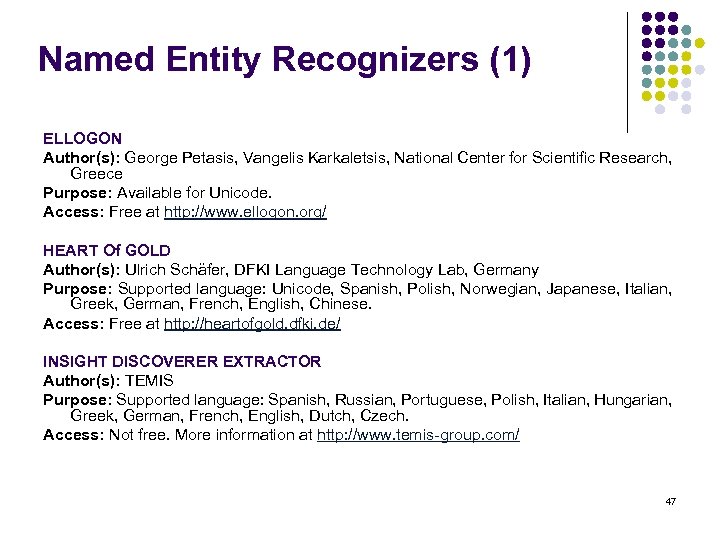

Named Entity Recognizers (1)

ELLOGON
- Author(s): George Petasis, Vangelis Karkaletsis, National Center for Scientific Research, Greece
- Purpose: Available for Unicode.
- Access: Free at http://www.ellogon.org/

HEART OF GOLD
- Author(s): Ulrich Schäfer, DFKI Language Technology Lab, Germany
- Purpose: Supported languages: Unicode, Spanish, Polish, Norwegian, Japanese, Italian, Greek, German, French, English, Chinese.
- Access: Free at http://heartofgold.dfki.de/

INSIGHT DISCOVERER EXTRACTOR
- Author(s): TEMIS
- Purpose: Supported languages: Spanish, Russian, Portuguese, Polish, Italian, Hungarian, Greek, German, French, English, Dutch, Czech.
- Access: Not free. More information at http://www.temis-group.com/

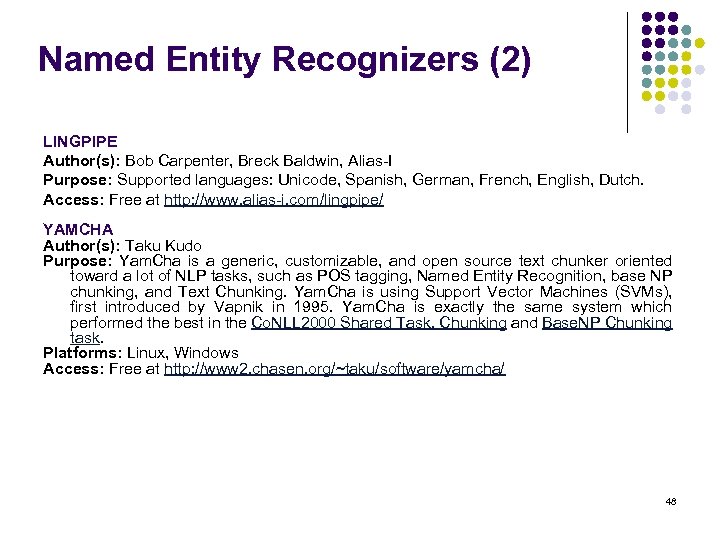

Named Entity Recognizers (2)

LINGPIPE
- Author(s): Bob Carpenter, Breck Baldwin, Alias-I
- Purpose: Supported languages: Unicode, Spanish, German, French, English, Dutch.
- Access: Free at http://www.alias-i.com/lingpipe/

YAMCHA
- Author(s): Taku Kudo
- Purpose: YamCha is a generic, customizable, open source text chunker oriented toward many NLP tasks, such as POS tagging, Named Entity Recognition, base NP chunking, and text chunking. YamCha uses Support Vector Machines (SVMs), first introduced by Vapnik in 1995. YamCha is exactly the same system that performed best in the CoNLL 2000 Shared Task (Chunking and BaseNP Chunking).
- Platforms: Linux, Windows
- Access: Free at http://www2.chasen.org/~taku/software/yamcha/

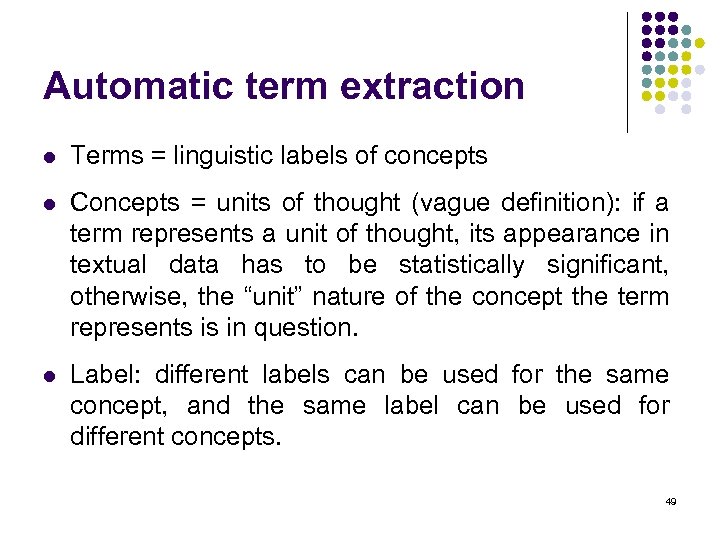

Automatic term extraction
- Terms = linguistic labels of concepts
- Concepts = units of thought (vague definition): if a term represents a unit of thought, its appearance in textual data has to be statistically significant; otherwise, the "unit" nature of the concept the term represents is in question.
- Label: different labels can be used for the same concept, and the same label can be used for different concepts.

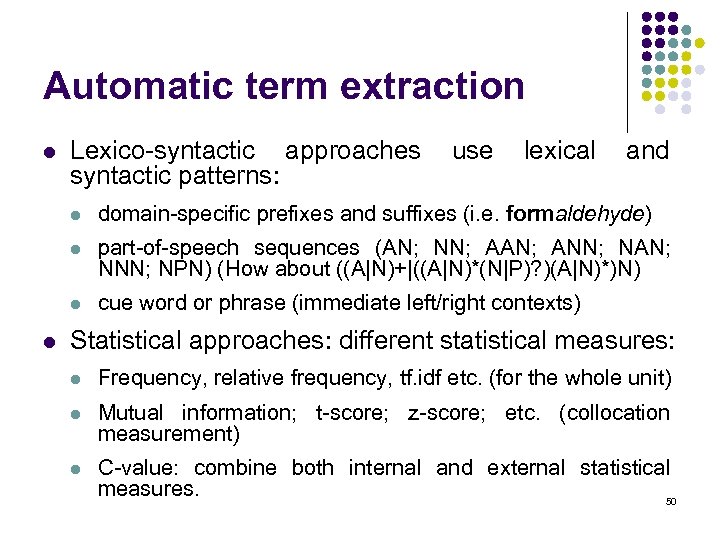

Automatic term extraction
- Lexico-syntactic approaches: use lexical and syntactic patterns
  - part-of-speech sequences (AN; NN; AAN; ANN; NAN; NNN; NPN) (How about ((A|N)+|((A|N)*(N|P)?)(A|N)*)N ?)
  - domain-specific prefixes and suffixes (e.g. formaldehyde)
  - cue word or phrase (immediate left/right contexts)
- Statistical approaches: different statistical measures
  - Frequency, relative frequency, tf.idf etc. (for the whole unit)
  - Mutual information; t-score; z-score; etc. (collocation measurement)
  - C-value: combine both internal and external statistical measures
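A minimal sketch combining the two families of approaches: candidate terms are word bigrams and trigrams whose part-of-speech sequence is one of the patterns listed above (A = adjective, N = noun, P = preposition), and each candidate is then scored by raw frequency. The single-letter tagset, the tag D for determiners and the function name are assumptions for this example; other measures such as tf.idf or mutual information would replace the plain count.

```python
from collections import Counter

# The POS sequences from the slide.
TERM_PATTERNS = {"AN", "NN", "AAN", "ANN", "NAN", "NNN", "NPN"}


def candidate_terms(tagged_sentences):
    """Count every 2- or 3-word window whose tag sequence is a term pattern."""
    counts = Counter()
    for sentence in tagged_sentences:
        for size in (2, 3):
            for start in range(len(sentence) - size + 1):
                window = sentence[start:start + size]
                if "".join(tag for _, tag in window) in TERM_PATTERNS:
                    counts[" ".join(word.lower() for word, _ in window)] += 1
    return counts


corpus = [
    [("statistical", "A"), ("language", "N"), ("model", "N")],
    [("the", "D"), ("language", "N"), ("model", "N"), ("of", "P"), ("speech", "N")],
]
counts = candidate_terms(corpus)
```

On this toy corpus "language model" is found twice, and "statistical language", "statistical language model" and "model of speech" once each. Nested candidates such as "language model" inside "statistical language model" are exactly what measures like C-value are meant to handle.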

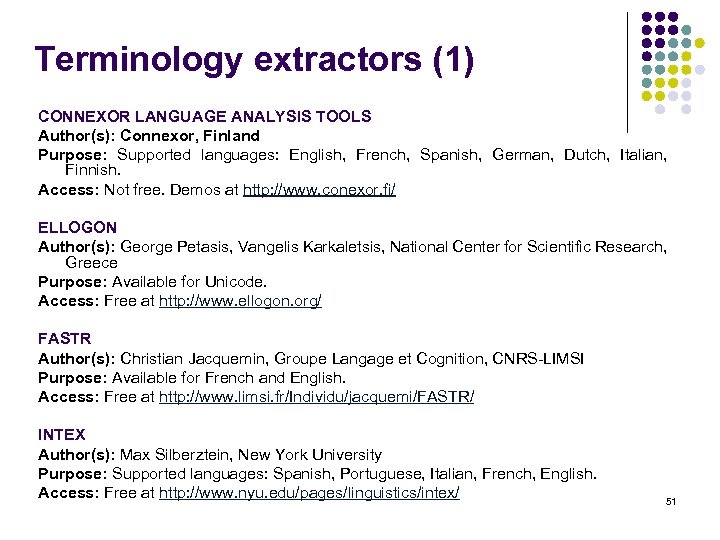

Terminology extractors (1)

CONNEXOR LANGUAGE ANALYSIS TOOLS
- Author(s): Connexor, Finland
- Purpose: Supported languages: English, French, Spanish, German, Dutch, Italian, Finnish.
- Access: Not free. Demos at http://www.conexor.fi/

ELLOGON
- Author(s): George Petasis, Vangelis Karkaletsis, National Center for Scientific Research, Greece
- Purpose: Available for Unicode.
- Access: Free at http://www.ellogon.org/

FASTR
- Author(s): Christian Jacquemin, Groupe Langage et Cognition, CNRS-LIMSI
- Purpose: Available for French and English.
- Access: Free at http://www.limsi.fr/Individu/jacquemi/FASTR/

INTEX
- Author(s): Max Silberztein, New York University
- Purpose: Supported languages: Spanish, Portuguese, Italian, French, English.
- Access: Free at http://www.nyu.edu/pages/linguistics/intex/

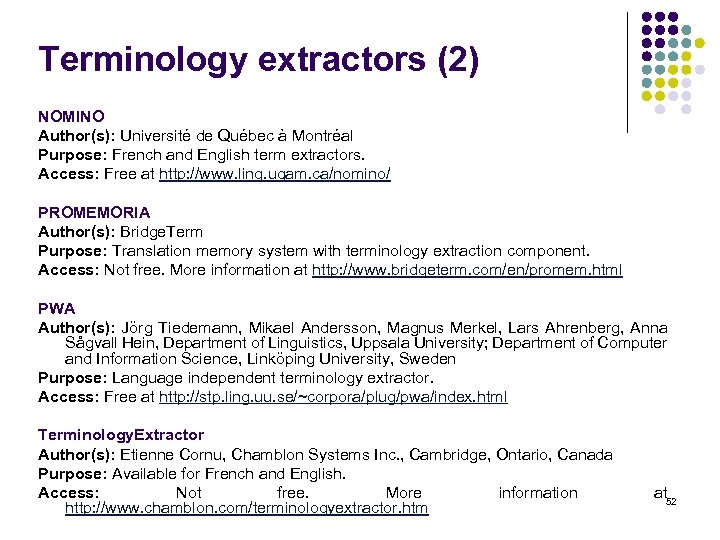

Terminology extractors (2)

NOMINO
- Author(s): Université de Québec à Montréal
- Purpose: French and English term extractors.
- Access: Free at http://www.ling.uqam.ca/nomino/

PROMEMORIA
- Author(s): BridgeTerm
- Purpose: Translation memory system with terminology extraction component.
- Access: Not free. More information at http://www.bridgeterm.com/en/promem.html

PWA
- Author(s): Jörg Tiedemann, Mikael Andersson, Magnus Merkel, Lars Ahrenberg, Anna Sågvall Hein, Department of Linguistics, Uppsala University; Department of Computer and Information Science, Linköping University, Sweden
- Purpose: Language independent terminology extractor.
- Access: Free at http://stp.ling.uu.se/~corpora/plug/pwa/index.html

TerminologyExtractor
- Author(s): Etienne Cornu, Chamblon Systems Inc., Cambridge, Ontario, Canada
- Purpose: Available for French and English.
- Access: Not free. More information at http://www.chamblon.com/terminologyextractor.htm

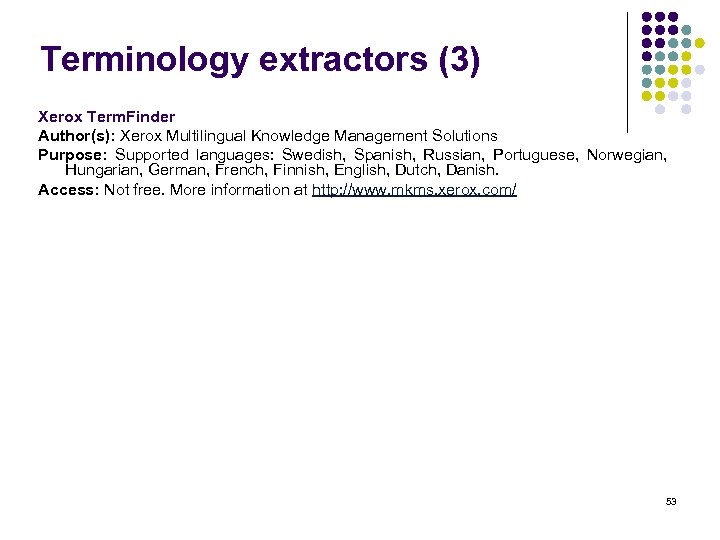

Terminology extractors (3)

Xerox TermFinder
- Author(s): Xerox Multilingual Knowledge Management Solutions
- Purpose: Supported languages: Swedish, Spanish, Russian, Portuguese, Norwegian, Hungarian, German, French, Finnish, English, Dutch, Danish.
- Access: Not free. More information at http://www.mkms.xerox.com/

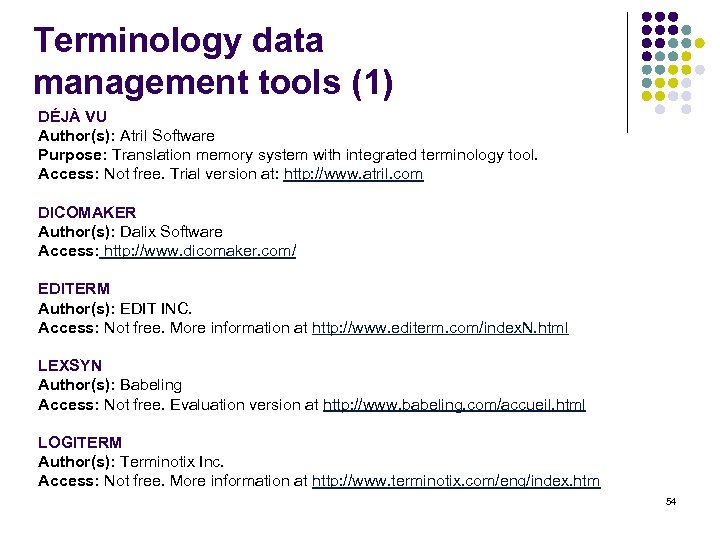

Terminology data management tools (1)

DÉJÀ VU
- Author(s): Atril Software
- Purpose: Translation memory system with integrated terminology tool.
- Access: Not free. Trial version at http://www.atril.com

DICOMAKER
- Author(s): Dalix Software
- Access: http://www.dicomaker.com/

EDITERM
- Author(s): EDIT INC.
- Access: Not free. More information at http://www.editerm.com/index.N.html

LEXSYN
- Author(s): Babeling
- Access: Not free. Evaluation version at http://www.babeling.com/accueil.html

LOGITERM
- Author(s): Terminotix Inc.
- Access: Not free. More information at http://www.terminotix.com/eng/index.htm

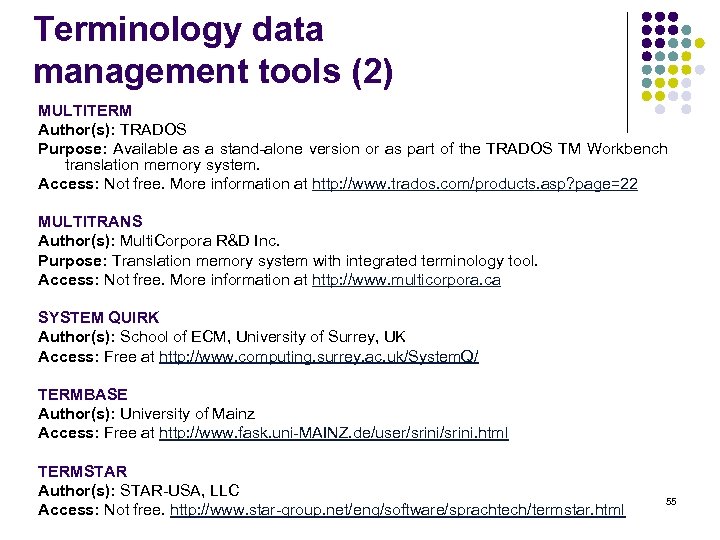

Terminology data management tools (2)

MULTITERM
- Author(s): TRADOS
- Purpose: Available as a stand-alone version or as part of the TRADOS TM Workbench translation memory system.
- Access: Not free. More information at http://www.trados.com/products.asp?page=22

MULTITRANS
- Author(s): MultiCorpora R&D Inc.
- Purpose: Translation memory system with integrated terminology tool.
- Access: Not free. More information at http://www.multicorpora.ca

SYSTEM QUIRK
- Author(s): School of ECM, University of Surrey, UK
- Access: Free at http://www.computing.surrey.ac.uk/SystemQ/

TERMBASE
- Author(s): University of Mainz
- Access: Free at http://www.fask.uni-MAINZ.de/user/srini.html

TERMSTAR
- Author(s): STAR-USA, LLC
- Access: Not free. http://www.star-group.net/eng/software/sprachtech/termstar.html

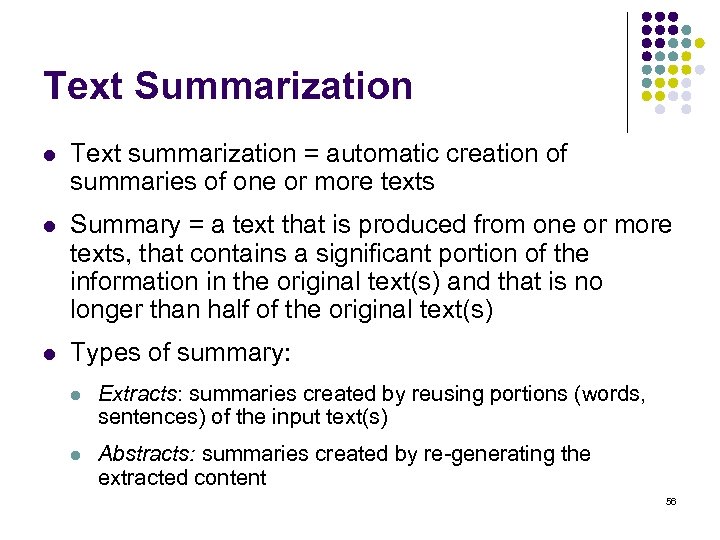

Text Summarization
- Text summarization = automatic creation of summaries of one or more texts
- Summary = a text that is produced from one or more texts, that contains a significant portion of the information in the original text(s), and that is no longer than half of the original text(s)
- Types of summary:
  - Extracts: summaries created by reusing portions (words, sentences) of the input text(s)
  - Abstracts: summaries created by re-generating the extracted content

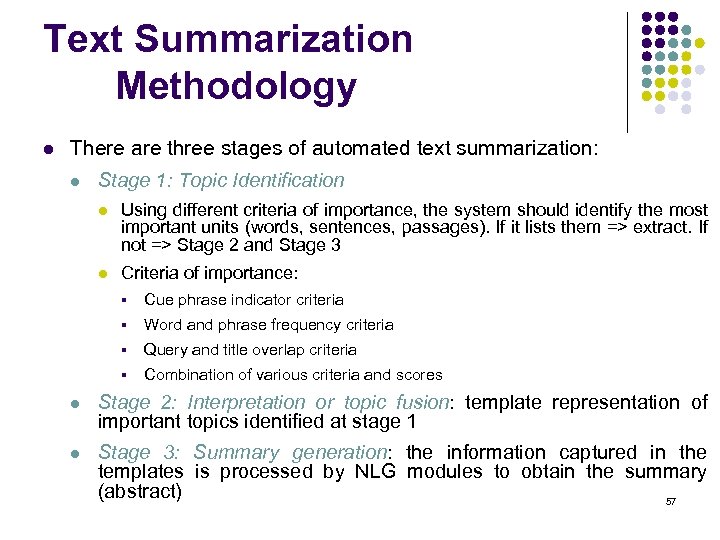

Text Summarization Methodology
- There are three stages of automated text summarization:
  - Stage 1: Topic identification
    - Using different criteria of importance, the system identifies the most important units (words, sentences, passages). If it simply lists these units, the result is an extract; otherwise processing continues with Stages 2 and 3.
    - Criteria of importance:
      - Cue phrase indicator criteria
      - Word and phrase frequency criteria
      - Query and title overlap criteria
      - Combination of various criteria and scores
  - Stage 2: Interpretation or topic fusion: template representation of the important topics identified at Stage 1
  - Stage 3: Summary generation: the information captured in the templates is processed by NLG modules to obtain the summary (abstract)
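
As an illustration of Stage 1 only, the sketch below scores sentences by combining two of the criteria listed above (word frequency and title overlap) and returns the top-scoring sentences as an extract. The function names, the `title_weight` parameter, and the scoring formula are assumptions made for this example; real summarizers typically also filter stop words and use cue phrases.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and return its alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def summarize(text, title="", max_sentences=1, title_weight=1.0):
    """Return the highest-scoring sentences, in their original order."""
    # Naive sentence splitting on ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(w for s in sentences for w in tokenize(s))
    title_words = set(tokenize(title))

    def score(sentence):
        tokens = tokenize(sentence)
        if not tokens:
            return 0.0
        # Frequency criterion: average document frequency of the words.
        frequency = sum(freq[w] for w in tokens) / len(tokens)
        # Title overlap criterion: number of title words in the sentence.
        overlap = len(title_words & set(tokens))
        return frequency + title_weight * overlap

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:max_sentences])  # restore document order
    return [sentences[i] for i in chosen]
```

For example, `summarize("Cats sleep. Cats chase mice. Dogs bark.", title="Dogs")` selects the sentence about dogs, because the title overlap outweighs the frequency score of the other sentences.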

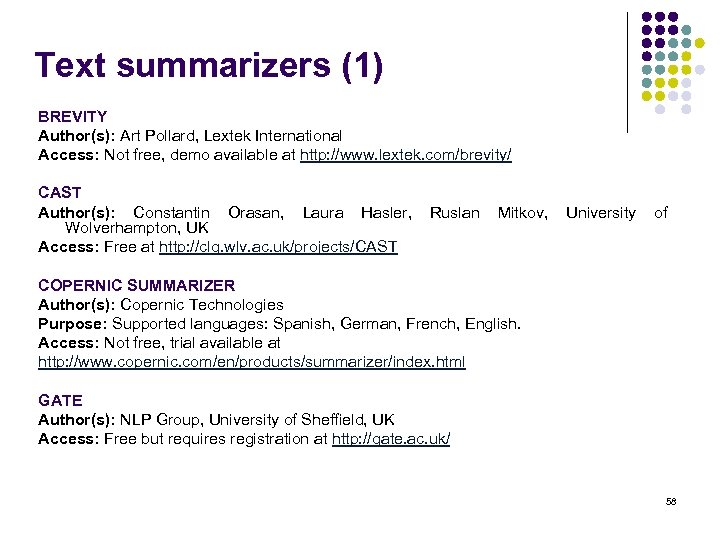

Text summarizers (1)

BREVITY
- Author(s): Art Pollard, Lextek International
- Access: Not free, demo available at http://www.lextek.com/brevity/

CAST
- Author(s): Constantin Orasan, Laura Hasler, Ruslan Mitkov, University of Wolverhampton, UK
- Access: Free at http://clg.wlv.ac.uk/projects/CAST

COPERNIC SUMMARIZER
- Author(s): Copernic Technologies
- Purpose: Supported languages: Spanish, German, French, English.
- Access: Not free, trial available at http://www.copernic.com/en/products/summarizer/index.html

GATE
- Author(s): NLP Group, University of Sheffield, UK
- Access: Free but requires registration at http://gate.ac.uk/

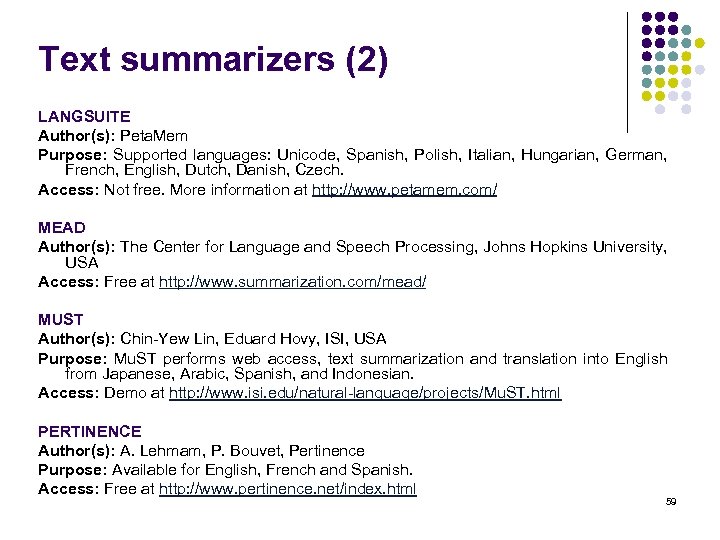

Text summarizers (2)

LANGSUITE
- Author(s): PetaMem
- Purpose: Supported languages: Unicode, Spanish, Polish, Italian, Hungarian, German, French, English, Dutch, Danish, Czech.
- Access: Not free. More information at http://www.petamem.com/

MEAD
- Author(s): The Center for Language and Speech Processing, Johns Hopkins University, USA
- Access: Free at http://www.summarization.com/mead/

MUST
- Author(s): Chin-Yew Lin, Eduard Hovy, ISI, USA
- Purpose: MuST performs web access, text summarization and translation into English from Japanese, Arabic, Spanish, and Indonesian.
- Access: Demo at http://www.isi.edu/natural-language/projects/MuST.html

PERTINENCE
- Author(s): A. Lehmam, P. Bouvet, Pertinence
- Purpose: Available for English, French and Spanish.
- Access: Free at http://www.pertinence.net/index.html

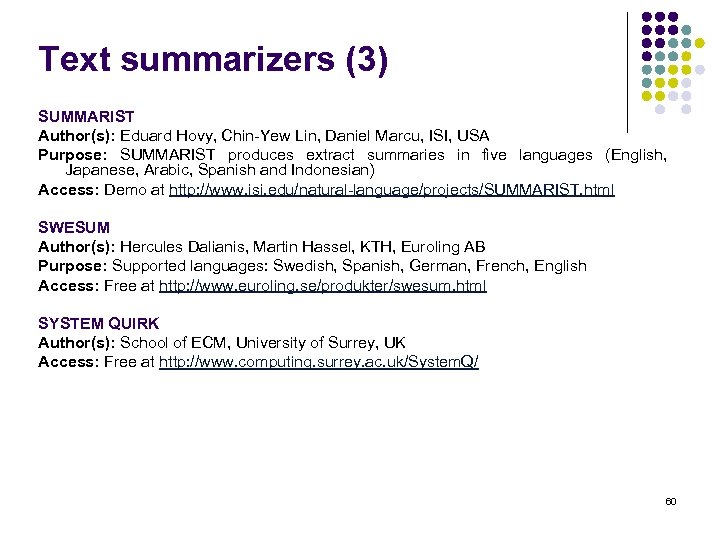

Text summarizers (3)

SUMMARIST
- Author(s): Eduard Hovy, Chin-Yew Lin, Daniel Marcu, ISI, USA
- Purpose: SUMMARIST produces extract summaries in five languages (English, Japanese, Arabic, Spanish and Indonesian).
- Access: Demo at http://www.isi.edu/natural-language/projects/SUMMARIST.html

SWESUM
- Author(s): Hercules Dalianis, Martin Hassel, KTH, Euroling AB
- Purpose: Supported languages: Swedish, Spanish, German, French, English.
- Access: Free at http://www.euroling.se/produkter/swesum.html

SYSTEM QUIRK
- Author(s): School of ECM, University of Surrey, UK
- Access: Free at http://www.computing.surrey.ac.uk/SystemQ/

Language Identification
- The task of detecting the language a text is written in.
- Identifying the language of a text from some of the text’s attributes is a typical classification problem.
- Two approaches to language identification:
  - Short words (articles, prepositions, etc.)
  - N-grams (sequences of n letters). Best results are obtained for trigrams (3 letters).
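
The short-word approach can be sketched as a simple lookup: count how many tokens of the input belong to each language's list of frequent short words and pick the language with the most hits. The word lists below are tiny hypothetical samples chosen for illustration, and `guess_by_short_words` is an assumed name, not part of any tool listed in these slides.

```python
# Hypothetical, deliberately tiny lists of frequent short words per language.
SHORT_WORDS = {
    "english": {"the", "of", "and", "to", "in", "is"},
    "french": {"le", "la", "les", "de", "et", "est"},
    "german": {"der", "die", "das", "und", "ist", "zu"},
}


def guess_by_short_words(text):
    """Return the language whose short words occur most often, or None."""
    tokens = text.lower().split()
    hits = {
        lang: sum(token in words for token in tokens)
        for lang, words in SHORT_WORDS.items()
    }
    best = max(hits, key=hits.get)
    # No hit at all means the text gives no evidence for any language.
    return best if hits[best] > 0 else None
```

A call such as `guess_by_short_words("le chat est dans la maison")` matches three French short words and none from the other lists. Very short inputs often contain no listed word at all, which is one reason the n-gram approach tends to be preferred.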

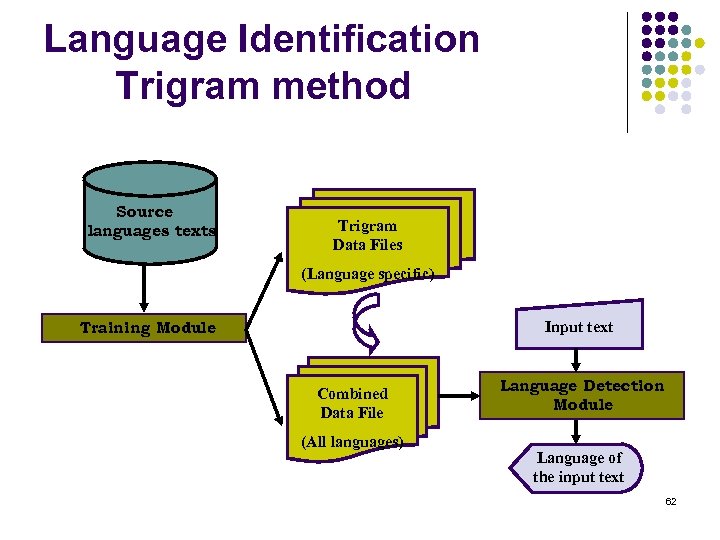

Language Identification: Trigram method (diagram)
- Training: Source language texts -> Training Module -> Trigram Data Files (language specific) -> Combined Data File (all languages)
- Detection: Input text + Combined Data File -> Language Detection Module -> Language of the input text

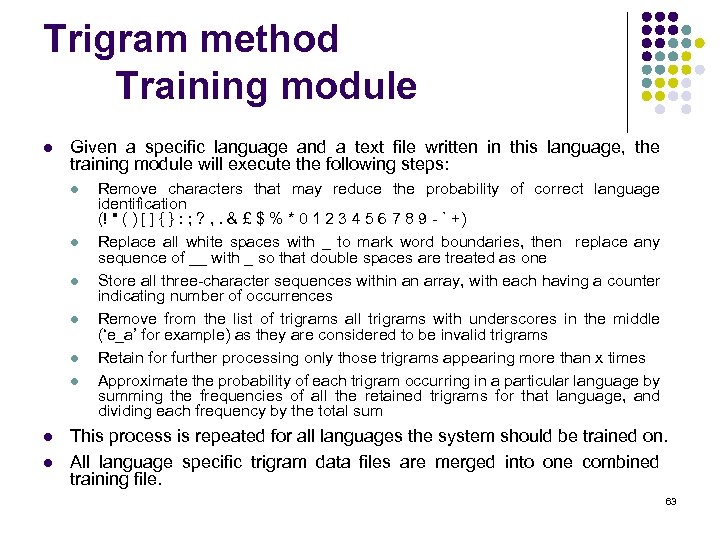

Trigram method: Training module
- Given a specific language and a text file written in this language, the training module executes the following steps:
  1. Remove characters that may reduce the probability of correct language identification (! " ( ) [ ] { } : ; ? , . & £ $ % * 0 1 2 3 4 5 6 7 8 9 - ` +)
  2. Replace all white spaces with _ to mark word boundaries, then replace any sequence of __ with _ so that double spaces are treated as one
  3. Store all three-character sequences in an array, each with a counter indicating its number of occurrences
  4. Remove from the list all trigrams with an underscore in the middle (‘e_a’ for example), as they are considered invalid
  5. Retain for further processing only those trigrams appearing more than x times
  6. Approximate the probability of each trigram occurring in the language by summing the frequencies of all retained trigrams for that language and dividing each frequency by the total sum
- This process is repeated for all languages the system should be trained on.
- All language-specific trigram data files are merged into one combined training file.
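
The training steps above can be sketched as follows. This is a minimal illustration, not the original implementation: the function names and the `min_count` parameter (standing in for the threshold x) are assumptions, and the sketch trims leading and trailing whitespace, which the slide does not specify.

```python
from collections import Counter

# Characters the slide lists as harmful to identification (step 1).
NOISE = set('!"()[]{}:;?,.&£$%*0123456789-`+')


def normalize(text):
    """Steps 1-2: drop noise characters, mark word boundaries with '_'."""
    kept = "".join(ch for ch in text if ch not in NOISE)
    # split() collapses any run of whitespace, so "__" can never occur.
    return "_".join(kept.split())


def extract_trigrams(text):
    """Steps 3-4: all three-character windows without '_' in the middle."""
    s = normalize(text)
    return [s[i:i + 3] for i in range(len(s) - 2) if s[i + 1] != "_"]


def train(text, min_count=0):
    """Steps 5-6: keep trigrams seen more than min_count times and
    convert their counts to relative frequencies."""
    counts = Counter(extract_trigrams(text))
    kept = {t: c for t, c in counts.items() if c > min_count}
    total = sum(kept.values())
    return {t: c / total for t, c in kept.items()} if total else {}
```

Calling `train` once per language and storing the results in a dictionary keyed by language name plays the role of the combined training file.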

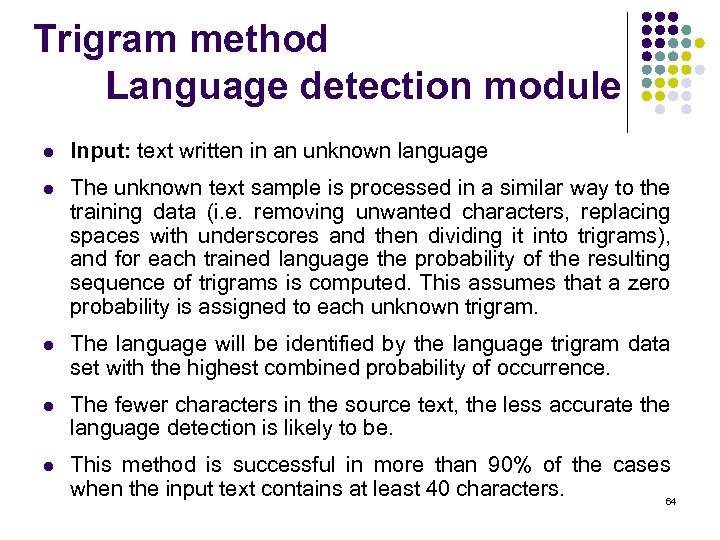

Trigram method: Language detection module
- Input: text written in an unknown language
- The unknown text sample is processed in a similar way to the training data (i.e. removing unwanted characters, replacing spaces with underscores and then dividing it into trigrams), and for each trained language the probability of the resulting sequence of trigrams is computed. This assumes that a zero probability is assigned to each unknown trigram.
- The language is identified by the language trigram data set with the highest combined probability of occurrence.
- The fewer characters in the source text, the less accurate the language detection is likely to be.
- This method is successful in more than 90% of the cases when the input text contains at least 40 characters.
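
A minimal sketch of the detection step is given below. The slide does not say how the per-trigram probabilities are combined; this example assumes they are summed, since a product would collapse to zero as soon as one unknown trigram appears. The toy models contain hypothetical probabilities rather than values trained on real corpora, and all names are illustrative.

```python
# Same noise characters as used for training.
NOISE = set('!"()[]{}:;?,.&£$%*0123456789-`+')


def extract_trigrams(text):
    """Preprocess like the training data and return the valid trigrams."""
    kept = "".join(ch for ch in text if ch not in NOISE)
    s = "_".join(kept.split())
    return [s[i:i + 3] for i in range(len(s) - 2) if s[i + 1] != "_"]


def score(text, model):
    """Sum the model probabilities; unknown trigrams contribute zero."""
    return sum(model.get(t, 0.0) for t in extract_trigrams(text))


def detect(text, models):
    """Return (best language, per-language scores)."""
    scores = {lang: score(text, model) for lang, model in models.items()}
    return max(scores, key=scores.get), scores


# Toy models with hypothetical probabilities, for illustration only.
MODELS = {
    "english": {"the": 0.5, "he_": 0.25, "_th": 0.25},
    "german": {"der": 0.4, "er_": 0.3, "_de": 0.3},
}
```

With these models, `detect("the the", MODELS)` favours English and `detect("der der", MODELS)` favours German. When no trigram is known to any model, all scores are zero and the first language in the dictionary is returned, so a real system would want an explicit "unknown" outcome.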

Language Guessers (1)

SWESUM
- Author(s): Hercules Dalianis, Martin Hassel, KTH, Euroling AB
- Purpose: Supported languages: Swedish, Spanish, German, French, English.
- Access: Free at http://www.euroling.se/produkter/swesum.html

LANGSUITE
- Author(s): PetaMem
- Purpose: Supported languages: Unicode, Spanish, Polish, Italian, Hungarian, German, French, English, Dutch, Danish, Czech.
- Access: Not free. More information at http://www.petamem.com/

TED DUNNING'S LANGUAGE IDENTIFIER
- Author(s): Ted Dunning
- Access: Free at ftp://crl.nmsu.edu/pub/misc/lingdet_suite.tar.gz

TEXTCAT
- Author(s): Gertjan van Noord
- Purpose: TextCat is an implementation of the N-Gram-Based Text Categorization algorithm; at the moment, the system knows about 69 natural languages.
- Access: Free at http://grid.let.rug.nl/~vannoord/TextCat/

Language Guessers (2)

XEROX LANGUAGE IDENTIFIER
- Author(s): Xerox Research Centre Europe
- Purpose: Supported languages: Albanian, Arabic, Basque, Breton, Bulgarian, Catalan, Chinese, Croatian, Czech, Danish, Dutch, English, Esperanto, Estonian, Finnish, French, Georgian, German, Greek, Hebrew, Hungarian, Icelandic, Indonesian, Irish, Italian, Japanese, Korean, Latin, Latvian, Lithuanian, Malay, Maltese, Norwegian, Polish, Portuguese, Romanian, Russian, Slovakian, Slovenian, Spanish, Swahili, Swedish, Thai, Turkish, Ukrainian, Vietnamese, Welsh.
- Access: Not free. More information at http://www.xrce.xerox.com/competencies/contentanalysis/tools/guesser-ISO-8859-1.en.html

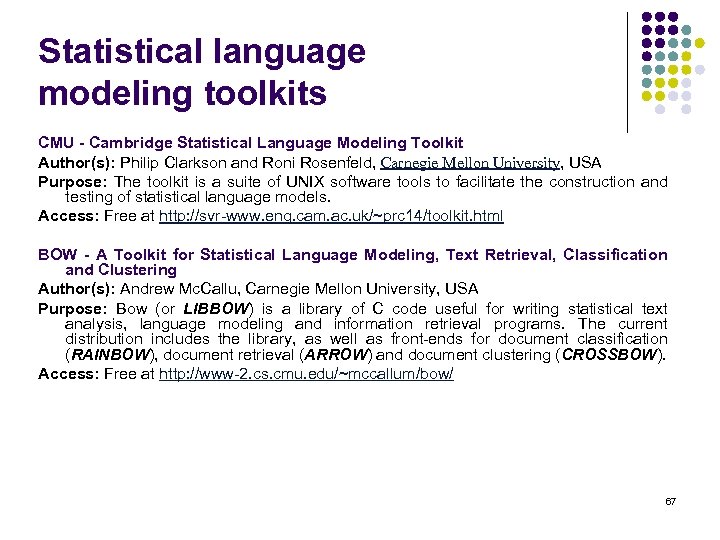

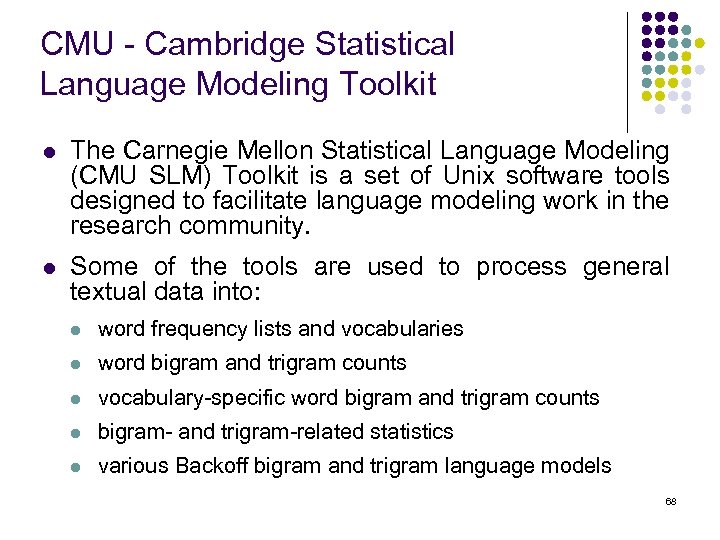

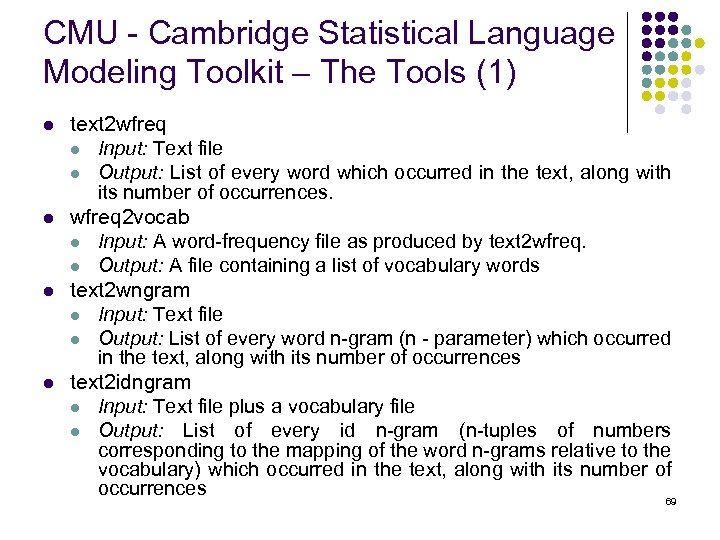

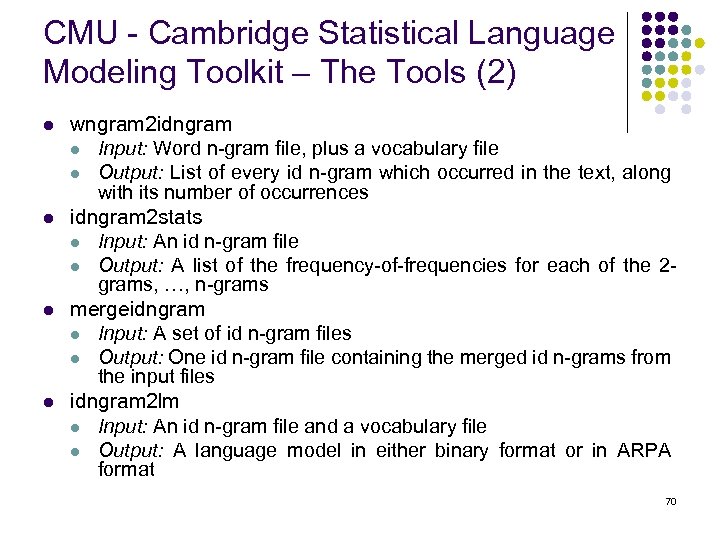

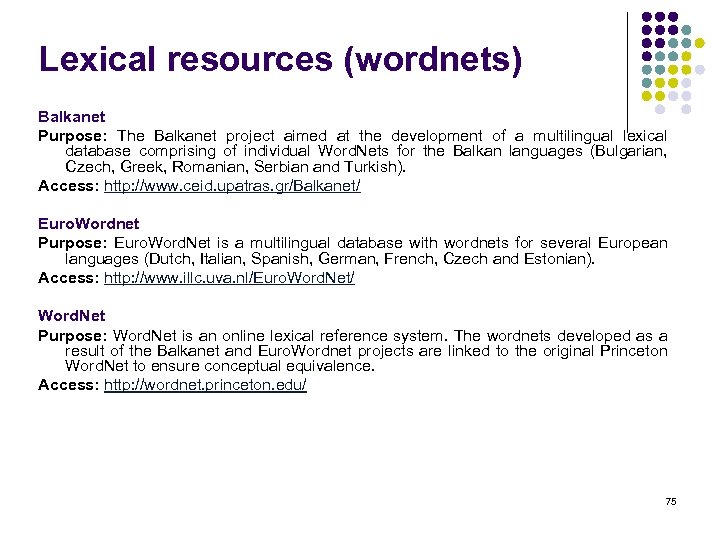
