
- Number of slides: 9

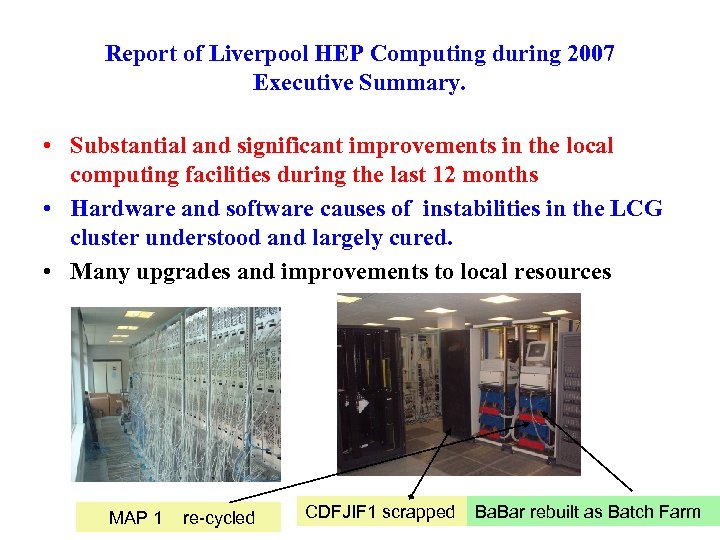

Report of Liverpool HEP Computing during 2007

Executive Summary

- Substantial and significant improvements in the local computing facilities during the last 12 months.
- Hardware and software causes of instabilities in the LCG cluster understood and largely cured.
- Many upgrades and improvements to local resources:
  - MAP1 recycled
  - CDFJIF1 scrapped
  - BaBar rebuilt as Batch Farm


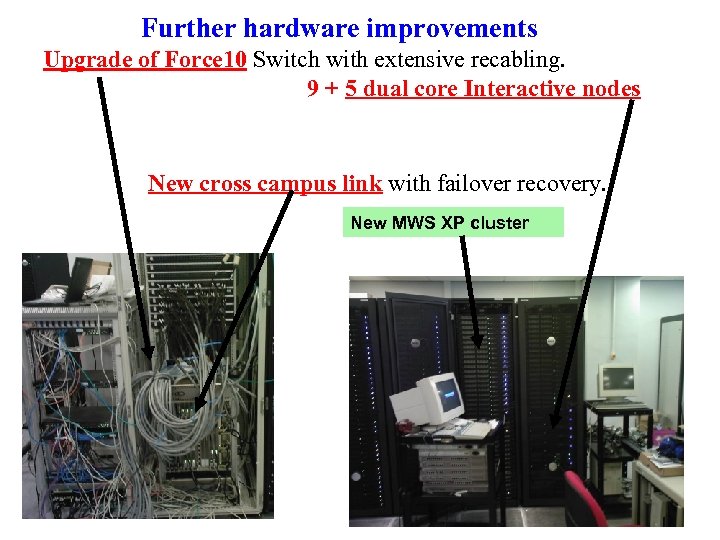

Further hardware improvements

- Upgrade of Force10 switch with extensive recabling.
- 9 + 5 dual-core interactive nodes.
- New cross-campus link with failover recovery.
- New MWS XP cluster.


Further hardware improvements (continued)

- New servers set up: T2K-FE, Cockcroft-FE, ATLAS-FE…
- New HEPSTORE RAID 6.
- New secure gateway machine allows SSH access from anywhere.
- New HEPWALL node to protect the cluster and balance network load.
- VO10 replaced with SuperVO10 authentication server.
- Hundreds of machines repaired, refurbished or upgraded.
- Extensive re-cabling of racks in the cluster room (ongoing).
- All non-HEP servers moved and isolated in the old CDFJIF1 rack.
- 3 racks of DELL nodes taken back from AiMes and installed.
- Switches replaced in some water-cooled racks (horrible job).
- New network and computers in former OL library.
- 10 TB RAID 6 added to LCG cluster.
- Complete upgrade of all office desktops finished.
- Major repairs to both chiller units on roof of OL.


In the pipeline, going as fast as possible

- Upgrades to the HEP and MON nodes to improve reliability and speed for e-mail services etc. (big job, many services to be tested).
- "Puppet" system for managing all the software installations on all machines in the cluster room.
- Complete rebuild of LCG cluster: replace SE and CE nodes, add UPS to GRID servers, reconfigure d-Cache, upgrade to SL4 and (hopefully) replace NFS with AFS.
- Link most MAP2 nodes into the LCG cluster with job queues for different tasks, e.g. GRID computing, batch farm analysis, local MC production, MPI facilities (use multiple nodes as one computer). Every node has to earn its keep.


Further software upgrades

- Complete database for all hardware.
- New hardware monitoring system for all machines.
- SL4 rolled out on some interactive nodes.
- Automated daily backup of all critical system and user files.


Problems still outstanding

- Monitoring of water-cooled racks, to spot cooling failure and make a clean shutdown of the cluster, is still needed. Shut these down this Xmas (hopefully for the last time).
- Install more air-con units: current ones are running at full capacity, so ageing rapidly. B&E responsibility.
- Higher-speed external network connection: currently limited to 1 GB.
- Network within the OL is in need of updating.
- Clean room legacy computers need attention.
- Current sys admins' office arrangements are inadequate.
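The rack monitoring asked for above could take many forms; the following is only a minimal sketch of the decision step, not the system the group planned. The rack names, the 40 °C limit and the three-reading rule are all hypothetical values chosen for illustration, and reading the sensors or actually shutting nodes down is left out.

```python
from typing import Dict, List

# Hypothetical threshold: the slides give no temperature limits.
SHUTDOWN_LIMIT_C = 40.0


def racks_to_shut_down(
    readings: Dict[str, List[float]],
    limit_c: float = SHUTDOWN_LIMIT_C,
    consecutive: int = 3,
) -> List[str]:
    """Return the racks whose last `consecutive` readings all exceed `limit_c`.

    Requiring several consecutive hot readings avoids shutting a rack
    down because of a single spurious sensor value.
    """
    hot = []
    for rack, temps in readings.items():
        recent = temps[-consecutive:]
        if len(recent) == consecutive and all(t > limit_c for t in recent):
            hot.append(rack)
    return sorted(hot)


# Illustrative readings in degrees Celsius, oldest first (made-up data).
example_readings = {
    "rack-A": [35.0, 41.0, 42.0, 43.0],  # last three all above the limit
    "rack-B": [41.0, 39.0, 42.0, 43.0],  # one recent reading below the limit
    "rack-C": [45.0],                    # too few readings to decide
}
flagged = racks_to_shut_down(example_readings)
print(flagged)
```

A real deployment would feed this from the rack sensors and hand the flagged racks to whatever performs the clean shutdown.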


GRIDPP3 Hardware upgrade and NW GRID Cluster

- Disappointing outcome for the next round of allocations to add to our GRID hardware: whole of NorthGRID unhappy with the result. Currently we will get ~£67K over 2 years; we were hoping for ~£200K as in the original (2006) plans. Making a further bid for a share of an extra ~£100K, but the outcome is not clear. We will be lucky to get another £10K.
- New NW GRID cluster (~ MAP2) to be situated in CSD. But the £270K grant comes through Physics, and we plan to closely couple the two clusters. New cluster is very suitable for MPI jobs. Contract says cluster must be installed by end Jan 2008.


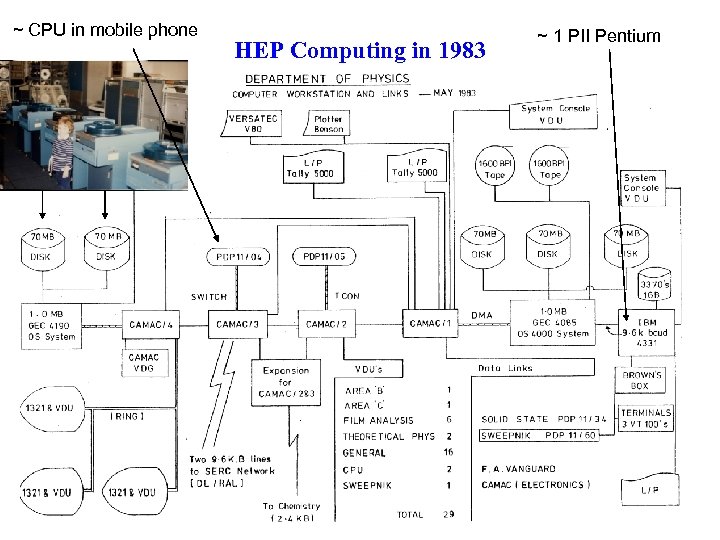

HEP Computing in 1983

- ~ CPU in mobile phone
- ~ 1 PII Pentium


A lot of computing changes in 25 years

- Local computing resources have increased by ~ 7 orders of magnitude in 25 years (2^25 ≈ 3 × 10^7)!
- However, the number of sys admins is ~ the same as in 1983.
- So there is a clear need to continue to invest RG money to:
  - (a) Build in redundancy, UPS and backup in the critical systems;
  - (b) Have automatic failover recovery where possible;
  - (c) Have extensive monitoring for early warning of failures;
  - (d) Use RAID 6 (hardware or software);
  - (e) Ensure specialist computers for clean rooms etc. are future-proofed at the purchase stage, and have spare motherboards.
- Fix your laptops to your desk with a security cable and back up the disk! Password-protect them.
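The "7 orders of magnitude" figure can be checked with a few lines of arithmetic. This sketch assumes the parenthetical on the slide means 2 to the power 25, i.e. one doubling of resources per year for 25 years; the one-year doubling period is an assumption read off that exponent, not something the slide states.

```python
import math


def growth_factor(years: float, doubling_period_years: float = 1.0) -> float:
    """Growth factor after `years` if capacity doubles every `doubling_period_years`."""
    return 2.0 ** (years / doubling_period_years)


# Assumption: one doubling per year over the 25 years from 1983.
factor = growth_factor(25)
orders_of_magnitude = math.log10(factor)

print(f"growth factor: {factor:.3g}")
print(f"orders of magnitude: {orders_of_magnitude:.2f}")
```

Under that assumption the factor is about 3.4 × 10^7, which is a little over 7 orders of magnitude and so is consistent with the claim on the slide.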
