786a453b7721c79042ce38cc9e7b13ac.ppt

- Number of slides: 22

Remote-Rendering related research at Hasselt University (B)

dr. Peter Quax

Expertise Center for Digital Media, Multimedia and Communication Technology group

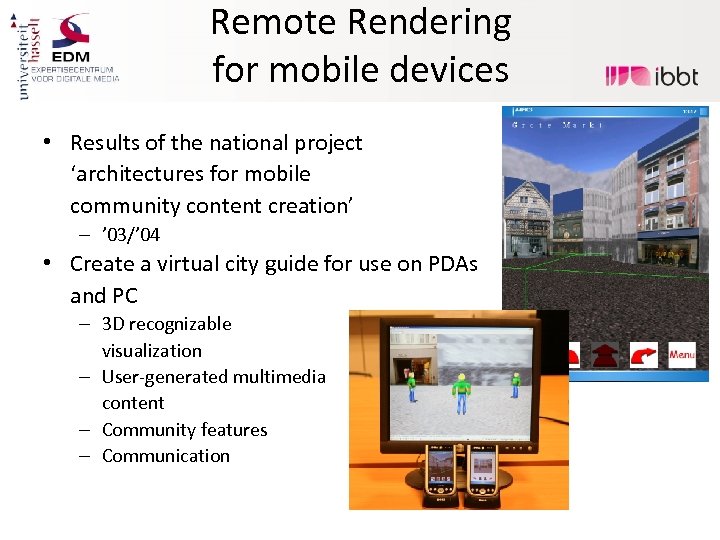

Remote Rendering for mobile devices

- Results of the national project ‘architectures for mobile community content creation’
  - ’03/’04
- Create a virtual city guide for use on PDAs and PCs
  - Recognizable 3D visualization
  - User-generated multimedia content
  - Community features
  - Communication

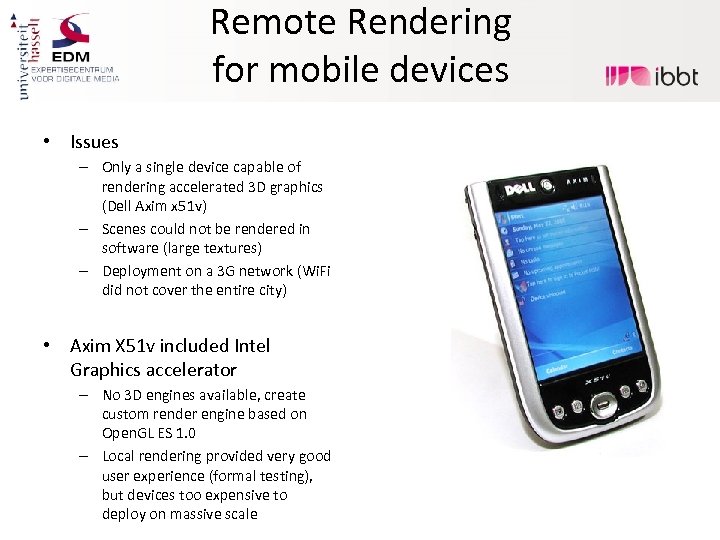

Remote Rendering for mobile devices

- Issues
  - Only a single device was capable of rendering accelerated 3D graphics (Dell Axim X51v)
  - Scenes could not be rendered in software (large textures)
  - Deployment on a 3G network (Wi-Fi did not cover the entire city)
- The Axim X51v included an Intel graphics accelerator
  - No 3D engines were available, so a custom render engine was created based on OpenGL ES 1.0
  - Local rendering provided a very good user experience (formal testing), but the devices were too expensive to deploy on a massive scale

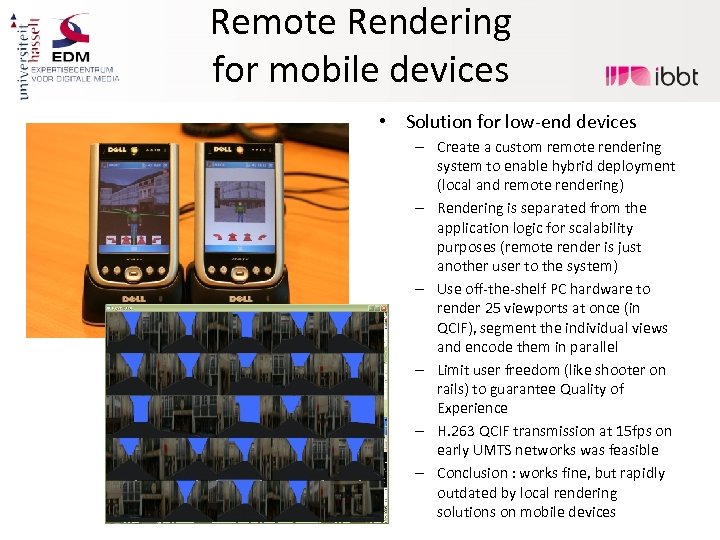

Remote Rendering for mobile devices

- Solution for low-end devices
  - Create a custom remote rendering system to enable hybrid deployment (local and remote rendering)
  - Rendering is separated from the application logic for scalability purposes (the remote renderer is just another user of the system)
  - Use off-the-shelf PC hardware to render 25 viewports at once (in QCIF), segment the individual views and encode them in parallel
  - Limit user freedom (like a shooter on rails) to guarantee Quality of Experience
  - H.263 QCIF transmission at 15 fps on early UMTS networks was feasible
  - Conclusion: works fine, but rapidly outdated by local rendering solutions on mobile devices
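The "render 25 viewports at once, then segment the individual views" step can be illustrated with a small sketch. It assumes the 25 QCIF views (176 x 144 pixels each) are laid out as a 5 x 5 grid in one large frame; the grid layout and the names `Tile`, `tile_for_client` and `segment_frame` are hypothetical and not taken from the slides.

```python
from dataclasses import dataclass

QCIF_WIDTH, QCIF_HEIGHT = 176, 144  # standard QCIF resolution
GRID_COLS, GRID_ROWS = 5, 5         # assumption: 25 viewports laid out as a 5x5 grid


@dataclass(frozen=True)
class Tile:
    """Rectangle of one client's view inside the shared frame (hypothetical type)."""
    client_index: int
    x: int
    y: int
    width: int
    height: int


def tile_for_client(client_index: int) -> Tile:
    """Return the rectangle that holds the view of the given client."""
    if not 0 <= client_index < GRID_COLS * GRID_ROWS:
        raise ValueError("client_index must be in the range 0..24")
    row, col = divmod(client_index, GRID_COLS)
    return Tile(client_index, col * QCIF_WIDTH, row * QCIF_HEIGHT,
                QCIF_WIDTH, QCIF_HEIGHT)


def segment_frame(frame, tile: Tile):
    """Crop one client's view out of a frame stored as a list of pixel rows."""
    rows = frame[tile.y:tile.y + tile.height]
    return [row[tile.x:tile.x + tile.width] for row in rows]


# The shared frame would be 880 x 720 pixels under this layout.
FRAME_WIDTH = GRID_COLS * QCIF_WIDTH
FRAME_HEIGHT = GRID_ROWS * QCIF_HEIGHT
```

Each cropped view could then be handed to its own encoder instance, which is what makes the parallel encoding mentioned on the slide possible.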

Remote Rendering and Interactivity Requirements

- One of the most challenging contexts for interactive applications: multiplayer games
- A number of available studies (see the NetGames series of workshops) show that the most demanding genre is the First Person Shooter
  - Most popular genre among hard-core gamers
  - High number of interactions per time frame
- Experiment objective: determine whether there is an indication of a boundary above which players become aware of network delay
  - Objective (in terms of score)
  - Subjective (in terms of experience)
- Experiment in the context of access network delay, but the results also apply to remote rendering of these games

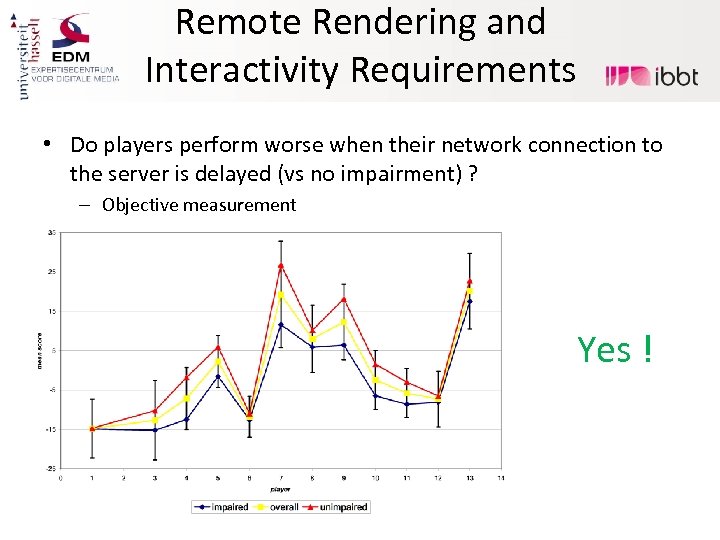

Remote Rendering and Interactivity Requirements

- Do players perform worse when their network connection to the server is delayed (vs no impairment)?
  - Objective measurement: yes!

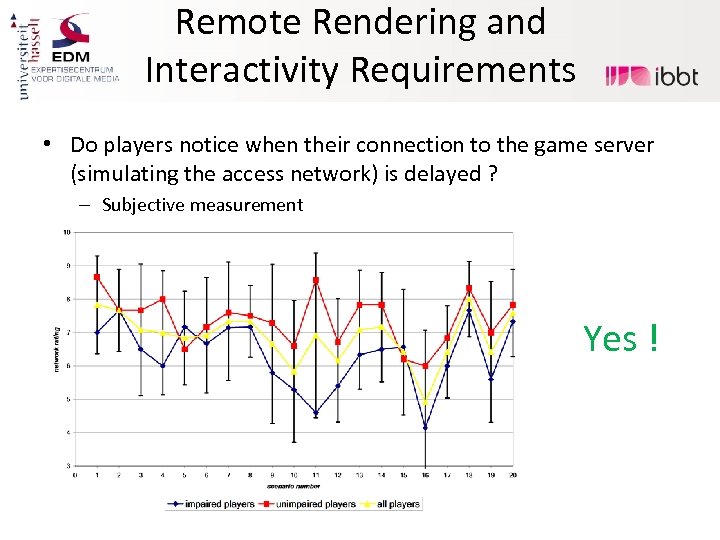

Remote Rendering and Interactivity Requirements

- Do players notice when their connection to the game server (simulating the access network) is delayed?
  - Subjective measurement: yes!

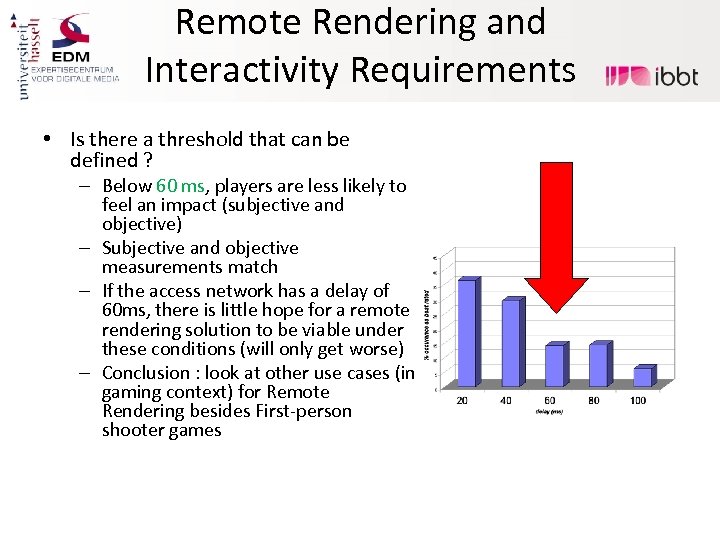

Remote Rendering and Interactivity Requirements

- Is there a threshold that can be defined?
  - Below 60 ms, players are less likely to feel an impact (subjective and objective)
  - Subjective and objective measurements match
  - If the access network already has a delay of 60 ms, there is little hope for a remote rendering solution to be viable under these conditions (it will only get worse)
  - Conclusion: look at other use cases (in a gaming context) for Remote Rendering besides first-person shooter games
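The reasoning behind "it will only get worse" can be made concrete with a simple delay budget. The sketch below treats the 60 ms figure from the slide as a budget and subtracts the access network delay plus the extra stages a remote rendering pipeline adds. The stage names and their values are hypothetical placeholders, not measurements from the study, and all figures are assumed to be measured in the same way as the threshold.

```python
FPS_DELAY_THRESHOLD_MS = 60.0  # threshold reported on the slide


def remaining_budget_ms(access_network_delay_ms: float,
                        extra_stage_delays_ms: dict) -> float:
    """Return how much delay is left before the threshold is exceeded.

    A negative result means the threshold is already exceeded.
    """
    total = access_network_delay_ms + sum(extra_stage_delays_ms.values())
    return FPS_DELAY_THRESHOLD_MS - total


# Hypothetical per-stage delays that remote rendering adds on top of the network.
remote_rendering_stages = {"render": 10.0, "encode": 15.0, "decode": 10.0}

budget_fast_network = remaining_budget_ms(20.0, remote_rendering_stages)
budget_slow_network = remaining_budget_ms(60.0, remote_rendering_stages)
```

With these placeholder values a 20 ms access network leaves a small positive budget, while a 60 ms access network is over budget before any rendering stage is counted.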

Remote Rendering for Massive Multiplayer Games

- Architecture for Large-scale Virtual Interactive Communities (ALVIC)
  - Design a scalable client/server based solution for MMOGs (main focus on the MMORPG genre)
  - Emphasis on practical issues
    - Deployment (firewall issues, delay experience)
    - Economic considerations (cost of deployment and maintenance)
    - Manageability (moderation, security, cheating)
  - Suitable for deployment on cloud platforms
  - As generically applicable as possible

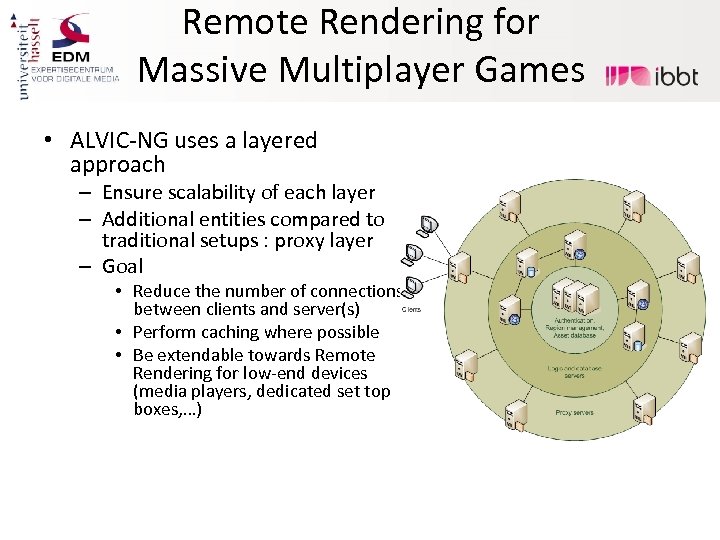

Remote Rendering for Massive Multiplayer Games

- ALVIC-NG uses a layered approach
  - Ensure scalability of each layer
  - Additional entities compared to traditional setups: proxy layer
  - Goal
    - Reduce the number of connections between clients and server(s)
    - Perform caching where possible
    - Be extendable towards Remote Rendering for low-end devices (media players, dedicated set top boxes, …)

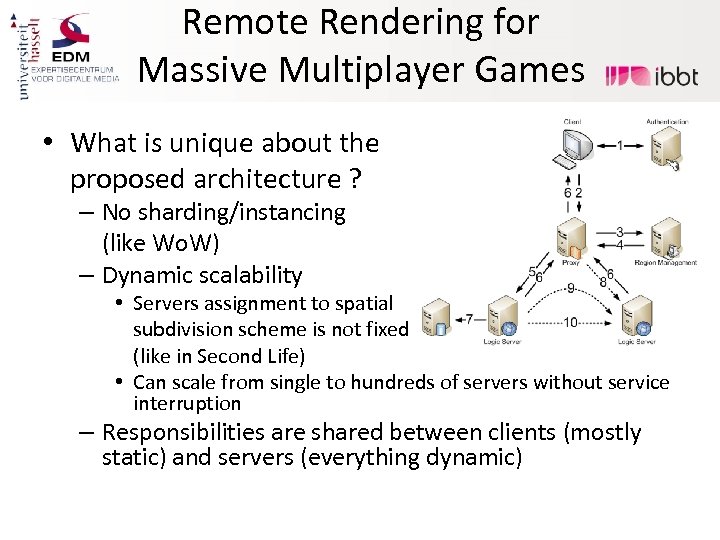

Remote Rendering for Massive Multiplayer Games

- What is unique about the proposed architecture?
  - No sharding/instancing (like WoW)
  - Dynamic scalability
    - Server assignment to the spatial subdivision scheme is not fixed (like in Second Life)
    - Can scale from a single server to hundreds of servers without service interruption
  - Responsibilities are shared between clients (mostly static) and servers (everything dynamic)
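The idea of a non-fixed assignment of servers to a spatial subdivision can be sketched as a lookup table that maps grid cells to servers and can be changed while the system is running. This is only an illustration under assumptions: the slides do not describe how ALVIC-NG implements this, and the `RegionDirectory` class, the square grid and the least-loaded default policy are all hypothetical.

```python
class RegionDirectory:
    """Hypothetical table mapping square world cells to the server in charge."""

    def __init__(self, cell_size: float, servers: list):
        if not servers:
            raise ValueError("at least one server is required")
        self._cell_size = cell_size
        self._servers = list(servers)
        self._assignment = {}  # (cell_x, cell_y) -> server name

    def cell_of(self, x: float, y: float) -> tuple:
        """Map a world position to the grid cell that contains it."""
        return (int(x // self._cell_size), int(y // self._cell_size))

    def _load(self, server: str) -> int:
        """Number of cells currently assigned to a server."""
        return sum(1 for s in self._assignment.values() if s == server)

    def server_for(self, x: float, y: float) -> str:
        """Return the server for a position, assigning unseen cells lazily."""
        cell = self.cell_of(x, y)
        if cell not in self._assignment:
            # Assumed policy: give new cells to the server with the fewest cells.
            self._assignment[cell] = min(
                self._servers, key=lambda s: (self._load(s), s))
        return self._assignment[cell]

    def add_server(self, server: str) -> None:
        """Make a new server available without touching existing assignments."""
        self._servers.append(server)

    def reassign(self, cell: tuple, server: str) -> None:
        """Move responsibility for one cell to another server at run time."""
        if server not in self._servers:
            raise ValueError("unknown server")
        self._assignment[cell] = server
```

In a layered design like the one on the slides, a table of this kind would sit behind the proxies, so clients never need to know which server currently handles their region.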

Remote Rendering for Massive Multiplayer Games

- Applicability of Remote Rendering
  - Client is relatively ‘dumb’, mainly concerned with visualization and local interaction handling
  - Proxies perform boundary testing and act as message gateways
    - Easy to add another shell of remote rendering servers to the architectural design
  - Transparent transition between local and remote rendering cases (e.g. from PC to mobile device or low-end console)
  - Decoupling of game logic and remote rendering infrastructure
  - Conclusion: more generic solution for an entire class of games

In-network adaptation of Remote Rendering streams

- Growing interest in so-called Spectator Mode
  - OnLive, Xbox Live, ...
- Generate the stream once, serve a large number of viewers
- What about heterogeneous contexts?
  - Multitude of devices (high-end PCs, thin client consoles, tablets, smartphones, …)
  - Multitude of access networks (100 Mbit cable, 3G HSDPA, EDGE)
- Create a reusable infrastructure for multiple purposes
  - Not limited to Remote Rendering (generic video streaming-compliant)
- No adaptations needed to the remote rendering system
  - Can be applied to off-the-shelf remote rendering solutions or video services

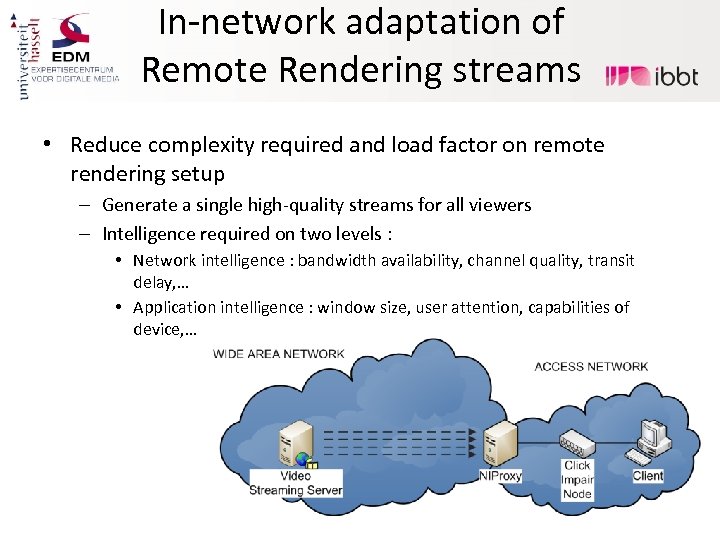

In-network adaptation of Remote Rendering streams

- Reduce the required complexity and the load factor on the remote rendering setup
  - Generate a single high-quality stream for all viewers
  - Intelligence required on two levels:
    - Network intelligence: bandwidth availability, channel quality, transit delay, …
    - Application intelligence: window size, user attention, capabilities of device, …

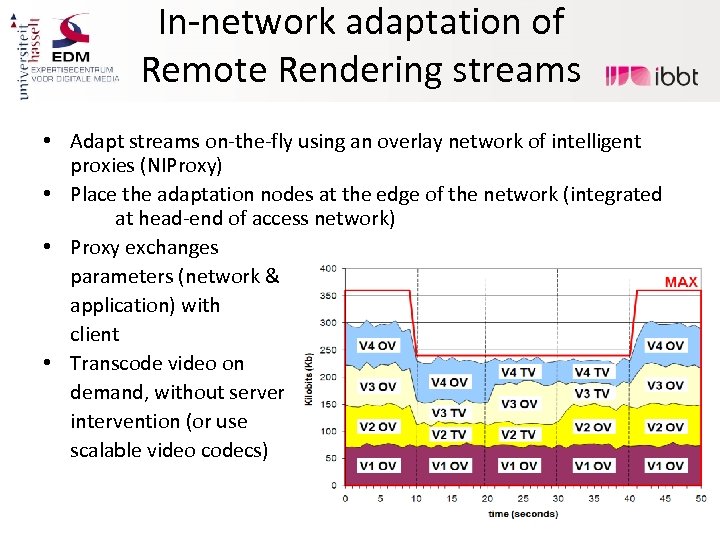

In-network adaptation of Remote Rendering streams

- Adapt streams on-the-fly using an overlay network of intelligent proxies (NIProxy)
- Place the adaptation nodes at the edge of the network (integrated at the head-end of the access network)
- Proxy exchanges parameters (network & application) with the client
- Transcode video on demand, without server intervention (or use scalable video codecs)
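A proxy that combines network and application parameters could make its adaptation decision roughly as sketched below. This is not the NIProxy algorithm: the variant ladder, the `ClientReport` fields and the 80% headroom factor are hypothetical, chosen only to show how one network parameter (available bandwidth) and one application parameter (window size) might be combined.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One output the proxy could transcode to (hypothetical ladder entry)."""
    name: str
    width: int
    height: int
    bitrate_kbps: int


@dataclass(frozen=True)
class ClientReport:
    """Parameters a client might report to the proxy (hypothetical fields)."""
    available_kbps: int  # network parameter
    window_width: int    # application parameter
    window_height: int   # application parameter


VARIANTS = [
    Variant("high", 1280, 720, 4000),
    Variant("medium", 640, 360, 1200),
    Variant("low", 320, 180, 300),
]


def choose_variant(report: ClientReport, variants=VARIANTS,
                   headroom: float = 0.8) -> Variant:
    """Pick the cheapest affordable variant that still fills the client window."""
    budget = report.available_kbps * headroom
    affordable = [v for v in variants if v.bitrate_kbps <= budget]
    if not affordable:
        # Nothing fits the link: fall back to the lowest bitrate.
        return min(variants, key=lambda v: v.bitrate_kbps)
    covering = [v for v in affordable
                if v.width >= report.window_width
                and v.height >= report.window_height]
    if covering:
        # Do not spend bandwidth on pixels the window cannot show.
        return min(covering, key=lambda v: v.bitrate_kbps)
    # No affordable variant fills the window: send the best affordable one.
    return max(affordable, key=lambda v: v.bitrate_kbps)
```

For example, a client with plenty of bandwidth but a small 480 x 270 window would receive the "medium" variant rather than the full-quality stream.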

Efficient delivery of omni-directional video

- Omni-directional or panoramic video allows the user to explore recorded video sequences
  - Move the orientation of the camera after capturing
  - Zoom in/out and maintain the quality level
  - Like Street View, but video-based
- Distribution of these sequences is subject to optimization
  - Not really required for low quality sequences (e.g. 720p or less)
  - Sequences captured by our hardware are (typically) above 8000 x 1900 pixels
    - Cannot be streamed at once to a client (Set Top Box or tablet device)

Efficient delivery of omni-directional video

- Borrow ideas from remote rendering to optimize the flow
  - Perform pre-processing steps on the server to generate compliant streams
  - Do the precise customization for each user on the device (fat client) vs the server (thin client)
  - Retain interactivity (camera movement) as is typical in RR setups
- Recompositing the scene for each client at the server side is not very scalable, so 2 alternatives:
  1. Add meta-data to the stream and customize streams by manipulating the compressed bitstream (using H.264 slices)
  2. Cut the generated stream into small segments that are individually decodable, and have the client request only those required

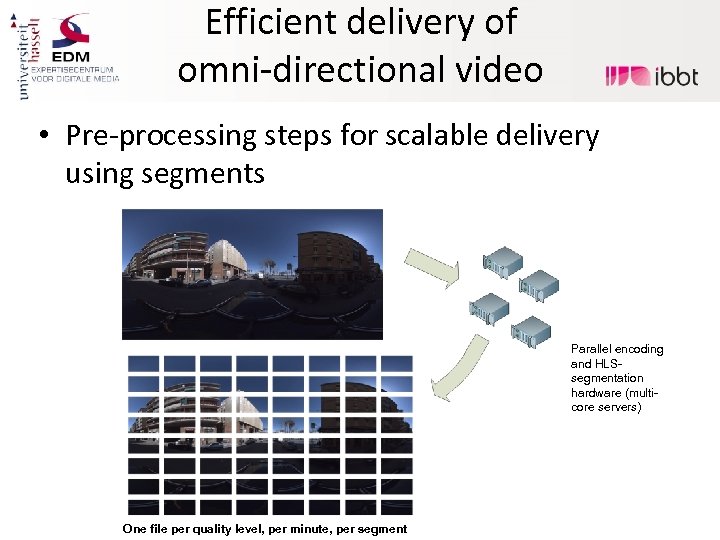
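The second alternative (having the client request only the segments it needs) comes down to working out which spatial segments overlap the current viewport. The sketch below assumes the panorama is cut into a regular grid of equally sized tiles and that it wraps around horizontally as a full 360-degree panorama would. The 8 x 2 grid and the function name are hypothetical; only the 8000 x 1900 size comes from the slides.

```python
def tiles_for_viewport(vx: int, vy: int, vw: int, vh: int,
                       pano_w: int = 8000, pano_h: int = 1900,
                       cols: int = 8, rows: int = 2) -> list:
    """Return (row, col) indices of the tiles overlapped by a viewport.

    (vx, vy) is the top-left corner of the viewport in panorama pixels and
    (vw, vh) its size. Columns wrap around horizontally; rows are clamped.
    """
    tile_w = pano_w // cols
    tile_h = pano_h // rows

    # Clamp the viewport vertically to the panorama.
    top = max(0, vy)
    bottom = min(pano_h, vy + vh)
    if bottom <= top or vw <= 0:
        return []

    first_row = top // tile_h
    last_row = (bottom - 1) // tile_h

    # Floor division keeps this correct for negative or wrapped x positions.
    first_col = vx // tile_w
    last_col = (vx + vw - 1) // tile_w
    col_count = min(cols, last_col - first_col + 1)

    tiles = []
    for row in range(first_row, last_row + 1):
        for i in range(col_count):
            tiles.append((row, (first_col + i) % cols))
    return tiles
```

A 1280 x 720 viewport that starts at x = 7500 crosses the seam of the panorama, so under these assumptions it needs the last and the first tile of the top row instead of all 16 tiles.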

Efficient delivery of omni-directional video

- Pre-processing steps for scalable delivery using segments

Diagram labels: original sequence; parallel encoding and HLS segmentation hardware (multicore servers); output files (one file per quality level, per minute, per segment)

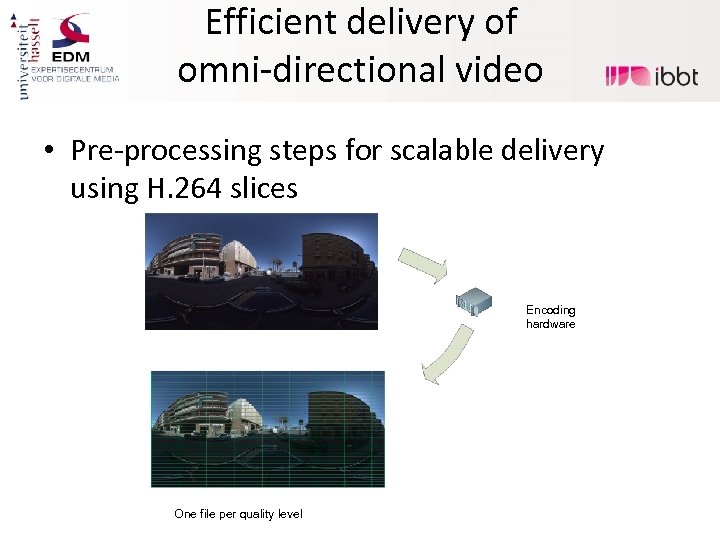
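The "one file per quality level, per minute, per segment" output can be pictured as a simple enumeration. The sketch below only illustrates how quickly the number of files grows; the directory layout, the `.ts` extension and the function name are hypothetical, since the slides do not specify a naming scheme.

```python
from itertools import product


def output_files(qualities, minutes: int, segments: int,
                 pattern: str = "q{quality}/min{minute:03d}/seg{segment:02d}.ts"):
    """List one file name per quality level, per minute, per spatial segment."""
    return [
        pattern.format(quality=quality, minute=minute, segment=segment)
        for quality, minute, segment in product(
            qualities, range(minutes), range(segments))
    ]


# Hypothetical example: 3 quality levels, 2 minutes of video, 16 segments.
files = output_files(["low", "mid", "high"], minutes=2, segments=16)
```

Because every file is independent of the others, the encoding work can be spread over many cores, which matches the parallel encoding hardware named in the diagram.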

Efficient delivery of omni-directional video

- Pre-processing steps for scalable delivery using H.264 slices

Diagram labels: original sequence; encoding hardware; output file (one file per quality level)

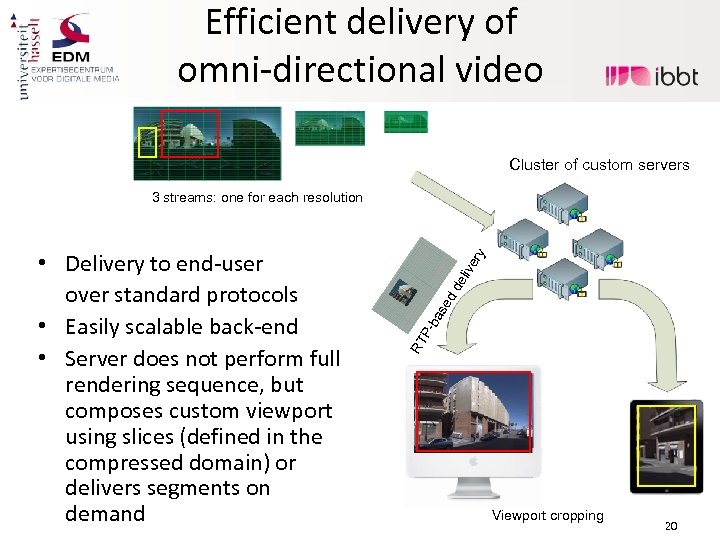

Efficient delivery of omni-directional video

- Delivery to end-user over standard protocols
- Easily scalable back-end
- Server does not perform the full rendering sequence, but composes a custom viewport using slices (defined in the compressed domain) or delivers segments on demand

Diagram labels: cluster of custom servers; RTP-based delivery; 3 streams, one for each resolution; viewport cropping

Efficient delivery of omni-directional video

- End-user application
  - Based on (IP) Set Top Boxes
  - Second Screen applications (besides primary TV broadcast) on tablets (Android/iPad)
  - Web platform (WebGL with HTML5 video features)
- Currently in testing phase, will be rolled out on a testbed in the short term (EOY)

More information?

- "Adapting a Large Scale Networked Virtual Environment for Display on a PDA". Tom Jehaes, Peter Quax, Wim Lamotte. Proc. of ACE 2005, Jun. 2005.
- "On the applicability of Remote Rendering of Networked Virtual Environments on Mobile Devices". Peter Quax, Bjorn Geuns, Tom Jehaes, Gert Vansichem, Wim Lamotte. Proceedings of the International Conference on Systems and Network Communications, IEEE, Oct. 2006.
- "Objective and Subjective Evaluation of the Influence of Small Amounts of Delay and Jitter on a Recent First Person Shooter Game". Peter Quax, Patrick Monsieurs, Wim Lamotte, Danny De Vleeschauwer, Natalie Degrande. Proc. of NETGAMES 2004, ISBN 1-58113-942-X, Aug. 2004.
- "Effective and Resource-Efficient Multimedia Communication Using the NIProxy". Maarten Wijnants and Wim Lamotte. Proceedings of the 8th IEEE International Conference on Networks (ICN 2009).
- "ALVIC-NG: State Management and Immersive Communication for Massively Multiplayer Online Games and Communities". Peter Quax, Bart Cornelissen, Jeroen Dierckx, Gert Vansichem, Wim Lamotte. Journal on Multimedia Tools and Applications, Special Issue on Massively Multiplayer Online Games. Volume 45, Issue 1 (2009), Page 109. Springer.
- http://www.edm.uhasselt.be
