Rapid Prototyping of WWW Niche Search Engines: Document Classification and Information Extraction

Christian W. Omlin
http://www.cs.uwc.ac.za/~comlin
http://www.coe.uwc.ac.za
Telkom/Cisco Center of Excellence for IP and Internet Computing
Department of Computer Science
University of the Western Cape

1. Why Niche Search Engines
2. Technical Challenges and Issues for Niche SEs
3. Deadliner: A Search Engine for Conference CFP
4. What Does Deadliner Do?
5. Deadliner Architecture
6. Input Data Preprocessing
7. Document Classifier
8. Information Extraction: Simple Detectors, Optimal Detector Fusion, ROC curves
9. Neyman-Pearson
10. Presentation and Cataloging
11. Performance
12. Conclusions & Future Work

Why Niche Search Engines (SEs)?

General SE
• General
• Low precision and recall; not highly structured
• High cost: large database, high bandwidth
• Lots to crawl
• More resources, all of which are rarely used

Niche SE (specialized SEs)
• Domain specific
• High precision and recall; structured data
• Lower cost: smaller database, lower bandwidth
• More up to date
• Fewer resources: local, personalized

Technical Challenges & Issues for Niche SEs

• Focused crawling
• Page classification
• Automated data and knowledge extraction and indexing
  - Identification
  - Extraction
  - Summarization
  - Presentation
  - Integration
• Creating (general purpose) tools to help build niche search engines

Niche SE design research and system integration

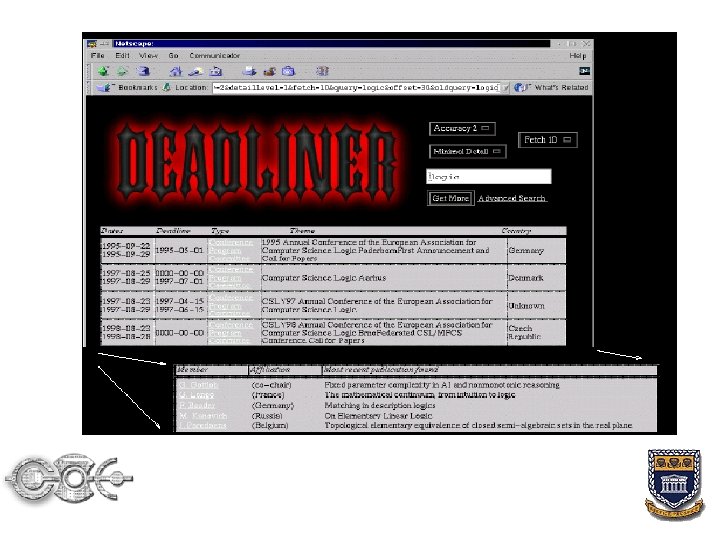

What does DEADLINER do?

• Domain specific document retrieval
• Meta-tool (uses and integrates other existing tools: focused crawler, meta search engine, newsgroups)
• Prescreens gathered text using support vector machines
• Constructs detectors for target information in text (e.g. theme of a conference)
• Bayesian detector fusion
• Presentation and cataloging of extracted information
• Places data into structured database
• Allows complex queries (e.g. search by date)

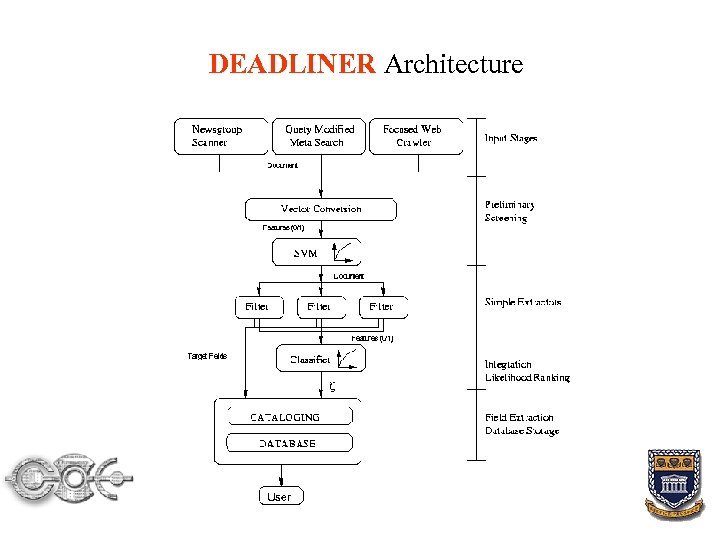

DEADLINER Architecture

Input Stages

Build upon other existing systems:
• Newsgroup scanner
• Query modified meta-search: learned query modifications used with text derived from a database of calls for papers
• Focused WWW crawler
  - learns context of relevant documents
  - context graph models link hierarchies

Support Vector Machines (SVMs)

SVMs screen the harvested documents
• Good text classifier
• Handles high-dimensional input vectors
• Resists overfitting by choosing salient features
• Class vectors are separated by hyperplanes
• Hyperplanes maximize margins between classes, which controls complexity and generalization

SVM Input Data Vectors

Construct input vocabulary
• A feature is a word, bigram or trigram
• Features are chosen relative to a fixed vocabulary
• If a feature occurs on 7.5% of the true or false class documents, then the feature is a candidate for the vocabulary
• Candidates are ranked by the ratio of their frequency on true class documents to their frequency on false class documents
• The N highest ranked candidates form the vocabulary
• Feature vector is constructed from this vocabulary
• Vectors are binary labeled {0, 1}
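
The vocabulary construction above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: "frequency" is taken to mean document frequency, the 7.5% threshold is read as "at least 7.5% of the documents of either class", and add-one smoothing is introduced to avoid dividing by zero. The function names (`ngrams`, `build_vocabulary`, `to_vector`) are hypothetical and do not come from DEADLINER.

```python
from collections import Counter


def ngrams(tokens, max_n=3):
    """Return the set of word uni-, bi- and trigrams in a token list."""
    feats = set()
    for n in range(1, max_n + 1):
        for i in range(len(tokens) - n + 1):
            feats.add(" ".join(tokens[i:i + n]))
    return feats


def build_vocabulary(pos_docs, neg_docs, size, min_frac=0.075):
    """Pick the `size` candidates with the highest true/false frequency ratio."""
    pos_df = Counter(f for d in pos_docs for f in ngrams(d.lower().split()))
    neg_df = Counter(f for d in neg_docs for f in ngrams(d.lower().split()))
    scored = []
    for feat in set(pos_df) | set(neg_df):
        p = pos_df[feat] / len(pos_docs)
        q = neg_df[feat] / len(neg_docs)
        if p >= min_frac or q >= min_frac:  # candidate test
            # Add-one smoothing is an assumption of this sketch.
            ratio = ((pos_df[feat] + 1) / len(pos_docs)) / ((neg_df[feat] + 1) / len(neg_docs))
            scored.append((ratio, feat))
    # Highest ratio first; ties broken alphabetically for determinism.
    scored.sort(key=lambda t: (-t[0], t[1]))
    return [feat for _, feat in scored[:size]]


def to_vector(doc, vocabulary):
    """Binary feature vector: 1 if the vocabulary feature occurs in the document."""
    feats = ngrams(doc.lower().split())
    return [1 if f in feats else 0 for f in vocabulary]


pos = ["call for papers deadline", "submission deadline extended"]
neg = ["buy cheap tickets", "cheap hotel deals"]
vocab = build_vocabulary(pos, neg, size=1)
```

With these toy documents, "deadline" is the only feature that occurs in every positive document and in no negative one, so it ranks first.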

Heuristics to Combine Text Fields in Filters

• Simplest filters perform keyword matching based on built vocabulary using regular expressions
• Program committees: Text matched against a dictionary of known author names & affiliations obtained from ResearchIndex (~90K)
• Deadlines: Standard date formats from sentences with surrounding or immediately preceding text
• Titles: Contains: city/state, date of meeting, deadline, list of sponsors, name, acronym for conference, theme/summary of conference. Must contain at least 2 to match from the database
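
As a rough illustration of the deadline heuristic, the sketch below looks for standard date formats in a sentence that mentions "deadline", or in the sentence immediately after one. The regular expressions and the function name `deadline_candidates` are hypothetical; the actual DEADLINER expressions are not given in the slides.

```python
import re

# Hypothetical patterns, written only for this example.
DEADLINE_WORD = re.compile(r"deadline", re.IGNORECASE)
MONTHS = r"(?:Jan|Feb|Mar|Apr|May|Jun|Jul|Aug|Sep|Oct|Nov|Dec)[a-z]*"
DATE = re.compile(
    rf"\b(?:{MONTHS}\s+\d{{1,2}},?\s+\d{{4}}|\d{{1,2}}\s+{MONTHS}\s+\d{{4}})\b"
)


def deadline_candidates(text):
    """Return dates whose own or immediately preceding sentence mentions a deadline."""
    sentences = re.split(r"(?<=[.!?])\s+", text)
    found = []
    for i, sentence in enumerate(sentences):
        previous = sentences[i - 1] if i > 0 else ""
        if DEADLINE_WORD.search(sentence) or DEADLINE_WORD.search(previous):
            found.extend(m.group(0) for m in DATE.finditer(sentence))
    return found


text = (
    "Submission deadline: March 15, 2001. "
    "Notification will follow. "
    "The meeting takes place on 4 June 2001."
)
dates = deadline_candidates(text)
```

The meeting date in the last sentence is ignored because neither that sentence nor the one before it mentions a deadline.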

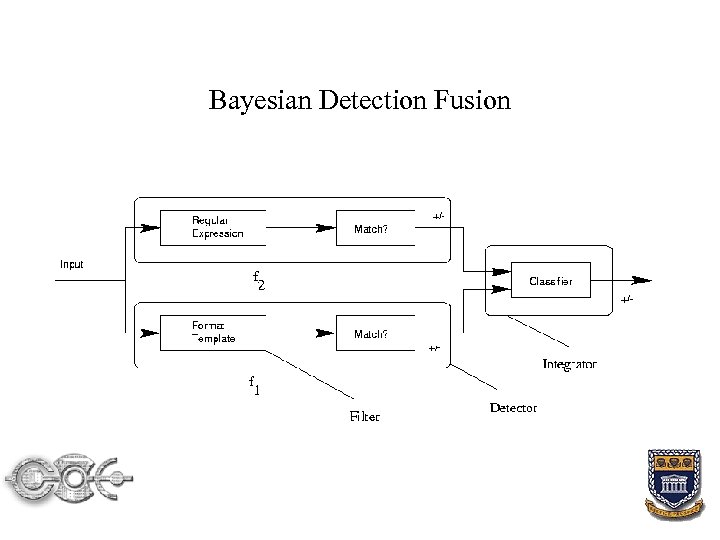

Bayesian Detector Fusion

• How do we find suitable pieces of the document to apply extraction rules?
• Optimally integrate simpler detectors for every target field
• A regular expression or formatting rule is called a filter
• Filter does/doesn’t match; combination of filter and match is called a detector
• These partial detectors are combined and yield a new detector
• The new detector’s precision/recall setting can be changed via a single parameter

Bayesian Detector Fusion II

• Every detector function is a binary classifier
• We find the classifier with the highest probability of detection Pd for a given rate of false alarm Pf
• Combined output of N detectors denoted as a bit string
• Assume two hypotheses, either relevant or irrelevant
• Therefore $2^N$ possible bit strings, and $2^{2^N}$ distinct possible classification rules
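
The counting argument can be checked directly: N binary detectors emit one of 2^N bit strings, and a classification rule assigns "relevant" or "irrelevant" to each string, giving 2^(2^N) rules. The helper names below are hypothetical and serve only this illustration.

```python
from itertools import product


def detector_outputs(n):
    """All 2**n bit strings that n binary detectors can emit."""
    return ["".join(bits) for bits in product("01", repeat=n)]


def count_rules(n):
    """Each rule labels every bit string relevant or irrelevant: 2**(2**n) rules."""
    return 2 ** (2 ** n)


outputs = detector_outputs(2)
rules = count_rules(2)
```

For two detectors there are four bit strings and sixteen rules; for three detectors there are already 256 rules, which is why exhaustive search over rules does not scale.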

Bayesian Detection Fusion

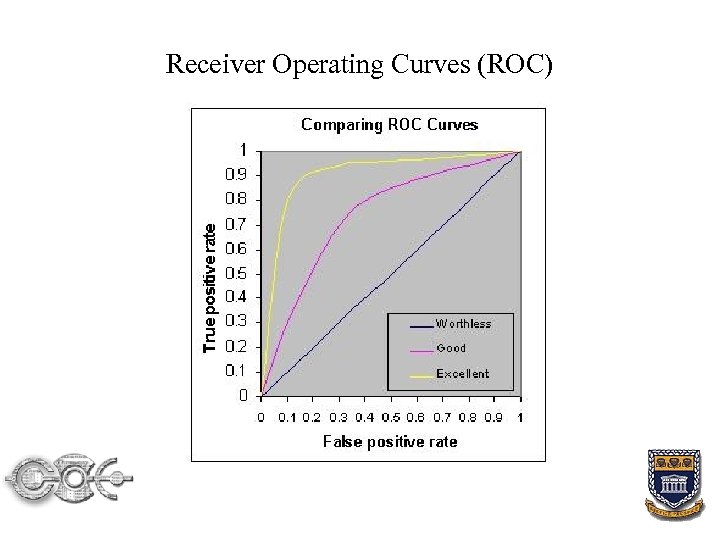

Receiver Operating Characteristic (ROC) Curves

• Graph of true positive rate vs. false positive rate
• Area under the curve represents the probability of correctly distinguishing a randomly chosen (normal, abnormal) pair
• The set of operating points defined by the $2^{2^N}$ binary mappings is called the Achievable Operating Set
• The ROC is the set of operating points yielding the maximal detection rate for a given false alarm rate
• The Neyman-Pearson (NP) procedure can be used to construct an optimal hypothesis test for a distribution parameter
• NP ranks the $2^N$ possible strings according to the likelihood ratio function
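
The NP ranking can be sketched as follows, assuming the class-conditional probability of every detector bit string is known. The strings are sorted by likelihood ratio and accepted one at a time, and each step adds one vertex (Pf, Pd) to the ROC. The probabilities and the function name `roc_from_likelihood_ratio` are made up for illustration.

```python
def roc_from_likelihood_ratio(p_relevant, p_irrelevant):
    """Rank bit strings by likelihood ratio and accumulate (Pf, Pd) vertices.

    p_relevant[s] and p_irrelevant[s] are the probabilities of detector
    output s under the "relevant" and "irrelevant" hypotheses.
    """
    def ratio(s):
        p1, p0 = p_relevant[s], p_irrelevant[s]
        return float("inf") if p0 == 0 else p1 / p0

    ranked = sorted(p_relevant, key=ratio, reverse=True)
    points = [(0.0, 0.0)]
    pf = pd = 0.0
    for s in ranked:
        pf += p_irrelevant[s]  # false alarm mass added by accepting s
        pd += p_relevant[s]    # detection mass added by accepting s
        points.append((pf, pd))
    return ranked, points


# Illustrative probabilities for two detectors (not from the slides).
p_rel = {"11": 0.5, "10": 0.25, "01": 0.125, "00": 0.125}
p_irr = {"11": 0.125, "10": 0.125, "01": 0.25, "00": 0.5}
ranked, points = roc_from_likelihood_ratio(p_rel, p_irr)
```

Here the ranking is 11, 10, 01, 00, so the first operating point accepts only documents on which both detectors fire. Only 2^N + 1 vertices need to be computed rather than all 2^(2^N) rules.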

Receiver Operating Characteristic (ROC) Curves

ROC Interpretation

• Selecting features $(y_1, y_2, \ldots, y_N)$ yields a pair: probability of detection Pd and probability of false alarm Pf
• Pd and Pf are maxima for the chosen features

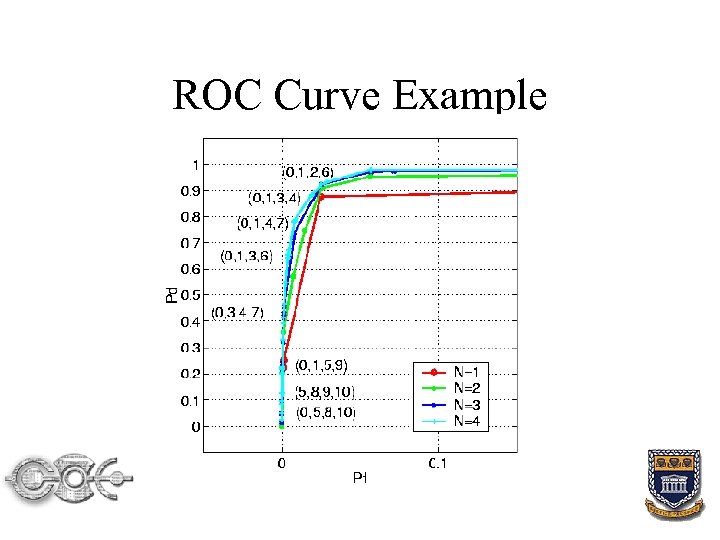

ROC Curve Example

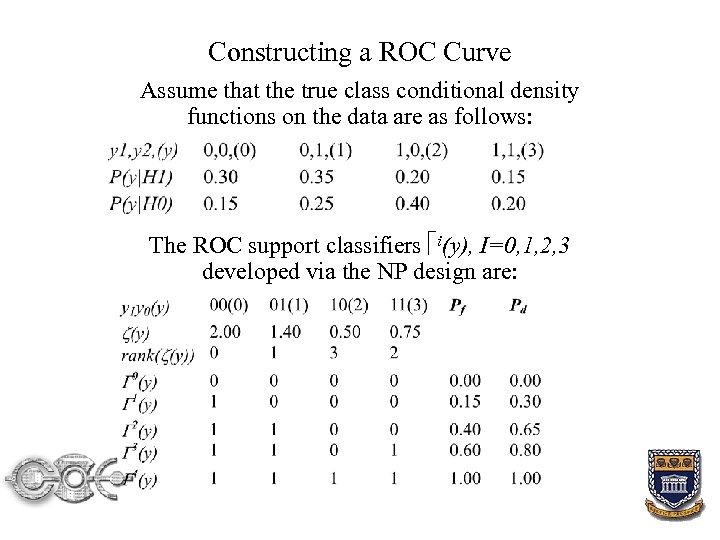

Constructing a ROC Curve

Assume that the true class conditional density functions on the data are as follows:

The ROC support classifiers i(y), i = 0, 1, 2, 3, developed via the NP design are:

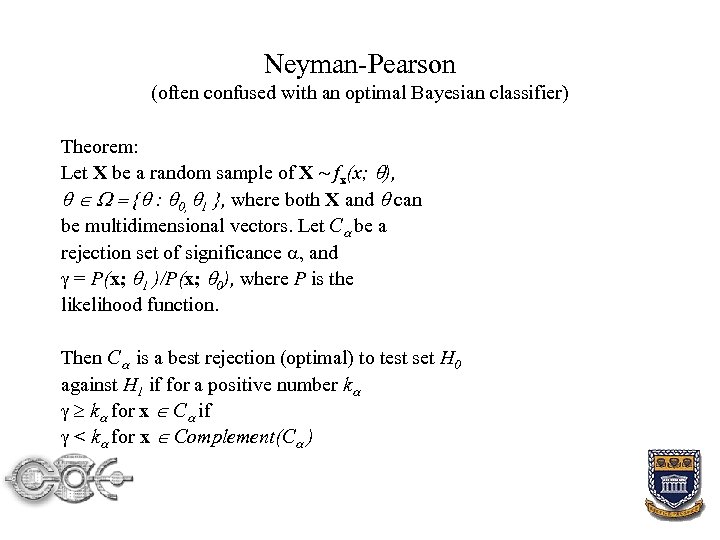

Neyman-Pearson (often confused with an optimal Bayesian classifier)

Theorem: Let $X$ be a random sample of $X \sim f_X(x; \theta)$, $\theta \in \{\theta_0, \theta_1\}$, where both $X$ and $\theta$ can be multidimensional vectors. Let $C$ be a rejection set of significance $\alpha$, and $\lambda = P(x; \theta_1) / P(x; \theta_0)$, where $P$ is the likelihood function. Then $C$ is a best (optimal) rejection set to test $H_0$ against $H_1$ if, for a positive number $k$,

$$\lambda \ge k \ \text{ for } x \in C, \qquad \lambda < k \ \text{ for } x \in \mathrm{Complement}(C).$$

Presentation & Cataloging

• After detection, need to extract target elements
• A particular setting might overrule one or more constituent filters, require a certain combination of features, or enforce some other joint relationship
• Matching filters and positive detectors are processed for extraction using heuristics
• Match with highest confidence is used, but with “close calls”, all the matches are indexed
• In principle no irreversible decisions are made
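
The "close calls" policy could look like the sketch below: keep the highest-confidence match, plus every match whose confidence lies within a small margin of it. The margin value, the confidence scores and the function name `select_matches` are assumptions, since the slides do not say how a close call is defined.

```python
def select_matches(matches, margin=0.05):
    """Keep the best match and any match within `margin` of its confidence.

    `matches` is a list of (extracted_text, confidence) pairs.
    """
    if not matches:
        return []
    best = max(conf for _, conf in matches)
    return [(text, conf) for text, conf in matches if best - conf <= margin]


candidates = [("15 March 2001", 0.92), ("1 June 2001", 0.90), ("2 Jan 2000", 0.40)]
kept = select_matches(candidates)
```

Indexing both near-tied candidates postpones the decision to query time, in line with the principle that no irreversible decisions are made.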

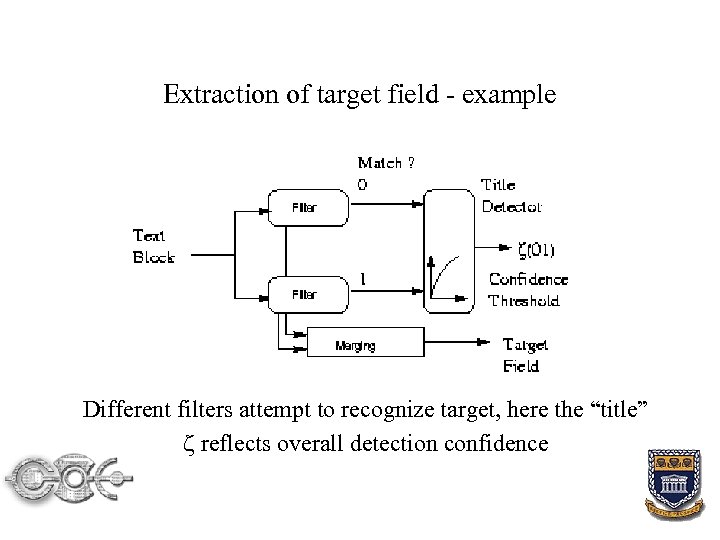

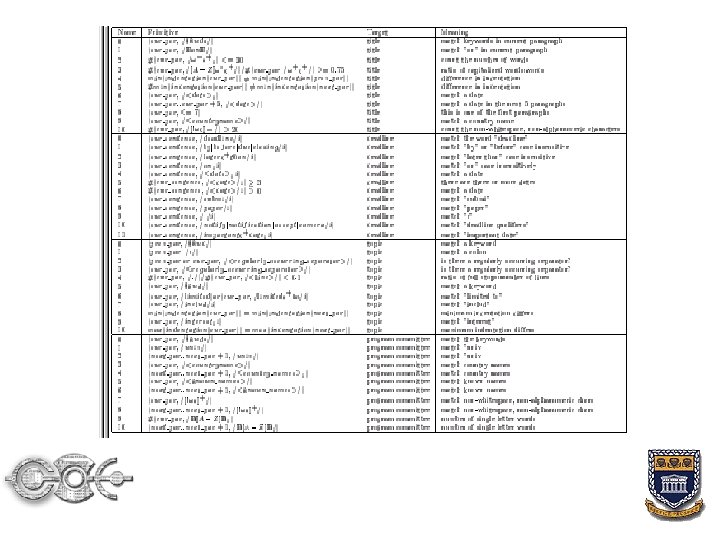

Extraction of target field - example

Different filters attempt to recognize target, here the “title” reflects overall detection confidence

Primitives used in DEADLINER

• Primitives are used to construct filters
• Most conference materials follow a block layout, e.g. a title, abstracts, program committee, affiliations, discussion topics, venue, scope, and a miscellaneous section
• Filters are constructed from dictionaries and/or heuristics

Example: A title usually contains two or more of: a country name, city name, affiliation, date of meeting, deadline, conference name, list of sponsors, conference acronym, theme
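
The "two or more primitives" rule for titles can be sketched as a count over primitive matchers. The tiny dictionaries and patterns below are hypothetical stand-ins; real primitives would be backed by much larger dictionaries, and the names `TITLE_PRIMITIVES`, `matched_primitives` and `looks_like_title` are not from DEADLINER.

```python
import re

# Tiny illustrative primitives (assumptions made for this example only).
TITLE_PRIMITIVES = {
    "country": re.compile(r"\b(?:South Africa|USA|Germany)\b"),
    "city": re.compile(r"\b(?:Cape Town|Washington|Berlin)\b"),
    "date": re.compile(r"\b\d{1,2}(?:-\d{1,2})?\s+[A-Z][a-z]+\s+\d{4}\b"),
    "conference_name": re.compile(r"\b(?:Conference|Workshop|Symposium)\s+on\b", re.IGNORECASE),
    "acronym": re.compile(r"\b[A-Z]{3,}\s*'?\d{2,4}\b"),
}


def matched_primitives(block):
    """Names of the primitives that fire on a text block, in sorted order."""
    return sorted(name for name, rx in TITLE_PRIMITIVES.items() if rx.search(block))


def looks_like_title(block, minimum=2):
    """A block is a title candidate if at least `minimum` primitives match."""
    return len(matched_primitives(block)) >= minimum


block = ("CIKM 2000: Ninth International Conference on Information and "
         "Knowledge Management, Washington, USA")
hits = matched_primitives(block)
```

Requiring several independent primitives keeps any single weak pattern, such as the acronym expression, from triggering a title match on its own.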

Examples of Primitives

For a deadline, we use for example:
(cur_sentence, /deadline/i) matches the word “deadline”
(cur_sentence, /

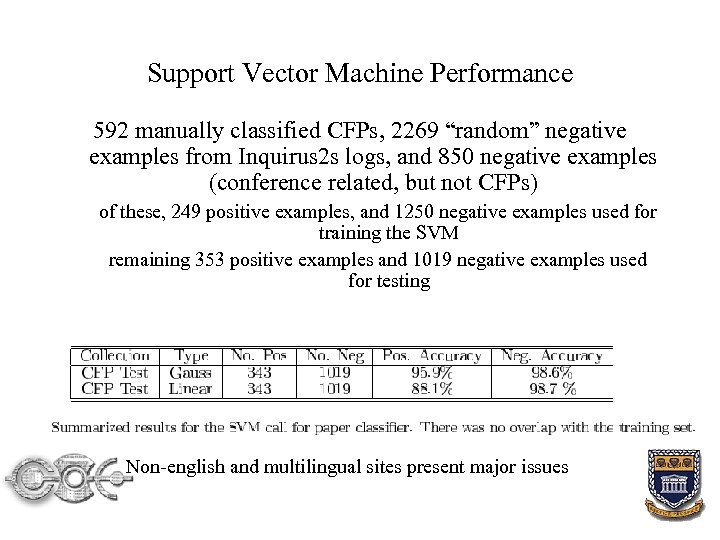

Support Vector Machine Performance

• 592 manually classified CFPs, 2269 “random” negative examples from Inquirus 2’s logs, and 850 negative examples (conference related, but not CFPs)
• Of these, 249 positive examples and 1250 negative examples were used for training the SVM
• The remaining 353 positive examples and 1019 negative examples were used for testing
• Non-English and multilingual sites present major issues

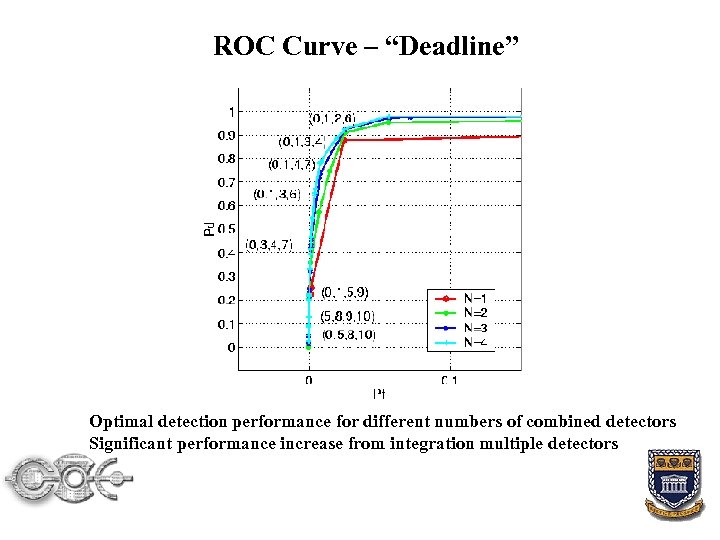

ROC Curve – “Deadline”

• Optimal detection performance for different numbers of combined detectors
• Significant performance increase from integrating multiple detectors

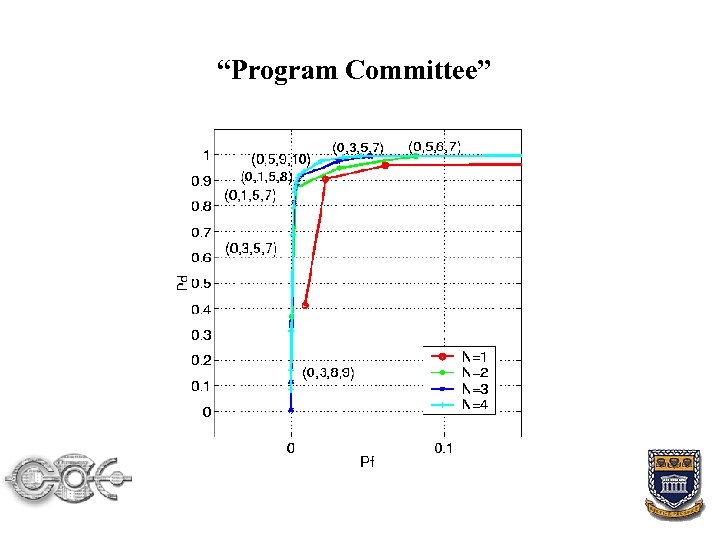

“Program Committee”

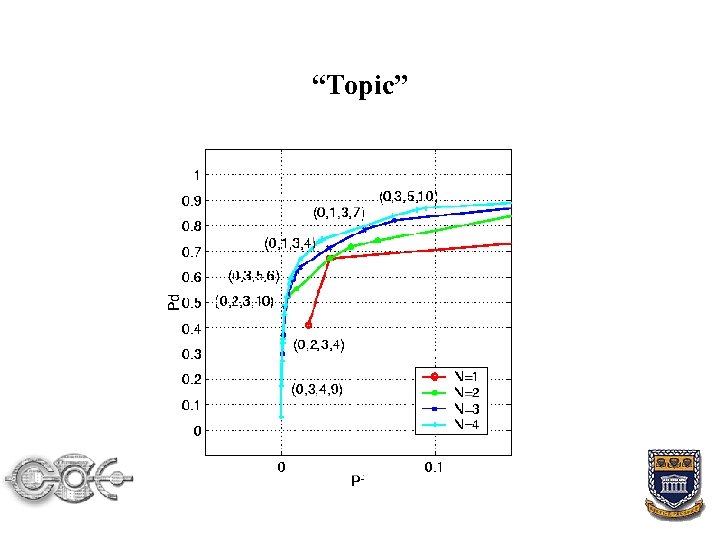

“Topic”

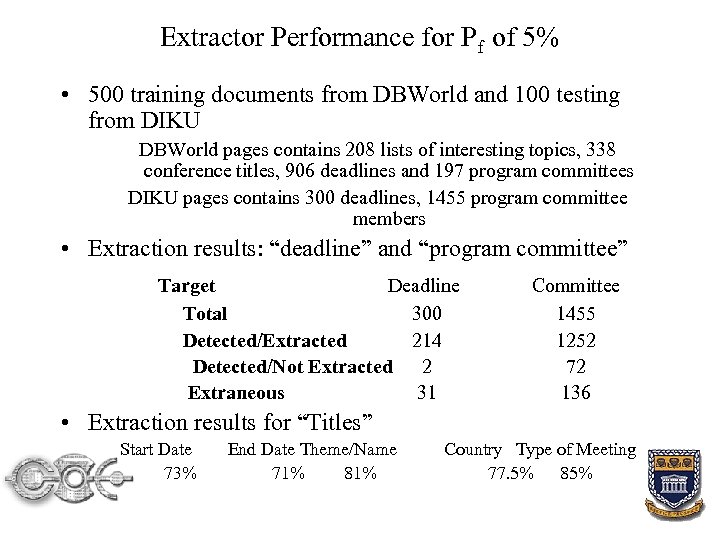

Extractor Performance for Pf of 5%

• 500 training documents from DBWorld and 100 testing documents from DIKU
  - DBWorld pages contain 208 lists of interesting topics, 338 conference titles, 906 deadlines and 197 program committees
  - DIKU pages contain 300 deadlines and 1455 program committee members
• Extraction results: “deadline” and “program committee”

| Target | Total | Detected/Extracted | Detected/Not Extracted | Extraneous |
|---|---|---|---|---|
| Deadline | 300 | 214 | 2 | 31 |
| Committee | 1455 | 1252 | 72 | 136 |

• Extraction results for “Titles”

| Field | Result |
|---|---|
| Start Date | 73% |
| End Date | 71% |
| Theme/Name | 81% |
| Country | 77.5% |
| Type of Meeting | 85% |
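
The counts reported for “deadline” and “program committee” can be turned into rates with a few lines of arithmetic. The sketch assumes that "detected" is the sum of the Detected/Extracted and Detected/Not Extracted counts; the slides do not define the columns further, and the function names are hypothetical.

```python
# Counts copied from the extraction results on this slide.
results = {
    "deadline": {"total": 300, "extracted": 214, "not_extracted": 2, "extraneous": 31},
    "committee": {"total": 1455, "extracted": 1252, "not_extracted": 72, "extraneous": 136},
}


def extraction_rate(row):
    """Fraction of all targets that were both detected and extracted."""
    return row["extracted"] / row["total"]


def detection_rate(row):
    """Fraction of all targets that were detected, extracted or not (assumed reading)."""
    return (row["extracted"] + row["not_extracted"]) / row["total"]


summary = {
    name: (round(extraction_rate(row), 3), round(detection_rate(row), 3))
    for name, row in results.items()
}
```

Under this reading, roughly 71% of the deadlines and 86% of the committee members were extracted.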

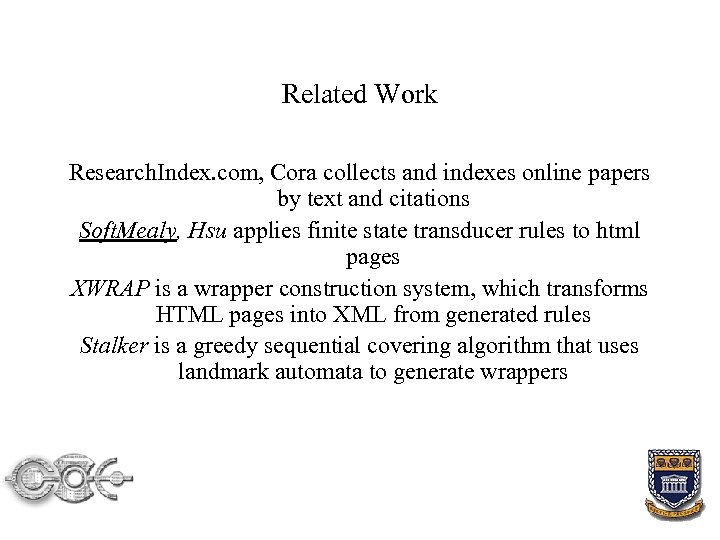

Related Work

• ResearchIndex.com, Cora: collect and index online papers by text and citations
• SoftMealy (Hsu): applies finite state transducer rules to HTML pages
• XWRAP: a wrapper construction system, which transforms HTML pages into XML from generated rules
• Stalker: a greedy sequential covering algorithm that uses landmark automata to generate wrappers

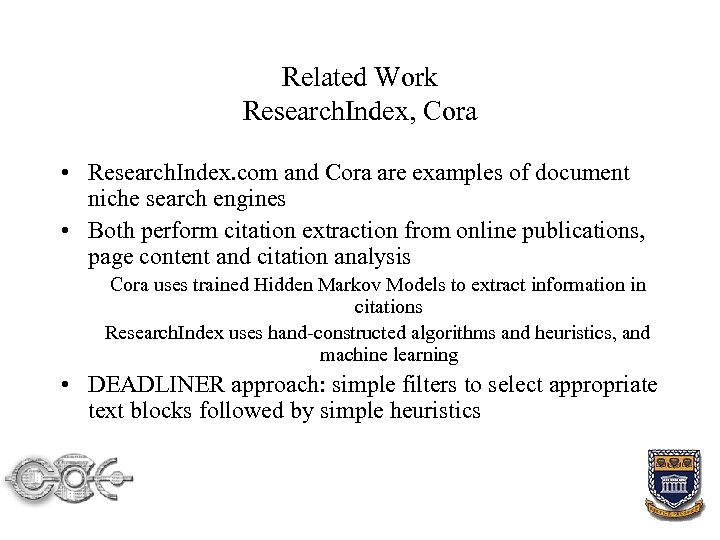

Related Work: ResearchIndex, Cora

• ResearchIndex.com and Cora are examples of document niche search engines
• Both perform citation extraction from online publications, page content and citation analysis
  - Cora uses trained Hidden Markov Models to extract information in citations
  - ResearchIndex uses hand-constructed algorithms and heuristics, and machine learning
• DEADLINER approach: simple filters to select appropriate text blocks followed by simple heuristics

Related Work: SoftMealy, Hsu

• Trains a finite state transducer (FST) for token extraction
• Uses a heuristic to prevent non-determinism (and therefore increase efficiency)
• Contextual rules are produced by an induction algorithm
• The FSTs obtained are applied to HTML pages (DEADLINER uses text-only pages in this experiment)

Related Work: XWRAP, Liu, Pu, Han

• XWRAP is a wrapper construction system, which transforms HTML pages into XML
• Rules are generated and applied to HTML, and interesting document regions are identified via an interactive interface
• Similarly for semantic tokens
• These steps are followed by a hierarchy determination, resulting in a context-free grammar

Related Work: Stalker, Muslea, Minton, Knoblock

• An algorithm that uses landmark automata to generate wrappers
• Stalker is a greedy sequential covering algorithm
• Generates a landmark automaton that accepts only true positives
• Does so by searching for a perfect disjunct, stopping when it finds one or runs out of training examples
• The best disjunct covers the most positive examples, and new disjuncts are added iteratively to cover the remaining uncovered positive examples
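
The general idea of greedy sequential covering can be sketched as below. This is a generic illustration and not Stalker itself: candidate rules are plain predicates supplied up front, whereas Stalker induces and refines landmark-based rules. All names are hypothetical.

```python
def sequential_covering(positives, negatives, candidate_rules):
    """Greedily pick rules that accept no negative until the positives are covered.

    `candidate_rules` maps a rule name to a predicate over examples.
    Returns the chosen rule names and any positives left uncovered.
    """
    uncovered = set(positives)
    chosen = []
    while uncovered:
        best, best_cover = None, set()
        for name, rule in candidate_rules.items():
            if any(rule(x) for x in negatives):
                continue  # a usable disjunct must accept only true positives
            cover = {x for x in uncovered if rule(x)}
            if len(cover) > len(best_cover):
                best, best_cover = name, cover
        if best is None:
            break  # no remaining rule covers anything new
        chosen.append(best)
        uncovered -= best_cover
    return chosen, uncovered


positives = ["<b>Name</b>", "<i>Name</i>", "<b>Title</b>"]
negatives = ["<p>text</p>"]
rules = {
    "starts_b": lambda s: s.startswith("<b>"),
    "starts_i": lambda s: s.startswith("<i>"),
    "any_tag": lambda s: s.startswith("<"),
}
chosen, left = sequential_covering(positives, negatives, rules)
```

The rule `any_tag` covers every positive but also accepts the negative example, so it is never selected. The two narrower rules are chosen in turn instead.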

Future Work

• Create feature extraction tools (to decrease modeling time) and improve classification/extraction
• Increase the (types of) meta-data extracted
• Apply the DEADLINER architecture model to other domains
• Extension to WWW image searches
• eAfrica.org: repository of timely documents related to e-learning, e-commerce, e-government, e-healthcare, etc. for the African continent

Reference: A. Kruger, C. L. Giles, F. Coetzee, E. Glover, G. W. Flake, S. Lawrence, C. W. Omlin, “Deadliner: Building A New Niche Search Engine”, 9th International Conference on Information and Knowledge Management (CIKM), 2000.
