f97da742568fa0cbe10416247af4cab8.ppt

- Number of slides: 64

Radiobots Screen ‘n Tell April 24, 2006 institute for creative technologies 3/19/2018

Outline
• David Traum: Intro to project
• Jarrell Pair: Background on Ft Sill & UTM
• Demo
• Susan Robinson: Data collection
• Antonio Roque: System architecture
• Vivek Kumar: Speech recognizer
• Bilyana Martinovski: Dialogue annotation
• Anton Leuski: Interpreter
• Antonio Roque: Dialogue manager
• Susan Robinson: Evaluation design
• Ashish Vaswani: Evaluation results
• David Traum: Future directions
• Open demo

Radiobots: Project History
• 2004: Piloted within the ICT Mission Rehearsal Exercise (MRE)
  – Simple dialogue systems for radio characters
  – Output through radio
• 2004-2005: Seedling effort
  – Further development of MRE radiobots
  – Analysis of radiobot domains & tools
  – Focus on call for fire
  – Tools for data collection & semi-automatic operation
  – Initial data collection at Ft Sill and analysis
• 2005-present: Radiobots for JFETS

Approach: Multiple Tools
• Speech tools for Observer/Controller assistance
  – Automatic transcription
  – Summarization, indexing & highlighting (for after-action review, AAR)
• Semi-automated tools
  – Push-button speech interface
  – Computer guided/human controlled
• Fully automatic radiobots
• Ability to switch between modes mid-session

Radiobots for JFETS: Team members
• USC ICT (Dr. David Traum, Antonio Roque, Susan Robinson, Dr. Anton Leuski, Jarrell Pair, Tae Yoon, Dr. Bilyana Martinovski, Ashish Vaswani, Sudeep Gandhe, Emily Flores, Jillian Gerten)
  – Overall integration & management
  – Dialogue systems
  – Corpus creation & development
  – Evaluation
• USC SAIL (Dr. Shri Narayanan, Vivek Sridhar, Shankar Anathakrishnan)
  – Speech processing
• TechMasters Inc (TMI) (Bill Millspaugh)
  – Firesim XXI simulation
  – Text to tactical messaging (NLDI)
• ARL-HRED (Charles Hernandez, Dr. Janet Sutton)
  – Evaluation
With help from Ft Sill Battle Lab & Techrizon

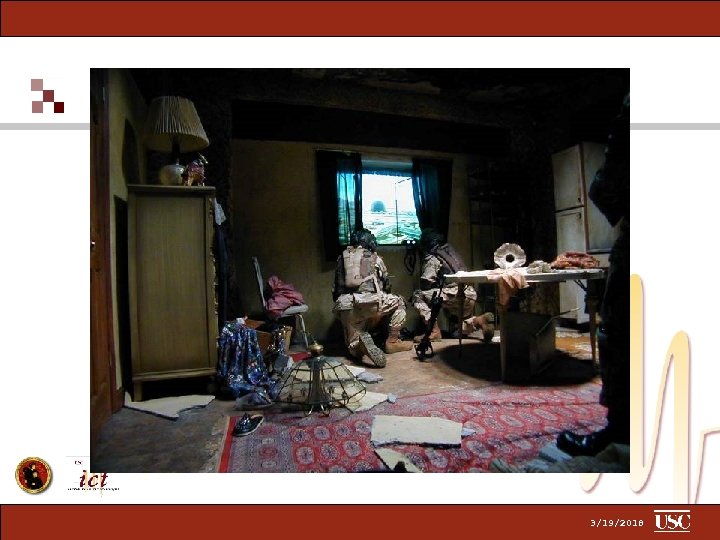

JFETS-D

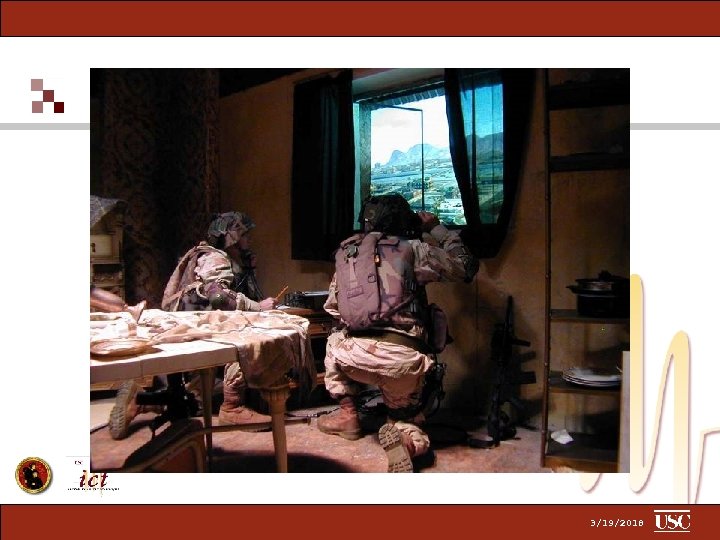

JFETS-TB

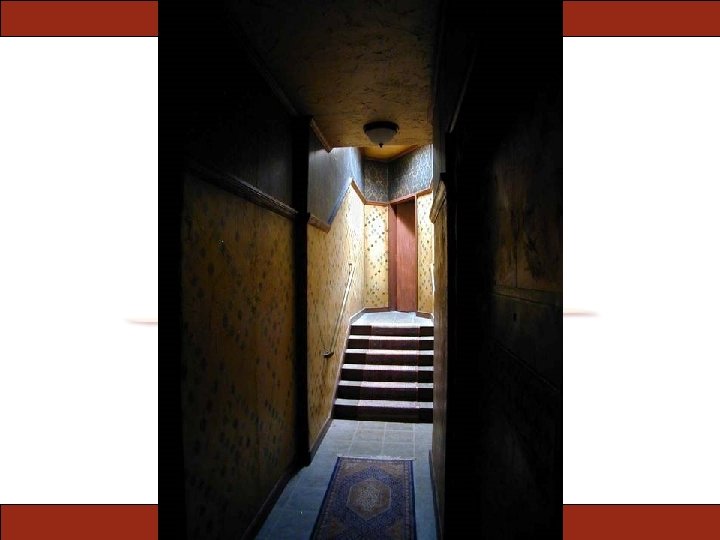

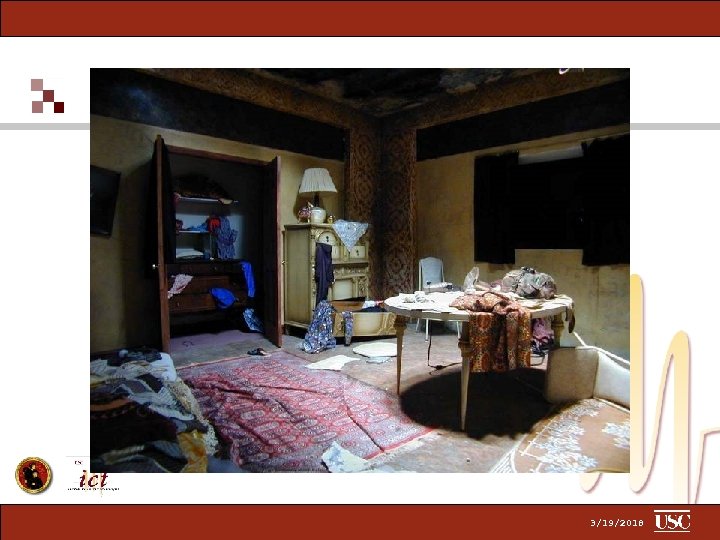

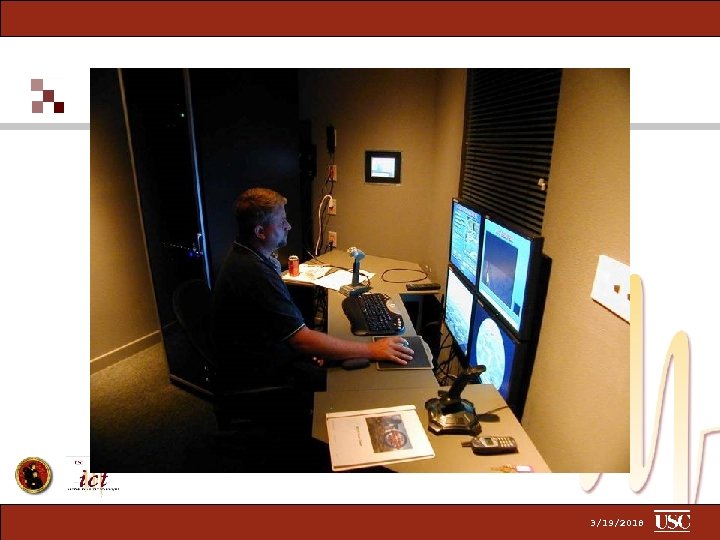

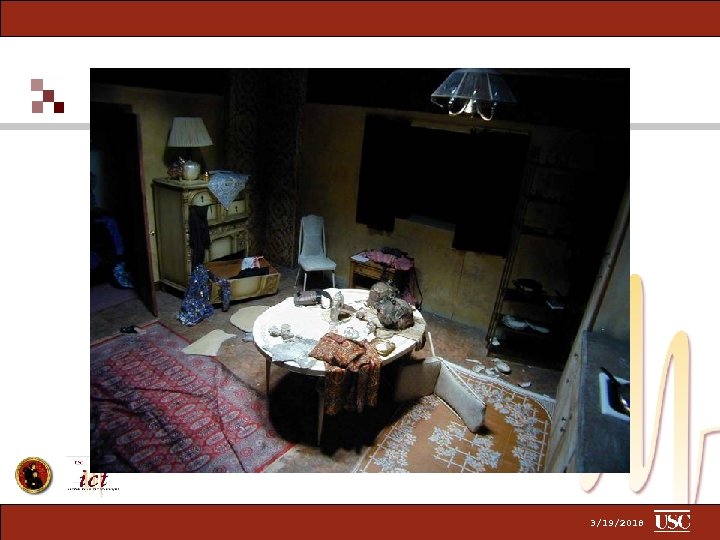

Data Collection
JFETS data:
1. UTM regular training session recordings (over 100)
2. Trips to Ft Sill for close-talking mic data from UTM and OTM (3 trips, approx. 30 hours of data)
3. Firesim and radiobot test integration recordings (2 trips, 20 sessions)

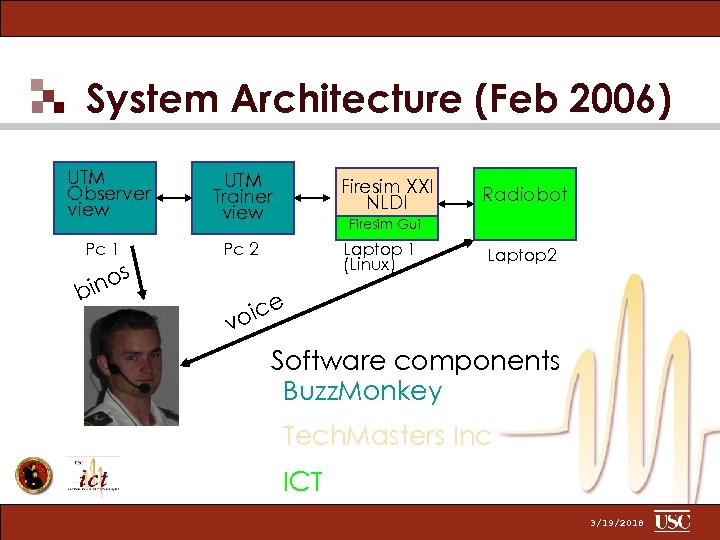

System Architecture (Feb 2006)
[Diagram labels: UTM Observer view, UTM Trainer view, Firesim XXI, NLDI, Radiobot, Firesim GUI, voice; hardware: PC 1, PC 2, Laptop 1 (Linux), Laptop 2; software components from BuzzMonkey, TechMasters Inc, and ICT.]

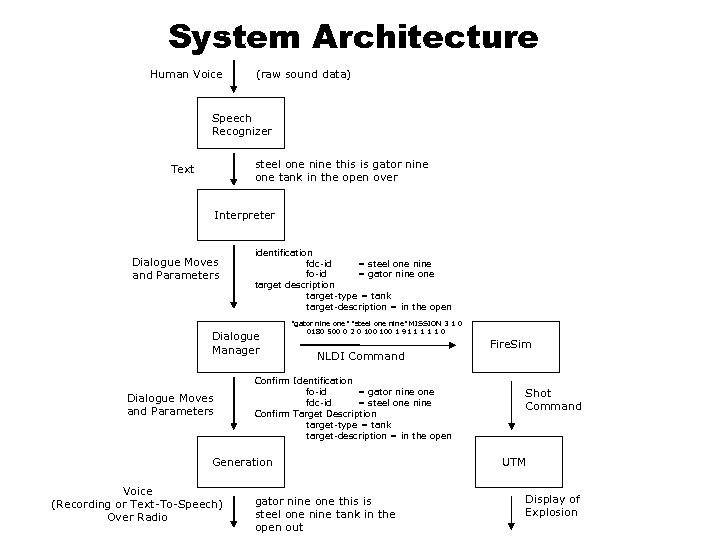

System Architecture
1. Human voice (raw sound data) → Speech Recognizer → text: "steel one nine this is gator nine one tank in the open over"
2. Text → Interpreter → dialogue moves and parameters:
   • Identification: fdc-id = steel one nine; fo-id = gator nine one
   • Target description: target-type = tank; target-description = in the open
3. Dialogue moves and parameters → Dialogue Manager, which produces two outputs:
   • NLDI command: "gator nine one" "steel one nine" MISSION 3 1 0 0180 500 0 2 0 100 1 91 1 1 0 → Firesim shot command → UTM display of explosion
   • Dialogue moves and parameters for the reply:
     – Confirm Identification: fo-id = gator nine one; fdc-id = steel one nine
     – Confirm Target Description: target-type = tank; target-description = in the open
4. Reply moves → Generation → voice (recording or text-to-speech) over radio: "gator nine one this is steel one nine tank in the open out"
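The reply path (interpreter moves → confirm moves → radio text) can be sketched in a few lines. This is a minimal illustration, not the radiobot's code: the `DialogueMove` class and the `confirm_moves` and `realize` functions are hypothetical, and the realization template is inferred from the single example above.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DialogueMove:
    """A dialogue move plus its parameters (hypothetical representation)."""
    name: str
    params: Dict[str, str] = field(default_factory=dict)


def confirm_moves(moves: List[DialogueMove]) -> List[DialogueMove]:
    """Mirror each incoming move with a 'Confirm <move>' move carrying the same parameters."""
    return [DialogueMove("Confirm " + m.name, dict(m.params)) for m in moves]


def realize(moves: List[DialogueMove]) -> str:
    """Turn confirm moves into radio text, using the template from the example above."""
    parts: List[str] = []
    for m in moves:
        if m.name == "Confirm Identification":
            # The FDC replies by addressing the FO first: "<fo-id> this is <fdc-id>".
            parts.append(f"{m.params['fo-id']} this is {m.params['fdc-id']}")
        elif m.name == "Confirm Target Description":
            parts.append(f"{m.params['target-type']} {m.params['target-description']}")
    # A readback that expects no reply is closed with "out".
    return " ".join(parts + ["out"])


# Interpreter output for "steel one nine this is gator nine one tank in the open over".
incoming = [
    DialogueMove("Identification", {"fdc-id": "steel one nine", "fo-id": "gator nine one"}),
    DialogueMove("Target Description", {"target-type": "tank", "target-description": "in the open"}),
]
print(realize(confirm_moves(incoming)))
# prints: gator nine one this is steel one nine tank in the open out
```

Keeping confirmation (a move-to-move mapping) separate from realization (moves to words) mirrors the slide's split between the Dialogue Manager and Generation.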

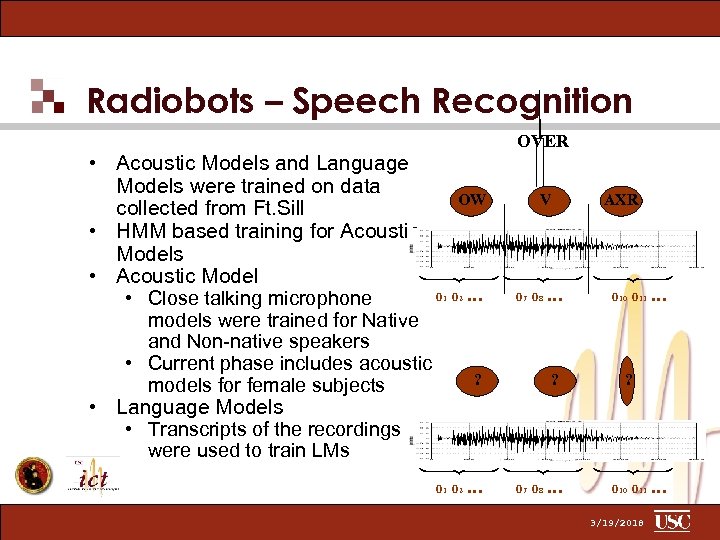

Radiobots – Speech Recognition
• Acoustic models and language models were trained on data collected at Ft. Sill
• HMM-based training for acoustic models
• Acoustic models
  – Close-talking microphone models were trained for native and non-native speakers
  – Current phase includes acoustic models for female subjects
  – [Figure: the word OVER modeled as the phone sequence OW V AXR, each phone aligned to a span of acoustic observation frames o1, o2, …, o11]
• Language models
  – Transcripts of the recordings were used to train LMs

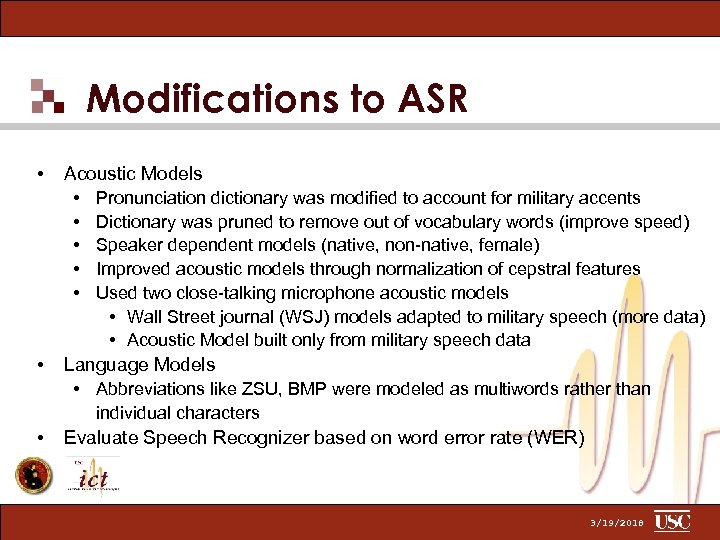

Modifications to ASR
• Acoustic models
  – Pronunciation dictionary was modified to account for military accents
  – Dictionary was pruned to remove words outside the task vocabulary (improves speed)
  – Speaker-dependent models (native, non-native, female)
  – Improved acoustic models through normalization of cepstral features
  – Two close-talking microphone acoustic models were used:
    – Wall Street Journal (WSJ) models adapted to military speech (more data)
    – Acoustic model built only from military speech data
• Language models
  – Abbreviations like ZSU and BMP were modeled as multiwords rather than as individual characters
• The speech recognizer is evaluated by word error rate (WER)
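One way to make abbreviations single vocabulary items is to merge spelled-out letter sequences in the LM training text before counting n-grams. The sketch assumes transcripts spell such abbreviations as separate letters; the `MULTIWORDS` table and `merge_multiwords` function are hypothetical, not the project's tooling.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical table: spelled-letter sequences that the LM should treat as one token.
MULTIWORDS: Dict[Tuple[str, ...], str] = {
    ("z", "s", "u"): "ZSU",
    ("b", "m", "p"): "BMP",
}


def merge_multiwords(tokens: Sequence[str],
                     table: Dict[Tuple[str, ...], str] = MULTIWORDS) -> List[str]:
    """Greedy longest-match replacement of letter sequences by multiword tokens."""
    longest = max((len(key) for key in table), default=0)
    out: List[str] = []
    i = 0
    while i < len(tokens):
        # Try the longest possible span first, down to two tokens.
        for n in range(min(longest, len(tokens) - i), 1, -1):
            key = tuple(tokens[i:i + n])
            if key in table:
                out.append(table[key])
                i += n
                break
        else:
            # No multiword starts here: keep the token unchanged.
            out.append(tokens[i])
            i += 1
    return out


print(merge_multiwords("five b m p stationary".split()))
# prints: ['five', 'BMP', 'stationary']
```

Treating "BMP" as one token lets the n-gram model learn its context directly instead of spreading probability over the letters "b", "m", and "p".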

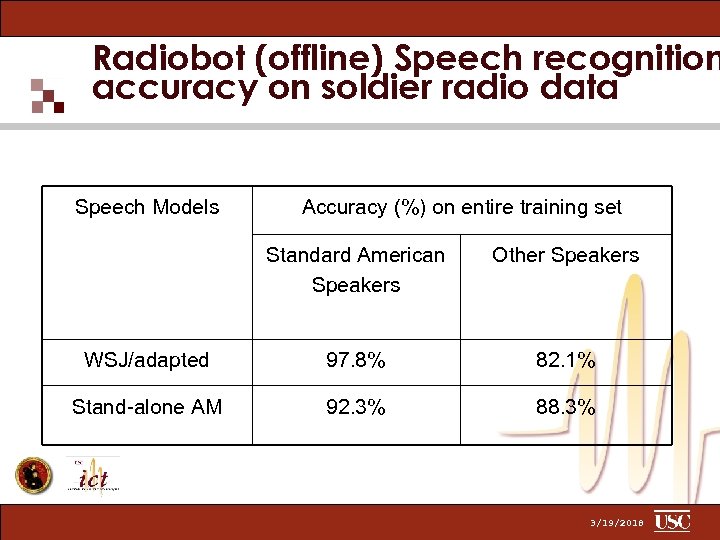

Radiobot (offline) speech recognition accuracy on soldier radio data

| Speech models | Standard American speakers | Other speakers |
|---|---|---|
| WSJ/adapted | 97.8% | 82.1% |
| Stand-alone AM | 92.3% | 88.3% |

Accuracy (%) measured on the entire training set.

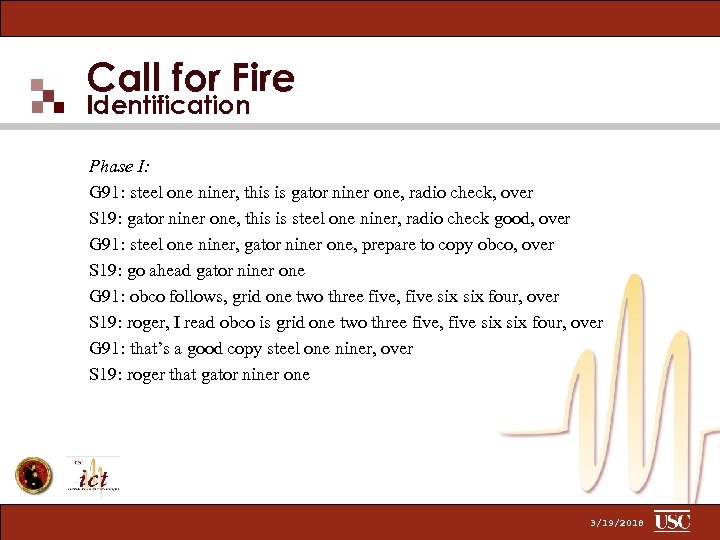

Call for Fire: Identification
Phase I:
G 91: steel one niner, this is gator niner one, radio check, over
S 19: gator niner one, this is steel one niner, radio check good, over
G 91: steel one niner, gator niner one, prepare to copy obco, over
S 19: go ahead gator niner one
G 91: obco follows, grid one two three five, five six four, over
S 19: roger, I read obco is grid one two three five, five six four, over
G 91: that's a good copy steel one niner, over
S 19: roger that gator niner one

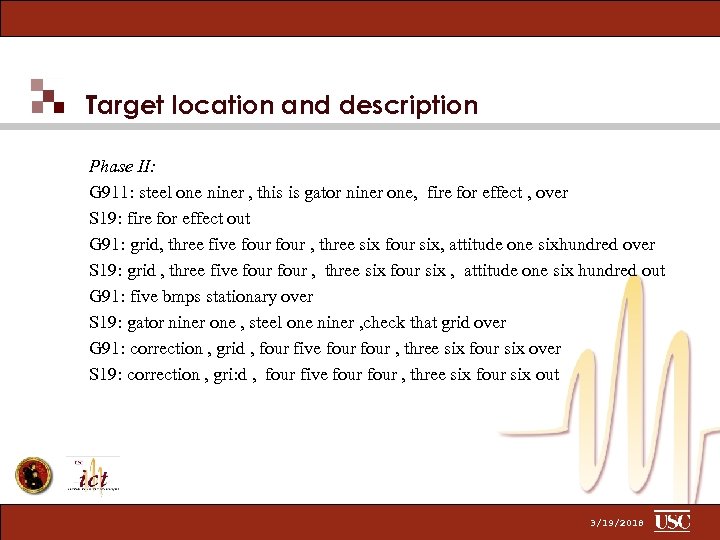

Target location and description
Phase II:
G 91: steel one niner, this is gator niner one, fire for effect, over
S 19: fire for effect out
G 91: grid, three five four, three six four six, altitude one six hundred over
S 19: grid, three five four, three six four six, altitude one six hundred out
G 91: five bmps stationary over
S 19: gator niner one, steel one niner, check that grid over
G 91: correction, grid, four five four, three six four six over
S 19: correction, gri:d, four five four, three six four six out

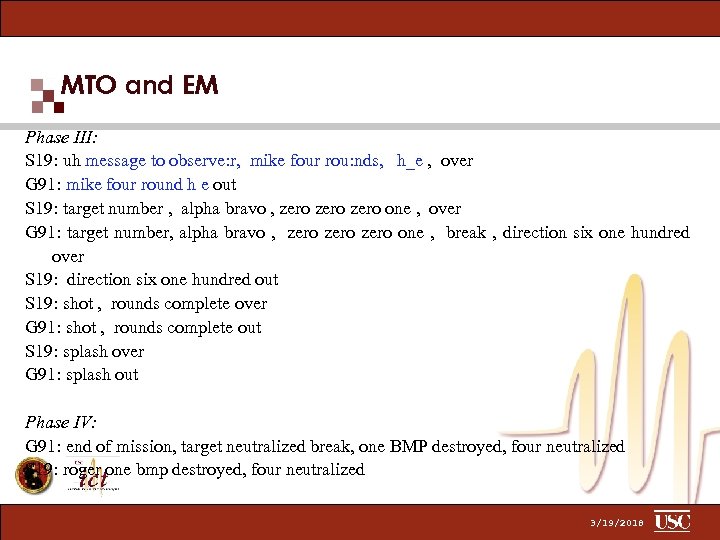

MTO (message to observer) and EM (end of mission)
Phase III:
S 19: uh message to observe:r, mike four rou:nds, h_e, over
G 91: mike four round h e out
S 19: target number, alpha bravo, zero one, over
G 91: target number, alpha bravo, zero one, break, direction six one hundred over
S 19: direction six one hundred out
S 19: shot, rounds complete over
G 91: shot, rounds complete out
S 19: splash over
G 91: splash out
Phase IV:
G 91: end of mission, target neutralized break, one BMP destroyed, four neutralized
S 19: roger one bmp destroyed, four neutralized

Processing of data
• Transcription in Transcriber/text files
• Analysis of data/activity
• Formulation of a coding scheme
• Coding of data in MMAX
• Training of classifier

Coding categories
• Dialogue moves: Fire, OBCO, MTO…
• Dialogue regulators: Correction, Say Again, Over/Out…
• Grounding acts: Confirm, Preparation, Prompt…

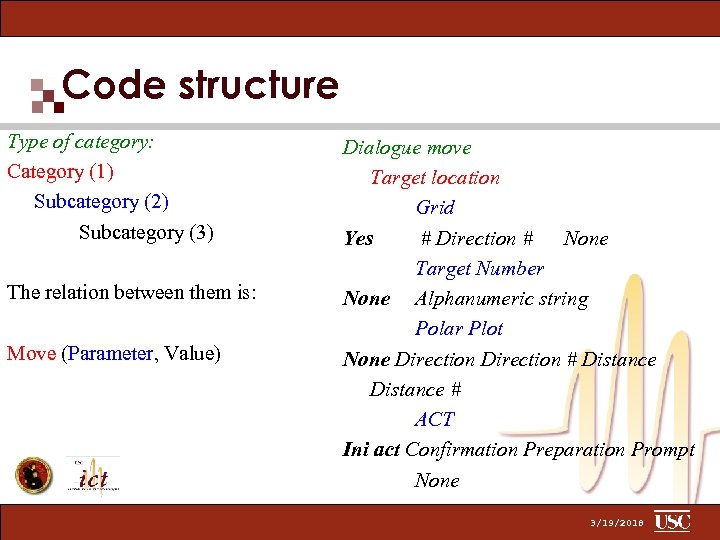

Code structure
Type of category: Category (1), Subcategory (2), Subcategory (3)
The relation between them is: Move (Parameter, Value)
• Dialogue move
  – Target location: Grid (Yes, #), Direction (#), None
  – Target Number: None, Alphanumeric string
  – Polar Plot: None, Direction (#), Distance (#)
• ACT
  – Ini act: Confirmation, Preparation, Prompt, None

Example: Method of Engagement
How the FO would like the target to be attacked (para. 6-26).
• Parameter: Type of Adjustment (paras. 6-27 to 6-29)
  – Possible values: "Area Fire" or "Destruction"
• Parameter: Danger Close (para. 6-30)
  – Used if friendly units are nearby
  – Possible value: "Danger Close"
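The Move (Parameter, Value) relation can be represented as a small record, with the allowed values checked at coding time. This is a sketch under assumptions: the `Code` class and `code_method_of_engagement` function are hypothetical, and only the parameter names and values come from the slide.

```python
from dataclasses import dataclass
from typing import List, Optional

# Values allowed by the slide for the Type of Adjustment parameter.
ADJUSTMENT_TYPES = {"Area Fire", "Destruction"}


@dataclass(frozen=True)
class Code:
    """One annotation in Move (Parameter, Value) form (hypothetical representation)."""
    move: str
    parameter: str
    value: Optional[str]


def code_method_of_engagement(adjustment: Optional[str] = None,
                              danger_close: bool = False) -> List[Code]:
    """Build Method of Engagement codes, rejecting values the scheme does not allow."""
    codes: List[Code] = []
    if adjustment is not None:
        if adjustment not in ADJUSTMENT_TYPES:
            raise ValueError(f"unknown type of adjustment: {adjustment!r}")
        codes.append(Code("Method of Engagement", "Type of Adjustment", adjustment))
    if danger_close:
        # Danger Close has a single possible value, used when friendly units are nearby.
        codes.append(Code("Method of Engagement", "Danger Close", "Danger Close"))
    return codes


print(code_method_of_engagement("Area Fire", danger_close=True))
```

Rejecting unknown values at coding time keeps annotations consistent with the scheme, which matters once they are used as classifier training data.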

MMAX

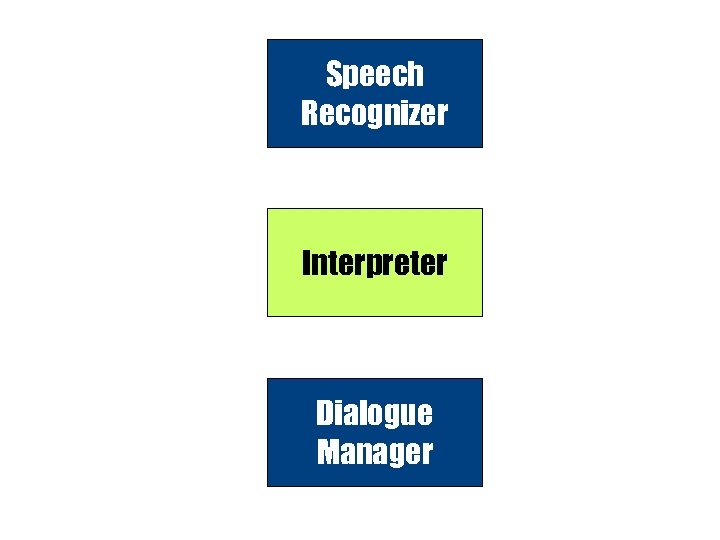

Speech Recognizer → Interpreter → Dialogue Manager

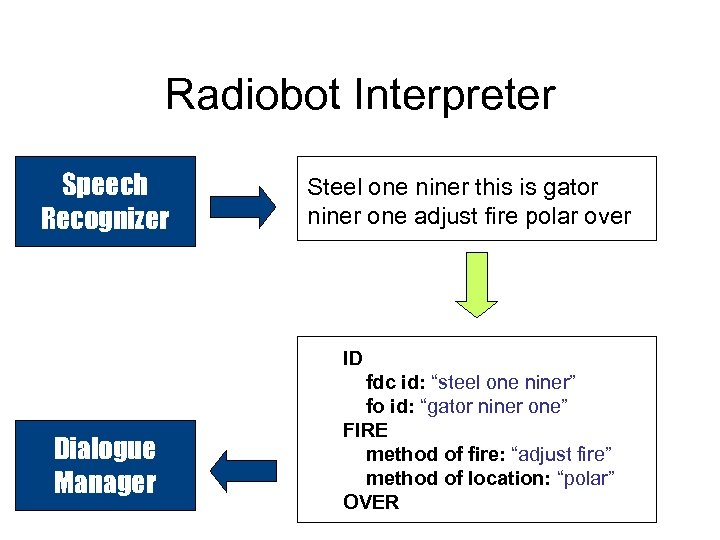

Radiobot Interpreter
Input from Speech Recognizer: "steel one niner this is gator niner one adjust fire polar over"
Output to Dialogue Manager:
• ID: fdc id = "steel one niner", fo id = "gator niner one"
• FIRE: method of fire = "adjust fire", method of location = "polar"
• OVER

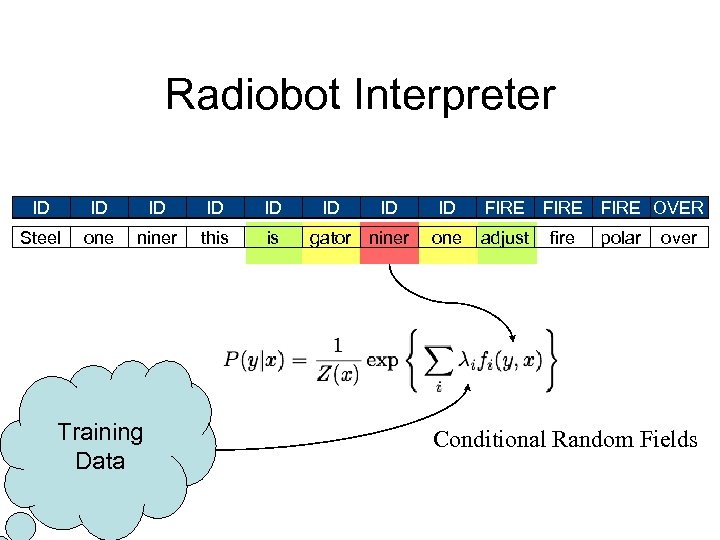

Radiobot Interpreter
[Figure: a Conditional Random Fields model, trained on annotated training data, selects one label per word (candidates include ID, TL, FIRE, MTO, OVER, OUT) for "steel one niner this is gator niner one adjust fire polar over".]
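Decoding a linear-chain CRF means finding the label sequence with the highest total weight, which the Viterbi algorithm computes exactly. The sketch below shows only that decoding step, with hand-set weights and three labels (`ID`, `FIRE`, `OVER`) chosen for illustration; it does no training and is not the project's trained model.

```python
from typing import Dict, List, Tuple

LABELS = ["ID", "FIRE", "OVER"]

# Hand-set (word, label) weights for illustration only; a real CRF learns
# weights for many features from the annotated training data.
EMISSION: Dict[Tuple[str, str], int] = {
    ("steel", "ID"): 4, ("gator", "ID"): 4,
    ("this", "ID"): 2, ("is", "ID"): 2,
    ("one", "ID"): 1, ("niner", "ID"): 1,
    ("adjust", "FIRE"): 4, ("fire", "FIRE"): 4, ("polar", "FIRE"): 4,
    ("over", "OVER"): 6,
}
SAME_LABEL_BONUS = 2  # transition weight rewarding contiguous segments


def transition(prev: str, cur: str) -> int:
    return SAME_LABEL_BONUS if prev == cur else 0


def viterbi(words: List[str]) -> List[str]:
    """Return the highest-scoring label sequence under a linear-chain model."""
    if not words:
        return []
    scores = [{lab: EMISSION.get((words[0], lab), 0) for lab in LABELS}]
    backptrs: List[Dict[str, str]] = [{}]
    for word in words[1:]:
        prev = scores[-1]
        cur: Dict[str, int] = {}
        ptr: Dict[str, str] = {}
        for lab in LABELS:
            # Best predecessor label for ending in `lab` at this position.
            best = max(LABELS, key=lambda p: prev[p] + transition(p, lab))
            cur[lab] = prev[best] + transition(best, lab) + EMISSION.get((word, lab), 0)
            ptr[lab] = best
        scores.append(cur)
        backptrs.append(ptr)
    # Trace back from the best final label.
    path = [max(LABELS, key=lambda lab: scores[-1][lab])]
    for i in range(len(words) - 1, 0, -1):
        path.append(backptrs[i][path[-1]])
    return path[::-1]


utterance = "steel one niner this is gator niner one adjust fire polar over".split()
print(list(zip(utterance, viterbi(utterance))))
```

The transition bonus is what lets weakly cued words such as "one" and "niner" inherit the ID label from their neighbours, the same kind of context a trained CRF exploits.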

Radiobot Interpreter

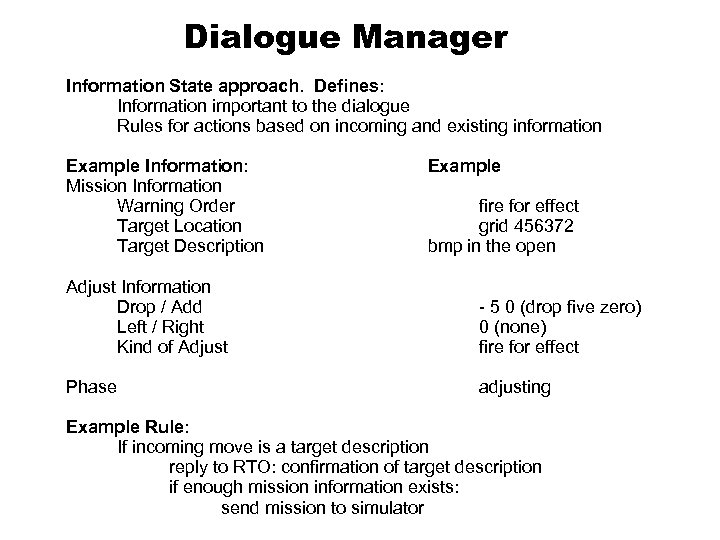

Dialogue Manager
Information State approach. Defines:
• Information important to the dialogue
• Rules for actions based on incoming and existing information
Example information:
• Mission information (example values)
  – Warning order: fire for effect
  – Target location: grid 456372
  – Target description: bmp in the open
• Adjust information (example values)
  – Drop/Add: -50 (drop five zero)
  – Left/Right: 0 (none)
  – Kind of adjust: fire for effect
  – Phase: adjusting
Example rule:
• If the incoming move is a target description:
  – Reply to RTO: confirmation of target description
  – If enough mission information exists: send mission to simulator
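The example rule can be written as an update function over a mutable information state. This is a minimal sketch, assuming "enough mission information" means the three mission slots above are filled; `InformationState`, `apply_move`, `REQUIRED_SLOTS`, and the action names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical threshold for "enough mission information".
REQUIRED_SLOTS = ("warning_order", "target_location", "target_description")


@dataclass
class InformationState:
    mission: Dict[str, str] = field(default_factory=dict)
    mission_sent: bool = False


def apply_move(state: InformationState, move: str, value: str) -> List[Tuple[str, object]]:
    """Update the information state with one incoming move and return triggered actions."""
    actions: List[Tuple[str, object]] = []
    state.mission[move] = value
    if move == "target_description":
        # Rule from the slide: confirm the target description to the RTO...
        actions.append(("reply_to_rto", f"confirm target description: {value}"))
        # ...and send the mission once enough information has been collected.
        if not state.mission_sent and all(s in state.mission for s in REQUIRED_SLOTS):
            actions.append(("send_to_simulator", dict(state.mission)))
            state.mission_sent = True
    return actions


state = InformationState()
apply_move(state, "warning_order", "fire for effect")
apply_move(state, "target_location", "grid 456372")
print(apply_move(state, "target_description", "bmp in the open"))
```

The `mission_sent` flag is one simple way to stop a repeated target description from sending the same mission twice.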

Evaluation Conditions
• Automated: the radiobot acts as FSO and automatically sends mission information to Firesim
• Semi-automated: as above, but the radiobot fills in a form for a human operator to review and submit
• Human control: a human FSO conducts the radio dialogue and an operator sends missions through Firesim

Evaluation Sessions
• Preliminary evaluation, Nov 2005
  – 34 students in UTM training
  – Focused on the semi-auto condition and refining the user questionnaire
• Final evaluation, Jan-Feb 2006
  – 29 volunteers from Ft Sill, some repeated across conditions
  – Demographic and user surveys for each session
  – 2 subjects per group; FO and RTO each did 2 missions, then switched roles
  – Conditions were varied across groups

Evaluation Data Overview
• Eval 1: Jan 2006
  – 20 sessions (10 teams)
  – 4 human, 8 semi-auto, 8 auto
• Eval 2: Feb 2006
  – 27 sessions (14 teams)
  – 6 human, 9 semi-auto, 12 auto

Questionnaire Results: Dialogue

Questionnaire Results: Trainee Performance

Mission Performance
• Average time to fire: 1 min 46 s (human), 2 min 19 s (semi-auto), 1 min 44 s (auto)
• Task completion rate: 100% (human), 98% (semi-auto), 86% (auto)
• Accuracy rate: 100% (human), 97% (semi-auto), 92% (auto)

Components evaluated
• Automatic Speech Recognizer (ASR)
• Interpreter
• ASR + Interpreter
• Dialogue Manager

Evaluation Metrics
• Compare system results with human coding (gold standard)
• Basic scoring methods:
  – Precision (correctly recognized / all recognized)
  – Recall (correctly recognized / all items in the gold standard)
  – F-score (harmonic mean of precision and recall)
  – Error rate (errors / all items in the gold standard)
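Written as formulas, with the human coding as the reference set, the basic scores are below. The WER line uses the standard substitution/deletion/insertion decomposition, which is an assumption about how errors were counted here.

```latex
\begin{aligned}
P &= \frac{\text{correctly recognized}}{\text{all recognized}}, &
R &= \frac{\text{correctly recognized}}{\text{all reference items}}, &
F &= \frac{2PR}{P + R}, \\
\mathrm{ER} &= \frac{\text{errors}}{\text{all reference items}}, &
\mathrm{WER} &= \frac{S + D + I}{N}
\end{aligned}
```

Here S, D, and I count substituted, deleted, and inserted words in a minimum-edit alignment, and N is the number of reference words.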

Example: ASR evaluation
• Transcribed utterance (exact reproduction of the audio signal): steel one nine this is gator niner one adjust fire over
• Output from ASR: steel one nine this is gator one niner one adjust fire over
• Merged view: steel one nine this is gator [one] niner one adjust fire over
• Precision = 11/12; Recall = 11/11; WER = 1/11
• F-score (harmonic mean of precision and recall) = 0.957
• Reported measures: DR, DP, DWER, DF, AvR, AvP, AvWER, AvF
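The counts in this example can be reproduced with a standard minimum-edit-distance alignment. This sketch assumes precision divides hits by the number of ASR words and recall divides hits by the number of transcribed words, which matches the 11/12 and 11/11 above; `align_counts` and `asr_scores` are hypothetical names.

```python
from typing import Dict, List, Tuple


def align_counts(ref: List[str], hyp: List[str]) -> Tuple[int, int, int, int]:
    """Return (hits, substitutions, deletions, insertions) of a minimum-edit alignment."""
    n, m = len(ref), len(hyp)
    # d[i][j] = edit distance between ref[:i] and hyp[:j]
    d = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        d[i][0] = i
    for j in range(1, m + 1):
        d[0][j] = j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            d[i][j] = min(d[i - 1][j - 1] + cost, d[i - 1][j] + 1, d[i][j - 1] + 1)
    # Walk back through the table to count each kind of operation.
    hits = subs = dels = ins = 0
    i, j = n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0 and d[i][j] == d[i - 1][j - 1] + (ref[i - 1] != hyp[j - 1]):
            if ref[i - 1] == hyp[j - 1]:
                hits += 1
            else:
                subs += 1
            i, j = i - 1, j - 1
        elif i > 0 and d[i][j] == d[i - 1][j] + 1:
            dels += 1
            i -= 1
        else:
            ins += 1
            j -= 1
    return hits, subs, dels, ins


def asr_scores(ref: List[str], hyp: List[str]) -> Dict[str, float]:
    hits, subs, dels, ins = align_counts(ref, hyp)
    p = hits / len(hyp) if hyp else 0.0
    r = hits / len(ref) if ref else 0.0
    f = 2 * p * r / (p + r) if p + r else 0.0
    wer = (subs + dels + ins) / len(ref) if ref else 0.0
    return {"precision": p, "recall": r, "f_score": f, "wer": wer}


ref = "steel one nine this is gator niner one adjust fire over".split()
hyp = "steel one nine this is gator one niner one adjust fire over".split()
print({k: round(v, 3) for k, v in asr_scores(ref, hyp).items()})
```

The single inserted "one" is the only error, so precision drops below 1 while recall stays at 1.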

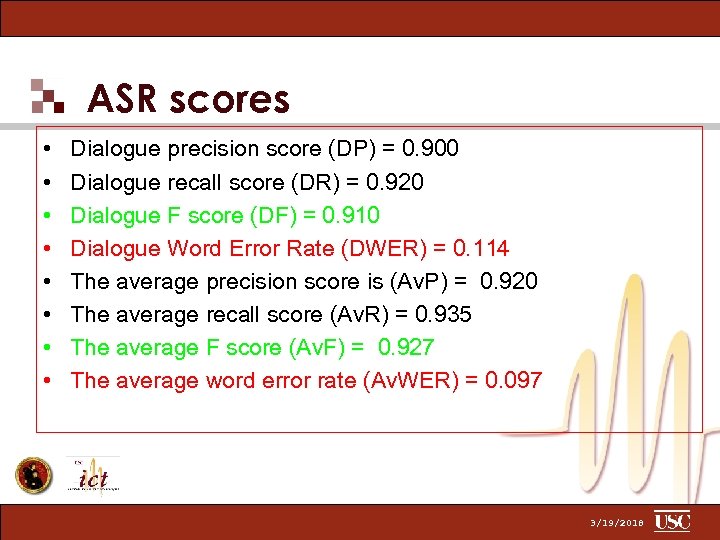

ASR scores
• Dialogue precision (DP) = 0.900
• Dialogue recall (DR) = 0.920
• Dialogue F-score (DF) = 0.910
• Dialogue word error rate (DWER) = 0.114
• Average precision (AvP) = 0.920
• Average recall (AvR) = 0.935
• Average F-score (AvF) = 0.927
• Average word error rate (AvWER) = 0.097
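The slides do not define how the dialogue-level (D) and average (Av) scores differ. One common convention, assumed here, pools counts over all utterances in a dialogue for the D scores and averages per-utterance scores for the Av scores. Under that assumption the two diverge because every utterance counts equally in the average, whatever its length. The counts below are toy values, not project data, and the function name is hypothetical.

```python
from typing import List, Tuple


def pooled_and_averaged_precision(utterances: List[Tuple[int, int]]) -> Tuple[float, float]:
    """Each item is (correct words, recognized words) for one utterance.

    Returns (pooled, averaged) precision. Utterances with no recognized
    words are skipped in the average to avoid dividing by zero.
    """
    total_correct = sum(c for c, _ in utterances)
    total_recognized = sum(r for _, r in utterances)
    pooled = total_correct / total_recognized if total_recognized else 0.0
    per_utt = [c / r for c, r in utterances if r > 0]
    averaged = sum(per_utt) / len(per_utt) if per_utt else 0.0
    return pooled, averaged


# Toy dialogue: one long, mostly correct utterance and one short, half-wrong one.
print(pooled_and_averaged_precision([(11, 12), (1, 2)]))
```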

Interpreter and ASR + Interpreter evaluation
• Interpreter evaluation: interpreter results on perfect input compared to human coding
• ASR + Interpreter evaluation: interpreter coding of ASR output compared to human coding

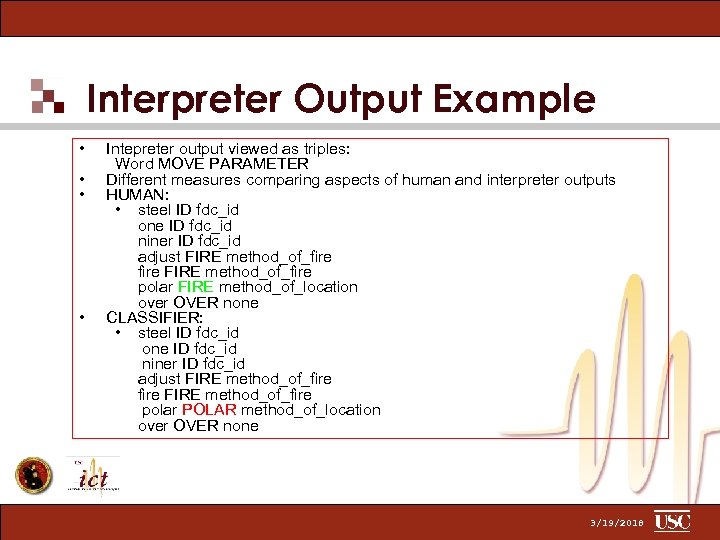

Interpreter Output Example
• Interpreter output viewed as triples: WORD MOVE PARAMETER
• Different measures compare different aspects of the human and interpreter outputs

| Word | Human move | Human parameter | Classifier move | Classifier parameter |
|---|---|---|---|---|
| steel | ID | fdc_id | ID | fdc_id |
| one | ID | fdc_id | ID | fdc_id |
| niner | ID | fdc_id | ID | fdc_id |
| adjust | FIRE | method_of_fire | FIRE | method_of_fire |
| polar | FIRE | method_of_location | POLAR | method_of_location |
| over | OVER | none | OVER | none |
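Viewing both outputs as aligned lists of (word, move, parameter) triples makes such measures simple to compute. This sketch assumes both outputs cover the same words in the same order (true for interpreter-only evaluation on transcribed input, not for raw ASR output); the measure names and `compare_triples` are illustrative, not the project's.

```python
from typing import Dict, List, Tuple

Triple = Tuple[str, str, str]  # (word, move, parameter)


def compare_triples(human: List[Triple], system: List[Triple]) -> Dict[str, float]:
    """Token-by-token agreement on moves, parameters, and whole triples."""
    if len(human) != len(system):
        raise ValueError("outputs must cover the same words")
    n = len(human)
    if n == 0:
        return {"move": 0.0, "parameter": 0.0, "triple": 0.0}
    move = sum(h[1] == s[1] for h, s in zip(human, system))
    param = sum(h[2] == s[2] for h, s in zip(human, system))
    triple = sum(h == s for h, s in zip(human, system))
    return {"move": move / n, "parameter": param / n, "triple": triple / n}


human = [("steel", "ID", "fdc_id"), ("one", "ID", "fdc_id"), ("niner", "ID", "fdc_id"),
         ("adjust", "FIRE", "method_of_fire"), ("polar", "FIRE", "method_of_location"),
         ("over", "OVER", "none")]
# Same as the human coding except for the move on "polar".
classifier = human[:4] + [("polar", "POLAR", "method_of_location")] + human[5:]
print(compare_triples(human, classifier))
```

On the example, the parameter view scores the classifier as perfect while the move and whole-triple views each count the "polar" error, which is why several measures are reported.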

Interpreter vs ASR+Interpreter

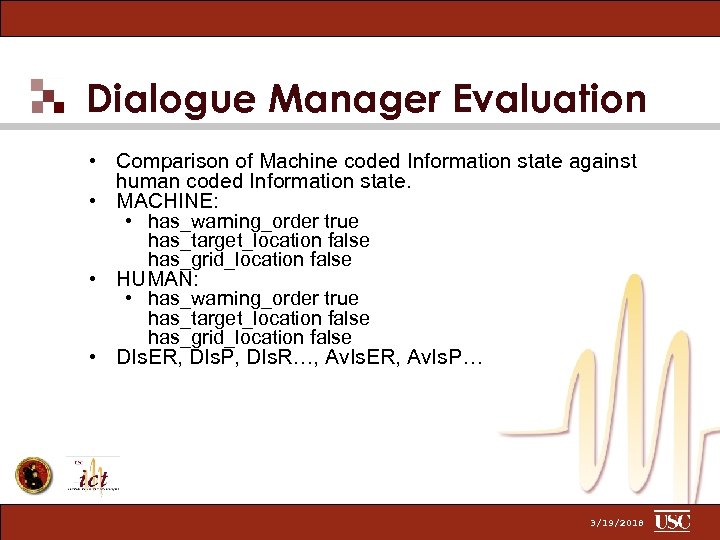

Dialogue Manager Evaluation
• Comparison of the machine-coded information state against the human-coded information state
• MACHINE: has_warning_order true, has_target_location false, has_grid_location false
• HUMAN: has_warning_order true, has_target_location false, has_grid_location false
• Measures: DIsER, DIsP, DIsR…, AvIsER, AvIsP…
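A slot-by-slot comparison of the two information states is one plausible way to obtain an error rate like DIsER. The sketch assumes each state is a flat mapping from slot name to value and that the error rate is mismatched slots over all slots; the slides do not give the formula, and `state_error_rate` is a hypothetical name.

```python
from typing import Dict


def state_error_rate(machine: Dict[str, bool], human: Dict[str, bool]) -> float:
    """Fraction of information-state slots on which machine and human coding disagree."""
    slots = set(machine) | set(human)
    if not slots:
        return 0.0
    # A slot present on only one side counts as a disagreement.
    errors = sum(machine.get(s) != human.get(s) for s in slots)
    return errors / len(slots)


machine = {"has_warning_order": True, "has_target_location": False, "has_grid_location": False}
human = dict(machine)
print(state_error_rate(machine, human))  # prints: 0.0
```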

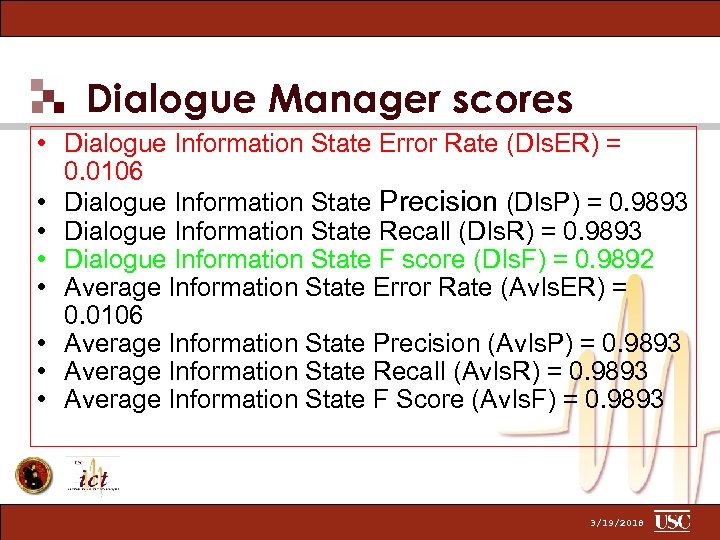

Dialogue Manager scores
• Dialogue Information State Error Rate (DIsER) = 0.0106
• Dialogue Information State Precision (DIsP) = 0.9893
• Dialogue Information State Recall (DIsR) = 0.9893
• Dialogue Information State F-score (DIsF) = 0.9892
• Average Information State Error Rate (AvIsER) = 0.0106
• Average Information State Precision (AvIsP) = 0.9893
• Average Information State Recall (AvIsR) = 0.9893
• Average Information State F-score (AvIsF) = 0.9893

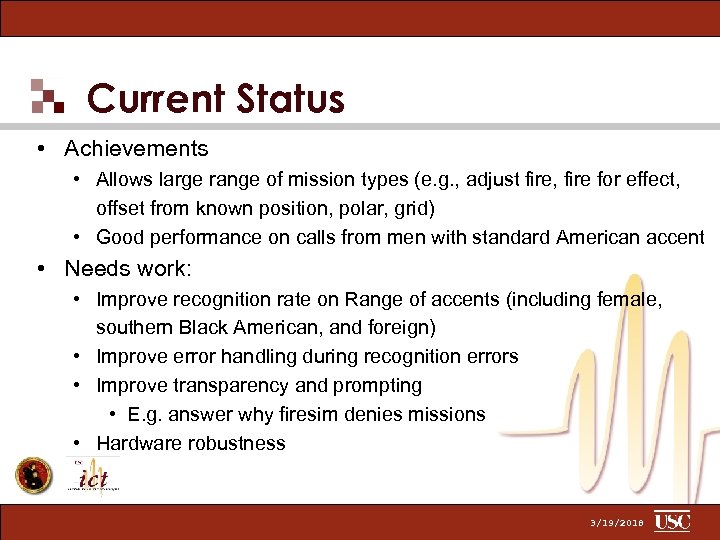

Current Status
• Achievements
  – Allows a large range of mission types (e.g., adjust fire, fire for effect, offset from known position, polar, grid)
  – Good performance on calls from men with a standard American accent
• Needs work
  – Improve the recognition rate across a range of speakers and accents (including female, southern Black American, and foreign speakers)
  – Improve error handling when recognition errors occur
  – Improve transparency and prompting, e.g., explain why Firesim denies missions
  – Hardware robustness

Next Steps
1. Improving UTM radiobots to performance-level capability
   • Suitable for use in regular training
   • Improved error handling and feedback
   • Multiple synchronous missions
   • Better performance on a wider range of speakers
   • Multiple use cases (trainer aids, AAR aids)
2. Adaptation to other CFF domains & platforms
   • Other parts of JFETS
   • Laptop trainer
   • Mobile/field use

Radiobot Plans: Long-term
• Produce useful automation of radio communication
  – Off-load tasks from operator controller
  – Standardize training
• Extension to other domains
  – E.g., 9-line, sitreps, fraternal unit communication
• Toolkits for non-expert radiobot construction for new domains
