- Number of slides: 44

Quality of a search engine

Paolo Ferragina, Dipartimento di Informatica, Università di Pisa

Reading 8

Is it good?

- How fast does it index?
  - Number of documents/hour
  - (Average document size)
- How fast does it search?
  - Latency as a function of index size
- Expressiveness of the query language

Measures for a search engine

- All of the preceding criteria are measurable
- The key measure: user happiness
  - …useless answers won’t make a user happy

Happiness: elusive to measure

- The most common approach is to measure the relevance of search results
  - How do we measure it?
- Requires 3 elements:
  1. A benchmark document collection
  2. A benchmark suite of queries
  3. A binary assessment of either Relevant or Irrelevant for each query-doc pair

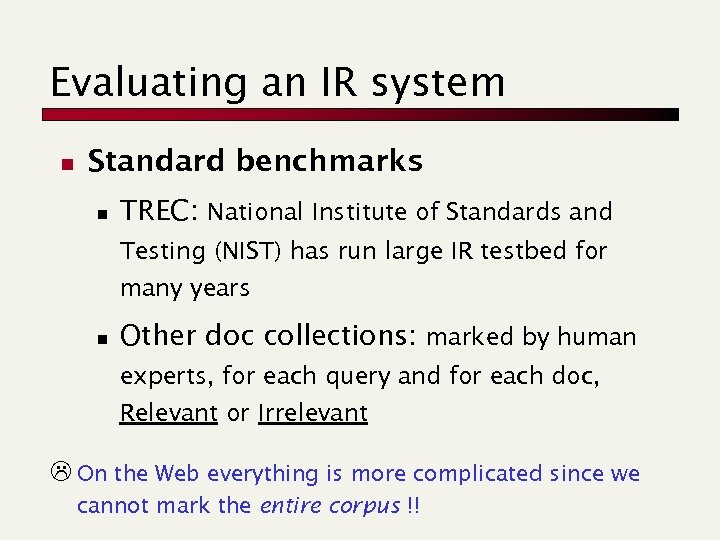

Evaluating an IR system

- Standard benchmarks
  - TREC: the National Institute of Standards and Technology (NIST) has run a large IR testbed for many years
  - Other doc collections: marked by human experts, for each query and for each doc, as Relevant or Irrelevant
- On the Web everything is more complicated, since we cannot mark the entire corpus!

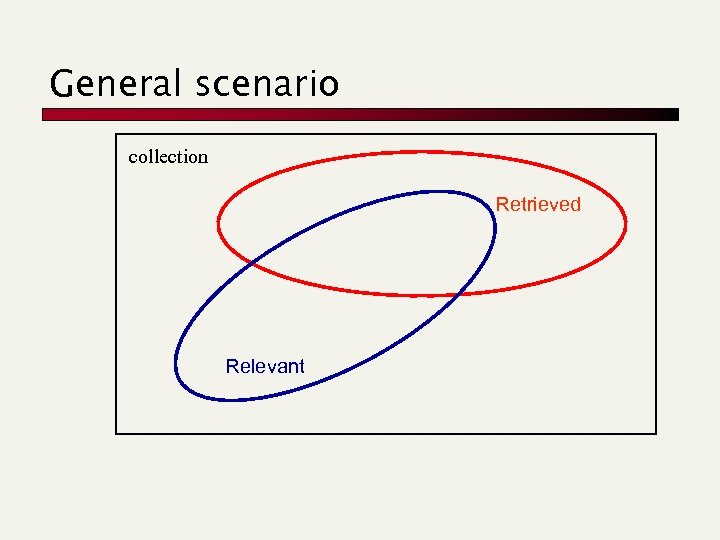

General scenario

[Figure labels: collection, Retrieved, Relevant]

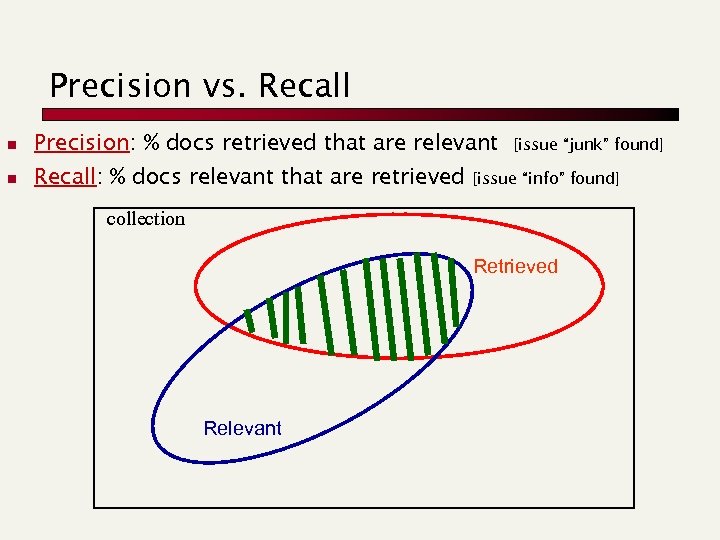

Precision vs. Recall

- Precision: % docs retrieved that are relevant [issue “junk” found]
- Recall: % docs relevant that are retrieved [issue “info” found]

[Figure labels: collection, Retrieved, Relevant]

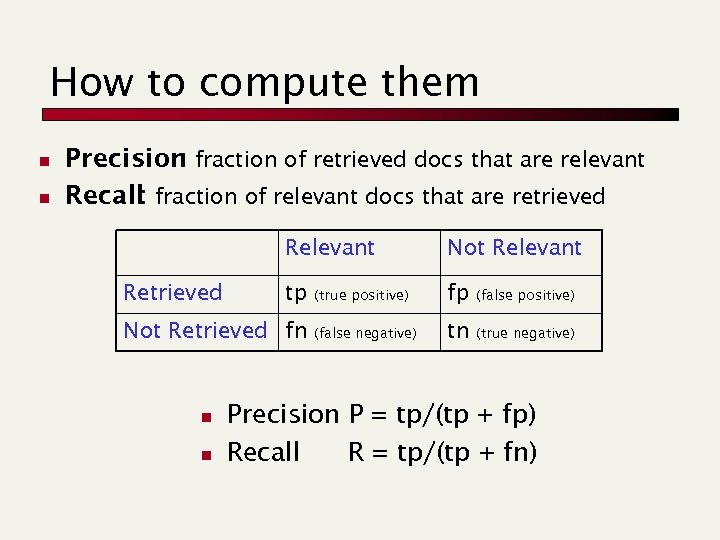

How to compute them

- Precision: fraction of retrieved docs that are relevant
- Recall: fraction of relevant docs that are retrieved

| | Relevant | Not Relevant |
|---|---|---|
| Retrieved | tp (true positive) | fp (false positive) |
| Not Retrieved | fn (false negative) | tn (true negative) |

- Precision P = tp/(tp + fp)
- Recall R = tp/(tp + fn)
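
The two formulas can be sketched in a few lines of Python. This is only an illustration: the function name `precision_recall` and the document ids are made up, and returning 0.0 for an empty set is a convention assumed here, not something the slide states.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for one query, given collections of doc ids."""
    retrieved, relevant = set(retrieved), set(relevant)
    tp = len(retrieved & relevant)   # relevant docs that were retrieved
    fp = len(retrieved - relevant)   # retrieved but not relevant ("junk")
    fn = len(relevant - retrieved)   # relevant but not retrieved
    # Assumed convention: report 0.0 when a denominator would be zero.
    precision = tp / (tp + fp) if retrieved else 0.0
    recall = tp / (tp + fn) if relevant else 0.0
    return precision, recall


# Hypothetical query: 4 docs retrieved, 5 docs judged relevant, 3 in common.
p, r = precision_recall({"d1", "d2", "d3", "d4"}, {"d1", "d2", "d3", "d7", "d9"})
print(p, r)
```

Note that tn never appears: neither measure depends on the (usually huge) number of irrelevant docs that were correctly left out.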

Some considerations

- Can get high recall (but low precision) by retrieving all docs for all queries!
- Recall is a non-decreasing function of the number of docs retrieved
- Precision usually decreases as more docs are retrieved

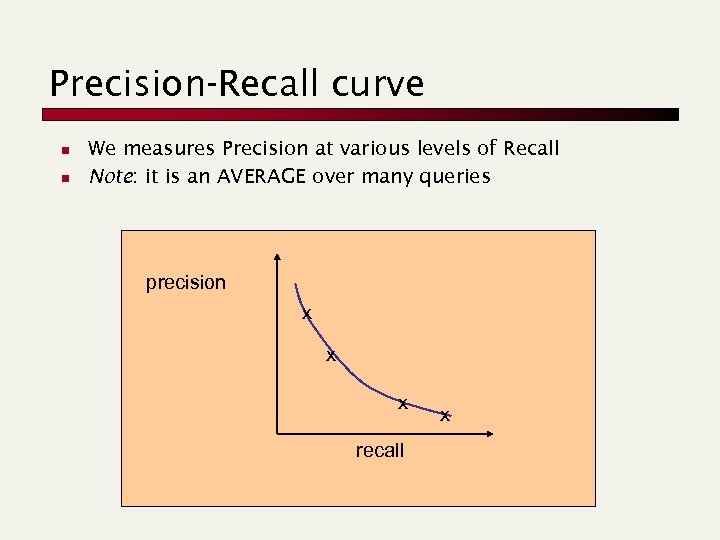

Precision-Recall curve

- We measure Precision at various levels of Recall
- Note: it is an AVERAGE over many queries

[Plot: precision vs. recall, with sample points marked x]
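
As a rough sketch of where the points of such a curve come from, the code below walks down one ranked result list and records (recall, precision) after each position. The ranking, the relevance judgments and the name `pr_points` are hypothetical, and the averaging over many queries mentioned on the slide is not shown.

```python
def pr_points(ranking, relevant):
    """Return (recall, precision) after each prefix of a ranked result list.

    Assumes `relevant` is non-empty.
    """
    relevant = set(relevant)
    points, hits = [], 0
    for k, doc in enumerate(ranking, start=1):
        if doc in relevant:
            hits += 1
        # Recall uses all relevant docs; precision uses the k docs seen so far.
        points.append((hits / len(relevant), hits / k))
    return points


# Hypothetical ranked answer list and relevance judgments for one query.
ranking = ["d3", "d8", "d1", "d5", "d2"]
relevant = {"d1", "d2", "d3"}
points = pr_points(ranking, relevant)
for recall, precision in points:
    print(f"recall={recall:.2f} precision={precision:.2f}")
```

Recall never goes down as the prefix grows, while precision drops each time an irrelevant doc is added.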

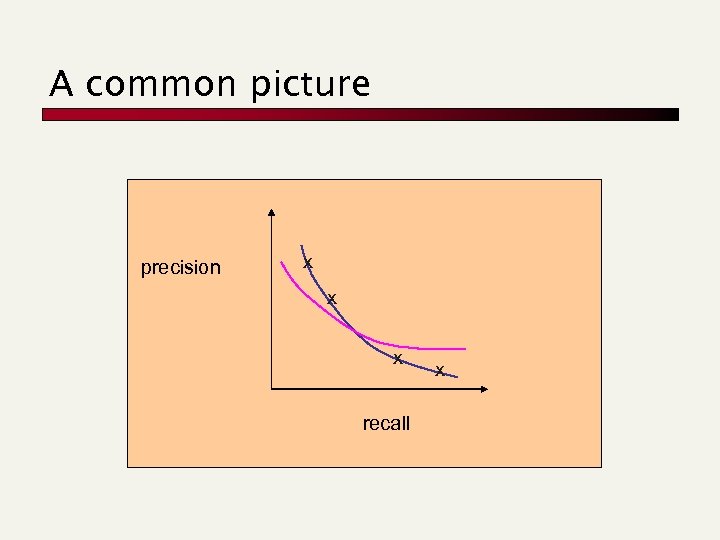

A common picture

[Plot: precision vs. recall, with sample points marked x]

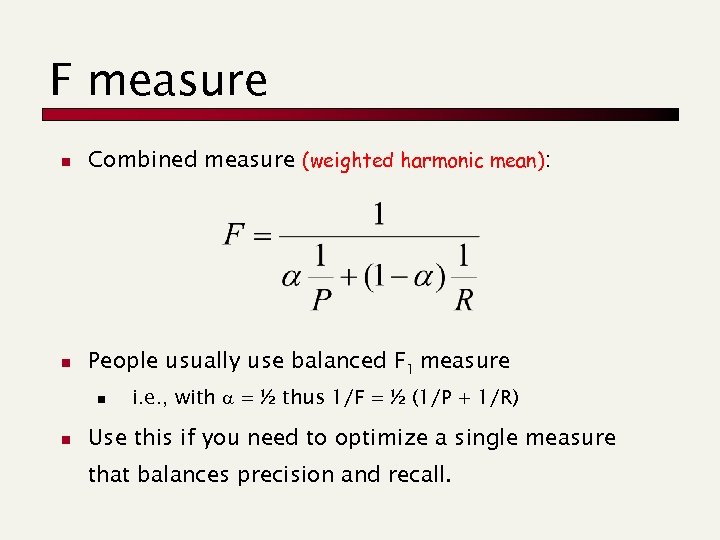

F measure

- Combined measure (weighted harmonic mean): 1/F = α (1/P) + (1 − α) (1/R)
- People usually use the balanced F1 measure
  - i.e., with α = ½, thus 1/F = ½ (1/P + 1/R)
- Use this if you need to optimize a single measure that balances precision and recall.
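
A minimal sketch of the weighted harmonic mean, writing `alpha` for the weight on 1/P (the balanced case on the slide is alpha = ½). The function name and the handling of zero precision or recall are assumptions made for this illustration.

```python
def f_measure(precision, recall, alpha=0.5):
    """Weighted harmonic mean of P and R: 1/F = alpha/P + (1 - alpha)/R."""
    if precision == 0 or recall == 0:
        return 0.0  # assumed convention: F is 0 when P or R is 0
    return 1.0 / (alpha / precision + (1.0 - alpha) / recall)


# Balanced F1 for hypothetical values P = 0.75 and R = 0.6.
f1 = f_measure(0.75, 0.6)
print(round(f1, 4))
```

With alpha = ½ this reduces to the familiar 2PR/(P + R); because it is a harmonic mean, F stays close to the smaller of P and R.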

Recommendation systems

Paolo Ferragina, Dipartimento di Informatica, Università di Pisa

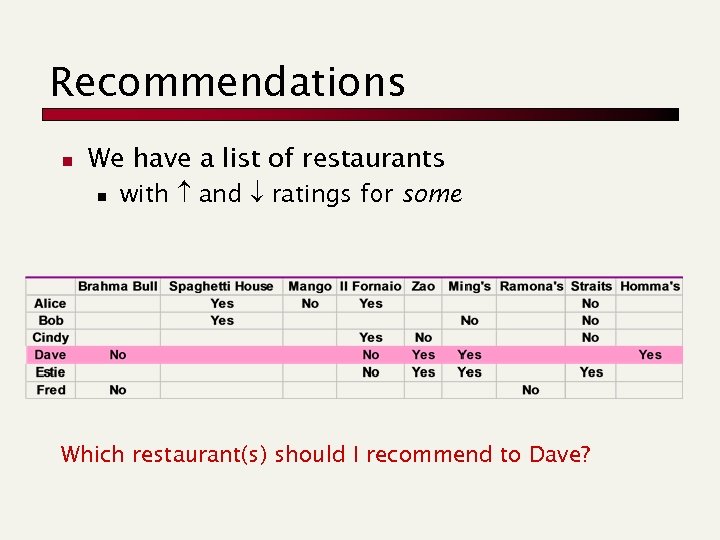

Recommendations

- We have a list of restaurants
  - with positive and negative ratings for some
- Which restaurant(s) should I recommend to Dave?

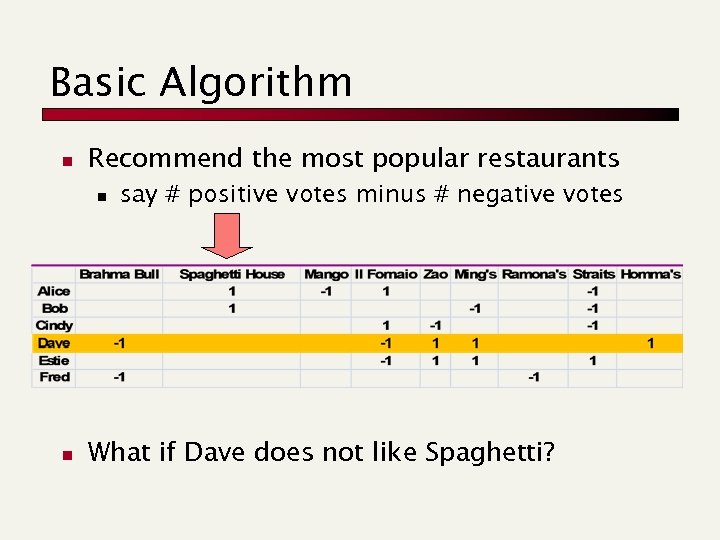

Basic Algorithm

- Recommend the most popular restaurants
  - say # positive votes minus # negative votes
- What if Dave does not like Spaghetti?
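
The popularity score can be sketched as follows. The votes are invented for illustration (+1 for a positive vote, -1 for a negative one); only the names Dave, Estie and Straits Cafe appear in the slides, while the other people and "Spaghetti House" are hypothetical.

```python
from collections import Counter


def most_popular(ratings, top_k=1):
    """Rank restaurants by (# positive votes - # negative votes)."""
    score = Counter()
    for votes in ratings.values():
        for restaurant, vote in votes.items():
            score[restaurant] += vote   # vote is +1 or -1
    return [name for name, _ in score.most_common(top_k)]


# Hypothetical votes.
ratings = {
    "Alice": {"Spaghetti House": 1},
    "Bob":   {"Spaghetti House": 1, "Straits Cafe": -1},
    "Carol": {"Spaghetti House": 1},
    "Estie": {"Spaghetti House": -1, "Straits Cafe": 1},
    "Dave":  {"Spaghetti House": -1},
}
print(most_popular(ratings))
```

The same answer is returned for every user, including Dave, who voted against it: the score ignores who is asking.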

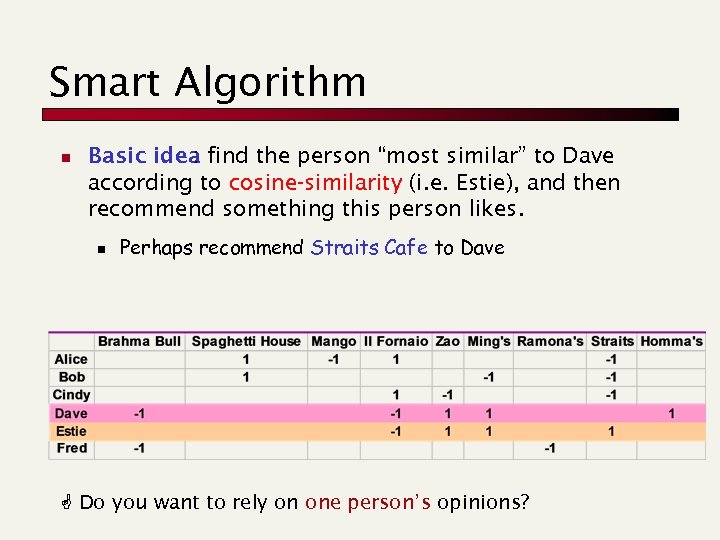

Smart Algorithm

- Basic idea: find the person “most similar” to Dave according to cosine-similarity (i.e. Estie), and then recommend something this person likes.
  - Perhaps recommend Straits Cafe to Dave
- Do you want to rely on one person’s opinions?
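
A small sketch of this nearest-neighbour idea, assuming each person is a sparse vector of +1/-1 votes. The votes are invented so that Estie comes out as the most similar user to Dave; "Spaghetti House", "Il Fornaio" and Alice are hypothetical, and the helper names `cosine` and `recommend` are not from the slides.

```python
import math


def cosine(u, v):
    """Cosine similarity of two sparse rating vectors (dict: item -> +1/-1)."""
    dot = sum(u[i] * v[i] for i in u.keys() & v.keys())
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def recommend(ratings, target):
    """Return the user most similar to `target` and what they like that is new."""
    others = [name for name in ratings if name != target]
    nearest = max(others, key=lambda name: cosine(ratings[target], ratings[name]))
    liked = [item for item, vote in ratings[nearest].items()
             if vote > 0 and item not in ratings[target]]
    return nearest, liked


# Hypothetical votes.
ratings = {
    "Alice": {"Spaghetti House": 1, "Il Fornaio": 1, "Straits Cafe": -1},
    "Estie": {"Spaghetti House": -1, "Il Fornaio": 1, "Straits Cafe": 1},
    "Dave":  {"Spaghetti House": -1, "Il Fornaio": 1},
}
print(recommend(ratings, "Dave"))
```

Relying on a single neighbour is exactly the weakness the slide points out; a common variant (not shown) weights the votes of several similar users.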

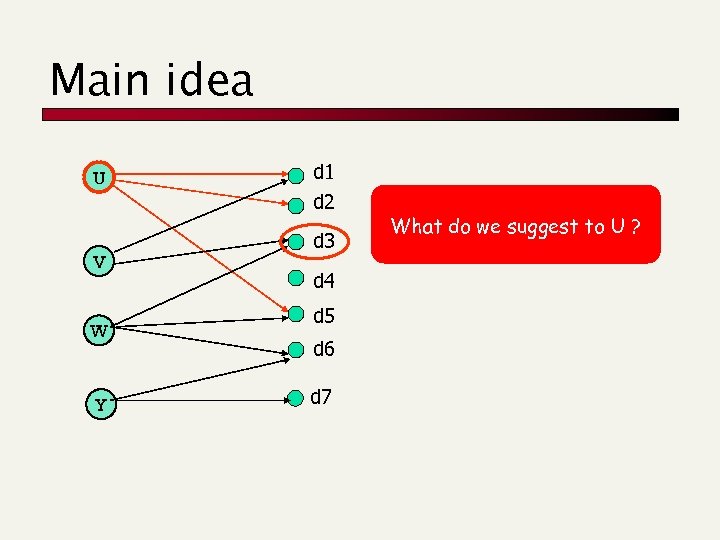

Main idea

[Figure labels: U, V, W, Y; d1, d2, d3, d4, d5, d6, d7]

What do we suggest to U?

A glimpse on XML retrieval (eXtensible Markup Language)

Paolo Ferragina, Dipartimento di Informatica, Università di Pisa

Reading 10

XML vs HTML

- HTML is a markup language for a specific purpose (display in browsers)
- XML is a framework for defining markup languages
- HTML has fixed markup tags, XML does not
- HTML can be formalized as an XML language (XHTML)

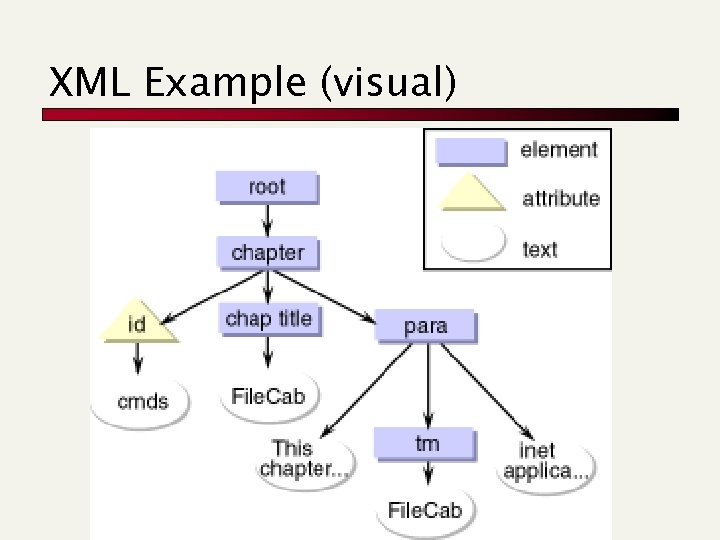

XML Example (visual)

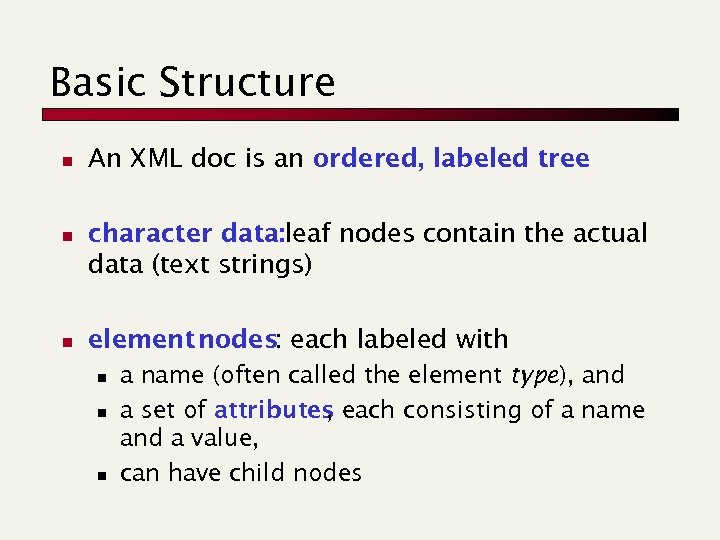

Basic Structure

- An XML doc is an ordered, labeled tree
- character data: leaf nodes contain the actual data (text strings)
- element nodes: each labeled with
  - a name (often called the element type), and
  - a set of attributes, each consisting of a name and a value,
  - can have child nodes
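
To make the tree view concrete, here is a sketch that parses a tiny, made-up document with Python's standard `xml.etree.ElementTree` module and prints each element node with its name, attributes and text. The document content and the helper `walk` are assumptions for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical document: element nodes with attributes, text in the leaves.
doc = """<play id="p1">
  <title>Hamlet</title>
  <act number="1">
    <scene number="1"><verse>Who's there?</verse></scene>
  </act>
</play>"""


def walk(elem, depth=0):
    """Return one line per element node: name, attributes, and its text."""
    text = (elem.text or "").strip()
    lines = [f"{'  ' * depth}{elem.tag} {elem.attrib} {text!r}"]
    for child in elem:            # children are visited in document order
        lines.extend(walk(child, depth + 1))
    return lines


root = ET.fromstring(doc)
lines = walk(root)
print("\n".join(lines))
```

ElementTree stores character data as the `text` of the enclosing element rather than as separate leaf nodes, so the leaves of the slide's tree show up here as the text printed next to each element.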

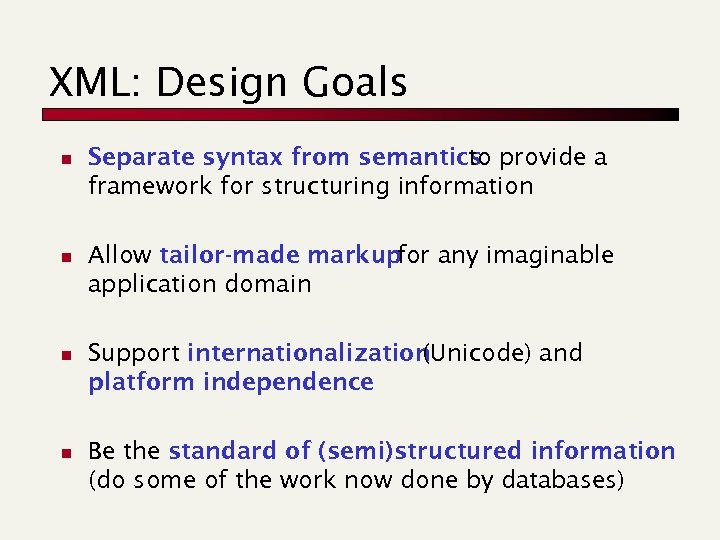

XML: Design Goals

- Separate syntax from semantics to provide a framework for structuring information
- Allow tailor-made markup for any imaginable application domain
- Support internationalization (Unicode) and platform independence
- Be the standard for (semi)structured information (do some of the work now done by databases)

Why Use XML?

- Represent semi-structured data
- XML is more flexible than DBs
- XML is more structured than simple IR
- You get a massive infrastructure for free

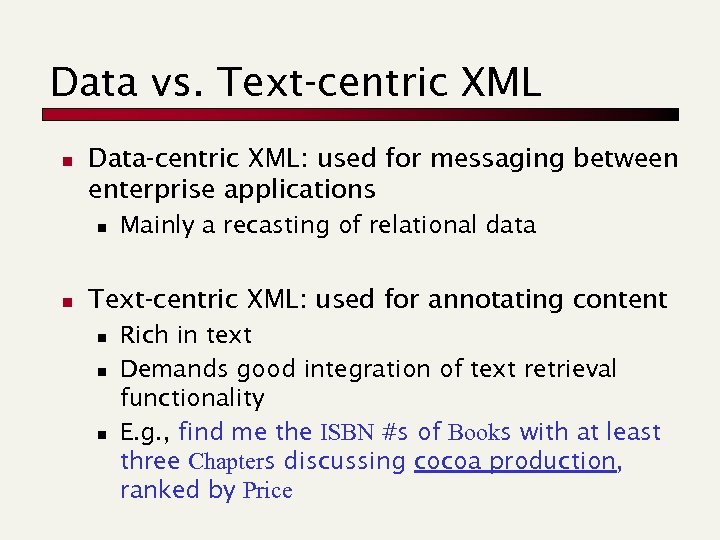

Data vs. Text-centric XML

- Data-centric XML: used for messaging between enterprise applications
  - Mainly a recasting of relational data
- Text-centric XML: used for annotating content
  - Rich in text
  - Demands good integration of text retrieval functionality
  - E.g., find me the ISBN #s of Books with at least three Chapters discussing cocoa production, ranked by Price

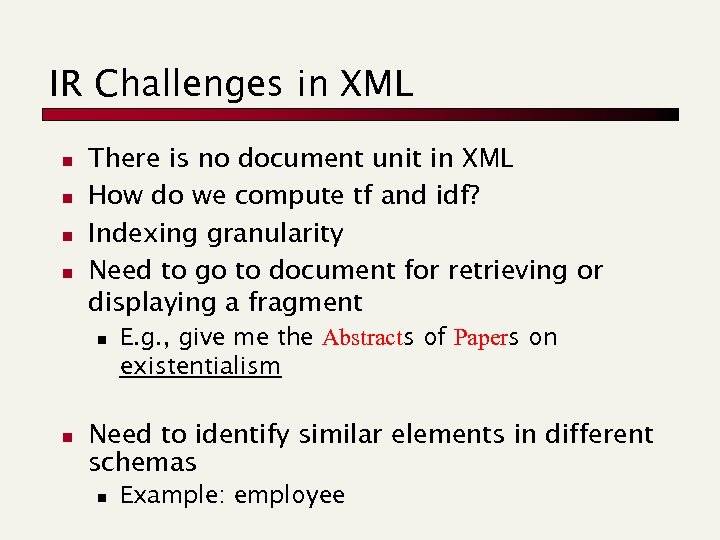

IR Challenges in XML

- There is no document unit in XML
- How do we compute tf and idf?
- Indexing granularity
- Need to go to document for retrieving or displaying a fragment
  - E.g., give me the Abstracts of Papers on existentialism
- Need to identify similar elements in different schemas
  - Example: employee

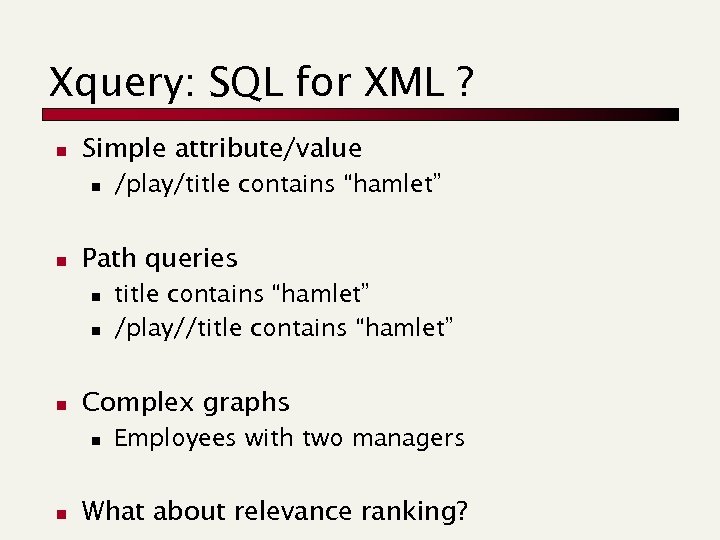

XQuery: SQL for XML?

- Simple attribute/value
  - /play/title contains “hamlet”
- Path queries
  - title contains “hamlet”
  - /play//title contains “hamlet”
- Complex graphs
  - Employees with two managers
- What about relevance ranking?
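
The difference between the child path and the descendant path can be tried out with the limited XPath subset supported by Python's standard `xml.etree.ElementTree`. This is not XQuery, only an approximation of the two path shapes; the sample document and the helper `contains` are made up.

```python
import xml.etree.ElementTree as ET

# Hypothetical document with a title at two different depths.
doc = """<play>
  <title>Hamlet</title>
  <act><scene><title>Elsinore. A platform before the castle.</title></scene></act>
</play>"""
root = ET.fromstring(doc)  # root is the <play> element


def contains(elements, word):
    """Return the text of the elements whose text contains `word` (case-insensitive)."""
    return [e.text for e in elements if word.lower() in (e.text or "").lower()]


child_titles = root.findall("./title")   # roughly /play/title: direct children only
any_titles = root.findall(".//title")    # roughly /play//title: any depth below play

direct = contains(child_titles, "hamlet")
anywhere = contains(any_titles, "castle")
print(direct, anywhere)
```

Both calls are Boolean filters: an element either matches or it does not, which is why the slide ends by asking about relevance ranking.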

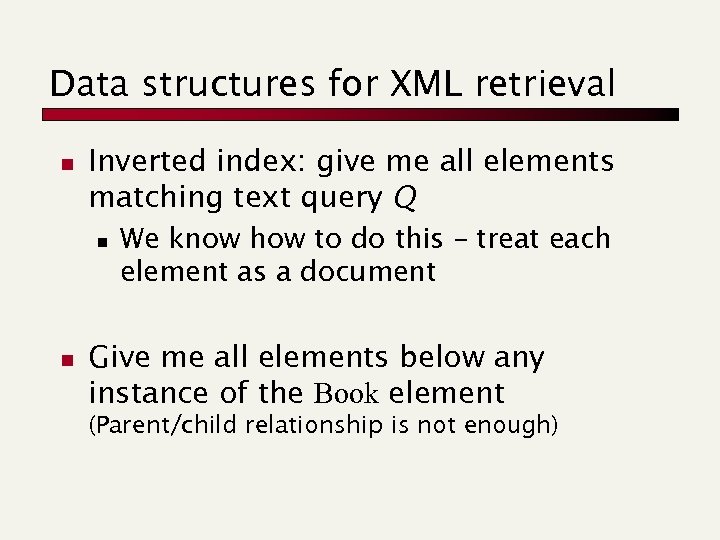

Data structures for XML retrieval

- Inverted index: give me all elements matching text query Q
  - We know how to do this: treat each element as a document
- Give me all elements below any instance of the Book element
- (Parent/child relationship is not enough)

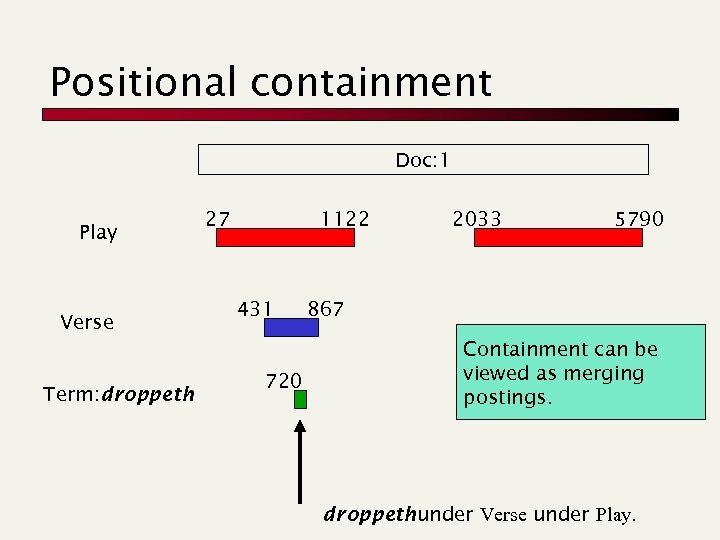

Positional containment

[Figure: postings for Doc: 1 with positions for Play, Verse and Term: droppeth; values shown: 27, 1122, 431, 720, 2033, 5790, 867]

- Containment can be viewed as merging postings.
- droppeth under Verse under Play.
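
One way to picture "containment as merging postings" is a linear merge over sorted position lists. In the sketch below the grouping of the figure's numbers into (start, end) spans is an assumption made for illustration (Play spans 27..1122 and 2033..5790, a Verse span 431..867, droppeth at position 720); the flattened figure does not confirm it, and the function names are hypothetical.

```python
def spans_within(inner, outer):
    """Keep the inner (start, end) spans nested inside some outer span.

    Both lists must be sorted by start; spans in `outer` must not overlap.
    """
    result, i = [], 0
    for start, end in inner:
        while i < len(outer) and outer[i][1] < start:
            i += 1                      # this outer span ends too early
        if i < len(outer) and outer[i][0] < start and end < outer[i][1]:
            result.append((start, end))
    return result


def positions_within(positions, spans):
    """Keep the term positions that fall inside some span (same merge idea)."""
    result, i = [], 0
    for pos in positions:
        while i < len(spans) and spans[i][1] < pos:
            i += 1
        if i < len(spans) and spans[i][0] < pos:
            result.append(pos)
    return result


# Assumed reading of the figure's numbers for Doc 1.
play_spans = [(27, 1122), (2033, 5790)]
verse_spans = [(431, 867)]
droppeth = [720]

verses_in_play = spans_within(verse_spans, play_spans)
hits = positions_within(droppeth, verses_in_play)
print(verses_in_play, hits)
```

Each step is a single pass over two sorted lists, the same pattern as intersecting ordinary postings lists.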

Summary of data structures

- Path containment etc. can essentially be solved by positional inverted indexes
- Retrieval consists of “merging” postings
- All the compression tricks are still applicable
- Complications arise from insertion/deletion of elements, text within elements
  - Beyond the scope of this course

Search Engines Advertising

Classic approach…

- Socio-demographic
- Geographic
- Contextual

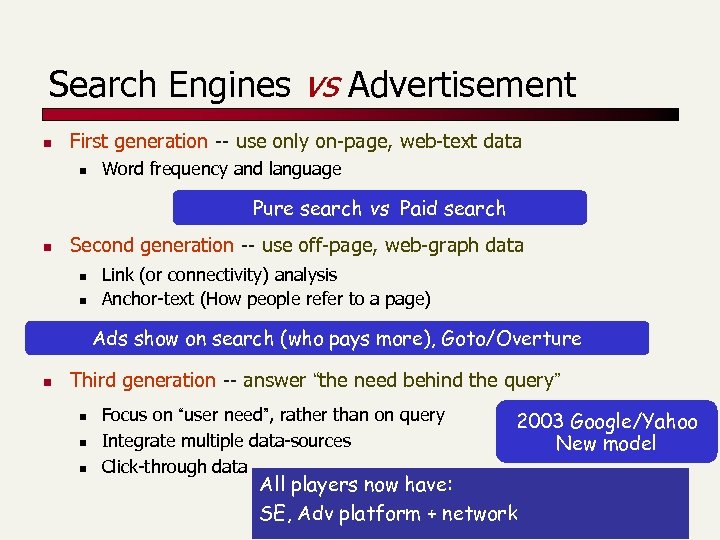

Search Engines vs Advertisement

- First generation: use only on-page, web-text data
  - Word frequency and language
  - (Ads) Pure search vs Paid search
- Second generation: use off-page, web-graph data
  - Link (or connectivity) analysis
  - Anchor-text (How people refer to a page)
  - (Ads) Ads shown on search (who pays more), Goto/Overture
- Third generation: answer “the need behind the query”
  - Focus on “user need”, rather than on query
  - Integrate multiple data-sources
  - Click-through data
  - (Ads) 2003, Google/Yahoo: new model
- All players now have: SE, Adv platform + network

The new scenario

- SEs make possible
  - aggregation of interests
  - unlimited selection (Amazon, Netflix, ...)
- Incentives for specialized niche players
- The biggest money is in the smallest sales!!

Two new approaches

- Sponsored search: Ads driven by search keywords (and by the profile of the user issuing them)
  - AdWords

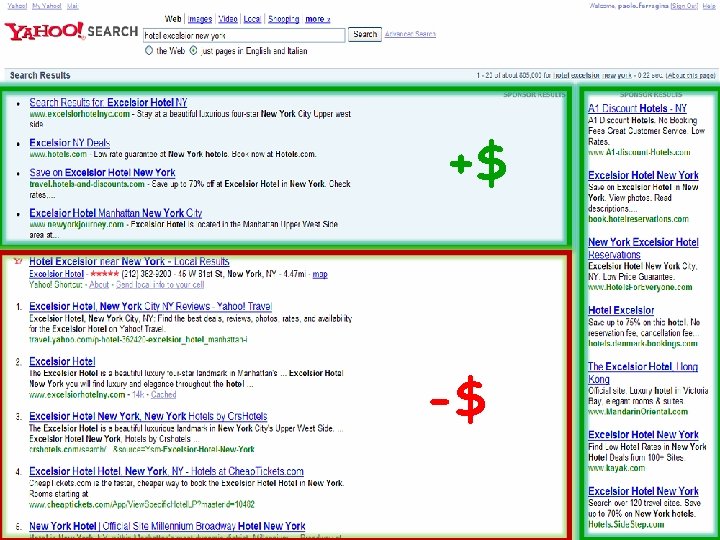

[Figure labels: +$, -$]

Two new approaches

- Sponsored search: Ads driven by search keywords (and by the profile of the user issuing them)
  - AdWords
- Context match: Ads driven by the content of a web page (and by the profile of the user reaching that page)
  - AdSense

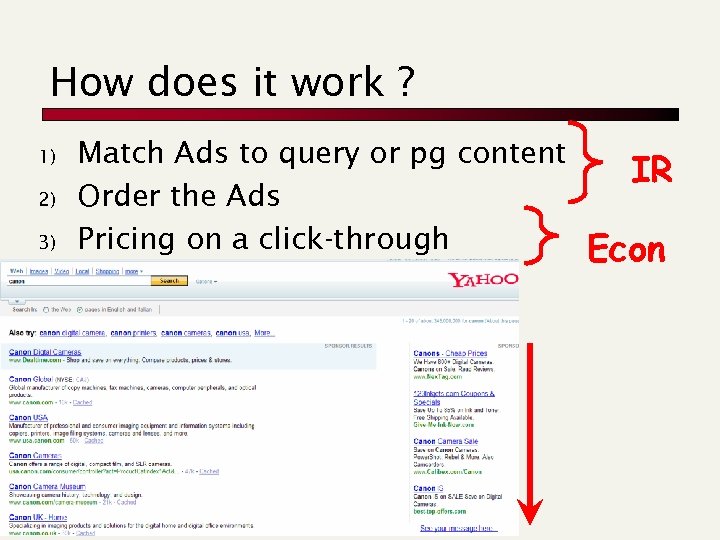

How does it work?

1. Match Ads to query or page content
2. Order the Ads
3. Pricing on a click-through

[Side labels: IR, Econ]

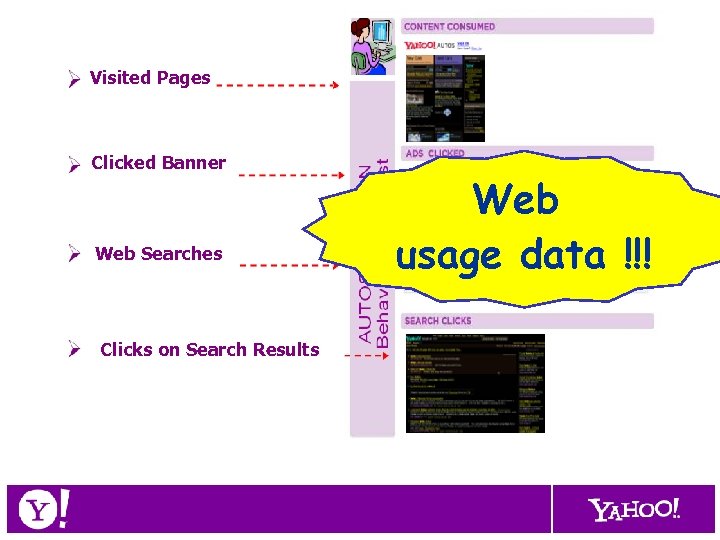

- Visited Pages
- Clicked Banner
- Web Searches
- Clicks on Search Results

Web usage data!!!

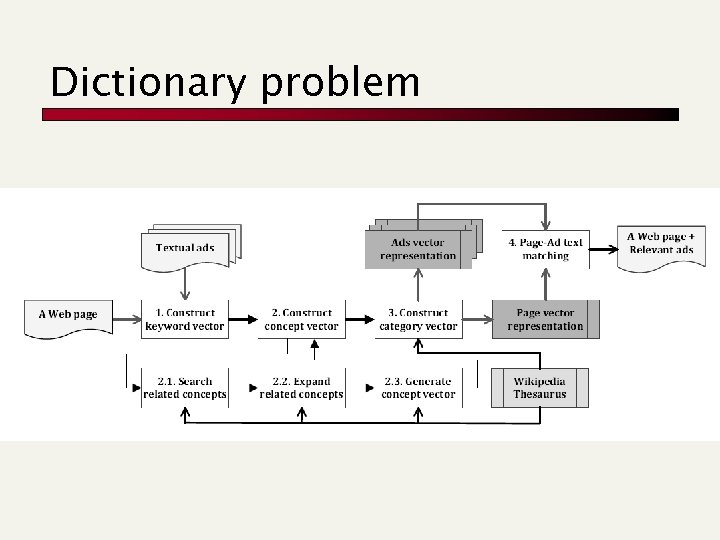

Dictionary problem

A new game

- Similar to web searching, but: Ad-DB is smaller, Ad-items are small pages, ranking depends on clicks
- For advertisers:
  - What words to buy, how much to pay
  - SPAM is an economic activity
- For search engine owners:
  - How to price the words
  - Find the right Ad
  - Keyword suggestion, geo-coding, business control, language restriction, proper Ad display
