b03da9497462b97f49b645d2552bed8c.ppt

- Количество слайдов: 147

PS 1 PSPS Object Data Manager Design PSPS Critical Design Review November 5 -6, 2007 If. A

Outline § § § § § slide 2 ODM Overview Critical Requirements Driving Design Work Completed Detailed Design Spatial Querying [AS] ODM Prototype [MN] Hardware/Scalability [JV] How Design Meets Requirements WBS and Schedule Issues/Risks [AS] = Alex, [MN] = Maria, [JV] = Jan

ODM Overview The Object Data Manager will: § Provide a scalable data archive for the Pan. STARRS data products § Provide query access to the data for Pan-STARRS users § Provide detailed usage tracking and logging slide 3

ODM Driving Requirements § Total size 100 TB, • 1. 5 x 1011 P 2 detections • 8. 3 x 1010 P 2 cumulative-sky (stack) detections • 5. 5 x 109 celestial objects § Nominal daily rate (divide by 3. 5 x 365) • P 2 detections: 120 Million/day • Stack detections: 65 Million/day • Objects: 4. 3 Million/day § Cross-Match requirement: 120 Million / 12 hrs ~ 2800 / s § DB size requirement: • 25 TB / yr • ~100 TB by of PS 1 (3. 5 yrs) slide 4

Work completed so far § Built a prototype § Scoped and built prototype hardware § Generated simulated data • 300 M SDSS DR 5 objects, 1. 5 B Galactic plane objects § Initial Load done – Created 15 TB DB of simulated data • Largest astronomical DB in existence today § Partitioned the data correctly using Zones algorithm § Able to run simple queries on distributed DB § Demonstrated critical steps of incremental loading § It is fast enough • Cross-match > 60 k detections/sec • Required rate is ~3 k/sec slide 5

Detailed Design § § Reuse SDSS software as much as possible Data Transformation Layer (DX) – Interface to IPP Data Loading Pipeline (DLP) Data Storage (DS) • Schema and Test Queries • Database Management System • Scalable Data Architecture • Hardware § Query Manager (QM: Cas. Jobs for prototype) slide 6

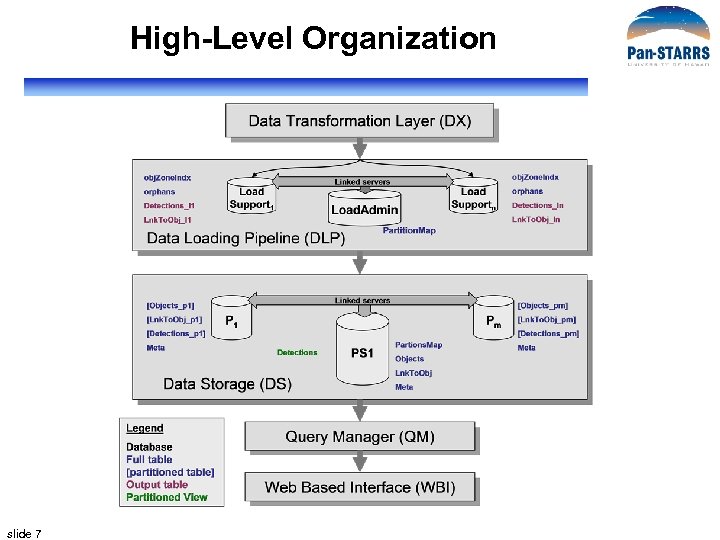

High-Level Organization slide 7

Detailed Design § § Reuse SDSS software as much as possible Data Transformation Layer (DX) – Interface to IPP Data Loading Pipeline (DLP) Data Storage (DS) • Schema and Test Queries • Database Management System • Scalable Data Architecture • Hardware § Query Manager (QM: Cas. Jobs for prototype) slide 8

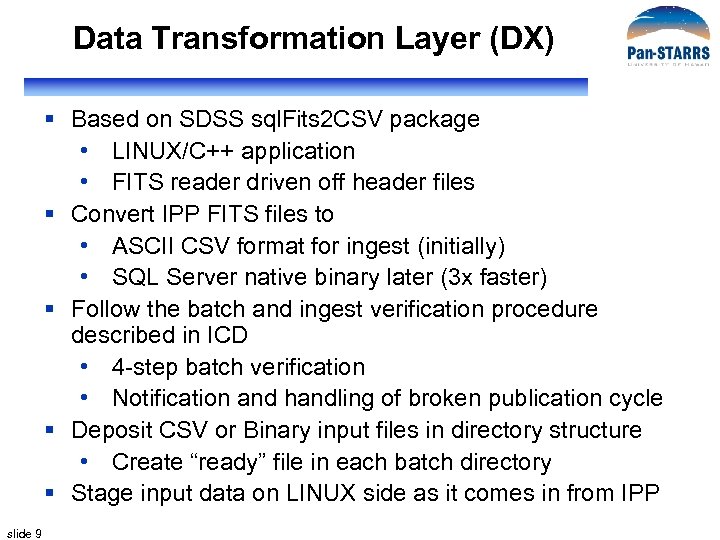

Data Transformation Layer (DX) § Based on SDSS sql. Fits 2 CSV package • LINUX/C++ application • FITS reader driven off header files § Convert IPP FITS files to • ASCII CSV format for ingest (initially) • SQL Server native binary later (3 x faster) § Follow the batch and ingest verification procedure described in ICD • 4 -step batch verification • Notification and handling of broken publication cycle § Deposit CSV or Binary input files in directory structure • Create “ready” file in each batch directory § Stage input data on LINUX side as it comes in from IPP slide 9

DX Subtasks DX Initialization Job Batch Verification Batch Conversion FITS schema FITS reader CSV Converter CSV Writer slide 10 Batch Ingest Interface with IPP Naming convention Uncompress batch Read batch Verify Batch Verify Manifest Verify FITS Integrity Verify FITS Content Verify FITS Data Handle Broken Cycle CSV Converter Binary Converter “batch_ready” Interface with DLP

DX-DLP Interface § Directory structure on staging FS (LINUX): • Separate directory for each Job. ID_Batch. ID • Contains a “batch_ready” manifest file – Name, #rows and destination table of each file • Contains one file per destination table in ODM – Objects, Detections, other tables § Creation of “batch_ready” file is signal to loader to ingest the batch § Batch size and frequency of ingest cycle TBD slide 11

Detailed Design § § Reuse SDSS software as much as possible Data Transformation Layer (DX) – Interface to IPP Data Loading Pipeline (DLP) Data Storage (DS) • Schema and Test Queries • Database Management System • Scalable Data Architecture • Hardware § Query Manager (QM: Cas. Jobs for prototype) slide 12

Data Loading Pipeline (DLP) § sql. Loader – SDSS data loading pipeline • Pseudo-automated workflow system • Loads, validates and publishes data – From CSV to SQL tables • Maintains a log of every step of loading • Managed from Load Monitor Web interface § Has been used to load every SDSS data release • EDR, DR 1 -6, ~ 15 TB of data altogether • Most of it (since DR 2) loaded incrementally • Kept many data errors from getting into database – Duplicate Obj. IDs (symptom of other problems) – Data corruption (CSV format invaluable in catching this) slide 13

sql. Loader Design § Existing functionality • Shown for SDSS version • Workflow, distributed loading, Load Monitor § New functionality • Schema changes • Workflow changes • Incremental loading – Cross-match and partitioning slide 14

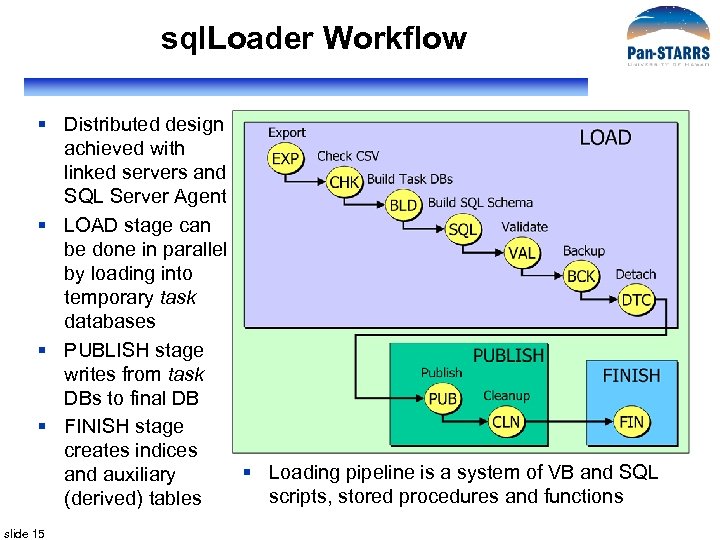

sql. Loader Workflow § Distributed design achieved with linked servers and SQL Server Agent § LOAD stage can be done in parallel by loading into temporary task databases § PUBLISH stage writes from task DBs to final DB § FINISH stage creates indices § Loading pipeline is a system of VB and SQL and auxiliary scripts, stored procedures and functions (derived) tables slide 15

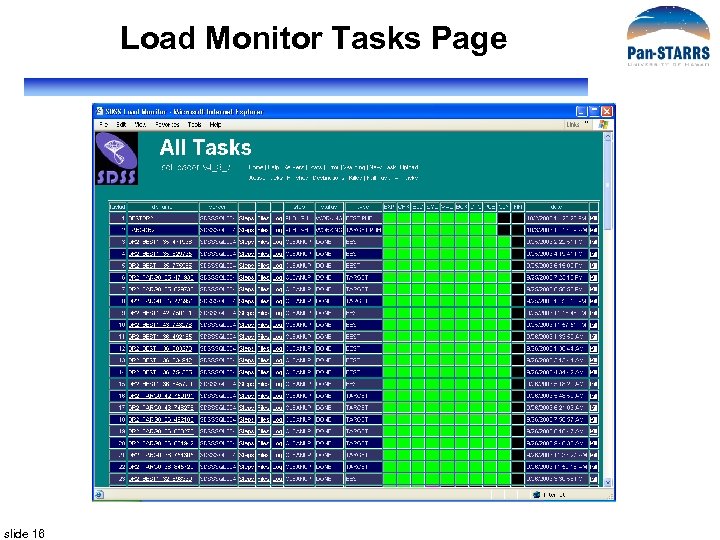

Load Monitor Tasks Page slide 16

Load Monitor Active Tasks slide 17

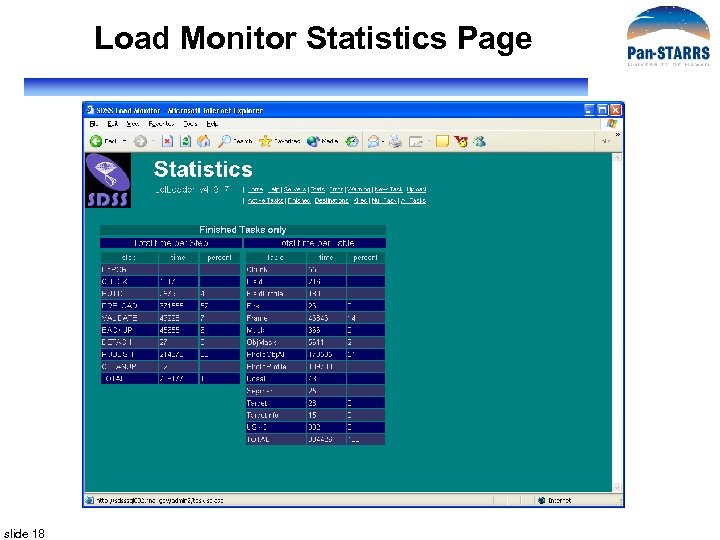

Load Monitor Statistics Page slide 18

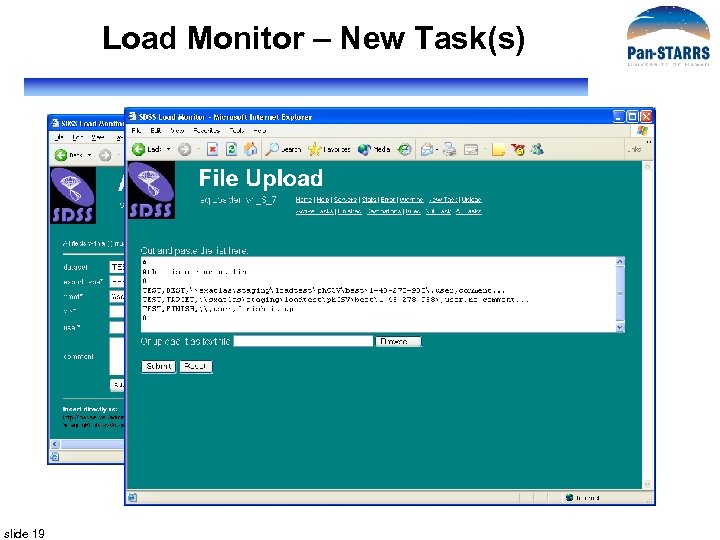

Load Monitor – New Task(s) slide 19

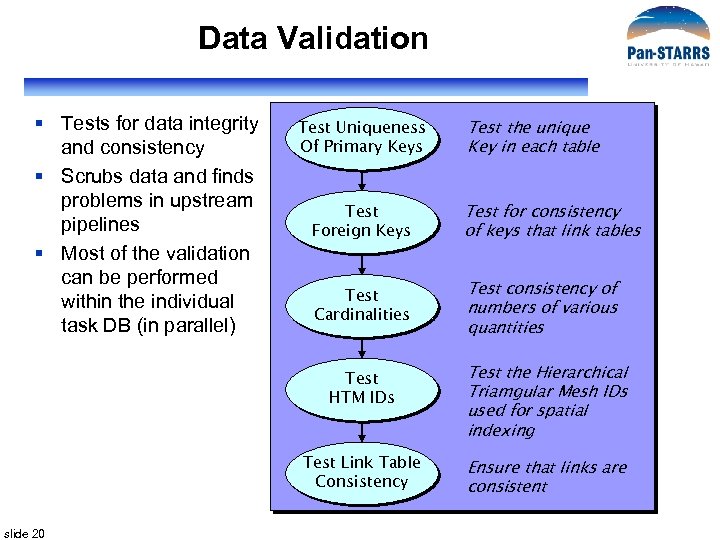

Data Validation § Tests for data integrity and consistency § Scrubs data and finds problems in upstream pipelines § Most of the validation can be performed within the individual task DB (in parallel) Test Uniqueness Of Primary Keys Test the unique Key in each table Test Foreign Keys Test for consistency of keys that link tables Test Cardinalities Test consistency of numbers of various quantities Test HTM IDs Test Link Table Consistency slide 20 Test the Hierarchical Triamgular Mesh IDs used for spatial indexing Ensure that links are consistent

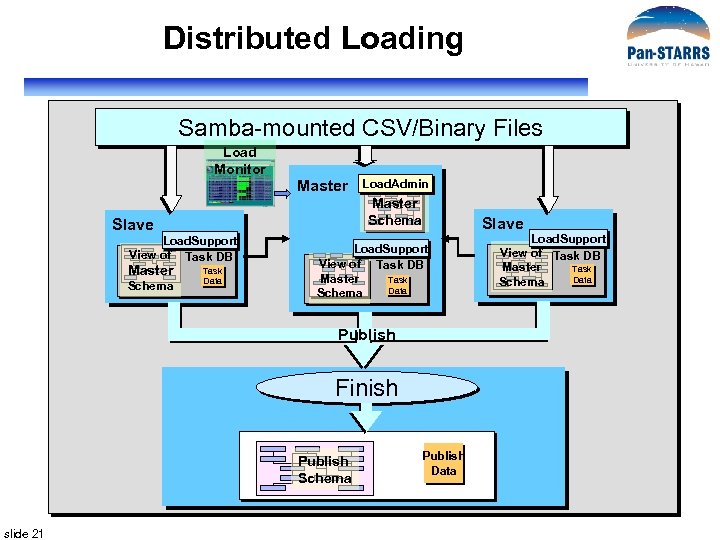

Distributed Loading Samba-mounted CSV/Binary Files Load Monitor Master Schema Slave Load. Support View of Task DB Master Schema Load. Admin Task Data Slave Load. Support View of Task DB Master Task Data Schema Publish Finish Publish Schema slide 21 Publish Data Load. Support View of Task DB Master Task Data Schema

Schema Changes § Schema in task and publish DBs is driven off a list of schema DDL files to execute (xschema. txt) § Requires replacing DDL files in schema/sql directory and updating xschema. txt with their names § PS 1 schema DDL files have already been built § Index definitions have also been created § Metadata tables will be automatically generated using metadata scripts already in the loader slide 22

Workflow Changes § Cross-Match and Partition steps will be added to the workflow § Cross-match will match detections to objects § Partition will horizontally partition data, move it to slice servers, and build DPVs on main slide 23 Export LOAD Check CSVs Create Task DBs Build SQL Schema Validate XMatch PUBLISH Partition

Matching Detections with Objects § Algorithm described fully in prototype section § Stored procedures to cross-match detections will be part of the LOAD stage in loader pipeline § Vertical partition of Objects table kept on load server for matching with detections § Zones cross-match algorithm used to do 1” and 2” matches § Detections with no matches saved in Orphans table slide 24

XMatch and Partition Data Flow Detections Loadsupport Load Detections Obj. Zone. Indx Detections_In XMatch Orphans Update Objects Detections_m Link. To. Obj_In Pm Merge Partitions Detections_chunk Link. To. Obj_chunk Pull Chunk PS 1 Objects_m slide 25 Pull Partition Objects_m Link. To. Obj_m Switch Partition Objects Link. To. Obj

Detailed Design § § Reuse SDSS software as much as possible Data Transformation Layer (DX) – Interface to IPP Data Loading Pipeline (DLP) Data Storage (DS) • Schema and Test Queries • Database Management System • Scalable Data Architecture • Hardware § Query Manager (QM: Cas. Jobs for prototype) slide 26

Data Storage – Schema slide 27

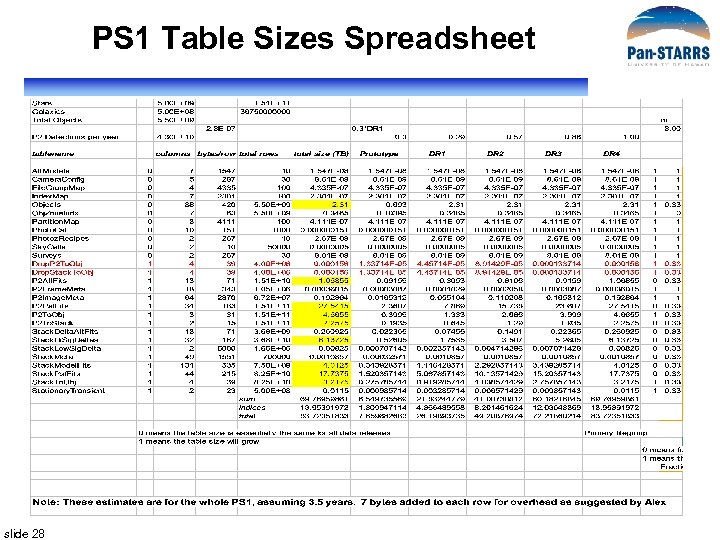

PS 1 Table Sizes Spreadsheet slide 28

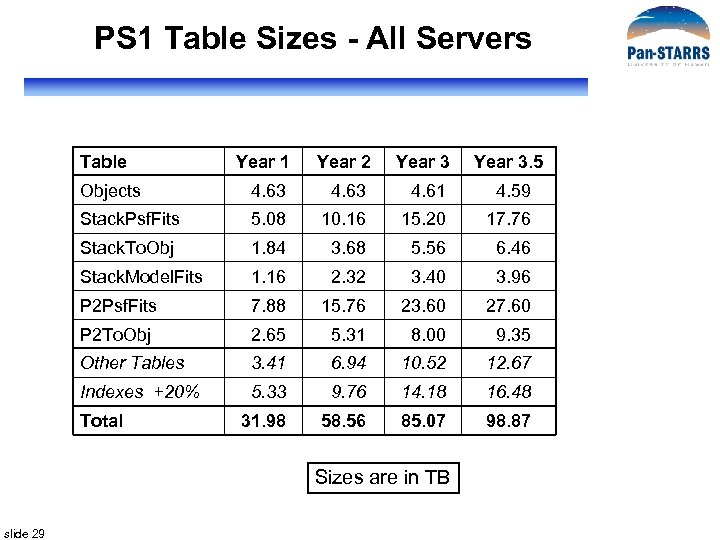

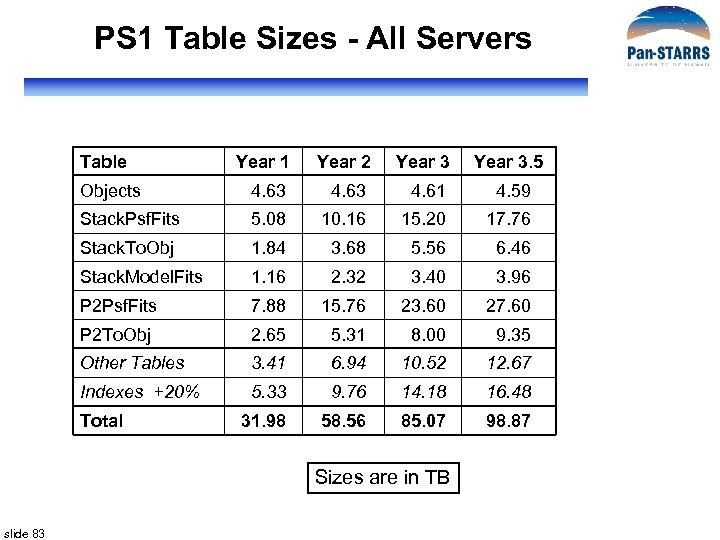

PS 1 Table Sizes - All Servers Table Year 1 Year 2 Year 3. 5 Objects 4. 63 4. 61 4. 59 Stack. Psf. Fits 5. 08 10. 16 15. 20 17. 76 Stack. To. Obj 1. 84 3. 68 5. 56 6. 46 Stack. Model. Fits 1. 16 2. 32 3. 40 3. 96 P 2 Psf. Fits 7. 88 15. 76 23. 60 27. 60 P 2 To. Obj 2. 65 5. 31 8. 00 9. 35 Other Tables 3. 41 6. 94 10. 52 12. 67 Indexes +20% 5. 33 9. 76 14. 18 16. 48 31. 98 58. 56 85. 07 98. 87 Total Sizes are in TB slide 29

Data Storage – Test Queries § Drawn from several sources • Initial set of SDSS 20 queries • SDSS Sky. Server Sample Queries • Queries from PS scientists (Monet, Howell, Kaiser, Heasley) § Two objectives • Find potential holes/issues in schema • Serve as test queries – Test DBMS iintegrity – Test DBMS performance § Loaded into Cas. Jobs (Query Manager) as sample queries for prototype slide 30

Data Storage – DBMS § Microsoft SQL Server 2005 • Relational DBMS with excellent query optimizer § Plus • Spherical/HTM (C# library + SQL glue) – Spatial index (Hierarchical Triangular Mesh) • Zones (SQL library) – Alternate spatial decomposition with dec zones • Many stored procedures and functions – From coordinate conversions to neighbor search functions • Self-extracting documentation (metadata) and diagnostics slide 31

Documentation and Diagnostics slide 32

Data Storage – Scalable Architecture § Monolithic database design (a la SDSS) will not do it § SQL Server does not have cluster implementation • Do it by hand § Partitions vs Slices • Partitions are file-groups on the same server – Parallelize disk accesses on the same machine • Slices are data partitions on separate servers • We use both! § Additional slices can be added for scale-out § For PS 1, use SQL Server Distributed Partition Views (DPVs) slide 33

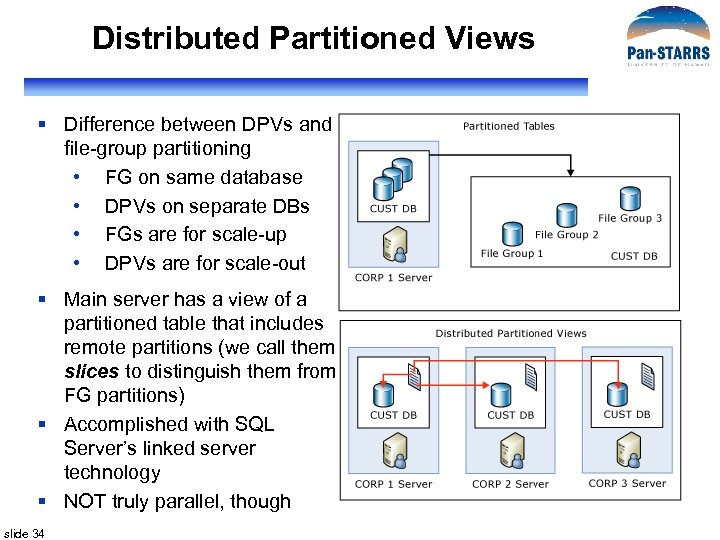

Distributed Partitioned Views § Difference between DPVs and file-group partitioning • FG on same database • DPVs on separate DBs • FGs are for scale-up • DPVs are for scale-out § Main server has a view of a partitioned table that includes remote partitions (we call them slices to distinguish them from FG partitions) § Accomplished with SQL Server’s linked server technology § NOT truly parallel, though slide 34

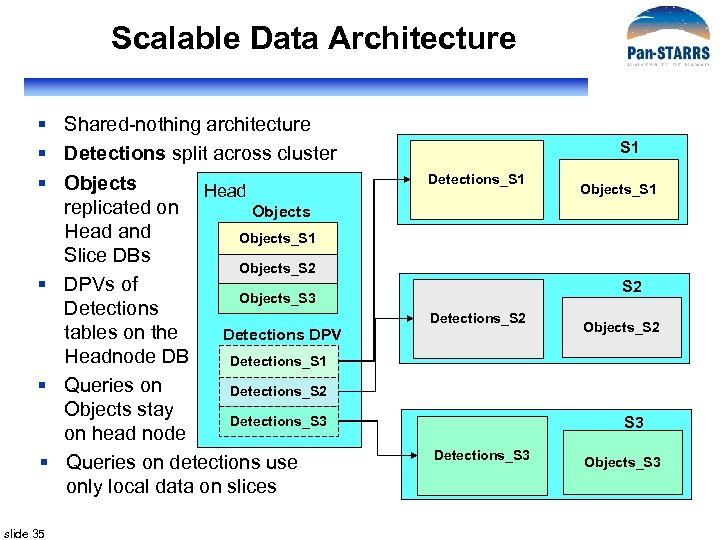

Scalable Data Architecture § Shared-nothing architecture § Detections split across cluster § Objects Head replicated on Objects Head and Objects_S 1 Slice DBs Objects_S 2 § DPVs of Objects_S 3 Detections tables on the Detections DPV Headnode DB Detections_S 1 § Queries on Detections_S 2 Objects stay Detections_S 3 on head node § Queries on detections use only local data on slices slide 35 S 1 Detections_S 1 Objects_S 1 S 2 Detections_S 2 Objects_S 2 S 3 Detections_S 3 Objects_S 3

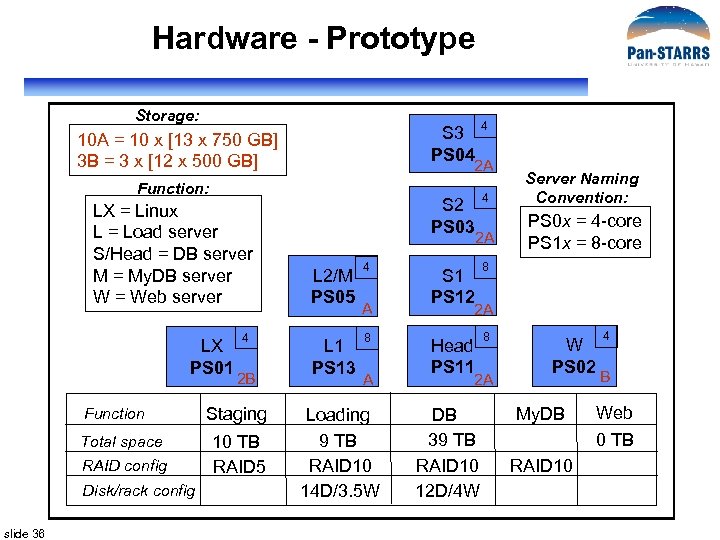

Hardware - Prototype Storage: S 3 PS 04 10 A = 10 x [13 x 750 GB] 3 B = 3 x [12 x 500 GB] 2 A Function: LX = Linux L = Load server S/Head = DB server M = My. DB server W = Web server LX PS 01 Function Total space RAID config Disk/rack config slide 36 4 2 B Staging 10 TB RAID 5 4 S 2 PS 03 4 S 1 PS 12 8 Head PS 11 8 2 A L 2/M PS 05 L 1 PS 13 4 A 8 A Loading 9 TB RAID 10 14 D/3. 5 W Server Naming Convention: PS 0 x = 4 -core PS 1 x = 8 -core 2 A 2 A DB 39 TB RAID 10 12 D/4 W W PS 02 My. DB 4 B Web 0 TB RAID 10

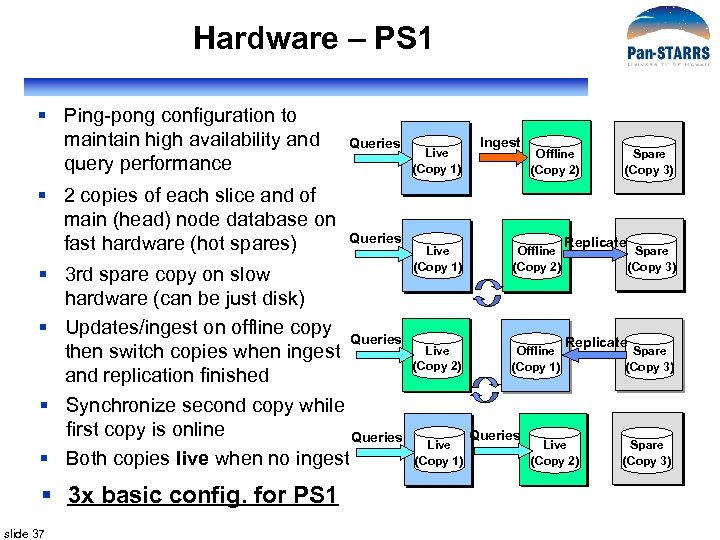

Hardware – PS 1 § Ping-pong configuration to maintain high availability and query performance § 2 copies of each slice and of main (head) node database on fast hardware (hot spares) Queries § 3 rd spare copy on slow hardware (can be just disk) § Updates/ingest on offline copy Queries then switch copies when ingest and replication finished § Synchronize second copy while first copy is online Queries § Both copies live when no ingest § 3 x basic config. for PS 1 slide 37 Live (Copy 1) Ingest Offline (Copy 2) Live (Copy 1) Offline (Copy 2) Live (Copy 2) Offline (Copy 1) Live (Copy 1) Queries Spare (Copy 3) Replicate Live (Copy 2) Spare (Copy 3)

Detailed Design § § Reuse SDSS software as much as possible Data Transformation Layer (DX) – Interface to IPP Data Loading Pipeline (DLP) Data Storage (DS) • Schema and Test Queries • Database Management System • Scalable Data Architecture • Hardware § Query Manager (QM: Cas. Jobs for prototype) slide 38

Query Manager § Based on SDSS Cas. Jobs § Configure to work with distributed database, DPVs § Direct links (contexts) to slices can be added later if necessary § Segregates quick queries from long ones § Saves query results server-side in My. DB § Gives users a powerful query workbench § Can be scaled out to meet any query load § PS 1 Sample Queries available to users § PS 1 Prototype QM demo slide 39

ODM Prototype Components § Data Loading Pipeline § Data Storage § Cas. Jobs • Query Manager (QM) • Web Based Interface (WBI) § Testing slide 40

Spatial Queries (Alex) slide 41

Spatial Searches in the ODM slide 42

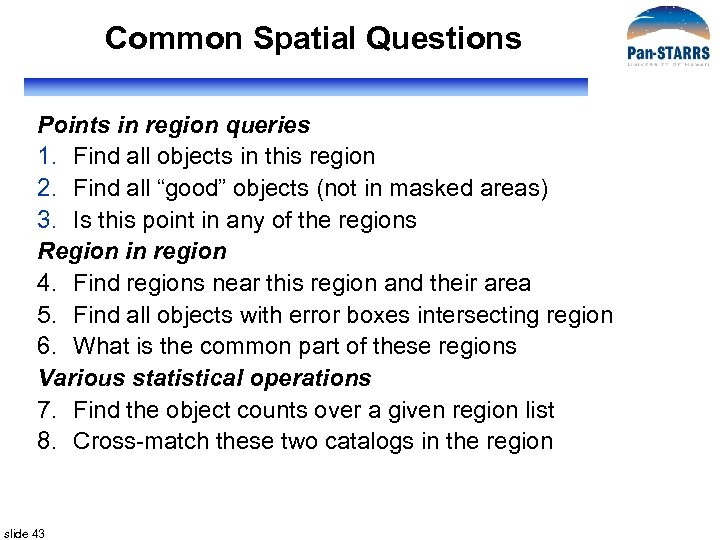

Common Spatial Questions Points in region queries 1. Find all objects in this region 2. Find all “good” objects (not in masked areas) 3. Is this point in any of the regions Region in region 4. Find regions near this region and their area 5. Find all objects with error boxes intersecting region 6. What is the common part of these regions Various statistical operations 7. Find the object counts over a given region list 8. Cross-match these two catalogs in the region slide 43

Sky Coordinates of Points § Many different coordinate systems • Equatorial, Galactic, Ecliptic, Supergalactic § Longitude-latitude constraints § Searches often in mix of different coordinate systems • gb>40 and dec between 10 and 20 • Problem: coordinate singularities, transformations § How can one describe constraints in a easy, uniform fashion? § How can one perform fast database queries in an easy fashion? • Fast: Indexes • Easy: simple query expressions slide 44

Describing Regions Spacetime metadata for the VO (Arnold Rots) § Includes definitions of • • • Constraint: single small or great circle Convex: intersection of constraints Region: union of convexes § Support both angles and Cartesian descriptions § Constructors for • CIRCLE, RECTANGLE, POLYGON, CONVEX HULL § Boolean algebra (INTERSECTION, UNION, DIFF) § Proper language to describe the abstract regions § Similar to GIS, but much better suited for astronomy slide 45

Things Can Get Complex slide 46

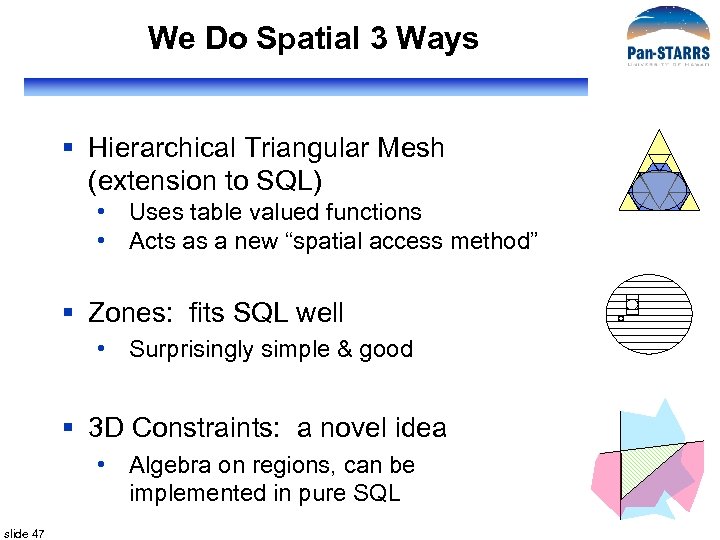

We Do Spatial 3 Ways § Hierarchical Triangular Mesh (extension to SQL) • Uses table valued functions • Acts as a new “spatial access method” § Zones: fits SQL well • Surprisingly simple & good § 3 D Constraints: a novel idea • Algebra on regions, can be implemented in pure SQL slide 47

PS 1 Footprint § Using the projection cell definitions as centers for tessellation (T. Budavari) slide 48

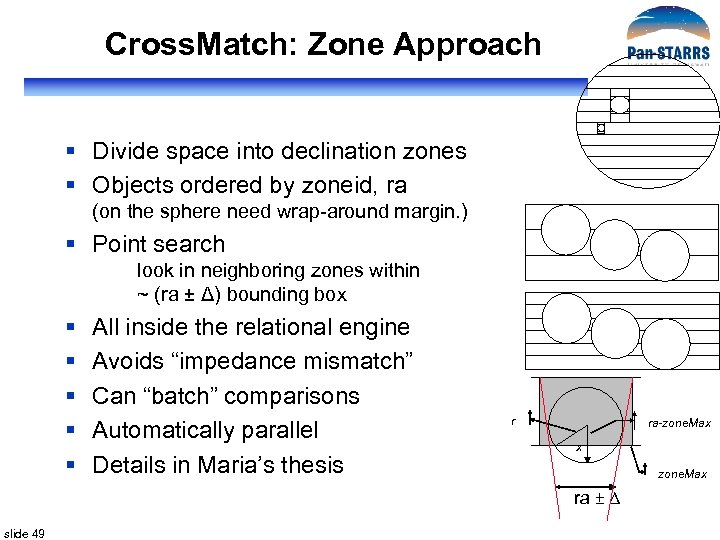

Cross. Match: Zone Approach § Divide space into declination zones § Objects ordered by zoneid, ra (on the sphere need wrap-around margin. ) § Point search look in neighboring zones within ~ (ra ± Δ) bounding box § § § All inside the relational engine Avoids “impedance mismatch” Can “batch” comparisons Automatically parallel Details in Maria’s thesis r ra-zone. Max x zone. Max ra ± Δ slide 49

Indexing Using Quadtrees § Cover the sky with hierarchical pixels § COBE – start with a cube § Hierarchical Triangular Mesh (HTM) uses trixels • Samet, Fekete § Start with an octahedron, and split each triangle into 4 children, 2, 0 20 down to 20 levels deep 2, 1 23 § Smallest triangles are 0. 3” 2, 2 2, 3 21 22 § Each trixel has a unique htm. ID slide 50 222 2, 3, 0 223 2, 3, 1 220 2, 3, 2 221 2, 3, 3

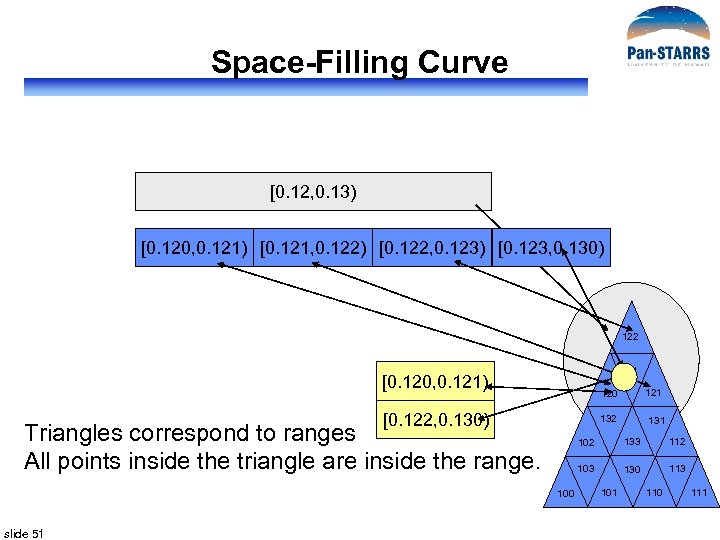

Space-Filling Curve [0. 12, 0. 13) [0. 120, 0. 121) [0. 121, 0. 122) [0. 122, 0. 123) [0. 123, 0. 130) 122 1, 2, 1 [0. 120, 0. 121) [0. 122, 0. 130) 132 Triangles correspond to ranges All points inside the triangle are inside the range. 131 102 133 112 103 100 slide 51 120 130 113 101 110 111

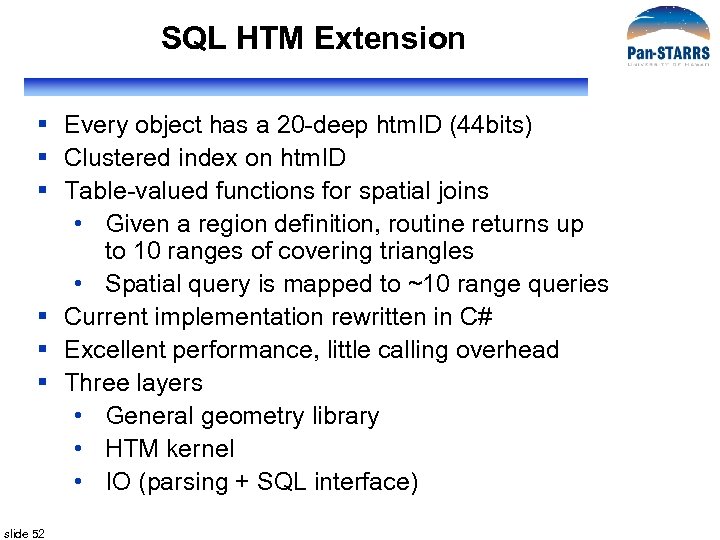

SQL HTM Extension § Every object has a 20 -deep htm. ID (44 bits) § Clustered index on htm. ID § Table-valued functions for spatial joins • Given a region definition, routine returns up to 10 ranges of covering triangles • Spatial query is mapped to ~10 range queries § Current implementation rewritten in C# § Excellent performance, little calling overhead § Three layers • General geometry library • HTM kernel • IO (parsing + SQL interface) slide 52

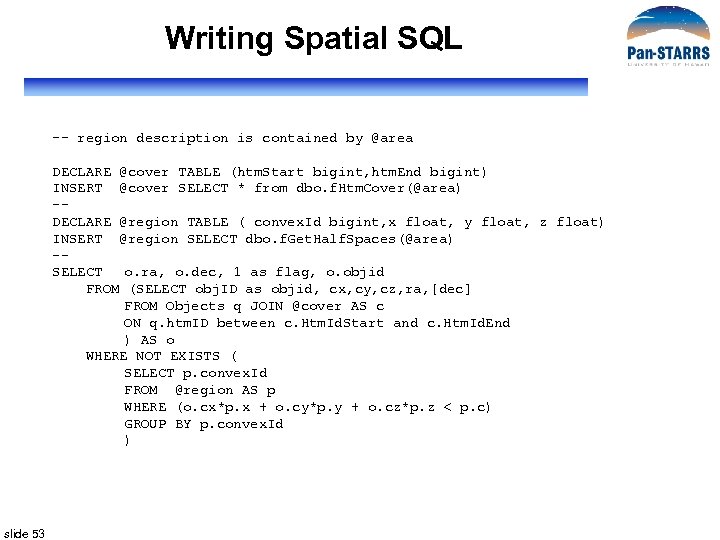

Writing Spatial SQL -- region description is contained by @area DECLARE @cover TABLE (htm. Start bigint, htm. End bigint) INSERT @cover SELECT * from dbo. f. Htm. Cover(@area) -DECLARE @region TABLE ( convex. Id bigint, x float, y float, z float) INSERT @region SELECT dbo. f. Get. Half. Spaces(@area) -SELECT o. ra, o. dec, 1 as flag, o. objid FROM (SELECT obj. ID as objid, cx, cy, cz, ra, [dec] FROM Objects q JOIN @cover AS c ON q. htm. ID between c. Htm. Id. Start and c. Htm. Id. End ) AS o WHERE NOT EXISTS ( SELECT p. convex. Id FROM @region AS p WHERE (o. cx*p. x + o. cy*p. y + o. cz*p. z < p. c) GROUP BY p. convex. Id ) slide 53

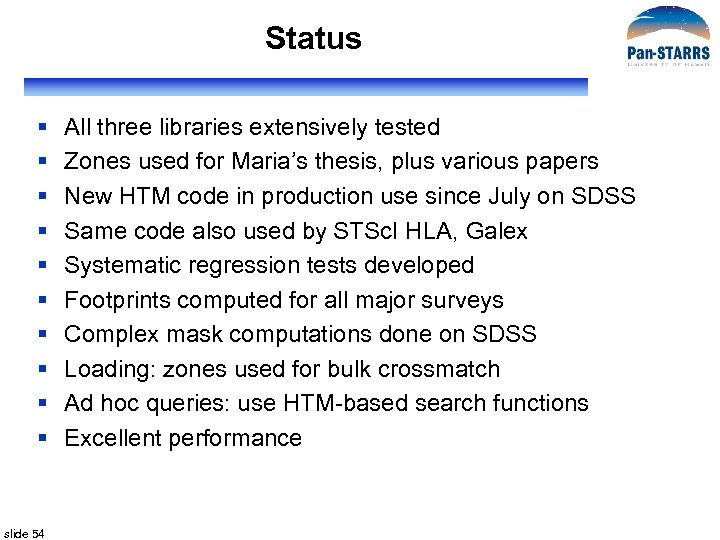

Status § § § § § slide 54 All three libraries extensively tested Zones used for Maria’s thesis, plus various papers New HTM code in production use since July on SDSS Same code also used by STSc. I HLA, Galex Systematic regression tests developed Footprints computed for all major surveys Complex mask computations done on SDSS Loading: zones used for bulk crossmatch Ad hoc queries: use HTM-based search functions Excellent performance

Prototype (Maria) slide 55

PS 1 PSPS Object Data Manager Design PSPS Critical Design Review November 5 -6, 2007 If. A slide 56

Detail Design § § slide 57 General Concepts Distributed Database architecture Ingest Workflow Prototype

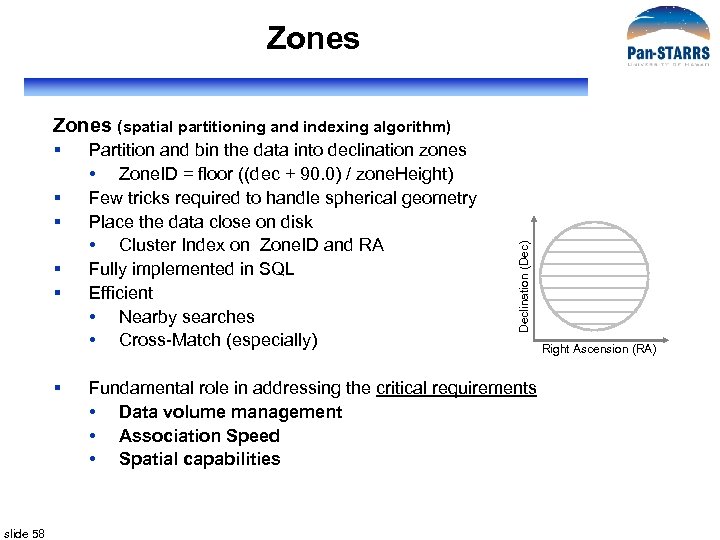

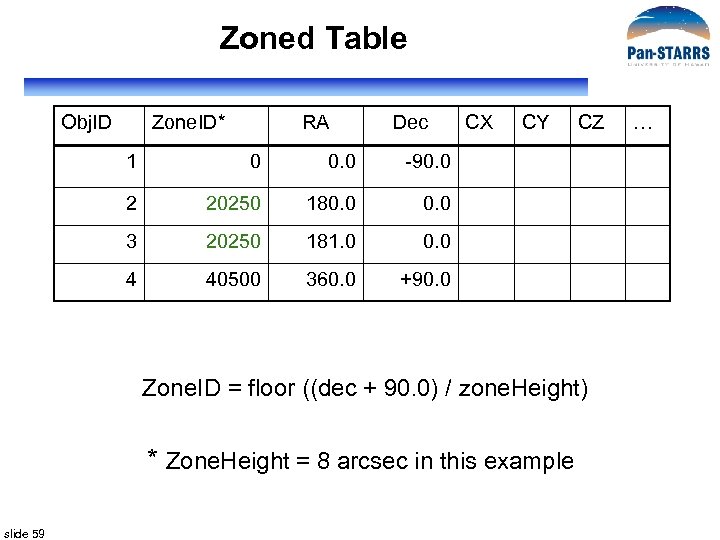

Zones § § § slide 58 Partition and bin the data into declination zones • Zone. ID = floor ((dec + 90. 0) / zone. Height) Few tricks required to handle spherical geometry Place the data close on disk • Cluster Index on Zone. ID and RA Fully implemented in SQL Efficient • Nearby searches • Cross-Match (especially) Declination (Dec) Zones (spatial partitioning and indexing algorithm) Fundamental role in addressing the critical requirements • Data volume management • Association Speed • Spatial capabilities Right Ascension (RA)

Zoned Table Obj. ID Zone. ID* RA Dec 1 0 0. 0 20250 181. 0 0. 0 4 40500 360. 0 CZ 0. 0 3 CY -90. 0 2 CX +90. 0 Zone. ID = floor ((dec + 90. 0) / zone. Height) * Zone. Height = 8 arcsec in this example slide 59 …

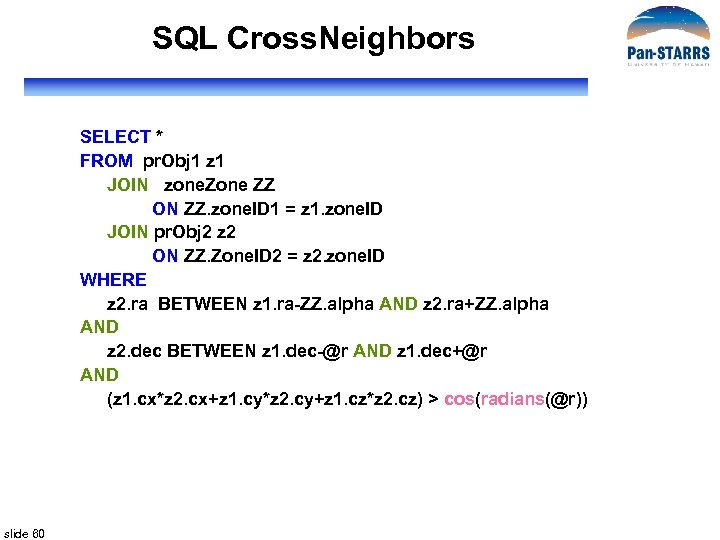

SQL Cross. Neighbors SELECT * FROM pr. Obj 1 z 1 JOIN zone. Zone ZZ ON ZZ. zone. ID 1 = z 1. zone. ID JOIN pr. Obj 2 z 2 ON ZZ. Zone. ID 2 = z 2. zone. ID WHERE z 2. ra BETWEEN z 1. ra-ZZ. alpha AND z 2. ra+ZZ. alpha AND z 2. dec BETWEEN z 1. dec-@r AND z 1. dec+@r AND (z 1. cx*z 2. cx+z 1. cy*z 2. cy+z 1. cz*z 2. cz) > cos(radians(@r)) slide 60

Good CPU Usage slide 61

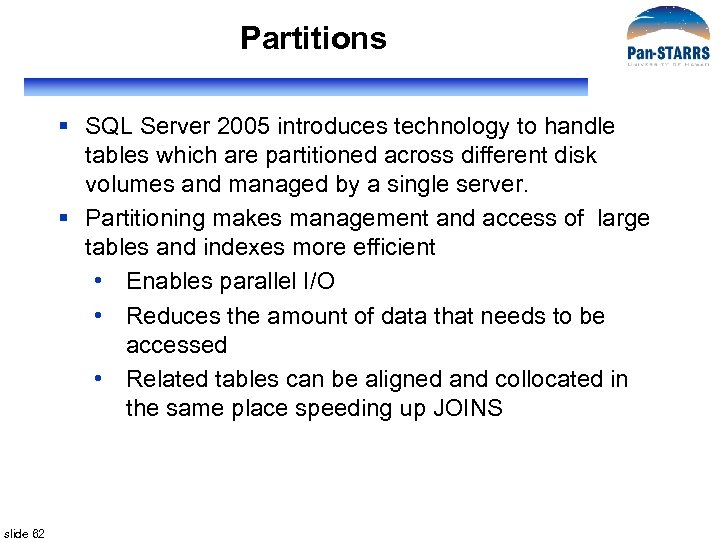

Partitions § SQL Server 2005 introduces technology to handle tables which are partitioned across different disk volumes and managed by a single server. § Partitioning makes management and access of large tables and indexes more efficient • Enables parallel I/O • Reduces the amount of data that needs to be accessed • Related tables can be aligned and collocated in the same place speeding up JOINS slide 62

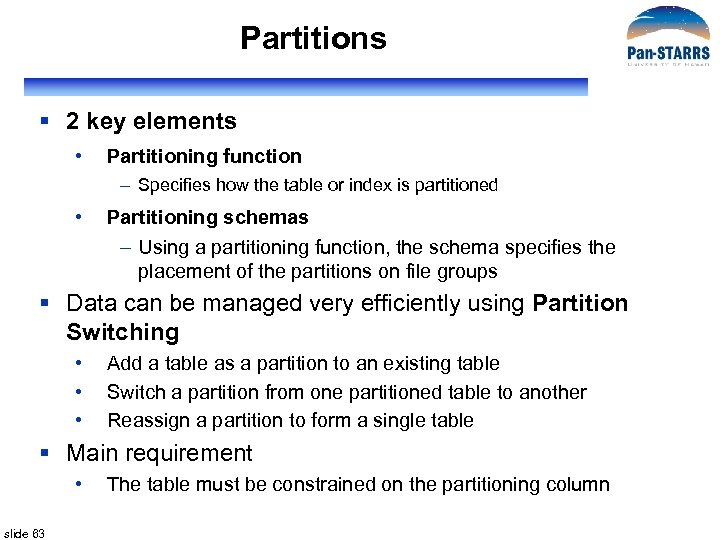

Partitions § 2 key elements • Partitioning function – Specifies how the table or index is partitioned • Partitioning schemas – Using a partitioning function, the schema specifies the placement of the partitions on file groups § Data can be managed very efficiently using Partition Switching • • • Add a table as a partition to an existing table Switch a partition from one partitioned table to another Reassign a partition to form a single table § Main requirement • slide 63 The table must be constrained on the partitioning column

Partitions § For the PS 1 design, • Partitions mean File Group Partitions • Tables are partitioned into ranges of Object. ID, which correspond to declination ranges. • Object. ID boundaries are selected so that each partition has a similar number of objects. slide 64

Distributed Partitioned Views § Tables participating in the Distributed Partitioned View (DVP) reside on different databases which reside in different databases which reside on different instances or different (linked) servers slide 65

Concept: Slices § In the PS 1 design, the bigger tables will be partitioned across servers § To avoid confusion with the File Group Partitioning, we call them “Slices” § Data is glued together using Distributed Partitioned Views § The ODM will manage slices. Using slices improves system scalability. § For PS 1 design, tables are sliced into ranges of Object. ID, which correspond to broad declination ranges. Each slice is subdivided into partitions that correspond to narrower declination ranges. § Object. ID boundaries are selected so that each slice has a similar number of objects. slide 66

Detail Design Outline § § slide 67 General Concepts Distributed Database architecture Ingest Workflow Prototype

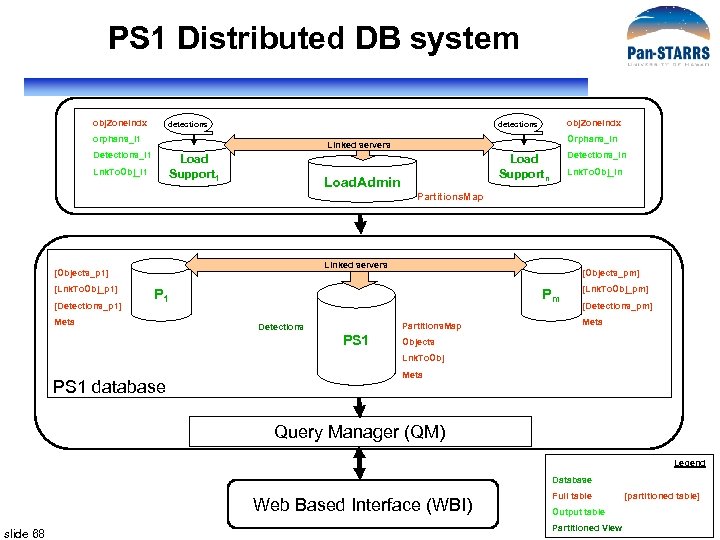

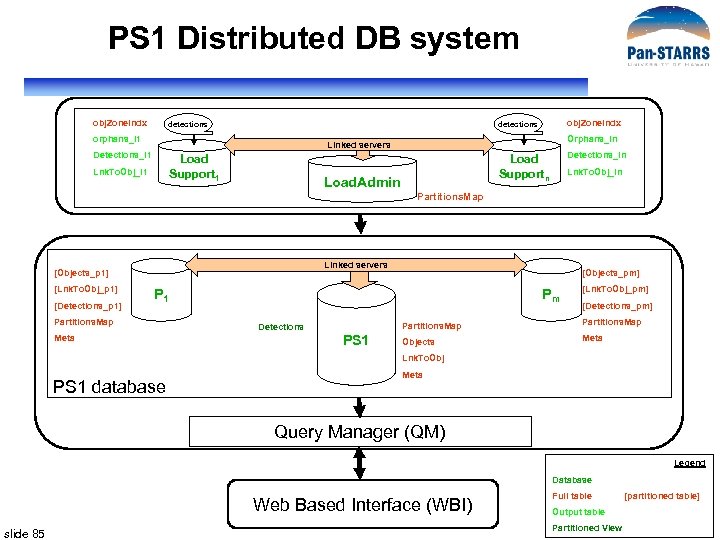

PS 1 Distributed DB system obj. Zone. Indx detections orphans_l 1 Orphans_ln Linked servers Detections_l 1 Load Support 1 Lnk. To. Obj_l 1 Detections_ln Load Supportn Load. Admin Lnk. To. Obj_ln Partitions. Map Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_pm] P 1 Meta Pm Partitions. Map Detections PS 1 [Lnk. To. Obj_pm] [Detections_pm] Meta Objects Lnk. To. Obj PS 1 database Meta Query Manager (QM) Legend Database Web Based Interface (WBI) slide 68 Full table Output table Partitioned View [partitioned table]

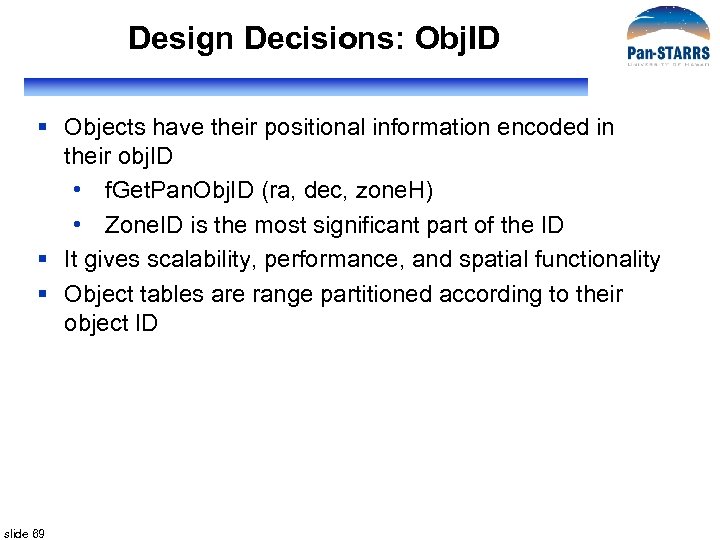

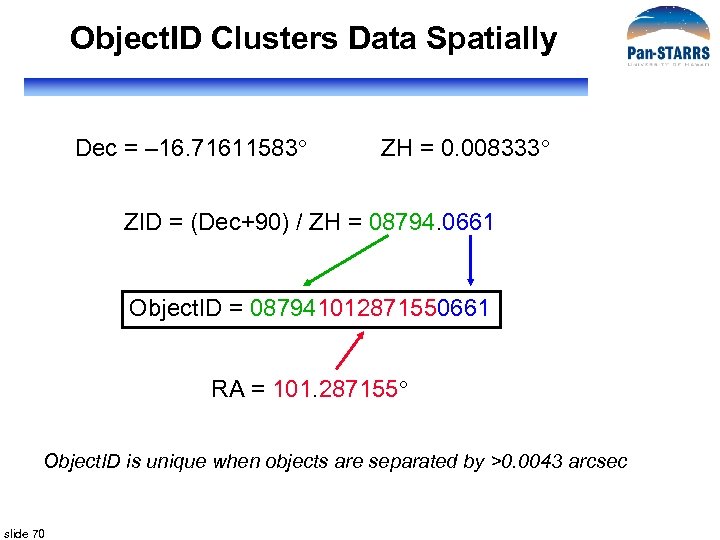

Design Decisions: Obj. ID § Objects have their positional information encoded in their obj. ID • f. Get. Pan. Obj. ID (ra, dec, zone. H) • Zone. ID is the most significant part of the ID § It gives scalability, performance, and spatial functionality § Object tables are range partitioned according to their object ID slide 69

Object. ID Clusters Data Spatially Dec = – 16. 71611583 ZH = 0. 008333 ZID = (Dec+90) / ZH = 08794. 0661 Object. ID = 087941012871550661 RA = 101. 287155 Object. ID is unique when objects are separated by >0. 0043 arcsec slide 70

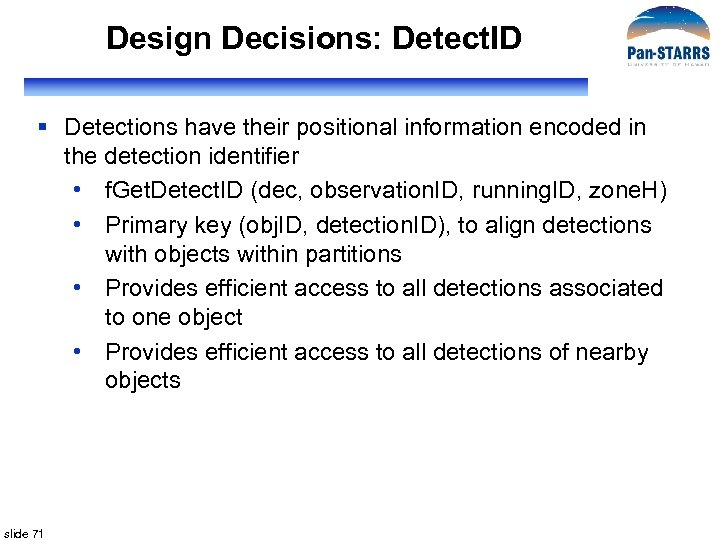

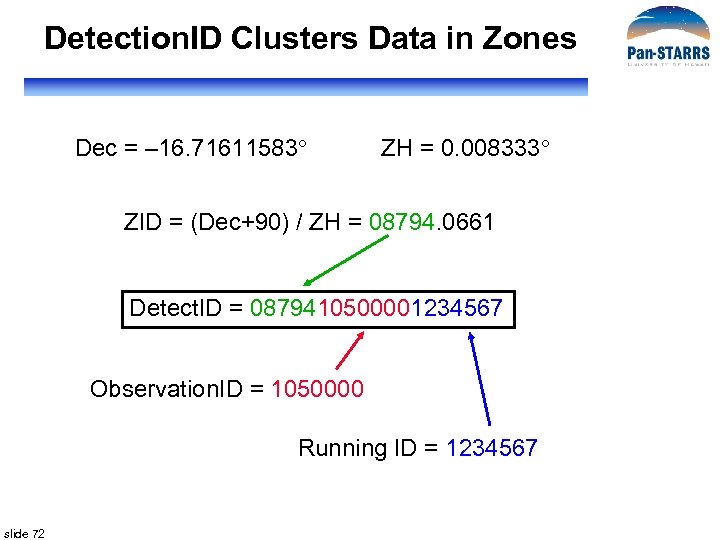

Design Decisions: Detect. ID § Detections have their positional information encoded in the detection identifier • f. Get. Detect. ID (dec, observation. ID, running. ID, zone. H) • Primary key (obj. ID, detection. ID), to align detections with objects within partitions • Provides efficient access to all detections associated to one object • Provides efficient access to all detections of nearby objects slide 71

Detection. ID Clusters Data in Zones Dec = – 16. 71611583 ZH = 0. 008333 ZID = (Dec+90) / ZH = 08794. 0661 Detect. ID = 0879410500001234567 Observation. ID = 1050000 Running ID = 1234567 slide 72

ODM Capacity 5. 3. 1. 3 The PS 1 ODM shall be able to ingest into the ODM a total of • 1. 5 1011 P 2 detections • 8. 3 1010 cumulative sky (stack) detections • 5. 5 109 celestial objects together with their linkages. slide 73

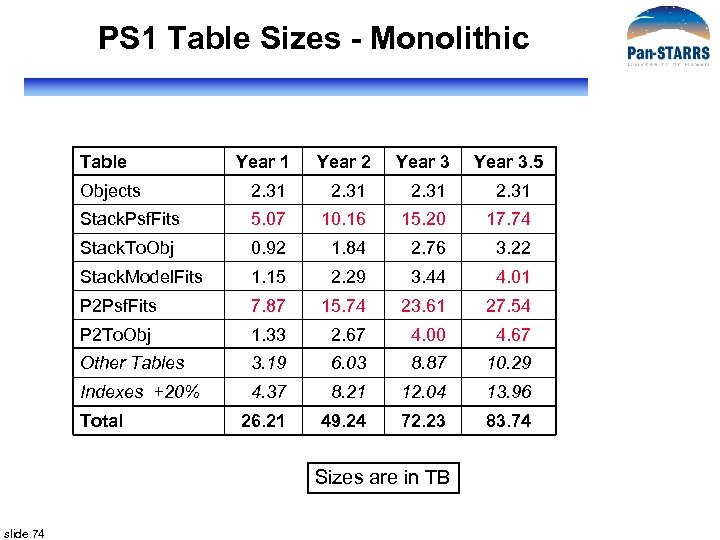

PS 1 Table Sizes - Monolithic Table Year 1 Year 2 Year 3. 5 Objects 2. 31 Stack. Psf. Fits 5. 07 10. 16 15. 20 17. 74 Stack. To. Obj 0. 92 1. 84 2. 76 3. 22 Stack. Model. Fits 1. 15 2. 29 3. 44 4. 01 P 2 Psf. Fits 7. 87 15. 74 23. 61 27. 54 P 2 To. Obj 1. 33 2. 67 4. 00 4. 67 Other Tables 3. 19 6. 03 8. 87 10. 29 Indexes +20% 4. 37 8. 21 12. 04 13. 96 26. 21 49. 24 72. 23 83. 74 Total Sizes are in TB slide 74

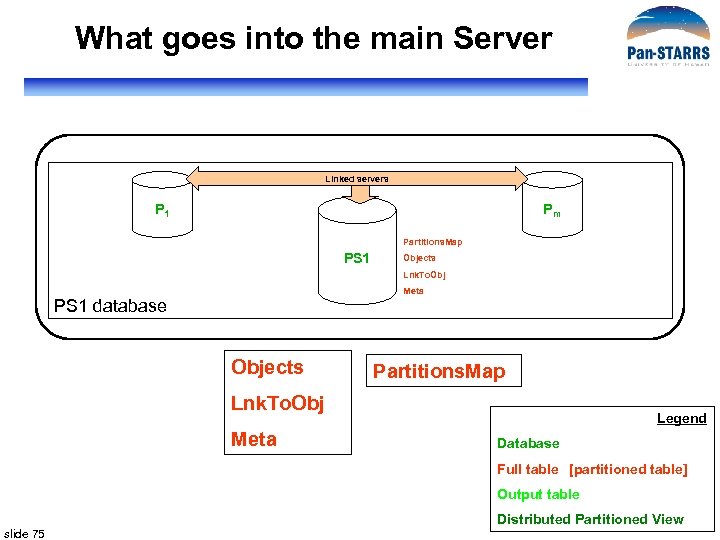

What goes into the main Server Linked servers P 1 Pm Partitions. Map PS 1 Objects Lnk. To. Obj Meta PS 1 database Objects Partitions. Map Lnk. To. Obj Meta Legend Database Full table [partitioned table] Output table slide 75 Distributed Partitioned View

![What goes into slices Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] What goes into slices Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1]](https://present5.com/presentation/b03da9497462b97f49b645d2552bed8c/image-76.jpg)

What goes into slices Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_pm] P 1 Pm Partitions. Map PS 1 [Detections_pm] Partitions. Map Meta [Lnk. To. Obj_pm] Meta Objects Lnk. To. Obj Meta PS 1 database [Objects_p 1] Partitions. Map [Lnk. To. Obj_p 1] Legend [Detections_p 1] Database Meta Full table [partitioned table] Output table slide 76 Distributed Partitioned View

![What goes into slices Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] What goes into slices Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1]](https://present5.com/presentation/b03da9497462b97f49b645d2552bed8c/image-77.jpg)

What goes into slices Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_pm] P 1 Pm Partitions. Map PS 1 [Detections_pm] Partitions. Map Meta [Lnk. To. Obj_pm] Meta Objects Lnk. To. Obj Meta PS 1 database [Objects_p 1] Partitions. Map [Lnk. To. Obj_p 1] Legend [Detections_p 1] Database Meta Full table [partitioned table] Output table slide 77 Distributed Partitioned View

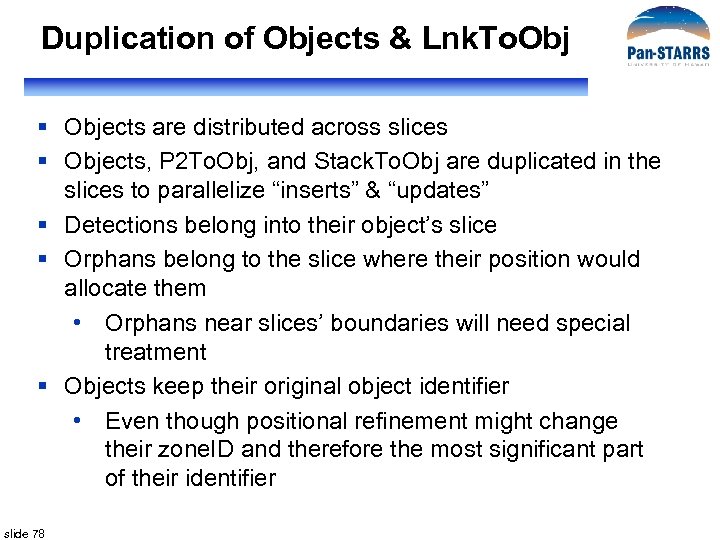

Duplication of Objects & Lnk. To. Obj § Objects are distributed across slices § Objects, P 2 To. Obj, and Stack. To. Obj are duplicated in the slices to parallelize “inserts” & “updates” § Detections belong into their object’s slice § Orphans belong to the slice where their position would allocate them • Orphans near slices’ boundaries will need special treatment § Objects keep their original object identifier • Even though positional refinement might change their zone. ID and therefore the most significant part of their identifier slide 78

![Glue = Distributed Views Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] Glue = Distributed Views Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1]](https://present5.com/presentation/b03da9497462b97f49b645d2552bed8c/image-79.jpg)

Glue = Distributed Views Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_pm] P 1 Partitions. Map Meta Pm PS 1 [Detections_pm] Partitions. Map Detections [Lnk. To. Obj_pm] Meta Objects Lnk. To. Obj Meta PS 1 database Detections Legend Database Full table [partitioned table] Output table slide 79 Distributed Partitioned View

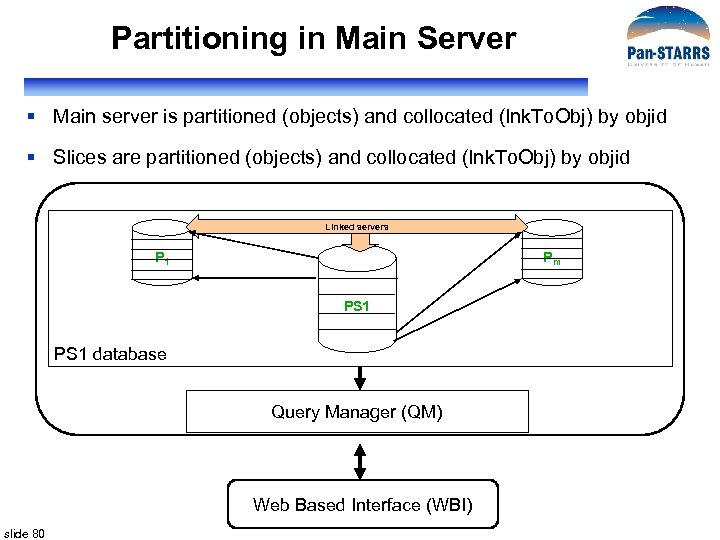

Partitioning in Main Server § Main server is partitioned (objects) and collocated (lnk. To. Obj) by objid § Slices are partitioned (objects) and collocated (lnk. To. Obj) by objid Linked servers P 1 Pm PS 1 database Query Manager (QM) Web Based Interface (WBI) slide 80

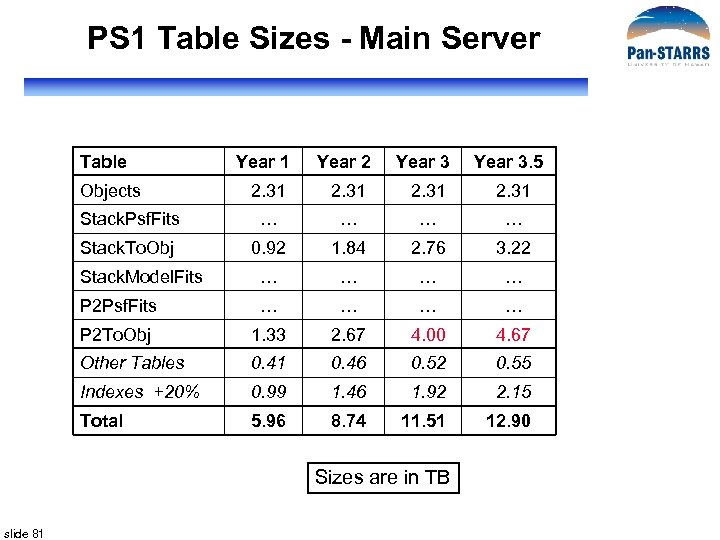

PS 1 Table Sizes - Main Server Table Year 1 Year 2 Year 3. 5 2. 31 Stack. Psf. Fits Stack. To. Obj 0. 92 1. 84 2. 76 3. 22 Stack. Model. Fits P 2 Psf. Fits P 2 To. Obj 1. 33 2. 67 4. 00 4. 67 Other Tables 0. 41 0. 46 0. 52 0. 55 Indexes +20% 0. 99 1. 46 1. 92 2. 15 Total 5. 96 8. 74 11. 51 12. 90 Objects Sizes are in TB slide 81

PS 1 Table Sizes - Each Slice m=4 m=8 m=10 m=12 Year 1 Year 2 Year 3. 5 Objects 0. 58 0. 29 0. 23 0. 19 Stack. Psf. Fits 1. 27 1. 52 1. 48 Stack. To. Obj 0. 23 0. 28 0. 27 Stack. Model. Fits 0. 29 0. 34 0. 33 P 2 Psf. Fits 1. 97 2. 36 2. 30 P 2 To. Obj 0. 33 0. 40 0. 39 Other Tables 0. 75 0. 81 1. 00 1. 01 Indexes +20% 1. 08 1. 04 1. 23 1. 19 Total 6. 50 6. 23 7. 36 7. 16 Table Sizes are in TB slide 82

PS 1 Table Sizes - All Servers Table Year 1 Year 2 Year 3. 5 Objects 4. 63 4. 61 4. 59 Stack. Psf. Fits 5. 08 10. 16 15. 20 17. 76 Stack. To. Obj 1. 84 3. 68 5. 56 6. 46 Stack. Model. Fits 1. 16 2. 32 3. 40 3. 96 P 2 Psf. Fits 7. 88 15. 76 23. 60 27. 60 P 2 To. Obj 2. 65 5. 31 8. 00 9. 35 Other Tables 3. 41 6. 94 10. 52 12. 67 Indexes +20% 5. 33 9. 76 14. 18 16. 48 31. 98 58. 56 85. 07 98. 87 Total Sizes are in TB slide 83

Detail Design Outline § § slide 84 General Concepts Distributed Database architecture Ingest Workflow Prototype

PS 1 Distributed DB system obj. Zone. Indx detections orphans_l 1 Orphans_ln Linked servers Detections_l 1 Load Support 1 Lnk. To. Obj_l 1 Detections_ln Load Supportn Load. Admin Lnk. To. Obj_ln Partitions. Map Linked servers [Objects_p 1] [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_pm] P 1 Partitions. Map Meta Pm [Lnk. To. Obj_pm] [Detections_pm] Partitions. Map Detections PS 1 Partitions. Map Objects Meta Lnk. To. Obj PS 1 database Meta Query Manager (QM) Legend Database Web Based Interface (WBI) slide 85 Full table Output table Partitioned View [partitioned table]

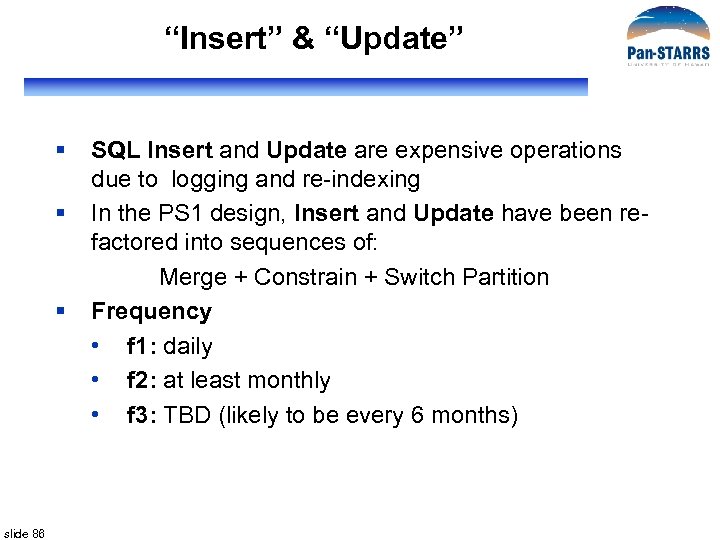

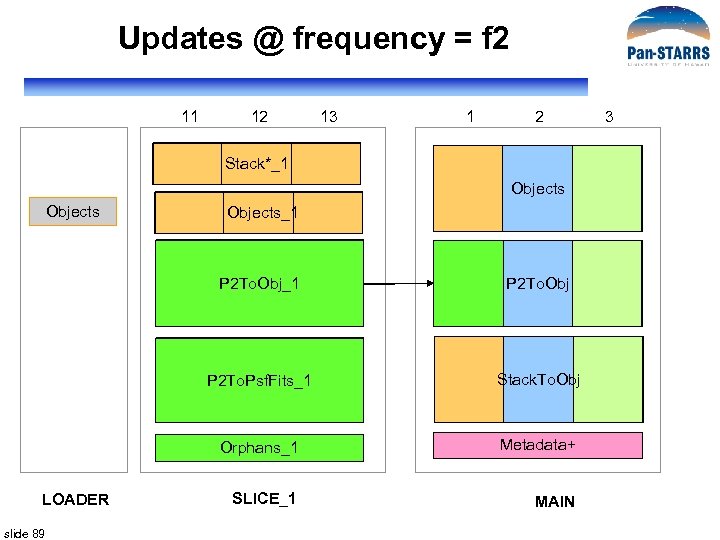

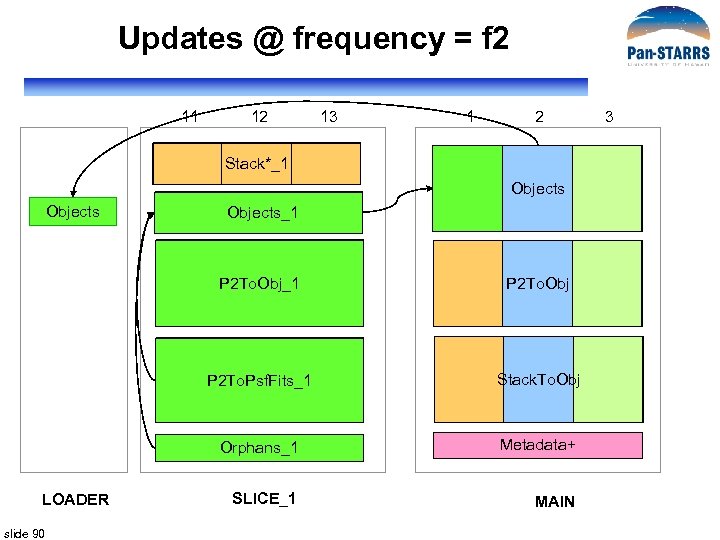

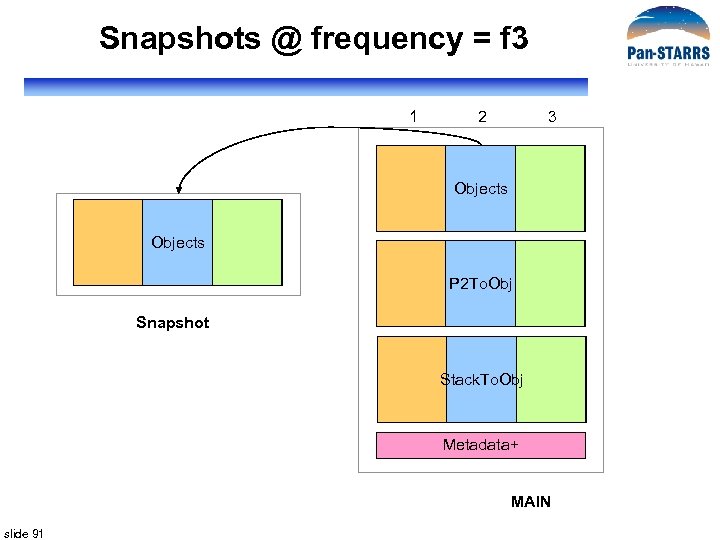

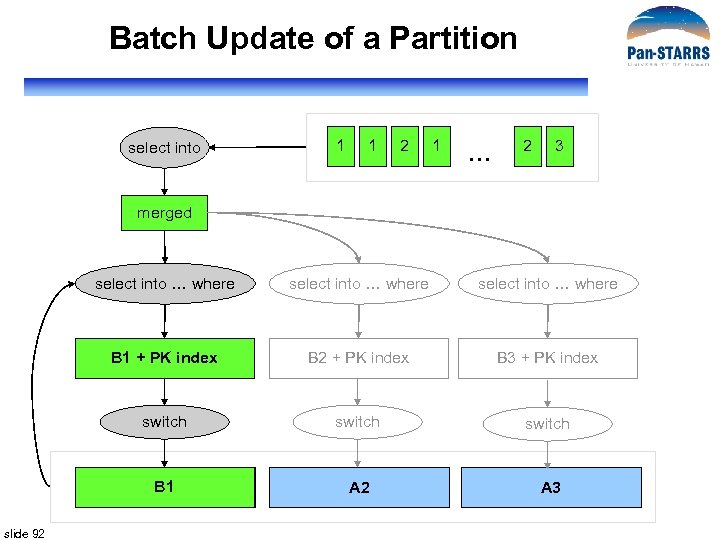

“Insert” & “Update” § § § slide 86 SQL Insert and Update are expensive operations due to logging and re-indexing In the PS 1 design, Insert and Update have been refactored into sequences of: Merge + Constrain + Switch Partition Frequency • f 1: daily • f 2: at least monthly • f 3: TBD (likely to be every 6 months)

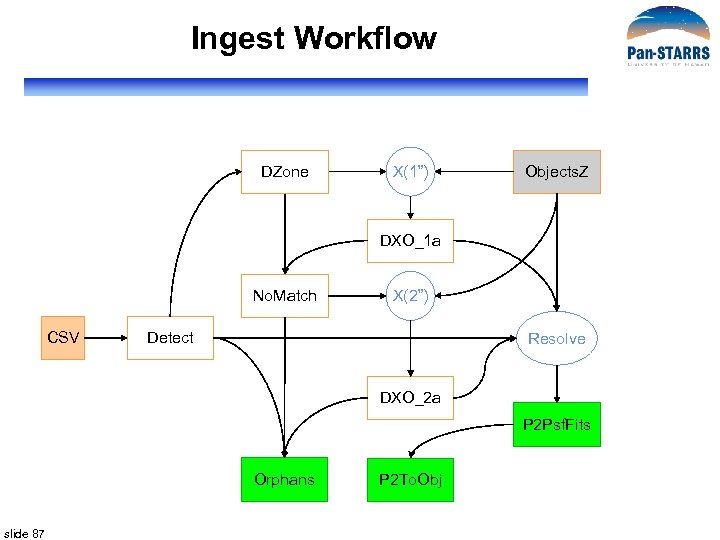

Ingest Workflow DZone X(1”) Objects. Z DXO_1 a No. Match CSV X(2”) Detect Resolve DXO_2 a P 2 Psf. Fits Orphans slide 87 P 2 To. Obj

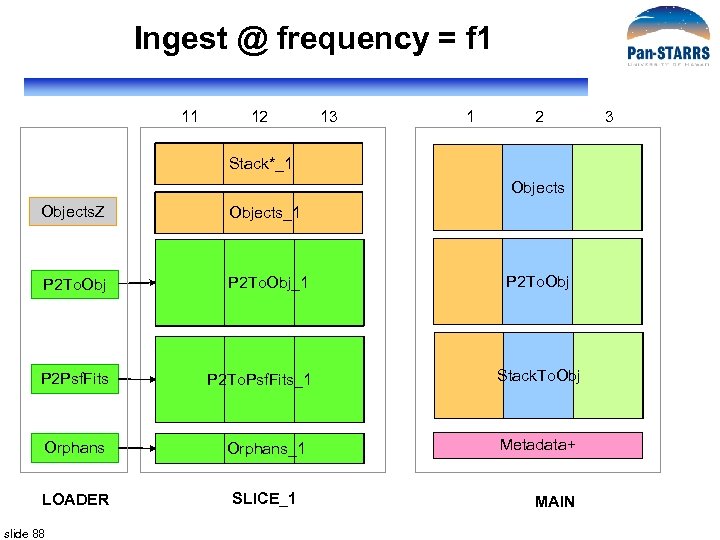

Ingest @ frequency = f 1 11 12 13 1 2 Stack*_1 Objects. Z Objects_1 P 2 To. Obj_1 P 2 Psf. Fits P 2 To. Psf. Fits_1 Stack. To. Obj Orphans_1 Metadata+ LOADER SLICE_1 slide 88 P 2 To. Obj MAIN 3

Updates @ frequency = f 2 11 12 13 1 2 Stack*_1 Objects_1 P 2 To. Obj_1 P 2 To. Psf. Fits_1 slide 89 Stack. To. Obj Orphans_1 LOADER P 2 To. Obj Metadata+ SLICE_1 MAIN 3

Updates @ frequency = f 2 11 12 13 1 2 Stack*_1 Objects_1 P 2 To. Obj_1 P 2 To. Psf. Fits_1 slide 90 Stack. To. Obj Orphans_1 LOADER P 2 To. Obj Metadata+ SLICE_1 MAIN 3

Snapshots @ frequency = f 3 1 2 3 Objects P 2 To. Obj Snapshot Stack. To. Obj Metadata+ MAIN slide 91

Batch Update of a Partition select into 1 1 2 1 … 2 3 merged select into … where B 1 + PK index B 2 + PK index B 3 + PK index switch B 1 A 1 slide 92 select into … where A 2 A 3

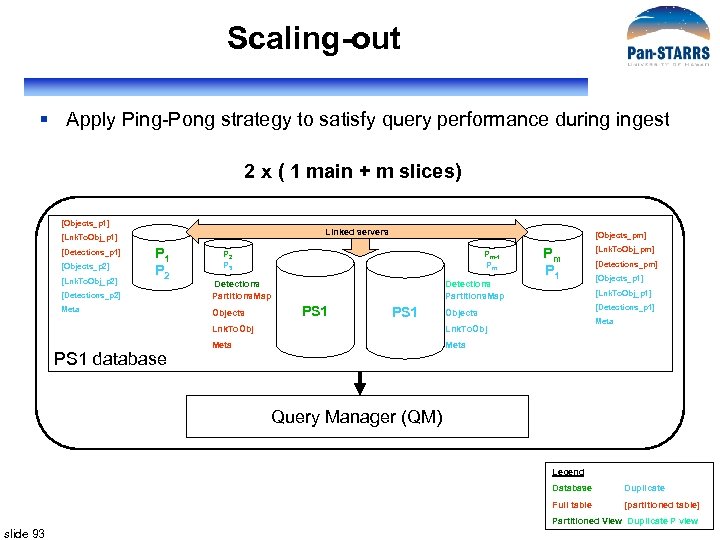

Scaling-out § Apply Ping-Pong strategy to satisfy query performance during ingest 2 x ( 1 main + m slices) [Objects_p 1] Linked servers [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_p 2] [Lnk. To. Obj_p 2] P 1 P 2 [Objects_pm] P 2 P 3 [Detections_p 2] Detections Partitions. Map Meta Objects Pm-1 Pm Detections Partitions. Map PS 1 [Detections_pm] [Objects_p 1] [Detections_p 1] Meta Lnk. To. Obj Meta [Lnk. To. Obj_pm] [Lnk. To. Obj_p 1] Objects Lnk. To. Obj PS 1 database Pm P 1 Meta Query Manager (QM) Legend Database Duplicate Full table [partitioned table] Partitioned View Duplicate P view slide 93

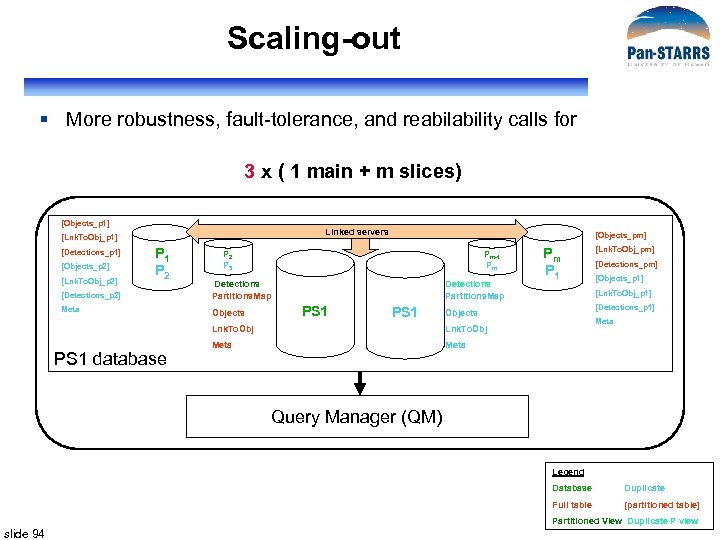

Scaling-out § More robustness, fault-tolerance, and reability calls for 3 x ( 1 main + m slices) [Objects_p 1] Linked servers [Lnk. To. Obj_p 1] [Detections_p 1] [Objects_p 2] [Lnk. To. Obj_p 2] P 1 P 2 [Objects_pm] P 2 P 3 [Detections_p 2] Detections Partitions. Map Meta Objects Pm-1 Pm Detections Partitions. Map PS 1 [Detections_pm] [Objects_p 1] [Detections_p 1] Meta Lnk. To. Obj Meta [Lnk. To. Obj_pm] [Lnk. To. Obj_p 1] Objects Lnk. To. Obj PS 1 database Pm P 1 Meta Query Manager (QM) Legend Database Duplicate Full table [partitioned table] Partitioned View Duplicate P view slide 94

Adding New slices SQL Server range partitioning capabilities make it easy § § § slide 95 Recalculate partitioning limits Transfer data to new slices Remove data from slices Define an d Apply new partitioning schema Add new partitions to main server Apply new partitioning schema to main server

Adding New Slices slide 96

Detail Design Outline § § slide 97 General Concepts Distributed Database architecture Ingest Workflow Prototype

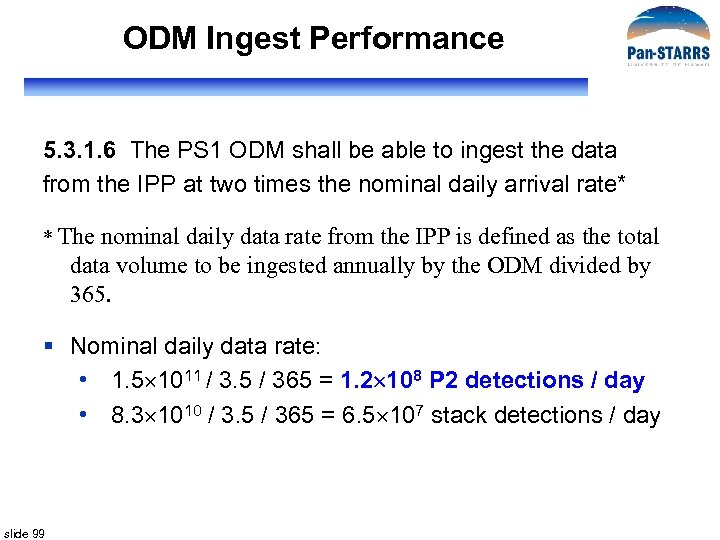

ODM Ingest Performance 5. 3. 1. 6 The PS 1 ODM shall be able to ingest the data from the IPP at two times the nominal daily arrival rate* * The nominal daily data rate from the IPP is defined as the total data volume to be ingested annually by the ODM divided by 365. § Nominal daily data rate: • 1. 5 1011 / 3. 5 / 365 = 1. 2 108 P 2 detections / day • 8. 3 1010 / 3. 5 / 365 = 6. 5 107 stack detections / day slide 99

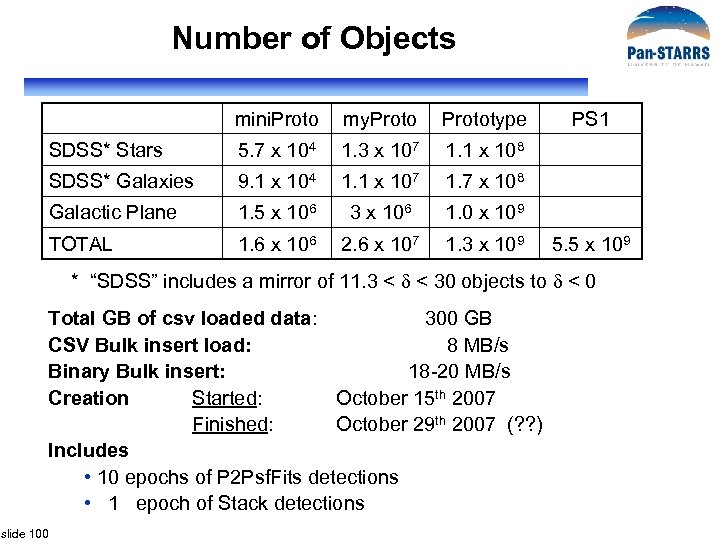

Number of Objects mini. Proto my. Prototype SDSS* Stars 5. 7 x 104 1. 3 x 107 1. 1 x 108 SDSS* Galaxies 9. 1 x 104 1. 1 x 107 1. 7 x 108 Galactic Plane 1. 5 x 106 3 x 106 1. 0 x 109 TOTAL 1. 6 x 106 2. 6 x 107 1. 3 x 109 PS 1 5. 5 x 109 * “SDSS” includes a mirror of 11. 3 < < 30 objects to < 0 Total GB of csv loaded data: 300 GB CSV Bulk insert load: 8 MB/s Binary Bulk insert: 18 -20 MB/s Creation Started: October 15 th 2007 Finished: October 29 th 2007 (? ? ) Includes • 10 epochs of P 2 Psf. Fits detections • 1 epoch of Stack detections slide 100

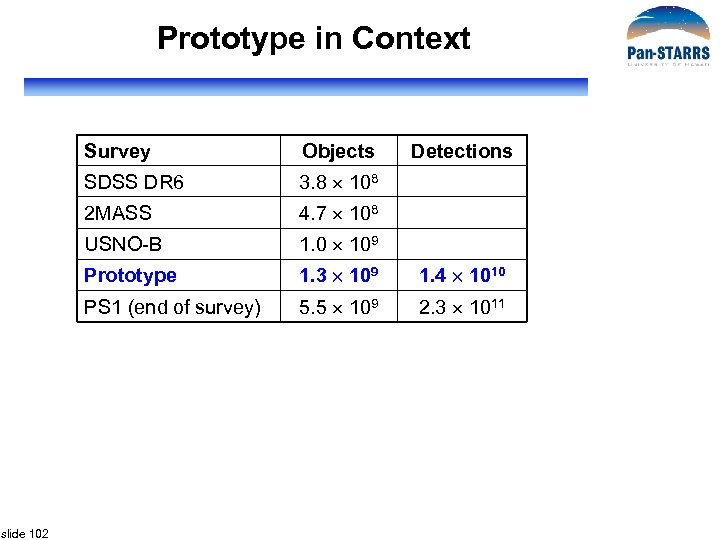

Prototype in Context Survey SDSS DR 6 3. 8 108 2 MASS 4. 7 108 USNO-B 1. 0 109 Prototype 1. 3 109 1. 4 1010 PS 1 (end of survey) slide 102 Objects Detections 5. 5 109 2. 3 1011

Size of Prototype Database Table Main Slice 1 0. 43 Slice 2 0. 43 Slice 3 Loader 0. 43 1. 30 Total Objects 1. 30 3. 89 Stack. Psf. Fits 6. 49 Stack. To. Obj 6. 49 Stack. Model. Fits 0. 87 P 2 Psf. Fits 4. 02 3. 90 3. 35 0. 37 11. 64 P 2 To. Obj 4. 02 3. 90 3. 35 0. 12 11. 39 Total 15. 15 8. 47 8. 23 7. 13 1. 79 40. 77 Extra Tables 0. 87 4. 89 4. 77 4. 22 6. 86 21. 61 Grand Total 16. 02 13. 36 13. 00 11. 35 8. 65 62. 38 Table sizes are in billions of rows slide 103

Size of Prototype Database Table Main Slice 1 Slice 2 Slice 3 Loader Total Objects 547. 6 165. 4 165. 3 137. 1 Stack. Psf. Fits 841. 5 841. 6 Stack. To. Obj 300. 9 Stack. Model. Fits 476. 7 P 2 Psf. Fits 879. 9 853. 0 733. 5 74. 7 2541. 1 P 2 To. Obj 125. 7 121. 9 104. 8 356. 2 2166. 7 1171. 0 1140. 2 1003. 6 215. 6 5697. 1 207. 9 987. 1 960. 2 840. 7 957. 3 3953. 2 Allocated / Free 1878. 0 1223. 0 1300. 0 1121. 0 666. 0 6188. 0 Grand Total 4252. 6 3381. 1 3400. 4 2965. 3 1838. 9 15838. 3 Total Extra Tables Table sizes are in GB slide 104 1180. 6 9. 6 TB of data in a distributed database

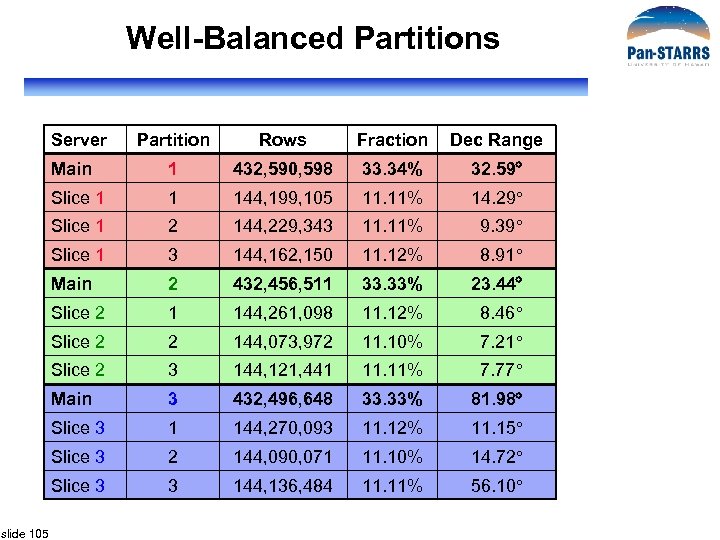

Well-Balanced Partitions Server Rows Fraction Dec Range Main 1 432, 590, 598 33. 34% 32. 59 Slice 1 1 144, 199, 105 11. 11% 14. 29 Slice 1 2 144, 229, 343 11. 11% 9. 39 Slice 1 3 144, 162, 150 11. 12% 8. 91 Main 2 432, 456, 511 33. 33% 23. 44 Slice 2 1 144, 261, 098 11. 12% 8. 46 Slice 2 2 144, 073, 972 11. 10% 7. 21 Slice 2 3 144, 121, 441 11. 11% 7. 77 Main 3 432, 496, 648 33. 33% 81. 98 Slice 3 1 144, 270, 093 11. 12% 11. 15 Slice 3 2 144, 090, 071 11. 10% 14. 72 Slice 3 slide 105 Partition 3 144, 136, 484 11. 11% 56. 10

Ingest and Association Times Task Measured Minutes Create Detections Zone Table 39. 62 X(0. 2") 121 M X 1. 3 B 65. 25 Build #no. Matches Table 1. 50 X(1") 12 k X 1. 3 B 0. 65 Build #all. Matches Table (121 M) 6. 58 Build Orphans Table 0. 17 Create P 2 Psf. Fits Table 11. 63 Create P 2 To. Obj Table 14. 00 Total of Measured Times slide 106 140. 40

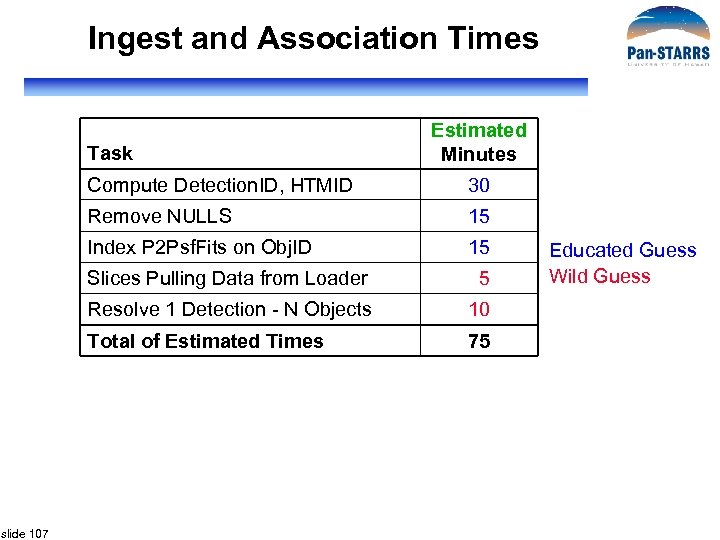

Ingest and Association Times Task Estimated Minutes Compute Detection. ID, HTMID 30 Remove NULLS 15 Index P 2 Psf. Fits on Obj. ID 15 Slices Pulling Data from Loader Resolve 1 Detection - N Objects 10 Total of Estimated Times slide 107 5 75 Educated Guess Wild Guess

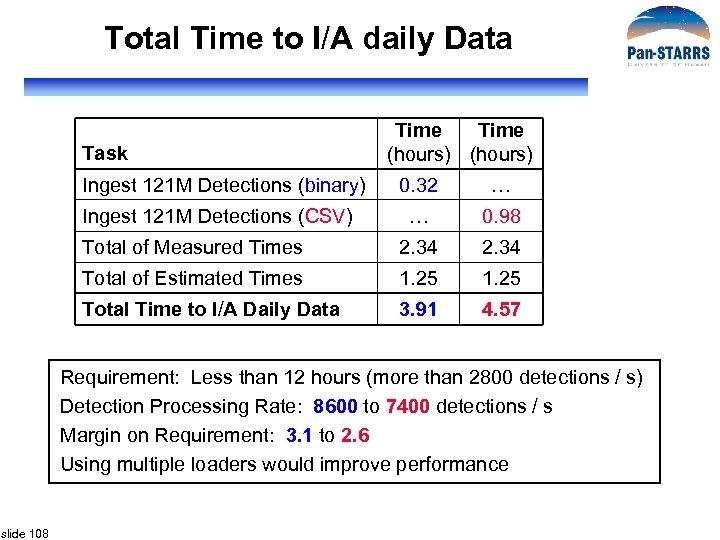

Total Time to I/A daily Data Task Time (hours) 0. 32 0. 98 Total of Measured Times 2. 34 Total of Estimated Times 1. 25 Total Time to I/A Daily Data 3. 91 4. 57 Ingest 121 M Detections (binary) Ingest 121 M Detections (CSV) Requirement: Less than 12 hours (more than 2800 detections / s) Detection Processing Rate: 8600 to 7400 detections / s Margin on Requirement: 3. 1 to 2. 6 Using multiple loaders would improve performance slide 108

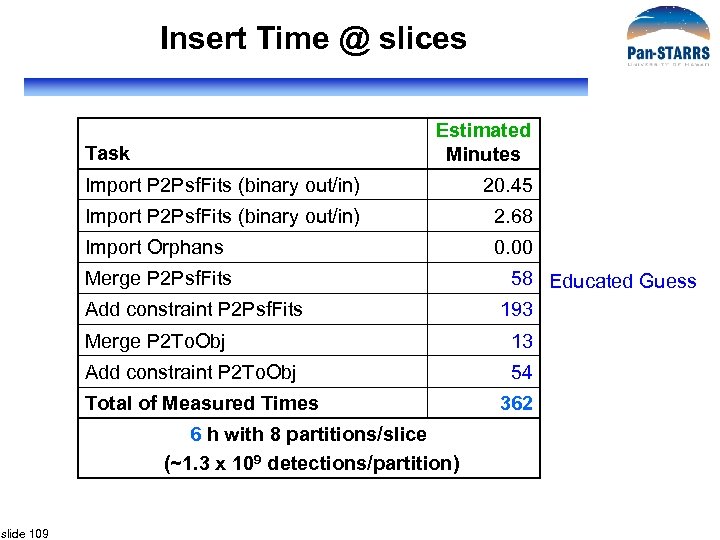

Insert Time @ slices Estimated Minutes Task Import P 2 Psf. Fits (binary out/in) 20. 45 Import P 2 Psf. Fits (binary out/in) 2. 68 Import Orphans 0. 00 Merge P 2 Psf. Fits 58 Educated Guess 193 Add constraint P 2 Psf. Fits Merge P 2 To. Obj 13 Add constraint P 2 To. Obj 54 Total of Measured Times 6 h with 8 partitions/slice (~1. 3 x 109 detections/partition) slide 109 362

Detections Per Partition Years Slices 0. 00 4 8 32 0. 00 1. 0 4. 29 1010 4 8 32 1. 34 109 1. 0 4. 29 1010 8 8 64 6. 7 108 2. 0 8. 57 1010 8 8 64 1. 34 109 2. 0 8. 57 1010 10 8 80 1. 07 109 3. 0 1. 29 1011 10 8 80 1. 61 109 3. 0 1. 29 1011 12 8 96 1. 34 109 3. 5 slide 110 Total Detections 1. 50 1011 12 8 96 1. 56 109 Partition Total Detections per Slice Partitions per Slice

Total Time for Insert @ slice Task Time (hours) Total of Measured Times 0. 25 Total of Estimated Times 5. 3 Total Time for daily insert 6 Daily insert may operate in parallel with daily ingest and association. Requirement: Less than 12 hours Margin on Requirement: 2. 0 Using more slices will improve insert performance. slide 111

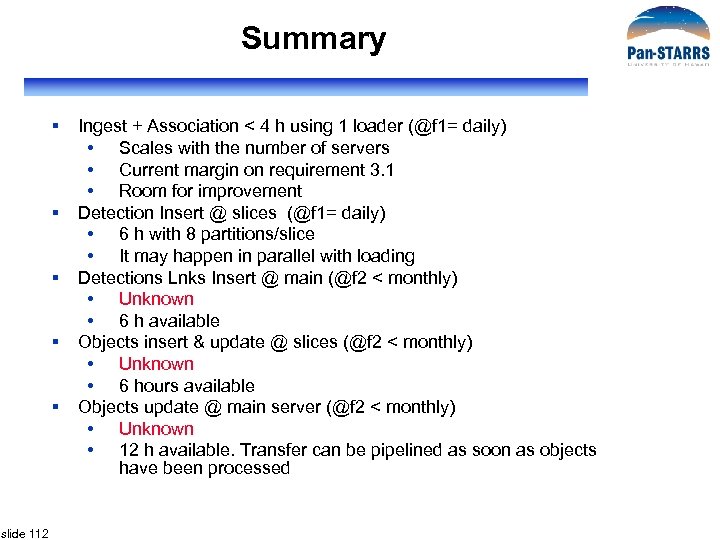

Summary § § § slide 112 Ingest + Association < 4 h using 1 loader (@f 1= daily) • Scales with the number of servers • Current margin on requirement 3. 1 • Room for improvement Detection Insert @ slices (@f 1= daily) • 6 h with 8 partitions/slice • It may happen in parallel with loading Detections Lnks Insert @ main (@f 2 < monthly) • Unknown • 6 h available Objects insert & update @ slices (@f 2 < monthly) • Unknown • 6 hours available Objects update @ main server (@f 2 < monthly) • Unknown • 12 h available. Transfer can be pipelined as soon as objects have been processed

Risks § Estimates of Insert & Update at slices could be underestimated • Need more empirical evaluation of exercising parallel I/O § Estimates and lay out of disk storage could be underestimated • Merges and Indexes require 2 x the data size slide 113

Hardware/Scalability (Jan) slide 114

PS 1 Prototype Systems Design Jan Vandenberg, JHU Early PS 1 Prototype slide 115

Engineering Systems to Support the Database Design § Sequential read performance is our life-blood. Virtually all science queries will be I/O-bound. § ~70 TB raw data: 5. 9 hours for full scan on IBM’s fastest 3. 3 GB/s Champagne-budget SAN • Need 20 GB/s IO engine just to scan the full data in less than an hour. Can’t touch this on a monolith. § Data mining a challenge even with good index coverage • ~14 TB worth of indexes: 4 -odd times bigger than SDSS DR 6. § Hopeless if we rely on any bulk network transfers: must do work where the data is § Loading/Ingest more cpu-bound, though we still need solid write performance slide 116

Choosing I/O Systems § So killer sequential I/O performance is a key systems design goal. Which gear to use? • FC/SAN? • Vanilla SATA? • SAS? slide 117

Fibre Channel, SAN § § § slide 118 Expensive but not-so-fast physical links (4 Gbit, 10 Gbit) Expensive switch Potentially very flexible Industrial strength manageability Little control over RAID controller bottlenecks

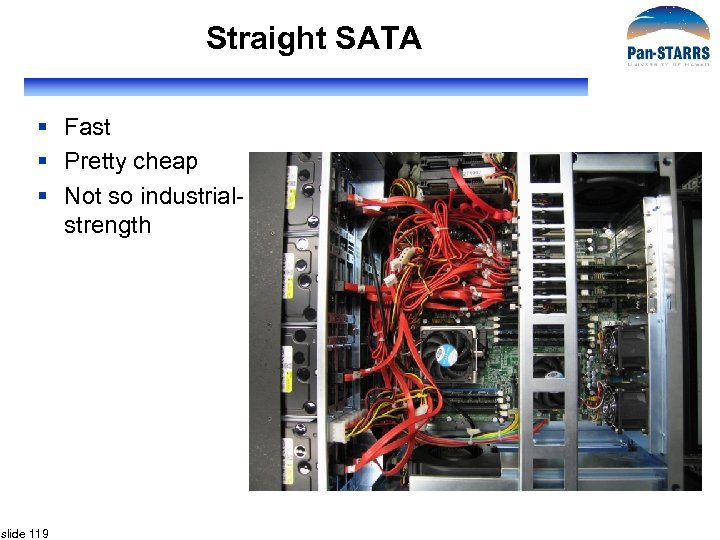

Straight SATA § Fast § Pretty cheap § Not so industrialstrength slide 119

SAS § Fast: 12 Gbit/s FD building blocks § Nice and mature, stable § SCSI’s not just for swanky drives anymore: takes SATA drives! § So we have a way to use SATA without all the “beige”. § Pricey? $4400 for full 15 x 750 GB system ($296/drive == close to Newegg media cost) slide 120

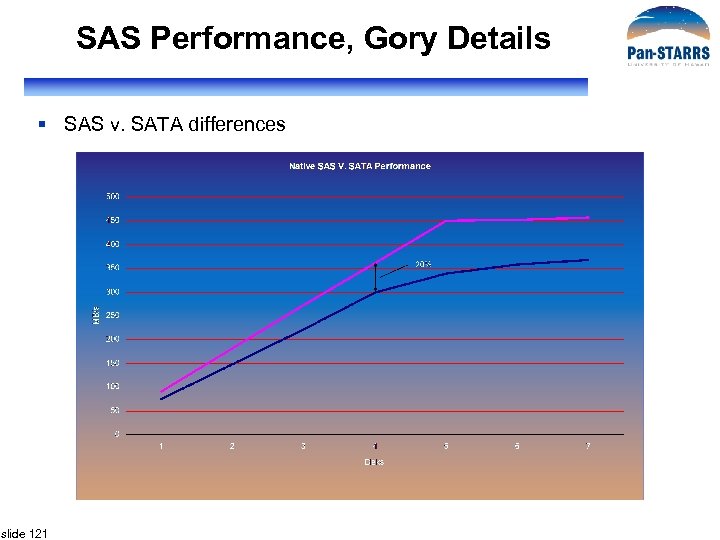

SAS Performance, Gory Details § SAS v. SATA differences slide 121

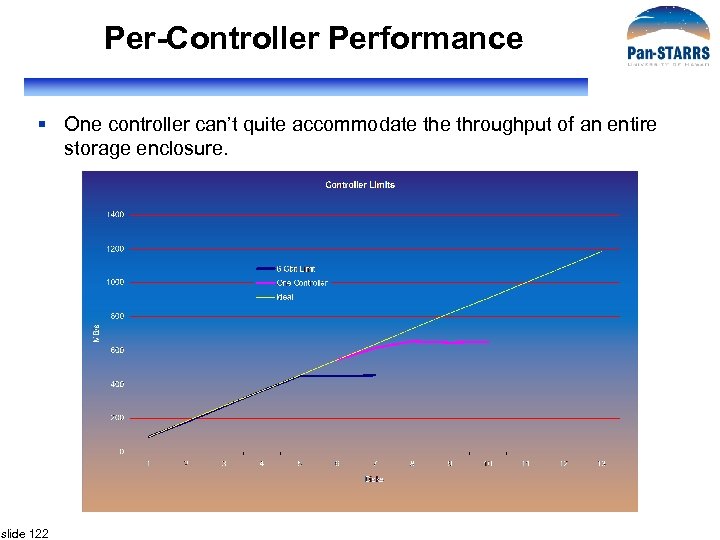

Per-Controller Performance § One controller can’t quite accommodate throughput of an entire storage enclosure. slide 122

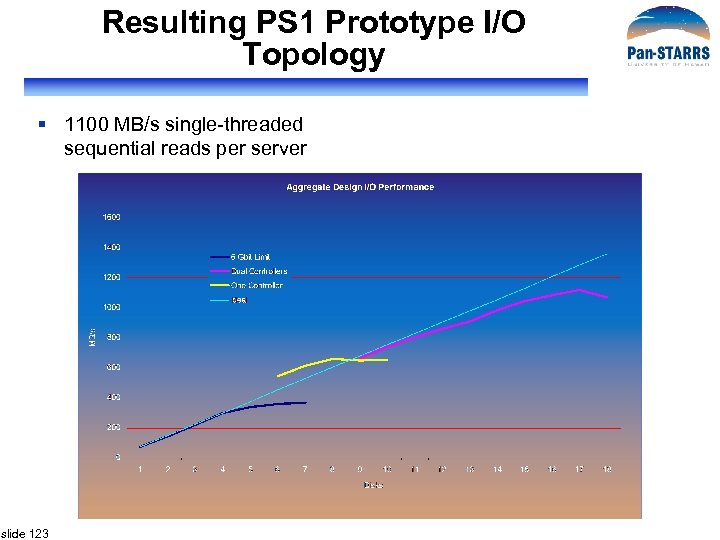

Resulting PS 1 Prototype I/O Topology § 1100 MB/s single-threaded sequential reads per server slide 123

RAID-5 v. RAID-10? § Primer, anyone? § RAID-5 perhaps feasible with contemporary controllers… § …but not a ton of redundancy § But after we add enough disks to meet performance goals, we have enough storage to run RAID-10 anyway! slide 124

RAID-10 Performance § 0. 5*RAID-0 for single-threaded reads § RAID-0 perf for 2 -user/2 -thread workloads § 0. 5*RAID-0 writes slide 125

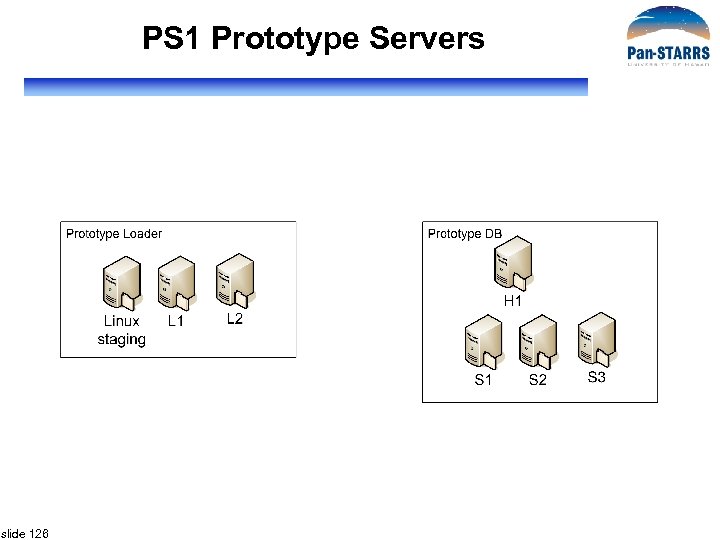

PS 1 Prototype Servers slide 126

PS 1 Prototype Servers PS 1 Prototype slide 127

PS 1 Prototype Servers slide 128

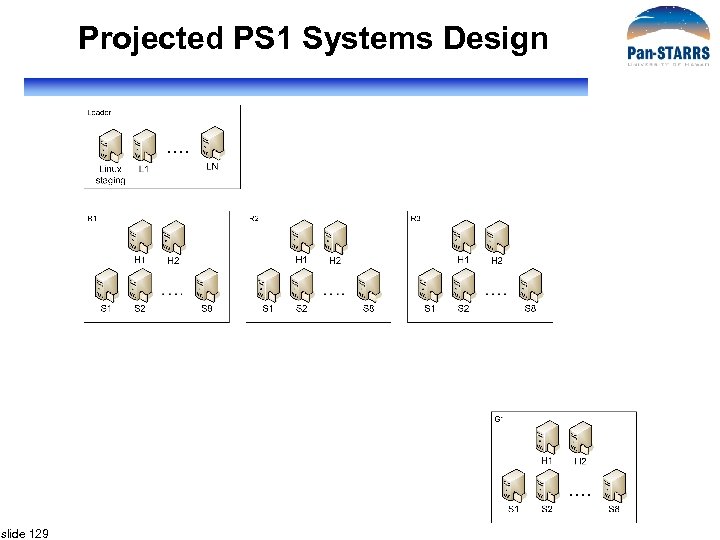

Projected PS 1 Systems Design slide 129

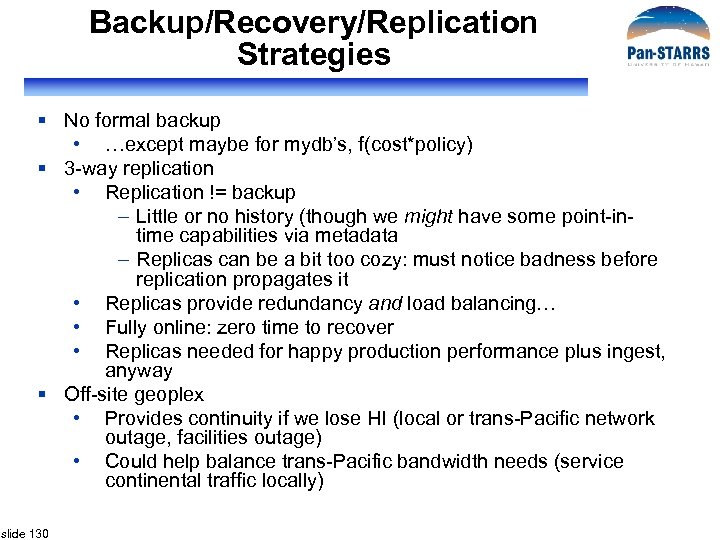

Backup/Recovery/Replication Strategies § No formal backup • …except maybe for mydb’s, f(cost*policy) § 3 -way replication • Replication != backup – Little or no history (though we might have some point-intime capabilities via metadata – Replicas can be a bit too cozy: must notice badness before replication propagates it • Replicas provide redundancy and load balancing… • Fully online: zero time to recover • Replicas needed for happy production performance plus ingest, anyway § Off-site geoplex • Provides continuity if we lose HI (local or trans-Pacific network outage, facilities outage) • Could help balance trans-Pacific bandwidth needs (service continental traffic locally) slide 130

Why No Traditional Backups? § Money no object… do traditional backups too!!! § Synergy, economy of scale with other collaboration needs (IPP? )… do traditional backups too!!! § Not super pricey… § …but not very useful relative to a replica for our purposes • Time to recover slide 131

Failure Scenarios (Easy Ones) § Zero downtime, little effort: • Disks (common) – Simple* hotswap – Automatic rebuild from hotspare or replacement drive • Power supplies (not uncommon) – Simple* hotswap • Fans (pretty common) – Simple* hotswap * Assuming sufficiently non-beige gear slide 132

Failure Scenarios (Mostly Harmless Ones) § Some downtime and replica cutover: • System board (rare) • Memory (rare and usually proactively detected and handled via scheduled maintenance) • Disk controller (rare, potentially minimal downtime via cold-spare controller) • CPU (not utterly uncommon, can be tough and time consuming to diagnose correctly) slide 133

Failure Scenarios (Slightly Spooky Ones) § Database mangling by human or pipeline error • Gotta catch this before replication propagates it everywhere • Need lots of sanity checks before replicating • (and so off-the-shelf near-realtime replication tools don’t help us) • Need to run replication backwards from older, healthy replicas. Probably less automated than healthy replication. § Catastrophic loss of datacenter • Okay, we have the geoplex – …but we’re dangling by a single copy ‘till recovery is complete – …and this may be a while. – …but are we still in trouble? Depending on colo scenarios, did we also lose the IPP and flatfile archive? slide 134

Failure Scenarios (Nasty Ones) § Unrecoverable badness fully replicated before detection § Catastrophic loss of datacenter without geoplex § Can we ever catch back up with the data rate if we need to start over and rebuild with an ingest campaign? Don’t bet on it! slide 135

Operating Systems, DBMS? § Sql 2005 EE x 64 • Why? • Why not DB 2, Oracle RAC, Postgre. SQL, My. SQL, <insert your favorite>? § (Win 2003 EE x 64) § Why EE? Because it’s there. <indexed DPVs? > § Scientific Linux 4. x/5. x, or local favorite § Platform rant from JVV available over beers slide 136

Systems/Database Management § § § slide 137 Active Directory infrastructure Windows patching tools, practices Linux patching tools, practices Monitoring Staffing requirements

Facilities/Infrastructure Projections for PS 1 § Power/cooling • Prototype is 9. 2 k. W (2. 6 Tons AC) • PS 1: something like 43 k. W, 12. 1 Tons § Rack space • Prototype is 69 RU, <2 42 U racks (includes 14 U of rackmount UPS at JHU) • PS 1: about 310 RU (9 -ish racks) § Networking: ~40 Gbit Ethernet ports § …plus sundry infrastructure, ideally already in place (domain controllers, monitoring systems, etc. ) slide 138

Operational Handoff to Uof. H § Gulp. slide 139

How Design Meets Requirements § Cross-matching detections with objects • Zone cross-match part of loading pipeline • Already exceeded requirement with prototype § Query performance • Ping-pong configuration for query during ingest • Spatial indexing and distributed queries • Query manager can be scaled out as necessary § Scalability • Shared-nothing architecture • Scale out as needed • Beyond PS 1 we will need truly parallel query plans slide 140

WBS/Development Tasks 2 PM Refine Prototype/Schema 3 PM Staging/Transformation 1 PM Initial Load 3 PM Load/Resolve Detections 3 PM Resolve/Synchronize Objects 1 PM Create Snapshot 2 PM Query Processing 2 PM Hardware 2 PM Documentation 2 PM Redistribute Data 4 PM slide 141 • Workflow Systems • Logging • Data Scrubbing • SSIS (? ) + C# Replication Module 2 PM 4 PM Testing • QM/Logging Total Effort: Delivery: 35 PM 9/2008

Personnel Available § § § § § slide 142 2 new hires (SW Engineers) 100% Maria 80% Ani 20% Jan 10% Alainna 15% Nolan Li 25% Sam Carliles 25% George Fekete 5% Laszlo Dobos 50% (for 6 months)

Issues/Risks § Versioning • Do we need to preserve snapshots of monthly versions? • How will users reproduce queries on subsequent versions? • Is it ok that a new version of the sky replaces the previous one every month? § Backup/recovery • Will we need 3 local copies rather than 2 for safety • Is restoring from offsite copy feasible? § Handoff to If. A beyond scope of WBS shown • This will involve several PMs slide 143

Mahalo!

Query Manager My. DB table that query results go into Context that query is executed in Load one of the sample queries into query buffer Check query syntax Name that this query job is given Get graphical query plan Query buffer Run query in quick (1 minute) mode Submit query to long (8 hour) queue slide 145

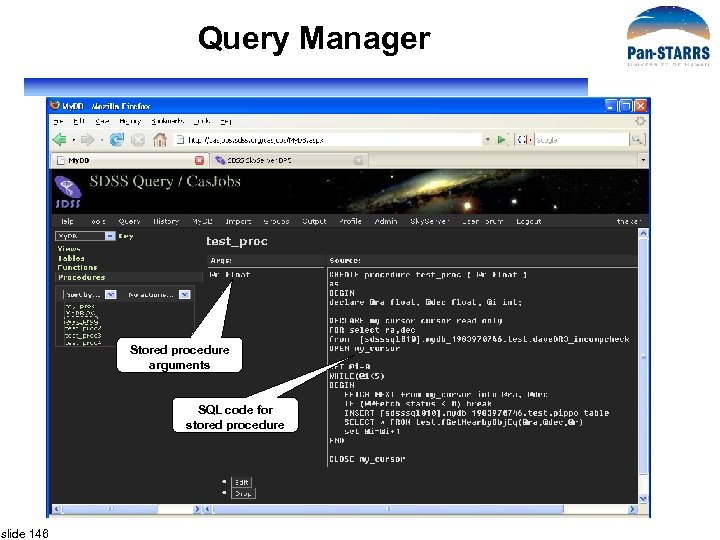

Query Manager Stored procedure arguments SQL code for stored procedure slide 146

Query Manager My. DB context is the default, but other contexts can be selected User can browse DB Views, Tables, Functions and Procedures The space used and total space available Multiple tables can be selected and dropped at once Table list can be sorted by name, size, type. slide 147

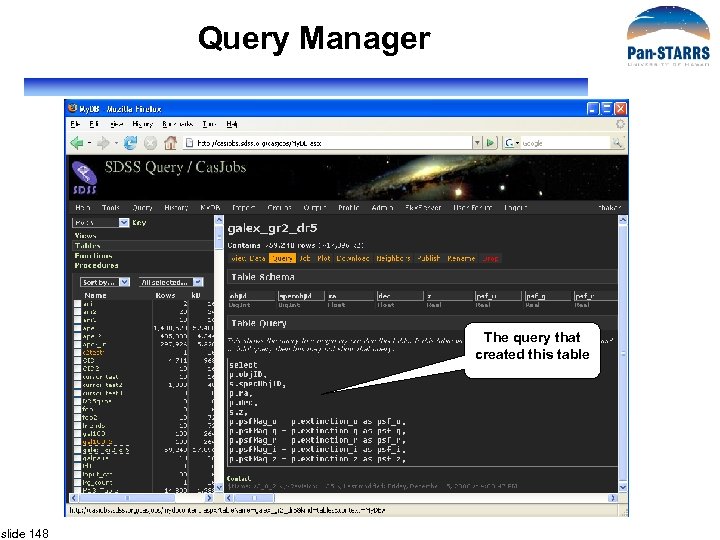

Query Manager The query that created this table slide 148

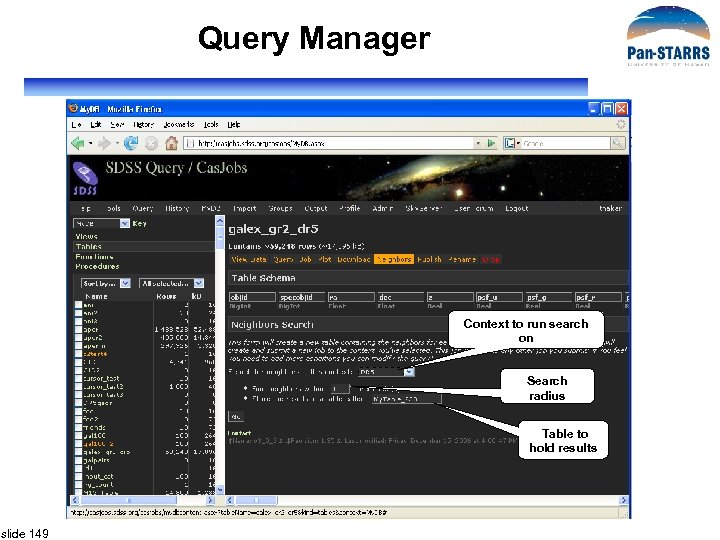

Query Manager Context to run search on Search radius Table to hold results slide 149

b03da9497462b97f49b645d2552bed8c.ppt