
- Number of slides: 121

Programming multithreaded code with OpenMP
Jemmy Hu, SHARCNET HPC Consultant, University of Waterloo
May 31, 2016
/work/jemmyhu/ss2016/openmp/

Contents

• Parallel Programming Concepts
• OpenMP on SHARCNET
• OpenMP
  - Getting Started
  - OpenMP Directives
      Parallel Regions
      Worksharing Constructs
      Data Environment
      Synchronization
      Runtime functions/environment variables
• Case Studies
• Recent Updates (SIMD, Task, …)
• References

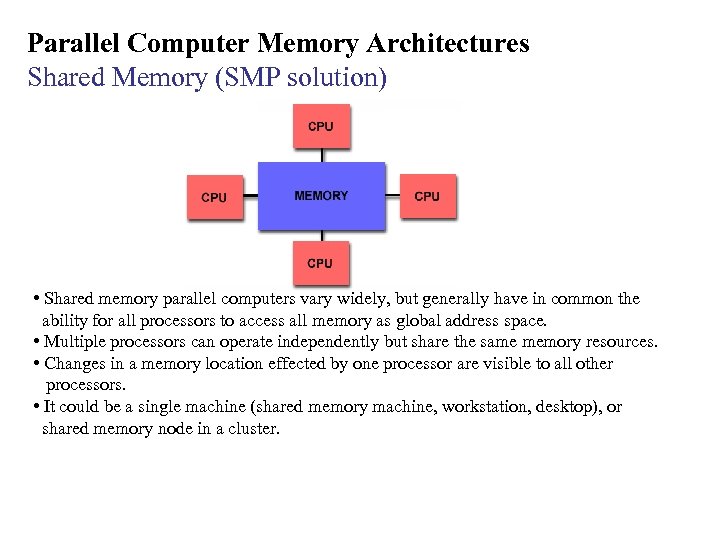

Parallel Computer Memory Architectures: Shared Memory (SMP solution)

• Shared memory parallel computers vary widely, but generally have in common the ability for all processors to access all memory as a global address space.
• Multiple processors can operate independently but share the same memory resources.
• Changes in a memory location effected by one processor are visible to all other processors.
• It could be a single machine (shared memory machine, workstation, desktop), or a shared memory node in a cluster.

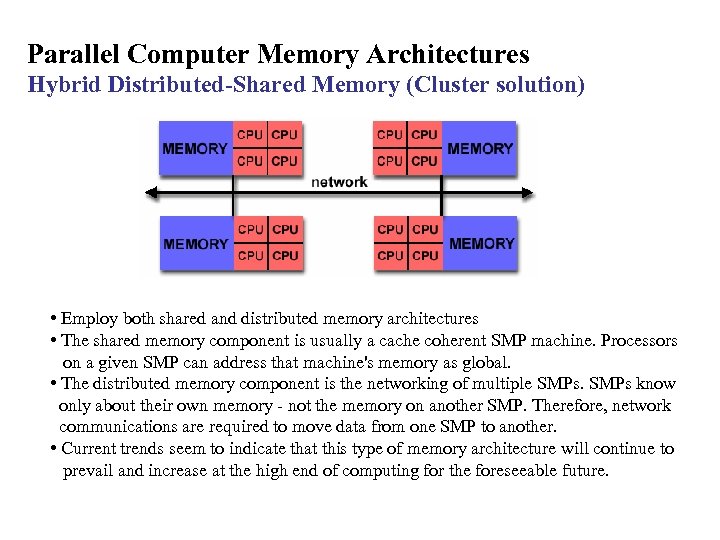

Parallel Computer Memory Architectures: Hybrid Distributed-Shared Memory (Cluster solution)

• Employs both shared and distributed memory architectures.
• The shared memory component is usually a cache coherent SMP machine. Processors on a given SMP can address that machine's memory as global.
• The distributed memory component is the networking of multiple SMPs. SMPs know only about their own memory, not the memory on another SMP. Therefore, network communications are required to move data from one SMP to another.
• Current trends seem to indicate that this type of memory architecture will continue to prevail and increase at the high end of computing for the foreseeable future.

Parallel Computing: What is it?

• Parallel computing is when a program uses concurrency to either:
  - decrease the runtime for the solution to a problem, or
  - increase the size of the problem that can be solved.
  It gives you more performance to throw at your problems.
• Parallel programming is generally not trivial. It has 3 aspects:
  - specifying parallel execution
  - communicating between multiple procs/threads
  - synchronization
  Tools for automated parallelism are either highly specialized or absent.
• Many issues need to be considered, many of which don't have an analog in serial computing:
  - data vs. task parallelism
  - problem structure
  - parallel granularity

Distributed vs. Shared memory model

• Distributed memory systems
  - For processors to share data, the programmer must explicitly arrange for communication: "Message Passing"
  - Message passing libraries:
    • MPI ("Message Passing Interface")
    • PVM ("Parallel Virtual Machine")
• Shared memory systems
  - Compiler directives (OpenMP)
  - "Thread" based programming (pthread, …)

OpenMP Concepts: What is it?

• An Application Program Interface (API) that may be used to explicitly direct multi-threaded, shared memory parallelism.
• It uses compiler directives, library routines and environment variables to automatically generate threaded (or multi-process) code that can run in a concurrent or parallel environment.
• Portable:
  - The API is specified for C/C++ and Fortran.
  - It has been implemented on multiple platforms, including most Unix platforms and Windows NT.
• Standardized: jointly defined and endorsed by a group of major computer hardware and software vendors.
• What does OpenMP stand for? Open Specifications for Multi Processing.

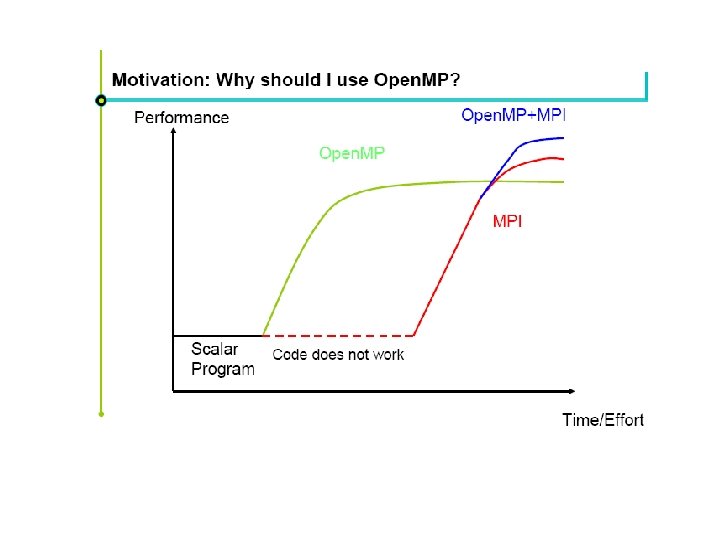

OpenMP: Benefits

• Standardization: Provide a standard among a variety of shared memory architectures/platforms.
• Lean and Mean: Establish a simple and limited set of directives for programming shared memory machines. Significant parallelism can be implemented by using just 3 or 4 directives.
• Ease of Use: Provide the capability to incrementally parallelize a serial program, unlike message-passing libraries, which typically require an all-or-nothing approach.
• Portability: Supports Fortran (77, 90, and 95), C, and C++. Public forum for API and membership.

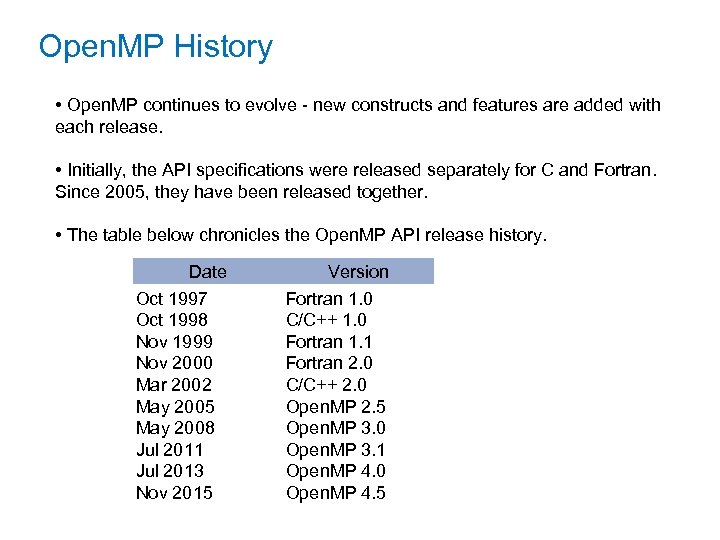

OpenMP History

• OpenMP continues to evolve: new constructs and features are added with each release.
• Initially, the API specifications were released separately for C and Fortran. Since 2005, they have been released together.
• The table below chronicles the OpenMP API release history.

| Date | Version |
|---|---|
| Oct 1997 | Fortran 1.0 |
| Oct 1998 | C/C++ 1.0 |
| Nov 1999 | Fortran 1.1 |
| Nov 2000 | Fortran 2.0 |
| Mar 2002 | C/C++ 2.0 |
| May 2005 | OpenMP 2.5 |
| May 2008 | OpenMP 3.0 |
| Jul 2011 | OpenMP 3.1 |
| Jul 2013 | OpenMP 4.0 |
| Nov 2015 | OpenMP 4.5 |

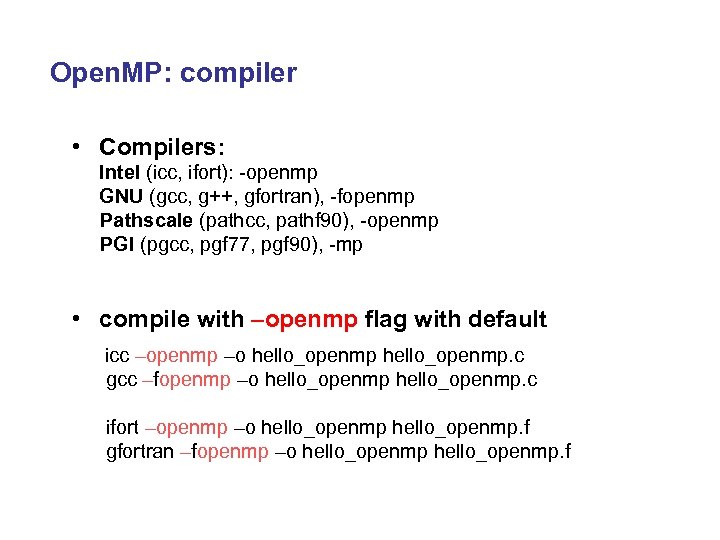

OpenMP: compiler

• Compilers and their OpenMP flags:
  - Intel (icc, ifort): -openmp
  - GNU (gcc, g++, gfortran): -fopenmp
  - Pathscale (pathcc, pathf90): -openmp
  - PGI (pgcc, pgf77, pgf90): -mp
• Compile with the -openmp flag (Intel, the default) or the -fopenmp flag (GNU):

    icc -openmp -o hello_openmp hello_openmp.c
    gcc -fopenmp -o hello_openmp hello_openmp.c
    ifort -openmp -o hello_openmp hello_openmp.f
    gfortran -fopenmp -o hello_openmp hello_openmp.f
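One quick way to confirm that the OpenMP flag actually took effect is to test the standard `_OPENMP` macro, which a conforming compiler defines (as a yyyymm release date) only when OpenMP is enabled. The following is a minimal sketch of that check; the function names `openmp_version_macro` and `report_openmp` are illustrative choices, not part of OpenMP or of the course files.

```c
#include <assert.h>
#include <stdio.h>

/* Returns the value of the standard _OPENMP macro (a yyyymm date such as
 * 201307 for OpenMP 4.0), or 0 when the file was compiled without an
 * OpenMP flag. The function name is hypothetical. */
long openmp_version_macro(void)
{
#ifdef _OPENMP
    long v = (long)_OPENMP;
    /* The first C/C++ specification (1.0) is dated October 1998. */
    assert(v >= 199810L);
    return v;
#else
    return 0L;
#endif
}

/* Prints a one-line report; call it from main() to check a build. */
void report_openmp(void)
{
    long v = openmp_version_macro();
    if (v > 0)
        printf("OpenMP enabled, _OPENMP = %ld\n", v);
    else
        printf("OpenMP disabled: directives are ignored\n");
}
```

Calling `report_openmp()` from a program built once with and once without the flag should show the difference between the two builds.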

OpenMP on SHARCNET

• SHARCNET systems: https://www.sharcnet.ca/my/systems

All systems allow SMP-based parallel programming (i.e., OpenMP) applications, but the size (number of threads) per job differs from cluster to cluster, depending on the number of cores per node on the cluster.

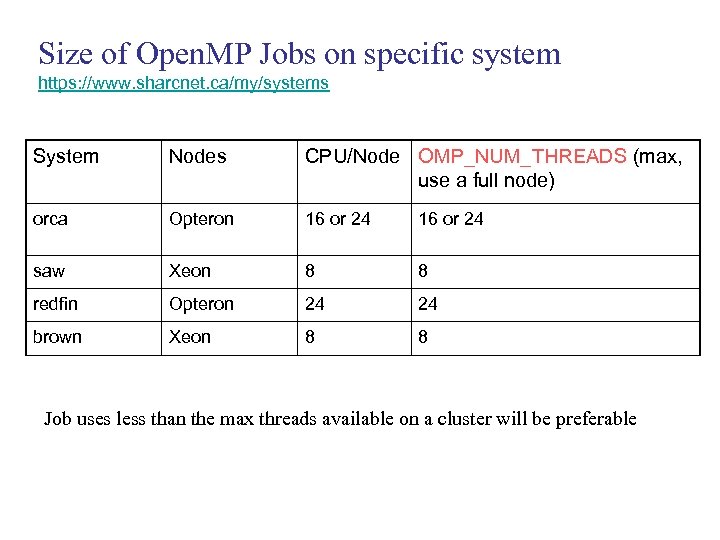

Size of OpenMP Jobs on specific systems
https://www.sharcnet.ca/my/systems

| System | Nodes | CPU/Node | OMP_NUM_THREADS (max, use a full node) |
|---|---|---|---|
| orca | Opteron | 16 or 24 | 16 or 24 |
| saw | Xeon | 8 | 8 |
| redfin | Opteron | 24 | 24 |
| brown | Xeon | 8 | 8 |

Jobs that use fewer than the maximum number of threads available on a cluster are preferable.

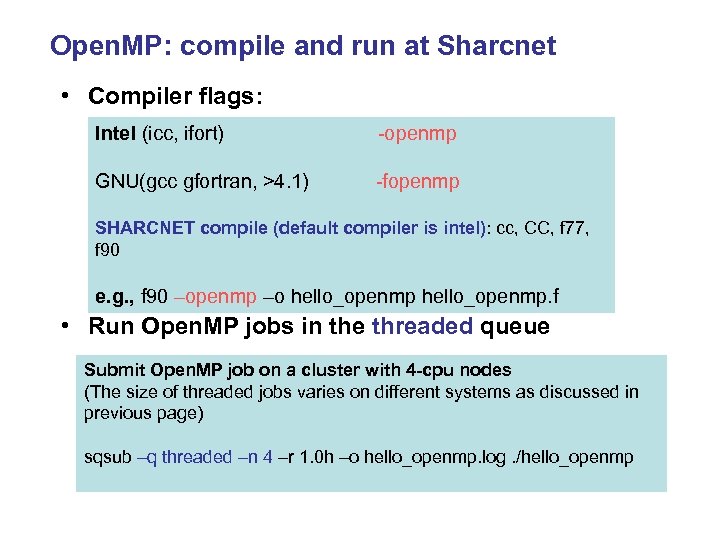

OpenMP: compile and run at SHARCNET

• Compiler flags:
  - Intel (icc, ifort): -openmp
  - GNU (gcc, gfortran, > 4.1): -fopenmp
  - SHARCNET compile wrappers (the default compiler is Intel): cc, CC, f77, f90, e.g.,

    f90 -openmp -o hello_openmp hello_openmp.f

• Run OpenMP jobs in the threaded queue. To submit an OpenMP job on a cluster with 4-cpu nodes (the size of threaded jobs varies between systems, as discussed on the previous page):

    sqsub -q threaded -n 4 -r 1.0h -o hello_openmp.log ./hello_openmp

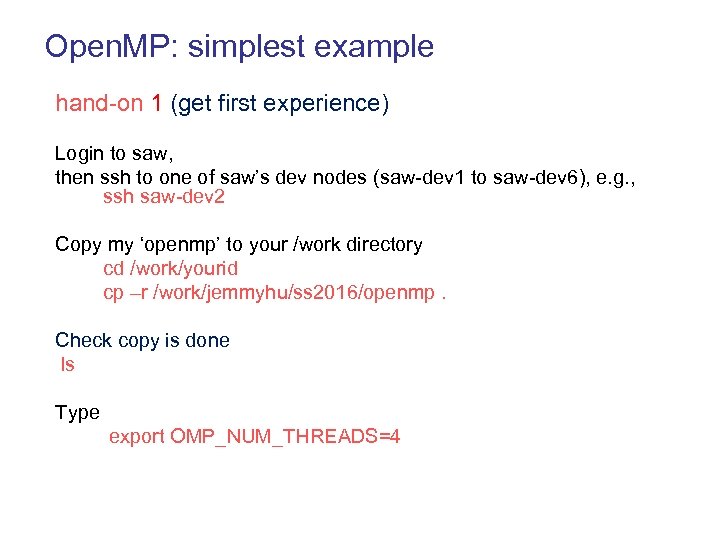

OpenMP: simplest example, hands-on 1 (get first experience)

Log in to saw, then ssh to one of saw's dev nodes (saw-dev1 to saw-dev6), e.g.,

    ssh saw-dev2

Copy my 'openmp' directory to your /work directory:

    cd /work/yourid
    cp -r /work/jemmyhu/ss2016/openmp .

Check that the copy is done:

    ls

Then type:

    export OMP_NUM_THREADS=4

    [jemmyhu@saw332:~/work] cd ss2016/openmp
    [jemmyhu@saw332:~/work/ss2016/openmp] cd hellow

hello-seq.f90:

    program hello
      write(*,*) "Hello, world!"
    end program

    [jemmyhu@saw332:~] f90 -o hello-seq hello-seq.f90
    [jemmyhu@saw332:~] ./hello-seq
    Hello, world!

Add the two 'red' lines (the OpenMP directives) to the above code, save it as 'hello-par1.f90', compile and run:

    program hello
      !$omp parallel
      write(*,*) "Hello, world!"
      !$omp end parallel
    end program

    [jemmyhu@saw332:~] f90 -o hello-par1-seq hello-par1.f90
    [jemmyhu@saw332:~] ./hello-par1-seq
    Hello, world!

Compiled without the OpenMP flag, the compiler ignores the OpenMP directives, so the program still runs serially. The code between the two directives illustrates the parallel region concept.

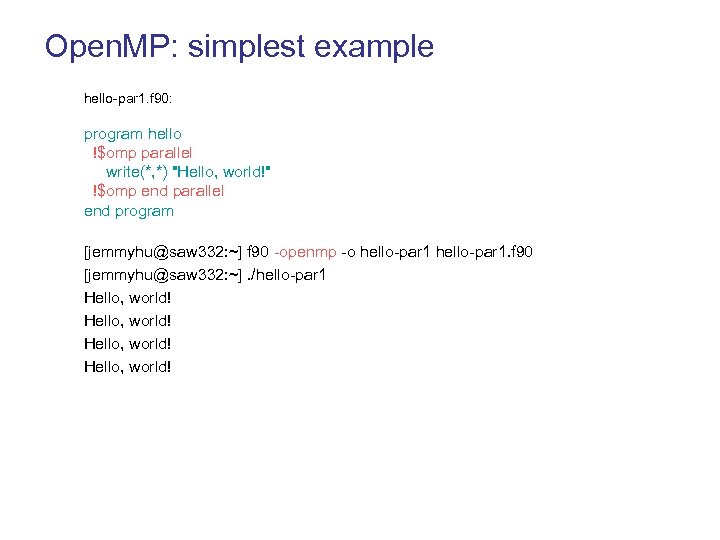

OpenMP: simplest example

hello-par1.f90:

    program hello
      !$omp parallel
      write(*,*) "Hello, world!"
      !$omp end parallel
    end program

    [jemmyhu@saw332:~] f90 -openmp -o hello-par1 hello-par1.f90
    [jemmyhu@saw332:~] ./hello-par1
    Hello, world!

Compiled with -openmp, every thread in the team executes the write statement, so the line appears once per thread.

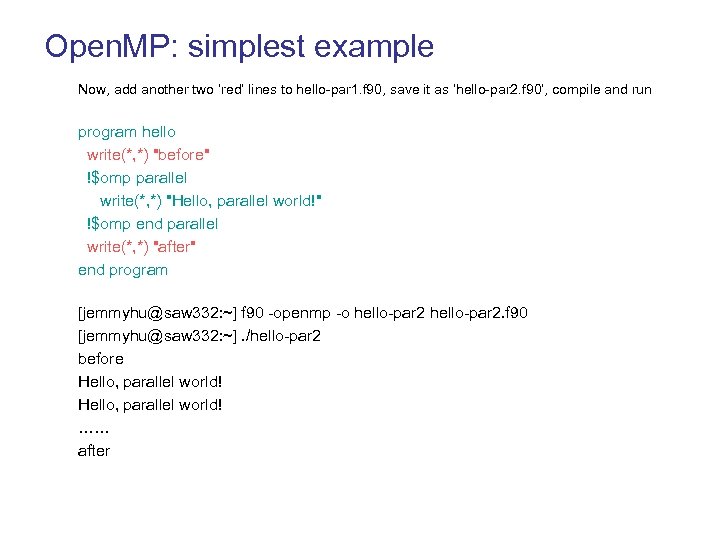

OpenMP: simplest example

Now, add another two 'red' lines to hello-par1.f90, save it as 'hello-par2.f90', compile and run:

    program hello
      write(*,*) "before"
      !$omp parallel
      write(*,*) "Hello, parallel world!"
      !$omp end parallel
      write(*,*) "after"
    end program

    [jemmyhu@saw332:~] f90 -openmp -o hello-par2 hello-par2.f90
    [jemmyhu@saw332:~] ./hello-par2
    before
    Hello, parallel world!
    ……
    after

OpenMP: simplest example

    [jemmyhu@saw332:~] sqsub -q threaded -n 4 -r 1.0h -o hello-par2.log ./hello-par2
    WARNING: no memory requirement defined; assuming 2GB
    submitted as jobid 378196
    [jemmyhu@saw332:~] sqjobs
    jobid  queue state ncpus nodes time command
    ------ ----- ----- ----- ----- ---- -------
    378196 test  Q     4     -     8s   ./hello-par2
    2688 CPUs total, 2474 busy; 435 jobs running; 1 suspended, 1890 queued.
    325 nodes allocated; 11 drain/offline, 336 total.
    Job <3910> is submitted to queue

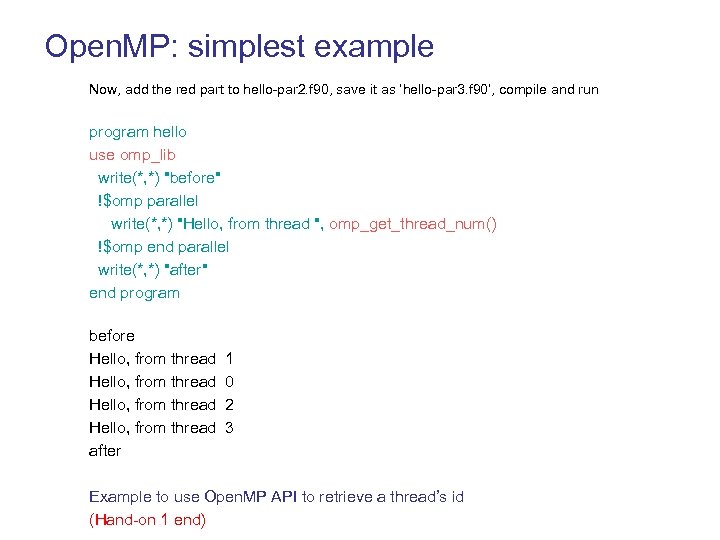

OpenMP: simplest example

Now, add the 'red' part to hello-par2.f90, save it as 'hello-par3.f90', compile and run:

    program hello
      use omp_lib
      write(*,*) "before"
      !$omp parallel
      write(*,*) "Hello, from thread ", omp_get_thread_num()
      !$omp end parallel
      write(*,*) "after"
    end program

Output:

    before
    Hello, from thread 1
    Hello, from thread 0
    Hello, from thread 2
    Hello, from thread 3
    after

This example uses the OpenMP API to retrieve a thread's id. (End of hands-on 1.)

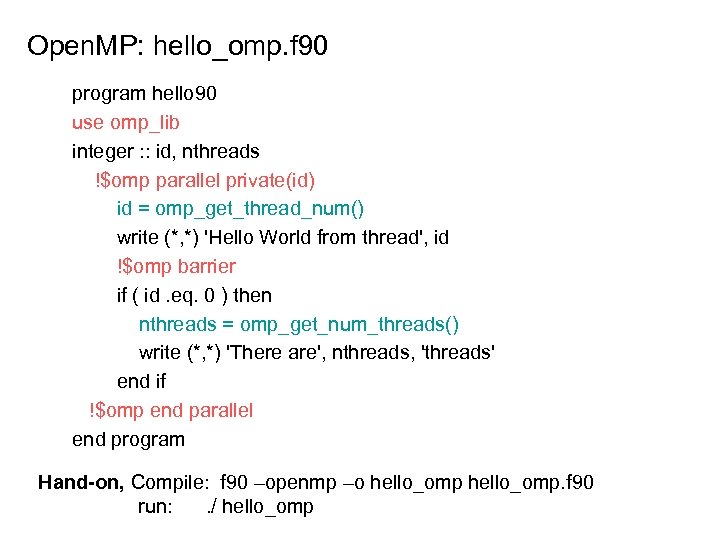

OpenMP: hello_omp.f90

    program hello90
      use omp_lib
      integer :: id, nthreads
      !$omp parallel private(id)
      id = omp_get_thread_num()
      write (*,*) 'Hello World from thread', id
      !$omp barrier
      if ( id .eq. 0 ) then
        nthreads = omp_get_num_threads()
        write (*,*) 'There are', nthreads, 'threads'
      end if
      !$omp end parallel
    end program

Hands-on:

    Compile: f90 -openmp -o hello_omp hello_omp.f90
    Run:     ./hello_omp

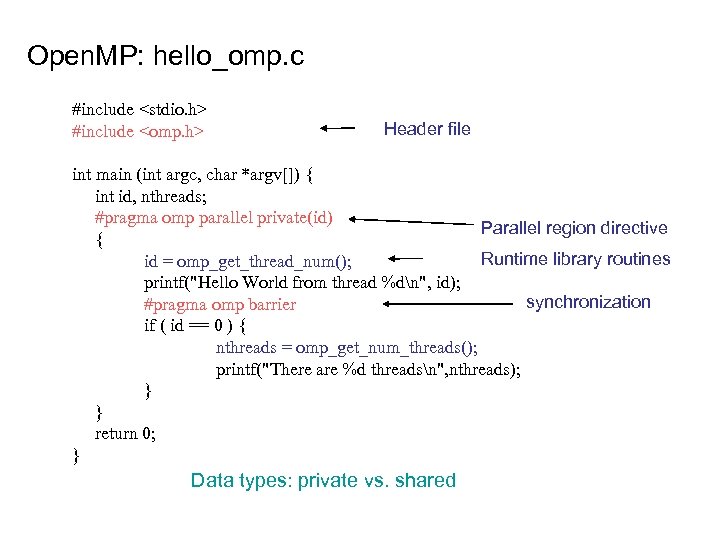

OpenMP: hello_omp.c

    #include
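The C listing for this slide is cut off after `#include`. As a stand-in, here is a minimal sketch of what a C counterpart to hello_omp.f90 could look like. It is not the author's hello_omp.c; the function name `hello_omp` and the serial fallback stubs (used only when the file is built without an OpenMP flag) are assumptions made for illustration.

```c
#include <assert.h>
#include <stdio.h>
#ifdef _OPENMP
#include <omp.h>
#else
/* Serial fallbacks so the sketch also builds without an OpenMP flag. */
static inline int omp_get_thread_num(void)  { return 0; }
static inline int omp_get_num_threads(void) { return 1; }
#endif

/* Every thread prints a greeting; after the barrier, thread 0 reports
 * the team size. Returns the team size seen by thread 0. */
int hello_omp(void)
{
    int nthreads = 0;   /* shared, written only by thread 0 */

    #pragma omp parallel
    {
        /* Declared inside the region, so each thread has its own copy. */
        int id = omp_get_thread_num();
        printf("Hello World from thread %d\n", id);

        #pragma omp barrier
        if (id == 0) {
            nthreads = omp_get_num_threads();
            printf("There are %d threads\n", nthreads);
        }
    }

    assert(nthreads >= 1);   /* thread 0 always exists */
    return nthreads;
}
```

Declaring `id` inside the parallel block plays the role of the `private(id)` clause in the Fortran version.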

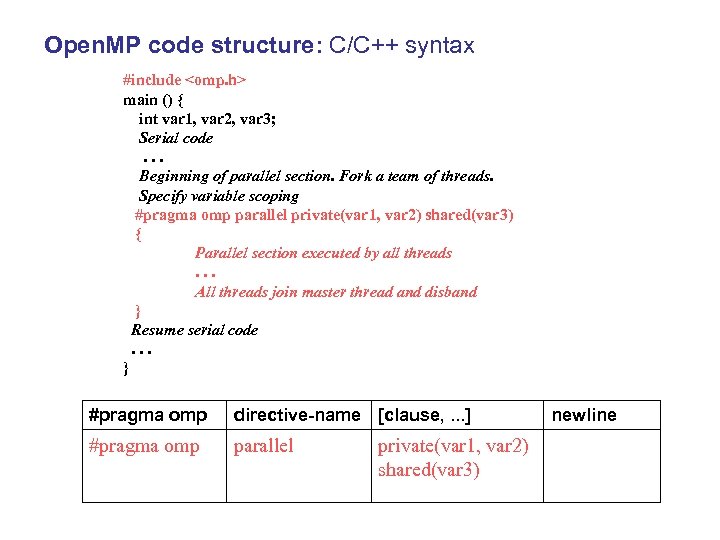

OpenMP code structure: C/C++ syntax

    #include
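The C/C++ listing for this slide is cut off after `#include`. The sketch below shows the general shape of an OpenMP program in C, following the same serial / fork / parallel / join outline as the Fortran skeleton in this deck. The function name `code_structure_demo` and the arithmetic inside the region are placeholders chosen for illustration, not the author's code.

```c
#include <assert.h>

/* Serial code, a parallel region with explicit variable scoping, then
 * serial code again. Returns the value of the shared variable. */
int code_structure_demo(void)
{
    int var1, var2, var3;

    /* Serial code */
    var3 = 10;

    /* Beginning of parallel region: fork a team of threads and
     * specify variable scoping. */
    #pragma omp parallel private(var1, var2) shared(var3)
    {
        /* Executed by all threads. Each thread has its own var1 and
         * var2, which are uninitialised on entry, so assign them here. */
        var1 = var3 + 1;
        var2 = 2 * var1;
        (void)var2;
    }   /* All threads join the master thread and disband */

    /* Resume serial code: var3 was only read inside the region. */
    assert(var3 == 10);
    return var3;
}
```

In C the structured block is delimited by braces, so there is no counterpart to the Fortran `!$OMP END PARALLEL` line.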

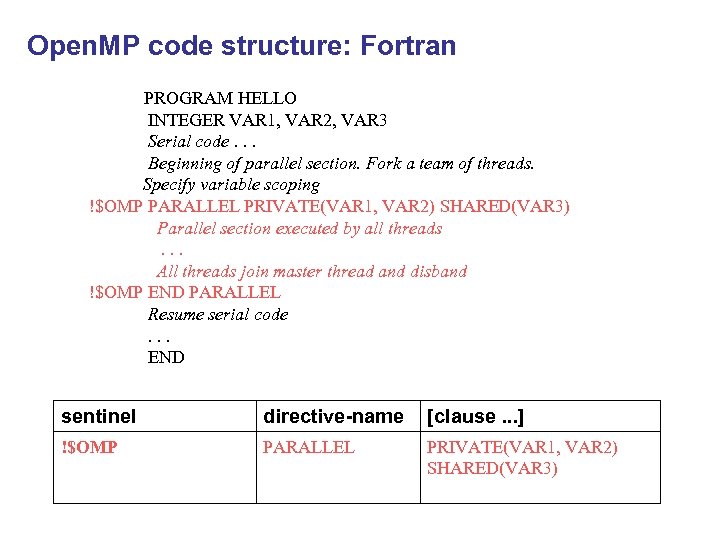

OpenMP code structure: Fortran

          PROGRAM HELLO
          INTEGER VAR1, VAR2, VAR3
    !     Serial code
          ...
    !     Beginning of parallel section. Fork a team of threads.
    !     Specify variable scoping
    !$OMP PARALLEL PRIVATE(VAR1, VAR2) SHARED(VAR3)
    !     Parallel section executed by all threads
          ...
    !     All threads join master thread and disband
    !$OMP END PARALLEL
    !     Resume serial code
          ...
          END

Directive format: sentinel directive-name [clause ...]

    !$OMP PARALLEL PRIVATE(VAR1, VAR2) SHARED(VAR3)

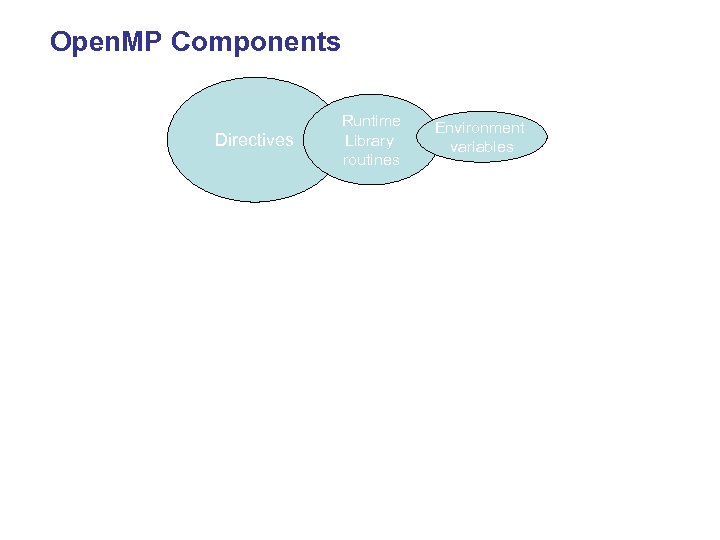

OpenMP Components

• Directives
• Runtime library routines
• Environment variables

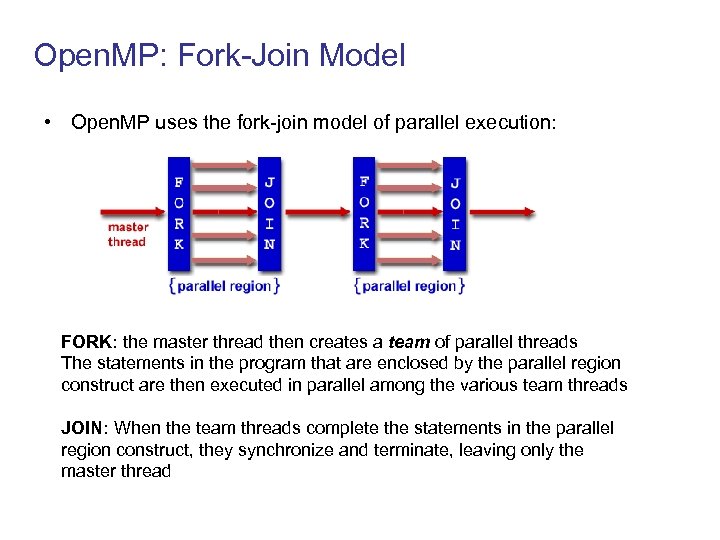

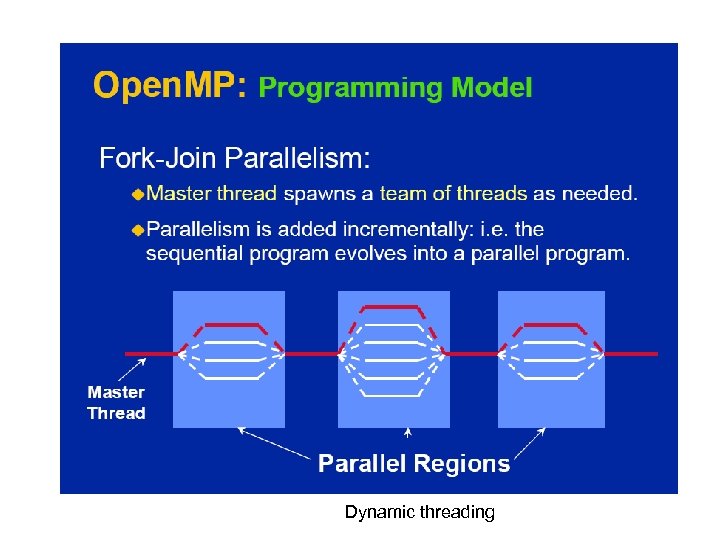

OpenMP: Fork-Join Model

• OpenMP uses the fork-join model of parallel execution:
  - FORK: on reaching a parallel region construct, the master thread creates a team of parallel threads. The statements in the program that are enclosed by the parallel region construct are then executed in parallel among the various team threads.
  - JOIN: when the team threads complete the statements in the parallel region construct, they synchronize and terminate, leaving only the master thread.
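The fork and join points can be made visible with a counter that every team member increments once. This is a minimal sketch under the assumption that counting threads is a reasonable way to observe the model; the function name `fork_join_demo` is hypothetical.

```c
#include <assert.h>
#include <stdio.h>

/* Counts how many threads executed the parallel region.
 * Returns that count (1 when built without an OpenMP flag). */
int fork_join_demo(void)
{
    int in_region = 0;

    printf("before: master thread only\n");

    #pragma omp parallel          /* FORK: a team of threads is created */
    {
        #pragma omp atomic        /* protect the shared counter */
        in_region++;
    }                             /* JOIN: implicit barrier, team disbands */

    printf("after: master thread only, %d thread(s) ran the region\n",
           in_region);
    assert(in_region >= 1);
    return in_region;
}
```

With `OMP_NUM_THREADS=4` and an OpenMP build, the reported count would normally be 4; the "before" and "after" lines are printed once because they lie outside the region.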

Shared Memory Model


OpenMP Directives

Basic Directive Formats

Fortran: directives come in pairs

    !$OMP directive [clause, …]
          [ structured block of code ]
    !$OMP end directive

C/C++: case sensitive

    #pragma omp directive [clause, …] newline
          [ structured block of code ]

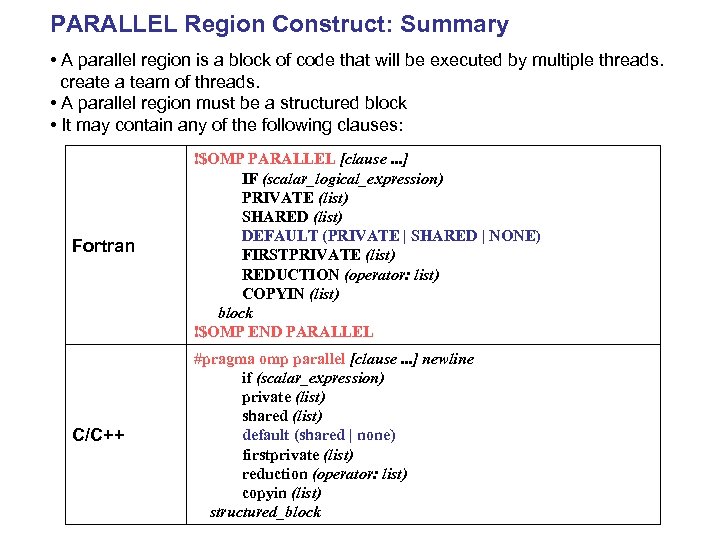

PARALLEL Region Construct: Summary

• A parallel region is a block of code that will be executed by multiple threads. The PARALLEL directive creates a team of threads.
• A parallel region must be a structured block.
• It may contain any of the following clauses:

Fortran

    !$OMP PARALLEL [clause ...]
          IF (scalar_logical_expression)
          PRIVATE (list)
          SHARED (list)
          DEFAULT (PRIVATE | SHARED | NONE)
          FIRSTPRIVATE (list)
          REDUCTION (operator: list)
          COPYIN (list)
       block
    !$OMP END PARALLEL

C/C++

    #pragma omp parallel [clause ...] newline
          if (scalar_expression)
          private (list)
          shared (list)
          default (shared | none)
          firstprivate (list)
          reduction (operator: list)
          copyin (list)
       structured_block
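To see several of these clauses working together, the sketch below sums the integers 1..n inside a parallel region using `default(none)`, `shared`, `firstprivate` and `reduction`. The function name `sum_with_clauses` and the variable `offset` are made up for this illustration.

```c
#include <assert.h>

/* Returns 1 + 2 + ... + n, computed inside a parallel region. */
long sum_with_clauses(int n)
{
    long sum = 0;     /* reduction variable: one private copy per thread,
                         combined with + when the region ends */
    int offset = 1;   /* firstprivate: each thread's copy starts at 1 */

    assert(n >= 0);

    #pragma omp parallel default(none) shared(n) firstprivate(offset) reduction(+:sum)
    {
        /* The loop index is declared inside the region, so it is
         * private without being listed in a clause. */
        #pragma omp for
        for (int i = 0; i < n; i++)
            sum += i + offset;
    }
    return sum;
}
```

`default(none)` forces every variable referenced in the region to be scoped explicitly, which tends to catch accidental sharing at compile time.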

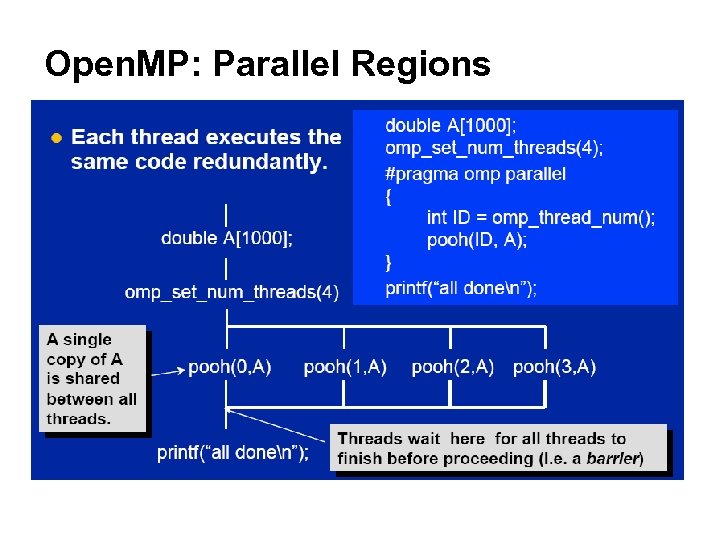

OpenMP: Parallel Regions

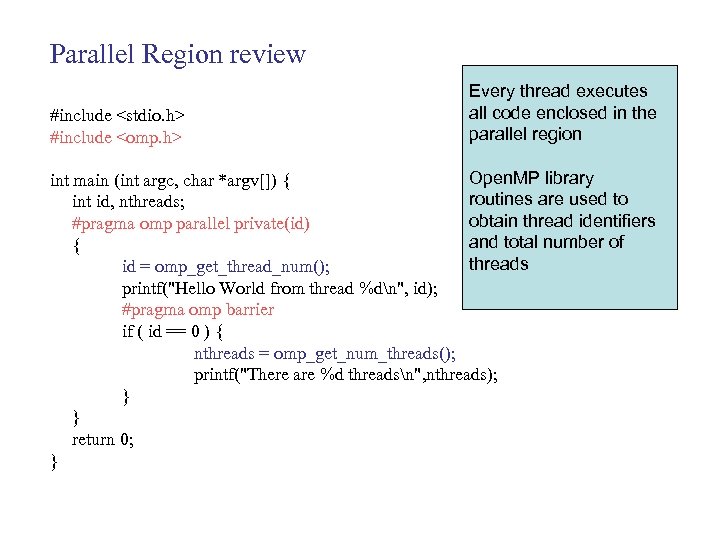

Parallel Region review

    #include

Example: Matrix-Vector Multiplication

A[n,n] x B[n] = C[n]

    for (i=0; i < SIZE; i++)
    {
      for (j=0; j < SIZE; j++)
        c[i] += (A[i][j] * b[j]);
    }

    | 2 1 0 4 |   | 1 |   |  9 |
    | 3 2 1 1 | x | 3 | = | 14 |
    | 4 3 1 2 |   | 4 |   | 19 |
    | 3 0 2 0 |   | 1 |   | 11 |

Question: Can we simply add one parallel directive?

    #pragma omp parallel
    for (i=0; i < SIZE; i++)
    {
      for (j=0; j < SIZE; j++)
        c[i] += (A[i][j] * b[j]);
    }

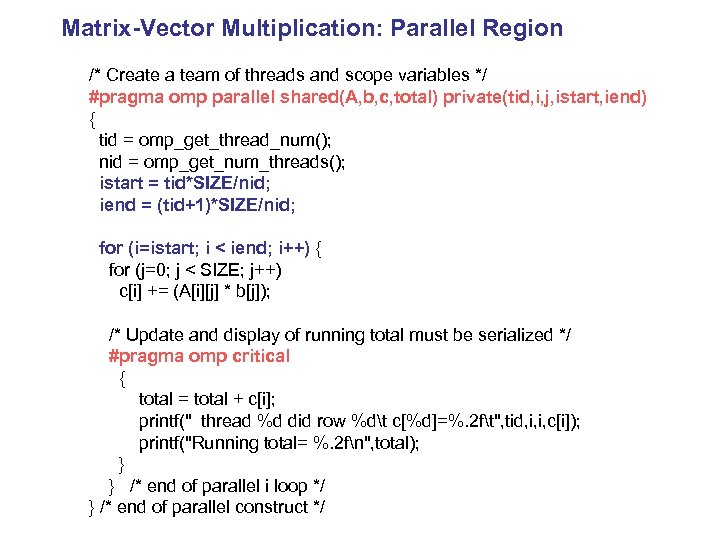

Matrix-Vector Multiplication: Parallel Region

    /* Create a team of threads and scope variables */
    #pragma omp parallel shared(A, b, c, total) private(tid, nid, i, j, istart, iend)
    {
      tid = omp_get_thread_num();
      nid = omp_get_num_threads();
      istart = tid*SIZE/nid;
      iend = (tid+1)*SIZE/nid;

      for (i=istart; i < iend; i++)
      {
        for (j=0; j < SIZE; j++)
          c[i] += (A[i][j] * b[j]);

        /* Update and display of running total must be serialized */
        #pragma omp critical
        {
          total = total + c[i];
          printf(" thread %d did row %d\t c[%d]=%.2f\t", tid, i, i, c[i]);
          printf("Running total= %.2f\n", total);
        }
      } /* end of parallel i loop */
    } /* end of parallel construct */
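The manual row partition (`istart = tid*SIZE/nid`, `iend = (tid+1)*SIZE/nid`) is the part of this version that is easiest to get wrong, so it can help to check it in isolation. The sketch below isolates that arithmetic in a helper and verifies that the per-thread ranges tile all rows with no gap or overlap. The function names `row_range` and `ranges_cover_all_rows` are hypothetical.

```c
#include <assert.h>

/* Computes the half-open row range [*istart, *iend) handled by thread
 * `tid` out of `nid` threads, for a matrix with `size` rows. */
void row_range(int tid, int nid, int size, int *istart, int *iend)
{
    assert(nid > 0 && tid >= 0 && tid < nid && size >= 0);
    *istart = tid * size / nid;
    *iend   = (tid + 1) * size / nid;
}

/* Returns 1 if the ranges of all threads cover rows 0..size-1 exactly
 * once, in order, and 0 otherwise. */
int ranges_cover_all_rows(int nid, int size)
{
    int expected_start = 0;
    for (int tid = 0; tid < nid; tid++) {
        int s, e;
        row_range(tid, nid, size, &s, &e);
        if (s != expected_start || e < s)
            return 0;
        expected_start = e;
    }
    return expected_start == size;
}
```

For example, 10 rows over 3 threads gives the ranges [0,3), [3,6) and [6,10), so the last thread absorbs the remainder when the row count is not a multiple of the team size.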

OpenMP: Work-sharing constructs

• A work-sharing construct divides the execution of the enclosed code region among the members of the team that encounter it.
• Work-sharing constructs do not launch new threads.
• There is no implied barrier upon entry to a work-sharing construct; however, there is an implied barrier at the end of a work-sharing construct.

A motivating example


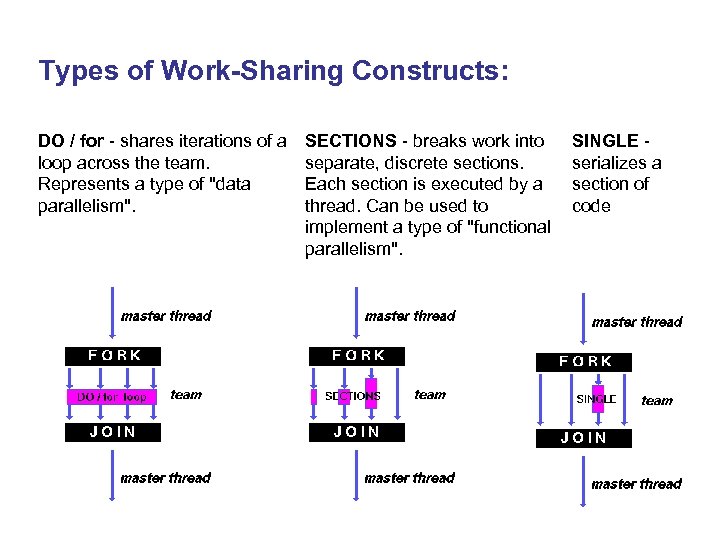

Types of Work-Sharing Constructs

• DO / for: shares iterations of a loop across the team. Represents a type of "data parallelism".
• SECTIONS: breaks work into separate, discrete sections. Each section is executed by a thread. Can be used to implement a type of "functional parallelism".
• SINGLE: serializes a section of code.

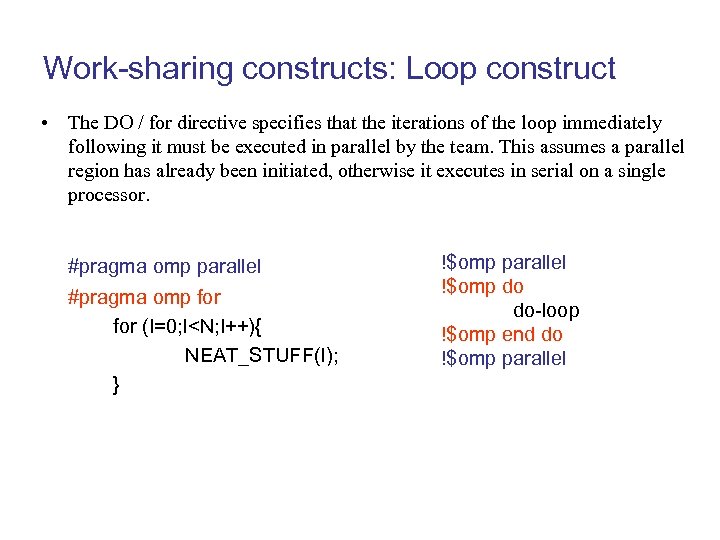

Work-sharing constructs: Loop construct

• The DO / for directive specifies that the iterations of the loop immediately following it must be executed in parallel by the team. This assumes a parallel region has already been initiated; otherwise the loop executes serially on a single processor.

    #pragma omp parallel
    #pragma omp for
    for (I=0; I
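The loop on this slide is cut off in this copy. As an illustration of the same construct, here is a minimal sketch of a `for` work-sharing loop inside a parallel region, using an element-wise vector addition. The function name `vector_add` and its signature are assumptions for the example.

```c
#include <assert.h>
#include <stddef.h>

/* Adds b[i] to a[i] for i in [0, n). The iterations are divided among
 * the threads of the team by the for work-sharing construct. */
void vector_add(double *a, const double *b, int n)
{
    assert(a != NULL && b != NULL && n >= 0);

    #pragma omp parallel          /* create the team */
    {
        #pragma omp for           /* share the iterations among the team */
        for (int i = 0; i < n; i++)
            a[i] += b[i];
    }   /* implied barrier at the end of the for, then the team joins */
}
```

Each iteration touches a different element, so no synchronization is needed inside the loop. The two directives could also be merged into the combined form `#pragma omp parallel for`.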

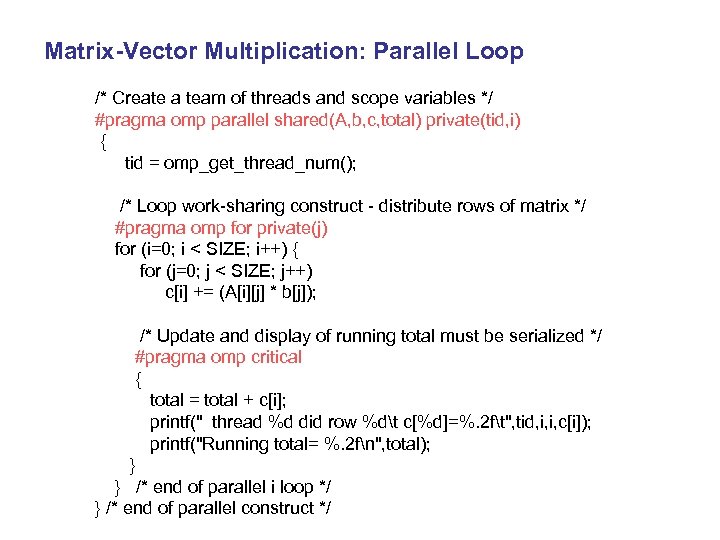

Matrix-Vector Multiplication: Parallel Loop

    /* Create a team of threads and scope variables */
    #pragma omp parallel shared(A, b, c, total) private(tid, i)
    {
      tid = omp_get_thread_num();

      /* Loop work-sharing construct - distribute rows of matrix */
      #pragma omp for private(j)
      for (i=0; i < SIZE; i++)
      {
        for (j=0; j < SIZE; j++)
          c[i] += (A[i][j] * b[j]);

        /* Update and display of running total must be serialized */
        #pragma omp critical
        {
          total = total + c[i];
          printf(" thread %d did row %d\t c[%d]=%.2f\t", tid, i, i, c[i]);
          printf("Running total= %.2f\n", total);
        }
      } /* end of parallel i loop */
    } /* end of parallel construct */

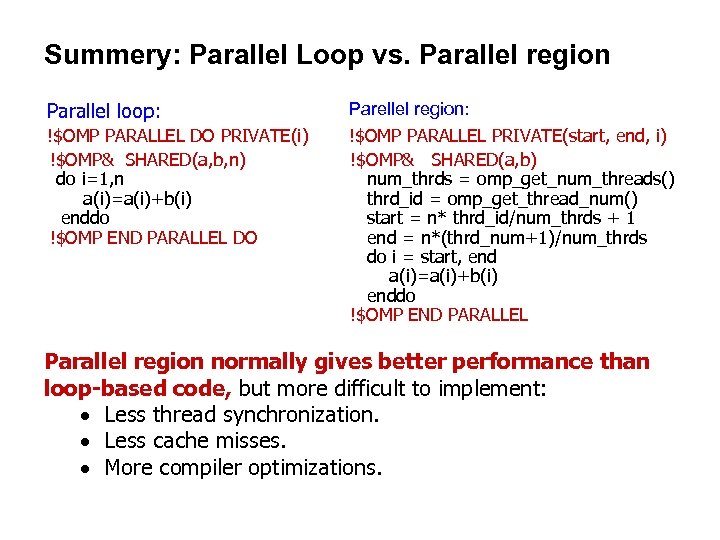

Summary: Parallel Loop vs. Parallel Region

Parallel loop:

    !$OMP PARALLEL DO PRIVATE(i)
    !$OMP& SHARED(a, b, n)
          do i=1, n
             a(i)=a(i)+b(i)
          enddo
    !$OMP END PARALLEL DO

Parallel region:

    !$OMP PARALLEL PRIVATE(start, end, i, thrd_id, num_thrds)
    !$OMP& SHARED(a, b, n)
          num_thrds = omp_get_num_threads()
          thrd_id = omp_get_thread_num()
          start = n*thrd_id/num_thrds + 1
          end = n*(thrd_id+1)/num_thrds
          do i = start, end
             a(i)=a(i)+b(i)
          enddo
    !$OMP END PARALLEL

A parallel region normally gives better performance than loop-based code, but is more difficult to implement:
· Less thread synchronization.
· Fewer cache misses.
· More compiler optimizations.

Hands-on 2 (read, compile, and run)

/work/yourID/ss2016/openmp/MM

• matrix-vector-seq.c
• matrix-vector-parregion.c
• matrix-vector-par.c
• ser_mm_hu
• omp_mm_hu.c

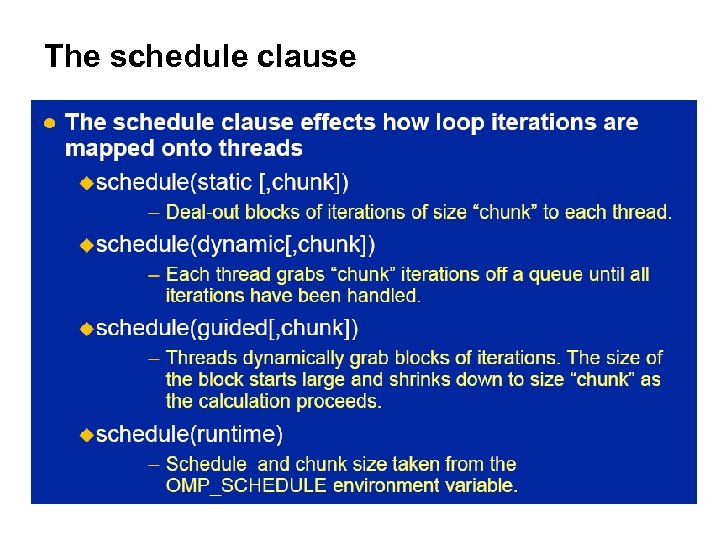

The schedule clause


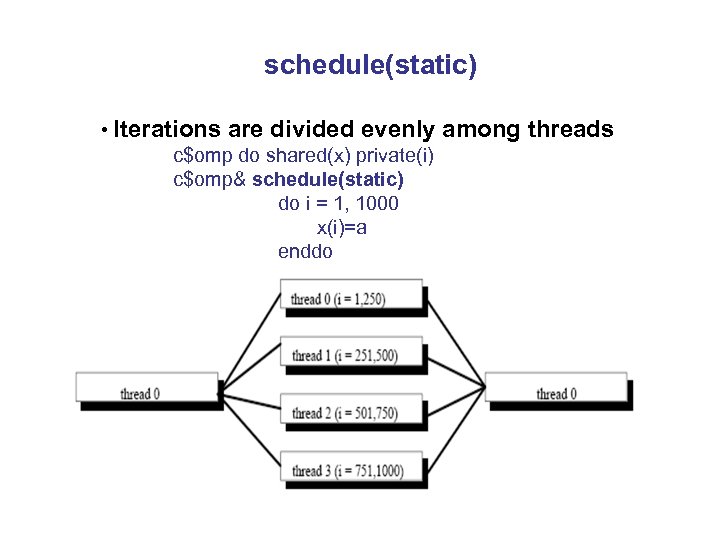

schedule(static)

• Iterations are divided evenly among threads.

    c$omp parallel do shared(x) private(i)
    c$omp& schedule(static)
          do i = 1, 1000
             x(i)=a
          enddo

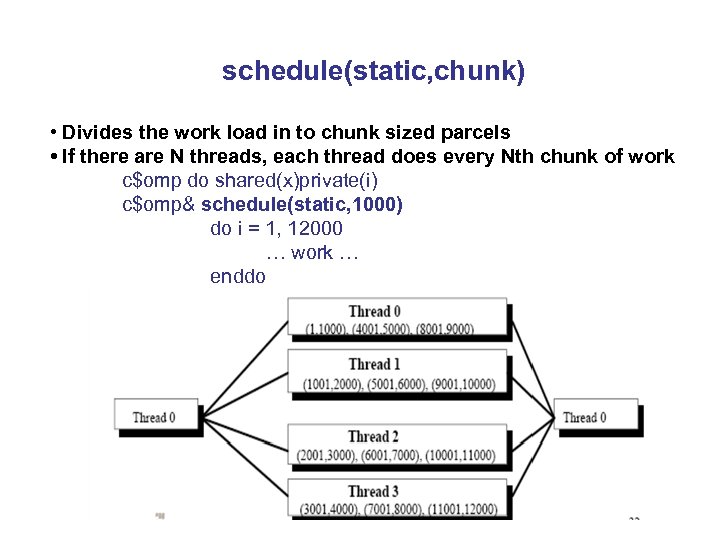

schedule(static, chunk)

• Divides the workload into chunk-sized parcels.
• If there are N threads, each thread does every Nth chunk of work.

    c$omp parallel do shared(x) private(i)
    c$omp& schedule(static, 1000)
          do i = 1, 12000
             … work …
          enddo
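The round-robin assignment of chunks can be written down as a small formula: chunk number `iter / chunk` goes to thread `(iter / chunk) % N`. The helper below is a sketch of that mapping for a zero-based iteration index; `static_chunk_owner` is a hypothetical name, and the function only models the assignment, it does not query the OpenMP runtime.

```c
#include <assert.h>

/* Returns the thread number that executes zero-based iteration `iter`
 * under schedule(static, chunk) with `nthreads` threads, where chunks
 * are handed out to threads round-robin in thread-number order. */
int static_chunk_owner(int iter, int chunk, int nthreads)
{
    assert(iter >= 0 && chunk > 0 && nthreads > 0);
    return (iter / chunk) % nthreads;
}
```

Applied to the loop on this slide with 4 threads (Fortran index i corresponds to `iter = i - 1`), iterations 1 to 1000 go to thread 0, 1001 to 2000 to thread 1, and iterations 4001 to 5000 wrap around to thread 0 again, so each thread ends up with 3 of the 12 chunks.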

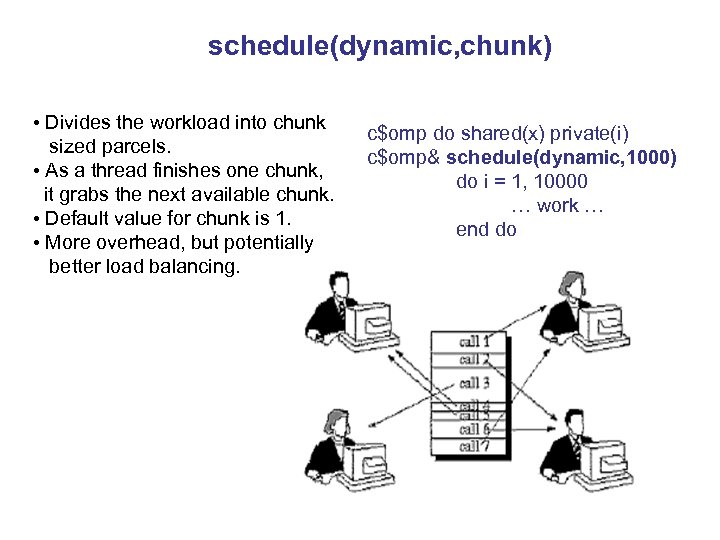

schedule(dynamic, chunk)

• Divides the workload into chunk-sized parcels.
• As a thread finishes one chunk, it grabs the next available chunk.
• Default value for chunk is 1.
• More overhead, but potentially better load balancing.

    c$omp parallel do shared(x) private(i)
    c$omp& schedule(dynamic, 1000)
          do i = 1, 10000
             … work …
          end do
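Dynamic scheduling pays off mainly when iterations take unequal time. The sketch below uses a made-up workload in which iteration i costs roughly i inner steps, and distributes it with `schedule(dynamic, 4)`. The function names `uneven_work` and `dynamic_total`, the workload itself and the chunk size of 4 are all assumptions chosen for illustration.

```c
#include <assert.h>

/* Hypothetical uneven workload: later iterations do more inner steps. */
static long uneven_work(int i)
{
    long acc = 0;
    for (int k = 0; k <= i; k++)
        acc += k % 7;
    return acc;
}

/* Sums uneven_work(i) for i in [0, n). Threads pick up the next chunk
 * of 4 iterations as soon as they finish their current one. */
long dynamic_total(int n)
{
    long total = 0;
    assert(n >= 0);

    #pragma omp parallel for schedule(dynamic, 4) reduction(+:total)
    for (int i = 0; i < n; i++)
        total += uneven_work(i);

    return total;
}
```

Because integer addition is associative, the result does not depend on which thread ran which chunk; only the running time is affected by the schedule.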

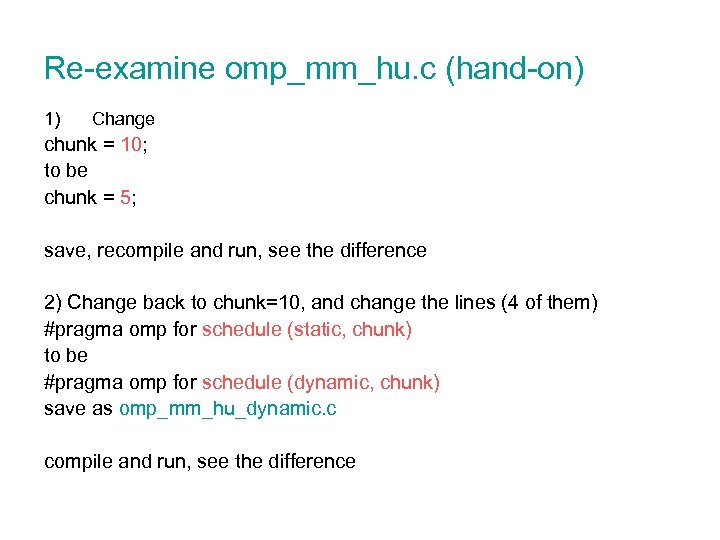

Re-examine omp_mm_hu.c (hands-on)

1) Change chunk = 10; to chunk = 5; then save, recompile and run, and see the difference.
2) Change back to chunk = 10, and change the lines (4 of them)

    #pragma omp for schedule (static, chunk)

   to

    #pragma omp for schedule (dynamic, chunk)

   Save as omp_mm_hu_dynamic.c, compile and run, and see the difference.

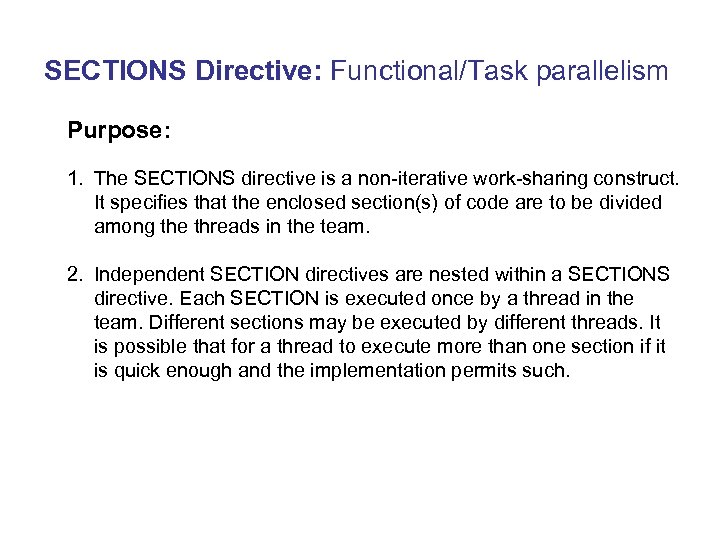

SECTIONS Directive: Functional/Task parallelism
Purpose:
1. The SECTIONS directive is a non-iterative work-sharing construct. It specifies that the enclosed section(s) of code are to be divided among the threads in the team.
2. Independent SECTION directives are nested within a SECTIONS directive. Each SECTION is executed once by a thread in the team. Different sections may be executed by different threads. A thread may execute more than one section if it is quick enough and the implementation permits it.

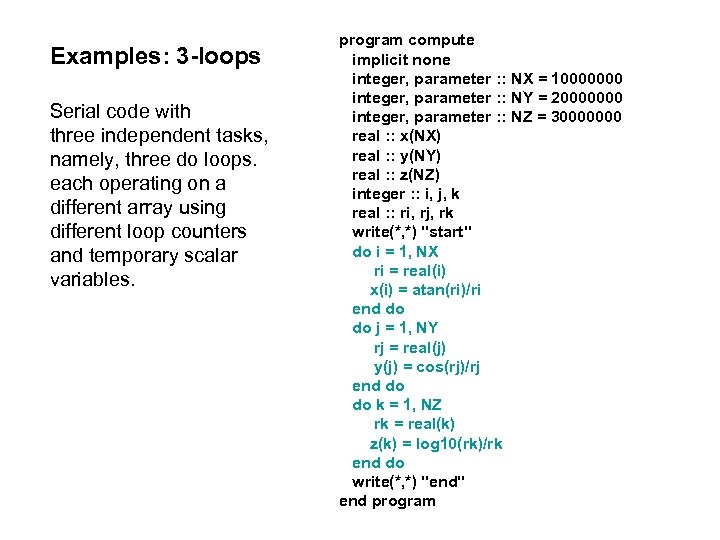

Examples: 3-loops
Serial code with three independent tasks, namely three do loops, each operating on a different array using different loop counters and temporary scalar variables.

    program compute
      implicit none
      integer, parameter :: NX = 10000000
      integer, parameter :: NY = 20000000
      integer, parameter :: NZ = 30000000
      real :: x(NX)
      real :: y(NY)
      real :: z(NZ)
      integer :: i, j, k
      real :: ri, rj, rk
      write(*,*) "start"
      do i = 1, NX
        ri = real(i)
        x(i) = atan(ri)/ri
      end do
      do j = 1, NY
        rj = real(j)
        y(j) = cos(rj)/rj
      end do
      do k = 1, NZ
        rk = real(k)
        z(k) = log10(rk)/rk
      end do
      write(*,*) "end"
    end program

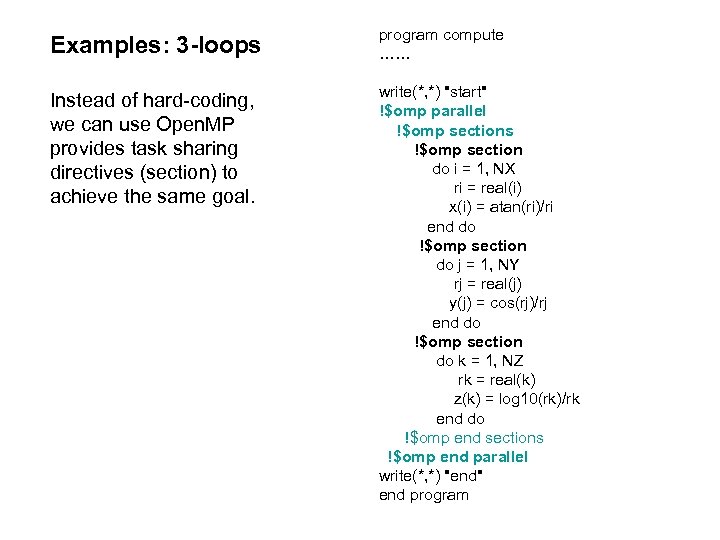

Examples: 3-loops
Instead of hard-coding, we can use the work-sharing directives (sections) that OpenMP provides to achieve the same goal.

    program compute
      ……
      write(*,*) "start"
    !$omp parallel
    !$omp sections
    !$omp section
      do i = 1, NX
        ri = real(i)
        x(i) = atan(ri)/ri
      end do
    !$omp section
      do j = 1, NY
        rj = real(j)
        y(j) = cos(rj)/rj
      end do
    !$omp section
      do k = 1, NZ
        rk = real(k)
        z(k) = log10(rk)/rk
      end do
    !$omp end sections
    !$omp end parallel
      write(*,*) "end"
    end program
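For C users, the same three-loop pattern might look like the sketch below. It is not one of the course files: `fill_three` is a hypothetical name, and simple arithmetic stands in for the atan/cos/log10 calls so the example needs no math library.

```c
/* Three independent loops, one per section. Each loop writes only its
 * own array and declares its own counter, so no variable is shared
 * between sections. */
void fill_three(double *x, int nx, double *y, int ny, double *z, int nz)
{
    #pragma omp parallel sections
    {
        #pragma omp section
        {
            for (int i = 0; i < nx; i++)
                x[i] = 1.0 / (i + 1);
        }
        #pragma omp section
        {
            for (int j = 0; j < ny; j++)
                y[j] = 2.0 * j;
        }
        #pragma omp section
        {
            for (int k = 0; k < nz; k++)
                z[k] = (double)k * k;
        }
    }   /* implicit barrier: all three arrays are complete here */
}
```

Because there are only three sections, at most three threads do useful work, no matter how many are in the team.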

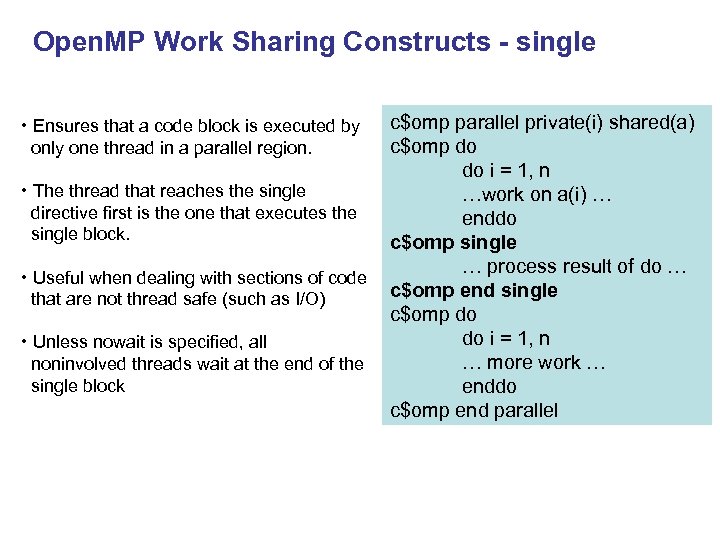

OpenMP Work Sharing Constructs - single
• Ensures that a code block is executed by only one thread in a parallel region.
• The thread that reaches the single directive first is the one that executes the single block.
• Useful when dealing with sections of code that are not thread safe (such as I/O).
• Unless nowait is specified, all noninvolved threads wait at the end of the single block.

    c$omp parallel private(i) shared(a)
    c$omp do
          do i = 1, n
             … work on a(i) …
          enddo
    c$omp single
          … process result of do …
    c$omp end single
    c$omp do
          do i = 1, n
             … more work …
          enddo
    c$omp end parallel
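The do / single / do pattern on this slide carries over directly to C. In the sketch below (hypothetical function `normalize_odds`, made-up data), the single block computes a total that the second loop then uses; this relies on the implicit barriers at the end of the first loop and at the end of the single block.

```c
/* Fill a[] with the odd numbers 1, 3, 5, ..., let one thread sum them,
 * then let all threads divide by the total. Returns the total. */
double normalize_odds(double *a, int n)
{
    double total = 0.0;
    #pragma omp parallel shared(a, total)
    {
        #pragma omp for
        for (int i = 0; i < n; i++)
            a[i] = 2.0 * i + 1.0;
        /* implicit barrier: a[] is complete before the single block */

        #pragma omp single
        {
            for (int i = 0; i < n; i++)
                total += a[i];
        }
        /* implicit barrier: every thread now sees the final total */

        #pragma omp for
        for (int i = 0; i < n; i++)
            a[i] /= total;
    }
    return total;
}
```

Adding nowait to the single block would remove the second barrier and let threads read total before it is ready, so it would be wrong here.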

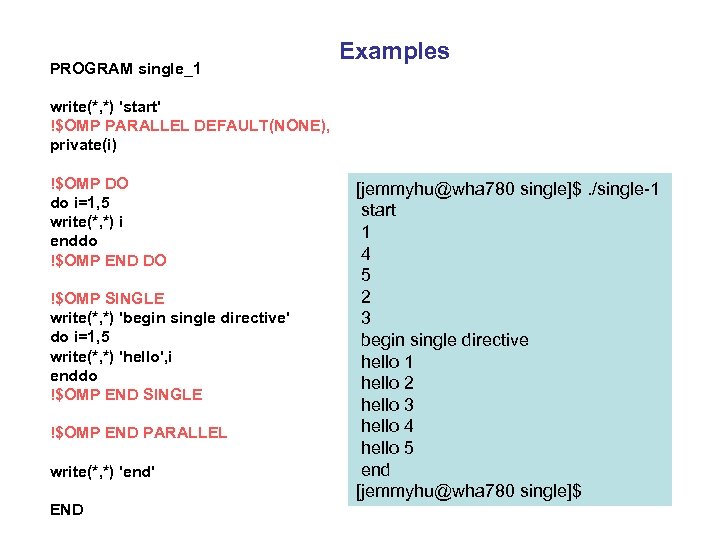

Examples

    PROGRAM single_1
      write(*,*) 'start'
    !$OMP PARALLEL DEFAULT(NONE), private(i)
    !$OMP DO
      do i=1,5
        write(*,*) i
      enddo
    !$OMP END DO
    !$OMP SINGLE
      write(*,*) 'begin single directive'
      do i=1,5
        write(*,*) 'hello', i
      enddo
    !$OMP END SINGLE
    !$OMP END PARALLEL
      write(*,*) 'end'
    END

    [jemmyhu@wha780 single]$ ./single-1
    start
    1
    4
    5
    2
    3
    begin single directive
    hello 1
    hello 2
    hello 3
    hello 4
    hello 5
    end
    [jemmyhu@wha780 single]$

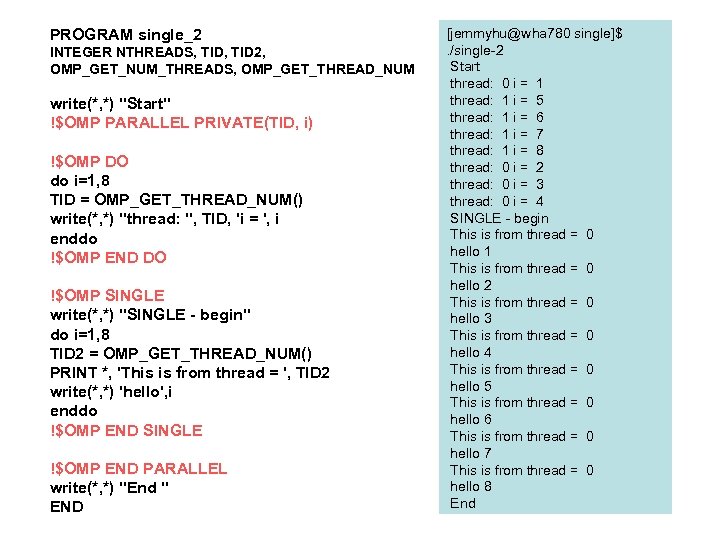

    PROGRAM single_2
      INTEGER NTHREADS, TID2, OMP_GET_NUM_THREADS, OMP_GET_THREAD_NUM
      write(*,*) "Start"
    !$OMP PARALLEL PRIVATE(TID, i)
    !$OMP DO
      do i=1,8
        TID = OMP_GET_THREAD_NUM()
        write(*,*) "thread: ", TID, 'i = ', i
      enddo
    !$OMP END DO
    !$OMP SINGLE
      write(*,*) "SINGLE - begin"
      do i=1,8
        TID2 = OMP_GET_THREAD_NUM()
        PRINT *, 'This is from thread = ', TID2
        write(*,*) 'hello', i
      enddo
    !$OMP END SINGLE
    !$OMP END PARALLEL
      write(*,*) "End "
    END

    [jemmyhu@wha780 single]$ ./single-2
    Start
    thread: 0 i = 1
    thread: 1 i = 5
    thread: 1 i = 6
    thread: 1 i = 7
    thread: 1 i = 8
    thread: 0 i = 2
    thread: 0 i = 3
    thread: 0 i = 4
    SINGLE - begin
    This is from thread = 0
    hello 1
    This is from thread = 0
    hello 2
    This is from thread = 0
    hello 3
    This is from thread = 0
    hello 4
    This is from thread = 0
    hello 5
    This is from thread = 0
    hello 6
    This is from thread = 0
    hello 7
    This is from thread = 0
    hello 8
    End

Try yourself (hands-on)
Codes in …/ss2016/openmp/others/
Read the code, compile, and run:
• 3loops-seq.f90
• 3loops-region.f90
• 3loops-ompsec.f90
• single-3.f90

Data Scope Clauses
• SHARED (list)
• PRIVATE (list)
• FIRSTPRIVATE (list)
• LASTPRIVATE (list)
• DEFAULT (SHARED | PRIVATE | NONE)
• THREADPRIVATE (list) (a directive rather than a clause)
• COPYIN (list)
• REDUCTION (operator | intrinsic : list)

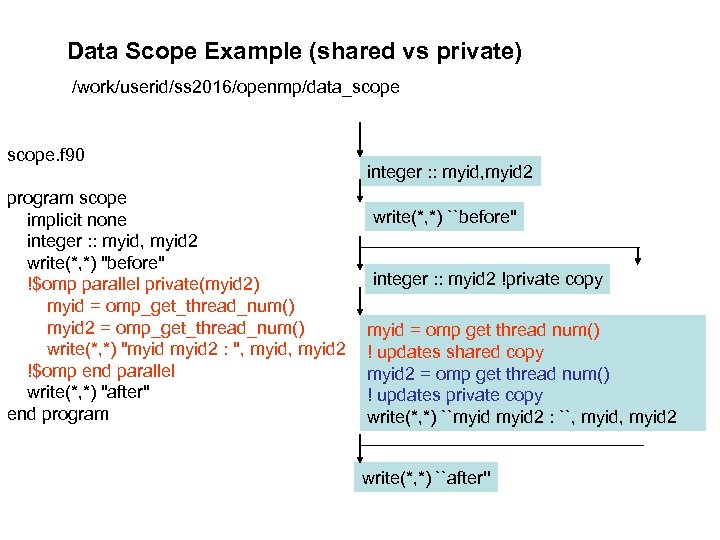

Data Scope Example (shared vs private)
/work/userid/ss2016/openmp/data_scope.f90

    program scope
      implicit none
      integer :: myid, myid2
      write(*,*) "before"
    !$omp parallel private(myid2)
      myid = omp_get_thread_num()
      myid2 = omp_get_thread_num()
      write(*,*) "myid2 : ", myid2
    !$omp end parallel
      write(*,*) "after"
    end program

Annotated view of the parallel region:

    integer :: myid, myid2
    write(*,*) "before"
      integer :: myid2                  ! private copy
      myid  = omp_get_thread_num()      ! updates shared copy
      myid2 = omp_get_thread_num()      ! updates private copy
      write(*,*) "myid2 : ", myid2
    write(*,*) "after"

    [jemmyhu@saw-login1:~] ./scope
    before
    myid2 : 4 3
    myid2 : 5 5
    myid2 : 5 0
    myid2 : 5 1
    myid2 : 5 4
    myid2 : 5 7
    myid2 : 5 2
    myid2 : 5 6
    after

scope-2.f90 defines both myid and myid2 as private; it gives

    [jemmyhu@saw-login1:~] ./scope-2
    before
    myid2 : 1 1
    myid2 : 4 4
    myid2 : 2 2
    myid2 : 3 3
    myid2 : 0 0
    myid2 : 7 7
    myid2 : 5 5
    myid2 : 6 6
    after
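The same shared-versus-private issue shows up in C whenever a scratch variable is declared outside the parallel construct. A minimal sketch with a hypothetical function `squares_plus_one`: without the private clause, tmp would be shared and threads could overwrite each other's value between the two statements.

```c
/* out[i] = in[i]^2 + 1, using a scratch variable declared outside the loop. */
void squares_plus_one(const int *in, int *out, int n)
{
    int i, tmp;   /* declared outside the construct: shared by default */

    /* i is the parallel loop index, so it is made private automatically;
     * tmp must be listed explicitly to give each thread its own copy. */
    #pragma omp parallel for private(tmp)
    for (i = 0; i < n; i++) {
        tmp = in[i] * in[i];
        out[i] = tmp + 1;
    }
}
```

Declaring tmp inside the loop body would make it private without any clause, which is often the simpler choice in C.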

Changing default scoping rules: C vs Fortran
• Fortran: default(shared | private | none)
  - loop index variables are private
• C/C++: default(shared | none)
  - no default(private): many standard C libraries are implemented using macros that reference global variables
  - a serial loop index variable is shared: the C for construct is so general that it is difficult for the compiler to figure out which variables should be privatized
• default(none) helps catch scoping errors

reduction(operator|intrinsic: var1[, var2])
• Allows safe global calculation or comparison.
• A private copy of each listed variable is created and initialized depending on the operator or intrinsic (e.g., 0 for +).
• Partial sums and local mins are determined by the threads in parallel.
• Partial sums are added together, one thread at a time, to get the global sum.
• Local mins are compared, one thread at a time, to get gmin.

    c$omp do shared(x) private(i)
    c$omp& reduction(+: sum)
          do i = 1, N
             sum = sum + x(i)
          end do

    c$omp do shared(x) private(i)
    c$omp& reduction(min: gmin)
          do i = 1, N
             gmin = min(gmin, x(i))
          end do
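A C counterpart of the two loops, as a sketch: `array_sum` and `array_min` are hypothetical names, and the min reduction assumes a compiler supporting OpenMP 3.1 or later, where min and max became available for C/C++.

```c
/* Sum of x[0..n-1]; each thread accumulates a private partial sum. */
double array_sum(const double *x, int n)
{
    double sum = 0.0;
    #pragma omp parallel for reduction(+: sum)
    for (int i = 0; i < n; i++)
        sum += x[i];
    return sum;
}

/* Minimum of x[0..n-1]; requires n >= 1. */
double array_min(const double *x, int n)
{
    double gmin = x[0];
    #pragma omp parallel for reduction(min: gmin)
    for (int i = 1; i < n; i++)
        if (x[i] < gmin)
            gmin = x[i];
    return gmin;
}
```

For floating-point sums, the order in which partial results are combined can change the last digits from run to run.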

reduction.f90:

    PROGRAM REDUCTION
      USE omp_lib
      IMPLICIT NONE
      INTEGER tnumber
      INTEGER I, J, K
      I=1
      J=1
      K=1
      PRINT *, "Before Par Region: I=", I, " J=", J, " K=", K
      PRINT *, ""
    !$OMP PARALLEL PRIVATE(tnumber) REDUCTION(+: I) REDUCTION(*: J) REDUCTION(MAX: K)
      tnumber=OMP_GET_THREAD_NUM()
      I = tnumber
      J = tnumber
      K = tnumber
      PRINT *, "Thread ", tnumber, " I=", I, " J=", J, " K=", K
    !$OMP END PARALLEL
      PRINT *, ""
      print *, "Operator + * MAX"
      PRINT *, "After Par Region: I=", I, " J=", J, " K=", K
    END PROGRAM REDUCTION

    [jemmyhu@saw-login1:~] ./reduction
    Before Par Region: I= 1 J= 1 K= 1

    Thread 3 I= 3 J= 3 K= 3
    Thread 1 I= 1 J= 1 K= 1
    Thread 0 I= 0 J= 0 K= 0
    Thread 2 I= 2 J= 2 K= 2

    Operator + * MAX
    After Par Region: I= 7 J= 0 K= 3
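The final values follow from how a reduction combines the original value of each variable (1 here) with the private copies of the four threads shown in the output. A worked check:

```latex
\begin{aligned}
I &= 1 + (0 + 1 + 2 + 3) = 7 \\
J &= 1 \times (0 \times 1 \times 2 \times 3) = 0 \\
K &= \max(1,\ 0,\ 1,\ 2,\ 3) = 3
\end{aligned}
```

The original value takes part in the combination, which is why I ends at 7 rather than 6.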

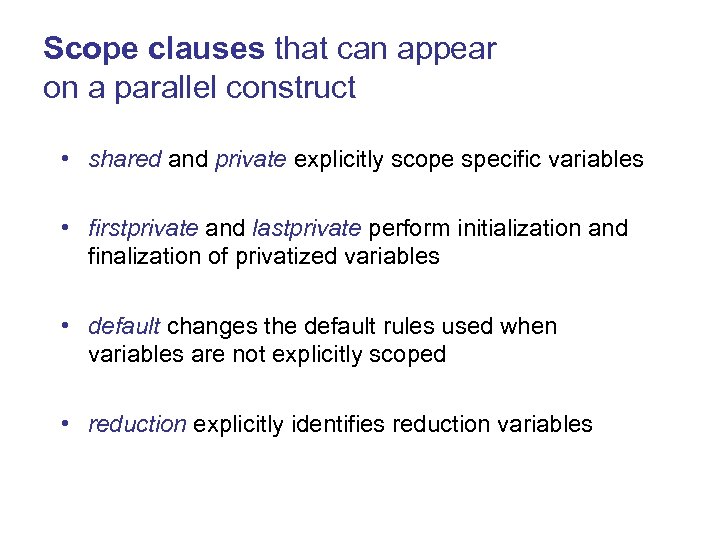

Scope clauses that can appear on a parallel construct
• shared and private explicitly scope specific variables
• firstprivate and lastprivate perform initialization and finalization of privatized variables
• default changes the default rules used when variables are not explicitly scoped
• reduction explicitly identifies reduction variables

Default scoping rules in C

    void caller(int a[ ], int n)
    {
        int i, j, m=3;
    #pragma omp parallel for
        for ( i = 0; i

| Variable | Scope | Is Use Safe? | Reason for Scope |
|---|---|---|---|
| a | shared | yes | declared outside par construct |
| n | shared | yes | declared outside par construct |

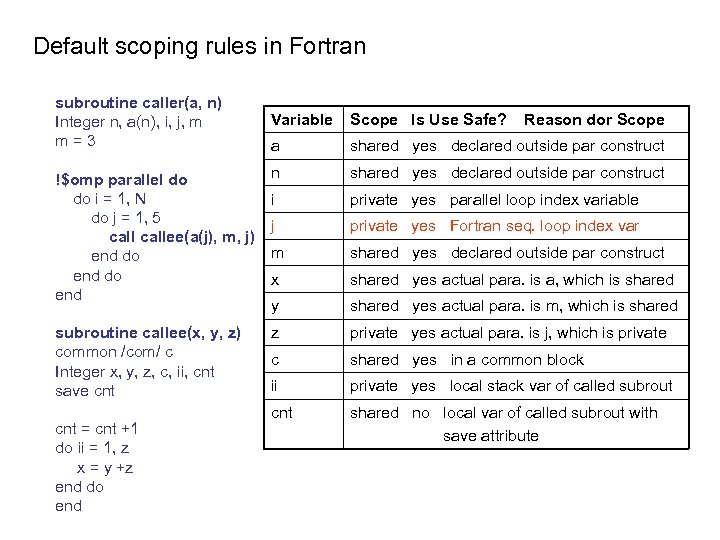

Default scoping rules in Fortran

          subroutine caller(a, n)
          Integer n, a(n), i, j, m
          m = 3
    !$omp parallel do
          do i = 1, N
            do j = 1, 5
              call callee(a(j), m, j)
            end do
          end do
          end

          subroutine callee(x, y, z)
          common /com/ c
          Integer x, y, z, c, ii, cnt
          save cnt
          cnt = cnt + 1
          do ii = 1, z
            x = y + z
          end do
          end

| Variable | Scope | Is Use Safe? | Reason for Scope |
|---|---|---|---|
| a | shared | yes | declared outside par construct |
| n | shared | yes | declared outside par construct |
| i | private | yes | parallel loop index variable |
| j | private | yes | Fortran seq. loop index var |
| m | shared | yes | declared outside par construct |
| x | shared | yes | actual para. is a, which is shared |
| y | shared | yes | actual para. is m, which is shared |
| z | private | yes | actual para. is j, which is private |
| c | shared | yes | in a common block |
| ii | private | yes | local stack var of called subrout |
| cnt | shared | no | local var of called subrout with save attribute |

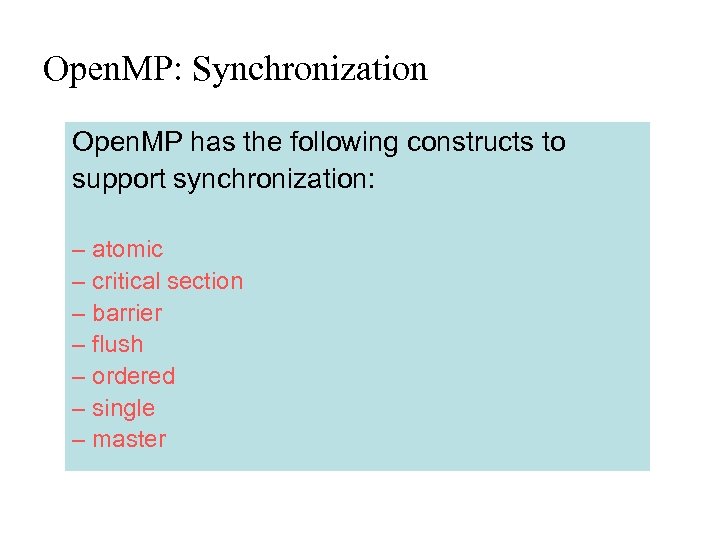

OpenMP: Synchronization
OpenMP has the following constructs to support synchronization:
– atomic
– critical section
– barrier
– flush
– ordered
– single
– master

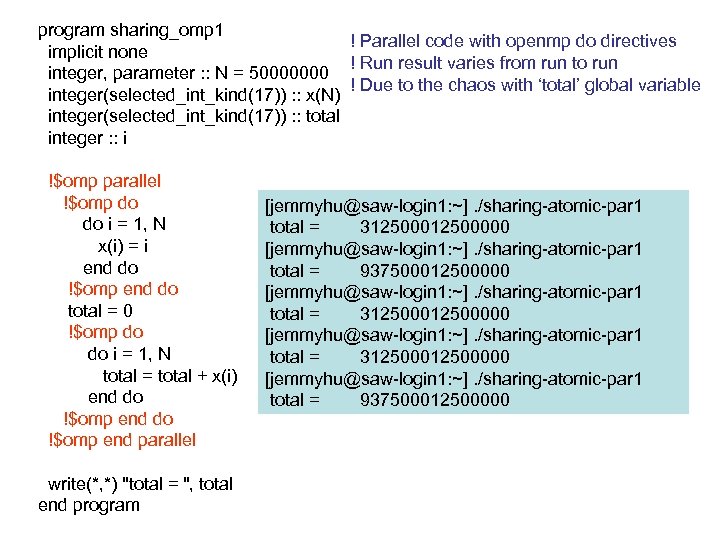

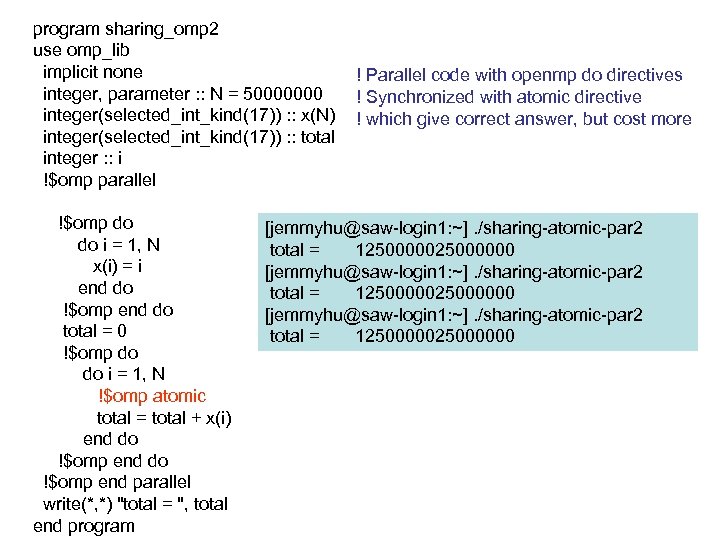

    program sharing_omp1
      ! Parallel code with openmp do directives
      ! Run result varies from run to run
      ! due to unsynchronized updates of the shared variable 'total'
      implicit none
      integer, parameter :: N = 50000000
      integer(selected_int_kind(17)) :: x(N)
      integer(selected_int_kind(17)) :: total
      integer :: i
    !$omp parallel
    !$omp do
      do i = 1, N
        x(i) = i
      end do
    !$omp end do
      total = 0
    !$omp do
      do i = 1, N
        total = total + x(i)
      end do
    !$omp end do
    !$omp end parallel
      write(*,*) "total = ", total
    end program

    [jemmyhu@saw-login1:~] ./sharing-atomic-par1
    total = 312500000
    [jemmyhu@saw-login1:~] ./sharing-atomic-par1
    total = 937500012500000
    [jemmyhu@saw-login1:~] ./sharing-atomic-par1
    total = 312500012500000
    [jemmyhu@saw-login1:~] ./sharing-atomic-par1
    total = 937500012500000

    program sharing_omp2
      ! Parallel code with openmp do directives
      ! Synchronized with atomic directive,
      ! which gives the correct answer, but costs more
      use omp_lib
      implicit none
      integer, parameter :: N = 50000000
      integer(selected_int_kind(17)) :: x(N)
      integer(selected_int_kind(17)) :: total
      integer :: i
    !$omp parallel
    !$omp do
      do i = 1, N
        x(i) = i
      end do
    !$omp end do
      total = 0
    !$omp do
      do i = 1, N
    !$omp atomic
        total = total + x(i)
      end do
    !$omp end do
    !$omp end parallel
      write(*,*) "total = ", total
    end program

    [jemmyhu@saw-login1:~] ./sharing-atomic-par2
    total = 1250000025000000
    [jemmyhu@saw-login1:~] ./sharing-atomic-par2
    total = 125000000
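A C sketch of the atomic update, with one deliberate difference from the Fortran program: the accumulator is initialized before the parallel region. In the program above, total = 0 sits inside the region, so every thread executes it, and a late thread can reset the sum after others have started adding. The function name `sum_first_n` is hypothetical.

```c
/* Sum 1 + 2 + ... + n with an atomic update of the shared accumulator. */
long long sum_first_n(int n)
{
    long long total = 0;   /* set once, before any thread is started */

    #pragma omp parallel for
    for (int i = 1; i <= n; i++) {
        /* atomic protects exactly this one read-modify-write */
        #pragma omp atomic
        total += i;
    }
    return total;
}
```

The result should equal n(n+1)/2, which for N = 50000000 is 1250000025000000. A reduction(+: total) clause usually gives the same answer with far less synchronization.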

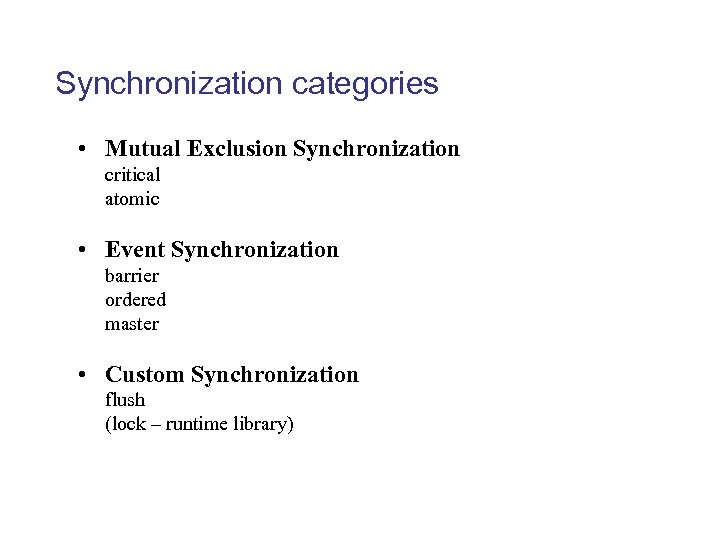

Synchronization categories
• Mutual Exclusion Synchronization: critical, atomic
• Event Synchronization: barrier, ordered, master
• Custom Synchronization: flush (lock – runtime library)

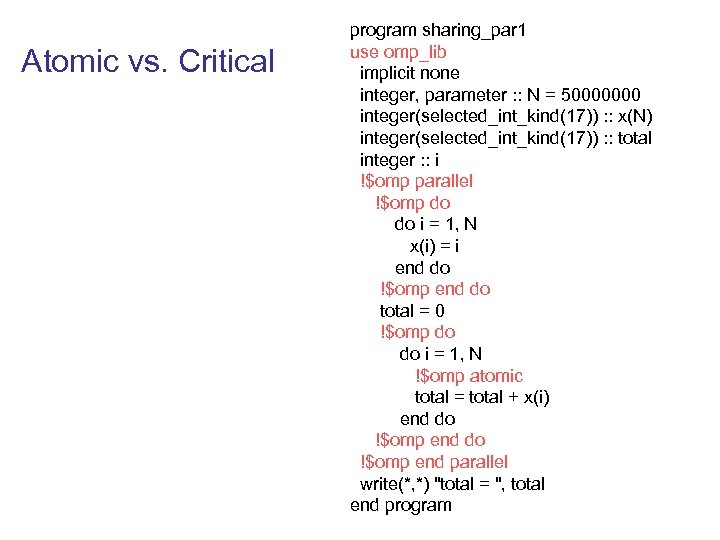

Atomic vs. Critical

    program sharing_par1
      use omp_lib
      implicit none
      integer, parameter :: N = 50000000
      integer(selected_int_kind(17)) :: x(N)
      integer(selected_int_kind(17)) :: total
      integer :: i
    !$omp parallel
    !$omp do
      do i = 1, N
        x(i) = i
      end do
    !$omp end do
      total = 0
    !$omp do
      do i = 1, N
    !$omp atomic
        total = total + x(i)
      end do
    !$omp end do
    !$omp end parallel
      write(*,*) "total = ", total
    end program
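atomic covers a single memory update; critical can protect a whole block. The sketch below (hypothetical function `argmax`) needs critical because the comparison and the assignment must happen together, which atomic cannot express.

```c
/* Index of the largest element of x[0..n-1]; requires n >= 1.
 * Ties go to the lowest index, so the result does not depend on
 * the order in which threads enter the critical section. */
int argmax(const double *x, int n)
{
    int best = 0;
    #pragma omp parallel for
    for (int i = 1; i < n; i++) {
        /* compare-then-update is one indivisible step */
        #pragma omp critical
        {
            if (x[i] > x[best] || (x[i] == x[best] && i < best))
                best = i;
        }
    }
    return best;
}
```

Since every iteration enters the critical section, this version serializes the loop; it illustrates the construct rather than a fast way to find a maximum.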

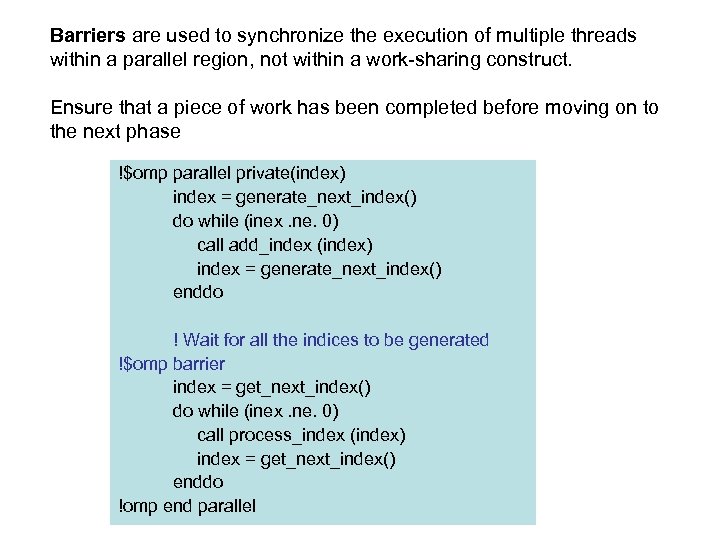

• Barriers are used to synchronize the execution of multiple threads within a parallel region, not within a work-sharing construct.
• They ensure that a piece of work has been completed before moving on to the next phase.

    !$omp parallel private(index)
      index = generate_next_index()
      do while (index .ne. 0)
        call add_index(index)
        index = generate_next_index()
      enddo
      ! Wait for all the indices to be generated
    !$omp barrier
      index = get_next_index()
      do while (index .ne. 0)
        call process_index(index)
        index = get_next_index()
      enddo
    !$omp end parallel
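A two-phase C sketch of the same idea, with a hypothetical function `mirror_sum`. The first loop uses nowait, which removes its implicit barrier, so an explicit barrier is needed before the second loop reads elements that other threads wrote.

```c
/* Phase 1 fills a[]; phase 2 combines each element with its mirror image. */
void mirror_sum(double *a, double *b, int n)
{
    #pragma omp parallel
    {
        #pragma omp for nowait
        for (int i = 0; i < n; i++)
            a[i] = 2.0 * i;

        /* Wait until every thread has finished writing its part of a[] */
        #pragma omp barrier

        #pragma omp for
        for (int i = 0; i < n; i++)
            b[i] = a[i] + a[n - 1 - i];
    }
}
```

Dropping the nowait clause would make the explicit barrier redundant, since a work-sharing loop ends with an implicit barrier by default.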

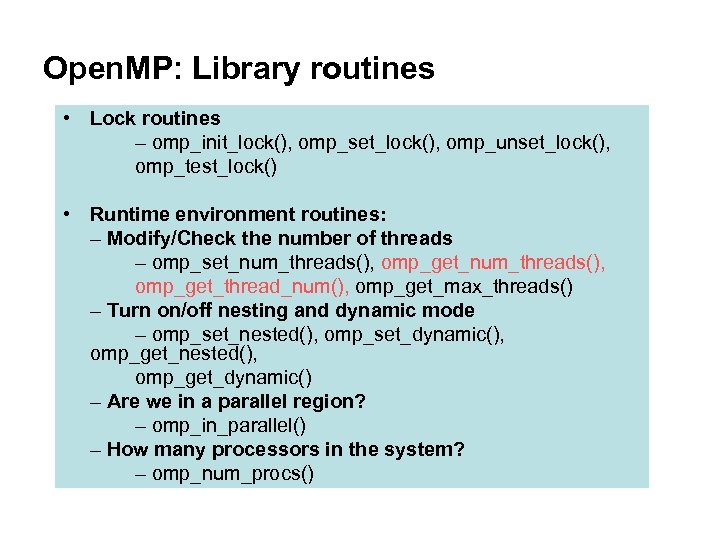

OpenMP: Library routines
• Lock routines
  – omp_init_lock(), omp_set_lock(), omp_unset_lock(), omp_test_lock()
• Runtime environment routines:
  – Modify/Check the number of threads
    omp_set_num_threads(), omp_get_thread_num(), omp_get_max_threads()
  – Turn on/off nesting and dynamic mode
    omp_set_nested(), omp_set_dynamic(), omp_get_nested(), omp_get_dynamic()
  – Are we in a parallel region?
    omp_in_parallel()
  – How many processors in the system?
    omp_get_num_procs()
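A sketch of the lock routines in C, using a hypothetical function `count_negative`. The _OPENMP guards are an assumption made for portability: they let the same file compile and run serially when OpenMP is not enabled, in which case the lock calls are simply left out.

```c
#ifdef _OPENMP
#include <omp.h>
#endif

/* Count the negative entries of x[0..n-1], protecting the counter with a lock. */
int count_negative(const double *x, int n)
{
    int count = 0;
#ifdef _OPENMP
    omp_lock_t lock;
    omp_init_lock(&lock);          /* a lock must be initialized before use */
#endif

    #pragma omp parallel for
    for (int i = 0; i < n; i++) {
        if (x[i] < 0.0) {
#ifdef _OPENMP
            omp_set_lock(&lock);   /* blocks until the lock is free */
#endif
            count++;
#ifdef _OPENMP
            omp_unset_lock(&lock);
#endif
        }
    }

#ifdef _OPENMP
    omp_destroy_lock(&lock);       /* release the lock's resources */
#endif
    return count;
}
```

For a plain counter, atomic or a reduction is simpler; locks earn their keep when the protected region spans several statements or when different data need different locks.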

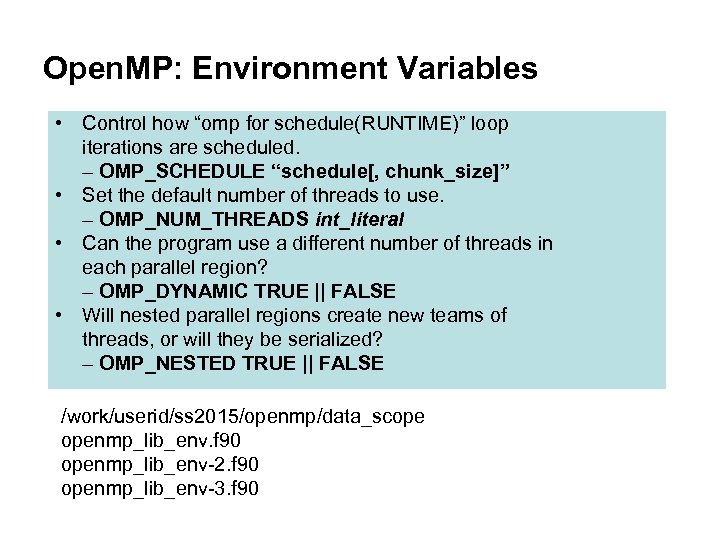

OpenMP: Environment Variables
• Control how “omp for schedule(RUNTIME)” loop iterations are scheduled.
  – OMP_SCHEDULE “schedule[, chunk_size]”
• Set the default number of threads to use.
  – OMP_NUM_THREADS int_literal
• Can the program use a different number of threads in each parallel region?
  – OMP_DYNAMIC TRUE || FALSE
• Will nested parallel regions create new teams of threads, or will they be serialized?
  – OMP_NESTED TRUE || FALSE

/work/userid/ss2015/openmp/data_scope
• openmp_lib_env.f90
• openmp_lib_env-2.f90
• openmp_lib_env-3.f90

Dynamic threading

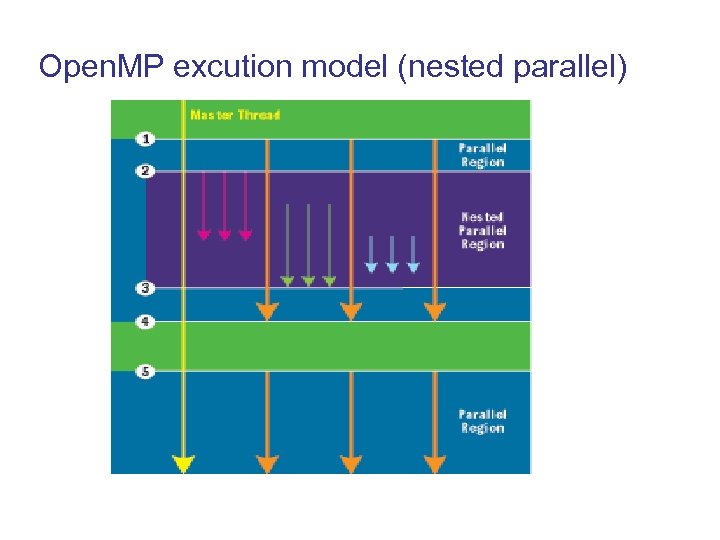

OpenMP execution model (nested parallel)

Case Studies
• Example – pi
• Partial Differential Equation – 2D

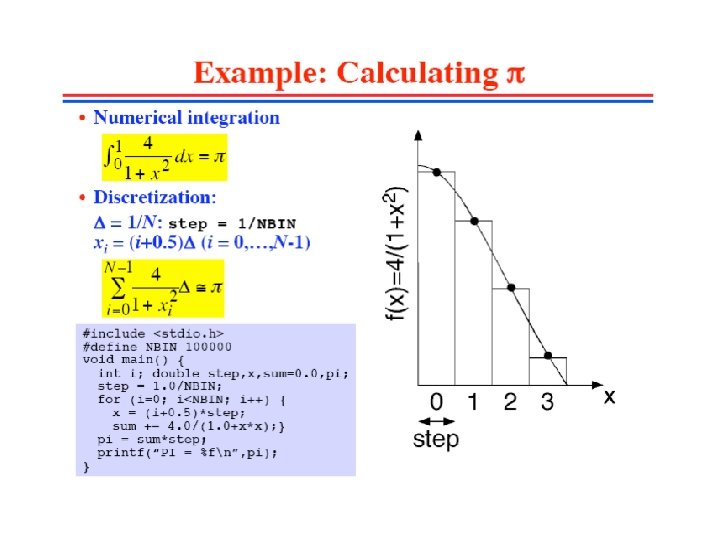

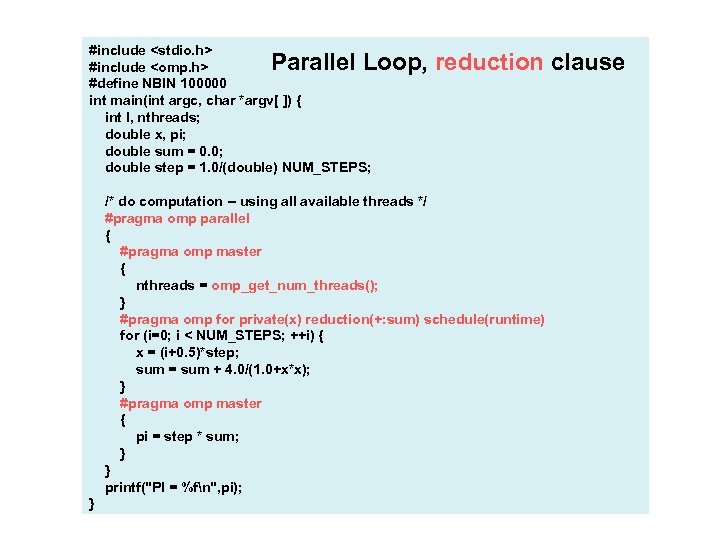

#include

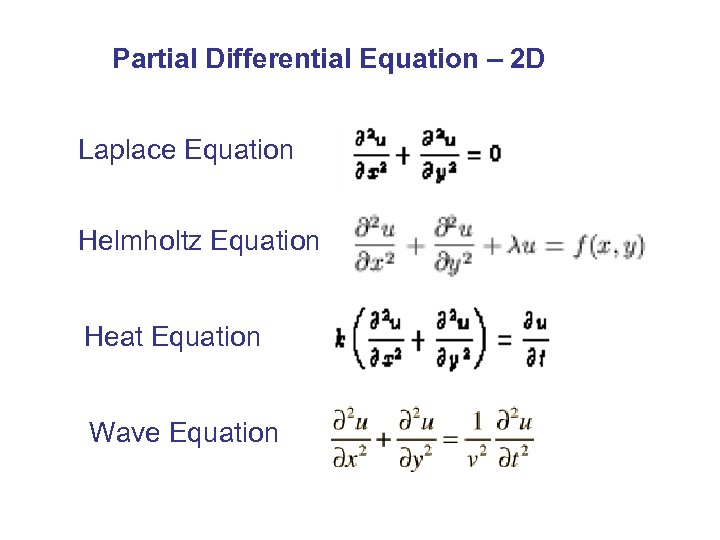

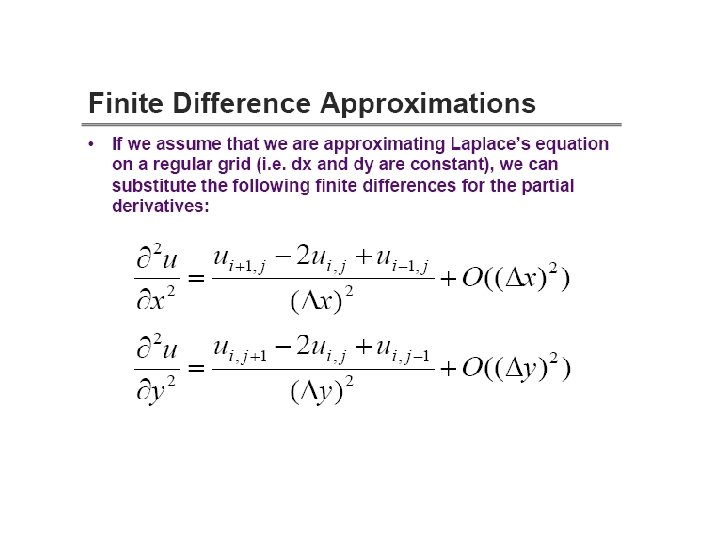

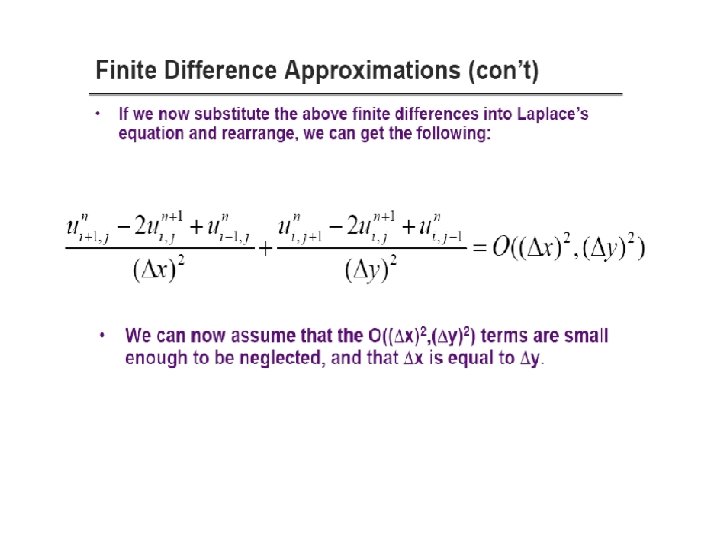

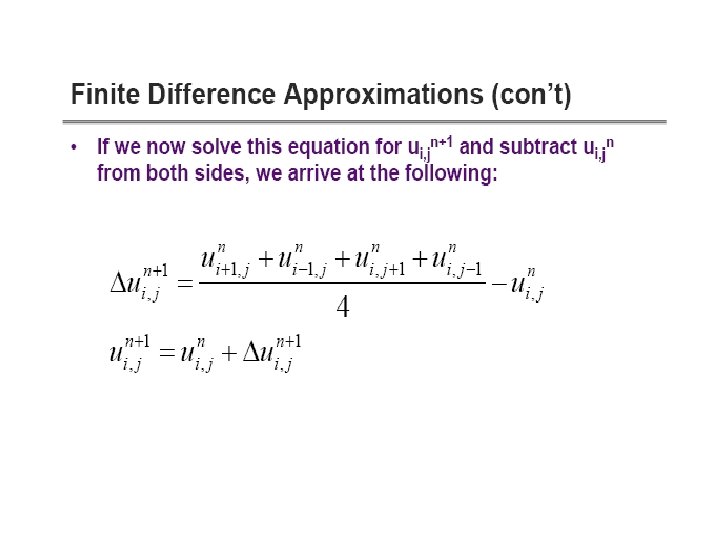

Partial Differential Equation – 2D
• Laplace Equation
• Helmholtz Equation
• Heat Equation
• Wave Equation

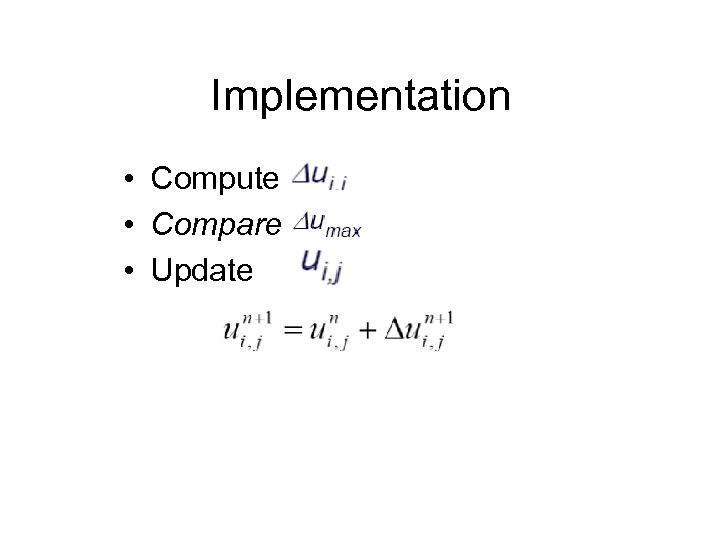

Implementation
• Compute
• Compare
• Update

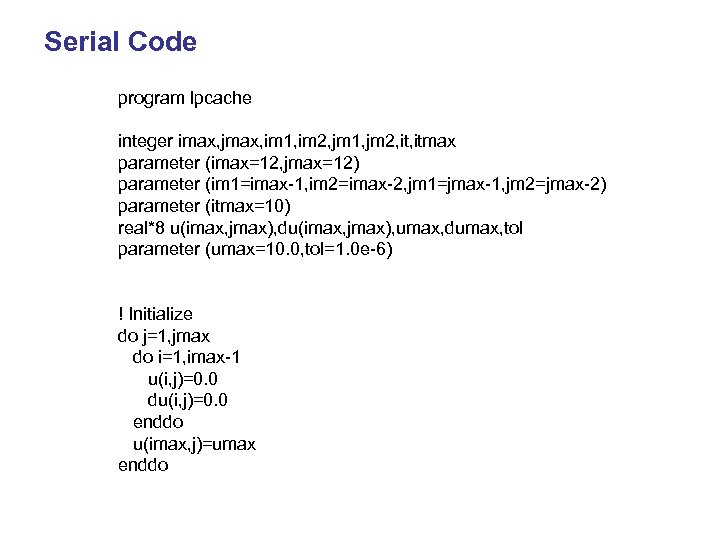

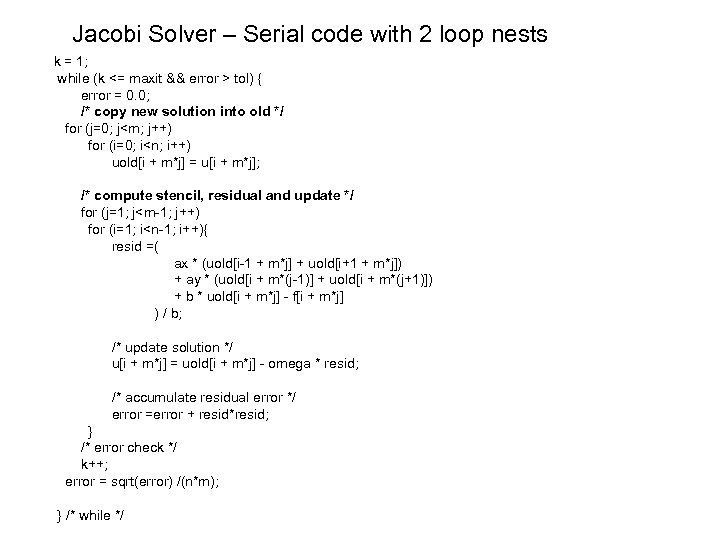

Serial Code

          program lpcache
          integer imax, jmax, im1, im2, jm1, jm2, itmax
          parameter (imax=12, jmax=12)
          parameter (im1=imax-1, im2=imax-2, jm1=jmax-1, jm2=jmax-2)
          parameter (itmax=10)
          real*8 u(imax,jmax), du(imax,jmax), umax, dumax, tol
          parameter (umax=10.0, tol=1.0e-6)
    ! Initialize
          do j=1,jmax
            do i=1,imax-1
              u(i,j)=0.0
              du(i,j)=0.0
            enddo
            u(imax,j)=umax
          enddo

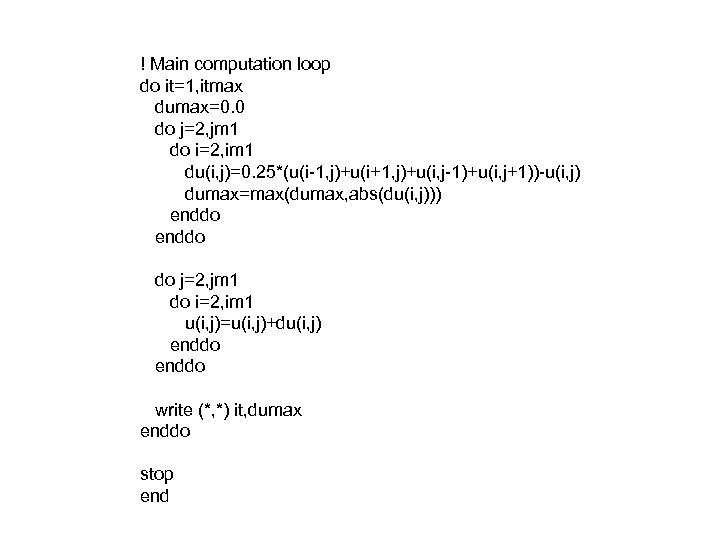

    ! Main computation loop
          do it=1,itmax
            dumax=0.0
            do j=2,jm1
              do i=2,im1
                du(i,j)=0.25*(u(i-1,j)+u(i+1,j)+u(i,j-1)+u(i,j+1))-u(i,j)
                dumax=max(dumax,abs(du(i,j)))
              enddo
            enddo
            do j=2,jm1
              do i=2,im1
                u(i,j)=u(i,j)+du(i,j)
              enddo
            enddo
            write (*,*) it, dumax
          enddo
          stop
          end

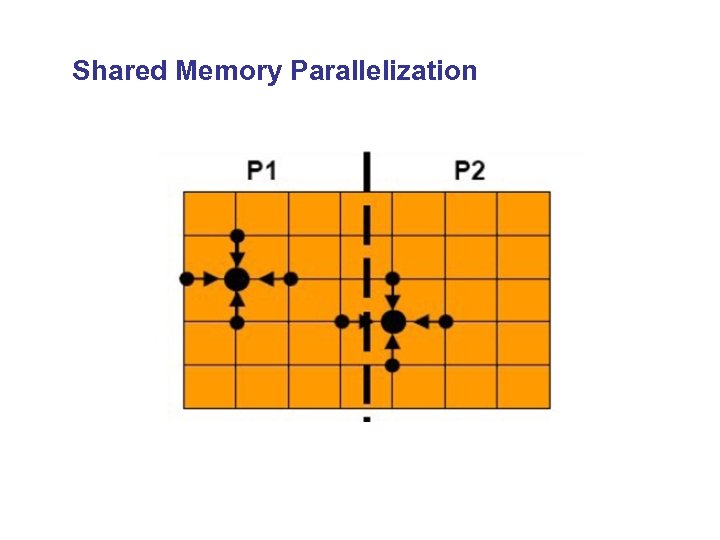

Shared Memory Parallelization

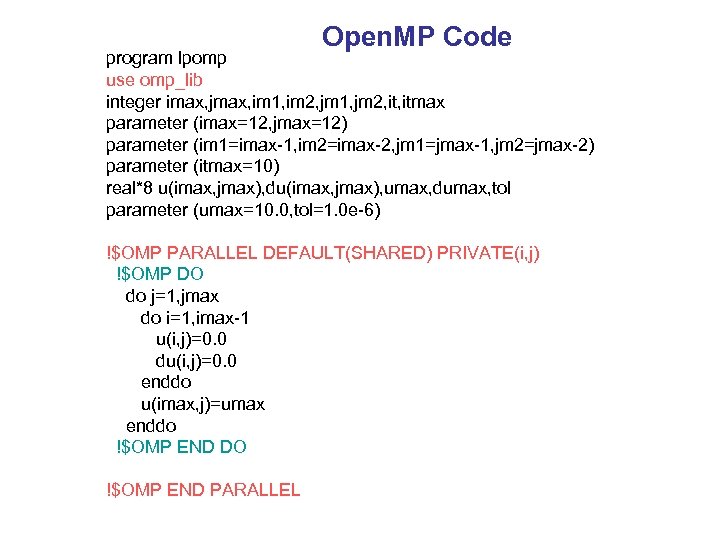

OpenMP Code

          program lpomp
          use omp_lib
          integer imax, jmax, im1, im2, jm1, jm2, itmax
          parameter (imax=12, jmax=12)
          parameter (im1=imax-1, im2=imax-2, jm1=jmax-1, jm2=jmax-2)
          parameter (itmax=10)
          real*8 u(imax,jmax), du(imax,jmax), umax, dumax, tol
          parameter (umax=10.0, tol=1.0e-6)
    !$OMP PARALLEL DEFAULT(SHARED) PRIVATE(i, j)
    !$OMP DO
          do j=1,jmax
            do i=1,imax-1
              u(i,j)=0.0
              du(i,j)=0.0
            enddo
            u(imax,j)=umax
          enddo
    !$OMP END DO
    !$OMP END PARALLEL

    !$OMP PARALLEL DEFAULT(SHARED) PRIVATE(i, j)
          do it=1,itmax
            dumax=0.0
    !$OMP DO REDUCTION (max: dumax)
            do j=2,jm1
              do i=2,im1
                du(i,j)=0.25*(u(i-1,j)+u(i+1,j)+u(i,j-1)+u(i,j+1))-u(i,j)
                dumax=max(dumax,abs(du(i,j)))
              enddo
            enddo
    !$OMP END DO
    !$OMP DO
            do j=2,jm1
              do i=2,im1
                u(i,j)=u(i,j)+du(i,j)
              enddo
            enddo
    !$OMP END DO
          enddo
    !$OMP END PARALLEL
          end
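One sweep of the same compute / compare / update cycle, sketched in C. This is not part of the course code: `laplace_sweep`, `IMAX`, and `JMAX` are hypothetical names, the arrays are row-major, and the max reduction assumes OpenMP 3.1 or later. Unlike the Fortran version, dumax is reset once, outside the parallel region.

```c
#define IMAX 12
#define JMAX 12

/* One Jacobi sweep over the interior points.
 * Returns the largest absolute update (the dumax of the Fortran code). */
double laplace_sweep(double u[IMAX][JMAX], double du[IMAX][JMAX])
{
    double dumax = 0.0;

    #pragma omp parallel
    {
        /* Compute the update and track its maximum magnitude. */
        #pragma omp for reduction(max: dumax)
        for (int i = 1; i < IMAX - 1; i++) {
            for (int j = 1; j < JMAX - 1; j++) {
                du[i][j] = 0.25 * (u[i - 1][j] + u[i + 1][j]
                                 + u[i][j - 1] + u[i][j + 1]) - u[i][j];
                double d = du[i][j] < 0.0 ? -du[i][j] : du[i][j];
                if (d > dumax)
                    dumax = d;
            }
        }
        /* implicit barrier: every du[][] entry is ready */

        /* Apply the update. */
        #pragma omp for
        for (int i = 1; i < IMAX - 1; i++)
            for (int j = 1; j < JMAX - 1; j++)
                u[i][j] += du[i][j];
    }
    return dumax;
}
```

A caller would repeat the sweep up to itmax times, or until the returned value drops below a tolerance.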

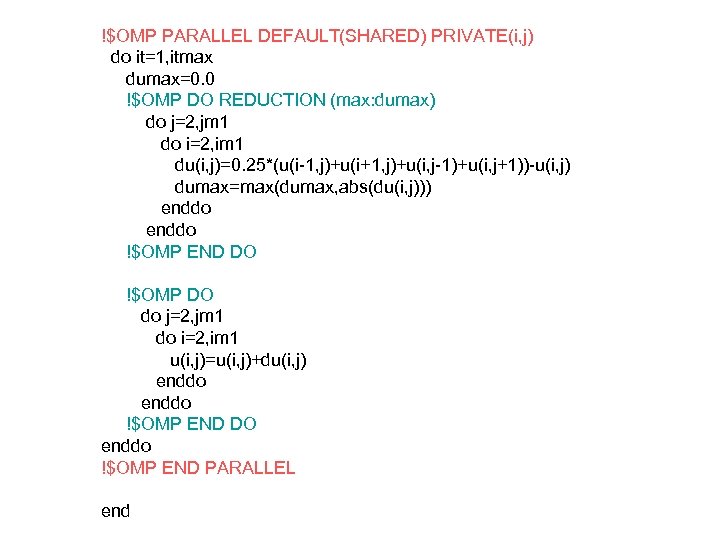

Jacobi-method: 2D Helmholtz equation
The iterative solution in centred finite-difference form where is the discrete Laplace operator and are known. If is the th iteration for this solution, we get the "residual" vector and we take the th iteration of such that the new residual is zero

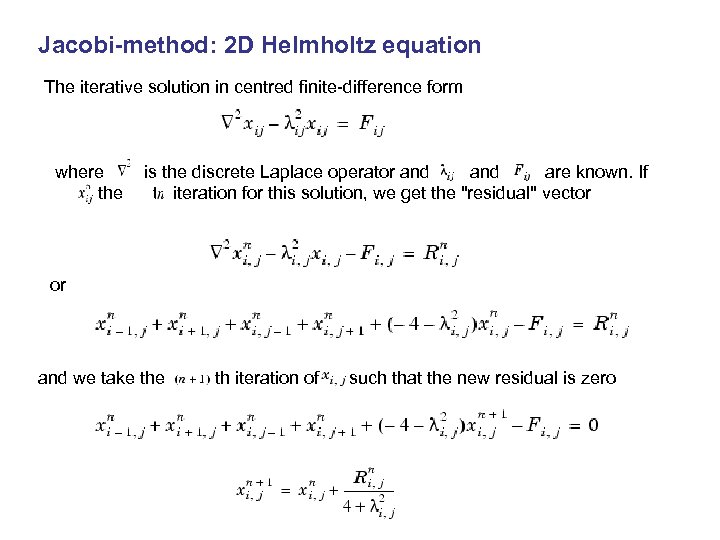

Jacobi Solver – Serial code with 2 loop nests

    k = 1;
    while (k <= maxit && error > tol) {
        error = 0.0;
        /* copy new solution into old */
        for (j=0; j

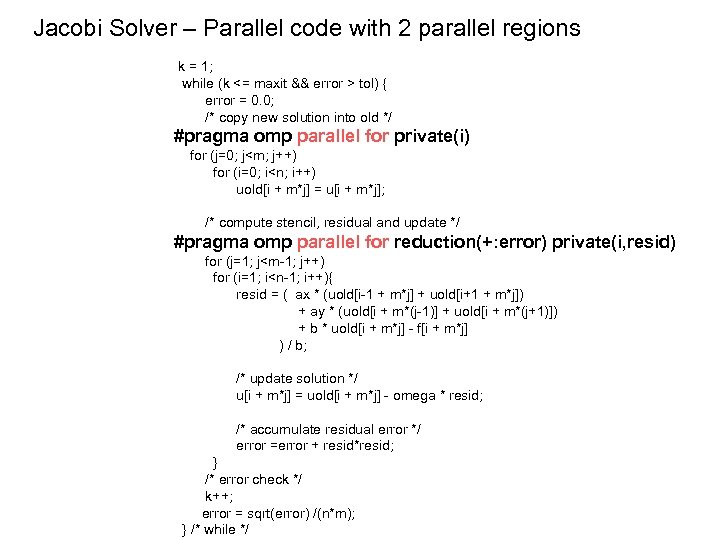

Jacobi Solver – Parallel code with 2 parallel regions

    k = 1;
    while (k <= maxit && error > tol) {
        error = 0.0;
        /* copy new solution into old */
    #pragma omp parallel for private(i)
        for (j=0; j

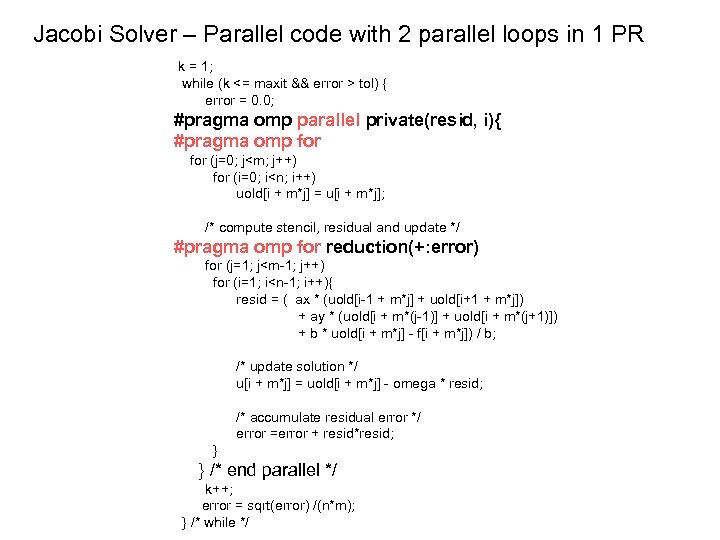

Jacobi Solver – Parallel code with 2 parallel loops in 1 PR

    k = 1;
    while (k <= maxit && error > tol) {
        error = 0.0;
    #pragma omp parallel private(resid, i){
    #pragma omp for (j=0; j

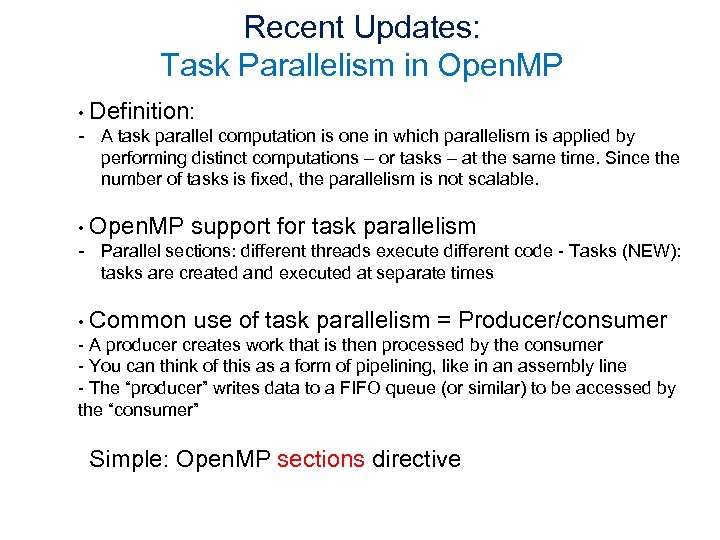

Recent Updates: Task Parallelism in OpenMP
• Definition:
  - A task parallel computation is one in which parallelism is applied by performing distinct computations – or tasks – at the same time. Since the number of tasks is fixed, the parallelism is not scalable.
• OpenMP support for task parallelism
  - Parallel sections: different threads execute different code
  - Tasks (NEW): tasks are created and executed at separate times
• Common use of task parallelism = Producer/consumer
  - A producer creates work that is then processed by the consumer
  - You can think of this as a form of pipelining, like in an assembly line
  - The “producer” writes data to a FIFO queue (or similar) to be accessed by the “consumer”
Simple: OpenMP sections directive

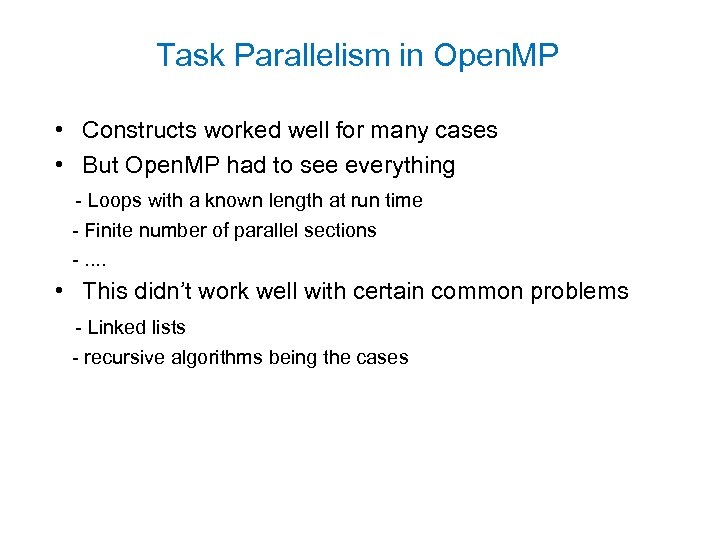

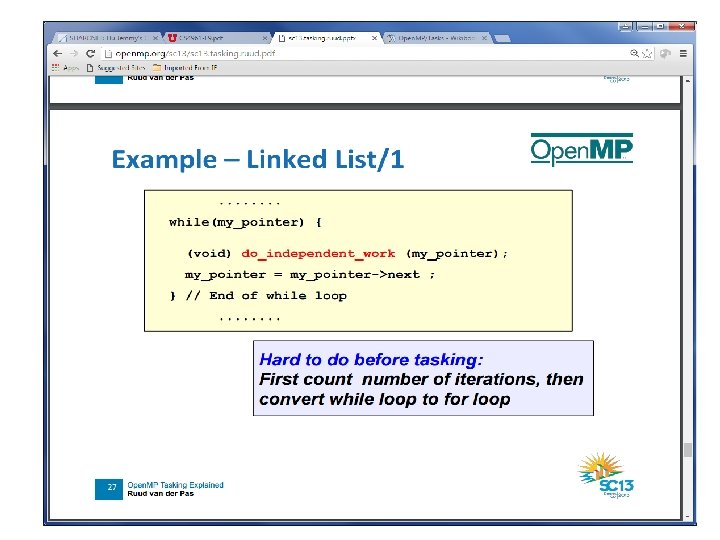

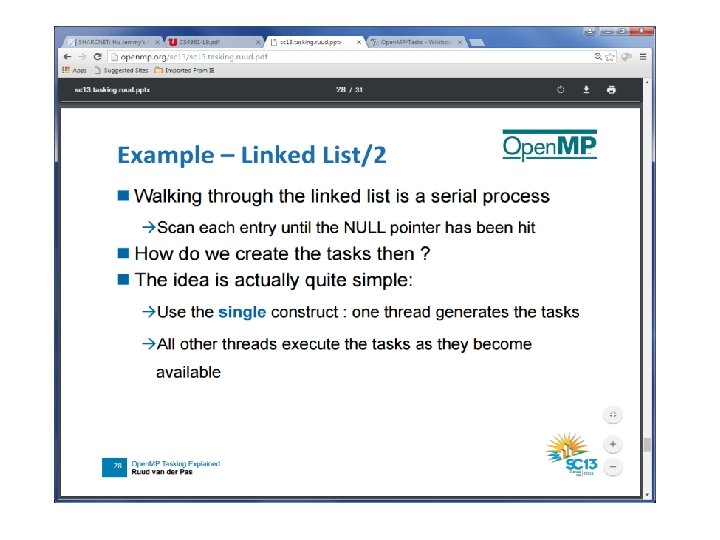

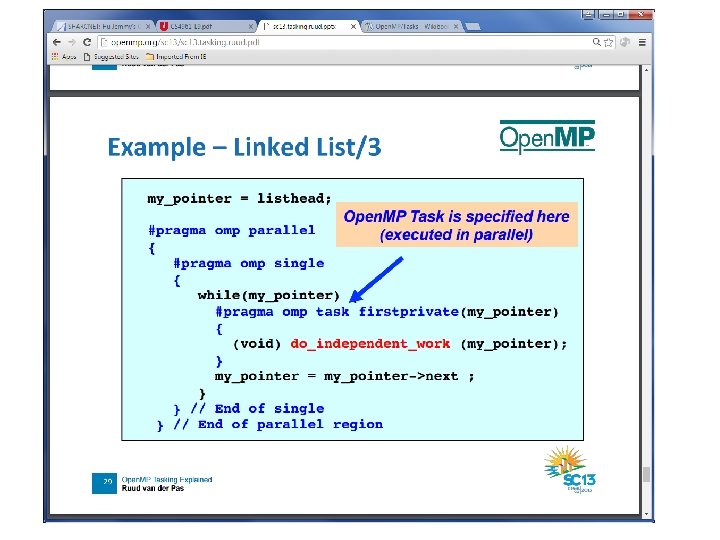

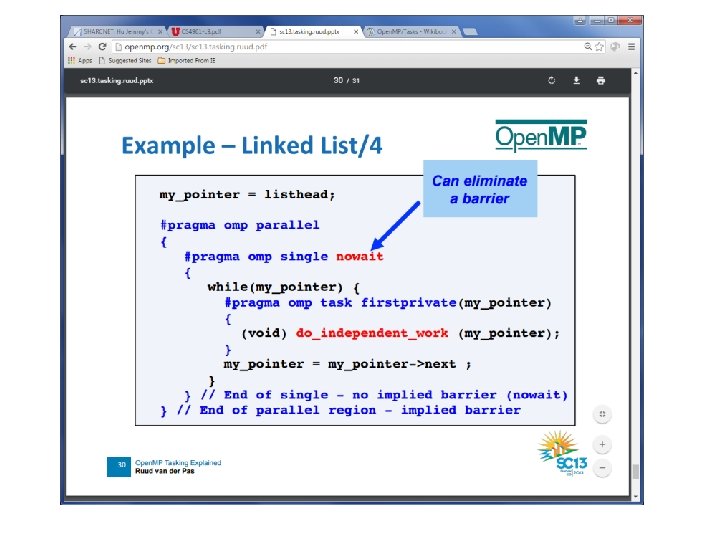

Task Parallelism in OpenMP
• Earlier constructs worked well for many cases
• But OpenMP had to see everything
- Loops with a known length at run time
- Finite number of parallel sections
- …
• This didn’t work well with certain common problems, for example:
- Linked lists
- Recursive algorithms

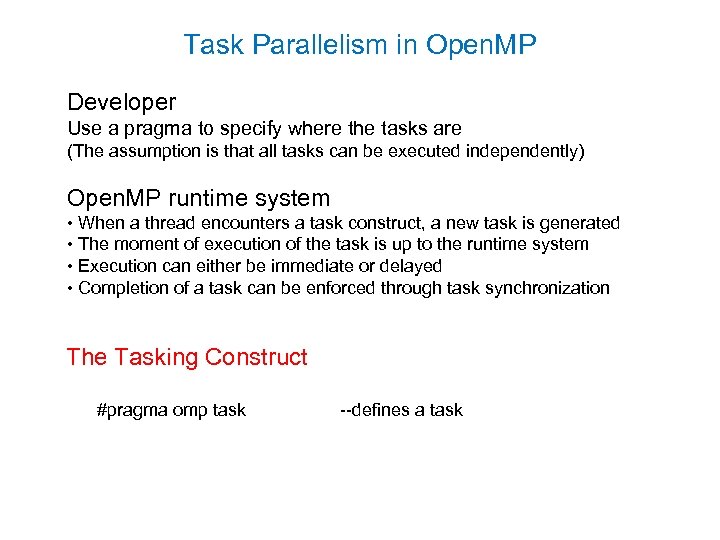

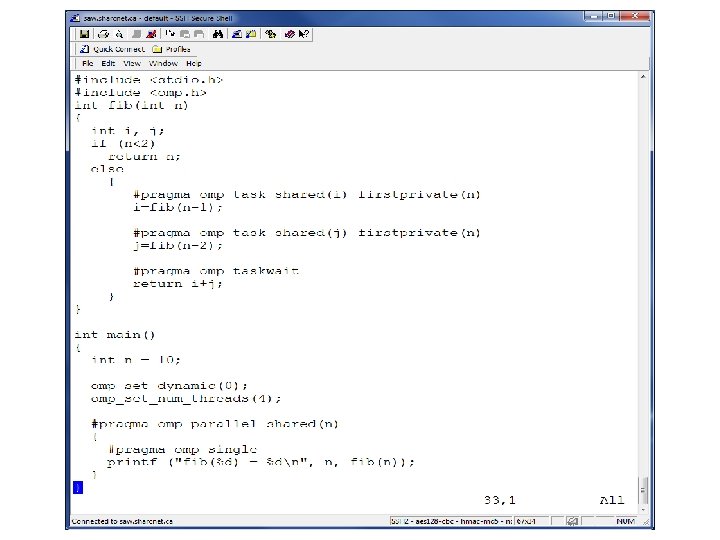

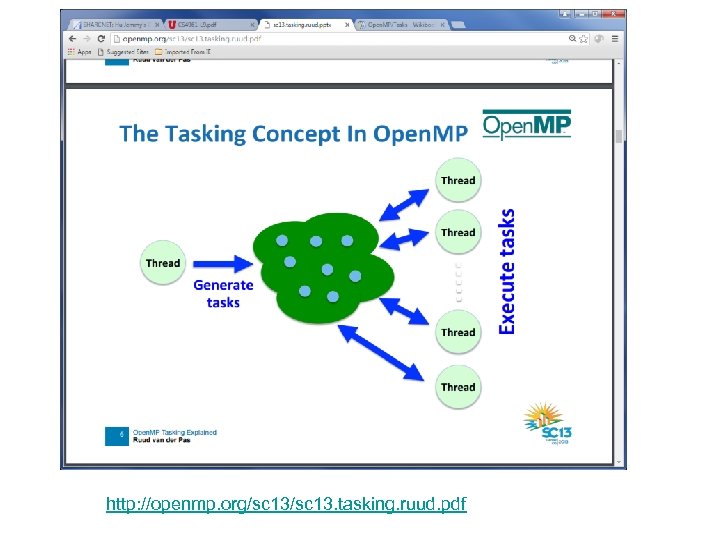

Task Parallelism in OpenMP
Developer
• Use a pragma to specify where the tasks are (the assumption is that all tasks can be executed independently)
OpenMP runtime system
• When a thread encounters a task construct, a new task is generated
• The moment of execution of the task is up to the runtime system
• Execution can either be immediate or delayed
• Completion of a task can be enforced through task synchronization
The Tasking Construct
#pragma omp task: defines a task
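A common way to apply the task construct is to let one thread walk a linked list and generate one task per node, while the rest of the team executes those tasks. The sketch below assumes a hypothetical node type `node_t` and a placeholder work function `process`; neither comes from the slides.

```c
#include <stddef.h>

/* Hypothetical list node for illustration */
typedef struct node {
    int value;
    int result;
    struct node *next;
} node_t;

/* Placeholder for the per-node work */
static int process(int v)
{
    return v * v;
}

/* Sketch only: one thread generates a task per node; any thread in the
 * team may execute each task, immediately or later. */
void process_list(node_t *head)
{
    #pragma omp parallel
    {
        #pragma omp single
        {
            for (node_t *p = head; p != NULL; p = p->next) {
                /* firstprivate(p): each task captures its own node pointer */
                #pragma omp task firstprivate(p)
                p->result = process(p->value);
            }
        }   /* implicit barrier after single: all generated tasks are done */
    }
}
```

The list length never has to be known in advance, which is exactly the case the worksharing loop constructs could not express directly.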

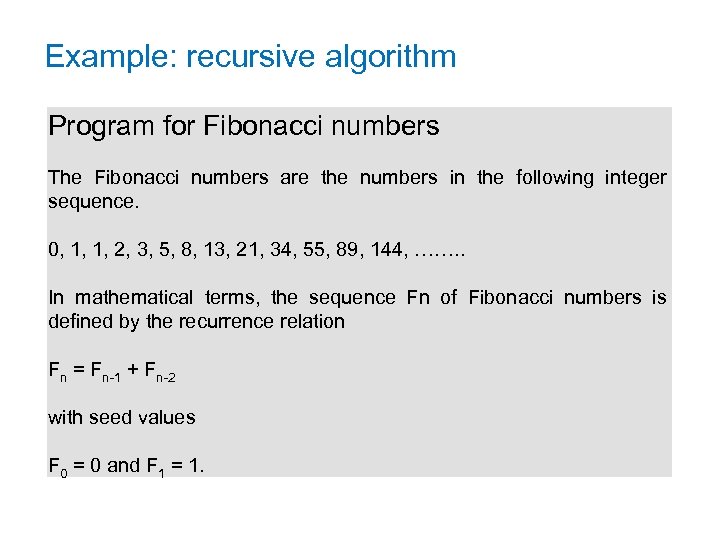

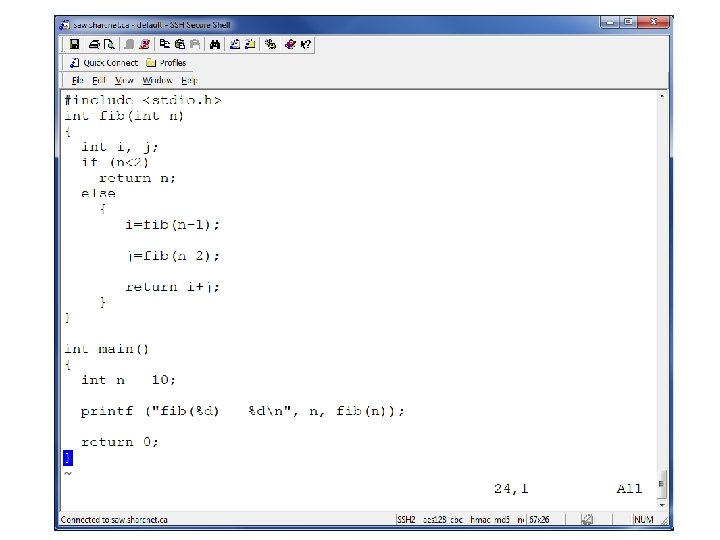

Example: recursive algorithm
Program for Fibonacci numbers
The Fibonacci numbers are the numbers in the following integer sequence: 0, 1, 1, 2, 3, 5, 8, 13, 21, 34, 55, 89, 144, …
In mathematical terms, the sequence F(n) of Fibonacci numbers is defined by the recurrence relation F(n) = F(n-1) + F(n-2), with seed values F(0) = 0 and F(1) = 1.
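The recurrence maps naturally onto tasks: each call spawns one task per recursive branch and waits for both before adding the results. This is a minimal sketch of that widely used teaching pattern; the function names `fib` and `fib_parallel` are chosen here for illustration. It is meant to show the constructs, not to be fast, since it creates a task for every call.

```c
/* Sketch only: recursive Fibonacci with one task per recursive branch. */
long fib(int n)
{
    long x, y;

    if (n < 2)
        return n;              /* seed values F(0) = 0, F(1) = 1 */

    /* x and y must be shared so the child tasks write the parent's copies */
    #pragma omp task shared(x)
    x = fib(n - 1);

    #pragma omp task shared(y)
    y = fib(n - 2);

    /* wait for both child tasks before using x and y */
    #pragma omp taskwait
    return x + y;
}

/* One thread starts the recursion; the whole team executes the tasks. */
long fib_parallel(int n)
{
    long result = 0;

    #pragma omp parallel
    {
        #pragma omp single
        result = fib(n);
    }
    return result;
}
```

In practice a cutoff is usually added so that small values of `n` are computed serially, because the task creation overhead otherwise dominates the run time.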

http://openmp.org/sc13.tasking.ruud.pdf

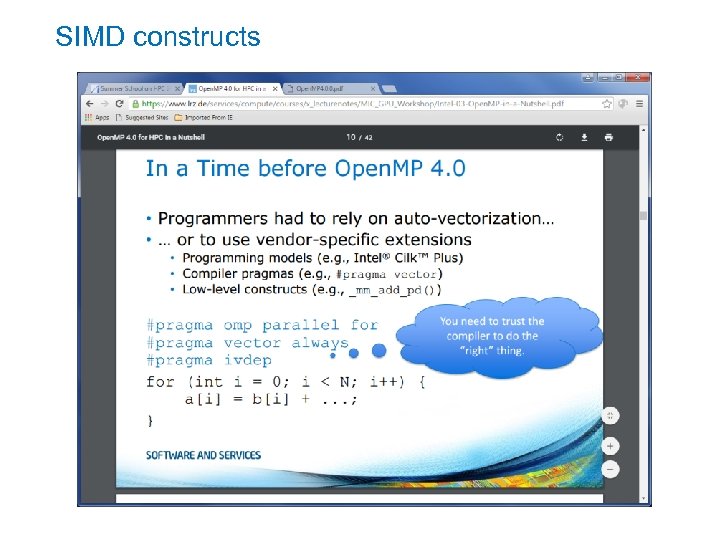

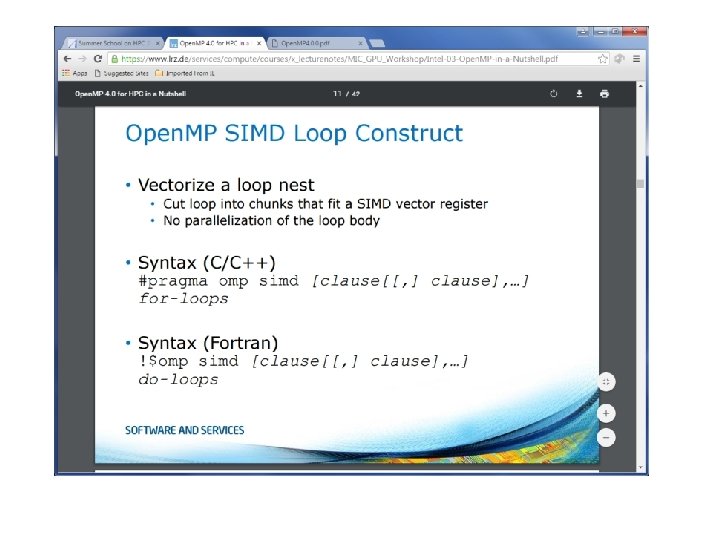

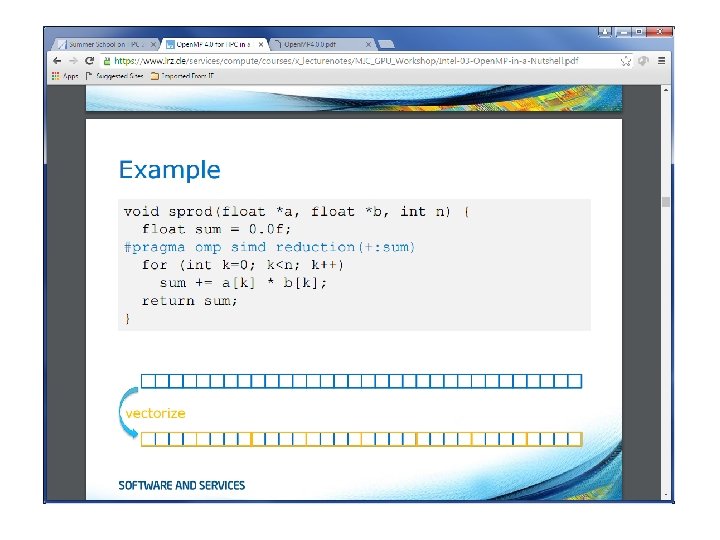

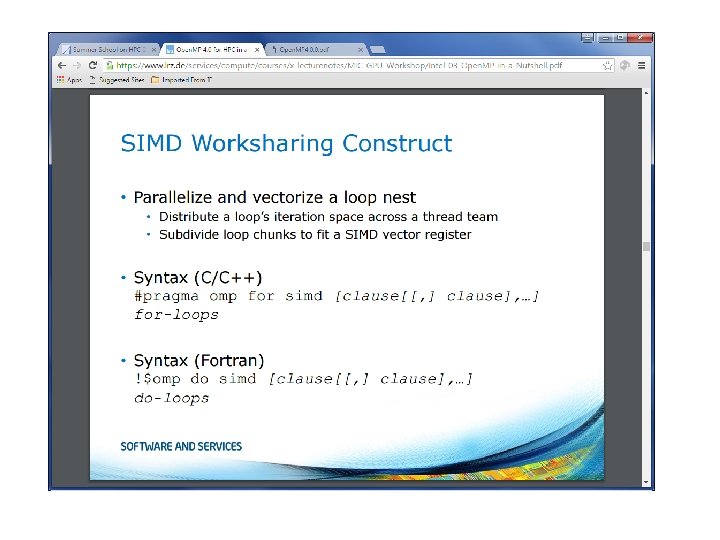

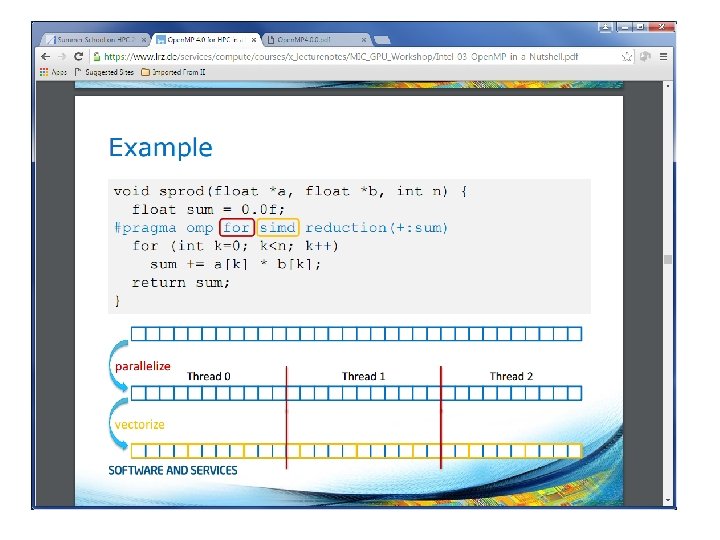

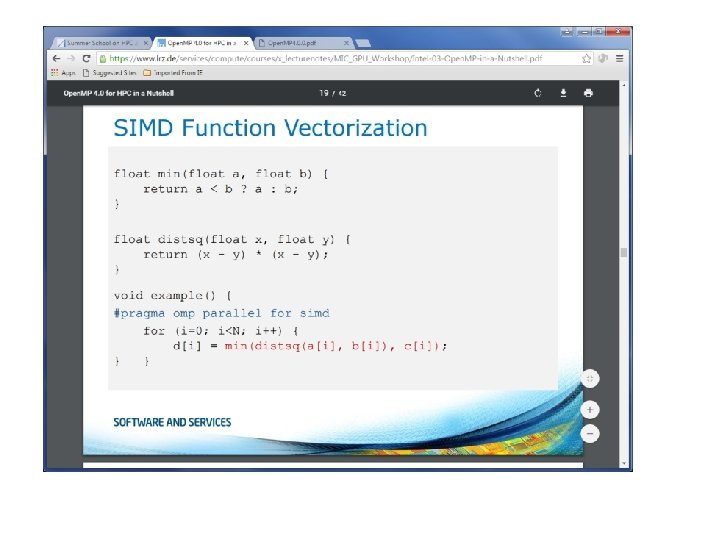

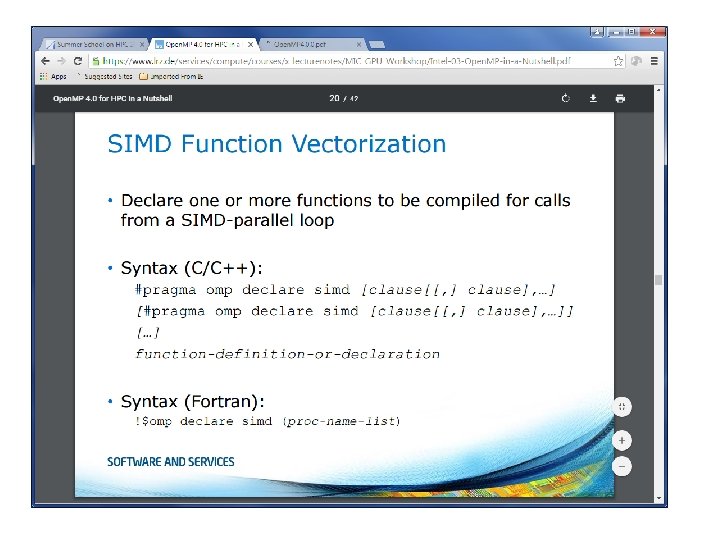

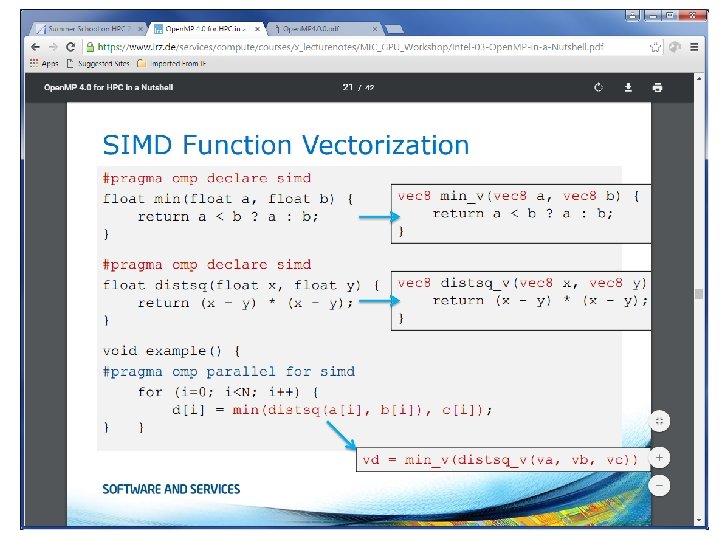

SIMD constructs
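For orientation, here is a minimal sketch of the `simd` construct introduced in OpenMP 4.0. The function names `saxpy_simd` and `dot_simd` are hypothetical and not taken from the slides. The directive asks the compiler to vectorize the loop and amounts to a promise from the programmer that the iterations are independent, so `x` and `y` are assumed not to overlap.

```c
/* Sketch only: y = a*x + y, vectorized with the simd construct.
 * Assumes x and y do not alias. */
void saxpy_simd(int n, float a, const float *x, float *y)
{
    #pragma omp simd
    for (int i = 0; i < n; i++)
        y[i] = a * x[i] + y[i];
}

/* Sketch only: dot product using a simd reduction. */
float dot_simd(int n, const float *x, const float *y)
{
    float sum = 0.0f;

    #pragma omp simd reduction(+:sum)
    for (int i = 0; i < n; i++)
        sum += x[i] * y[i];

    return sum;
}
```

The `simd` construct vectorizes within a single thread; it can be combined with worksharing as `#pragma omp for simd` to spread the loop across threads as well.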

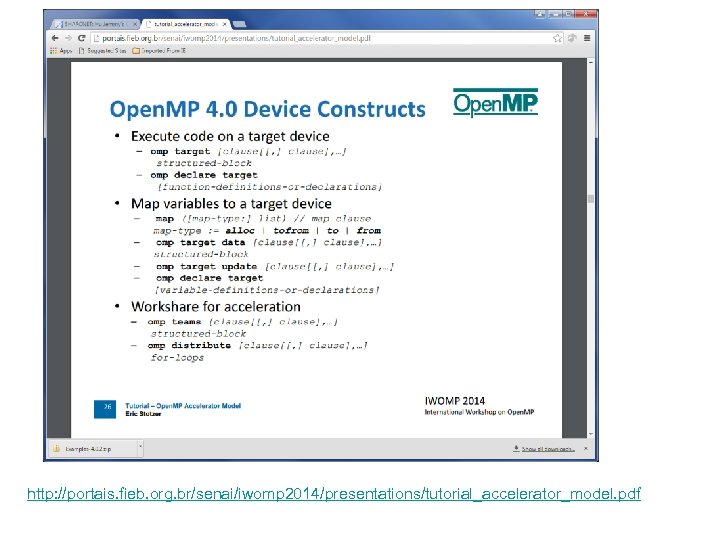

http://portais.fieb.org.br/senai/iwomp2014/presentations/tutorial_accelerator_model.pdf

OpenMP 4.5

References
1. OpenMP specifications for C/C++ and Fortran, http://www.openmp.org/
2. OpenMP tutorial: http://openmp.org/mp-documents/Intro_To_OpenMP_Mattson.pdf
3. Parallel Programming in OpenMP by Rohit Chandra, Morgan Kaufmann Publishers, ISBN 1-55860-671-8
4. http://openmp.org/mp-documents/openmp-examples-4.0.2.pdf
