- Number of slides: 44

Privacy, Economics, and Immediate Gratification: Theory and (Preliminary) Evidence
Alessandro Acquisti
Heinz School, Carnegie Mellon University
acquisti@andrew.cmu.edu

Privacy and economics
• Privacy is an economic problem…
• … even when privacy issues may not have direct economic interpretation
• Privacy is about trade-offs: pros/cons of revealing/accessing personal information
  – Individuals
  – Organizations
• … and trade-offs are the realm of economics

The economics of privacy
• Early 1980s
  – Chicago school: Posner, Stigler
• Mid 1990s
  – IT explosion: Varian, Noam, Laudon, Clarke
• After 2001
  – The Internet: personalization and dynamic behavior
  – Modeling: price discrimination, information and competition, costs of accessing customers (Taylor [2001], Calzolari and Pavan [2001], Acquisti and Varian [2001], etc.)
  – Empirical: surveys and experiments
  – Economics of (Personal) Information Security: WEIS
http://www.andrew.cmu.edu/~acquisti/econprivacy.htm

Issues in the economics of privacy that we will not be discussing today
1. Is too much privacy bad for you?
2. Do you really have zero privacy?
3. What are the costs of privacy?

What we will be discussing today
5. Do people really care about privacy?
and then, only indirectly: Who should protect your privacy?

It is true that there are potential costs of using Gmail for email storage […] The question is whether consumers should have the right to make that choice and balance the tradeoffs, or whether it will be preemptively denied to them by privacy fundamentalists out to deny consumers that choice.
-- Declan McCullagh (2004)

Privacy attitudes…
• Attitudes: usage
  – Top reason for not going online (Harris [2001])
  – 78% would increase Internet usage given more privacy (Harris [2001])
• Attitudes: shopping
  – $18 billion in lost e-tail sales (Jupiter [2001])
  – Reason for 61% of Internet users to avoid ECommerce (P&AB [2001])
  – 73% would shop more online with guarantee for privacy (Harris [2001])

… versus privacy behavior
• Behavior
  – Anecdotal evidence
    • DNA for Big Mac
  – Experiments
    • Spiekermann, Grossklags, and Berendt (2001): privacy “advocates” & cameras
  – Everyday examples
    • Dot com deathbed
    • Abundance of information sharing

Explanations
• Syverson (2003)
  – “Rational, after all” explanation
• Shostack (2003)
  – “When it matters” explanation
• Vila, Greenstadt, and Molnar (2003)
  – “Lemon market” explanation
• Are there other explanations?
  – Acquisti and Grossklags (2003): privacy and rationality

Personal information is a very peculiar economic good
• Subjective
  – “Willingness to pay” affected by considerations beyond traditional market reasoning
• Ex-post
  – Value uncertainty
  – Keeps on affecting individual after transaction

Personal information is a very peculiar economic good
• Context-dependent (states of the world)
  – Anonymity sets
  – Recombinant growth
  – Sweeney (2002): 87% of Americans uniquely identifiable from ZIP code, birth date, and sex
• Asymmetric information
  – Individual does not know how, how often, for how long her information will be used
  – Intrusions invisible and ubiquitous
  – Externalities and moral hazard
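To make the anonymity-set idea on this slide concrete, here is a minimal Python sketch. It is not Sweeney's method or data: it groups a handful of made-up records by the quasi-identifier triple (ZIP code, birth date, sex) and reports what share of records is alone in its group. The records and the helper names `anonymity_sets` and `share_unique` are hypothetical.

```python
from collections import Counter

# Hypothetical toy records (not real data): (zip_code, birth_date, sex)
RECORDS = [
    ("15213", "1980-02-11", "F"),
    ("15213", "1980-02-11", "F"),
    ("15213", "1975-07-30", "M"),
    ("15217", "1980-02-11", "F"),
    ("15217", "1991-12-01", "M"),
]

def anonymity_sets(records, fields):
    """Count how many records share each combination of the chosen fields."""
    return Counter(tuple(r[i] for i in fields) for r in records)

def share_unique(records, fields):
    """Fraction of records whose anonymity set has size 1."""
    sets = anonymity_sets(records, fields)
    unique = sum(1 for r in records if sets[tuple(r[i] for i in fields)] == 1)
    return unique / len(records)

# Each field alone may hide a person in a crowd; the combination may not.
zip_only = share_unique(RECORDS, fields=(0,))
all_three = share_unique(RECORDS, fields=(0, 1, 2))
print(f"unique on ZIP only: {zip_only:.0%}")
print(f"unique on ZIP + birth date + sex: {all_three:.0%}")
```

In this toy set nobody is unique on ZIP code alone, yet most records become unique once the three fields are combined, which is the sense in which the value of a piece of personal information depends on context.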

Personal information is a very peculiar economic good
• Both private and public good aspects
  – As information, it is non rival and non excludable
  – Yet the more other parties use that personal information, the higher the risks for original data owner
  – Lump sum vs. negative annuity
• Buy vs. sell
  – Individuals value differently protection and sale of same piece of information
• Like insurance, but…

… maybe because…
• … privacy issues actually originate from two different markets
  – Market for personal information
  – Market for privacy
• Related, but not identical
• Confusion leads to inconsistencies
  – Different rules, attitudes, considerations
    • Public vs. private
    • Selling vs. buying
    • Specific vs. generic
    • Value for other people vs. damage to oneself
    • Lump sum vs. negative annuity

Privacy and rationality
• Traditional economic view: forward looking agent, utility maximizer, Bayesian updater, perfectly informed
  – Both in theoretical works on privacy
  – And in empirical studies
• Exceptions: PEW Survey 2000, Annenberg Survey 2003

Yet: privacy trade-offs
• Protect:
  – Immediate costs or loss of immediate benefits
  – Future (uncertain) benefits
• Do not protect:
  – Immediate benefits
  – Future (uncertain) costs
(sometimes, the reverse may be true)

Why is this problematic?
• Incomplete information
• Bounded rationality
• Psychological/behavioral distortions
Theory: Acquisti ACM EC 04
Empirical approach: Acquisti and Grossklags WEIS 03, 04

1. Incomplete information
• What information does the individual have access to when she makes privacy-sensitive decisions?
  – For instance, is she aware of privacy invasions and associated risks?
  – Is she aware of benefits she may miss by protecting her personal data?
  – What is her knowledge of the existence and characteristics of protective technologies?
• Privacy:
  – Asymmetric information
    • Exacerbating: e.g., RFID, GPS
  – Material and immaterial costs and benefits
  – Uncertainty vs. risk, ex post evaluations

2. Bounded rationality
• Is the individual able to consider all the parameters relevant to her choice?
  – Or is she limited by bounded rationality?
  – Herbert Simon’s “mental models” (or shortcuts)
• Privacy:
  – Decisions must be based on several stochastic assessments and intricate “anonymity sets”
  – Inability to process all the stochastic information related to risks and probabilities of events leading to privacy costs and benefits
  – E.g., HIPAA

3. Psychological/behavioral distortions
• Privacy and deviations from rationality
  – Optimism bias
  – Complacency towards large risks
  – Inability to deal with prolonged accumulation of small risks
  – Coherent arbitrariness
  – “Hot/cold” theory
  – Attitude (generic) vs. behavior (specific)
    • Fishbein and Ajzen. Belief, Attitude, Intention and Behavior: An Introduction to Theory and Research, 1975
  – Hyperbolic discounting, immediate gratification

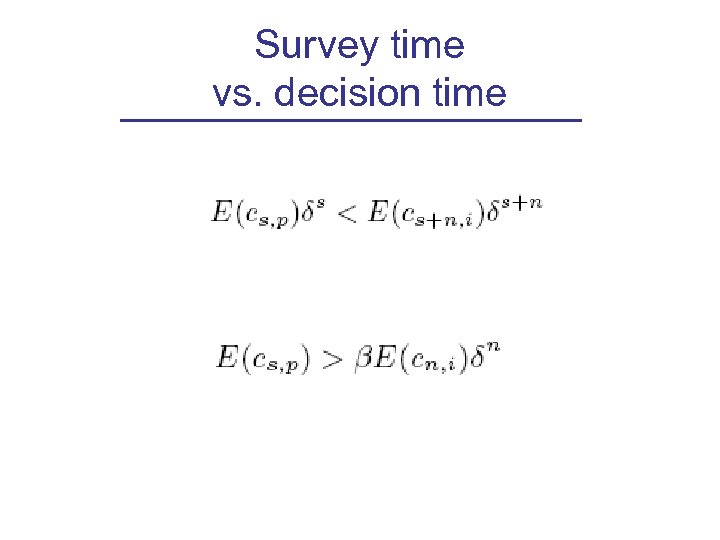

Hyperbolic discounting and privacy
• Hyperbolic discounting (Laibson 94; O’Donoghue and Rabin 01)
• Procrastination, immediate gratification
• We do not discount future events in a time-consistent way
• We tend to assign lower weight to future events
• Compare:
  – Privacy attitude in survey versus actual behavior
  – Privacy behavior when risks are more or less temporally close
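The gap between survey-time attitude and decision-time behavior can be sketched numerically. The slides do not state a functional form, so this sketch assumes the quasi-hyperbolic (β, δ) weights commonly associated with Laibson and with O’Donoghue and Rabin: a payoff now has weight 1, a payoff t ≥ 1 periods ahead has weight β·δ^t. The cost, benefit, and parameter values are hypothetical and chosen only to show the reversal.

```python
def discount(t, beta, delta):
    """Quasi-hyperbolic weight on a payoff t periods ahead (t = 0 is now)."""
    return 1.0 if t == 0 else beta * delta ** t

def value_of_protecting(periods_until_choice, cost, benefit, beta, delta):
    """Perceived net value, seen from today, of paying `cost` at the choice
    date in order to avoid a privacy loss worth `benefit` one period later."""
    t = periods_until_choice
    return (-cost * discount(t, beta, delta)
            + benefit * discount(t + 1, beta, delta))

# Hypothetical numbers, not taken from the study.
COST, BENEFIT = 10.0, 15.0
BETA, DELTA = 0.6, 0.95

# Survey time: the choice lies 5 periods ahead, so cost and benefit are both
# "future" and protecting looks worthwhile.
survey_view = value_of_protecting(5, COST, BENEFIT, BETA, DELTA)

# Decision time: the cost is immediate and the benefit is still in the future.
decision_view = value_of_protecting(0, COST, BENEFIT, BETA, DELTA)

# Benchmark: with beta = 1 the agent is an exponential (time-consistent)
# discounter and still protects at decision time.
consistent_view = value_of_protecting(0, COST, BENEFIT, 1.0, DELTA)

print(f"survey time:     {survey_view:+.2f}")
print(f"decision time:   {decision_view:+.2f}")
print(f"beta = 1 (now):  {consistent_view:+.2f}")
```

Under these assumed numbers the same person plans to protect when asked in advance and declines when the cost falls due, without any change in information or preferences.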

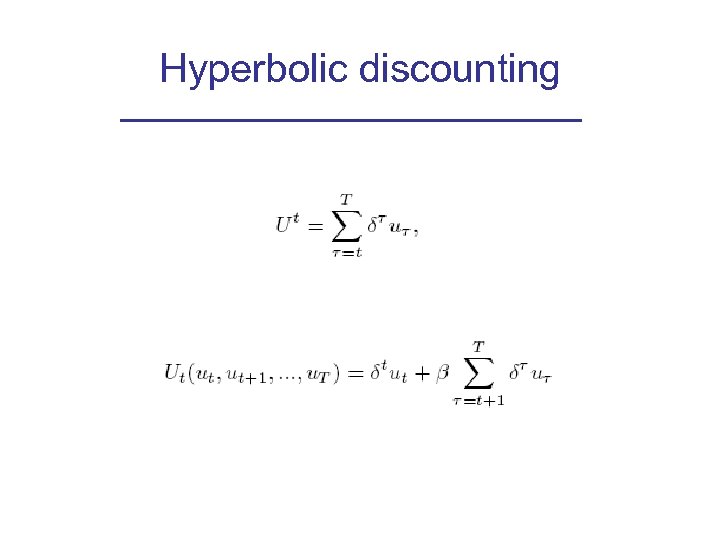

Hyperbolic discounting

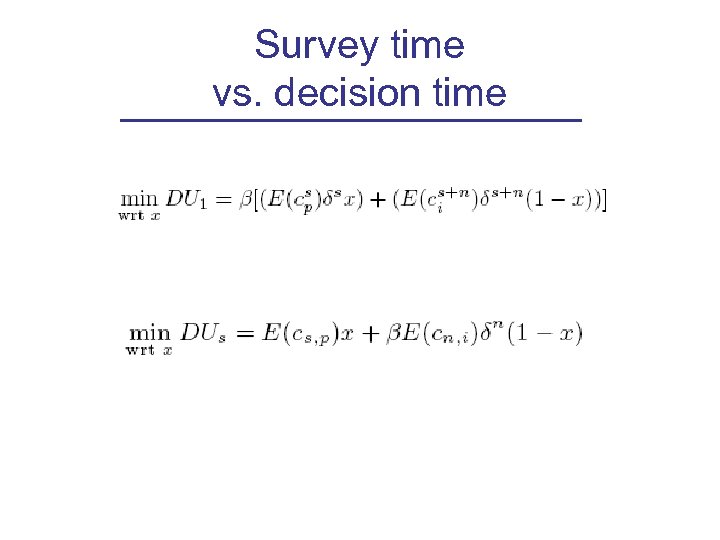

Survey time vs. decision time

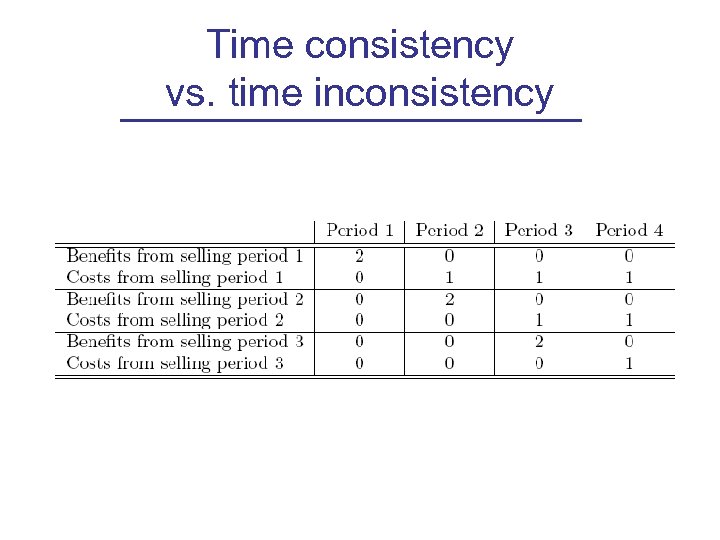

Time consistency vs. time inconsistency

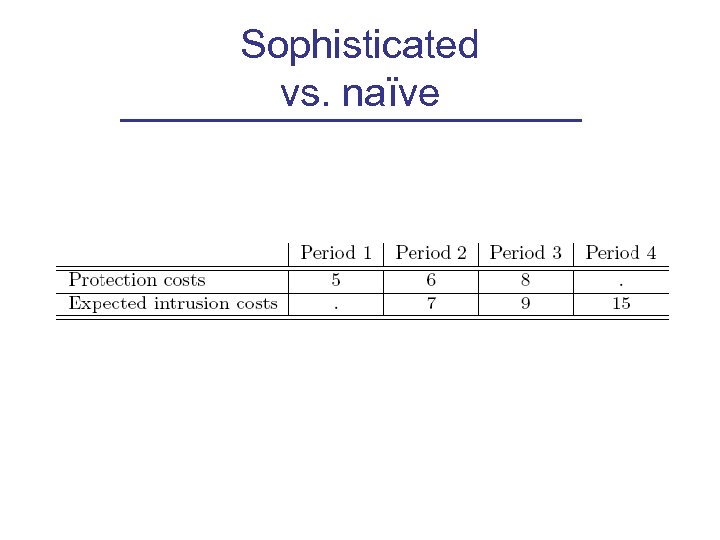

Sophisticated vs. naïve

Consequences
• Confounding factors: uncertainty, risk aversion, neutrality, etc.
• Rationality model not appropriate to describe individual privacy behavior
• Time inconsistencies lead to under protection and over release of personal information
• Genuinely privacy concerned individuals may end up not protecting their privacy
• Also sophisticated users will not protect themselves against risks
• Large risks accumulate through small steps
• Not knowing the risk is not always the issue
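The point that large risks accumulate through small steps can be illustrated with a short calculation. This is a sketch under a strong simplifying assumption that is not in the slides: each disclosure carries the same small, independent probability of leading to harm. The 1% figure and the function name `cumulative_risk` are hypothetical.

```python
def cumulative_risk(p_per_event, n_events):
    """Probability of at least one bad outcome across n independent events."""
    return 1.0 - (1.0 - p_per_event) ** n_events

P = 0.01  # hypothetical 1% risk per disclosure
for n in (1, 10, 100, 500):
    print(f"{n:>3} disclosures -> {cumulative_risk(P, n):.1%}")
```

Each single step looks negligible, which is why a decision maker who evaluates disclosures one at a time can end up carrying a large overall risk.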

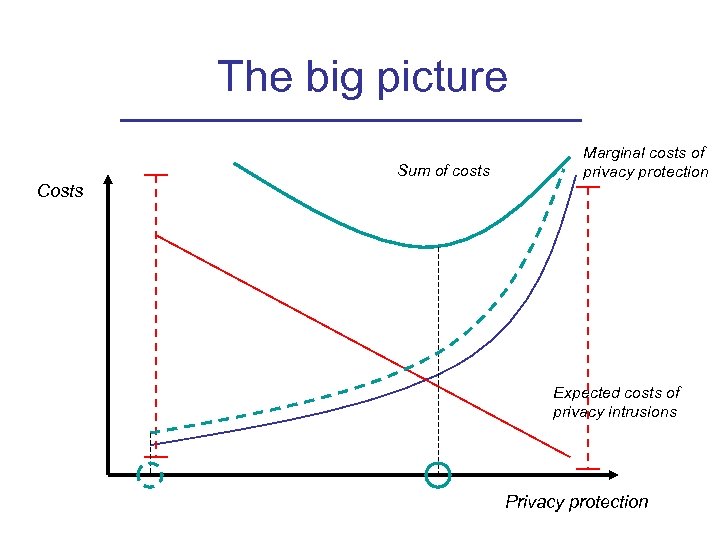

The big picture
[Figure: costs (vertical axis) against privacy protection (horizontal axis). Curves: marginal costs of privacy protection, expected costs of privacy intrusions, sum of costs.]
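The figure's logic, a protection cost that rises and an expected intrusion cost that falls as protection increases, with their sum lowest somewhere in between, can be sketched as follows. The two cost functions are invented for illustration; the slide gives no functional forms or units.

```python
def protection_cost(x):
    """Hypothetical cost of reaching protection level x (linear in x)."""
    return 2.0 * x

def expected_intrusion_cost(x):
    """Hypothetical expected cost of intrusions, falling as protection rises."""
    return 8.0 / (1.0 + x)

def total_cost(x):
    """Sum of the two curves, as in the figure."""
    return protection_cost(x) + expected_intrusion_cost(x)

# Search a grid of protection levels from 0.00 to 5.00 for the lowest total.
levels = [i / 100 for i in range(0, 501)]
best_level = min(levels, key=total_cost)

print(f"no protection:   total cost {total_cost(0.0):.2f}")
print(f"best level {best_level:.2f}: total cost {total_cost(best_level):.2f}")
print(f"max protection:  total cost {total_cost(5.0):.2f}")
```

With these assumed curves neither zero nor maximal protection minimizes the total, so the interesting question becomes whether individuals actually locate that interior point.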

Survey and experiment
• Phase One: pilot
• Phase Two: ~100 questions, 119 subjects from CMU list. Paid, online survey (CMU Berkman Fund)
• Goals
  – Contrast three sets of data
    • Privacy attitudes
    • Privacy behavior
    • Market characteristics, information, and psychological distortions
  – Test rationality assumption
  – Explain behavior and dichotomy
• Phase Three: experiment

Questions
1. Demographics and IT usage
2. Knowledge of privacy risks
3. Knowledge of protection
4. Attitudes towards privacy (generic)
5. Attitudes towards privacy (specific)
6. Risk neutrality/aversion (unframed)
7. Strategic/unstrategic behavior (unframed)
8. Hyperbolic discounting (unframed)
9. Buy and sell value for same piece of information
10. Behavior, past: “Sell” behavior (i.e., give away information)
11. Behavior, past: “Buy” behavior (i.e., protect information)

Demographics
• Age: 19-55 (average: 24)
• Education: mostly college educated
• Household income: from <15k to 120k+
• Nationalities: USA 83%
• Jobs: full-time students 41.32%, the rest in full time/part time jobs or unemployed

Preliminary results

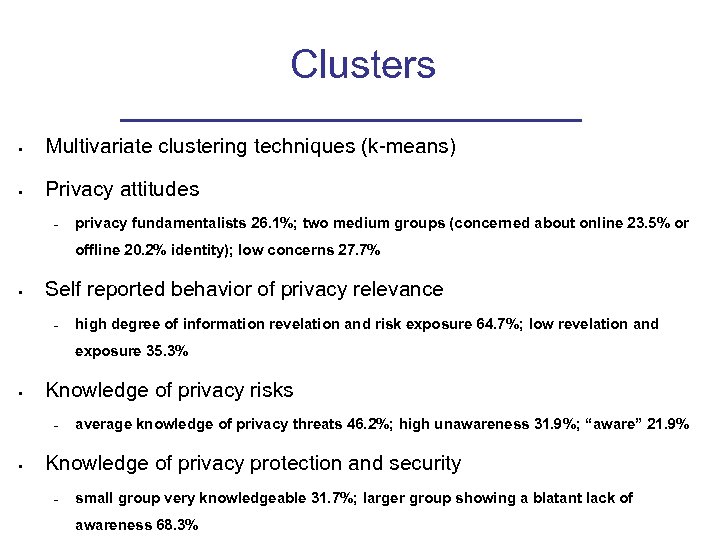

Clusters
• Multivariate clustering techniques (k-means)
• Privacy attitudes
  – privacy fundamentalists 26.1%; two medium groups (concerned about online 23.5% or offline 20.2% identity); low concerns 27.7%
• Self reported behavior of privacy relevance
  – high degree of information revelation and risk exposure 64.7%; low revelation and exposure 35.3%
• Knowledge of privacy risks
  – average knowledge of privacy threats 46.2%; high unawareness 31.9%; “aware” 21.9%
• Knowledge of privacy protection and security
  – small group very knowledgeable 31.7%; larger group showing a blatant lack of awareness 68.3%
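For readers unfamiliar with k-means, the following is a minimal pure-Python sketch of the standard Lloyd iteration applied to made-up survey scores. It is not the authors' analysis: the two score dimensions, the eight responses, the choice of three clusters, and the hand-picked starting centroids are all assumptions made for a reproducible illustration.

```python
def kmeans(points, centroids, iterations=20):
    """Plain Lloyd's algorithm: assign each point to its nearest centroid,
    then move each centroid to the mean of its assigned points."""
    labels = []
    for _ in range(iterations):
        labels = [
            min(range(len(centroids)),
                key=lambda k: sum((a - b) ** 2 for a, b in zip(p, centroids[k])))
            for p in points
        ]
        new_centroids = []
        for k in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:
                new_centroids.append(
                    tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centroids.append(centroids[k])  # keep an empty cluster in place
        if new_centroids == centroids:  # assignments have stabilized
            break
        centroids = new_centroids
    return labels, centroids

# Hypothetical responses on a 1-7 scale: (stated concern, protective behavior)
RESPONSES = [(7, 6), (6, 7), (7, 7), (2, 1), (1, 2), (1, 1), (6, 2), (7, 1)]
# Hand-picked starting centroids so the run is deterministic.
START = [(7.0, 7.0), (1.0, 1.0), (7.0, 1.0)]

labels, centroids = kmeans(RESPONSES, START)
for k, center in enumerate(centroids):
    share = labels.count(k) / len(labels)
    print(f"cluster {k}: center {center}, share {share:.1%}")
```

In this toy data the third cluster (high stated concern, low protective behavior) is the kind of group the attitude/behavior dichotomy is about. A real analysis would normally try several random starts and several values of k.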

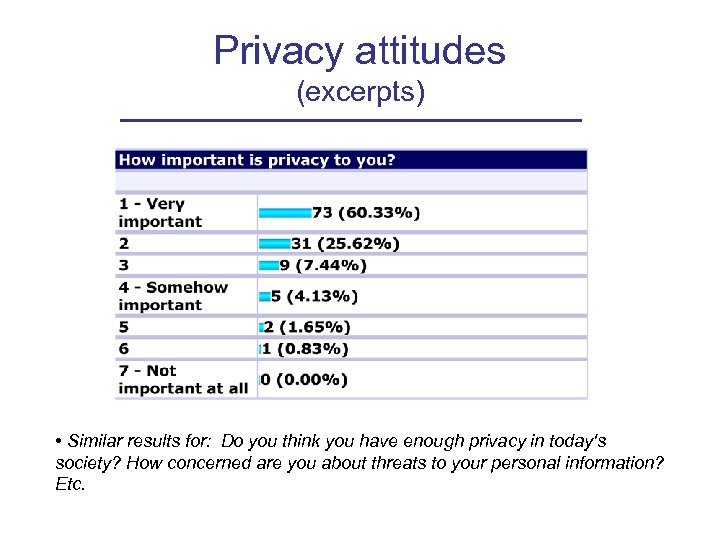

Privacy attitudes (excerpts)
• Similar results for: Do you think you have enough privacy in today's society? How concerned are you about threats to your personal information? Etc.

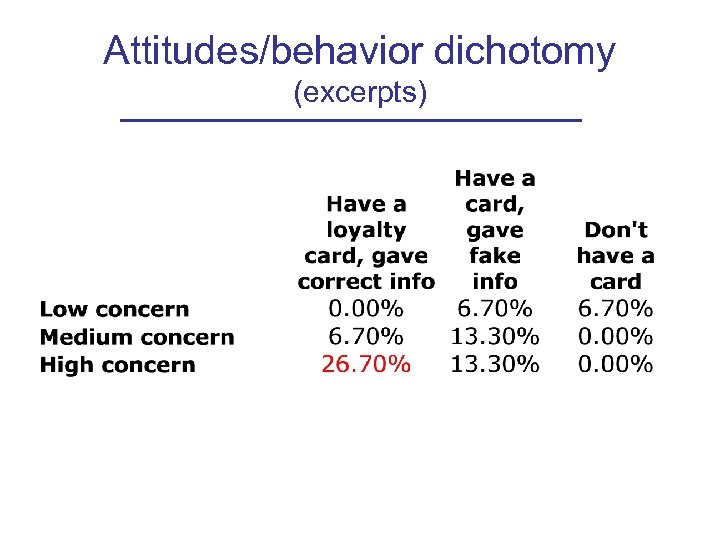

Attitudes/behavior dichotomy (excerpts)

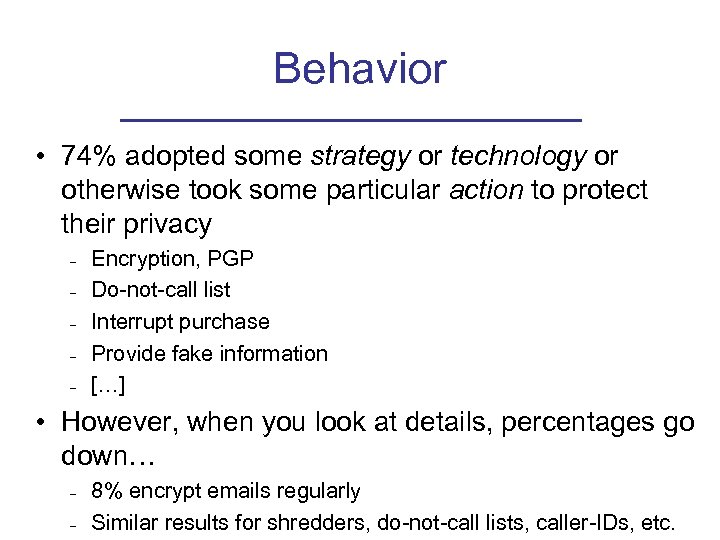

Behavior
• 74% adopted some strategy or technology or otherwise took some particular action to protect their privacy
  – Encryption, PGP
  – Do-not-call list
  – Interrupt purchase
  – Provide fake information
  – […]
• However, when you look at details, percentages go down…
  – 8% encrypt emails regularly
  – Similar results for shredders, do-not-call lists, caller-IDs, etc.

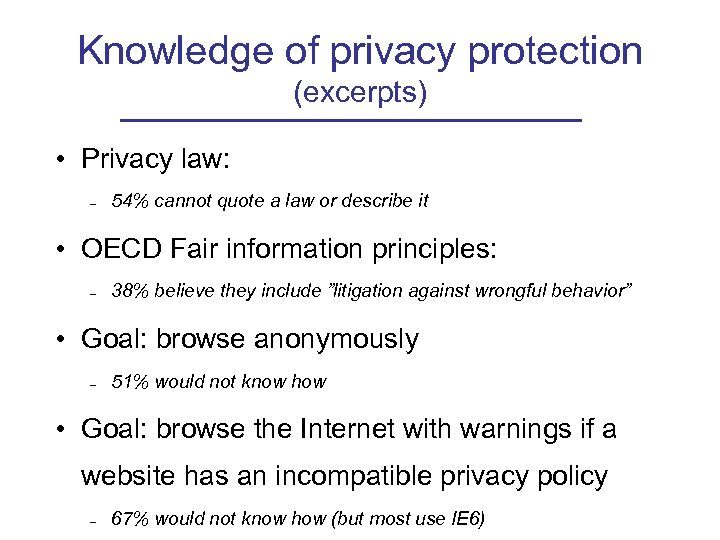

Knowledge of privacy protection (excerpts)
• Privacy law:
  – 54% cannot quote a law or describe it
• OECD Fair information principles:
  – 38% believe they include “litigation against wrongful behavior”
• Goal: browse anonymously
  – 51% would not know how
• Goal: browse the Internet with warnings if a website has an incompatible privacy policy
  – 67% would not know how (but most use IE 6)

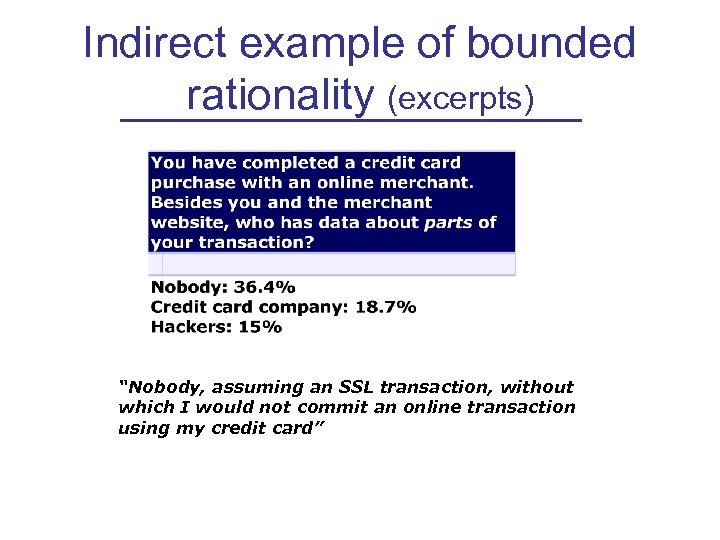

Indirect example of bounded rationality (excerpts)
“Nobody, assuming an SSL transaction, without which I would not commit an online transaction using my credit card”

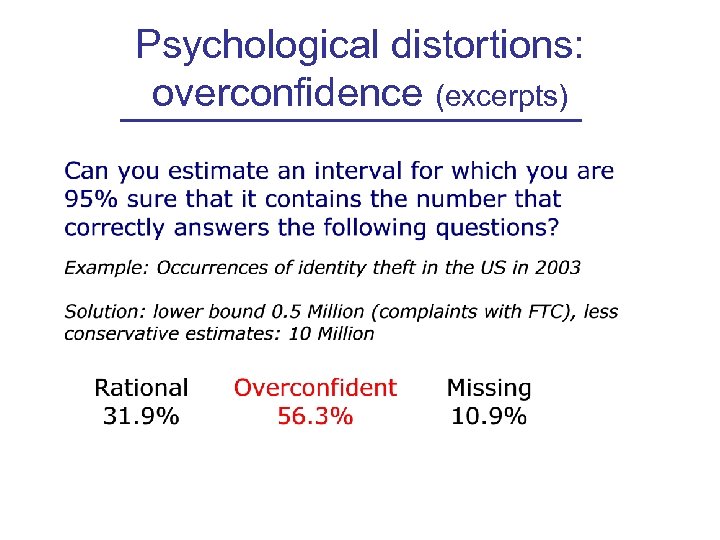

Psychological distortions: overconfidence (excerpts)

Unframed
• (Economic traits)
• Evidence of:
  – Hyperbolic discounting
  – Strong risk aversion
  – Non-strategic behavior (“Beauty contest” game)
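The slides do not say which version of the “Beauty contest” game was used. As an illustration only, the sketch below assumes the common textbook variant: each player picks a number from 0 to 100, and the winner is whoever is closest to 2/3 of the average. It also assumes that a player who does not reason strategically guesses 50, and that each further step of reasoning best-responds to the previous step. The function names are hypothetical.

```python
def level_k_guess(k, p=2 / 3, level0=50.0):
    """Guess after k rounds of 'best respond to what the others will guess'."""
    return level0 * p ** k

def winning_target(guesses, p=2 / 3):
    """The target number: p times the average of all guesses."""
    return p * sum(guesses) / len(guesses)

# Deeper strategic reasoning pushes the guess toward 0, the equilibrium
# of this variant; non-strategic players stay near the starting point.
for k in (0, 1, 2, 5, 10):
    print(f"level {k:>2}: guess {level_k_guess(k):6.2f}")

# If everyone guesses 50, the winning target is already far below 50.
print(f"target when all guess 50: {winning_target([50, 50, 50]):.2f}")
```

Guesses that stay close to the level-0 value are what “non-strategic behavior” would look like in this variant.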

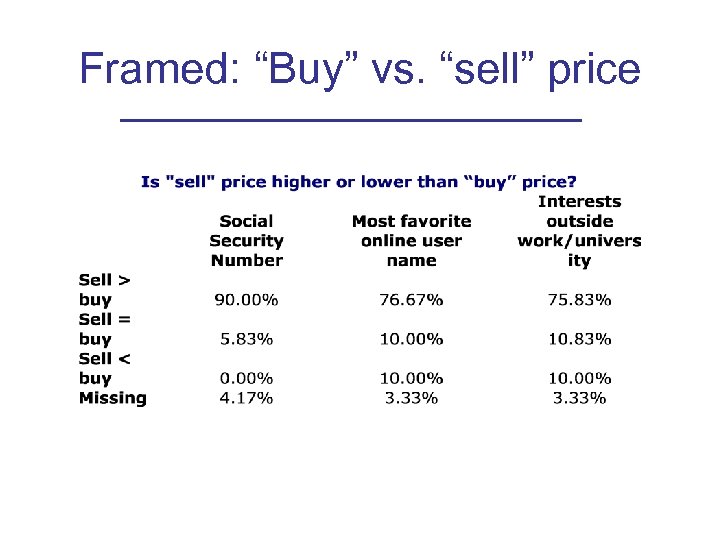

Framed: “Buy” vs. “sell” price

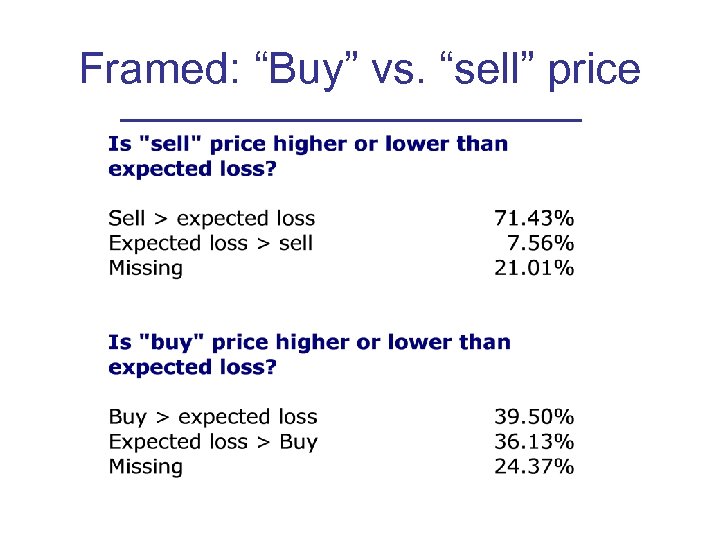

Framed: “Buy” vs. “sell” price

Framed: use of protection and discounting
• 55% have registered their number on a do-not-call list
• 53% regularly use their answering machine or caller-ID to screen calls
• 38% have demanded to be removed from specific calling lists
….
• 17% put a credit alert on their credit card

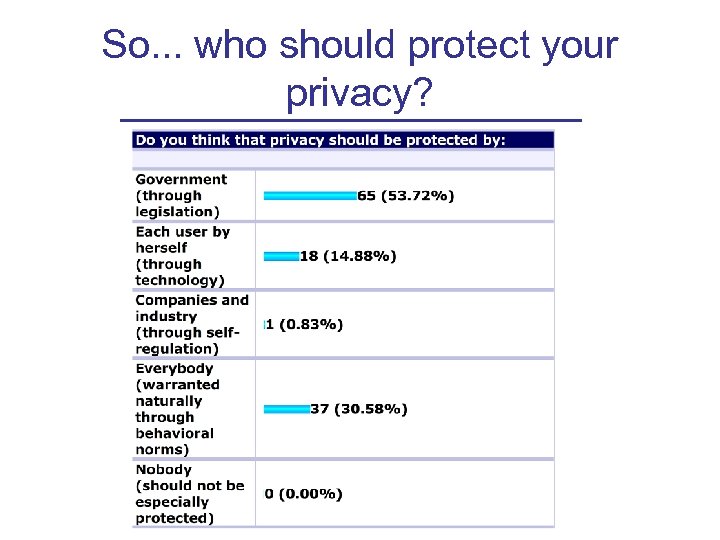

So... who should protect your privacy?

Conclusions
• Theory
  – Time inconsistencies may lead to under-protection and over-release of personal information
  – Genuinely privacy concerned individuals may end up not protecting their privacy
  – Generic attitude, specific behavior
• Evidence
  – Sophisticated attitudes, and (somewhat) sophisticated behavior
  – Evidence of overconfidence, incorrect assessment of own behavior, incomplete information about risks and protection, buy/sell dichotomy

Conclusions
• Implications
  – Rationality model not appropriate to describe individual privacy behavior
  – Privacy easier to protect than to sell
  – Self-regulation alone, or reliance on technology and user responsibility alone, will not work
  – Economics can show what to protect, what to share
  – Technology can implement chosen directions
  – Law can send appropriate signals to the market in order to enforce technology
