f34e82d57406231fdcec5ed19b416e8a.ppt

- Number of slides: 44

Policy Evaluation Antoine Bozio Institute for Fiscal Studies University of Oxford - January 2008

Outline
I. Why is policy evaluation important?
1. Policy view
2. Academic view
II. What are the evaluation problems?
1. The Quest for the Holy Grail: causality
2. The generic problem: the counterfactual
3. Specific problems: selection, endogeneity
III. The evaluation methods
1. Randomised social experiments
2. Controlling for observables: regression, matching
3. Natural experiments: diff-in-diff, regression discontinuity
4. Instrumental variable strategy

I. Why is policy evaluation important? (policy view)
1. Policy interventions are very expensive: the UK government spends 41.5% of GDP
=> Need to know whether money is well spent
=> There are many alternative policies possible
2. Evaluation is key to modern democracies
– Citizens can differ in their preferences and in the goals they want policy to achieve => politics
– Policies are means to achieve these goals
– Citizens need to be informed about the efficiency of these means => policy evaluation

I. Why is policy evaluation important? (policy view)
3. A policy’s effects are rarely obvious
– Setting aside strong prior beliefs (ideology), policies can have wide-ranging effects: the economy is very complex and hard to predict
– Economic theory is very useful but leaves many policy conclusions indeterminate => they depend on parameters (individuals’ behaviour, markets…)
– Correlation is not causation…

I. Why is policy evaluation important? (academic view)
Evaluation is now a crucial part of applied economics:
- To estimate parameters
- To test models and theories
Evaluation techniques have become a field in themselves
- Many advances in the last ten years
- Turning away from descriptive correlations and aiming at “causal relationships”

I. Why is policy evaluation important? (academic view)
Many fields of economics now rely heavily on evaluation work
• Labour market policies
• Impact of taxes
• Impact of savings incentives
• Education policies
• Aid to developing countries
=> Use of micro data
=> Different from macro analysis (cross-country…)

II. What are the evaluation problems?
1. The Quest for the Holy Grail: causal relationships
2. The generic problem: the counterfactuals are missing
3. Specific obstacles: selection, endogeneity

II – 1. The Quest for Causality
Correlation is not causality!
• “Post hoc, ergo propter hoc”: looking at what happens after the introduction of a policy is not proper evaluation.
– Long-term trends
– Macroeconomic changes
– Selection effects
• Rubin’s model for causal inference: the experimental setting and language
- See Holland (1986)

II – 1. The Quest for Causality
We want to establish the causal effect of a policy T on a population U composed of agents u
=> To measure how the treatment, or cause, (T) affects the outcome of interest (Y)
=> Define Y(u) as the outcome of interest defined over U (it can measure income, employment status, health, …)

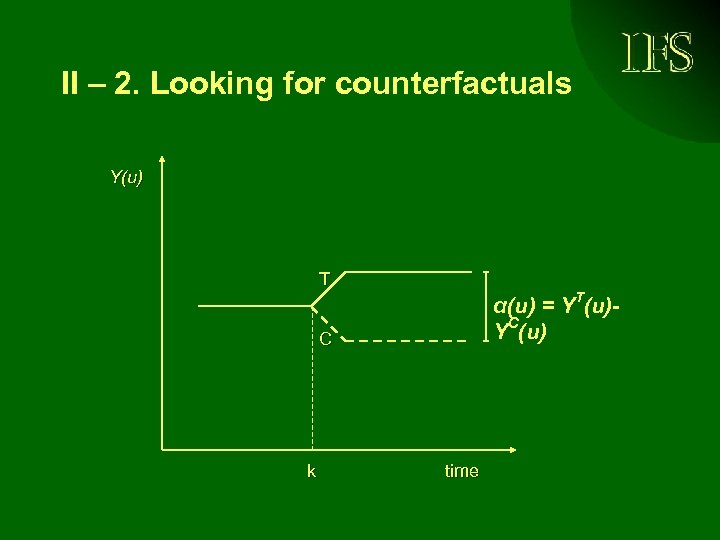

II – 2. Looking for counterfactuals
Looking for the counterfactual: what would have happened to this person’s behaviour under an alternative policy?
E.g.:
- Do people work more when marginal taxes are lower?
- Do people earn more when they have more education?
- Do the unemployed find a job more easily when unemployment benefit duration is reduced?

II – 2. Looking for counterfactuals
• Treated: agent that has been exposed to treatment (T)
• Control: agent that has not been exposed to treatment (C, non T)
• The role of Y is to measure the effect of treatment (causes have effects)
– YT(u): outcome that would be observed had unit u been exposed to treatment
– YC(u): outcome that would be observed had unit u not been exposed to treatment

II – 2. Looking for counterfactuals
• The causal effect of treatment T on unit u as measured by outcome Y is α(u) = YT(u) - YC(u)
• It’s a missing data problem
• Fundamental problem of causal inference: it is impossible to observe YT(u) and YC(u) simultaneously on the same unit
=> Therefore, it is impossible to observe α(u) for any unit u

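II – 2. Looking for counterfactuals
[Figure: outcome Y(u) plotted against time, with the treated (T) and control (C) paths around time k; the gap between them is α(u) = YT(u) - YC(u)]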

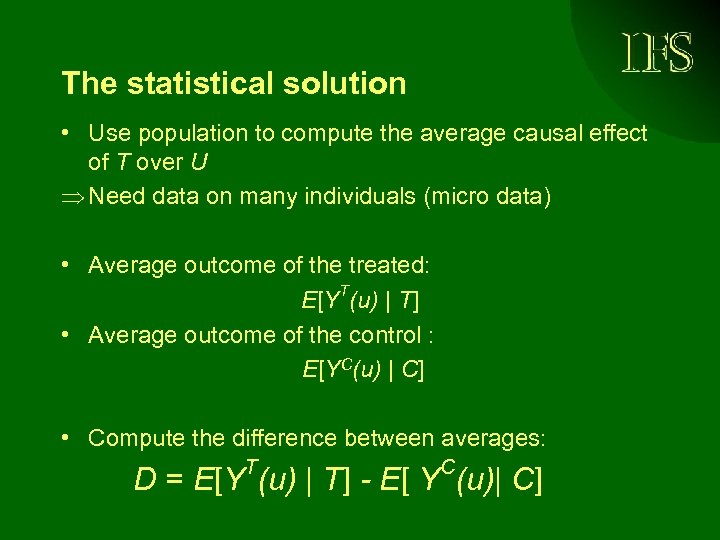

The statistical solution
• Use the population to compute the average causal effect of T over U
=> Need data on many individuals (micro data)
• Average outcome of the treated: E[YT(u) | T]
• Average outcome of the control: E[YC(u) | C]
• Compute the difference between averages:
D = E[YT(u) | T] - E[YC(u) | C]

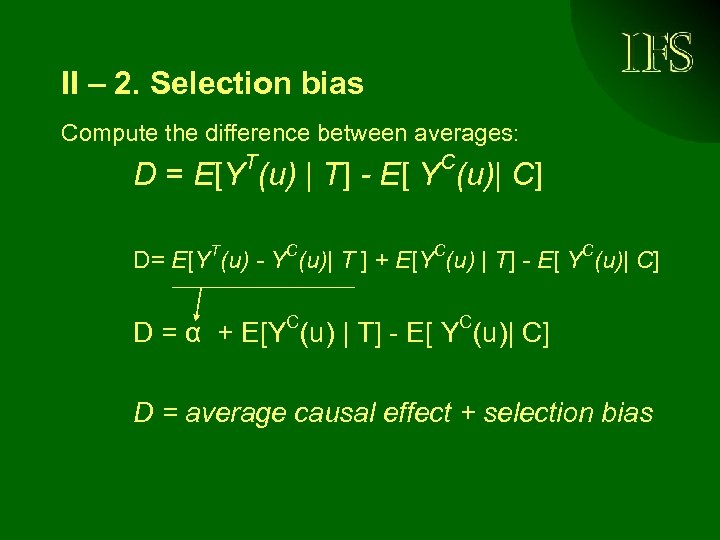

II – 2. Selection bias
Compute the difference between averages:
D = E[YT(u) | T] - E[YC(u) | C]
D = E[YT(u) - YC(u) | T] + E[YC(u) | T] - E[YC(u) | C]
D = α + E[YC(u) | T] - E[YC(u) | C]
D = average causal effect + selection bias

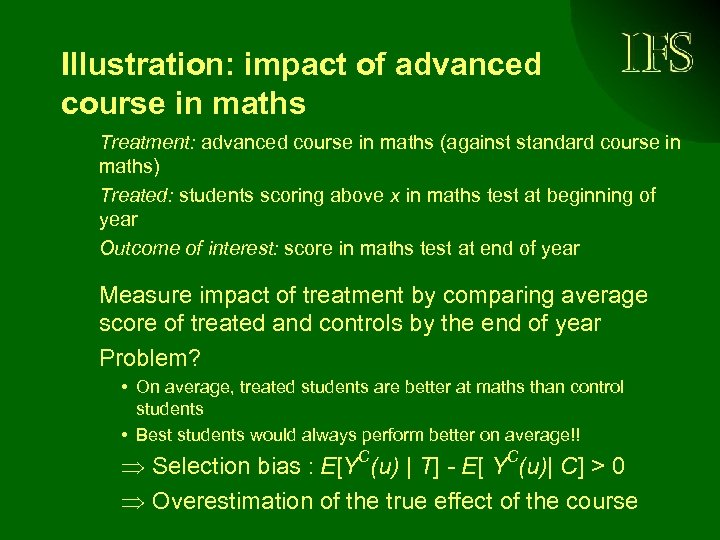

Illustration: impact of an advanced course in maths
Treatment: advanced course in maths (against standard course in maths)
Treated: students scoring above x in a maths test at the beginning of the year
Outcome of interest: score in a maths test at the end of the year
Measure the impact of treatment by comparing the average scores of treated and controls at the end of the year
Problem?
• On average, treated students are better at maths than control students
• The best students would perform better on average even without the course!
=> Selection bias: E[YC(u) | T] - E[YC(u) | C] > 0
=> Overestimation of the true effect of the course
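A minimal simulation sketch of this illustration, in Python. Every number in it is an assumption made for illustration only (normally distributed ability, a constant course effect of 2 points, threshold x = 0); none comes from the slides. Because the simulation generates both potential outcomes for each student, it can check that the naive difference D equals the average causal effect on the treated plus the selection bias, which real data never allow.

```python
import random
from statistics import fmean


def simulate_maths_course(n=5000, effect=2.0, threshold=0.0, seed=0):
    """Return (D, effect on the treated, selection bias) for a simulated cohort."""
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        ability = rng.gauss(0.0, 1.0)                 # unobserved in real data
        start_score = ability + rng.gauss(0.0, 1.0)   # test at beginning of year
        y_c = ability + rng.gauss(0.0, 1.0)           # end score, standard course
        y_t = y_c + effect                            # end score, advanced course
        group = treated if start_score > threshold else control
        group.append((y_t, y_c))

    # Naive comparison: observed treated outcomes vs observed control outcomes.
    d = fmean([y_t for y_t, _ in treated]) - fmean([y_c for _, y_c in control])
    # Average causal effect on the treated (needs both potential outcomes).
    att = fmean([y_t - y_c for y_t, y_c in treated])
    # Selection bias: E[YC | T] - E[YC | C].
    bias = fmean([y_c for _, y_c in treated]) - fmean([y_c for _, y_c in control])
    return d, att, bias


d, att, bias = simulate_maths_course()
print(f"D = {d:.3f}, effect on treated = {att:.3f}, selection bias = {bias:.3f}")
```

Since the treated are selected on a score that reflects ability, the bias term is positive and D overstates the assumed effect.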

Illustration: impact of an advanced course in maths (cont)
Compare before (t-1) and after (t+1) treatment, i.e. before and after the advanced class:
D = E[YT(u) | T, t+1] - E[YT(u) | T, t-1]
Problems?
- Many other things might change: students grow older and smarter (trend issue)
- Hard to disentangle the impact of the advanced class from that of the regular class
- Grading before and after might not be equivalent…
=> Estimates likely to be biased

III. Evaluation methods: how to construct the counterfactual
1. Randomised social experiments
2. Controlling for observables: OLS, matching
3. Natural experiments: difference in differences, regression discontinuity design
4. Instrumental variable methodology
5. Other methods: selection model and structural estimation

III – 1. Randomised social experiments
• Experiments solve the selection problem by randomly assigning units to treatment
• Because assignment to treatment is not based on any criterion related to the characteristics of the units, it will be independent of possible outcomes
• E[YC(u) | C] = E[YC(u) | T] now holds
D = E[YT(u) | T] - E[YC(u) | C]
D = α = E[YT(u) - YC(u)] = causal effect
=> Convincing results
=> More and more randomised policies
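A small Python sketch of why random assignment removes the selection bias. The data-generating process (normal ability, a constant effect of 2, a fair coin flip for assignment) is an assumption for illustration, not something taken from the slides.

```python
import random
from statistics import fmean


def randomised_experiment(n=20_000, effect=2.0, seed=1):
    """Difference in mean outcomes under coin-flip assignment to treatment."""
    rng = random.Random(seed)
    treated_outcomes, control_outcomes = [], []
    for _ in range(n):
        ability = rng.gauss(0.0, 1.0)
        y_c = ability + rng.gauss(0.0, 1.0)   # outcome without treatment
        if rng.random() < 0.5:                # assignment ignores ability
            treated_outcomes.append(y_c + effect)
        else:
            control_outcomes.append(y_c)
    return fmean(treated_outcomes) - fmean(control_outcomes)


print(f"D under randomisation = {randomised_experiment():.3f}")
```

With assignment independent of ability, D should land close to the assumed effect of 2, up to sampling noise.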

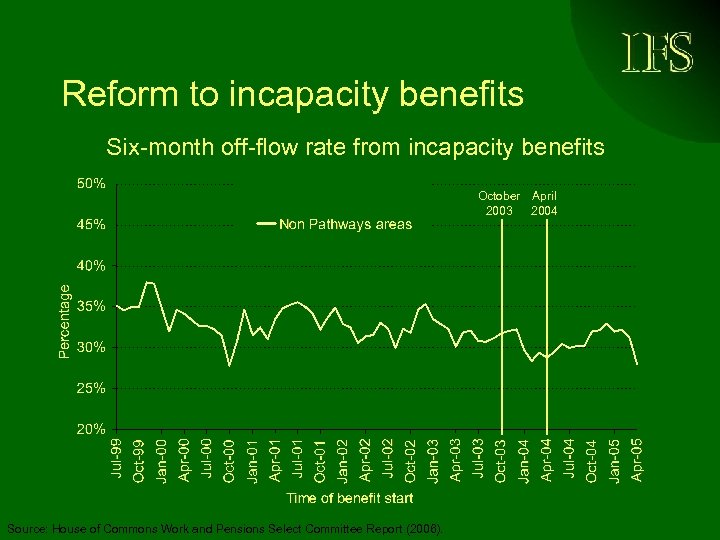

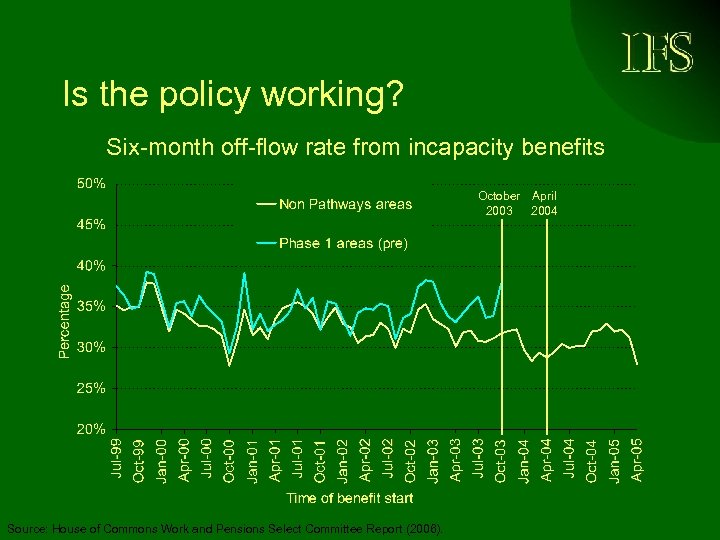

Reform to incapacity benefits
[Figure: six-month off-flow rate from incapacity benefits; time axis labelled October 2003 and April 2004]
Source: House of Commons Work and Pensions Select Committee Report (2006).

Is the policy working?
[Figure: six-month off-flow rate from incapacity benefits; time axis labelled October 2003 and April 2004]
Source: House of Commons Work and Pensions Select Committee Report (2006).

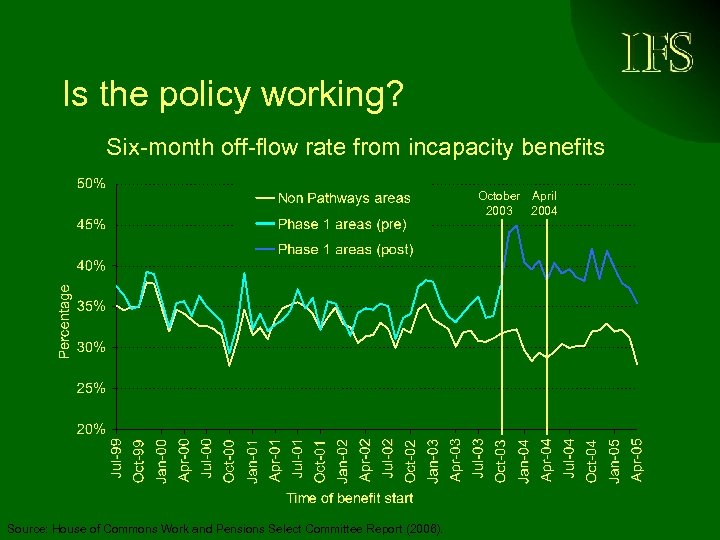


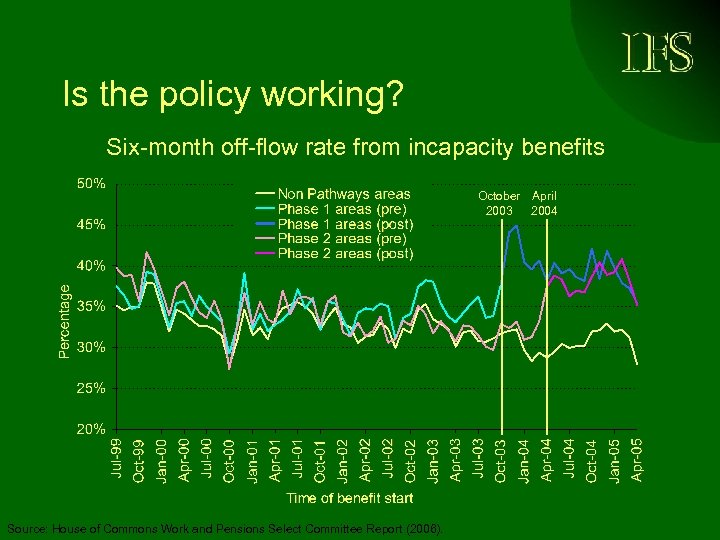


Drawbacks to social experiments
• Experiments are costly and therefore rare (less rare now)
– Cost and ethical reasons, feasibility
• Threats to internal validity
– Non-response bias, non-random dropouts
– Substitution between treated and control
• Threats to external validity
– Limited duration
– Experiment specificity (region, timing…)
– Agents know they are observed
– General equilibrium effects
• Threats to power
– Small sample

Non-experimental approaches
• Aim at recovering randomisation, thus recovering the missing counterfactual E(YC|T)
• This is done in different ways by different methods
• Which one is more appropriate depends on the treatment being studied, the question of interest and the available data

III – 2. Controlling for observables
1/ Regression analysis (OLS)
Y = a + b X1 + c X2 + d X3
Problems:
a/ Omitted variables lead to bias, and some variables may be unobservable
Example: effect of education on earnings. Ability or preference for work is hardly observable
b/ Explanatory variables might be endogenous
Example: effect of unemployment benefit duration. When unemployment increases, policies tend to increase unemployment benefit duration
=> Correlation is NOT causality!!
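A toy Python sketch of the omitted-variable problem in the education example. The four data points and the coefficients (earnings = 1 + 2 × education + 3 × ability) are invented for illustration. The "long" coefficient is obtained by first partialling ability out of education (the Frisch-Waugh-Lovell result), which is only possible here because the toy data include ability.

```python
from statistics import mean


def slope(x, y):
    """OLS slope of y on x (regression with an intercept)."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


def residuals(x, y):
    """Residuals of y after an OLS regression on x (with an intercept)."""
    b = slope(x, y)
    a = mean(y) - b * mean(x)
    return [yi - a - b * xi for xi, yi in zip(x, y)]


# Hypothetical data: ability rises with education and also raises earnings.
education = [0, 1, 2, 3]
ability = [0, 0, 1, 1]
earnings = [1 + 2 * e + 3 * a for e, a in zip(education, ability)]

# Short regression: ability omitted, so its effect loads onto education.
short_slope = slope(education, earnings)
# Long regression: education coefficient once ability is controlled for.
long_slope = slope(residuals(ability, education), earnings)

print(short_slope, long_slope)
```

In this constructed example the short regression attributes 3.2 to education while the coefficient controlling for ability is 2, the value used to build the data.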

III – 2. Controlling for observables
2/ Matching on observables: it is possible to compare groups that are similar relative to the variables we observe (same education, income…)
• Exploits all observable information
• Take X to represent the observed characteristics of the units other than Y and D
• It assumes that units with the same X are identical with respect to Y except possibly for the treatment status
• Formally, what is being assumed is E[YC|D=T, X] = E[YC|D=C, X]
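A minimal Python sketch of exact matching on a single discrete characteristic X. The eight units and their outcomes are hypothetical, built so that treatment adds 5 within each X cell while treated units are concentrated in the high-X cell. Cells are weighted by their number of treated units, which is one possible weighting choice among several.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical units: (treated?, X, outcome Y).
units = [
    (True, "high", 25), (True, "high", 25), (True, "high", 25), (True, "low", 15),
    (False, "high", 20), (False, "low", 10), (False, "low", 10), (False, "low", 10),
]


def naive_difference(units):
    """Raw difference in mean outcomes, ignoring X."""
    treated = [y for d, _, y in units if d]
    control = [y for d, _, y in units if not d]
    return mean(treated) - mean(control)


def matching_estimate(units):
    """Compare treated and control within each X cell, weighting by treated count."""
    cells = defaultdict(lambda: {True: [], False: []})
    for d, x, y in units:
        cells[x][d].append(y)
    total, n_treated = 0.0, 0
    for groups in cells.values():
        if groups[True] and groups[False]:   # keep cells with both groups present
            gap = mean(groups[True]) - mean(groups[False])
            total += gap * len(groups[True])
            n_treated += len(groups[True])
    return total / n_treated


print(naive_difference(units), matching_estimate(units))
```

Here the naive difference is 10, while comparing like with like gives 5. This only works because X captures everything that differs between the groups, which is exactly the assumption stated above.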

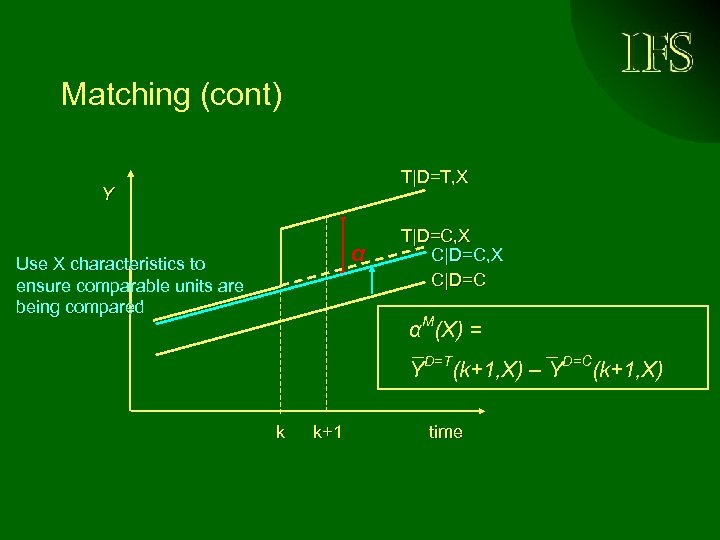

Matching (cont)
[Figure: outcome Y against time (k, k+1) for the paths T|D=T, X; T|D=C, X; and C|D=C, with the gap α marked]
Use X characteristics to ensure comparable units are being compared
αM(X) = YD=T(k+1, X) – YD=C(k+1, X)

III – 3. Natural experiments
• Exploit sudden changes or spatial variation in the rules governing behaviour
• Typically involve one group that is affected by the phenomenon (the treated) and another group that is not affected (the control)
• Observe how behaviour (outcome of interest) changes compared with the change in the unaffected group
=> Difference in differences
=> Regression discontinuity estimation

Difference in differences (DD)
• Suppose a change in policy occurs at time k
• We observe agents affected by the policy change before and after it, say at times k-1 and k+1:
A = E[YT | t=k+1] – E[YT | t=k-1]
• We also observe agents not affected by the policy change over the same periods:
B = E[YC | t=k+1] – E[YC | t=k-1]
• DD = A - B = true effect of the policy
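The computation of A, B and DD can be sketched in a few lines of Python. The outcome values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
from statistics import mean


def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Return (A, B, DD), with A and B the before-after changes in each group."""
    a = mean(treated_after) - mean(treated_before)   # change for affected agents
    b = mean(control_after) - mean(control_before)   # change for unaffected agents
    return a, b, a - b


# Hypothetical outcomes observed at k-1 (before) and k+1 (after).
treated_before, treated_after = [9, 10, 11], [15, 16, 17]
control_before, control_after = [7, 8, 9], [10, 11, 12]

a, b, dd = diff_in_diff(treated_before, treated_after, control_before, control_after)
print(a, b, dd)
```

With these numbers A = 6, B = 3 and DD = 3: the control group's change of 3 is taken as what would have happened to the treated anyway.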

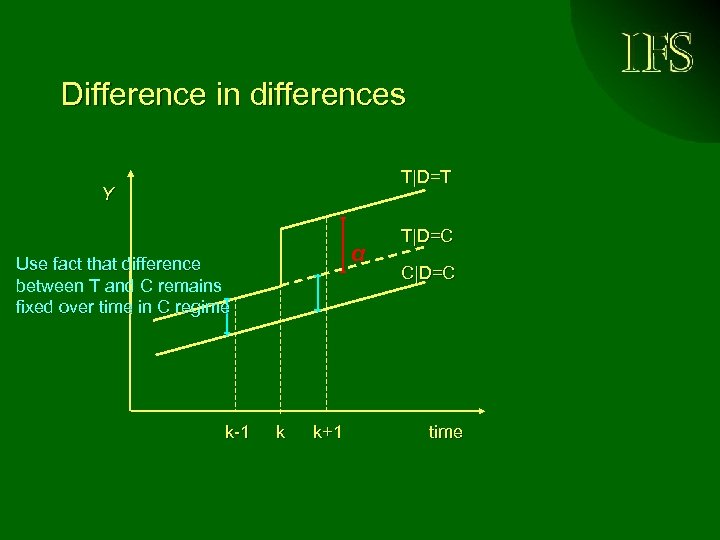

Difference in differences
[Figure: outcome Y against time (k-1, k, k+1) for the paths T|D=T, T|D=C and C|D=C, with the gap α marked]
Use the fact that the difference between T and C remains fixed over time in the C regime

Under certain conditions
1. No composition changes within groups
2. Common time effects across groups
Checking the strategy
- Check the DD strategy before the reform
- Use different control groups
- Use an outcome variable not affected by the reform
=> Has to be a careful study!
=> Can take into account unobservable variables!

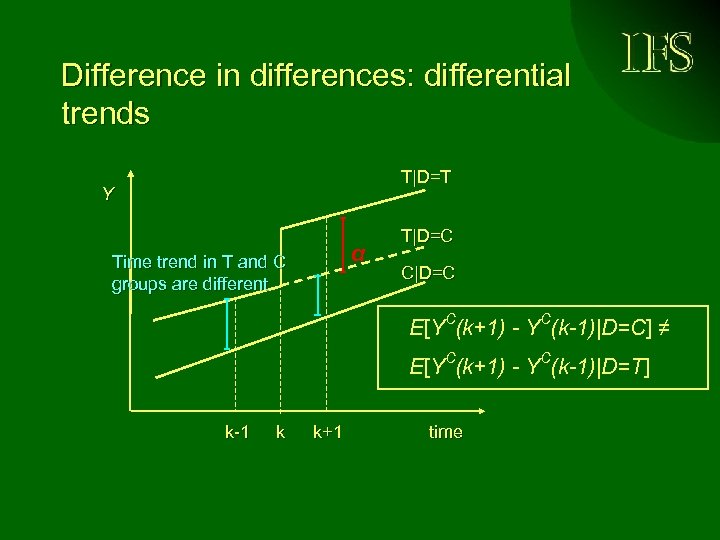

Difference in differences: differential trends
[Figure: outcome Y against time (k-1, k, k+1) for the paths T|D=T, T|D=C and C|D=C, with the gap α marked]
Time trends in the T and C groups are different:
E[YC(k+1) - YC(k-1)|D=C] ≠ E[YC(k+1) - YC(k-1)|D=T]
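A hedged Python sketch of how differential trends contaminate DD, and how a placebo DD on pre-reform periods can reveal them. The group means are invented: the treated group is given a trend of +4 per step, the control group +2, and the assumed true effect at k+1 is 3. The extra pre-reform period k-3 is an assumption of this example.

```python
def did(treated, control, start, end):
    """DD between two periods, from dictionaries of group means by period."""
    return (treated[end] - treated[start]) - (control[end] - control[start])


# Hypothetical group means; the reform happens at k, between k-1 and k+1.
treated_means = {"k-3": 10.0, "k-1": 14.0, "k+1": 21.0}
control_means = {"k-3": 8.0, "k-1": 10.0, "k+1": 12.0}

dd_post = did(treated_means, control_means, "k-1", "k+1")
dd_placebo = did(treated_means, control_means, "k-3", "k-1")

print(dd_post, dd_placebo)
```

DD across the reform gives 5 rather than the assumed effect of 3, and the placebo DD before the reform gives 2 instead of 0, which flags the trend gap. Subtracting the placebo from the post-reform DD recovers 3 here only because both trends were built to be linear.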

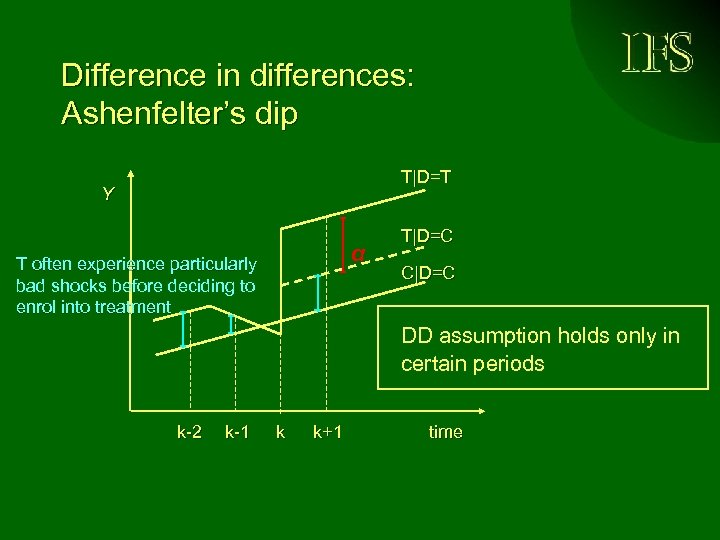

Difference in differences: Ashenfelter’s dip
[Figure: outcome Y against time (k-2, k-1, k, k+1) for the paths T|D=T, T|D=C and C|D=C, with the gap α marked]
The treated often experience particularly bad shocks before deciding to enrol in treatment
The DD assumption holds only in certain periods

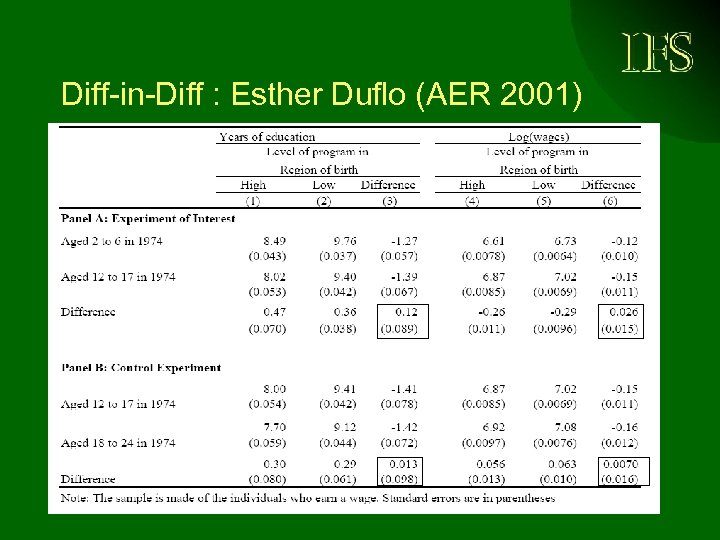

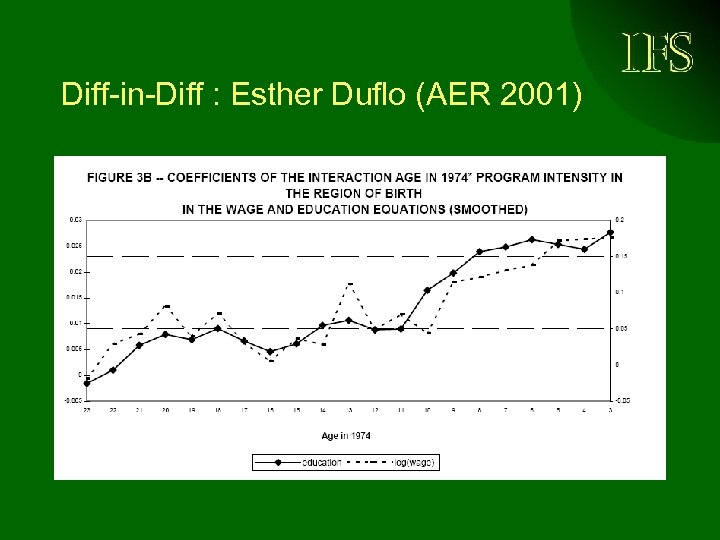

Diff-in-Diff: Esther Duflo (AER 2001)
• Policy: school construction in Indonesia
• Regional difference: regions with low and high levels of school construction
• Children young enough to be affected = treated
• Children too old = control
=> Estimate the impact of building schools on education
=> Estimate the impact of education on earnings


Common problems with DD
• Long-term versus reliability trade-off:
- Impact most reliably estimated in the short term
- True impact might take time
• Heterogeneous behavioural response
– Average effect might hide high/low effects for certain groups
• Local estimation
– Strictly speaking, DD estimates are hard to generalise
=> Need many estimations to establish a general causal effect

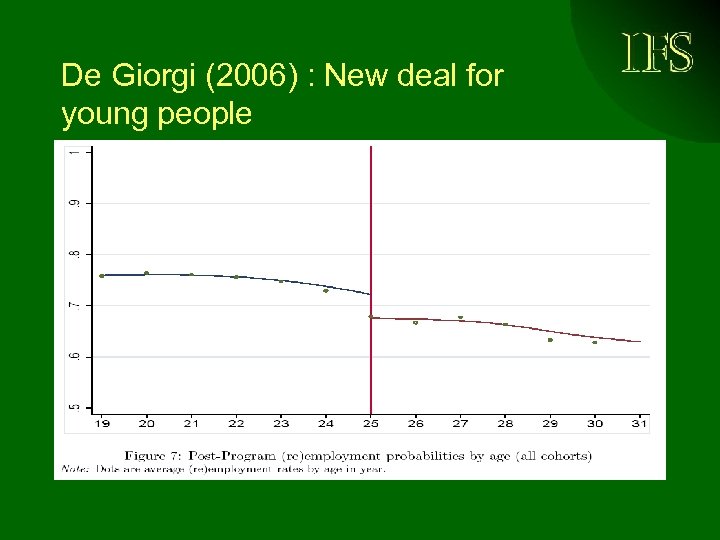

Regression discontinuity design (RD)
• Use the discontinuity in the treatment
Example: New Deal for Young People in the UK
Programme targeted at young unemployed people aged 18 to 24
=> Unemployed people aged just 25 or over are in the control group
=> Unemployed people aged just under 25 are in the treated group
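One simple way to sketch an RD estimate in Python is to fit a separate line on each side of the age cutoff and compare the two predictions at the cutoff. The outcome function below is hypothetical (a smooth age profile plus an assumed jump of 0.10 for those under 25), and the linear fit is an illustrative choice, not the specification used in any study of the programme.

```python
from statistics import mean


def fit_line(xs, ys):
    """Return (intercept, slope) of an OLS line through the points."""
    mx, my = mean(xs), mean(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b


def rd_estimate(points, cutoff):
    """Treated-side minus control-side prediction at the cutoff (treated: x < cutoff)."""
    left = [(x, y) for x, y in points if x < cutoff]
    right = [(x, y) for x, y in points if x >= cutoff]
    a_l, b_l = fit_line([x for x, _ in left], [y for _, y in left])
    a_r, b_r = fit_line([x for x, _ in right], [y for _, y in right])
    return (a_l + b_l * cutoff) - (a_r + b_r * cutoff)


def hypothetical_outcome(age):
    """Invented outcome: smooth in age, plus a jump of 0.10 for the under-25s."""
    return 0.30 + 0.02 * (age - 25) + (0.10 if age < 25 else 0.0)


points = [(age, hypothetical_outcome(age)) for age in range(22, 28)]
jump = rd_estimate(points, cutoff=25)
print(round(jump, 3))
```

The estimate recovers the assumed jump because the only thing that changes abruptly at 25 in this toy data is eligibility.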

De Giorgi (2006) : New deal for young people

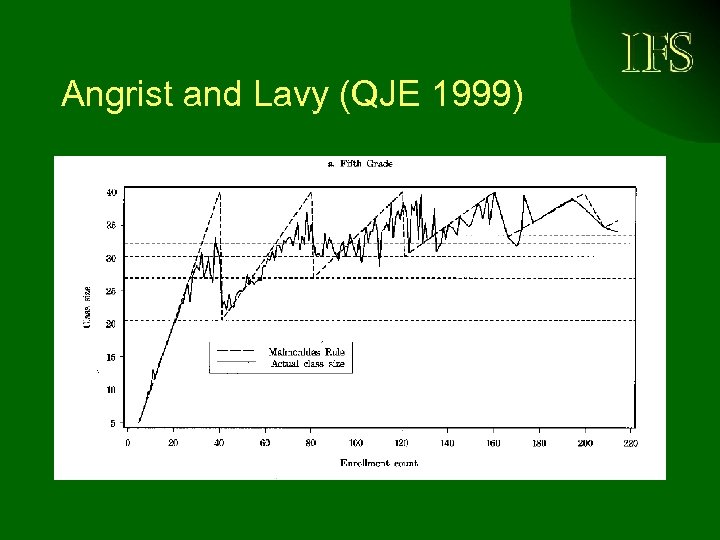

III – 4. Instrumental variable
• Use the fact that a variable Z (the instrument) might be correlated with the endogenous variable X
• BUT not with the outcome Y
• Except through the variable X
E.g.: the number of students per class is endogenous to the outcome “test scores”
=> How to find a good instrument?
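A toy Python sketch of the idea, using the simple ratio-of-covariances IV estimator cov(Z, Y) / cov(Z, X). The four observations are invented: an unobserved factor u drives both X and Y, the instrument Z shifts X but is uncorrelated with u by construction, and the assumed true effect of X on Y is 2.

```python
from statistics import mean


def cov(a, b):
    """Population covariance of two equal-length sequences."""
    ma, mb = mean(a), mean(b)
    return sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b)) / len(a)


# Hypothetical data: z is the instrument, u an unobserved confounder.
z = [0, 0, 1, 1]
u = [0, 1, 0, 1]
x = [1 + zi + ui for zi, ui in zip(z, u)]        # endogenous variable
y = [2 * xi + 3 * ui for xi, ui in zip(x, u)]    # outcome; assumed effect of x is 2

ols_estimate = cov(x, y) / cov(x, x)   # picks up the confounder u
iv_estimate = cov(z, y) / cov(z, x)    # uses only the variation in x driven by z

print(ols_estimate, iv_estimate)
```

OLS gives 3.5 because X and Y both move with u, while the IV ratio gives 2. In practice the hard part is the one the slide asks about: arguing that a real Z affects Y only through X.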

Angrist and Lavy (QJE 1999)

III – 5. Other methods
• Matching mixed with diff-in-diff
• Selection estimator
• Structural estimation
=> There is a trade-off between the reliability of the causal inference (identification) and the generalisation of the results

Conclusion
• Policy evaluation is crucial
– For conducting efficient policies
– For improving scientific knowledge
• Correlation is not causality
• Beware of selection effects and endogenous variables!
• Methods to draw causal inference are available
=> Need careful analysis!
