2db497158a0a9d54b1e57ae4b5193d87.ppt

- Number of slides: 42

Polarimeter capability assessment using simulated uncertainties Kirk Knobelspiesse* and the GISS/Glory Team * NASA Postdoctoral Program

Goal: systematically estimate retrieval error given measurement characteristics. Is it better to increase polarimetric accuracy? The number of viewing angles? The spectral range?
- Estimation methodology, strengths and weaknesses
- Sample investigations
  - Number of viewing angle samples
  - Comparison of RSP/APS to other instruments
  - Optimal polarimetric units
- Conclusions

Aerosol retrieval
Scanning polarimeters gather lots of information, and this requires a mathematically rigorous retrieval approach. We minimize a cost function, defined in terms of:
- Φ: the cost function to minimize
- F: the forward (radiative transfer) model, which depends on the aerosol parameters x
- Y: the observation vector
- Co: the observation error

The aerosol parameters, x, are refined until the minimum is found. Can we predict retrieval accuracy given the observation and model characteristics and errors?
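For a maximum likelihood retrieval with Gaussian observation errors, a cost function consistent with the symbols on this slide would take the usual weighted least-squares form. This is an assumption rather than the slide's own equation, and the cost function is written Φ here only to keep it distinct from the forward model F:

```latex
\Phi(\mathbf{x}) = \left[\mathbf{Y} - \mathbf{F}(\mathbf{x})\right]^{T}
                   \mathbf{C}_o^{-1}
                   \left[\mathbf{Y} - \mathbf{F}(\mathbf{x})\right]
```

Each residual is weighted by the inverse of its error covariance, so more accurate observations pull harder on the solution.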

Uncertainty estimation
For maximum likelihood aerosol property retrieval methods, there is a link between observation uncertainty and retrieval uncertainty (Rodgers, 2000*).
- Co: observation error covariance matrix
  - Diagonal terms express observation uncertainty [R1, R2, R3, … Rp1, Rp2, Rp3, … Rm]
- J: Jacobian
  - Forward model sensitivity
- Cx: retrieval error covariance matrix
  - Square roots of the diagonal terms are the retrieval uncertainties [x1, x2, x3, … xn]
  - Off-diagonal terms indicate correlation

*C. Rodgers, Inverse Methods for Atmospheric Sounding: Theory and Practice. World Scientific, Singapore, 2000.

Uncertainty estimation
Observation error covariance matrix Co [m x m]
- Expresses individual squared observation errors (on the diagonal)
- Off-diagonal terms indicate error correlation (neglected for now)
- Degree of Linear Polarization (DoLP): $\sigma^2_{DoLP} = 0.0015^2$
- Total reflectance (R): $\sigma^2_{R} = (0.03\,R)^2$
- Polarized reflectance (Rp), if used: $\sigma^2_{R_p} = (0.0015\,R)^2 + (0.03\,R_p)^2$ (since $R_p = R \cdot DoLP$)

See: F. Waquet, B. Cairns, K. Knobelspiesse, J. Chowdhary, L. Travis, B. Schmid, and M. Mishchenko. Polarimetric remote sensing of aerosols over land. J. Geophys. Res., 114(D01206): 1–23, 2009.
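As a minimal sketch of how these variances could be assembled, the function below builds the diagonal of Co for a set of reflectance and polarization pairs. The function name, argument names, and the ordering (all reflectances first, then all polarization terms) are illustrative assumptions; only the three variance formulas come from the slide.

```python
def observation_variances(R, dolp, use_rp=False,
                          sigma_dolp=0.0015, rel_sigma_r=0.03):
    """Return the diagonal of Co (squared observation uncertainties).

    R and dolp are equal-length lists of total reflectance and degree of
    linear polarization. Off-diagonal (correlation) terms are neglected,
    so the diagonal alone describes Co.
    """
    if len(R) != len(dolp):
        raise ValueError("R and dolp must have the same length")
    # Total reflectance: 3% relative uncertainty.
    var_r = [(rel_sigma_r * r) ** 2 for r in R]
    if use_rp:
        # Polarized reflectance Rp = R * DoLP:
        # sigma^2 = (0.0015 R)^2 + (0.03 Rp)^2
        var_pol = [(sigma_dolp * r) ** 2 + (rel_sigma_r * r * d) ** 2
                   for r, d in zip(R, dolp)]
    else:
        # DoLP: constant absolute uncertainty of 0.0015.
        var_pol = [sigma_dolp ** 2 for _ in dolp]
    # Assumed ordering: reflectances first, polarization terms second.
    return var_r + var_pol


diag_dolp = observation_variances([0.10, 0.20], [0.30, 0.05])
diag_rp = observation_variances([0.10, 0.20], [0.30, 0.05], use_rp=True)
```

Returning only the diagonal keeps the later inverse trivial while correlations are neglected; a full matrix would be needed once off-diagonal terms are included.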

Uncertainty estimation
The Jacobian matrix
- Expresses forward model sensitivity. The observation vector is m long and the aerosol parameter vector is n long, with $J_{ij} = \partial F_i / \partial x_j$:
  - J: Jacobian matrix [m x n]
  - x: aerosol parameter vector [n]
  - F: forward (radiative transfer) model [m]
- There is no analytical form for J, so it is numerically estimated
- Since F(x) is nonlinear, J must be estimated for a variety of scenes
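One common way to estimate a Jacobian numerically is by central finite differences, sketched below. The slide does not say which scheme was used, so this is an assumption; `numerical_jacobian`, `rel_step`, and the toy forward model are hypothetical stand-ins for the real radiative transfer code.

```python
def numerical_jacobian(forward, x, rel_step=1e-3):
    """Estimate J[i][j] = dF_i/dx_j by central finite differences."""
    columns = []
    for j in range(len(x)):
        # Step scaled to the parameter size, with a floor near zero.
        h = rel_step * max(abs(x[j]), 1e-6)
        x_hi = list(x)
        x_lo = list(x)
        x_hi[j] += h
        x_lo[j] -= h
        f_hi = forward(x_hi)
        f_lo = forward(x_lo)
        columns.append([(a - b) / (2.0 * h) for a, b in zip(f_hi, f_lo)])
    # columns holds n lists of length m; transpose to m rows of n.
    return [list(row) for row in zip(*columns)]


def toy_forward(x):
    """Hypothetical forward model: 3 observations from 2 parameters."""
    a, b = x
    return [a * b, a ** 2, a + 3.0 * b]


J = numerical_jacobian(toy_forward, [2.0, 0.5])
```

Because the forward model is nonlinear, the result is only valid near the chosen `x`, which is why the Jacobian has to be recomputed for each simulated scene.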

Uncertainty estimation
Retrieval error covariance matrix Cx
- The square roots of the diagonal terms of Cx are the estimated retrieval uncertainties
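In the standard formulation without a priori information (Rodgers, 2000), the retrieval error covariance follows from the Jacobian and the observation error covariance as Cx = (Jᵀ Co⁻¹ J)⁻¹. The sketch below assumes that form and a diagonal Co; the function name and the small example matrices are illustrative, not values from the study.

```python
import numpy as np


def retrieval_covariance(J, co_diag):
    """Cx = (J^T Co^-1 J)^-1 for a diagonal Co given by its diagonal."""
    J = np.asarray(J, dtype=float)
    co_diag = np.asarray(co_diag, dtype=float)
    # With a diagonal Co, Co^-1 J is just a row scaling of J.
    weighted = J / co_diag[:, None]
    information = J.T @ weighted
    return np.linalg.inv(information)


# Hypothetical 3-observation, 2-parameter example.
J = np.array([[1.0, 0.0],
              [0.0, 2.0],
              [1.0, 1.0]])
co_diag = np.array([0.01, 0.04, 0.01])

Cx = retrieval_covariance(J, co_diag)
sigma_x = np.sqrt(np.diag(Cx))                 # retrieval uncertainties
correlation = Cx / np.outer(sigma_x, sigma_x)  # off-diagonal correlation
```

The off-diagonal entries of `correlation` show how strongly the errors in two retrieved parameters are coupled.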

We can now understand the relationship between observation characteristics and retrieval success.
Analysis is computationally inexpensive once the Jacobians are done:
- Easily performed for a large number of simulations
- It is easy to modify the observation configuration or accuracy for sensitivity studies

Issues:
- Assumes the forward model is 'perfect'
- Computing matrix inverses can be difficult for large Co
- Doesn't necessarily simulate error for ill-posed problems

Results are highly optimistic. The method is best for comparing the relative errors of different sensors (rather than for absolute assessment of accuracy).
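A sensitivity study of this kind can be sketched as a loop that reuses one Jacobian while changing only the assumed observation accuracy. The Jacobian and reflectance values below are hypothetical placeholders, and the covariance formula Cx = (Jᵀ Co⁻¹ J)⁻¹ is the assumed no-prior form from Rodgers (2000).

```python
import numpy as np


def retrieval_sigma(J, co_diag):
    """Retrieval uncertainties from Cx = (J^T Co^-1 J)^-1, diagonal Co."""
    Cx = np.linalg.inv(J.T @ (J / co_diag[:, None]))
    return np.sqrt(np.diag(Cx))


# Hypothetical Jacobian: rows 0-1 are reflectance, rows 2-3 are DoLP.
J = np.array([[0.5, 0.1],
              [0.4, 0.2],
              [0.2, 0.6],
              [0.1, 0.7]])
R = np.array([0.10, 0.12])  # hypothetical total reflectances

results = {}
for sigma_dolp in (0.001, 0.002, 0.005, 0.010, 0.015, 0.020):
    # Reflectance keeps its 3% relative error; only DoLP accuracy changes.
    co_diag = np.concatenate([(0.03 * R) ** 2,
                              np.full(2, sigma_dolp ** 2)])
    results[sigma_dolp] = retrieval_sigma(J, co_diag)
```

Each pass costs one small matrix inverse, which is why many configurations can be compared once the Jacobians exist.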

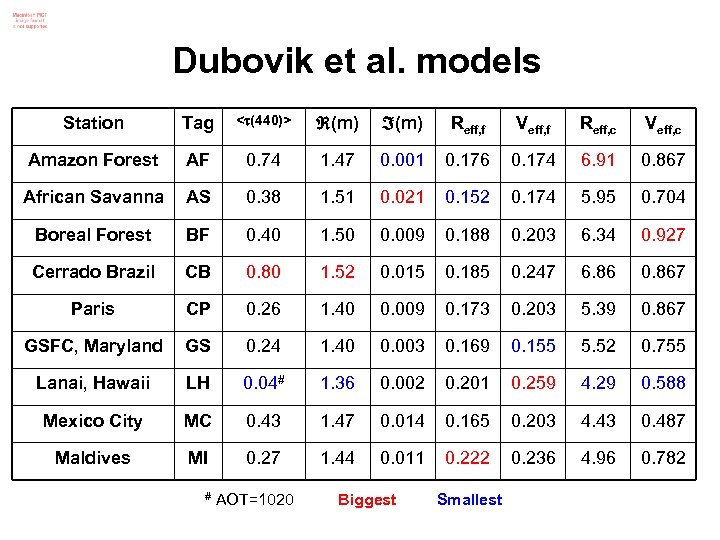

Scene simulations
Aerosols are from the Dubovik et al. 2002 AERONET climatologies (#):
- 9 classes (dust and spectrally variant refractive index models excluded)
- Simulated for AOT(560 nm) of 0.05, 0.1, 0.2, 0.4, and 0.8
- Total: 9 x 5 = 45 simulations

Universal assumptions/configuration:
- Geometry: solar zenith angle = 45°, relative solar-view angle = 45°
- Ocean surface with Chl = 0.1 mg/m³, wind speed 5 m/s, no whitecaps
- Aerosols uniformly distributed with respect to pressure from the ground to 1 km
- Aerosols are homogeneous spheres
- Aerosols are well modeled in size using two lognormal size distributions (90% of AOT for the fine mode, 10% for the coarse mode)
- Aerosol refractive indices are spectrally invariant

(#) O. Dubovik, B. Holben, T. Eck, A. Smirnov, Y. Kaufman, M. King, D. Tanre, and I. Slutsker. Variability of absorption and optical properties of key aerosol types observed in worldwide locations. J. Atmos. Sci., 59(3): 590–608, 2002.
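The 45-scene grid can be sketched as a simple product of aerosol class and optical thickness. The dictionary keys are illustrative names, not identifiers from the original software; the site names are the nine classes listed in the deck's Dubovik et al. table.

```python
from itertools import product

SITES = ["Amazon Forest", "African Savanna", "Boreal Forest",
         "Cerrado Brazil", "Paris", "GSFC, Maryland",
         "Lanai, Hawaii", "Mexico City", "Maldives"]
AOT_560 = [0.05, 0.1, 0.2, 0.4, 0.8]
FINE_FRACTION = 0.9  # 90% of AOT in the fine mode, 10% in the coarse mode

scenes = [
    {
        "site": site,
        "aot_560": aot,
        "aot_fine": FINE_FRACTION * aot,
        "aot_coarse": (1.0 - FINE_FRACTION) * aot,
        "solar_zenith_deg": 45.0,
        "relative_angle_deg": 45.0,
        "chlorophyll_mg_m3": 0.1,
        "wind_speed_m_s": 5.0,
    }
    for site, aot in product(SITES, AOT_560)
]
```

A Jacobian would then be estimated once per entry in `scenes`.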

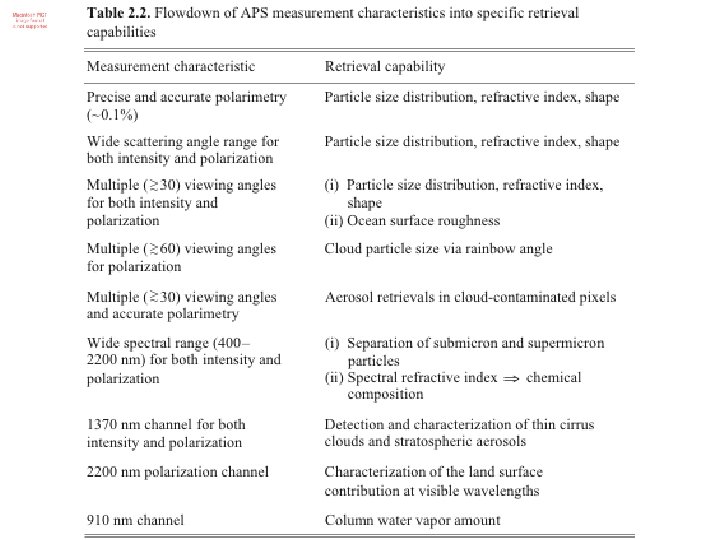

Reference instrument "APS"
APS has:
- 7 relevant spectral bands: 410, 440, 560, 670, 870, 1600, 2250 nm
- 199 angular observations from -45° to 45° in 0.45° intervals
- 0.15% polarimetric accuracy (other terms as previously described)
- Both DoLP and R are utilized, for a total of 2786 observations

12 parameters are retrieved.
For each aerosol mode (100 nm < fine < 1 µm; coarse > 1 µm):
- Size distribution (effective radius and variance) (2)
- Complex refractive index (2)
- Aerosol optical depth at 560 nm (1)

For the surface:
- Chlorophyll-a (mg/m³) (1)
- Wind speed (m/s) (1)

Total: 5 x 2 + 2 = 12
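The observation and parameter counts on this slide can be checked with a few lines of arithmetic. The variable names are illustrative; the numbers are those given above.

```python
BANDS_NM = [410, 440, 560, 670, 870, 1600, 2250]
N_VIEWS = 199
OBSERVABLES = ["R", "DoLP"]

# One R and one DoLP value per band and viewing angle.
n_observations = len(BANDS_NM) * N_VIEWS * len(OBSERVABLES)

PER_MODE = ["effective radius", "effective variance",
            "real refractive index", "imaginary refractive index",
            "AOT at 560 nm"]
SURFACE = ["chlorophyll-a", "wind speed"]

# Two aerosol modes (fine and coarse) plus the surface parameters.
n_parameters = 2 * len(PER_MODE) + len(SURFACE)

jacobian_shape = (n_observations, n_parameters)
```

This gives a Jacobian of 2786 rows by 12 columns for each simulated scene.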

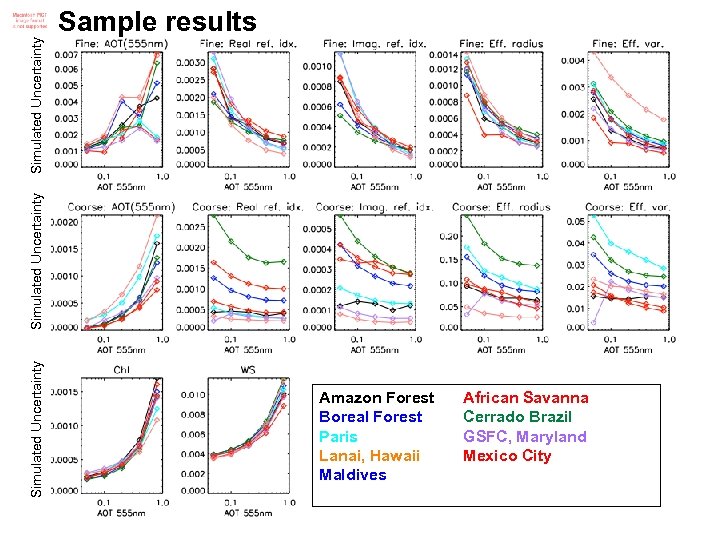

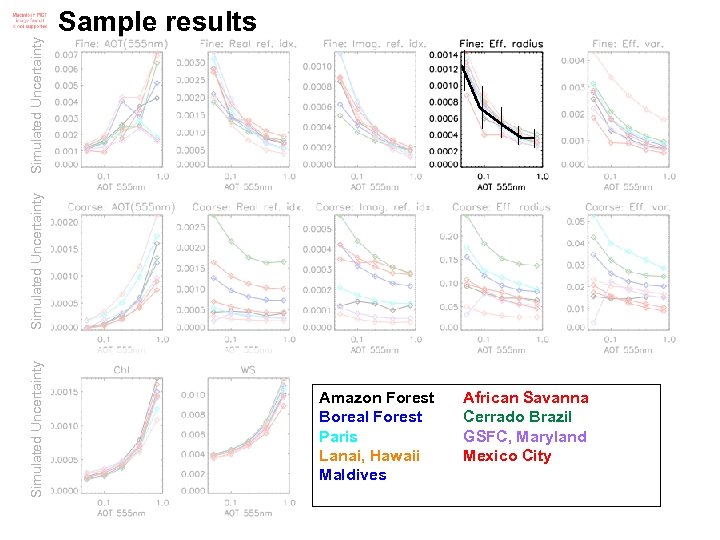

[Figure: sample results, simulated uncertainty. Panels: Amazon Forest; Boreal Forest; Paris; Lanai, Hawaii; Maldives; African Savanna; Cerrado Brazil; GSFC, Maryland; Mexico City.]


Simulations

What happens if we reduce capability?
- Test 1: Angular sampling. Test with various angular sampling resolutions (angular spacing, # views): 0.5°, 201; 2°, 51; 8°, 13; 30°, 4; 0° and 50°, 2; 0°, 1
- Test 2: "APS" vs. "PARASOL" vs. "MISR"
- Test 3: Polarization. Is it better to use Degree of Linear Polarization (DoLP) or Polarized Reflectance (Rp)?

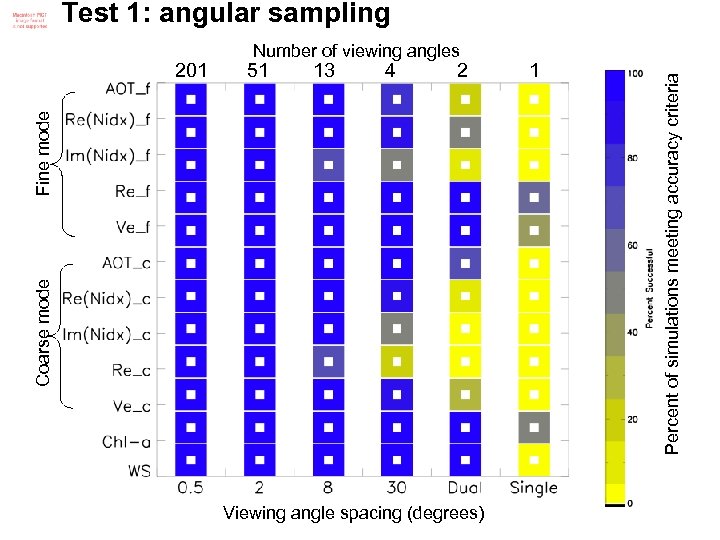

Test 1: angular sampling

| Angular spacing | # views |
| --- | --- |
| 0.5° | 201 |
| 2° | 51 |
| 8° | 13 |
| 30° | 4 |
| 0° and 50° | 2 |
| 0° | 1 |

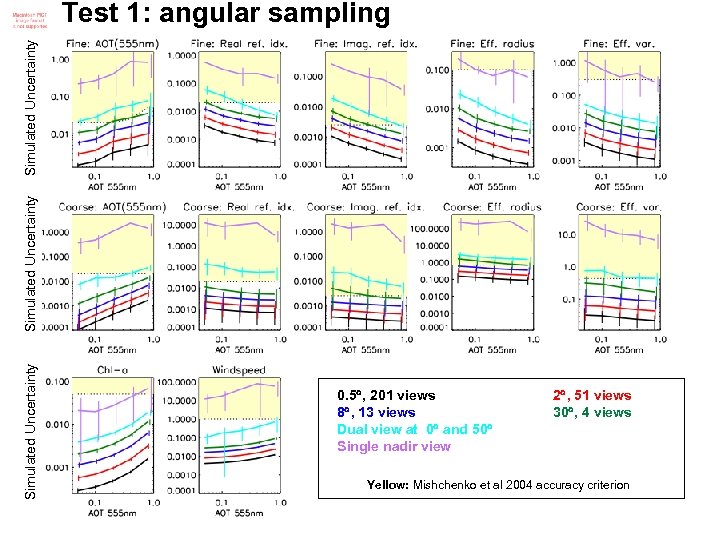

[Figure: simulated uncertainty, Test 1 (angular sampling). Panels: 0.5°, 201 views; 2°, 51 views; 8°, 13 views; 30°, 4 views; dual view at 0° and 50°; single nadir view. Yellow: Mishchenko et al. 2004 accuracy criterion.]

[Figure: Test 1 (angular sampling). Percent of simulations meeting accuracy criteria for the fine and coarse modes, plotted against number of viewing angles (201, 51, 13, 4, 2, 1) and viewing angle spacing (degrees).]

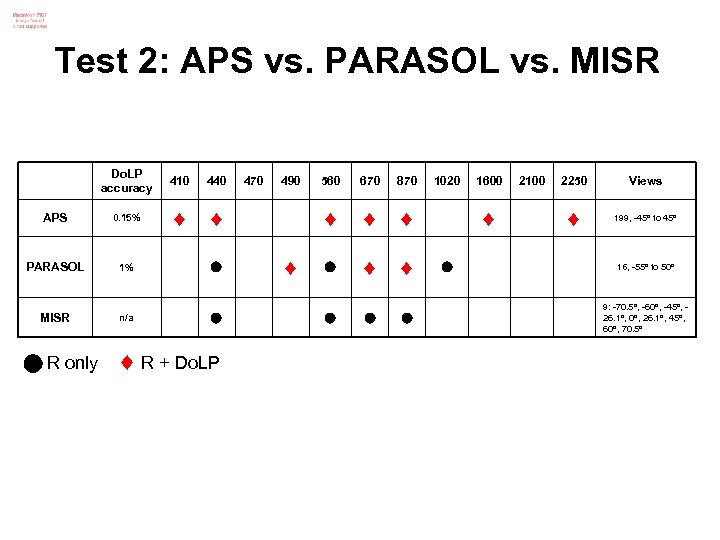

Test 2: Instrument comparison

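Test 2: APS vs. PARASOL vs. MISR

| Instrument | DoLP accuracy | Views |
| --- | --- | --- |
| APS | 0.15% | 199, -45° to 45° |
| PARASOL | 1% | 16, -55° to 50° |
| MISR | n/a | 9: -70.5°, -60°, -45°, -26.1°, 0°, 26.1°, 45°, 60°, 70.5° |

Spectral bands shown (nm): 410, 440, 470, 490, 560, 670, 870, 1020, 1600, 2100, 2250. Each band is marked per instrument as either "R only" or "R + DoLP".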

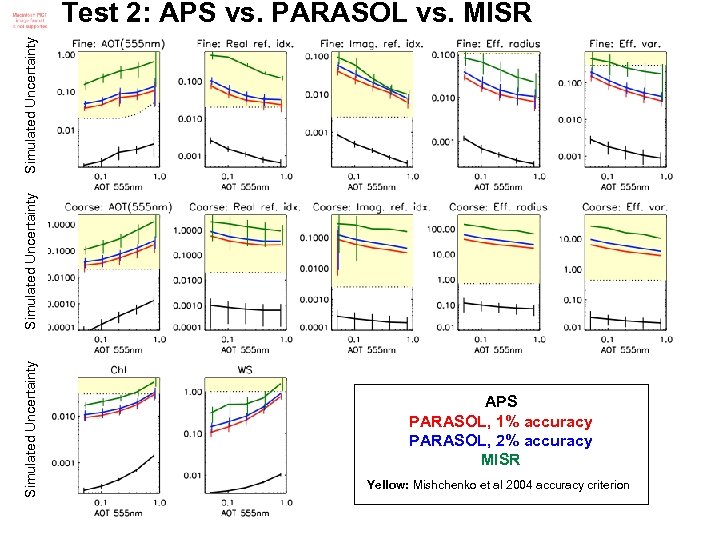

[Figure: simulated uncertainty, Test 2 (APS vs. PARASOL vs. MISR). Panels: APS; PARASOL, 1% accuracy; PARASOL, 2% accuracy; MISR. Yellow: Mishchenko et al. 2004 accuracy criterion.]

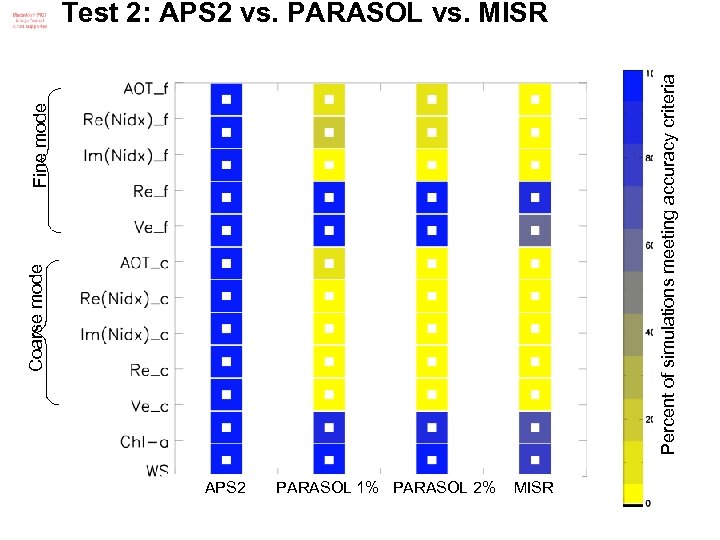

[Figure: Test 2 (APS 2 vs. PARASOL vs. MISR). Percent of simulations meeting accuracy criteria for the fine and coarse modes, for APS 2, PARASOL 1%, PARASOL 2%, and MISR.]

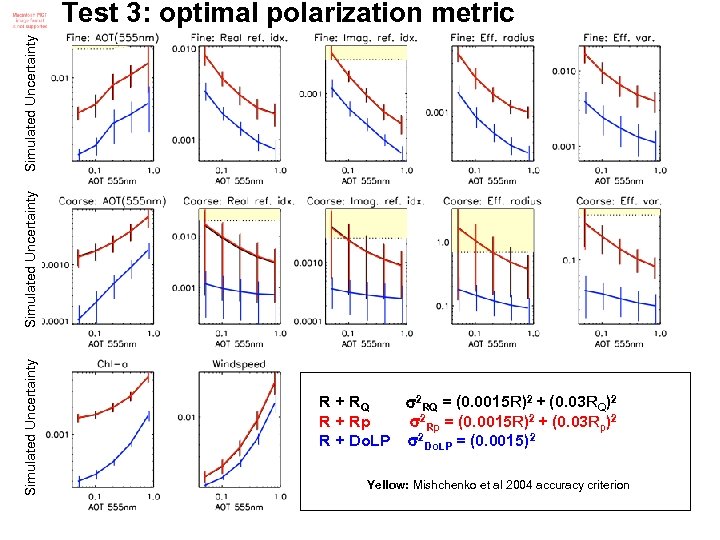

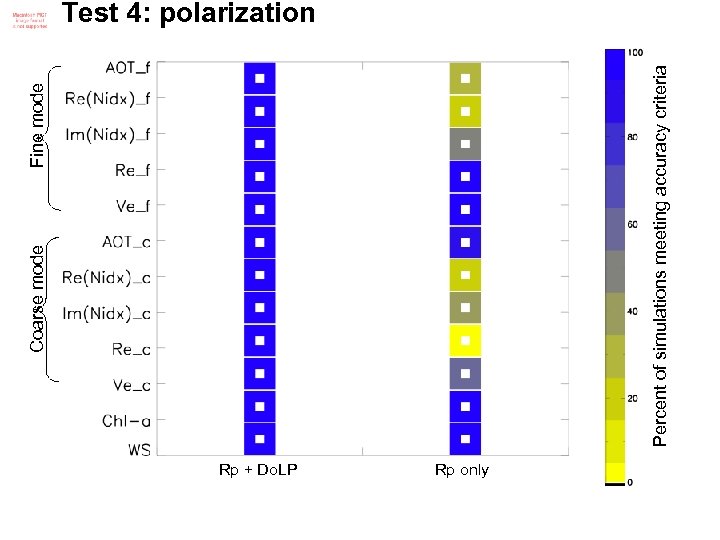

Test 3: optimal polarization metric
What is the best form of polarimetric observation to use?
- Degree of Linear Polarization, $DoLP = \sqrt{Q^2 + U^2}/I$, or
- Polarized reflectance, $R_p = \sqrt{R_Q^2 + R_U^2}$, or
- Q reflectance, $R_Q$
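The three candidate metrics are related through the same Stokes elements, as the short sketch below shows. It assumes the inputs are already expressed as reflectances (R, RQ, RU, all with the same normalization), so that DoLP = Rp / R; the function name is illustrative.

```python
import math


def polarization_metrics(R, RQ, RU):
    """Return the three candidate polarimetric observables.

    R, RQ and RU are the total, Q and U reflectances, assumed to share
    one normalization so that DoLP = sqrt(Q^2 + U^2) / I = Rp / R.
    """
    Rp = math.hypot(RQ, RU)   # polarized reflectance
    dolp = Rp / R             # degree of linear polarization
    return {"RQ": RQ, "Rp": Rp, "DoLP": dolp}


metrics = polarization_metrics(R=0.2, RQ=0.03, RU=0.04)
```

DoLP is a ratio and so does not depend on the radiometric calibration of R, whereas Rp and RQ inherit it; this is the difference the test is designed to probe.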

[Figure: simulated uncertainty, Test 3 (optimal polarization metric). Yellow: Mishchenko et al. 2004 accuracy criterion. Panels:
- R + RQ, with $\sigma^2_{R_Q} = (0.0015\,R)^2 + (0.03\,R_Q)^2$
- R + Rp, with $\sigma^2_{R_p} = (0.0015\,R)^2 + (0.03\,R_p)^2$
- R + DoLP, with $\sigma^2_{DoLP} = (0.0015)^2$]
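The Rp variance can be recovered from the R and DoLP uncertainties by first-order error propagation through Rp = R · DoLP. The derivation below is a sketch that assumes the errors in R and DoLP are uncorrelated, in line with the neglect of off-diagonal terms elsewhere in the deck:

```latex
\begin{align}
\sigma_{R_p}^2
  &= \left(\frac{\partial R_p}{\partial \mathrm{DoLP}}\right)^{2} \sigma_{\mathrm{DoLP}}^2
   + \left(\frac{\partial R_p}{\partial R}\right)^{2} \sigma_{R}^2 \\
  &= R^2\,(0.0015)^2 + \mathrm{DoLP}^2\,(0.03\,R)^2 \\
  &= (0.0015\,R)^2 + (0.03\,R_p)^2
\end{align}
```

The first term carries the polarimetric accuracy and the second carries the 3% radiometric uncertainty, which is why Rp is affected by calibration error while DoLP is not.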

Conclusions

Caveats and cautions…
We have an infrastructure to systematically test instrument capability:
- Can be easily performed for a variety of scene types and configurations
- Can help us select the best retrieval strategy
- When compared to real retrievals, can help us understand whether our modeling or error characterization is accurate

BUT these techniques do have caveats:
- No modeling error
- Absolute error values are very optimistic
- We need to look into correlation in retrieval and observation error

Thank you!

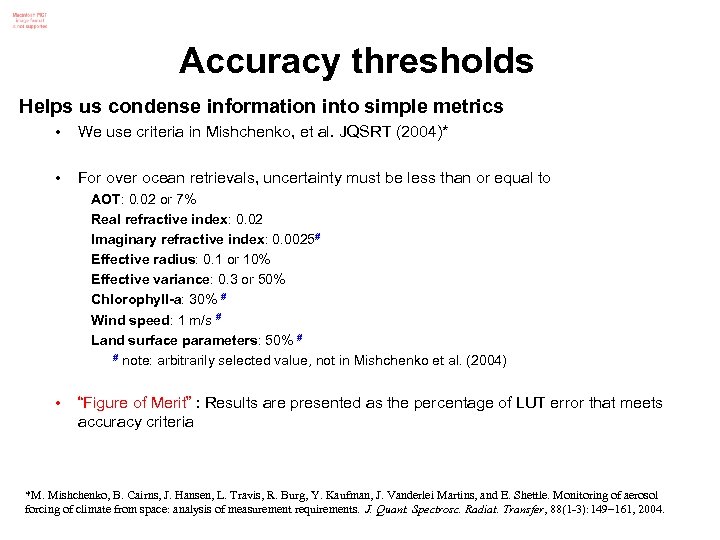

Accuracy thresholds
Helps us condense information into simple metrics.
- We use the criteria in Mishchenko et al., JQSRT (2004)*
- For over-ocean retrievals, uncertainty must be less than or equal to:
  - AOT: 0.02 or 7%
  - Real refractive index: 0.02
  - Imaginary refractive index: 0.0025 (#)
  - Effective radius: 0.1 or 10%
  - Effective variance: 0.3 or 50%
  - Chlorophyll-a: 30% (#)
  - Wind speed: 1 m/s (#)
  - Land surface parameters: 50% (#)
- "Figure of Merit": results are presented as the percentage of LUT error that meets accuracy criteria

(#) Note: arbitrarily selected value, not in Mishchenko et al. (2004).

*M. Mishchenko, B. Cairns, J. Hansen, L. Travis, R. Burg, Y. Kaufman, J. Vanderlei Martins, and E. Shettle. Monitoring of aerosol forcing of climate from space: analysis of measurement requirements. J. Quant. Spectrosc. Radiat. Transfer, 88(1-3): 149–161, 2004.
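A figure of merit of this kind can be sketched as a pass rate over simulated cases. The sketch assumes that "0.02 or 7%" means whichever limit is larger for the value in question; the slide does not spell this out, and the dictionary keys and function names are illustrative.

```python
# Thresholds from the slide; "abs" is an absolute limit, "rel" a fraction.
CRITERIA = {
    "aot": {"abs": 0.02, "rel": 0.07},
    "real_index": {"abs": 0.02},
    "imag_index": {"abs": 0.0025},
    "effective_radius": {"abs": 0.1, "rel": 0.10},
    "effective_variance": {"abs": 0.3, "rel": 0.50},
    "chlorophyll": {"rel": 0.30},
    "wind_speed": {"abs": 1.0},
}


def meets_criterion(name, value, sigma):
    """True if the simulated uncertainty sigma satisfies the criterion."""
    rule = CRITERIA[name]
    limit = rule.get("abs", 0.0)
    if "rel" in rule:
        # Assumption: the looser of the absolute and relative limits applies.
        limit = max(limit, rule["rel"] * abs(value))
    return sigma <= limit


def figure_of_merit(name, cases):
    """Percent of (value, sigma) cases that meet the criterion."""
    passed = sum(meets_criterion(name, v, s) for v, s in cases)
    return 100.0 * passed / len(cases)


# Hypothetical (AOT, simulated uncertainty) pairs.
aot_cases = [(0.8, 0.05), (0.05, 0.03), (0.1, 0.01), (0.2, 0.02)]
aot_merit = figure_of_merit("aot", aot_cases)
```

Condensing each parameter to a single percentage is what allows instruments and configurations to be compared on one bar chart.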


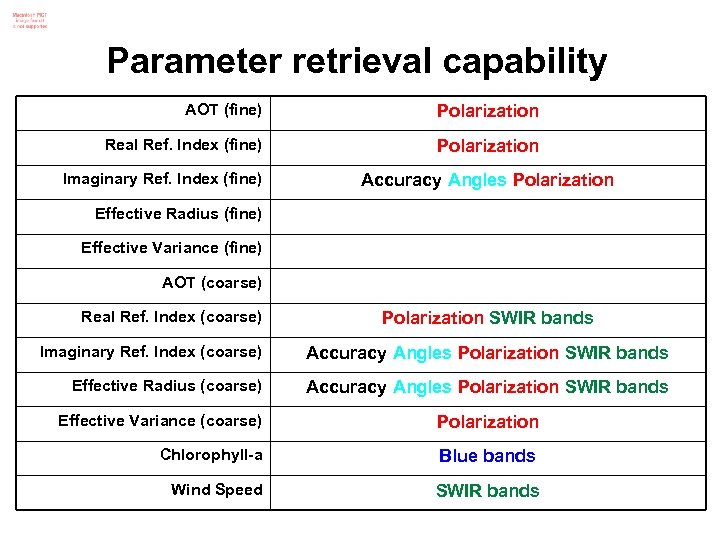

Parameter retrieval capability

| Parameter | Instrument characteristics listed |
| --- | --- |
| AOT (fine) | Polarization |
| Real Ref. Index (fine) | Polarization |
| Imaginary Ref. Index (fine) | Accuracy, Angles, Polarization |
| Effective Radius (fine) | (none listed) |
| Effective Variance (fine) | (none listed) |
| AOT (coarse) | (none listed) |
| Real Ref. Index (coarse) | Polarization, SWIR bands |
| Imaginary Ref. Index (coarse) | Accuracy, Angles, Polarization, SWIR bands |
| Effective Radius (coarse) | Accuracy, Angles, Polarization, SWIR bands |
| Effective Variance (coarse) | Polarization |
| Chlorophyll-a | Blue bands |
| Wind Speed | SWIR bands |

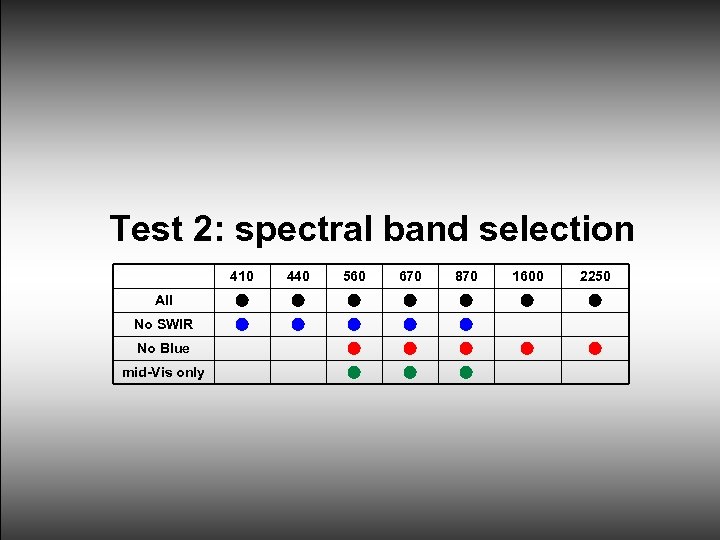

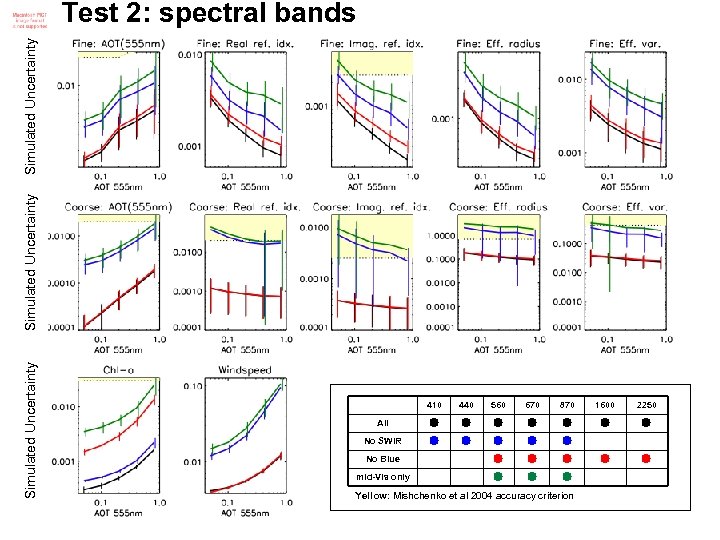

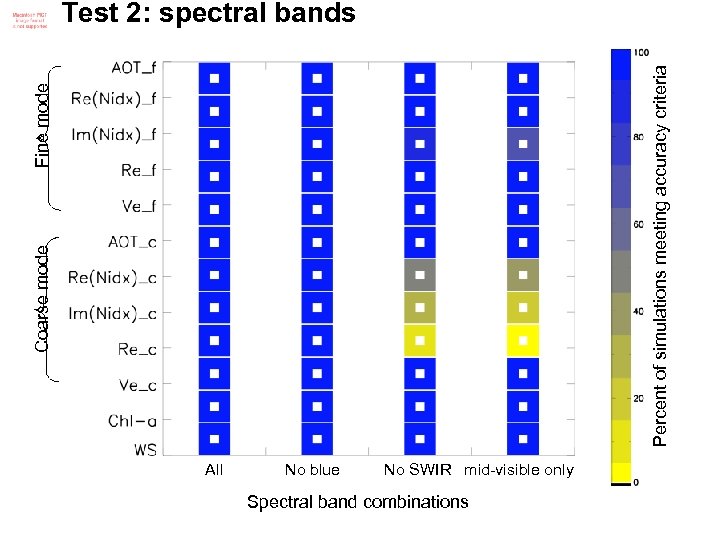

Test 2: spectral band selection
Bands (nm): 410, 440, 560, 670, 870, 1600, 2250. Band combinations tested: All, No SWIR, No Blue, mid-Vis only.

[Figure: simulated uncertainty, Test 2 (spectral bands), for bands at 410, 440, 560, 670, 870, 1600, and 2250 nm. Panels: All; No SWIR; No Blue; mid-Vis only. Yellow: Mishchenko et al. 2004 accuracy criterion.]

[Figure: Test 2 (spectral bands). Percent of simulations meeting accuracy criteria for the fine and coarse modes, by spectral band combination: All, No blue, No SWIR, mid-visible only.]

Dubovik et al. models

| Station | Tag | <τ(440)> | Real refractive index | Imaginary refractive index | Reff,f (µm) | Veff,f | Reff,c (µm) | Veff,c |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Amazon Forest | AF | 0.74 | 1.47 | 0.001 | 0.176 | 0.174 | 6.91 | 0.867 |
| African Savanna | AS | 0.38 | 1.51 | 0.021 | 0.152 | 0.174 | 5.95 | 0.704 |
| Boreal Forest | BF | 0.40 | 1.50 | 0.009 | 0.188 | 0.203 | 6.34 | 0.927 |
| Cerrado Brazil | CB | 0.80 | 1.52 | 0.015 | 0.185 | 0.247 | 6.86 | 0.867 |
| Paris | CP | 0.26 | 1.40 | 0.009 | 0.173 | 0.203 | 5.39 | 0.867 |
| GSFC, Maryland | GS | 0.24 | 1.40 | 0.003 | 0.169 | 0.155 | 5.52 | 0.755 |
| Lanai, Hawaii | LH | 0.04 (#) | 1.36 | 0.002 | 0.201 | 0.259 | 4.29 | 0.588 |
| Mexico City | MC | 0.43 | 1.47 | 0.014 | 0.165 | 0.203 | 4.43 | 0.487 |
| Maldives | MI | 0.27 | 1.44 | 0.011 | 0.222 | 0.236 | 4.96 | 0.782 |

(#) AOT at 1020 nm. Legend: Biggest / Smallest.

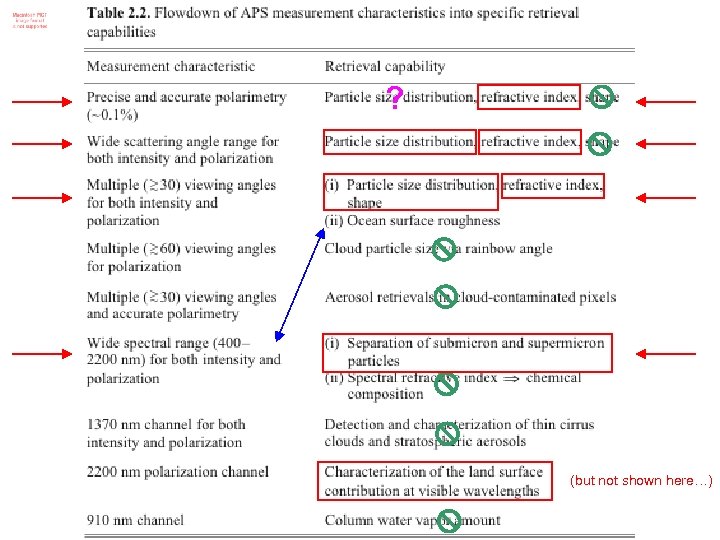

? (but not shown here…)

[Figure: Test 4 (polarization). Percent of simulations meeting accuracy criteria for the fine and coarse modes, for Rp + DoLP and Rp only.]

Retrievals over land

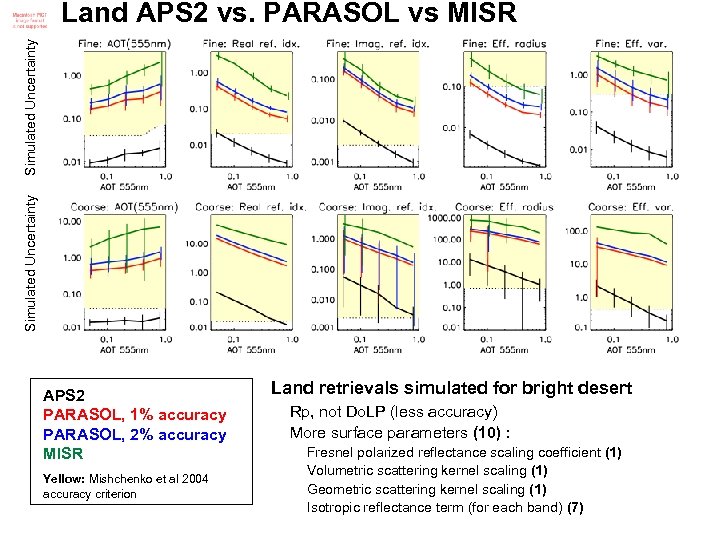

[Figure: simulated uncertainty over land, APS 2 vs. PARASOL vs. MISR. Panels: APS 2; PARASOL, 1% accuracy; PARASOL, 2% accuracy; MISR. Yellow: Mishchenko et al. 2004 accuracy criterion.]
Land retrievals:
- Simulated for bright desert
- Rp, not DoLP (less accuracy)
- More surface parameters (10):
  - Fresnel polarized reflectance scaling coefficient (1)
  - Volumetric scattering kernel scaling (1)
  - Geometric scattering kernel scaling (1)
  - Isotropic reflectance term, for each band (7)

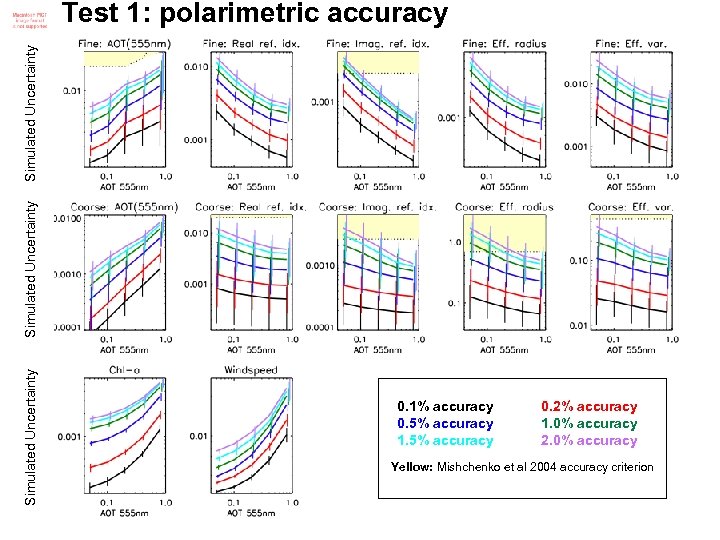

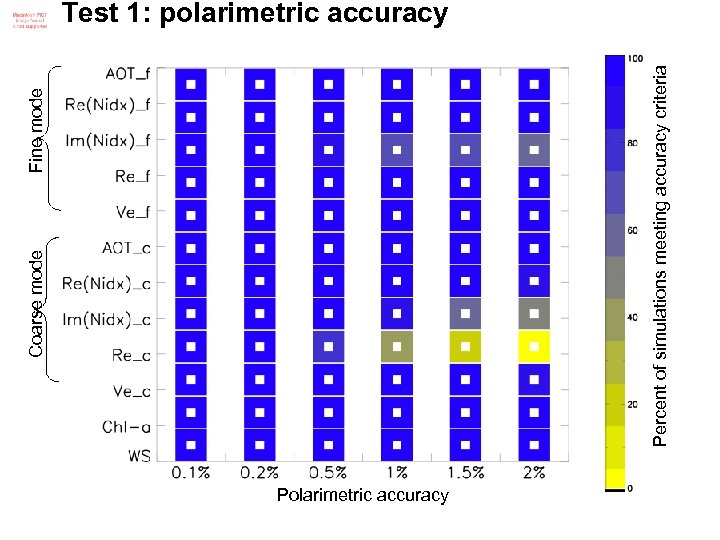

Test 1: polarimetric uncertainty
Test with polarimetric uncertainty of 0.1%, 0.2%, 0.5%, 1.5% and 2%

[Figure: simulated uncertainty, Test 1 (polarimetric accuracy). Panels: 0.1%, 0.2%, 0.5%, 1.0%, 1.5%, and 2.0% accuracy. Yellow: Mishchenko et al. 2004 accuracy criterion.]

[Figure: Test 1 (polarimetric accuracy). Percent of simulations meeting accuracy criteria for the fine and coarse modes, plotted against polarimetric accuracy.]

I didn't invent this
Examples:
- C. Rodgers. Inverse Methods for Atmospheric Sounding: Theory and Practice. World Scientific, Singapore, 2000.
- F. Waquet, B. Cairns, K. Knobelspiesse, J. Chowdhary, L. Travis, B. Schmid, and M. Mishchenko. Polarimetric remote sensing of aerosols over land. J. Geophys. Res., 114(D01206): 1–23, 2009.
- O. Hasekamp and J. Landgraf. Retrieval of aerosol properties over land surfaces: capabilities of multiple-viewing-angle intensity and polarization measurements. Appl. Opt., 46(16): 3332–3344, 2007.
- O. P. Hasekamp. Capability of multi-viewing-angle photo-polarimetric measurements for the simultaneous retrieval of aerosol and cloud properties. Atmospheric Measurement Techniques, 3(4): 839–851, 2010.
- K. Knobelspiesse, B. Cairns, J. Redemann, R. W. Bergstrom, and A. Stohl. Simultaneous retrieval of aerosol and cloud properties during the MILAGRO field campaign. Atmospheric Chemistry and Physics Discussions, 11(2): 6363–6413, 2011.
