
- Number of slides: 68

Phonology from a computational point of view: phonemes, dialects, letter-to-sound conversion. March 2001

Phonology: The study of the sound patterns of languages. We will extend this to include the letter patterns of languages.


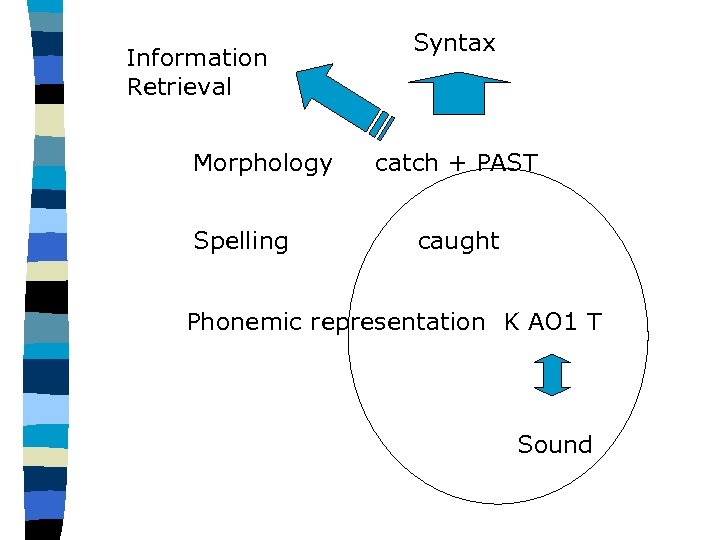

Diagram labels: Information Retrieval; Morphology; Spelling; Syntax. Example: catch + PAST → caught → phonemic representation K AO1 T → sound.

Why study phonology in this course? Text-to-speech (TTS) applications include a component that converts spelled words to sequences of phonemes (= sound representations). E.g., sight → S AY1 T; John → JH AA1 N.

Keep separate:
- Spelling (= “orthography”)
- Detailed description of pronunciation
- Abstract description of pronunciation, called the “phonemic representation”

Agenda:
1. Phonology: the set of phonemes; their realizations as phones.
2. The phonemes are reasonably constant across a language.
3. The phones vary a lot within a speaker and across speakers.
4. Some of that variation is extremely rule-governed and must be understood: for example, the English “flap” (in butter).
5. In addition to the phonemes: syllable structure, and
6. Prosody. Today: stress levels 0, 1, 2.
7. The text’s discussion of spelling errors, as a lead-in to Viterbi-ing the Minimum Edit Distance.
8. Letter to sound (LTS).

- All speakers have a set of several dozen basic pronunciation units (“phonemes”), which they neither add to nor delete from during their adult lifetimes. American English has 39 phonemes.
- This phonemic inventory is not completely fixed and stable across the United States, but it is much more fixed and stable than the pronunciation of these phonemes.

How is that possible? I’m from New York; the vowel that I have in cat is very different from the vowel in a south Chicago native’s cat. But the phonemes are the same: they correspond across thousands of words.

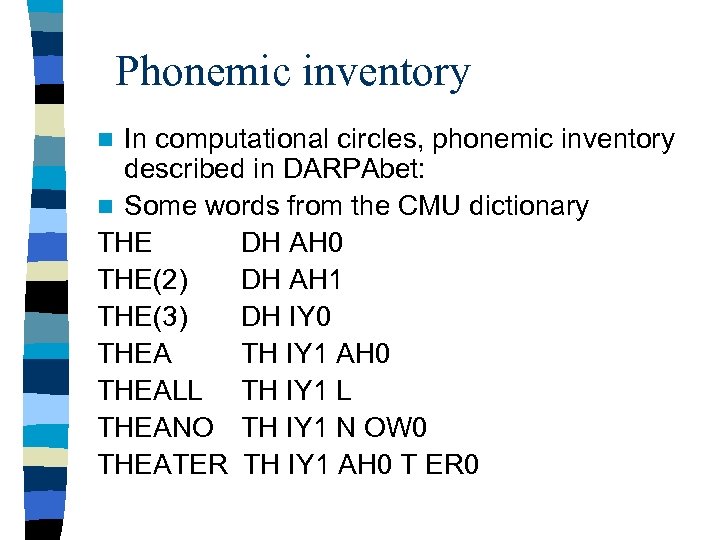

Phonemic inventory: in computational circles, the phonemic inventory is described in DARPAbet. Some words from the CMU dictionary:

- THE: DH AH0
- THE(2): DH AH1
- THE(3): DH IY0
- THEA: TH IY1 AH0
- THEALL: TH IY1 L
- THEANO: TH IY1 N OW0
- THEATER: TH IY1 AH0 T ER0
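The entries above follow a simple line format: a headword, an optional variant number in parentheses, then the phonemes with a stress digit on each vowel. A minimal sketch of reading such lines into a lexicon, assuming whitespace-separated fields; the function names `parse_entry` and `build_lexicon` are hypothetical and not part of any CMU tool:

```python
import re

def parse_entry(line):
    """Split one dictionary line into (word, variant number, phoneme list)."""
    head, *phones = line.split()
    # "THE(2)" -> word "THE", variant 2; a bare "THE" counts as variant 1.
    match = re.fullmatch(r"(.+?)(?:\((\d+)\))?", head)
    word = match.group(1)
    variant = int(match.group(2)) if match.group(2) else 1
    return word, variant, phones

def build_lexicon(lines):
    """Map each word to the list of its pronunciations, in file order."""
    lexicon = {}
    for line in lines:
        word, _variant, phones = parse_entry(line)
        lexicon.setdefault(word, []).append(phones)
    return lexicon

# Sample lines copied from the slide, with stress digits attached to vowels.
SAMPLE = [
    "THE DH AH0",
    "THE(2) DH AH1",
    "THE(3) DH IY0",
    "THEATER TH IY1 AH0 T ER0",
]

lexicon = build_lexicon(SAMPLE)
print(lexicon["THE"])
print(lexicon["THEATER"])
```

Keeping every variant under one headword makes it easy to see that a single spelling can carry several pronunciations.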

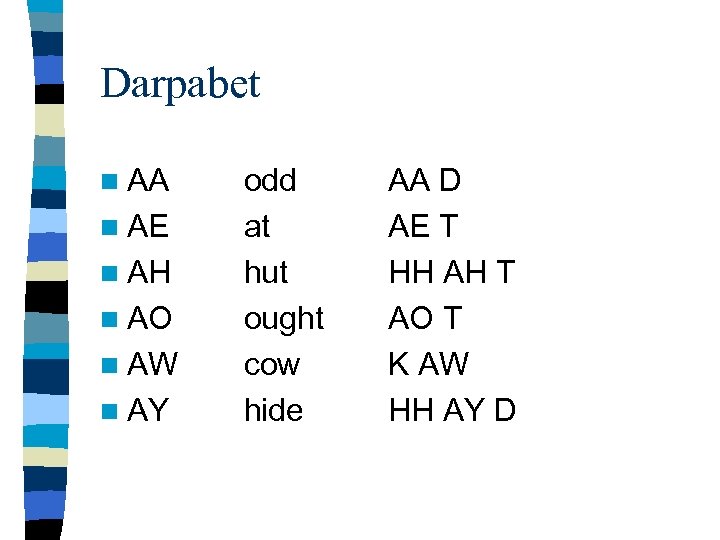

Darpabet

| Phoneme | Example | Transcription |
| --- | --- | --- |
| AA | odd | AA D |
| AE | at | AE T |
| AH | hut | HH AH T |
| AO | ought | AO T |
| AW | cow | K AW |
| AY | hide | HH AY D |

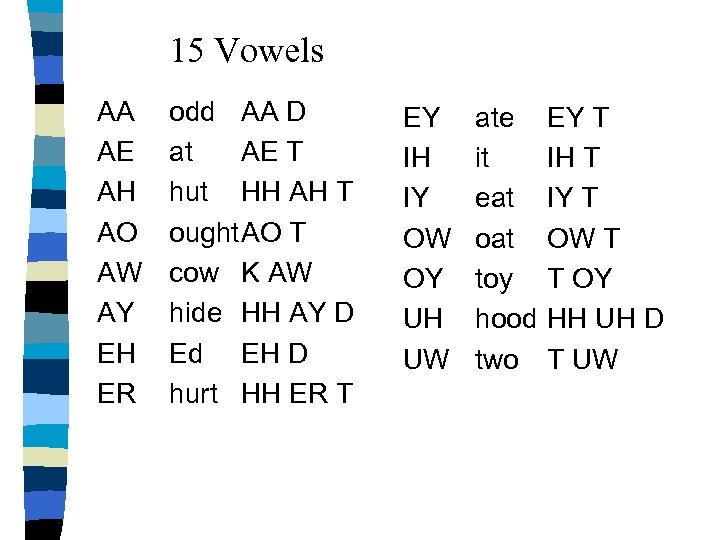

15 Vowels

| Phoneme | Example | Transcription |
| --- | --- | --- |
| AA | odd | AA D |
| AE | at | AE T |
| AH | hut | HH AH T |
| AO | ought | AO T |
| AW | cow | K AW |
| AY | hide | HH AY D |
| EH | Ed | EH D |
| ER | hurt | HH ER T |
| EY | ate | EY T |
| IH | it | IH T |
| IY | eat | IY T |
| OW | oat | OW T |
| OY | toy | T OY |
| UH | hood | HH UH D |
| UW | two | T UW |

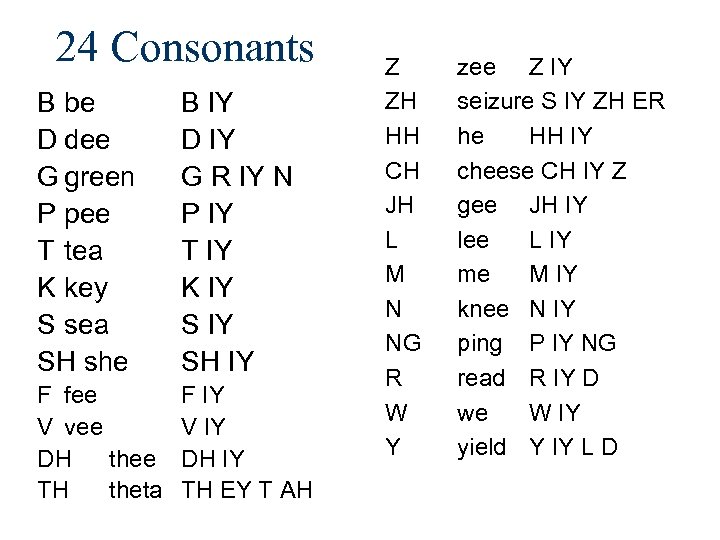

24 Consonants

| Phoneme | Example | Transcription |
| --- | --- | --- |
| B | be | B IY |
| D | dee | D IY |
| G | green | G R IY N |
| P | pee | P IY |
| T | tea | T IY |
| K | key | K IY |
| S | sea | S IY |
| SH | she | SH IY |
| F | fee | F IY |
| V | vee | V IY |
| DH | thee | DH IY |
| TH | theta | TH EY T AH |
| Z | zee | Z IY |
| ZH | seizure | S IY ZH ER |
| HH | he | HH IY |
| CH | cheese | CH IY Z |
| JH | gee | JH IY |
| L | lee | L IY |
| M | me | M IY |
| N | knee | N IY |
| NG | ping | P IH NG |
| R | read | R IY D |
| W | we | W IY |
| Y | yield | Y IY L D |

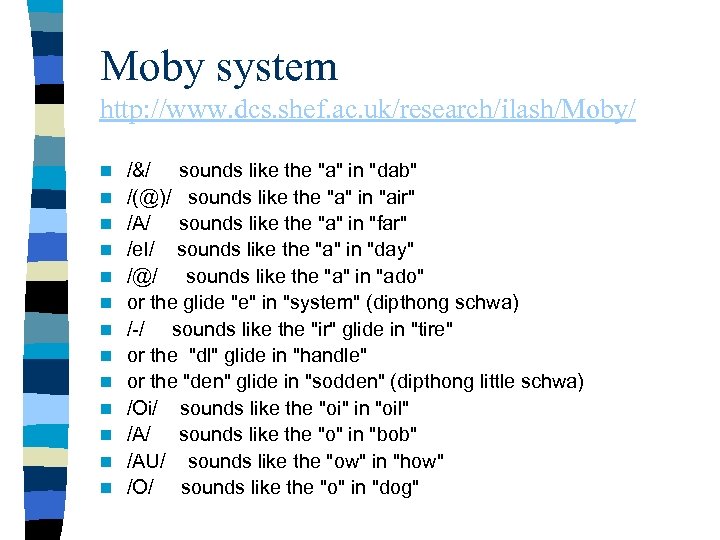

Moby system: http://www.dcs.shef.ac.uk/research/ilash/Moby/

- /&/ sounds like the "a" in "dab"
- /(@)/ sounds like the "a" in "air"
- /A/ sounds like the "a" in "far"
- /eI/ sounds like the "a" in "day"
- /@/ sounds like the "a" in "ado" or the glide "e" in "system" (diphthong schwa)
- /-/ sounds like the "ir" glide in "tire" or the "dl" glide in "handle" or the "den" glide in "sodden" (diphthong little schwa)
- /Oi/ sounds like the "oi" in "oil"
- /A/ sounds like the "o" in "bob"
- /AU/ sounds like the "ow" in "how"
- /O/ sounds like the "o" in "dog"

Some sources of dictionaries, including CMU’s: ftp://svrftp.eng.cam.ac.uk/pub/pub/comp.speech/dictionaries

The variety of actual pronunciations that native speakers can blissfully ignore is staggering. But speech recognition systems need to be trained on this variety, just as people are in their youth.

Varieties of sounds in everyone’s speech Most phonemes have several different pronunciations (called their allophones), determined by nearby sounds, most usually by the following sound. The most striking instance of such variation is in the realization of the phoneme /T/ in American English.


We’ll return to the flap after looking at the syllable.

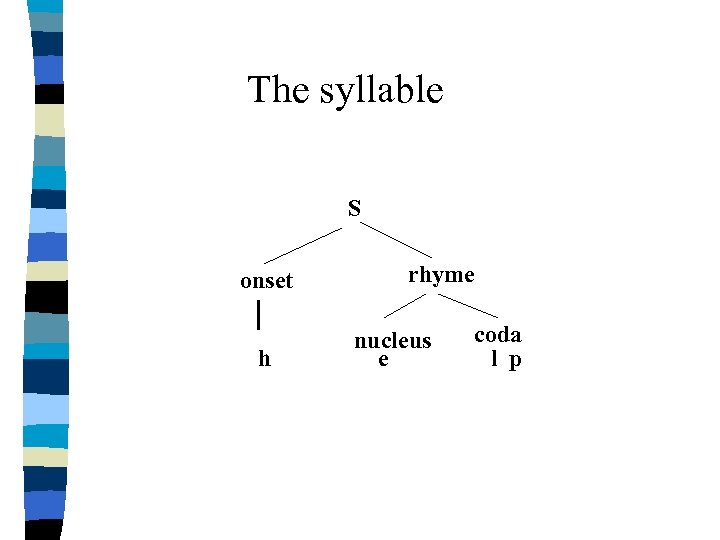

The syllable: a syllable (σ) consists of an onset and a rhyme; the rhyme consists of a nucleus and a coda. Example, help: onset h, nucleus e, coda l p.
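The onset / nucleus / coda division can be sketched in a few lines for a one-syllable word. This is only an illustration, assuming the 15 vowel symbols listed earlier and a CMU-style transcription of help (HH EH1 L P); the function name `split_syllable` is hypothetical:

```python
# The 15 vowel symbols of the inventory; everything else counts as a consonant.
VOWELS = {"AA", "AE", "AH", "AO", "AW", "AY", "EH", "ER",
          "EY", "IH", "IY", "OW", "OY", "UH", "UW"}

def split_syllable(phones):
    """Split a one-syllable phoneme list into (onset, nucleus, coda)."""
    # Strip stress digits (0, 1, 2) before checking for vowels.
    bare = [p.rstrip("012") for p in phones]
    vowel_positions = [i for i, p in enumerate(bare) if p in VOWELS]
    if len(vowel_positions) != 1:
        raise ValueError("expected exactly one vowel in a single syllable")
    v = vowel_positions[0]
    return phones[:v], phones[v], phones[v + 1:]

onset, nucleus, coda = split_syllable(["HH", "EH1", "L", "P"])
print("onset:", onset)      # consonants before the vowel
print("nucleus:", nucleus)  # the vowel
print("coda:", coda)        # consonants after the vowel
```

Words of more than one syllable need a rule for dividing medial consonants, which is where onset maximization and ambisyllabicity come in.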

Flap (D) in American English: we find the flap of water (wa[D]er) under these conditions strictly inside a word:

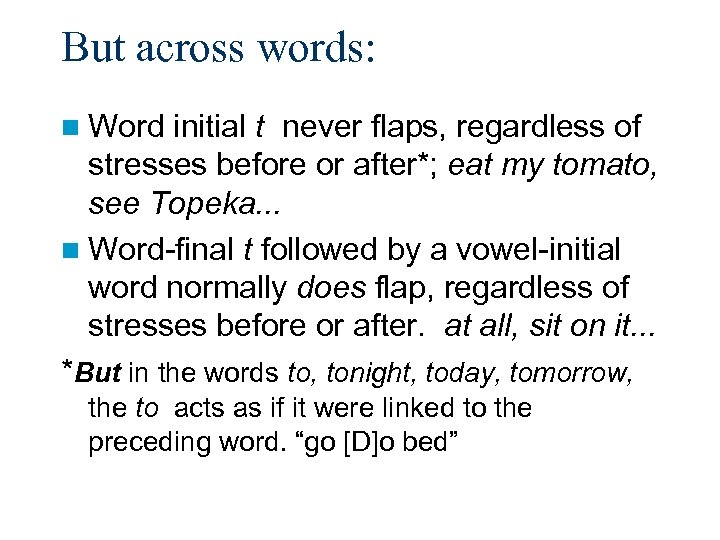

But across words:
- Word-initial t never flaps, regardless of stresses before or after: eat my tomato, see Topeka...
- Word-final t followed by a vowel-initial word normally does flap, regardless of stresses before or after: at all, sit on it...

Exception to the first point: in the words to, tonight, today, tomorrow, the to acts as if it were linked to the preceding word: “go [D]o bed”.

Generalization:
- English permits phonemes to belong simultaneously to two syllables (= be ambisyllabic) under certain conditions.
- Ambisyllabic t's convert to flaps.

Generally speaking:

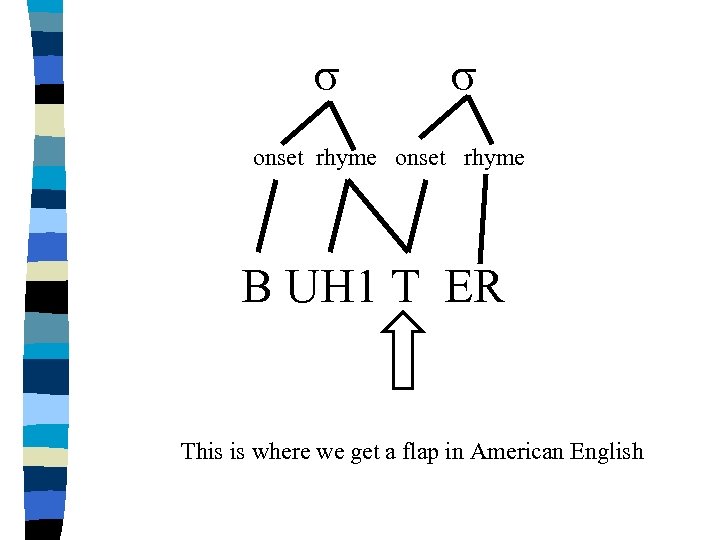

[Diagram: two syllables (σ σ) over B AH1 T ER, with onset and rhyme nodes.] This is where we get a flap in American English.

Within a word, a consonant (C) joins the following syllable as part of its onset ("maximize syllable onset"):

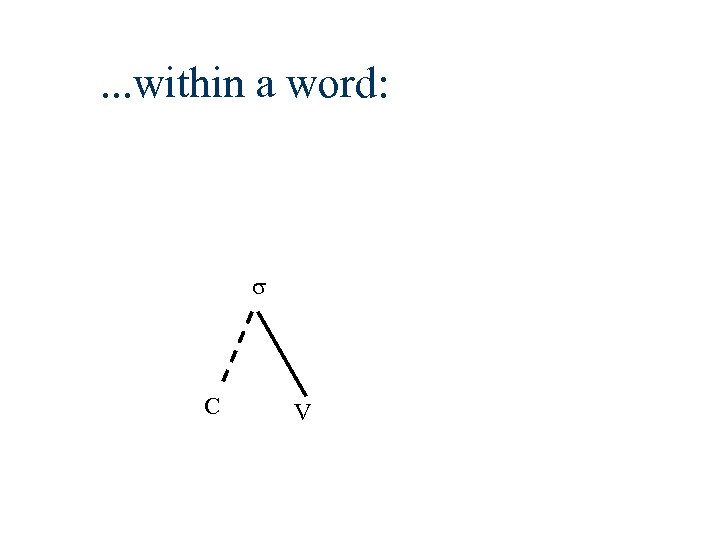

…within a word: [Diagram: σ dominating C V.]

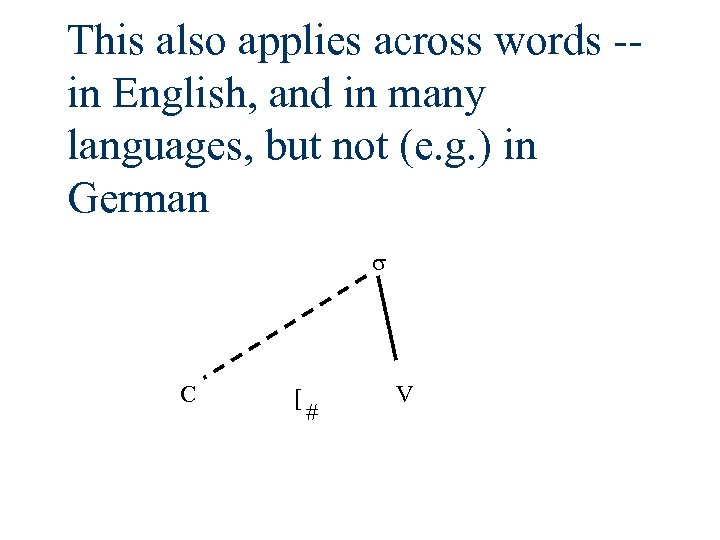

This also applies across words in English and in many other languages, but not (e.g.) in German. [Diagram: σ dominating C # V, where # is the word boundary.]

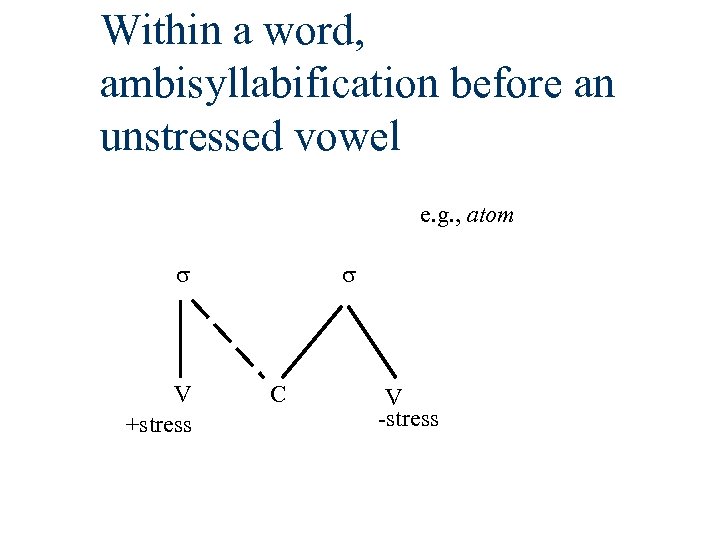

Within a word, ambisyllabification occurs before an unstressed vowel, e.g., atom. [Diagram: the C is shared between a syllable σ whose V is +stress and a following syllable σ whose V is -stress.]

But not across word boundaries: for my tomato we don't say my [D]omato.

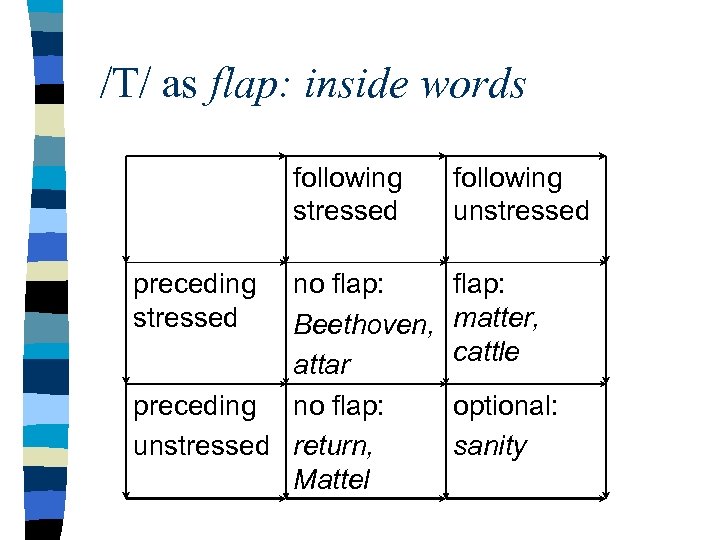

/T/ as flap: inside words

| | following stressed | following unstressed |
| --- | --- | --- |
| preceding stressed | no flap: Beethoven, attar | flap: matter, cattle |
| preceding unstressed | no flap: return, Mattel | optional: sanity |
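The word-internal stress conditions above can be written as a small lookup over stress-marked phonemes. The sketch below is an illustration under stated assumptions: it only considers a /T/ standing directly between two vowels, it treats secondary stress (2) as stressed, and the sample transcriptions are CMU-style guesses rather than entries quoted from the slides. The names `stress_of` and `flap_status` are hypothetical.

```python
VOWELS = {"AA", "AE", "AH", "AO", "AW", "AY", "EH", "ER",
          "EY", "IH", "IY", "OW", "OY", "UH", "UW"}

def stress_of(phone):
    """True if a stressed vowel (1 or 2), False if unstressed (0), None otherwise."""
    if phone[:-1] in VOWELS and phone[-1] in "012":
        return phone[-1] != "0"
    return None

def flap_status(phones):
    """Return (index, status) for every /T/ directly between two vowels."""
    results = []
    for i in range(1, len(phones) - 1):
        if phones[i] != "T":
            continue
        before, after = stress_of(phones[i - 1]), stress_of(phones[i + 1])
        if before is None or after is None:
            continue  # not intervocalic; the table says nothing about it
        if after:
            status = "no flap"    # following vowel is stressed
        elif before:
            status = "flap"       # stressed before, unstressed after
        else:
            status = "optional"   # unstressed on both sides
        results.append((i, status))
    return results

# Assumed transcriptions, for illustration only.
print(flap_status(["M", "AE1", "T", "ER0"]))             # matter
print(flap_status(["M", "AH0", "T", "EH1", "L"]))        # Mattel
print(flap_status(["S", "AE1", "N", "AH0", "T", "IY0"])) # sanity
```

Word-edge cases (at all, go to bed) need the word boundary as extra input and are not covered here.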

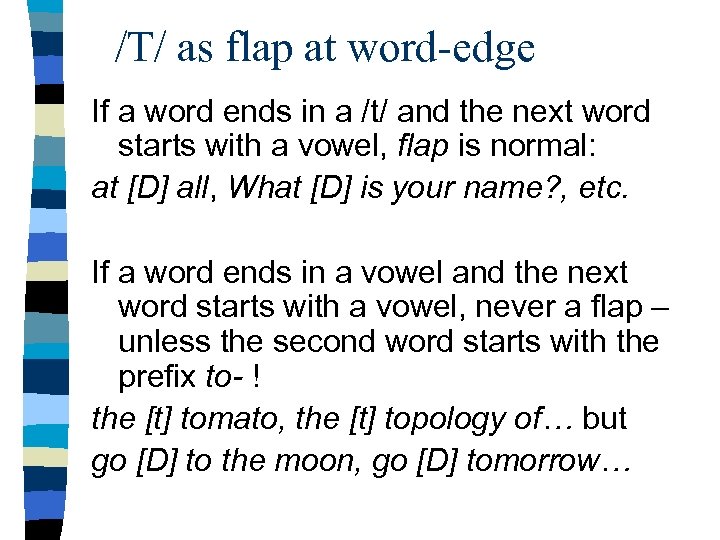

/T/ as flap at word-edge:
- If a word ends in a /t/ and the next word starts with a vowel, a flap is normal: at [D] all, What [D] is your name?, etc.
- If a word ends in a vowel and the next word starts with a /t/, there is never a flap, unless the second word starts with the prefix to-: the [t] tomato, the [t] topology of… but go [D] to the moon, go [D] tomorrow…

Most computational devices avoid worrying about these issues by (always) treating phonemes in the context of their left- and right-hand neighbors. Need to produce an AE? Find out what neighbors it needs to be produced next to. For H AE T, find an AE that was produced after an H and before a T.
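Treating each phoneme together with its left and right neighbors amounts to working with context triples. A minimal sketch, assuming `#` as a made-up word-boundary symbol and a toy inventory whose unit identifier is invented for illustration; `context_triples` and `find_unit` are hypothetical names:

```python
def context_triples(phones, boundary="#"):
    """Return (left, phone, right) for every phone, padding the word edges."""
    padded = [boundary, *phones, boundary]
    return [tuple(padded[i - 1:i + 2]) for i in range(1, len(padded) - 1)]

def find_unit(inventory, left, phone, right):
    """Look up a stored unit produced in exactly this context, or None."""
    return inventory.get((left, phone, right))

# Toy inventory: one AE that was produced after an H and before a T.
inventory = {("H", "AE", "T"): "unit_0421"}  # hypothetical identifier

for left, phone, right in context_triples(["H", "AE", "T"]):
    print(left, phone, right, "->", find_unit(inventory, left, phone, right))
```

With such triples the flap never has to be stated as a rule: a T recorded between a stressed and an unstressed vowel already carries its flapped pronunciation.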

Variation in pronunciation is largely geographical, but it is also related to class, race, and gender. William Labov is the master analyst of this material, and many papers are available at his web site: http://www.ling.upenn.edu/~labov/home.html

See especially his Dialect Diversity in North America: http://www.ling.upenn.edu/phono_atlas/ICSLP4.html

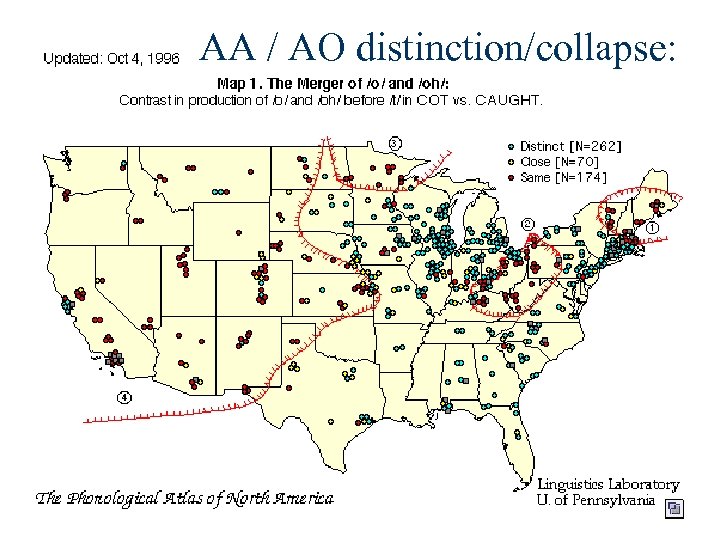

Ongoing changes in American English pronunciation: 1. Loss of the difference between AA (cot) and AO (caught). See also hot dog (HH AA T D AO G). Some speakers produce these vowels differently (I do); others do not. Labov’s group has produced the following map:

AA / AO distinction/collapse:


Distinction between the vowels IH and EH before n (ink-pen versus baby-pin): the distinction is lost in the South.

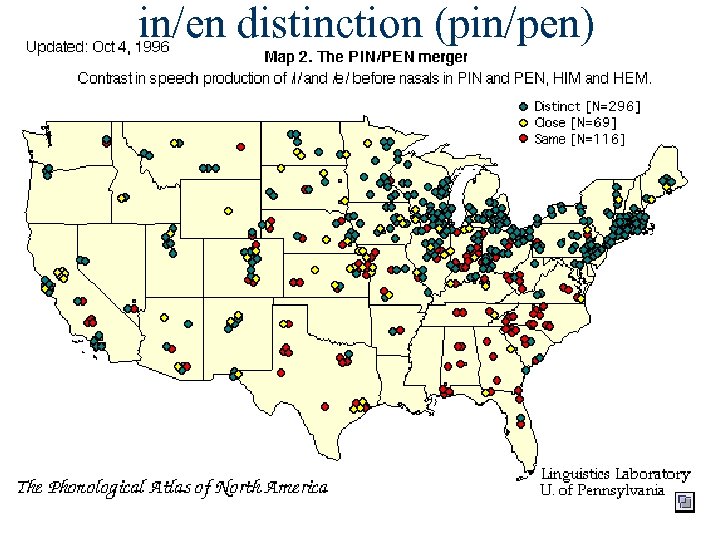

in/en distinction (pin/pen)


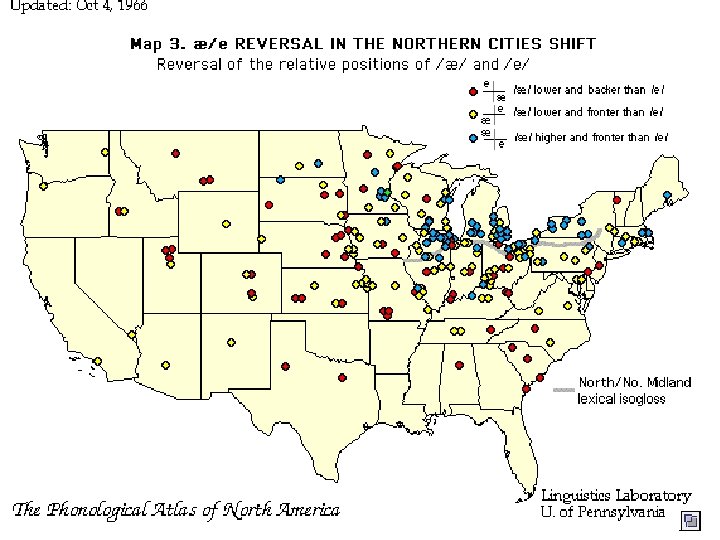

Variation in the AE phoneme (“hat”): a very wide range of American speakers do NOT have the same vowel in sand and sang. The vowels in cat and sang are the same, but in sand the vowel is much higher. However, in the Northern Cities shift, all AE is pronounced like the last two syllables of idea; this is prevalent right here in the south Chicago area.

Sound – Letter relationships LTS: Letter to sound, or Phoneme-Grapheme relationships. In most languages, this is simple. But in English and in French, it’s very messy. Why? Because the spelling system in both is based on how the language used to be pronounced, and the pronunciation has since changed.


Other languages: in most other languages, spelling reflects current pronunciation much more accurately. Stress: most languages don’t mark which syllable is stressed. In some languages, there are simple principles that tell us which syllable is stressed, but when there are no such principles (e.g., English, Russian), you need to build word-lists with the stress indicated.

Letter to sound for English:
- Letter >> phoneme for speech synthesis
- Phoneme >> letter for speech recognition

Challenges to Letter-to-Sound: there are always new words being found, and most of them are new proper names (people, places, products, companies, etc.).

Damper, Marchand, Adamson and Gustafson 1998: Testing Letter to Sound. Third ESCA/COCOSDA Workshop on Speech Synthesis, November 1998.

They contest Liberman and Church’s statement in 1991: “We will describe algorithms for pronunciation of English words… that reduce the error rate to only a few tenths of a percent for ordinary text, about two orders of magnitude better than the word error rates of 15% or so that were common a decade ago.”

They write, “In this paper, we have shown that automatic pronunciation of novel words is not a solved problem in TTS synthesis. The best that can be done is about 70% words correct using PbA [Pronunciation by Analogy]… traditional rules… perform very badly – much worse than pronunciation by analogy and other data-driven approaches….”

Damper et al. compare 4 approaches:
1. Hand-written phonological rules
2. Pronunciation by analogy (based on Dedina and Nusbaum 1991)
3. Neural networks (based on Sejnowski and Rosenberg’s NETtalk)
4. Information theory-based approach (“Nearest neighbor”)

How to evaluate LTS? Systems typically use:
1. a large dictionary
2. a set of “exceptional words”
3. a backoff strategy for words that slip through the first 2 steps.

Is it fair, then, to test the backoff strategy on words in the first two sets?
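The three-step lookup can be sketched as a simple cascade. Everything named here is an assumption for illustration: `pronounce` and `spell_out` are hypothetical, the sample entries are CMU-style guesses, and the backoff is a deliberately naive placeholder, not a real letter-to-sound method.

```python
def spell_out(word):
    """Placeholder backoff: one 'phoneme' per letter. Not a real LTS method."""
    return list(word)

def pronounce(word, dictionary, exceptions, backoff=spell_out):
    """Return (phonemes, source), trying dictionary, exceptions, then backoff."""
    key = word.upper()
    if key in dictionary:
        return dictionary[key], "dictionary"
    if key in exceptions:
        return exceptions[key], "exceptions"
    return backoff(key), "backoff"

dictionary = {"SIGHT": ["S", "AY1", "T"]}   # assumed sample entry
exceptions = {"JOHN": ["JH", "AA1", "N"]}   # assumed sample entry

for word in ["sight", "John", "blorf"]:
    print(word, pronounce(word, dictionary, exceptions))
```

The `source` tag makes the evaluation question concrete: only words that reach the `"backoff"` branch actually exercise the letter-to-sound component in a deployed system.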

Damper et al. propose:
- Test on a single, entire, large dictionary.
- Use strict scoring, not frequency-weighted, giving credit only for fully correct words.
- Employ a standardized phoneme output set.
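Strict, unweighted scoring is easy to state in code: a word counts only if every phoneme matches, and every word counts once regardless of how frequent it is. A minimal sketch with made-up data; `words_correct` is a hypothetical name, and the gold and predicted transcriptions are illustrative:

```python
def words_correct(predicted, gold):
    """Fraction of gold words whose predicted phoneme list matches exactly."""
    if not gold:
        raise ValueError("gold standard is empty")
    hits = sum(1 for word, phones in gold.items()
               if predicted.get(word) == phones)
    return hits / len(gold)

gold = {
    "SIGHT": ["S", "AY1", "T"],
    "CAUGHT": ["K", "AO1", "T"],
}
predicted = {
    "SIGHT": ["S", "AY1", "T"],    # fully correct
    "CAUGHT": ["K", "AA1", "T"],   # one wrong vowel: no credit at all
}

print(words_correct(predicted, gold))
```

A per-phoneme score would give the second word two thirds credit; the strict score gives it none, which is why words-correct figures look so much lower than phoneme accuracy.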

Evaluation:
- In reality, different descriptions of English use different sets of phonemes (e.g., is stress marked on the vowels? British versus American?).
- There are issues in testing data-driven methods, because the performance of a data-driven method is tightly linked to the data it was trained on.

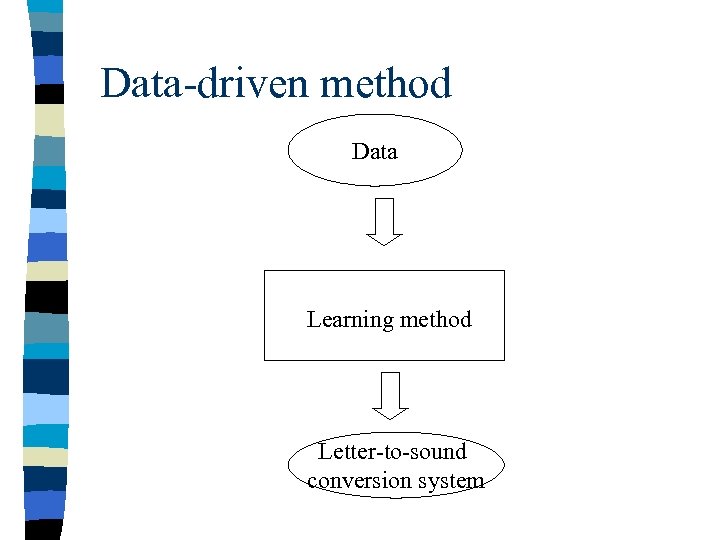

Data-driven method: Data → Learning method → Letter-to-sound conversion system

- In theory, you should never test a data-driven method on data that it was trained on.
- In theory, if you want to test the performance of the method on the whole dictionary, you can train the system on the whole dictionary less one word, then test it on that word, and repeat this for every word.
- But that takes too long! And we’re also interested in the relationship between training corpus size and total performance.

Damper et al.’s work-around:
- For various values of N (up to half the size of the dictionary), take two random samples of the dictionary, each of size N. Train on one set, test on the other.
- N = 100, 500, 1000, 2000, 5000 and 8,140.
- The dictionary is of size 16,280.
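The sampling scheme can be sketched as follows. The sketch assumes the two samples are disjoint (which the "up to half the size of the dictionary" limit suggests but the slide does not state), uses placeholder entries instead of a real dictionary, and fixes a random seed only for repeatability; `train_test_samples` is a hypothetical name.

```python
import random

def train_test_samples(entries, n, seed=0):
    """Draw two disjoint random samples of size n: (train, test)."""
    if 2 * n > len(entries):
        raise ValueError("n may be at most half the dictionary size")
    rng = random.Random(seed)
    picked = rng.sample(entries, 2 * n)  # sampling without replacement
    return picked[:n], picked[n:]

# Placeholder dictionary of the size quoted on the slide.
entries = [f"word{i}" for i in range(16280)]

for n in (100, 500, 1000, 2000, 5000, 8140):
    train, test = train_test_samples(entries, n)
    print(n, len(train), len(test), len(set(train) & set(test)))
```

Compared with leave-one-out, this needs one training run per value of N rather than one per word, and it directly yields the curve of performance against training size.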

Results: hand-written rules. Elovitz et al.: hand-written rules for this purpose. 25.7% of words were entirely correct. “Length errors (especially due to geminate consonants), /g/-/j/ confusions and vowel substitutions abound.” Extensive efforts were made to make sure that this low figure was not an error!

Pronunciation by analogy:
- Begin with a (hand-made) alignment of letters to sounds. For every observed string of letters, gather the set of phonemes that it can be associated with, and store these in a data structure along with their frequencies.
- For the test word, find all ways of dividing the word up into pieces that are present in the data structure. Weight the resulting analyses by (1) how many subpieces are involved, and (2) the frequencies of the subpieces, and choose the best.

Results for PbA and neural net:
- PbA: 71.8% correct.
- Neural net: 54.4%, when trained on the whole dictionary.

Information-gain trees: IB1-IG: 57.4% correct. This approach is a variant on decision-tree learning (an important paradigm in machine learning)….

In simplest terms, a decision-tree approach studies a problem like “What phoneme realizes this letter in this context?” by looking at all relevant examples in the data, considering all context data (what precedes, what follows, etc.), and deciding, first, which factor “gives the most information”:
- Measure the uncertainty first: the uncertainty of how this “t” should be pronounced.
- Measure the uncertainty if you know what the following letter is.

Measuring uncertainty…

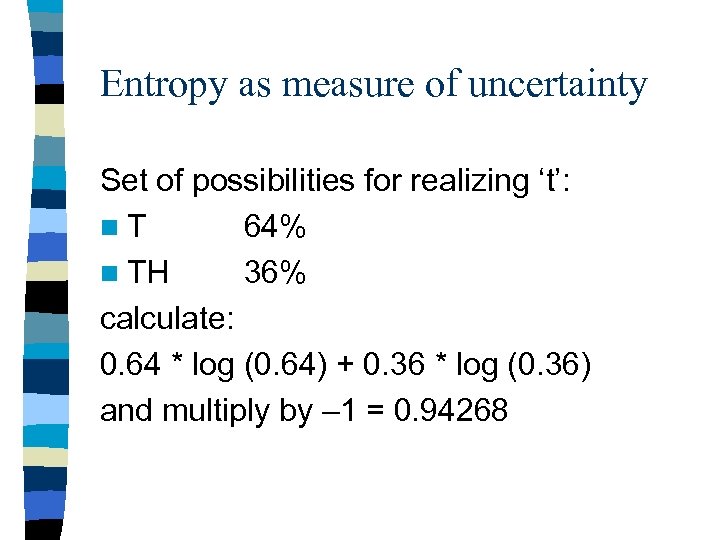

Entropy as a measure of uncertainty. Set of possibilities for realizing ‘t’:
- T: 64%
- TH: 36%

Calculate the weighted sum of the (base 2) log probabilities and multiply by –1:

$$H = -\big(0.64 \log_2 0.64 + 0.36 \log_2 0.36\big) = 0.94268$$
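The figure above can be checked with a few lines of code. This is a small sketch of the standard entropy formula with base 2 logarithms; the function name `entropy` is chosen here for illustration.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Realizations of the letter 't': T 64%, TH 36%.
h = entropy([0.64, 0.36])
print(round(h, 5))  # 0.94268
```

A value close to 1 bit means that, without any context, the choice between T and TH is almost as uncertain as a coin flip.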

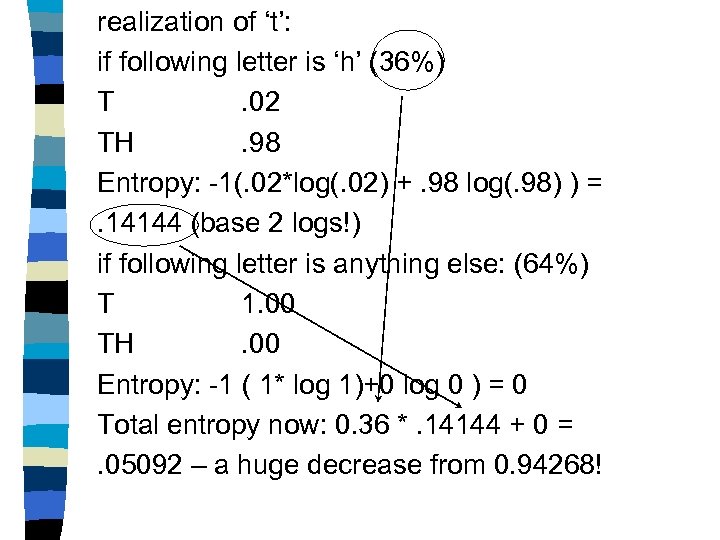

Realization of ‘t’:
- If the following letter is ‘h’ (36%): T .02, TH .98. Entropy: $-(0.02 \log_2 0.02 + 0.98 \log_2 0.98) = 0.14144$ (base 2 logs!)
- If the following letter is anything else (64%): T 1.00, TH .00. Entropy: $-(1 \log_2 1 + 0 \log_2 0) = 0$, taking $0 \log_2 0 = 0$.

Total entropy now: $0.36 \times 0.14144 + 0.64 \times 0 = 0.05092$, a huge decrease from 0.94268!
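The drop in uncertainty is the information gain of the feature "following letter". A minimal sketch reproducing the numbers above, assuming base 2 logarithms; the helper names `entropy` and `conditional_entropy` are illustrative:

```python
import math

def entropy(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def conditional_entropy(branches):
    """Weighted average entropy over (weight, distribution) branches."""
    return sum(weight * entropy(probs) for weight, probs in branches)

before = entropy([0.64, 0.36])           # no context known
after = conditional_entropy([
    (0.36, [0.02, 0.98]),                # following letter is 'h'
    (0.64, [1.00, 0.00]),                # following letter is anything else
])
gain = before - after

print(round(before, 5), round(after, 5), round(gain, 5))
```

A decision-tree learner would compute this gain for every candidate context feature and split first on the one with the largest value.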

Information gain and LTS The idea is to use this method of testing to automatically determine which aspects of a letter’s neighborhood are most revealing in determining how that letter should be realized in that word. But: 57. 4% fully correct results in this experiment.


Bottom line: there is still a lot of work to be done, both in getting results and in testing how well various methods work.

Minimum Edit Distance: a first look at Viterbi in action

- What’s the best way to line up two different strings? To answer that question, we have to make some specifications.
- One specification (p. 53 ff in textbook, Section 5.6) could be that perfect alignments are “free”, while a deletion (non-alignment) costs 1 and a substitution costs 2.
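With those costs fixed, the cheapest alignment can be found by filling in a table of best costs for every pair of prefixes. The sketch below is a standard dynamic-programming formulation, assuming that leaving a letter unaligned costs 1 on either side; `min_edit_distance` and its parameter names are chosen here for illustration.

```python
def min_edit_distance(source, target, indel_cost=1, sub_cost=2):
    """Cost of the cheapest alignment of source with target."""
    rows, cols = len(source) + 1, len(target) + 1
    dist = [[0] * cols for _ in range(rows)]
    # Aligning a prefix with the empty string leaves every letter unaligned.
    for i in range(1, rows):
        dist[i][0] = dist[i - 1][0] + indel_cost
    for j in range(1, cols):
        dist[0][j] = dist[0][j - 1] + indel_cost
    for i in range(1, rows):
        for j in range(1, cols):
            # Identical letters align for free; different ones are a substitution.
            step = 0 if source[i - 1] == target[j - 1] else sub_cost
            dist[i][j] = min(
                dist[i - 1][j] + indel_cost,   # source letter left unaligned
                dist[i][j - 1] + indel_cost,   # target letter left unaligned
                dist[i - 1][j - 1] + step,     # letters aligned with each other
            )
    return dist[-1][-1]

print(min_edit_distance("INTENTION", "EXECUTION"))  # 8
```

Each cell depends only on three neighbors, so the whole table costs time proportional to the product of the two string lengths.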

Aligning EXECUTION with INTENTION: the exact matches are free, and there are no reduced fares for any kind of partial match for the others.

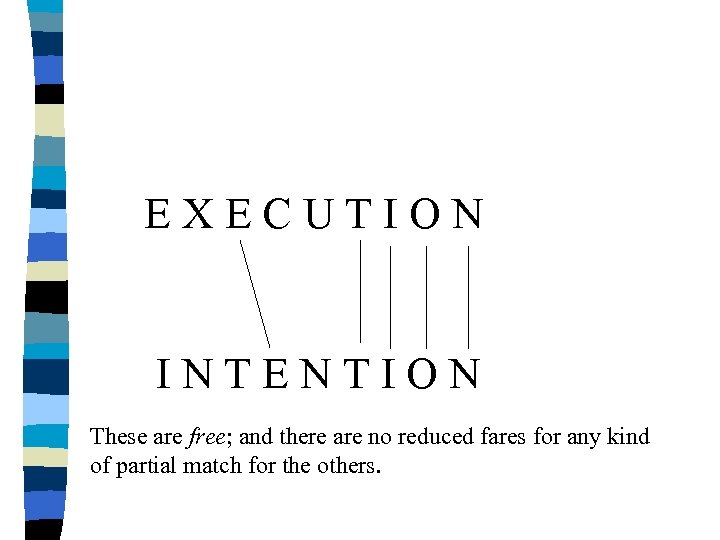

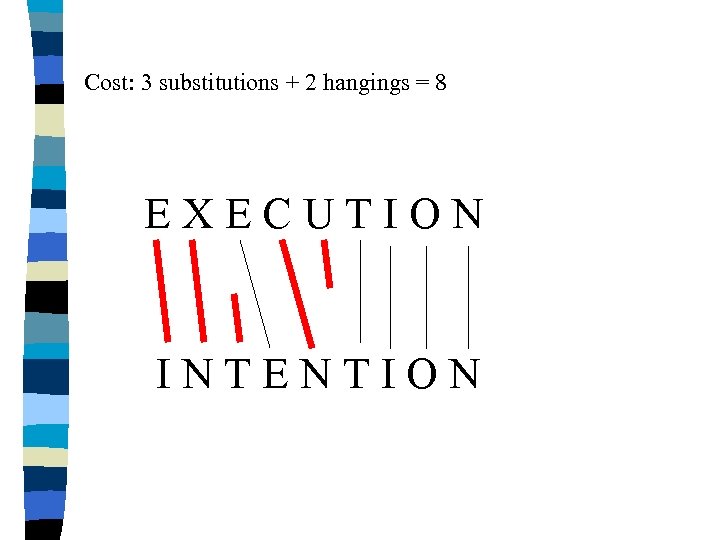

Aligning EXECUTION with INTENTION. Cost: 3 substitutions + 2 hangings = 3 × 2 + 2 × 1 = 8

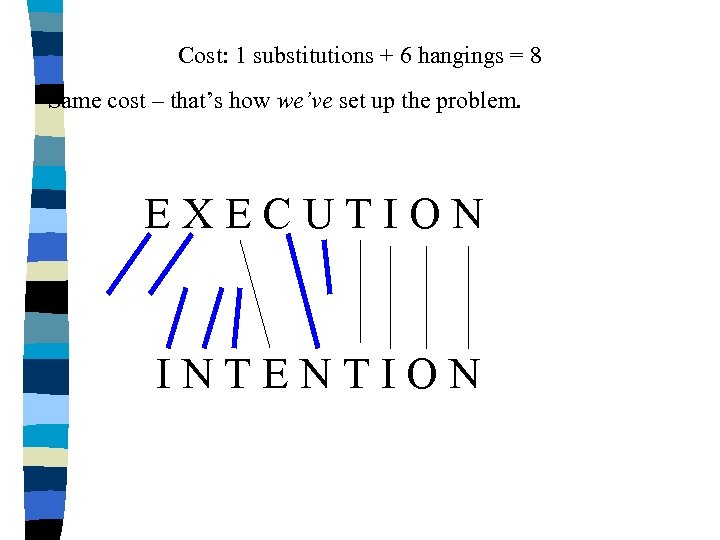

Another alignment of EXECUTION with INTENTION. Cost: 1 substitution + 6 hangings = 1 × 2 + 6 × 1 = 8. Same cost: that’s how we’ve set up the problem.

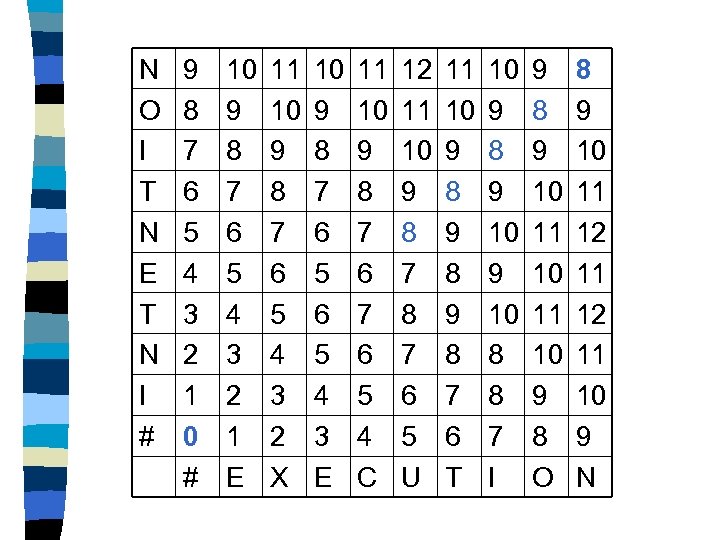

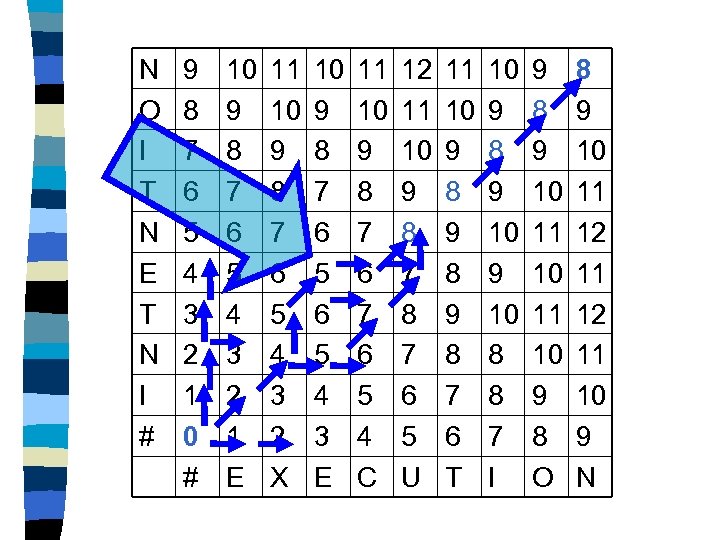

[Chart: minimum edit distance grid for INTENTION against EXECUTION, with # marking the start of each string.]

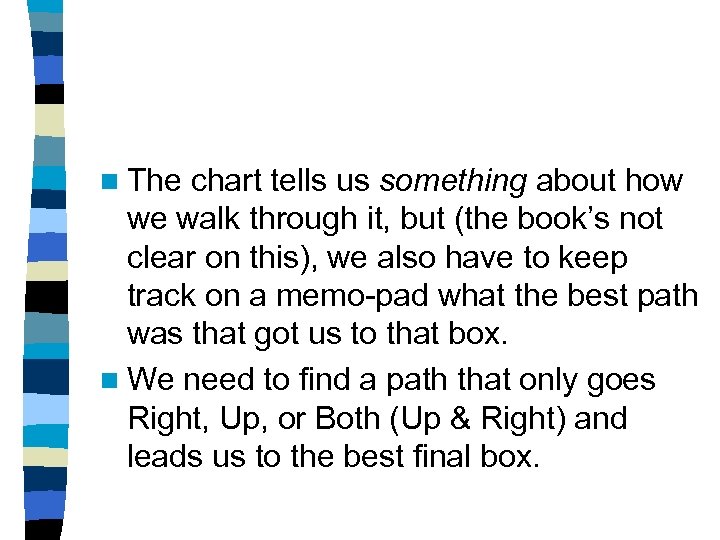

- The chart tells us something about how we walk through it, but (the book’s not clear on this) we also have to keep track, on a memo-pad, of the best path that got us to each box.
- We need to find a path that only goes Right, Up, or Both (Up & Right) and leads us to the best final box.

We can arbitrarily choose one of the best ways to get to a box in this case, because the problem at hand doesn’t set different costs depending on the row-transitions. But very frequently such costs must be borne in mind.
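The "memo-pad" can be kept as a second table that records, for each box, which neighbor the best cost came from. The sketch below is one way to do this, under the same costs as before (unaligned letter 1, substitution 2). It indexes the table from the top-left rather than the bottom-left, so the chart's Right / Up / Both moves appear as `left` / `up` / `diag`, and ties are broken arbitrarily in favor of the first option listed. The name `align` is hypothetical.

```python
def align(source, target, indel_cost=1, sub_cost=2):
    """Return (cost, alignment) where alignment is a list of letter pairs."""
    rows, cols = len(source) + 1, len(target) + 1
    dist = [[0] * cols for _ in range(rows)]
    back = [[None] * cols for _ in range(rows)]  # the memo-pad
    for i in range(1, rows):
        dist[i][0], back[i][0] = dist[i - 1][0] + indel_cost, "up"
    for j in range(1, cols):
        dist[0][j], back[0][j] = dist[0][j - 1] + indel_cost, "left"
    for i in range(1, rows):
        for j in range(1, cols):
            step = 0 if source[i - 1] == target[j - 1] else sub_cost
            options = [
                (dist[i - 1][j - 1] + step, "diag"),
                (dist[i - 1][j] + indel_cost, "up"),
                (dist[i][j - 1] + indel_cost, "left"),
            ]
            # min() keeps the first of several equally good options.
            dist[i][j], back[i][j] = min(options, key=lambda o: o[0])
    # Walk the memo-pad back from the final box to the start.
    pairs, i, j = [], rows - 1, cols - 1
    while i > 0 or j > 0:
        move = back[i][j]
        if move == "diag":
            pairs.append((source[i - 1], target[j - 1]))
            i, j = i - 1, j - 1
        elif move == "up":
            pairs.append((source[i - 1], "-"))
            i -= 1
        else:
            pairs.append(("-", target[j - 1]))
            j -= 1
    pairs.reverse()
    return dist[-1][-1], pairs

cost, pairs = align("INTENTION", "EXECUTION")
print(cost)
print(" ".join(s for s, _ in pairs))
print(" ".join(t for _, t in pairs))
```

When transitions themselves carry different costs, as in later Viterbi applications, the tie-breaking can no longer be arbitrary and the back-pointer must record the genuinely cheapest predecessor.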

[Chart: the minimum edit distance grid for INTENTION against EXECUTION, shown again.]