fea42c4aee53bf2a0cf2c9a33993a8a8.ppt

- Number of slides: 40

Performing Intent
Borut Pfeifer, Doug Church
EALA Blueprint - Unnamed Spielberg Project (LMNO)

But go play it - it’s a fun game!

What if we want to…
– Create believable human characters? (momentarily believable, anyway)
– Give the player meaningful, emotional choice?
– Simulate characters for emergent gameplay?

Character & Player Understanding
Player must understand AI character motivation.
No different than a fictional character.
A character is unbelievable if:
– They have no consistent rationale/motivation.
– The player/reader never learns that rationale.
– Doesn’t have to know it all, or all at once.
How do we convey this interactively at high fidelity?

Performing Intent
Problematic performances crucial to interacting w/AI:
– Conflicting motivations
– Reactions
– Attention
– Meaning
– Physicality
High level strategies we’re pursuing on LMNO.
Ways to turn Spielberg into different parts of speech:
– Spielbergian (adj.), Spielbergify (v.), Spielbergness (n.)

Spielbergness
“When his films work, they work on every level that a film can reach. I remember seeing E.T. at the Cannes Film Festival, where it played before the most sophisticated filmgoers in the world and reduced them to tears and cheers.” - Roger Ebert

These movie clips are all copyright somebody else. Please don’t report me for movie piracy. None of the clips are meant to suggest actual gameplay in LMNO. Remember, LMNO is not the game’s name, just the project codename. All clips are for illustrative purposes only. Any resemblance to any shipping version of a game I work on at EALA is purely coincidental. By using movie clips I do not mean to imply games should be more like movies in any fashion whatsoever; they are used here simply as a tool for analyzing human dramatic performance. Remember, LMNO is just the codename. I said nothing. I was never here. Buy EA games. Thank you.

Conflicting Motivations Example: Scene from Munich

Conflicting Motivations
Player needs to understand internal conflict to:
– Reason about/model NPC behavior.
– See NPCs as realistic (not constantly shifting behavior robotically).
– Convey info NPC has that the player doesn’t know.
Typically would be a bespoke behavior.
Separate decision-making?
– executing behavior vs. performing intent

Conflicting Motivations
Conflicting behaviors may be in parallel or in sequence.
But real performance comes from the combination of behaviors.
How do you slot that in without exploding complexity? (N behaviors * N behaviors)

Reactions Example: Raiders of the Lost Ark

Reactions
Typically also implemented in decision making about executing behavior.
Many different types of reactions:
– Subtle initial reaction (overlay) taken over by bigger full body reaction
– Big full body reaction gives way to subtle overlay performance that lasts much longer
– Important reaction in the middle of non-interruptible action
– May stop reaction to do something but need to return to reaction

Reactions
Player needs to see:
– Connection to world
– Feedback if internal change to NPC is happening
Reactions may cause direct behavior change:
– Seeing a new enemy
Or may not:
– Maybe internally incrementing toward some behavior
– Actual behavior change is delayed

Reactions
Try to separate into pieces:
– Initial overlay
– Initial full body
– Sustained overlay
– Secondary full body
How do you deal with different reaction types and:
– Need to vary reaction based on lots of parameters (physical state, emotional state, world context).
– May only have subset of pieces available.
– What if you need to change entire flavor of current action?
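
A minimal sketch of the four-piece split, assuming each authored reaction simply leaves missing pieces empty. The slot names, clip names, and the rule that full-body pieces are skipped during a non-interruptible action are illustrative assumptions, not LMNO's implementation.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical slot names, in the order the four pieces are listed on the slide.
SLOTS = ("initial_overlay", "initial_full_body",
         "sustained_overlay", "secondary_full_body")


@dataclass
class Reaction:
    """An authored reaction; any piece may be missing (None)."""
    initial_overlay: Optional[str] = None
    initial_full_body: Optional[str] = None
    sustained_overlay: Optional[str] = None
    secondary_full_body: Optional[str] = None


def plan_reaction(reaction: Reaction, interruptible: bool) -> List[str]:
    """Return the clips to play, in order, skipping pieces that do not exist.

    Assumption: full-body pieces cannot play while the current action is
    non-interruptible, but overlays can still be layered on top of it.
    """
    plan = []
    for slot in SLOTS:
        clip = getattr(reaction, slot)
        if clip is None:
            continue  # only a subset of pieces was authored
        if slot.endswith("full_body") and not interruptible:
            continue  # keep the body action, show the overlay only
        plan.append(clip)
    return plan


# Hypothetical clip names; this reaction has no secondary full-body piece.
startled = Reaction(initial_overlay="flinch_head",
                    initial_full_body="jump_back",
                    sustained_overlay="wary_glance")
```

Calling `plan_reaction(startled, interruptible=False)` keeps only the two overlay clips, which is one way an important reaction could still read during a non-interruptible action.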

Attention Example: Catch Me If You Can

Attention
From the clip:
– FBI agents searching for Abagnale (Leonardo DiCaprio).
– FBI agents distracted by the stewardesses.
– Hanratty (Tom Hanks) focused on object (phone).
Player needs to be aware of:
– NPC’s attitude towards attention targets.
– Possible NPC behaviors towards those targets.

Attention
Difficult to deal with multiple priorities:
– Target(s) of current behavior
– Target(s) of current reaction
– Multiple ongoing reactions or behaviors?
Interleaving authored clips with procedural targets?
Non-active behaviors can suggest possible attention targets.
How does each of those tell the attention system how important it is?
How does the attention system tell others when it can’t comply so they can deal with it?
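
The two questions above can be sketched as a request/callback protocol. This is only an assumed design: each requester supplies its own importance score, and the attention system calls back every requester it could not satisfy. `AttentionRequest`, `arbitrate`, and the scores are hypothetical names and values.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class AttentionRequest:
    source: str        # which behavior or reaction is asking
    target: str        # what it wants the NPC to look at
    importance: float  # hypothetical 0..1 score supplied by the requester
    # Called with the winning target when this request loses.
    on_denied: Optional[Callable[[str], None]] = None


def arbitrate(requests: List[AttentionRequest]) -> Optional[AttentionRequest]:
    """Pick the most important request and notify every request that lost."""
    if not requests:
        return None
    winner = max(requests, key=lambda r: r.importance)
    for req in requests:
        if req is not winner and req.on_denied is not None:
            req.on_denied(winner.target)  # let the loser deal with it
    return winner


# Usage loosely based on the clip: the search loses to the distraction.
denied = []
requests = [
    AttentionRequest("search_behavior", "suspect", 0.6,
                     on_denied=lambda t: denied.append(("search_behavior", t))),
    AttentionRequest("distraction_reaction", "stewardesses", 0.8),
]
winner = arbitrate(requests)
```

Here the search behavior learns that attention went to a different target and can decide for itself whether to pause, retry, or escalate its importance.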

Meaning Example: E.T.

Meaning
Objects have different semantic meanings:
– Show: toys’ meaning to Elliott when E.T. is there
– Play: toys might mean this to Elliott in other circumstances
– Eat: car’s initial meaning to E.T.
– Scary: dog’s meaning to E.T.
– Threatening: E.T.’s meaning to dog
Makes them applicable for different behaviors.
In a realistic environment, these meanings are dynamic (not just stored with the object).

Meaning
Player needs to know:
– Which meaning the NPC is applying to a target.
– How to change it if possible.
Need NPC to perform to what meaning is being applied.
Don’t want the NPC to “learn” these changing meanings.
How can we author the experience of the character going through the process of these objects changing meaning?
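
One way to keep meanings dynamic and authored rather than learned is to look them up from rules keyed on observer, target, and context, instead of storing them on the object. The sketch below assumes that approach; the rule table reuses the E.T. examples, and the `et_present` context flag and all function names are hypothetical.

```python
from typing import FrozenSet, NamedTuple, Optional, Set


class MeaningRule(NamedTuple):
    observer: str                     # who is interpreting
    target: str                       # what is being interpreted
    required_context: FrozenSet[str]  # flags that must currently hold
    meaning: str                      # the authored interpretation


# Authored rules, taken from the E.T. examples in the slides.
RULES = [
    MeaningRule("Elliott", "toys", frozenset({"et_present"}), "show"),
    MeaningRule("Elliott", "toys", frozenset(), "play"),
    MeaningRule("E.T.", "car", frozenset(), "eat"),
    MeaningRule("E.T.", "dog", frozenset(), "scary"),
    MeaningRule("dog", "E.T.", frozenset(), "threatening"),
]


def current_meaning(observer: str, target: str,
                    context: Set[str]) -> Optional[str]:
    """Return the meaning of the most specific rule whose context holds."""
    matches = [r for r in RULES
               if r.observer == observer and r.target == target
               and r.required_context <= context]
    if not matches:
        return None  # no authored meaning for this pairing
    return max(matches, key=lambda r: len(r.required_context)).meaning
```

With `{"et_present"}` in the context, `current_meaning("Elliott", "toys", ...)` gives "show"; with an empty context it falls back to "play". Nothing is learned at run time, so the designer stays in control of how the meaning changes.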

Emotional Simulation Example: War of the Worlds

Emotional Simulation
Emotional states (long and/or short term):
– Mob was frightened, violent, intimidated.
– Daughter was panicking.
– Son was angry.
Father tries to plead, intimidate, fight.
– Each has different effects based on NPCs’ emotional state.
Point of emotional simulation? Helps to create:
– New and interesting gameplay possibilities.
– Believable, consistent, richer characters.

Emotional Simulation
Player needs to know:
– NPCs’ emotional state.
– Affordances for NPC behavior from emotional state.
Ideally affordances are as natural as possible.
Can’t communicate it all the time:
– Some of this the player will see, and then it becomes hidden again.
– How much does the player have to keep track of that’s not directly available?

Emotional Simulation
Can affect every level of behavior:
– Goals/actions may become active/inactive
– Attention targets & frequency of selection
– Tone of reactions & movement (performance selection)
– Filter interpretations of objects/characters
How to reduce having emotion-specific logic in every single layer of AI?

Physicality
Many aspects of spatial info & lower level body control:
– Pathfinding
– Collision
– World registration
– Object interaction
– Social nuance: public vs. private, indoor vs. outdoor
Player needs to see:
– What is keeping NPC from acting on what it previously communicated it would do?
– Realistic movement through the space with none of those elements interfering with each other.

So you may have noticed…
Three recurring problem areas:
– Control and feedback between layers (mind vs. body problem).
– Dealing with complexity explosion of assets (what else is new?)
– Interleaving authored data where necessary.

Mind vs. Body
AI split into many layers to deal with complexity.
One layer fails to talk to another:
– NPCs walking into walls.
– Thrashing back and forth between 2 behaviors.
– NPCs pursuing a single behavior without acknowledging change in the world.
Have to increase & better manage communication between layers.

Mind vs. Body
If you’re looking for any great solutions here, sorry.
Inherently a software architecture problem:
– Each layer (pathfinding, steering, anim state machine, etc.) has to pass up as much failure info as possible.
– Typically only higher level layers can do something about that failure.
– Or they have to pass that info to another lower module to deal with that failure.
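
A rough sketch of the "pass failure info up" idea, assuming three toy layers (pathfinding, steering, behavior selection) and a `Failure` record that chains the cause from the layer below. All names are hypothetical, and the blocked-goal set stands in for a real world query.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Failure:
    layer: str    # which layer gave up
    reason: str   # as much detail as the layer can provide
    cause: Optional["Failure"] = None  # failure from the layer below, if any


def pathfind(goal: str, blocked: Set[str]) -> Optional[Failure]:
    """Lowest layer: report why no path exists instead of failing silently."""
    if goal in blocked:
        return Failure("pathfinding", f"no route to {goal}")
    return None


def steer(goal: str, blocked: Set[str]) -> Optional[Failure]:
    """Middle layer: wrap the lower failure and pass it upward."""
    lower = pathfind(goal, blocked)
    if lower is not None:
        return Failure("steering", "cannot move", cause=lower)
    return None


def choose_behavior(goals: List[str], blocked: Set[str]) -> str:
    """Top layer: try goals in priority order, reacting to reported failures."""
    for goal in goals:
        if steer(goal, blocked) is None:
            return goal
    return "idle"  # every goal failed; fall back rather than walk into a wall
```

For example, `choose_behavior(["door", "window"], {"door"})` moves on to the window because the door failure was reported rather than swallowed by the lower layers.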

Complexity & Authoring
All these simulations will intersect at various times.
Some of these moments are more crucial to the experience than others:
– The way a character looks at you after a tense situation.
– How a character decides to wander around an environment when exploring.
How can we easily Spielbergify those moments?

The “Sparse Matrix”
(Not a data structure btw – abstracting authoring principle)
Possibility space is N-dimensional where N includes:
– emotional states
– physical state & limitations
– history of behavior
– And more!
Which combined factors do you need to perform about to create the experience you want?
Who knows!?! Won’t be able to tell ahead of time.

Filling the “Sparse Matrix”
Need to override behavior/performance for any new combination of factors.
Need to easily define reasonable defaults & fallbacks.
High level strategies include:
– Search
– Filtered interpretation
– Context authoring

Search
Search lots of things – behavior, animation, any content.
Adding elements must be very easy.
Declarative - don’t explicitly define connections.
Requires more run-time visualization.
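
As an illustration of declarative search with defaults and fallbacks, the sketch below assumes each piece of content is tagged with the conditions it was authored for, and that the most specific matching entry wins. The entry names, condition keys, and scoring rule are assumptions made for the example.

```python
from typing import Dict, List, NamedTuple, Optional


class Entry(NamedTuple):
    name: str
    conditions: Dict[str, str]  # factors this content was authored for


# Adding content is one new line; nothing links entries to each other.
LIBRARY = [
    Entry("walk_default", {}),  # fallback: matches any state
    Entry("walk_scared", {"emotion": "frightened"}),
    Entry("walk_scared_injured", {"emotion": "frightened", "body": "injured"}),
]


def search(library: List[Entry], state: Dict[str, str]) -> Optional[str]:
    """Return the most specific entry whose conditions all hold in `state`."""
    candidates = [e for e in library
                  if all(state.get(k) == v for k, v in e.conditions.items())]
    if not candidates:
        return None
    return max(candidates, key=lambda e: len(e.conditions)).name
```

A state with no authored override, such as `{"emotion": "angry"}`, falls back to `walk_default`, so only the combinations that matter need to be filled in.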

Filtered Interpretation
Goal: Reduce # of things to search on:
– Don’t fill a row of N things, fill a row of M < N.
– M is an abstracted interpretation of the set of N.
– N->M mapping is modular, can change dynamically.
Reduces complexity while achieving similar results.
Can reuse each mapping on different characters.
Use filter as simplifier, but keep original input.

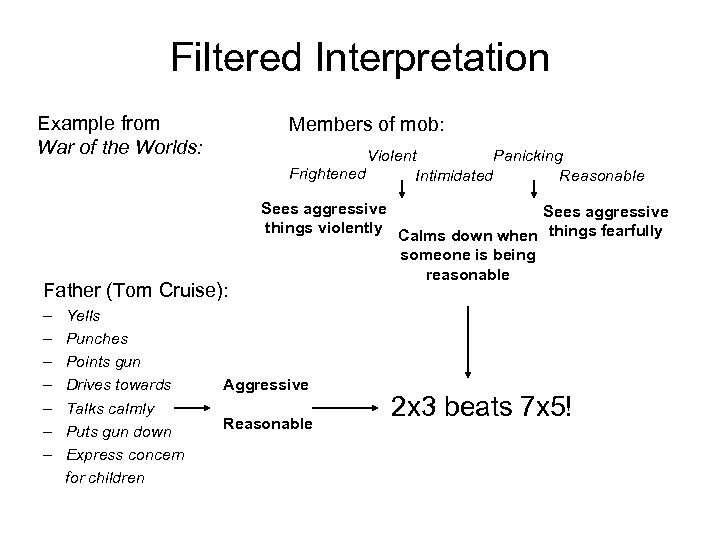

Filtered Interpretation
Example from War of the Worlds:
Members of mob:
– Violent
– Panicking
– Frightened
– Intimidated
– Reasonable
Father (Tom Cruise):
– Yells
– Punches
– Points gun
– Drives towards
– Talks calmly
– Puts gun down
– Expresses concern for children
Father’s actions, as interpreted:
– Aggressive
– Reasonable
Mob’s filtered responses:
– Sees aggressive things violently
– Sees aggressive things fearfully
– Calms down when someone is being reasonable
2 x 3 beats 7 x 5!
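
The arithmetic behind "2 x 3 beats 7 x 5" can be sketched with two small mappings. The slide does not say which action maps to which interpretation or how the five mob states group, so both filters and all six responses below are guesses made for illustration.

```python
# N -> M filter for the father's actions (7 -> 2). Grouping is assumed.
ACTION_FILTER = {
    "yells": "aggressive",
    "punches": "aggressive",
    "points gun": "aggressive",
    "drives towards": "aggressive",
    "talks calmly": "reasonable",
    "puts gun down": "reasonable",
    "expresses concern for children": "reasonable",
}

# N -> M filter for mob members' emotional states (5 -> 3). Grouping is assumed.
MOB_FILTER = {
    "violent": "violent",
    "panicking": "fearful",
    "frightened": "fearful",
    "intimidated": "fearful",
    "reasonable": "calm",
}

# Only 2 x 3 = 6 responses to author instead of 7 x 5 = 35. Responses invented.
RESPONSES = {
    ("aggressive", "violent"): "fight back",
    ("aggressive", "fearful"): "back away",
    ("aggressive", "calm"): "become wary",
    ("reasonable", "violent"): "calm down",
    ("reasonable", "fearful"): "calm down",
    ("reasonable", "calm"): "calm down",
}


def respond(action: str, mob_state: str) -> str:
    """Look up a response using the filtered (simplified) inputs."""
    key = (ACTION_FILTER[action], MOB_FILTER[mob_state])
    return RESPONSES[key]
```

Because each filter is a separate table, a different character could reuse `ACTION_FILTER` with its own state mapping, and the original action is still available to the caller if a finer-grained performance is needed.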

Authoring Context
What do I mean by context? Multiple kinds:
• Global: high level, many factors, lots of behaviors
– Are we in combat? Are we sneaking around? What’s going on?
– Typically detected/implemented in code.
• Regional: one or a few factors, affects several behaviors
– What type of space is this? Have I done something recently?
– Data assigned by designers through simple script rules.
– Used in filtering/mapping.
• Local: one behavior can track lots of context
– Only affects this current behavior.
– Implemented only in the behavior code or related subsystems.

Authoring Context
Classroom example:
– Space has social context that would prevent an outsider walking through.
– Unless the circumstances were extreme.
E.T. example:
– Elliott’s relationship with E.T. is a piece of authored context.
– Track context that E.T. is with Elliott (in the room).
– Toys have one meaning when E.T. is present (show).
– Toys might have another meaning (play) if E.T. was not there.
– Dog has different meaning to Elliott than E.T. Does that change?

So…
• Game events get tracked.
• Translated into context (by both design & code).
• Filtered, simplified into meanings/interpretations.
• Meanings get turned into behavior.
• All the context also gets passed into behavior & lower level systems.
• Each system can search/modulate based on all that given context.
• Each system has to pass back up & deal with failure info.
• Just to get the character to believably perform about what it wants to do.

What next?
1. Finish making some of this stuff.
2. Ship the game!

Thank you!
Borut Pfeifer – bpfeifer@ea.com
Also: Separated at birth?
Blog - http://www.plushapocalypse.com/borut
