- Number of slides: 31

Pattern-growth Methods for Sequential Pattern Mining: Principles and Extensions

Jiawei Han (UIUC), Jian Pei (Simon Fraser Univ.)

Outline

- Sequential pattern mining
- Pattern-growth methods
- Performance study
- Mining sequential patterns with regular expression constraints

Why Sequential Pattern Mining?

- Sequential pattern mining: finding time-related frequent patterns (frequent subsequences)
- Most data and applications are time-related:
  - Customer shopping patterns, telephone calling patterns
    - E.g., first buy a computer, then CD-ROMs and software, within 3 months
  - Natural disasters (e.g., earthquakes, hurricanes)
  - Disease and treatment
  - Stock market fluctuations
  - Weblog click-stream analysis
  - DNA sequence analysis

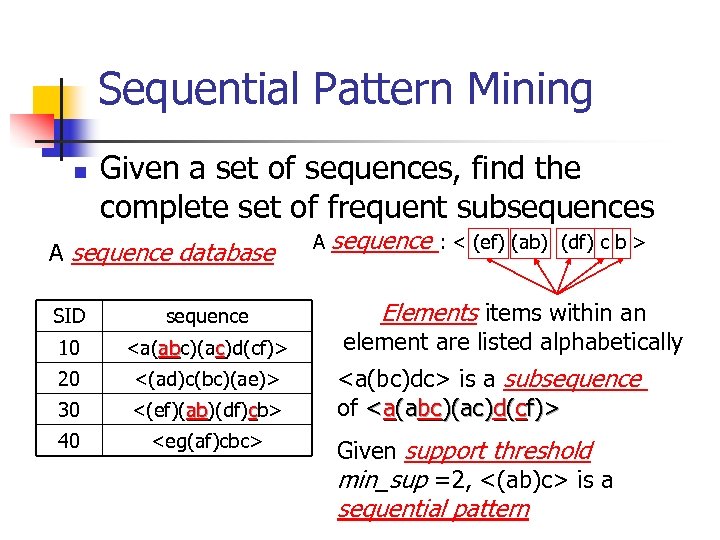

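Sequential Pattern Mining

- Given a set of sequences, find the complete set of frequent subsequences

A sequence database:

| SID | Sequence |
|---|---|
| 10 | `<a(abc)(ac)d(cf)>` |
| 20 | `<(ad)c(bc)(ae)>` |
| 30 | `<(ef)(ab)(df)cb>` |
| 40 | `<eg(af)cbc>` |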

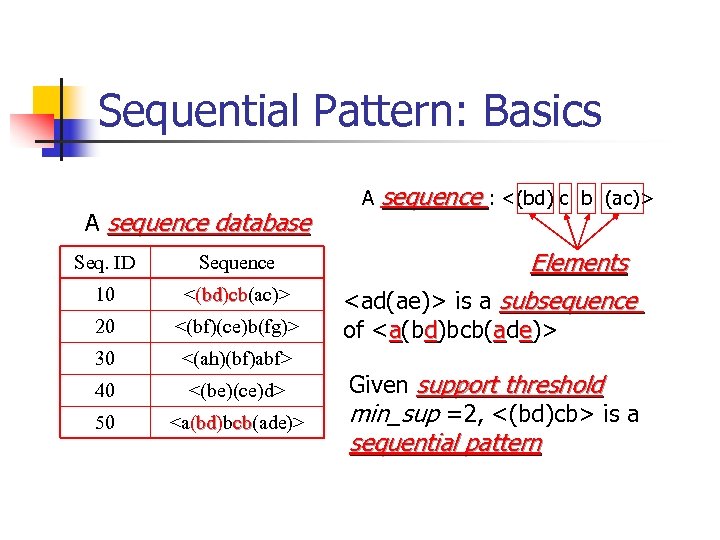
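
The definitions can be made concrete with a minimal Python sketch of subsequence containment and support counting. The sketch assumes single-character items, uses the slides' notation for sequences, and hard-codes the four example sequences (SIDs 10 to 40). The names `parse`, `is_subsequence`, and `support` and the threshold `MIN_SUP = 2` are illustrative choices and do not come from the original material.

```python
from typing import FrozenSet, List, Sequence

Element = FrozenSet[str]
Seq = List[Element]


def parse(text: str) -> Seq:
    """Parse slide notation such as 'a(abc)(ac)d(cf)' into a list of itemsets.

    Assumes every item is a single character, as in the slides.
    """
    elements: Seq = []
    i = 0
    while i < len(text):
        if text[i] == "(":
            j = text.index(")", i)
            elements.append(frozenset(text[i + 1:j]))
            i = j + 1
        else:
            elements.append(frozenset(text[i]))
            i += 1
    return elements


def is_subsequence(alpha: Seq, beta: Seq) -> bool:
    """True if the elements of alpha are subsets of strictly increasing elements of beta."""
    j = 0
    for element in alpha:
        # Greedy: taking the earliest containing element of beta never blocks a later match.
        while j < len(beta) and not element <= beta[j]:
            j += 1
        if j == len(beta):
            return False
        j += 1
    return True


def support(alpha: Seq, db: Sequence[Seq]) -> int:
    """Number of sequences in db that contain alpha as a subsequence."""
    return sum(is_subsequence(alpha, s) for s in db)


SDB = [parse(s) for s in ("a(abc)(ac)d(cf)", "(ad)c(bc)(ae)", "(ef)(ab)(df)cb", "eg(af)cbc")]
MIN_SUP = 2  # assumed threshold for this illustration

pattern = parse("(ab)c")
print(support(pattern, SDB), support(pattern, SDB) >= MIN_SUP)  # 2 True
```

Under these assumptions `<(ab)c>` is contained in sequences 10 and 30, so it qualifies as a sequential pattern at `MIN_SUP = 2`.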

Sequential Pattern: Basics

A sequence database:

| Seq. ID | Sequence |
|---|---|
| 10 | `<(bd)cb(ac)>` |
| 20 | `<(bf)(ce)b(fg)>` |
| 30 | `<(ah)(bf)abf>` |
| 40 | `<(be)(ce)d>` |
| 50 | `<a(bd)bcb(ade)>` |

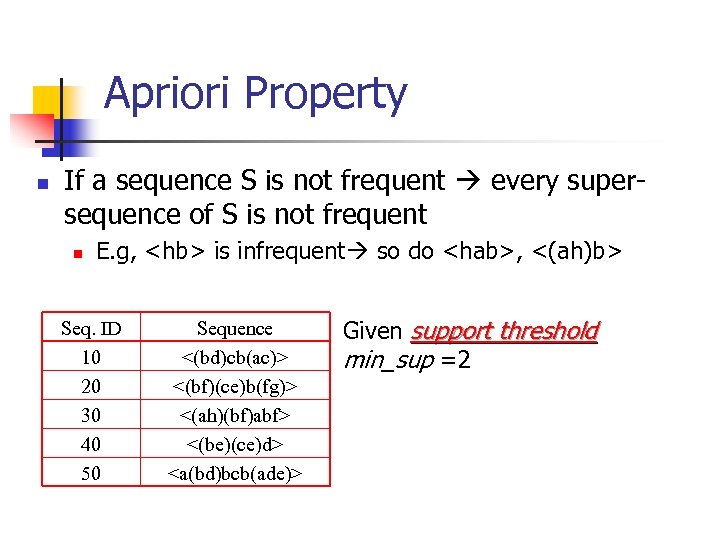

Apriori Property

- If a sequence S is not frequent, then none of the super-sequences of S is frequent
  - E.g.,

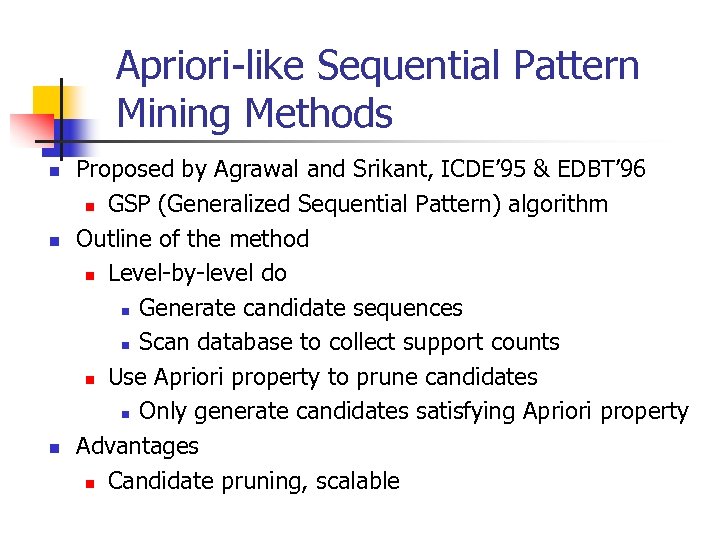
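
The Apriori property gives a simple pruning test. A candidate is discarded if deleting any one of its items produces a sequence that is not known to be frequent. The sketch below assumes single-character items and uses a hand-picked, hypothetical set of frequent patterns from which `<hb>` is absent. The helper names are also hypothetical, and GSP's handling of time constraints is ignored.

```python
from typing import FrozenSet, Iterator, Set, Tuple

Element = FrozenSet[str]
Seq = Tuple[Element, ...]  # tuples are hashable, so sequences can be stored in sets


def seq(*elements: str) -> Seq:
    """Build a sequence from element strings: seq('ah', 'b') is <(ah)b>."""
    return tuple(frozenset(e) for e in elements)


def drop_one_item(s: Seq) -> Iterator[Seq]:
    """Yield each subsequence obtained by deleting exactly one item from s."""
    for i, element in enumerate(s):
        for item in element:
            rest = element - {item}
            # Deleting the only item of an element removes that element entirely.
            yield s[:i] + ((rest,) if rest else ()) + s[i + 1:]


def can_prune(candidate: Seq, frequent: Set[Seq]) -> bool:
    """Apriori check: prune if any one-item-shorter subsequence is not known to be frequent."""
    return any(sub not in frequent for sub in drop_one_item(candidate))


# Hypothetical frequent length-2 patterns; <hb> is deliberately absent (infrequent).
frequent_2 = {seq("a", "b"), seq("a", "a"), seq("b", "a")}
print(can_prune(seq("h", "a", "b"), frequent_2))  # True: dropping 'a' leaves <hb>
print(can_prune(seq("ah", "b"), frequent_2))      # True: dropping 'a' leaves <hb>
print(can_prune(seq("a", "b", "a"), frequent_2))  # False: <ba>, <aa>, <ab> all frequent
```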

Apriori-like Sequential Pattern Mining Methods

- Proposed by Agrawal and Srikant, ICDE'95 & EDBT'96
  - GSP (Generalized Sequential Pattern) algorithm
- Outline of the method
  - Level by level, do:
    - Generate candidate sequences
    - Scan the database to collect support counts
    - Use the Apriori property to prune candidates
      - Only generate candidates that satisfy the Apriori property
- Advantages
  - Candidate pruning; scalable

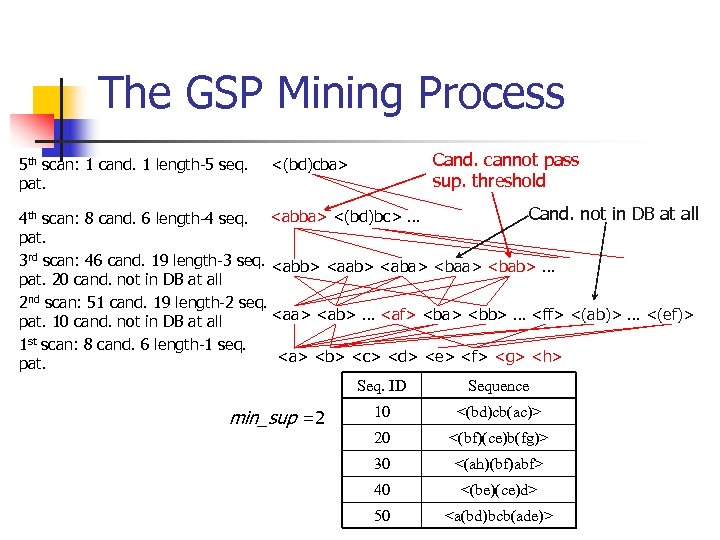

The GSP Mining Process

- 5th scan: 1 candidate, 1 length-5 sequential pattern (`<(bd)cba>`)
- 4th scan: 8 candidates, 6 length-4 sequential patterns
- Legend: candidates that cannot pass the support threshold; candidates that do not appear in the database at all

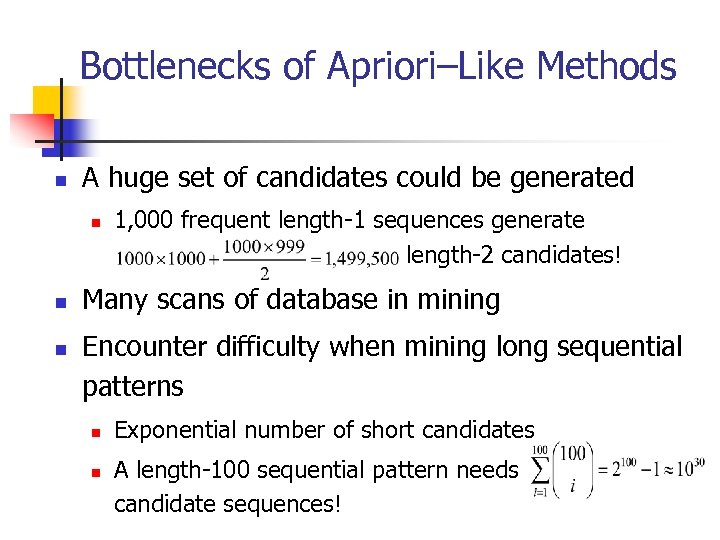

Bottlenecks of Apriori-Like Methods

- A huge set of candidates could be generated
  - 1,000 frequent length-1 sequences generate $1000 \times 1000 + \frac{1000 \times 999}{2} = 1{,}499{,}500$ length-2 candidates!
- Many scans of the database during mining
- Difficulty when mining long sequential patterns
  - Exponential number of short candidates
  - A length-100 sequential pattern needs $\sum_{i=1}^{100} \binom{100}{i} = 2^{100} - 1 \approx 10^{30}$ candidate sequences!

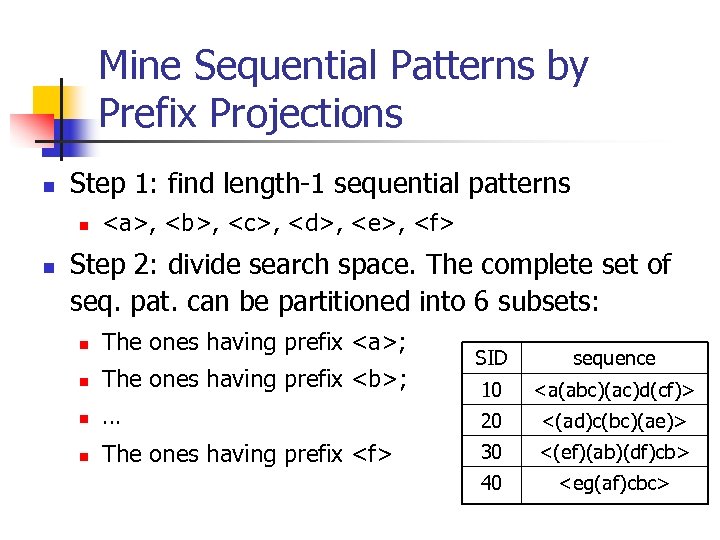
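
The candidate counts on this slide follow from basic combinatorics. The sketch below assumes all items are distinct. With n frequent items, GSP forms n·n ordered pairs `<xy>` plus C(n, 2) two-item elements `<(xy)>`. A pattern with 100 items has 2^100 - 1 non-empty subsequences, and a level-wise method has to generate each of them as a candidate. The function names are hypothetical.

```python
from math import comb


def length2_candidates(n: int) -> int:
    """Length-2 candidates from n frequent items:
    n * n ordered pairs <xy> (x may equal y) plus C(n, 2) two-item elements <(xy)>."""
    return n * n + comb(n, 2)


def candidates_for_long_pattern(length: int) -> int:
    """Non-empty subsequences of a pattern with `length` distinct items: 2**length - 1.
    Each one is frequent, so a level-wise miner must generate it as a candidate."""
    return sum(comb(length, i) for i in range(1, length + 1))


print(f"{length2_candidates(1000):,}")            # 1,499,500
print(f"{candidates_for_long_pattern(100):.2e}")  # 1.27e+30
```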

Mine Sequential Patterns by Prefix Projections

- Step 1: find length-1 sequential patterns

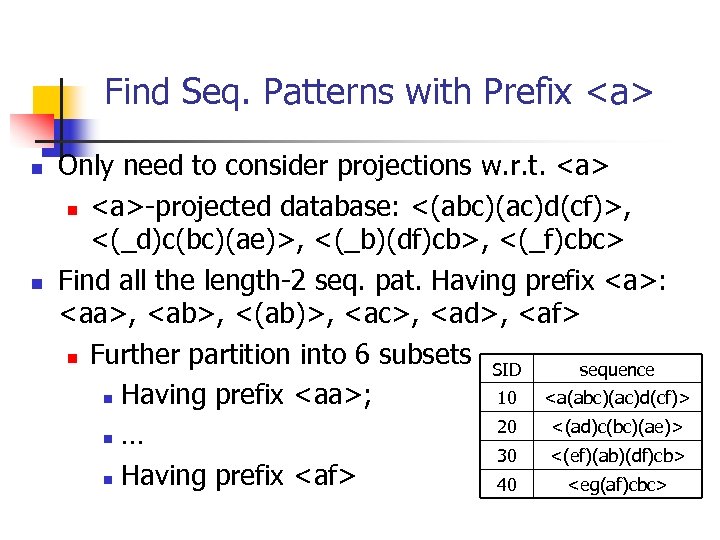

Find Seq. Patterns with Prefix `<a>`

- Only need to consider projections w.r.t. `<a>`
  - `<a>`-projected database: `<(abc)(ac)d(cf)>`, `<(_d)c(bc)(ae)>`, `<(_b)(df)cb>`, `<(_f)cbc>`
- Find all the length-2 sequential patterns having prefix `<a>`

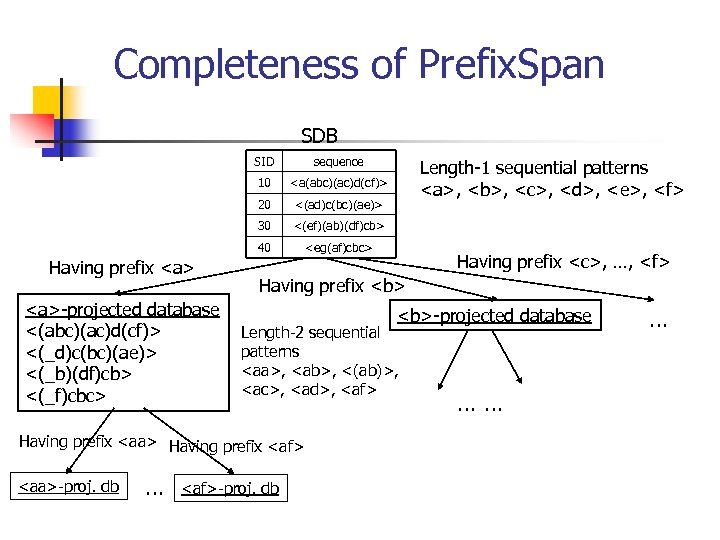
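
The projection step for a length-1 prefix can be sketched in three moves. First, locate the first element that contains the prefix item. Second, keep the items listed after it in that element as a partial element `(_...)`. Third, keep every later element. The sketch assumes single-character items sorted alphabetically within each element, and the function names are hypothetical. Applied to the four example sequences, it reproduces the `<a>`-projected database listed on this slide.

```python
from typing import List, Optional, Tuple

# A postfix is (partial, elements). When partial is True, the first element holds the
# items that shared an element with the prefix item; the slides print it as (_...).
Postfix = Tuple[bool, List[str]]


def parse(text: str) -> List[str]:
    """'a(abc)(ac)d(cf)' -> ['a', 'abc', 'ac', 'd', 'cf']; single-letter items, kept sorted."""
    out: List[str] = []
    i = 0
    while i < len(text):
        if text[i] == "(":
            j = text.index(")", i)
            out.append("".join(sorted(text[i + 1:j])))
            i = j + 1
        else:
            out.append(text[i])
            i += 1
    return out


def project(seq: List[str], item: str) -> Optional[Postfix]:
    """Postfix of seq with respect to the length-1 prefix <item>, or None if item is absent."""
    for i, element in enumerate(seq):
        if item in element:
            # Items ordered after `item` in the same element form the partial element.
            rest = element[element.index(item) + 1:]
            if rest:
                return True, [rest] + seq[i + 1:]
            return False, seq[i + 1:]
    return None


def show(postfix: Postfix) -> str:
    """Render a postfix in slide notation, e.g. '<(_d)c(bc)(ae)>'."""
    partial, elements = postfix
    parts = []
    for k, e in enumerate(elements):
        if k == 0 and partial:
            parts.append("(_" + e + ")")
        else:
            parts.append(e if len(e) == 1 else "(" + e + ")")
    return "<" + "".join(parts) + ">"


SDB = [parse(s) for s in ("a(abc)(ac)d(cf)", "(ad)c(bc)(ae)", "(ef)(ab)(df)cb", "eg(af)cbc")]
a_projected = [show(p) for s in SDB if (p := project(s, "a")) is not None]
print(a_projected)
# ['<(abc)(ac)d(cf)>', '<(_d)c(bc)(ae)>', '<(_b)(df)cb>', '<(_f)cbc>']
```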

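Completeness of PrefixSpan

SDB:

| SID | Sequence |
|---|---|
| 10 | `<a(abc)(ac)d(cf)>` |
| 20 | `<(ad)c(bc)(ae)>` |
| 30 | `<(ef)(ab)(df)cb>` |
| 40 | `<eg(af)cbc>` |

`<a>`-projected database: `<(abc)(ac)d(cf)>`, `<(_d)c(bc)(ae)>`, `<(_b)(df)cb>`, `<(_f)cbc>`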
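
Completeness follows from the recursion. Every pattern starts with some frequent item and is found inside that item's projected database, and so on down the levels. Below is a compact PrefixSpan-style sketch that assumes single-character items and `min_sup = 2`. For brevity, each projected sequence is stored as a (sequence id, element index) pointer instead of a physical copy. A prefix grows in one of two ways: by appending a new element, or by adding a larger item to its last element. All names are hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, FrozenSet, List, Tuple

Element = FrozenSet[str]
Pattern = Tuple[Element, ...]
Projected = List[Tuple[int, int]]  # (sequence id, element index where the prefix ends)


def parse(text: str) -> List[Element]:
    """Slide notation -> list of itemsets; assumes single-character items."""
    out: List[Element] = []
    i = 0
    while i < len(text):
        if text[i] == "(":
            j = text.index(")", i)
            out.append(frozenset(text[i + 1:j]))
            i = j + 1
        else:
            out.append(frozenset(text[i]))
            i += 1
    return out


def prefixspan(db: List[List[Element]], min_sup: int) -> Dict[Pattern, int]:
    """Return every sequential pattern with support >= min_sup, mapped to its support."""
    results: Dict[Pattern, int] = {}

    def grow(prefix: Pattern, projected: Projected) -> None:
        last, top = prefix[-1], max(prefix[-1])
        seq_ext: DefaultDict[str, Projected] = defaultdict(list)  # <prefix x>
        set_ext: DefaultDict[str, Projected] = defaultdict(list)  # last element + {x}
        for sid, pos in projected:
            seq = db[sid]
            seen_seq, seen_set = set(), set()
            for j in range(pos, len(seq)):
                element = seq[j]
                if j > pos:  # any later element can start a new pattern element
                    for x in element - seen_seq:
                        seen_seq.add(x)
                        seq_ext[x].append((sid, j))
                if last <= element:  # element holds the whole last element: itemset extension
                    for x in element - seen_set:
                        if x > top:
                            seen_set.add(x)
                            set_ext[x].append((sid, j))
        for x, proj in sorted(set_ext.items()):
            if len(proj) >= min_sup:
                new = prefix[:-1] + (last | {x},)
                results[new] = len(proj)
                grow(new, proj)
        for x, proj in sorted(seq_ext.items()):
            if len(proj) >= min_sup:
                new = prefix + (frozenset({x}),)
                results[new] = len(proj)
                grow(new, proj)

    first: DefaultDict[str, Projected] = defaultdict(list)  # earliest element per item
    for sid, seq in enumerate(db):
        seen: set = set()
        for j, element in enumerate(seq):
            for x in element - seen:
                seen.add(x)
                first[x].append((sid, j))
    for x, proj in sorted(first.items()):
        if len(proj) >= min_sup:
            results[(frozenset({x}),)] = len(proj)
            grow((frozenset({x}),), proj)
    return results


SDB = [parse(s) for s in ("a(abc)(ac)d(cf)", "(ad)c(bc)(ae)", "(ef)(ab)(df)cb", "eg(af)cbc")]
patterns = prefixspan(SDB, min_sup=2)
print(len(patterns))                    # 53
print(patterns[tuple(parse("(ab)c"))])  # 2
```

With this database and threshold the sketch finds 53 patterns, which matches the count quoted in the bi-level projection example.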

Efficiency of PrefixSpan

- No candidate sequence needs to be generated
- Projected databases keep shrinking
- Major cost of PrefixSpan: constructing projected databases
  - Can be improved by bi-level projections

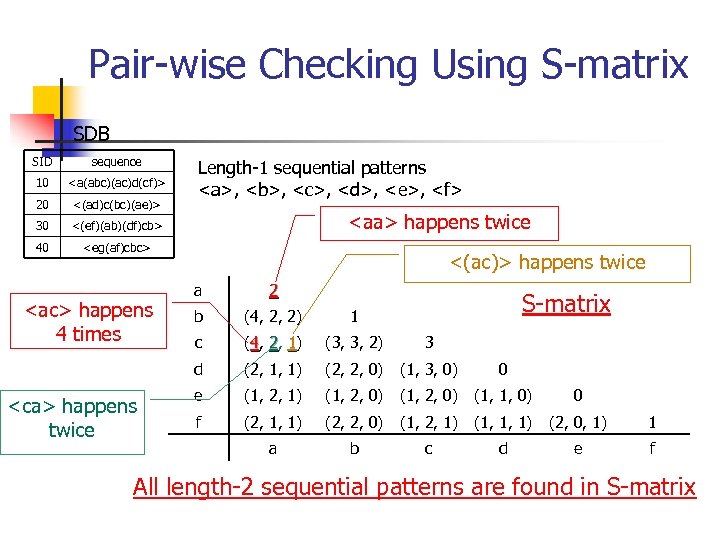

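Pair-wise Checking Using S-matrix

SDB:

| SID | Sequence |
|---|---|
| 10 | `<a(abc)(ac)d(cf)>` |
| 20 | `<(ad)c(bc)(ae)>` |
| 30 | `<(ef)(ab)(df)cb>` |
| 40 | `<eg(af)cbc>` |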
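
An S-matrix records, for each pair of items i and j, how many sequences support `<ij>`, `<ji>`, and `<(ij)>`. One pass therefore checks every length-2 pattern. The sketch below uses an assumed layout that is not necessarily the one used in PrefixSpan: a dictionary keyed by item pairs, where each diagonal entry holds the count for `<ii>`. It relies on a simple test: a sequence supports `<ij>` exactly when the first occurrence of i comes before the last occurrence of j. Items are assumed to be single characters.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List, Tuple

Element = FrozenSet[str]


def parse(text: str) -> List[Element]:
    """Slide notation -> list of itemsets; assumes single-character items."""
    out: List[Element] = []
    i = 0
    while i < len(text):
        if text[i] == "(":
            j = text.index(")", i)
            out.append(frozenset(text[i + 1:j]))
            i = j + 1
        else:
            out.append(frozenset(text[i]))
            i += 1
    return out


def s_matrix(db: List[List[Element]], items: List[str]) -> Dict[Tuple[str, str], Tuple[int, ...]]:
    """(i, j) with i < j maps to the support counts of (<ij>, <ji>, <(ij)>);
    (i, i) maps to a 1-tuple with the support count of <ii>."""
    # For every sequence, the element indices at which each item occurs.
    positions = [{x: [k for k, e in enumerate(seq) if x in e] for x in items} for seq in db]
    matrix: Dict[Tuple[str, str], Tuple[int, ...]] = {}
    for i in items:
        matrix[(i, i)] = (sum(len(p[i]) >= 2 for p in positions),)
    for i, j in combinations(sorted(items), 2):
        ij = ji = both = 0
        for seq, p in zip(db, positions):
            if p[i] and p[j]:
                ij += p[i][0] < p[j][-1]  # some i strictly before some j
                ji += p[j][0] < p[i][-1]  # some j strictly before some i
                both += any(i in e and j in e for e in seq)
        matrix[(i, j)] = (ij, ji, both)
    return matrix


SDB = [parse(s) for s in ("a(abc)(ac)d(cf)", "(ad)c(bc)(ae)", "(ef)(ab)(df)cb", "eg(af)cbc")]
m = s_matrix(SDB, list("abcdef"))
print(m[("a", "b")])  # (4, 2, 2)
print(m[("a", "c")])  # (4, 2, 1)
```

With a threshold such as `min_sup = 2`, every entry that meets the threshold names a frequent length-2 pattern, and each such pattern can then seed a bi-level projection.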

Scaling-up by Bi-level Projection

- Partition the search space based on length-2 sequential patterns
- Only form projected databases and pursue recursive mining over bi-level projected databases

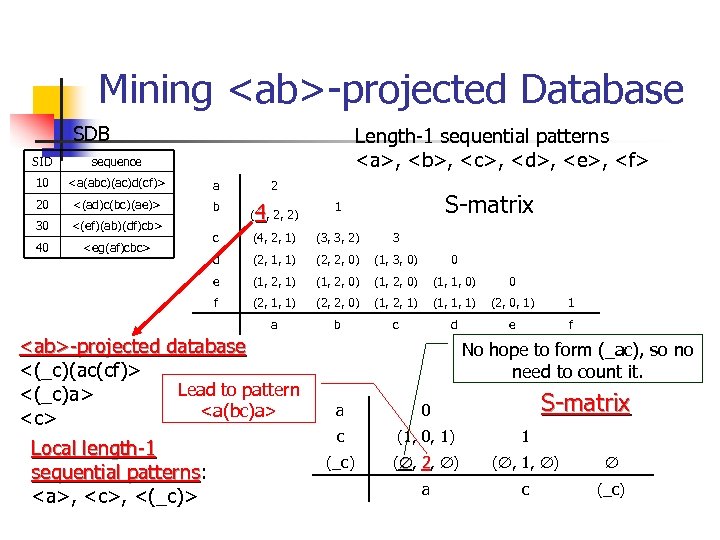

Mining

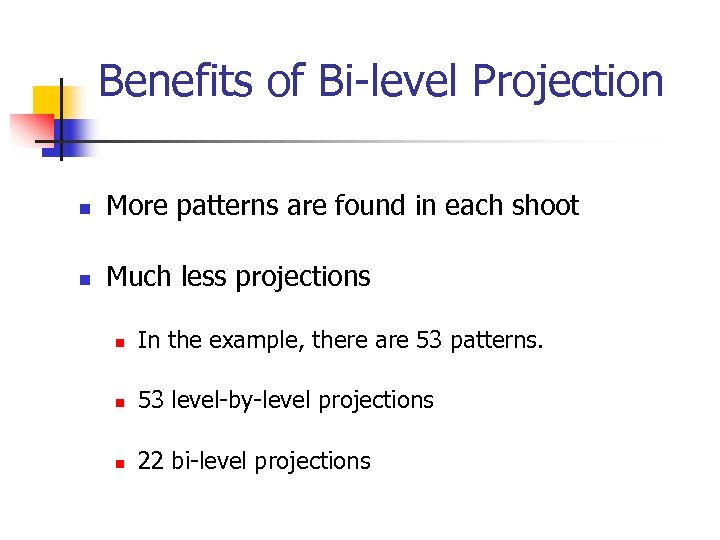

Benefits of Bi-level Projection

- More patterns are found in each round
- Far fewer projections
  - In the example, there are 53 patterns
  - 53 level-by-level projections
  - 22 bi-level projections

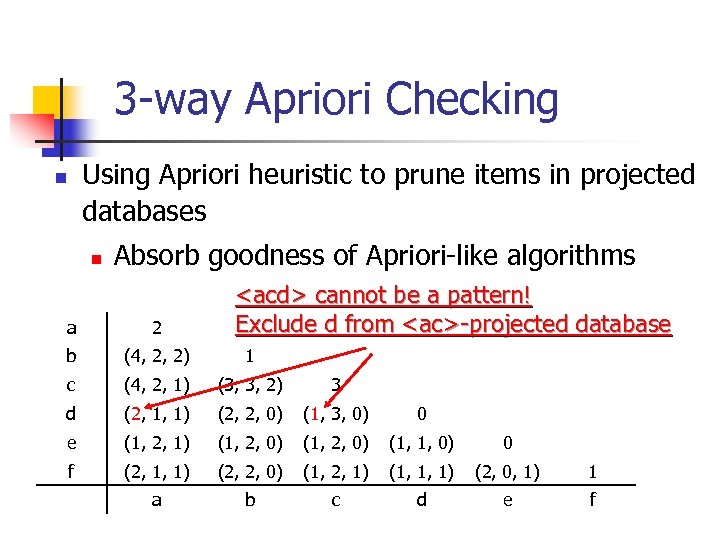

3-way Apriori Checking

- Use the Apriori heuristic to prune items in projected databases
- Inherits the strengths of Apriori-like algorithms

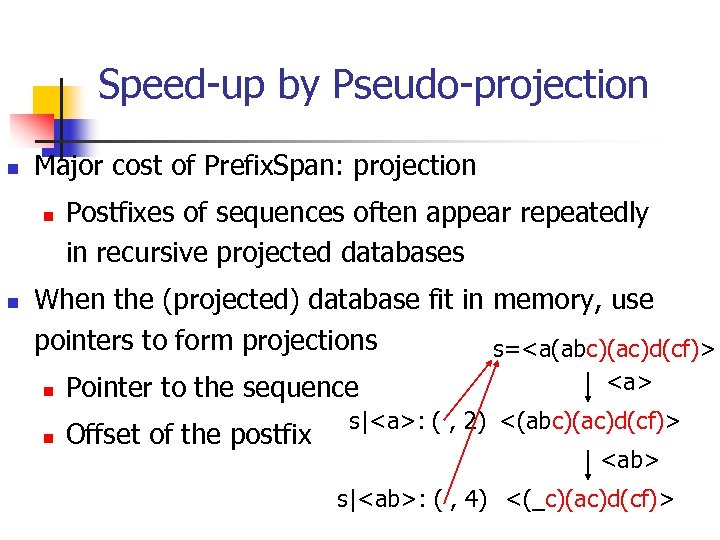
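
One reading of 3-way Apriori checking works as follows. Inside the `<xy>`-projected database, an item z that occurs after y can appear in a frequent pattern with prefix `<xy>` only if both `<xz>` and `<yz>` are frequent. Any other item can be deleted before recursing. The sketch assumes that frequent length-2 patterns are given as two-letter strings and that postfix elements are strings of items; partial `(_...)` elements are not handled. `three_way_prune` is a hypothetical name.

```python
from typing import List, Set


def three_way_prune(postfixes: List[List[str]], x: str, y: str,
                    frequent2: Set[str]) -> List[List[str]]:
    """Prune the <xy>-projected database: item z survives only if <xz> and <yz>
    are both frequent length-2 patterns (together with <xy>, the three 'ways')."""

    def keep(z: str) -> bool:
        return x + z in frequent2 and y + z in frequent2

    pruned = []
    for postfix in postfixes:
        elements = ["".join(z for z in e if keep(z)) for e in postfix]
        pruned.append([e for e in elements if e])  # drop elements that became empty
    return pruned


# Hypothetical <ab>-projected postfixes and frequent length-2 patterns.
print(three_way_prune([["c", "bd"], ["dc"]], "a", "b", {"ab", "ac", "bc", "ad"}))
# [['c'], ['c']]
```

Here `d` is removed because `<bd>` is not frequent, even though `<ad>` is.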

Speed-up by Pseudo-projection

- Major cost of PrefixSpan: projection
  - Postfixes of sequences often appear repeatedly in recursive projected databases
- When the (projected) database fits in memory, use pointers to form projections

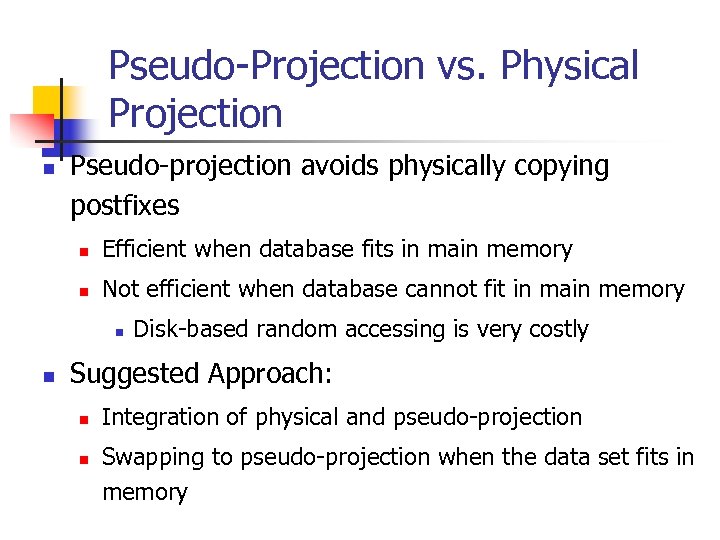
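
Pseudo-projection can be sketched as one pointer per sequence. The pointer records the sequence id, the element where the prefix's last item matched, and that item's offset within the element. Projecting again only moves the pointer, so nothing is copied. The `Pointer` type and the helper names are hypothetical. Items are assumed to be single characters, and only sequence extensions (a new element) are shown.

```python
from typing import List, NamedTuple, Optional


class Pointer(NamedTuple):
    """A pseudo-projection entry: where the prefix's last item matched, not a copy."""
    sid: int   # index of the sequence in the in-memory database
    elem: int  # index of the element containing the matched item
    item: int  # offset of the matched item inside that (sorted) element string


def parse(text: str) -> List[str]:
    """'a(abc)(ac)d(cf)' -> ['a', 'abc', 'ac', 'd', 'cf']; single-letter items, kept sorted."""
    out: List[str] = []
    i = 0
    while i < len(text):
        if text[i] == "(":
            j = text.index(")", i)
            out.append("".join(sorted(text[i + 1:j])))
            i = j + 1
        else:
            out.append(text[i])
            i += 1
    return out


def locate(db: List[List[str]], sid: int, after_elem: int, x: str) -> Optional[Pointer]:
    """Pointer to the first element after `after_elem` that contains item x."""
    for k in range(after_elem + 1, len(db[sid])):
        offset = db[sid][k].find(x)
        if offset >= 0:
            return Pointer(sid, k, offset)
    return None


def materialize(db: List[List[str]], p: Pointer) -> str:
    """Rebuild the physical postfix a pointer stands for (only needed for display)."""
    seq = db[p.sid]
    rest = seq[p.elem][p.item + 1:]
    parts = ["(_" + rest + ")"] if rest else []
    parts += [e if len(e) == 1 else "(" + e + ")" for e in seq[p.elem + 1:]]
    return "<" + "".join(parts) + ">"


SDB = [parse(s) for s in ("a(abc)(ac)d(cf)", "(ad)c(bc)(ae)", "(ef)(ab)(df)cb", "eg(af)cbc")]
a_ptrs = [p for sid in range(len(SDB)) if (p := locate(SDB, sid, -1, "a")) is not None]
ab_ptr = locate(SDB, 0, a_ptrs[0].elem, "b")  # extend <a> to <ab> within sequence 10
print([materialize(SDB, p) for p in a_ptrs])
# ['<(abc)(ac)d(cf)>', '<(_d)c(bc)(ae)>', '<(_b)(df)cb>', '<(_f)cbc>']
print(materialize(SDB, ab_ptr))  # <(_c)(ac)d(cf)>
```

Each pointer is three integers, whatever the postfix length. This is why the approach pays off when the database is in memory but not when every dereference is a random disk access.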

Pseudo-Projection vs. Physical Projection

- Pseudo-projection avoids physically copying postfixes
  - Efficient when the database fits in main memory
  - Not efficient when the database cannot fit in main memory
    - Disk-based random access is very costly
- Suggested approach:
  - Integrate physical and pseudo-projection
  - Switch to pseudo-projection once the data set fits in memory

Seeing is Believing: Experiments and Performance Analysis

- Comparing PrefixSpan with GSP and FreeSpan in large databases
  - GSP (IBM Almaden, Srikant & Agrawal, EDBT'96)
  - FreeSpan (J. Han, J. Pei, B. Mortazavi-Asl, Q. Chen, U. Dayal, M.-C. Hsu, KDD'00)
  - PrefixSpan-1 (single-level projection)
  - PrefixSpan-2 (bi-level projection)
- Comparing effects of pseudo-projection
- Comparing I/O cost and scalability

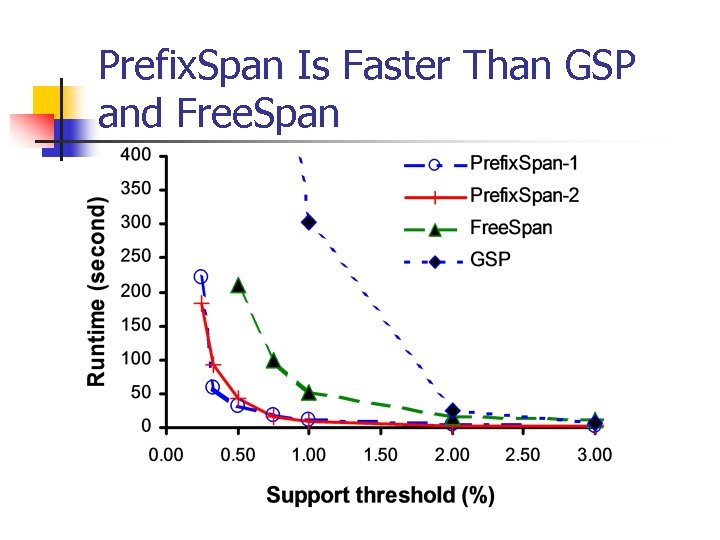

PrefixSpan Is Faster Than GSP and FreeSpan

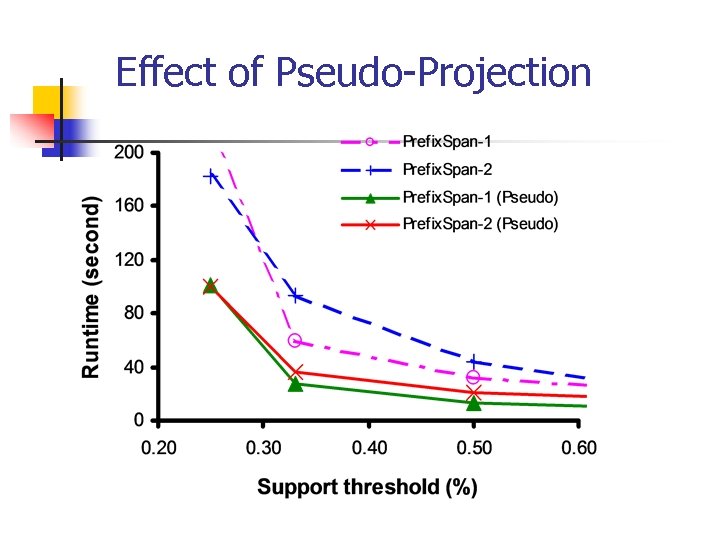

Effect of Pseudo-Projection

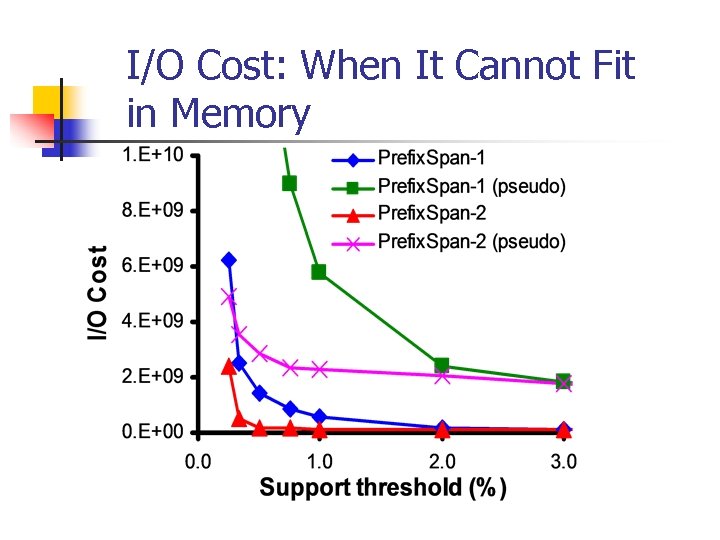

I/O Cost: When It Cannot Fit in Memory

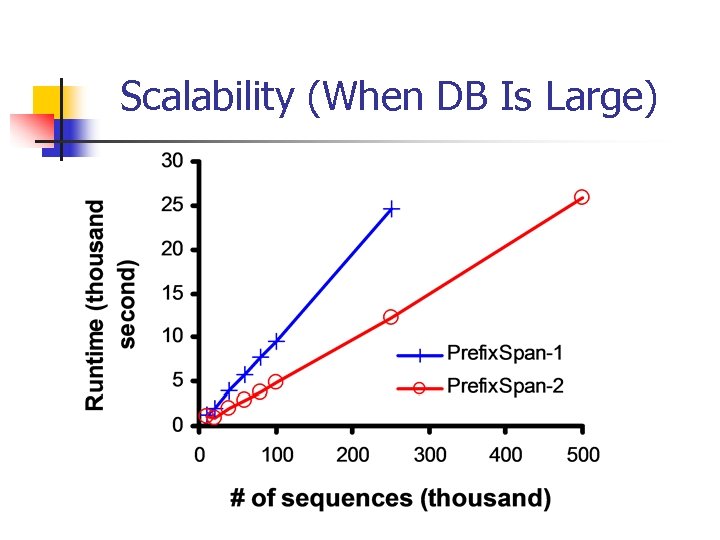

Scalability (When DB Is Large)

Major Features of PrefixSpan

- Both PrefixSpan and FreeSpan are pattern-growth methods
  - Searches are more focused and thus more efficient
- Prefix-projected pattern growth (PrefixSpan) is more elegant than frequent pattern-guided projection (FreeSpan)
- The Apriori heuristic is integrated into bi-level projection PrefixSpan
- Pseudo-projection substantially enhances the performance of memory-based processing

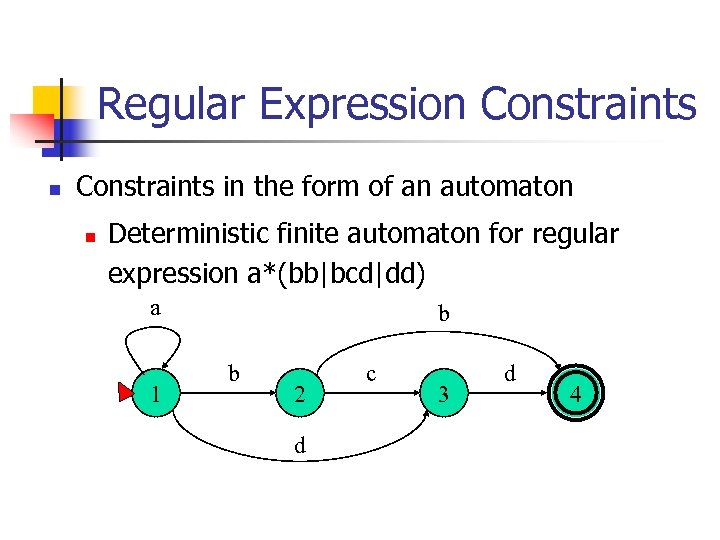

Regular Expression Constraints in the form of an automaton

- Deterministic finite automaton for the regular expression a*(bb|bcd|dd)
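
One possible DFA for `a*(bb|bcd|dd)` is sketched below as a transition table. The state names are hypothetical and need not match the numbering in the figure, and missing transitions stand for an implicit dead state. As a sanity check, the DFA is compared against Python's `re` module on every word over {a, b, c, d} up to a given length.

```python
import re
from itertools import product
from typing import Dict, Optional, Tuple

# Hypothetical state names for a hand-built DFA of a*(bb|bcd|dd).
START, AFTER_B, AFTER_BC, AFTER_D, ACCEPT = range(5)
DELTA: Dict[Tuple[int, str], int] = {
    (START, "a"): START,      # a* loops on the start state
    (START, "b"): AFTER_B,    # first symbol shared by bb and bcd
    (START, "d"): AFTER_D,
    (AFTER_B, "b"): ACCEPT,   # bb
    (AFTER_B, "c"): AFTER_BC,
    (AFTER_BC, "d"): ACCEPT,  # bcd
    (AFTER_D, "d"): ACCEPT,   # dd
}


def run(word: str) -> Optional[int]:
    """Final state after reading word, or None once no transition exists (dead state)."""
    state: Optional[int] = START
    for symbol in word:
        state = DELTA.get((state, symbol))
        if state is None:
            return None
    return state


def accepts(word: str) -> bool:
    return run(word) == ACCEPT


def agrees_with_re(max_len: int) -> bool:
    """Cross-check the DFA against Python's re module on every word over {a,b,c,d}."""
    pattern = re.compile(r"a*(bb|bcd|dd)")
    return all(
        accepts("".join(w)) == (pattern.fullmatch("".join(w)) is not None)
        for n in range(max_len + 1)
        for w in product("abcd", repeat=n)
    )


print(accepts("aabb"), accepts("bcd"), accepts("abd"))  # True True False
print(agrees_with_re(6))  # True
```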

PrefixSpan for Constrained Mining

- Any prefix failing an RE constraint cannot lead to a valid pattern
  - Prune invalid patterns immediately
- Only grow prefixes that satisfy an RE constraint
- Only project items in the remainder of the RE
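
The pruning rule amounts to pattern growth that carries a DFA state along with each prefix and stops as soon as the next item has no transition. The sketch below makes several simplifying assumptions. Patterns have one item per element. It reuses a hand-built DFA for `a*(bb|bcd|dd)` with hypothetical state names. It runs on a made-up toy database with `min_sup = 2`. It illustrates the idea and is not the constrained algorithm described in the deck.

```python
from typing import Dict, List, Tuple

# Hypothetical DFA for a*(bb|bcd|dd). Every state listed here can still reach ACCEPT,
# so "has a transition" means "the prefix can still become a valid pattern".
START, AFTER_B, AFTER_BC, AFTER_D, ACCEPT = range(5)
DELTA: Dict[Tuple[int, str], int] = {
    (START, "a"): START, (START, "b"): AFTER_B, (START, "d"): AFTER_D,
    (AFTER_B, "b"): ACCEPT, (AFTER_B, "c"): AFTER_BC,
    (AFTER_BC, "d"): ACCEPT, (AFTER_D, "d"): ACCEPT,
}


def constrained_growth(db: List[str], min_sup: int) -> Dict[str, int]:
    """Pattern growth over sequences of single items, pruning any prefix the DFA rejects."""
    results: Dict[str, int] = {}

    def grow(prefix: str, state: int, projected: List[Tuple[int, int]]) -> None:
        # projected holds (sequence id, index right after the prefix's earliest match)
        counts: Dict[str, List[Tuple[int, int]]] = {}
        for sid, start in projected:
            seen = set()
            for k in range(start, len(db[sid])):
                x = db[sid][k]
                if x not in seen:  # count each item once per sequence
                    seen.add(x)
                    counts.setdefault(x, []).append((sid, k + 1))
        for x, proj in sorted(counts.items()):
            nxt = DELTA.get((state, x))
            if nxt is None or len(proj) < min_sup:
                continue  # prefix violates the RE constraint, or is infrequent
            if nxt == ACCEPT:
                results[prefix + x] = len(proj)
            grow(prefix + x, nxt, proj)

    grow("", START, [(sid, 0) for sid in range(len(db))])
    return results


# Hypothetical toy database; each string is a sequence of single-item elements.
toy = ["abb", "aabb", "bcd", "abcd"]
print(constrained_growth(toy, min_sup=2))  # {'abb': 2, 'bb': 2, 'bcd': 2}
```

Prefixes such as `<ac>` or `<db>` are never projected because the DFA has no matching transition. This mirrors the "prune invalid prefixes immediately" rule.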

Conclusions

- PrefixSpan: an efficient sequential pattern mining method
- General idea: examine only the prefixes and project only their corresponding postfixes
- Two kinds of projections: level-by-level and bi-level
- Pseudo-projection
- Extending PrefixSpan to mine with RE constraints
  - Prune invalid prefixes immediately

References (1)

- R. Agrawal and R. Srikant. Fast algorithms for mining association rules. VLDB'94, pages 487-499.
- R. Agrawal and R. Srikant. Mining sequential patterns. ICDE'95, pages 3-14.
- C. Bettini, X. S. Wang, and S. Jajodia. Mining temporal relationships with multiple granularities in time sequences. Data Engineering Bulletin, 21:32-38, 1998.
- M. Garofalakis, R. Rastogi, and K. Shim. SPIRIT: Sequential pattern mining with regular expression constraints. VLDB'99, pages 223-234.
- J. Han, G. Dong, and Y. Yin. Efficient mining of partial periodic patterns in time series database. ICDE'99, pages 106-115.
- J. Han, J. Pei, B. Mortazavi-Asl, Q. Chen, U. Dayal, and M.-C. Hsu. FreeSpan: Frequent pattern-projected sequential pattern mining. KDD'00, pages 355-359.

References (2)

- J. Han, J. Pei, and Y. Yin. Mining frequent patterns without candidate generation. SIGMOD'00, pages 1-12.
- H. Lu, J. Han, and L. Feng. Stock movement and n-dimensional inter-transaction association rules. DMKD'98, pages 12:1-12:7.
- H. Mannila, H. Toivonen, and A. I. Verkamo. Discovery of frequent episodes in event sequences. Data Mining and Knowledge Discovery, 1:259-289, 1997.
- B. Özden, S. Ramaswamy, and A. Silberschatz. Cyclic association rules. ICDE'98, pages 412-421.
- J. Pei, J. Han, B. Mortazavi-Asl, H. Pinto, Q. Chen, U. Dayal, and M.-C. Hsu. PrefixSpan: Mining sequential patterns efficiently by prefix-projected pattern growth. ICDE'01, pages 215-224.
- R. Srikant and R. Agrawal. Mining sequential patterns: Generalizations and performance improvements. EDBT'96, pages 3-17.