4539fc6d70918b2752a5383f07e94b00.ppt

- Number of slides: 13

Parsing in Multiple Languages
Dan Bikel
University of Pennsylvania
August 22nd, 2003

Problems with many parser designs
• Many parsing models are largely language-independent, but their implementations do not have a layer of abstraction for language-specific information
• Most generative parsers do not have an easy method for experimenting with different parameterizations and backoff levels
• Most parsers need a large database of smoothed probability estimates, but this database is often tightly coupled with the decoder
  – Parallel and/or distributed computing cannot easily be exploited
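The second and third points concern smoothed estimates computed over several backoff levels. As a minimal sketch, assuming a Witten-Bell-style interpolation weight λ = c / (c + F·u) (c = count of the context, u = number of distinct outcomes seen with it, F = 5 an assumed constant), the Python below interpolates maximum-likelihood estimates from the coarsest backoff level up to the most specific one. `BackoffEstimator` and its methods are hypothetical names, not the parsing engine's API. Because the estimator owns its counts and knows nothing about decoding, it could in principle sit behind a separate server interface.

```python
from collections import Counter, defaultdict

# Assumed constant in the Witten-Bell-style weight lambda = c / (c + F * u).
F = 5.0


class BackoffEstimator:
    """Hypothetical sketch: smoothed P(outcome | context) over backoff levels.

    Each event is observed with one context per level, most specific first;
    the last context is the coarsest backoff level."""

    def __init__(self, num_levels):
        self.joint = [Counter() for _ in range(num_levels)]            # (context, outcome) counts
        self.totals = [Counter() for _ in range(num_levels)]           # context counts
        self.outcomes = [defaultdict(set) for _ in range(num_levels)]  # distinct outcomes per context

    def observe(self, contexts, outcome):
        for level, ctx in enumerate(contexts):
            self.joint[level][(ctx, outcome)] += 1
            self.totals[level][ctx] += 1
            self.outcomes[level][ctx].add(outcome)

    def prob(self, contexts, outcome):
        coarsest = len(contexts) - 1
        estimate = 0.0
        # Walk from the coarsest level toward the most specific one.
        for level in range(coarsest, -1, -1):
            ctx = contexts[level]
            c = self.totals[level][ctx]
            if c == 0:
                continue  # unseen context: keep the coarser estimate as is
            ml = self.joint[level][(ctx, outcome)] / c
            if level == coarsest:
                estimate = ml  # the coarsest level is used unsmoothed here
                continue
            u = len(self.outcomes[level][ctx])
            lam = c / (c + F * u)
            estimate = lam * ml + (1 - lam) * estimate
        return estimate


# Hypothetical events: head-child label given (parent, head word), then (parent,).
est = BackoffEstimator(2)
for label in ["VP", "VP", "NP"]:
    est.observe([("S", "saw"), ("S",)], label)
est.observe([("S", "ran"), ("S",)], "VP")
print(est.prob([("S", "saw"), ("S",)], "VP"))  # about 0.731, i.e. 9.5 / 13
```

Changing the number of levels or the weight formula only touches this class, which is the kind of parameterization experiment the slide says most parsers make hard.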

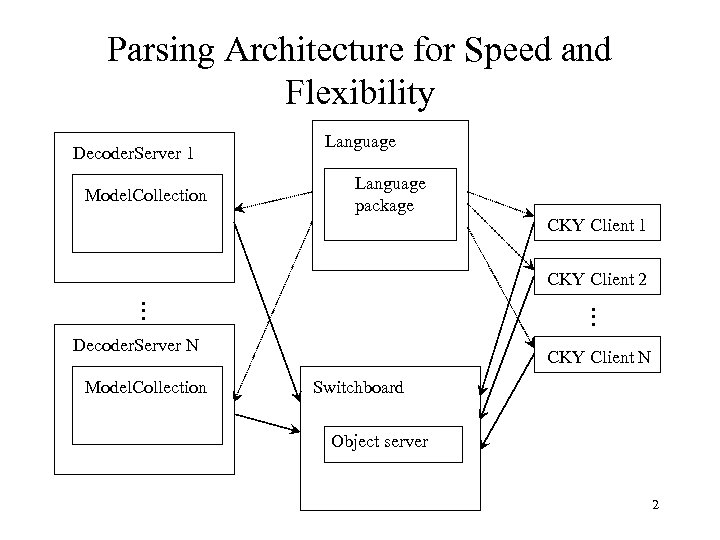

Parsing Architecture for Speed and Flexibility
[Figure: DecoderServer 1 … DecoderServer N, each with a ModelCollection; Language package; CKY Client 1 … CKY Client N; Switchboard; Object server]

Architecture for Parsing II
• Highly parallel, multi-threaded
  – Can take advantage of, e.g., a clustered computing environment
• Fully fault-tolerant
• Significant flexibility: layers of abstraction
• Optimized for speed
• Highly portable to new domains, including new languages
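The switchboard-and-clients arrangement and the fault-tolerance claim can be illustrated with a small sketch. Here Python threads stand in for networked CKY clients, a client "fails" by raising an exception, and the switchboard hands the failed sentence to a surviving client. The `Switchboard` class, its methods and the toy clients are hypothetical; the sketch does not reproduce the engine's actual interfaces or its decoder servers.

```python
import queue
import threading


class Switchboard:
    """Hypothetical sketch: distributes sentences to parsing clients and
    re-queues any sentence whose client fails."""

    def __init__(self, sentences):
        self.todo = queue.Queue()
        for item in enumerate(sentences):
            self.todo.put(item)           # (index, sentence)
        self.count = len(sentences)
        self.results = {}
        self.lock = threading.Lock()

    def _serve(self, client):
        while True:
            item = self.todo.get()
            if item is None:              # shutdown sentinel
                self.todo.task_done()
                return
            index, sentence = item
            try:
                parse = client(sentence)
            except Exception:
                self.todo.put(item)       # let a surviving client retry it
                self.todo.task_done()
                return                    # treat this client as dead
            with self.lock:
                self.results[index] = parse
            self.todo.task_done()

    def run(self, clients):
        # Assumes at least one client keeps working; otherwise join() never returns.
        for client in clients:
            threading.Thread(target=self._serve, args=(client,), daemon=True).start()
        self.todo.join()                  # every sentence has been parsed
        for _ in clients:
            self.todo.put(None)           # release threads still waiting on get()
        return [self.results[i] for i in range(self.count)]


def good_client(sentence):
    return "(S " + sentence + ")"         # stands in for a real CKY parse


def flaky_client(sentence):
    raise ConnectionError("client crashed")


print(Switchboard(["a b", "c d", "e f"]).run([flaky_client, good_client]))
# prints ['(S a b)', '(S c d)', '(S e f)']
```

A failed sentence is put back on the queue before its `task_done()` call, so `join()` cannot return while any sentence is still unparsed.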

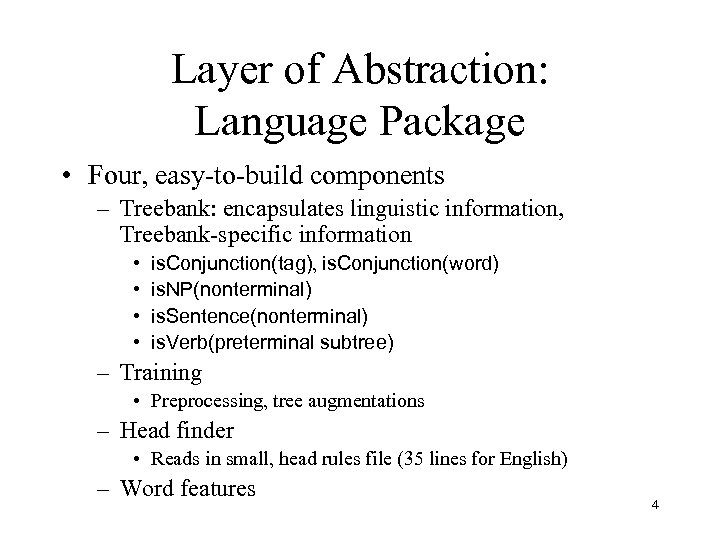

Layer of Abstraction: Language Package
• Four easy-to-build components
  – Treebank: encapsulates linguistic and Treebank-specific information
    • isConjunction(tag), isConjunction(word)
    • isNP(nonterminal)
    • isSentence(nonterminal)
    • isVerb(preterminal subtree)
  – Training
    • Preprocessing, tree augmentations
  – Head finder
    • Reads in a small head-rules file (35 lines for English)
  – Word features
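To make the Treebank and head-finder components concrete, here is a minimal Python sketch of what an English package might supply. The class, function names, the four-line rule table and its one-line-per-parent format are all hypothetical; the real English head rules are longer and use the engine's own file syntax. The search strategy (try categories in priority order, scanning children in the rule's direction, else fall back to the first child) is one common head-table scheme, assumed here.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Tree:
    label: str
    children: List["Tree"] = field(default_factory=list)
    word: Optional[str] = None            # set only on preterminals


def base(label):
    """Strip Penn Treebank function tags, e.g. NP-SBJ -> NP (but keep -NONE-)."""
    return label if label.startswith("-") else label.split("-")[0]


class EnglishTreebank:
    """Hypothetical Treebank component: the parser asks these questions
    instead of hard-coding English category names."""

    def is_conjunction_tag(self, tag):
        return tag == "CC"

    def is_conjunction_word(self, word):
        return word.lower() in {"and", "or", "but", "nor"}

    def is_np(self, label):
        return base(label) == "NP"

    def is_sentence(self, label):
        return base(label) == "S"

    def is_verb(self, tree):
        return tree.word is not None and tree.label.startswith("VB")


# Toy head rules: parent, search direction, then categories in priority order.
HEAD_RULES = """
S   left   VP S SBAR
VP  left   VBD VBZ VBP VB MD VP
NP  right  NN NNS NNP NP
PP  left   IN TO
"""


def read_head_rules(text):
    rules = {}
    for line in text.strip().splitlines():
        parent, direction, *priorities = line.split()
        rules[parent] = (direction, priorities)
    return rules


def find_head(tree, rules):
    """Index of the head child: try each category in priority order,
    scanning children in the rule's direction; else take the first child."""
    labels = [base(child.label) for child in tree.children]
    direction, priorities = rules.get(base(tree.label), ("left", []))
    order = list(range(len(labels)))
    if direction == "right":
        order.reverse()
    for wanted in priorities:
        for i in order:
            if labels[i] == wanted:
                return i
    return order[0]


rules = read_head_rules(HEAD_RULES)
subject = Tree("NP-SBJ", [Tree("DT", word="the"), Tree("JJ", word="old"), Tree("NN", word="thing")])
print(find_head(subject, rules), EnglishTreebank().is_np(subject.label))  # prints 2 True
```

Porting to another language would, under this design, mean writing a new predicate class and a new rules table while the parsing code stays unchanged.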

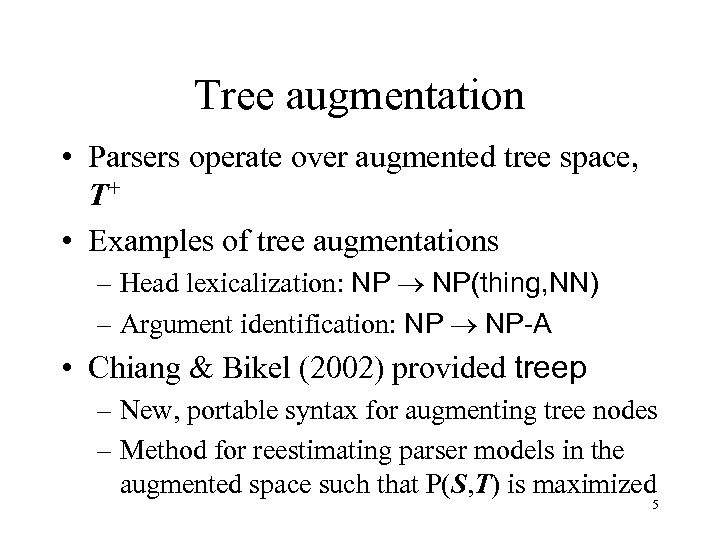

Tree augmentation
• Parsers operate over an augmented tree space, T⁺
• Examples of tree augmentations
  – Head lexicalization: NP → NP(thing, NN)
  – Argument identification: NP → NP-A
• Chiang & Bikel (2002) provided treep
  – New, portable syntax for augmenting tree nodes
  – Method for reestimating parser models in the augmented space such that P(S, T) is maximized
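The two example augmentations can be sketched as one recursive pass that percolates each node's lexical head up from its head child and marks selected NPs as arguments. This is plain Python, not treep's augmentation syntax. The `TOY_HEADS` table and the argument rule (non-head NPs under S or VP) are deliberately crude assumptions, and the function names are hypothetical.

```python
# Trees are (label, children) tuples; a preterminal is (tag, word).
# Toy head table (hypothetical): direction plus priority list per parent.
TOY_HEADS = {
    "S": ("left", ["VP", "S"]),
    "VP": ("left", ["VBD", "VBZ", "VB", "VP"]),
    "NP": ("right", ["NN", "NNS", "NP"]),
}


def head_child(label, child_labels):
    direction, priorities = TOY_HEADS.get(label, ("left", []))
    order = list(range(len(child_labels)))
    if direction == "right":
        order.reverse()
    for wanted in priorities:
        for i in order:
            if child_labels[i] == wanted:
                return i
    return order[0]


def augment(tree, is_argument=False):
    """Return (augmented tree, head word, head tag).

    Nonterminals become e.g. NP(thing, NN); NPs that are non-head children
    of S or VP are also marked as arguments, e.g. NP-A(thing, NN)."""
    label, kids = tree
    if isinstance(kids, str):                      # preterminal: (tag, word)
        return tree, kids, label
    labels = [kid[0] for kid in kids]
    h = head_child(label, labels)
    results = [
        # Toy argument rule (an assumption, far simpler than real ones).
        augment(kid, is_argument=(i != h and label in ("S", "VP") and labels[i] == "NP"))
        for i, kid in enumerate(kids)
    ]
    _, word, tag = results[h]                      # percolate the head child's lexical head
    new_label = (label + "-A" if is_argument else label) + f"({word}, {tag})"
    return (new_label, [r[0] for r in results]), word, tag


sentence = ("S", [("NP", [("DT", "the"), ("NN", "dog")]),
                  ("VP", [("VBD", "saw"), ("NP", [("DT", "the"), ("NN", "thing")])])])
augmented, _, _ = augment(sentence)
print(augmented[0], augmented[1][0][0], augmented[1][1][1][1][0])
# prints S(saw, VBD) NP-A(dog, NN) NP-A(thing, NN)
```

A model trained on such trees works in the augmented space T⁺; mapping its output back to ordinary Treebank trees just strips the added annotations.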

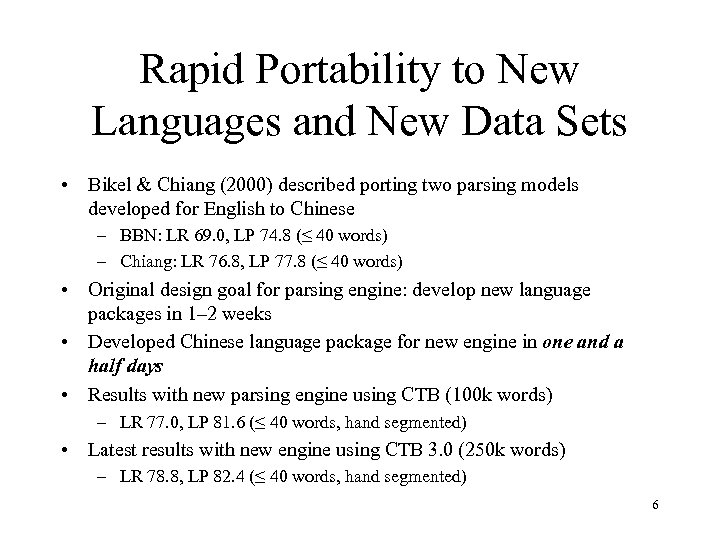

Rapid Portability to New Languages and New Data Sets
• Bikel & Chiang (2000) described porting two parsing models developed for English to Chinese
  – BBN: LR 69.0, LP 74.8 (≤ 40 words)
  – Chiang: LR 76.8, LP 77.8 (≤ 40 words)
• Original design goal for the parsing engine: develop new language packages in 1–2 weeks
• Developed the Chinese language package for the new engine in one and a half days
• Results with the new parsing engine using CTB (100k words)
  – LR 77.0, LP 81.6 (≤ 40 words, hand-segmented)
• Latest results with the new engine using CTB 3.0 (250k words)
  – LR 78.8, LP 82.4 (≤ 40 words, hand-segmented)
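LR and LP are labeled recall and labeled precision: the share of gold-standard constituents the parser recovers with the right label and span, and the share of the parser's constituents that are correct. A minimal sketch of that computation follows, in the spirit of the standard evalb scorer but ignoring its details (such as punctuation handling, label equivalences and the sentence-length cutoff). The function names and the toy trees are hypothetical.

```python
from collections import Counter


def brackets(tree, start=0):
    """Return (multiset of (label, start, end) spans, end position).

    Trees are (label, children) tuples; a preterminal is (tag, word) and
    contributes no bracket, only one word position."""
    label, kids = tree
    if isinstance(kids, str):
        return Counter(), start + 1
    spans = Counter()
    end = start
    for kid in kids:
        sub, end = brackets(kid, end)
        spans.update(sub)
    spans[(label, start, end)] += 1
    return spans, end


def labeled_scores(gold_tree, test_tree):
    """Labeled recall, labeled precision and their harmonic mean, in percent."""
    gold, _ = brackets(gold_tree)
    test, _ = brackets(test_tree)
    matched = sum((gold & test).values())   # multiset intersection
    lr = 100.0 * matched / sum(gold.values())
    lp = 100.0 * matched / sum(test.values())
    f = 2 * lp * lr / (lp + lr) if lp + lr else 0.0
    return lr, lp, f


gold = ("S", [("NP", [("DT", "the"), ("NN", "dog")]),
              ("VP", [("VBD", "saw"), ("NP", [("DT", "the"), ("NN", "thing")])])])
test = ("S", [("NP", [("DT", "the"), ("NN", "dog")]),
              ("VP", [("VBD", "saw"), ("DT", "the"), ("NN", "thing")])])
print(labeled_scores(gold, test))  # LR = 75.0, LP = 100.0, F about 85.71
```

The flat VP in the test tree misses one of the four gold brackets (recall 3/4) but proposes nothing wrong (precision 3/3), which is why the two figures can diverge as they do in the results above.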

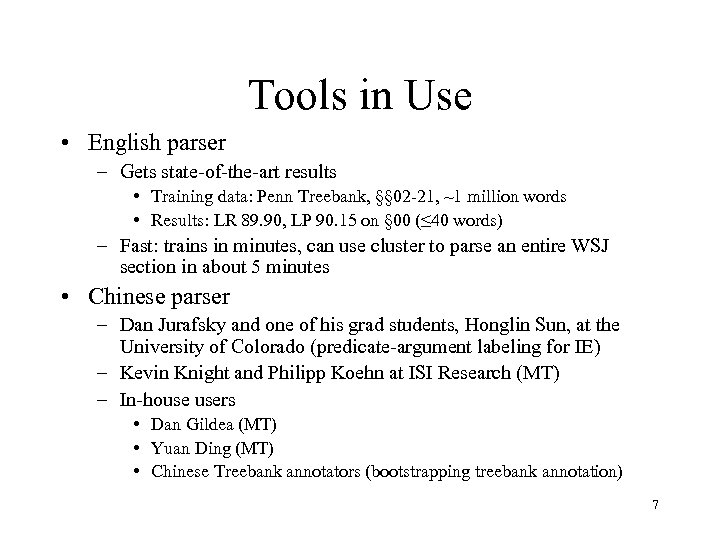

Tools in Use
• English parser
  – Gets state-of-the-art results
    • Training data: Penn Treebank, §§02–21, ~1 million words
    • Results: LR 89.90, LP 90.15 on §00 (≤ 40 words)
  – Fast: trains in minutes; can use a cluster to parse an entire WSJ section in about 5 minutes
• Chinese parser
  – Dan Jurafsky and one of his grad students, Honglin Sun, at the University of Colorado (predicate-argument labeling for IE)
  – Kevin Knight and Philipp Koehn at ISI Research (MT)
  – In-house users
    • Dan Gildea (MT)
    • Yuan Ding (MT)
    • Chinese Treebank annotators (bootstrapping treebank annotation)

Tools in Use II
• Arabic parser
  – Once representation/format issues were ironed out, porting to Arabic also proceeded rapidly
  – Encouraging preliminary results
    • Training data: 150k words
    • LR 75.6, LP 77.4 (≤ 40 words, mapped gold-standard tags)
  – Currently being used by Rebecca Hwa at the University of Maryland (MT)
  – We believe
    • there is much room for improvement after analysis of initial results, but…
    • performance is already more than good enough for bootstrapping Treebank annotation

Tools in Use III
• Classical Portuguese parser
  – Rapid development of the language package (with help from Tony Kroch)
  – Successfully used to bootstrap the treebank for the Statistical Physics, Pattern Recognition and Language Change project at the State University of Campinas, São Paulo, Brazil

Obtaining Tools and Data
• Obtaining tools
  – For my parsing engine (English, Arabic, Chinese and, soon, Korean), please contact me (dbikel@cis.upenn.edu)
  – For Penn's Chinese segmenter, contact Bert Xue (xueniwen@linc.cis.upenn.edu)
• Data
  – Treebanks and PropBanks (mpalmer@cis.upenn.edu)

Future Work
• Develop a Java version of David Chiang's and my tree augmentation program, treep, and incorporate it into the parsing engine
• Develop user-level documentation for building a new language package (in progress)
• Provide a layer of abstraction for language-specific lexical resources, such as a semantic hierarchy (in progress)
• Explore language-independent parsing model changes, to find those that yield a positive effect across all languages
  – Employ the layers of abstraction for exploring the parameter space
  – Perform "regression tests" across treebanks/languages

fin
