6c51c7aca64ad3a0c94fb4fa5447f433.ppt

- Number of slides: 44

Overview of regional center plans
Guy Wormser, HEPCCC Chairman (IN2P3, France)
- Role of regional centers
- Plans and status for each center
- Experience from the BABAR experiment
- The role of HEP-CCC
- Conclusion

March 11, 2002, Guy Wormser, LCG workshop

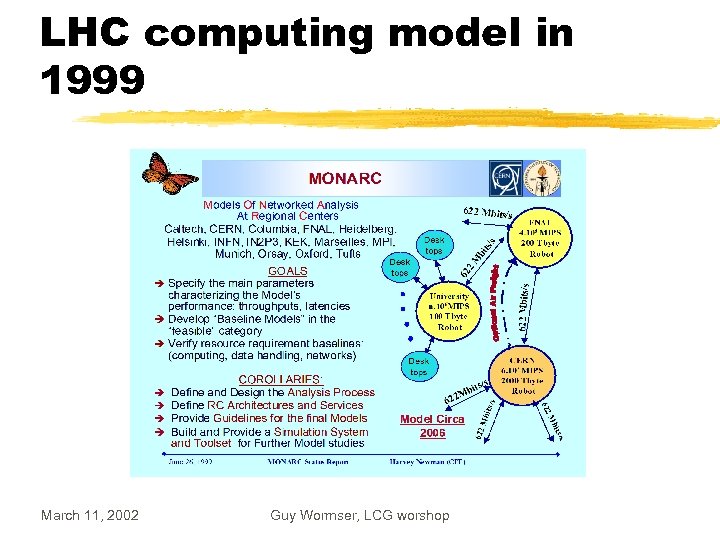

LHC computing model in 1999

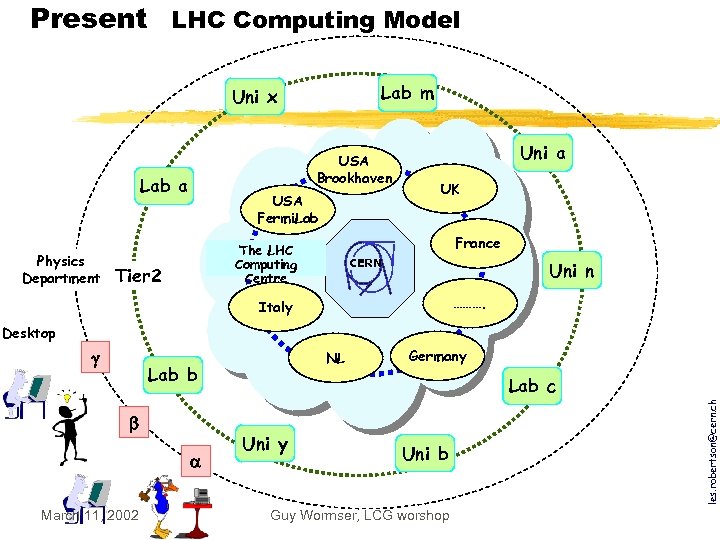

Present LHC Computing Model
[Diagram: CERN, "The LHC Computing Centre", surrounded by Tier 1 centres (USA Brookhaven, USA FermiLab, UK, France, Italy, NL, Germany), Tier 2 sites (Lab a, Lab b, Lab c, Lab m, Uni a, Uni b, Uni n, Uni x, Uni y), physics departments, and desktops. Credit: les.robertson@cern.ch]

The main differences for a Tier 1 site in the GRID era
- A Tier 1 site is linked to all other Tier 1 sites
- National Tier 2 clusters are not necessarily tied to the regional Tier 1
- A Tier 1 is very likely to welcome users from the whole collaboration using GRID mechanisms
- Much faster networks required (and available!)

The official list of regional centers
- From Monarc to GRID

Project Overview Board
- Chair: CERN Director for Scientific Computing
- Secretary: CERN IT Division Leader
- Membership:
  - Spokespersons of LHC experiments
  - CERN Director for Colliders
  - Representatives of countries/regions with a Tier-1 center: France, Germany, Italy, Japan, United Kingdom, United States of America
  - 4 representatives of countries/regions with a Tier-2 center from CERN Member States
- In attendance: Project Leader, SC2 Chairperson

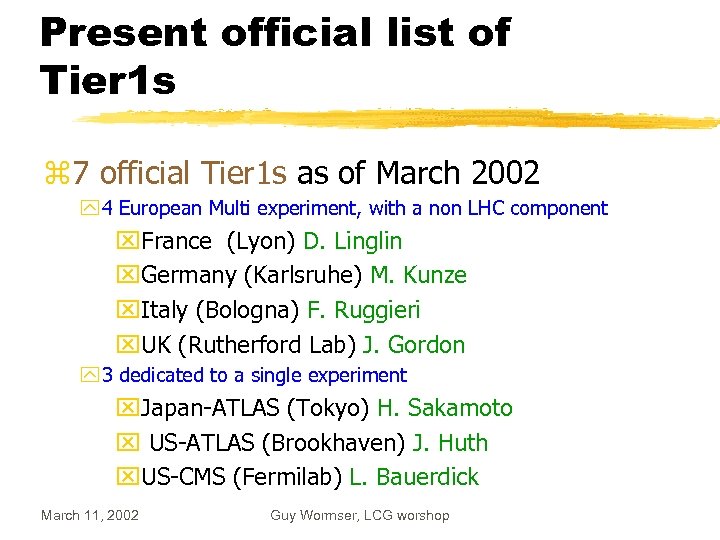

Present official list of Tier 1s
- 7 official Tier 1s as of March 2002
  - 4 European, multi-experiment, with a non-LHC component
    - France (Lyon): D. Linglin
    - Germany (Karlsruhe): M. Kunze
    - Italy (Bologna): F. Ruggieri
    - UK (Rutherford Lab): J. Gordon
  - 3 dedicated to a single experiment
    - Japan-ATLAS (Tokyo): H. Sakamoto
    - US-ATLAS (Brookhaven): J. Huth
    - US-CMS (Fermilab): L. Bauerdick

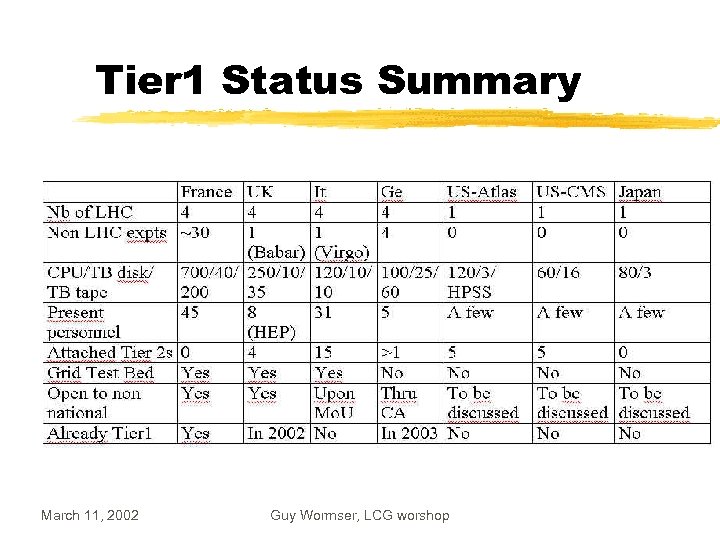

Tier 1 Status Summary

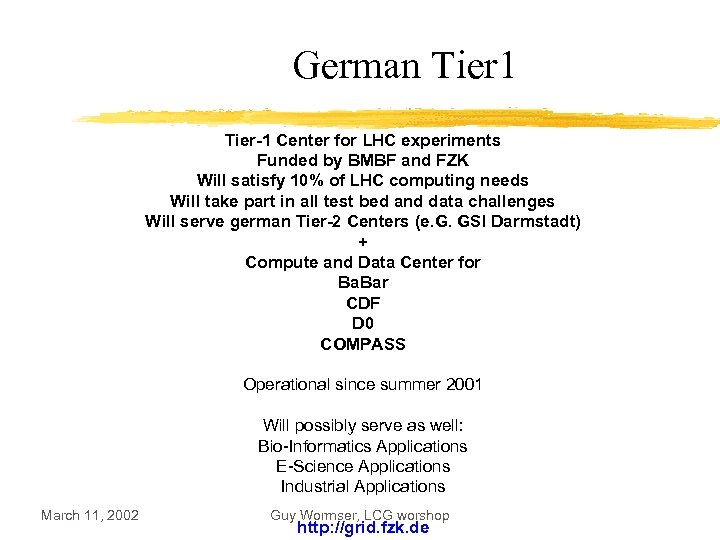

German Tier 1 (http://grid.fzk.de)
Tier-1 Center for LHC experiments
- Funded by BMBF and FZK
- Will satisfy 10% of LHC computing needs
- Will take part in all test beds and data challenges
- Will serve German Tier-2 centers (e.g. GSI Darmstadt)

Plus Compute and Data Center for BaBar, CDF, D0, COMPASS
- Operational since summer 2001

Will possibly serve as well:
- Bio-informatics applications
- E-science applications
- Industrial applications

The German Tier 1
- Overview Board (OB): FZK Management, Director of Computing, Project Leader, Experiments, BMBF, KET, KHK
- Technical Advisory Board (TAB): Project Leader (and Deputy), IT experts from experiments, representatives from LCG, other Tier centers, DESY, KET, KHK
- New department for Grid Computing Infrastructure & Services (GIS), hardware (Holger Marten)
- New department for Grid Computing and e-Science (GES), software (Marcel Kunze)

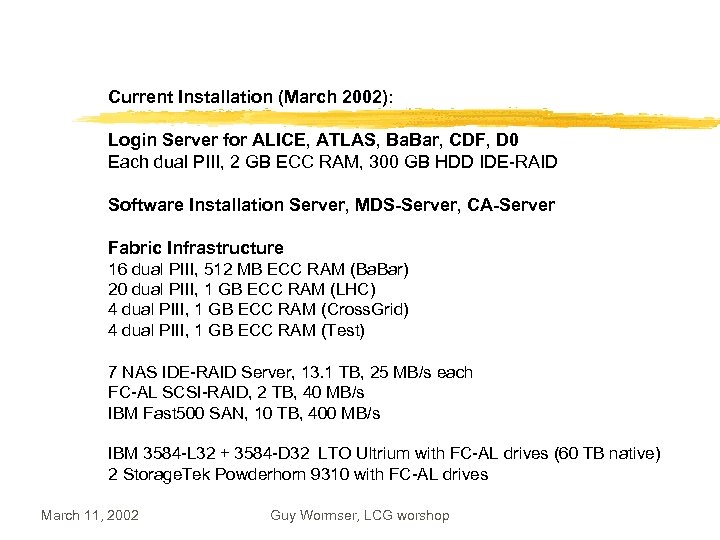

Current Installation (March 2002):
- Login servers for ALICE, ATLAS, BaBar, CDF, D0: each dual PIII, 2 GB ECC RAM, 300 GB HDD IDE-RAID
- Software installation server, MDS server, CA server
- Fabric infrastructure
- 16 dual PIII, 512 MB ECC RAM (BaBar)
- 20 dual PIII, 1 GB ECC RAM (LHC)
- 4 dual PIII, 1 GB ECC RAM (CrossGrid)
- 4 dual PIII, 1 GB ECC RAM (Test)
- 7 NAS IDE-RAID servers, 13.1 TB, 25 MB/s each
- FC-AL SCSI-RAID, 2 TB, 40 MB/s
- IBM Fast 500 SAN, 10 TB, 400 MB/s
- IBM 3584-L32 + 3584-D32 LTO Ultrium with FC-AL drives (60 TB native)
- 2 StorageTek Powderhorn 9310 with FC-AL drives

The German Tier 1
Grid CA
- Hardware for CA available
- Delivery of certificates tested
- CP and CPS draft document available: “FZK-Grid CA, Certificate Policy and Certification Practice Statement”
- Open questions: which other CAs accept our policy (trust us)? Do we trust all other CAs?

RDCCG Manpower and Office Space
- 2002: 5 FTE
- 2006: 40 FTE
- 2004: New building with office space (130 seats)

INFN – TIER 1 Project
- Location: CNAF – Bologna
- Multi-experiment TIER 1: ALICE, ATLAS, CMS, LHCb
- Support to Tier 2 centers
- Already started and participating in Testbed (DataGRID) and Data Challenges (2002)
- Resources: assigned to experiments on a year plan. Usage by other countries will be regulated by a MoU.
- Authentication: certificates (GRID) and/or Kerberos 5

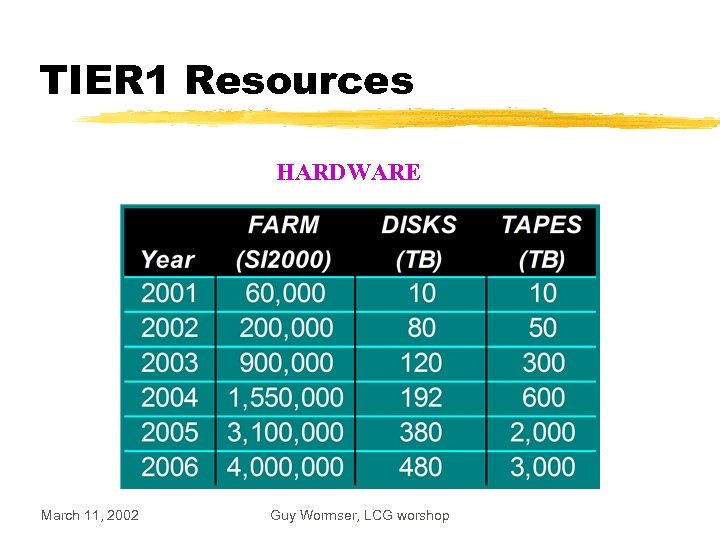

TIER 1 Resources: HARDWARE

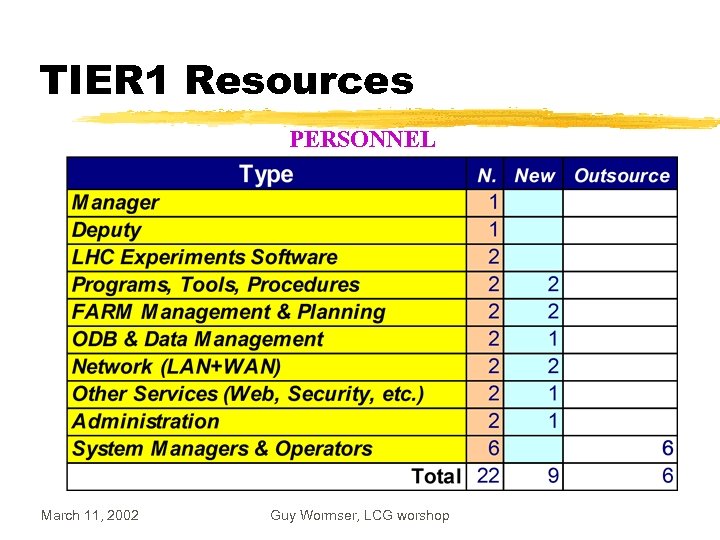

TIER 1 Resources: PERSONNEL

INFN – Tier 2
- 10-15 INFN sites and 3 experiments: ALICE, ATLAS, CMS (LHCb will have only Tier 3)
- Many of them already active in Testbed (DataGRID) and Data Challenges

RAL Plans
- RAL is the prototype Tier 1 Regional Centre for the UK
  - providing resources and playing a part in the Data Challenges of all four LHC experiments
  - supporting Tier 2 centres in the UK
    - UK plans approx. 4 Tier 2 centres, not yet clear which
  - and is also a Tier A Centre for BaBar
- Already doing simulation for LHCb and CMS now
  - and acting as a major repository of data for many experiments
    - old and new, EU and US
- UK PP grid support provided by a core of four sites (RAL, Manchester, Bristol, Imperial, led by RAL), not just the Tier 1
  - all other UK sites want to be Tier 2 (see political points)

RAL Current Resources for HEP
- Now 250 CPUs, 10 TB disk, 35 TB tape (robot capacity 330 TB)
- In March 2002, adding 312 1.4 GHz CPUs, 45 TB disk, 50 TB extra tape
- Will spend similar amounts of cash in 2002/3 and 2003/4
  - on CPU, disk, and upgrading robot capacity and throughput
- Currently about 8 FTE running the HEP part of the centre
  - not including physicists and development staff (e.g. EDG)
- Currently have negotiated funding for 13 FTE
  - Estimate we need about 16 FTE
  - Negotiating on exact tasks to be performed
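
The figures above can be tallied with a short sketch. It assumes the March 2002 purchase is simply added to the existing resources with nothing retired, which the slide does not state; the variable and function names are illustrative only.

```python
# Figures quoted on the slide (RAL HEP resources).
current = {"cpus": 250, "disk_tb": 10, "tape_tb": 35}
added_march_2002 = {"cpus": 312, "disk_tb": 45, "tape_tb": 50}
ROBOT_CAPACITY_TB = 330  # tape robot capacity quoted on the slide


def combine(a, b):
    """Sum two resource dictionaries key by key."""
    return {key: a[key] + b[key] for key in a}


# Assumption: no existing hardware is retired when the new purchase arrives.
total = combine(current, added_march_2002)

# Fraction of the robot capacity that the tape holdings would occupy.
tape_fill = total["tape_tb"] / ROBOT_CAPACITY_TB

print(total)
print(f"tape holdings use {tape_fill:.0%} of robot capacity")
```

Under that assumption the centre would hold 562 CPUs, 55 TB of disk, and 85 TB of tape, roughly a quarter of the robot capacity.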

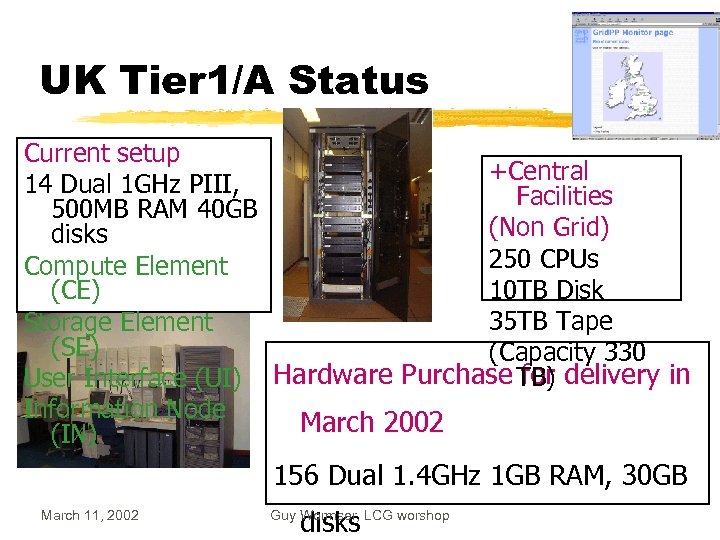

UK Tier 1/A Status
- Current setup: 14 dual 1 GHz PIII, 500 MB RAM, 40 GB disks, arranged as Compute Element (CE), Storage Element (SE), User Interface (UI), and Information Node (IN)
- Plus central facilities (non Grid): 250 CPUs, 10 TB disk, 35 TB tape (capacity 330 TB)
- Hardware purchase for delivery in March 2002: 156 dual 1.4 GHz, 1 GB RAM, 30 GB disks

RAL Planned Use
- Testbeds
  - EDG testbed 1, 2, 3
  - EDG development testbed
  - DataTAG/GRIT
  - LCG testbeds
  - other UK testbeds
- Data Challenges
  - Alice, Atlas, CMS, and LHCb confirmed they will use RAL
- Production
  - BaBar and others

RAL Political Issues (1)
- Computer security
  - a political approach to persuading sites to trust each other would be useful
- Acceptable use policies: very important, coupled with above
- Is the funding coming with strings attached? (à la: accessible only for local country)
  - No, as long as experiments are supported by the UK, collaborators can use us.
- Software configuration: we have problems when different experiments demand different configurations, compilers, priorities, etc.

RAL Political Issues (2)
- Scheduling between experiments
  - UK experiments are prepared to negotiate on scheduling, but they won't have control over global data challenges, so DCs need to be scheduled at the top level.
- What is a Tier 1/2/3 Centre?
  - In the UK all sites want to be Tier 2 Centres. If they are, do we have any Tier 3/4 Centres? What is the distinction between Tier 1/2/3, etc.?

CMS Regional Centers in the U.S.
- Fermilab is host lab for U.S. CMS Software and Computing
  - DOE/NSF sponsored program to build “User Facilities” for CMS in the U.S.
- User Facilities: Tier-1 center at Fermilab + 5 Tier-2 centers at U.S. universities
  - Fermilab and U.S. universities also involved in CMS Core Software
  - Fermilab support for Tier-2 centers, “user community”, physics analysis center
- Prototypes and test-beds are operational now
  - R&D prototype for Tier-1 at Fermilab operational
  - Two prototype Tier-2 centers at UCSD/Caltech and U. Florida operational
- R&D and test-bed efforts are very important
  - Computing R&D, Grid integration, test-beds for CMS
  - Making Fermilab “fit” to become a major international partner
  - Serving a user community for CMS physics studies: Trigger, DAQ, Detector
- These facilities play a major role for CMS production efforts for physics studies and upcoming data challenges

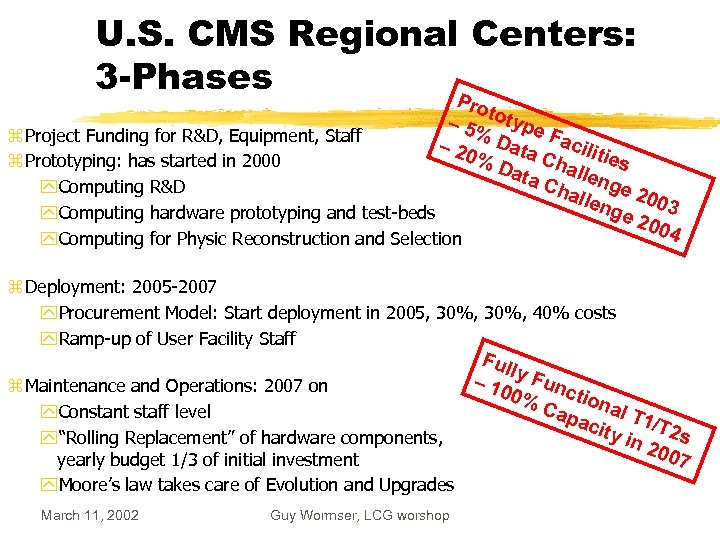

U.S. CMS Regional Centers: 3 Phases
- Project funding for R&D, equipment, staff
- Prototyping: has started in 2000
  - Computing R&D
  - Computing hardware prototyping and test-beds
  - Computing for Physics Reconstruction and Selection
- Deployment: 2005-2007
  - Procurement model: start deployment in 2005, 30%, 40% costs
  - Ramp-up of User Facility staff
- Maintenance and Operations: 2007 on
  - Constant staff level
  - “Rolling Replacement” of hardware components, yearly budget 1/3 of initial investment
  - Moore’s law takes care of evolution and upgrades

Timeline labels: Prototype Facilities (5% Data Challenge 2003, 20% Data Challenge 2004); Fully Functional T1/T2s (100% Capacity in 2007)
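
The "rolling replacement" rule (a yearly budget of 1/3 of the initial investment) can be illustrated with a small sketch. It assumes constant prices and an arbitrary investment unit, neither of which comes from the slide; the function names are hypothetical.

```python
from fractions import Fraction


def yearly_replacement_budget(initial_investment):
    """Yearly hardware budget under the slide's rule: 1/3 of the initial investment."""
    return Fraction(initial_investment) / 3


def years_to_replace_all(initial_investment):
    """Years until cumulative replacement spending matches the initial investment.

    Assumption: constant prices, so spending X replaces X worth of hardware.
    """
    budget = yearly_replacement_budget(initial_investment)
    spent = Fraction(0)
    years = 0
    while spent < initial_investment:
        spent += budget
        years += 1
    return years


# Example with a hypothetical initial investment of 9 (arbitrary units).
print(yearly_replacement_budget(9))
print(years_to_replace_all(9))
```

At constant prices the whole installation turns over every three years; the slide's remark about Moore's law suggests that the same budget buys more capacity each cycle, which this sketch does not model.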

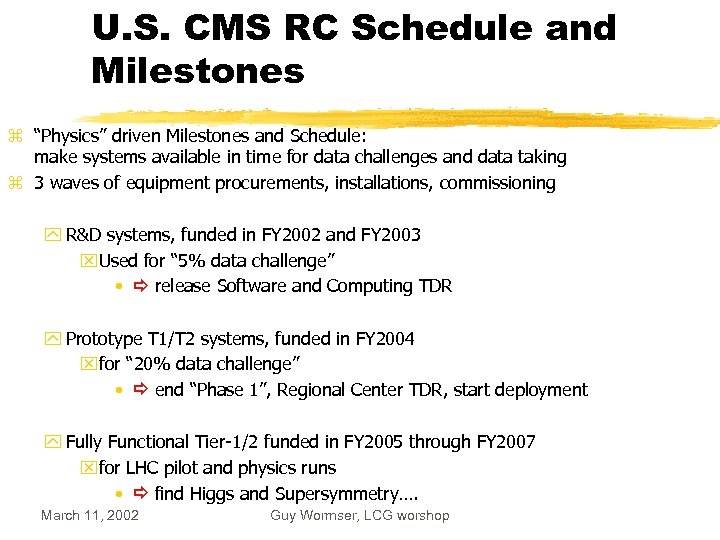

U.S. CMS RC Schedule and Milestones
- “Physics”-driven milestones and schedule: make systems available in time for data challenges and data taking
- 3 waves of equipment procurements, installations, commissioning
  - R&D systems, funded in FY 2002 and FY 2003
    - used for “5% data challenge”: release Software and Computing TDR
  - Prototype T1/T2 systems, funded in FY 2004
    - for “20% data challenge”: end “Phase 1”, Regional Center TDR, start deployment
  - Fully functional Tier-1/2, funded in FY 2005 through FY 2007
    - for LHC pilot and physics runs: find Higgs and Supersymmetry...

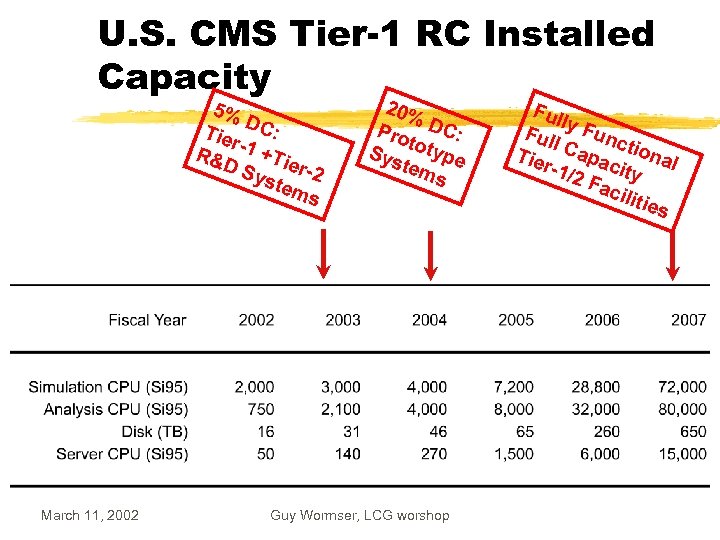

U.S. CMS Tier-1 RC Installed Capacity
[Chart labels: 5% DC: R&D Tier-1 + Tier-2 systems; 20% DC: prototype systems; fully functional Tier-1/2 facilities at full capacity]

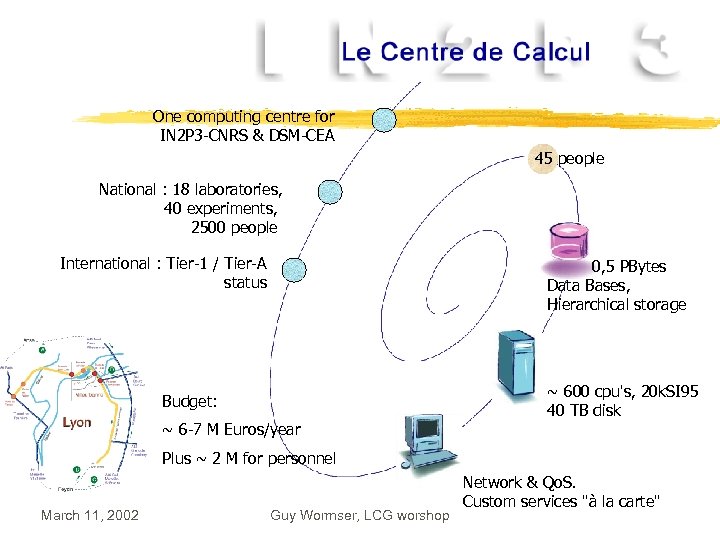

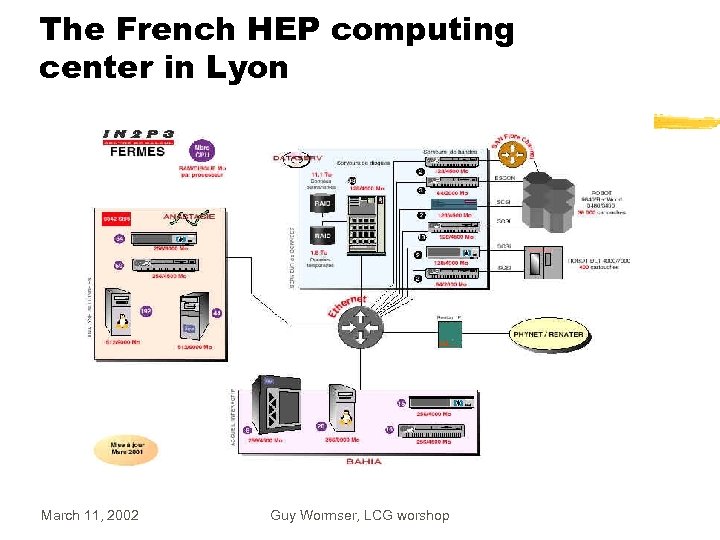

One computing centre for IN2P3-CNRS & DSM-CEA
- 45 people
- National: 18 laboratories, 40 experiments, 2500 people
- International: Tier-1 / Tier-A status
- 0.5 PBytes: data bases, hierarchical storage
- ~600 CPUs, 20 kSI95
- 40 TB disk
- Network & QoS
- Custom services "à la carte"
- Budget: ~6-7 M Euros/year, plus ~2 M for personnel

The French HEP computing center in Lyon

1 hour ~ 4-5 SI-95 hours

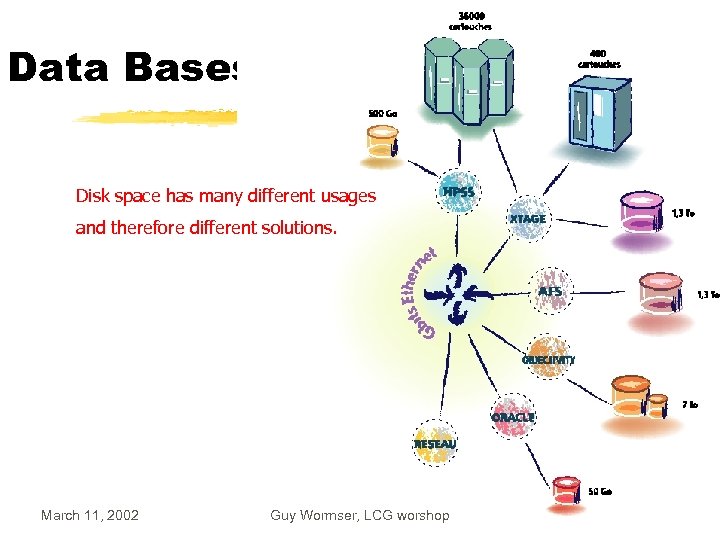

Data Bases
Disk space has many different usages and therefore different solutions.

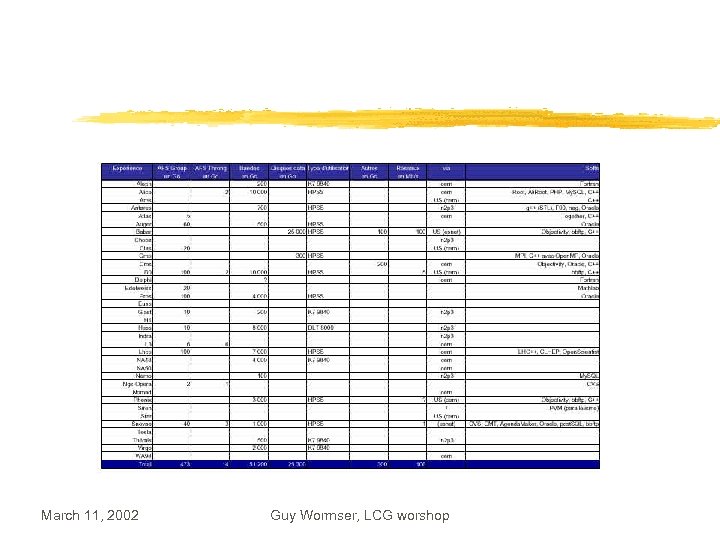

The BABAR experience
- In 2001 (pre-grid era): manual load sharing between SLAC and IN2P3
  - account opening in Lyon (<24 hours) for BABAR members
  - data transfer (reco, microdst) in <48 h
- 2002: continue this mode, and
  - gradual deployment of GRID technology
    - very interesting test bench
  - RAL and Padova ramping up (Padova for a dedicated purpose)
- Importance of human interaction
  - weekly meetings
  - personnel exchange (~1 month visit)
- MoU: win-win situation
  - Value: what is saved at SLAC
  - Investment return: 50% of the value

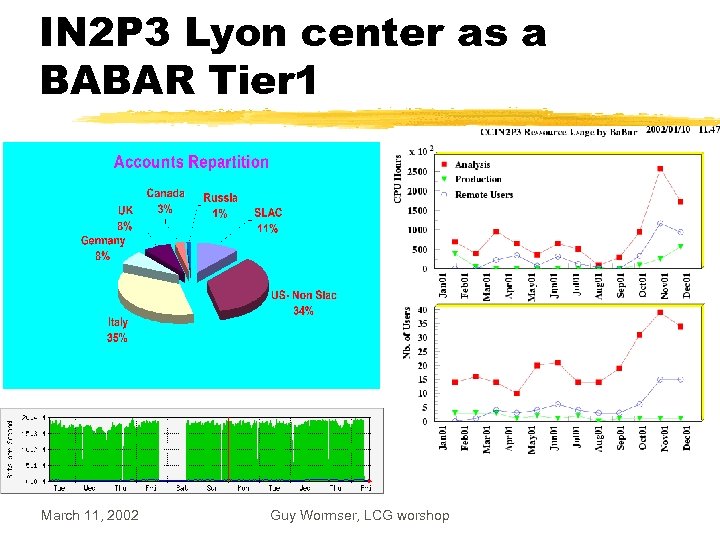

IN2P3 Lyon center as a BABAR Tier 1

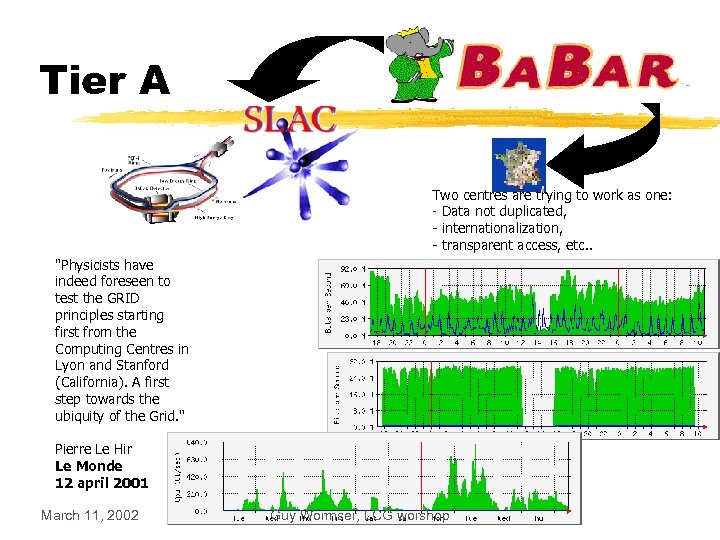

Tier A
Two centres are trying to work as one:
- data not duplicated,
- internationalization,
- transparent access, etc.

"Physicists have indeed foreseen to test the GRID principles starting first from the Computing Centres in Lyon and Stanford (California). A first step towards the ubiquity of the Grid." Pierre Le Hir, Le Monde, 12 April 2001

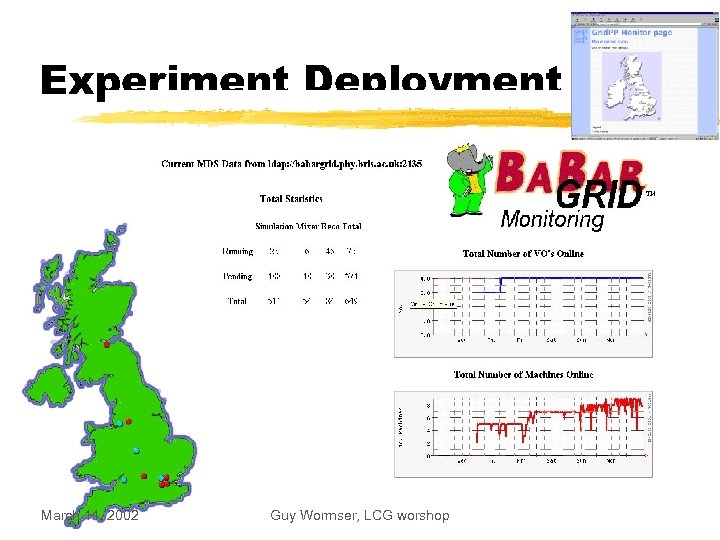

Experiment Deployment

The role of HEP-CCC
- Several meetings per year of the directors of the large regional centers
- Not LHC-dedicated
- Importance of getting global representation: contact with ICFA

HEPCCC Terms of reference
- CCC/CERN/89/08 Rev.
- The High-Energy Physics Computing Co-ordination Committee (HEP-CCC) provides a forum in which the Directors responsible for Computing at the major European Institutes which provide funds for HEP are able to discuss the organisation, co-ordination and optimisation of computing in terms both of money and personnel.
- In HEP-CCC, members analyse potential problems and develop common or complementary strategies to solve them in the most cost-effective way for the European HEP community.
- The aim is to achieve a European-wide co-ordination of computing resources. It is believed that this improves the opportunities of the national HEP communities to satisfy their computing needs.

HEPCCC Terms of reference (2)
- HEP-CCC may set up some technical committees whose role is to gather the information, to select the best courses to follow, and to present them with the pros and cons to the HEP-CCC for decision. In this context, HEP-CCC established in 1995 the HEP-CCC Technical Advisory Sub-Committee (HTASC), the mandate of which may be found at HTASC Mandate.
- The members of the HEP-CCC endeavour to disseminate the information gathered and to implement the recommendations in their respective areas of responsibility. HEP-CCC takes every precaution to consider also the problems and interests of the countries and institutes of the HEP community which do not have members on the committee; these are explicitly represented by the Chairman of ECFA. HEP-CCC may maintain further communications through Correspondents, or by inviting Observers.
- The conclusions of HEP-CCC are thus meant to serve also as carefully elaborated, strong recommendations to the whole European HEP community.

Attendance in Nov 2001
- PRESENT: G. Barreira, M. Cattaneo (for P. Jeffreys), W. de Boer, M. Delfino, J. Le Foll, W. Hoogland, D. Jacobs (secretary), T. Haas, H. F. Hoffmann (near the end), D. Kelsey, D. Linglin, S. Lloyd, L. Price, F. Ruggieri (item 5 onwards), E. Valente, H. von der Schmitt, G. Wormser (chairman)
- EXCUSED: A. Ferrer, L. Foà, I. Gaines, P. Jeffreys, J. May, K. Peach, P. Villemoes
- INVITED: F. Gagliardi, J. Harvey (for D. Schlatter), L. Robertson

Proposal
- Explore the possibility for HEPCCC to become an IUPAP/ICFA committee
- Form a working group to that effect, to report in a few months
- Membership: SCIC chair, HEPCCC chair, ...
- Will be discussed at the next HEPCCC meeting on March 22

Long term plans
- Ramping-up plans of all present Tier 1s in phase with the LCG plans:
  - ~10% of total LHC needs in each Tier 1 in 2007
- Tier 2 plans in Tier 1 countries require consolidation
- Bring new Tier 1s in?
  - (Brazil, Netherlands, ...?)
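
As a rough illustration of what "~10% of total LHC needs in each Tier 1" implies, the sketch below multiplies that share by the seven Tier 1s on the March 2002 official list. It assumes exactly 10% per site and treats the single-experiment centers the same as the multi-experiment ones, which the slides do not confirm.

```python
# The seven official Tier 1s as of March 2002, as listed earlier in the talk.
TIER1_SITES = [
    "France (Lyon)",
    "Germany (Karlsruhe)",
    "Italy (Bologna)",
    "UK (Rutherford Lab)",
    "Japan-ATLAS (Tokyo)",
    "US-ATLAS (Brookhaven)",
    "US-CMS (Fermilab)",
]

# Assumption: the "~10%" on the slide is taken as exactly 10 percent per site.
SHARE_PER_TIER1_PERCENT = 10

combined_percent = len(TIER1_SITES) * SHARE_PER_TIER1_PERCENT
remaining_percent = 100 - combined_percent

print(f"Tier 1s combined: ~{combined_percent}% of total LHC needs")
print(f"Left for CERN, Tier 2s, and any new Tier 1s: ~{remaining_percent}%")
```

Under these assumptions the present Tier 1s would cover about 70% of the total in 2007, which is one way to read the question of whether new Tier 1s should be brought in.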

Conclusions
- Tier 1 build-up well under way in each country:
  - hardware, personnel, participation in Grid testing
  - long term plans in hand
- Important to define tasks, specifications, minimum requirements
  - Tier 2 roles, relationship with Tier 1
- Tighten human links between Tier 1s

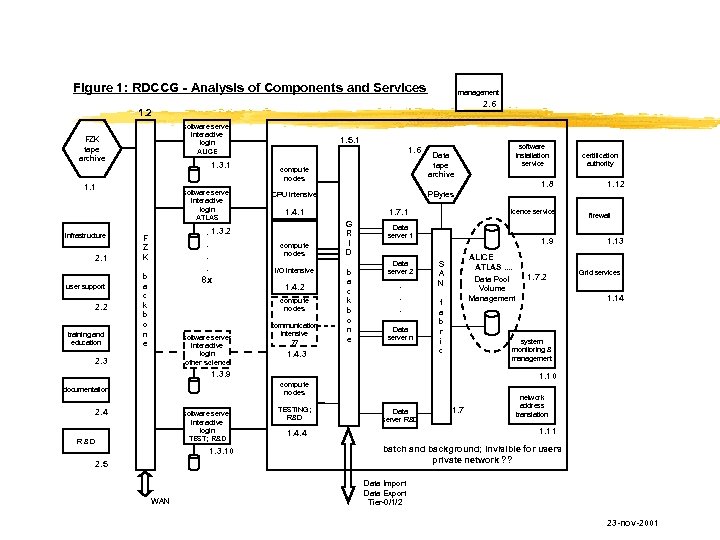

Figure 1: RDCCG - Analysis of Components and Services (23 Nov 2001)
[Diagram components: management, infrastructure, user support, training and education, documentation; software servers with interactive login for ALICE, ATLAS, ..., other science, and test/R&D; compute nodes (CPU intensive, I/O intensive, communication intensive, testing/R&D) running batch and background work on a private network, invisible for users; FZK backbone and Grid backbone; data servers 1..n, data pool, volume management, SAN fabric; FZK tape archive and data tape archive (PBytes); WAN data import/export to Tier-0/1/2; certification authority, firewall, network address translation, Grid services, system monitoring & management, licence service, software installation service]

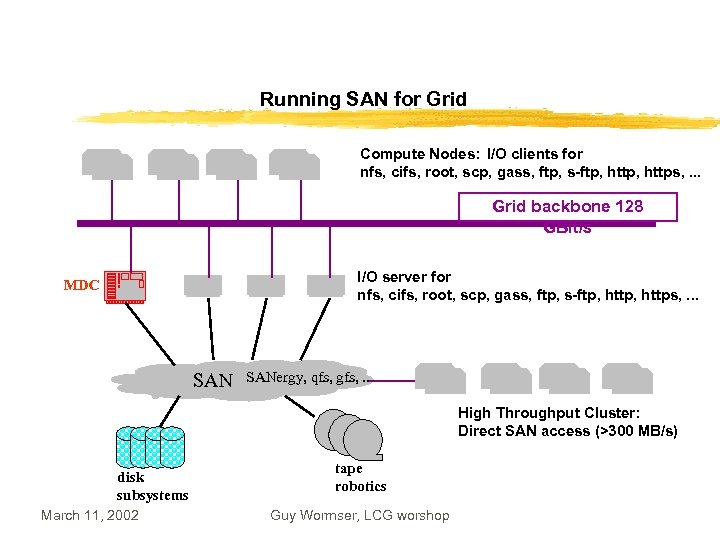

Running SAN for Grid
- Compute nodes: I/O clients for nfs, cifs, root, scp, gass, ftp, s-ftp, https, ...
- Grid backbone: 128 Gbit/s
- I/O server for nfs, cifs, root, scp, gass, ftp, s-ftp, https, ...
- MDC SANergy, qfs, gfs, ...
- High Throughput Cluster: direct SAN access (>300 MB/s)
- Disk subsystems, tape robotics

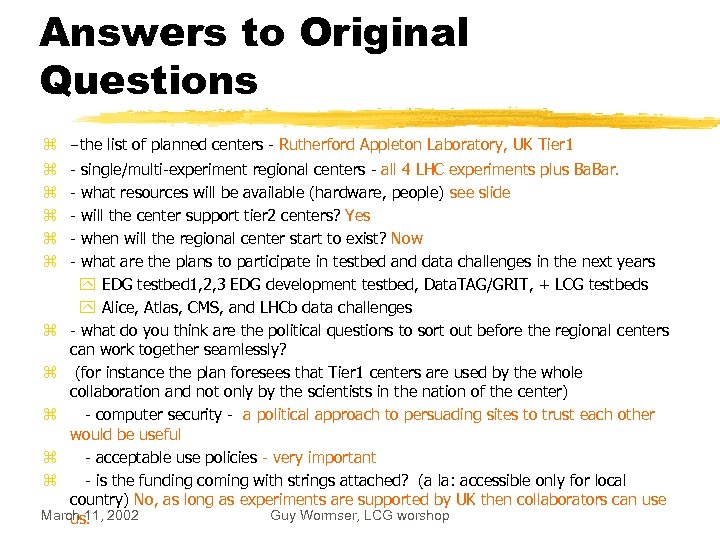

Answers to Original Questions
- The list of planned centers: Rutherford Appleton Laboratory, UK Tier 1
- Single/multi-experiment regional centers: all 4 LHC experiments plus BaBar
- What resources will be available (hardware, people)? See slide
- Will the center support Tier 2 centers? Yes
- When will the regional center start to exist? Now
- What are the plans to participate in testbed and data challenges in the next years?
  - EDG testbed 1, 2, 3; EDG development testbed; DataTAG/GRIT; plus LCG testbeds
  - ALICE, ATLAS, CMS, and LHCb data challenges
- What do you think are the political questions to sort out before the regional centers can work together seamlessly? (For instance, the plan foresees that Tier 1 centers are used by the whole collaboration and not only by the scientists in the nation of the center.)
  - Computer security: a political approach to persuading sites to trust each other would be useful
  - Acceptable use policies: very important
- Is the funding coming with strings attached (à la: accessible only for local country)? No, as long as experiments are supported by the UK then collaborators can use us.
